Abstract

Living and non-living active matter consumes energy at the microscopic scale to drive emergent, macroscopic behavior including traveling waves and coherent oscillations. Recent work has characterized non-equilibrium systems by their total energy dissipation, but little has been said about how dissipation manifests in distinct spatiotemporal patterns. We introduce a measure of irreversibility we term the entropy production factor to quantify how time reversal symmetry is broken in field theories across scales. We use this scalar, dimensionless function to characterize a dynamical phase transition in simulations of the Brusselator, a prototypical biochemically motivated non-linear oscillator. We measure the total energetic cost of establishing synchronized biochemical oscillations while simultaneously quantifying the distribution of irreversibility across spatiotemporal frequencies.

Similar content being viewed by others

Introduction

In many-body systems, collective behavior that breaks time-reversal symmetry can emerge due to the consumption of energy by the individual constituents1,2,3. In biological, engineered, and other naturally out of equilibrium processes, entropy must be produced so as to bias the system in a forward direction4,5,6,7,8,9. This microscopic breaking of time reversal symmetry can manifest at different length and time scales in different ways. For example, bulk order parameters in complex reactions can switch from exhibiting incoherent, disordered behavior to stable static patterns10,11 or traveling waves of excitation12,13 that break time reversal symmetry in both time and space simply by altering the strength of the microscopic driving force. Recent advances in stochastic thermodynamics have highlighted entropy production as a quantity to measure a system’s distance from equilibrium14,15,16,17,18,19. While much work has been done investigating the critical behavior of entropy production at continuous and discontinuous phase transitions20,21,22,23,24,25,26,27,28, dynamical phase transitions in spatially extended systems have only recently been investigated, and to date no non-analytic behavior in the entropy production has been observed29,30.

To address this, we introduce what we term the entropy production factor (EPF), a dimensionless function of frequency and wavevector that measures time reversal symmetry breaking in a system’s spatial and temporal dynamics. The EPF is a strictly non-negative quantity that is identically zero at equilibrium, quantifying how far individual modes are from equilibrium. Integrating the EPF produces a lower bound on the entropy production rate (EPR) of a system. We illustrate how to calculate the EPF directly from data using the analytically tractable example of Gaussian fields obeying partly relaxational dynamics supplemented with out of equilibrium coupling31. We then turn to the Brusselator reaction-diffusion model for spatiotemporal biochemical oscillations to study the connections between pattern formation and irreversibility. As the Brusselator undergoes a Hopf bifurcation far from equilibrium, its behavior transitions from incoherent and localized to coordinated and system-spanning oscillations in a discontinuous transition. The EPF quantifies the shift in irreversibility from high to low wave-number as this transition occurs, but the EPR is indistinguishable from that of the well-mixed Brusselator where synchronization cannot occur. Importantly, the EPF can be calculated in any number of spatial dimensions, making it broadly applicable to a wide variety of data types, from particle tracking to 3+1 dimensional microscopy time series.

Results

Entropy production factor derivation

Consider a system described by a set of M real, random variables obeying some possibly unknown dynamics. A specific trajectory of the system over a total time T is given by X = {Xi(t)∣t ∈ [0, T]}. Given an ensemble of stationary trajectories, the average EPR, \(\dot{S}\), is bounded by5,6,32

where we have set kB = 1 throughout and DKL denotes the Kullback–Leibler divergence which measures the distinguishability between two probability distributions. \(P\left[{\bf{X}}\right]\) and \(P[{\widetilde{\bf{X}}}]\) are the steady-state probability distribution functionals of observing the path X(t) of length T and the probability of observing its reverse path, respectively. Therefore, the KL divergence in Eq. (1) measures the statistical irreversibility of a signal, and saturates the bound when X contains all relevant, non-equilibrium degrees of freedom.

We further bound the irreversibility itself by assuming the paths obey a Gaussian distribution. Writing the Fourier transform of Xi(t) as xi(ω), where ω is the temporal frequency, and writing the column vector \({\bf{x}}(\omega )={\left({x}^{1}(\omega ),{x}^{2}(\omega ),\ldots \right)}^{T}\):

where x† denotes the conjugate transpose of the vector x evaluated at the discrete frequencies ωn = 2πnT−1. C(ωn) is the covariance matrix in Fourier space with elements \({C}^{ij}({\omega }_{n})=\left\langle {x}^{i}({\omega }_{n}){x}^{j}(-{\omega }_{n})\right\rangle {T}^{-1}\), and Z is the partition function. The expression for \(P[{\widetilde{\bf{x}}}]\) is identical but with C−1(ωn) → C−1(−ωn) [see Supplementary Note 1]. Combining Eq. (1) with Eq. (2) and taking T → ∞, we arrive at our main result:

This defines the EPF, \({\mathcal{E}}(\omega )\), which measures time reversal symmetry breaking interactions between M ≥ 2 variables, while integrating \({\mathcal{E}}\) gives \(\dot{S}\). \({\mathcal{E}}(\omega )={D}_{{\rm{KL}}}(P[{\bf{x}}(\omega )]\,| | \,P[{\widetilde{\bf{x}}}(\omega )])\) measures the Kullback–Leibler divergence between the joint distribution of M modes at a single frequency ω. While this quantity does not scale with trajectory length, the density of modes near a particular frequency is related to the total trajectory time by Δω = 2πT−1. Since ± ω modes must be complex conjugates of each other and an overall average phase is prohibited by time translation invariance, asymmetry between these distributions can only be captured by relative phase relationships, quantified by their correlation functions. \({\mathcal{E}}\) is large when one variable tends to lead another in phase, implying a directed rotation between these variables in the time domain.

As mentioned above, P[x(ω)] describes the dynamics of a non-equilibrium steady state, and no reversal of external protocol is assumed. Further, in writing an expression for \(P[{\widetilde{\bf{x}}}(\omega )]\), we assume that the observables are scalar, time-reversal symmetric quantities, such as the chemical concentrations we analyze below.

The Gaussian assumption we make here makes Eq. (3) exact only for systems obeying linear dynamics. Nevertheless, \({\mathcal{E}}\) is still defined for non-linear systems, where the integrated \({\mathcal{E}}\) lower bounds the true \(\dot{S}\). To see this, consider projecting complex dynamics onto Gaussian dynamics by choosing a data processing procedure which preserves two point correlations but which removes higher ones. This can be accomplished by multiplying every frequency by an independent random phase — a post-processing procedure which can be applied to individual trajectories. Post-convolution, the integrated EPF is equal to the KL divergence rate between forward and backwards rates. From the data processing inequality, the KL divergence rate of the true fields must be higher, so that the integrated EPF lower bounds the true entropy production rate [see Supplementary Note 2]. In addition to bounding the true \(\dot{S}\), we expect the integral of \({\mathcal{E}}\) to be a good approximation for the wide class of systems where linearization is reasonable. Such Gaussian approximations are starting points in many field theories, with higher order interactions accounted for by adding anharmonic terms in the action of Eq. (2). While this is not our focus here, we expect these additional terms to systematically capture corrections to \(\dot{S}\) that do not appear in Eq. (3). As Cii(ω) = Cii(−ω), the only contributions to \({\mathcal{E}}\) come from the cross-covariances between the random variables of interest. As such, this bound yields exactly 0 for a single variable even though higher order terms may contribute to \(\dot{S}\).

This formulation extends naturally to random fields. For M random fields in d spatial dimensions, \({\boldsymbol{\phi }}=\{{\phi }^{i}({\bf{x}},t)| t\in [0,T],{\bf{x}}\in {{\mathbb{R}}}^{d}\}\), the EPR density, \(\dot{s}\equiv \dot{S}/V\) where V is the system volume, is:

where Cij(q, ω) is the dynamic structure factor and \({\mathcal{E}}({\bf{q}},\omega )\) is now a function of wavevector q and frequency ω [see Supplementary Note 1].

Even without an explicit, analytic expression for the structure factor, C, we can estimate \({\mathcal{E}}\) from data. To use Eq. (4), we consider data of N finite length trajectories of M variables over a time T in d spatial dimensions. Each dimension has a length Li. We create an estimate of the covariance matrix, \(\widetilde{C}({\bf{q}},\omega )\), from time-series using standard methods [see Methods]. These measurements will inevitably contain noise that is not necessarily time-reversal symmetric, even for an equilibrium system. Noise due to thermal fluctuations and finite trajectory lengths in the estimate of \(\widetilde{C}\) from a single experiment (N = 1) will systematically bias our estimated \({\mathcal{E}}\) by \(\Delta {\mathcal{E}}=\frac{M(M-1)}{2}\) at each frequency and will thereby introduce bias and variance in our measurement of \(\dot{s}\). We can simply remove the bias from our measured \({\mathcal{E}}\), but to reduce the variance, we smooth \(\widetilde{C}\) by component-wise convolution with a multivariate Gaussian of width \({\boldsymbol{\sigma }}=({\sigma }_{{q}_{1}},\ldots ,{\sigma }_{{q}_{d}},{\sigma }_{\omega })\) in frequency space, giving \(\hat{C}\). This is equivalent to multiplying each component of the time domain \(\widetilde{C}({\bf{r}},t)\) by a Gaussian, cutting off the noisy tails in the real space covariance functions at large lag times. We then use \(\hat{C}\) in Eq. (4) to create our final estimator for the EPF, \(\hat{{\mathcal{E}}}\), and thereby the EPR, \(\hat{\dot{s}}\). We calculate and remove the bias in \(\hat{{\mathcal{E}}}\) and \(\hat{\dot{s}}\) in all results below [see Methods]. Smoothing \(\widetilde{C}\) with increasingly wide Gaussians in ω and q leads to a systematic decrease in \(\hat{\dot{s}}\) due to reduced amplitudes in \(\widetilde{C}\) (Supplementary Notes 3 and 4, Supplementary Fig. 1).

To illustrate the information contained in \({\mathcal{E}}\), its numerical estimation, and the accuracy of \(\hat{\dot{s}}\), we analyze simulations of coupled, one-dimensional Gaussian stochastic fields for which \({\mathcal{E}}\) and \(\dot{s}\) can be calculated analytically. We then study simulations of the reaction-diffusion Brusselator, a prototypical model for non-linear biochemical oscillators, and use \({\mathcal{E}}\) to study how irreversibility manifests at different time and length scales as the system undergoes a Hopf bifurcation33.

Driven Gaussian fields

Consider two fields obeying Model A dynamics31 with non-equilibrium driving parametrized by α:

where ξ(x, t) is Gaussian white noise with variance \(\left\langle {\xi }^{i}({\bf{x}},t){\xi }^{j}({\bf{x}}^{\prime} ,t^{\prime} )\right\rangle ={\delta }^{ij}\delta ({\bf{x}}-{\bf{x}}^{\prime} )\delta (t-t^{\prime} )\), D is a relaxation constant, and \(\delta {\mathcal{F}}/\delta \phi\) is the functional derivative with respect to ϕ of the free energy \({\mathcal{F}}\) given by:

so that the fields have units of ℓ1/2 and r penalizes large amplitudes.

The EPR density, \(\dot{s}\), is calculated analytically in two ways. First, we solve Eq. (1) directly using the Onsager–Machlup functional for the path probability functional of \({\boldsymbol{\eta }}(x,t)={\left(\phi (x,t),\psi (x,t)\right)}^{T}\)4,34. Second, the covariance matrices are calculated analytically, used to find \({\mathcal{E}}\) through Eq. (4), and integrated to find \(\dot{s}\). Both cases give the same result for \(\dot{s}\). The result for both \({\mathcal{E}}\) and \(\dot{s}\) are [see Supplementary Note 5]:

We see that \({{\mathcal{E}}}^{{\rm{DGF}}}\ge 0\) and exhibits a peak at (q, ω) = (0, ω0(0)), where \({\omega }_{0}(q)=\sqrt{{(D(r+{q}^{2}))}^{2}+{\alpha }^{2}}\), indicating that the system is driven at all length scales with a driving frequency of α, dampened by an effective spring constant Dr. In addition, it is clear that multiple combinations of α, r, and D can give the same value for \(\dot{s}\) while \({\mathcal{E}}\) distinguishes between equally dissipative trajectories in the shape and location of its peaks. In this way, \({\mathcal{E}}\) gives information about the form of the underlying dynamics not present in the total EPR. We note that \({{\mathcal{E}}}^{{\rm{DGF}}}\) is also recovered using an appropriately modified version of the generalized Harada–Sasa Relation introduced in 34 [see Supplementary Note 6].

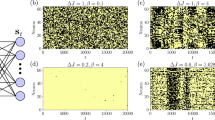

We perform simulations to assess how well \({\mathcal{E}}\) can be extracted from time series data of fields [see methods for details]. The estimated \(\hat{{\mathcal{E}}}\) shows excellent agreement with Eq. (7) (Fig. 1). Integrating \(\hat{{\mathcal{E}}}\) gives \(\hat{\dot{s}}\), which also shows good agreement with \({\dot{s}}^{{\rm{DGF}}}\).

a Snapshot of typical configurations of both fields, ψ (blue solid line) and ϕ (orange dashed line) obeying Eq. (5) for α = 7.5. b Subsection of a typical trajectory for one field for α = 7.5 in dimensionless units. Colors indicate the value of the field at each point in spacetime. c \(\hat{{\mathcal{E}}}\) for α = 7.5 averaged over N = 10 simulations. Contours show level sets of \({{\mathcal{E}}}^{{\rm{DGF}}}\). d Measured \(\dot{s}\) vs. α for simulations of total time T = 50 and length L = 12.8. Red line shows the theoretical value, \({\dot{s}}^{{\rm{DGF}}}\). Mean ± s.d. of \(\hat{\dot{s}}\) given by black dots and shaded area. See Supplementary Table 1 for all simulation parameters.

Our estimator gives exact results for the driven Gaussian fields because the true path probability functional for these fields is Gaussian. In contrast, the complex patterns seen in nature arise from systems obeying highly non-linear dynamics. For such dynamics, our Gaussian approximation is no longer exact but provides a lower bound on the total irreversibility. To investigate how irreversibility correlates with pattern formation, we study simulations of the Brusselator model for biochemical oscillations35. We begin by describing the various dynamical phases of the equations of motion. Next, we calculate \({\mathcal{E}}\) and \(\dot{S}\) for only the reactions before adding diffusion to study the synchronized oscillations that arise in the one-dimensional reaction-diffusion system.

Reaction-diffusion Brusselator

We use a reversible Brusselator model30,35,36,37 with dynamics governed by the reaction equations:

where {A, B, C} are external chemical baths with fixed concentrations {a, b, c}, and all the reactions occur in a volume V (Fig. 2a). The system is in equilibrium when the external chemical baths and reaction rates obey \(b{k}_{2}^{+}{k}_{3}^{+}=c{k}_{2}^{-}{k}_{3}^{-}\). When this equality is violated, the system is driven away from equilibrium and exhibits cycles in the (X, Y) plane. Defining

the Brusselator is at equilibrium when Δμ = 0 and is driven into a non-equilibrium steady state when Δμ ≠ 0. We vary b and c to change Δμ while keeping the product \((b{k}_{2}^{+}{k}_{3}^{+})(c{k}_{2}^{-}{k}_{3}^{-})=1\), keeping the rate at which reactions occur constant for all Δμ38.

a Typical trajectory in (X, Y) space for Δμ = 6.2. The occupation probability distribution is shown in blue, with a subsection of a typical trajectory shown in black. The end of the trajectory is marked by the white circle. Inset shows the same information for the system at equilibrium, where Δμ = 0, with the same colorbar as the main figure. b \(\hat{{\mathcal{E}}}\) for Δμ = [3.5, 5.3, 6.2] shown in green, orange, and purple, respectively. Shaded area shows mean ± s.d. of \(\hat{{\mathcal{E}}}\) for N = 50 simulations. \(\hat{{\mathcal{E}}}\) is symmetric in ω, so only the positive axis is shown. Inset shows the same curves on a log-log scale. c \(\dot{S}\) as a function of Δμ. Blue squares, orange triangles, and black circles show results for \({\dot{S}}_{{\rm{true}}}\), \({\dot{S}}_{{\rm{blind}}}\), and \(\hat{\dot{S}}\), respectively. Shaded area shows mean ± s.d. of \(\hat{\dot{S}}\) for N = 50 simulations. Vertical red dashed line indicates ΔμHB. See Supplementary Table 2 for all simulation parameters.

As Δμ increases, the macroscopic version of Eq. (8) undergoes dynamical phase transitions. For all Δμ, there exists a steady state (Xss, Yss), the stability of which is determined by the relaxation matrix, R [Supplementary Note 7]. The two eigenvalues of R, λ±, divide the steady state into four classes33:

-

\({\lambda }_{\pm }\in {{\mathbb{R}}}_{\,{<}\,0}\to\) Stable attractor, no oscillations

-

\({\lambda }_{\pm }\in {\mathbb{C}},\,{\rm{Re}}[{\lambda }_{\pm }] \, < \, 0\to\) Stable focus

-

\({\lambda }_{\pm }\in {\mathbb{C}},\,{\rm{Re}} [{\lambda }_{\pm }] \, > \, 0\to\) Hopf Bifurcation, limit cycle

-

\({\lambda }_{\pm }\in {{\mathbb{R}}}_{ \,{> }\,0}\to\) Unstable repeller

The eigenvalues undergo these changes as Δμ changes, allowing us to consider Δμ as a bifurcation parameter. We define ΔμHB as the value of Δμ where the macroscopic system undergoes the Hopf bifurcation.

Non-equilibrium steady states are traditionally characterized by their circulation in a phase space39,40,41,42,43. One may then question how it is possible to detect non-equilibrium effects in the Brusselator when the system’s steady state is a stable attractor with no oscillatory component. While this is true for the macroscopic dynamics used to derive λ±, we simulate a system with finite numbers of molecules subject to fluctuations. These stochastic fluctuations give rise to circulating dynamics, even when the deterministic dynamics do not36. We see persistent circulation in the (X, Y) plane when \({\lambda }_{\pm }\in {{\mathbb{R}}}_{\,{<}\,0}\), with the vorticity changing sign around Δμ = 0 (Supplementary Fig. 2).

In order to assess the accuracy of our estimated EPR, \(\hat{\dot{S}}\), we calculate an estimate of the true EPR, \({\dot{S}}_{{\rm{true}}}\), for a simulation of Eq. (8) by calculating the exact entropy produced by each reaction that occurs in the trajectory44, and then fitting a line to the cumulative sum (Supplementary Fig. 3, Methods). We find that \(\hat{\dot{S}}\) significantly underestimates \({\dot{S}}_{{\rm{true}}}\) (note the logged axes in Fig. 2c) due to the Brusselator’s hidden dynamics. In the Brusselator, information is lost because the observed trajectories are coarse-grained — they do not distinguish between reactions that take place forward through the second reaction or backwards through the third reaction in Eq. (8). These pathways would be distinguishable if trajectory of B and C were also observable. Our method relies purely on system dynamics to give \(\hat{\dot{S}}\). Eq. (1) is true only if all microscopic details are captured by trajectories X. If X is already coarse-grained, multiple microscopic trajectories will be indistinguishable and Eq. (1) will underestimate the true entropy production rate due to the data processing inequality 19,45,46.

In order to account for this, we recalculate \(\dot{S}\) by considering the rate at which a given transition can occur as the sum over all chemical reactions that give the same dynamics [see Methods]. For example, a transition from (X, Y) → (X − 1, Y + 1) can occur via reaction \({k}_{2}^{+}\) or \({k}_{3}^{-}\) in the Brusselator, each of which produces a different amount of entropy in general. Looking only in the (X, Y) plane, it is impossible to tell which reaction took place. When calculating the entropy produced by only the observable dynamics, the rate of making the transition (X, Y) → (X − 1, Y + 1) is \({k}_{{\rm{f}}}={k}_{2}^{+}+{k}_{3}^{-}\), while the rate of making the reverse transition is \({k}_{{\rm{r}}}={k}_{2}^{-}+{k}_{3}^{+}\), and the entropy produced is \({\mathrm{log}}\,\frac{{k}_{{\rm{f}}}}{{k}_{{\rm{r}}}}\). This estimate of the EPR, which we name \({\dot{S}}_{{\rm{blind}}}\), is a coarse-graining of \({\dot{S}}_{{\rm{true}}}\), giving the relation \({\dot{S}}_{{\rm{blind}}}\le {\dot{S}}_{{\rm{true}}}\)47. We find that \({\dot{S}}_{{\rm{blind}}}\) shows excellent agreement with \(\hat{\dot{S}}\), indicating that the Gaussian approximation provides a good estimate for the observable dynamics even when the system is highly non-linear.

To further benchmark our estimator, we calculate \(\dot{S}\) using two alternative methods, one based on the thermodynamic uncertainty relation (TUR)7,48 and one based on measuring first passage times (MFPT)49. The prior method measures a macroscopic current based on a weighted average of a system’s trajectory, jd, and estimates the EPR using the TUR for diffusive dynamics, \(\dot{S}\ge 2\langle {j}_{{\bf{d}}}^{2}\rangle {\left({\tau }_{{\rm{obs}}}{\rm{Var}}[{j}_{{\bf{d}}}]\right)}^{-1}\), where 〈〉 and Var[] denote an ensemble average and variance taken after an observation time τobs17. The latter method requires measuring the MFPT of an observable \({\mathcal{O}}\) constructed from the system’s dynamics to reach a threshold that depends on a user-defined error tolerance. We choose \({\mathcal{O}}\) and the threshold based on a drift-diffusion approximation for the winding number of the Brusselator (Supplementary Note 8). Similarly to \(\hat{\dot{S}}\), both of these methods saturate to the true \(\dot{S}\) for systems obeying linear dynamics. As such, they also approximate \({\dot{S}}_{{\rm{blind}}}\), but we find that they provide a looser bound than \(\hat{\dot{S}}\) (Supplementary Fig. 4).

Prior to ΔμHB, both \(\hat{\dot{S}}\) and \({\dot{S}}_{{\rm{blind}}}\) show a shift in their trends, but \({\dot{S}}_{{\rm{true}}}\) does not. The smooth transition is due to the finite system size we employ, and gets sharper as a power law as the system gets larger (Fig. 3a). The power law exponent measured from \(\hat{\dot{S}}\) is nearly linear, consistent with the Gaussian assumption. The exponent differs from that of \({\dot{S}}_{{\rm{blind}}}\) because our Gaussian assumption breaks down at the high values of Δμ where the maximum slope occurs (Fig. 3b).

a \({\dot{S}}_{{\rm{true}}}/V\) (blue squares) and \({\dot{S}}_{{\rm{blind}}}/V\) (orange triangles) for system volumes V = [10, 50, 100, 500, 1000, 5000, 10000], showing an increasingly sharp transition in \({\dot{S}}_{{\rm{blind}}}\), but not in \({\dot{S}}_{{\rm{true}}}\). \({\dot{S}}_{{\rm{blind}}}\) shows no volume dependence below the transition, and is linear dependent on V above it. Vertical red dashed line shows ΔμHB. b Maximum value of \(\partial {\dot{S}}_{{\rm{blind}}}/\partial \Delta \mu\) shows a power-law dependence with volume. Inset shows the same measurement for \(\partial \hat{\dot{S}}/\partial \Delta \mu\). c Forward and reverse fluxes, JF (green squares) and JR (red diamonds), obtained from numerical integration of deterministic equations of motion for the Brusselator. Inset shows \({J}_{{\rm{blind}}}^{{\rm{F}}}\) (green upright triangles) and \({J}_{{\rm{blind}}}^{{\rm{R}}}\) (red rightward triangles). Vertical black dashed line shows ΔμHB.

The Hopf bifurcation for the Brusselator is supercritical23, meaning the limit cycle grows continuously from the fixed point when Δμ − ΔμHB ≪ 1. Further from the transition point, the trajectory makes a discontinuous transition. At our resolution in Δμ, this discontinuous transition is what underlies the shift in \({\dot{S}}_{{\rm{blind}}}\) of the Brusselator. This same transition is present in \({\dot{S}}_{{\rm{true}}}\), but is difficult to detect numerically for reasons we explain here. In the deterministic limit, \({\dot{S}}_{{\rm{true}}}=\Delta \mu \left({J}^{{\rm{F}}}-{J}^{{\rm{R}}}\right)\), where \({J}^{{\rm{F}}}=b\langle x\rangle {k}_{2}^{+}\) and \({J}^{{\rm{R}}}=c\langle y\rangle {k}_{2}^{-}\) are the forward and reverse fluxes for transforming a B molecule into a C molecule. 〈x〉 is a constant, but by numerically integrating the deterministic version for Eq. (8), we observe a discontinuity in 〈y〉 above the Hopf bifurcation. However, JF ≫ JR, obscuring the discontinuity in \({\dot{S}}_{{\rm{true}}}\) (Fig. 3c). Upon coarse-graining, we have \({\dot{S}}_{{\rm{blind}}}=\Delta \mu \left({J}_{{\rm{blind}}}^{{\rm{R}}}-{J}_{{\rm{blind}}}^{{\rm{F}}}\right)\), with \({J}_{{\rm{blind}}}^{{\rm{F}}}=b\langle x\rangle {k}_{2}^{+}+{\langle x\rangle }^{3}{k}_{3}^{-}\) and \({J}^{{\rm{R}}}=c\langle y\rangle {k}_{2}^{-}+{\langle x\rangle }^{2}\langle y\rangle {k}_{3}^{+}\). These two terms are equal to each other for Δμ < ΔμHB and diverge continuously when Δμ ⪆ ΔμHB, followed by the relatively large discontinuity in \({J}_{{\rm{blind}}}^{{\rm{R}}}\) (Fig. 3c, inset).

One gains further insight into the dynamics through the transition by studying \(\hat{{\mathcal{E}}}\) (Fig. 2b). For Δμ < ΔμHB, \(\hat{{\mathcal{E}}}\) exhibits a single peak that increases in amplitude while decreasing in frequency as Δμ increases. Above ΔμHB, the peak frequency makes a discontinuous jump, the magnitude of the peak grows rapidly, and additional peaks at integer multiples of the peak frequency appear due to the non-linear shape of the limit cycle attractor. These harmonics are expected for dynamics on a non-circular path. For Δμ < ΔμHB, the magnitude of the peak is independent of system volume, while it gains a linear volume dependence in the limit cycle. The width of the peak is also maximized near the transition, reflecting a superposition of frequencies present in the trajectories (Supplementary Fig. 5).

To investigate how dynamical phase transitions manifest in the irreversibility of spatially extended systems, we simulate a reaction-diffusion Brusselator on a one-dimensional periodic lattice with L compartments, each with volume V, spaced a distance h apart. The full set of reactions are now

where dj = Dj/h2, and Dj is the diffusion constant of chemical species j = {X, Y}. Qualitatively different dynamics occur based on the ratio DX/DY. DX/DY ≪ 1 yields static Turing patterns10,29. We focus on the DX/DY ≫ 1 regime which exhibits dynamic, excitable waves. All values of {ai, bi, ci} are kept constant in each compartment.

In the steady state, the reaction-diffusion Brusselator has the same dynamics as the well mixed Brusselator, and so it is not surprising that it’s EPR curve as a function of Δμ is similar (Supplementary Fig. 6). However, unlike the well-mixed system, the Hopf bifurcation signals the onset of qualitatively distinct dynamics in the reaction-diffusion system. Prior to the Hopf bifurcation, there are no coherent, spatial patterns in the system’s dynamics (Fig. 4a). Above the Hopf bifurcation, system-spanning waves begin to emerge that synchronize the oscillations across the system (Fig. 4b). Following standard methods50,51, we define the synchronization order parameter, 0 ≤ r < 1, using

where θj(t) is the phase of the oscillator at position xj and time t, M is the number of oscillators (here, the number of lattice sites in our simulation), and T is the temporal extent of the data [Methods]. ψ denotes the overall phase, and r is close to zero in the asynchronous phase and approaches one as the oscillators synchronize.

a Subsection of a typical trajectory for X(x, t) and Y(x, t) for a Δμ = 3.5, below the Hopf Bifurcation and b Δμ = 6.2, above it. Color indicates the local number of the chemical species. c Synchronization order parameter, 〈r〉, as a function of Δμ. Vertical red dashed line indicates ΔμHB. Inset shows the same measurement for volumes V = {101, 102, 103} shown by blue circles, orange squares, and green triangles, respectively, at each lattice site over a smaller region of Δμ. Dots and shaded areas show mean ± s.d. of N = 10 simulations. d \(\dot{s}\) as a function of Δμ. Blue squares, orange triangles, and black circles show results for \({\dot{s}}_{{\rm{true}}}\), \({\dot{s}}_{{\rm{blind}}}\), and \(\hat{\dot{s}}\), respectively. Shaded area shows mean ± s.d. of N = 10 simulations. Vertical dashed red line indicates ΔμHB. See Supplementary Tables 3 and 4 for all simulation parameters.

Below ΔμHB, r is low and rapidly approaches one as the system approaches the macroscopic bifurcation point (Fig. 4c). Like \(\dot{S}\), this transition occurs more sharply and closer to ΔμHB as the system size increases, approaching the discontinuous transition to the limit cycle behavior (Fig. 4c, inset)52. Throughout these changes, the system is driven further from equilibrium, as reflected in the increasing \(\hat{\dot{s}}\) (Fig. 4d). The shift to collective behavior is not reflected in \(\dot{s}\) as it is almost identical to \(\dot{S}\) found for the well-mixed Brusselator (Supplementary Fig. 6). Instead, \({\mathcal{E}}\) carries the signature of the dynamical phase transition. For Δμ < ΔμHB, \(\hat{{\mathcal{E}}}\) shows peaks at high wavenumbers, reflecting that irreversibility is occurring incoherently over short length scales. Above ΔμHB, as the system shows synchronized oscillations, there is an abrupt shift in the peaks of \(\hat{{\mathcal{E}}}\) to low q, indicating that this collective behavior carries the majority of the irreversibility (Fig. 5b,c). We also infer that the collective behavior is partially composed of traveling waves due to the streaks in \(\hat{{\mathcal{E}}}\) (Fig. 5b). The slight offset in the transition occurs for high values of Δμ < ΔμHB where small regions synchronize for short periods of time, but system wide oscillations are not observed (Supplementary Fig. 7). Furthermore, the transition moves closer to the macroscopic transition point with increased volume of the individual compartments (Supplementary Fig. 7).

a \({\mathcal{E}}\) averaged over N = 10 simulations for Δμ = 4.0, i.e., Δμ < ΔμHB. Line plots on top and left of figure show marginals over ω and q, respectively. b Similar to a, but for Δμ = 6.2, i.e., Δμ > ΔμHB. c Wavenumber, q, that maximizes \(\hat{{\mathcal{E}}}\) as a function of Δμ. Vertical dashed red line shows ΔμHB. Black dots and shaded area show mean ± s.d. over N = 10 simulations.

Discussion

Previous work has investigated the behavior of \(\dot{S}\) at thermodynamic phase transitions with the work of 22 finding general signatures of discontinuous phase transitions in \(\dot{S}\) which agree with our results. While 26 found \(\dot{S}\) to have a discontinuity of its first derivative with respect to Δμ in a slightly modified version of the well-mixed Brusselator, work on the same system presented here did not find any non-analytic behavior in \({\dot{S}}_{{\rm{true}}}\)30. We show that a discontinuous phase transition exists in our model, but the magnitude of the discontinuity is small and difficult to detect in \({\dot{S}}_{{\rm{true}}}\) and is more easily seen in the coarse-grained \({\dot{S}}_{{\rm{blind}}}\) (Fig. 3). Further, other spectral decompositions of the dissipation rate either assume a particular form for the underlying dynamics27 or require the measurement of a response function in addition to the correlation function34, which is often difficult to perform in experiments.

Here, we illustrated that the total irreversibility rate cannot distinguish between the dynamical phase transitions in the well-mixed and the spatially extended Brusselator (Supplementary Fig. 6). While the EPR quantifies the emergence of oscillations, the synchronization of the oscillations across space is only captured in \({\mathcal{E}}\) by its peak shifting from high to low wavenumber (Fig. 5). By simulating systems with increasing compartment volumes, this shift occurs closer to the macroscopic transition point (Supplementary Fig. 7), similarly to the increasing sharpness of the shift in \(\dot{S}\) for the well-mixed Brusselator (Fig. 3). Thus, synchronization is intimately related to the emergence of oscillations. We hypothesize that synchronization occurs due the presence of a slow segment of the Brusselator dynamics (Fig. 2a). The time spent in the slow portion of the dynamics allows neighboring oscillators to reduce their relative phase through their diffusive coupling, allowing previously out-of-sync lattice sites to synchronize via the low-cost mechanism of diffusion. This is further seen by the higher value of \({\dot{s}}_{{\rm{blind}}}\) for the reaction-diffusion Brusselator compared to \({\dot{S}}_{{\rm{blind}}}\) for the well-mixed Brusselator when Δμ < ΔμHB, but not for Δμ > ΔμHB (Supplementary Fig. 6). Once the oscillations are synchronized, diffusion between lattice sites at equal concentrations is an equilibrium process and does not produce entropy.

In summary, we have introduced the entropy production factor, \({\mathcal{E}}\), a dimensionless, scalar function that quantifies irreversibility in macroscopic, non-equilibrium dynamics by measuring time-reversal symmetry breaking in the cross-covariances between multiple variables. Integrating \({\mathcal{E}}\) gives a lower bound on the net entropy production rate, \(\dot{s}\). Calculating \({\mathcal{E}}\) does not require knowledge about the form of the underlying dynamics and is easy to calculate for many types of data, including both random variables, such as the positions of driven colloidal particles53 (Supplementary Note 9, Supplementary Fig. 8 and 9, Supplementary Table 5), and random fields, such as spatially heterogeneous protein concentrations in cells54. Furthermore, we stress that we are only able to resolve the irreversibility present in the observable dynamics of our chemical example. As discussed above, the presence of hidden dynamics will provide underestimates of irreversibility measured via Eq. (1) due to the data processing inequality55. Using other observable information, such as asymmetric transition rates56 or the ratio of populations in observed states under stalled conditions46 in Markov jump processes, can give tighter bounds on the entropy produced when unobserved, dissipative processes are present. While the examples considered here are simulations of 1+1 dimensional fields, there is nothing inherently different in the methodology if one were to analyze experimental data in 2 or 3 spatial dimensions, such as the 3+1 dimensional time series data attained using lattice-light sheet microscopy57.

In active matter, both living and non-living, the non-equilibrium dissipation of energy manifests in both time and space. With the method introduced here, compatible with widely-used computational and experimental tools, we provide access to these underexplored modes of irreversibility that drive complex spatiotemporal dynamics.

Methods

Calculating \({\mathcal{E}}\) from data

Estimate \({\mathcal{E}}\) requires estimating frequency-space covariance functions, or cross spectral densities (CSDs). Considering a set of M discrete, real variables measured over time: {Xi(t)}, where t = Δt, …, T, with T = NΔt, and i = 1, …, M indexes the variables, we estimate the CSD using the periodogram,

where \({x}^{i}(\omega )={\mathcal{F}}\{{X}^{i}(t)-\left\langle {X}^{i}(t)\right\rangle \}\) are the Fourier transforms of the centered variables over the frequencies ωn = 2πnT−1 for \(n=[-\frac{N}{2},\frac{N}{2}]\).

The periodogram, is known to exhibit a systematic bias and considerable variance in estimating the true CSD. Both of these issues can be resolved by smoothing \({\widetilde{C}}^{ij}\) via convolution with a Gaussian with width σ. This is equivalent to multiplying \({\widetilde{C}}^{ij}\) in the time domain by a Gaussian of width σ−1. We then define our smoothed CSD as

Once \(\hat{C}\) is calculated, we then use the discrete version of Eq. (3) to estimate \({\mathcal{E}}\). The extension to higher-dimensional data is done as follows: taking into account the spatial lattice on which the data is taken in Eq. (12), convolving the result with a multivariate Gaussian in Eq. (13), and finally estimate \(\dot{s}\) using the discrete version of Eq. (4). The choice of smoothing width, σ, should be guided by the maximum curvature seen in the structure factor, Cij58.

Bias in \(\hat{{\mathcal{E}}}\) and \(\hat{\dot{S}}\)

Our estimates of \(\hat{{\mathcal{E}}}\) and \(\hat{\dot{S}}\) are biased. The bias is found by calculating the expected value of \(\hat{\dot{S}}\) for a system in equilibrium. To do this, we assume that the true covariance function is Cij = δij and measurement noise plus finite sampling time and rate gives rise to Gaussian noise in both the real and complex parts of \({\widetilde{C}}^{ij}(\omega )\), obeying the symmetries required for Cij to be Hermitian. We only cite the results here and refer the reader to Supplementary Note 4 for a full derivation. The bias for random variables is

where M is the number of variables, \({\omega }^{\max }\) is the maximum frequency available, σ is the width of the Gaussian used to smooth \(\widetilde{C}(\omega )\), and T is the total time. The bias for random fields is

where Li is the length, \({q}_{i}^{\max }\) is the maximum wavenumber, and \({\sigma }_{{q}_{i}}\) is the width of the Gaussian used to smooth \(\widetilde{C}({\bf{q}},\omega )\) in the ith spatial dimension.

Simulation details

To simulate the driven Gaussian fields, Eq. (5), we nondimensionalize the system of equations using a time scale τ = (Dr)−1 and length scale λ = r−1/2. We use an Euler–Maruyama algorithm to simulate the dynamics of the two fields on a periodic, one-dimensional lattice.

We simulate Eq. (8) using Gillespie’s algorithm59 to create a stochastic trajectory through the (X, Y) phase plane with a well-mixed volume of V = 100. We calculate the true \(\dot{S}\) of any specific trajectory z = {mj∣j = 1, …, N} as follows. For each state \(m^{\prime}\), there exists a probability per unit time of transitioning to a new state m via a chemical reaction μ, denoted by \({W}_{m,m^{\prime} }^{(\mu )}\). At steady state, the true entropy produced is44

Note that ΔStrue is now itself a random variable that depends on the specific trajectory. We estimate \(\left\langle {\dot{S}}_{{\rm{true}}}\right\rangle\) by fitting a line to an ensemble average of ΔStrue (Supplementary Fig. 3), and compare that to \(\hat{\dot{S}}\). We calculate \({\dot{S}}_{{\rm{blind}}}\) by considering the "rate" at which a transition can occur as the sum over all the rates that give rise to the observed transition in (X, Y), i.e.,

where \({\sum }_{\{{\mu }_{j}| {m}_{j-1}\to {m}_{j}\}}\) denotes a sum over all reaction pathways μ that give rise to the transition mj−1 → mj. This procedure coarse-grains ΔStrue, giving ΔSblind ≤ ΔStrue47. ΔSblind is the maximum entropy production that can be inferred by any method that observes trajectories in (X, Y), but which does not have access to the reaction pathways followed.

To simulate the reaction-diffusion Brusselator, Eq. (10), we take a compartment-based approach60 where we treat each chemical species in each compartment as a separate species, and treat diffusion events as additional chemical reaction pathways. We nondimensionalize time by \(\tau ={({k}_{1}^{+})}^{-1}\) and use a Gillespie algorithm to simulate all reactions on a one-dimensional periodic lattice with L sites.

See Supplementary Tables 1–5 for all simulation parameters used in each figure.

Synchronization order parameter

The synchronization order parameter given in Eq. (11), r, is a function of the oscillator phase at every lattice site j at time t, θj(t). In order to calculate θ from our data, we measure the oscillator’s phase with respect to a trajectory’s mean position over time, namely

where the angle is taken over space and time. This phase is then used to calculate r as given in Eq. (11).

Data availability

The data that support the findings of this study are available from the corresponding authors upon reasonable request.

Code availability

The code used to calculate the EPR and EPF from data, as well as run all the simulations in this study, can be found at https://github.com/lab-of-living-matter/freqent.

References

Toner, J. & Tu, Y. Flocks, herds, and schools: a quantitative theory of flocking. Phys. Rev. E 58, 4828–4858 (1998).

Ramaswamy, S. The mechanics and statistics of active matter. Ann. Rev. Cond. Matter Phys. 1, 323–345 (2010).

Marchetti, M. C. et al. Hydrodynamics of soft active matter. Rev. Mod. Phys. 85, 1143–1189 (2013).

Onsager, L. & Machlup, S. Fluctuations and irreversible processes. Phys. Rev. 91, 1505–1512 (1953).

Parrondo, J. M. R., Van den Broeck, C. & Kawai, R. Entropy production and the arrow of time. N. J. Phys. 11, 073008 (2009).

Seifert, U. Stochastic thermodynamics, fluctuation theorems and molecular machines. Rep. Prog. Phys. 75, 126001 (2012).

Barato, A. C. & Seifert, U. Thermodynamic uncertainty relation for biomolecular processes. Phys. Rev. Lett. 114, 158101 (2015).

Machta, B. B. Dissipation bound for thermodynamic control. Phys. Rev. Lett. 115, 260603 (2015).

Bryant, S. J. & Machta, B. B. Energy dissipation bounds for autonomous thermodynamic cycles. Proc. Natl Acad. Sci. USA 117, 3478–3483 (2020).

Turing, A. M. The chemical basis of morphogenesis. Philos. Trans. R. Soc. B 237, 37–72 (1952).

Ouyang, Q. & Swinney, H. L. Transition from a uniform state to hexagonal and striped Turing patterns. Nature 352, 610–612 (1991).

Prigogine, I. Introduction to Thermodynamics of Irreversible Processes (Wiley, 1967).

Cross, M. C. & Hohenberg, P. C. Pattern formation outside of equilibrium. Rev. Mod. Phys. 65, 851–1112 (1993).

Roldan, E. & Parrondo, J. M. R. Estimating dissipation from single stationary trajectories. Phys. Rev. Lett. 105, 150607 (2010).

Fodor, È. et al. How far from equilibrium is active matter? Phys. Rev. Lett. 117, 038103 (2016).

Seara, D. S. et al. Entropy production rate is maximized in non-contractile actomyosin. Nat. Commun. 9, 4948 (2018).

Li, J., Horowitz, J. M., Gingrich, T. R. & Fakhri, N. Quantifying dissipation using fluctuating currents. Nat. Commun. 10, 1666 (2019).

Frishman, A. & Ronceray, P. Learning force fields from stochastic trajectories. Phys. Rev. X 10, 021009 (2020).

Martínez, I. A., Bisker, G., Horowitz, J. M. & Parrondo, J. M. R. Inferring broken detailed balance in the absence of observable currents. Nat. Commun. 10, 3542 (2019).

Gaspard, P. Fluctuation theorem for nonequilibrium reactions. J. Chem. Phys. 120, 8898–8905 (2004).

Tomé, T. & de Oliveira, M. J. Entropy production in nonequilibrium systems at stationary states. Phys. Rev. Lett. 108, 020601 (2012).

Noa, C. E. F., Harunari, P. E., de Oliveira, M. J. & Fiore, C. E. Entropy production as a tool for characterizing nonequilibrium phase transitions. Phys. Rev. E 100, 012104 (2019).

Zhang, Y. & Barato, A. C. Critical behavior of entropy production and learning rate: ising model with an oscillating field. J. Stat. Mech. Theor. Exp. 2016, 113207 (2016).

Ge, H. & Qian, H. Thermodynamic limit of a nonequilibrium steady state: Maxwell-type construction for a bistable biochemical system. Phys. Rev. Lett. 103, 148103 (2009).

Ge, H. & Qian, H. Non-equilibrium phase transition in mesoscopic biochemical systems: from stochastic to nonlinear dynamics and beyond. J. R. Soc. Interface 8, 107–116 (2011).

Nguyen, B., Seifert, U. & Barato, A. C. Phase transition in thermodynamically consistent biochemical oscillators. J. Chem. Phys. 149, 045101 (2018).

Tociu, L., Fodor, É., Nemoto, T. & Vaikuntanathan, S. How dissipation constrains fluctuations in nonequilibrium liquids: diffusion, structure, and biased interactions. Phys. Rev. X 9, 041026 (2019).

Nguyen, B. & Seifert, U. Exponential volume dependence of entropy-current fluctuations at first-order phase transitions in chemical reaction networks. Phys. Rev. E 102, 022101 (2020).

Falasco, G., Rao, R. & Esposito, M. Information thermodynamics of turing patterns. Phys. Rev. Lett. 121, 108301 (2018).

Rana, S. & Barato, A. C. Precision and dissipation of a stochastic turing pattern. Phys. Rev. E 102, 032135 (2020).

Hohenberg, P. C. & Halperin, B. I. Theory of dynamic critical phenomena. Rev. Mod. Phys. 49, 435–479 (1977).

Kawai, R., Parrondo, J. M. R. & Van den Broeck, C. Dissipation: the phase-space perspective. Phys. Rev. Lett. 98, 080602 (2007).

Strogatz, S. H. Nonlinear Dynamics and Chaos (CRC Press, 2015).

Nardini, C. et al. Entropy production in field theories without time-reversal symmetry: quantifying the non-equilibrium character of active matter. Phys. Rev. X 7, 021007 (2017).

Prigogine, I. & Lefever, R. Symmetry breaking instabilities in dissipative systems. II. J. Chem. Phys. 48, 1695–1700 (1968).

Qian, H., Saffarian, S. & Elson, E. L. Concentration fluctuations in a mesoscopic oscillating chemical reaction system. Proc. Natl Acad. Sci. USA 99, 10376–81 (2002).

Fei, C., Cao, Y., Ouyang, Q. & Tu, Y. Design principles for enhancing phase sensitivity and suppressing phase fluctuations simultaneously in biochemical oscillatory systems. Nat. Commun. 9, 1434 (2018).

Maes, C. & van Wieren, M. H. Time-symmetric fluctuations in nonequilibrium systems. Phys. Rev. Lett. 96, 240601 (2006).

Schnakenberg, J. Network theory of microscopic and macroscopic behavior of master equation systems. Rev. Mod. Phys. 48, 571–585 (1976).

Zia, R. & Schmittmann, B. Probability currents as principal characteristics in the statistical mechanics of non-equilibrium steady states. J. Stat. Mech. 2007, P07012 (2007).

Battle, C. et al. Broken detailed balance at mesoscopic scales in active biological systems. Science 351, 604–607 (2016).

Gingrich, T. R., Rotskoff, G. M. & Horowitz, J. M. Inferring dissipation from current fluctuations. J. Phys. A Math. Theor. 50, 184004 (2017).

Weiss, J. B., Fox-Kemper, B., Mandal, D., Nelson, A. D. & Zia, R. K. P. Nonequilibrium oscillations, probability angular momentum, and the climate system. J. Stat. Phys. 179, 1010–1027 (2020).

Esposito, M. & Van den Broeck, C. Three detailed fluctuation theorems. Phys. Rev. Lett. 104, 090601 (2010).

Shiraishi, N. & Sagawa, T. Fluctuation theorem for partially masked nonequilibrium dynamics. Phys. Rev. E 91, 012130 (2015).

Polettini, M. & Esposito, M. Effective thermodynamics for a marginal observer. Phys. Rev. Lett. 119, 240601 (2017).

Esposito, M. Stochastic thermodynamics under coarse graining. Phys. Rev. E 85, 041125 (2012).

Horowitz, J. M. & Gingrich, T. R. Proof of the finite-time thermodynamic uncertainty relation for steady-state currents. Phys. Rev. E 96, 020103 (2017).

Roldán, É., Neri, I., Dörpinghaus, M., Meyr, H. & Jülicher, F. Decision making in the arrow of time. Phys. Rev. Lett. 115, 250602 (2015).

Acebrón, J. A., Bonilla, L. L., Vicente, C. J., Ritort, F. & Spigler, R. The Kuramoto model: a simple paradigm for synchronization phenomena. Rev. Mod. Phys. 77, 137–185 (2005).

Zhang, D., Cao, Y., Ouyang, Q. & Tu, Y. The energy cost and optimal design for synchronization of coupled molecular oscillators. Nat. Phys. 16, 95–100 (2020).

Chandra, S., Girvan, M. & Ott, E. Continuous versus discontinuous transitions in the d-dimensional generalized kuramoto model: odd d is different. Phys. Rev. X 9, 011002 (2019).

Sun, B., Lin, J., Darby, E., Grosberg, A. Y. & Grier, D. G. Brownian vortexes. Phys. Rev. E 80, 010401 (2009).

Bement, W. M. et al. Activator-inhibitor coupling between Rho signalling and actin assembly makes the cell cortex an excitable medium. Nat. Cell Bio. 17, 1471–1483 (2015).

Cover, T. M. & Thomas, J. A. Elements of Information Theory (Wiley-Interscience, 2006).

Martiniani, S., Chaikin, P. M. & Levine, D. Quantifying hidden order out of equilibrium. Phys. Rev. X 9, 011031 (2019).

Chen, B.-C. et al. Lattice light-sheet microscopy: imaging molecules to embryos at high spatiotemporal resolution. Science 346, 1257998 (2014).

Wang, S.-W., Kawaguchi, K., Sasa, S.-i & Tang, L.-H. Entropy production of nanosystems with time scale separation. Phys. Rev. Lett. 117, 070601 (2016).

Gillespie, D. T. Exact stochastic simulation of coupled chemical reactions. J. Phys. Chem. 81, 2340–2361 (1977).

Erban, R., Chapman, J. & Maini, P. A practical guide to stochastic simulations of reaction-diffusion processes. Preprint at https://arxiv.org/abs/0704.1908 (2007).

Acknowledgements

We would like to thank Samuel J. Bryant, Pranav Kantroo, Maria P. Kochugaeva, Rui Ma, Pierre Ronceray, A. Pasha Tabatabai, John D. Treado, Artur Wachtel, Dong Wang, and Vikrant Yadav for insightful discussions. D.S.S. acknowledges support from NSF Fellowship grant #DGE1122492. B.B.M. acknowledges a Simons Investigator award in MMLS and NIH R35 GM138341. M.P.M. and D.S.S. acknowledge support from Yale University Startup Funds. M.P.M. acknowledges funding from ARO MURI W911NF-14-1-0403, NIH RO1 GM126256, Human Frontiers Science Program (HFSP) grant # RGY0073/2018, and NIH U54 CA209992. Any opinion, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the NSF, NIH, HFSP, or Simons Foundation.

Author information

Authors and Affiliations

Contributions

D.S.S., B.B.M., and M.P.M. conceived the work. D.S.S. wrote and analyzed simulations. All authors contributed to the interpretation of the data and the preparation of the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information Nature Communications thanks the anonymous reviewer(s) for their contribution to the peer review of this work. Peer reviewer reports are available.

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Seara, D.S., Machta, B.B. & Murrell, M.P. Irreversibility in dynamical phases and transitions. Nat Commun 12, 392 (2021). https://doi.org/10.1038/s41467-020-20281-2

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41467-020-20281-2

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.