Abstract

Active vision reconstruction is widely used in industrial manufacturing and three-dimensional inspection, and its accuracy is an important issue for the inspection process. This paper analyzes the reconstruction error of a vision reconstruction system consisting of a planar laser, two cameras and a 3D orientation board. It models and analyzes how the spatial coordinates vary with the extrinsic parameters of the cameras, the intrinsic parameters of the internal camera, and the image coordinates of the internal camera. Verification experiments confirm the analysis, which suggests that it can also be applied to other active-vision-based reconstruction systems.


Introduction

3D reconstruction has been considered an important approach to acquiring the necessary information in mobile robotics1, industrial inspection2,3, medical imaging4,5, industrial manufacturing6, vehicle navigation7, and pattern recognition8,9,10. Spatial reconstruction first captures images of the measured object and then recovers 3D information from the 2D images using additional constraints.

Binocular vision is a reconstruction method that uses two or more cameras with overlapping fields of view. The second camera contributes an additional projection relationship, which provides two further constraints on the unknown 3D point. Stelzer et al.11 present a navigation method for a six-legged robot walking on sandy ground. In this method, binocular images are used to construct a depth map, which is computed by the semi-global matching algorithm, and visual odometry and inertial information are used for pose estimation. Lee et al.12 propose a vehicle detection algorithm based on stereo vision. Pavement characteristics and disparity histograms are used to recognize vehicles in complicated traffic situations, especially in the presence of obstacles. The algorithm consists of obstacle positioning, segmentation and vehicle recognition. Correal et al.13 present a terrain reconstruction method using stereo images to achieve autonomous navigation of robots in complex environments, with information from an inertial measurement unit fused into the navigation system. The stereo pair is refined by adjusting a function. The matched pair set is then computed from the disparity, and the terrain is reconstructed by re-projection. Chen et al.14 establish a multi-view measurement system to enlarge the observation range of optical reconstruction. Local and global calibrations are performed to unify the point clouds from the cameras, and a point cloud matching method is designed to generate the global cloud and improve the reconstruction precision. Tang et al.15 propose a four-camera vision system for the 3D reconstruction of large-scale steel tubular structures. The point cloud is generated and filtered by the four-camera system, and a deep learning algorithm based on geometrical features is used to match the point clouds.
The reconstructed diameters of the tested objects serve as the benchmark, which demonstrates the validity and performance of the reconstruction method. Chen et al.16 provide a 3D perception approach for the central stock of orchard bananas using an adaptive multi-vision system. A semantic segmentation network is trained to realize stereo matching, which ensures 3D triangulation for different positions of the system. Stereo or binocular reconstruction depends on feature matching, which is often effective on rough terrain but fails on smooth surfaces without feature points. Active vision recovers the 3D point from the image using an active light mark. This mark is generated either by laser projection or by coded-light projection from a DLP projector onto the object. Zhang et al.17 introduce an inspection method based on a cross-light projector and a camera. The camera captures the laser stripe of the cross light on the weld, the stripe is extracted from the image, and a planar target is designed to calibrate the system. Xu et al.18 measure the 3D surface of vehicle parts by active vision. A structured monochromatic light with a one-shot pattern is proposed to reconstruct the 3D area. The method works under ambient illumination and on parts with reflective surfaces. Yee et al.19 outline a profilometry method with color sinusoidal structured light to simplify fringe analysis. The direct arccosine function and a De Bruijn sequence are adopted to demodulate the fringe images. Newcombe et al.20 achieve real-time 3D reconstruction with an RGB-D camera for the first time. In that work, a truncated signed distance function model is used to continuously fuse depth images and reconstruct the 3D surface.
In addition, computing the camera pose by registering the current frame against the image projected from the model is more accurate than registering it against the previous frame. Xu et al.21 introduce a reconstruction system with a laser plane, a movable 3D board, a camera inside the board and an external camera. The movable 3D reference defines a Cartesian coordinate system. The external camera observes the board, and the internal camera captures the intersection between the laser plane and the object. The measurement system is therefore more flexible than a fixed one. Structured-light-based reconstruction actively projects a light label onto the object, so it is reliable in industrial settings and in environments with complicated illumination.

3D reconstruction with a camera and a laser is based on triangulation. Therefore, the precision of the measurement system depends on the system structure parameters. Llorca et al.22 present an error estimation approach for a stereo vision system that detects pedestrians ahead of a vehicle. Pedestrians are detected by a 3D clustering method and a support vector machine (SVM). The sensor parameters are analyzed to study their influence on the reconstruction errors. The two corresponding points in the two cameras are combined into a vector to analyze the 3D point error, and the influences of the focal length and the baseline are also studied. Belhaoua et al.23 analyze the reconstruction errors of a stereo-vision-based system. Edge detection of straight line segments and elliptical arcs is analyzed in the reconstruction process. Singular value decomposition is adopted to estimate the parameters of the fitted lines, and the perpendicular distances from the pixels to the fitted line represent the errors. The uncertainties that the feature extraction process introduces into the 3D reconstruction are then estimated through the stereo vision model. Sankowski et al.24 introduce a computational model and formulas to estimate the precision of a binocular vision system. The method requires only a 2D calibration board and a laser distance meter. Yang et al.25 study the errors of the system structure parameters of stereo vision and also analyze the correlation model of the system. Jiang et al.26 investigate the accuracy of 3D reconstruction with a Kinect-based RGB-D camera. In their method, a CAD model in a simulation program is used to generate depth and pose data, which serve as the benchmark for evaluation. The reconstruction model is introduced and realized with a 6-DOF robot manipulator.
These previous studies focus on the pose and parameter analysis of monocular vision systems, stereo vision systems, and single-camera active vision systems. In contrast, the reconstruction system in Ref.21 consists of a laser plane, a 3D orientation board, an internal camera inside the board and an external camera. The external camera observes only the 3D cubic board and determines its 3D homography. The internal camera inside the board captures the projection of the laser plane on the measured object. Because the cubic board moves freely in the field of view of the external camera, objects outside that field of view can still be reconstructed through the homography of the board. The system therefore provides a large measurement range, especially for areas that the external camera cannot observe directly. Because it is a chained-form system, the relative poses among its components produce reconstruction errors that propagate along the transformation chain. We therefore use simulations and experiments to investigate how errors generated by the system structure parameters and camera parameters are transmitted through this chain.

The rest of the paper is organized as follows. Section “Analysis model” introduces the reconstruction system in Ref.21 and constructs the analysis model for the intrinsic and extrinsic parameters. Section “Results of precision analysis and experimentation” presents the simulations and experiments that verify the analysis. Section “Summary” concludes the paper.

Analysis model

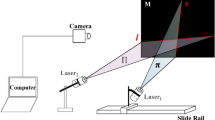

The active vision system in Ref.21 is designed to provide the spatial coordinates of object points. It consists of a planar laser emitter, a 3D orientation board covered by an LED array, a camera inside the orientation board, and a camera outside the orientation board. The internal camera captures the image of the laser projection point on the object. The orientation board is placed in the field of view of the external camera, which captures only the image of the orientation board and not the laser point projected on the object. The reconstruction process is shown in Fig. 1. The extrinsic and intrinsic parameters of the instruments are calibrated with a calibration object, as detailed in Ref.21. Here, we follow the method in Ref.25 and represent the variations by the derivatives of the point coordinates. However, the system studied in Ref.25 is a stereo vision system. It is not a chained-form active vision system with a planar laser, a movable 3D board, an internal camera inside the orientation board and an external camera. \(O_{\text{E}}\text{-}X_{\text{E}}Y_{\text{E}}\), \(O_{\text{D}}\text{-}X_{\text{D}}Y_{\text{D}}Z_{\text{D}}\), \(O_{\text{C}}\text{-}X_{\text{C}}Y_{\text{C}}Z_{\text{C}}\), \(O_{\text{B}}\text{-}X_{\text{B}}Y_{\text{B}}\) and \(O_{\text{A}}\text{-}X_{\text{A}}Y_{\text{A}}Z_{\text{A}}\) denote the coordinate systems of the internal-camera image (CSICI), the internal camera (CSIC), the orientation board (CSOB), the external-camera image (CSECI), and the external camera (CSEC), respectively. The study assumes that the random variables of the system parameters are independent and identically distributed.

Principle of the vision reconstruction with the laser plane, internal camera, 3D reference and external camera in Ref.21. The planar laser intersects the measured object at the 3D point \({\mathbf{X}}_{j}^{{\text{O,D}}}\) in CSIC. The point \({\mathbf{X}}_{j}^{{\text{O,D}}}\) is then transformed to the 3D laser point \({\mathbf{X}}_{j}^{{\text{C}}}\) in CSOB. Finally, the 3D point is represented by \({\mathbf{X}}_{j}^{{\text{A}}}\) in CSEC.

The basic measurement model of the vision system can be summarized in three steps21. In the first step, the planar laser intersects the measured object at the 3D point \({\mathbf{X}}_{j}^{{\text{O,D}}} = \left[ {X_{j}^{{\text{O,D}}} ,Y_{j}^{{\text{O,D}}} ,Z_{j}^{{\text{O,D}}} ,1} \right]^{{\text{T}}}\) in CSIC. This point projects onto the internal-camera image and also lies on the laser plane, so \({\mathbf{X}}_{j}^{{\text{O,D}}}\) satisfies27,28

\[ s_{j} {\mathbf{x}}_{j}^{{{\text{O}} ,{\text{E}}}} = {\text{K}}^{{\text{D}}} \left[ {\begin{array}{*{20}c} {\text{I}} & {\mathbf{0}} \\ \end{array} } \right]{\mathbf{X}}_{j}^{{\text{O,D}}} \tag{1} \]

\[ \left( {{\mathbf{\Pi}}^{{\text{D}}} } \right)^{{\text{T}}} {\mathbf{X}}_{j}^{{\text{O,D}}} = 0 \tag{2} \]

where \(s_{j}\) is a nonzero scale factor and \({\text{I}}\) is the 3 × 3 identity matrix. \({\mathbf{x}}_{j}^{{{\text{O}} ,{\text{E}}}} = \left[ {x_{j}^{{{\text{O}} ,{\text{E}}}} ,y_{j}^{{{\text{O}} ,{\text{E}}}} ,1} \right]^{\text{T}}\) is the projection of \({\mathbf{X}}_{j}^{{\text{O,D}}}\) on the internal-camera image. \({\mathbf{\Pi}}^{{\text{D}}} = [\pi_{1} ,\pi_{2} ,\pi_{3} ,\pi_{4} ]^{{\text{T}}}\) contains the coordinates of the laser plane in CSIC. \({\text{K}}^{{\text{D}}} = \left[ {\begin{array}{*{20}c} {\alpha^{{\text{D}}} } & {\gamma^{{\text{D}}} } & {u^{{\text{D}}} } \\ 0 & {\beta^{{\text{D}}} } & {v^{{\text{D}}} } \\ 0 & 0 & 1 \\ \end{array} } \right]\) is the intrinsic parameter matrix of the internal camera. The 3D laser point \({\mathbf{X}}_{j}^{{{\text{O}},{\text{D}}}}\) in CSIC can be solved from Eqs. (1) and (2).
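Solving Eqs. (1) and (2) amounts to a ray-plane intersection. The pixel is back-projected through \({\text{K}}^{{\text{D}}}\), and the laser plane cuts the resulting viewing ray. The Python sketch below shows this step. It assumes that Eq. (1) is a distortion-free pinhole projection with the internal camera at the origin of CSIC. The function name `intersect_laser_plane` and all numeric values are hypothetical illustrations, not taken from Ref.21.

```python
import numpy as np


def intersect_laser_plane(K, plane, x_img):
    """Back-project a pixel and intersect the viewing ray with the laser plane.

    K     : 3x3 intrinsic matrix [[alpha, gamma, u], [0, beta, v], [0, 0, 1]]
    plane : (pi1, pi2, pi3, pi4), i.e. pi1*X + pi2*Y + pi3*Z + pi4 = 0 in CSIC
    x_img : (x, y) image coordinates of the laser point in pixels
    Returns the inhomogeneous 3D point [X, Y, Z] in CSIC.
    """
    pixel = np.array([x_img[0], x_img[1], 1.0])
    ray = np.linalg.solve(K, pixel)           # normalized ray K^-1 x, third entry is 1
    normal = np.asarray(plane[:3], dtype=float)
    denom = normal @ ray
    if abs(denom) < 1e-12:
        raise ValueError("viewing ray is parallel to the laser plane")
    depth = -plane[3] / denom                 # scale factor, equal to Z in CSIC
    return depth * ray


# Hypothetical internal camera and laser plane 0.5*X + Z - 500 = 0 (units: mm).
K = np.array([[1680.0, 0.0, 640.0],
              [0.0, 1685.0, 480.0],
              [0.0, 0.0, 1.0]])
plane = (0.5, 0.0, 1.0, -500.0)
X_csic = intersect_laser_plane(K, plane, (800.0, 500.0))
```

A quick sanity check is to reproject the returned point through `K`, which should recover the input pixel, and to confirm that it satisfies the plane equation. Together these are exactly the two conditions in Eqs. (1) and (2).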

In the second step, the spatial coordinates of the laser point on the object are transformed to CSOB by

\[ {\mathbf{X}}_{j}^{{\text{C}}} = \left[ {\begin{array}{*{20}c} {{\text{R}}^{{\text{D,C}}} } & {{\mathbf{t}}^{{\text{D,C}}} } \\ {{\mathbf{0}}^{{\text{T}}} } & 1 \\ \end{array} } \right]{\mathbf{X}}_{j}^{{{\text{O}},{\text{D}}}} \tag{3} \]

where \({\mathbf{X}}_{j}^{{{\text{O}},{\text{D}}}}\) and \({\mathbf{X}}_{j}^{{\text{C}}}\) are the spatial coordinates of the laser point in CSIC and CSOB, respectively. \({\text{R}}^{{\text{D,C}}} = \left[ {\begin{array}{*{20}c} {r_{11}^{{\text{D,C}}} } & {r_{12}^{{\text{D,C}}} } & {r_{13}^{{\text{D,C}}} } \\ {r_{21}^{{\text{D,C}}} } & {r_{22}^{{\text{D,C}}} } & {r_{23}^{{\text{D,C}}} } \\ {r_{31}^{{\text{D,C}}} } & {r_{32}^{{\text{D,C}}} } & {r_{33}^{{\text{D,C}}} } \\ \end{array} } \right]\) and \({\mathbf{t}}^{{\text{D,C}}} = \left[ {\begin{array}{*{20}c} {t_{x}^{{\text{D,C}}} } & {t_{y}^{{\text{D,C}}} } & {t_{z}^{{\text{D,C}}} } \\ \end{array} } \right]^{{\text{T}}}\) are the rotation and the translation from CSIC to CSOB.

In the third step, the spatial coordinates of the laser point in CSOB are transformed to CSEC by

\[ {\mathbf{X}}_{j}^{{\text{A}}} = \left[ {\begin{array}{*{20}c} {{\text{R}}^{{\text{C,A}}} } & {{\mathbf{t}}^{{\text{C,A}}} } \\ {{\mathbf{0}}^{{\text{T}}} } & 1 \\ \end{array} } \right]{\mathbf{X}}_{j}^{{\text{C}}} \tag{4} \]

where \({\mathbf{X}}_{j}^{{\text{A}}}\) is the spatial coordinate vector of the laser point in CSEC. \({\text{R}}^{{\text{C,A}}} = \left[ {\begin{array}{*{20}c} {r_{11}^{{\text{C,A}}} } & {r_{12}^{{\text{C,A}}} } & {r_{13}^{{\text{C,A}}} } \\ {r_{21}^{{\text{C,A}}} } & {r_{22}^{{\text{C,A}}} } & {r_{23}^{{\text{C,A}}} } \\ {r_{31}^{{\text{C,A}}} } & {r_{32}^{{\text{C,A}}} } & {r_{33}^{{\text{C,A}}} } \\ \end{array} } \right]\) and \({\mathbf{t}}^{{\text{C,A}}} = \left[ {\begin{array}{*{20}c} {t_{x}^{{\text{C,A}}} } & {t_{y}^{{\text{C,A}}} } & {t_{z}^{{\text{C,A}}} } \\ \end{array} } \right]^{{\text{T}}}\) are the rotation and the translation from CSOB to CSEC.

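According to Eqs. (3) and (4), the 3D laser point \({\mathbf{X}}_{j}^{{\text{A}}}\) in CSEC is

\[ {\mathbf{X}}_{j}^{{\text{A}}} = {\text{H}}^{{\text{D,A}}} {\mathbf{X}}_{j}^{{{\text{O}},{\text{D}}}} \tag{5} \]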

where the homography \({\text{H}}^{{\text{D,A}}} = \left[ {\begin{array}{*{20}c} {h_{11}^{{\text{D,A}}} } & {h_{12}^{{\text{D,A}}} } & {h_{13}^{{\text{D,A}}} } & {h_{14}^{{\text{D,A}}} } \\ {h_{21}^{{\text{D,A}}} } & {h_{22}^{{\text{D,A}}} } & {h_{23}^{{\text{D,A}}} } & {h_{24}^{{\text{D,A}}} } \\ {h_{31}^{{\text{D,A}}} } & {h_{32}^{{\text{D,A}}} } & {h_{33}^{{\text{D,A}}} } & {h_{34}^{{\text{D,A}}} } \\ 0 & 0 & 0 & 1 \\ \end{array} } \right]\), \(h_{11}^{{\text{D,A}}} = r_{11}^{{\text{C,A}}} r_{11}^{{\text{D,C}}} + r_{12}^{{\text{C,A}}} r_{21}^{{\text{D,C}}} + r_{13}^{{\text{C,A}}} r_{31}^{{\text{D,C}}}\), \(h_{12}^{{\text{D,A}}} = r_{11}^{{\text{C,A}}} r_{12}^{{\text{D,C}}} + r_{12}^{{\text{C,A}}} r_{22}^{{\text{D,C}}} + r_{13}^{{\text{C,A}}} r_{32}^{{\text{D,C}}}\),

\(h_{13}^{{\text{D,A}}} = r_{11}^{{\text{C,A}}} r_{13}^{{\text{D,C}}} + r_{12}^{{\text{C,A}}} r_{23}^{{\text{D,C}}} + r_{13}^{{\text{C,A}}} r_{33}^{{\text{D,C}}}\), \(h_{14}^{{\text{D,A}}} = r_{11}^{{\text{C,A}}} t_{x}^{{\text{D,C}}} + r_{12}^{{\text{C,A}}} t_{y}^{{\text{D,C}}} + r_{13}^{{\text{C,A}}} t_{z}^{{\text{D,C}}} + t_{x}^{{\text{C,A}}}\),

\(h_{21}^{{\text{D,A}}} = r_{21}^{{\text{C,A}}} r_{11}^{{\text{D,C}}} + r_{22}^{{\text{C,A}}} r_{21}^{{\text{D,C}}} + r_{23}^{{\text{C,A}}} r_{31}^{{\text{D,C}}}\), \(h_{22}^{{\text{D,A}}} = r_{21}^{{\text{C,A}}} r_{12}^{{\text{D,C}}} + r_{22}^{{\text{C,A}}} r_{22}^{{\text{D,C}}} + r_{23}^{{\text{C,A}}} r_{32}^{{\text{D,C}}}\),

\(h_{23}^{{\text{D,A}}} = r_{21}^{{\text{C,A}}} r_{13}^{{\text{D,C}}} + r_{22}^{{\text{C,A}}} r_{23}^{{\text{D,C}}} + r_{23}^{{\text{C,A}}} r_{33}^{{\text{D,C}}}\), \(h_{24}^{{\text{D,A}}} = r_{21}^{{\text{C,A}}} t_{x}^{{\text{D,C}}} + r_{22}^{{\text{C,A}}} t_{y}^{{\text{D,C}}} + r_{23}^{{\text{C,A}}} t_{z}^{{\text{D,C}}} + t_{y}^{{\text{C,A}}}\),

\(h_{31}^{{\text{D,A}}} = r_{31}^{{\text{C,A}}} r_{11}^{{\text{D,C}}} + r_{32}^{{\text{C,A}}} r_{21}^{{\text{D,C}}} + r_{33}^{{\text{C,A}}} r_{31}^{{\text{D,C}}}\), \(h_{32}^{{\text{D,A}}} = r_{31}^{{\text{C,A}}} r_{12}^{{\text{D,C}}} + r_{32}^{{\text{C,A}}} r_{22}^{{\text{D,C}}} + r_{33}^{{\text{C,A}}} r_{32}^{{\text{D,C}}}\),

\(h_{33}^{{\text{D,A}}} = r_{31}^{{\text{C,A}}} r_{13}^{{\text{D,C}}} + r_{32}^{{\text{C,A}}} r_{23}^{{\text{D,C}}} + r_{33}^{{\text{C,A}}} r_{33}^{{\text{D,C}}}\), \(h_{34}^{{\text{D,A}}} = r_{31}^{{\text{C,A}}} t_{x}^{{\text{D,C}}} + r_{32}^{{\text{C,A}}} t_{y}^{{\text{D,C}}} + r_{33}^{{\text{C,A}}} t_{z}^{{\text{D,C}}} + t_{z}^{{\text{C,A}}}\).
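The element-wise expressions above are the product of the two rigid transforms in Eqs. (3) and (4). The NumPy check below uses hypothetical rotations and translations, not the calibrated values of Table 1. It composes \({\text{H}}^{{\text{D,A}}}\) and compares two of its entries with the listed formulas. `rigid`, `rot_x` and `rot_z` are helper names introduced only for this sketch.

```python
import numpy as np


def rigid(R, t):
    """4x4 homogeneous transform [[R, t], [0, 0, 0, 1]]."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


def rot_x(theta):
    """Rotation about the x axis by theta radians."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])


def rot_z(theta):
    """Rotation about the z axis by theta radians."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


# Hypothetical extrinsics in mm and rad (not the calibration results).
R_DC, t_DC = rot_z(0.3), np.array([20.0, -15.0, 40.0])                 # CSIC -> CSOB
R_CA, t_CA = rot_x(-0.4) @ rot_z(1.1), np.array([50.0, 30.0, 800.0])   # CSOB -> CSEC

H_DA = rigid(R_CA, t_CA) @ rigid(R_DC, t_DC)    # CSIC -> CSEC

# Entries h11 and h14 written out term by term as in the list above.
h11 = R_CA[0, 0] * R_DC[0, 0] + R_CA[0, 1] * R_DC[1, 0] + R_CA[0, 2] * R_DC[2, 0]
h14 = R_CA[0, 0] * t_DC[0] + R_CA[0, 1] * t_DC[1] + R_CA[0, 2] * t_DC[2] + t_CA[0]
```

In compact form, the upper-left 3 × 3 block of \({\text{H}}^{{\text{D,A}}}\) is \({\text{R}}^{{\text{C,A}}}{\text{R}}^{{\text{D,C}}}\), and its last column is \({\text{R}}^{{\text{C,A}}}{\mathbf{t}}^{{\text{D,C}}} + {\mathbf{t}}^{{\text{C,A}}}\). The twelve listed entries spell out exactly these two expressions.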

Stacking Eqs. (1)–(5), the coordinates of \({\mathbf{X}}_{j}^{{\text{A}}} = \left[ {\begin{array}{*{20}c} {X_{j}^{{\text{A}}} } & {Y_{j}^{{\text{A}}} } & {Z_{j}^{{\text{A}}} } & 1 \\ \end{array} } \right]^{{\text{T}}}\) in CSEC are
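Eqs. (1)–(5) can be written out explicitly if Eq. (1) is taken as a distortion-free pinhole projection with the internal camera at the origin of CSIC, which is an assumption of this sketch. The resulting form is one possible way to write these coordinates, not necessarily the paper's arrangement. The symbols \(\bar{x}_{j}\), \(\bar{y}_{j}\), \(\mathbf{m}_{j}\) (normalized image coordinates) and \(s_{j}\) (depth of the laser point along the viewing ray) are introduced here only for the derivation:

```latex
\[
\begin{aligned}
\bar{y}_{j} &= \frac{y_{j}^{\text{O,E}} - v^{\text{D}}}{\beta^{\text{D}}}, \qquad
\bar{x}_{j} = \frac{x_{j}^{\text{O,E}} - u^{\text{D}} - \gamma^{\text{D}}\bar{y}_{j}}{\alpha^{\text{D}}}, \qquad
\mathbf{m}_{j} = \left[\bar{x}_{j},\ \bar{y}_{j},\ 1\right]^{\text{T}} = \left(\text{K}^{\text{D}}\right)^{-1}\mathbf{x}_{j}^{\text{O,E}},\\
s_{j} &= \frac{-\pi_{4}}{\pi_{1}\bar{x}_{j} + \pi_{2}\bar{y}_{j} + \pi_{3}}, \qquad
\left[X_{j}^{\text{O,D}},\ Y_{j}^{\text{O,D}},\ Z_{j}^{\text{O,D}}\right]^{\text{T}} = s_{j}\,\mathbf{m}_{j},\\
X_{j}^{\text{A}} &= s_{j}\left(h_{11}^{\text{D,A}}\bar{x}_{j} + h_{12}^{\text{D,A}}\bar{y}_{j} + h_{13}^{\text{D,A}}\right) + h_{14}^{\text{D,A}},\\
Y_{j}^{\text{A}} &= s_{j}\left(h_{21}^{\text{D,A}}\bar{x}_{j} + h_{22}^{\text{D,A}}\bar{y}_{j} + h_{23}^{\text{D,A}}\right) + h_{24}^{\text{D,A}},\\
Z_{j}^{\text{A}} &= s_{j}\left(h_{31}^{\text{D,A}}\bar{x}_{j} + h_{32}^{\text{D,A}}\bar{y}_{j} + h_{33}^{\text{D,A}}\right) + h_{34}^{\text{D,A}}.
\end{aligned}
\]
```

In this form, the extrinsic parameters enter only through the \(h^{\text{D,A}}\) entries. The intrinsic parameters and image coordinates enter only through \(\bar{x}_{j}\), \(\bar{y}_{j}\) and \(s_{j}\). This separation is what makes the chain-rule derivatives below tractable.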

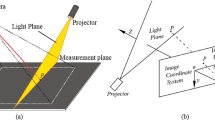

The above process describes how the reconstructed laser point on the object is obtained in CSEC. It also expresses the point coordinates in terms of the extrinsic parameters, intrinsic parameters and image coordinates. We further analyze the impacts of these quantities on the reconstruction precision using partial derivatives, because partial derivatives describe how sensitive the measurement is to perturbations. Figure 2 shows how the partial derivatives with respect to the system structure parameters and image coordinates are generated.

Generation of the partial derivatives with respect to the system structure parameters and image coordinates. \({\text{H}}^{{\text{D,A}}}\) is the homography from CSIC to CSEC. \({\mathbf{X}}_{j}^{{{\text{O}},{\text{D}}}}\) is the 3D reconstruction point in CSIC. \({\mathbf{X}}_{j}^{{\text{A}}}\) is the spatial coordinate vector of the laser point in CSEC. The partial derivatives with respect to the extrinsic parameters, intrinsic parameters and image coordinates give the rates of change of the spatial point in CSEC.

The rate of change of the spatial point coordinates is evaluated with respect to the extrinsic and intrinsic parameters of the active vision system with the orientation board and two cameras. A partial derivative gives the rate of change of a function with respect to one of its arguments. The partial derivatives with respect to the extrinsic and intrinsic parameters therefore indicate how each parameter influences the reconstruction precision. First, the relative positions among the external camera, the orientation board and the internal camera are studied through the derivatives. Based on Eqs. (6)–(8), the partial derivatives of the spatial point \({\mathbf{X}}_{j}^{{\text{A}}}\) with respect to \({\mathbf{t}}^{{\text{C,A}}}\) are

The partial derivatives of the spatial point \({\mathbf{X}}_{j}^{{\text{A}}}\) with respect to \({\mathbf{t}}^{{\text{D,C}}}\) are
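Both sets of translation derivatives can be read off from the entries \(h_{14}^{\text{D,A}}\), \(h_{24}^{\text{D,A}}\) and \(h_{34}^{\text{D,A}}\) listed under Eq. (5). This works because the point \(\mathbf{X}_{j}^{\text{O,D}}\) in CSIC does not depend on either translation. Writing \(\tilde{\mathbf{X}}_{j}^{\text{A}} = [X_{j}^{\text{A}}, Y_{j}^{\text{A}}, Z_{j}^{\text{A}}]^{\text{T}}\) for the inhomogeneous part (notation introduced here), the derivatives take the compact form:

```latex
\[
\frac{\partial \tilde{\mathbf{X}}_{j}^{\text{A}}}{\partial \mathbf{t}^{\text{C,A}}} = \text{I}_{3},
\qquad
\frac{\partial \tilde{\mathbf{X}}_{j}^{\text{A}}}{\partial \mathbf{t}^{\text{D,C}}} = \text{R}^{\text{C,A}},
\qquad \text{e.g.} \quad
\frac{\partial X_{j}^{\text{A}}}{\partial t_{x}^{\text{D,C}}} = r_{11}^{\text{C,A}},\;
\frac{\partial Y_{j}^{\text{A}}}{\partial t_{x}^{\text{D,C}}} = r_{21}^{\text{C,A}},\;
\frac{\partial Z_{j}^{\text{A}}}{\partial t_{x}^{\text{D,C}}} = r_{31}^{\text{C,A}}.
\]
```

Both Jacobians are constant, so the reconstructed coordinates depend affinely on each translation vector. Because \(\text{R}^{\text{C,A}}\) is orthonormal, every entry satisfies \(|r_{ik}^{\text{C,A}}| \le 1\).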

Next, partial derivatives are used to evaluate how the intrinsic parameters of the internal camera influence the spatial point \({\mathbf{X}}_{j}^{{\text{A}}}\). The partial derivatives of the spatial point with respect to \(\alpha^{{\text{D}}}\) are

The partial derivatives of the spatial point with respect to \(\beta^{{\text{D}}}\) are

The partial derivatives of the spatial point with respect to \(\gamma^{{\text{D}}}\) are

The partial derivatives of the spatial point with respect to \(u^{{\text{D}}}\) are

The partial derivatives of the spatial point with respect to \(v^{{\text{D}}}\) are
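A compact chain-rule form of these derivatives can be sketched if Eq. (1) is again assumed to be a distortion-free pinhole projection. All symbols below except the camera and plane parameters are notation introduced for this sketch:

- \(\mathbf{m}_{j} = (\text{K}^{\text{D}})^{-1}\mathbf{x}_{j}^{\text{O,E}} = [\bar{x}_{j}, \bar{y}_{j}, 1]^{\text{T}}\) is the normalized image point.
- \(\bar{\boldsymbol{\pi}} = [\pi_{1}, \pi_{2}, \pi_{3}]^{\text{T}}\) contains the first three plane coefficients.
- \(s_{j} = -\pi_{4}/(\bar{\boldsymbol{\pi}}^{\text{T}}\mathbf{m}_{j})\) is the depth of the laser point.
- \(\bar{\text{H}}\) is the upper-left 3 × 3 block of \(\text{H}^{\text{D,A}}\).
- \(\tilde{\mathbf{X}}_{j}^{\text{A}} = [X_{j}^{\text{A}}, Y_{j}^{\text{A}}, Z_{j}^{\text{A}}]^{\text{T}}\) is the inhomogeneous part of \(\mathbf{X}_{j}^{\text{A}}\).
- \(\theta\) stands for any of \(\alpha^{\text{D}}, \beta^{\text{D}}, \gamma^{\text{D}}, u^{\text{D}}, v^{\text{D}}\).

```latex
\[
\frac{\partial \tilde{\mathbf{X}}_{j}^{\text{A}}}{\partial \theta}
= s_{j}\,\bar{\text{H}}\left(\text{I}_{3} - \frac{\mathbf{m}_{j}\bar{\boldsymbol{\pi}}^{\text{T}}}{\bar{\boldsymbol{\pi}}^{\text{T}}\mathbf{m}_{j}}\right)\frac{\partial \mathbf{m}_{j}}{\partial \theta},
\]
\[
\frac{\partial \mathbf{m}_{j}}{\partial \alpha^{\text{D}}} = \begin{bmatrix} -\bar{x}_{j}/\alpha^{\text{D}} \\ 0 \\ 0 \end{bmatrix},\quad
\frac{\partial \mathbf{m}_{j}}{\partial \beta^{\text{D}}} = \begin{bmatrix} \gamma^{\text{D}}\bar{y}_{j}/(\alpha^{\text{D}}\beta^{\text{D}}) \\ -\bar{y}_{j}/\beta^{\text{D}} \\ 0 \end{bmatrix},\quad
\frac{\partial \mathbf{m}_{j}}{\partial \gamma^{\text{D}}} = \begin{bmatrix} -\bar{y}_{j}/\alpha^{\text{D}} \\ 0 \\ 0 \end{bmatrix},
\]
\[
\frac{\partial \mathbf{m}_{j}}{\partial u^{\text{D}}} = \begin{bmatrix} -1/\alpha^{\text{D}} \\ 0 \\ 0 \end{bmatrix},\quad
\frac{\partial \mathbf{m}_{j}}{\partial v^{\text{D}}} = \begin{bmatrix} \gamma^{\text{D}}/(\alpha^{\text{D}}\beta^{\text{D}}) \\ -1/\beta^{\text{D}} \\ 0 \end{bmatrix}.
\]
```

The bracketed matrix combines two effects. The identity term moves the point along with the perturbed viewing ray at fixed depth. The second term corrects the depth so that the displacement \(\delta\) satisfies \(\bar{\boldsymbol{\pi}}^{\text{T}}\delta = 0\), which keeps the point on the laser plane.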

Finally, because the captured image contains noise, partial derivatives are also used to estimate how the image point \({\mathbf{x}}_{j}^{{\text{O,E}}}\) influences the spatial point \({\mathbf{X}}_{j}^{{\text{A}}}\). The partial derivatives of the spatial point with respect to \(x_{j}^{{\text{O,E}}}\) are

The partial derivatives of the spatial point with respect to \(y_{j}^{{\text{O,E}}}\) are
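Under the pinhole assumption, the image coordinates affect the normalized point through \(\partial \mathbf{m}_{j}/\partial x_{j}^{\text{O,E}} = [1/\alpha^{\text{D}}, 0, 0]^{\text{T}}\) and \(\partial \mathbf{m}_{j}/\partial y_{j}^{\text{O,E}} = [-\gamma^{\text{D}}/(\alpha^{\text{D}}\beta^{\text{D}}), 1/\beta^{\text{D}}, 0]^{\text{T}}\). As a result, the image-coordinate derivatives are the negatives of the \(u^{\text{D}}\) and \(v^{\text{D}}\) derivatives. The Python sketch below implements this chain rule for all seven arguments and cross-checks it against central finite differences. The parameter values, laser plane and \(\text{H}^{\text{D,A}}\) are hypothetical, and the function names are illustrative.

```python
import numpy as np

PARAMS = ("alpha", "beta", "gamma", "u", "v", "x", "y")


def normalized(p):
    """Normalized image point m = K^-1 [x, y, 1]^T of the internal camera."""
    y_bar = (p["y"] - p["v"]) / p["beta"]
    x_bar = (p["x"] - p["u"] - p["gamma"] * y_bar) / p["alpha"]
    return np.array([x_bar, y_bar, 1.0])


def reconstruct(p, plane, H):
    """Inhomogeneous laser point in CSEC: ray-plane intersection, then H^{D,A}."""
    m = normalized(p)
    s = -plane[3] / np.dot(plane[:3], m)
    return H[:3, :3] @ (s * m) + H[:3, 3]


def analytic_jacobian(p, plane, H):
    """3x7 Jacobian dX^A/dtheta for theta in PARAMS (chain rule through m and s)."""
    a, b, g = p["alpha"], p["beta"], p["gamma"]
    m = normalized(p)
    x_bar, y_bar = m[0], m[1]
    n = np.asarray(plane[:3], dtype=float)
    s = -plane[3] / (n @ m)
    proj = np.eye(3) - np.outer(m, n) / (n @ m)   # keeps the displacement in the plane
    dm = {
        "alpha": [-x_bar / a, 0.0, 0.0],
        "beta": [g * y_bar / (a * b), -y_bar / b, 0.0],
        "gamma": [-y_bar / a, 0.0, 0.0],
        "u": [-1.0 / a, 0.0, 0.0],
        "v": [g / (a * b), -1.0 / b, 0.0],
        "x": [1.0 / a, 0.0, 0.0],
        "y": [-g / (a * b), 1.0 / b, 0.0],
    }
    return np.column_stack([s * H[:3, :3] @ proj @ np.array(dm[k]) for k in PARAMS])


def numeric_jacobian(p, plane, H, step=1e-4):
    """Central finite differences, used only to cross-check the analytic form."""
    cols = []
    for k in PARAMS:
        up, down = dict(p), dict(p)
        up[k] += step
        down[k] -= step
        cols.append((reconstruct(up, plane, H) - reconstruct(down, plane, H)) / (2 * step))
    return np.column_stack(cols)


# Hypothetical intrinsics, pixel, laser plane and H^{D,A} (not calibrated values).
p = {"alpha": 1680.0, "beta": 1685.0, "gamma": 0.5,
     "u": 640.0, "v": 480.0, "x": 800.0, "y": 500.0}
plane = (0.5, 0.0, 1.0, -500.0)
c, sn = np.cos(0.4), np.sin(0.4)
H = np.array([[c, -sn, 0.0, 10.0],
              [sn, c, 0.0, -20.0],
              [0.0, 0.0, 1.0, 300.0],
              [0.0, 0.0, 0.0, 1.0]])
J = analytic_jacobian(p, plane, H)
```

Each column of `J` holds the three partial derivatives of \(X_{j}^{\text{A}}\), \(Y_{j}^{\text{A}}\) and \(Z_{j}^{\text{A}}\) with respect to one argument. The finite-difference version is kept only as an independent check of the analytic expressions.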

The analysis model therefore considers three groups of influence factors: the translation vectors, the intrinsic parameters of the internal camera, and the corresponding image projection point. The partial derivatives with respect to these arguments form the basis of the precision analysis and experiments in the next section.
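The system parameters are assumed to be independent, so the partial derivatives can be turned into a first-order estimate of the coordinate uncertainty. For a Jacobian \(J\) and a diagonal parameter covariance, \(\text{Cov}(\tilde{\mathbf{X}}_{j}^{\text{A}}) \approx J\,\text{diag}(\sigma^{2})\,J^{\text{T}}\). The Python sketch below applies this standard linearized propagation. It is not necessarily the exact procedure used in the paper. `propagate_std` and the σ values are illustrative.

```python
import numpy as np


def propagate_std(J, sigmas):
    """First-order standard deviation of each coordinate of X^A.

    J      : 3 x n matrix of partial derivatives dX^A/dtheta_i
    sigmas : standard deviations of n independent parameters theta_i
    Returns sqrt(diag(J diag(sigmas**2) J^T)), one value per coordinate.
    """
    J = np.asarray(J, dtype=float)
    sig2 = np.asarray(sigmas, dtype=float) ** 2
    return np.sqrt((J ** 2) @ sig2)


# The Jacobian w.r.t. t^{D,C} is the rotation R^{C,A}. With equal, independent
# translation errors (hypothetical sigma = 0.2 mm), each coordinate gets 0.2 mm.
theta = np.deg2rad(35.0)
R_CA = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                 [np.sin(theta), np.cos(theta), 0.0],
                 [0.0, 0.0, 1.0]])
std_from_t_DC = propagate_std(R_CA, [0.2, 0.2, 0.2])
```

Each row of a rotation matrix has unit norm, so equal translation uncertainties pass through unchanged. For the intrinsic parameters and image coordinates, the same function applies once the corresponding Jacobian columns are available.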

Results of precision analysis and experimentation

The structural parameters of the system and the image coordinates determine the positioning accuracy of the reconstruction with the 3D orientation board and two cameras. The experiments use a camera with a resolution of 1280 × 960, a focal length of 8 mm, a horizontal field of view of 41.7° and a vertical field of view of 31.8°, and the laser projection is located with sub-pixel accuracy. Three main factors are analyzed: the translation vectors between CSOB and CSEC and between CSIC and CSOB, the intrinsic parameters of the internal camera, and the image coordinates of the feature point.
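The quoted resolution and fields of view also fix the approximate scale of \(\alpha^{\text{D}}\) and \(\beta^{\text{D}}\). The text does not state the following assumptions, so they are labeled here: the camera is an ideal distortion-free pinhole, its principal point lies at the image center, and 41.7° and 31.8° are full angles. Under these assumptions, the focal length in pixels is half the image size divided by the tangent of half the field of view. The function name is illustrative.

```python
import math


def focal_length_px(n_pixels, fov_deg):
    """Pinhole focal length in pixels from the image size and the full field of view."""
    return (n_pixels / 2.0) / math.tan(math.radians(fov_deg) / 2.0)


fx = focal_length_px(1280, 41.7)    # horizontal: 1280 px across 41.7 degrees
fy = focal_length_px(960, 31.8)     # vertical: 960 px across 31.8 degrees
pitch_um = 8.0 / fx * 1000.0        # implied pixel pitch for the 8 mm lens, micrometers
```

Both axes give roughly 1680 px, which is consistent with square pixels, and the implied pixel pitch is about 4.8 µm. This is only a plausibility check. The calibrated intrinsic parameters in Table 2 remain the reference values.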

Translation vector

The extrinsic parameters obtained in the calibration of the active vision system are listed in Table 1. According to Eqs. (12)–(14), the partial derivatives with respect to the translation vector \({\mathbf{t}}^{{\text{D,C}}}\) from CSIC to CSOB are the elements of the rotation matrix \({\text{R}}^{{\text{C,A}}}\) from CSOB to CSEC. A rotation matrix is orthonormal, so the absolute value of each of its elements is not greater than 1. A deviation in \({\mathbf{t}}^{{\text{D,C}}}\) is therefore not amplified in any coordinate of the reconstructed point. According to Eqs. (9)–(11), the coordinates of the reconstructed feature points are linearly related to the translation vector \({\mathbf{t}}^{{\text{C,A}}}\) from CSOB to CSEC.

In addition, a series of verification experiments is performed to analyze how the spatial coordinates of the reconstructed feature point depend on the translation between CSOB and CSEC. First, the distance between the 3D orientation board and the measured object is kept constant, so that the image coordinates of the projection point do not change during the test. The experiments are then carried out for different distances between CSOB and CSEC, as described in Fig. 3. Figure 3a–d and i–l are images captured by the external camera at CSOB–CSEC distances of 600 to 900 mm and 1000 to 1300 mm, respectively, in 100 mm steps. Figure 3e–h and m–p are captured by the internal camera with the object at the same position. Figure 4 shows the reconstructed coordinates of the laser projections on the chessboard pattern. The spatial coordinates of the reconstructed points increase evenly with the distance from CSOB to CSEC. This confirms that the reconstructed coordinates of the laser points increase linearly with the distance between the external camera and the orientation board, as predicted by the partial derivatives with respect to the translation vectors.
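The translation behavior can be illustrated with Eqs. (3) and (4) in inhomogeneous form. The Python sketch below uses a hypothetical fixed geometry. It assumes that the board moves purely along the optical axis of the external camera, so only \(t_{z}^{\text{C,A}}\) changes. In the real experiment, the board displacement generally has components along all three axes. The function name `to_csec` and all numbers are illustrative.

```python
import numpy as np


def to_csec(X_csic, R_DC, t_DC, R_CA, t_CA):
    """Map a laser point from CSIC to CSEC with Eqs. (3)-(4) (inhomogeneous form)."""
    return R_CA @ (R_DC @ X_csic + t_DC) + t_CA


# Hypothetical geometry: the board-object distance is held constant, so the
# laser point in CSIC does not change between measurements.
X_csic = np.array([45.0, 5.0, 477.0])
R_DC, t_DC = np.eye(3), np.array([0.0, 0.0, 60.0])
a = np.deg2rad(20.0)
R_CA = np.array([[np.cos(a), 0.0, np.sin(a)],
                 [0.0, 1.0, 0.0],
                 [-np.sin(a), 0.0, np.cos(a)]])

# Assumption: the board moves along the optical axis of the external camera.
distances = np.arange(600.0, 1301.0, 100.0)                  # 600 ... 1300 mm
points = np.array([to_csec(X_csic, R_DC, t_DC, R_CA, np.array([0.0, 0.0, d]))
                   for d in distances])
steps = np.diff(points, axis=0)      # coordinate change per 100 mm step

# A 1 mm error along x of t^{D,C} moves the point by the first column of R^{C,A}.
t_CA = np.array([0.0, 0.0, 600.0])
dX = (to_csec(X_csic, R_DC, t_DC + np.array([1.0, 0.0, 0.0]), R_CA, t_CA)
      - to_csec(X_csic, R_DC, t_DC, R_CA, t_CA))
```

Equal 100 mm steps in \(\mathbf{t}^{\text{C,A}}\) produce equal 100 mm steps in the reconstructed point, which matches the even increase described for Fig. 4. A translation error in \(\mathbf{t}^{\text{D,C}}\) is rotated but never lengthened.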

Experimental images of the internal and the external cameras. (a–d) the experimental images of the external camera. The camera-board distances are 600, 700, 800, 900 mm. (i–l) the experimental images of the external camera. The camera-board distances are 1000, 1100, 1200, 1300 mm. (e–h) and (m–p) the experimental images of the internal camera related to (a–d) and (i–l).

Intrinsic parameter

The simulation analysis of the intrinsic parameters is based on data from the calibration experiment, which is performed with the external camera 600 mm away from the 3D orientation board. The calibrated intrinsic parameters are listed in Table 2, and the parameter ranges for the simulation are selected based on these values.

The simulation results for the intrinsic parameters are shown in Figs. 5, 6 and 7. Figure 5a–c illustrates how the three coordinates of the reconstructed feature points vary with \(\alpha^{{\text{D}}}\) and \(\beta^{{\text{D}}}\), and several tendencies can be observed. As \(\alpha^{{\text{D}}}\) increases, \(X_{j}^{{\text{A}}}\) of the reconstructed feature point increases steadily, \(Y_{j}^{{\text{A}}}\) falls gradually, and \(Z_{j}^{{\text{A}}}\) rises slightly. Note that \(Y_{j}^{{\text{A}}}\) is negative, so its absolute value increases. As \(\beta^{{\text{D}}}\) increases, the variation trends of \(X_{j}^{{\text{A}}}\), \(Y_{j}^{{\text{A}}}\) and \(Z_{j}^{{\text{A}}}\) are opposite to those above. To gain deeper insight into the effects of \(\alpha^{{\text{D}}}\) and \(\beta^{{\text{D}}}\), Fig. 5d–i shows the partial derivatives, which describe how fast the coordinates change with each parameter. As \(\alpha^{{\text{D}}}\) increases in Fig. 5d–f, the partial derivative \(\partial X_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\) is positive and increases. \(\partial Y_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\) is negative and declines slightly, while \(\partial Z_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\) is positive and grows gradually.

Simulation results of the intrinsic parameters \(\alpha^{{\text{D}}}\), \(\beta^{{\text{D}}}\). (a–c) the relationship between the coordinates \(X_{j}^{{\text{A}}}\), \(Y_{j}^{{\text{A}}}\), \(Z_{j}^{{\text{A}}}\) and the intrinsic parameters \(\alpha^{{\text{D}}}\), \(\beta^{{\text{D}}}\). (d–f) the relationship between the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\), \(\partial Y_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\), \(\partial Z_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\) and the intrinsic parameters \(\alpha^{{\text{D}}}\), \(\beta^{{\text{D}}}\). (g–i) the relationship between the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial \beta^{{\text{D}}}\), \(\partial Y_{j}^{{\text{A}}} {/}\partial \beta^{{\text{D}}}\), \(\partial Z_{j}^{{\text{A}}} {/}\partial \beta^{{\text{D}}}\) and the intrinsic parameters \(\alpha^{{\text{D}}}\), \(\beta^{{\text{D}}}\).

Simulation results of the intrinsic parameters \(u^{{\text{D}}}\), \(v^{{\text{D}}}\). (a–c) the relationship between the coordinates \(X_{j}^{{\text{A}}}\), \(Y_{j}^{{\text{A}}}\), \(Z_{j}^{{\text{A}}}\) and the intrinsic parameters \(u^{{\text{D}}}\), \(v^{{\text{D}}}\). (d–f) the relationship between the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial u^{{\text{D}}}\), \(\partial Y_{j}^{{\text{A}}} {/}\partial u^{{\text{D}}}\), \(\partial Z_{j}^{{\text{A}}} {/}\partial u^{{\text{D}}}\) and the intrinsic parameters \(u^{{\text{D}}}\), \(v^{{\text{D}}}\). (g–i) the relationship between the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial v^{{\text{D}}}\), \(\partial Y_{j}^{{\text{A}}} {/}\partial v^{{\text{D}}}\), \(\partial Z_{j}^{{\text{A}}} {/}\partial v^{{\text{D}}}\) and the intrinsic parameters \(u^{{\text{D}}}\), \(v^{{\text{D}}}\).

Simulation results of the intrinsic parameter \(\gamma^{{\text{D}}}\). (a) The relationship between the coordinates \(X_{j}^{{\text{A}}}\), \(Y_{j}^{{\text{A}}}\), \(Z_{j}^{{\text{A}}}\) and the intrinsic parameter \(\gamma^{{\text{D}}}\). (b) The relationship between the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial \gamma^{{\text{D}}}\), \(\partial Y_{j}^{{\text{A}}} {/}\partial \gamma^{{\text{D}}}\), \(\partial Z_{j}^{{\text{A}}} {/}\partial \gamma^{{\text{D}}}\) and the intrinsic parameter \(\gamma^{{\text{D}}}\).

As \(\beta^{{\text{D}}}\) increases, the partial derivative \(\partial X_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\) declines gradually, \(\partial Y_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\) rises slightly, and \(\partial Z_{j}^{{\text{A}}} {/}\partial \alpha^{{\text{D}}}\) decreases slightly. The variation trends in Fig. 5d–f are correspondingly opposite to those in Fig. 5g–i. Notably, each partial derivative tends to zero as \(\alpha^{{\text{D}}}\) decreases and \(\beta^{{\text{D}}}\) increases.

Figure 6 shows how \(u^{{\text{D}}}\) and \(v^{{\text{D}}}\) influence the spatial coordinates of the reconstructed feature point and the partial derivatives. In Fig. 6a–c, as \(u^{{\text{D}}}\) increases, \(X_{j}^{{\text{A}}}\) and \(Z_{j}^{{\text{A}}}\) of the reconstructed feature point decrease and \(Y_{j}^{{\text{A}}}\) rises gradually. For increasing \(v^{{\text{D}}}\), the trends of \(X_{j}^{{\text{A}}}\), \(Y_{j}^{{\text{A}}}\) and \(Z_{j}^{{\text{A}}}\) are reversed. As \(u^{{\text{D}}}\) rises in Fig. 6d–f, the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial u^{{\text{D}}}\) and \(\partial Z_{j}^{{\text{A}}} {/}\partial u^{{\text{D}}}\) increase steadily, whereas \(\partial Y_{j}^{{\text{A}}} {/}\partial u^{{\text{D}}}\) decreases slightly. As \(v^{{\text{D}}}\) increases, the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial v^{{\text{D}}}\) and \(\partial Z_{j}^{{\text{A}}} {/}\partial v^{{\text{D}}}\) decrease steadily and \(\partial Y_{j}^{{\text{A}}} {/}\partial v^{{\text{D}}}\) rises slightly. The trends of the partial derivatives in Fig. 6g–i are contrary to those in Fig. 6d–f.

Finally, Fig. 7 describes the variation trends of the spatial coordinates and the partial derivatives with respect to the skew parameter \(\gamma^{{\text{D}}}\). In Fig. 7a, the three plotted lines show that the spatial coordinates are linearly related to \(\gamma^{{\text{D}}}\): \(X_{j}^{{\text{A}}}\) and \(Z_{j}^{{\text{A}}}\) increase, whereas \(Y_{j}^{{\text{A}}}\) decreases. These results agree with the trends of the partial derivatives shown in Fig. 7b, where \(\partial X_{j}^{{\text{A}}} {/}\partial \gamma^{{\text{D}}}\) and \(\partial Z_{j}^{{\text{A}}} {/}\partial \gamma^{{\text{D}}}\) are positive, whereas \(\partial Y_{j}^{{\text{A}}} {/}\partial \gamma^{{\text{D}}}\) is negative. For \(\gamma^{{\text{D}}}\) between 0 and 1, all the partial derivatives stay within a narrow range close to 0.
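Surfaces like those in Figs. 5–7 can be generated by sweeping the intrinsic parameters around nominal values and evaluating the reconstruction and its derivatives on a grid. The Python sketch below does this for \(\alpha^{\text{D}}\) and \(\beta^{\text{D}}\). It assumes the distortion-free pinhole model for Eq. (1) and uses hypothetical nominal values in place of Tables 1 and 2, so its trends need not match the reported figures. The same loop applies to \(u^{\text{D}}\), \(v^{\text{D}}\) and \(\gamma^{\text{D}}\).

```python
import numpy as np


def reconstruct(alpha, beta, gamma, u, v, x, y, plane, H):
    """Inhomogeneous laser point in CSEC from the internal-camera intrinsics and pixel."""
    y_bar = (y - v) / beta
    x_bar = (x - u - gamma * y_bar) / alpha
    m = np.array([x_bar, y_bar, 1.0])
    s = -plane[3] / np.dot(plane[:3], m)      # depth on the laser plane
    return H[:3, :3] @ (s * m) + H[:3, 3]


# Hypothetical nominal values standing in for Tables 1 and 2.
plane = (0.5, 0.0, 1.0, -500.0)
H = np.eye(4)
H[:3, 3] = [10.0, -20.0, 300.0]
gamma, u, v, x, y = 0.0, 640.0, 480.0, 800.0, 500.0

alphas = np.linspace(1600.0, 1760.0, 17)      # 10 px steps
betas = np.linspace(1600.0, 1760.0, 17)
# coords[i, j] is X^A for alphas[i] and betas[j] (analogous to Fig. 5a-c).
coords = np.array([[reconstruct(a, b, gamma, u, v, x, y, plane, H) for b in betas]
                   for a in alphas])
# Finite-difference dX^A/d(alpha^D) on the same grid (analogous to Fig. 5d-f).
d_dalpha = np.gradient(coords, alphas, axis=0)
```

`np.gradient` uses central differences in the interior of the grid. For smooth surfaces like these, a 10 px spacing is sufficient. The analytic Jacobian can be substituted when exact values are needed.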

Image point

A simulation analysis and a verification experiment are carried out to investigate how the image coordinates influence the spatial coordinates of the reconstructed points, and the results are shown in Fig. 8. Figure 8a–c shows that the image coordinates strongly influence the spatial coordinates of the reconstructed points, with clear rising or falling trends. Experiments are also implemented to test the simulation model, using the image coordinates of laser projections that lie on the same laser plane for reconstruction. The blue dots in Fig. 8a–c all lie on the surfaces obtained from the simulation analysis, which shows that the simulation results are consistent with the experiments.

Verification experiments and simulation results for the coordinates of the image point. (a–c) the relationship between the coordinates \(X_{j}^{{\text{A}}}\), \(Y_{j}^{{\text{A}}}\), \(Z_{j}^{{\text{A}}}\) and the image coordinates \(x_{j}^{{{\text{O}} ,{\text{E}}}}\), \(y_{j}^{{{\text{O}} ,{\text{E}}}}\). (d–f) the relationship between the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\), \(\partial Y_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\), \(\partial Z_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\) and the image coordinates \(x_{j}^{{{\text{O}} ,{\text{E}}}}\), \(y_{j}^{{{\text{O}} ,{\text{E}}}}\). (g–i) the relationship between the partial derivatives \(\partial X_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\), \(\partial Y_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\), \(\partial Z_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\) and the image coordinates \(x_{j}^{{{\text{O}} ,{\text{E}}}}\), \(y_{j}^{{{\text{O}} ,{\text{E}}}}\).

Figure 8d–i illustrate the relationships between the image coordinates and the partial derivatives. As \(x_{j}^{{{\text{O}} ,{\text{E}}}}\) increases, \(\partial X_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\) and \(\partial Z_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\) increase and \(\partial Y_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\) decreases slightly, whereas \(\partial X_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\) and \(\partial Y_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\) increase and \(\partial Z_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\) decreases significantly. As \(y_{j}^{{{\text{O}} ,{\text{E}}}}\) increases, \(\partial X_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\) and \(\partial Y_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\) decrease and \(\partial Z_{j}^{{\text{A}}} {/}\partial x_{j}^{{{\text{O}} ,{\text{E}}}}\) increases markedly, while \(\partial X_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\) and \(\partial Z_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\) increase and \(\partial Y_{j}^{{\text{A}}} {/}\partial y_{j}^{{{\text{O}} ,{\text{E}}}}\) decreases gradually. Overall, all six partial derivatives tend toward zero as \(y_{j}^{{{\text{O}} ,{\text{E}}}}\) increases.
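For a reconstruction that intersects a back-projected ray with the laser plane, the derivatives with respect to the image coordinates can also be written in closed form with the quotient rule. Writing the normalized ray as \(\mathbf{r} = ((x - c_x)/f, (y - c_y)/f, 1)^{T}\) and the reconstructed point as \(\mathbf{P} = d\,\mathbf{r}/(\mathbf{n}\cdot\mathbf{r})\), one obtains \(\partial \mathbf{P}/\partial q = d\,[(\partial\mathbf{r}/\partial q)(\mathbf{n}\cdot\mathbf{r}) - \mathbf{r}\,(\mathbf{n}\cdot\partial\mathbf{r}/\partial q)]/(\mathbf{n}\cdot\mathbf{r})^{2}\) for \(q \in \{x, y\}\). The sketch below is a simplified single-camera model, not the chained system analyzed here: the camera is assumed to sit at the origin of the reconstruction frame, and \(f\), \(c_x\), \(c_y\), \(\mathbf{n}\) and \(d\) are hypothetical values. It checks the closed form against central differences and uses the Jacobian for a first-order estimate \(\delta\mathbf{P} \approx J\,\delta\mathbf{q}\) of the error caused by an image-point offset.

```python
# Hypothetical single-camera model (not the paper's chained system): a pinhole
# camera at the origin back-projects pixel (x, y); the ray meets n . P = d.
F, CX, CY = 1200.0, 640.0, 480.0   # assumed intrinsics in pixels
N, D = (0.0, 0.6, 0.8), 400.0      # assumed laser plane


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def reconstruct(x, y):
    """Intersection of the back-projected ray of (x, y) with the laser plane."""
    r = ((x - CX) / F, (y - CY) / F, 1.0)
    return tuple(D * ri / dot(N, r) for ri in r)


def jacobian(x, y):
    """Closed-form columns dP/dx and dP/dy obtained with the quotient rule."""
    r = ((x - CX) / F, (y - CY) / F, 1.0)
    s = dot(N, r)
    columns = []
    for dr in ((1.0 / F, 0.0, 0.0), (0.0, 1.0 / F, 0.0)):  # dr/dx, dr/dy
        ds = dot(N, dr)
        columns.append(tuple(D * (dri * s - ri * ds) / s ** 2
                             for dri, ri in zip(dr, r)))
    return columns


def numeric_jacobian(x, y, h=1e-3):
    """Central-difference counterpart of jacobian(), used as a check."""
    px, mx = reconstruct(x + h, y), reconstruct(x - h, y)
    py, my = reconstruct(x, y + h), reconstruct(x, y - h)
    return [tuple((p - m) / (2 * h) for p, m in zip(px, mx)),
            tuple((p - m) / (2 * h) for p, m in zip(py, my))]


def propagate(x, y, delta_x, delta_y):
    """First-order change of (X, Y, Z) for an image-point offset (delta_x, delta_y)."""
    jx, jy = jacobian(x, y)
    return tuple(a * delta_x + b * delta_y for a, b in zip(jx, jy))


if __name__ == "__main__":
    for x, y in ((400.0, 300.0), (640.0, 480.0), (900.0, 700.0)):
        print((x, y), jacobian(x, y), propagate(x, y, 0.5, 0.5))
```

Evaluating `jacobian` over a grid of image points yields derivative surfaces of the same form as Fig. 8d–i; their actual values depend on the full calibration of the system and are not reproduced by this toy model.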

When one extra camera is added, the chained-form system requires a cubic board for the original external camera. The original external camera is then regarded as the new internal camera inside this cubic board, and the added camera is regarded as the new external camera. For point reconstruction in CSEC, a new homography from the new external camera to the original external camera is placed ahead of \(H_{{\text{D,A}}}\) in Eq. (5). By the chain rule of partial derivatives, the influences of the extrinsic parameters, intrinsic parameters and image coordinates of the added camera can then be investigated with the same partial-derivative procedure used for the existing parameters.
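The chain-rule argument can be made concrete under a simplifying assumption: if the homographies in the chain are taken as 4×4 homogeneous rigid transformations (last row \((0,0,0,1)\), so no perspective normalization is needed), and the new transformation \(H_{\text{new}}(\theta)\) from the added camera depends on a parameter \(\theta\), then \(\partial(H_{{\text{D,A}}} H_{\text{new}}\,\mathbf{p})/\partial\theta = H_{{\text{D,A}}}\,(\partial H_{\text{new}}/\partial\theta)\,\mathbf{p}\). In the sketch below, \(\theta\) is a hypothetical rotation angle about the z-axis, and all matrices and the point are made-up values; the analytic derivative is compared with a central difference.

```python
import math


def matmul(a, b):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def matvec(a, v):
    """Product of a matrix (list of rows) and a vector."""
    return [sum(row[k] * v[k] for k in range(len(v))) for row in a]


def rigid_z(theta, t):
    """4x4 homogeneous transform: rotation by theta about z, then translation t."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0, t[0]],
            [s, c, 0.0, t[1]],
            [0.0, 0.0, 1.0, t[2]],
            [0.0, 0.0, 0.0, 1.0]]


def d_rigid_z(theta):
    """Derivative of rigid_z(theta, t) with respect to theta (t held fixed)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[-s, -c, 0.0, 0.0],
            [c, -s, 0.0, 0.0],
            [0.0, 0.0, 0.0, 0.0],
            [0.0, 0.0, 0.0, 0.0]]


# Hypothetical values: the existing transform to frame A, the translation of
# the new link, and a point expressed in the added camera's frame.
H_DA = rigid_z(0.3, (10.0, -5.0, 200.0))
T_NEW = (50.0, 0.0, 0.0)
P_NEW = [12.0, 34.0, 500.0, 1.0]


def point_in_a(theta):
    """Map P_NEW to frame A through H_DA @ H_new(theta)."""
    return matvec(matmul(H_DA, rigid_z(theta, T_NEW)), P_NEW)[:3]


def dpoint_dtheta(theta):
    """Chain rule: d(H_DA H_new p)/dtheta = H_DA (dH_new/dtheta) p."""
    return matvec(matmul(H_DA, d_rigid_z(theta)), P_NEW)[:3]


if __name__ == "__main__":
    theta, h = 0.2, 1e-6
    numeric = [(p - m) / (2 * h)
               for p, m in zip(point_in_a(theta + h), point_in_a(theta - h))]
    print(dpoint_dtheta(theta), numeric)
```

Only the factor that depends on the parameter is differentiated; the rest of the chain multiplies it unchanged. Parameters that enter through a perspective division, such as intrinsic parameters in a projective homography, additionally need the quotient rule.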

Summary

Analysis models are built to evaluate the accuracy of the spatial coordinates reconstructed by the active vision system with two cameras and a 3D orientation reference. The influences of the structural parameters on the reconstruction accuracy are analyzed in detail. The factors are divided into three groups: the extrinsic parameters of the two cameras, the intrinsic parameters of the internal camera, and the image coordinates of the laser projection. The influence of each factor on spatial point reconstruction is analyzed first, and the variations caused by multiple parameters are then analyzed within each group. The variation principles of the spatial coordinates are thereby established for the vision system with two cameras and a 3D orientation reference. The research also provides a useful reference for parameter selection in measurement applications and for calibration verification of the active vision system.

Data availability

The datasets generated during the current study are available from the corresponding author on reasonable request.

References

Kim, J. H., Kwon, J. W. & Seo, J. Multi-UAV-based stereo vision system without GPS for ground obstacle mapping to assist path planning of UGV. Electron. Lett. 50, 1431–1432 (2014).

Glowacz, A. & Glowacz, Z. Diagnosis of the three-phase induction motor using thermal imaging. Infrared Phys. Technol. 81, 7–16 (2017).

Xu, G., Chen, F., Chen, R. & Li, X. T. Vision-based Reconstruction of Laser Projection with Invariant Composed of Point and Circle on 2D Reference. Sci. Rep. 10, 11866 (2020).

Othman, S. A., Ahmad, R., Asi, S. M., Ismail, N. H. & Rahman, Z. Z. A. Three-dimensional quantitative evaluation of facial morphology in adults with unilateral cleft lip and palate, and patients without clefts. Br. J. Oral Max. Surg. 52, 208–213 (2014).

Heike, C. L., Upson, K., Stuhaug, E. & Weinberg, S. M. 3D digital stereophotogrammetry: a practical guide to facial image acquisition. Head Face Med. 6, 18 (2010).

Samper, D., Santolaria, J., Brosed, F. J. & Aguilar, J. J. A stereo-vision system to automate the manufacture of a semitrailer chassis. Int. J. Adv. Manuf. Technol. 67, 2283–2292 (2013).

Murray, D. & Little, J. J. Using real-time stereo vision for mobile robot navigation. Auton. Robot. 8, 161–171 (2000).

Mian, A. Illumination invariant recognition and 3D reconstruction of faces using desktop optics. Opt. Express 19, 7491–7506 (2011).

Xu, H., Hou, J., Yu, L. & Fei, S. 3D Reconstruction system for collaborative scanning based on multiple RGB-D cameras. Pattern Recognit. 128, 505–512 (2019).

Schmalz, C., Forster, F., Schick, A. & Angelopoulou, E. An endoscopic 3D scanner based on structured light. Med. Image Anal. 16, 1063–1072 (2012).

Stelzer, A., Hirschmueller, H. & Goerner, M. Stereo-vision-based navigation of a six-legged walking robot in unknown rough terrain. Int. J. Robot. Res. 31, 381–402 (2012).

Lee, C. H., Lim, Y. C., Kwon, S. & Lee, J. H. Stereo vision-based vehicle detection using a road feature and disparity histogram. Opt. Eng. 50, 178–200 (2011).

Correal, R., Pajares, G. & Ruz, J. J. Automatic expert system for 3D terrain reconstruction based on stereo vision and histogram matching. Expert Syst. 41, 2043–2051 (2014).

Chen, M. Y. et al. High-accuracy multi-camera reconstruction enhanced by adaptive point cloud correction algorithm. Opt. Laser. Eng. 122, 170–183 (2019).

Tang, Y. et al. Vision-Based three-dimensional reconstruction and monitoring of large-scale steel tubular structures. Adv. Civ. Eng. 2020, 1236021 (2020).

Chen, M. Y. et al. Three-dimensional perception of orchard banana central stock enhanced by adaptive multi-vision technology. Comput. Electron. Agric. 13, 105508 (2020).

Zhang, L., Ke, W., Ye, Q. & Jiao, J. A novel laser vision sensor for weld line detection on wall-climbing robot. Opt. Laser Technol. 60, 69–79 (2014).

Xu, J., Xi, N., Zhang, C., Shi, Q. & Gregory, J. Real-time 3D shape inspection system of automotive parts based on structured light pattern. Opt. Laser Technol. 43, 1–8 (2011).

Yee, C. K. & Yen, K. S. Single frame profilometry with rapid phase demodulation on colour-coded fringes. Opt. Commun. 397, 44–50 (2017).

Newcombe, R. A. et al. KinectFusion: real-time dense surface mapping and tracking. 2011 IEEE International Symposium on Mixed and Augmented Reality, 127–136 (2011).

Xu, G., Chen, F., Li, X. & Chen, R. Closed-loop solution method of active vision reconstruction via a 3D reference and an external camera. Appl. Opt. 58, 8092–8100 (2019).

Llorca, D. F., Sotelo, M. A., Parra, I., Ocana, M. & Bergasa, M. Error analysis in a stereo vision-based pedestrian detection sensor for collision avoidance applications. Sensors 10, 3741–3758 (2010).

Belhaoua, A., Kohler, S. & Hirsch, E. Error evaluation in a stereovision-based 3D reconstruction system. EURASIP J. Image Video Process. 6, 1–12 (2010).

Sankowski, W., Włodarczyk, M., Kacperski, D. & Grabowski, K. Estimation of measurement uncertainty in stereo vision system. Image Vis. Comput. 61, 70–81 (2017).

Yang, L., Wang, B., Zhang, R., Zhou, H. & Wang, R. Analysis on location accuracy for the binocular stereo vision system. IEEE Photonics J. 10, 1–16 (2018).

Jiang, S. Y., Chang, Y. C., Wu, C. C., Wu, C. H. & Song, K. T. Error analysis and experiments of 3D reconstruction using a RGB-D sensor. 2014 IEEE International Conference on Automation Science and Engineering, 1020–1025 (2014).

Hartley, R. & Zisserman, A. Multiple view geometry in computer vision (Cambridge University Press, Cambridge, 2003).

Xu, G., Yuan, J., Li, X. & Su, J. Profile reconstruction method adopting parameterized re-projection errors of laser lines generated from bi-cuboid references. Opt. Express 25, 29746–29760 (2017).

Acknowledgements

This work was funded by National Natural Science Foundation of China under Grant No. 51875247.

Author information


Contributions

G.X. contributed the idea; G.X., F.C., R.C., H.S. and X.T.L. performed the data analysis and wrote and edited the manuscript; F.C. and J.Y. contributed the program and the experiments; G.X. and F.C. prepared the figures. All authors contributed to the discussions.


Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Chen, R., Chen, F., Xu, G. et al. Precision analysis model and experimentation of vision reconstruction with two cameras and 3D orientation reference. Sci Rep 11, 3875 (2021). https://doi.org/10.1038/s41598-021-83390-y

DOI: https://doi.org/10.1038/s41598-021-83390-y
