Abstract

Infrared machine vision systems for object perception and recognition are becoming increasingly important in the Internet of Things era. However, current systems are bulky and inefficient compared with the human retina and its intelligent, compact neural architecture. Here, we present a retina-inspired mid-infrared (MIR) optoelectronic device based on a two-dimensional (2D) heterostructure for simultaneous data perception and encoding. A single device can perceive the illumination intensity of a MIR stimulus signal while encoding the intensity into a spike train based on a rate encoding algorithm for subsequent neuromorphic computing, with the assistance of an all-optical excitation mechanism, a stochastic near-infrared (NIR) sampling terminal. The device features a wide dynamic working range, high encoding precision, and flexible adaption ability to the MIR intensity. Moreover, an inference accuracy of more than 96% on the MIR MNIST data set encoded by the device is achieved using a trained spiking neural network (SNN).

Introduction

Infrared (IR) machine vision that can efficiently perceive, convert, and process the massive amount of IR optical information of the observed objects has become an important technology for various scenarios requiring crucial decisions, which include autonomous driving, intelligent night vision, military defense and medical diagnosis1,2. The current IR machine vision systems usually rely on physically separated IR imaging devices and von-Neumann computing architectures to perform the real-time information perception and processing, respectively1,2. This system generates large amounts of redundant data being exchanged between sensory terminals and processing units, resulting in high data latency, large computing load and low energy efficiency3,4,5. The lack of compactness and computing efficiency is rapidly making the existing system obsolete in the era of big data and the internet of things.

In contrast to the inefficient machine vision system, the human visual sensory system consists of a very compact retina that can perceive, encode and process a huge visual data set by harnessing distributed and parallel neural networks. In the real world, continuous light stimuli are first received by the sensory neurons in the human retina and then encoded as discrete spike trains generated via a set of neural algorithms6,7. These encoded spike trains are subsequently transmitted to the visual cortex of the brain for information processing8,9. The discretization and stochasticity of spike-encoded information allow long-distance communication and efficient neural computation8. Following the infrastructure and operation mechanism of human visual sensory system, it is highly desired to have the perception and encoding of external optical stimuli integrated in one neuromorphic device for realizing a compact, efficient, and intelligent IR machine vision system.

2D van der Waals (vdWs) heterostructures have become promising candidates for achieving such a goal owing to their superior optical functionalities, such as strong light-matter interaction, a tunable bandgap and potential compatibility with the CMOS platform10,11. Recently, notable progress has been made with 2D vdWs heterostructures in developing neuromorphic sensors, encoders and processors12,13,14,15,16,17,18, showing a trend towards all-in-one devices with integrated functionalities19,20. However, these studies focus only on the visible and near-infrared (NIR) spectral ranges, whereas integrated neuromorphic devices operating in the MIR range would greatly advance IR machine vision systems for autonomous driving, intelligent night vision, defense, and medical applications, and improve the versatility of neuromorphic systems. In addition, the demonstrations of encoding functionality in previous studies are limited to electronic approaches with electrical bias8,17,19,20,21,22. An integrated MIR neuromorphic device with perception and encoding functionalities driven by an all-optical approach is expected to shed light on the development of high-speed, zero-bias information coding for IR machine vision.

In this work, we report an all-optically driven 2D MIR optoelectronic retina with simultaneous perception and encoding functionalities that requires no electrical bias. The neuromorphic 2D vdWs heterostructure composed of b-AsP and MoTe2 is designed such that it can perceive external light in the MIR spectral range (at ∼4.6 μm) while simultaneously encoding the received MIR information into spike trains by harnessing a stochastic NIR sampling terminal (at ∼730 nm excitation). Featuring high MIR detectivity (\(9.6\times 10^{8}\) cm Hz\(^{0.5}\)/W) and a fast NIR photoresponse (∼600 ns), the device successfully demonstrates a typical neural encoding algorithm, rate-based encoding, with a wide dynamic working range and high encoding precision for MIR illumination intensities. Our device also adapts to intensity variations of the MIR signal, which is analogous to the human eye’s visual adaption to changes in ambient light intensity in the visible range. Furthermore, a trained SNN achieves an inference accuracy of more than 96% on the MIR MNIST data set encoded into spikes by the device. The retina-inspired 2D MIR optoelectronic device integrating perception and encoding functionalities has the potential to perform MIR machine vision in a highly compact and efficient way.

Results

Human visual system and the 2D MIR optoelectronic retina

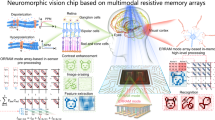

The visual system is one of the important sensory organs for humans to perceive the external world as more than 80% of the environment information is captured in human eyes10,22. Figure 1 shows the implementation of perception, encoding and processing of stimulus signals from external objects in the human visual system (top) and presents the proposed 2D MIR optoelectronic device that can mimic the key functionalities (bottom). For the human visual system, the external stimulation signals are perceived by photoreceptors and converted into electrical impulses (spikes) by ganglion cells following neural encoding algorithms, and eventually transmitted to the visual cortex in the brain for processing8. Notably, the encoding process exhibits the inherent stochasticity which is involved in the spike generation and enhances the noise tolerance of spikes. Inspired by the human visual system, in this work, a 2D optoelectronic retina capable of simultaneously perceiving and encoding MIR optical stimuli is proposed and demonstrated by using a 2D b-AsP/MoTe2 vdWs heterostructure. Upon the stimulation of MIR signals, the photo-excited current (IDS) of the 2D optoelectronic device is measured from source/drain electrodes at zero bias, which mimics the optical signal collection and conversion of the photoreceptors in the human retina. Meanwhile, programmable NIR optical pulses with stochastic intensity cause corresponding fluctuation of IDS, where a spike is generated when the IDS exceeds the threshold line (ITC), emulating the encoding scheme of ganglion cells. The as-generated spike trains with coded MIR information are finally processed by a trained SNN for intelligent tasks, such as classification and decision8,16,17.

In the human visual system, the stimulus signal from external object entering the eye is first converted into corresponding graded potentials by the photoreceptors (rod and cone cells) in the retina. The potentials are then encoded into spikes by ganglion cells with the participation of inherent stochasticity (random signal) exhibited in sensory transduction. Finally, the spikes with coded stimulus information are transferred to the visual cortex in brain for further information processing. In the proposed optoelectronic retina (lower), the 2D b-AsP/MoTe2 vdWs heterostructure mimics the retina to realize integrated perception and encoding functionality for MIR objects with the help of a random NIR-light terminal. The encoded spike signal can be obtained from source-drain current (IDS) under the threshold current (ITC) serving for the input of spiking neural network for information processing.

Perception and encoding characteristics of the 2D MIR optoelectronic retina

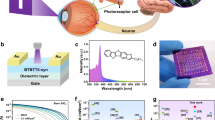

In the proposed 2D MIR optoelectronic retina, the b-AsP is used as the MIR photosensitive layer owing to its narrow bandgap of ∼0.15 eV and high MIR optical absorption efficiency of ∼10%23,24, and MoTe2 with an appropriate bandgap of ∼1.0 eV serves as the NIR sensitizer25,26. Both b-AsP and MoTe2 exhibit high hole mobility of ∼145 and ∼15 cm2/Vs (Supplementary Figs. 1–4), respectively, allowing for fast photoresponse of the b-AsP/MoTe2 devices. The NIR and MIR photoresponse characteristics are discussed in the Supplementary Information (see Supplementary Figs. 1–12 and Note 1 and 2), where the photovoltaic (PV) and photothermoelectric (PTE) effects are identified as the dominant mechanisms for perceiving NIR and MIR illumination, respectively. The schematic diagram of the photocurrent generation in the b-AsP/MoTe2 device under MIR and NIR global illumination are depicted in Fig. 2a, b, respectively. Under MIR laser global illumination, an unbalanced lattice temperature distribution is generated in b-AsP layer due to the asymmetric contacts of b-AsP with MoTe2 and Au electrode. The lattice temperature of b-AsP at the MoTe2 contact side is higher than that at Au electrode contact side because the Seebeck coefficient of b-AsP (723.66 μV/K, see Supplementary Fig. 9) is higher than that of MoTe2 (142.59 μV/K, see Supplementary Fig. 10) and the thermal conductivity of MoTe2 (∼40 W/mK)27,28 is lower than that of Au (∼200 W/mK)29. Such lattice temperature distribution promotes the diffusion of holes in the b-AsP from the MoTe2 contact side to Au electrode contact side, thus forming a positive PTE photocurrent under zero bias with b-AsP as the source terminal. Under NIR laser global illumination, both b-AsP and MoTe2 layers generate electron-hole pairs which are separated by the built-in electrical field with direction pointing from b-AsP to MoTe2 side at the junction. 
The photo-generated electrons and holes move toward b-AsP and MoTe2, respectively, which contributes to the negative photovoltaic photocurrent. As shown in Fig. 2c, d, the NIR and MIR photoresponse times of the heterostructure are as short as 600 ns/3.7 μs and 2.3 μs/20 μs, respectively. The asymmetric response time may be caused by the trapping of photo-excited charge carriers by defect states at the junction interface or by phosphorus oxide on the b-AsP surface30,31,32. Moreover, the detectivity of the device to MIR illumination can reach up to ∼\(9.6\times 10^{8}\) cm Hz\(^{0.5}\)/W. More details and discussions on the photoresponse performance under MIR and NIR illumination are provided in Supplementary Figs. 13–19 and Note 3.

a, b Photoresponse mechanisms of the b-AsP/MoTe2 heterostructure under MIR and NIR global illumination at VDS = 0 V. c, d Photoresponse of the heterostructure at VDS = 0 V. The NIR (730 nm) and MIR (4.6 μm) photoresponse times of the heterostructure are as short as 600 ns/3.7 μs and 2.3 μs/20 μs, respectively. e, f Output photocurrents (IDS) of the heterostructure under simultaneous MIR and NIR illumination. The power densities of MIR and NIR are shown in (e). g The dependence of output photocurrent on the MIR power density under the modulation of NIR light with various power densities. h One train of NIR optical pulses (100 time-steps (pulses) for one train) that are randomly sampled from a Gaussian distribution for spike rate-based encoding. i The Gaussian distribution of NIR power densities with a mean of u = 130 mW/cm2 and a standard deviation of σ = 75 mW/cm2. j Analog value of MIR power density. k Corresponding time-domain transduction current (IDS) waveform output from the source electrode for each MIR power density in (j). The sampling rate for NIR light is 100 kHz. l Corresponding spike train for each IDS waveform in (k) when the spike threshold current (ITC) is set to 0 nA. An IDS value higher than ITC stimulates one spike. m Mean spike rate as a function of PMIR when u, σ, ITC are 130 mW/cm2, 75 mW/cm2, 0 nA, respectively. Error bars in (m) represent the variation (standard deviation) of spike rate.

For encoding operations, the MIR stimulus signal and the NIR sampling terminal are applied to the device simultaneously. We first demonstrate the photoresponse of the device under simultaneous illumination by both the MIR and NIR lasers. As shown in Fig. 2e, f, distinct output photocurrents (IDS) can be observed when the device is simultaneously illuminated by MIR light with a certain power density and NIR light with various power densities. The photoresponse under simultaneous illumination shows high repeatability and stability, evidenced by multiple reproducible switching cycles (Supplementary Fig. 20). Figure 2g depicts the dependence of IDS on the MIR illumination intensity at different NIR power densities, which is an important reference for obtaining the dynamic encoding range for the MIR power density (PMIR) once the ITC and the NIR power density (PNIR) distribution are given. More importantly, the stable photoresponse is still maintained under NIR illumination at a frequency of 100 kHz (Supplementary Fig. 21). Such a fast and stable response makes it possible to generate higher spiking rates and underpins high-precision MIR intensity coding.

Next, we experimentally demonstrate the function of simultaneous perception and spike rate-based encoding for PMIR. The NIR laser is applied as sampling pulses whose amplitude follows a Gaussian distribution, with a sampling period (TS) of 10 μs (on/off = 5/5 μs), which is analogous to inherent stochasticity8. This sampling period is determined by taking into account the NIR response rate. Figure 2h shows a train of NIR optical pulses that is randomly sampled from a Gaussian distribution with mean u = 130 mW/cm2 and standard deviation σ = 75 mW/cm2 for spike rate-based encoding (Fig. 2i). When the NIR sampling pulses and MIR light with a specific intensity simultaneously illuminate the device, the response corresponding to each PMIR (Fig. 2j) is recorded as IDS. Each PMIR is encoded by one train of NIR optical pulses (100 time-steps for one train) and therefore results in a train of IDS with 100 sampling points. The recorded IDS trains with ITC = 0 nA and the corresponding spike trains are shown in Fig. 2k, l, respectively. The delineation rule of ITC is discussed in Supplementary Fig. 24. An IDS value higher than ITC = 0 nA stimulates one spike. The average spike rate for each PMIR is calculated from the generated spike train as spike rate = \(\frac{1}{T_{\rm S}}\cdot \frac{n}{\text{time-steps}}\) (Hz), where n is the number of spikes in the output spike train, as shown in Fig. 2m. It can be clearly observed that the device is capable of simultaneously perceiving and encoding PMIR within the range of 0 to ∼80.21 W/cm2. The error in spike rate is about 0.9% due to the fluctuation of the IDS waveform. Notably, a fast response to NIR light is helpful for increasing the number of time-steps within a fixed encoding time, which equals the product of the number of time-steps and TS. Insufficient time-steps for one MIR intensity cannot guarantee high encoding accuracy (analyzed in Supplementary Fig. 25).
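The rate-encoding procedure described above can be illustrated with a minimal simulation. This is a sketch under stated assumptions, not the measured device behaviour: the net current is modelled as a linear balance between a positive MIR term and a negative NIR term, and the coefficient `NIR_PER_MIR` is a hypothetical value chosen only so that the 0 to 80 W/cm2 MIR range spans the Gaussian NIR distribution. The real dependence is the measured one in Fig. 2g. The sampling period, the number of time-steps, u, σ and ITC are taken from the text.

```python
import random

TS_SECONDS = 10e-6   # NIR sampling period T_S from the text (10 us)
TIME_STEPS = 100     # NIR pulses per spike train, as in the text

# HYPOTHETICAL device model, not fitted to the paper's data: the NIR power
# density (mW/cm2) whose negative PV current cancels the positive PTE
# current produced by 1 W/cm2 of MIR light.
NIR_PER_MIR = 3.2


def spike_rate_hz(n_spikes, time_steps=TIME_STEPS, ts=TS_SECONDS):
    """Spike rate = (1 / T_S) * (n / time-steps), in Hz."""
    return n_spikes / (time_steps * ts)


def encode(p_mir, u=130.0, sigma=75.0, i_tc=0.0, seed=0):
    """Return a binary spike train for one MIR power density (W/cm2)."""
    rng = random.Random(seed)
    train = []
    for _ in range(TIME_STEPS):
        # Draw one NIR pulse amplitude; optical power cannot be negative.
        p_nir = max(0.0, rng.gauss(u, sigma))
        # Net current in NIR-equivalent units (assumed linear model).
        i_ds = NIR_PER_MIR * p_mir - p_nir
        # A spike is generated only when I_DS exceeds the threshold I_TC.
        train.append(1 if i_ds > i_tc else 0)
    return train


for p in (10.0, 40.0, 80.0):
    n = sum(encode(p))
    print(p, n, spike_rate_hz(n))
```

With TS = 10 μs, the highest rate this scheme can produce is one spike per time-step, i.e. 100 kHz, which matches the upper end of the spike-rate scale used in the encoded images.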

Visual adaption ability of the 2D MIR optoelectronic retina

Adaption occurs in all sensory systems to help them efficiently encode external stimuli as the stimulus distribution changes33. For example, human eyes can identify objects both in starlight and in sunlight by changing the neural encoding strategy during the adaption process34. For intelligent MIR vision tasks, a high-performance MIR optoelectronic retina should also have such visual adaption ability to satisfy various application scenarios. Two related aspects of the visual adaption ability, namely dynamic working range and encoding precision, are discussed here. A high dynamic working range allows the device to respond to MIR targets with widely different PMIR. For example, the temperatures of pig iron and steel strips in industrial processes are 427 K and 1457.85 K, respectively35. Their PMIR differs over a dynamic range of ~24 dB if they are regarded as two ideal blackbodies according to Planck’s radiation law36. To identify them at the same time, a dynamic working range of PMIR over 24 dB is required. However, a wide dynamic working range sacrifices the encoding precision, defined as the resolution of spike rate per unit PMIR in encoded images. The dynamic working range therefore needs to be compressed to attain high encoding precision in cases where the details of the PMIR distribution inside targets must be accurately identified, such as MIR imaging of the human body for medical diagnosis37.
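The blackbody estimate can be checked with a short calculation. The sketch below evaluates Planck's law at the single wavelength of 4.6 μm, which is an assumption: the text does not state the spectral band over which the two targets are compared. Under that assumption the ratio comes out slightly above 20 dB, the same order as the ~24 dB quoted; integrating over a finite detection band would shift the number.

```python
import math

H = 6.62607015e-34   # Planck constant, J s
C = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K


def spectral_radiance(wavelength_m, temperature_k):
    """Planck's law: blackbody spectral radiance in W sr^-1 m^-3."""
    x = H * C / (wavelength_m * K_B * temperature_k)
    return 2.0 * H * C**2 / wavelength_m**5 / math.expm1(x)


def dynamic_range_db(t_cold_k, t_hot_k, wavelength_m=4.6e-6):
    """Power ratio between two blackbodies at one wavelength, in dB."""
    hot = spectral_radiance(wavelength_m, t_hot_k)
    cold = spectral_radiance(wavelength_m, t_cold_k)
    return 10.0 * math.log10(hot / cold)


# Temperatures quoted in the text: 427 K and 1457.85 K.
print(round(dynamic_range_db(427.0, 1457.85), 1))
```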

To demonstrate the adaption ability of our MIR optoelectronic retina, we establish the testing setup shown in Fig. 3a. A metal mask with nine hollow figures “3” illuminated by the MIR laser is used to imitate real MIR targets. The mask can move along the x and y axes to allow MIR light to pass through each target in order. By adjusting the output optical power of the MIR laser, each target “3” is given a different PMIR distribution. The real PMIR distribution of the nine targets “3” is measured by the photocurrent mapping method (see the “Methods” section) and presented in Fig. 3b. For convenience, the nine targets “3” are named (i) to (x) in increasing order of PMIR. To encode the PMIR distribution of the targets into corresponding spike trains, NIR light whose PNIR is sampled from a Gaussian distribution with u and σ of 130 and 75 mW/cm2 is simultaneously incident on the device. The recognized image after rate encoding by our device is shown in Fig. 3c. The correlation coefficients (CCs), which quantify the similarity between each encoded target and its original, all exceed 97% for targets (i–x) (bottom curve of Fig. 3f), validating that our device has excellent encoding precision. This is attributed to the fast response reaching 100 kHz, which provides sufficient rate encoding resources for high PMIR resolution.
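The similarity metric can be made concrete with a short example. The text does not give the exact definition of the CC, so the sketch below assumes the standard Pearson correlation between the flattened original and encoded images. Returning 0 for a blank image is a convention chosen here, not one taken from the source.

```python
import math


def correlation_coefficient(original, encoded):
    """Pearson correlation between two equally sized, flattened images."""
    if len(original) != len(encoded):
        raise ValueError("images must have the same number of pixels")
    n = len(original)
    mean_o = sum(original) / n
    mean_e = sum(encoded) / n
    cov = sum((o - mean_o) * (e - mean_e) for o, e in zip(original, encoded))
    var_o = sum((o - mean_o) ** 2 for o in original)
    var_e = sum((e - mean_e) ** 2 for e in encoded)
    if var_o == 0.0 or var_e == 0.0:
        return 0.0  # assumed convention: a blank image has no pattern to match
    return cov / math.sqrt(var_o * var_e)


# Toy example: P_MIR per pixel (W/cm2) against spike rate per pixel (Hz).
p_mir = [0.0, 10.0, 20.0, 40.0]
rates = [0.0, 20e3, 45e3, 80e3]
print(correlation_coefficient(p_mir, rates))
```

Because the Pearson coefficient is invariant to linear scaling, an encoder whose spike rate is proportional to PMIR scores 1 regardless of the units of the two images.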

a Schematic diagram of the test setup for the optoelectronic retina to perceive and encode the MIR targets. A 2D-moveable metal mask provides nine “3”-shaped MIR targets whose average optical power densities (PMIR) are linearly distributed within 0 to 80.21 W/cm2 by adjusting the output optical power of the 4.6 μm laser. b The directly detected images of nine MIR targets “3”, namely (i) to (x) in order. The color distribution is linearly mapped to PMIR. c The encoded images of nine MIR targets, whose color distribution is linearly mapped to spike rate ranging from 0 to 100 kHz. The rate of every pixel is calculated based on the spike train with 100 time-steps under u, σ, ITC of 130 mW/cm2, 75 mW/cm2, 0 nA, respectively. d Experimental result of spike rate as a function of PMIR for different u and σ used for sampling 730 nm light. e Results of encoding images under different u and σ. Such parameter adjustment gives the device a flexible dynamic working range and encoding precision to adapt to targets with different PMIR. f The correlation coefficient between each encoded target “3” in (e) and its original image in (b). A higher correlation coefficient indicates higher encoding precision. Four encoding cases with different (u, σ) are discussed.

Adjusting the u and σ used for sampling PNIR conveniently tunes the dynamic working range. Increasing σ extends the dynamic working range, while increasing u shifts the dynamic working range to a higher PMIR range. The experimental and simulation results are presented in Fig. 3d and Supplementary Fig. 26a–c, respectively. This dependence can also be observed from the encoded images in Fig. 3e and the CCs in Fig. 3f for different cases of (u, σ). For example, when (u, σ) changes from (70, 35) to (130, 35), the dynamic working range shifts to the high PMIR range, which results in correct encoding of the high-power target (ix), with CC improving from 83% to 98%, but failed encoding of the low-power target (ii), with CC = 0. When σ is increased from 35 to 75 at u = 130, the CCs of targets (ii) and (ix) both reach 98% without any encoding failure, which verifies that σ extends the dynamic working range. u should be kept high when σ is relatively high (such as σ = 75 here). Otherwise, the background noise of the encoded image is magnified by the non-zero spike rate at PMIR = 0, as in the results at (u, σ) = (70, 75), which adds interference when identifying targets. To magnify the details of the PMIR distribution inside a given target, a high encoding precision is required and can be achieved by decreasing σ under a suitable u. For example, target (ii) at (u, σ) = (70, 35) has a higher contrast than at (u, σ) = (70, 75). Therefore, optimizing the u and σ values is critical for achieving a suitable dynamic working range and high encoding precision, and allows our device to emulate the eye’s visual adaption ability to different MIR targets.
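The roles of u and σ can be seen in a simple analytic sketch. It assumes an idealized threshold model that is not taken from the source: a spike occurs when the sampled NIR power density falls below a level proportional to PMIR, with the hypothetical proportionality constant `NIR_PER_MIR`. For PMIR > 0 the spike probability is then a Gaussian cumulative distribution in PMIR, centred by u and stretched by σ, and its limit as PMIR approaches zero gives a background floor that any small noise current would expose.

```python
import math

# HYPOTHETICAL coefficient, not fitted to the paper's data: NIR power
# density (mW/cm2) that balances 1 W/cm2 of MIR light.
NIR_PER_MIR = 3.2


def spike_probability(p_mir, u, sigma):
    """Probability that one NIR sample yields a spike (valid for p_mir > 0)."""
    z = (NIR_PER_MIR * p_mir - u) / sigma
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def background_floor(u, sigma):
    """Limit of the spike probability as P_MIR approaches zero."""
    return spike_probability(0.0, u, sigma)


def working_range(u, sigma, lo=0.05, hi=0.95, p_max=80.0, step=0.5):
    """MIR power densities whose spike probability lies between lo and hi."""
    grid = [i * step for i in range(int(p_max / step) + 1)]
    inside = [p for p in grid if lo <= spike_probability(p, u, sigma) <= hi]
    return (inside[0], inside[-1]) if inside else None


for u, sigma in [(70, 35), (130, 35), (130, 75), (70, 75)]:
    print((u, sigma), working_range(u, sigma), round(background_floor(u, sigma), 3))
```

In this idealization, raising u from 70 to 130 at σ = 35 moves the usable window towards higher PMIR, raising σ to 75 widens it, and the combination of low u with high σ gives the largest background floor, in line with the qualitative trends described above.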

Encoding a perceived image for classification using spiking neural network

Lastly, we utilize the device to encode the MIR MNIST data set into spike trains, which enables SNN-based digit classification with an inference accuracy of more than 96% (see the “Methods” section for details on preparing the MIR MNIST data set). Compared with a traditional artificial neural network (ANN)38, an SNN is believed to be a more efficient neural network that rarely requires high-precision multiplication. Also, the binary spikes required by an SNN are much sparser than the signals required by an ANN, which reduces the storage memory and energy requirements38. The energy-delay product of an SNN running on spike-based neuromorphic hardware has been shown to be four orders of magnitude lower than that of a traditional DNN running on a CPU over one batch size39. We use the snnTorch platform introduced by Eshraghian38 to establish a fully connected three-layer SNN that consists of an input layer, a hidden layer and an output layer with 784, 200 and 10 neurons, respectively, as shown in Fig. 4a. Each image in the MIR MNIST data set, with a size of 28 × 28 pixels, is perceived and encoded by our device into 784 spike trains that concurrently enter the input layer of a trained SNN. The training and parameter optimization methods for the SNN are described in the Methods section. The 10 spiking neurons in the output layer shown in Fig. 4a represent the digits from 0 to 9. The spiking neuron producing the spike train with the highest spike rate corresponds to the digit that the SNN predicts. Each spiking neuron in every layer is described by a leaky integrate-and-fire (LIF) neuron model40, as shown in Fig. 4b. The input pre-neuronal spikes Xi(t) of the ith spiking neuron are modulated by synaptic weights Wi to produce a resultant current \(\sum_{i=1}^{k}{W}_{i}^{T}{X}_{i}(t)\), which affects the membrane potential Vmem of the post-neuron in the next neuron layer, given as:

\[{V}_{{\rm{mem}}}(t)=\beta {V}_{{\rm{mem}}}(t-1)+\sum_{i=1}^{k}{W}_{i}^{T}{X}_{i}(t)\]

where β and k are the membrane potential decay rate and the number of neurons in this layer, respectively, and T denotes the transposition operation. The Vmem of the post-neuron integrates incoming spikes until it reaches the membrane threshold VTH, at which point Vmem is reset to zero. Meanwhile, the post-neuron generates an output spike, which acts as an input spike to the next neuron layer. In our device, ITC is equivalent to VTH.
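The LIF dynamics described here can be sketched in a few lines of plain Python. This is an illustration rather than the snnTorch implementation used in this work: it assumes the common discrete-time update in which the membrane potential decays by β, adds the weighted input, and is reset to zero when it reaches the threshold. The threshold value of 1.0 and the example inputs are hypothetical.

```python
def weighted_input(weights, spikes_in):
    """Resultant current sum_i W_i * X_i(t) for one time-step."""
    return sum(w * x for w, x in zip(weights, spikes_in))


def lif_forward(input_currents, beta=0.95, v_th=1.0):
    """Run one leaky integrate-and-fire neuron; return its output spike train."""
    v_mem = 0.0
    spikes = []
    for current in input_currents:
        # Leak the previous potential by beta, then integrate the new input.
        v_mem = beta * v_mem + current
        if v_mem >= v_th:
            spikes.append(1)
            v_mem = 0.0  # reset to zero after firing, as described in the text
        else:
            spikes.append(0)
    return spikes


# A constant sub-threshold drive accumulates until the neuron fires.
print(lif_forward([0.3] * 8))
```

With β = 0.95 and a constant input of 0.3, the potential takes four time-steps to cross the threshold, so the neuron fires on every fourth step.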

a The MIR digit image is detected and rate-based encoded into several series of spike trains, which enter a trained fully connected SNN to realize the digit classification task. The digit corresponding to the output neuron with the highest spike rate is the predicted result. b The leaky integrate-and-fire (LIF) neuron model used in each node of the SNN. The membrane voltage (Vmem) increases with the weighted input spikes (\(\boldsymbol{W}\cdot \boldsymbol{X}\)) until it reaches a constant threshold (VTH), at which an output spike appears in the output spike train (Y) and Vmem is reset to zero. During periods without input spikes, Vmem decays with the membrane potential decay rate (β) of 0.95. Classification accuracy of the SNN vs. different Pmax of MIR MNIST images at different σ and u is shown in (c) and (d), respectively. The u, ITC and time-steps in (c) are 130 mW/cm2, 0 nA and 100, respectively. The σ, ITC and time-steps in (d) are 15 mW/cm2, 0 nA and 100, respectively. e Classification accuracy of the SNN vs. time-steps of one spike train when Pmax is 21 W/cm2. The σ and u are optimized to 25 and 70 mW/cm2, respectively, with an ITC of 0 nA. Insets: (i–iii) show the encoded images of “3” when the time-steps are 1, 5 and 100, respectively. Too few sampling points result in an inadequate representation of digits after encoding.

The classification performance of the SNN depends significantly on the dynamic working range and encoding precision of the device. As shown in Fig. 3, the u and σ values of the Gaussian distribution used for sampling NIR light control the dynamic working range and encoding precision. If the dynamic working range does not match the PMIR range of the target within [0, Pmax], or the encoding precision is insufficient, the inaccurate translation of the target into encoded spikes causes inference errors in the SNN. Figure 4c, d shows the classification accuracy of the SNN when the Pmax of the MIR MNIST test set varies from 0 to 80.21 W/cm2 at different values of u and σ. A relatively low σ of 35 makes the dynamic working range too narrow to encode digits with Pmax lower than 10 W/cm2, resulting in 9.8% classification accuracy. When σ increases to 55, the enlarged dynamic working range covers both low and high Pmax and allows the classification accuracy to exceed 96%. However, a further increase of σ to 75 decreases the encoding precision. The spike rate resolution is then not sufficient to support accurate classification in the low-Pmax case. Additionally, the background noise is slightly magnified, hampering the inference of the SNN. The u value controls the position of the dynamic working range, and therefore the position of the high-accuracy working range of the SNN. For example, the working range with classification accuracy higher than 96% gradually moves to a higher PMIR range when u increases from 100 to 130 with σ = 15, as shown in Fig. 4d. The number of time-steps, representing the number of NIR sampling points used to encode one MIR intensity, also influences the classification accuracy of the SNN. As shown in Fig. 4e, the classification accuracy increases with the number of time-steps and reaches 96% at 100 time-steps with an optimal (u, σ) = (70, 25) when encoding a target with Pmax of 21 W/cm2. The performance of our device is already comparable to that of an ideal linear encoder. However, insufficient time-steps result in an inadequate representation of targets and therefore significantly reduce the classification accuracy. The encoded images of target “3” using time-steps of 1, 5 and 100 are given in Fig. 4e (i–iii), respectively, which highlights the significance of sufficient time-steps for accurate encoding and inference of the SNN. The results of accuracy vs. time-steps for other Pmax are also provided in Supplementary Fig. 28, which suggests that low-power MIR objects require more time-steps to achieve accurate classification than high-power MIR objects. These facts indicate that a fast response to NIR light in our device is critical for helping the SNN realize accurate MIR object classification within a short encoding time. In addition, the impact of device thickness, different wavelengths and the distribution of the sampled stochastic light on encoding precision and classification accuracy of the SNN is discussed in Supplementary Figs. 29–31. Overall, by optimizing the encoding parameters, our device can ensure fast and accurate encoding of MIR objects and help the SNN realize MIR object classification tasks with an inference accuracy of up to 96%.

Discussion

Inspired by the human vision system, which perceives, transmits and processes information from the external environment, we demonstrate a compact retina-inspired MIR optoelectronic device using a 2D b-AsP/MoTe2 vdWs heterostructure. The device features a high MIR (∼4.6 μm) detectivity of \(9.6\times 10^{8}\) cm Hz\(^{0.5}\)/W and a fast NIR (730 nm) response of ∼600 ns without requiring electrical bias. The proposed device not only perceives MIR illumination stimuli, but also encodes them into rate-based spike trains with the assistance of a stochastic NIR sampling terminal. Moreover, the device’s encoding range and precision can be flexibly adjusted for different MIR illumination intensities. The device encodes the MIR MNIST data set into spike trains, which enables an SNN to achieve digit classification with an accuracy higher than 96%. Our work provides a promising route for constructing compact and efficient MIR neuromorphic devices for night machine vision, military defense, and medical diagnosis. We anticipate that optical approaches to realizing neuromorphic functions based on 2D vdWs heterostructures have the potential for wide bandwidths of up to tens of gigahertz when combined with integrated guided-wave nanophotonics25,41, bringing the advantages of low data latency and high energy efficiency.

Methods

Device fabrication and characterization

Because 2D b-AsP and MoTe2 flakes are sensitive to water and oxygen in the surrounding environment, a dry transfer method was applied to fabricate the 2D b-AsP/MoTe2 vdWs heterostructure. The contact electrodes (5/50 nm Cr/Au) were first patterned on a SiO2/Si substrate by standard photolithography and electron beam evaporation. The 2D b-AsP and MoTe2 flakes exfoliated from bulk crystals were then dry transferred onto the electrodes. Finally, h-BN encapsulation was used to protect the device from degradation. The morphology and thickness of the as-fabricated device were characterized by optical microscopy (Nikon) and atomic force microscopy (Bruker Dimension Icon). Scanning photocurrent mapping was performed using a confocal micro-Raman spectroscopy system (WITec alpha300) equipped with a focused 532 nm laser.

Detection and encoding measurements

The measurements of electrical and photoelectric properties were performed at room temperature and under ambient air conditions. A digital source meter (Keysight, B2912A) was used to apply voltage to the device and record the generated current. A MIR quantum cascade laser (QCL) (Daylight Solution, MIRCat) with tunable wavelength from 3.5 to 11.0 μm was employed as the external stimulus. The power of the MIR laser was recorded by a thermal power meter (OPHIR, Nova display-ROHS). A power-adjustable 730 nm laser (HÜBNER Photonics, Cobolt 06-MLD) was applied as the stochastic terminal and its power density was measured using a power meter (Thorlabs, PM100D). The laser spots of the MIR laser and the 730 nm laser are about 100 μm, which is larger than the as-fabricated 2D b-AsP/MoTe2 vdWs heterostructure. For the encoding measurements, the device is simultaneously illuminated by the 4.6 μm MIR laser with a fixed power density and the pulsed 730 nm laser with Gaussian-distributed power densities. The sampling period (TS) of the 730 nm laser is set to 10 μs and its amplitude is determined by the desired encoding algorithm. Fast current sampling was performed with an oscilloscope (Keysight, DSOX3054T).

Photocurrent mapping method to recognize the PMIR distribution image

To recognize the PMIR distribution image of the “3” targets in the mask, the photocurrent response of the device to every pixel of the mask is collected by the oscilloscope. The mask has 300 × 300 pixels, in which each “3” target occupies 100 × 100 pixels. The PMIR of the 4.6 μm laser from the QCL on every “3” region (100 × 100 pixels) is different. When the mask is scanned pixel by pixel, the photocurrent response of each pixel depends on the optical flux of the 4.6 μm laser passing through this pixel region. According to the mapping relation between photocurrent and PMIR given in Supplementary Fig. 16b, the corresponding PMIR for every pixel can be estimated from the measured photocurrent, which finally constitutes the PMIR distribution image shown in Fig. 3b.
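The conversion from measured photocurrent to PMIR can be sketched as a lookup on a calibration curve. The calibration points below are hypothetical placeholders chosen for illustration only; the actual mapping relation is the one in Supplementary Fig. 16b, which is not reproduced here. Piecewise-linear interpolation is likewise an assumption about how the lookup might be done.

```python
# HYPOTHETICAL calibration points (photocurrent in nA, P_MIR in W/cm2).
# Placeholders only; the real curve is the one in Supplementary Fig. 16b.
CALIBRATION = [(0.0, 0.0), (10.0, 20.0), (20.0, 40.0), (40.0, 80.0)]


def photocurrent_to_pmir(i_ph, table=CALIBRATION):
    """Piecewise-linear lookup of P_MIR from a measured photocurrent."""
    if i_ph <= table[0][0]:
        return table[0][1]  # clamp below the calibrated range
    for (i0, p0), (i1, p1) in zip(table, table[1:]):
        if i_ph <= i1:
            return p0 + (p1 - p0) * (i_ph - i0) / (i1 - i0)
    return table[-1][1]  # clamp above the calibrated range


def map_image(photocurrents):
    """Convert a 2D grid of photocurrents into a P_MIR distribution image."""
    return [[photocurrent_to_pmir(i) for i in row] for row in photocurrents]


print(map_image([[0.0, 20.0], [30.0, 40.0]]))
```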

Preparation of MIR MNIST data set

The MIR MNIST data set is obtained by mapping the pixel values of the traditional MNIST data set, which range over [0, 255], to optical power densities of the 4.6 μm laser ranging over [0, Pmax]. Once Pmax is set, every 28 × 28 pixel image in the prepared MIR MNIST data set is first flattened to obtain 784 analog optical power densities of the MIR laser. The MIR laser at a given optical power density can then be detected and encoded by our device into spike trains that serve as the input of the SNN.
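The mapping described above can be written out in a few lines. The sketch assumes a linear mapping from pixel value to power density, which is the simplest reading of the text; the function name and the value of `p_max` in the usage example are illustrative.

```python
def mnist_to_power_density(image, p_max):
    """Flatten a 28 x 28 image and map pixel values in [0, 255] to [0, p_max].

    Assumes a linear mapping: P = p_max * pixel / 255.
    """
    if len(image) != 28 or any(len(row) != 28 for row in image):
        raise ValueError("expected a 28 x 28 image")
    flat = [px for row in image for px in row]  # row-major flattening, 784 values
    return [p_max * px / 255 for px in flat]


if __name__ == "__main__":
    image = [[0] * 28 for _ in range(28)]
    image[14][14] = 255  # one fully bright pixel
    powers = mnist_to_power_density(image, p_max=2.0)  # p_max is a placeholder
    print(len(powers), max(powers))
```

Each of the 784 values then corresponds to one MIR power density that is presented to the device for encoding.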

Training and parameter optimization of SNN

For training of the SNN, a surrogate gradient descent algorithm is used to update the synaptic weights38 in order to avoid the dead neuron problem. The loss function and optimizer are the cross-entropy loss and the Adam optimizer, respectively. 60,000 and 10,000 MIR MNIST images are used for training and testing, respectively. The number of hidden neurons and the membrane potential decay rate (β) are two hyperparameters affecting the classification ability of the SNN. More hidden neurons and a higher β enhance the classification accuracy (see Supplementary Fig. 27a, b). The β of real synaptic devices hardly reaches 100%, and therefore β in our work is set to 0.95. The number of hidden neurons is set to 200 considering the trade-off between performance and complexity. After training for around 450 iterations in one epoch with a batch size of 128, the losses on the training and test sets both converge to a steady level, verifying that the SNN is well trained without under-fitting or over-fitting (see Supplementary Fig. 27c).
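The role of β and of the surrogate gradient can be illustrated with a single neuron. The sketch below assumes a generic leaky integrate-and-fire neuron with reset by subtraction and a fast-sigmoid surrogate derivative; the text does not specify the neuron model or the surrogate function, so both are assumptions, as are the threshold and slope values. Only β = 0.95, the batch size of 128, and the 60,000 training images come from the text.

```python
import math


def lif_forward(inputs, beta=0.95, threshold=1.0):
    """Run one leaky integrate-and-fire neuron over a sequence of input currents.

    The membrane potential decays by the factor beta at every step, integrates
    the input, and is reduced by the threshold after a spike.
    """
    u = 0.0
    spikes, potentials = [], []
    for x in inputs:
        u = beta * u + x
        s = 1 if u > threshold else 0
        u -= s * threshold  # reset by subtraction (assumed reset rule)
        spikes.append(s)
        potentials.append(u)
    return spikes, potentials


def fast_sigmoid_grad(u, threshold=1.0, slope=25.0):
    """Smooth stand-in for the derivative of the spike step function.

    The true derivative is zero almost everywhere, which blocks learning;
    this surrogate is nonzero around the threshold.
    """
    return 1.0 / (1.0 + slope * abs(u - threshold)) ** 2


def iterations_per_epoch(n_images=60_000, batch_size=128):
    """Number of mini-batches needed to see every training image once."""
    return math.ceil(n_images / batch_size)


if __name__ == "__main__":
    spikes, _ = lif_forward([0.3] * 10)
    print(spikes)
    print(fast_sigmoid_grad(0.9), fast_sigmoid_grad(0.0))
    print(iterations_per_epoch())
```

A β closer to 1 lets the neuron retain more of its past input, and 60,000 images at a batch size of 128 give 469 mini-batches per epoch, consistent with convergence being reached after around 450 iterations within the first epoch.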

Data availability

The data that support the findings of this study are available within the main text and Supplementary Information. Any other relevant data are available from the corresponding author upon reasonable request. Source data are provided with this paper.

Code availability

The code is available from the corresponding author upon reasonable request.

Change history

30 November 2023

A Correction to this paper has been published: https://doi.org/10.1038/s41467-023-43859-y

References

Dudek, P. et al. Sensor-level computer vision with pixel processor arrays for agile robots. Sci. Robot. 7, eabl7755 (2022).

He, Y. et al. Infrared machine vision and infrared thermography with deep learning: a review. Infrared Phys. Technol. 116, 103754 (2021).

Zhou, F. et al. Optoelectronic resistive random access memory for neuromorphic vision sensors. Nat. Nanotechnol. 14, 776–782 (2019).

Zhou, F. & Chai, Y. Near-sensor and in-sensor computing. Nat. Electron. 3, 664–671 (2020).

Wang, S. et al. Networking retinomorphic sensor with memristive crossbar for brain-inspired visual perception. Natl. Sci. Rev. 8, nwaa172 (2021).

Choi, S. Y. et al. Encoding light intensity by the cone photoreceptor synapse. Neuron 48, 555–562 (2005).

Meister, M., Lagnado, L. & Baylor, D. A. Concerted signaling by retinal ganglion cells. Science 270, 1207–1210 (1995).

Subbulakshmi Radhakrishnan, S., Sebastian, A., Oberoi, A., Das, S. & Das, S. A biomimetic neural encoder for spiking neural network. Nat. Commun. 12, 2143 (2021).

Kim, Y. et al. A bioinspired flexible organic artificial afferent nerve. Science 360, 998–1003 (2018).

Chen, W., Zhang, Z. & Liu, G. Retinomorphic optoelectronic devices for intelligent machine vision. iScience 25, 103729 (2022).

Wu, P. et al. Next‐generation machine vision systems incorporating two‐dimensional materials: progress and perspectives. InfoMat 4, e12275 (2021).

Wang, C. Y. et al. Gate-tunable van der Waals heterostructure for reconfigurable neural network vision sensor. Sci. Adv. 6, eaba6173 (2020).

Liao, F. et al. Bioinspired in-sensor visual adaptation for accurate perception. Nat. Electron. 5, 84–91 (2022).

Pi, L. et al. Broadband convolutional processing using band-alignment-tunable heterostructures. Nat. Electron. 5, 248–254 (2022).

Zhang, X. et al. An artificial spiking afferent nerve based on Mott memristors for neurorobotics. Nat. Commun. 11, 51 (2020).

Tan, H. et al. Tactile sensory coding and learning with bio-inspired optoelectronic spiking afferent nerves. Nat. Commun. 11, 1369 (2020).

Wu, Q. et al. Spike encoding with optic sensory neurons enable a pulse coupled neural network for ultraviolet image segmentation. Nano Lett. 20, 8015–8023 (2020).

Zhang, Z. et al. All-in-one two-dimensional retinomorphic hardware device for motion detection and recognition. Nat. Nanotechnol. 17, 27–32 (2021).

Dodda, A., Trainor, N., Redwing, J. M. & Das, S. All-in-one, bio-inspired, and low-power crypto engines for near-sensor security based on two-dimensional memtransistors. Nat. Commun. 13, 3587 (2022).

Subbulakshmi Radhakrishnan, S. et al. A sparse and spike-timing-based adaptive photoencoder for augmenting machine vision for spiking neural networks. Adv. Mater. 34, 2202535 (2022).

Chen, C. et al. A photoelectric spiking neuron for visual depth perception. Adv. Mater. 34, 2201895 (2022).

Vijjapu, M. T. et al. A flexible capacitive photoreceptor for the biomimetic retina. Light Sci. Appl. 11, 3 (2022).

Amani, M., Regan, E., Bullock, J., Ahn, G. H. & Javey, A. Mid-wave infrared photoconductors based on black phosphorus-arsenic alloys. ACS Nano 11, 11724–11731 (2017).

Long, M. et al. Room temperature high-detectivity mid-infrared photodetectors based on black arsenic phosphorus. Sci. Adv. 3, e1700589 (2017).

Flory, N. et al. Waveguide-integrated van der Waals heterostructure photodetector at telecom wavelengths with high speed and high responsivity. Nat. Nanotechnol. 15, 118–124 (2020).

Maiti, R. et al. Strain-engineered high-responsivity MoTe2 photodetector for silicon photonic integrated circuits. Nat. Photon. 14, 578–584 (2020).

Shafique, A. & Shin, Y. H. Strain engineering of phonon thermal transport properties in monolayer 2H-MoTe2. Phys. Chem. Chem. Phys. 19, 32072–32078 (2017).

Zulfiqar, M., Zhao, Y., Li, G., Li, Z. & Ni, J. Intrinsic thermal conductivities of monolayer transition metal dichalcogenides MX2 (M = Mo, W; X = S, Se, Te). Sci. Rep. 9, 4571 (2019).

Dai, M. et al. High-performance, polarization-sensitive, long-wave infrared photodetection via photothermoelectric effect with asymmetric van der Waals contacts. ACS Nano 16, 295–305 (2022).

Bullock, J. et al. Polarization-resolved black phosphorus/molybdenum disulfide mid-wave infrared photodiodes with high detectivity at room temperature. Nat. Photon. 12, 601–607 (2018).

Ahmed, T. et al. Fully light-controlled memory and neuromorphic computation in layered black phosphorus. Adv. Mater. 33, 2004207 (2021).

Liu, C. et al. Polarization‐resolved broadband MoS2/black phosphorus/MoS2 optoelectronic memory with ultralong retention time and ultrahigh switching ratio. Adv. Funct. Mater. 31, 2100781 (2021).

Wark, B., Lundstrom, B. N. & Fairhall, A. Sensory adaptation. Curr. Opin. Neurobiol. 17, 423–429 (2007).

Laughlin, S. B. The role of sensory adaptation in the retina. J. Exp. Biol. 146, 39–62 (1989).

Usamentiaga, R. et al. Infrared thermography for temperature measurement and non-destructive testing. Sensors 14, 12305–12348 (2014).

Adkins, C. J. Equilibrium Thermodynamics 3rd edn (Cambridge University Press, 1983).

Houdas, Y. & Ring, E. Human Body Temperature: Its Measurement and Regulation (Springer Science & Business Media, 2013).

Eshraghian, J. K. et al. Training spiking neural networks using lessons from deep learning. https://arxiv.org/abs/2109.12894 (2021).

Rao, A., Plank, P., Wild, A. & Maass, W. A long short-term memory for AI applications in spike-based neuromorphic hardware. Nat. Mach. Intell. 4, 467–479 (2022).

Izhikevich, E. M. Simple model of spiking neurons. IEEE Trans. Neural Netw. 14, 1569–1572 (2003).

Liu, C. et al. Silicon/2D-material photodetectors: from near-infrared to mid-infrared. Light Sci. Appl. 10, 123 (2021).

Acknowledgements

This work was supported by the Singapore Ministry of Education (MOE-T2EP50120-0009 (Q.J.W.)), the Agency for Science, Technology and Research (A*STAR) (A18A7b0058 (Q.J.W.) and A2090b0144 (Q.J.W.)), the National Medical Research Council (NMRC) (MOH-000927 (Q.J.W.)), the National Research Foundation Singapore (NRF-CRP22-2019-0007 (Q.J.W.)), the National Key Research and Development Program of China (2022YFB2802803 (N.C.)), the Natural Science Foundation of China Project (61925104 (N.C.), 62031011 (N.C.)), and the Major Key Project of PCL (N.C.). F.H. acknowledges support from the China Scholarship Council.

Author information

Contributions

F.W. and F.H. designed the experiments and analyzed the data. F.W. and F.H. wrote the manuscript. F.W., F.H. and M.D. fabricated the devices. S.Z. performed the atomic force microscope measurements. F.S., R.D., C.W., J.H., W.D., W.C., M.Y., S.H., B.Q., Y.J., and Y.C. provided experimental testing support. D.N., S.H.C., Q.J.W., N.C., S.Y. and Z.L. revised the manuscript. Q.J.W. supervised the project. All authors have discussed the results and commented on the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Communications thanks the anonymous reviewers for their contribution to the peer review of this work. Peer reviewer reports are available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wang, F., Hu, F., Dai, M. et al. A two-dimensional mid-infrared optoelectronic retina enabling simultaneous perception and encoding. Nat Commun 14, 1938 (2023). https://doi.org/10.1038/s41467-023-37623-5

DOI: https://doi.org/10.1038/s41467-023-37623-5
