Abstract

Study Design:

Validation study.

Objectives:

To describe the development and validation of a computerized application of the international standards for neurological classification of spinal cord injury (ISNCSCI).

Setting:

Data from acute and rehabilitation care.

Methods:

The Rick Hansen Institute-ISNCSCI Algorithm (RHI-ISNCSCI Algorithm) was developed based on the 2011 version of the ISNCSCI and the 2013 version of the worksheet. International experts developed the design and logic with a focus on usability and features to standardize the correct classification of challenging cases. A five-phased process was used to develop and validate the algorithm. Discrepancies between the clinician-derived and algorithm-calculated results were reconciled.

Results:

Phase one of the validation used 48 cases to develop the logic. Phase three used these and 15 additional cases for further logic development to classify cases with ‘Not testable’ values. For logic testing in phases two and four, 351 and 1998 cases from the Rick Hansen SCI Registry (RHSCIR), respectively, were used. Of 23 and 286 discrepant cases identified in phases two and four, 2 and 6 cases resulted in changes to the algorithm. Cross-validation of the algorithm in phase five using 108 new RHSCIR cases did not identify the need for any further changes, as all discrepancies were due to clinician errors. The web-based application and the algorithm code are freely available at www.isncscialgorithm.com.

Conclusion:

The RHI-ISNCSCI Algorithm provides a standardized method to accurately derive the level and severity of SCI from the raw data of the ISNCSCI examination. The web interface assists in maximizing usability while minimizing the impact of human error in classifying SCI.

Sponsorship:

This study is sponsored by the Rick Hansen Institute and supported by funding from Health Canada and Western Economic Diversification Canada.

Introduction

Spinal cord injury (SCI) results in impairment to motor and sensory function.1 A reliable and valid assessment of the extent and severity of these physical impairments is critical to supporting clinical care, prognosis and research.2 The International Standards for Neurological Classification of Spinal Cord Injury (ISNCSCI;3) were developed for this purpose. They were originally published in 1982 by the American Spinal Injury Association (ASIA;4) and are now overseen by the International Standards Committee within ASIA. The most recent update was made in 2011 and included updates to the worksheet and the introduction of the non-key muscle examination.3

The ISNCSCI examination and classification provide a common language to describe the extent of motor and sensory dysfunction due to SCI. Applying the ISNCSCI classification rules to the raw examination scores yields a number of derived elements: motor and sensory levels (right and left), a single neurological level of injury (NLI), the completeness of SCI, the zone of partial preservation and the severity of SCI using the ASIA Impairment Scale (AIS).

Monitoring the level (motor, sensory and NLI) and severity (AIS) of SCI and the total motor and sensory scores from the time of injury throughout the individual’s lifetime is routinely done as a part of clinical care to detect an improvement or a deterioration in neurological impairment5, 6 or to evaluate the influence of clinical interventions on neurological recovery.7, 8 The ISNCSCI examination is also often utilized as the primary outcome of clinical studies, and its classification components are used to determine eligibility and stratification of participants in clinical trials.9, 10, 11, 12, 13 As such, it is imperative that the ISNCSCI be applied both accurately and reliably.

The reliability of the raw individual motor and sensory scores and the rectal examination has been previously studied and optimized through education and training of examiners.14, 15, 16, 17, 18 With the implementation of the Rick Hansen Spinal Cord Injury Registry (RHSCIR;19), a pan-Canadian prospective, observational registry for traumatic SCI, the majority of RHSCIR examiners received training in the ISNCSCI examination and classification. Training has been demonstrated to improve accuracy in scoring and classification.20, 21 Nevertheless, although the ISNCSCI was widely used, when the RHSCIR central coordinating site staff examined the raw motor, sensory and rectal examination data and compared their results with the AIS and NLI derived at the local RHSCIR site, they found a significant problem with classification errors. Other studies have also reported high misclassification rates in the determination of the AIS (11.9–13%) and motor level (18–26%).16, 21, 22 Reasons for some of these classification errors include multiple revisions to the ISNCSCI rules over the decades, as well as inconsistent interpretation and implementation of scoring and classification rules among examiners.

Concerns regarding errors in the interpretation and application of the ISNCSCI classification led to the development of the Rick Hansen Institute-ISNCSCI Algorithm (RHI-ISNCSCI Algorithm), a computerized algorithm to provide the correct interpretation of the ISNCSCI neurological exam and improve the accuracy and validity of the derived AIS, NLI, motor score and other classification elements. The advantages of a computer algorithm include the following: improving the accuracy of the classification (particularly in deriving the AIS, total motor score and NLI), reducing the time to classify large numbers of cases and providing education on the ISNCSCI by producing immediate feedback to confirm clinician classification.

The purpose of this paper is to describe the development and validation of the RHI-ISNCSCI Algorithm.

Materials and methods

ISNCSCI algorithm working group

The RHI-ISNCSCI Algorithm (henceforth referred to as the Algorithm) was built based on the 2011 version of the ISNCSCI and the 2013 version of the worksheet.23 To ensure that the ISNCSCI rules were interpreted correctly and to promote collaboration, an international group of experts from ASIA and the International Spinal Cord Society (ISCoS), including members of the ASIA International Standards and Education Committees, was engaged in an advisory capacity. These international experts and the RHI team of clinical experts and software developers formed the ISNCSCI Algorithm Working Group (IAWG). The IAWG collaborated on the design, development and validation of the Algorithm.

Architecture

The Algorithm is made up of two components: a library containing the logic required for performing the ISNCSCI calculations and classifications, and a web interface. Both components were developed using an agile process in which the capabilities of the application were increased incrementally. Every increment was reviewed by the IAWG and tested on an internal test website before proceeding to the next increment.

The algorithm library

The algorithm library component, written in C#.Net, uses raw motor and sensory examination grades as an input to calculate the following derived elements:

- Motor and sensory subscores
- Neurological levels: sensory right and left, motor right and left
- NLI
- Completeness of injury: complete or incomplete
- AIS
- Zone of partial preservation: sensory right and left, motor right and left
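To make the relationship between raw grades and derived elements concrete, the following is a minimal Python sketch of three of the simpler derivations: a motor subscore, the sensory level for one side and completeness of injury. It is not the RHI C#.Net library and does not reproduce its API; all function and variable names are hypothetical. The rules encoded here follow the published ISNCSCI definitions as we understand them (sensory level is the most caudal dermatome with intact light touch and pin prick with everything rostral also intact; an injury is complete when there is no sacral sparing). Motor level, NLI, AIS and zone of partial preservation are deliberately omitted because their rules are more involved.

```python
from typing import Dict, List

# Dermatomes in worksheet order, rostral to caudal (28 per side).
DERMATOMES: List[str] = (
    [f"C{i}" for i in range(2, 9)]
    + [f"T{i}" for i in range(1, 13)]
    + [f"L{i}" for i in range(1, 6)]
    + ["S1", "S2", "S3", "S4-5"]
)
# Key-muscle myotomes, each graded 0-5 on each side.
UPPER_MYOTOMES = ["C5", "C6", "C7", "C8", "T1"]
LOWER_MYOTOMES = ["L2", "L3", "L4", "L5", "S1"]


def motor_subscore(grades: Dict[str, int], myotomes: List[str]) -> int:
    """Sum the key-muscle grades for one side over the given myotomes."""
    return sum(grades[m] for m in myotomes)


def sensory_level(light_touch: Dict[str, int], pin_prick: Dict[str, int]) -> str:
    """Most caudal dermatome scoring 2 for both modalities, with all
    rostral dermatomes also intact. Returns "C1" when C2 is already
    impaired and "INT" when sensation is intact through S4-5."""
    level = "C1"
    for dermatome in DERMATOMES:
        if light_touch[dermatome] == 2 and pin_prick[dermatome] == 2:
            level = dermatome
        else:
            return level
    return "INT"


def is_complete(s45_sensory_scores: List[int],
                deep_anal_pressure: bool,
                voluntary_anal_contraction: bool) -> bool:
    """Complete injury means no sacral sparing: no S4-5 sensation on
    either side, no deep anal pressure, no voluntary anal contraction."""
    sacral_sparing = (
        any(score > 0 for score in s45_sensory_scores)
        or deep_anal_pressure
        or voluntary_anal_contraction
    )
    return not sacral_sparing


# Hypothetical case: sensation intact down to T4, absent from T5 downward.
cut = DERMATOMES.index("T5")
light_touch = {d: (2 if i < cut else 0) for i, d in enumerate(DERMATOMES)}
pin_prick = dict(light_touch)
right_motor = {**{m: 5 for m in UPPER_MYOTOMES}, **{m: 0 for m in LOWER_MYOTOMES}}

example_level = sensory_level(light_touch, pin_prick)
example_uems = motor_subscore(right_motor, UPPER_MYOTOMES)
example_lems = motor_subscore(right_motor, LOWER_MYOTOMES)
example_complete = is_complete([0, 0, 0, 0], False, False)
```

In this hypothetical case the right sensory level is T4, the right upper and lower extremity subscores are 25 and 0, and the injury is complete. A production implementation would also need to handle NT values and input validation, which this sketch ignores.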

The web interface

A web interface was built to make the Algorithm publicly accessible and to support beta testing by members of the international SCI community. The dermatome map is in Scalable Vector Graphics format, which is compatible with modern web browsers. The interface is freely accessible and is located at http://www.isncscialgorithm.com/ (Figure 1).

The web application approach was chosen as it provides many benefits. The Algorithm is not tied to a specific operating system or software version, which allows it to be shared and used easily and minimises the associated development costs and distribution efforts. Given that the application is centralized at a specific web address, there is only one application that requires updating when a new version becomes available, and users can be sure that they are using the latest version. In addition, frequent updates avoid the delays in releasing new features that are a common problem with formal product re-releases. An internet connection and a modern web browser are the only requirements for accessing the application, which makes the Algorithm easily accessible internationally.

Development and validation

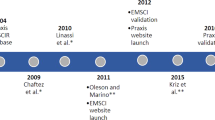

Once the development of the application was complete, it underwent validation testing of both the Algorithm and the web interface components. Each of the five phases is briefly described below (Figure 2).

Phase one

During phase one (the initial logic development), a logic model was developed to classify cases where all raw scores were known (that is, cases did not include ‘not testable’ (NT) values) using the 2011 version of the ISNCSCI.3 The initial logic model was developed using International Standards Training e-learning Program24 cases originally developed by ASIA for ISNCSCI training and hypothetical cases designed to assess specific areas of the logic model (including unlikely scenarios, for example, where every dermatome and myotome is left blank or has the value ‘0’). The hypothetical cases included expert cases that were accumulated from the literature25 and provided by Dr Ralph Marino to test what were considered to be uniquely challenging classification rules.

Phase two

Because of the importance of real-world testing, the developed logic was tested in phase two using real-life cases obtained and scored by trained clinicians at acute and rehabilitation hospitals among the 31 Canadian RHSCIR sites. Any discrepancies between the Algorithm and the clinician-generated results were independently reviewed by at least two of the IAWG experts, and the Algorithm was updated accordingly. On completion of internal testing, the Algorithm was presented at both the ISCoS and Academy of Spinal Cord Injury Professionals 2012 meetings, at which time the public beta testing website was launched. The beta testing website was used to further validate the Algorithm and to gather feedback.

Phase three

In phase three, additional features of the Algorithm were developed that included incorporating logic for NT data and other user-friendly features. This logic was developed using cases from phase one and additional hypothetical cases with NT values.

Phase four

Phase four included re-running cases used in phase two and additional randomly selected RHSCIR cases including those with NT values. This tested the new logic developed in phase three and ensured the ongoing accuracy of logic previously developed in phase one. Any discrepancies between the Algorithm and the clinician-generated results were independently reviewed by at least two of the IAWG experts, and the Algorithm was updated accordingly.
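The logic-testing step used in phases two and four can be pictured as a simple comparison harness: run each case through the classifier, compare every derived field against the clinician's value and queue any mismatch for expert review. The Python sketch below illustrates that idea only; the `Case` structure, the field names and the stand-in classifier are assumptions for illustration and do not reflect the actual RHSCIR data model or the Algorithm's code.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Classification = Dict[str, str]


@dataclass
class Case:
    case_id: str
    raw_exam: dict              # raw motor, sensory and rectal exam data
    clinician: Classification   # clinician-derived fields, e.g. {"AIS": "A"}


def find_discrepancies(
    cases: List[Case],
    classify: Callable[[dict], Classification],
) -> List[Tuple[str, List[str]]]:
    """Return (case_id, mismatching field names) for every case in which
    the computed classification differs from the clinician's."""
    discrepant = []
    for case in cases:
        computed = classify(case.raw_exam)
        fields = sorted(
            name for name, value in case.clinician.items()
            if computed.get(name) != value
        )
        if fields:
            discrepant.append((case.case_id, fields))
    return discrepant


def stub_classifier(raw_exam: dict) -> Classification:
    """Stand-in for the real classifier: echoes a precomputed answer
    stored in the (hypothetical) raw_exam dictionary."""
    return raw_exam["expected"]


cases = [
    Case("case-1", {"expected": {"AIS": "A", "NLI": "T4"}}, {"AIS": "A", "NLI": "T4"}),
    Case("case-2", {"expected": {"AIS": "C", "NLI": "C5"}}, {"AIS": "B", "NLI": "C5"}),
]
review_queue = find_discrepancies(cases, stub_classifier)
```

Here `review_queue` contains only `case-2`, flagged on the `AIS` field. The harness does not decide who is right; as in the study, each flagged case still needs independent expert review to determine whether the clinician or the logic was in error.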

Phase five

In phase five, cross-validation testing of the logic with new RHSCIR cases was conducted to confirm the accuracy of the Algorithm. Version 1.0 of the Algorithm was published on the public website in May 2014. The Algorithm and interface were then made available in an open source format at http://www.isncscialgorithm.com/SourceCode.

Results

Algorithm features

A list of key features of the Algorithm is available in Table 1. Features designed to classify challenging cases include the ability to record non-key muscle functions and the ability to classify cases with NT dermatomes/myotomes. This NT logic provides AIS classification and calculation of all derived variables even when one or more dermatomes/myotomes have a value of NT, provided that only a single option is possible based on the other values entered. If more than one option is possible, ‘unable to determine’ (UTD)23 is displayed along with all possibilities. Also unique to this Algorithm is the use of a new symbol (‘!’) to denote a motor or sensory deficit not related to SCI. This allows standardized documentation and tracking of non-SCI-related changes in addition to providing accurate AIS classification.
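One way to picture the NT behaviour described above is brute-force enumeration: substitute every permissible value for each NT entry, classify each completed exam and report a single result only if all substitutions agree. The Python sketch below shows this under stated assumptions. It is not the Algorithm's actual NT logic, which may well use a more efficient approach; `resolve_with_nt`, `display` and the four-dermatome `toy_sensory_level` classifier are hypothetical names introduced for illustration.

```python
from itertools import product
from typing import Callable, List, Sequence, Union

NT = "NT"
Score = Union[int, str]


def resolve_with_nt(
    scores: Sequence[Score],
    allowed: Sequence[int],
    classify: Callable[[List[int]], str],
) -> List[str]:
    """Try every allowed value in place of each NT entry and return the
    sorted set of distinct classifications that could result."""
    nt_positions = [i for i, s in enumerate(scores) if s == NT]
    outcomes = set()
    for combo in product(allowed, repeat=len(nt_positions)):
        candidate = list(scores)
        for position, value in zip(nt_positions, combo):
            candidate[position] = value
        outcomes.add(classify(candidate))
    return sorted(outcomes)


def display(outcomes: List[str]) -> str:
    """A single possible outcome is reported directly; otherwise UTD is
    shown together with every possibility."""
    if len(outcomes) == 1:
        return outcomes[0]
    return "UTD (" + ", ".join(outcomes) + ")"


TOY_DERMATOMES = ["C2", "C3", "C4", "C5"]


def toy_sensory_level(scores: List[int]) -> str:
    """Toy single-modality level over four dermatomes: the most caudal
    intact (score 2) dermatome with everything rostral also intact."""
    level = "C1"
    for name, score in zip(TOY_DERMATOMES, scores):
        if score != 2:
            break
        level = name
    return level


# NT lies caudal to an impaired dermatome, so it cannot change the level.
unambiguous = display(resolve_with_nt([2, 2, 1, NT], [0, 1, 2], toy_sensory_level))
# NT lies at the boundary, so the level depends on the unknown value.
ambiguous = display(resolve_with_nt([2, NT, 1, 0], [0, 1, 2], toy_sensory_level))
```

With these inputs `unambiguous` is `"C3"` and `ambiguous` is `"UTD (C2, C3)"`. The enumeration grows exponentially with the number of NT entries, so it is suitable as an illustration or a test oracle rather than as a design recommendation.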

Features increasing usability include the following: a colour-coded dermatome map that provides visual feedback on sensory scores entered and the presence of non-SCI-related deficits, value entry restriction, downward value propagation (to speed entry of identical sensory and motor exam values), saving and printing the entered examination in 2013 ISNCSCI worksheet format, tablet compatibility, a user feedback function, and so on. These features were developed to minimize errors in data entry while maximizing usability, user education and the ability to capture comprehensive information about a patient’s neurological impairments. The Algorithm is continually evaluated on the basis of user feedback and input from the IAWG. As of 01 July 2015, the Algorithm website has had 29 266 visits from 139 countries.
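Value entry restriction and downward value propagation can be sketched in a few lines. The Python example below is an illustration only: the text does not specify whether propagation overwrites values already entered further down the column, so this sketch assumes that it overwrites every cell from the edited row downward. The function name and the string representation of scores are likewise hypothetical.

```python
from typing import List, Optional, Set

# Permitted entries for a sensory cell in this sketch.
ALLOWED_SENSORY: Set[str] = {"0", "1", "2", "NT"}


def propagate_down(
    column: List[Optional[str]],
    row: int,
    value: str,
    allowed: Set[str] = ALLOWED_SENSORY,
) -> List[Optional[str]]:
    """Reject values outside the allowed set, then copy the accepted
    value into the edited row and every row below it (assumed behaviour).
    The input column is left unmodified."""
    if value not in allowed:
        raise ValueError(f"{value!r} is not a permitted score")
    updated = list(column)
    updated[row:] = [value] * (len(column) - row)
    return updated


empty_column: List[Optional[str]] = [None] * 5
filled = propagate_down(empty_column, 2, "1")
```

After the call, `filled` is `[None, None, "1", "1", "1"]`, while an entry such as `"7"` raises `ValueError` before anything is changed.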

Validation results

Eleven International Standards Training e-learning Program cases and 37 hypothetical cases were used for logic development in phase one. These cases and 15 additional cases were used for NT logic development in phase three. Logic testing was performed in phases two and four using 351 and 1998 real-life RHSCIR cases, respectively. There was a 6.6% (23/351 cases) discrepancy in phase two logic testing, with 8.7% (2/23) of discrepant cases leading to changes in the Algorithm. In phase four, 14.3% (286/1998) of the cases were discrepant, with only 2.1% (6/286) of discrepant cases leading to changes in the Algorithm. The remaining 97.9% (280/286) contained clinician errors. Phase five, the cross-validation testing, had an 8.3% (9/108) discrepancy between the clinician-determined and Algorithm-determined classifications, but no changes to the Algorithm were required, as all discrepancies were determined to be clinician classification errors. See Table 2 for more details.

Of the 295 (286 in phase four, 9 in phase five) RHSCIR cases with discrepancies between the Algorithm and the clinician classifications in phases four and five, 289 cases contained either single or multiple clinician errors. The errors involved NLI in 151 cases, AIS in 80 cases, motor level in 86 cases and sensory level in 39 cases.
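The percentages reported above follow directly from the case counts, and the short Python sketch below reproduces that arithmetic as a check. The counts are taken from the text; the dictionary layout and function names are illustrative. It assumes, consistent with the figures reported for each phase, that every discrepant case that did not lead to an Algorithm change was attributed to clinician error.

```python
PHASES = {
    "two": {"cases": 351, "discrepant": 23, "algorithm_changes": 2},
    "four": {"cases": 1998, "discrepant": 286, "algorithm_changes": 6},
    "five": {"cases": 108, "discrepant": 9, "algorithm_changes": 0},
}


def pct(numerator: int, denominator: int) -> float:
    """Percentage rounded to one decimal place."""
    return round(100 * numerator / denominator, 1)


def summarise(phase: dict) -> dict:
    """Derive the discrepancy and error shares for one testing phase."""
    clinician_errors = phase["discrepant"] - phase["algorithm_changes"]
    return {
        "discrepancy_pct": pct(phase["discrepant"], phase["cases"]),
        "algorithm_change_pct": pct(phase["algorithm_changes"], phase["discrepant"]),
        "clinician_error_pct": pct(clinician_errors, phase["discrepant"]),
        "clinician_errors": clinician_errors,
    }


summary = {name: summarise(phase) for name, phase in PHASES.items()}

# Error types in the 289 phase four and five cases with clinician errors.
ERROR_TYPES = {"NLI": 151, "motor level": 86, "AIS": 80, "sensory level": 39}
total_error_instances = sum(ERROR_TYPES.values())
```

The error-type counts sum to 356, which exceeds the 289 affected cases because some cases contained more than one type of error.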

Discussion

The Algorithm was developed and validated to assist clinicians and researchers with correctly performing the neurological classification as per the ISNCSCI standards. The Algorithm builds on the current ISNCSCI standards by including logic to classify cases with NT dermatomes/myotomes and logic to support previous grey areas of classification, specifically the non-key muscle function and dermatome/myotome changes due to pathology other than SCI.

The Algorithm development was initiated in November 2011, and three other computerized algorithms for ISNCSCI are known to have been developed and validated before or since that time.22, 26, 27 Two of these three ISNCSCI computerized algorithms published data on the validation; one used a small group of patients22 and the other used patients from only one phase of the care continuum (sub-acute SCI patients undergoing rehabilitation).27 The Algorithm in this paper was created and validated on data from 930 patients (2106 unique exams) in both the acute and rehabilitation phases of care, which may better reflect the heterogeneity and challenges associated with classifying real-life patient cases. In addition to accurately reflecting the latest version of the ISNCSCI classification rules and worksheet, the Algorithm includes user-friendly features (for example, downward propagation of initially entered exam values, provision of tools to support integration into electronic medical record systems) and the ability to record details of a patient’s neurological impairment (for example, non-key muscles, impairments not related to SCI), which are currently not included in other algorithms.

When comparing the clinician classifications with the Algorithm, the Algorithm identified clinician errors in 14.0% (280/1998) and 8.3% (9/108) of the cases in phases four and five of the validation testing, respectively, which is comparable to other studies that reported clinician error rates of 10.2–13% in ISNCSCI classification.22, 28 The most common type of clinician error was miscalculating NLI (151 cases) followed by motor level (86 cases), AIS (80 cases) and sensory level (39 cases). This is similar to the results of other studies examining ISNCSCI classification errors with29 or without16, 21 algorithms, both reporting greater difficulty in classifying AIS than the sensory level. A greater percentage of the discrepancies was due to clinician classification error in phase four, which included cases with NT values, than in phase two, which did not (97.9% in phase four vs 91.3% in phase two). The increased clinician error seen in phase four may indicate that clinicians have a harder time classifying cases with NT values. Further clarification and education on classifying cases with NT values should be provided and can be supported by the use of the Algorithm, which provides all possible options for calculated fields.

Clinician errors persist even after training on the ISNCSCI classification, likely because of staff turnover, the complexity of the classification rules and the fact that the rules are not very clear for some cases. This suggests that there is a need to incorporate computer algorithms into both research and clinical settings to ensure the use of the most current classification rules in a standardized manner and to support ongoing clinician education. Use of the Algorithm will improve the accuracy of the neurological data used in clinical trials (for example, stratifying participants, determining inclusion/exclusion criteria, ensuring quality of clinical data), assist clinicians in targeting appropriate interventions based on the AIS2 and educate clinicians on how to apply the ISNCSCI classification rules.22, 27 Accurate assessment of NLI, AIS and the total motor score is paramount in monitoring patients for neurological deterioration and in evaluating the influence of therapeutic interventions, be they pharmacological, surgical or medical.

Despite its many uses, the Algorithm, like other computerized algorithms, remains vulnerable to data entry errors and cannot compensate for inaccurate scores obtained during the clinical examination.22 There will always be scenarios that preclude the use of a standardized computer algorithm (for example, an individual sustains a SCI at two different levels). As such, no algorithm can replace the clinical reasoning required to accurately classify these exceptional cases. For this reason, the Algorithm has included a clinician sign off section on its printable PDF, and its feedback feature encourages users to submit discrepant cases to allow for ongoing improvements.

ASIA has provided ongoing publications3, 23, 25 and educational tools24 to help clarify both the examination and classification components of the ISNCSCI as they have changed over the years. Despite this, many challenges remain in obtaining a reliable level and severity of neurological classification of SCI. Reliable classification requires correctly performing the clinical examination to determine motor, sensory and rectal examination scores and then accurately applying the classification rules according to the most updated version of the ISNCSCI while ensuring that non-SCI-related changes are appropriately identified as such during the assessment and classification. Additional challenges emerge if any of the dermatomes/myotomes are NT, as no formally recognised method exists to account for this during the classification even with the 2011 update of the ISNCSCI.23, 25 Other areas that could use further definition by the Standards Committee include: capturing non-SCI-related weakness so that it is clearly not included in the classification but utilised to track patient function, developing a standardized method of incorporating NT scores into the classification, clarification of how to determine motor complete or incomplete status (that is, AIS B vs C) in an individual where the motor level is below S1, etc. Collaborating with members of the ASIA Standards Committee on the IAWG allowed for discussion and communication of these issues so that they could be considered by the Standards Committee in the future, while ensuring that the resulting Algorithm aligns with expert opinion. Ongoing clinician training on the current methods of examination and classification is required, and the use of a computerized algorithm to support classification accuracy and learning (that is, reconcile clinical classification with computerized algorithm) will help ensure more accurate classification of individuals with SCI.

The Algorithm is currently being used for data validation (for both clinical trials and observational studies) and as a part of clinical training on how to complete and classify cases using ISNCSCI. Also, version 1.0 of the Algorithm provides tools to support the implementation of the Algorithm components into existing electronic medical records and research databases. As the Algorithm is a valuable tool for those who are learning how to perform the examination, additional features to support accurate, real-time clinical assessments are being planned. These include tablet and/or smart phone compatibility, ability to link to ASIA’s learning resources for motor and sensory testing of a specific myotome/dermatome, etc. Ongoing support for integration of the ISNCSCI examination into electronic medical records and research databases and efforts to maintain alignment to ISNCSCI as they are updated by ASIA’s Standards Committee are also needed. Additional ideas for features submitted by users will also be considered to ensure that the application continues to meet the needs of the international SCI clinical and research communities.

Conclusion

The Algorithm provides a current, validated and standardized method to determine the level and severity of a SCI in alignment with version 2011 of the ISNCSCI and version 2013 of the worksheet. The web interface and Algorithm library were designed to maximize usability while minimising the impact of human error in performing the derivations required to complete the classification. Although there are areas of the ISNCSCI that require clarification moving forward, the integration of international experts from both ASIA and ISCoS in this project provided a unique collaboration opportunity that will continue as the ISNCSCI evolves.

Data Archiving

There were no data to deposit.

References

Burns A, Marino R, Flanders A, Flette H . Clinical diagnosis and prognosis following spinal cord injury. Handb Clin Neurol 2012; 109: 47–62.

Ditunno JF, Burns AS, Marino RJ . Neurological and functional capacity outcome measures: essential to spinal cord injury clinical trials. J Rehabil Res Dev 2004; 42: 35.

Kirshblum SC, Waring W, Biering-Sorensen F, Burns SP, Johansen M, Schmidt-Read M et al. International standards for neurological classification of spinal cord injury (revised 2011). J Spinal Cord Med 2011; 34: 547–554.

American Spinal Injury Association Standards for neurological classification of spinal injury patients. Chicago. 1982.

Chafetz RS, Gaughan JP, Calhoun C, Schottler J, Vogel LC, Betz R et al. Relationship between neurological injury and patterns of upright mobility in children with spinal cord injury. Top Spinal Cord Inj Rehabil 2013; 19: 31–41.

Scivoletto G, Tamburella F, Laurenza L, Molinari M . Distribution-based estimates of clinically significant changes in the International Standards for Neurological Classification of Spinal Cord Injury motor and sensory scores. Eur J Phys Rehabil Med 2013; 49: 373–384.

Jones ML, Evans N, Tefertiller C, Backus D, Sweatman M, Tansey K et al. Activity-based therapy for recovery of walking in individuals with chronic spinal cord injury: results from a randomized clinical trial. Arch Phys Med Rehabil 2014; 95: 2239–2246.

Hammond ER, Recio AC, Sadowsky CL, Becker D . Functional electrical stimulation as a component of activity-based restorative therapy may preserve function in persons with multiple sclerosis. J Spinal Cord Med 2015; 38: 68–75.

Coleman WP, Geisler FH . Injury severity as primary predictor of outcome in acute spinal cord injury: retrospective results from a large multicenter clinical trial. Spine J 2004; 4: 373–378.

Fawcett JW, Curt A, Steeves JD, Coleman WP, Tuszynski MH, Lammertse D et al. Guidelines for the conduct of clinical trials for spinal cord injury as developed by the ICCP panel: spontaneous recovery after spinal cord injury and statistical power needed for therapeutic clinical trials. Spinal Cord 2007; 45: 190–205.

Geisler FH, Coleman WP, Grieco G, Poonian D . Measurements and recovery patterns in a multicenter study of acute spinal cord injury. Spine J 2001; 26: S68–S86.

Fehlings MG, Theodore N, Harrop J, Maurais G, Kuntz C, Shaffrey CI et al. A phase I/IIa clinical trial of a recombinant Rho protein antagonist in acute spinal cord injury. J Neurotrauma 2011; 28: 787–796.

Casha S, Zygun D, Mcgowan MD, Bains I, Yong VW, Hurlbert RJ . Results of a phase II placebo-controlled randomized trial of minocycline in acute spinal cord injury. Brain 2012; 135: 1224–1236.

Vasquez N, Gall A, Ellaway PH, Craggs MD . Light touch and pin prick disparity in the International Standard for Neurological Classification of Spinal Cord Injury (ISNCSCI). Spinal Cord 2013; 51: 375–378.

El Masry WS, Tsubo M, Katoh S, El Miligui YH, Khan A . Validation of the American Spinal Injury Association (ASIA) motor score and the National Acute Spinal Cord Injury Study (NASCIS) motor score. Spine J 1996; 21: 614–619.

Cohen ME, Ditunno JF Jr, Donovan WH, Maynard FM Jr . A test of the 1992 International Standards for Neurological and Functional Classification of Spinal Cord Injury. Spinal Cord 1998; 36: 554–560.

Marino RJ, Graves DE . Metric properties of the ASIA motor score: subscales improve correlation with functional activities. Arch Phys Med Rehabil 2004; 85: 1804–1810.

Savic G, Bergström EMK, Frankel HL, Jamous MA, Jones PW . Inter-rater reliability of motor and sensory examinations performed according to American Spinal Injury Association standards. Spinal Cord 2007; 45: 444–451.

Noonan VK, Kwon BK, Soril L, Fehlings MG, Hurlbert RJ, Townson A et al. The Rick Hansen Spinal Cord Injury Registry (RHSCIR): a national patient-registry. Spinal Cord 2012; 50: 22–27.

Chafetz RS, Vogel LC, Betz RR, Gaughan JP, Mulcahey MJ . International standards for neurological classification of spinal cord injury: training effect on accurate classification. J Spinal Cord Med 2008; 31: 538–542.

Schuld C, Wiese J, Franz S, Putz C, Stierle I, Smoor I et al. Effect of formal training in scaling, scoring and classification of the International Standards for Neurological Classification of Spinal Cord Injury. Spinal Cord 2013; 51: 282–288.

Chafetz RS, Prak S, Mulcahey MJ . Computerized classification of neurologic injury based on the international standards for classification of spinal cord injury. J Spinal Cord Med 2009; 32: 532–537.

Kirshblum S, Waring W . Updates for the international standards for neurological classification of spinal cord injury. Phys Med Rehabil Clin N Am 2014; 25: 505–517.

American Spinal Injury Association. INSTeP (Internet). 2013 (cited 26 Nov 2014). Available from: http://lms3.learnshare.com/home.aspx.

Kirshblum SC, Biering-Sorensen F, Betz R, Burns S, Donovan W, Graves DE et al. International Standards for Neurological Classification of Spinal Cord Injury: cases with classification challenges. J Spinal Cord Med 2014; 37: 120–127.

Linassi G, Li Pi, Shan R, Marino RJ . A web-based computer program to determine the ASIA impairment classification. Spinal Cord 2010; 48: 100–104.

Schuld C, Wiese J, Hug A, Putz C, van Hedel HJA, Spiess MR et al. Computer implementation of the international standards for neurological classification of spinal cord injury for consistent and efficient derivation of its subscores including handling of data from not testable segments. J Neurotrauma 2012; 29: 453–461.

Marino RJ, Burns S, Graves DE, Leiby BE, Kirshblum S, Lammertse DP . Upper- and lower-extremity motor recovery after traumatic cervical spinal cord injury: an update from the national spinal cord injury database. Arch Phys Med Rehabil 2011; 92: 369–375.

Schuld C, Franz S, van Hedel H, Moosburger J, Maier D, Abel R et al. International standards for neurological classification of spinal cord injury: classification skills of clinicians versus computational algorithms. Spinal Cord 2015; 53: 324–331.

Acknowledgements

We acknowledge Juliet Batke for her assistance in providing ISNCSCI data from the Vancouver RHSCIR. We would also like to acknowledge the ISCoS for their support.

Ethics declarations

Competing interests

LMB and MFD have received funding from the Rick Hansen Institute. KW, EE, VKN and SEP are employees of the Rick Hansen Institute. Production of this manuscript has been made possible through a financial contribution from Rick Hansen Institute and Western Economic Diversification Fund. The views expressed herein represent the views of the authors.

Rights and permissions

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in the credit line; if the material is not included under the Creative Commons license, users will need to obtain permission from the license holder to reproduce the material. To view a copy of this license, visit http://creativecommons.org/licenses/by-nc-sa/4.0/

About this article

Cite this article

Walden, K., Bélanger, L., Biering-Sørensen, F. et al. Development and validation of a computerized algorithm for International Standards for Neurological Classification of Spinal Cord Injury (ISNCSCI). Spinal Cord 54, 197–203 (2016). https://doi.org/10.1038/sc.2015.137

DOI: https://doi.org/10.1038/sc.2015.137