Abstract

Syntheses of literature on psychological interventions have defined the state of knowledge and helped to identify evidence-based practices for researchers, practitioners, educators and policymakers. Nevertheless, it is complicated to appraise the usefulness of syntheses owing to long-standing methodological issues and the rapid rate of research production. In this Perspective, we examine how syntheses of psychological interventions could be more useful. We argue that syntheses should move beyond the myopic lens of intervention impact based on a one-time, contested selection of literature and comprehensible only to intensively trained readers. Rather, syntheses should become ‘living’ documents that integrate data on intervention impact, consistency, research credibility and sampling inclusivity, all of which must then be presented in a modular way that is also accessible to people of limited expertise. Although existing resources make pursuit of this goal possible, reaching it will require a dramatic change in the ways in which psychologists collaborate and in which syntheses are conducted, disseminated and institutionally supported.

References

Green, L. W. Making research relevant: if it is an evidence-based practice, where’s the practice-based evidence? Family Pract. 25, i20–i24 (2008).

Berlin, J. A. & Golub, R. M. Meta-analysis as evidence: building a better pyramid. J. Am. Med. Assoc. 312, 603 (2014).

Murad, M. H., Asi, N., Alsawas, M. & Alahdab, F. New evidence pyramid. Evid. Based Med. 21, 125–127 (2016).

Tomlin, G. & Borgetto, B. Research pyramid: a new evidence-based practice model for occupational therapy. Am. J. Occup. Ther. 65, 189–196 (2011).

Oliver, K. & Cairney, P. The dos and don’ts of influencing policy: a systematic review of advice to academics. Palgrave Commun. 5, 21 (2019).

Sutton, A. J., Cooper, N. J. & Jones, D. R. Evidence synthesis as the key to more coherent and efficient research. BMC Med. Res. Methodol. 9, 29 (2009).

Hunter, J. E. & Schmidt, F. L. Cumulative research knowledge and social policy formulation: the critical role of meta-analysis. Psychol. Public Policy Law 2, 324–347 (1996).

Noar, S. M. In pursuit of cumulative knowledge in health communication: the role of meta-analysis. Health Commun. 20, 169–175 (2006).

Cuijpers, P., Karyotaki, E., de Wit, L. & Ebert, D. D. The effects of fifteen evidence-supported therapies for adult depression: a meta-analytic review. Psychother. Res. 30, 279–293 (2020).

Öst, L.-G., Riise, E. N., Wergeland, G. J., Hansen, B. & Kvale, G. Cognitive behavioral and pharmacological treatments of OCD in children: a systematic review and meta-analysis. J. Anxiety Disord. 43, 58–69 (2016).

McLean, C. P., Levy, H. C., Miller, M. L. & Tolin, D. F. Exposure therapy for PTSD: a meta-analysis. Clin. Psychol. Rev. 91, 102115 (2022).

Smith, M. L. & Glass, G. V. Meta-analysis of psychotherapy outcome studies. Am. Psychol. 32, 752–760 (1977).

Leichsenring, F., Steinert, C., Rabung, S. & Ioannidis, J. P. A. The efficacy of psychotherapies and pharmacotherapies for mental disorders in adults: an umbrella review and meta‐analytic evaluation of recent meta‐analyses. World Psychiat. 21, 133–145 (2022).

Cipriani, A. et al. Comparative efficacy and acceptability of 21 antidepressant drugs for the acute treatment of adults with major depressive disorder: a systematic review and network meta-analysis. Lancet 391, 1357–1366 (2018).

Kirsch, I. & Jakobsen, J. C. Network meta-analysis of antidepressants. Lancet 392, 1010 (2018).

Wampold, B. E. et al. A meta-analysis of outcome studies comparing bona fide psychotherapies: empirically, ‘all must have prizes’. Psychol. Bull. 122, 203–215 (1997).

Rind, B., Tromovitch, P. & Bauserman, R. A meta-analytic examination of assumed properties of child sexual abuse using college samples. Psychol. Bull. 124, 22–53 (1998).

Ioannidis, J. P. A. The mass production of redundant, misleading, and conflicted systematic reviews and meta-analyses: mass production of systematic reviews and meta-analyses. Milbank Q. 94, 485–514 (2016).

Gloaguen, V., Cottraux, J. & Cucherat, M., Ivy-Marie Blackburn. A meta-analysis of the effects of cognitive therapy in depressed patients. J. Affect. Disord. 49, 59–72 (1998).

Wampold, B. E., Minami, T., Baskin, T. W. & Callen Tierney, S. A meta-(re)analysis of the effects of cognitive therapy versus ‘other therapies’ for depression. J. Affect. Disord. 68, 159–165 (2002).

Macnamara, B. N. & Burgoyne, A. P. Do growth mindset interventions impact students’ academic achievement? A systematic review and meta-analysis with recommendations for best practices. Psychol. Bull. https://doi.org/10.1037/bul0000352 (2022).

Burnette, J. L. et al. A systematic review and meta-analysis of growth mindset interventions: for whom, how, and why might such interventions work? Psychol. Bull. https://doi.org/10.1037/bul0000368 (2022).

Cuijpers, P., Karyotaki, E., Reijnders, M. & Ebert, D. D. Was Eysenck right after all? A reassessment of the effects of psychotherapy for adult depression. Epidemiol. Psychiat. Sci. 28, 21–30 (2019).

Munder, T. et al. Is psychotherapy effective? A re-analysis of treatments for depression. Epidemiol. Psychiat. Sci. 28, 268–274 (2019).

Cristea, I. A. et al. The effects of cognitive behavioral therapy are not systematically falling: a revision of Johnsen and Friborg (2015). Psychol. Bull. 143, 326–340 (2017).

Jones, E. B. & Sharpe, L. Cognitive bias modification: a review of meta-analyses. J. Affect. Disord. 223, 175–183 (2017).

Cuijpers, P., Miguel, C., Papola, D., Harrer, M. & Karyotaki, E. From living systematic reviews to meta-analytical research domains. Evid. Based Ment. Health https://doi.org/10.1136/ebmental-2022-300509 (2022).

Elliott, J. H. et al. Living systematic review: 1. Introduction — the why, what, when, and how. J. Clin. Epidemiol. 91, 23–30 (2017).

Simonsohn, U., Simmons, J. & Nelson, L. D. Above averaging in literature reviews. Nat. Rev. Psychol. 1, 551–552 (2022).

Aromataris, E. et al. Summarizing systematic reviews: methodological development, conduct and reporting of an umbrella review approach. Int. J. Evidence-based Healthc. 13, 132–140 (2015).

Papatheodorou, S. Umbrella reviews: what they are and why we need them. Eur. J. Epidemiol. 34, 543–546 (2019).

Rebar, A. L. et al. A meta-meta-analysis of the effect of physical activity on depression and anxiety in non-clinical adult populations. Health Psychol. Rev. 9, 366–378 (2015).

Chambless, D. L. & Hollon, S. D. Defining empirically supported therapies. J. Consult. Clin. Psychol. 66, 7–18 (1998).

Tolin, D. F., McKay, D., Forman, E. M., Klonsky, E. D. & Thombs, B. D. Empirically supported treatment: recommendations for a new model. Clin. Psychol. Sci. Pract. 22, 317–338 (2015).

Donnelly, C. A. et al. Four principles for synthesizing evidence. Nature 558, 361–364 (2018).

Platt, J. R. Strong inference: certain systematic methods of scientific thinking may produce much more rapid progress than others. Science 146, 347–353 (1964).

McGuire, W. J. A perspectivist approach to theory construction. Pers. Soc. Psychol. Rev. 8, 173–182 (2004).

Lilienfeld, S. O. in Oxford Research Encyclopedia Of Psychology (ed. Beck, J. G.) 1–20 (Oxford Univ. Press, 2017).

Przeworski, A., Peterson, E. & Piedra, A. A systematic review of the efficacy, harmful effects, and ethical issues related to sexual orientation change efforts. Clin. Psychol. Sci. Pract. 28, 81–100 (2021).

IJzerman, H. et al. Use caution when applying behavioural science to policy. Nat. Hum. Behav. 4, 1092–1094 (2020).

Lilienfeld, S. O. Psychological treatments that cause harm. Perspect. Psychol. Sci. 2, 53–70 (2007).

Schechinger, H. A., Sakaluk, J. K. & Moors, A. C. Harmful and helpful therapy practices with consensually non-monogamous clients: toward an inclusive framework. J. Consult. Clin. Psychol. 86, 879–891 (2018).

Tipton, E., Pustejovsky, J. E. & Ahmadi, H. A history of meta-regression: technical, conceptual, and practical developments between 1974 and 2018. Res. Synth. Meth. 10, 161–179 (2019).

Moeyaert, M. et al. Methods for dealing with multiple outcomes in meta-analysis: a comparison between averaging effect sizes, robust variance estimation and multilevel meta-analysis. Int. J. Soc. Res. Methodol. 20, 559–572 (2017).

Cheung, M. W.-L. Modeling dependent effect sizes with three-level meta-analyses: a structural equation modeling approach. Psychol. Meth. 19, 211–229 (2014).

Konstantopoulos, S. Fixed effects and variance components estimation in three-level meta-analysis: three-level meta-analysis. Res. Synth. Meth. 2, 61–76 (2011).

Hedges, L. V. A random effects model for effect sizes. Psychol. Bull. 93, 388–395 (1983).

Tipton, E., Pustejovsky, J. E. & Ahmadi, H. Current practices in meta-regression in psychology, education, and medicine. Res. Synth. Meth. 10, 180–194 (2019).

Gronau, Q. F., Heck, D. W., Berkhout, S. W., Haaf, J. M. & Wagenmakers, E.-J. A primer on Bayesian model-averaged meta-analysis. Adv. Meth. Pract. Psychol. Sci. 4, https://doi.org/10.1177/25152459211031256 (2021).

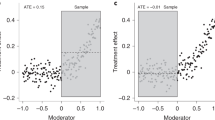

Bryan, C. J., Tipton, E. & Yeager, D. S. Behavioural science is unlikely to change the world without a heterogeneity revolution. Nat. Hum. Behav. 5, 980–989 (2021).

Linden, A. H. & Hönekopp, J. Heterogeneity of research results: a new perspective from which to assess and promote progress in psychological science. Perspect. Psychol. Sci. 16, 358–376 (2021).

Borenstein, M., Higgins, J. P. T., Hedges, L. V. & Rothstein, H. R. Basics of meta-analysis: I2 is not an absolute measure of heterogeneity. Res. Synth. Meth. 8, 5–18 (2017).

Tipton, E. et al. Why meta-analyses of growth mindset and other interventions should follow best practices for examining heterogeneity. Psychol. Bull. https://doi.org/10.13140/RG.2.2.34070.01605 (in the press).

Sakaluk, J. K., Kim, J., Campbell, E., Baxter, A. & Impett, E. A. Self-esteem and sexual health: a multilevel meta-analytic review. Health Psychol. Rev. https://doi.org/10.1080/17437199.2019.1625281 (2019).

Aczel, B. et al. Consensus-based guidance for conducting and reporting multi-analyst studies. eLife 10, e72185 (2021).

Hunter, J. E. & Schmidt, F. L. Dichotomization of continuous variables: the implications for meta-analysis. J. Appl. Psychol. 75, 334–349 (1990).

Rosenthal, R. The file drawer problem and tolerance for null results. Psychol. Bull. 86, 638–641 (1979).

Sterne, J. A. C. et al. Recommendations for examining and interpreting funnel plot asymmetry in meta-analyses of randomised controlled trials. Br. Med. J. 343, d4002–d4002 (2011).

Hunter, J. E. & Schmidt, F. L. Methods of Meta-analysis: Correcting Error and Bias in Research Findings (Sage, 2004).

Stanley, T. D. & Doucouliagos, H. Meta-regression approximations to reduce publication selection bias. Res. Synth. Meth. 5, 60–78 (2014).

Iyengar, S. & Greenhouse, J. B. Selection models and the file drawer problem. Stat. Sci. 3, 109–135 (1988).

Carter, E. C., Schönbrodt, F. D., Gervais, W. M. & Hilgard, J. Correcting for bias in psychology: a comparison of meta-analytic methods. Adv. Meth. Pract. Psychol. Sci. 2, 115–144 (2019).

McShane, B. B., Böckenholt, U. & Hansen, K. T. Adjusting for publication bias in meta-analysis: an evaluation of selection methods and some cautionary notes. Perspect. Psychol. Sci. 11, 730–749 (2016).

Carter, E. C. & McCullough, M. E. Publication bias and the limited strength model of self-control: has the evidence for ego depletion been overestimated? Front. Psychol. 5, 823 (2014).

Hagger, M. S., Wood, C., Stiff, C. & Chatzisarantis, N. L. D. Ego depletion and the strength model of self-control: a meta-analysis. Psychol. Bull. 136, 495–525 (2010).

Mertens, S., Herberz, M., Hahnel, U. J. J. & Brosch, T. The effectiveness of nudging: a meta-analysis of choice architecture interventions across behavioral domains. Proc. Natl Acad. Sci. USA 119, e2107346118 (2022).

Nelson, L. D., Simmons, J. & Simonsohn, U. Psychology’s renaissance. Annu. Rev. Psychol. 69, 511–534 (2018).

Vazire, S. Implications of the credibility revolution for productivity, creativity, and progress. Perspect. Psychol. Sci. 13, 411–417 (2018).

Spellman, B. A. A short (personal) future history of revolution 2.0. Perspect. Psychol. Sci. 10, 886–899 (2015).

Fanelli, D. “Positive” results increase down the hierarchy of the sciences. PLoS One 5, e10068 (2010).

Open Science Collaboration. Estimating the reproducibility of psychological science. Science 349, aac4716 (2015).

Cheung, I. et al. Registered replication report: study 1 from Finkel, Rusbult, Kumashiro & Hannon (2002). Perspect. Psychol. Sci. 11, 750–764 (2016).

O’Donnell, M. et al. Registered replication report: Dijksterhuis and van Knippenberg (1998). Perspect. Psychol. Sci. 13, 268–294 (2018).

Wagenmakers, E.-J. et al. Registered replication report: Strack, Martin & Stepper (1988). Perspect. Psychol. Sci. 11, 917–928 (2016).

Eerland, A. et al. Registered replication report: Hart & Albarracín (2011). Perspect. Psychol. Sci. 11, 158–171 (2016).

Guest, O. & Martin, A. E. How computational modeling can force theory building in psychological science. Perspect. Psychol. Sci. 16, 789–802 (2021).

Robinaugh, D. J., Haslbeck, J. M. B., Ryan, O., Fried, E. I. & Waldorp, L. J. Invisible hands and fine calipers: a call to use formal theory as a toolkit for theory construction. Perspect. Psychol. Sci. 16, 725–743 (2021).

Hussey, I. & Hughes, S. Hidden invalidity among 15 commonly used measures in social and personality psychology. Adv. Meth. Pract. Psychol. Sci. 3, 166–184 (2020).

Flake, J. K. & Fried, E. I. Measurement schmeasurement: questionable measurement practices and how to avoid them. Adv. Meth. Pract. Psychol. Sci. 3, 456–465 (2020).

Haucke, M., Hoekstra, R. & van Ravenzwaaij, D. When numbers fail: do researchers agree on operationalization of published research? R. Soc. Open Sci. 8, 191354 (2021).

Simmons, J. P., Nelson, L. D. & Simonsohn, U. False-positive psychology: undisclosed flexibility in data collection and analysis allows presenting anything as significant. Psychol. Sci. 22, 1359–1366 (2011).

Silberzahn, R. et al. Many analysts, one data set: making transparent how variations in analytic choices affect results. Adv. Meth. Pract. Psychol. Sci. 1, 337–356 (2018).

Bakker, M. & Wicherts, J. M. The (mis)reporting of statistical results in psychology journals. Behav. Res. 43, 666–678 (2011).

Nuijten, M. B., Hartgerink, C. H. J., Assen, M. A. L. M., van, Epskamp, S. & Wicherts, J. M. The prevalence of statistical reporting errors in psychology (1985–2013). Behav. Res. 48, 1205–1226 (2016).

Wicherts, J. M., Borsboom, D., Kats, J. & Molenaar, D. The poor availability of psychological research data for reanalysis. Am. Psychol. 61, 726–728 (2006).

Chambers, C. D., Feredoes, E., Muthukumaraswamy, S. D. & Etchells, P. J. Instead of “playing the game” it is time to change the rules: registered reports at AIMS Neuroscience and beyond. AIMS Neurosci. 1, 4–17 (2014).

King, K. M., Pullmann, M. D., Lyon, A. R., Dorsey, S. & Lewis, C. C. Using implementation science to close the gap between the optimal and typical practice of quantitative methods in clinical science. J. Abnorm. Psychol. 128, 547–562 (2019).

Bakker, M., van Dijk, A. & Wicherts, J. M. The rules of the game called psychological science. Perspect. Psychol. Sci. 7, 543–554 (2012).

Nosek, B. A., Spies, J. R. & Motyl, M. Scientific utopia: II. Restructuring incentives and practices to promote truth over publishability. Perspect. Psychol. Sci. 7, 615–631 (2012).

Hengartner, M. P. Raising awareness for the replication crisis in clinical psychology by focusing on inconsistencies in psychotherapy research: how much can we rely on published findings from efficacy trials? Front. Psychol. 9, 256 (2018).

Tackett, J. L. & Miller, J. D. Introduction to the special section on increasing replicability, transparency, and openness in clinical psychology. J. Abnorm. Psychol. 128, 487–492 (2019).

Tackett, J. L. et al. It’s time to broaden the replicability conversation: thoughts for and from clinical psychological science. Perspect. Psychol. Sci. 12, 742–756 (2017).

Tackett, J. L., Brandes, C. M., King, K. M. & Markon, K. E. Psychology’s replication crisis and clinical psychological science. Annu. Rev. Clin. Psychol. 15, 579–604 (2019).

Cuijpers, P. & Cristea, I. A. How to prove that your therapy is effective, even when it is not: a guideline. Epidemiol. Psychiat. Sci. 25, 428–435 (2016).

Fried, E. I. Lack of theory building and testing impedes progress in the factor and network literature. Psychol. Inq. 31, 271–288 (2020).

Reardon, K. W., Smack, A. J., Herzhoff, K. & Tackett, J. L. An N-pact factor for clinical psychological research. J. Abnorm. Psychol. 128, 493–499 (2019).

Fried, E. I., Flake, J. K. & Robinaugh, D. J. Revisiting the theoretical and methodological foundations of depression measurement. Nat. Rev. Psychol. 1, 358–368 (2022).

Driessen, E., Hollon, S. D., Bockting, C. L. H., Cuijpers, P. & Turner, E. H. Does publication bias inflate the apparent efficacy of psychological treatment for major depressive disorder? A systematic review and meta-analysis of US national institutes of health-funded trials. PLoS One 10, e0137864 (2015).

Cristea, I. A., Gentili, C., Pietrini, P. & Cuijpers, P. Sponsorship bias in the comparative efficacy of psychotherapy and pharmacotherapy for adult depression: meta-analysis. Br. J. Psychiat. 210, 16–23 (2017).

Pittelkow, M.-M., Hoekstra, R., Karsten, J. & van Ravenzwaaij, D. Replication target selection in clinical psychology: a Bayesian and qualitative reevaluation. Clin. Psychol. Sci. Pract. 28, 210–221 (2021).

Williams, A. J., Botanov, Y., Kilshaw, R. E., Wong, R. E. & Sakaluk, J. K. Potentially harmful therapies: a meta‐scientific review of evidential value. Clin. Psychol. Sci. Pract. 28, 5–18 (2020).

Sakaluk, J. K., Williams, A. J., Kilshaw, R. E. & Rhyner, K. T. Evaluating the evidential value of empirically supported psychological treatments (ESTs): a meta-scientific review. J. Abnorm. Psychol. 128, 500–509 (2019).

Williams, A. J. et al. A metascientific review of the evidential value of acceptance and commitment therapy for depression. Behav. Ther. https://doi.org/10.1016/j.beth.2022.06.004 (2022).

Higgins, J. P. T. et al. The Cochrane Collaboration’s tool for assessing risk of bias in randomised trials. Br. Med. J. 343, d5928 (2011).

Munder, T., Brütsch, O., Leonhart, R., Gerger, H. & Barth, J. Researcher allegiance in psychotherapy outcome research: an overview of reviews. Clin. Psychol. Rev. 33, 501 (2013).

Egger, M., Smith, G. D., Schneider, M. & Minder, C. Bias in meta-analysis detected by a simple, graphical test. Br. Med. J. 315, 629–634 (1997).

Guyatt, G. H. et al. GRADE: an emerging consensus on rating quality of evidence and strength of recommendations. Br. Med. J. 336, 924–926 (2008).

Sterne, J. A. et al. ROBINS-I: a tool for assessing risk of bias in non-randomised studies of interventions. Br. Med. J. 355, https://doi.org/10.1136/bmj.i4919 (2016).

Katikireddi, S. V., Egan, M. & Petticrew, M. How do systematic reviews incorporate risk of bias assessments into the synthesis of evidence? A methodological study. J. Epidemiol. Commun. Health 69, 189–195 (2015).

Losilla, J.-M., Oliveras, I., Marin-Garcia, J. A. & Vives, J. Three risk of bias tools lead to opposite conclusions in observational research synthesis. J. Clin. Epidemiol. 101, 61–72 (2018).

Armijo-Olivo, S., Stiles, C. R., Hagen, N. A., Biondo, P. D. & Cummings, G. G. Assessment of study quality for systematic reviews: a comparison of the Cochrane Collaboration risk of bias tool and the effective public health practice project quality assessment tool: methodological research: quality assessment for systematic reviews. J. Eval. Clin. Pract. 18, 12–18 (2012).

Lundh, A. & Gøtzsche, P. C. Recommendations by Cochrane Review Groups for assessment of the risk of bias in studies. BMC Med. Res. Methodol. 8, 22 (2008).

Remedios, J. D. Psychology must grapple with Whiteness. Nat. Rev. Psychol. 1, 125–126 (2022).

Klein, V., Savaş, Ö. & Conley, T. D. How WEIRD and androcentric is sex research? Global inequities in study populations. J. Sex Res. https://doi.org/10.1080/00224499.2021.1918050 (2021).

Andersen, J. P. & Zou, C. Exclusion of sexual minority couples from research. Health Sci. J. 9, 1–9 (2015).

Müller, A. Beyond ‘invisibility’: queer intelligibility and symbolic annihilation in healthcare. Cult. Health Sex. 20, 14–27 (2018).

Williamson, H. C. et al. How diverse are the samples used to study intimate relationships? A systematic review. J. Soc. Pers. Relat. 39, 10871109 (2021).

Spates, K. “The missing link”: the exclusion of black women in psychological research and the implications for black women’s mental health. SAGE Open 2, 215824401245517 (2012).

Cooper, A. A. & Conklin, L. R. Dropout from individual psychotherapy for major depression: a meta-analysis of randomized clinical trials. Clin. Psychol. Rev. 40, 57–65 (2015).

Falconnier, L. Socioeconomic status in the treatment of depression. Am. J. Orthopsychiat. 79, 148–158 (2009).

Finegan, M., Firth, N., Wojnarowski, C. & Delgadillo, J. Associations between socioeconomic status and psychological therapy outcomes: a systematic review and meta‐analysis. Depress. Anxiety 35, 560–573 (2018).

Willner, P. The effectiveness of psychotherapeutic interventions for people with learning disabilities: a critical overview. J. Intellect. Disabil. Res. 49, 73–85 (2005).

Mak, W. W. S., Law, R. W., Alvidrez, J. & Pérez-Stable, E. J. Gender and ethnic diversity in NIMH-funded clinical trials: review of a decade of published research. Adm. Policy Ment. Health 34, 497–503 (2007).

Polo, A. J. et al. Diversity in randomized clinical trials of depression: a 36-year review. Clin. Psychol. Rev. 67, 22–35 (2019).

Akinhanmi, M. O. et al. Racial disparities in bipolar disorder treatment and research: a call to action. Bipolar Disord. 20, 506–514 (2018).

Nalven, T., Spillane, N. S., Schick, M. R. & Weyandt, L. L. Diversity inclusion in United States opioid pharmacological treatment trials: a systematic review. Exp. Clin. Psychopharmacol. 29, 524–538 (2021).

Mullarkey, M. C. & Schleider, J. L. Embracing scientific humility and complexity: learning “what works for whom” in youth psychotherapy research. J. Clin. Child Adolesc. Psychol. 50, 443–449 (2021).

Sue, D. W. et al. Racial microaggressions in everyday life: implications for clinical practice. Am. Psychol. 62, 271–286 (2007).

Spengler, E. S., Miller, D. J. & Spengler, P. M. Microaggressions: clinical errors with sexual minority clients. Psychotherapy 53, 360–366 (2016).

Alegría, M. et al. Disparity in depression treatment among racial and ethnic minority populations in the United States. Psychiat. Serv. 59, 1264–1272 (2008).

Hayes, J. A., Owen, J. & Bieschke, K. J. Therapist differences in symptom change with racial/ethnic minority clients. Psychotherapy 52, 308–314 (2015).

Imel, Z. E. et al. Racial/ethnic disparities in therapist effectiveness: a conceptualization and initial study of cultural competence. J. Couns. Psychol. 58, 290–298 (2011).

Owen, J. et al. Racial/ethnic disparities in client unilateral termination: the role of therapists’ cultural comfort. Psychother. Res. 27, 102–111 (2017).

Melfi, C. A., Croghan, T. W., Hanna, M. P. & Robinson, R. L. Racial variation in antidepressant treatment in a medicaid population. J. Clin. Psychiat. 61, 16–21 (2000).

Ward, E. C. Examining differential treatment effects for depression in racial and ethnic minority women: a qualitative systematic review. J. Natl Med. Assoc. 99, 265–274 (2007).

Gregory, V. L. Cognitive–behavioral therapy for depressive symptoms in persons of African descent: a meta-analysis. J. Soc. Serv. Res. 42, 113–129 (2016).

Cuijpers, P. et al. A meta-analysis of cognitive–behavioural therapy for adult depression, alone and in comparison with other treatments. Can. J. Psychiat. 58, 376–385 (2013).

Wendt, D. C., Huson, K., Albatnuni, M. & Gone, J. P. What are the best practices for psychotherapy with indigenous peoples in the United States and Canada? A thorny question. J. Consult. Clin. Psychol. 90, 802–814 (2022).

Lorenzo-Luaces, L., Dierckman, C. & Adams, S. Attitudes and (mis)information about cognitive behavioral therapy on TikTok: an analysis of video content. J. Med. Internet Res. 25, e45571 (2023).

Lorenzo-Luaces, L., Zimmerman, M. & Cuijpers, P. Are studies of psychotherapies for depression more or less generalizable than studies of antidepressants? J. Affect. Disord. 234, 8–13 (2018).

Grant, B. F. & Harford, T. C. Comorbidity between DSM-IV alcohol use disorders and major depression: results of a national survey. Drug Alcohol. Depend. 39, 197–206 (1995).

Moher, D. et al. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015 statement. Syst. Rev. 4, 1 (2015).

Appelbaum, M. et al. Journal article reporting standards for quantitative research in psychology: the APA Publications and Communications Board task force report. Am. Psychol. 73, 3–25 (2018).

Marshall, I. J. & Wallace, B. C. Toward systematic review automation: a practical guide to using machine learning tools in research synthesis. Syst. Rev. 8, 163 (2019).

Marshall, I. J. et al. Trialstreamer: a living, automatically updated database of clinical trial reports. J. Am. Med. Inform. Assoc. 27, 1903–1912 (2020).

Boness, C. L. et al. An evaluation of cognitive behavioral therapy for insomnia: a systematic review and application of Tolin’s criteria for empirically supported treatments. Clin. Psychol. Sci. Pract. 27, e12348 (2020).

Boness, C. L. et al. The Society of Clinical Psychology’s manual for the evaluation of psychological treatments using the Tolin criteria. Preprint at OSFPreprints https://doi.org/10.31219/osf.io/8hcsz (2022).

Eysenbach, G. & Consort-Ehealth Group. CONSORT-EHEALTH: improving and standardizing evaluation reports of web-based and mobile health interventions. J. Med. Internet Res. 13, e126 (2011).

Uhlmann, E. L. et al. Scientific utopia III: crowdsourcing science. Perspect. Psychol. Sci. 14, 711–733 (2019).

Ebersole, C. R. et al. Many Labs 3: evaluating participant pool quality across the academic semester via replication. J. Exp. Soc. Psychol. 67, 68–82 (2016).

Byers-Heinlein, K. et al. Building a collaborative psychological science: lessons learned from ManyBabies 1. Can. Psychol. 61, 349–363 (2020).

Moshontz, H. et al. The psychological science accelerator: advancing psychology through a distributed collaborative network. Adv. Meth. Pract. Psychol. Sci. 1, 501–515 (2018).

Tsuji, S., Bergmann, C. & Cristia, A. Community-augmented meta-analyses: toward cumulative data assessment. Perspect. Psychol. Sci. 9, 661–665 (2014).

Boutron, I. et al. The COVID-NMA project: building an evidence ecosystem for the COVID-19 pandemic. Ann. Intern. Med. 173, 1015–1017 (2020).

Sutherland, W. J. Building a tool to overcome barriers in research-implementation spaces: the conservation evidence database. Biol. Conserv. 238, 108199 (2019).

Vuorre, M. & Curley, J. P. Curating research assets: a tutorial on the git version control system. Adv. Meth. Pract. Psychol. Sci. 1, 219–236 (2018).

Moshontz, H., Ebersole, C. R., Weston, S. J. & Klein, R. A. A guide for many authors: writing manuscripts in large collaborations. Soc. Personal. Psychol. Compass 15, e12590 (2021).

Tennant, J. P., Bielczyk, N., Tzovaras, B. G., Masuzzo, P. & Steiner, T. Introducing massively open online papers (MOOPs). KULA 4, 63 (2020).

Holcombe, A. O. Contributorship, not authorship: use credit to indicate who did what. Publications 7, 48 (2019).

Pierce, H. H., Dev, A., Statham, E. & Bierer, B. E. Credit data generators for data reuse. Nature 570, 30–32 (2019).

Graham, I. D. et al. Lost in knowledge translation: time for a map? J. Contin. Educ. Health Prof. 26, 13–24 (2006).

Higgins, J. P. T. & Thompson, S. G. Quantifying heterogeneity in a meta-analysis. Stat. Med. 21, 1539–1558 (2002).

Jones, A. Multidisciplinary team working: collaboration and conflict. Int. J. Ment. Health Nurs. 15, 19–28 (2006).

Hall, K. L. et al. The science of team science: a review of the empirical evidence and research gaps on collaboration in science. Am. Psychol. 73, 532–548 (2018).

Mavridis, D. & White, I. R. Dealing with missing outcome data in meta‐analysis. Res. Synth. Meth. 11, 2–13 (2020).

Enders, C. K. Applied Missing Data Analysis (Guilford Press, 2022).

Schauer, J. M., Diaz, K., Pigott, T. D. & Lee, J. Exploratory analyses for missing data in meta-analyses and meta-regression: a tutorial. Alcohol Alcohol. 57, 35–46 (2022).

Schauer, J. M., Lee, J., Diaz, K. & Pigott, T. D. On the bias of complete‐ and shifting‐case meta‐regressions with missing covariates. Res. Synth. Meth. 13, 489–507 (2022).

Schleider, J. L. The fundamental need for lived experience perspectives in developing and evaluating psychotherapies. J. Consult. Clin. Psychol. 91, 119–121 (2023).

Ramadier, T. Transdisciplinarity and its challenges: the case of urban studies. Futures 36, 423–439 (2004).

MacLeod, M. What makes interdisciplinarity difficult? Some consequences of domain specificity in interdisciplinary practice. Synthese 195, 697–720 (2018).

Mazzocchi, F. Scientific research across and beyond disciplines: challenges and opportunities of interdisciplinarity. EMBO Rep. 20, e47682 (2019).

Bauer, H. H. Barriers against interdisciplinarity: implications for studies of science, technology, and society (STS). Sci. Technol. Hum. Values 15, 105–119 (1990).

Tourani, P., Adams, B. & Serebrenik, A. Code of conduct in open source projects. In 2017 IEEE 24th Int. Conf. on Software Analysis, Evolution and Reengineering (SANER) 24–33 (IEEE, 2017).

Strack, F. Reflection on the smiling registered replication report. Perspect. Psychol. Sci. 11, 929–930 (2016).

Norris, P. Cancel culture: myth or reality? Polit. Stud. 71, 145–174 (2021).

Baumgartner, H. A. et al. How to build up big team science: a practical guide for large-scale collaborations. R. Soc. Open. Sci. 10, 230235 (2023).

Michelson, M. & Reuter, K. The significant cost of systematic reviews and meta-analyses: a call for greater involvement of machine learning to assess the promise of clinical trials. Contemp. Clin. Trials Commun. 16, 100443 (2019).

Dicks, L. V., Walsh, J. C. & Sutherland, W. J. Organising evidence for environmental management decisions: a ‘4S’ hierarchy. Trends Ecol. Evol. 29, 607–613 (2014).

Maseroli, E. et al. Outcome of medical and psychosexual interventions for vaginismus: a systematic review and meta-analysis. J. Sex. Med. 15, 1752–1764 (2018).

Kothari, A., McCutcheon, C. & Graham, I. D. Defining integrated knowledge translation and moving forward: a response to recent commentaries. Int. J. Health Policy Manag. 6, 299–300 (2017).

Franconeri, S. L., Padilla, L. M., Shah, P., Zacks, J. M. & Hullman, J. The science of visual data communication: what works. Psychol. Sci. Publ. Interest 22, 110–161 (2021).

Fitzgerald, K. G. & Tipton, E. The meta-analytic rain cloud plot: a new approach to visualizing clearinghouse data. J. Res. Educ. Eff. 15, 848–875 (2022).

Acknowledgements

We appreciate the talents of J. Marchment in creating the original draft of the illustration of the bottom-up and top-down CDA synthesis pathways, which eventually became (a very different-looking) Fig. 3. D.C.W. is supported by a Chercheur-Boursier Award from the Fonds de recherche du Québec–Santé.

Author information

Contributions

J.K.S. is the lead author; all other authors are listed in (inverse) professional rank, and alphabetically within rank. All authors reviewed and/or edited the manuscript before submission.

Ethics declarations

Competing interests

J.K.S. is often a principal investigator on grant applications focused on syntheses of impact, consistency, credibility and/or inclusivity for psychological interventions. C.L.B. is a member of the APA Division 12 Committee on Science and Practice. The other authors declare no competing interests.

Peer review

Peer review information

Nature Reviews Psychology thanks Jonas Dora, Michael Hengartner and Elizabeth Tipton for their contribution to the peer review of this work.

About this article

Cite this article

Sakaluk, J.K., De Santis, C., Kilshaw, R. et al. Reconsidering what makes syntheses of psychological intervention studies useful. Nat Rev Psychol 2, 569–583 (2023). https://doi.org/10.1038/s44159-023-00213-9

DOI: https://doi.org/10.1038/s44159-023-00213-9