Abstract

Crowdsourcing involves the use of annotated labels with unknown reliability to estimate ground truth labels in datasets. A common task in crowdsourcing involves estimating reliabilities of annotators (such as through the sensitivities and specificities of annotators in the binary label setting). In the literature, beta or dirichlet distributions are typically imposed as priors on annotator reliability. In this study, we investigated the use of a neuroscientifically validated model of decision making, known as the drift-diffusion model, as a prior on the annotator labeling process. Two experiments were conducted on synthetically generated data with non-linear (sinusoidal) decision boundaries. Variational inference was used to predict ground truth labels and annotator related parameters. Our method performed similarly to a state-of-the-art technique (SVGPCR) in prediction of crowdsourced data labels and prediction through a crowdsourced-generated Gaussian process classifier. By relying on a neuroscientifically validated model of decision making to model annotator behavior, our technique opens the avenue of predicting neuroscientific biomarkers of annotators, expanding the scope of what may be learnt about annotators in crowdsourcing tasks.

Similar content being viewed by others

Introduction

Crowdsourcing involves the use of a crowd (a collection of annotators) to label datasets, and generally assumes that reliabilities of annotators in the crowd are unknown1. In the past few decades, crowdsourcing has proliferated to many domains, including medicine and public health2,3, physics and engineering4,5,6, and behavioral science.7 Novel applications of crowdsourcing models with machine learning involve scoring of histological and immunochemistry images8,9, detection of gravitational waves10 and flood forecasting11.

In crowdsourcing, an important task is to estimate annotator reliability. In the case where annotators must provide binary labels to data (e.g., 0 or 1), annotator reliability may be understood through the sensitivity and specificity of each annotator (a confusion matrix may more generally be used in the non-binary case)12. A common objective is to use the annotated labels to build a classifier to generalize to new data, which typically involves use of the sensitivities and specificities of the annotators8,12. Frequentist approaches directly estimate the sensitivities and specificities of annotators (or the confusion matrix), such as through the Expectation Maximization algorithm12. In the Bayesian approach, beta or dirichlet distributions are typically used as priors to the confusion matrix of the annotators8,12,13.

In this paper, we consider the case where annotators must provide binary labels to data in a crowdsourcing task. Rather than imposing a beta or dirichlet distribution as a prior on annotator reliability, we assume that annotators provide labels based off of a model of decision making known as the drift-diffusion model. Drift-diffusion models have been studied extensively in the context of human decision making in neuroscience and psychology14,15. Drift-diffusion models apply to two-choice decisions, where the decision maker most make a decision between two options, typically in a matter of seconds16,17. Drift-diffusion models have popularized in neuroscience due to studies showing that drift-diffusion parameters have neurological correlates with brain regions such as the prefrontal cortex, subthalamic nucleus and basal ganglia16,18, and correlate to dopaminergic activity in perceuptual decision making19.

To the knowledge of the authors, no related work considers psychological or neuroscientifically validated models as priors in crowdsourcing tasks; however, there is novel and emerging (albeit nascent) literature merging crowdsourcing in the context of neuroscience and psychology20. In previous literature, functional near-infrared spectroscopy (fNIRS) in conjunction with brain-computer interface (BCI) integrates neuroscience into crowdsourcing tasks21. Crowdsourcing tasks have also been used to collect psychological and neurological properties of annotators, which allow for determination of behavioral factors of the annotators. One study implemented an alcohol purchase task via crowdsourcing platform Amazon Mechanical Turk to identify behavioral economic factors of alochol misuse22. Other examples further include possibilities for cross-sectional designs for addiction science23,24 and even other health related challenges25,26, including autism27. Novel literature has also studied synchrony related metrics via brain coupling between individuals28. Finally, as may be inferred from large language models such as ChatGPT, the advent of large crowdsourced datasets have allowed advances in psycholinguistics and semantic models (see29 for a review).

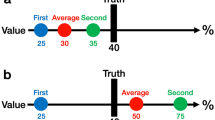

Drift-diffusion models are illustrated as follows. Consider a decision making task, where the decision maker must make a decision between two options. In drift-diffusion models, it is assumed that the decision making process may be modeled by a latent stochastic process. This process is informally known as the ’evidence level’. Intuitively, the value of this process at any given time is interpreted as the amount of evidence the decision maker has obtained in making their decision. Further, it is assumed that this process evolves throughout time according to a drift-diffusion process, and terminates when the process reaches either an upper boundary or lower boundary. A common convention is that termination at the upper and lower boundaries respectively correspond to correct and incorrect decisions being made (Fig. 1). The evidence level, which evolves according to a drift-diffusion process, is specified by the drift rate (\(\mu\)) and the diffusion rate (\(\sigma\)). The evidence level (denoted X(t), where t is time) evolves in a time period dt according to the equation below (Fig. 2):

where W(t) is a Wiener Process (i.e. W(t) is normally distributed with mean zero and variance t).

This paper presents a framework for the usage of drift-diffusion models in a crowdsourcing task as a prior on annotator decision making. Specifically, it is assumed that annotators in a binary labeling task produce labels according to drift-diffusion processes that depend on the ground truth labels. Variational inference is used to obtain estimates on annotator parameters (such as the drift and diffusion rates), as well as estimates of ground-truth labels. The technique additionally allows for construction of a Gaussian process classifier, so that labels may be predicted from data not labeled by annotators. While the binary labeling case is considered as the first step for this new framework (such as in seminal work work in crowdsourcing12), the analysis of the non-binary label case is outside the scope of this study and considered an item for future work (see discussion for notes on the computational complexity in the non-binary case and additional future work).

The drift-diffusion model of decision making in a two-choice task. Two hypothetical decision-making trials are displayed (Trial 1 and Trial 2). For each trial, the evidence level starts off at the offset. The evidence level evolves throughout time according to a drift diffusion process. The process terminates when the evidence level reaches either the upper boundary or lower boundary, after which a decision is made. The time for the process to reach reach the upper or lower boundary is known as the decision time. Termination of the process at the upper boundary generally corresponds to a correct decision being made. Termination of the process at the lower boundary generally corresponds to an incorrect decision being made.

Sample paths of a drift diffusion process with positive and negative drift (which bias the processes upwards and downwards respectively), simulated with 1000 steps and a time spacing of 0.001 seconds. (A) Three random sample paths are simulated of a drift diffusion process with drift rate of.01 and diffusion rate of 2. (B) Three random sample paths are simulated of a drift diffusion process with drift rate of \(-0.01\) and diffusion rate of 1.

Mathematical description of crowdsourcing problem

Consider a set of inputs \(x_i \in \mathbb {R}^m\) (\(1 \le i \le n\), \(m \ge 1\)) with unknown binary ground truth labels \(z_i\) (i.e. \(z_i = 0\) or \(z_i = 1\)). Let there be k annotators who annotate each input \(x_i\), providing a binary label \(y^j_i\), where j denotes the j-th annotator (\(1 \le j \le k\)). Drift-diffusion models generally provide parameters specific to a task. It is therefore assumed that conditional on the ground truth label (e.g., a ground truth recognition task), annotators produce labels according to a drift-diffusion process (Figure 3), which in particular is conditionally independent of the input. Assume that the offset, upper boundary and lower boundary for all annotators are given by 0, +1 and −1, so that the drift and diffusion parameters are the only unknown parameters of the annotators. Let \(\mu ^j_0\) and \(\sigma ^j_0\) denote the respective drift and diffusion parameters of annotator j conditional on \(z = 0\), and similarly, let \(\mu ^j_1\) and \(\sigma ^j_1\) denote the respective drift and diffusion parameters of annotator j conditional on \(z = 1\). The following objectives are considered in the manuscript.

-

1.

Determine the drift-diffusion parameters \(\mu ^j_0,\sigma ^j_0,\mu ^j_1,\sigma ^j_1\) for every annotator j.

-

2.

Determine the sensitivity \(P(y^j = 1|z^j=1)\) and specificity \(P(y^j=0|z^j=0)\) for each annotator j.

-

3.

For each input \(x_i\), determine a probabilistic label \(p_i = P(z_i = 0)\).

-

4.

Build a classifier \(f:\mathbb {R}^m \rightarrow \{0,1\}\) that assigns a label \(z=f(x)\) for any input x, where x need not be in the crowdsourced dataset.

Generation of annotator labels. For simplicity, superscripts and subscripts identifying the annotator, input and ground truth are dropped. An image x is crowdsourced to an annotator to be labeled as a cat (label y = 0) or dog (label y = 1). Conditional on the ground truth z of image x, the annotator generates a label according to drift diffusion process. If the image is a cat (\(z = 0\)), a drift-diffusion process occurs with drift and diffusion rates \(\mu _0\) and \(\sigma _0\) respectively, with a correct label (\(y=z=0\)) being generated if the process hits the upper boundary. If the image is a dog (\(z=1\)), a drift-diffusion process occurs with drift and diffusion rates \(\mu _1\) and \(\sigma _1\) respectively, with a correct label (\(y=z=1\)) being generated if the process hits the upper boundary. The drift and diffusion rates are independent of the input, conditional on the ground truth.

Bayesian implementation

Following similar approaches12,13, the log-likelihood of the observed data is shown below. Subsequently, the log likelihood is used for variational inference.

Derivation of log likelihood

For ease of notation, let \(\textbf{Y}_i = \{y_i^j\}_{j=1}^k\), \(\textbf{Y} = \{\textbf{Y}_i \}_{i=1}^n\) and \(\textbf{X} = \{x_i\}_{i=1}^n\). Define \(\varvec{\mu }_0 = \{ \mu _0^j \}_{j=1}^k\), \(\varvec{\mu }_1 = \{ \mu _1^j \}_{j=1}^k\), \(\varvec{\sigma }_0 = \{ \sigma _0^j \}_{j=1}^k\), and \(\varvec{\sigma }_1 = \{ \sigma _1^j \}_{j=1}^k\). Let \(\textbf{p} = \{p_i\}_{i=1}^n\), where \(p_i = \mathbb {P}(z_i=1)\). Note that by application the optional stopping theorem for a bounded drift diffusion process (see Ross (1996)30), the sensitivities and specificities for annotator j may be obtained conditional on the drift and diffusion parameters (see Supplemental Information) as follows, where S denotes the logistic function (e.g., \(S(a) = \frac{1}{1+e^{-a}}\)).

The log-likelihood may be derived as (see Supplemental Information),

Probabilistic graph of crowdsourcing model with variational inference. For simplicity, subscripts and superscripts are excluded. Orange circles denote observed variables, whereas grey circles are unobserved. Conditional on the input x, a gaussian process classifier f produces the unobserved ground truth label z. For annotator j, conditional on the ground truth z (i.e., \(z=0\) or \(z=1\)), the drift and diffusion parameters (\(\mu ^j_0\) and \(\sigma ^j_0\) when \(z=0\), or \(\mu ^j_1\) and \(\sigma ^j_1\) when \(z=1\)) are used in a drift-diffusion model to generate the label y.

Gaussian process classifier

Similar to related work in crowdsourcing10, a Gaussian process classifier f is chosen to relate inputs to ground truth labels (see Fig. 4). Inputs are denoted by \(x \in \mathbb {R}^m\) so that \(f: \mathbb {R}^m \xrightarrow {} \mathbb {R}\) is defined with mean function \(E[f(x)] = 0\) and covariance function (kernel) \(K(x,x') = Cov[f(x),f(x')]\). A typical choice of covariance function is the radial basis function (i.e. \(Cov(f(x),f(x')) = K_{\delta ,\gamma }(x,x') = \delta ^2 \exp {(-\gamma ||x-x'||^2})\), with |.| denoting the standard Euclidean norm. In this section, we do not define the choice of kernel. We denote \(\varvec{F} = \{f_i\}_{i=1}^n\), which represents the values of the Gaussian Process at the inputs (i.e. each \(f_i = f(x_i)\)). Finally, a logistic function relating the gaussian process to the true outputs is assumed, i.e.,

Variational inference

Variational inference simplifies the objective of maximizing the likelihood of the observations by maximizing the evidence lower bound (see10,31). Variational inference may be used to find an approximation \(q(\varvec{Z},\varvec{F},\varvec{\mu }_0,\varvec{\mu }_1,\varvec{\sigma }_0,\varvec{\sigma }_1)\) of the true posterior distribution \(p(\varvec{Z},\varvec{F},\varvec{\mu }_0,\varvec{\mu }_1,\varvec{\sigma }_0,\varvec{\sigma }_1|\varvec{Y})\). This is done by finding the distribution q that maximizes the evidence lower bound (ELBO), shown below on the right hand side of the equation below,

Prior distributions

Each latent variable \(f_i\) is assumed to be normally distributed with mean \(m_i\) and variance \(v_i^2\). Uniform priors are put on the variables \(\mu _0^j,\mu _1^j,\sigma _0^j\) and \(\sigma _1^j\) with supports \([m_{l0},m_{u0}]\),\([m_{l1},m_{u1}]\),\([s_{l0},s_{u0}]\),\([s_{l1},s_{u1}]\) respectively, where \(m_{l0},m_{u0},m_{l1},m_{u1},s_{l0},s_{u0},s_{l1},s_{u1}>0\).

Evidence lower bound

The mean field approximation is used, restricting the parametric form of \(q(\varvec{Z},\varvec{F},\varvec{\mu }_0,\varvec{\mu }_1,\varvec{\sigma }_0,\varvec{\sigma }_1)\) (eq. 7),

where \(\mathbf{N}(f_i|m_i',v_i'^2)\) denotes the PDF of a normal distribution with mean \(m_i'\) and variance \(v_i'^2\) evaluated at \(f_i\), and \(\mathbf {U}(a,b)\) denotes a uniform distribution with support [a, b]. The ELBO may be simplified as,

Additivity of the logarithm and linearity of the expectation yields,

Objective function

By calculation of Eq. (9) (see Supplemental Information), the objective is to vary the variational parameters to maximize the objective function (i.e., the ELBO) below,

Upon obtaining the optimal parameters from the ELBO, the distributional parameters may be estimated (see Supplemental Information),

Experiments

Overview of experiments

Two experiments were conducted on synthetic data in order to test the efficacy of the crowdsourcing technique. Synthetic data was generated with binary labels on a non-linear sinusoidal decision boundary (see “Generation of synthetic data” below). Reaction time data and annotations for ten hypothetical annotators were generated for the two experiments on different distributions of the annotator drift-diffusion parameters (see “Generation of annotator labels and reaction times”). Following, the crowdsourcing technique was implemented (see “Implementation of crowdsourcing technique”) with two unique cost functions (see Supplemental Information), and results were compared to a state-of-the-art technique, SVGPR8. Performance of the techniques were evaluated by the mean-squared errors of recovered drift-diffusion parameters, annotator sensitivity and specificity, and accuracy, sensitivity and specificity of the technique itself (see “Evaluating model performance” for more details).

Generation of synthetic data

In order to test the efficacy of the proposed crowdsourcing framework, two experiments were conducted on synthetic data, wherein annotator expertise and inputs varied. Corresponding to inputs, two-hundred points \(\{x_i = (x_{1i},x_{2i})\}_{i=1}^{200}\) were sampled randomly (via the uniform distribution) on the grid \([0,2\pi ]X[-1,1]\) for each of the two experiments. In order to measure the technique’s ability to recover a non-linear decision boundary, ground truth labels for the inputs were assigned \(z_i=0\) if \(sin(x_{1i}) < x_{2i}\) and \(z_i=1\) otherwise (Fig. 5) in both experiments. For every point \(x_i\), a corresponding label and reaction time was generated for each annotator by sampling from their corresponding drift-diffusion model conditioned on \(z_i\) defined by their drift and diffusion parameters, generated as shown below.

Generation of annotator labels and reaction times

For each input \(x_i\), conditional on the ground truth label \(z_i\), an annotator label and reaction time was generated for each annotator as follows. The process X(t) started at the origin (i.e. \(X(0) = 0\)) and was updated with time-step \(dt = 0.01\) according to the following equation,

where Y(t) was drawn from a normal distribution with mean zero and variance dt (i.e., Y(t) represented the Wiener process), and \(\mu\) and \(\sigma\) represented the drift and diffusion parameters for each annotator (which was conditionally dependent on \(z_i\)). The process terminated at the minimum time t when \(|X(t)| \ge 1\) (where the annotator label was set to the ground truth label \(z_i\) if and only if \(X(t) \ge 1\)).

Annotators in Experiment 1

Ten annotators, defined by ground truth dependent drift and diffusion parameters, were generated (Table 1a). Conditional on \(z=0\), annotator drift and diffusion parameters were drawn from uniform distributions with supports [0.1, 0.5] and [0.5, 0.9] respectively. Similarly, conditional on \(z=1\), annotator drift and diffusion parameters were drawn from uniform distributions with supports [0.1, 0.5] and [0.5, 0.9] respectively. The assumption of uniformly distributed parameters in drift-diffusion models has previously been made in the literature32.

Annotators in Experiment 2

Ten annotators, defined by ground truth dependent drift and diffusion parameters, were generated (Table 1a). Conditional on \(z=0\), annotator drift and diffusion parameters were drawn from uniform distributions with supports [0.1, 0.4] and [0.5, 0.7] respectively. Similarly, conditional on \(z=1\), annotator drift and diffusion parameters were drawn from uniform distributions with supports [0.2, 0.5] and [0.5, 0.9].

Implementation of crowdsourcing technique

Three methods were implemented in both experiments. Method 1 (see Supplemental Information) involved maximization of the ELBO with gradient ascent, with a regularizer term in the ELBO to enforce reaction time statistics. Method 2 (see Supplemental Information) involved maximization of the ELBO with gradient ascent, followed by rescaling of the drift and diffusion parameters based on reaction time data constraints. Method 3 involved implementation of a technique in the literature known as scalable variational Gaussian processes for crowdsourcing (SVGPCR)8, with uniform priors on the sensitivity and specificities of annotators. Method 3 served as a control experiment to assess the performance of methods 1 and 2 with respect to inference of ground-truth labels and annotator sensitivities and specificities.

Gradient ascent

The following implementation was used for all three methods across the two experiments. For maximization of the ELBO, gradient ascent was implemented in Python with the package autograd with learning rate \(\alpha = 0.01\). Initial values were set to 0.5 for all parameters. All variational parameters were constrained to be between 0 and 1. This was enforced by adding the following function to the ELBO (where v denotes a vector of parameters, for example, \(\mathbf {\mu _0}\)),

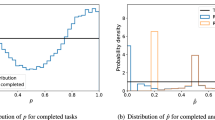

Simulations were implemented with \(\beta = 30\). Gradient ascent terminated either when three hundred iterations were completed, or when the change in the ELBO across iterations was less than 0.1 (whichever came later). In all cases, this led to three hundred iterations of gradient ascent (Fig. 6).

Gaussian process classifier

The values of the Gaussian process (\(f_i\)) obtained from variational inference were used with the inputs (\(x_i\)) to train the Gaussian process classifier. Specifically, a Gaussian process regressor relating \(x_i\) to \(f_i\) was trained in Python with the package Sklearn33 implementing algorithm 2.1 in Rasmussen34. A radial basis function (RBF) summed with a white kernel was chosen as the underlying kernel of the Gaussian process. During training, the length scale bounds for the RBF kernel and white kernel respectively were constrained between .001 to 1000 .001 to 10. Upon obtaining the kernel parameters, training of the Gaussian process classifier terminated. Given a point \(x^*\), with predicted-value predicted \(f^*\), the label \(z^* = 1\) was assigned if and only if \(S(f^*) > 0.5\), i.e., \(f^* > 0\).

Evaluating model performance

The following metrics were reported to evaluate the efficacy of the crowdsourcing framework: (1) the mean-squared error (MSE) of the recovered drift and diffusion parameters, (2) the MSE of the annotator sensitivity and specificity, (3) the overall accuracy, sensitivity and specificity of the crowdsourcing technique on the predicted labels of the inputs and (4) the accuracy, sensitivity and specificity of the crowdsourcing technique on a classification task. The classification task involved testing of the Gaussian process classifier on 10,000 points uniformly sampled on \([0,2\pi ]X[-1,1]\). These points were held constant across the three methods, but resampled for each of the two experiments. Ground-truth labels were as described in Fig. 7.

Ground truth labels for crowdsourcing task. In each experiment, two hundred inputs \(\{x_i = (x_{1i},x_{2i})\}_{i=1}^{200}\) were generated from uniformly sampling on the grid \([0,2\pi ]X[-1,1]\) above. A nonlinear decision boundary, \(sin(x_1)\) separates the ground truth labels \(z=0\) and \(z=1\), so that \(z_i = 0\) if \(sin(x_{1i}) < x_{2i}\), and \(z_i=1\) otherwise.

Evaluation of the ELBO from experiment 1 (A–C) and experiment 2 (D–F) during gradient ascent. The vertical axis depicts the value of the ELBO, whereas the horizontal axis depicts the iteration number in gradient ascent. The number of iterations in gradient ascent was the latter of 300 iterations, or a change in the ELBO of less than 0.1 across two subsequent iterations. In all cases, this resulted in 300 iterations of gradient ascent.

Visualization of Gaussian process classifier predictions for the three methods in experiment 1 (A–C) and experiment 2 (D–F) on the sinusoidal dataset. The horizontal axis (\(x_1\)) refers to the first dimension of the input, while the vertical axis (\(x_2\)) refers to the second dimension. Gaussian process predicted labels of 0 are depicted in orange; predicted labels of 1 are depicted in blue.

Simulation results

Method 2 yielded lower MSE values (Table 1) for the drift parameters than method 1 in experiment A (0.0684 vs. 0.0755 respectively for \(\mu _0\), and 0.0841 vs. 0.126 respectively for \(\mu _1\)). In experiment B, method 2 resulted in lower MSE for \(\mu _0\) than method 1 (0.0296 vs. 0.0765 respectively), but resulted in a slightly greater MSE for \(\mu _1\) (0.0319 vs. 0.0305 respectively). On the other hand, method 2 yielded higher MSE values for the diffusion parameter in both experiment A (0.114 vs. 0.0903 respectively for \(\sigma _0\), and 0.126 vs. 0.0841 respectively for \(\sigma _1\)) and experiment B (0.0907 vs. 0.0698 respectively for \(\sigma _0\), and 0.134 vs. 0.121 respectively for \(\sigma _1\)). For both experiments across all three methods, the maximum MSE of annotator sensitivity was 0.156 (obtained in experiment A, method 3) and the maximum MSE of annotator specificity was 0.0702 (also obtained in experiment A, method 3).

Accuracy, sensitivity and specificity on crowdsourced labels ranged between 97.9 to 99.1 percent across the two experiments and three methods. In experiment A, the highest metrics were achieved in methods 1 and 2; in experiment B, all three methods achieved the same metrics. Evaluation of the Gaussian process classifier (Fig. 6) provided slightly lower accuracies, sensitivities and specificities, which ranged between 87.8 to 97.6\(\%\) across both experiments and the three methods.

Discussion

Distributional assumptions in crowdsourcing, when made about annotators, typically focus on priors of the sensitivity and specificity of annotators (or more generally, priors on a confusion matrix of annotators), treating models of decision making that may be applied to annotators as disparate from the crowdsourcing task. This study sought to serve as a preliminary investigation into the usage of a neuroscientifically validated model of decision making to model behavior of annotators in a crowdsourcing task. Specifically, results showed that when drift-diffusion models were the generative process for annotator labels, variational inference allowed for recovery of annotator parameters with small MSE (<0.0841 for the drift parameter and <0.134 for the diffusion parameter) and similar accuracies to a state-of-the-art (SVGPR8) approach (accuracies were either greater or the same in method 1 and method 2 in all cases). To the authors’ knowledge, application of neuroscientifically validated models of decision making have not been previously applied to crowdsourcing tasks.

The benefit of usage of models of decision making in crowdsourcing tasks relates to the ability to learn extensively about annotators. Typically, only the reliabilities of annotators are learned in crowdsourcing tasks. Drift-diffusion modeling makes it possible to learn neurological correlates. Studies show that drift-diffusion parameters can be used to predict neural activity15,35. Given the integrative nature of machine learning with computational neuroscience, neural activity of accurate annotators in turn may be useful in development of machine learning algorithms, in line with literature showing the synergetic nature between the two fields36. An additional benefit is neurological correlates may be used as selection criter for annotators to be used in future labeling tasks, which may not be the same as the original crowdsourcing task. One study found that activity in the frontal eye fields correlate to drift-diffusion parameters in annotators37. Annotators ascertained to have greater frontal eye field activity may be selected in future tasks related to visual discrimination.

An interesting facet of the drift-diffusion model is the implicit relationship between response time and accuracy. The relationship can be seen by the following two observations: (1)The drift rate represents the average change in the process with respect to time; therefore, a larger drift rate (with the diffusion rate held constant) implies earlier termination of the process in expectation. (2) Larger drift rates imply greater accuracy. Consider an annotator annotator with drift and diffusion rate (conditional on \(z=0\)) given by \(\mu _0\) and \(\sigma _0\) respectively. The specificity of the annotator, given by \(S(\frac{2\mu _0}{\sigma _0^2})\), is monotonically increasing in the drift rate \(\mu _0\). Observations (1) and (2) together allude that an annotator with lower average reaction times may be deduced to have a higher drift rate, and hence accuracy, when controlling for the diffusion parameter. In practice, however, this may not always be the case. For example, an annotator who arbitrarily assigns a label to every input in a decision making task may have low average reaction time, but also low accuracy. Therefore, the assumption that annotators make decisions according to a drift-diffusion model should be validated in practical applications. It is also worth noting that the diffusion rate plays a role in the reaction times of the annotators. The optional stopping theorem relates the expected value of the drift-diffusion process at the reaction time (denoted \(E[X(\tau )]\)) to the reaction time \((\tau )\) and drift rate (\(\mu\)), i.e. \(E[X(\tau )] = \mu E[\tau ]\). Because \(E[X(\tau )]\) is monotonically decreasing in the diffusion rate, increasing the diffusion rate (while fixing the drift rate) corresponds to lower reaction times.

Limitations of the study are considered. Firstly, experiments relied upon the synthetic data, which relied upon the underlying assumption that annotator decisions were indeed made through the drift-diffusion model of decision making. While literature supports the drift-diffusion model as a neuroscientifically validated model of decision making14,15,17,35, a robust approach would be to test the performance of the technique on legitimate data, which may contain noise. For example, in the drift-diffusion model, response times are dependent solely on the decision making process. In practice, response times may not be dependent on the decision making process. For example, consider a hypothetical situation where a loss of internet connection occurs while an annotator completes an online annotation task from a personal device, such as a smart-phone or laptop. The loss of internet connection may result in a lag-period in their response, distorting the dynamics of the decision making process. The decision making process effectively incurs a discontinuity, even though the drift-diffusion model assumes a continuous-time process. It is also possible that more complex models, such as those that consider the drift and diffusion rates to be functions of time, may be warranted, which are not considered in the scope of this study. Therefore, it is of utmost significance that future work consider non-synthetic data, in order to evaluate the predictive power of the technique in practice.

Another limitation of the study is the increased computational complexity when compared to traditional crowdsourcing techniques. In this study, four parameters were introduced (\(\mu _0,\mu _1,\sigma _0,\sigma _1\)) per annotator into the ELBO, increasing the number of parameters per annotator by two to the traditional approach (which only considers the sensitivity and specificity, such as SVGPR). Therefore in the binary case, such as when compared to SVGPR, this technique imposes an additional 2N parameters, where N is the number of annotators. In the non-binary case, such as with R labels, SVGPR makes use of an RxR confusion matrix for each annotator, resulting in \(R^2\) parameters per annotator.8 In our case, however, each label corresponds to a unique drift diffusion process per annotator; hence, this results in an additional R parameters per annotator. Therefore, in the case of R labels and N annotators, SVGPR uses \(R^2N\) parameters for annotator reliability, whereas our approach uses \((R^2 + R)N\) parameters (i.e., RN more parameters). As the number of annotators grow, these considerations may add significantly more parameters, which may significantly increase computation time. In terms of scalability, this means that in I iterations of gradient ascent, RNI additional derivatives must be calculated in the proposed technique. These additional computations should be kept in mind when deciding in which approach to crowdsourcing to take.

It is also worth noting that the drift-diffusion model introduced in this paper assumes constant drift and diffusion parameters. More general models may assume that the drift and diffusion rates are functions of time38,39. Such a model would more accurately incporate changes in decision making, as well as annotator learning that might occur in a crowdsourcing task. The drift-diffusion model also treats the decision making process soley dependent on the individual, but if annotators collaborate, the model may not include interactions between annotators. The framework presented also assumes that, conditional on the ground truth, annotator decisions are independent of the ground truth, and generated from the drift-diffusion model. In practice, this may not be the case, as two distinct inputs with the same ground truth label may contain unique information involved in annotator decision making.

Futher challenges and limitations involved with the usage of drift-diffusion models in crowdsourcing tasks may be dependent on the specific crowdsourcing task. For example, it is possible that some crowdsourcing tasks may dynamically adjust in difficulty over time, introducing dependency on previous annotations of the annotator; however, the dependency of such a task on previous annotations is outside the scope of this study. Additionally, cognitive fatigue and motivational factors (i.e., the amount of value different annotators attribute to compensation for participation in crowdsourcing tasks, or perceived importance of the crowdsourcing task), may result in atypical annotator on a case-by-case basis. This factors should be kept in mind when designing crowdsourcing tasks and implementing crowdsourcing models.

While this study did not involve human participants, a number of ethical considerations may be of relevance when obtaining crowdsourcing data for the purpose of drift-diffusion modeling in future work. Due to the literature linking drift-diffusion parameters to neurological correlates and behavior, informed consent should be obtained from participants in crowdsourcing tasks wherein drift-diffusion modeling is implemented. In particular, it is suggested that participants be notified about the intended use of their data, provisions of withdrawal of their data, and details about the objectives in relation to their data. Data privacy and anonymization should be implemented wherever possible. Study protocols should be implemented in accordance to a standard framework, such as the Declaration of Helsinki40. Further, the authors believe that dissemination of results from crowdsourcing tasks implementing drift-diffusion modeling should protect the anonymity of individuals. This can act to ensure prevention of misuse of annotator data, such as for behavioral based marketing purposes or monetization of annotator data.

Finally, in addition to addressing ethical considerations, a comprehensive comparison of the performance of various models of decision making (such as the ballistic linear accumulator41 and reinforcement learning models42) and crowdsourcing priors in the literature should be conducted.

Data availability

All data generated in the experiments will be made publicly available on Open Science Framework (OSF) pending acceptance of the manuscript.

References

Sheng, V. S. & Zhang, J. Machine learning with crowdsourcing: A brief summary of the past research and future directions. Proc. AAAI Conf. Artif. Intell. 33, 9837–9843. https://doi.org/10.1609/aaai.v33i01.33019837 (2019).

Ranard, B. L. et al. Crowdsourcing-harnessing the masses to advance health and medicine, a systematic review. J. Gen. Intern. Med. 29, 187–203. https://doi.org/10.1007/s11606-013-2536-8 (2014).

Thawrani, V., Londhe, N. D. & Singh, R. Crowdsourcing of medical data. IETE Tech. Rev. 31, 249–253. https://doi.org/10.1080/02564602.2014.906971 (2014).

Zevin, M. et al. Gravity spy: Integrating advanced Ligo detector characterization, machine learning, and citizen science. Classic. Quantum Grav. 34, 064003. https://doi.org/10.1088/1361-6382/aa5cea (2017).

Using crowdsourcing for scientific analysis of industrial tomographic images. ACM Trans. Intell. Syst. Technol. 7, 1–25 https://doi.org/10.1145/2897370 (2016).

LaToza, T. D. & van der Hoek, A. Crowdsourcing in software engineering: Models, motivations, and challenges. IEEE Softw. 33, 74–80. https://doi.org/10.1109/MS.2016.12 (2016).

Litman, L., Robinson, J. & Abberbock, T. Turkprime.com: A versatile crowdsourcing data acquisition platform for the behavioral sciences. Behav. Res. Methods 49, 433–442. https://doi.org/10.3758/s13428-016-0727-z (2017).

López-Pérez, M. et al. Learning from crowds in digital pathology using scalable variational gaussian processes. Sci. Rep. 11, 11612. https://doi.org/10.1038/s41598-021-90821-3 (2021).

Irshad, H. et al. Crowdsourcing scoring of immunohistochemistry images: Evaluating performance of the crowd and an automated computational method. Sci. Rep. 7, 43286. https://doi.org/10.1038/srep43286 (2017).

Ruiz, P., Morales-Ãlvarez, P., Coughlin, S., Molina, R. & Katsaggelos, A. K. Probabilistic fusion of crowds and experts for the search of gravitational waves. Knowl.-Based Syst. 261, 110183. https://doi.org/10.1016/j.knosys.2022.110183 (2023).

Puttinaovarat, S. & Horkaew, P. Flood forecasting system based on integrated big and crowdsource data by using machine learning techniques. IEEE Access 8, 5885–5905. https://doi.org/10.1109/ACCESS.2019.2963819 (2020).

Raykar, V. C. et al. Learning from Crowds (2010).

Li, S.-Y., Huang, S.-J. & Chen, S. Crowdsourcing aggregation with deep Bayesian learning. Sci. China Inf. Sci. 64, 130104. https://doi.org/10.1007/s11432-020-3118-7 (2021).

Myers, C. E., Interian, A. & Moustafa, A. A. A practical introduction to using the drift diffusion model of decision-making in cognitive psychology, neuroscience, and health sciences. Front. Psychol.https://doi.org/10.3389/fpsyg.2022.1039172 (2022).

Gupta, A. et al. Neural substrates of the drift-diffusion model in brain disorders. Front. Comput. Neurosci.https://doi.org/10.3389/fncom.2021.678232 (2022).

Ratcliff, R. & Tuerlinckx, F. Estimating parameters of the diffusion model: Approaches to dealing with contaminant reaction times and parameter variability. Psychon. Bull. Rev. 9, 438–481. https://doi.org/10.3758/BF03196302 (2002).

Ratcliff, R. & McKoon, G. The diffusion decision model: Theory and data for two-choice decision tasks. Neural Comput. 20, 873–922. https://doi.org/10.1162/neco.2008.12-06-420 (2008).

Herz, D. M., Zavala, B. A., Bogacz, R. & Brown, P. Neural correlates of decision thresholds in the human subthalamic nucleus. Curr. Biol. 26, 916–920. https://doi.org/10.1016/j.cub.2016.01.051 (2016).

Beste, C. et al. Dopamine modulates the efficiency of sensory evidence accumulation during perceptual decision making. Int. J. Neuropsychopharmacol. 21, 649–655. https://doi.org/10.1093/ijnp/pyy019 (2018).

World medical association declaration of helsinki. JAMA 310, 2191. https://doi.org/10.1001/jama.2013.281053 (2013).

Ruotsalo, T., Mäkelä, K. & Spapé, M. Crowdsourcing affective annotations via FNIRS-BCI. IEEE Trans. Affect. Comput. 15, 297–308. https://doi.org/10.1109/TAFFC.2023.3273916 (2024).

Morris, V. et al. Using crowdsourcing to examine behavioral economic measures of alcohol value and proportionate alcohol reinforcement. Exp. Clin. Psychopharmacol. 25, 314–321. https://doi.org/10.1037/pha0000130 (2017).

Strickland, J. C. & Stoops, W. W. The use of crowdsourcing in addiction science research: Amazon mechanical Turk. Exp. Clin. Psychopharmacol. 27, 1–18. https://doi.org/10.1037/pha0000235 (2019).

Pennington, C. R., Jones, A. J., Tzavella, L., Chambers, C. D. & Button, K. S. Beyond online participant crowdsourcing: The benefits and opportunities of big team addiction science. Exp. Clin. Psychopharmacol. 30, 444–451. https://doi.org/10.1037/pha0000541 (2022).

Wazny, K. Applications of crowdsourcing in health: An overview. J. Glob. Healthhttps://doi.org/10.7189/jogh.08.010502 (2018).

Brabham, D. C., Ribisl, K. M., Kirchner, T. R. & Bernhardt, J. M. Crowdsourcing applications for public health. Am. J. Prevent. Med. 46, 179–187. https://doi.org/10.1016/j.amepre.2013.10.016 (2014).

Washington, P. et al. Validity of online screening for autism: Crowdsourcing study comparing paid and unpaid diagnostic tasks. J. Med. Internet Res. 21, e13668. https://doi.org/10.2196/13668 (2019).

Dikker, S. et al. Crowdsourcing neuroscience: Inter-brain coupling during face-to-face interactions outside the laboratory. NeuroImage 227, 117436. https://doi.org/10.1016/j.neuroimage.2020.117436 (2021).

Keuleers, E. & Balota, D. A. Megastudies, crowdsourcing, and large datasets in psycholinguistics: An overview of recent developments. Q. J. Exp. Psychol. 68, 1457–1468. https://doi.org/10.1080/17470218.2015.1051065 (2015).

Ross, S. Stochastic Processes. 2 edn. (Wiley, 1996).

Blei, D. M., Kucukelbir, A. & McAuliffe, J. D. Variational inference: A review for statisticians. J. Am. Stat. Assoc. 112, 859–877. https://doi.org/10.1080/01621459.2017.1285773 (2017).

Wiecki, T. V., Sofer, I. & Frank, M. J. HDDM: Hierarchical Bayesian estimation of the drift-diffusion model in python. Front. Neuroinform.https://doi.org/10.3389/fninf.2013.00014 (2013).

Pedregosa, F. et al. Scikit-learn: Machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011).

Rasmussen, C. & Williams, C. K. I. Gaussian Processes for Machine Learning (The MIT Press, 2005).

Purcell, B. A. & Palmeri, T. J. Relating accumulator model parameters and neural dynamics. J. Math. Psychol. 76, 156–171. https://doi.org/10.1016/j.jmp.2016.07.001 (2017).

Savage, N. How AI and neuroscience drive each other forwards. Nature 571, S15–S17. https://doi.org/10.1038/d41586-019-02212-4 (2019).

Hanks, T. D. & Summerfield, C. Perceptual decision making in rodents, monkeys, and humans. Neuron 93, 15–31. https://doi.org/10.1016/j.neuron.2016.12.003 (2017).

Lund, S. P., Hubbard, J. B. & Halter, M. Nonparametric estimates of drift and diffusion profiles via Fokker–Planck algebra. J. Phys. Chem. B 118, 12743–12749. https://doi.org/10.1021/jp5084357 (2014).

Fengler, A., Bera, K., Pedersen, M. L. & Frank, M. J. Beyond drift diffusion models: Fitting a broad class of decision and reinforcement learning models with HDDM. J. Cognit. Neurosci. 34, 1780–1805. https://doi.org/10.1162/jocn_a_01902 (2022).

World Medical Association Declaration of Helsinki. JAMA 310, 2191. https://doi.org/10.1001/jama.2013.281053 (2013).

van der Velde, M., Sense, F., Borst, J. P., van Maanen, L. & van Rijn, H. Capturing dynamic performance in a cognitive model: Estimating act-r memory parameters with the linear ballistic accumulator. Top. Cognit. Sci. 14, 889–903. https://doi.org/10.1111/tops.12614 (2022).

Sutton, R. S. & Barto, A. G. Reinforcement Learning: An Introduction. 2 edn. (The MIT Press, 2018).

Author information

Authors and Affiliations

Contributions

SL conceived and designed the study, wrote the manuscript, and conducted the experiments. AK edited the manuscript, and verified the technique, experiments, conclusions and equations. All authors reviewed and approved the final manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Lalvani, S., Katsaggelos, A. Crowdsourcing with the drift diffusion model of decision making. Sci Rep 14, 11311 (2024). https://doi.org/10.1038/s41598-024-61687-y

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-024-61687-y

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.