Abstract

The application of thermodynamic reasoning in the study of learning systems has a long tradition. Recently, new tools relating perfect thermodynamic adaptation to the adaptation process have been developed. These results, known as fluctuation theorems, have been tested experimentally in several physical scenarios and, moreover, they have been shown to be valid under broad mathematical conditions. Hence, although not experimentally challenged yet, they are presumed to apply to learning systems as well. Here we address this challenge by testing the applicability of fluctuation theorems in learning systems, more specifically, in human sensorimotor learning. In particular, we relate adaptive movement trajectories in a changing visuomotor rotation task to fully adapted steady-state behavior of individual participants. We find that human adaptive behavior in our task is generally consistent with fluctuation theorem predictions and discuss the merits and limitations of the approach.

Similar content being viewed by others

Introduction

The study of learning systems with concepts borrowed from statistical mechanics and thermodynamics has a long history reaching back to Maxwell’s demon and the ensuing debate on the relation between physics and information34. Over the last 20 years, the informational view of thermodynamics has experienced great developments, which has allowed to broaden its scope form equilibrium to non-equilibrium phenomena10, 22. Of particular importance are the so-called fluctuation theorems7, 20, 42, which relate equilibrium quantities to non-equilibrium trajectories allowing, thus, to approximate equilibrium quantities via experimental realizations of non-equilibrium processes32, 53. Among the fluctuation theorems, two results stand out, Jarzynski’s equality4, 19, 21 and Crooks’ fluctuation theorem6, 8, as they aim to bridge the apparent chasm between reversible microscopic laws and irreversible macroscopic phenomena29.

The advances in non-equilibrium thermodynamics have recently also led to new theoretical insights into simple learning systems12, 13, 16, 31, 35, 46. Abstractly, thermodynamic quantities like energy, entropy or free energy can be thought to define order relations between states14, 25, which makes them applicable to a wide range of problems. In the economic sciences, for example, such order relations are typically used to define a decision-maker’s preferences over states30. Accordingly, a decision-maker or a learning system can be thought to maximize a utility function, analogous to a physical system that aims to minimize an energy function. Moreover, in the presence of uncertainty in stochastic choice, such decision-makers can be thought to operate under entropy constraints reflecting the decision-maker’s precision31, 34, resulting in soft-maximizing the corresponding utility function instead of perfectly maximizing it. This is formally equivalent to following a Boltzmann distribution with energy given by the utility. Therefore, in this picture, the physical concept of work corresponds to utility changes caused by the environment, whereas the physical concept of heat corresponds to utility gains due to internal adaptation46. Like a thermodynamic system is driven by work, such learning systems are driven by changes in the utility landscape (e.g. changes in an error signal). By exposing learning systems to varying environmental conditions, it has been hypothesized that adaptive behavior can be studied in terms of fluctuation theorems12, 16, which are not necessarily tied to physical processes but are broadly applicable to stochastic processes satisfying certain constraints18.

Fluctuation theorems are usually deployed in statistical mechanics; particularly, the study of nonequilibrium steady states in thermodynamics. In this setting, one normally assumes a probabilistic description of an ensemble of many particles, i.e., the kinds of systems usually considered in statistical thermodynamics. However, as described in41, 42, exactly the same principles and fluctuation theorems also apply to the path of a single particle, leading to stochastic thermodynamics. This suggests that fluctuation theorems may not only be applicable to the statistics of ensembles of many learners, but also when describing the trajectory of a single participant during a learning process.

Although fluctuation theorems have been empirically observed in numerous experiments in the physical sciences1, 5, 11, 28, 37, 44, there have been no reported experimental results relating fluctuation theorems to adaptive behavior in humans or other living beings. Here, we test Jarzynski’s equality and Crooks’ fluctuation theorem experimentally in a human sensorimotor adaptation task. In this context, the fluctuation theorem establishes a linear relationship between the externally imposed utility changes driving the learning process (which are directly related to non-predicted information and energy dissipation46) and the log-probability ratio between forward and backward adaptation trajectories, when exposing participants to the sequence of environments either in the forward or reverse order. Accordingly, such learners can be quantitatively characterized by a hysteresis effect that can also be observed in simple physical systems.

Results

In a visuomotor adaptation task, human participants controlled a cursor on a screen towards a single stationary target by moving a mechanical manipulandum that was obscured from their vision under an overlaid screen—see Fig. 1A. Crucially, in each trial n, the position of the cursor could be rotated with angle \(\theta _n\) relative to the actual hand position so that participants had to adapt when moving the cursor from the start position to the target. To measure participants’ adaptive state, we recorded their movement position at the time of crossing a certain distance from the start position, so that their response could be characterized by an angle \(x_n\). The deviation between participants’ response \(x_n\) and the required movement incurs a sensorimotor loss \(E_n\)24 in trial n, that can be quantified as an exponential quadratic error

that depends on the actual rotation angle \(\theta _n\) set in trial n. The parameter b is a participant-specific parameter allowing for bias due to posture, biomechanics, the mechanics of the manipulandum, or other influences—see Fig. 1D. The loss (1) is taken to be the energy (or negative utility) of a participant’s stochastic response \(X_n = x_n\). For a bounded rational decision-maker26, 27, 31, 39 that optimizes this loss under uncertainty, the optimal pointing behavior after a suitably long adaptation time is described by a Boltzmann equilibrium distribution \(p_n^{eq}\) of the form

for all \(x_n\in A_n\), where the sensorimotor error \(E_n(x_n)\) plays the role of an energy, the free energy term \(F_n = \frac{1}{\beta } \log \int _{A_n} \exp \left( -\beta E_n(x_n) \right) dx_n\) is caused by the normalization, and \(A_n\) is the support of the equilibrium distribution \(p_{n}^{eq}\), which will vary for each participant, as we explain in Sect. A.3.3. See Fig. 1C for a representation of (2). Moreover, the softness-parameter \(\beta\), also known as inverse temperature or precision, controls the trade-off between entropy maximization and energy minimization, essentially interpolating between a purely stochastic choice (\(\beta = 0\)) and a purely rational choice (\(\beta \rightarrow \infty\)) minimizing the energy perfectly.

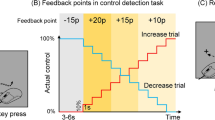

(A) Schematic representation of an experimental trial with deviation angle \(\theta\). The dotted line represents the participant’s hand movement and the continuous line represents the rotated movement observed on the screen. (B) Experimental protocol. The continuous line represents the deviation angles \(\theta\) imposed during one experimental cycle, where trials 1 to 25 constitute the forward process and trials 34 to 58 constitute the backward process. The dotted line represents the beginning of the next cycle. (C) Illustration of the equilibrium distributions (2) with \(b,\theta _n=0\) resulting from the exponential quadratic error (1) and, respectively, \(\beta =1,1.5,2\). The shaded area represents the target, which tolerates, at most, an error of \(2 ^\circ\). (D) Comparison between the equilibrium distributions that we fit using the initial 100 trials (before participants experience any perturbation) and participants’ performance in the washout plateaus between cycles (the sequence of trials with \(\theta = 0\) that separate forward and backward protocol), to check whether participants equilibrate between cycles, as required by the fluctuation theorem. Red shows the normalized error histogram for the in-between plateaus exemplarily for participant 7, green shows the histogram of the fitted equilibrium distribution for the initial block of 100 trials of the same participant. The comparison for all other participants can be found in Fig. 7.

The task consisted of a sequence of target reaching trials, where the rotation angle \(\theta _n\) changed from one trial n to the next trial \(n+1\) according to a given up-down protocol—see Fig. 1B—, so that participants’ responses over trials could be represented by a trajectory \(\varvec{x}=(x_0,x_1,\ldots ,x_N)\). When the environment is changing over trials, we can distinguish cumulative error changes \(\Delta E_{ext}(\varvec{x}) {:=}\sum _{n=0}^{N-1} (E_{n+1}(x_n)-E_{n}(x_n))\) that are induced externally by changes in the environmental parameter \(\theta _n\), from cumulative error changes \(\Delta E_{int}(\varvec{x}) {:=}\sum _{n=1}^{N} (E_{n}(x_n)-E_{n}(x_{n-1}))\) due to internal adaptation when subjects change their response from \(x_{n-1}\) to \(x_{n}\). Crucially, it is exactly the externally induced changes in error, \(\Delta E_{ext}(\varvec{x})\), analogous to the physical concept of work, that drive the adaptation process: if \(\Delta E_{ext}(\varvec{x})\) is large, the system is more surprised and has to adapt more. In the following, we thus refer to \(\Delta E_{ext}(\varvec{x})\) as driving error or driving signal. When applying Crooks’ fluctuation theorem for general adaptive systems18 to the above setting, we obtain the linear relation

where \(\varvec{x}^R = (x_N,\ldots ,x_1)\) is the reverse trajectory, \(\Delta F\) denotes the free energy difference \(F_N-F_0\) and the distributions \(\rho ^F(\cdot )\) and \(\rho ^B(\cdot )\) denote the probability of observing a certain trajectory when the learner faces a series of environments in some specific order or the order is reversed, respectively. This form of Crooks’ theorem allows for an intuitive interpretation, in that any difference in probability of a trajectory and its reverse signifying a hysteresis can be directly related to an excess loss that is irretrievably generated because of imperfect adaptation. Unfortunately, Equation (3) is hard to determine from data, as it would require to estimate probability distributions over paths. However, there is an equivalent form of Crooks’ theorem that groups all trajectories according to their associated value of \(\Delta E_{ext}(\varvec{x})\) with corresponding distributions \(\rho ^F\) and \(\rho ^B\) over these values, such that

The distribution \(\rho ^F(\cdot )\) can be interpreted as the probability that the learner experiences a certain overall surprise when being exposed sequentially to a series of environments and \(\rho ^B(\cdot )\) is the analogous concept when the order in which the environments are presented is reversed. In equation (4), these densities are evaluated at the actual driving errors \(\Delta E_{ext}(\varvec{x})\) and \(-\Delta E_{ext}(\varvec{x})\), respectively, for a particular adaptive trajectory \(\varvec{x}\).

A direct consequence of (4) is Jarzynski’s equality6, which states that

where \(\langle ~ \cdot ~ \rangle {:=}{\mathbb {E}}[~\cdot ~]\) denotes the expectation operator, considering \(\varvec{X} = (X_n)_{n=0}^N\) a Markov chain with transition densities \(\Pi _n\) that have \(p^{eq}_n\) as stationary distributions, that is, for each n, \(p^{eq}_n\) is the stationary distribution for \(X_n\). In our experiment, \(\varvec{X}\) represents participants’ responses that are repeated over multiple repetitions of the forward-backward protocol. In the following, we will test the relationships (4) and (5) experimentally with \(\Delta F = 0\) as our human learners start and end in the same environmental state (i.e. \(F_N=F_0\)). Note that, in our particular setting where there is no overall change in the free energy \((\Delta F=0)\), Equation (5) suggests that the expected value \(\big \langle e^{-\beta \Delta E_{ext}(\varvec{X})}\big \rangle\) equals \(e^{-\beta 0}=1\) irrespective of the value taken by \(\beta\). This provides a quantitative prediction that we will evaluate empirically below.

In our experiment the task is divided into 20 cycles of 66 trials each, following the protocol (9) illustrated in Fig. 1B. We refer to trials 1 to 25 of each cycle as a realization of the forward process and trials 34 to 58 as a realization of the backward process. Notice the backward process consists of the same angles as the forward process, that is, the same utility functions, but in reversed order. Thus, we record for each participant 20 values for \(\Delta E_{ext}(\varvec{x})\) in both the forward and backward processes that we use to estimate participants’ probability densities of the forward and backward processes, \(\rho ^F\) and \(\rho ^B\), respectively, using kernel density estimation. As the amount of data is limited to test the linear relation in (4), we will use simulation results in the following to compare against participants’ behavior.

Simulation of Crooks’ fluctuation theorem. (A) Simulation with 1000 cycles. In black, the theoretical prediction; in red, the linear regression for the simulated data and, in green, the simulated points. Since the simulated data set adjusts pretty well to Crooks’ fluctuation theorem (4), Jarzynski’s equality (5) is fulfilled. (B) Simulation with 20 cycles and bootstrapping. The black line is the theoretical prediction (4) while the red line and shaded area are, respectively, the mean and the 99 % confidence interval of (4) after 1000 bootstraps of the driving error values obtained in a single run (which consists of 20 cycles).

When simulating an artificial decision-maker based on a stochastic optimization scheme with Markovian dynamics, for example a Metropolis-Hasting algorithm with target distribution \(p_n^{eq}\propto \exp (-\beta E_n)\), it is clear that we can recover the linear relationship (4), provided that sufficient samples are collected18—see, for example, a simulation with 1000 cycles in Fig. 2A, where we can see a good adjustment between the theoretical prediction (in black) and the linear regression of the observed data (in red). As a result, (5) also holds in this scenario. The more critical question is what happens when only few samples are available. To this end, we use the stochastic optimization algorithm to simulate the protocol of our experiment, that is, 20 cycles, and indicate confidence intervals using 1000 bootstraps. It can be seen in Fig. 2B that the theoretical prediction is consistent with the \(99\%\) confidence interval in the region where \(|\Delta E_{\text {ext}}| \le 4\) (which is the region where our experimental data lies). Using the same bootstrapped data, we obtain several estimates of \(\langle e^{- \Delta E_{ext}(\varvec{X})}\rangle\) (the mean of \(e^{- \Delta E_{ext}(\varvec{X})}\) for the observed values of \(\Delta E_{ext}(\varvec{X})\) at each bootstrap) which we use to calculate a confidence interval for it. This results in the \(99\%\) confidence interval for \(\langle e^{-\Delta E_{ext}(\varvec{X})}\rangle\) being (0.48, 1.64), which is consistent with the theoretical prediction \(\langle e^{-\Delta E_{ext}(\varvec{X})}\rangle = 1\) for \(\Delta F = 0\) according to Equation (5). Accordingly, we will expect a similar behavior for our experimental data. Note we take, for simplicity, \(b=0\), \(\beta =1\) and, for all n, \(A_n=[-90,90]\) in these simulations (see Methods).

Hysteresis effect. The filled triangles are the mean of the observed angles for every deviation in both the forward process, in green, and the backward process, in red. The black line is the forward protocol. Note that we have mirrored the triangles for the backward process to make them coincide with those in the forward process that are exposed to the same true angle. Participants that achieve at least \(50\%\) adaptation are shaded by a green background color. Hysteresis can be observed between trials 1 and 5, 9 and 17 and 21 and 25. Notice, as expected, the forward means are below the backward in the first region, above in the second and below again in the third.

Participants’ average adaptive responses can be seen in Fig. 3 compared to the experimentally imposed true parameter values (the trial-by-trial responses can be seen in Fig. 6). The green and red lines distinguish the forward and backward trajectories, respectively, so that, from the contrast between the two curves, hysteresis becomes apparent, as common in simple physical systems22 and as reported previously in similar experiments for sensorimotor adaptation50. Participants that achieve at least \(50\%\) adaptation are shaded by a green background color and are our participants of interest. The three participants that fail to achieve this minimum adaptation level are marked by a red shade. Instead of excluding these participants entirely from the analysis, we keep them in to show the contrast to the well-adapted participants and to highlight that the results reported for the well-adapted participants do not hold trivially for any participant producing inconsistent behavior.

Figure 4 shows participants’ data compared to the theoretical prediction from (4) and the 99 % confidence interval after 1000 bootstraps as in the case of the simulations in Fig. 2B. There, we see that our data follow the trend of the theoretical prediction and lie within or close to the confidence interval bounds of the prediction in broad regions for several participants. This is not a trivial result, as can be easily seen, when randomizing the temporal order of the trajectory points or when replacing the utility function with another one that does not fit the setup. Figure 5A,B show this, for example, for an inverted Mexican hat ((10) with \(\sigma =4\)) that assigns low utility to the target region, and for resamples of the trajectory points in a random order, respectively. Both results are clearly incompatible with the theoretical prediction.

When conducting an additional robustness analysis in Fig. 8, we found that, under the proposed utility function, participants’ behavior is compatible with Crooks’ fluctuation theorem for a broad neighbourhood of parameter settings, but breaks down when choosing implausible parameters. Regarding Jarzynski’s equality (5), the confidence intervals for the majority of participants are consistent with the theoretical prediction when using the bootstrapped values to calculate \(\langle e^{-\beta \Delta E_{ext}(\varvec{X})}\rangle\) (cf. Table 1). In contrast, when following the same procedure for both the inverted Mexican hat and the randomized procedure, we obtain consistency for a considerably smaller number of participants. In particular, for the inverted Mexican hat, we obtain consistency for only two participants. Moreover, these participants are \(S_8\) and \(S_9\), which belong to the group that did not reach at least \(50\%\) adaptation (indicated by the red background area in the figures). For the randomized procedure, the expected number of participants that show consistency is also close to two, although the specific participants which are consistent vary with the realization of the randomized procedure. More specifically, after 1000 runs of the randomized procedure, the mean number of consistent participants we observed was 2.33.

Discussion

Experimental results for Crooks’ fluctuation theorem when the sensorimotor loss behaves as an exponential quadratic error (1). The black line is the theoretical prediction of Crooks’ fluctuation theorem (4) while the curves stand for the mean path after 1000 bootstraps of the observed driving error values. Participants that achieve at least \(50\%\) adaptation (c.f. Fig. 3) are shaded by a green background color. The shaded areas inside the graphs are the 99% confidence intervals which result from bootstrapping. Note we fit the parameters for each participant according to Sect. A.3.3.

Control results for Crooks’ fluctuation theorem in two scenarios: (A) the sensorimotor loss behaves like a Mexican hat function and (B) the sensorimotor loss behaves as an exponential quadratic error but we sample the observed angles randomly with repetition. The black line is the theoretical prediction of Crooks’ fluctuation theorem (4) while the curves stand for the mean path after 1000 bootstraps of the observed driving error values. The shaded areas inside the graphs are the 99% confidence intervals which result from bootstrapping. Note, for simplicity, we assume \(\beta =1\) for all participants when using the Mexican hat to demonstrate that the result in (A) does not trivially hold for any cost function. For (B), we fit the parameters for each participant according to Sect. A.3.3.

In our experiment we have investigated the hypothesis that human sensorimotor adaptation may be participant to the thermodynamic fluctuation theorems first reported by Crooks7 and Jarzynski20. In particular, we tested whether changes in sensorimotor error induced externally by an experimental protocol are linearly related to the log-ratio of the probabilities of behavioral trajectories under a given forward and time-reversed backward protocol of a sequence of visuomotor rotations. We found that participants’ data, in all cases where participants showed an appropriate adaptive response, was consistent with this prediction or close to its confidence interval bounds, as expected from our simulations with finite sample size. Moreover, we found that the exponentiated error averaged over the path probabilities was statistically compatible with unity for these participants, in line with Jarzynski’s theorem.

Together these results not only extend the experimental evidence of Boltzmann-like relationships between the probabilities of behavior and the corresponding order-inducing functions—such as energy, utility, or sensorimotor error—from the equilibrium to the non-equilibrium domain, but also from simple physical systems to more complex learning systems when studying adaptation in changing environments, deepening, thus, the parallelism between thermodynamics in physics and decision-making systems31.

When testing for the validity of thermodynamic relations, one of the most critical issues is the choice of the energy function, that is, in our case, the error cost function. In physical systems, the energy function is usually hypothesized following from simple models involving point masses, springs, rigid bodies, etc., and generally requires knowledge of the degrees of freedom of the system under consideration. Here we have used an exponential quadratic error as a utility function, as it has been suggested previously that human pointing behavior can be best captured by loss functions that approximately follow a negative parabola for small errors and then level off for large errors24. In the absence of very large errors, many studies in the literature on sensorimotor learning have only used the quadratic loss term48, 52. Quadratic errors have also been advocated in the context of the central limit theorem and in terms of prediction errors in the context of predictive coding36, 45,46,47. Thus, our assumptions regarding the loss function are compatible with the literature at large. Crucially, the reported results fail when assuming non-sensical cost functions, like the Mexican hat.

Experimental tests of both Jarzynski’s equality (5) and Crooks fluctuation theorem (4) have been previously reported in classical physics5, 11, 28, 37, 49 and also, in the case of Jarzynski’s equality, in quantum physics1, 44. Importantly, these results have been successfully tested in several contexts: unfolding and refolding processes involving RNA5, 28, electronic transitions between electrodes manipulating a charge parameter37, rotation of a macroscopic object inside a fluid surrounded by magnets where the current of a wire attached to the macroscopic object is manipulated11, and a trapped ion1, 44. Despite differences in physical realization, protocols, and energy functions (and thus work functions), all the above experiments follow the same basic design behind the approach presented here. This supports the claim that fluctuation theorems do not necessarily rely on involved physical assumptions but are simple mathematical properties of certain stochastic processes18, although originally they were derived in the context of non-equilibrium thermodynamics6, 19.

Mathematically, Crooks theorem (4) holds for any Markov process (i), whose initial distribution is in equilibrium (ii), and whose transition probabilities satisfy detailed balance with respect to the corresponding equilibrium distributions (iii)18. Our experimental test of Equation (4) can be seen, thus, as a test for the hypothesis that human sensorimotor adaptation processes satisfy conditions (i), (ii), and (iii). Condition (i) requires adaptation to be Markovian, which is in line with most error-driven models of sensorimotor adaptation43 that assume some internal state update of the form \(x_{t+1}=f(x_t, e)\) with adaptive state x and error e. While such models have proven fruitful for simple adaptation tasks like ours, they also have clear limitations, for example when it comes to meta-learning processes that have been reported in more complex learning scenarios2, 17. Condition (ii) is supported by our data in the second and last rows of Fig. 7, where it can be seen that participants’ behavior at the beginning of each cycle is at least approximately consistent with the equilibrium behavior recorded prior to the start of the experiment. Condition (iii) requires that the adaptive process converges to the equilibrium distribution (2) dictated by the environment and that the behaviour remains statistically unchanged when staying in that environment. Moreover, it requires that the equilibrium behavior at each energy level is time-reversible, that means, once adaptation has ceased the trial-by-trial behavior would have the same statistics when played forward or backward in a video recording. Note, however, that does not imply time-reversibility over the entire adaptation trajectory, but is only required locally for each transition step. In our sensorimotor setting, this would mean that after a suitably long adaptation time with perfect adaptation there would ultimately be no hysteresis, and accordingly it would be impossible to tell where the learner has come from. If we regard, for example, Metropolis-Hastings as a plausible model of adaptation, as some kind of stochastic optimization scheme, detailed balance and time reversibility would be fulfilled16, 38. What kind of model describes human adaptive behavior best, and whether such a model is compatible with detailed balance is ultimately an open question. In our experiment at least, the condition seems to be fulfilled well enough to stay within the confidence intervals associated with the predictions made by Crooks’ theorem.

While Jarzynski’s equality (5) directly follows from Crooks theorem, weaker assumptions are sufficient to derive it18, 19. In particular, condition (iii) regarding detailed balance is not necessary, as it is only required that the behavioral distribution does not change anymore once the equilibrium distribution is reached. Thus, Equation (5) can be used as a test for the weaker hypothesis that human sensorimotor adaptation satisfies conditions (i), (ii) and stationarity after convergence. While Jarzynski’s equality only requires samples from the forward process, Crooks theorem also tests the relation between the forward and the backward processes. In particular, Crooks theorem decouples the information processing with respect to any particular environment from the biases introduced by the adaptation history, that is, it assumes the transition probabilities for any given environment are independent of the history. In other words, the conditional probabilities have no memory and, thus, all memory effects are explained in terms of the state of the learning system prior to making some decision. Hence, the observed difference in behaviour after having adapted to the same environment, the hysteresis, is solely explained in terms of the information processing history before encountering the environment. Such hysteresis effects are not only common in simple physical systems like magnets or elastic bands, but have also been reported for sensorimotor tasks23, 40, 50. The hysteresis effects we report in Fig. 3 are in line with a system obeying Crooks theorem and can be replicated using Markov Chain Monte Carlo simulations of adaptation16.

Our study is part of a number of recent studies that have tried to harness equilibrium and non-equilibrium thermodynamics to gain new theoretical insights into simple learning systems12, 13, 31, 35, 46. For example, the information that can be acquired by learning in simple forward neural networks has been shown to be bounded by thermodynamic costs given by the entropy change in the weights and the heat dissipated into the environment42. More generally, when interpreting a system’s response to a stochastic driving signal in terms of computation, the amount of non-predictive information contained in the state about past environmental fluctuations is directly related to the amount of thermodynamic dissipation46. This suggests that thermodynamic fundamentals, like the second law, can be carried over to learning systems. Consider, for example, a Bayesian learner where the utility is given by the log-likelihood model and where the data are presented either in one chunk for a single update, or consecutively in little batches with many little updates. Rather than having one big surprise, in the latter case the cumulative surprise is much smaller as prior expectations can be continuously adapted, up to a point where the cumulative surprise reaches a lower bound given by the log-likelihood of the data, which corresponds to the free energy difference before and after learning16. Fluctuation theorems have recently also been attributed a fundamental role in the context of the Free Energy Principle, with relations to information geometry and decision-theoretic concepts like risk, ambiguity, expected information gain and expected value9, 33. Due to the central role of the concept of variational free energy in inference processes15, this raises the interesting question in how far our results may generalise to any belief-updating process, including for example perceptual inference and perceptual hysteresis. Finally, it has even been suggested that the dissipation of absorbed work as it is studied in a generalized Crooks theorem may underlie a general thermodynamic mechanism for self-organization and adaptation in living matter12, raising the question of whether such a general principle of adaptive dissipation could also govern biological learning processes35.

Data Availability

The datasets generated and analysed during the current study are available from the corresponding author on reasonable request.

References

Shuoming, A. et al. Experimental test of the quantum Jarzynski equality with a trapped-ion system. Nat. Phys. 11(2), 193–199 (2015).

Braun, D. A., Mehring, C. & Wolpert, D. M. Structure learning in action. Behav. Brain Res. 206(2), 157–165 (2010).

Chib, S. & Greenberg, E. Understanding the metropolis-hastings algorithm. Am. Stat. 49(4), 327–335 (1995).

EGD Cohen and David Mauzerall. A note on the Jarzynski equality. J. Stat. Mech: Theory Exp. 2004(07), P07006 (2004).

Delphine, C. et al. Verification of the crooks fluctuation theorem and recovery of RNA folding free energies. Nature 437(7056), 231–234 (2005).

Gavin, E. C. Nonequilibrium measurements of free energy differences for microscopically reversible Markovian systems. J. Stat. Phys. 90(5), 1481–1487 (1998).

Gavin, E. C. Entropy production fluctuation theorem and the nonequilibrium work relation for free energy differences. Phys. Rev. E 60(3), 2721 (1999).

Gavin, E. C. Path-ensemble averages in systems driven far from equilibrium. Phys. Rev. E 61(3), 2361 (2000).

Da Costa, L., Friston, K., Heins, C. & Grigorios, A. P. Bayesian mechanics for stationary processes. Proc. R. Soc. A 477(2256), 20210518 (2021).

De Groot, S.R. & Mazur, P. Non-equilibrium Thermodynamics (Courier Corporation, 2013).

Douarche, F., Ciliberto, S., Petrosyan, A. & Rabbiosi, I. An experimental test of the Jarzynski equality in a mechanical experiment. EPL (Europhys. Lett.) 70(5), 593 (2005).

Jeremy, L. E. Dissipative adaptation in driven self-assembly. Nat. Nanotechnol. 10(11), 919–923 (2015).

Goldt, S. & Seifert, U. Stochastic thermodynamics of learning. Phys. Rev. Lett. 118(1), 010601 (2017).

Gottwald, S. & Braun, D. A. Bounded rational decision-making from elementary computations that reduce uncertainty. Entropy 21(4), 1 (2019).

Gottwald, S. & Braun, D. A. The two kinds of free energy and the Bayesian revolution. PLoS Comput. Biol. 16(12), 1–32 (2020).

Jordi, G.-M., Matthias, K. & Daniel, A. B. Non-equilibrium relations for bounded rational decision-making in changing environments. Entropy 20(1), 1 (2018).

Griffiths, T. L. et al. Doing more with less: Meta-reasoning and meta-learning in humans and machines. Curr. Opin. Behav. Sci. 29, 24–30 (2019).

Pedro, H., Sebastian, G., & Braun, D.A. Jarzyski’s equality and crooks’ fluctuation theorem for general markov chains. arXiv preprint arXiv:2202.05576 (2022).

Jarzynski, C. Equilibrium free-energy differences from nonequilibrium measurements: A master-equation approach. Phys. Rev. E 56(5), 5018 (1997).

Jarzynski, C. Hamiltonian derivation of a detailed fluctuation theorem. J. Stat. Phys. 98(1), 77–102 (2000).

Jarzynski, C. Nonequilibrium work theorem for a system strongly coupled to a thermal environment. J. Stat. Mech: Theory Exp. 2004(09), P09005 (2004).

Jarzynski, C. Equalities and inequalities: Irreversibility and the second law of thermodynamics at the nanoscale. Annu. Rev. Condens. Matter Phys. 2(1), 329–351 (2011).

Kelso, J. J., Buchanan, J. A. S. & Murata, T. Multifunctionality and switching in the coordination dynamics of reaching and grasping. Hum. Movement Sci. Curr. Opin. Behav. Sci. 13, 63–94 (1994).

Konrad, P. K. & Daniel, M. W. The loss function of sensorimotor learning. Proc. Natl. Acad. Sci. 101(26), 9839–9842 (2004).

Lieb, E. H. & Yngvason, J. The physics and mathematics of the second law of thermodynamics. Phys. Rep. 310(1), 1–96 (1999).

Lindig-León, C., Gottwald, S. & Braun, D. A. Analyzing abstraction and hierarchical decision-making in absolute identification by information-theoretic bounded rationality. Front. Neurosci. 13, 1 (2019).

Cecilia, L.-L., Gerrit, S. & Braun, D. A. Bounded rational response equilibria in human sensorimotor interactions. Proc. R. Soc. B 288(1962), 20212094 (2021).

Jan, L., Sophie, D., Steven, B. S., Ignacio, T. & Carlos, B. Equilibrium information from nonequilibrium measurements in an experimental test of Jarzynski’s equality. Science 296(5574), 1832–1835 (2002).

Joseph, L. Ueber den zustand des wärmegleichgewichtes eines system von körpern. Akademie der Wissenschaften, Wien. Mathematisch-Naturwissenschaftliche Klasse, Sitzungsberichte 73, 128–135 (1876).

Mas-Colell, A., Whinston, M. & Green, J. Microeconomic theory (Oxford University Press, 1995).

Pedro, A. O. & Daniel, A. B. Thermodynamics as a theory of decision-making with information-processing costs. Proc. R. Soc. A: Math. Phys. Eng. Sci. 469(2153), 20120683 (2013).

Park, S., Khalili-Araghi, F., Tajkhorshid, E. & Schulten, K. Free energy calculation from steered molecular dynamics simulations using Jarzynski’s equality. J. Chem. Phys. 119(6), 3559–3566 (2003).

Parr, T., Da Costa, L. & Friston, K. Markov blankets, information geometry and stochastic thermodynamics. Phil. Trans. R. Soc. A 378(2164), 20190159 (2020).

Parrondo, J. M. R., Horowitz, J. M. & Sagawa, T. Thermodynamics of information. Nat. Phys. 11(2), 131–139 (2015).

Nikolay, P., Robert, A. M. & Jeremy, L. E. Statistical physics of adaptation. Phys. Rev. X 6(2), 021036 (2016).

Rajesh, P. N. R. & Dana, H. B. Predictive coding in the visual cortex: a functional interpretation of some extra-classical receptive-field effects. Nat. Neurosci. 2(1), 79–87 (1999).

Saira, O.-P. et al. Test of the Jarzynski and crooks fluctuation relations in an electronic system. Phys. Rev. Lett. 109(18), 180601 (2012).

Sanborn, A. N. & Chater, N. Bayesian brains without probabilities. Trends Cogn. Sci. 20(12), 883–893 (2016).

Schach, S., Gottwald, S. & Braun, D. A. Quantifying motor task performance by bounded rational decision theory. Front. Neurosci. 12, 1 (2018).

Schütz, C., Weigelt, M., Odekerken, D., Klein-Soetebier, T. & Schack, T. Motor control strategies in a continuous task space. Mot. Control 15(3), 321–341 (2011).

Seifert, U. Entropy production along a stochastic trajectory and an integral fluctuation theorem. Phys. Rev. Lett. 95(4), 040602 (2005).

Seifert, U. Stochastic thermodynamics, fluctuation theorems and molecular machines. Rep. Prog. Phys. 75(12), 126001 (2012).

Shadmehr, R. & Mussa-Ivaldi, S. Biological Learning and Control: How the Brain Builds Representations, Predicts Events, and Makes Decisions (MIT Press, 2012).

Andrew, S. et al. Verification of the quantum nonequilibrium work relation in the presence of decoherence. New J. Phys. 20(1), 013008 (2018).

Still, S. Information-theoretic approach to interactive learning. EPL (Europhys. Lett.) 85(2), 28005 (2009).

Still, S., Sivak, D.A., Bell, A.J., Crooks, G.E. Thermodynamics of prediction. Phys. Rev. Lett. 109(12), 120604 (2012).

Todorov Emanuel. General duality between optimal control and estimation. In 2008 47th IEEE Conference on Decision and Control, pp. 4286–4292. IEEE, (2008).

Emanuel, T. & Michael, I. J. Optimal feedback control as a theory of motor coordination. Nat. Neurosci. 5(11), 1226–1235 (2002).

Toyabe, S., Sagawa, T., Ueda, M., Muneyuki, E. & Sano, M. Experimental demonstration of information-to-energy conversion and validation of the generalized Jarzynski equality. Nat. Phys. 6(12), 988–992 (2010).

Edward, J. A. T., Daniel, A. B. & Daniel, M. W. Facilitation of learning induced by both random and gradual visuomotor task variation. J. Neurophysiol. 107(4), 1111–1122 (2012).

Weglarczyk Stanislaw. Kernel density estimation and its application. In ITM Web of Conferences, 23. EDP Sciences (2018).

Daniel, M. W., Zoubin, G. & Michael, I. J. An internal model for sensorimotor integration. Science 269(5232), 1880–1882 (1995).

Marty Ytreberg, F. & M Zuckerman, D. Efficient use of nonequilibrium measurement to estimate free energy differences for molecular systems. J. Comput. Chem. 25(14), 1749–1759 (2004).

Funding

Open Access funding enabled and organized by Projekt DEAL.

Author information

Authors and Affiliations

Contributions

The authors confirm contribution to the paper as follows: study conception and design: P.H., C.L.-L., D.B.; data collection: P.H., C.L.-L.; analysis and interpretation of results: P.H., D.B.; draft manuscript preparation: P.H., C.L.-L.; S.G.; D.B.. All authors reviewed the results and approved the final version of the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics declaration

The studies involving human participants were reviewed and approved by the Ethics committee of Ulm University. The participants provided their written informed consent to participate in this study.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

A Appendix: Methods

A Appendix: Methods

A.1 Theoretical methods

The derivation of (4) and (5) in the context of general Markov chains can be found in18. A similar proof of (5) under stronger assumptions was derived in6 and a different one using the same assumptions was given in19. Regarding (4), a similar proof can be found in6. Note, however, that the usual definition of work in thermodynamics is slightly different for the forward and backward process, based on the physical definition of time reversal and the associated symmetry for the work values. In our case, we define the driving signal that is analogous to the work concept in the same way, for both forward and backward process. In this case, for Equation (4) to hold, we need to assume that \(E_1=E_0\) both in the forward and backward process18. Fortunately, this is true for our protocol, since we begin both forward and backward protocol with some washout trials without perturbation. It should also be pointed out that, in order for the elements involved in Jarzynski’s and Crooks’ derivations to be well-defined, the equilibrium probability density associated to each step in the Markov chain ought to be non-zero at both the starting and ending point of that step18. This will play a relevant role in the choice of the support \(A_n\) for the equilibrium distributions \(p_n^{eq}\) in Sect. A.3.3.

A.2 Simulation methods

In this section, we explain in detail how we simulated (4) and (5).

A.2.1 Metropolis–Hastings algorithm

We use3 as reference for this section. However, for simplicity, we skip over several technical details and may oversimplify some notions.

The Metropolis-Hastings algorithm is a procedure which allows to obtain samples x from a distribution p that is proportional to some function f, that is, \(p(x) = \frac{1}{Z} f(x)\). There are three concepts relevant to this algorithm: U, q and \(\alpha\). They are defined as follows

-

U(A) stands for the uniform distribution over some set \(A \subseteq {\mathbb {R}}\).

-

\(q(\cdot ,\cdot )\) is called the candidate generating density. The role of q in the algorithm is to generate a new point y given a previous point x, with y being sampled from the distribution \(q(x,\cdot )\). In our case we define the density function in y with \(\int _{-90}^{90} q(x,y) dy = 1\), as we assume that movements will be towards the target (\(0^\circ\) direction) under a maximally induced error of \(20^\circ\). Accordingly, we can expect that practically all responses will be covered by choosing a support of \(\pm 90^\circ\).

-

\(\alpha (\cdot ,\cdot )\) is defined as follows:

$$\begin{aligned} \alpha (x,y)&= \text {min} \Big \{ \frac{f(y)q(y,x)}{f(x)q(x,y)},1\Big \} \text { if } f(x)q(x,y)>0,\\&= 1 \qquad \text { otherwise} \end{aligned}$$and is included in the algorithm as a filter on the samples proposed by q, so that some of these samples will be accepted and some will be rejected, to make the samples appear to be sampled from p.

We can now introduce the Metropolis-Hastings algorithm. The algorithm is initialized at an arbitrary value \(x_0\) and then repeats the following steps for \(i = 1,2,\ldots ,M\):

-

(i)

Generate y from \(q(x_{i-1},\cdot )\) and u from U(0, 1).

-

(ii)

If \(u \le \alpha (x_{i-1},y)\), then \(x_i=y\).

-

(iii)

Otherwise, \(x_i=x_{i-1}\).

Finally, the algorithm returns the values \((x_1,\ldots ,x_M)\).

Note that the density of transitions from x to y is therefore given by

which satisfies detailed balance with respect to \(p\propto f\)3. Thus, p is the stationary distribution of the resulting Markov process, and so the \(x_i\) can be regarded samples from p after the chain has passed a transient stage after which the effect of the initialization is negligible. Notice, in our implementation, described below, we only require the burn-in phase for the initial energy in order to make sure that the process starts in the corresponding stationary state. However, since we are interested in the adaptation process during a changing energy signal, we only use the first sample (\(M=1\)) for the remaining steps, conditioned on the sample from the previous step.

Comparison between participants’ behaviour in washout trials (between perturbation cycles) with the fitted equilibrium distribution (recorded before participants experienced any perturbation). The first and third rows compare the normalized histogram of the angles observed during the initial 100 trials (blue color), with the histogram of the fitted equilibrium distribution (2) over the same trials (green color). The second and the forth rows compare the same fitted equilibrium distribution (green color) with the normalized histogram of the angles observed in the \(0^\circ\) deviation plateaus (washout trials) which separate forward and backward protocol (red color). Note the plateau in each cycle consists of 10 points, from which we only include the last 8 to avoid large aftereffects. The application of Crooks’ theorem requires that subjects fully equilibrate between protocols, that is, in our case their behavior in washout trials should return to the fitted equilibrium behavior at the start of the experiment. Compare the discussion on condition (ii) on page 11.

A.2.2 Implementation

Given a set of equilibrium distributions \((p_0,\ldots ,p_N)\), we use the Metropolis-Hastings algorithm on their proportional functions \((f_0,\ldots ,f_N)\) to generate two paths: the forward path where we apply the algorithm once at step i (\(M=1\) in Sect. A.2.1, as explained above), with \(p=p_i\), and the backward path where we do the same but with the distributions in the reverse order. In particular, we consider

for \(n=0,\ldots ,24\) for the forward process, where, for \(n=1,\ldots ,24\), we take

with \(\theta _n\) given by (9), and for \(n=0\) we consider

We will refer to the application of the algorithm following the sequence in (6) with \(M=1\) for each \(n=1,\ldots ,25\) as a cycle. Note \(E_0\) in (8) differs from \(E_n\) in (7) for \(n=1,\ldots ,24\). While we would like to take \(E_0\) as in (7) with \(\theta _0=0\), since one of our hypothesis is the simulations sample the first point in each cycle from

the values of \(p_0\) for \(x \not \in [-2,2]\) are quite indistinguishable once we fix a certain precision. As a result, the algorithm does not converge to \(p_0\) in the long run. To avoid this difficulty, we simply modify the function outside \([-2,2]\) such that points there become distinguishable. This results in the algorithm converging to a distribution close to \(p_0\). Note this modification only applies to the generation of the initial samples, hence, we use (7) to calculate \(\Delta E_{ext}(\varvec{x})\).

The candidate generating density we use for the nth step with \(n=1,\ldots ,24\) is a normal distribution with mean equal to the \((n-1)\)th sample and standard deviation equal to the mean of the distances between subsequent points in the observed data, which turns out to be around 5. Using the values generated by the algorithm during a cycle, we calculate \(\Delta E_{ext}(\varvec{x})\) for the forward process via the utilities in (7), and, after generating several of them, we apply kernel density estimation (see Sect. A.3.4) to estimate \(\rho ^F\) in (4). We proceed analogously to estimate \(\rho ^B\) and, finally, use the obtained values of \(\Delta E_{ext}(\varvec{x})\) for the forward process together with the estimates of \(\rho ^F\) and \(\rho ^B\) to test (4). This test is done differently for the simulation with the large number of sample and that with a small number of them. For the larger one, we simply use the least squares method as the estimate of (4) (cf. Fig. 2A). For the smaller one, however, we produce 1000 bootstraps from the produced values of \(\Delta E_{ext}(\varvec{x})\) and find a confidence interval for (4) from the curves we obtain from the pair (\(\rho ^F\), \(\rho ^B\)) for each bootstrap (cf. Fig. 2B).

A.3 Experimental methods

In this section, we explain the specifics of how we tested experimentally both (4) and (5).

A.3.1 Participants

Ten participants \(P_1,\ldots ,P_{10}\), five females and five males, participated in this study. Three of the authors were among the participants (\(P_1\), \(P_2\) and \(P_3\)). All other participants provided written informed consent for participation and were remunerated with 10 Euros per hour. The participants were undergraduate and graduate students. The procedures were approved by the Ethics committee of Ulm University. All methods were performed in accordance with the relevant guidelines and regulations.

A.3.2 Setup

The experiment was run on a vBOT. Each participant performed the task using the handle of the right arm of the vBOT, which was manipulated with the dominant hand. The participants had no direct view of the handle but of a screen where its position, altered according to a protocol we describe in the following, was represented by a cursor.

A.3.3 Experimental design

Participants were asked to reach the center of a yellow rounded target on the screen with the center of their cursor. To begin each trial, the participants were asked to place the cursor inside a rounded initial position whose center was 15 cm away from the target’s center along the same vertical. Once the cursor crossed the horizontal containing the center of the target, the target became green if participants successfully situated the center of the cursor inside the target and red otherwise. Once the target changed its color, participants were asked to return the cursor to the initial position to begin the following trial. While both the target and the initial position were at the same place each trial, the cursor did not represent the movement of the handle veridically each trial. In particular, after 100 trials where the cursor position and the handle coincided, there were 1420 trials divided in 20 cycles of 66 trials where the cursor position was determined by rotating the vector going from the center of the initial position to the handle’s position. The rotation angle \(\theta _n\) for each n in any cycle \(n=0,\ldots ,65\) was

where all angles are in degrees and

Graphical representation of the accuracy of Crooks’ fluctuation theorem for several pairs of parameters \((b,\beta )\), which we measure through \(d_{b,\beta }\) as explained in Sect. A.3.5. The color intensity grows monotonically with the distance \(d_{b,\beta }\) and is divided into six regions, namely, \(d_{b,\beta } \le 1\), \(1<d_{b,\beta }\le 3\), \(3<d_{b,\beta } \le 6\), \(6< d_{b,\beta } \le 11\), \(11< d_{b,\beta } \le 23\) and \(23< d_{b,\beta }\). The actual values of \(d_{b,\beta }\) can be found in Table 2.

For each \(n=0,\ldots ,65\), we extract \(\theta _n'\), the angle between the vertical segment joining the center of the initial position and the center of the target and the segment joining the center of the initial position and the handle in the first recorded point which is more than 12 cm apart from the center of the initial position. One can find the recorded angles \((x_0,\ldots ,x_{65})\) for both the forward and backward processes in Fig. 6. For participant \(P_j\), with \(1 \le j \le 10\), we take \(p_{n,j}=p^{eq}_n\) as the equilibrium distribution for the n-th trial, where \(b_j\) represents the bias introduced by the machine for participant \(P_j\). We determine the bias as the mean of the initial 100 trials (where the cursor veridically represents the handle). The value \(c_j\) represents the maximum deviation for participant \(P_j\) among the distances \(|x_n-(\theta _n+b_j)|\) and \(|x_{n-1}-(\theta _n+b_j)|\), which we use to fix the support of the equilibrium distribution for \(P_j\) as \(A_n = [\theta _n+b_j-c_j,\theta _n+b_j+c_j]\). The parameter \(\beta _j\) represents the spread around the bias, which we pick once the bias and the support of the equilibrium distributions are fixed by requiring these distributions to maximize the likelihood of the observed values for the first 100 trials. We observe the best spread parameters are between \(\beta _j=0.25\) and \(\beta _j=5\) for all participants. In order to choose the most suitable one for each participant, we consider the values between 0.25 and 5 that result from sequentially adding 0.25 to the lowest value and pick as \(\beta _j\) the one that maximizes the likelihood on the observed angles in the 100 initial trials— see Fig. 7 for a comparison between the observed angles and the equilibrium distribution. We discuss, in Sect. A.3.5, how the choice of the parameters \(b_j\) and \(\beta _j\) affect the results. Note the choice of \(c_j\) does not directly affect how we measure the accuracy of the predictions, but is key in the maximum likelihood estimation of \(\beta _j\).

Using the angles recorded during a cycle, we calculate \(\Delta E_{ext}(\varvec{x})\) via \(p_{n,j}\) for both the forward and backward processes, and, using the 20 values per participant, we estimate \(\rho ^F\) and \(\rho ^B\) in (4) through kernel density estimation (see Sect. A.3.4). Finally, we bootstrap the obtained values of \(\Delta E_{ext}(\varvec{x})\) for the forward and backward process to obtain several estimates of \(\rho ^F\) and \(\rho ^B\). Each of these pairs is used to produce a curve that estimates (4). The mean of these curves for each participant is what we compare to (4) in Fig. 4. The same values of \(\rho ^F\) are used to test (5) (cf. Table 1).

A.3.4 Kernel density estimation

In order to determine the probability distributions \(\rho ^F\) and \(\rho ^B\) in (4), we use kernel density estimation51. Kernel density estimation consists of choosing a function K, the kernel, and a positive number \(h>0\), the bandwidth, and approximating p by distributions of the form

We consider here K to be a standard normal distribution. Notice we simply estimate p as a sum of standard normal distributions around each observed point \(x_i\), for \(i=1,\ldots ,n\), and decide how much each \(x_i\) influences other points in \({\mathbb {R}}\) via h. We fix \(h=0.7\) throughout this work.

A.3.5 Robustness analysis

In this section, we measure model robustness using two approaches: (i) using the exponential quadractic error (1) and varying the parameters we fitted, i.e. b and \(\beta\), and (ii) fixing a pair of parameters that are close to the optimal ones for each participant and taking convex combinations of the exponential quadractic error and the Mexican hat as sensorimotor errors.

As pointed out in Sect. A.3.3, we fix the parameters in (1) and (4), via the initial 100 trials (where no perturbation is applied). To assess model robustness, we consider the effect of assuming the same model with different parameters. We consider, in particular, all pairs \((b,\beta )\) with \(b \in \{-10,-3,-1,0,1,3,10\}\) and \(\beta \in \{0.01,0.1,1,3,4,10,100\}\), since they cover a wide scope of the possible behaviour of (4) using the model in (1). For the robustness analysis we fit the data of all participants with the same parameter sets. In Fig. 9, we show the histogram of the driving signals \(\Delta E_{ext}(\textbf{x})\) for different pairs of parameters \((b,\beta )\). Then, we follow the bootstrapping procedure from Sect. A.3.3 using the different values of b and calculate the mean distance between the mean of the curves we obtain from the bootstraps and the theoretical prediction (4) with the different values of \(\beta\). In particular, we consider the mean horizontal distance between the prediction and the mean curve at the points between \(\Delta E_{ext}(\varvec{x})=-4\) and \(\Delta E_{ext}(\varvec{x})=4\) (that is, the range of values of \(\Delta E_{ext}(\varvec{x})\) we present in Fig. 4) with steps of 0.1. We denote the obtained mean distance as \(d_{b,\beta }\).

Histogram of the forward driving signal values using different values of b. In particular, we present the histograms for \(b=1,-1,10,-10\). We include the first two since they are close to the values of b we fit from the initial 100 trials (where no deviation is applied) and the last two to illustrate the grounds on which we discard certain parameter pairs. As expected from the observed hysteresis effect (cf. Fig. 3), the histograms in (A) and (B), which correspond to \(b=1\) and \(b=-1\), respectively, are biased towards positive values of the driving signal. When assuming implausible parameters, like the ones in (C) and (D), which correspond to \(b=10\) and \(b=-10\), respectively, the bias shifts towards negative values (cf. C,D) and, even, shows a significant concentration of values around 0 (cf. C). Note we observe, respectively, the same biases in the backward driving signals.

To assess how well the parameters fit the data, we have to consider the plausibility of the data being generated by our model using the different parameter settings \((b,\beta )\). Accordingly, it is not enough to simply look at \(d_{b,\beta }\) as a goodness-of-fit measure. This is the case, as the underlying assumption in our model is that the data comes from a Markov chain where the equilibrium distributions at each step are given by the Boltzmann distribution (2) with parameters \((b,\beta )\). In this situation, we expect participants to lag behind the utility they are adapting to most of the time, and hence, by definition, we expect the driving signal to be biased towards positive values. We can discard any parameter settings where this is not the case. Accordingly, we can disregard all pairs that have \(b=10,-10\) —see Fig. 9. The values of \(d_{b,\beta }\) for all pairs \((b,\beta )\) we considered can be found in Table 2 (see Fig. 8 for a graphical comparison). As we can see there, the best parameters have \(\beta =1\), \(-3 \le b \le 3\), and mean distances which are both close to each other and significantly better than the rest. This was expected, since the hypothesis that the data observed at the plateaus follows (2) for these parameters is not completely implausible (cf. Fig. 7). The values \(b \in \{-1,0,1\}\) and \(\beta \in \{3,4\}\), which are the closest to the fitted parameters, also have a small mean distance (although larger than the best cases). In contrast, \(d_{b,\beta }\) becomes significantly larger for the parameters that are clearly unlikely, that is, those that present a huge concentration of the probability around some point, i.e. the ones where the value of \(\beta\) is large. In contrast, whenever the values of \(\beta\) become small, the equilibrium distributions become all closer to a uniform and, although the mean distance does worsen when compared to the best cases, its values do not increase much.

Histogram of the forward driving signals using an inverted Mexican hat (10) with \(\sigma =4\) as sensorimotor loss. Because of unexpected bias towards negative values of the driving signal we observe, it is unlikely the data was generated by a a Markov chain following such a sensorimotor loss and we can discard this model. Note we observe the same bias in the backward driving signals.

To assess robustness with an obviously non-fitting utility, we consider an inverted Mexican hat as utility function, that is, we substitute (1) by

where we take \(\sigma =4\). In this scenario, the bootstrapped data does not reflect the trend of the theoretical prediction (cf. Fig. 5). Moreover, as illustrated in Fig. 10, the model presents an unexpected bias towards negative values of the driving signal. Hence, as discussed above, the likelihood of the data coming from such a Markov chain is small and we can disregard this model. Furthermore, when following the same robustness analysis we performed on the pairs \((b,\beta )\) using the convex combinations \(\lambda f + (1-\lambda ) g\) as sensorimotor loss, where \(\lambda =0,0.25,0.5,0.75,1\), f is the exponential quadratic error with \(b=0\) and \(\beta =4\) (which are close to the values fitted for the participants) and g is the Mexican hat with \(\sigma =4\), we obtain that the mean distance decreases as \(\lambda\) increases, as one can see in Table 3.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Hack, P., Lindig-Leon, C., Gottwald, S. et al. Thermodynamic fluctuation theorems govern human sensorimotor learning. Sci Rep 13, 869 (2023). https://doi.org/10.1038/s41598-023-27736-8

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-023-27736-8

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.