Abstract

Advanced neural interfaces show promise in making prosthetic limbs more biomimetic and ultimately more intuitive and useful for patients. However, approaches to assess these emerging technologies are limited in scope and the insight they provide. When outfitting a prosthesis with a feedback system, such as a peripheral nerve interface, it would be helpful to quantify its physiological correspondence, i.e. how well the prosthesis feedback mimics the perceived feedback in an intact limb. Here we present an approach to quantify this aspect of feedback quality using the crossmodal congruency effect (CCE) task. We show that CCE scores are sensitive to feedback modality, an important characteristic for assessment purposes, but are confounded by the spatial separation between the expected and perceived location of a stimulus. Using data collected from 60 able-bodied participants trained to control a bypass prosthesis, we present a model that results in adjusted-CCE scores that are unaffected by percept misalignment which may result from imprecise neural stimulation. The adjusted-CCE score serves as a proxy for a feedback modality’s physiological correspondence or ‘naturalness’. This quantification approach gives researchers a tool to assess an aspect of emerging augmented feedback systems that is not measurable with current motor assessments.

Introduction

The performance of clinically-available upper-limb prostheses has been partly hindered by a lack of intuitive and useful feedback1,2. Direct neural or cortical stimulation to convey force or other feedback to a user controlling a prosthetic hand may lead to improved systems that better mimic the dynamics of the intact human hand. Peripheral nerve interfaces (PNIs) with bidirectional communication between device and body have been shown to be effective in controlled settings3,4,5,6. Advances in feedback applied via spinal or cortical stimulation also show promise to drive forward prosthetic technologies7,8,9,10. Efforts to improve long-term viability, once a concern for such invasive interfaces with the nervous system, have also advanced using wireless signal transmission11, osseointegration approaches12, and stable electrode designs13. While the promise of emerging prosthetic feedback systems is apparent, the development of feedback assessment tools has lagged behind these emerging technologies.

Traditional performance-based movement assessments may not capture the overall quality of a novel feedback modality. Standard clinical motor assessments such as the Box and Blocks Test14, the Nine Hole Peg Test15, the Southampton Hand Assessment Procedure16 and the Assessment of Capacity for Myoelectric Control17 focus on quantifying motor performance but do not provide insight into other potential benefits of particular feedback systems. Emerging feedback systems may provide intrinsic value beyond motor performance gains. Evidence suggests that user-trusted feedback can lead to aspects of incorporation and embodiment18 that could improve prosthesis acceptance2, reduce phantom pain19,20 or provide other benefits that would not be detected by traditional motor assessments21.

Emerging prosthetic feedback studies have relied on qualitative subjective user descriptions of feedback quality4,22. Users report the location and sensation of the applied feedback and the quality of that feedback is inferred. For example, in one study participants described sensations in terms of pressure, tingle, vibration, or light moving touch4. As stimulation intensity was varied, one subject described the sensation as changing from “tingly” to “as natural as can be”4. Some conclusions are clearly justifiable, for example, self-described “natural, non-tingling feedback” is presumably better than “uncomfortable, deep, dull vibration”4. But not all self-reported sensory descriptions are as easy to interpret. Recent focus has shifted to make these qualitative observations more reliable and quantifiable23. When a user perceives touch through a feedback system, how closely does that percept match the touch sensation experienced with intact anatomy? Unnatural feedback may be difficult to interpret, or even painful24. In this study we have sought to objectively quantify the physiological correspondence, or naturalness, of supplementary sensory feedback modalities.

Quantifying the quality of a feedback system is the goal of this work but the spatial alignment of the perceived feedback can be a confounding factor. When stimulating the nervous system via a PNI or other brain-machine interface, the perceived location of the feedback may differ from the intended feedback location. For example, a visually-observed contact on a prosthetic fingertip may be felt, as the result of neural stimulation, several centimeters away at the base of the palm (see Fig. 1, top right panel)22. This spatial misalignment between actual and expected percept may greatly affect the feedback’s usability. Assessments of feedback quality must consider both the effect of misaligned percepts and the naturalness of the stimulation.

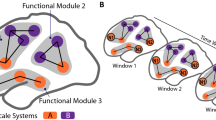

Crossmodal congruency effect framework. Participants rapidly select the target feedback (e.g. vibration shown in red). The target feedback is presented concurrently with distractor visual feedback (green LED). Feedback can be presented congruently, i.e. visual and target feedback collocated in the top row, or incongruently with mismatched visual and target feedback. The crossmodal congruency effect (CCE) score is the difference between the response times to incongruent and congruent stimuli. The crossmodal effect can also manifest as an elevated error rate during incongruent trials25. Spatial separation, the physical distance between the two paired sensory percepts, can be varied, and is indicated with the rulers.

The crossmodal congruency effect (CCE) score provides an objective measure of incorporation of a feedback modality25,26,27,28. The degree of feedback incorporation, indicated by the CCE score, depends on two interacting factors that determine feedback quality: spatial alignment of percepts and physiological correspondence, or naturalness25,29,30 (Fig. 1). This metric has been used to quantify incorporation in the rubber hand illusion28,31 and shows promise for assessment of neuroprosthetics32. In this study we aim to remove the spatial bias from the CCE score to arrive at an adjusted CCE score that is a proxy for physiological correspondence.

The CCE task is an established psychophysics protocol that involves the speeded discrimination of target stimuli25. Participants are presented with a target stimulus in one of two locations. Concurrent distractor feedback in the form of an illuminated LED is presented in one of two alignments: 1. Congruent, the illuminated LED is on the same side as the target feedback (Fig. 1, top row); or 2. Incongruent, the illuminated LED is on the opposite side of the target feedback (Fig. 1, bottom row). The participant is asked to rapidly select the location of the target feedback with one of two foot pedals that each correspond to one “side” of the target feedback. In one of our implementations, the left and right foot pedals correspond to vibratory target feedback on the thumb and index finger, respectively (Fig. 1, left column).

The CCE score is calculated as the difference between the reaction time to incongruent stimuli and the reaction time to congruent stimuli. An increase in the incongruent reaction time relative to the congruent reaction time indicates that longer cognitive processing is required to ignore the distractor LED and select the target feedback when the stimuli are incongruent. Incongruent reaction time, and therefore CCE score, is maximized when the conditioned response to the target feedback is strongest, i.e. the feedback modality is highly incorporated into a person’s body schema33,34. As the target feedback feels less natural, it is more poorly incorporated, resulting in a weaker conditioned response and lower CCE scores28. CCE is a useful measure; however, its value as a direct measure of physiological correspondence is confounded by its dependence on spatial co-location25. Here we show that the spatial separation factor can be removed from the CCE score, enabling a measurement of physiological correspondence that is independent of spatial co-location.
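The calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' analysis code: the trial tuple layout, the function name `cce_score`, the example reaction times, and the choice to exclude incorrect responses are all assumptions made for the example.

```python
from statistics import mean


def cce_score(trials):
    """Return mean incongruent RT minus mean congruent RT, in milliseconds.

    Each trial is a tuple (is_congruent, reaction_time_ms, is_correct).
    Dropping incorrect responses is an assumption of this sketch.
    """
    congruent = [rt for is_congruent, rt, ok in trials if ok and is_congruent]
    incongruent = [rt for is_congruent, rt, ok in trials if ok and not is_congruent]
    if not congruent or not incongruent:
        raise ValueError("need at least one correct trial of each type")
    return mean(incongruent) - mean(congruent)


# Hypothetical reaction times for one participant (not data from the study).
example_trials = [
    (True, 520.0, True),
    (True, 540.0, True),
    (False, 640.0, True),
    (False, 660.0, True),
    (False, 900.0, False),  # incorrect response, excluded from the means
]
example_cce = cce_score(example_trials)
print(example_cce)
```

A larger positive value means the distractor LED slowed responses more on incongruent trials, which the text interprets as stronger incorporation of the target feedback.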

We completed a comprehensive study of 60 able-bodied participants controlling a bypass prosthesis under different feedback conditions. We found that incorporation after training, as measured by CCE score, changes with feedback modality (i.e. physiological correspondence). After extended training, CCE score increased as the spatial separation between expected and perceived feedback decreased. The model we generated from these data provides an adjusted CCE score with the effect of spatial separation removed. This provides a bias-free quantification of physiological correspondence, or naturalness. Since the target feedback applied in the CCE task is analogous to the feedback applied via a PNI with a sensorized prosthesis, this adjusted CCE score assessment can be used to quantify an aspect of the naturalness of a prosthetic feedback system. The approach we present provides an important first step towards quantitatively measuring the degree to which feedback actually feels physiologically accurate.

Results

To determine if different modalities of feedback provided to a person could be incorporated, we measured the CCE score25,35 of 60 able-bodied individuals after training with a bypass prosthesis36 (Fig. 2). Participants trained using the bypass prosthesis to move mechanical eggs with one of three feedback modalities: vibration, electrical stimulation or skin deformation36. Training duration (short vs. extended) and spatial separation between the expected feedback on the fingertip contact point and the perceived feedback (matched on fingertip vs. >12 cm away) were also varied (see Supplementary Table S1 for all experimental conditions). We first show that CCE is a useful measure of physiological correspondence, i.e. it is sensitive to changes in feedback modality. We then demonstrate that the CCE is affected by changes in spatial separation. Then we generate a regression model that can remove the effect of spatial separation from the CCE score, resulting in an adjusted CCE score that captures an unbiased measure of physiological correspondence.

Experimental setup. Able-bodied participants donned a bypass prosthesis36 during a training phase that immediately preceded the CCE score assessment. During the CCE protocol, participants were provided target feedback (e.g. vibration) coupled with visual feedback via the distractor LEDs. Participants were asked to respond as quickly as possible, with foot pedals, to select the location (left vs. right) of the target feedback. See Methods for more details on the protocol. During training, participants controlled the bypass prosthesis with electromyographic signals to move mechanical eggs over a barrier. The sensorized prosthesis could detect force applied to the thumb and the index finger via embedded strain gauge sensors and provide proportional feedback to the user conveyed as vibration, electrical stimulation or skin deformation. During training and testing the intact hand and harness were covered with black fabric.

We observed a main effect of feedback modality on CCE score (Fig. 3). A three-way ANOVA with CCE score as the dependent variable and three categorical independent variables representing spatial separation, training level and the three feedback modalities resulted in a statistically significant effect of feedback (F(2,48) = 6.015, p = 0.0047, ω = 0.28). None of the interaction terms between independent variables were statistically significant (p > 0.05). Bonferroni post-hoc tests showed that the CCE score was significantly higher for vibration feedback [µ = 120 ms ± 53] compared to skin deformation feedback [µ = 71 ms ± 46] (p = 0.0048) and non-significantly higher than electrical stimulation [µ = 84 ms ± 36] (p = 0.052). A higher CCE score indicates a higher level of incorporation for that feedback modality25. The trends observed with data binned for each modality are also seen with the CCE scores of each of the 12 treatment groups (Supplementary Fig. S1). Feedback modalities differ in their level of physiological correspondence to intact biological feedback. Changes in the provided feedback modality thus represent a change in physiological correspondence, a manipulation that had a significant effect on the measured CCE score.

The spatial separation between perceived and expected feedback affects CCE score, but only after extended training (Fig. 4, Supplementary Fig. S1). To isolate the effect of spatial separation from the differences in CCE score observed across modalities (Fig. 3), we normalize CCE scores within each modality and combine normalized scores into one group for comparison (Fig. 4). Within each modality the same trend is observed: after extended training, raw CCE scores are higher with low spatial separation than with high spatial separation (Supplementary Fig. S1). In participants with extended training periods, there was a significant effect of spatial separation on normalized CCE score (unpaired t-test, 2-tailed, p = 0.035) (Fig. 4a). CCE scores decreased as spatial separation increased, indicating that incorporation diminished as the perceived feedback did not align with the expected feedback location. Spatial separation appeared to have no effect on CCE score in participants with a short training period (unpaired t-test, 2-tailed, p = 0.88) (Fig. 4b). Short training involved 50 minutes of practice moving mechanical eggs and extended-training lasted 80 minutes. Since spatial separation only had a significant effect on the measured CCE score after extended training, we do not include the short training data in further analysis.

Spatial separation affects CCE score after training. (a) CCE score decreased with increased spatial separation for extended-training participants. (b) There was no observed effect of spatial separation on CCE score after short periods of training. Statistical significance tested using unpaired two-sample equal variance t-tests: *p < 0.05. Reported results are normalized to the group mean within each feedback type to account for global differences across modalities.
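The comparison above (normalize within modality, then compare low and high spatial separation with an equal-variance two-sample t-test) might be sketched as follows. The normalization here subtracts each modality's mean, which is one reading of "normalized to the group mean" and is an assumption; the scores are hypothetical, and the sketch reports only the t statistic and degrees of freedom, not a p-value.

```python
from math import sqrt
from statistics import mean, variance


def center_within_modality(scores_by_modality):
    """Subtract each modality's mean CCE score from its participants' scores.

    Input maps modality name -> list of (separation_label, cce_ms).
    Centering (rather than dividing by the mean) is an assumption.
    """
    centered = []
    for rows in scores_by_modality.values():
        modality_mean = mean(score for _, score in rows)
        centered.extend((sep, score - modality_mean) for sep, score in rows)
    return centered


def pooled_t_statistic(a, b):
    """Equal-variance two-sample t statistic and its degrees of freedom."""
    n_a, n_b = len(a), len(b)
    dof = n_a + n_b - 2
    pooled_var = ((n_a - 1) * variance(a) + (n_b - 1) * variance(b)) / dof
    t = (mean(a) - mean(b)) / sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return t, dof


# Hypothetical CCE scores in ms (not data from the study).
scores = {
    "vibration": [("low", 150.0), ("low", 130.0), ("high", 110.0), ("high", 90.0)],
    "skin deformation": [("low", 90.0), ("low", 80.0), ("high", 60.0), ("high", 50.0)],
}
centered = center_within_modality(scores)
low = [s for sep, s in centered if sep == "low"]
high = [s for sep, s in centered if sep == "high"]
t_stat, dof = pooled_t_statistic(low, high)
print(round(t_stat, 2), dof)
```

Centering within modality removes the global differences between modalities, so the remaining contrast reflects spatial separation alone.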

Given that CCE score is an indicator of physiological correspondence, but spatial separation has a biasing effect on the metric, we attempted to fit a model to calculate an adjusted CCE score. The adjusted CCE score, the CCEA, would be unbiased by the confound of spatial separation and solely represent the physiological correspondence of the provided feedback. To approximate the baseline adjusted CCE score (CCEA) for each feedback modality, we used the average CCE score for all treatment groups within that modality. This allowed for the conversion of the categorical feedback modalities to a continuous scale. Since we established that CCE score is biased by spatial separation, we used a corrective model to remove this influence. We first fit a multiple linear regression to the data from the 30 extended-training participants (F(2,27) = 4.93, p = 0.015, R² = 0.27). The model can be expressed as

$$\mathrm{CCE} = B_0\,\mathrm{CCE}_A + B_1\,SS + B_2 \qquad (1)$$

where SS is the spatial separation term, B0 = 0.98 (p = 0.028), B1 = −37.6 (p = 0.043) and B2 = 22.85 (p = 0.58). The spatial separation term was defined as zero for spatial separations of 3.0 cm or less, and one for spatial separations greater than 12.0 cm. Note that the intercept coefficient B2 is not statistically different from zero but was included so that the linear model could be used with low CCE scores under high spatial separation conditions. Given measured values for CCE score and spatial separation, the CCEA of a feedback system can be estimated by rearranging Equation 1 as

$$\mathrm{CCE}_A = \frac{\mathrm{CCE} - B_1\,SS - B_2}{B_0} \qquad (2)$$

This equation was used to calculate the CCEA for all extended-training participants (Supplementary Fig. S2). The spatial separation bias on CCE score (Fig. 4a) was observed as trends within the extended-training results for each modality (Fig. 5, top panel). This bias was not present when observing CCEA results of the same participants calculated using Equation 2 (Fig. 5, bottom panel), supporting the model’s ability to account for the effect of spatial separation.
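As a worked illustration of the adjustment, the sketch below applies the reported coefficients to a measured CCE score. It assumes the regression has the form CCE = B0·CCEA + B1·SS + B2, which is inferred from the coefficient descriptions in the text, and it treats separations between 3.0 cm and 12.0 cm as undefined because the text does not specify them. The function names and example inputs are hypothetical.

```python
B0 = 0.98    # coefficient on the adjusted CCE score (reported)
B1 = -37.6   # coefficient on the spatial separation term, in ms (reported)
B2 = 22.85   # intercept, in ms (reported)


def spatial_separation_term(separation_cm):
    """Return 0 for separations of 3.0 cm or less, 1 for more than 12.0 cm."""
    if separation_cm <= 3.0:
        return 0
    if separation_cm > 12.0:
        return 1
    # The source does not define the term between 3.0 and 12.0 cm.
    raise ValueError("spatial separation term undefined between 3.0 and 12.0 cm")


def adjusted_cce(cce_ms, separation_cm):
    """Solve the assumed model CCE = B0*CCE_A + B1*SS + B2 for CCE_A."""
    ss = spatial_separation_term(separation_cm)
    return (cce_ms - B1 * ss - B2) / B0


# Hypothetical measurement: the same raw CCE score at two separations.
aligned = adjusted_cce(100.0, 0.0)
misaligned = adjusted_cce(100.0, 15.0)
print(round(aligned, 2), round(misaligned, 2))
```

For the same raw score, the misaligned condition yields the higher adjusted score, because the model credits back the penalty attributed to spatial separation.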

Adjusted CCE score is not affected by spatial separation. Top panel. CCE score means and standard error for the extended-training participants. For each modality, the CCE score is lower for the high spatial separation group. Bottom panel. CCEA results for the extended-training participants. The effect of the spatial separation observed in the CCE score results is not present.

We further analyzed the data to investigate possible explanations for the low CCE score observed with skin deformation feedback. The low CCE score could not be attributed to the latency of the skin deformation feedback application (Supplementary Data S1). Additionally, we observed no significant trend between motor performance (movement success rate during training) and CCE score (Supplementary Fig. S3).

Discussion

We have demonstrated that CCE scores can be used to assess feedback quality. We collected CCE scores from 60 able-bodied participants using a bypass prosthesis with different feedback systems. From the results we developed a corrective model to output adjusted CCE scores, unaffected by spatial separation biases, that are a proxy for physiological correspondence. The adjusted CCE score can be used to assess other novel sensory feedback systems, such as those used by patients with peripheral nerve interfaces or cortical stimulators. The psychophysics-based technique we have presented fills a need for more informative assessment of advanced feedback systems.

Given the adjusted CCE quantification model (Equation 2) and the extended-training data we collected, we provide a scale to contextualize CCEA scores (Fig. 6). Researchers who measure the CCE score and spatial separation of a feedback system can calculate the CCEA using Equation 2. When assessing the physiological correspondence of novel feedback modalities, researchers can use the scale in Fig. 6 as a benchmark for the analysis of results. For example, if a feedback modality’s assessed CCEA is 130 ms, then the feedback would have a level of correspondence similar to that of vibration feedback, i.e. a high level of physiological correspondence.

Physiological correspondence benchmark scale. Benchmark data for different feedback modalities to allow for comparison and contextualization of results from the assessment of novel feedback systems. Maximum CCE scores using intact physiology and no spatial separation are indicated35.

Our results support previous findings25,35 showing that CCE score is influenced by feedback modality and spatial separation, i.e. the offset between the target feedback and the distractor visual feedback. Although advanced feedback systems strive to minimize spatial separation, the imprecision of neural stimulation makes the generation of perfectly-aligned percepts quite difficult. A high spatial separation will affect the incorporation of the feedback, resulting in a lower CCE score25. Additionally, the dynamics and timing of feedback can affect a user’s subjective assessment of its “naturalness”4. Researchers often strive to elicit natural feeling percepts with experimental feedback systems. To our knowledge, however, there have been no prior attempts to objectively quantify this “naturalness” sensation. We use the CCEA score to capture the degree of physiological correspondence, i.e. how well a feedback modality mimics the feedback experienced with intact anatomy, as a proxy for “naturalness”. Since CCE score and spatial separation are easily measurable, we can use the relationship between these three variables to quantify the CCEA of supplementary sensory feedback.

One potential limitation of the model we developed is that CCE scores may be affected by additional factors besides physiological correspondence, spatial separation and training. We did not factor in the effects of fatigue, time of day, baseline reaction time, or feedback characteristics such as latency, consistency and dynamics. Variability in feedback characteristics was limited by our use of a real-time embedded system to provide feedback with correspondingly low latency (<30 ms for vibration and skin deformation). However, the method used to calibrate the skin deformation feedback may have led to variable levels of incorporation. For example, the haptic tactor starting position was not clearly visible in the experimental setup and for some participants it may have been in contact with the skin or arm hair at zero-force levels. Although we could not eliminate the effects of all potential factors affecting CCE score, the most significant factors seem to be physiological correspondence, spatial separation and training as evidenced by their effects on our CCE results and by previous observations25,37.

CCE scores were affected by training duration: spatial separation only affected CCE score in the extended-training participants (Fig. 4). It seems that the short-training participants did not have enough exposure to the feedback to reach a maximum level of incorporation, a qualitative finding observed elsewhere although on different timescales38. Therefore, to generate the CCE adjustment model we used only extended-training data. The extended-training group had 80 minutes of practice with the feedback system, which is less training than would be typical for patients using this assessment. A patient with a novel feedback system will often complete a take-home trial, wearing a device for days to weeks, before running this assessment. Therefore, we consider only extended-training data and define the 80-minute duration as the minimum exposure necessary for this assessment to be effective. We expect the effect of training to plateau and that the model should be applicable to longer training times; nevertheless, this should be verified with an additional study.

Although the model’s goodness-of-fit seems low (R² = 0.27), this is a consequence of the noisiness of human psychophysical data and the model can still be used to assess supplementary feedback quality. However, the variability of CCE scores across individuals may make one-to-one comparison between individuals difficult. We based our model development on a population-level analysis that, while statistically valid, could lead to misinterpretation of a single CCE result. Therefore, we recommend comparisons of CCE scores from the same individual across different feedback modalities or with different training periods. Alternatively, when data from several individuals are available, a population-level comparison can be made. A power analysis should be run to determine the number of individuals necessary to detect a certain level of improvement supplied by a novel feedback system39. To detect the maximum intergroup difference observed in our study (93 ms), and assuming the observed overall variability across all 60 participants (SD = 46 ms, normalized to the mean within each group), three individuals would be needed to achieve a statistical power of 0.8 at a confidence level of 95%. Increasing the number of individuals tested would allow for smaller CCE score differences to be detected. For example, to detect half of the maximum difference observed (δ = 47 ms), eight individuals should be tested. The exact number of individuals required depends on the CCE score variability, which will differ with the feedback system and patient population. In either the repeat-individual testing approach or the small-group population analysis, a CCE task learning effect must be considered when scheduling test sessions35.

The low CCE score observed for skin deformation feedback (Fig. 3) was an unexpected result. Originally we hypothesized that using skin deformation feedback to represent the grasp force of the training movements would result in the highest level of incorporation. Skin deformation more closely resembles the physical activation of a grasping force compared to vibration and electrical stimulation. However, skin deformation feedback resulted in the lowest CCE score compared to electrical stimulation and vibration, a statistically significant result that does not appear to be the result of noise or random fluctuation. We confirmed that the poor incorporation of skin deformation was not due to mediocre actuation as latency results were consistent across feedback modalities. In some participants the tactor may have been in contact with the skin at a zero-force level. Variable skin contact would result in variable perception across participants as the discrete initial skin contact has been shown to be important in improving feedback effectiveness40. The low incorporation of skin deformation feedback could alternatively be explained by long-term depression of afferents due to repeated stimulation or slipping actuators; both explanations would be supported by an observed change in detection threshold over the course of the experiment (see Supplementary Data S1). A future study is planned to combine the CCE score assessment with an outcome metric that assesses feedback uncertainty to more carefully characterize the utility of the skin deformation feedback41.

Participants performed well using skin deformation feedback (Supplementary Fig. S3), but we still observed poor incorporation. There did not seem to be a trend between motor performance, measured as the percentage of mechanical eggs moved during the training phase, and CCE score, implying that performance and incorporation are distinct concepts. An individual’s quantifiable motor performance may be inflated through the adoption of alternative strategies and compensatory movements42,43. Further, motor performance does not necessarily correspond to other important aspects of prosthesis use such as device acceptance18, phantom pain reduction19,20 or cognitive burdens21. Therefore, clinical movement assessments relying only on motor performance may not be suitable to analyze the performance of advanced feedback systems. These assessments also suffer from other limitations such as a reliance on movement timing and variability introduced by rater subjectivity44. Available motor assessments may be sufficient to monitor clinical progress but no single outcome metric captures all relevant performance information45. The CCE-based assessment and supporting model we have presented could augment the battery of performance-based assessments currently in use to provide more detailed insight into supplementary feedback system performance.

We have presented a data-driven model approach that can remove the spatial separation bias from the CCE score to provide an indicator of the physiological correspondence of a feedback modality. This approach represents a way to provide more informative assessment of prosthetic feedback systems. Further steps will require the clinical validation of this assessment tool in patient populations, such as amputees outfitted with peripheral nerve feedback systems. Novel feedback systems for amputees require novel assessment tools; this work provides an advanced outcome metric to fill that need.

Methods

Participant recruitment

Participants were recruited by word-of-mouth and provided informed consent under the guidelines and approval of University of New Brunswick’s Research Ethics Board. All methods were performed in accordance with relevant ethics guidelines and regulations. Sixty volunteer participants completed the study [mean age = 31.9 yrs, range = 18–76 yrs, 22 female, 5 left-handed]. Participants were randomly assigned to a treatment condition which specified feedback modality [vibration, electrical stimulation or skin deformation], training duration [short or extended], and spatial separation between visual and target feedback [low or high]. There were twelve treatment groups (three modalities × two training durations × two spatial separations) and each participant completed training and CCE assessment for one modality at a given spatial separation and for a particular training duration.

Bypass prosthesis

Participants first trained using a bypass prosthesis with myoelectric control and embedded force sensors in the thumb and index finger that proportionally drove feedback intensity. The bypass hardware is described in detail elsewhere36. Each participant only received the assigned feedback modality at the assigned spatial separation condition.

Feedback implementation

Skin deformation feedback was applied using linear mechanotactile haptic tactors attached to the subject (design courtesy of the University of Alberta46). Each tactor used a rack and pinion gear system to convert rotational motion generated by a servo motor (HiTec, HS-35HD) to linear motion that was applied to the subject’s skin via an 8 mm diameter domed head. Measured force from the sensorized prosthetic hand (custom retrofitting of Ottobock MyoHand VariPlus Speed by HDT Global) was mapped to servo displacement. Zero force was mapped to a displacement that was a step below the minimum detectable level. The maximum displacement was based on the current draw of the servos and limited to approximately 100 mA. This limit was selected to keep the actuation below the level at which the plastic rack and pinion system would slip. During the training phase, the tactor displacements were proportionally controlled to match the measured forces on the thumb and index finger of the instrumented prosthetic hand. During the CCE score assessment, the tactors were displaced to approximately 20–25% of the maximum experienced during the training phase.

Vibration feedback was provided by two 10 mm linear resonant actuators (LRAs: Precision Microdrives, C10–100) taped to the skin with medical tape (3M, Micropore). During the training phase, the LRAs were proportionally controlled to correspond to the measured forces on the thumb and index finger of the instrumented prosthetic hand. During the CCE score assessment, the stimuli were set to approximately 20–25% of the maximum intensity experienced during the training phase.

Electrical stimulation was provided by a 2-channel TENS electro-stimulator (Proactive, Pulse). The device was modified such that the electrical stimulation intensity could be controlled with isolated analog outputs from a myRIO embedded hardware system (National Instruments). During the training phase, the stimulator outputs were proportionally controlled to produce paresthetic sensations that corresponded to the measured forces on the thumb and index finger of the instrumented prosthetic hand. During CCE score assessment, the stimuli were set to the maximum intensity experienced during the training phase. The protocol for electrical stimulation was modified compared to the other modalities to limit participant discomfort and avoid painful percepts.

Feedback thresholds

Feedback detection thresholds were measured for each subject to calibrate the stimulation before the training phase. The stimulus intensity was slowly increased until the subject indicated that the stimulation was felt. This was repeated three times and the lowest reported stimulus level was used to set the range of stimulus. A proportional mapping was used to convert the hand’s force detection range to the subject’s stimulus detection range. The low end of the force detection range was set slightly below the reported detection threshold (~1% PWM duty cycle decrease for vibration and skin deformation feedback; ~10 mV decrease for electrical stimulation). The maximum feedback was set to correspond to 1.2× the breaking threshold of the heaviest egg (1.2 × 16.19 N ≈ 19.4 N). The maximum stimulus level was set based on the type of feedback. The LRAs were set to their maximum achievable intensity for the maximum stimulus level. The electrical stimulus maximum level was set based on the subject’s comfort and to avoid muscle twitch. The feedback detection threshold was measured again after the training phase, immediately preceding CCE score assessment.
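The proportional mapping from measured grip force to stimulus intensity could look like the following sketch. The linear form and the clamping at both ends are assumptions, as are the function names and the example threshold values (expressed here as PWM duty cycle percentages); only the 1.2× factor and the 16.19 N breaking threshold come from the text.

```python
HEAVIEST_EGG_BREAK_N = 16.19              # breaking threshold of the heaviest egg
FORCE_MAX_N = 1.2 * HEAVIEST_EGG_BREAK_N  # force mapped to maximum feedback, about 19.4 N


def stimulus_range(detection_threshold, margin, stimulus_max):
    """Return (low, high) stimulus limits, with low set just below threshold."""
    return detection_threshold - margin, stimulus_max


def force_to_stimulus(force_n, stim_low, stim_high):
    """Linearly map force in [0, FORCE_MAX_N] onto [stim_low, stim_high].

    Forces outside the range are clamped (an assumption of this sketch).
    """
    fraction = min(max(force_n / FORCE_MAX_N, 0.0), 1.0)
    return stim_low + fraction * (stim_high - stim_low)


# Hypothetical calibration: threshold at 20% duty cycle, 1% margin, 100% maximum.
low, high = stimulus_range(detection_threshold=20.0, margin=1.0, stimulus_max=100.0)
at_rest = force_to_stimulus(0.0, low, high)
at_half = force_to_stimulus(0.5 * FORCE_MAX_N, low, high)
saturated = force_to_stimulus(25.0, low, high)
print(at_rest, at_half, saturated)
```

With this mapping, zero force produces a stimulus just below the detection threshold, so the first perceptible feedback coincides with the onset of contact force.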

Spatial separation

For feedback with low spatial separation, the target feedback was applied at the fingertip to match the visually observed contact point on the prosthetic hand. For feedback with high spatial separation, the actuators were attached to the wrist for skin deformation and vibration. For the electrical stimulation low spatial separation group, the self-adhesive electrical stimulation pads were wrapped around the index finger or thumb. Electrical stimulation on the wrist interfered with EMG control signals, so for the high spatial separation group the pads were placed on the back of the hand near the major knuckles of the index finger and thumb.

Electromyographic control

During training, the one-degree-of-freedom prosthetic hand of the bypass was controlled with a Complete Control (Coapt) pattern recognition system. Participants trained hand-open and hand-close control with isometric wrist flexor and wrist extensor muscle contractions, using the commercial software provided by Coapt.

Training protocol

Participants in the short-training group completed five training sessions, each lasting ≤10 minutes with 10-minute intervening breaks, for 50 minutes of total training. Extended-training participants completed eight sessions for 80 minutes of total training. In each training session participants attempted to move instrumented mechanical eggs of three different weights and “breaking” thresholds over a 5 cm high barrier (Fig. 7). The lightest egg weighed 2.78 N with a breaking threshold of 6.84 N. The medium-weight egg weighed 5.45 N with a breaking threshold of 10.52 N. The heaviest egg weighed 9.55 N with a breaking threshold of 16.19 N. Each session ended after 100 movement attempts or ten minutes, whichever occurred first. Successful and unsuccessful movements were recorded manually by the experimenter. When too much force was applied to the mechanical egg, an on-egg LED would illuminate to indicate a broken egg. After breaking a mechanical egg, the subject had to release the egg and restart the movement. Participants wore earplugs and over-ear noise-canceling headphones playing Brownian noise to mask actuator and background noise.

CCE assessment

Following the bypass training, the participant’s CCE score was assessed25 using a modified protocol35. Participants were asked to make speeded responses to select the location of target feedback presented randomly to one of two locations (see Fig. 1) using foot pedals. Left and right foot pedals corresponded to thumb and finger feedback locations, or the spatially separated corollaries of those locations. Participants focused on a centrally-located fixation LED that illuminated at the start of each trial. After 1000 ms, the left or right distractor LED attached to the prosthesis would illuminate concurrent with target feedback applied to the participant’s hand or arm at the left or right location (see Fig. 2). Four conditions were possible, congruent and incongruent stimuli for left or right target feedback, and were presented in a random order over sequential trials. Visual and target feedback was provided for 250 ms and participants were asked to respond as quickly as possible to select the target feedback location.
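The trial structure described above can be sketched in Python as follows. This is only an illustration: the text states that the four conditions were presented in random order, whereas the sketch additionally assumes that they are balanced within a block (for example, 16 of each in a 64-trial block). The names `make_block`, `target` and `distractor` are hypothetical.

```python
import random
from itertools import product

SIDES = ("left", "right")


def make_block(n_trials=64, seed=None):
    """Return a shuffled list of trials covering the four conditions equally."""
    # Each condition is a (target side, congruent?) pair: 2 x 2 = 4 conditions.
    conditions = list(product(SIDES, (True, False)))
    if n_trials % len(conditions) != 0:
        raise ValueError("n_trials must be a multiple of 4 for a balanced block")
    trials = conditions * (n_trials // len(conditions))
    random.Random(seed).shuffle(trials)  # the seed only makes the order repeatable
    block = []
    for target, congruent in trials:
        other = SIDES[1] if target == SIDES[0] else SIDES[0]
        block.append({
            "target": target,                               # side of the target feedback
            "distractor": target if congruent else other,   # side of the distractor LED
            "congruent": congruent,
        })
    return block
```

In a congruent trial the distractor LED and the target feedback share a side; in an incongruent trial they are on opposite sides.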

Participants first completed three familiarization sessions of ten trials each and then four assessment blocks of 64 trials each. Participants were seated beside a height adjustable table that was set to a comfortable height. A pillow was placed under each subject’s arm to ensure forces and vibrations were not transmitted through the table surface. CCE score for each block was computed as mean incongruent time minus mean congruent time. The overall CCE score was calculated as the mean of the scores from the four blocks.
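The score computation described above amounts to a difference of means per block followed by a mean across blocks. A minimal Python sketch is given below, assuming that each trial is represented as a `(is_congruent, reaction_time_ms)` pair and that every supplied trial is included; the source does not state here how incorrect or missed responses were handled, and the function names are hypothetical.

```python
from statistics import mean


def block_cce(trials):
    """CCE score for one block: mean incongruent time minus mean congruent time.

    `trials` is a sequence of (is_congruent, reaction_time_ms) pairs.
    """
    congruent = [rt for is_congruent, rt in trials if is_congruent]
    incongruent = [rt for is_congruent, rt in trials if not is_congruent]
    if not congruent or not incongruent:
        raise ValueError("a block needs both congruent and incongruent trials")
    return mean(incongruent) - mean(congruent)


def overall_cce(blocks):
    """Overall CCE score: mean of the per-block scores."""
    return mean(block_cce(block) for block in blocks)


# Hypothetical reaction times (ms), for illustration only.
example_blocks = [
    [(True, 500.0), (True, 520.0), (False, 600.0), (False, 620.0)],
    [(True, 400.0), (False, 450.0)],
]
example_score = overall_cce(example_blocks)
```

Averaging per-block scores, rather than pooling all trials, gives each block equal weight even if blocks contain different numbers of usable trials.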

Statistical analysis

Statistical analysis was run using IBM’s SPSS Statistics and MATLAB software. A multi-way ANOVA was run with dummy categorical variables used to represent feedback modality, spatial separation and training level. Effect size was calculated as ω² and reported as its square root, ω (ref. 47). For the linear regression analysis (see equation (1)), only extended-training data were used. CCE score was the dependent variable and Feedback Location and baseline CCE score were the independent variables. The Feedback Location variable was set to either zero (distances of 0 to 3 cm, at or near the fingertips) or one (distances of more than 12 cm from the fingertips, on the wrist or back of hand). Baseline CCE score for each modality was set as the mean CCE score for a particular feedback type (71 for skin deformation feedback, 120.5 for vibration, 84.1 for electrical stimulation).
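The coding of the two regression predictors can be sketched as follows. The baseline values and the distance ranges are taken from the paragraph above; the function names, the dictionary keys, and the choice to reject distances that fall between the two stated ranges are assumptions for illustration. The sketch only builds the predictor values and does not reproduce equation (1) or the fitting procedure.

```python
# Baseline CCE score per feedback modality, as stated in the text.
BASELINE_CCE = {
    "skin deformation": 71.0,
    "vibration": 120.5,
    "electrical stimulation": 84.1,
}


def feedback_location(distance_cm):
    """Dummy-code the distance between feedback site and fingertip.

    0: at or near the fingertips (0 to 3 cm)
    1: on the wrist or back of the hand (more than 12 cm)
    """
    if 0 <= distance_cm <= 3:
        return 0
    if distance_cm > 12:
        return 1
    # Distances between the two ranges are not described in the source.
    raise ValueError("distance falls outside the two ranges used in the study")


def predictor_row(modality, distance_cm):
    """Return (Feedback Location, baseline CCE score) for one participant."""
    return (feedback_location(distance_cm), BASELINE_CCE[modality])
```

For example, `predictor_row("vibration", 0)` gives `(0, 120.5)`, the row for a participant who received vibration feedback at the fingertip.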

Data availability

All data are available in the Dataset 1 file that accompanies this manuscript.

Change history

12 September 2018

A correction to this article has been published and is linked from the HTML and PDF versions of this paper. The error has not been fixed in the paper.

References

Østlie, K. et al. Prosthesis rejection in acquired major upper-limb amputees: a population-based survey. Disabil. Rehabil. Assist. Technol. 7, 294–303 (2011).

Biddiss, E. A. & Chau, T. T. Upper limb prosthesis use and abandonment: A survey of the last 25 years. J. Prosthet. Orthot. Int. 31, 236–257 (2007).

Raspopovic, S. et al. Restoring natural sensory feedback in real-time bidirectional hand prostheses. Sci. Transl. Med. 6, 222ra19 (2014).

Tan, D. W. et al. A neural interface provides long-term stable natural touch perception. Sci. Transl. Med. 6, 257ra138 (2014).

Ortiz-Catalan, M., Håkansson, B. & Brånemark, R. An osseointegrated human-machine gateway for long-term sensory feedback and motor control of artificial limbs. Sci. Transl. Med. 6, 257re6 (2014).

D’Anna, E. et al. A somatotopic bidirectional hand prosthesis with transcutaneous electrical nerve stimulation based sensory feedback. Sci. Rep. 7(1), 10930 (2017).

Flesher, S. N. et al. Intracortical microstimulation of human somatosensory cortex. Sci. Transl. Med. 8, 361ra141 (2016).

Kim, S. et al. Behavioral assessment of sensitivity to intracortical microstimulation of primate somatosensory cortex. Proc. Natl. Acad. Sci. USA 112(49), 15202–15207 (2015).

O’Doherty, J. E., Lebedev, M. A., Hanson, T. L., Fitzsimmons, N. A. & Nicolelis, M. A. A brain-machine interface instructed by direct intracortical microstimulation. Front. Integr. Neurosci. 3, 20 (2009).

Romo, R., Hernández, A., Zainos, A., Brody, C. D. & Lemus, L. Sensing without touching: psychophysical performance based on cortical microstimulation. Neuron. 26(1), 273–278 (2000).

Clark, G. A., Ledbetter, N. M., Warren, D. J. & Harrison, R. R. Recording sensory and motor information from peripheral nerves with Utah Slanted Electrode Arrays. Conf. Proc. IEEE Eng. Med. Biol. Soc. 2011, 4641–4644 (2011).

Mastinu, E., Doguet, P., Botquin, Y., Håkansson, B. & Ortiz-Catalan, M. Embedded System for Prosthetic Control Using Implanted Neuromuscular Interfaces Accessed Via an Osseointegrated Implant. IEEE Trans. Biomed. Circuits Syst. 11, 867–877 (2017).

Christie, B. P. et al. Long-term stability of stimulating spiral nerve cuff electrodes on human peripheral nerves. J. Neuroeng. Rehabil. 14, 70 (2017).

Mathiowetz, V., Volland, G., Kashman, N. & Weber, K. Adult norms for the Box and Block Test of manual dexterity. Am. J. Occup. Ther. 39, 386–391 (1985).

Mathiowetz, V., Weber, K., Kashman, N. & Volland, G. Adult Norms for the Nine Hole Peg Test of Finger Dexterity. Occup. Ther. J. Res. 5, 24–38 (1985).

Light, C. M., Chappell, P. H. & Kyberd, P. J. Establishing a standardized clinical assessment tool of pathologic and prosthetic hand function: Normative data, reliability, and validity. Arch. Phys. Med. Rehabil. 83, 776–783 (2002).

Hermansson, L. M., Fisher, A. G., Bernspång, B. & Eliasson, A.-C. Assessment of capacity for myoelectric control: a new Rasch-built measure of prosthetic hand control. J. Rehabil. Med. 37, 166–171 (2005).

Marasco, P. D., Kim, K., Colgate, J. E., Peshkin, M. A. & Kuiken, T. A. Robotic touch shifts perception of embodiment to a prosthesis in targeted reinnervation amputees. Brain. 134, 747–758 (2011).

Tyler, D. J. Neural interfaces for somatosensory feedback. Curr. Opin. Neurol. 28, 574–581 (2015).

Ortiz-Catalan, M. et al. Phantom motor execution facilitated by machine learning and augmented reality as treatment for phantom limb pain: a single group, clinical trial in patients with chronic intractable phantom limb pain. Lancet. 388, 2885–2894 (2016).

Makin, T. R., de Vignemont, F. & Faisal, A. A. Neurocognitive barriers to the embodiment of technology. Nat. Biomed. Eng. 1, 0014 (2017).

Davis, T. S. et al. Restoring motor control and sensory feedback in people with upper extremity amputations using arrays of 96 microelectrodes implanted in the median and ulnar nerves. J. Neural Eng. 13, 036001 (2016).

Kim, L. H., McLeod, R. S. & Kiss, Z. H. T. A new psychometric questionnaire for reporting of somatosensory percepts. J. Neural Eng. 15, 013002 (2018).

Shannon, G. F. A comparison of alternative means of providing sensory feedback on upper limb prostheses. Med. Biol. Eng. Comput. 14, 289–294 (1976).

Spence, C., Pavani, F. & Driver, J. Spatial constraints on visual-tactile cross-modal distractor congruency effects. Cogn. Affect. Behav. Neurosci. 4, 148–169 (2004).

Spence, C., Nicholls, M. E., Gillespie, N. & Driver, J. Cross-modal links in exogenous covert spatial orienting between touch, audition, and vision. Percept. Psychophys. 60(4), 544–557 (1998).

Spence, C., Pavani, F., Maravita, A. & Holmes, N. Multisensory contributions to the 3-D representation of visuotactile peripersonal space in humans: Evidence from the crossmodal congruency task. J. Physiol. Paris. 98(1–3), 171–189 (2004).

Zopf, R., Savage, G. & Williams, M. A. Crossmodal congruency measures of lateral distance effects on the rubber hand illusion. Neuropsychologia 48(3), 713–725 (2010).

Maravita, A., Spence, C., Kennett, S. & Driver, J. Tool-use changes multimodal spatial interactions between vision and touch in normal humans. Cognition. 83(2), B25–34 (2002).

Frings, C. & Spence, C. Crossmodal congruency effects based on stimulus identity. Brain Res. 1354, 113–122 (2010).

Zopf, R., Savage, G. & Williams, M. A. The Crossmodal Congruency Task as a Means to Obtain an Objective Behavioral Measure in the Rubber Hand Illusion Paradigm. J. Vis. Exp. 77, e50530 (2013).

Spence, C. The cognitive neuroscience of incorporation: Body image adjustment and neuroprosthetics. In Kensaku, K., Cohen, L. G., & Birbaumer, N. (Eds), Clinical Systems Neuroscience. New York, NY: Springer. pp 151–168 (2015).

Holmes, N. P. & Spence, C. The body schema and the multisensory representation(s) of peripersonal space. Cogn. Process. 5(2), 94–105 (2004).

Cardinali, L. et al. Tool-use induces morphological updating of the body schema. Curr. Biol. 19(12), R478–9 (2009).

Gill, S., Blustein, D., Wilson, A. W. & Sensinger, J. Crossmodal congruency effect scores decrease with repeat test exposure. Preprint at https://www.biorxiv.org/content/early/2017/09/10/186825 (2018).

Wilson, A. W., Blustein, D. H. & Sensinger, J. W. A third arm - Design of a bypass prosthesis enabling incorporation. IEEE Int. Conf. Rehabil. Robot. 2017, 1381–1386 (2017).

Mayer, A. R., Franco, A. R., Canive, J. & Harrington, D. L. The effects of stimulus modality and frequency of stimulus presentation on cross-modal distraction. Cereb. Cortex 19, 993–1007 (2009).

Marini, F. et al. Crossmodal representation of a functional robotic hand arises after extensive training in healthy participants. Neuropsychologia 53, 178–186 (2014).

Lieber, R. L. Statistical significance and statistical power in hypothesis testing. J. Orthop. Res. 8, 304–309 (1990).

Cipriani, C., Segil, J. L., Clemente, F., ff Weir, R. F. & Edin, B. Humans can integrate feedback of discrete events in their sensorimotor control of a robotic hand. Exp. Brain Res. 232, 3421–3429 (2014).

Blustein, D., & Sensinger, J. W. Extending a Bayesian estimation approach to model human movements. Program No. 486.10. 2016 Neuroscience Meeting Planner. San Diego, CA: Soc. for Neurosci. (2016).

Hussaini, A., Zinck, A. & Kyberd, P. Categorization of compensatory motions in transradial myoelectric prosthesis users. J. Prosthet. Orthot. Int. 41, 286–293 (2016).

Hebert, J. S. & Lewicke, J. Case report of modified Box and Blocks test with motion capture to measure prosthetic function. J. Rehabil. Res. Dev. 49, 1163–1174 (2012).

Hermansson, L. M., Bodin, L. & Eliasson, A.-C. Intra- and Inter-Rater Reliability of the Assessment of Capacity for Myoelectric Control. J. Rehabil. Med. 38, 118–123 (2006).

Wright, V. Prosthetic Outcome Measures for Use With Upper Limb Amputees: A Systematic Review of the Peer-Reviewed Literature, 1970 to 2009. J. Prosthet. Orthot. 21, P3–P63 (2009).

Schoepp, K., Dawson, M., Carey, J., & Hebert, J. Design and integration of an inexpensive wearable tactor system. Myoelectric Controls Sympos. Fredericton, NB, Canada. ID# 89, (2017).

Field, A. Discovering Statistics Using SPSS. Los Angeles, CA: SAGE Publications (2009).

Acknowledgements

The authors thank Heather Daley, Satinder Gill, and Wendy Hill for their assistance in the implementation of this work. This work was partially funded by the Defense Advanced Research Projects Agency (DARPA) through the HAPTIX program (award number N66001-15-C-4015) and the New Brunswick Health Research Foundation (NBHRF) through an Establishment Grant.

Author information

Contributions

D.B. wrote the main manuscript text and prepared the figures. A.W. designed and built the experimental hardware and collected all data. J.S. edited the manuscript. All authors contributed to the experimental design, data analysis and manuscript review.

Ethics declarations

Competing Interests

J. Sensinger is a co-owner of Coapt Engineering whose product was used in this study.

Additional information

Publisher's note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Blustein, D., Wilson, A. & Sensinger, J. Assessing the quality of supplementary sensory feedback using the crossmodal congruency task. Sci Rep 8, 6203 (2018). https://doi.org/10.1038/s41598-018-24560-3

DOI: https://doi.org/10.1038/s41598-018-24560-3
