Abstract

Everyday decisions frequently require choosing among multiple alternatives. Yet the optimal policy for such decisions is unknown. Here we derive the normative policy for general multi-alternative decisions. This strategy requires evidence accumulation to nonlinear, time-dependent bounds that trigger choices. A geometric symmetry in those boundaries allows the optimal strategy to be implemented by a simple neural circuit involving normalization with fixed decision bounds and an urgency signal. The model captures several key features of the response of decision-making neurons as well as the increase in reaction time as a function of the number of alternatives, known as Hick’s law. In addition, we show that in the presence of divisive normalization and internal variability, our model can account for several so-called ‘irrational’ behaviors, such as the similarity effect as well as the violation of both the independence of irrelevant alternatives principle and the regularity principle.
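The circuit described in the abstract can be illustrated with a toy simulation. The following is a minimal sketch, not the authors' MATLAB implementation: the function name `simulate_trial`, the specific divisive form `x / (sigma + sum(x))`, the additive linear urgency signal, and all parameter values are assumptions chosen only for illustration.

```python
import numpy as np

def simulate_trial(drifts, rng, dt=0.005, noise_sd=1.0, sigma=1.0,
                   bound=1.0, urgency_slope=0.5, max_time=10.0):
    """Simulate one trial of a toy race model; return (choice, decision_time).

    Hypothetical sketch: rectified accumulators, divisive normalization,
    an additive urgency signal that grows linearly in time, and a fixed bound.
    """
    drifts = np.asarray(drifts, dtype=float)
    x = np.zeros(drifts.size)            # one accumulator per alternative
    n_steps = int(round(max_time / dt))
    t = 0.0
    for step in range(1, n_steps + 1):
        t = step * dt
        # Momentary evidence: drift plus Gaussian diffusion noise.
        noise = noise_sd * np.sqrt(dt) * rng.standard_normal(drifts.size)
        # Rectify so that activities stay non-negative, like firing rates.
        x = np.maximum(x + drifts * dt + noise, 0.0)
        # Divisive normalization: each activity is divided by the pooled activity.
        normalized = x / (sigma + x.sum())
        # Urgency is added equally to all alternatives; the bound itself is fixed.
        y = normalized + urgency_slope * t
        if y.max() >= bound:
            break
    # Urgency is shared, so the largest accumulator determines the choice.
    return int(np.argmax(x)), t

rng = np.random.default_rng(0)
choice, rt = simulate_trial([3.0, 0.0, 0.0], rng, noise_sd=0.5)
```

Because the normalized activity stays below 1 and the urgency term grows without limit, every trial in this sketch terminates by roughly `bound / urgency_slope`. The rising urgency signal has the same effect as a bound that collapses over time.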

Data availability

Data sharing is not applicable to this article since no datasets were generated or analyzed during the current study.

Code availability

The results of this article were generated using code written in MATLAB. The code is available at https://github.com/DrugowitschLab/MultiAlternativeDecisions.

Acknowledgements

A.P. was supported by the Swiss National Foundation (grant no. 31003A_143707) and a grant from the Simons Foundation (no. 325057). J.D. was supported by a Scholar Award in Understanding Human Cognition by the James S. McDonnell Foundation (grant no. 220020462). We dedicate this paper to the memory of S. Tajima, who tragically passed away in August 2017.

Author information

Contributions

S.T., J.D. and A.P. conceived the study. S.T. and J.D. developed the theoretical framework. S.T., J.D. and N.P. performed the simulations and conducted the mathematical analysis. S.T., J.D., N.P. and A.P. interpreted the results and wrote the paper.

Ethics declarations

Competing interest

The authors declare no competing interest.

Additional information

Peer review information: Nature Neuroscience thanks Jennifer Trueblood and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Integrated supplementary information

Supplementary Figure 1 Addition of variability to the accumulator affects models’ relative performance.

The race model variants without constrained evidence accumulation approximating the optimal policy perform much worse than our model’s variants with that constraint, a result that is demonstrated in Fig. 5c. Here, we show that reducing the amount of variability in the decision bounds brings the models’ relative performances closer to each other as was the case in Fig. 3. As in Figs. 3 and 5c, this figure shows the reward rate of the race model with (green) and without (orange) the urgency signal relative to our full model with urgency and constrained evidence accumulation (blue). Each point represents the mean reward rate across \(10^6\) simulated trials.

Supplementary Figure 2 Dependencies of the stopping boundaries on task parameters.

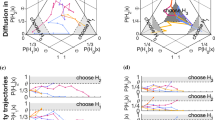

We show how the decision boundaries change as a function of time (a), inter-trial interval (b), noise variance (c), and with symmetric (d) and asymmetric (e) prior mean of reward. (a) Dynamics of decision boundaries over time, t. The decision boundaries approach each other over time. Here, we used the following parameters: reward prior, \(\left( {\bar z_1,\bar z_2,\bar z_3} \right) = \left( {\bar z,\bar z,\bar z} \right) = (0.1,0.1,0.1)\); inter-trial interval (ITI, including non-decision time), \(t_w = 0.5\); noise variance, \(\sigma _x^2 = 2\). In (b)-(e) we varied a single parameter, while keeping all other parameters constant. The shown boundaries are the initial ones, at time t = 0. (b) Effect of inter-trial interval (ITI), \(t_w\). The boundaries start further apart for longer ITIs. \(t_w = 0.5\) corresponds to the leftmost plot in panel a. (c) Effect of the evidence noise variance, \(\sigma _x^2\). The boundaries start further apart for larger noise. \(\sigma _x^2 = 2\) corresponds to the leftmost plot in panel a. (d) Effect of the reward prior mean, \(\bar z\). The boundaries start closer to each other for larger mean rewards. \(\bar z = 0.1\) corresponds to the leftmost plot in panel a. (e) Effect of the asymmetric reward prior, \((\bar z_1,\bar z_2,\bar z_3)\), where \(\bar z_1\), \(\bar z_2\), and \(\bar z_3\) can be different from each other. The boundaries remain parallel to the cube diagonal but the asymmetric priors cause a shift of the boundary positions when projected on the triangle orthogonal to the diagonal, such that the boundaries corresponding to the most rewarded options start closer to the center of the triangle. \((\bar z_1,\bar z_2,\bar z_3) = (0.1,0.1,0.1)\) is identical to the leftmost plot in panel a. We have not been able to derive analytical approximations to the stopping bounds but note that the neural network provides a close approximation to the optimal bound with only three parameters. Given the shape and time dependence of the bounds, it is unlikely that an analytical solution with fewer parameters can be obtained.
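The caption's geometric statement, that the boundaries are parallel to the cube diagonal and can therefore be viewed on the triangle orthogonal to it, can be made concrete with a small sketch. The helper names `project_off_diagonal` and `triangle_coordinates` and the particular orthonormal basis are assumptions for illustration; any orthonormal basis of the plane orthogonal to (1, 1, 1) would serve equally well.

```python
import numpy as np

def project_off_diagonal(x):
    """Remove the component of x along the (1, ..., 1) diagonal."""
    x = np.asarray(x, dtype=float)
    # Subtracting the mean is the orthogonal projection onto the plane sum(x) = 0.
    return x - x.mean(axis=-1, keepdims=True)

def triangle_coordinates(x):
    """2D coordinates of 3D point(s) within the plane orthogonal to (1, 1, 1)."""
    # One possible orthonormal basis of that plane (a hypothetical choice).
    e1 = np.array([1.0, -1.0, 0.0]) / np.sqrt(2.0)
    e2 = np.array([1.0, 1.0, -2.0]) / np.sqrt(6.0)
    p = project_off_diagonal(x)
    return np.stack([p @ e1, p @ e2], axis=-1)

state = np.array([0.4, 0.1, -0.2])
shifted = state + 5.0            # move along the diagonal only
coords = triangle_coordinates(state)
coords_shifted = triangle_coordinates(shifted)
```

Moving a point along the diagonal leaves its triangle coordinates unchanged. This is why a boundary parallel to the diagonal can be drawn as a curve in the two-dimensional triangle without losing information.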

Supplementary Figure 3 The optimal urgency signal is only weakly dependent on accumulation cost and nonlinearity.

Each panel shows combinations of an urgency signal parameter (vertical axis; offset or slope) and either cost (left panels) or nonlinearity (right panels), with the reward rate (value-based decisions; top) or correct rate (perceptual decisions; bottom) shown as a color gradient. For each parameter combination, the reward and correct rates were found by simulating 500,000 trials. The black line in each panel indicates, for each cost or nonlinearity setting, the value of the urgency signal parameter that maximizes the reward/correct rate. This line is noisy due to the simulation-based stochastic evaluation of the reward/correct rates. In general, both optimal slope and offset depend only weakly on the accumulation cost. The same applies to the nonlinearity, except for a narrow band around 1.5, where it is best to decrease both slope and offset for an increase in this nonlinearity.
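The black line described in this caption amounts to a column-wise maximization over a grid of simulated rates. The sketch below shows that step on a made-up surface; the function name `best_parameter_per_setting`, the quadratic toy surface, and the noise level are all assumptions and do not reproduce the paper's simulations.

```python
import numpy as np

def best_parameter_per_setting(param_values, rate_grid):
    """Return, for each column of rate_grid, the parameter value with the highest rate.

    rate_grid[i, j] is the estimated rate for param_values[i] at the j-th
    cost (or nonlinearity) setting.
    """
    param_values = np.asarray(param_values, dtype=float)
    rate_grid = np.asarray(rate_grid, dtype=float)
    return param_values[np.argmax(rate_grid, axis=0)]

# Toy surface (made up): the rate peaks at slope 1.0 for every cost setting.
slopes = np.linspace(0.0, 2.0, 21)
costs = np.linspace(0.0, 1.0, 6)
surface = -(slopes[:, None] - 1.0) ** 2 + 0.0 * costs[None, :]

# Simulation-based estimates are stochastic; mimic that with additive noise.
rng = np.random.default_rng(0)
noisy_surface = surface + 0.01 * rng.standard_normal(surface.shape)

exact_line = best_parameter_per_setting(slopes, surface)
noisy_line = best_parameter_per_setting(slopes, noisy_surface)
```

On the noise-free surface the line is flat, whereas the noisy estimates can move it between neighboring grid values. This illustrates why a maximizer computed from stochastic rate estimates appears jagged even when the underlying optimum barely changes.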

About this article

Cite this article

Tajima, S., Drugowitsch, J., Patel, N. et al. Optimal policy for multi-alternative decisions. Nat Neurosci 22, 1503–1511 (2019). https://doi.org/10.1038/s41593-019-0453-9

DOI: https://doi.org/10.1038/s41593-019-0453-9
