Abstract

Machine learning is becoming a valuable tool for scientific discovery. Particularly attractive is the application of machine learning methods to materials development, which enables innovation through the discovery of new and better functional materials. To apply machine learning to actual materials development, close collaboration between scientists and machine learning tools is necessary. However, such collaboration has so far been impeded by the black-box nature of many machine learning algorithms: it is often difficult for scientists to interpret data-driven models from the viewpoint of materials science and physics. Here, we demonstrate the development of spin-driven thermoelectric materials based on the anomalous Nernst effect by using an interpretable machine learning method called factorized asymptotic Bayesian inference hierarchical mixture of experts (FAB/HMEs). Drawing on prior knowledge of materials science and physics, we were able to extract from the interpretable model some surprising correlations and new knowledge about spin-driven thermoelectric materials. Guided by these findings, we carried out actual material synthesis that led to the identification of a novel spin-driven thermoelectric material. This material shows the largest thermopower among spin-driven thermoelectric materials reported to date.


Introduction

Recent progress in materials science technologies enables the collection of large volumes of materials data in a short time1,2,3,4. Accordingly, tools to process such big data sets are becoming necessary. Machine learning technologies are extremely promising, not only because they can rapidly analyze data5,6,7,8, but also because of their potential to discover new knowledge not rooted in conventional theories.

To apply machine learning for actual materials development, cooperation between scientists and machine learning tools is necessary. Materials scientists often try to understand the rationale behind the data-driven models in order to obtain some actionable information to guide materials development. However, such attempts have been impeded so far by the low interpretability of many machine learning methods. For example, it is difficult for a human to understand the models constructed by a deep neural network9, expressed as the connections between large numbers of perceptrons (neurons). Therefore, the notion of interpretable machine learning (explainable or transparent machine learning), which has not only high predictive ability but also high interpretability, has recently seen a resurgence10,11, especially in the field of scientific discovery.

Here, we demonstrate actual materials development using a state-of-the-art interpretable machine learning method called factorized asymptotic Bayesian inference hierarchical mixture of experts (FAB/HMEs)12,13. The development demonstrates the synergy between FAB/HMEs and materials scientists. In materials development, a machine learning algorithm must meet three requirements: “sparse modeling”, “prediction accuracy”, and “interpretability”, as shown in Fig. 1. Materials-related data sets are often quite small compared with those in other scientific fields (e.g., astrophysics or particle physics), because of the time needed to carry out experiments and calculations/simulations. This results in significant data sparsity in materials space; therefore, sparse modeling, which automatically selects only the important descriptors (attributes) and reduces the dimension of the search space, is extremely useful. One of the most popular sparse modeling methods is LASSO, a linear model with L1 regularization14. However, such linear models do not always achieve high prediction accuracy, because materials data often include non-linear relationships (for example, due to proximity to phase transitions). To achieve high prediction accuracy, non-linear models such as support vector machines (SVM), deep neural networks (NN), or random forests (RF) are often required14. However, such non-linear models commonly lack interpretability. Although they can tell us which descriptors are important for the model, they rarely clarify how the descriptors actually contribute to it, so extracting actionable information from them is not easy.

Fig. 1 Three requirements of machine learning in materials development. Good collaboration between machine learning tools and scientists in materials development requires sparse modeling, prediction accuracy, and interpretability. One type of interpretable machine learning, called factorized asymptotic Bayesian inference hierarchical mixture of experts (FAB/HMEs), satisfies all three criteria.

The interpretable machine learning method FAB/HMEs constructs a piecewise sparse linear model12 that meets the three requirements of “sparse modeling”, “prediction accuracy”, and “interpretability”. Therefore, the actionable information that FAB/HMEs extracts from the data can lead us to discoveries of new materials.

As a case study, we applied interpretable machine learning to the development of a new thermoelectric material. Thermoelectric technologies are becoming indispensable in the quest for a sustainable future15,16. In particular, emerging spin-driven thermoelectric (STE) materials, which employ the spin-Seebeck effect (SSE)17,18 and the anomalous Nernst effect (ANE)19,20,21, have garnered much attention as a promising path toward low-cost, versatile thermoelectric technology with easily scalable manufacturing. In contrast to conventional thermoelectric (TE) devices, STE devices consist of simple layered structures and can be manufactured with straightforward processes such as sputtering, coating, and plating, resulting in lower fabrication costs. An added advantage of STE devices is that they can double as heat-flow sensors, owing to their lower thermal resistance22. However, STE material development is hampered by the lack of understanding of the fundamental mechanism of STE phenomena. Research on STE phenomena, called spin caloritronics, studies the complicated relationship between spin, heat, and charge currents and is at the cutting edge of materials science and physics23. A data-driven approach using machine learning can exhibit its full potential in such a rapidly developing scientific field.

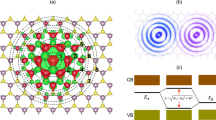

Figure 2 shows the general configuration of an STE device using the anomalous Nernst effect (ANE). It is composed of a magnetic layer and a single-crystal substrate. When a temperature difference ΔT and a magnetic field H are applied along the z and x directions, respectively, the heat current is converted into an electric current by the ANE through spin–orbit interaction, and the thermopower SSTE (one of the most important figures of merit for thermoelectric phenomena24) can be detected along the y direction. In searching for ANE-based STE materials with improved thermopower SSTE, we used interpretable machine learning to discover non-trivial behaviors of the material parameters governing STE phenomena. We then leveraged this machine-learning-informed knowledge to discover a high-performance STE material whose thermopower is greater than that of the best known STE material to date25.

Fig. 2 Schematic of the spin-driven thermoelectric (STE) device. The device is composed of a magnetic layer and a single-crystal substrate. When a temperature difference ΔT and a magnetic field H are applied along the z and x directions, respectively, the thermopower SSTE can be detected along the y direction. The dimensions \(L_x\), \(L_y\), and \(L_z^{\mathrm{film}}\) are 2 mm, 8 mm, and 150 nm, respectively. The substrate thicknesses \(L_z^{\mathrm{sub.}}\) for Si, AlN, and GGG are 381, 450, and 500 μm, respectively.

Results

Material data

Figure 3a–c shows the material data of STE devices using \(\mathrm{M}_{100-x}\mathrm{Pt}_x\) binary alloys, where M = Fe, Co, and Ni. The thermopower SSTE values are experimental data measured under different experimental conditions (different substrates) C ≡ {Si, AlN, GGG}. A temperature difference ΔT was applied between the top and bottom of the STE tips shown in Fig. 2 by sandwiching the tips between copper heat baths at 300 K and 300 + ΔT K. A magnetic field H was applied along the x direction. Under these conditions, the thermopower SSTE can be detected along the y direction. Details of the experimental conditions are given in Supplementary Methods. The material parameters X ≡ {X1, X2, X3, …, X14}, whose brief descriptions are shown in Fig. 3d, were obtained by density functional theory (DFT) calculations based on composition data measured experimentally by X-ray fluorescence (XRF). However, it is difficult to simulate disordered (random) phases with common DFT methods such as the projector-augmented wave (PAW) method. For example, simulating the \(\mathrm{Fe}_{50.1}\mathrm{Pt}_{49.9}\) binary alloy would require a very large unit cell, whose calculation is not feasible. Therefore, a Green's-function-based ab initio method, the Korringa-Kohn-Rostoker coherent-potential approximation (KKR-CPA) method26, was employed to calculate the disordered \(\mathrm{M}_{100-x}\mathrm{Pt}_x\) binary alloys. In KKR-CPA, the CPA handles random (disordered) material systems and allows the band structures of multicomponent materials to be simulated with a single unit cell; the method is known for its good agreement with experimental results, especially in disordered alloy systems27,28,29. Details about the data, experiments, DFT (KKR-CPA) calculations, data preprocessing, and the reasons for using these material parameters X are given in Supplementary Methods.
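The supercell argument can be made concrete with a little arithmetic. In an ordered periodic cell every atom count must be an integer, so the smallest cell that reproduces a composition exactly is set by the denominator of the reduced atomic fraction. The sketch below assumes a plain binary cell and ignores any additional symmetry or shape constraints a real DFT supercell would impose; `min_supercell_atoms` is a hypothetical helper written only for illustration.

```python
from fractions import Fraction

def min_supercell_atoms(at_percent: str) -> int:
    """Smallest atom count N for which (at_percent / 100) * N is an integer.

    at_percent is passed as a decimal string (e.g. "50.1") so it is converted
    to an exact rational number without floating-point error.
    Illustrative helper: real supercells also face symmetry/shape constraints.
    """
    fraction = Fraction(at_percent) / 100  # exact atomic fraction of one element
    return fraction.denominator            # Fraction is always kept in lowest terms

# Fe50.1Pt49.9: 50.1 at.% Fe -> 501/1000, already in lowest terms
n_atoms = min_supercell_atoms("50.1")
n_fe = Fraction("50.1") / 100 * n_atoms
print(n_atoms, n_fe, n_atoms - n_fe)  # 1000 501 499
```

By contrast, an equiatomic composition such as Fe50Pt50 needs only a two-atom cell, which is why small compositional deviations are what make ordered-cell calculations impractical.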

Machine learning modeling by the interpretable machine learning

We used interpretable machine learning to construct the following data-driven model:

\(S_{\mathrm{STE}} = f(\mathbf{X}, \mathbf{I}, \mathbf{S}, \mathbf{C})\)

where X ≡ {X1, X2, X3, …, X14} are the material parameters, I ≡ {X1X2, X1X3, …, X13X14} their pairwise interaction terms, \({\mathbf{S}} \equiv \{ X_1^2, X_2^2, X_3^2, \ldots, X_{14}^2\}\) their square terms, and C ≡ {CAlN, CSi, CGGG} the experimental condition terms. The experimental condition terms C are binary parameters (i.e., CAlN, CSi, CGGG ∈ {0, 1}). More details are provided in Supplementary Methods. The interpretable machine learning method solves data-classification and data-regression problems simultaneously by maximizing a novel information criterion (the factorized information criterion, FIC) with an expectation-maximization-like algorithm (factorized asymptotic Bayesian inference, FAB), thus constructing a piecewise sparse linear model12,13.
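To make the model input concrete, the sketch below assembles the feature vector from the 14 descriptors. It assumes, which the text does not state explicitly, that I contains every product \(X_iX_j\) with i < j (14·13/2 = 91 terms) and that each substrate gets its own binary indicator, giving 14 + 91 + 14 + 3 = 122 columns. `build_features` and `SUBSTRATES` are hypothetical names. With an intercept, the three indicators are collinear (they always sum to 1), so a plain linear solver would need one of them dropped.

```python
from itertools import combinations
from typing import Sequence

import numpy as np

# Assumed order of the binary condition terms C_AlN, C_Si, C_GGG (hypothetical)
SUBSTRATES = ("AlN", "Si", "GGG")

def build_features(X: np.ndarray, substrate: Sequence[str]) -> np.ndarray:
    """Stack descriptors X, interactions I, squares S and conditions C.

    X: (n_samples, 14) array of descriptors X1..X14.
    substrate: one substrate name per sample.
    """
    n, p = X.shape
    # I: X_i * X_j for all i < j -> p * (p - 1) / 2 columns
    interactions = np.column_stack(
        [X[:, i] * X[:, j] for i, j in combinations(range(p), 2)]
    )
    squares = X ** 2  # S: X_i^2 -> p columns
    # C: one binary indicator per substrate (all zeros for an unknown name)
    conditions = np.array(
        [[1.0 if s == name else 0.0 for name in SUBSTRATES] for s in substrate]
    )
    return np.hstack([X, interactions, squares, conditions])

rng = np.random.default_rng(0)
X = rng.random((5, 14))  # placeholder descriptor values
F = build_features(X, ["Si", "AlN", "GGG", "Si", "AlN"])
print(F.shape)  # (5, 122)
```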

Figure 4a, b shows the visualization of the model constructed by the interpretable machine learning. The data is classified according to the tree structure, as shown in Fig. 4a. For each data group, regression models (component 1, 2, 3, and 4, as shown in Fig. 4b) are created. Note that the interpretable machine learning does not sequentially carry out the data classification and data regression. The FAB/HMEs searches for proper regression models while at the same time creating proper data groups, thus selecting a better combination of regression models and data groups from a very large space of potential groupings. The prediction accuracy of this model is comparable with other non-linear machine learning models. The details are given in the Discussion section.
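How a fitted piecewise sparse linear model produces a prediction can be sketched as a tree walk: gates route a sample to one leaf, and that leaf's sparse linear expert computes SSTE. This is a simplified, hypothetical sketch. It assumes deterministic threshold gates on a single feature (as gate 1 does with X14, using the 0.6325 split quoted in the Discussion), whereas FAB/HMEs learns probabilistic gates, and the magnetic-branch coefficients below are placeholders, not the values in Fig. 4b.

```python
from dataclasses import dataclass, field
from typing import Dict, Union

@dataclass
class Expert:
    """Leaf: sparse linear model; only non-zero coefficients are stored."""
    coef: Dict[str, float] = field(default_factory=dict)
    intercept: float = 0.0

    def predict(self, x: Dict[str, float]) -> float:
        return self.intercept + sum(w * x[name] for name, w in self.coef.items())

@dataclass
class Gate:
    """Internal node: go left if x[feature] < threshold, else right (assumed hard gate)."""
    feature: str
    threshold: float
    left: "Node"
    right: "Node"

Node = Union[Gate, Expert]

def predict(node: Node, x: Dict[str, float]) -> float:
    # Walk down the tree until a leaf (expert) is reached, then apply its linear model.
    while isinstance(node, Gate):
        node = node.left if x[node.feature] < node.threshold else node.right
    return node.predict(x)

model = Gate(
    "X14", 0.6325,
    left=Expert(),                                  # component 1: S_STE = 0 (non-magnetic)
    right=Expert(coef={"X2X8": 2.0}, intercept=0.5),  # hypothetical magnetic expert
)
print(predict(model, {"X14": 0.2, "X2X8": 0.5}))  # 0.0
print(predict(model, {"X14": 0.9, "X2X8": 0.5}))  # 1.5
```

Because each leaf stores only its non-zero coefficients, reading a component off the tree is the same as reading its regression equation, which is what makes the model interpretable.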

Fig. 4 Interpretable data-driven model created by FAB/HMEs. One type of interpretable machine learning, called factorized asymptotic Bayesian inference hierarchical mixture of experts (FAB/HMEs), creates a piecewise sparse linear model, which is visualized as a tree structure (a) and regression models (b). Scientists can interpret the tree structure and regression models from the viewpoint of materials science and physics.

The structure of the model can guide our understanding of the data; we can focus on each group separately (shown by the tree structure in Fig. 4a), and interpret the regression models (component 1, 2, 3, and 4) for each data group on its own (shown in Fig. 4b). Therefore, it becomes much easier for materials scientists to connect the data-driven model with the existing body of knowledge of physics and materials science.

For instance, the data are first classified by the average spin moment (X14) at gate 1. Data with small average spin moment X14 go to component 1, where the thermopower SSTE is zero (SSTE = 0, as shown in Fig. 4b). This is natural from the viewpoint of materials science and physics: it is known that STE phenomena are not observed in non-magnetic materials20,25, which have a small average spin moment X14. Only magnetic materials with large average spin moment X14 can have finite SSTE values; accordingly, they go to gate 2. Thus, the interpretable model confirms relationships that are consistent with existing knowledge (see Supplementary Discussion).

More importantly, it is sometimes possible to obtain entirely new knowledge from the data-driven model. We notice a positive correlation between SSTE and the product term X2X8, where X2 and X8 are the amount of Pt atoms and the Pt spin polarization, respectively: all regression models for magnetic materials (components 2, 3, and 4) have a positive coefficient for the X2X8 term (see Fig. 4b). Similarly, components 2, 3, and 4 all contain a negative X6X13 term, where X6 and X13 are the orbital moment of the Pt atom and the spin–orbit interaction energy, respectively (Fig. 4b). These correlations, uncovered by the machine learning model, appear to be beyond our current understanding of STE. Their possible physical interpretation is discussed in Supplementary Discussion. Such surprising connections may lead to a more comprehensive theory of the fundamental mechanism of STE phenomena.

Actual material development guided by the interpretable machine learning modeling

A theoretical discussion about the possible physical origins of the newly discovered correlations is provided in Supplementary Discussion. We now focus on demonstrating that this unanticipated result of the interpretable machine learning can indeed help us to develop novel STE materials.

Developing STE materials has been difficult because the fundamental mechanism of STE phenomena is not yet well understood. Materials development in such a new scientific field can be significantly accelerated by interpretable machine learning modeling. One insight from the model, the positive correlation between SSTE and X2X8 for magnetic materials (data with large X14), suggests that we should search for magnetic materials with large X2X8 values to obtain a large SSTE. Such a search is feasible because X2X8 can be calculated with conventional computational methods.
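The screening criterion described above amounts to a filter-then-rank step. In this sketch the descriptor values are hypothetical placeholders (in practice X2, X8, and X14 would come from KKR-CPA calculations), `screen` is an illustrative helper, and the magnetic cut-off reuses the X14 > 0.6325 split reported in the Discussion.

```python
from typing import Dict, List, Tuple

MAGNETIC_X14_THRESHOLD = 0.6325  # gate-1 split on X14 reported in the Discussion

def screen(candidates: Dict[str, Dict[str, float]],
           top_k: int = 3) -> List[Tuple[str, float]]:
    """Rank magnetic candidates by the computed product X2 * X8 (largest first)."""
    scored = [
        (name, d["X2"] * d["X8"])
        for name, d in candidates.items()
        if d["X14"] > MAGNETIC_X14_THRESHOLD  # keep only magnetic materials
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]

# Hypothetical descriptor values, for illustration only
candidates = {
    "candidate_A": {"X2": 0.50, "X8": 0.20, "X14": 1.5},
    "candidate_B": {"X2": 0.50, "X8": 0.40, "X14": 1.2},
    "candidate_C": {"X2": 0.90, "X8": 0.90, "X14": 0.1},  # non-magnetic: filtered out
}
print(screen(candidates))  # [('candidate_B', 0.2), ('candidate_A', 0.1)]
```

Filtering on X14 first mirrors gate 1 of the model: the X2X8 trend was found only within the magnetic branch, so ranking non-magnetic candidates by it would not be supported by the model.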

By computationally screening materials for large X2X8, we found that \(\mathrm{Co}_{50}\mathrm{Pt}_{50}\mathrm{N}_{10}\) has a large X2X8 (details are given in Supplementary Discussion). Therefore, as an initial example, we synthesized \(\mathrm{Co}_{50}\mathrm{Pt}_{50}\mathrm{N}_x\) and measured its thermopower SSTE. Figure 5 shows the SSTE values of \(\mathrm{Co}_{50}\mathrm{Pt}_{50}\mathrm{N}_x\) samples with different N concentrations. SSTE clearly increases with increasing X2X8, which in turn is driven by increasing N concentration. The SSTE of \(\mathrm{Co}_{48.9}\mathrm{Pt}_{51.1}\mathrm{N}_{7.2}\) reaches 13.04 μV/K, which is larger than that of current-generation STE materials (according to Guin et al., the SSTE of ferromagnetic materials is typically <10 μV/K25). Details of the material synthesis are given in Supplementary Discussion. Because the discovered STE materials have high thermopower as well as high thermal conductivity, they are promising candidates for heat-flow sensor components, which can detect a heat flow with little parasitic thermal resistance.

Fig. 5 Spin-driven thermopower SSTE of CoPtN thin films. SSTE increases with increasing X2X8 in CoPtN, which was selected by material screening guided by knowledge obtained from the FAB/HMEs model, namely, the positive correlation between SSTE and X2X8. The SSTE of the \(\mathrm{Co}_{48.9}\mathrm{Pt}_{51.1}\mathrm{N}_{7.2}\) thin film reaches 13.04 μV/K, which is larger than that of all other known STE materials.

Discussion

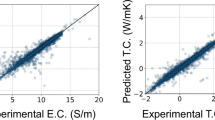

Figure 6a shows the fourfold cross-validation root mean square error (CV-RMSE) of the FAB/HMEs and other commonly used machine learning algorithms, including neural network (NN), support vector machine (SVM), random forest (RF), least absolute shrinkage and selection operator (LASSO), and multiple linear regression (MLR). Non-linear models using the FAB/HMEs, NN, SVM, and RF have better prediction accuracy than linear models using LASSO and MLR. The prediction performance of the FAB/HMEs is comparable to that of other non-linear models.
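As a reference for how a fourfold CV-RMSE is obtained, the sketch below splits the data into four folds, fits on three, predicts the held-out fold, and computes the RMSE over the pooled out-of-fold predictions. It uses NumPy rather than R's caret, with MLR as the example model, and the helper names are hypothetical. Pooling is an assumption: averaging the per-fold RMSEs is another common convention that gives slightly different numbers.

```python
import numpy as np

def kfold_cv_rmse(X, y, fit, predict, k=4, seed=0):
    """k-fold CV-RMSE computed from pooled out-of-fold predictions."""
    n = len(y)
    idx = np.random.default_rng(seed).permutation(n)  # shuffle once
    folds = np.array_split(idx, k)
    oof = np.empty(n)  # out-of-fold predictions
    for test_idx in folds:
        train_idx = np.setdiff1d(idx, test_idx)
        model = fit(X[train_idx], y[train_idx])
        oof[test_idx] = predict(model, X[test_idx])
    return float(np.sqrt(np.mean((oof - y) ** 2)))

def fit_mlr(X, y):
    """Multiple linear regression (ordinary least squares with intercept)."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict_mlr(coef, X):
    return coef[0] + X @ coef[1:]

rng = np.random.default_rng(1)
x = np.linspace(0.0, 1.0, 20).reshape(-1, 1)
y_exact = 1.0 + 2.0 * x[:, 0]
y_noisy = y_exact + rng.normal(scale=0.1, size=20)
print(kfold_cv_rmse(x, y_exact, fit_mlr, predict_mlr))  # essentially zero
print(kfold_cv_rmse(x, y_noisy, fit_mlr, predict_mlr))
```

Any model exposing the same `fit`/`predict` pair can be dropped in, which is how the methods in Fig. 6a can be compared on identical folds.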

Fig. 6 Comparison of machine learning models. a Comparison of the fourfold cross-validation root mean square error (CV-RMSE) of several major machine learning methods. FAB/HMEs offers not only high interpretability but also prediction accuracy comparable to that of black-box methods such as NN and SVM. b Top 10 descriptor importances in the RF model. c Top 10 regression coefficients of the LASSO model. d Top 10 regression coefficients of the MLR model. The positive X2X8 term was not found by the RF, LASSO, and MLR models.

Although the NN and SVM models are highly predictive, they are difficult to interpret. In contrast, the highly interpretable FAB/HMEs, LASSO, and MLR models, as well as the somewhat interpretable RF, allow us to extract new information from the data. Because it exhibits the smallest CV-RMSE among these interpretable models, FAB/HMEs was employed in our materials development process. Moreover, with the other interpretable models (RF, LASSO, and MLR), it is difficult to notice the positive correlation between SSTE and X2X8 for magnetic materials (X14 > 0.6325). Figure 6b shows the top 10 descriptor importances in the RF model, which do not include the X2X8 term. This term is also missing from the top 10 regression coefficients of LASSO and MLR shown in Fig. 6c, d, respectively. The difference between FAB/HMEs and the other interpretable models can be attributed to its hierarchical nature. In our case, with respect to STE phenomena, magnetic materials data (X14 > 0.6325) are completely different from non-magnetic materials data (X14 < 0.6325). Therefore, separate STE models should be constructed for non-magnetic and magnetic materials. FAB/HMEs automatically divides the data into magnetic and non-magnetic materials data at gate 1 in Fig. 4a and constructs different models for them. The other models, in contrast, try to express STE phenomena with a single model fitted to both magnetic and non-magnetic materials data, so the detailed descriptor dependencies of each group are averaged out and buried in Fig. 6b–d. Such data hierarchies often appear in materials data (for example, because of phase transitions or differences in experimental or calculation conditions). Therefore, FAB/HMEs, which can discover and represent this hierarchical structure, can help scientists both to model and to understand physical phenomena better, and to accelerate materials discovery.
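The burying effect can be reproduced on a toy example. In the synthetic data below (not the paper's data), a stand-in `z` for X2X8 raises SSTE only in the magnetic group, while non-magnetic samples have SSTE = 0 and happen to have larger `z`. A single least-squares line fitted to all data gets a negative slope, whereas a line fitted only to the magnetic group recovers the positive trend. This is a Simpson's-paradox-style illustration of the argument using `numpy.polyfit`, not a re-analysis of Fig. 6.

```python
import numpy as np

THRESHOLD = 0.6325  # gate-1 split on X14 reported above

# Synthetic data: z stands in for X2X8, x14 for X14 (values are made up)
z = np.array([0.0, 0.25, 0.5, 0.75, 1.0, 2.0, 2.25, 2.5, 2.75, 3.0])
x14 = np.array([1.0] * 5 + [0.1] * 5)        # first five "magnetic", last five not
y = np.where(x14 > THRESHOLD, 1.0 + z, 0.0)  # S_STE grows with z only when magnetic

# One line for all data, as a single global model would do
global_slope = np.polyfit(z, y, 1)[0]

# A separate line for the magnetic group, as a hierarchical model would do
magnetic = x14 > THRESHOLD
piecewise_slope = np.polyfit(z[magnetic], y[magnetic], 1)[0]

print(round(global_slope, 3), round(piecewise_slope, 3))  # -0.611 1.0
```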

In summary, we have demonstrated the utility of interpretable machine learning modeling (FAB/HMEs) in the materials development process. Because such models combine high predictive power with interpretability, materials scientists can obtain from them non-trivial knowledge useful for developing new materials. Guided by the surprising correlation discovered by the interpretable model, we have succeeded in developing a spin-driven thermoelectric material whose thermopower SSTE is larger than that of current-generation STE materials. In addition, the new insight obtained from the data-driven model may lead to a more comprehensive understanding of the mechanism of emerging STE phenomena. Thus, interpretable machine learning can help not only in developing new materials but also in guiding theoretical studies.

Methods

FAB/HMEs

The factorized asymptotic Bayesian inference hierarchical mixture of experts (FAB/HMEs) constructs a piecewise sparse linear model that assigns sparse linear experts to individual partitions of the feature space and expresses the whole model as a patchwork of local experts12,13. By maximizing the factorized information criterion (FIC), which includes two L0-regularizations, one for partition-structure determination and one for feature selection in individual experts, FAB/HMEs performs partition-structure determination and feature selection at the same time. To maximize the FIC, factorized asymptotic Bayesian inference (FAB), which combines an expectation-maximization (EM) algorithm with automatic shrinkage of non-effective experts, is used. In this paper, we set the termination threshold δ = \(10^{-5}\), the shrinkage threshold ε = 0.062, and the number of initial experts to 32 (i.e., a symmetric tree of depth 5). The fourfold cross-validation root mean square error (CV-RMSE) was 0.188253, as shown in Fig. 6.
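Two of these settings can be illustrated directly: a symmetric binary tree of depth 5 has \(2^5 = 32\) leaves, hence 32 initial experts, and shrinkage removes experts that receive too little of the data. The sketch assumes, as in FAB for mixture models, that an expert is dropped when its average posterior responsibility falls below ε, and that iteration stops once the FIC gains less than δ. The exact FIC-based rules in refs. 12,13 are more involved; `shrink_experts` and `converged` are hypothetical helpers, and the responsibilities are made-up numbers.

```python
import numpy as np

DEPTH = 5
N_INITIAL_EXPERTS = 2 ** DEPTH  # leaves of a symmetric binary tree of depth 5 -> 32
EPSILON = 0.062                 # shrinkage threshold
DELTA = 1e-5                    # termination threshold on the FIC improvement

def shrink_experts(responsibilities: np.ndarray, eps: float = EPSILON) -> np.ndarray:
    """Indices of experts kept after one (simplified, assumed) shrinkage step.

    responsibilities: (n_samples, n_experts) posterior probabilities that each
    sample belongs to each expert (rows sum to 1), as produced by an E-step.
    An expert is dropped when its average responsibility falls below eps.
    """
    share = responsibilities.mean(axis=0)
    return np.flatnonzero(share >= eps)

def converged(fic_prev: float, fic_curr: float, delta: float = DELTA) -> bool:
    """Assumed stopping rule: the FIC improved by less than delta."""
    return fic_curr - fic_prev < delta

# Hypothetical E-step output for 4 samples and 4 experts
r = np.array([[0.70, 0.25, 0.05, 0.00],
              [0.60, 0.30, 0.05, 0.05],
              [0.20, 0.75, 0.05, 0.00],
              [0.10, 0.80, 0.05, 0.05]])
print(N_INITIAL_EXPERTS, shrink_experts(r))  # 32 [0 1]
```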

NN

The neural network (NN) models the data by means of a statistical learning algorithm mimicking the brain14. Here, we used a simple three-layer perceptron. Cross-validation with the “caret (nnet)” package in the R programming language selected the number of hidden units NH = 8 and the decay value D = 3.91 × \(10^{-3}\). The CV-RMSE was 0.169454, as shown in Fig. 6.
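A three-layer perceptron with weight decay can be sketched as a logistic hidden layer, a linear output, and a loss of squared error plus decay times the sum of squared weights. This reflects our reading of how nnet-style decay works and is not a reimplementation of the R package. The 122 inputs assume all pairwise interactions plus one indicator per substrate (14 + 91 + 14 + 3), and the weights and data are random, untrained placeholders.

```python
import numpy as np

def mlp_forward(X, W1, b1, W2, b2):
    """Input -> logistic hidden layer -> linear output (one regression target)."""
    hidden = 1.0 / (1.0 + np.exp(-(X @ W1 + b1)))  # (n_samples, n_hidden)
    return hidden @ W2 + b2                        # (n_samples,)

def penalized_loss(X, y, params, decay):
    """Sum of squared errors plus decay * (sum of squared weights and biases)."""
    residual = mlp_forward(X, *params) - y
    weight_penalty = sum(np.sum(p ** 2) for p in params)
    return float(np.sum(residual ** 2) + decay * weight_penalty)

rng = np.random.default_rng(0)
n_features, n_hidden = 122, 8  # N_H = 8 from the text; 122 inputs is an assumption
params = (
    rng.normal(scale=0.1, size=(n_features, n_hidden)),  # W1
    np.zeros(n_hidden),                                  # b1
    rng.normal(scale=0.1, size=n_hidden),                # W2
    0.0,                                                 # b2
)
X = rng.random((10, n_features))  # placeholder inputs
y = rng.random(10)                # placeholder targets
print(penalized_loss(X, y, params, decay=3.91e-3))
```

Training would minimize `penalized_loss` over `params`; the decay term pulls weights toward zero, which is why cross-validating it trades fit against smoothness.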

SVR

The support vector regression (SVR) constructs a data-driven model with a kernel method14. Here, we used the radial basis function (RBF) kernel. We set the cost value C = 16 and the RBF parameter σ = 3.125 × \(10^{-2}\), both selected by cross-validation with the “caret (svmRadial)” package in the R programming language. The CV-RMSE was 0.132847, as shown in Fig. 6.
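Because σ is easy to misread as a Gaussian width, it helps to write out the kernel. kernlab, on which caret's svmRadial method builds, parameterizes the RBF kernel as \(k(x, x') = \exp(-\sigma \lVert x - x' \rVert^2)\), so σ acts as an inverse squared length scale. The sketch below computes that Gram matrix in NumPy (`rbf_kernel` is a hypothetical helper); with σ = 3.125 × \(10^{-2}\), a squared distance of 32 gives \(k = e^{-1}\).

```python
import numpy as np

def rbf_kernel(A: np.ndarray, B: np.ndarray, sigma: float) -> np.ndarray:
    """Gram matrix K[i, j] = exp(-sigma * ||A[i] - B[j]||^2) (kernlab-style sigma)."""
    sq_dist = (
        np.sum(A ** 2, axis=1)[:, None]
        + np.sum(B ** 2, axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    return np.exp(-sigma * np.maximum(sq_dist, 0.0))  # clip tiny negative round-off

sigma = 3.125e-2
a = np.zeros((1, 2))
b = np.array([[4.0, 4.0]])  # squared distance 32, so sigma * 32 = 1
print(rbf_kernel(a, b, sigma))  # [[0.36787944]]
```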

RF

The random forest (RF) is an ensemble learning method using a multitude of decision trees14. The number of trees (ntree) and the number of features randomly sampled as split candidates at each node (mtry) were set to 1000 and 120, respectively. We ran the RF using the “caret (rf)” package in the R programming language. The CV-RMSE was 0.246274, as shown in Fig. 6.
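With mtry close to the total number of features, each split considers nearly all of them, so the forest behaves much like bagged trees. The sketch below shows the two random draws involved in growing one tree: a bootstrap sample of rows and, at a single split, a sample of mtry candidate features. The total of 122 features is an assumption (all pairwise interactions plus one indicator per substrate), and the 50 samples are a hypothetical placeholder.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples = 50     # hypothetical data-set size
n_features = 122   # assumed feature count (14 + 91 + 14 + 3)
mtry = 120         # from the text

# Rows used to grow one tree: n_samples draws with replacement (bootstrap)
bootstrap_rows = rng.integers(0, n_samples, size=n_samples)

# Features tried at one split: mtry distinct features out of n_features
split_candidates = rng.choice(n_features, size=mtry, replace=False)

# Probability that any given feature is a candidate at a split: mtry / n_features
print(len(np.unique(split_candidates)), mtry / n_features)
```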

Lasso

The least absolute shrinkage and selection operator (LASSO) creates a linear regression model with feature selection by using an L1-regularization term14. The complexity parameter λ was set to 3.052 × \(10^{-4}\), which was selected by cross-validation with the “caret (glmnet)” package in the R programming language. The CV-RMSE was 0.583379, as shown in Fig. 6.
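For a Gaussian response with α = 1, the LASSO objective minimized by glmnet is \(\frac{1}{2n}\lVert y - \beta_0 - X\beta\rVert_2^2 + \lambda\lVert\beta\rVert_1\). The sketch below solves it by cyclic coordinate descent with soft-thresholding, assuming centered data and no intercept. glmnet also standardizes predictors by default, so the λ reported above is not directly transferable to unstandardized inputs; `lasso_cd` is a hypothetical helper, not glmnet's implementation.

```python
import numpy as np

def soft_threshold(z: float, t: float) -> float:
    """S(z, t) = sign(z) * max(|z| - t, 0): the proximal step for the L1 penalty."""
    return np.sign(z) * max(abs(z) - t, 0.0)

def lasso_cd(X: np.ndarray, y: np.ndarray, lam: float, n_iter: int = 500) -> np.ndarray:
    """Minimize (1/(2n)) * ||y - X b||^2 + lam * ||b||_1 by cyclic coordinate descent.

    Assumes X columns and y are centered (no intercept) and no column is all zeros.
    """
    n, p = X.shape
    b = np.zeros(p)
    col_sq = np.sum(X ** 2, axis=0) / n
    residual = y.astype(float)  # residual y - X b for b = 0 (a fresh copy)
    for _ in range(n_iter):
        for j in range(p):
            residual += X[:, j] * b[j]       # remove feature j's current contribution
            rho = X[:, j] @ residual / n     # correlation of feature j with partial residual
            b[j] = soft_threshold(rho, lam) / col_sq[j]
            residual -= X[:, j] * b[j]       # add the updated contribution back
    return b

# Centered, orthogonal toy design: y depends only on the first column
X = np.array([[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]])
y = 3.0 * X[:, 0]
print(lasso_cd(X, y, lam=0.5))   # first coefficient shrunk from 3 to 2.5, second stays 0
print(lasso_cd(X, y, lam=10.0))  # a large penalty zeroes every coefficient
```

The soft-threshold step is what produces exact zeros, which is how LASSO performs feature selection rather than only shrinking coefficients.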

Data preparation of thermopower SSTE

The spin-driven thermoelectric performance (thermopower SSTE) data were obtained by experiments including material fabrication processes and material characterization processes. The details are shown in Supplementary Methods.

Data preparation of descriptors X

The descriptor data X were calculated by a conventional material simulation technique, the Korringa-Kohn-Rostoker coherent-potential approximation (KKR-CPA) method26. The details are shown in Supplementary Methods.

Data availability

The data and the code that support the results within this paper and other findings of this study are available from the corresponding author upon reasonable request.

References

Koinuma, H. & Takeuchi, I. Combinatorial solid-state chemistry of inorganic materials. Nat. Mater. 3, 429 (2004).

Takeuchi, I. et al. Identification of novel compositions of ferromagnetic shape-memory alloys using composition spreads. Nat. Mater. 2, 180–184 (2003).

Curtarolo, S. et al. The high-throughput highway to computational materials design. Nat. Mater. 12, 191–201 (2013).

Nishijima, M. et al. Accelerated discovery of cathode materials with prolonged cycle life for lithium-ion battery. Nat. Commun. 5, 4553 (2014).

Butler, K. T., Davies, D. W., Cartwright, H., Isayev, O. & Walsh, A. Machine learning for molecular and materials science. Nature 559, 547–555 (2018).

Xia, R. & Kais, S. Quantum machine learning for electronic structure calculations. Nat. Commun. 9, 4195 (2018).

Kusne, A. G. et al. On-the-fly machine-learning for high-throughput experiments: search for rare-earth-free permanent magnets. Sci. Rep. 4, 6367 (2014).

Iwasaki, Y., Kusne, A. G. & Takeuchi, I. Comparison of dissimilarity measures for cluster analysis of X-ray diffraction data from combinatorial libraries. npj Comput. Mater. 3, 4 (2017).

Dimiduk, D. M., Holm, E. A. & Niezgoda, S. R. Perspectives on the impact of machine learning, deep learning and artificial intelligence on materials, processes and structures engineering. Integr. Mater. Manuf. Innov. 7, 157–172 (2018).

Guidotti, R. et al. A survey of methods for explaining black box models. ACM Comput. Surv. 51, 5 (2018).

Chen, J., Song, L., Wainwright, M. J. & Jordan, M. I. Learning to explain: an information-theoretic perspective on model interpretation. Proceedings of International Conference on Machine Learning (ICML). PMLR 80, 883–892 (2018).

Asahara, M. & Fujimaki, R. An empirical study on distributed Bayesian approximation inference of piecewise sparse linear models. IEEE Trans. Parallel Distrib. Syst. https://doi.org/10.1109/TPDS.2019.2892972 (2019).

Eto, R., Fujimaki, R., Morinaga, S. & Tamano, H. Fully-automatic Bayesian piecewise sparse linear models. Proceedings of Artificial Intelligence and Statistics (AISTATS). PMLR 33, 238–246 (2014).

Bishop, C. M. Pattern Recognition and Machine Learning (Springer, 2006).

Goldsmid, H. J. Introduction to Thermoelectricity (Springer, 2010).

Bell, L. E. Cooling, heating, generating power, and recovering waste heat with thermoelectric systems. Science 321, 1457–1461 (2008).

Kirihara, A. et al. Spin-current-driven thermoelectric coating. Nat. Mater. 11, 686–689 (2012).

Uchida, K. et al. Observation of the spin-Seebeck effect. Nature 455, 778–781 (2008).

Sakuraba, Y. Potential of thermoelectric power generation using anomalous Nernst effect in magnetic materials. Scr. Mater. 111, 29 (2016).

Ikhlas, M. et al. Large anomalous Nernst effect at room temperature in a chiral antiferromagnet. Nat. Phys. 13, 1085–1090 (2017).

Iwasaki, Y. et al. Machine-learning guided discovery of a new thermoelectric material. Sci. Rep. 9, 2751 (2019).

Kirihara, A. et al. Flexible heat-flow sensing sheets based on the longitudinal spin Seebeck effect using one-dimensional spin-current conducting films. Sci. Rep. 6, 23114 (2016).

Bauer, G. E. W., Saitoh, E. & van Wees, B. J. Spin caloritronics. Nat. Mater. 11, 391 (2012).

Uchida, K. et al. Thermoelectric generation based on spin Seebeck effects. Proc. IEEE 104, 1946 (2016).

Guin, S. N. et al. Anomalous Nernst effect beyond the magnetization scaling relation in the ferromagnetic Heusler compound Co2MnGa. NPG Asia Mater. 11, 16 (2019).

Akai, H. Electronic structure Ni-Pd alloys calculated by the self-consistent KKR-CPA method. J. Phys. Soc. Jpn. 51, 468–474 (1982).

Khan, N. S., Staunton, J. B. & Stocks, G. M. Statistical physics of multicomponent alloys using KKR-CPA. Phys. Rev. B 93, 054206 (2016).

Yang, L. et al. Investigation of the site preference in Mn2RuSn using KKR-CPA-LDA calculation. J. Magn. Magn. Mater. 382, 247–251 (2015).

Jin, K. et al. Tailoring the physical properties of Ni-based single-phase equiatomic alloys by modifying the chemical complexity. Sci. Rep. 6, 20159 (2016).

Acknowledgements

This work was supported by JST-PRESTO “Advanced Materials Informatics through Comprehensive Integration among Theoretical, Experimental, Computational and Data-Centric Sciences” (Grant No. JPMJPR17N4) and JST-ERATO “Spin Quantum Rectification Project” (Grant No. JPMJER1402). I.T. is supported in part by C-SPIN, one of six centers of STARnet, a Semiconductor Research Corporation program sponsored by MARCO and DARPA.

Author information

Contributions

Y.I., M.I., A.K., H.S. and Y.O. designed the experiment, fabricated the samples, and collected all of the data. Y.I., R.S. and E.S. contributed to the theoretical discussion. Y.I., R.S., V.S. and I.T. discussed the results of machine learning modeling. Y.I., V.S., I.T. and M.I. wrote the paper. S.Y. supervised this study. All the authors discussed the results and commented on the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Iwasaki, Y., Sawada, R., Stanev, V. et al. Identification of advanced spin-driven thermoelectric materials via interpretable machine learning. npj Comput Mater 5, 103 (2019). https://doi.org/10.1038/s41524-019-0241-9

DOI: https://doi.org/10.1038/s41524-019-0241-9
