Abstract

Neural computations are often fast and anatomically localized. Yet, investigating such computations in humans is challenging because non-invasive methods have either high temporal or high spatial resolution, but not both. Of particular relevance, fast neural replay is known to occur throughout the brain in a coordinated fashion, but little is known about this phenomenon. We develop a multivariate analysis method for functional magnetic resonance imaging that makes it possible to study sequentially activated neural patterns separated by less than 100 ms with precise spatial resolution. Human participants viewed five images individually and sequentially, with as little as 32 ms between items. Probabilistic pattern classifiers were trained on activation patterns in visual and ventrotemporal cortex during individual image trials. Applied to sequence trials, probabilistic classifier time courses allow the detection of neural representations and their order. Order detection remains possible at speeds up to 32 ms between items (plus 100 ms per item). The frequency spectrum of the sequentiality metric distinguishes between sub- versus supra-second sequences. Importantly, applied to resting-state data our method reveals fast replay of task-related stimuli in visual cortex. This indicates that non-hippocampal replay occurs even after tasks without memory requirements and shows that our method can be used to detect such spontaneously occurring replay.

Introduction

Many cognitive processes are underpinned by rapidly changing neural activation patterns. Most famously, memory and planning have been linked to fast replay of representation sequences in the hippocampus, happening approximately within 200–300 milliseconds (ms) while the animal is resting or sleeping, e.g.1,2,3,4,5,6,7,8,9. Similar events have been observed during behavior10,11, as well as outside of the hippocampus12,13,14,15,16,17. Likewise, internal deliberations during choice are reflected in alternations between orbitofrontal value representations that last less than 100 ms18, while perceptual learning has been shown to result in sub-second anticipatory activation sequences in visual cortex19,20,21. Investigating fast-paced representational dynamics within specific brain areas therefore promises important insights into a variety of cognitive processes. Such investigations could be crucial for understanding replay, which is characterized by a widespread co-occurrence of neural reactivation events throughout the brain of mostly unknown functional significance, in particular outside of the hippocampus, see, e.g.17,22. These aspects are still understudied in humans.

Studying fast neural dynamics is particularly difficult in humans because signal recording must mainly occur non-invasively. How fast and anatomically localized neural dynamics can be investigated using non-invasive neuroimaging techniques is therefore a major challenge for human neuroscience, see, e.g.23,24. The main concern related to functional magnetic resonance imaging (fMRI) is that this technique measures neural activity indirectly through slow sampling of an extended and delayed blood-oxygen-level-dependent (BOLD) response function25,26,27 that can obscure temporal detail. Yet, the problems arising in BOLD fMRI might not be as insurmountable as they seem. First, BOLD signals from the same participant and brain region show reliable timing and last for several seconds. Miezin et al.28, for instance, reported a between-session reliability of hemodynamic peak times in visual cortex of r² = 0.95, see also29,30. Even for closely timed events, the sequential order can therefore result in systematic differences in activation strength31 that remain in the signal long after the fast sequence event is over, effectively mitigating the problems that arise from slow sampling. Moreover, Misaki et al.32 were able to decode onset differences in visual stimulation of only 100 ms when two stimuli were shown to one eye before the other. Interestingly, Misaki et al.32 indicated that timing differences become most apparent in peak activation strength, rather than temporal aspects of the hemodynamic response function (HRF). A second reason that makes the investigation of fast neural dynamics feasible is that some fast sequence events have properties that make it easier to detect them. Replay events, in particular, involve reactivation of spatially tuned cells in the order of a previously traveled path. But these reactivated paths do not typically span the entire spatial environment and only involve a local subset of all possible places the animal could occupy7,8.
This locality means that even when measurement noise causes some elements of a fast sequence to remain undetected, or leads to partially re-ordered detection, the set of detected representations will still reflect positions nearby in space. In this case, successive detection of elements nearby in space or time would still identify the fast process under investigation even under noisy conditions.

If fMRI analyses can capitalize on such effects, this could allow the investigation of fast sequential activations. As mentioned above, one important application of such methods would be hippocampal replay, a topic of intense recent interest, for reviews, see, e.g.24,33,34,35,36,37. To date, most replay research has studied the phenomenon in rodents because investigations in humans and other primates either required invasive recordings from the hippocampus38,39,40,41,42, used techniques with reduced hippocampal sensitivity and spatial resolution43,44,45,46,47,48, or investigated non-sequential fMRI activation patterns over seconds or minutes49,50,51,52,53. Recently, we have hypothesized that the properties of BOLD signals mentioned above should enable the investigation of rapid neural dynamics. Indeed, using fMRI, we identified fast sequential hippocampal pattern reactivation in resting humans54. However, Schuck and Niv54 did not yet answer questions about how fMRI could be used to measure the speed of replay. One additional exploratory question is whether replay occurs outside of the hippocampus, and even following simple visual detection tasks.

Here, we provide and experimentally validate a multivariate analysis approach for fMRI that addresses the challenges and questions outlined above. The main idea of our approach is that fast neural event sequences will cause characteristic time courses of overlapping activation patterns. While the effects of co-occurring activations on individual voxels are complex, we reason that characteristic overlap will nevertheless lead to predictable and simple fluctuations in the time courses of pattern classifiers. The present experiment tests this idea and our results confirm that logistic regression classifier time courses reveal the content and order of fast sequential neural events using fMRI. Importantly, we use this method to ask whether sequential reactivations of sensory events occur outside of the hippocampus, even if task experiences did not require memorization or involve repeated sequential structure. Our study extends our previous work in several ways. First, our controlled experimental design provides evidence for the decodability of fast sequential neural events in a setting where the speed and order of fast neural event sequences are known. We also show that sequence detection can be achieved in the presence of high levels of signal noise and timing uncertainty, and is specific enough to differentiate fast sequences from activation patterns that could reflect slow conscious thinking. Second, we develop a modeling approach of multivariate fMRI pattern classification time courses that validates our experimental results and allows inference of the speed of fast sequential neural processes from the frequency spectra of our fMRI sequentiality metric. Third, we report that our task induced fast sequential replay in sensory brain areas during post-task rest, although it did not require any memorization, did not feature strong sequential structure, and did not elicit systematic hippocampal responses.
Finally, our results have implications for the interpretation of our own previous results in Schuck and Niv54 and future fMRI studies investigating fast neural event sequences, like hippocampal replay.

Results

As discussed above, we investigated the possibility that fMRI can be used to address two cornerstones of understanding signals resulting from fast activation sequences: order detection and element detection. The first effect, order detection, pertains to the presence of order structure in the signal that is caused by the sequential order of fast neural events. We evaluated this effect by investigating the impact of item order on (a) the relative strength of activations within a single measurement, and (b) the order of decoded patterns across successive measurements. The second effect, element detection, quantifies to what extent fMRI allows detection of elements that were part of a sequence versus those that were not. While event detection is a standard problem in fMRI, we focused on the special case relevant to our question: detecting neural patterns of brief events that are affected by patterns from other sequence elements occurring only tens of milliseconds before or afterwards, causing backward and forward interference, respectively. Because the trials that tested sequence ordering always contained full sequences of all possible elements, our design ensured that the two effects could be demonstrated independently, i.e., the order effect could not have been a side effect of element detection.

Participants viewed images of five different objects. During slow trials (Fig. 1a, 600 trials in total), individual images were shown with inter-trial intervals (ITIs) of approximately 2.5 s, as is common in fMRI decision-making experiments (cf.44,47,52,54). In fast trials (120 trials in total), the same images were shown as either a random sequence of all five objects (sequence trials, 75 trials, Fig. 1b), or two objects were repeated several times (repetition trials, 45 trials, Fig. 1c). Importantly, image presentation rate was greatly increased in sequence and repetition trials, with as little as 32 ms between stimuli and a presentation time of 100 ms per stimulus. Logistic regression classifiers were trained on data from slow trials and applied to sequence and repetition trials, as well as to resting-state data. We then asked whether the order and the elements of fast sequences are detectable from fMRI signals, depending on sequence speed, number of repetitions, level of background noise, and timing uncertainty. To this end, visual stimuli in sequence and repetition trials were presented in a precisely timed and ordered manner, as detailed below. Since activation patterns were primarily visual in nature, only data from visual and ventral temporal cortex were considered. A corresponding analysis using hippocampal data did not yield comparable results, see below. The analyses included N = 36 human participants who underwent two fMRI sessions with four task runs each, i.e., eight runs in total. Four additional participants were excluded from analyses due to insufficient performance, see Methods and Supplementary Information (SI) (Supplementary Fig. 1a). Sessions were separated by 9 days on average (SD = 6 days, range: 1–24 days).

a On slow trials, individual images were presented and inter-trial intervals (ITIs) were 2.5 s on average. Participants were instructed to detect upside-down visual stimuli (20% of trials) but not respond to upright pictures. Classifier training was performed on fMRI data from correct upright trials only. b Sequence trials contained five unique visual images, separated by five levels of inter-stimulus intervals (ISIs) between 32 and 2048 ms. c Repetition trials were always fast (32 ms ISI) and contained two visual images of which either the first or the second was repeated eight times (causing backward and forward interference, respectively). In both task conditions, participants were asked to detect the serial position of a cued target stimulus in a sequence and select the correct answer after a delay period without visual input. One sequence or repetition trial came after five slow trials. fMRI analyses focused on the time from sequence onset to the end of the delay period (16 s ≈ 13 TRs, 1 TR = 1.25 s). d Illustration of the three fastest sequence speed conditions of 32, 64, and 128 ms ISI between images. e Mean behavioral accuracy in sequence trials (in %) as a function of sequence speed (ISI, in ms; N = 36, ts ≥ 23.78, ps < 0.001, ds ≥ 3.96, linear mixed effects (LME) model and five one-sided one-sample t-tests against chance (50%), false discovery rate (FDR) correction). f Mean behavioral accuracy in repetition trials (in %), as a function of which sequence item was repeated (fwd = forward, bwd = backward condition; N = 36, ts ≥ 2.94, ps ≤ 0.003, ds ≥ 0.49, two one-sided one-sample t-tests against chance (50%) with FDR-correction). All error bars represent ±1 standard error of the mean (SEM). All statistics have been derived from data of N = 36 human participants who participated in one experiment. The horizontal dashed lines in (e) and (f) indicate 50% chance level. 
The original authors of Haxby et al.55 hold the copyright of the stimulus material (individual images of a cat, chair, face, house, and shoe) shown in (a), (b), and (c) and made it available under the terms of the Creative Commons Attribution-Share Alike 3.0 license (see http://data.pymvpa.org/datasets/haxby2001/ and http://creativecommons.org/licenses/by-sa/3.0/ for details). Source data are provided as a Source Data file.

Training fMRI pattern classifiers on slow events

In slow trials, participants repeatedly viewed the same five images individually for 500 ms (images showed a cat, chair, face, house, and shoe, taken from55). Temporal delays between images were set to 2.5 s on average, as typical for task-based fMRI experiments56. To ensure that image ordering did not yield biased classifiers through biased pattern similarities (cf.57), each possible order permutation of the five images was presented exactly once (120 sets of 5 images each). Participants were kept attentive by a cover task that required them to press a button whenever a picture was shown upside-down (20% of trials; mean accuracy = 99.44%; t(35) = 263.27, 95% CI [99.13, + ∞]; p < 0.001, compared to chance (50%); d = 43.88; Supplementary Fig. 1a–c). Using data from correct upright slow trials, we trained five separate multinomial logistic regression classifiers, one for each image category (one-vs.-rest; see Methods for details; cf.55). fMRI data were masked by a gray-matter-restricted region of interest (ROI) of occipito-temporal cortex, known to be related to visual object processing (11,162 voxels in the masks on average; cf.55,58,59,60). Spatial patterns associated with image categories indicated a mix of overlapping and non-overlapping sets of voxels, and average correlations between the mean voxel patterns were negative (see SI). We accounted for hemodynamic lag by extracting fMRI data acquired 3.75–5 s after stimulus onset (corresponding to the fourth repetition time (TR), see Methods). Cross-validated (leave-one-run-out) classification accuracy was on average 69.22% (SD = 11.18%; t(35) = 26.41, 95% CI [66.07, + ∞], p < 0.001, compared to chance (20%); d = 4.40; Fig. 2a). In order to examine the sensitivity of the classifiers to pattern activation time courses, we applied them to seven TRs following stimulus onset on each trial. 
This analysis confirmed delayed and distinct increases in the estimated probability of the true stimulus class given the data, peaking at the fourth TR after stimulus onset, as expected, given that the classifiers were trained on data from the fourth TR following stimulus onset (Fig. 2b). The peak in probability for the true stimulus shown on the corresponding trial was significantly higher than the mean probability of all other stimuli at that time point (ts ≥ 17.95, ps < 0.001, ds ≥ 2.99; Bonferroni-corrected). Decoding in an anatomical ROI of the hippocampus did not surpass the chance level (decoding accuracy: mean (M) = 20.52%, SD = 1.49%; t(35) = 2.10, 95% CI [20.02, 21.03], p = 0.05, compared to chance (20%), d = 0.35; using the same decoding approach, see SI for details).
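The timing and cross-validation logic described above can be sketched as follows. This is a minimal illustration and not the authors' pipeline: the helper names `tr_index_for_lag` and `leave_one_run_out` are hypothetical, the toy run labels are made up, and the sketch assumes TRs are aligned to stimulus onset. Only the TR of 1.25 s, the 3.75–5 s window, and the eight task runs are taken from the text.

```python
import math

TR = 1.25  # repetition time in seconds (from the text)

def tr_index_for_lag(lag_s, tr=TR):
    """Return the 1-based TR whose acquisition window contains `lag_s`
    seconds after stimulus onset (assumes TRs are aligned to onset)."""
    return math.floor(lag_s / tr) + 1

def leave_one_run_out(run_labels):
    """Yield (held_out_run, train_indices, test_indices), one fold per run."""
    for held_out in sorted(set(run_labels)):
        train = [i for i, r in enumerate(run_labels) if r != held_out]
        test = [i for i, r in enumerate(run_labels) if r == held_out]
        yield held_out, train, test

# Data acquired 3.75-5 s after onset belong to the fourth TR.
print(tr_index_for_lag(3.75))

# Hypothetical toy data: 8 runs with 3 trials each.
runs = [run for run in range(1, 9) for _ in range(3)]
folds = list(leave_one_run_out(runs))
print(len(folds))
```

Each fold trains on seven runs and tests on the held-out run, so no test data from a given run leak into the training set for that fold.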

a Cross-validated classification accuracy in decoding the five unique visual objects in occipito-temporal data during task performance (in %; N = 36, t(35) = 26.41, 95% CI [66.07, + ∞], p < 0.001, d = 4.40, one one-sided one-sample t-test, no multiple comparisons). Chance level is 20% (dashed line). Each dot corresponds to averaged data from one participant. Error bar represents ±1 SEM. b Time courses (in TRs from stimulus onset) of probabilistic classification evidence (in %) for all five stimulus classes. Substantial delayed and extended probability increases for the stimulus presented (black lines) on a given trial (gray panels) were found. Each line represents one participant (N = 36, ts ≥ 17.95, ps < 0.001, ds ≥ 2.99, 35 two-sided two-sample t-tests, Bonferroni-corrected). c Average probabilistic classifier response for the five stimulus classes (gray lines) and fitted sine-wave response model using averaged parameters (black line). d Illustration of sinusoidal response functions following two neural events (blue and red lines) time-shifted by delta seconds (dashed horizontal line). The resulting difference between event probabilities (black line) establishes a forward (blue area) and backward (red area) time period, split into early and late phases. The sine-wave approximation without flattened tails is shown in gray. e Probability differences between two time-shifted events predicted by the sinusoidal response functions depending on the event delays (delta) as they occurred in the five different sequence speed conditions (colors), based on Eq. (6). All statistics have been derived from data of N = 36 human participants who participated in one experiment. Source data are provided as a Source Data file.

Single event and event sequence modeling

The data shown in Fig. 2b highlight that multivariate decoding time courses are delayed and sustained, similar to single-voxel hemodynamics. We captured these dynamics elicited by single events by fitting a sine-based response function to the time courses on slow trials (a single sine wave flattened after one cycle, with parameters for amplitude A, response duration λ, onset delay d, and baseline b; Fig. 2c and Supplementary Fig. 4; see Methods). Based on this fit to single events, we derived expectations for probabilistic time courses during sequential events. The sequentiality analyses reported below essentially quantify how well successive activation patterns can be differentiated from one another depending on the speed of stimulus sequences. We therefore considered two time-shifted response functions and derived the magnitude and time course of differences between them. Based on the sinusoidal nature of the response function, the time course of this difference can be approximated by a single sine wave with duration λδ = λ + δ, where δ is the time between events and λ is the average fitted single event duration, here λ = 5.24 TRs (see Eqs. (4) and (5), Methods). This average parameter was used for all further analyses (Fig. 2c, d; see Methods). In this model, the amplitude is proportional to the time shift between events (until time shifts become larger than the time-to-peak of the response function). Consequently, after an onset delay (d = 0.56 TRs), the difference in probability of two time-shifted events is expected to be positive for the duration of half a cycle, i.e., 0.5λδ = 0.5(5.24 + δ) TRs, and negative for the same period thereafter. Simply put, this means that the strength of overlapping activations will initially be ordered forward, in the same way as the sequence, i.e., earlier items will be activated stronger. In a later period, however, this will reverse and result in backwards ordering, i.e., earlier items will be activated less. 
In summary, three predictions therefore arise from this model: (1) the first event will dominate the signal in earlier TRs, and activation strengths will be proportional to the true event order during the sequential process; (2) in later TRs, the last sequence element will dominate the signal, and the activation strengths will be ordered backwards; and (3) the duration and strength of these two effects will depend on the fitted response duration and the timing of the stimuli as specified above (Fig. 2e and Eqs. (1)–(5); see Methods). For sequences with more than two items (as in sequence trials, see below), δ is defined as the interval between the onsets of the first and last sequence item. To reflect the relation between the true order and the activation strength, we henceforth term the above-mentioned early and late TRs as the forward and backward periods, and consider all results below either separately for these phases, or for both relevant periods combined (calculating periods depending on the timings of image sequences and rounding TRs, see Methods).
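The forward and backward periods can be illustrated numerically. The sketch below assumes one plausible form of the response function (a single trough-to-trough sine cycle that is flat at baseline elsewhere); the exact equations are given in the Methods, so the functional form, the function names, the unit amplitude, the zero baseline, and the example δ of 0.5 TRs are all assumptions made for illustration. Only λ = 5.24 TRs and d = 0.56 TRs are taken from the text.

```python
import math

LAMBDA = 5.24  # mean fitted response duration in TRs (from the text)
ONSET = 0.56   # mean fitted onset delay d in TRs (from the text)

def response(t, amplitude=1.0, lam=LAMBDA, onset=ONSET, baseline=0.0):
    """Single sine cycle from trough to trough, flat at baseline elsewhere.
    The exact functional form is an assumption of this sketch."""
    if t < onset or t > onset + lam:
        return baseline
    phase = 2 * math.pi * (t - onset) / lam
    return baseline + 0.5 * amplitude * (1 - math.cos(phase))

def probability_difference(t, delta, **kwargs):
    """Response to an event at time 0 minus response to an event at `delta`."""
    return response(t, **kwargs) - response(t - delta, **kwargs)

def forward_period(delta, lam=LAMBDA, onset=ONSET):
    """Predicted (start, end) of the forward period in TRs: half a cycle of
    a sine wave with duration lam + delta, starting after the onset delay."""
    return onset, onset + 0.5 * (lam + delta)

delta = 0.5  # hypothetical onset difference between two events, in TRs
start, end = forward_period(delta)
print(start, end)
for t in (2.0, 5.0):
    # Positive in the forward period, negative in the backward period.
    print(t, round(probability_difference(t, delta), 3))
```

Under these assumptions, the difference changes sign at the end of the forward period, d + 0.5(λ + δ), which matches the half-cycle duration stated in the text.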

Detecting sequentiality in fMRI patterns following fast and slow neural event sequences

Our first major aim was to test detection of sequential order of fast neural events with fMRI. We therefore investigated the above-mentioned sequence trials in which participants viewed a series of five unique images at different speeds (Fig. 1b). Sequence speed was manipulated by leaving either 32, 64, 128, 512, or 2048 ms between pictures, while images were always presented briefly (100 ms per image, total sequence duration 0.628–8.692 s). Note that we refer to the inter-stimulus interval (ISI) as “sequence speed” (see Fig. 1d). Sequences always contained each image exactly once. Every participant experienced 15 randomly selected image orders that ensured that each image appeared equally often at the first and last position of the sequence (all 120 possible orders counterbalanced across participants). The task required participants to indicate the serial position of a verbally cued image 16 s after the first image was presented. This delay between visual events and response (roughly spanning 13 TRs; see x-axes in Fig. 3a, b) allowed us to measure sequence-related fMRI signals without interference from following trials, while the upcoming question did not necessitate memorization of the sequence during the delay period. Performance was high even in the fastest sequence trials (32 ms: M = 88.33%, SD = 7.70, t(35) = 29.85, 95% CI [86.16, + ∞], p < 0.001 compared to chance (50%), d = 4.98), and only slightly reduced compared to the slowest condition (2048 ms: M = 93.70%, SD = 7.96, t(35) = 32.95, 95% CI [91.46, + ∞], p < 0.001 compared to chance (50%), d = 5.49; Fig. 1e and Supplementary Fig. 1d).
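The total sequence durations and the length of the delay in TRs follow directly from the timing parameters given above. The short sketch below reproduces this arithmetic; the function name `sequence_duration_s` is hypothetical, and the duration is assumed to run from the onset of the first image to the offset of the last.

```python
ITEM_MS = 100  # presentation time per image (from the text)
N_ITEMS = 5    # images per sequence (from the text)
TR_S = 1.25    # repetition time in seconds (from the text)

def sequence_duration_s(isi_ms, n_items=N_ITEMS, item_ms=ITEM_MS):
    """Duration from first image onset to last image offset, in seconds."""
    total_ms = n_items * item_ms + (n_items - 1) * isi_ms
    return total_ms / 1000

for isi in (32, 64, 128, 512, 2048):
    print(isi, sequence_duration_s(isi))

# Length of the 16 s period from sequence onset in TRs (roughly 13).
print(16 / TR_S)
```

With five images of 100 ms each and four intervals between them, the fastest condition lasts 500 + 4 × 32 = 628 ms and the slowest 500 + 4 × 2048 = 8692 ms, matching the range reported in the text.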

a Time courses (TRs from sequence onset) of classifier probabilities (%) per event (colors) and sequence speed (panels). Forward (blue) and backward (red) periods shaded as in Fig. 2d. b Time courses of mean regression slopes between event position and probability for each speed (colors). Positive/negative values indicate forward/backward sequentiality, respectively. c Mean slope coefficients for each speed (colors) and period (forward vs. backward; N = 36, ts ≥ 2.85, ps ≤ 0.009, ds ≥ 0.47 (significant tests only), ten two-sided one-sample t-tests against zero, FDR-corrected). Asterisks indicate significant differences from baseline. d Between-participant correlation between predicted (Fig. 2e, Eq. (6)) and observed (b) time courses of mean regression slopes (13 TRs per correlation, Pearson’s rs ≥ 0.81, ps < 0.001). Each dot represents one TR. e Mean within-participant correlations between predicted and observed slopes as in (d) (N = 36, mean Pearson’s rs ≥ 0.23, ts ≥ 3.76, ps ≤ 0.001, compared to zero, ds ≥ 0.63, FDR-corrected). f Time courses of mean event position for each speed, as in (b). g Mean event position for each period and speed, as in (c) (N = 36, ts ≥ 4.78, ps < 0.001, ds ≥ 0.75 (significant tests only), ten two-sided one-sample t-tests against baseline, FDR-corrected). h Mean step sizes of early and late transitions for each period and speed (N = 36, ts ≥ 2.88, ps ≤ 0.006, ds ≥ 0.48 (significant tests only), ten two-sided one-sample t-tests against zero, FDR-corrected). Asterisks indicate differences between periods, otherwise as in (c). Each dot represents data of one participant. Error bars/shaded areas represent ±1 SEM. All statistics have been derived from data of N = 36 human participants who participated in one experiment. Effect sizes indicated by Cohen’s d. Asterisks indicate p < 0.05, FDR-corrected. 1 TR = 1.25 s. Source data are provided as a Source Data file.

We investigated whether sequence order was detectable from the relative pattern activation strength within a single measurement. Examining the time courses of probabilistic classifier evidence during sequence trials (Fig. 3a) showed that the time delay between events was indeed reflected in sustained within-TR ordering of probabilities in all speed conditions. Specifically, immediately after sequence onset, the first element (red line) had the highest probability and the last element (blue line) had the lowest probability. This pattern reversed afterwards, following the forward and backward dynamics that were predicted by the time-shifted response functions (Fig. 2d; forward and backward periods adjusted to sequence speed, see above and Methods). A TR-wise linear regression between the serial positions of the images and their probabilities confirmed this impression. In all speed conditions, the mean slope coefficients initially increased above zero (reflecting higher probabilities of earlier compared to later items) and decreased below zero afterwards (Fig. 3b and Supplementary Fig. 6a). Considering mean regression coefficients during the predicted forward and backward periods, we found significant forward ordering in the forward period at ISIs of 128, 512, and 2048 ms (ts ≥ 2.85, ps ≤ 0.009, ds ≥ 0.47) and significant backward ordering in the backward period in all speed conditions (ts ≥ 3.89, ps < 0.001, ds ≥ 0.65, FDR-corrected; Fig. 3c). Notably, the observed time course of regression slopes on sequence trials (Fig. 3b) closely matched the time course predicted by our modeling approach (Fig. 2d), as indicated by strong correlations for all speed conditions between model predictions and the averaged time courses (Fig. 3d; Pearson’s rs ≥ 0.81, ps < 0.001) as well as significant within-participant correlations (Fig. 3e; mean Pearson’s rs ≥ 0.23, ts ≥ 3.76, ps < 0.001, compared to zero, ds ≥ 0.63, FDR-corrected).
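The TR-wise regression can be sketched as an ordinary least-squares slope computed within a single TR. The sign convention used here (slope of probability on serial position, multiplied by −1 so that positive values indicate forward ordering) is an assumption chosen to match the description above; the function name and the example probabilities are hypothetical and not taken from the data.

```python
def sequentiality_slope(probabilities):
    """Least-squares slope of probability on serial position (1..n),
    multiplied by -1 so that positive values mean forward ordering
    (earlier items have higher probabilities). Sign convention assumed."""
    n = len(probabilities)
    positions = range(1, n + 1)
    mean_x = sum(positions) / n
    mean_y = sum(probabilities) / n
    cov = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(positions, probabilities))
    var = sum((x - mean_x) ** 2 for x in positions)
    return -cov / var

# Hypothetical classifier probabilities for serial positions 1..5.
early_tr = [0.40, 0.25, 0.15, 0.12, 0.08]  # first item dominates
late_tr = [0.08, 0.12, 0.15, 0.25, 0.40]   # last item dominates

print(sequentiality_slope(early_tr) > 0)  # forward ordering
print(sequentiality_slope(late_tr) < 0)   # backward ordering
```

Applying this function to every TR of a sequence trial would yield a slope time course of the kind described above, rising above zero in the forward period and falling below zero in the backward period.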

Choosing a different index of association like rank correlation coefficients (Supplementary Figs. 5a, b and 6c) or the mean step size between probability-ordered events within TRs (Supplementary Figs. 5c, d and 6d) produced qualitatively similar results (for details, see SI). Removing the sequence item with the highest probability at every TR also resulted in similar effects, with backward sequentiality remaining significant at all speeds (p ≤ 0.002) except the 32 and 128 ms conditions (p ≥ 0.20), and forward sequentiality still being evident at speeds of 512 and 2048 ms (p ≤ 0.004; Supplementary Fig. 7a, b). To identify the drivers of the apparent asymmetry in detecting forward and backward sequentiality, we ran two additional control analyses and either removed the probability of the first or the last sequence item (forward and backward periods adjusted accordingly). Removal of the first sequence item had little impact on sequentiality detection (Supplementary Fig. 7c, d and SI), but removing the last sequence item markedly affected the results such that significant forward and backward sequentiality was only evident at speeds of 512 and 2048 ms (Supplementary Fig. 7e, f and SI).

Next, we investigated evidence of pattern sequentiality across successive measurements, similar to Schuck and Niv54. Specifically, for each TR we only considered the decoded image with the highest probability and asked whether earlier images were decoded primarily in earlier TRs, and whether later images were primarily decoded in later TRs. In line with this prediction, the average serial position fluctuated in a similar manner as the regression coefficients, with a tendency of early positions to be decoded in early TRs, and later positions in later TRs (Fig. 3f). The average serial position of the decoded images was therefore significantly different between the predicted forward and backward period at all sequence speeds (all ps < 0.001, Fig. 3g, Supplementary Fig. 6d). Compared to baseline (mean serial position of 3), the average serial position during the forward period was significantly lower for speeds of 512 and 2048 ms (all ps < 0.001). The average decoded serial position at later time points was significantly higher compared to baseline in all speed conditions, including the 32 ms condition (all ps < 0.001). Thus, earlier images were decoded earlier after sequence onset and later images later, as expected.

This sequential progression through the involved sequence elements had implications for transitions between consecutively decoded events. The transitions will be a direct function of the slope of the average decoded position shown in Fig. 3f. When the slope is negative, the steps between successive sequence items are backward and reflect the transition from a later position to an earlier position. When the slope is positive, the steps are forward, reflecting a progression from an earlier event position to a later event position. This can be verified by computing the step sizes between consecutively decoded serial events as in Schuck and Niv54. For example, observing a 2 → 4 transition of decoded events in consecutive TRs would correspond to a forward step of size +2, while a 3 → 2 transition would reflect a backward step of size −1. As can be seen from Fig. 3f, both the early and late phase of the response (see phases in Fig. 2d) included periods with a negative and a positive slope, in line with our predictions (formally, the prediction can be obtained by taking the derivative with respect to time of Eq. (6), see Methods, i.e., the function shown in Fig. 2e). We therefore considered the periods with a positive and negative position slope separately for the early and late phase. As expected, the early transitions were mainly forward during the period of a positive slope as compared to the negative slope periods for speed conditions of 512 and 2048 ms (ps ≤ 0.01, Fig. 3h). Similarly, the late transitions were also forward and backward during the positive and negative slope periods, respectively, and differed in all speed conditions (ps ≤ 0.01, Fig. 3h), except the 64 and 128 ms conditions (p = 0.12 and p = 0.10; FDR-corrected). This analysis suggests that transitions between decoded items reflect the ordered progression from early to late and then from late to early sequence events, even when events were separated only by tens of milliseconds.
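The step-size computation described above can be sketched in a few lines. The function names and the toy probabilities are hypothetical; the sketch assumes that the decoded event at each TR is the class with the highest probability and that classes are ordered by their serial position in the sequence.

```python
def decoded_positions(prob_by_tr):
    """For each TR, return the 1-based serial position of the item
    with the highest classifier probability."""
    return [max(range(len(p)), key=p.__getitem__) + 1 for p in prob_by_tr]

def step_sizes(positions):
    """Differences between consecutively decoded serial positions:
    positive values are forward steps, negative values backward steps."""
    return [b - a for a, b in zip(positions, positions[1:])]

print(step_sizes([2, 4]))  # a 2 -> 4 transition is a forward step of +2
print(step_sizes([3, 2]))  # a 3 -> 2 transition is a backward step of -1

# Hypothetical probabilities for three consecutive TRs (positions 1..5).
toy = [
    [0.50, 0.20, 0.10, 0.10, 0.10],
    [0.10, 0.20, 0.40, 0.20, 0.10],
    [0.10, 0.10, 0.10, 0.20, 0.50],
]
print(step_sizes(decoded_positions(toy)))
```

Averaging such step sizes separately within the periods of positive and negative position slope gives the quantity compared across periods in the analysis above.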

Detecting sequence elements: asymmetries and interference effects

We next turned to our second main question, asking whether we can detect which patterns were part of a fast sequence and which were not. One important reason why detecting which patterns were activated during sequence events might be more difficult than in a standard setting is that co-activation of multiple patterns close in time could lead to interference. We therefore investigate such interference in detail below.

We analyzed classification time courses in repetition trials, in which only two out of the five possible images were shown. One of the two images was repeated, while the other one was shown only once. This setup allowed us to study to what extent another activation (the repeated image) can interfere with the detection of a brief activation pattern of interest (the image shown only once). The repeating image was shown eight times, which created maximally adverse effects for the detection of the single image. To ask if detection of brief activations is differently affected by events occurring before versus after the single event, we varied whether the single item was preceded or followed by the repeated item. We posed this question because the backward effects were consistently larger than forward effects in our sequentiality analyses reported above (Fig. 3c), suggesting asymmetric detection sensitivity. This implies that one briefly presented item at the end of a sequence will be easier to detect than a briefly presented item at the beginning of a sequence, even though both were equally close in time to another strong activation signal. To test this idea, we considered the two order conditions described above. We will term the case in which the first image was shown briefly once and followed immediately by eight repetitions of a second image the forward interference condition, because the forward phase of the sequential responses suffers from interference. Correspondingly, trials in which the first image was repeated eight times and the second image was shown once will be termed the backward interference condition. In all cases, images were separated by only 32 ms. Participants were kept attentive by the same cover task used in sequence trials (Fig. 1c). Average behavioral accuracy was high on repetition trials (M = 73.46%, SD = 9.71%; Fig. 1f and Supplementary Fig. 1a) and clearly differed from a 50% chance level (t(35) = 14.50, 95% CI [70.72, + ∞], p < 0.001, d = 2.42). Splitting up performance into forward and backward interference trials showed performance above chance level in both conditions (M = 82.22% and M = 63.33%, respectively, ts ≥ 2.94, ps ≤ 0.003, ds ≥ 0.49, Fig. 1f).

As before, we applied the classifiers trained on slow trials to the data acquired in repetition trials and obtained the estimated probability of every class given the data for each TR (Fig. 4a and Supplementary Fig. 9). The expected relevant time period was determined to be from TRs 2 to 7 and used in all analyses (see rectangular areas in Fig. 4a).
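
As a minimal sketch of this step, the following Python snippet averages per-class classifier probabilities over the relevant time period (TRs 2 to 7). The function name, the nested-list layout (`probs[tr][class]`), and the toy values are assumptions made for illustration; they are not taken from the original analysis code.

```python
def mean_probability_in_window(probs, first_tr=2, last_tr=7):
    """Average each class's probability over TRs first_tr..last_tr.

    probs: list of per-TR rows, probs[tr][class]. TRs are counted from 1
    and the window is inclusive on both ends (assumption of this sketch).
    """
    window = probs[first_tr - 1:last_tr]
    n_classes = len(window[0])
    return [sum(row[c] for row in window) / len(window) for c in range(n_classes)]


# Toy trial with 8 TRs and 3 classes (hypothetical numbers).
toy_probs = [
    [0.2, 0.2, 0.6],
    [0.5, 0.3, 0.2],
    [0.6, 0.3, 0.1],
    [0.4, 0.5, 0.1],
    [0.3, 0.6, 0.1],
    [0.2, 0.6, 0.2],
    [0.2, 0.4, 0.4],
    [0.3, 0.3, 0.4],
]
window_means = mean_probability_in_window(toy_probs)
```

Averaging within a fixed window defined independently of the repetition trials (here from the response functions) avoids selecting the window on the same data that are being tested.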

a Time courses (in TRs from sequence onset) of probabilistic classifier evidence (in %) in repetition trials, color-coded by event type (first, second and the three remaining non-sequence items, see legend). Data shown separately for forward (left) and backward (right) interference conditions. Gray background indicates relevant time period independently inferred from response functions (Fig. 2d). Shaded areas represent ±1 SEM. 1 TR = 1.25 s. b Mean probability of event types averaged across all TRs in the relevant time period, as in (a). Each dot represents one participant, the probability density of the data is shown as rain cloud plots (cf.141). Boxplots indicate the median and interquartile range (IQR, i.e., distance between the first and third quartiles). The lower and upper hinges correspond to the first and third quartiles (the 25th and 75th percentiles). The upper whisker extends from the hinge to the largest value no further than 1.5* IQR from the hinge. The lower whisker extends from the hinge to the smallest value at most 1.5* IQR of the hinge. The diamond shapes show the sample mean and error bars indicate ±1 SEM (N = 36, ts ≥ 3.31, ps ≤ 0.006, LME model with post hoc Tukey’s honest significant difference (HSD) tests). c Average probability of event types, separately for forward/backward conditions as in (a), plots as in (b) (N = 36, ts ≥ 4.14, ps < 0.001, LME model with post hoc Tukey’s HSD tests). d Mean trial-wise proportion of each transition type, separately for forward/backward conditions, as in (a) (N = 36, ts ≥ 4.64, ps < 0.001, four two-sided paired t-tests, Bonferroni-corrected). e Transition matrix of decoded images indicating mean proportions per trial, separately for forward/backward conditions, as in (a). Transition types highlighted in colors (see legend). All statistics have been derived from data of N = 36 human participants who participated in one experiment. Source data are provided as a Source Data file.

We first asked whether our classifiers indicated that the two events that were part of the sequence were more likely decoded than items that were not part of the sequence. Indeed, the event types (first, second, non-sequence) had significantly different mean decoding probabilities, with sequence items having a higher probability (first: M = 20.19%; second: M = 24.78%) compared to non-sequence items (M = 7.72%; both ps < 0.001, corrected; main effect: F2,57.78 = 110.13, p < 0.001; Fig. 4b). Moreover, the probability of decoding within-sequence items depended on the condition and whether the item was repeated or not. Considering both interference conditions (forward/backward) in the same analysis revealed a main effect of condition, F2,41.64 = 146.15, p < 0.001, as well as an interaction between condition and whether the item was repeated, F2,140.00 = 122.59, p < 0.001. This indicated that the forward phase suffered from much stronger interference than the backward phase. In the forward interference condition, the probability of the repeated second event was approximately 18 percentage points higher than that of the single first event (31.55% vs. 13.50%, p < 0.001). In the backward interference condition, the probability of the repeated first event was only about 9 percentage points higher than that of the single second event (26.87% vs. 18.00%, p < 0.001, corrected). This means that the item shown only once was easier to detect when it followed a sustained activation of a different pattern, compared to when it preceded an interfering activation (Fig. 4c). We found no main effect of repetition, p = 0.91 (Fig. 4c).

Importantly, however, both sequence elements still differed from non-sequence items even under conditions of interference (forward: 7.75% and backward: 7.69%, respectively, all ps < 0.001, corrected), indicating that sequence element detection remains possible under such circumstances. Using data from all TRs revealed qualitatively similar significant effects (p ≤ 0.04 for all but one test after correction, see SI). Repeating all analyses using proportions of decoded classes (the class with the maximum probability was considered decoded at every TR), or considering all repetition trial conditions, also revealed qualitatively similar results. Thus, brief events can be detected despite significant interference.

We next asked which implications these findings have for the observed pattern transitions (cf.54). To this end, we analyzed the trial-wise proportions of transitions between consecutively decoded events, and asked whether forward transitions between sequence items were more likely than transitions between a sequence and a non-sequence item (outward transitions) or between two non-sequence items (outside transitions; for details, see Methods). This analysis revealed that forward transitions (5.89%) were more frequent than both outward transitions (2.46%), and outside transitions (1.04%, both ps < 0.001, ts ≥ 4.64, Bonferroni-corrected; Fig. 4d) in the forward interference condition. The same was true in the backward interference condition (forward transitions: 7.22%; outward transitions: 2.67%; outside transitions: 1.06%, all ps < 0.001, ts ≥ 5.14). The full transition matrix is shown in Fig. 4e. Repetitions of the first or second item are shown on the upper two diagonal elements (with all consecutive repetitions of items labeled repetition in Fig. 4e), and were not considered in this analysis.
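
The transition analysis can be illustrated with a short Python sketch. It labels each pair of consecutively decoded items and reports the proportion of each transition type within a trial. The labels follow the description above, but the exact rules (for instance, normalizing by the number of consecutive pairs and treating any same-item pair as a repetition) are assumptions of this sketch rather than the procedure specified in the Methods.

```python
from collections import Counter


def classify_transition(prev, curr, first, second):
    """Label one transition between two consecutively decoded items."""
    if prev == curr:
        return "repetition"
    if prev == first and curr == second:
        return "forward"
    if prev == second and curr == first:
        return "backward"
    if prev in (first, second) or curr in (first, second):
        return "outward"  # between a sequence item and a non-sequence item
    return "outside"  # between two different non-sequence items


def transition_proportions(decoded, first, second):
    """Proportion of each transition type among consecutive decoded items."""
    pairs = list(zip(decoded, decoded[1:]))
    counts = Counter(classify_transition(p, c, first, second) for p, c in pairs)
    return {label: n / len(pairs) for label, n in counts.items()}


# Hypothetical decoded labels for one trial (one label per TR).
decoded_trial = ["A", "A", "B", "C", "D", "B"]
proportions = transition_proportions(decoded_trial, first="A", second="B")
```

In this toy trial the five consecutive pairs are one repetition, one forward transition, two outward transitions, and one outside transition.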

Together, the results from repetition trials indicated that: (1) within-sequence items could be clearly detected despite interference from other sequence items; (2) event detection was asymmetric, such that items occurring at the end of sequences can be detected more easily than those occurring at the beginning; and (3) the detection of sequence items made it possible to observe within-sequence transitions between decoded items.

Note that our analyses focused on the two extreme cases of repetition trials with one versus eight repetitions of the first or second item while the experiment also included repetition trials with intermediate levels of repetitions (see SI). Specifically, other repetition trials included cases in which the second item began to appear at each possible position from 2 to 9. The other repetition trials could therefore include, for instance, three repetitions of the first and six repetitions of the second image, or four repetitions of the first and five repetitions of the second item, etc. The results reported in the SI indicate that effects in these trials show a smooth transition between the extremes shown in the main manuscript.

Detecting sparse sequence events with lower signal-to-noise ratio (SNR)

The results above indicate that detection of fast sequences is possible if they are under experimental control. In most applications of our method, however, this will not be the case. When detecting replay, for instance, sequential events will occur spontaneously during a period of noise. We therefore next assessed the usefulness of our method under such circumstances.

We first characterized the behavior of sequence detection metrics during periods of noise. To this end, we applied the logistic regression classifiers to fMRI data acquired from the same participants (n = 32 out of 36) during a 5-min (233 TRs) resting period before any task exposure. Classifier probabilities during rest fluctuated wildly, often with a single category having a high probability, while all other categories had probabilities close to zero. During fast sequence periods, in contrast, the near-simultaneous activation of stimulus-driven activity led to reduced probabilities, such that category probabilities tended to be closer together and less extreme. In consequence, the average standard deviation of the probabilities per TR during rest and slow (2048 ms) sequence periods was higher (M = 0.23 and M = 0.22, respectively) compared to the average standard deviation in the fast sequence condition (32 ms; M = 0.20; ts ≥ 4.17; ps < 0.001; ds ≥ 0.74; Fig. 5a).
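
To make the standard-deviation metric concrete, the following Python sketch computes, for each TR, the standard deviation across class probabilities and then averages over TRs. The use of the sample standard deviation and the toy probability rows are assumptions for illustration only.

```python
from statistics import mean, stdev


def mean_probability_sd(probs):
    """Mean over TRs of the standard deviation across class probabilities."""
    return mean(stdev(row) for row in probs)


# Hypothetical rows: one class dominates ("peaked") versus probabilities
# that lie close together ("flat"), as described for fast sequences.
peaked = [[0.96, 0.01, 0.01, 0.01, 0.01], [0.02, 0.92, 0.02, 0.02, 0.02]]
flat = [[0.30, 0.25, 0.20, 0.15, 0.10], [0.22, 0.24, 0.18, 0.20, 0.16]]

sd_peaked = mean_probability_sd(peaked)
sd_flat = mean_probability_sd(flat)
```

A TR in which a single category dominates yields a large spread across classes, whereas near-simultaneous activation of several categories pulls the probabilities together and lowers the metric.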

a Mean standard deviation of classifier probabilities in rest and sequence data (n = 32, ts ≥ 4.17, ps < 0.001, ds ≥ 0.74, two two-sided paired t-tests comparing rest and 2048 ms conditions against 32 ms condition, FDR-corrected). b Mean absolute regression slopes, as in (a) (n = 32, ts ≥ 4.64, ps < 0.001, ds ≥ 0.82, two two-sided paired t-tests comparing rest and 2048 ms conditions against 32 ms condition, FDR-corrected). c Time courses of the regression slopes (signed values, not magnitudes) in rest and sequence data. Vertical lines indicate trial boundaries. d Normalized frequency spectra of regression slopes in rest and sequence data. Annotations indicate predicted frequencies based on Eq. (5). e Mean power of predicted frequencies in rest and sequence data, as in (a). Each dot represents data from one participant (n = 32, ts ≥ 3.10, ps ≤ 0.002, two-sided paired t-tests, FDR-corrected). f Mean standard deviation of rest data including a varying number of SNR-adjusted sequence events (fast or slow). Dashed line indicates indifference from sequence-free rest (n = 32, ts ≥ 2.22, ps ≤ 0.04, 30 two-sided one-sample t-tests against chance, FDR-corrected). g Base-20 log-transformed p values of t-tests comparing the standard deviation of probabilities in (f) with sequence-free rest. Dashed line indicates p = 0.05 (N = 32, ts ≥ 2.22, ps ≤ 0.04, 30 two-sided one-sample t-tests against chance, FDR-corrected). h Frequency spectra of regression slopes in SNR-adjusted sequence-containing rest relative to sequence-free rest. Rectangles indicate predicted frequencies, as in (d). i Mean relative power of predicted frequencies in SNR-adjusted sequence-containing rest (n = 32, ts ≥ 2.28, ps ≤ 0.03, two-sided t-tests against baseline, FDR-corrected). Shaded areas/error bars represent ±1 SEM. All statistics have been derived from data of n = 32 human participants who participated in one experiment. 1 TR = 1.25 s. Source data are provided as a Source Data file.

As before, we fitted regression coefficients through the classifier probabilities of the rest data and, for comparison, concatenated data from the 32 and 2048 ms sequence trials (Fig. 5b, c). As predicted by our modeling approach (Fig. 2e), and shown in the previous section (Fig. 3b), the time courses of regression coefficients in the sequence conditions were characterized by rhythmic fluctuations whose frequency and amplitude differed between speed conditions (Fig. 5c). To quantify the magnitude of this effect, we calculated frequency spectra of the time courses of the regression coefficients in rest and concatenated sequence data (Fig. 5d; using the Lomb-Scargle method, e.g.61 to account for potential artifacts due to data concatenation, see Methods). This analysis revealed that frequency spectra of the sequence data differed from rest frequency spectra in a manner that depended on the speed condition (Fig. 5d, e). As foreshadowed by our model, power differences appeared most pronounced in the predicted frequency ranges (Fig. 5e; ps ≤ 0.002; see Eq. (5) and Methods). Specifically, when the 32 ms condition was considered, the analyses revealed an increased power around 0.17 Hz, which corresponds to the frequency predicted to occur by our model. Data from the 2048 ms condition, in contrast, exhibited an increased power around 0.07 Hz, as predicted.
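
The two ingredients of this analysis can be sketched in plain Python: a per-TR regression slope of classifier probability on serial position, and a Lomb-Scargle periodogram of a time course sampled at uneven times (for example, after concatenating trials). This is a textbook formulation written for illustration, not the implementation used in the study; the function names, the frequency grid, and the synthetic 0.17 Hz test signal are assumptions. In this sketch a negative slope means that earlier sequence items have higher probabilities.

```python
import math


def position_slope(prob_row):
    """Least-squares slope of probability on serial position 1..n for one TR."""
    n = len(prob_row)
    mean_x = (n + 1) / 2
    mean_y = sum(prob_row) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(range(1, n + 1), prob_row))
    den = sum((x - mean_x) ** 2 for x in range(1, n + 1))
    return num / den


def lomb_scargle(times, values, freqs_hz):
    """Classical Lomb-Scargle power at each (positive) frequency in Hz."""
    mean_v = sum(values) / len(values)
    y = [v - mean_v for v in values]
    power = []
    for f in freqs_hz:
        w = 2 * math.pi * f
        # Time offset tau makes the sine and cosine terms orthogonal.
        tau = math.atan2(sum(math.sin(2 * w * t) for t in times),
                         sum(math.cos(2 * w * t) for t in times)) / (2 * w)
        c = [math.cos(w * (t - tau)) for t in times]
        s = [math.sin(w * (t - tau)) for t in times]
        yc = sum(a * b for a, b in zip(y, c))
        ys = sum(a * b for a, b in zip(y, s))
        power.append(0.5 * (yc ** 2 / sum(a * a for a in c)
                            + ys ** 2 / sum(a * a for a in s)))
    return power


TR = 1.25  # seconds
# Synthetic slope time course oscillating at 0.17 Hz, with a gap in sampling.
times = [k * TR for k in range(200) if not 50 <= k < 70]
values = [math.sin(2 * math.pi * 0.17 * t) for t in times]
freqs = [round(0.02 + 0.01 * i, 2) for i in range(29)]  # 0.02 to 0.30 Hz
power = lomb_scargle(times, values, freqs)
peak_freq = freqs[power.index(max(power))]
```

Unlike a standard Fourier transform, this periodogram does not require evenly spaced samples, which is why it is a natural choice when trials are concatenated.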

Finally, we asked whether these differences would persist if (a) only few sequence events occurred during a 5-min rest period, while (b) their onset was unknown, and (c) their SNR was lower. To this end, we synthetically generated data containing a variable number of sequence events that were inserted at random times into the resting-state data acquired before any task exposure. Specifically, we inserted between 1 and 6 sequence events into the rest period by blending rest data with TRs recorded in fast (32 ms) or slow (2048 ms) sequence trials (12 TRs per trial, random selection of sequence trials and insertion of time points, without replacement). To account for possible SNR reductions, the inserted probability time courses were multiplied by a factor κ of \(\frac{4}{5}\), \(\frac{1}{2}\), \(\frac{1}{4}\), \(\frac{1}{8}\), or 0 and added to the probability time courses of the inversely scaled (1−κ) resting-state data. Effectively, this led to a step-wise reduction of the inserted sequence signal from 80% to 0%, relative to the SNR obtained in the experimental conditions reported above. Thus, here we use the term SNR to describe the relative mixing proportion of (a) data from the task, which contain sequential signal, with (b) data from the pre-task resting-state session, which contain only noise. Note that this is different from the common definition of SNR in univariate fMRI as the ratio of average signal to standard deviation over time.
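
The blending step can be written as a convex combination, κ · sequence + (1 − κ) · rest, applied to the TRs covered by an inserted event. The Python sketch below shows this for a single insert; the function name, the fixed onset, and the toy probabilities are hypothetical, and the random selection of trials and onsets is omitted.

```python
def blend_event_into_rest(rest, event, onset, kappa):
    """Blend an event's probability rows into rest data starting at `onset`.

    Each affected row becomes kappa * event_row + (1 - kappa) * rest_row;
    all other rest rows are returned unchanged.
    """
    blended = [list(row) for row in rest]
    for i, event_row in enumerate(event):
        rest_row = rest[onset + i]
        blended[onset + i] = [kappa * e + (1 - kappa) * r
                              for e, r in zip(event_row, rest_row)]
    return blended


# Toy data: 4 rest TRs and a 2-TR event with two classes (hypothetical).
rest = [[0.9, 0.1], [0.8, 0.2], [0.7, 0.3], [0.6, 0.4]]
event = [[0.1, 0.9], [0.2, 0.8]]

half = blend_event_into_rest(rest, event, onset=1, kappa=0.5)
none = blend_event_into_rest(rest, event, onset=1, kappa=0.0)
```

With κ = 0 the result equals the original rest data, which corresponds to the sequence-free baseline. Because the two weights sum to one, rows that sum to one before blending also sum to one afterwards.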

As expected, differences in the above-mentioned standard deviation of the probability gradually increased with both the SNR level and the number of inserted sequence events when either fast or slow sequences were inserted (Fig. 5f). In our case, this led significant differences to emerge with one insert and an SNR reduced to 12.5% in both the fast and slow conditions (Fig. 5g; comparing against zero, the expectation of no difference with a conventional false-positive rate α of 5%; all ps FDR-corrected).

Importantly, the presence of sequence events was also reflected in the frequency spectrum of the regression coefficients. Inserting fast event sequences into rest led to power increases in the frequency range indicative of 32 ms events (~0.17 Hz, Fig. 5h, i, left panel), in line with our findings above. This effect again grew stronger with higher SNR levels and more sequence events. Inserting slow (2048 ms) sequence events into the rest period showed a markedly different frequency spectrum, with an increase around the frequency predicted for this speed (~0.07 Hz, Fig. 5h, i, right panel). Comparing the power around the predicted frequency (±0.01 Hz) of both speed conditions indicated significant increases in power compared to sequence-free rest when six sequence events were inserted and the SNR was reduced to 80% (ts ≥ 2.28, ps ≤ 0.03, ds ≥ 0.40). Hence, the presence of spontaneously occurring sub-second sequences during rest can be detected in the frequency spectrum of our sequentiality measure, and distinguished from slower second-scale sequences that might reflect conscious thinking.

Detecting fast reactivations in post-task resting-state data

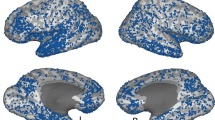

Finally, we asked whether our task elicited spontaneous replay of image sequences in object-selective brain areas during rest after the task. Based on the above findings, we reasoned that potentially reactivated sequences should become apparent in a frequency spectrum analysis. We therefore applied this analysis to resting-state data recorded after participants performed the task. Crucially, because the true sequence of potential replay events was not known, we repeated the analyses for all possible image orders, averaged the resulting frequency spectra, and compared the results to the same analysis performed on the pre-task rest session (see Methods). As shown in Fig. 6, the frequency spectrum analyses revealed a significant increase specifically in the power spectrum of the high frequency range (Fig. 6a, F1,94.99 = 6.17, p = 0.02 when testing pre- versus post-task data at the predicted frequency of 0.17 Hz, as before). Directly comparing pre- versus post-task rest revealed a large power difference at 0.17 Hz, indicative of replayed sequence speeds of 32 ms, as in our fastest sequence speed condition (Fig. 6b). In addition, we found a second peak at around 0.04 Hz, indicating long activations of individual items of several seconds. Thus, post-task rest seemed to be characterized by fast sequential reactivations as well as longer constant activations. We next asked whether specific sequences that had been experienced slightly more often by participants were more likely to be reactivated than less frequent sequences. During slow trials, all participants experienced all 120 possible sequential combinations of images. But in addition, each participant experienced only a subset of 15 image orders during the sequence trials. Hence, image orders experienced in sequence trials were slightly more frequent and we asked if they were reactivated more strongly during the post-task resting-state session. This was not the case. A power increase in the fast frequency range when comparing pre- to post-task rest was found for both sets of sequences, i.e., the 15 image orders that occurred in sequence and slow trials, and the 105 that occurred only in slow trials (Fig. 6c, ps ≥ 0.13). In summary, applying the frequency spectrum analyses to post-task resting-state therefore suggests that (1) task stimuli are reactivated during post-task rest, and (2) this reactivation happens fast, but (3) appears unspecific and not directly related to the sequences presented more frequently to participants during the task.
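
The step of repeating the analysis for all possible image orders can be sketched as follows. For one TR, the snippet computes the regression slope of probability on serial position under each of the 5! = 120 orderings of the five classes. The function names and toy probabilities are assumptions for illustration. The sketch also shows one reason for averaging spectra rather than raw slopes: every order has a reversed counterpart with the opposite slope, so signed slopes cancel across all orders, whereas spectral power is unaffected by the sign.

```python
from itertools import permutations


def position_slope(values):
    """Least-squares slope of the values on serial position 1..n."""
    n = len(values)
    mean_x = (n + 1) / 2
    mean_y = sum(values) / n
    num = sum((x - mean_x) * (v - mean_y) for x, v in zip(range(1, n + 1), values))
    den = sum((x - mean_x) ** 2 for x in range(1, n + 1))
    return num / den


def slopes_for_all_orders(prob_row):
    """Map every ordering of the class indices to its regression slope."""
    return {order: position_slope([prob_row[c] for c in order])
            for order in permutations(range(len(prob_row)))}


# Hypothetical classifier probabilities for five classes at one TR.
toy_row = [0.40, 0.30, 0.15, 0.10, 0.05]
all_slopes = slopes_for_all_orders(toy_row)
mean_signed = sum(all_slopes.values()) / len(all_slopes)
mean_abs = sum(abs(s) for s in all_slopes.values()) / len(all_slopes)
```

Here `mean_signed` is zero up to floating-point error while `mean_abs` is positive, illustrating that order-agnostic evidence has to be pooled on a sign-invariant quantity.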

a Normalized frequency spectra of regression slopes in pre- and post-task resting-state data. Annotations indicate predicted frequencies based on Eq. (5). Shaded areas represent ±1 SEM. b Relative power (difference between pre- and post-task rest) of normalized frequency spectra shown in (a) (n = 32, F1,94.99 = 6.17, p = 0.02, LME model comparing pre- vs. post-task resting-state data at 0.17 Hz). c Mean power at predicted fast frequency (0.17 Hz) in pre- and post-task resting-state data for less and more frequent stimulus sequences (n = 32, ts ≥ 4.17, ps < 0.001, ds ≥ 0.74, two two-sided paired t-tests comparing rest and 2048 ms conditions against 32 ms condition, FDR-corrected). Each dot corresponds to averaged data from one participant. Error bars represent ±1 SEM. All statistics have been derived from data of n = 32 human participants who participated in one experiment. Source data are provided as a Source Data file.

Discussion

Here, we demonstrated that BOLD fMRI in combination with multivariate probabilistic decoding can be used to detect sub-second sequences of closely timed neural events non-invasively in humans. We combined probabilistic multivariate pattern analysis with time course modeling and investigated human brain activity recorded following the presentation of sequences of visual objects at varying speeds, as well as activity during rest. In the fastest case a sequence of five images was displayed within 628 ms (32 ms between pictures). Stimulus sequences were not masked. Even when using a TR of 1.25 s, achievable with conventional multi-band (MB) echo-planar imaging (EPI), the image order could be detected from activity patterns in visual and ventral temporal cortex. Detection of briefly presented sequence items was also possible when their activation was affected by interfering signals from a preceding or subsequent sequence item and could be differentiated from images that were not part of the sequence. Our results withstood several robustness tests, and also indicated that detection is biased to most strongly reflect the last event of a sequence. Analyses of augmented resting data, in which neural event sequences occurred rarely, at unknown times, and with reduced signal strength, showed that our method could detect sub-second sequences even under such adverse conditions. Moreover, we showed that frequency spectrum analyses can be used to distinguish sub-second from supra-second sequences under such circumstances. Our approach therefore promises to expand the scope of BOLD fMRI to fast, sequential neural representations by extending multivariate decoding approaches into the temporal domain, in line with our previous findings54.

Importantly, we applied this method not only to experimentally controlled data, but also used it to ask whether task experience might elicit spontaneous replay of sequential stimuli in post-task resting-state data, as suggested by previous studies, for reviews, see, e.g.33,35,62,63. Indeed, our results indicate that such reactivations occur during post-task rest and can be detected using the proposed analysis. Our analyses suggest that the reactivated sequences were fast and occurred at replay-like speeds, similar to the fastest sequence trials used in our task (32 ms between activations). Evidence for fast sequential replay was accompanied by a relative increase in power in the slower frequency range (peaking at 0.04 Hz). This could reflect an increase of slower long-lasting activations, possibly reflecting conscious thinking about the task. This supports our conclusion that the frequency spectrum of the sequentiality metric is a useful approach to detect fast replay and to distinguish it from slow activations. Our analysis did not find any evidence that only those sequences were replayed that were more frequent than others or that were presented at a fast speed during the task. Rather, our results suggest that replay seemed to equally involve all stimulus orders. However, it is important to note that our task was not optimized to elicit replay of particular sequences at all. In fact, the more frequent sequences were arranged such that the same stimuli appeared equally often at the first and last position, which makes it difficult to distinguish them from other sequences.

Of note, replay during post-task rest reflected cortical reactivations in occipito-temporal brain regions. Given that we were not able to decode on-task stimulus representations in the hippocampus, it remains unclear if reactivations occurred independently from (task-related) involvement of the hippocampus or if we were simply not able to detect concurrent reactivation in the hippocampus. This possibility of hippocampus-independent cortical reactivations raises important questions regarding the functional significance of such events. One potential reason why we found no hippocampus involvement could be that the oddball detection paradigm used for slow trials to train the classifiers involved no mnemonic task component, and therefore was not suitable to activate the hippocampus. Our previous work54 has already demonstrated the success of our methods in hippocampal data. Taken together, our results indicate that our method allows the uncovering of fast task-related reactivations during rest and highlight the importance of task design for detecting replay in humans using fMRI.

This contrasts with previous fMRI studies in humans (for reviews see, e.g.24,64) that measured non-sequential reactivation as increased similarity of multi-voxel patterns during experience and extended post-encoding rest compared to pre-encoding baseline49,50,51,53,65,66,67,68,69 or functional connectivity of hippocampal, cortical, and dopaminergic brain structures that support post-encoding systems-level memory consolidation66,67,68,70,71,72. In the current study we open the path toward a better understanding of the speed and sequential nature of the observed phenomena.

The fastest sequences studied in our experiments lasted 628 ms and were therefore longer than the average hippocampal replay event of about 300 ms, e.g.17. Yet, several factors support the idea that our method is still relevant for the study of replay. First, previous studies have shown that a significant proportion of replay events indeed lasts much longer than 300 ms. Davidson et al.7 report sequence lengths of up to 1000 ms and the data by Kaefer et al.17 indicate that about 20% of events in the hippocampus are longer than 500 ms. In addition, the median duration of replay events in medial prefrontal cortex (PFC) reported in Kaefer et al.17 was 740 ms. This indicates that a significant proportion of replay events will be covered by our method. Second, our ISI was as fast as 32 ms, which corresponds to the time lag between activations reported in magnetoencephalography (MEG) studies (e.g.,47) and therefore might capture the important aspect of temporal separation between activation patterns well. Third, while effect sizes showed a pronounced decrease when comparing the slower conditions (2048 ms: 3.13; 512 ms: 1.36; 128 ms: 0.75, for the backwards effect of regression slopes, Fig. 3c, effect sizes indicate Cohen’s d), accelerating sequence speeds beyond 128 ms seemed not to be associated with a comparable decrease in effect sizes (64 ms: 0.65, 32 ms: 1.00). This indicates that the sensitivity of our methods for even faster event sequences might not be catastrophically diminished. Fourth, the sequence duration of 628 ms was to a large extent due to the stimulus duration of 100 ms. Evidence from previous work using electroencephalography (EEG) suggests that the neural response to successive visual stimuli is more strongly influenced by the ISI than the stimulus duration73,74. Hence, we speculate that our methods may also work in cases with shorter pattern activations and thereby overall shorter sequences.

Our results deepen the understanding of our previous findings54 in three ways. First, we provide additional empirical evidence that our sequentiality analyses based on multivariate fMRI pattern classification are indeed sensitive to fast neural event sequences. To this end, we used an experimental setup where the order of sequential events is known, in contrast to analyses of resting-state data in Schuck and Niv54 where the order and speed of event sequences can only be assumed. Second, Schuck and Niv54 observed forward-ordered replay. Our present study clarifies the origins of forward and backward ordering of fMRI activation patterns. We show that probabilistic classifier evidence in earlier TRs reflects the forward order of the sequences while this pattern reverses in later TRs. Importantly, we demonstrate an asymmetry in decoding early versus late sequential events. This asymmetry can lead fMRI pattern sequences to appear in the reverse order relative to the underlying neural sequences. This represents a crucial insight, given the different functional roles assigned to forward and backward replay (see e.g.33). We note that future research should be careful when interpreting directionality, as the relationship between decoded and true directionality is not straightforward. One approach in this context could be to investigate the order of sequence direction itself. If items appear to be ordered first in direction A, and a few TRs later in direction B, then direction A seems to be the true one. Probabilistic classifiers might prove particularly useful for such analyses as they make it possible to characterize sequential ordering within a single measurement. The origins of this asymmetry are not entirely clear. It seems possible that they reflect the benefits of the last item not being followed by another activation that could impede its detection. A relation to the asymmetric shape of the HRF, to changing HRF variability with time and even to inhibitory retrograde neurotransmitters (e.g.75) cannot be ruled out. Third, we have shown that the interference of activation patterns of fast sequential neural events is stronger for early events compared to late events. Importantly, early events remained detectable despite this interference, demonstrating that our method can detect the elements of a replay event with fMRI despite interference effects. The prominence of the last sequence item implies that the apparent over-occurrence of one particular item might reflect that this item was a frequent start or end point of replayed trajectories. Past research has shown that task aspects, such as goals, heavily influence which items the replayed sequences start or end with76.

In addition, our study introduces important methodological advancements that go beyond our original publication54. We show that the analyses of classifier probabilities provide major statistical improvements compared to analyses focused on the decoded category with the highest classification probability (as in Schuck and Niv54). The key advantage is that probabilistic classifiers provide a continuous metric of classification evidence and thereby allow the detection of sequential ordering within a single measurement (i.e., within a single TR). This results in significant information gain compared to the assessment of sequential ordering that considers only a single label per TR. Moreover, we leverage frequency spectrum analysis in an approach to make inferences about the speed of the sequential neural process. Although the sampling rate of fMRI (set by the TR) is usually much slower than the speed of replay events, frequency spectrum analyses can characterize the speed of fast sequential events during rest. Together, these methodological advances offer insights into previous fMRI studies investigating hippocampal replay in humans, including our own work54.

Additionally, some caveats have to be noted. Our results indicate that the sequentiality in fMRI analyses is mainly influenced by the first and last element of a fast sequence. Given that replay events are often structured by task-relevant features like the start and goal location in a spatial environment (e.g.,76), analyzing the transitions between the corresponding decoded events will offer insights into the content and functional role of fast replay events. Moreover, it is important to keep in mind that the benefits of our experimental setting came at the cost that they also introduced important differences from a replay study in various regards, including the focus on extra-hippocampal activations and sensory stimulation.

Our fMRI-based approach has advantages as well as disadvantages compared to existing EEG and MEG approaches44,46,47. In particular, it seems likely that our method has limited resolution of sequence speed. While we could distinguish between supra- and sub-second sequences, a much finer distinction might prove difficult in practice. Yet, EEG and MEG investigations suggest that the extent of temporal compression of previous experience is an important aspect of replay and other reactivation phenomena45,77,78,79,80. In addition, the differential sensitivity to activity depending on sequence position complicates interpretations of findings, and can lead to statistical aliasing of sequences with the same start and end elements but different elements in the middle. Finally, because a single sequence causes forward and backward ordering of signals, it can be difficult to determine the direction of a hypothesized sequence. One major advantage of fMRI is that it does not suffer from the low sensitivity to hippocampal activity and limited ability to anatomically localize effects that characterizes EEG and MEG. This is particularly important in the case of replay, which is hippocampus-centered but co-occurs with fast neural event sequences in other parts of the brain including primary visual cortex12, auditory cortex15, PFC13,14,16,17,81, entorhinal cortex22,82,83, and ventral striatum84. Importantly, replay events occurring in different brain areas might not be mere copies of each other, but can differ regarding their timing, content, and relevance for cognition, e.g.16,17. Precise characterization of replay events occurring in different anatomical regions is therefore paramount. The present finding of fast and slow reactivations in visual cortex underlines the importance of knowing the anatomical origin of replay events. 
Because EEG and MEG cannot untangle the co-occurring events and animal research is often restricted to a single recording site, much remains to be understood about the distributed and coordinated nature of replay. One particular problem is that localizer tasks frequently used to train classifiers in MEG studies might only partially reflect hippocampal activity. In fact, our own data here show that simple visual tasks do not elicit reliable hippocampal activation patterns. Thus, EEG or MEG classifiers trained on such data risk not reflecting any hippocampal activity.

Finally, our study provides additional insights for future research. We have shown that merely detecting which elements were part of a sequence is beneficial if sequences mostly contain a local subset of all possible events. Thus, experimental setups with a larger number of possible events will be insightful. At the same time, a larger number of to-be-decoded events will likely impair baseline classification accuracy, which in turn impairs sequence detection. Researchers should thus take the trade-off between these two aspects into account. Moreover, several other factors could influence the success of future investigations: the sampling rate (the TR); the choice of brain region; and the properties of the resulting HRFs23. Whether an increased sampling rate would be beneficial for the detection of fast event sequences is difficult to predict. First, longer TRs provide better SNR as they allow more time for longitudinal magnetization to recover. In addition, faster sampling will not affect the underlying (slow) HRF dynamics that impede the identification of temporal order of fast neural event sequences. Sampling the activation time courses at a faster rate might therefore not reveal more information about the sequential process under investigation. Whether shorter TRs can make up for the downsides in spatial resolution and SNR therefore seems an empirical question. Moreover, the choice of brain region will impact results only if the stability of the HRF within that brain region is low, whereas between-region differences in HRF parameters might have less impact. But HRF stability is generally high30,85,86,87, and previous research noting this fact has therefore already indicated possibilities of disentangling temporally close events28,29,30,31,88,89. Further, increased spatial resolution might improve detection due to less partial volume averaging of non-activation-related signals.
Our approach has shown how multivariate and modeling approaches can help exploit these HRF properties in order to enhance our understanding of the human brain.

Methods

Participants

In all, 40 young and healthy adults were recruited from an internal participant database or through local advertisement and fully completed the experiment. No statistical methods were used to predetermine the sample size, but it was chosen to be larger than in similar previous neuroimaging studies, e.g.51,52,54. Four participants were excluded from further analysis because their mean behavioral performance was below the 50% chance level in the sequence trials, the repetition trials, or both, suggesting that they did not adequately process the visual stimuli used in the task. Please note that this exclusion was based on mean behavioral performance across all conditions of sequence and repetition trials. This means that participants who, for example, performed below chance in only one of the conditions of either the sequence or the repetition trials, but above chance in all other conditions, might still be included in the final sample because their mean behavioral performance across all conditions ended up being above the level of chance performance. Thus, the final sample consisted of 36 participants (age: M = 24.61 years, SD = 3.77 years, range: 20–35 years, 20 female, 16 male). All participants were screened for magnetic resonance imaging (MRI) eligibility during a telephone screening prior to participation and again at the beginning of each study session according to standard MRI safety guidelines (e.g., asking for metal implants, claustrophobia, etc.). None of the participants reported having any major physical or mental health problems. All participants were required to be right-handed, to have corrected-to-normal vision, and to speak German fluently. Furthermore, only participants with a head circumference of 58 cm or less could be included in the study. This requirement was necessary as participants’ heads had to fit the MRI head coil together with MRI-compatible headphones that were used during the experimental tasks.
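The exclusion rule described above can be illustrated with a minimal sketch. It is not the authors' analysis code: the function name `is_excluded`, the constant `CHANCE_LEVEL`, and the accuracy values are hypothetical and serve only to show that the criterion applies to the mean across conditions within each trial type, not to individual conditions.

```python
from statistics import mean

CHANCE_LEVEL = 0.5  # 50% chance level stated in the text


def is_excluded(sequence_acc, repetition_acc) -> bool:
    """Return True if mean accuracy is below chance in either trial type.

    Each argument holds one accuracy value (0 to 1) per task condition.
    """
    return mean(sequence_acc) < CHANCE_LEVEL or mean(repetition_acc) < CHANCE_LEVEL


# Hypothetical participant: below chance in one sequence condition only,
# but the mean across conditions is above chance, so the participant is kept.
kept = is_excluded([0.4, 0.7, 0.8, 0.9, 0.9], [0.8, 0.9])

# Hypothetical participant: mean sequence accuracy below chance, so excluded.
dropped = is_excluded([0.3, 0.4, 0.5, 0.5, 0.6], [0.8, 0.9])
```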
The ethics commission of the German Psychological Society (DGPs) approved the study protocol (reference number: NS 012018). All volunteers gave written informed consent prior to the beginning of the experiments. Every participant received 40.00 Euro and a performance-based bonus of up to 7.20 Euro upon completion of the study. None of the participants reported having any prior experience with the stimuli or the behavioral task.

Task

Stimuli

All stimuli were gray-scale images of a cat, chair, face, house, and shoe taken from Haxby et al.55 with a size of 400 × 400 pixels each, which have been shown to reliably elicit object-specific neural response patterns in several previous studies, e.g.55,58,59,60. Participants received auditory feedback to signal the accuracy of their responses. A high-pitched coin sound confirmed correct responses, whereas a low-pitched buzzer sound signaled incorrect responses. The sounds were the same for all task conditions and were presented immediately after participants entered a response or after the response time had elapsed. Auditory feedback was used to anatomically separate the expected neural activation patterns of visual stimuli and auditory feedback. While auditory feedback is more likely to engage primarily temporal brain regions, visual stimuli are more likely to activate primarily occipital brain regions. We recorded the presentation time stamps of all visual stimuli and confirmed that all experimental components were presented as expected. The task was programmed in MATLAB (version R2012b; Natick, MA, USA; The MathWorks Inc.) using the Psychophysics Toolbox extensions (Psychtoolbox; version 3.0.11)90,91,92 and run on a Windows XP computer with a monitor refresh interval of 16.7 ms (i.e., a refresh rate of approximately 60 Hz).

Slow trials

The slow trials of the task were designed to elicit object-specific neural response patterns of the presented visual stimuli. The resulting patterns of neural activation were later used to train the classifiers. In order to ensure that participants maintained their attention and processed the stimuli adequately, they were asked to perform an oddball detection task (for a similar approach, see44,47). Specifically, participants were instructed to press a button each time an object was presented upside-down. Participants could answer using either the left or the right response button of an MRI-compatible button box. In contrast to similar approaches, e.g.,44,47, we intentionally did not ask participants for a response on trials with upright stimuli to avoid neural activation patterns of motor regions in our training set which could influence later classification accuracy on the test set.

Participants were rewarded with 3 cents for each oddball (i.e., stimulus presented upside-down) that was correctly identified (i.e., hit) and punished with a deduction of 3 cents for (incorrect) responses (i.e., false alarms) on non-oddball trials (i.e., when stimuli were presented upright). In case participants missed an oddball (i.e., miss), they also missed out on the reward. Auditory feedback (coin and buzzer sound for correct and incorrect responses, respectively) was presented immediately after the response (in case of hits and false alarms) or at the end of the response time limit (in case of misses) using MRI-compatible headphones (VisuaStimDigital, Resonance Technology Company Inc., Northridge, CA, USA). Correct rejections (i.e., no responses to upright stimuli) were not rewarded and were consequently not accompanied by auditory feedback. Together, participants could earn a maximum reward of 3.60 Euro in this task condition.
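The payout rule above amounts to simple arithmetic, sketched below. This is an illustration and not the authors' task code: the function name `slow_trial_reward` and the constant names are hypothetical, amounts are kept in cents to avoid floating-point rounding, and the sketch does not assume any lower bound on the payout because the text does not state one. The maximum uses the 120 oddball stimuli of this task condition.

```python
CENTS_PER_HIT = 3          # reward for each correctly detected oddball
CENTS_PER_FALSE_ALARM = 3  # deduction for each response to an upright stimulus


def slow_trial_reward(hits: int, false_alarms: int) -> int:
    """Return the slow-trial payout in cents.

    Misses and correct rejections neither add to nor subtract from the payout.
    """
    return hits * CENTS_PER_HIT - false_alarms * CENTS_PER_FALSE_ALARM


# Maximum payout: all 120 oddballs detected and no false alarms.
max_reward_cents = slow_trial_reward(hits=120, false_alarms=0)  # 360 cents = 3.60 Euro
```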

Across the entire experiment, all five unique images were presented in all possible sequential combinations, which resulted in 5! = 120 sequences, each containing the five unique visual objects in a different order. Thus, across the entire experiment, participants were shown 120 × 5 = 600 visual objects in total for this task condition. Of all visual objects, 20% were presented upside-down (i.e., 120 oddball stimuli). All unique visual objects were shown upside-down equally often, which resulted in 120/5 = 24 oddballs for each individual visual object category. The order of sequences as well as the appearances of oddballs were randomly shuffled for each participant and across both study sessions.
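The counts in this paragraph can be reproduced with a short sketch. It is an illustration only, not the authors' stimulus-generation code; the variable names are hypothetical, and the stimulus labels are taken from the Stimuli section.

```python
from itertools import permutations

STIMULI = ("cat", "chair", "face", "house", "shoe")

# All orderings of the five images: 5! = 120 slow trials.
slow_trial_sequences = list(permutations(STIMULI))
n_trials = len(slow_trial_sequences)

# Each trial shows five objects, so 120 x 5 = 600 presentations.
n_presentations = n_trials * len(STIMULI)

# 20% of presentations are oddballs, split evenly across the five categories.
n_oddballs = n_presentations * 20 // 100
n_oddballs_per_category = n_oddballs // len(STIMULI)

# Across the full set of permutations, every object occupies the first
# position equally often (120 / 5 = 24 times).
first_position_counts = {
    s: sum(seq[0] == s for seq in slow_trial_sequences) for s in STIMULI
}
```

Because the full set of permutations is used, the same balance holds for every serial position, not only the first.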

Each trial (for the trial procedure, see Fig. 1a) started with a waiting period of 3.85 s during which a blank screen was presented. This ITI ensured a sufficient time delay between each slow trial and the preceding trial (either a sequence or a repetition trial). The five visual object stimuli of the current trial were then presented as follows: after the presentation of a short fixation dot for a constant duration of 300 ms, a stimulus was shown for a fixed duration of 500 ms followed by a variable ISI during which a blank screen was presented again. The duration of the ISI for each trial was randomly drawn from a truncated exponential distribution with a mean of 2.5 s and a lower limit of 1 s. We expected that neural activation patterns elicited by the stimuli could be recorded well during this average time period of 3 s (500 ms stimulus duration plus a mean ISI of 2.5 s; for a similar approach, see55). Behavioral responses were collected during a fixed time period of 1.5 s after each stimulus onset. In case participants missed an oddball target, the buzzer sound (signaling an incorrect response) was presented after the response time limit had elapsed. Only neural activation patterns related to correct trials with upright stimuli were used to train the classifiers. Slow trials were interleaved with sequence and repetition trials such that each of the 120 slow trials was followed by one of either the 75 sequence trials or the 45 repetition trials (details on these trial types are given below).
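The ISI sampling described above can be sketched as follows. This is a minimal illustration, not the authors' MATLAB code. It assumes that the distribution is truncated only from below, since the text gives no upper limit. Under that assumption, the memoryless property of the exponential distribution means that a draw equals the 1 s lower limit plus an exponential variate with a mean of 1.5 s, which yields the stated mean of 2.5 s. The names `draw_isi`, `ISI_LOWER_S`, and `ISI_MEAN_S` are hypothetical.

```python
import random

ISI_LOWER_S = 1.0  # lower limit stated in the text (seconds)
ISI_MEAN_S = 2.5   # mean stated in the text (seconds)


def draw_isi(rng: random.Random) -> float:
    """Draw one ISI in seconds from an exponential truncated from below.

    Assumption: there is no upper bound. The scale of the exponential part
    is chosen so that the overall mean equals ISI_MEAN_S.
    """
    scale = ISI_MEAN_S - ISI_LOWER_S  # mean of the exponential part (1.5 s)
    return ISI_LOWER_S + rng.expovariate(1.0 / scale)


rng = random.Random(0)  # fixed seed, only to make the sketch reproducible
isis = [draw_isi(rng) for _ in range(100_000)]
mean_isi = sum(isis) / len(isis)  # close to 2.5 s for a large sample
```

If the original implementation also imposed an upper limit, the scale parameter would have to be adjusted to keep the mean at 2.5 s.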

Sequence trials

In the sequence trials of the task, participants were shown sequences of the same five unique visual objects at varying presentation speeds. In total, 15 different sequences were selected for each participant. Sequences were chosen such that each visual object appeared equally often at the first and last position of the sequence. Given five stimuli and 15 sequences, for each object category this was the case for 3 out of the 15 sequences. Furthermore, we ensured that all possible sequences were chosen equally often across all participants. Given 120 possible sequential combinations in total, the sequences were distributed across eight groups of participants. Sequences were randomly assigned to each participant following this pseudo-randomized procedure.
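The balancing constraint on first and last positions can be made concrete with a small check. The sketch below is illustrative and does not reproduce the authors' pseudo-randomized assignment: `is_balanced` and `example_balanced_set` are hypothetical names, and the cyclic construction is just one simple way to obtain a set of 15 sequences that satisfies the constraint.

```python
from collections import Counter

STIMULI = ("cat", "chair", "face", "house", "shoe")


def is_balanced(sequences) -> bool:
    """Check that every stimulus is first in 3 sequences and last in 3 sequences."""
    first = Counter(seq[0] for seq in sequences)
    last = Counter(seq[-1] for seq in sequences)
    return all(first[s] == 3 and last[s] == 3 for s in STIMULI)


def example_balanced_set():
    """Build one balanced set of 15 sequences (illustrative construction only)."""
    n = len(STIMULI)
    sequences = []
    for i in range(n):
        for shift in (1, 2, 3):
            first, last = STIMULI[i], STIMULI[(i + shift) % n]
            # Fill the three middle positions with the remaining stimuli.
            middle = [s for s in STIMULI if s not in (first, last)]
            sequences.append((first, *middle, last))
    return sequences


example = example_balanced_set()

# 120 possible sequences split into sets of 15 gives the eight groups.
n_groups = 120 // 15
```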

To investigate the influence of sequence presentation speed on the corresponding neural activation patterns, we systematically varied the ISI between consecutive stimuli in the sequence. Specifically, we chose five different speed levels of 32, 64, 128, 512, and 2048 ms, respectively (i.e., all powers of 2, for good coverage of faster speeds). Each of the 15 sequences per participant was shown at each of the 5 different speed levels. The occurrence of the sequences was randomly shuffled for each participant and across sessions within each participant. This resulted in a total of 75 sequence trials presented to each participant across the entire experiment. To ensure that participants maintained attention to the stimuli during the sequence trials, they were instructed to identify the serial position of a previously cued target object within the shown stimulus sequence and indicate their response after a delay period without visual input.
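The factorial structure of the sequence trials can be sketched as follows. This is an illustration under stated assumptions, not the authors' code. The per-item duration of 100 ms is taken from the abstract, the ISI is assumed to run from the offset of one stimulus to the onset of the next, and the sequence duration is counted from the first onset to the last offset. All names are hypothetical.

```python
from itertools import product

ISI_LEVELS_MS = (32, 64, 128, 512, 2048)  # speed levels stated in the text
N_SEQUENCES = 15                          # sequences per participant
N_ITEMS = 5                               # objects per sequence
STIMULUS_MS = 100                         # assumption: per-item duration from the abstract

# Every sequence is shown at every speed level: 15 x 5 = 75 trials.
trials = list(product(range(N_SEQUENCES), ISI_LEVELS_MS))
n_sequence_trials = len(trials)  # 75


def sequence_duration_ms(isi_ms: int) -> int:
    """Duration from first stimulus onset to last stimulus offset.

    Assumes five stimuli of STIMULUS_MS each, separated by four ISIs.
    """
    return N_ITEMS * STIMULUS_MS + (N_ITEMS - 1) * isi_ms


# All speed levels are powers of 2 (a power of 2 has a single set bit).
all_powers_of_two = all(isi & (isi - 1) == 0 for isi in ISI_LEVELS_MS)

durations_ms = {isi: sequence_duration_ms(isi) for isi in ISI_LEVELS_MS}
```

Under these assumptions, the fastest sequence lasts 628 ms and the slowest 8692 ms, which is consistent with the distinction between sub-second and supra-second sequences.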