Abstract

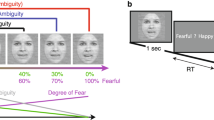

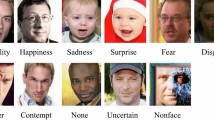

Facial affect analysis aims to create new types of human–computer interaction by enabling computers to better understand a person’s emotional state in order to provide ad hoc help and interactions. Since discrete emotional classes (such as anger, happiness and sadness) are not representative of the full spectrum of emotions displayed by humans on a daily basis, psychologists typically rely on dimensional measures, namely valence (how positive the emotional display is) and arousal (how calming or exciting the emotional display looks). However, while estimating these values from a face is natural for humans, it is extremely difficult for computer-based systems, and automatic estimation of valence and arousal in naturalistic conditions remains an open problem. Additionally, the subjectivity of these measures makes it hard to obtain good-quality data. Here we introduce a novel deep neural network architecture to analyse facial affect in naturalistic conditions with a high level of accuracy. The proposed network integrates face alignment and jointly estimates both categorical and continuous emotions in a single pass, making it suitable for real-time applications. We test our method on three challenging datasets collected in naturalistic conditions and show that our approach outperforms all previous methods. We also discuss caveats regarding the use of this tool, and ethical aspects that must be considered in its application.
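
To illustrate what "jointly estimates both categorical and continuous emotions in a single pass" can mean, here is a minimal sketch, not the authors' implementation. It assumes a shared feature vector has already been computed (the backbone and face-alignment components are omitted) and feeds it to one linear layer whose output is split into class logits plus a valence and an arousal value. The eight-class label set, the tanh squashing to [-1, 1] and all weights are hypothetical. The sketch also includes Lin's concordance correlation coefficient (CCC), a metric widely used for continuous valence and arousal predictions; it penalises both poor correlation and systematic offsets.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical label set (assumption): the eight AffectNet expression classes.
EMOTIONS = ["neutral", "happy", "sad", "surprise", "fear", "disgust", "anger", "contempt"]


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over the categorical logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def joint_head(
    features: Sequence[float],
    weights: Sequence[Sequence[float]],
    bias: Sequence[float],
    num_classes: int = len(EMOTIONS),
) -> Tuple[List[float], float, float]:
    """One linear layer whose output is split into class logits plus (valence, arousal).

    Assumes len(weights) == len(bias) == num_classes + 2 and that each weight row
    has len(features) entries.
    """
    out = [sum(w * x for w, x in zip(row, features)) + b for row, b in zip(weights, bias)]
    probs = softmax(out[:num_classes])
    # Assumption: tanh keeps both continuous outputs in the usual [-1, 1] range.
    valence = math.tanh(out[num_classes])
    arousal = math.tanh(out[num_classes + 1])
    return probs, valence, arousal


def ccc(pred: Sequence[float], gold: Sequence[float]) -> float:
    """Lin's concordance correlation coefficient between predictions and labels."""
    n = len(pred)
    mp, mg = sum(pred) / n, sum(gold) / n
    vp = sum((p - mp) ** 2 for p in pred) / n
    vg = sum((g - mg) ** 2 for g in gold) / n
    cov = sum((p - mp) * (g - mg) for p, g in zip(pred, gold)) / n
    return 2 * cov / (vp + vg + (mp - mg) ** 2)


if __name__ == "__main__":
    # Hypothetical 3-dimensional feature vector and made-up weights, for illustration only.
    feats = [1.0, -0.5, 0.25]
    W = [[0.1 * (i + 1) * (j - 1) for j in range(3)] for i in range(len(EMOTIONS) + 2)]
    b = [0.0] * (len(EMOTIONS) + 2)
    probs, v, a = joint_head(feats, W, b)
    print(EMOTIONS[probs.index(max(probs))], round(v, 3), round(a, 3))
    # Perfectly correlated but shifted by +1: CCC drops to 4/7.
    print(round(ccc([1, 2, 3], [2, 3, 4]), 4))  # 0.5714
```

Because both outputs come from the same forward pass over shared features, the categorical and dimensional predictions cost a single evaluation; the CCC example shows why a constant bias in valence or arousal lowers the score even when the correlation is perfect.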

Data availability

The datasets analysed during the current study are available from the original authors in the AFEW-VA (https://ibug.doc.ic.ac.uk/resources/afew-va-database/), AffectNet (http://mohammadmahoor.com/affectnet/) and SEWA (https://db.sewaproject.eu/) repositories. The list of cleaned images for the validation and test sets of AffectNet employed in this paper is available on the authors’ GitHub repository (https://github.com/face-analysis/emonet).

Code availability

The pretrained network, testing code and the annotations of the cleaned AffectNet test and validation sets are available at https://github.com/face-analysis/emonet under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International Licence (CC BY-NC-ND).

Author information

Contributions

The code was written by A.T., J.K. and A.B., and the experiments were conducted by A.T. and J.K. The manuscript was written by A.T., J.K., A.B. and M.P.; G.T. helped with discussions regarding the face-alignment network. M.P. supervised the entire project.

Ethics declarations

Competing interests

The authors declare no competing interests.

Supplementary information

Supplementary Information

Supplementary text, Figs. 1 and 2, Tables 1 and 2 and refs.

About this article

Cite this article

Toisoul, A., Kossaifi, J., Bulat, A. et al. Estimation of continuous valence and arousal levels from faces in naturalistic conditions. Nat Mach Intell 3, 42–50 (2021). https://doi.org/10.1038/s42256-020-00280-0

DOI: https://doi.org/10.1038/s42256-020-00280-0

This article is cited by

- Emotion-wise feature interaction analysis-based visual emotion distribution learning. The Visual Computer (2024)
- Assessing the effectiveness of virtual reality serious games in post-stroke rehabilitation: a novel evaluation method. Multimedia Tools and Applications (2024)
- Bag of states: a non-sequential approach to video-based engagement measurement. Multimedia Systems (2024)
- Migration and emotions in the media: can socioeconomic indicators predict emotions in images associated with immigrants? Journal of Computational Social Science (2024)
- Affect-driven ordinal engagement measurement from video. Multimedia Tools and Applications (2023)