Abstract

Recently, much attention has been paid to interpreting the mechanisms for memory formation in terms of brain connectivity and dynamics. Within the plethora of collective states a complex network can exhibit, we show that the phenomenon of Collective Almost Synchronisation (CAS), which describes a state with an infinite number of patterns emerging in complex networks for weak coupling strengths, deserves special attention. We show that a simulated network of weakly connected neurons does produce CAS patterns, and additionally produces an output that optimally models experimental electroencephalograph (EEG) signals. This work provides strong evidence that the brain operates locally in a CAS regime, allowing it to have an unlimited number of dynamical patterns, a state that could explain the enormous memory capacity of the brain and that would support the idea that local clusters of neurons are sufficiently decorrelated to independently process information locally.

Introduction

Much progress has been made in recent decades in the understanding of brain function1,2. Brain function is determined by the way in which neurons are connected (both the topology of the neuron network and the synaptic strength among the neurons), and by the intrinsic dynamics of the neurons forming the brain. Typically, one obtains approximate coarse-grained information about the brain by inference from time-series measurements. The EEG signal3, used to obtain information about a local state of the brain, has been widely utilised in medical diagnosis and analysis due to its many advantages: it is non-invasive, cheap to implement, offers high temporal resolution, tolerates subject movements, and so on. For that reason, the characterisation of this signal and the understanding of which kind of neuron dynamical behaviour produces an EEG signal have become an area of intense research4. One of the greatest challenges in brain research, which also relies on EEG measurements, is the understanding of memory formation. The binding hypothesis5, referring to a process in which perception happens by making different cognitive and memory areas of the brain synchronous (bind), is a representative class of work in brain research that also relies on EEG measurements. EEG data, as well as data obtained from several other methods, are thus used to shed light on the binding connecting network structures6,7,8,9,10 and the strengths of the neuron synapses in the brain. Modelling EEG signals is essential to understand the anatomy and histophysiology of the brain, and therefore to provide support to medical imaging analysis and to the development of neuroscience11. However, there is still no consensus about the topology of the brain or its synaptic modus operandi. On the one hand, the understanding of signals from the living brain is limited by non-invasive biological techniques. On the other hand, the available methods to infer the brain connectivity structure from measurements, such as EEG12, can only provide a rough estimate of the large-scale structure of the brain, little being known about the connectivity of the local clusters of neurons generating the EEG signal.

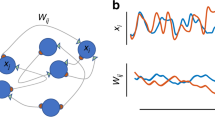

To elucidate the fundamental dynamical mechanisms behind observable brain behaviour, there has been a lot of research based on simulations of neuron networks and their behaviour as a function of the coupling strengths13,14,15,16,17,18,19,20 and the connecting topology21,22,23,24. Under strong coupling strengths, networks of identical neurons support the appearance of complete synchronisation (CS)17,18,19,20, as shown in Fig. 1. In this case, all the neurons in the network tend to have the same oscillatory dynamical behaviour. These neurons are then equivalent to a single neuron and are therefore not able to exhibit complex oscillatory brain patterns. As the coupling strength decreases, neuron networks can exhibit some well-known weak forms of synchronisation14, such as phase synchronisation (PS)16, partial phase synchronisation (PPS)14, bursting phase synchronisation (BPS)14, and collective almost synchronisation (CAS), as shown in the intervals (2) and (1) of Fig. 1, respectively. CAS, a phenomenon where nodes are weakly connected, is characterised by the existence of local clusters of neurons possessing roughly constant local mean fields, a consequence of the neurons being very weakly connected. It terminates when the local mean field starts to oscillate. The conventional wisdom is that the neurons are completely disordered when the coupling strength is very weak. However, recent work25 shows that CAS is present in complex networks for very weak coupling strengths. In this situation, the neuron networks can process an infinite number of possible oscillatory patterns. The CAS phenomenon is thus a plausible explanation for the existence of local clusters of neurons that are sufficiently decorrelated to independently process information locally. Those infinitely many patterns that can appear in simulated neural networks working under the CAS regime could provide the basis for, and shed light on, the process of memory formation in the brain.

Whereas the small-scale and global connecting topologies are still a major unknown, much is understood about the intrinsic dynamical features of neurons, i.e., their equations of motion. The pioneering work on neuron models was done by Hodgkin and Huxley26. They proposed a fourth-order model to study the dynamical behaviour of individual neurons. To simplify the model, second-order models, such as the ones by FitzHugh-Nagumo27 and Morris-Lecar28, were proposed. However, the second-order models are not able to reproduce some phenomena, such as the triggering of a set of stable firings29. Hence, a third-order model, referred to as the Hindmarsh-Rose (HR) model, was introduced to solve that problem30,31,32,33,34. For our analysis, we consider the HR model.

In this work, we aim to explain the dynamical and structural foundations of the neuronal networks responsible for generating experimental EEG signals. Our goal is to shed light on the characteristics (i.e., coupling strengths and topological structure) that a simulated neuron network needs to have in order to model experimental EEG signals. We show that networks operating in a CAS regime optimally model EEG signals, which manifest the activity of a local part of the outer layer of the brain. Our results suggest that locally the brain has neurons that are only weakly connected. Moreover, since the CAS regime is weakly dependent on the neuron connectivity, our results imply that at smaller scales the synaptic strength is the most relevant parameter for brain behaviour. Because the CAS regime allows a network to exhibit an infinite number of patterns, our work also provides an explanation of the brain mechanism behind its enormous memory capacity. Finally, our model of the EEG signal is based on a protocol similar to the one used to produce weighted average outputs of complex networks that integrate information from various clusters to produce a logical “intelligent” output. Consequently, our work also suggests that information in the brain is being processed locally by independent local clusters of neurons.

The main results of this paper are organised as follows:

(1) We show in Sec. 2 that the CAS phenomenon occurs in neuron networks with both chemical and electrical synapses.

(2) We show in Sec. 3 that a mean field from a random cluster of neurons in the simulated networks exhibits CAS, optimally reproducing experimental EEG signals. This result supports the argument that CAS is present in localised regions of the brain, suggesting that local clusters of neurons do not have strong synaptic strengths. Knowing that CAS allows for the formation of an infinite number of collective clusters and patterns, the presence of CAS in local regions of the brain could be advantageously used as a memory reservoir.

(3) Neuron networks with both linear (electrical) and nonlinear (chemical) couplings, as known to exist in our brain32,33,34, reproduce the experimental EEG signals surprisingly well for coupling strengths in the range that produces the CAS regime.

Criteria to exhibit the CAS phenomenon in neuron networks with both electrical and chemical synapses

Neuron network

The Hindmarsh-Rose (HR) neuron model35 is the following:

where x represents the membrane potential, y is the recovery variable associated with the fast current of Na+ or K+ ions, z is the adaptation current associated with the slow current of, for instance, Ca2+ ions, Iext is the externally applied current that mimics the membrane input current for biological neurons, r is a small parameter that governs the bursting behaviour, and x0 is the resting potential. With the following choice of system parameters, a = 1, b = 3, c = 1, d = 5, s = 4, r = 0.005, x0 = 1.618 and Iext = 3.25, the HR neuron model exhibits a multi-time-scale chaotic behaviour characterised by spiking-bursting36.
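The displayed HR equations are not reproduced in this copy of the text. As a hedged illustration, the sketch below integrates the standard three-variable HR form that matches the parameter names listed above. The sign convention in the slow equation, s(x + x0) with x0 = 1.618, is an assumption chosen so that the listed parameters fall in the usual bursting regime. The function names, the Runge-Kutta integrator and the step size are illustrative choices, not taken from the paper.

```python
# Minimal sketch of a single Hindmarsh-Rose neuron (assumed standard form):
#   dx/dt = y - a*x**3 + b*x**2 - z + I_ext
#   dy/dt = c - d*x**2 - y
#   dz/dt = r*(s*(x + x0) - z)      # sign convention for x0 is an assumption

A, B, C, D, S, R, X0, I_EXT = 1.0, 3.0, 1.0, 5.0, 4.0, 0.005, 1.618, 3.25


def hr_rhs(state):
    """Right-hand side of the (assumed) HR equations for one neuron."""
    x, y, z = state
    dx = y - A * x**3 + B * x**2 - z + I_EXT
    dy = C - D * x**2 - y
    dz = R * (S * (x + X0) - z)
    return (dx, dy, dz)


def rk4_step(f, state, dt):
    """One classical fourth-order Runge-Kutta step."""
    k1 = f(state)
    k2 = f(tuple(v + 0.5 * dt * k for v, k in zip(state, k1)))
    k3 = f(tuple(v + 0.5 * dt * k for v, k in zip(state, k2)))
    k4 = f(tuple(v + dt * k for v, k in zip(state, k3)))
    return tuple(
        v + dt * (p + 2.0 * q + 2.0 * u + w) / 6.0
        for v, p, q, u, w in zip(state, k1, k2, k3, k4)
    )


def simulate_hr(n_steps=20000, dt=0.01, state=(-1.0, 0.0, 0.0)):
    """Return the membrane-potential trace x(t), one value per step."""
    xs = []
    for _ in range(n_steps):
        state = rk4_step(hr_rhs, state, dt)
        xs.append(state[0])
    return xs
```

Calling `simulate_hr()` returns a list of membrane potentials that can be plotted against time to inspect the spiking-bursting behaviour described above.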

The dynamical equations of an HR neuron network with both electrical and chemical synapses are the following:

where (xi, yi, zi) are the state variables of neuron i with random initial conditions, i = 1, 2, …, N, and N is the number of neurons in the network. H(xj) is the internal coupling function among the neurons in the network; we consider H(xj) = xj − xi. σ and g are the electrical (linear) and chemical (nonlinear) coupling strengths, respectively. The matrix Aij represents the Laplacian matrix for the electrical synapses; the adjacency matrix Bij describes the topology of the chemical connections, thus Σj Bij = Ki, where Ki represents the number of chemical connections that neuron i (the post-synaptic neuron) receives from all the other j neurons (pre-synaptic neurons) in the network. The chemical synapse function is modelled by the sigmoidal function

with θsyn = −0.25, λ = 10, and Vsyn = 2.0 for excitatory and Vsyn = −2.0 for inhibitory neurons36. In Fig. 2 we show examples of dynamical behaviours of the membrane potentials x (solid line) and ensemble average of the neuron network (dashed line) when the CAS phenomenon is present, where Iext = 3.25, N = 1000, σ = 0.001, g = 0, and other parameters are the same as the ones used to simulate the HR neuron in Eq. (1). Simulations were performed using Matlab Simulink.
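The network equations and the sigmoidal function are also missing from this copy. The sketch below shows one way the coupled vector field could be evaluated, under these assumptions: the electrical term is the diffusive sum σ Σ (xj − xi) over electrical neighbours, the chemical term has the form −g (xi − Vsyn) Σ S(xj) over pre-synaptic neighbours, and S is the logistic function with slope λ and threshold θsyn. These forms are common in the HR network literature but are not confirmed by the text here. The neighbour-list representation and all function names are illustrative.

```python
import math

# HR parameters as listed in the text; X0 sign convention is an assumption
A, B, C, D, S, R, X0, I_EXT = 1.0, 3.0, 1.0, 5.0, 4.0, 0.005, 1.618, 3.25
THETA_SYN, LAMBDA, V_SYN = -0.25, 10.0, 2.0  # excitatory synapses


def synapse(x_pre):
    """Assumed sigmoidal synapse: 1 / (1 + exp(-lambda * (x_pre - theta_syn)))."""
    return 1.0 / (1.0 + math.exp(-LAMBDA * (x_pre - THETA_SYN)))


def network_rhs(states, el_nbrs, ch_nbrs, sigma, g):
    """Derivatives (dx, dy, dz) for every neuron in the network.

    states  : list of (x, y, z) tuples, one per neuron
    el_nbrs : el_nbrs[i] lists the neurons electrically coupled to neuron i
    ch_nbrs : ch_nbrs[i] lists the pre-synaptic (chemical) neighbours of i
    """
    derivs = []
    for i, (x, y, z) in enumerate(states):
        # Assumed diffusive electrical coupling: sigma * sum_j (x_j - x_i)
        electrical = sigma * sum(states[j][0] - x for j in el_nbrs[i])
        # Assumed chemical coupling: -g * (x_i - V_syn) * sum_j S(x_j)
        chemical = -g * (x - V_SYN) * sum(synapse(states[j][0]) for j in ch_nbrs[i])
        dx = y - A * x**3 + B * x**2 - z + I_EXT + electrical + chemical
        dy = C - D * x**2 - y
        dz = R * (S * (x + X0) - z)
        derivs.append((dx, dy, dz))
    return derivs
```

Setting `g = 0` recovers the purely electrical case used for Fig. 2; any standard ODE integrator can then advance `states` in time.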

Revisiting CAS phenomenon in networks whose neurons are coupled with only electrical synapses

The CAS phenomenon was first described and analysed in ref. 25. It describes a universal way of how patterns could appear in complex networks for weak coupling strengths. The local mean field of node i is defined as:

where ki is the electrical coupling degree of node i. The expected value of the local mean field is defined as

The CAS pattern of node i is described by:

where the state variables of Eq. (6) form the three-dimensional CAS pattern, and pi = σki is the product of the coupling strength σ and the degree ki of node i, for linear coupling. Equation (6) is derived from a local mean field approximation that describes approximately the evolution of a cluster of neurons that all have the same connectivity degree.

There are two criteria for the network to present the CAS phenomenon. They are as follows:

Criterion 1: The Central Limit Theorem should be applied, i.e., the variance of the local mean field of node i scales as 1/ki. Therefore, the larger the degree of a node, the smaller the variance of the local mean field about its expected value Ci, as shown in Fig. 3(a).

Figure 3: The two criteria for the existence of the CAS phenomenon; we choose a scale-free network (a network whose degree distribution follows a power law) with N = 1000, σ = 0.001, g = 0, Iext = 3.25 and r = 0.005.

Criterion 2: There must be a CAS pattern described by a stable periodic orbit, to which two locked neurons can demonstrate phase or delay synchronisation (or other functional synchronisation25), as shown in Fig. 3(b).
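The scaling behind Criterion 1 can be illustrated with a small numerical experiment. The sketch below does not use HR neurons: it replaces the neighbour potentials with independent uniform random draws (an assumption standing in for weakly correlated variables) and checks that the variance of an average over k of them shrinks roughly as 1/k. The function name and sample sizes are illustrative.

```python
import random
import statistics


def variance_of_local_mean(k, n_samples=4000, seed=0):
    """Variance of the average of k independent uniform(-1, 1) draws.

    The draws are surrogates for weakly correlated neighbour potentials.
    """
    rng = random.Random(seed)
    means = [
        sum(rng.uniform(-1.0, 1.0) for _ in range(k)) / k
        for _ in range(n_samples)
    ]
    return statistics.pvariance(means)


for k in (10, 20, 40, 80):
    # k * variance should stay close to 1/3, the variance of a single draw
    print(k, round(k * variance_of_local_mean(k), 3))
```

For real network data, the same check would be applied to the time series of the local mean field of nodes grouped by degree.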

The different CAS patterns are obtained by varying pi, which can be analysed by using a bifurcation diagram as shown in Fig. 4, where we plot the local maximal points of the CAS patterns. We find that for sufficiently large pi there is only one pattern in the neuron network. As pi decreases, the patterns undergo a period-adding transition from period one to period two, period three, and so on, followed by an infinite period-doubling cascade leading to a chaotic state, the latter occurring when pi is close to 0, as shown in the inset plot. Therefore, this is the bifurcation scenario toward an infinite number of patterns.

Notice that the existence of the CAS pattern only requires an approximately constant local mean field Ci, and it does not depend on the topology of the network. So, our subsequent analysis is reproducible in any arbitrary network, as long as there is a sufficient number of well-connected nodes. Our numerical results suggest that a minimum degree of about 10 is necessary to create a constant Ci. Equation (6) is an approximate equation that describes the evolution of nodes of the network with weak coupling. If two nodes in the network are in the CAS regime, their dynamics are described approximately by Eq. (6). If this equation describes a stable periodic orbit, as required for the CAS regime, the real trajectory of these nodes is a perturbation around the same stable periodic orbit. The trajectory never escapes the periodic orbit, which makes these nodes become phase synchronous once a time-delay is taken into consideration.
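A bifurcation scan of this kind can be sketched as follows. The code assumes that the CAS pattern equation is the HR model with an extra drive p (C − x) in the membrane-potential equation, and it uses a constant mean field C = −0.8 that is a purely hypothetical value chosen for illustration. The HR form, the integrator, the step size and the function names are assumptions as well.

```python
def cas_rhs(state, p, c_mean):
    """HR vector field plus the assumed mean-field drive p * (c_mean - x)."""
    x, y, z = state
    dx = y - x**3 + 3.0 * x**2 - z + 3.25 + p * (c_mean - x)
    dy = 1.0 - 5.0 * x**2 - y
    dz = 0.005 * (4.0 * (x + 1.618) - z)
    return (dx, dy, dz)


def rk4_step(state, p, c_mean, dt):
    """One classical Runge-Kutta step for the assumed CAS pattern equation."""
    def shifted(k, h):
        return tuple(v + h * ki for v, ki in zip(state, k))

    k1 = cas_rhs(state, p, c_mean)
    k2 = cas_rhs(shifted(k1, 0.5 * dt), p, c_mean)
    k3 = cas_rhs(shifted(k2, 0.5 * dt), p, c_mean)
    k4 = cas_rhs(shifted(k3, dt), p, c_mean)
    return tuple(
        v + dt * (a + 2.0 * b + 2.0 * c + d) / 6.0
        for v, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def local_maxima(p, c_mean=-0.8, n_steps=30000, n_skip=5000, dt=0.01):
    """Local maxima of x(t) after discarding the first n_skip steps."""
    state = (-1.0, 0.0, 0.0)
    xs = []
    for step in range(n_steps):
        state = rk4_step(state, p, c_mean, dt)
        if step >= n_skip:
            xs.append(state[0])
    return [xs[i] for i in range(1, len(xs) - 1) if xs[i - 1] < xs[i] >= xs[i + 1]]
```

Calling `local_maxima(p)` for a range of `p` values and plotting the returned maxima against `p` gives a diagram in the spirit of Fig. 4; longer runs and a longer discarded transient would be needed for a converged picture.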

The CAS phenomenon in networks whose neurons are coupled with both electrical and chemical synapses

Due to H(xj) = xj − xi, the electrical coupling term of the first state variable can be rewritten as

where pi = σki.
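The rewriting step can be sketched as follows, assuming that the electrical coupling acts diffusively between connected neurons. Here a_ij denotes the 0/1 adjacency entries of the electrical network and x̄_i the local mean field of node i; both symbols are introduced for this sketch and may differ from the paper's notation.

```latex
\sigma \sum_{j=1}^{N} a_{ij}\,(x_j - x_i)
  = \sigma k_i \left( \frac{1}{k_i} \sum_{j=1}^{N} a_{ij}\, x_j - x_i \right)
  = p_i \left( \bar{x}_i - x_i \right),
\qquad
p_i = \sigma k_i, \quad
\bar{x}_i = \frac{1}{k_i} \sum_{j=1}^{N} a_{ij}\, x_j .
```

In the CAS regime the local mean field stays close to its expected value Ci, so each node effectively feels a drive of the form pi (Ci − xi).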

The chemical coupling term can also be rewritten as

where Ki is the chemical coupling degree of node i. Hence, the CAS patterns with both couplings are given by

To illustrate the presence of the CAS phenomenon, we consider a scale-free network, formed by N = 1000 neurons, with both electrical and chemical couplings. The simulations show that the two criteria for the CAS phenomenon are also satisfied in this neuron network with both electrical and chemical synapses.

Figure 5(a,b) shows that the Central Limit Theorem can be applied, which happens when the variables are weakly correlated. The error bars indicate the variances of Ci and of Wi (the corresponding quantity for the chemical coupling), behaving as ∝ 1/ki and ∝ 1/Ki, respectively.

Figure 5: Results validating the two criteria for the neurons to exhibit the CAS phenomenon; we choose a scale-free network with N = 1000, σ = 0.001, and g = 0.001. (a) Expected value of the local mean field of node i with respect to its electrical coupling degree ki. (b) Expected value of the local mean field of node i with respect to its chemical coupling degree Ki. (c) The CAS pattern for neurons i and j, both with electrical coupling degree ki = 20 and chemical coupling degree Kj = 22.

In Fig. 5(c), the CAS pattern describes a stable periodic orbit. The node trajectory can be considered a perturbed version of its CAS pattern.

A bifurcation diagram is shown in Fig. 6, where we set Aij = kiI − Bij for simplicity, where I is the identity matrix. For the case where the electrical and chemical topologies differ, separate bifurcation diagrams for electrical and chemical couplings can be obtained. From Figs 5 and 6, we conclude that the CAS phenomenon is typical in neuron networks with both weak electrical and weak chemical couplings.

Methods

Modelling the EEG signal by simulated neural networks

According to ref. 25, if we wish the CAS phenomenon to be seen in a physiology experiment37, we need to set up the experiment to probe the firing behaviour of pairwise neurons with the same degree, or equal weighted degree if neurons are connected with non-uniform coupling strengths. As a signature of CAS, these neurons exhibit phase or delay synchronous behaviour. It is difficult to measure individual neurons in living tissue, not to mention searching for neurons with equal degree, or with similar weighted degree connections. Therefore, in this work, we propose to study another signature of CAS, an output function defined by weighted mean field measurements of a cluster of randomly chosen neurons in the network. Our goal is to show that this output function does share remarkable similarities with typical output local mean field measurements of the brain, i.e., the EEG.

EEG is a graphical record of the electrical activity of a localised region of the brain, obtained by placing an electrode in a specified location of the head, such as scalp or subdural placements. The electrode collects a signal that is a function of a weighted sum of the postsynaptic potentials of nearby neurons in a localised region in the outer layer of the brain. This signal is then compared to a reference electric potential in another region of the brain, and the potential difference is a bipolar signal called EEG. Therefore, EEG is a bipolar electric signal related to a measure of the potential of a local cluster of neurons as compared to the potential generated by another region. Suppose that there are n neurons, one fraction influencing the measurement at an electrode and the other fraction influencing the potential produced at the probe measuring the reference signal. Each of these neurons produces a potential xi(t). We then model the experimental EEG signal by the following linear sum,

where the coefficients multiplying the potentials are the linear weights that allow a mapping between the sum of the neuron potentials and the EEG signal. We want to find the set of linear weights that minimises the difference between the weighted sum SN(t) and the experimental EEG signal. Linear models based on a weighted sum of membrane potentials from simulated neuron networks have been previously proposed to model EEG signals4. Our novel contribution is to first determine under which network conditions the model optimally fits the EEG signal, and to draw general conclusions about the characteristics of neuron networks capable of reproducing these signals, i.e., the parameter conditions of the neurons and their connectivity. Assuming that the mapping of Eq. (8) describes well the link between electric potential and EEG signals, our goal is to show that an experimental EEG signal can be well reproduced by a weighted sum, i.e., SN, where xi in Eq. (8) is the membrane potential generated by the simulated neuron network in Eq. (2). In this interpretation, the simulated neural network is actually representing two local areas of the brain, one close to where the main EEG probe is placed and another where the reference probe is placed.

Equation (8) can be written in a matrix form for all t ≥ 0, as

where the matrix is comprised of the membrane potentials of the simulated neurons at the sampled times ti, the remaining terms are the weight vector and the sampled EEG signal, and m is the EEG signal data length. By using the pseudo-inverse method38, we can derive the weight vector and define the fit deviation as

The solution of Eq. (9) provides us with the constant parameters of our EEG model in Eq. (8). We randomly choose a group of nodes (n neurons) in the HR network in order to model the EEG data, and calculate the deviation D. Observability theory39 shows that in nonlinear networks a sample of nodes carries information about the whole network. Notice that the approach proposed, which searches for a solution of Eq. (9) by the pseudo-inverse method, is a typical method used to compute a ‘best fit’ (least squares) solution and can be seen as a practical use of the so-called computing reservoir paradigm40. This paradigm is an approach to calculating the weights of an output weighted average in order to reproduce a target signal, here the EEG. The calculation of the weights can also employ techniques other than the pseudo-inverse that minimise the mean square error between the target signal (in this case, the EEG signal) and the weighted average (represented by SN(t)). The success of our model in reproducing the EEG signal thus suggests that the brain processes information locally by a small-scale cluster of neurons.
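The fitting step can be sketched with NumPy as follows. The matrix `X` holds one row per sampled time and one column per selected neuron, and `E` is the sampled target signal; these names are introduced for the sketch. Because the definition of the deviation in Eq. (10) is not reproduced in this copy, the code uses the root-mean-square error as an assumed stand-in. The data are synthetic and only exercise the algebra.

```python
import numpy as np


def fit_eeg(X, E):
    """Least-squares weights for X @ w ~ E via the Moore-Penrose pseudo-inverse.

    X : (m, n) array, row i holds the potentials of the n neurons at time t_i
    E : (m,) array, the sampled target (EEG) signal
    """
    w = np.linalg.pinv(X) @ E
    S = X @ w  # modelled signal at the sampled times
    # Assumed deviation measure: root-mean-square error between model and target
    D = float(np.sqrt(np.mean((S - E) ** 2)))
    return w, S, D


# Synthetic stand-in data (not EEG): the target is an exact mixture of the columns
rng = np.random.default_rng(0)
X = rng.standard_normal((1000, 5))
w_true = np.array([0.5, -1.0, 2.0, 0.0, 0.3])
E = X @ w_true
w, S, D = fit_eeg(X, E)
```

With more samples than neurons (m > n), as in the setting described here, the pseudo-inverse returns the least-squares solution; `np.linalg.lstsq` would be an equivalent choice.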

Notice that our model in Eq. (8) is a bottom-up model. We start from microscopic dynamical properties that lead a network to exhibit the CAS regime in its microscopic components in order to model the experimental EEG signal, a higher-level signal generated by a complicated composition of signals coming from several places in the subject's head. As such, our modelling comparison must be done at the level of the signal. In other words, validation of the accuracy of our model in Eq. (8) by Eq. (10) is at the core of the analysis presented in the following.

The EEG signal approximation using the network with only linear coupling

We consider an HR network to model the actual EEG data at four brain locations. The four experimental EEG data sets from clinical trials were measured continuously in 64 channels at a sampling rate of 100 Hz for 10 s, i.e., each EEG signal includes 1000 sampling points with a sampling interval of 10 ms. The electrode locations are C4, F1, Fpz and Pz, corresponding to the EEG data sets defined as E1, E2, E3, and E4, respectively, as shown in Fig. 7. E1 and E2 were measured in the epilepticus state, and E3 and E4 were measured in the sleep state. The neuron signals are obtained by using Eq. (2) with a scale-free network; it consists of N = 1000 Hindmarsh-Rose neurons with uniform linear (electrical) coupling σ = 0.001 and g = 0. The time sequences of the selected neurons are also sampled with an interval of 10 ms. The parameters of the neuron model are the same as in Eqs (1) and (2). Equation (9) is then solved by the pseudo-inverse method. Repeated tests are performed, and the goodness-of-fit results are shown in Fig. 8, where n represents the number of neurons randomly selected for data modelling in Eq. (9), D is the smallest deviation in 200 tests, and σ is the linear coupling strength in Eq. (2).

In Fig. 8(a,b), the results of the modelling for E1 and E2 are given, and the results for E3 and E4 are given in Fig. 8(c,d), respectively. We can gather some interesting observations from the results in Fig. 8. Firstly, when the coupling strength is equal to zero, the deviation is relatively large. Therefore, if we tried to model the EEG data using time-series generated by disconnected (and therefore decorrelated) neurons, we would not achieve as good a fit as the one obtained from time-series trajectories of a network of weakly connected nodes exhibiting the CAS phenomenon. The interpretation of this result is that the EEG signals cannot be generated from a mean field of a set of deterministic variables that are decorrelated. Therefore, the variables generating the local weighted mean field producing the EEG signals must be weakly correlated. Secondly, for fixed n, when the coupling strength increases to the range corresponding to CAS, the deviation is the smallest. These two observations suggest not only that local clusters of the brain could be functioning in a CAS state to generate the EEG signals, but also that the computing reservoir paradigm, applied to reproduce an EEG signal, reaches its best performance if complex networks operate in a CAS regime. Therefore, the level of correlation between the deterministic variables must be weak. Thirdly, when the coupling strength increases further to the range leading to the onset of weak and strong forms of synchronisation, the deviation increases accordingly. In summary, the EEG cannot be reproduced by a local weighted mean field of strongly correlated variables. When CS occurs, the deviation is the largest. Finally, we notice that the deviation decreases as the number of neurons increases, but this change is not as significant as the change with the coupling strength. The minimum degree of scale-free networks grows with the square root of the size of the network, and an increase in the coupling σ increases the stability of the CAS pattern (making it more likely to occur). This provides further evidence that the reason why the local mean field (SN) models the EEG signal is the presence of the CAS phenomenon in the network, which is strongly dependent on σ and moderately dependent on the size of the network. Notice that, since the minimum degree grows with the square root of the number of nodes, the range of σ values that induces CAS changes only moderately over the interval of network sizes considered. Notice also that the larger the number of neurons considered in the simulations, the smaller the coupling strength needed for CAS to emerge. For the EEG signals from other positions, we obtain similar results.

To show how well SN(t) approximates the EEG signal, the fits of the model in Eq. (8) are shown in Fig. 9(a–d), corresponding to the results for E1, E2, E3, and E4, respectively. The actual EEG is shown by solid red lines, and the model signals obtained by the fitting from Eq. (9), denoted by S1, S2, S3, and S4, respectively, are shown by star-marked blue lines. The number of neurons used in our simulations to produce the results in Fig. 9 is 400, and the linear coupling strength is 0.001.

In order to investigate the relationship between the number of neurons used for solving Eq. (9) and the success rate of the fitting, in the sense of the deviation D in Eq. (10) being smaller than a threshold T, we show the simulation results for σ = 0, σ = 0.04 and σ = 0.001 in Fig. 10. This figure shows the relationship between the number of neurons used for solving Eq. (9) and producing the modelled signal SN(t), and the success rate with respect to the predefined deviation, where the success rate is obtained from 200 runs using the selected number of neurons. The criterion for successful modelling is the deviation being smaller than T = 6.5 (microvolt). We define the quantity P as the percentage of all fittings that produce D < T, considering randomly selected neurons in each run. We conclude from Fig. 10 (lower panel) that the success rate P is 100 percent when the number of neurons used is more than 450 and the coupling strength is 0.001, where the CAS phenomenon is present. However, for the coupling strength equal to 0 (Fig. 10, upper left panel) and 0.04 (upper right panel), the fitting fails (P = 0, regardless of n). From these observations, we provide further evidence that the brain operates in a CAS state. That being true, it would imply that the brain, in spite of using very small amounts of energy to maintain the weak connections, is capable of reproducing a remarkably large number of patterns.
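The quantity P is a simple count over repeated fits. A minimal sketch, with a hypothetical function name and made-up deviation values used only to show the call:

```python
def success_rate(deviations, threshold=6.5):
    """Percentage of fits whose deviation D is below the threshold T."""
    if not deviations:
        raise ValueError("need at least one deviation value")
    hits = sum(1 for d in deviations if d < threshold)
    return 100.0 * hits / len(deviations)


# Hypothetical deviations from five runs (illustrative numbers, not measured data)
print(success_rate([5.9, 6.1, 7.2, 6.4, 8.0]))  # 3 of 5 are below 6.5 -> 60.0
```

In the setting described above, `deviations` would hold the 200 values of D obtained for one choice of n and σ.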

The EEG signal approximation using the neuron network with both electrical and chemical couplings

Previous works show that both electrical and chemical couplings are present in the brain12,13. The EEG signal modelling using the neuron network with both couplings is analysed and compared with that of the neuron network with only electrical coupling. The comparison results are shown in Table 1. The results are statistics over 30 simulations. The electrical coupling strength is σ = 0.001, the chemical coupling strength is g = 0.001, and the number of neurons used is n = 400. The Laplacian matrix A, representing the network of electrically coupled neurons (scale-free HR network), is different from the adjacency matrix B, representing the network of chemically coupled neurons; the excitatory chemical synapses are set by making Vsyn = 2. From Table 1, we learn that, under the same conditions, the neuron network with both couplings can model the EEG signal better than the neuron network with only linear coupling. This provides further evidence that CAS is very important to reproduce local brain behaviour (e.g., EEG signals), and that neuron networks with neurons coupled by both types of synapses provide better modelling, reinforcing the idea that the brain needs these two types of connections.

Discussion

In this work, we show that Hindmarsh-Rose neuron networks, with both chemical and electrical couplings and operating in a regime that exhibits the so-called Collective Almost Synchronisation (CAS)25, produce an output signal that models very well the EEG signal recorded from four randomly selected regions of the brain. The model is therefore neither subject dependent nor region dependent. This suggests that local regions of the brain could be functioning in a CAS state. This implies that small-scale clusters of neurons in the brain are weakly connected, and that there is a large number of neurons that are almost synchronous. Each local cluster of neurons is roughly decoupled from other brain locations. Neuron networks connected with both types of synapses (electrical and chemical) reproduce the EEG signals better, reinforcing the idea that both connections are fundamental for the working of the brain. This work therefore provides evidence that CAS is present in the brain. Neurons that are in the CAS regime in the local clusters have the same capabilities as our simulated neurons, presenting an infinite number of patterns that appear for small changes in the synaptic couplings. These patterns, in turn, could explain the brain's large memory capacity. Our work thus also supports the idea that local clusters of neurons are sufficiently decorrelated, so that they process information independently. Therefore, we can interpret the EEG signal as a manifestation of a local cluster of weakly connected neurons integrating information and capable of emulating an infinite number of almost periodic oscillatory patterns. A spectral analysis reveals that the power spectrum curves of the EEG signals from the different regions are reasonably different. However, the same simulated network can model the EEG from every region. Moreover, our simulations show that simulated networks with different topologies and different numbers of neurons can fit the experimental EEG signals with similar accuracy. Therefore, the success of the model does not rely on any spectral similarities between the simulated network and the EEG signal, but rather on the nodes behaving in the CAS regime.

Additional Information

How to cite this article: Ren, H.-P. et al. Weak connections form an infinite number of patterns in the brain. Sci. Rep. 7, 46472; doi: 10.1038/srep46472 (2017).

Publisher's note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

References

Sudhof, T. C. & Malenka, R. C. Understanding synapses: Past, present, and future. Neuron 60, 469–476 (2008).

Gerstner, W., Sprekeler, H. & Deco, G. Theory and simulation in neuroscience. Science 338, 60–65 (2012).

Swartz, B. E. The advantages of digital over analog recording techniques. Electroencephalography and Clinical Neurophysiology 106, 113–117 (1998).

Sanei, S. Adaptive processing of brain signals. John Wiley & Sons Chapter 3 (2013).

Pareti, G. & Palma, A. Does the brain oscillate? The dispute on neuronal synchronization. Neurological Sciences 25, 41–47 (2004).

Morelli, A., Grotto, R. L. & Arecchi, F. T. Neural coding for the retrieval of multiple memory patterns. BioSystems 86, 100–109 (2006).

Perin, R., Berger, T. K. & Markram, H. A synaptic organizing principle for cortical neuronal groups. Proceedings of the National Academy of Sciences 108, 5419–5424 (2011).

Arenkiel, B. R. & Ehlers, M. D. Molecular genetics and imaging technologies for circuit-based neuroanatomy. Nature 461, 900–907 (2009).

Stetter, O., Battaglia, D., Soriano, J. & Geisel, T. Model-free reconstruction of excitatory neuronal connectivity from calcium imaging signals. PLoS Computational Biology 8, e1002653 (2012).

Haimovici, A., Tagliazucchi, E., Balenzuela, P. & Chialvo, D. R. Brain organization into resting state networks emerges at criticality on a model of the human connectome. Physical Review Letters 110, 178101 (2013).

Bischoff, J. S., Luther, S. & Parlitz, U. Estimability and dependency analysis of model parameters based on delay coordinates. Physical Review E 94, 032221 (2016).

Wendling, F., Asl, K. A., Bartolomei, F. & Senhadji, L. From EEG signals to brain connectivity: A model-based evaluation of interdependence measures. Journal of Neuroscience Methods 183, 9–18 (2009).

Baptista, M. S., Pereira, T., Sartorelli, J. C., Caldas, I. L. & Kurths, J. Non-transitive maps in phase synchronization. Physica D 212, 216–232 (2005).

Baptista, M. S. & Kurths, J. Transmission of information in active networks. Physical Review E 77, 026205 (2008).

Baptista, M. S. et al. Mutual information rate and bounds for it. PLoS One 7, e46745 (2012).

Pikovsky, A., Rosenblum, M. & Kurths, J. Synchronization: A universal concept in nonlinear sciences. Cambridge Nonlinear Science Series Cambridge, London (2001).

Checco, P., Checco, M., Biey, M. & Kocarev, L. Synchronization in networks of Hindmarsh-Rose neurons. IEEE Trans. Circuits Syst. II 55, 1274–1278 (2008).

Wang, Q., Chen, G. & Perc, M. Synchronization bursts on scale-free neuronal networks with attractive and repulsive coupling. PLoS One 6, e15851 (2011).

Luccioli, S., Olmi, S., Olmi, A. & Torcini, A. Collective dynamics in sparse networks. Physical Review Letters 109, 5136–5141 (2012).

Belykh, I., Lange, E. D. & Hasler, M. Synchronization of bursting neurons: What matters in the networks topology. Physical Review Letters 94, 1880114 (2005).

Baptista, M. S., Szmoski, R. M., Pereira, R. F. & Souza Pinto, S. E. Chaotic, informational and synchronous behaviour of multiplex networks. Scientific Reports 6, 22617 (2016).

Borges, F. S. et al. Complementary action of chemical and electrical synapses to perception. Physica A 430, 236–241 (2015).

Lameu, E. L., Borges, F. S., Batista, A. M., Baptista, M. S. & Viana, R. L. Network and external perturbation induce burst synchronization in cat cerebral cortex. Communications in Nonlinear Science and Numerical Simulation 34, 45–54 (2016).

Itoh, K. & Nakada, T. Human brain detects short-time nonlinear predictability in the temporal fine structure of deterministic chaotic sounds. Physical Review Letters 87, 042916 (2013).

Baptista, M. S. et al. Collective almost synchronization in complex networks. PLoS One 7, e48118 (2012).

Hodgkin, A. L. & Huxley, A. F. A quantitative description of membrane current and its application to conduction and excitation in nerve. The Journal of Physiology 117, 500–544 (1952).

FitzHugh, R. Impulse and physiological states in theoretical models of nerve membrane. Biophysical Journal 1, 445–466 (1961).

Morris, C. & Lecar, H. Voltage oscillators in the barnacle giant muscle fiber. Biophysical Journal 35, 193–213 (1981).

Jhou, F. J., Juang, J. & Liang, Y. H. Multistate and multistage synchronization of Hindmarsh-Rose neurons with excitatory chemical and electrical synapses. IEEE Trans. Circuits Syst. I 59, 1335–1347 (2012).

Izhikevich, E. M. Which model to use for cortical spiking neurons? IEEE Trans. Neural Netw. 15, 1063–1070 (2004).

Innocenti, G. & Genesio, R. On the dynamics of chaotic spiking-bursting transition in the Hindmarsh-Rose neuron. Chaos 19, 023124 (2009).

Hestrin, S. & Galarreta, M. Electrical synapses define networks of neocortical GABAergic neurons. Trends Neurosci 28, 304–309 (2005).

Galarreta, M. & Hestrin, S. Electrical synapses between GABA-releasing interneurons. Nature Reviews Neuroscience 2, 425–433 (2001).

Connors, B. W. & Long, M. A. Electrical synapses in the mammalian brain. Annual Review of Neuroscience 27, 393–418 (2004).

Hindmarsh, J. L. & Rose, R. M. A model of neuronal bursting using three coupled first order differential equations. Proceedings of the Royal Society of London 221, 87–102 (1984).

Baptista, M. S., Kakmeni, F. M. M. & Grebogi, C. Combined effect of chemical and electrical synapses in Hindmarsh-Rose neural networks on synchronization and the rate of information. Physical Review E 82, 036203 (2010).

Buzsaki, G., Anastassiou, C. A. & Koch, C. The origin of extracellular fields and currents-EEG, ECoG, LFP and spikes. Nature Reviews Neuroscience 13, 407–420 (2012).

Penrose, R. A generalized inverse for matrices. Proceedings of the Cambridge Philosophical Society 51, 406–413 (1955).

Martinez, E. B., Baptista, M. S. & Letellier, C. Symbolic computations of nonlinear observability. Physical Review E 91, 062912 (2015).

Verstraeten, D., Schrauwen, B., Hance, M. D. & Stroobandt, D. An experimental unification of reservoir computing methods. Neural Networks 20, 391–403 (2007).

Acknowledgements

This work is supported in part by NSFC (61172070), Innovation Research Team of Shaanxi Province (2013KCT-04), Key Program of Natural Science Foundation of Shaanxi Province (2016ZDJC-01), and EPSRC (EP/I032606/1). Chao Bai was supported by the Excellent Ph.D. research fund (310-252071603) at XAUT.

Author information

Contributions

H.P. Ren designed the project and the simulation framework, analysed the results and wrote the paper; C. Bai did the simulations and wrote the draft paper; M.S. Baptista analysed the simulation results and contributed to writing the manuscript; C. Grebogi wrote the paper and discussed the problem.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Rights and permissions

This work is licensed under a Creative Commons Attribution 4.0 International License. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in the credit line; if the material is not included under the Creative Commons license, users will need to obtain permission from the license holder to reproduce the material. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/
