Abstract

Earth’s climate has remained continuously habitable throughout 3 or 4 billion years. This presents a puzzle (the ‘habitability problem’) because loss of habitability appears to have been more likely. Solar luminosity has increased by 30% over this time, which would, if not counteracted, have caused sterility. Furthermore, Earth’s climate is precariously balanced, potentially able to deteriorate to deep-frozen conditions within as little as 1 million years. Here I present results from a novel simulation in which thousands of planets were assigned randomly generated climate feedbacks. Each planetary set-up was tested to see if it remained habitable over a period of 3 billion years. The conventional view attributes Earth’s extended habitability solely to stabilising mechanisms. The simulation results shown here reveal instead that chance also plays a role in habitability outcomes. Earth’s long-lasting habitability was therefore most likely a contingent rather than an inevitable outcome.

Similar content being viewed by others

Introduction

The evolution of intelligent life on a planet requires not only that planetary conditions are conducive to life at the start, as it first evolves, but also that the planet remains habitable subsequently, without interruption. This is because there has to be enough time to allow life to increase in complexity from simple cells to more sophisticated single cells to multicellular life and eventually to intelligent life. On Earth this development took something like 3 or 4 By1,2,3, throughout which time liquid water appears to have been present somewhere on Earth’s surface4, implying temperatures between its freezing and boiling points.

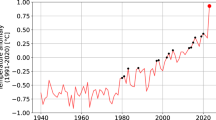

Conditions remained habitable, and on first consideration this may not seem surprising. However, observations and deductions have accumulated to show that such a long duration of habitability is in fact a puzzling phenomenon requiring explanation. The principles governing the internal dynamics and temporal evolution of stars were worked out in the 1950s5,6—when applied to the Sun, they revealed that solar heating of today’s Earth is about 30% more intense than of the early Earth5. Sagan & Mullen7 realised that, if all else had remained constant, the early Earth would have been totally frozen under the dimmer early Sun. Or, conversely, given that the early Earth was not frozen, if the rest of the climate system had remained unaltered then the oceans would now be boiling under today’s brighter Sun. Evidently neither occurred, for reasons that are still debated8,9,10,11,12; although plausible solutions exist, in particular when combining multiple processes13, as yet there is no firm consensus as to how in reality the ‘Faint Young Sun Paradox’ was overcome14.

Probes sent to land on Earth’s neighbours in the 1970s provided more evidence that Earth’s thermal habitability requires explanation. Venus, with its strong greenhouse and closer to the Sun, was found to be too hot (average surface temperature >460 °C15) and Mars, with its thin atmosphere and further from the Sun, too cold (average < −50 °C16). Moreover, subsequent observations point to Mars having once been warm enough to have liquid water on its surface17,18; if correct then the history of Mars shows that a planet can lose habitability—that once obtained it is not guaranteed to persist.

That habitability may be precarious was also emphasized in the 1990s by theoretical calculations19 showing Earth’s considerable potential for climate volatility. A sustained imbalance of 25% between rates of input and output of carbon would, it was pointed out, lead to the oceans freezing within a few million years (if loss of atmospheric CO2 exceeds gain) or boiling away within a few tens of millions of years (if gain exceeds loss). The reason for this predicted susceptibility to rapid climate deterioration is the short residence time with respect to geological fluxes of carbon in the surficial Earth system (ocean, atmosphere, soils and biota)—about 100 ky19.

After the realisation that neither Mars nor Venus presently have temperatures conducive to life, and that Earth’s carbon dioxide greenhouse is potentially unstable, two main classes of solution were advanced to try and answer the ‘habitability problem’20 for Earth. The first class involves stabilising mechanisms. It was argued19,21 that the duration of continued habitability (3 to 4 By) so greatly exceeds the potential time to climate failure (~0.001 to 0.01 By) that destabilising tendencies must have been actively opposed by strong negative feedbacks throughout. Earth must possess an inbuilt thermostat and have possessed it since its early history. Otherwise climate would have followed a random walk with, they argued, an impossibly small likelihood of remaining within habitable bounds.

One proposal in this class, the Gaia hypothesis22, suggested that once life becomes established on a planet then it intervenes in climate regulation to keep conditions stable and habitable. However, the Gaia hypothesis lacks a clear mechanistic basis and is not consistent with the most recent scientific evidence23. Another proposal in this class suggests that silicate weathering contributes to a thermostat by removing carbon dioxide more rapidly under warmer climates12,24,25,26,27,28,29,30. Controls on continental silicate weathering rate remain somewhat uncertain, however, with field studies suggesting a strong influence from the rate of supply of newly-exposed (easily weathered) rock31,32, as well as from climate28. Proxy evidence also casts some doubt on whether silicate weathering effectively countered climate changes during Earth’s history33,34; for instance, its rate appears not to have decreased as climate cooled gradually through the Cenozoic33,35 and climate recovery following the intense warming of the Paleocene Eocene Thermal Maximum has been attributed to organic carbon burial rather than silicate weathering36,37. As questions have arisen about whether silicate weathering on the continents provides Earth’s thermostat, new proposals have suggested that silicate weathering in other locations is key: either islands27 or the sea floor12,29.

A second, very different, class of solution has also been put forward, based on the principle of observer selection (the weak anthropic principle)38. Logically, we can only ever observe facts that are consistent with our own existence. This has been extended to the scenario where a prolonged duration of planetary habitability occurs only extremely infrequently amongst a large population of planets but does happens sometimes by chance. According to observer selection, in this scenario we are bound to find ourselves on one of the lucky planets39,40, however scarce they might be. This view presupposes a large number of potentially habitable planets if there is to be any likelihood of even one planet surviving due to good fortune alone. There is, however, no shortage of planets. In our galaxy alone there are estimated to be billions of small, rocky planets orbiting within the habitable zones of their parent stars41,42.

The most widely held view at this time is that “Earth has been a habitable planet for [billions] of years because of the existence of stabilising feedbacks in the global carbon and silicon cycles.”12. Although there are a few exceptions23,39,40, the possible role of chance is typically not even mentioned12,30,43,44,45. Here I describe a new model of habitability and report its results. The results suggest strongly that chance also played a role.

Results

Approach

The model used here (see Methods for details) is a conceptual (simplest) representation of planetary climate regulation. It includes the fundamentals necessary for simulating climate feedbacks and habitability tendencies and purposefully excludes any other details deemed to be non-essential, even those that can be incorporated accurately. In contrast to previous modelling studies of Earth habitability, it allows for evaluation of observer selection effects by taking a multi-planet perspective. For this reason, the model is not in any way a model of Earth specifically but rather is of potentially habitable planets in general. Because of the difficulty in directly observing exoplanets, there is a lack of data and consequently a high degree of uncertainty about how to model some aspects. However, sensitivity analyses, described in the Supplementary Information, show that the exact parameter values and assumptions chosen do not affect the main results presented here.

The model is described in detail in the Methods but a brief outline is given here. The model has only one state variable, planetary surface temperature (T). Other environmental conditions are not modelled and therefore habitability in this paper refers to thermal habitability only. At the outset, each planet is allocated its own distinct set of positive and negative feedbacks using random numbers. These feedbacks have random signs and magnitudes and are made to vary as a function of temperature in a random way.

In addition to using random numbers to generate a diverse collection of planets, each with different feedbacks, random numbers are also used to determine challenges to habitability. Planets are made to experience a random number of perturbations to temperature, occurring at random times and with random signs and magnitudes. In addition, each planet experiences a randomly-determined long-term climate forcing, to represent drivers that vary with time such as the luminosity of the parent star.

Example planet runs

It is now shown how these modelling procedures work in practice. Four examples were chosen to illustrate the procedures used. The left-hand side of Fig. 1 displays four example planets generated automatically by this scheme. The right-hand side of Fig. 1 shows an individual run for each of these planets, starting from a random initial temperature and faced with a random set of perturbations.

Each row of the figure is a different planet. On the left-hand plots (panels a, c, e and g): black circles are nodes and the thick black line is f(T) (see Methods for explanation); light grey shading indicates a region of runaway warming or runaway cooling; red circles show initial positions of attractors; red stippled lines demarcate associated basins of attraction. On the right-hand plots (panels b, d, f and h): blue line shows the evolution of planetary temperature; black shading shows limits to habitability; red circles show positions of attractors; green squares are the randomly determined initial temperatures for each run; red triangles indicate the largest perturbations, with triangle size proportional to perturbation magnitude. a and b are for example planet 1, c & d planet 2, e & f planet 3 and g & h planet 4. Note the different scale on the time axis of each right-hand panel.

Figure 1a shows a planet that ended up with a single basin of attraction (zone of negative feedback), on the warmer side of the habitable range. An attractor (a value which the system tends to converge towards) occurs where the horizontal axis (dT/dt = 0) is crossed with a negative slope, or in other words where the planet cools at temperatures slightly above the attractor value and warms at temperatures slightly below it. An attractor is a stable equilibrium point. Zero-crossing points of positive slope are, conversely, unstable equilibrium points that occur at the edges of basins of attraction. This first planet has a region of runaway cooling within which temperatures decrease inexorably until too cold for habitability. In the run shown in Fig. 1b, the planet happened to start within this runaway feedback zone and hence immediately became uninhabitable.

A second planet (Fig. 1c) ended up with five attractors and a small zone of runaway warming. This time the random starting temperature was within the middle (third) basin of attraction. The planet again failed rapidly (Fig. 1d), this time because, coincidentally, the three cooler attractor points are all situated very close to the lower boundaries of their respective basins of attraction. As a result, random perturbations soon knocked the system from the first basin of attraction into the one to its left, and this repeated until departure from the habitable range.

The third planet (Fig. 1e) has two basins of attraction and started off in the warmer one. Habitable conditions were maintained for over 500 My (Fig. 1f), with jumps between two basins of attraction, before a perturbation knocked the temperature to above the upper limit. A strong long-term forcing (\(\phi\) = −45.2 (°C ky−1) By−1) would anyway have prevented equable temperatures persisting for 3 By.

Some planets can stay habitable for the full 3 By. The planet in Fig. 1g has only one basin of attraction, spanning nearly the whole of the habitable range. A long-term forcing (\(\phi\) = −24.6 (°C ky−1) By−1) caused the attractor to move slowly further away from the upper limit. Despite a large perturbation at about 200 My, the planet stayed habitable for the full 3 By (Fig. 1h).

These examples illustrate how the “habitability problem”, stabilising mechanisms and chance are incorporated in this approach. Next, I present results obtained with this approach and consider their implications.

Neither pure chance nor pure mechanism: results for artificial planets

As a first step, some particular planets were each run 1000 times in order to test two hypotheses based on the classes of solution: (a) that planetary habitability is a matter of good fortune alone, with a planet’s properties having no effect on the likelihood of habitability (henceforth referred to as Hypothesis 1, or just H1); and (b) that planetary characteristics, such as possession or lack of stabilising feedbacks, either guarantee or rule out habitability from the outset, with no influence of random events such as asteroid impacts (Hypothesis 2, or just H2). For these tests, each rerun had a different (random) initial temperature and perturbations each time, there were no long-term forcings (\(\phi\) = 0) and perturbations were at the lower end of severity.

A planet with unfavourable feedbacks (the second one in Fig. 1) went uninhabitable every time, consistent with H2. A purposefully-created planet with no feedbacks (dT/dt = 0 for all T) also had 0 successes out of 1000, consistent with H2 and the contention19 that pure chance alone (in the form of a random walk driven by random perturbations) leads to an extremely small probability of remaining habitable over such an immense duration. An optimal planet (dT/dt = +300 °C ky−1 for T < Tmid, −300 °C ky−1 for T > Tmid) stayed habitable on all 1000 occasions, again consistent with H2.

Results for populations of randomly generated planets

The results of a much larger simulation are now reported. In this, the habitability tendencies of 100,000 planets were evaluated. The planets were generated by allocating random rather than purposefully designed climate feedbacks, using the procedure described in Methods. The 100,000 distinct planets were each run 100 times, with different chance factors (different random sets of perturbations and initial temperatures) for each of the 100 repeats. The results show probabilistic relationships between success rate and inherent planetary characteristics that agree with expected behaviour (Supplementary Fig. 1).

The results were analysed to give further insights into the roles of destiny and chance. Figure 2a shows outcomes for a subset of 200 planets: 15 of them remained habitable on 1 or more runs and the other 185 had no successes. The most successful planet stayed habitable on 60 out of 100 repeats. No planet was successful on all of its 100 repeats. Similar results were obtained, on a larger scale, for the whole simulation (blue line, Fig. 2b). Out of a population of 100,000, ~9% of planets (8,710) were successful at least once, but only 1 planet was successful on all 100 occasions. Success rates of individual planets were not limited to 0% or 100% but instead spanned the whole spectrum. Some planets were successful only 1 time in 100, others 2 times, and so on. All degrees of planet success are seen to occur in the simulation (Fig. 2b). It can be seen, as found in a previous study46, that climate stabilisation can arise occasionally out of randomness—a proportion of the planets generated by the random assembly procedure had some propensity for climate regulation.

a Success rates of a set of 200 planets, ranked in order of success; b success rates of all planets that were successful at least once, ranked in order, from a population of 100,000 planets (unsuccessful planets not shown); c histogram of habitability durations. Success rates in a and b were calculated by running each planet 100 times and counting the number of times it stayed habitable for 3 By. Habitability durations in c are the times until temperature departs the habitable range. Blue lines and bars show simulation results; red lines and bars show expected results if planetary properties dictate outcomes absolutely (no influence of chance, i.e. H2); black lines and bars show the expected results if outcomes are caused by chance alone (no influence of a planet’s feedbacks, i.e. H1). The green dotted line in b shows the spectrum of success rates of only those planets that were successful on the first of their 100 runs. H1 and H2 are made to have the same proportion (0.0145) of successful runs as the simulation.

Also shown in Fig. 2 are the expected distributions for hypothetical cases in which there were exactly the same total number of successes in the 10 million runs, but in which successes were distributed either completely according to chance (H1: black line and grey shading) or else only among always-successful planets (H2: red line and shading). The frequency distribution of run durations in the simulation is completely distinct from either H1 or H2 (Fig. 2c). Furthermore, a runs test47 carried out on the simulation results presented planet by planet (the first 100 being those for planet 1, the second 100 for planet 2, etc) conclusively rejects random order (p ≪ 0.001), again ruling out H1.

In terms of the question addressed in this paper, the answer from the simulation results is unambiguous: in the simulation, the distribution of planet successes is very different from that expected from either H1 or H2 and is intermediate between them; in the simulation, both mechanism and chance play a role in determining whether, for each run of each planet, habitability lasts as long as 3 By. When considering whether habitability persistence is determined in the same way in reality, however, it is of course necessary to take into account the limitations of the model. The simplifications and uncertainties in the model design mean that it must be unrealistic in some respects. Caution is therefore required in extrapolating from model results to reality. However, it should also be noted that extensive sensitivity analyses (37 in total, addressing a wide range of biological, geophysical, geochemical, biogeochemical and astronomical uncertainties—see Table 1, Supplementary Methods and Supplementary Tables 1 and 2) find the result put forward here to be remarkably robust to model uncertainties (simulation results between H1 and H2 for all 37 model variants tested). In other words, while overall success rates (for instance) differ greatly between model variants, the shared nature of the control over habitability durations does not. For this reason, the complete set of model results, including sensitivity analyses, gives confidence in the overall conclusion. Taken as a whole, the model results suggest strongly that the occurrence of long-term habitability in the real universe is also a function of both mechanism and chance. Independently conceived models should, however, also be applied to this problem, to see if similar results are obtained.

Rerunning the tape

It was a precondition for the coming-into-existence of Homo sapiens that Earth had remained habitable over geological timescales, and the results just described give some insight into how it might have occurred. Additional analysis was carried out to further investigate the possibility that Earth’s long and unbroken duration of habitability was not inevitable from the outset. A set of 1,000 planets were each run twice, with the perturbations and initial temperatures differing between the two runs. Stephen Jay Gould introduced the idea of re-winding the tape of Earth’s history to see if biological evolution would yield the same results on a repeat run48; here the same idea was investigated for climate evolution. As shown in Fig. 3, 15 out of the 1000 planets stayed habitable on the first run and 10 on the second. 6 planets stayed habitable both times. In other words, 6 of the 15 that were successful on the first run were also among the 10 that were successful on the second run. More detailed analysis of a larger set of results found there to be a 39% overlap; in other words, if a planet stayed habitable on an initial run then, on average, there was a 39% chance that it would stay habitable on a second run and a 61% chance that it would not. The overall success rates of the more than 1,400 out of 100,000 planets that stayed habitable for 3 By on their first run are shown in Fig. 2b (green line). This is the most appropriate comparison to Earth, which stayed habitable on its one ‘run’ but for which the overall success probability is not known. This distribution differs from that of planets that were successful on any run (blue line, Fig. 2b) but again is seen to be controlled by a mixture of both destiny and chance.

1,000 different planets were generated randomly. a Results when each planet was run one time: those planets which remained habitable are shown as green circles and those which did not as black circles; b results when the same 1000 planets were run a second time (same planets but different starting temperatures and perturbations compared to the first run). In this particular example, 15 planets stayed habitable on the first occasion, 10 on the second, with 6 remaining habitable on both occasions (additional analysis shows that on average there is a 39% overlap, or in other words there is a 39% chance of a planet being habitable in the repeat set if it was habitable in the original set).

Discussion

The obvious implication from these results is that Earth’s success was not an inevitable outcome but rather was contingent—it could have gone either way. If Earth’s climate system had been subjected, for instance, to different magnitudes of volcanic supereruptions or different timings of impacting asteroids then the outcome could have been different. A role for chance in the eventual outcome is, arguably, also supported by Earth’s geological record, which includes evidence of extreme climate perturbations that may have come very close to extinguishing all life (for instance the Snowball Earth episodes of the PaleoProterozoic49 and NeoProterozoic50).

The initial prospects for Earth staying habitable could have been poor. If so, this suggests that elsewhere in the Universe there are Earth-like planets which had similar initial prospects but which, due to chance events, at one point became too hot or too cold and consequently lost the life upon them. As techniques to investigate exoplanets improve and what seem at first to be ‘twin Earths’ are discovered and analysed, it seems likely that most will be found to be uninhabitable.

Methods

Motivation and underlying principles

The overall goal is to investigate how long-term planetary habitability (necessary for intelligent life to evolve) can occur in the universe. To address this, it is helpful to have a model that can run for billions of years and be capable of simulating many very different planets. Billion-year runs of complex climate models are not feasible because of excessive run times. An alternative approach is attempted here: a simplest possible representation of climate feedbacks and climate regulation.

The first design principle of this idealised model is to aim for simplicity. Details are avoided wherever possible, retaining only those fundamentals that are deemed essential for addressing the overall goal. The focus is exclusively on the most important aspects (climate feedbacks and the possibility of stabilisation). The second principle is to make the model general, not specific. It is definitely not a model of Earth. It is instead a model intended to be equally capable of representing any potentially habitable planet, whether inside or outside the solar system and whether inside or outside conventionally defined habitable zones44,51. The third design principle, following from the second, is to avoid assumptions wherever possible. Where there is any doubt about its universal applicability then an assumption is usually not made. The use here of random numbers to generate multiple and diverse instances of planets allows ranges rather than precise values to be specified for each parameter, removing the need to assume exact values. Where assumptions did have to be made during model design, sensitivity analyses (see Supplementary Methods) were used to explore the consequences of alternative assumptions (signalled below by (SA)).

The model has only one state variable, planetary surface temperature (T), and consists of one sole ordinary differential equation:

where t is time, dT/dt is the rate of change of temperature with time (positive values corresponding to planetary warming and negative values to cooling), and \(\phi\) is a long-term climate forcing, summing over drivers that vary with time such as the luminosity of the parent star. Time (t) is the time elapsed since life first evolved (i.e., since life appeared anywhere on Earth; it is not, for instance, the time since the Earth formed or the time since life first appeared on land). The overall sum feedback response is represented by f(T) and varies as a function of temperature T. In other words, at any planetary temperature T, f(T) is the net rate of warming or cooling that results from all processes operating in combination. Unlike in standard climate models, individual climate feedbacks (such as ice-albedo feedbacks) or processes (such as the interception of outgoing infrared radiation by greenhouse gases) are not separately represented. An advantage of this parsimonious approach is that few assumptions are made, maximising the variety of different types of system that can be represented. A disadvantage is loss of realism, if an important aspect of system behaviour is lost through oversimplification and the omission affects the results obtained.

Feedbacks are set as follows. First, a random number generator is used to determine the number of climate nodes for the planet (~Ui(2,20), where Ui is a uniform distribution). These are then distributed evenly across the habitable temperature range (−10 to +60 °C). Random numbers are then used again to set the value of f(T) for each node, with positive and negative values being equally probable (~N(0,100) °C ky−1, where N is a Gaussian distribution). f(T) values between nodes are linearly interpolated (SA). Each planet is also allocated a random long-term forcing (∅), which can be either positive or negative with equal probability (~N(0,50) (°C ky−1) By−1).

The ability of planets to stay habitable is tested by calculating their temperature evolutions when subject to random perturbations and a long-term forcing. Each run is started at a random initial temperature and is simulated from time zero (origin of life) until 3 By (evolution of intelligence) or until temperature leaves the habitable range. Random temperature perturbations occur at random times (red triangles in Fig. 1), representing climate-altering events such as eruptions of supervolcanoes and asteroid impacts. There are three size classes of perturbation (~N(2,1), ~N(8,4), and ~N(32,16) °C), with larger size classes occurring less frequently. Chance is defined here as consisting of the perturbations and the initial temperatures; these are varied for each rerun of the same planet whereas inherent characteristics (feedbacks and forcing) are not.

The model executes rapidly. Runs take a few minutes for 3 billion years, allowing the habitability propensities of thousands of different planets to be investigated.

Habitable temperature range

The model has only one state variable, planetary surface temperature (T, in °C, converted to kelvin where necessary; neither vertical atmospheric temperature gradients nor top-of-the-atmosphere temperature are represented; surface environmental conditions other than temperature are also not represented (SA)). It is assumed here that life on other planets requires liquid water, as it does on Earth. The lower limit to habitability is set to −10 °C (SA), somewhat below the freezing point of salt water. More complex (eukaryotic) life on Earth is mostly unable to survive above about 50 °C, at which point DNA and other proteins start to denature unless protected. While simple microbes can grow at temperatures up to 122 °C (ref. 52), it seems unlikely that more complex (intelligent) life could survive at such temperatures23. The pressure-dependent boiling point of water also sets a higher limit but it is assumed here that the most critical factor is enzyme stability, and the upper limit is therefore set to +60 °C (SA). It is likely that climate needs to stay within narrower bounds during the later stages of the evolution of intelligence, although this was not included in the standard simulation (SA). Geographical variability implies that more extreme average global surface temperatures might be required to force extinction everywhere (SA). Microbial life can potentially survive periods of inhospitable surface conditions within refuges, such as in subsurface rocks or deep in an ice-covered ocean at hydrothermal vents, emerging later to recolonise the surface; evidence from Neoproterozoic Snowball Earth events suggests however that eukaryotic photosynthetic algae persisted through the events and therefore that surface habitability was maintained at some locations53. Other environmental conditions can affect habitability, but only temperature (and therefore water availability) are considered here (SA).

Randomly configured feedbacks

It is assumed that there is no inherent bias in the climate systems of planets as a whole towards either negative (stabilising) or positive (destabilising) feedbacks (SA). In other words, it is assumed here that the feedback systems of planets are the end result of a set of processes which do not in aggregate contain any overall inherent predisposition either towards or against habitability. Planets are randomly configured such that some end up being more prone to climate stability and others do not, but without any inbuilt bias either way (SA). This assumption could be incorrect. For instance, all planets are subject to the Stefan-Boltzmann Law whereby the total heat energy radiated outwards (the black body radiation) increases in proportion to the fourth power of its temperature in kelvin20. In the absence of systematic effects of temperature on other energy fluxes, this would impart an overall bias towards temperature stability. But other factors may also change in a systematic way with temperature. For instance, all planets with water oceans are also subject to potentially destabilising ice-albedo54 and temperature-water vapour55,56 positive feedbacks with the potential to lead to a runaway icehouse or greenhouse.

When a new planet is generated in the simulation it is given a random set of feedbacks using the following procedure. First, each planet is allocated a number of nodes (NN), where NN is equally likely to be set to any number between 2 and \(N_{\mathrm{N}}^{{\mathrm{max}}}\), the maximum number of nodes (20 in the default model (SA)):

where \(U_i\left( {2,N_{\mathrm{N}}^{{\mathrm{max}}}} \right)\) denotes an integer between 2 and \(N_{\mathrm{N}}^{{\mathrm{max}}}\), chosen randomly from a uniform distribution (i.e. equal probability of any number).

After setting the number of nodes, the properties of each node are then calculated. The first node is positioned at the lowest (coldest) temperature (Tmin) and the last node at the highest (hottest) temperature (Tmax) in the habitable range. Feedback strengths outside the habitable range are not considered because a planet is deemed to have ‘failed’ as soon as it has left the habitable range (SA). The temperatures (Ti) of the remaining nodes (if NN > 2) are then set so as to distribute them evenly (SA) across the habitable temperature range:

Finally, each node is also given a random feedback strength (fi). The term ‘feedback strength’ is used here to refer to the rate at which the planet tends to cool down or heat up at a particular temperature, as a result of feedbacks. Each value of fi is determined by choosing a number randomly from a Gaussian (normal) statistical distribution of mean 0 and standard deviation σf (in units of degrees C per thousand years):

σf is given a value of 100 °C ky−1 (SA). As a result, 99.7% of feedbacks calculated using Eq. (4) have an absolute magnitude less than or equal to 300 °C ky−1 (3σf).

It is difficult to be certain about the most realistic value for σf. Some estimated potential heating and cooling rates are shown for Earth in Supplementary Table 3, for comparison. However, because Earth has remained habitable for 3 or 4 By, observer selection means that these cannot be considered representative of planets as a whole. Different rates of temperature change can be expected on other planets, if, for instance, they have different amounts of ocean (different heat capacities).

Feedback strengths at intervening temperatures are calculated by linear interpolation (SA) between values at nodes. Some example results of this procedure for randomly determining climate feedbacks are seen on the left-hand side of Fig. 1 of the paper. The procedure generates a heterogeneous mixture of planets. This is consistent with conclusions, derived from observations from Kepler and other missions, of considerable exoplanet diversity. It is also consistent with theoretical exoplanet studies that have started to investigate the possible climate system consequences of this exoplanet diversity, for instance of planets with much deeper oceans57 or tidally locked about their parent stars58 or with different rotation rates59. Diversity of planets is thus supported by exoplanet studies; universality of Earth-like feedbacks is not.

Gradual changes over time

Various climate-relevant factors can change over geological time. Most notably, a planet in an unchanging orbit around a single star does not receive an unvarying heat input from that star, because stellar luminosities change over time. Stars are most stable while on the main sequence, but even while on the main sequence their rate of output of radiation increases with age (hence the Faint Young Sun paradox for Earth). The Sun, a G-type star, has increased its output at a faster rate (30% increase over the last 3 By)14 than most other potentially life-supporting stars. The rate of heat output of the more numerous M-dwarf stars increases more slowly during their longer (>10 By) lifetimes on the main sequence.

There are other factors that can also change gradually over the lifetime of a planet60. Some of them will amplify or counteract the effects of stellar brightening; they could even outweigh it, leading to a net change in the opposite direction, tending to make the planet get progressively cooler over time. Factors tending to promote long-term cooling include: (1) a gradual increase in continental area over time61, producing an increase in albedo (land has a higher albedo than water); (2) declining geothermal heating due to decay of radioactive elements in a planet’s interior, with the stock of radioactive elements dwindling over time (this is a small factor in the radiation budget of Earth – Supplementary Table 3 – but is probably a larger factor for some other planets and moons); (3) progressive leakage out to space of low molecular mass gases including hydrogen, facilitating oxygenation of the atmosphere and removal of methane and other greenhouse gases62. Another potential source of long-term change is the life on the planet itself. As life increases in complexity over time through evolution, the intensity with which it draws resources (including greenhouse gases such as CO2) from the environment may also increase63.

From the foregoing, it seems likely that some planets experience a drift towards more negative dT/dt values over time, even as the heat received from their host star increases. For this reason, no bias either way is coded into the model (SA). Instead, the overall sum forcing for each planet (\(\phi\)) is set randomly from a Gaussian distribution, with equal probabilities of it being either positive or negative:

where \(\sigma _\phi\) is set to 50 (°C ky−1) By−1 (SA). 99.7% of forcings in the simulation therefore lie between −150 and +150 (°C ky−1) By−1.

By way of comparison, the Sun has increased its rate of energy output by about 30% over the last 3 billion years, with the area-averaged solar flux received by the Earth increasing from about 270 W m−2 to 340 W m−2 (ref. 14). This would correspond to a rate of change of ∅ of (+70/3) W m−2 By−1 ≈ +70 (°C ky−1) By−1 if no other radiative fluxes also changed over the same interval64.

Instantaneous perturbations

Planetary climates are also subject to occasional short-lived external perturbations, of varying magnitude and frequency of occurrence. On Earth, volcanic eruptions and super-eruptions (such as Toba) produce aerosols that block sunlight and cool the planet. Careful comparison of climate and volcanic ash records over the last 2,500 years has confirmed that temperatures on the surface of the Earth are depressed for up to 10 years in the aftermath of eruptions65. Other sources66,67 of relatively short-term perturbations to a planet’s temperature include impacts of comets or asteroids, orbital instabilities, carbon dioxide emissions associated with emplacements of large amount of basalt onto the surface of the Earth, stellar flares, and nearby supernovae or other cosmic explosions. Perturbations can also be biological in origin68. For instance, extreme glaciations on Earth were triggered by the advent of oxygenic photosynthesis and the spread of the first forests across the land23. Some perturbations (e.g. those from nearby supernovae or gamma-ray bursts) could be so severe as to preclude any possibility of survival, whatever feedbacks a planet possesses, although perturbations with enough energy to instantaneously boil all of Earth’s oceans (raise temperatures throughout the ocean by 100 °C) are calculated to be vanishingly rare67.

Three classes of perturbations (PS, PM and PL: small, medium and large) are used in the simulations, with magnitudes determined as follows:

where Ui(−1 or 1) makes each perturbation randomly positive or negative and \(N\left( {\mu ,\sigma } \right)\) is a random number from a normal (Gaussian) distribution with mean µ and standard deviation σ (both in °C). For comparison, temperature effects of different catastrophic events on Earth are shown in Supplementary Table 4, while again keeping in mind that Earth is not likely to be typical because of observer selection bias.

Different planets are subject to different perturbation probabilities depending on how geologically active they are and where they are. Planets in densely-packed galaxies or regions of a galaxy have a higher risk during the course of 3 By of experiencing large perturbations from nearby supernovae and/or gamma-ray bursts. The centres of galaxies, where stars are more tightly packed, are, for this reason, thought to be less likely locations for the evolution of intelligence69. Other known factors include the nature of the parent star (how often and how strongly it flares), the stability of the orbits of other planets in the planetary system, and the number and orbital stability of asteroids and comets in the system.

It is likely, as seen for volcanic eruptions65 and asteroids70, that smaller climate perturbations occur more frequently and larger perturbations less frequently. To reflect this variable exposure to perturbations, random numbers are used to allocate each planet different expected numbers (λ) of perturbations in each class:

The actual numbers of perturbations (NS, NM and NL) during each 3 By simulation rerun of a planet, if it completes, are then calculated randomly as follows:

where P(λ) is a random number from a Poisson distribution with an expected (mean) value of λ. The perturbations are made to occur at random times (from a uniform distribution) across the 3 By (SA). Some examples of the results of applying these equations are shown on the right of Fig. 1 of the paper (the red triangles).

Required duration of uninterrupted habitability

An enormous duration of continued habitable conditions is required for the evolution of intelligent life. It does not by itself guarantee that complex life will evolve (for instance, life may never emerge in the first place) but evolution of complex life is obviously impossible without prolonged habitability. It is difficult to know whether 3 billion years of habitability is always required, or just the time that it happened to take on Earth. Perhaps intelligent life could develop more rapidly on a planet with a more dynamic environmental history without periods of stagnation such as the so-called ‘boring billion’ on Earth71. A minimum requirement of only 100 million years has been suggested72. Alternatively, perhaps longer durations are more typical73. In the absence of a detailed understanding of the time required, it was set to 3 billion years in this study (SA).

Numerical integration

Numerical integration of Eq. 1 was carried out in Matlab (ode23s stiff solver, adaptive timestep), with an initial timestep of 10 years, a maximum timestep of 1 million years, a relative error tolerance of one in ten thousand (0.0001) and an absolute tolerance of 0.05 °C. The large ensemble runs reported here were executed on a parallel computing cluster using MDCS (Matlab Distributed Computing Server).

The initial temperature (T0) was set equal to a different random value between Tmin and Tmax (equal probability of any value) at the beginning of each run:

Because perturbations are abrupt changes which can lead to integration errors if not properly handled, the model was run from one perturbation to another with the numerical integration restarted after each perturbation. Simulations were continued for each planet until it went sterile (SA) (its temperature left the habitable range, at which point it was deemed to have ‘failed’) or until 3 By had elapsed, at which point it was deemed to have ‘succeeded’ (intelligence to have evolved).

Multiple runs of the same planet

Because random numbers are used, a statistically robust evaluation of the tendency of a planet to maintain habitable conditions requires multiple runs; the result of a single run is unlikely to be representative. In order to run a planet multiple times, a separation has to be made between factors that are intrinsic to the planet, and are therefore fixed for that planet, and those which are changed for each different run of that planet. This separation defines which factors are categorised as part of chance or hazard, and which as part of a planet’s inherent make-up and hence mechanism. In these simulations, the feedbacks (NN and fi), forcing (∅) and expected numbers of perturbations (λS, λM, λL) were kept fixed for each planet, whereas the initial temperatures (T0) and actual numbers (NS, NM, NL) and magnitudes (PS, PM, PL) of perturbations were randomly set to different values for each run of a planet (SA). λS, λM, λL and ∅ are properties that depend in part on the planet’s location (reflecting the nature of the surrounding planetary system and star, as well as of the adjacent part of the galaxy). Reruns were therefore of the same planet in the same astronomical location, rather than of the same planet in different astronomical locations (SA).

Data availability

No primary data was generated in this study.

Code availability

Matlab code for the model and sensitivity analyses is publicly available74 (https://doi.org/10.5281/zenodo.4081451). Results for this paper were generated using the parallel version of the code. The model is also made available in Octave, a free-to-use alternative to Matlab.

References

Nutman, A. P., Bennett, V. C., Friend, C. R., Van Kranendonk, M. J. & Chivas, A. R. Rapid emergence of life shown by discovery of 3,700-million-year-old microbial structures. Nature 537, 535–538 (2016).

Allwood, A. C., Walter, M. R., Kamber, B. S., Marshall, C. P. & Burch, I. W. Stromatolite reef from the Early Archaean era of Australia. Nature 441, 714–718 (2006).

Lepot, K., Benzerara, K., Brown, G. E. & Philippot, P. Microbially influenced formation of 2,724-million-year-old stromatolites. Nature Geoscience 1, 118–121 (2008).

Langmuir, C. H. & Broecker, W. S. How to Build a Habitable Planet: The Story of Earth from the Big Bang to Humankind. (Princeton University Press, Princeton, 2012).

Hoyle, F. Remarks on the computation of evolutionary tracks. Ricerche Astronomiche 5, 223–230 (1958).

Schwarzschild, M. Structure and Evolution of Stars. (Princeton University Press, 1958).

Sagan, C. & Mullen, G. Earth and Mars: evolution of atmospheres and surface temperatures. Science 177, 52–56 (1972).

Kasting, J. F. Early Earth: faint young Sun redux. Nature 464, 687–689 (2010).

Rondanelli, R., Lindzen, R. S. Can thin cirrus clouds in the tropics provide a solution to the faint young Sun paradox? J. Geophys. Res. 115, https://doi.org/10.1029/2009JD012050 (2010).

Airapetian, V. S., Glocer, A., Gronoff, G., Hébrard, E. & Danchi, W. Prebiotic chemistry and atmospheric warming of early Earth by an active young Sun. Nat. Geosci. 9, 452–455 (2016).

Sauterey, B., Charnay, B., Affholder, A., Mazevet, S., Ferrière, R. Co-evolution of primitive methane-cycling ecosystems and early Earth’s atmosphere and climate. Nat. Commun. 11, https://doi.org/10.1038/s41467-020-16374-7 (2020).

Isson, T. T. et al. Evolution of the global carbon cycle and climate regulation on Earth. Global Biogeochem. Cycles 34, e2018GB006061 (2020).

Charnay, B. et al. Is the Faint Young Sun Problem for Earth Solved?. Space Sci. Rev. 216, 90 https://doi.org/10.1007/s11214-020-00711-9 (2020).

Feulner, G. The faint young Sun problem. Rev. Geophys. 50, RG2006, https://doi.org/10.1029/2011RG000375 (2012).

Avduevsky, V. S., Marov, M. Y., Rozhdestvensky, M. K., Borodin, N. F. & Kerzhanovich, V. V. Soft landing of Venera 7 on the Venus surface and preliminary results of investigations of the Venus atmosphere. J. Atmos. Sci. 28, 263–269 (1971).

Hess, S. L. et al. Mars climatology from Viking 1 after 20 sols. Science 194, 78–81 (1976).

Grotzinger, J. P. et al. Deposition, exhumation, and paleoclimate of an ancient lake deposit, Gale crater, Mars. Science 350, 177 (2015).

Hynek, B. M., Beach, M. & Hoke, M. R. T. Updated global map of Martian valley networks and implications for climate and hydrologic processes. J. Geophys. Res., 115, https://doi.org/10.1029/2009JE003548 (2010).

Berner, R. A. & Caldeira, K. The need for mass balance and feedback in the geochemical carbon cycle. Geology 25, 955–956 (1997).

Pierrehumbert, R. T. Principles of Planetary Climate. (Cambridge University Press, New York, 2012).

Broecker, W. S. & Sanyal, A. Does atmospheric CO2 police the rate of chemical weathering? Global Biogeochem. Cycles 12, 403–408 (1998).

Lovelock, J. E. Gaia: A New Look at Life on Earth. (Oxford University Press, Oxford, 1979).

Tyrrell, T. On Gaia: A Critical Investigation of the Relationship Between Life and Earth. (Princeton University Press, Princeton, 2013).

Walker, J. C., Hays, P. B. & Kasting, J. F. A negative feedback mechanism for the long-term stabilization of Earth’s surface temperature. J. Geophys. Res. Oceans 86, 9776–9782 (1981).

Kump, L. R., Brantley, S. L. & Arthur, M. A. Chemical weathering, atmospheric CO2, and climate. Annu. Rev. Earth Planet. Sci. 28, 611–667 (2000).

Zeebe, R. E. & Caldeira, K. Close mass balance of long-term carbon fluxes from ice-core CO2 and ocean chemistry records. Nat. Geosci. 1, 312–315 (2008).

Li, G. & Elderfield, H. Evolution of carbon cycle over the past 100 million years. Geochim. Cosmochim. Acta 103, 11–25 (2013).

Li, G. et al. Temperature dependence of basalt weathering. Earth Planet. Sci. Lett. 443, 59–69 (2016).

Isson, T. T. & Planavsky, N. J. Reverse weathering as a long-term stabilizer of marine pH and planetary climate. Nature 560, 471–475 (2018).

Kasting, J. F. The Goldilocks planet? How silicate weathering maintains Earth “just right”. Elements 15, 235–240 (2019).

Raymo, M. E., Ruddiman, W. F. & Froelich, P. N. Influence of late Cenozoic mountain building on ocean geochemical cycles. Geology 16, 649–653 (1988).

Riebe, C. S., Kirchner, J. W. & Finkel, R. C. Erosional and climatic effects on long-term chemical weathering rates in granitic landscapes spanning diverse climate regimes. Earth Planet. Sci. Lett. 224, 547–562 (2004).

Willenbring, J. K. & von Blanckenburg, F. Long-term stability of global erosion rates and weathering during late-Cenozoic cooling. Nature 465, 211–214 (2010).

Foster, G. L. & Vance, D. Negligible glacial–interglacial variation in continental chemical weathering rates. Nature 444, 918–921 (2006).

Raymo, M. E. & Ruddiman, W. F. Tectonic forcing of late Cenozoic climate. Nature 359, 117–122 (1992).

Bowen, G. J. & Zachos, J. C. Rapid carbon sequestration at the termination of the Palaeocene-Eocene Thermal Maximum. Nat. Geosci. 3, 866–869 (2010).

Gutjahr, M. et al. Very large release of mostly volcanic carbon during the Palaeocene-Eocene Thermal Maximum. Nature 548, 573–577 (2017).

Carter, B. Large Number Coincidences and the Anthropic Principle in Cosmology. IAU Symposium 63: Confrontation of Cosmological Theories with Observational Data. D. Reidel, Dordrecht, pp. 291–298 (1974).

Watson, A. J. Gaia and observer self-selection. In: Scientists Debate Gaia, (eds Schneider, S. H., Miller, J. R., Crist, E. & Boston, P. J.) 201–208 (MIT Press, Cambridge MA, 2004).

Waltham, D. Lucky Planet: Why Earth is Exceptional and What That Means for Life in the Universe. Icon Books, London (2014).

Petigura, E. A., Howard, A. W. & Marcy, G. W. Prevalence of Earth-size planets orbiting Sun-like stars. Proc. Natl Acad. Sci. USA 110, 19273–19278 (2013).

Dressing, C. D. & Charbonneau, D. The occurrence of potentially habitable planets orbiting M dwarfs estimated from the full Kepler dataset and an empirical measurement of the detection sensitivity. Astrophys. J. 807, https://doi.org/10.1088/0004-637X/807/1/45 (2015).

Kump, L. R., Kasting, J. F. & Crane, R. G. The Earth System, 2nd edition, (Prentice Hall, New Jersey, 2004).

Kasting, J. F. & Catling, D. Evolution of a habitable planet. Annu. Rev. Astron. Astrophys. 41, 429–463 (2003).

Lunine, J. I. Earth: Evolution of a Habitable World. (Cambridge University Press, 2013).

Nicholson, A. E., Wilkinson, D. M., Williams, H. T. & Lenton, T. M. Gaian bottlenecks and planetary habitability maintained by evolving model biospheres: the ExoGaia model. Monthly Notices R. Astron. Soc. 477, 727–740 (2018).

Bradley, J. V. Distribution-Free Statistical Tests. (Prentice-Hall, 1968).

Gould, S. J. Wonderful Life: The Burgess Shale and the Nature of History. (WW Norton & Co, New York, 1989).

Kirschvink, J. L. Late Proterozoic Low-Latitude Global Glaciation: the Snowball Earth. In: The Proterozoic Biosphere: a Multidisciplinary Study, (eds Schopf, J. W. & Klein, C.) 51-52 (Cambridge University Press, New York, 1992).

Hoffman, P. F., Kaufman, A. J., Halverson, G. P. & Schrag, D. P. A Neoproterozoic snowball earth. Science 281, 1342–1346 (1998).

Heller, R. & Armstrong, J. Superhabitable worlds. Astrobiology 14, 50–66 (2014).

Takai, K. et al. Cell proliferation at 122 °C and isotopically heavy CH4 production by a hyperthermophilic methanogen under high-pressure cultivation. Proc. Natl Acad. Sci. USA 105, 10949–10954 (2008).

Olcott, A. N., Sessions, A. L., Corsetti, F. A., Kaufman, A. J. & De Oliviera, T. F. Biomarker evidence for photosynthesis during Neoproterozoic glaciation. Science 310, 471–474 (2005).

Budyko, M. I. The effect of solar radiation variations on the climate of the earth. Tellus 21, 611–619 (1969).

Held, I. M. & Soden, B. J. Water vapor feedback and global warming. Annu. Rev. Energy Environ. 25, 441–475 (2000).

Myhre, G. et al. Anthropogenic and Natural Radiative Forcing. In: Climate Change 2013: The Physical Science Basis. Contribution of Working Group I to the Fifth Assessment Report of the Intergovernmental Panel on Climate Change, (eds Stocker, T. F. et al.) 33-115 (Cambridge University Press, Cambridge, UK, 2013).

Kitzmann, D. et al. The unstable CO2 feedback cycle on ocean planets. Monthly Notices R. Astron. Soc. 452, 3752–3758 (2015).

Checlair, J., Menou, K. & Abbot, D. S. No Snowball on habitable tidally locked planets. Astrophys. J. 845, 132–141 (2017).

Jansen, T., Scharf, C., Way, M. & Del Genio, A. Climates of warm Earth-like planets. II. Rotational “Goldilocks” zones for fractional habitability and silicate weathering. Astrophys. J. 875, 79–86 (2019).

Bradley, D. C. Secular trends in the geologic record and the supercontinent cycle. Earth Sci. Rev. 108, 16–33 (2011).

Condie, K. C. Earth as an Evolving Planetary System. 3rd Edition. (Elsevier Academic Press, Burlington MA, 2015).

Catling, D. C., Zahnle, K. J. & McKay, C. P. Biogenic methane, hydrogen escape, and the irreversible oxidation of early Earth. Science 293, 839–843 (2001).

Tyrrell, T. Biotic plunder: Control of the environment by biological exhaustion of resources. In: Scientists Debate Gaia, (eds Schneider, S. H., Miller, J. R., Crist, E. & Boston, P. J.), MIT Press, Cambridge MA, pp. 137–147 (2004).

Hansen, C. J., Kawaler, S. D. & Trimble, V. Stellar interiors: physical principles, structure, and evolution. 2nd edition. (Springer, New York, 2012).

Sigl, M. et al. Timing and climate forcing of volcanic eruptions for the past 2,500 years. Nature 523, 543–549 (2015).

Hanslmeier, A. Habitability and Cosmic Catastrophes. (Springer, New York, 2008).

Sloan, D., Batista, R. A. & Loeb, A. The resilience of life to astrophysical events. Sci. Rep. 7, https://doi.org/10.1038/s41598-017-05796-x (2017).

Ward, P. The Medea Hypothesis: Is Life on Earth Ultimately Self-Destructive? (Princeton University Press, NJ, 2009).

Gowanlock, M. G., Patton, D. R. & McConnell, S. M. A model of habitability within the Milky Way Galaxy. Astrobiology 11, 855–873 (2011).

Bland, P. A. & Artemieva, N. A. The rate of small impacts on Earth. Meteor. Planet. Sci. 41, 607–631 (2006).

Holland, H. D. The oxygenation of the atmosphere and oceans. Philos. Trans. R. Soc. B Biol.Sci. 361, 903–915 (2006).

McKay, C. P. Time for intelligence on other planets. In: Circumstellar Habitable Zones, (ed. Doyle, L.) 405–419 (Travis House, Menlo Park, CA, 1996).

Kipping, D. An objective Bayesian analysis of life’s early start and our late arrival. Proc. Natl Acad. Sci. USA 117, 11995–12003 (2020).

Tyrrell, T. Planets Model code. Zenodo. https://doi.org/10.5281/zenodo.4081451 (2020, October 12).

Acknowledgements

I am grateful to the following for discussions and comments: Adrian Martin, Gavin Foster, Eelco Rohling, Mathis Hain, John Shepherd, Ford Doolittle, Sami Mikhail, Sarah Rugheimer, Dave Armstrong McKay and Phil Goodwin. Mark Garnett assisted with programming during a research experience placement funded by the UK Natural Environment Research Council through the Spitfire Doctoral Training Programme (NE/L002531/1). I acknowledge the use of the IRIDIS High Performance Computing Facility, and associated support services at the University of Southampton.

Author information

Authors and Affiliations

Contributions

T.T. carried out all analysis and interpretation and wrote the manuscript.

Corresponding author

Ethics declarations

Competing interests

The author declares no competing interests.

Additional information

Peer review information Primary handling editors: Mojtaba Fakhraee, Joe Aslin.

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Tyrrell, T. Chance played a role in determining whether Earth stayed habitable. Commun Earth Environ 1, 61 (2020). https://doi.org/10.1038/s43247-020-00057-8

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s43247-020-00057-8

This article is cited by

-

Recent advances in 2D semiconductor nanomaterials for photocatalytic CO2 reduction

Nano Research (2023)

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.