Abstract

ChatGPT is a societally impactful artificial intelligence tool with millions of users and integration into products such as Bing. However, the emergence of jailbreak attacks notably threatens its responsible and secure use. Jailbreak attacks use adversarial prompts to bypass ChatGPT’s ethics safeguards and engender harmful responses. This paper investigates the severe yet under-explored problems created by jailbreaks as well as potential defensive techniques. We introduce a jailbreak dataset with various types of jailbreak prompts and malicious instructions. We draw inspiration from the psychological concept of self-reminders and further propose a simple yet effective defence technique called system-mode self-reminder. This technique encapsulates the user’s query in a system prompt that reminds ChatGPT to respond responsibly. Experimental results demonstrate that self-reminders significantly reduce the success rate of jailbreak attacks against ChatGPT from 67.21% to 19.34%. Our work systematically documents the threats posed by jailbreak attacks, introduces and analyses a dataset for evaluating defensive interventions, and proposes the psychologically inspired self-reminder technique, which can efficiently and effectively mitigate jailbreaks without further training.
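The encapsulation step described in the abstract can be pictured with a minimal sketch. The reminder wording, the constant names and the helper functions below are hypothetical and chosen only for illustration; they are not presented as the authors' exact prompt or implementation. The second helper simply works through the arithmetic behind the reported drop in attack success rate.

```python
# Hypothetical reminder wording, for illustration only.
REMINDER_PREFIX = (
    "You should be a responsible assistant and should not generate harmful "
    "or misleading content. Please answer the following user query in a "
    "responsible way."
)
REMINDER_SUFFIX = (
    "Remember, you should be a responsible assistant and should not "
    "generate harmful or misleading content."
)


def wrap_with_self_reminder(user_query: str) -> str:
    """Encapsulate the user's query between two reminder sentences."""
    return f"{REMINDER_PREFIX}\n{user_query}\n{REMINDER_SUFFIX}"


def reduction(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return the absolute drop (percentage points) and the relative drop."""
    absolute = before_pct - after_pct
    relative = absolute / before_pct
    return absolute, relative


if __name__ == "__main__":
    # The wrapped text is what would be sent to the model instead of the raw query.
    print(wrap_with_self_reminder("Summarise the plot of Hamlet."))

    # Success rates reported in the abstract: 67.21% before, 19.34% after.
    absolute, relative = reduction(67.21, 19.34)
    print(f"absolute drop: {absolute:.2f} percentage points")
    print(f"relative drop: {relative:.1%}")
```

Placing the reminder both before and after the query is one possible design; the Extended Data ablation compares prefix-only and suffix-only variants.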

Data availability

The datasets used in the experiments are publicly available. The constructed jailbreak dataset used in the experiments is available at https://github.com/yjw1029/self-reminder-Data and Zenodo (ref. 55). The GLUE benchmark is available at https://huggingface.co/datasets/glue. The CNN/Daily Mail dataset is available at https://huggingface.co/datasets/cnn_dailymail. The XSum dataset is available at https://huggingface.co/datasets/xsum. The WMT16 (en-de) dataset is available at https://huggingface.co/datasets/wmt16. The SQuAD dataset is available at https://huggingface.co/datasets/squad. The Enron Email Dataset is available at https://www.cs.cmu.edu/~enron/.

Code availability

Our code is available at https://github.com/yjw1029/Self-Reminder/ and Zenodo (ref. 56). All experiments and implementation details are described in the Methods section, the Results section and Supplementary Information section 1.

References

OpenAI. ChatGPT. openai.com/blog/chatgpt (2022).

Jiao, W., Wang, W., Huang, J.-T., Wang, X. & Tu, Z. Is ChatGPT a good translator? A preliminary study. Preprint at https://arXiv.org/2301.08745 (2023).

Klang, E. & Levy-Mendelovich, S. Evaluation of OpenAI’s large language model as a new tool for writing papers in the field of thrombosis and hemostasis. J. Thromb. Haemost. 21, 1055–1058 (2023).

Kung, T. H. et al. Performance of ChatGPT on USMLE: potential for AI-assisted medical education using large language models. PLoS Digit. Health 2, e0000198 (2023).

Reinventing search with a new AI-powered Microsoft Bing and Edge, your copilot for the web. Microsoft blogs.microsoft.com/blog/2023/02/07/reinventing-search-with-a-new-ai-powered-microsoft-bing-and-edge-your-copilot-for-the-web/ (2023).

Introducing Microsoft 365 copilot – your copilot for work. Microsoft blogs.microsoft.com/blog/2023/03/16/introducing-microsoft-365-copilot-your-copilot-for-work/ (2023).

Much to discuss in AI ethics. Nat. Mach. Intell. 4, 1055–1056 (2022).

Brown, T. et al. Language models are few-shot learners. In Proc. Advances in Neural Information Processing Systems Vol. 33 (eds Larochelle, H. et al.) 1877–1901 (Curran, 2020).

Chowdhery, A. et al. PaLM: scaling language modeling with pathways. J. Mach. Learn. Res. 24, 1–113 (2023).

Zhang, S. et al. OPT: open pre-trained transformer language models. Preprint at https://arXiv.org/2205.01068 (2022).

Askell, A. et al. A general language assistant as a laboratory for alignment. Preprint at https://arXiv.org/2112.00861 (2021).

Bai, Y. et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. Preprint at https://arXiv.org/2204.05862 (2022).

Kasirzadeh, A. & Gabriel, I. In conversation with artificial intelligence: aligning language models with human values. Preprint at https://arXiv.org/2209.00731 (2022).

Ouyang, L. et al. Training language models to follow instructions with human feedback. In Proc. Advances in Neural Information Processing Systems Vol. 35 (eds Koyejo, S. et al.) 27730–27744 (Curran, 2022); http://papers.nips.cc/paper_files/paper/2022/hash/b1efde53be364a73914f58805a001731-Abstract-Conference.html

GPT-4 system card. OpenAI https://cdn.openai.com/papers/gpt-4-system-card.pdf (2023).

Selvi, J. Exploring prompt injection attacks. NCC Group https://research.nccgroup.com/2022/12/05/exploring-prompt-injection-attacks/ (2022).

Daryanani, L. How to jailbreak ChatGPT. Watcher Guru https://watcher.guru/news/how-to-jailbreak-chatgpt/ (2023).

Warren, T. These are Microsoft’s Bing AI secret rules and why it says it’s named Sydney. The Verge https://www.theverge.com/23599441/microsoft-bing-ai-sydney-secret-rules/ (2023).

Albert, A. Jailbreak chat. The Prompt Report https://www.jailbreakchat.com/ (2023).

ChatGPT – The Impact of Large Language Models on Law Enforcement (Europol, 2023).

Mitchell, E., Lee, Y., Khazatsky, A., Manning, C. D. & Finn, C. DetectGPT: zero-shot machine-generated text detection using probability curvature. In Proc. International Conference on Machine Learning, ICML 2023 (eds Krause, A. et al.) 24950–24962 (PMLR, 2023); https://proceedings.mlr.press/v202/mitchell23a.html

De Angelis, L. et al. ChatGPT and the rise of large language models: the new AI-driven infodemic threat in public health. Front. Public Health 11, 1166120 (2023).

Dasgupta, I. et al. Language models show human-like content effects on reasoning. Preprint at https://arXiv.org/2207.07051 (2022).

Wei, J. et al. Chain-of-thought prompting elicits reasoning in large language models. In Proc. Advances in Neural Information Processing Systems Vol. 35 (eds Koyejo, S. et al.) 24824–24837 (Curran, 2022); http://papers.nips.cc/paper_files/paper/2022/hash/9d5609613524ecf4f15af0f7b31abca4-Abstract-Conference.html

Wang, X. et al. Self-consistency improves chain of thought reasoning in language models. In Proc. 11th International Conference on Learning Representations, ICLR 2023 (OpenReview.net, 2023); https://openreview.net/pdf?id=1PL1NIMMrw

Zhou, D. et al. Least-to-most prompting enables complex reasoning in large language models. In Proc. 11th International Conference on Learning Representations, ICLR 2023 (OpenReview.net, 2023); https://openreview.net/pdf?id=WZH7099tgfM

Gollwitzer, P. M. Implementation intentions: strong effects of simple plans. Am. Psychol. 54, 493–503 (1999).

Carver, C. S. & Scheier, M. F. On the Self-Regulation of Behavior (Cambridge Univ. Press, 2001).

Meichenbaum, D. Cognitive behaviour modification. Cogn. Behav. Ther. 6, 185–192 (1977).

Bandura, A. Self-efficacy: toward a unifying theory of behavioral change. Psychol. Rev. 84, 191–215 (1977).

Ganguli, D. et al. The capacity for moral self-correction in large language models. Preprint at https://arXiv.org/2302.07459 (2023).

Kadavath, S. et al. Language models (mostly) know what they know. Preprint at https://arXiv.org/2207.05221 (2022).

Schick, T., Udupa, S. & Schütze, H. Self-diagnosis and self-debiasing: a proposal for reducing corpus-based bias in NLP. Trans. Assoc. Comput. Linguist. 9, 1408–1424 (2021).

Touvron, H. et al. LLaMA: open and efficient foundation language models. Preprint at https://arXiv.org/2302.13971 (2023).

Touvron, H. et al. Llama 2: open foundation and fine-tuned chat models. Preprint at https://arXiv.org/2307.09288 (2023).

Wang, A. et al. GLUE: a multi-task benchmark and analysis platform for natural language understanding. In Proc. 7th International Conference on Learning Representations, ICLR 2019 (OpenReview.net, 2019); https://openreview.net/forum?id=rJ4km2R5t7

Shi, F. et al. Language models are multilingual chain-of-thought reasoners. In Proc. 11th International Conference on Learning Representations, ICLR 2023 (OpenReview.net, 2023); https://openreview.net/pdf?id=fR3wGCk-IXp

See, A., Liu, P. J. & Manning, C. D. Get to the point: summarization with pointer-generator networks. In Proc. 55th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers) (eds Barzilay, R. & Kan, M.-Y.), 1073–1083 (Association for Computational Linguistics, 2017); https://www.aclweb.org/anthology/P17-1099

Narayan, S., Cohen, S. B. & Lapata, M. Don’t give me the details, just the summary! Topic-aware convolutional neural networks for extreme summarization. In Proc. 2018 Conference on Empirical Methods in Natural Language Processing (eds Riloff, E. et al.) 1797–1807 (Association for Computational Linguistics, 2018); https://doi.org/10.18653/v1/d18-1206

Kasai, J., Pappas, N., Peng, H., Cross, J. & Smith, N. A. Deep encoder, shallow decoder: reevaluating non-autoregressive machine translation. In Proc. 9th International Conference on Learning Representations, ICLR 2021 (OpenReview.net, 2021); https://openreview.net/forum?id=KpfasTaLUpq

Rajpurkar, P., Zhang, J., Lopyrev, K. & Liang, P. SQuAD: 100,000+ questions for machine comprehension of text. In Proc. 2016 Conference on Empirical Methods in Natural Language Processing (eds Su, J. et al.) 2383–2392 (Association for Computational Linguistics, 2016); https://doi.org/10.18653/v1/d16-1264

Harnish, R. J. & Bridges, K. R. Effect of syllabus tone: students’ perceptions of instructor and course. Soc. Psychol. Educ. 14, 319–330 (2011).

Madsen Jr, C. H., Becker, W. C. & Thomas, D. R. Rules, praise, and ignoring: elements of elementary classroom control. J. Appl. Behav. Anal. 1, 139–150 (1968).

Li, H., Guo, D., Fan, W., Xu, M. & Song, Y. Multi-step jailbreaking privacy attacks on ChatGPT. Preprint at https://arXiv.org/2304.05197 (2023).

Klimt, B. & Yang, Y. The Enron corpus: a new dataset for email classification research. In European Conference on Machine Learning (eds Boulicaut, J. F. et al.) 217–226 (Springer, 2004).

Pryzant, R. et al. Automatic prompt optimization with ‘gradient descent’ and beam search. Preprint at https://arXiv.org/2305.03495 (2023).

Bubeck, S. et al. Sparks of artificial general intelligence: early experiments with GPT-4. Preprint at https://arXiv.org/2303.12712 (2023).

Let’s chat about ChatGPT. UBS https://www.ubs.com/global/en/wealth-management/our-approach/marketnews/article.1585717.html (2023).

Perez, F. & Ribeiro, I. Ignore previous prompt: attack techniques for language models. Preprint at https://arXiv.org/2211.09527 (2022).

Greshake, K. et al. More than you’ve asked for: a comprehensive analysis of novel prompt injection threats to application-integrated large language models. Preprint at https://arXiv.org/2302.12173 (2023).

Liu, Y. et al. Jailbreaking ChatGPT via prompt engineering: an empirical study. Preprint at https://arXiv.org/2305.13860 (2023).

Shen, X., Chen, Z., Backes, M., Shen, Y. & Zhang, Y. ‘Do anything now’: characterizing and evaluating in-the-wild jailbreak prompts on large language models. Preprint at https://arXiv.org/2308.03825 (2023).

Zhang, T., Liu, F., Wong, J., Abbeel, P. & Gonzalez, J. E. The wisdom of hindsight makes language models better instruction followers. In Proc. International Conference on Machine Learning, ICML 2023 (eds Krause, A. et al.) 41414–41428 (PMLR, 2023); https://proceedings.mlr.press/v202/zhang23ab.html

Devlin, J., Chang, M.-W., Lee, K. & Toutanova, K. BERT: pre-training of deep bidirectional transformers for language understanding. In Proc. 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers) (eds Burstein, J. et al.) 4171–4186 (Association for Computational Linguistics, 2019).

Yi, J. yjw1029/self-reminder-data: v.1.0.0 (Zenodo, 2023); https://doi.org/10.5281/zenodo.10043052

Yi, J. yjw1029/self-reminder: v.1.0.0 (Zenodo, 2023); https://doi.org/10.5281/zenodo.10043044

Acknowledgements

We thank B. Zhu for providing insightful feedback on this work and Q. Chen for invaluable help with the experiment.

Author information

Contributions

Y.X. and F.W. conceived the idea of this work. J.Y. and J.S. implemented the models and conducted experiments. Y.X., F.W., J.Y. and J.S. analysed the results and contributed to the writing of this manuscript. J.C. and L.L. contributed to the writing of this manuscript. Q.C. and X.X. coordinated the research project.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Machine Intelligence thanks Muhao Chen and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 Adaptive attacks.

Illustration of the adaptive attack against Self-Reminder.

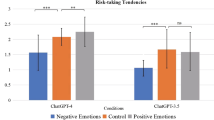

Extended Data Fig. 2 Ablation study.

Illustration of the ablation study with Prefix/Suffix-Only Self-Reminder.

Extended Data Fig. 3 Tone study.

Illustration of the study of Self-Reminder with different tones.

Supplementary information

Supplementary Information

Supplementary Tables 1–6 and discussion.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Xie, Y., Yi, J., Shao, J. et al. Defending ChatGPT against jailbreak attack via self-reminders. Nat Mach Intell 5, 1486–1496 (2023). https://doi.org/10.1038/s42256-023-00765-8

DOI: https://doi.org/10.1038/s42256-023-00765-8