Abstract

With rapid progress in the simulation of strongly interacting quantum Hamiltonians, characterizing unknown phases has become a bottleneck for scientific progress. We demonstrate that a Quantum-Classical hybrid approach (QuCl) of mining sampled projective snapshots with interpretable classical machine learning can unveil signatures of seemingly featureless quantum states. The Kitaev-Heisenberg model on a honeycomb lattice under an external magnetic field presents an ideal system to test QuCl, where simulations have found an intermediate gapless phase (IGP) sandwiched between known phases, launching a debate over its elusive nature. We use the correlator convolutional neural network, trained on labeled projective snapshots, in conjunction with regularization path analysis to identify signatures of phases. We show that QuCl reproduces known features of established phases. Significantly, we also identify a signature of the IGP in the spin channel perpendicular to the field direction, which we interpret as a signature of Friedel oscillations of gapless spinons forming a Fermi surface. Our predictions can guide future experimental searches for spin liquids.


Introduction

As our ability to simulate quantum systems increases, there is a corresponding need to determine how to characterize unknown phases realized in simulators. Going from measurements to the nature of the underlying state is a challenging inverse problem. Full quantum state tomography1 of the density matrix is impractical. Although the classical shadow2 scales better than full tomography, the approach does not tell researchers which observables to evaluate. Viewing the inverse problem as a data problem invites the adoption of machine learning (ML) methods: a quantum-classical hybrid approach. ML has been widely applied to characterizing quantum states3. Such methods have been most fruitful for symmetry-broken states, with a diverse set of approaches increasingly bringing more interpretability and reducing bias4,5,6. The characteristic features of ordered phases are ultimately local and classical; hence ML models tuned for image processing have readily learned such features. By contrast, past learning of quantum states defined without order parameters has relied on theoretically guided feature preparation7,8. However, such reliance on prior knowledge blocks researchers' access to new insights into unknown states, which is the ultimate goal of simulating quantum states.

To push the limits of the nascent quantum-classical hybrid approach, we need a setting known to host a non-trivial quantum phase of unknown nature. Recent investigations into extended Kitaev models9,10,11,12 have led to the observation of a mysterious intermediate gapless phase (IGP) under a non-perturbative [111] magnetic field13,14,15,16. This phase is sandwiched between the Kitaev spin liquid and the trivial polarized state, and identifying it away from the perturbative limit presents an interesting and important puzzle. The nature of this field-induced IGP, however, remains debated in the community.

Several studies have shown evidence supporting a gapless quantum spin liquid phase with an emergent U(1) spinon Fermi surface15,17,18,19. Mean field theories, by contrast, indicate that the low-energy effective theory of the intermediate phase is gapped with a non-zero Chern number20,21. This tension arises from the difficulty of determining the nature of an IGP that forms in a non-perturbative region under a magnetic field. The gapped topological phase adiabatically connected to the exactly solvable limit is characterized by known loop operators7,22,23. The candidate IGP states18,19, in contrast, lack known measurable positive features, which makes this problem a worthy challenge for machine learning.

We present a quantum-classical hybrid approach, QuCl, to reveal characteristic motifs associated with states without known signature features. We treat variational wavefunctions obtained from density matrix renormalization group (DMRG)24,25,26 as output of a quantum simulator. Namely, we sample snapshots from the ground state and train an interpretable neural network architecture, i.e. the correlator convolutional neural network (CCNN)4 (Fig. 1c). Based on the trained network, we use regularization path analysis27 to determine the distinct correlation functions learned by the CCNN as characteristic features of the state captured by snapshots. We benchmark the performance of this hybrid approach on the known phases and confirm that the CCNN learned features are consistent with the known characteristic features. Importantly, we reveal the signature feature of the IGP to imply the existence of a spinon Fermi surface, as proposed in refs.18,19.

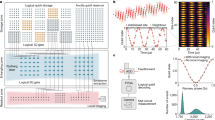

a Honeycomb lattice of the Kitaev model with bond-dependent interactions indicated by the three differently colored bonds. b Phase diagram of the Kitaev-Heisenberg model in an external magnetic field (Eq. (1)) along the three axes Kz, h, and J. c A schematic description of QuCl: (i) From a pair of variational wavefunctions \(\left\vert {\Psi }_{0}\right\rangle\) and \(\left\vert {\Psi }_{1}\right\rangle\), labeled projective measurements ("snapshots") B0 and B1 are generated. (ii) The collection of labeled snapshots is used to train the correlator convolutional neural network (CCNN). (iii) The CCNN is configured with four filters (k = 0, ⋯ , 3), each with three channels, for the binary classification problem, minimizing the distance between the prediction \(\hat{y}\) and the label. (iv) Once training is complete, we fix the filters and use regularization path analysis to reveal signature motifs of the two phases, 0 and 1, under consideration. A correlator weight β that onsets at a negative (positive) value upon reduction of the regularization strength λ signals a feature of phase 0 (1).

Results and discussion

Model

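The Kitaev-Heisenberg model under an external field is defined by

\[ H=\sum_{\gamma=x,y,z}\;\sum_{\langle ij\rangle_{\gamma}} K_{\gamma}\,S_i^{\gamma}S_j^{\gamma}\;-\;J\sum_{\langle ij\rangle}\mathbf{S}_i\cdot\mathbf{S}_j\;-\;h\sum_{i}\mathbf{S}_i\cdot\hat{e}_3, \tag{1} \]
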
where γ = x, y, z enumerates the three colorings of bonds on the honeycomb lattice (Fig. 1a), and Sγ is the γ component of the spin-1/2 operator on each site. We also add a uniform Zeeman field h along the [111] direction, i.e., the out-of-plane \({\hat{e}}_{3}\) direction in the lab frame (see also Supplementary Note III), as well as a ferromagnetic Heisenberg term of strength J. Here we investigate the antiferromagnetic Kitaev interaction (K > 0), for which a field in the [111] direction gives rise to an intermediate phase over a significant field range. For the ferromagnetic Kitaev interaction, on the other hand, the intermediate phase is either absent or exists only over a very small field range18. Starting from the exactly solvable point Kx = Ky = Kz = 1, h = J = 0, which is a nodal \({{\mathbb{Z}}}_{2}\) spin liquid28, we consider three axes of the phase diagram controlled by the parameters h, J, and Kz (Fig. 1b).

For the J axis of the phase diagram in Fig. 1b, the system undergoes a sequence of transitions through magnetically ordered states11. For small values of J, the system preserves time-reversal symmetry and remains a gapless \({{\mathbb{Z}}}_{2}\) spin liquid. As J is increased, the system acquires a zigzag magnetic order (also observed experimentally in α-RuCl3; refs. 11,29,30). At even larger values of J, the system eventually becomes a Heisenberg ferromagnet. On the other hand, a small magnetic field h∥[111] breaks the time-reversal symmetry of the Kitaev model and opens a gap in the spectrum of the free Majorana fermions, resulting in a chiral spin liquid (CSL)28. However, upon leaving the perturbative regime, numerical evidence from DMRG19 and exact diagonalization16,18 has shown that the system goes through an IGP before entering a partially polarized (PP) magnetic phase. Although the precise nature of the IGP is unknown, a U(1) spinon Fermi surface has been proposed recently18,19, which, as we will show, agrees with our CCNN results. Finally, the Kitaev model has an exact solution along the Kz axis when J = h = 0 (ref. 28), where the system undergoes a transition to a gapped \({{\mathbb{Z}}}_{2}\) spin liquid upon increasing Kz. We use this axis to benchmark the QuCl outcome against known exact results.

In order to generate a single snapshot from a wavefunction, we perform the following procedure sequentially on each site i of the lattice:

1. Find the reduced density matrix for site i, and exactly evaluate the expectation value of the spin operator projected along the chosen axis αi.
2. Choose eigenvalue + or − with probabilities \({P}_{+}=\frac{1+\langle {\sigma }_{i}^{\alpha }\rangle }{2}\), P− = 1 − P+; record the eigenvalue and axis of projection.
3. Collapse the wavefunction onto the associated eigenstate of site i using the projector \({\left\vert \pm \alpha \right\rangle }_{i}{\left\langle \pm \alpha \right\vert }_{i}\), and renormalize it.
4. Repeat steps 1-3 until every site is addressed.
5. Organize the snapshots into channels, one for each unique axis αi; see Fig. 1c.
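The sampling steps above can be sketched for a system small enough to store as a dense state vector. This is a minimal illustration under stated assumptions, not the authors' implementation. The paper samples DMRG wavefunctions, whereas here the state is a dense NumPy vector, so only a handful of sites is feasible. The axis for each site is drawn uniformly from x, y, z, as done for the J and Kz axes. The channel encoding in the hypothetical helper `to_channels` (±1 in the measured channel, 0 elsewhere, sites in a flat list) is also an assumed convention.

```python
import numpy as np

# Pauli matrices sigma^x, sigma^y, sigma^z (spin-1/2 operators are S = sigma / 2)
PAULI = {
    "x": np.array([[0, 1], [1, 0]], dtype=complex),
    "y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "z": np.array([[1, 0], [0, -1]], dtype=complex),
}
AXES = ("x", "y", "z")


def sample_snapshot(psi, n_sites, rng):
    """Sequential single-site projective sampling (steps 1-4) of a dense state vector."""
    state = psi.reshape((2,) * n_sites).astype(complex)
    outcomes, axes = [], []
    for i in range(n_sites):
        ax = AXES[rng.integers(3)]  # assumed: random axis alpha_i
        # Step 1: reduced density matrix of site i and <sigma_i^alpha>
        m = np.moveaxis(state, i, 0).reshape(2, -1)
        rho = m @ m.conj().T
        expval = np.real(np.trace(rho @ PAULI[ax]))
        # Step 2: outcome +1 with probability P+ = (1 + <sigma>) / 2
        s = 1 if rng.random() < (1.0 + expval) / 2.0 else -1
        # Step 3: project site i onto the eigenstate with eigenvalue s, then renormalize
        evals, evecs = np.linalg.eigh(PAULI[ax])
        v = evecs[:, np.argmin(np.abs(evals - s))]
        proj = np.outer(v, v.conj())
        state = np.moveaxis(np.tensordot(proj, state, axes=([1], [i])), 0, i)
        state = state / np.linalg.norm(state)
        outcomes.append(s)
        axes.append(ax)
    return outcomes, axes


def to_channels(outcomes, axes):
    """Step 5 (assumed encoding): one channel per axis, +/-1 where measured, 0 elsewhere."""
    snap = np.zeros((len(AXES), len(outcomes)))
    for site, (s, ax) in enumerate(zip(outcomes, axes)):
        snap[AXES.index(ax), site] = s
    return snap
```

A quick sanity check is a two-site singlet. Whenever both sites happen to be measured along the same axis, the recorded outcomes are opposite, because collapsing the first spin fixes the second.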

The choice of axis αi is random for the J and Kz axes but tailored to the target phases for the h axis. The wavefunctions at phase space points of interest are obtained using DMRG on finite-size systems of 6 × 5 unit cells (60 sites). In Supplementary Note III we also show results for an extended 20 × 3-unit-cell (120 sites) cylinder geometry. In both cases we kept a maximum bond dimension of 1200, giving converged results with a truncation error of ~10⁻⁷ or less in all phases. For each wavefunction in question, we generated 10,000 snapshots. Each resulting snapshot forms a three-dimensional array of bits, with two spatial dimensions and a "channel" dimension (see Fig. 1c). Such a collection of snapshots is a classical shadow of the quantum state2. Our goal is to characterize a quantum state without prior knowledge of the best operator to measure. We therefore treat the snapshot collection as data rather than using it to estimate an operator expectation value as in refs. 2,31.

For each axis of the phase diagram, we set up a binary classification problem between a pair of phase space points, \(\left\vert {\Psi }_{0}\right\rangle\) and \(\left\vert {\Psi }_{1}\right\rangle\), each deep within a phase. The machine learning architecture of choice, the CCNN, was introduced in ref. 4 as an adaptation of a convolutional neural network. In it, a controlled polynomial nonlinearity splits into different orders of correlators for the network to use (Fig. 1c). Compared to the more standard CNN architecture, the CCNN has reduced expressivity because it uses a low-order polynomial as its nonlinearity. In exchange, what the network learns becomes interpretable, since it can be analytically connected to the traditional notion of correlation functions. Combined with regularization path analysis (RPA; see Methods and Supplementary Note I for details)32, the CCNN can reveal spatial correlations or motifs that are characteristic of a given phase.

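For each filter k, with channel-α weights fk,α to be learned, the CCNN computes from each snapshot bit-string Bα(x) an estimate of the n-th order spatially averaged correlator associated with filter k,

\[ c_k^{(n)}=\sum_{\mathbf{x}}\;\sum_{(\mathbf{a}_1,\alpha_1)\neq\cdots\neq(\mathbf{a}_n,\alpha_n)}\;\prod_{j=1}^{n} f_{k,\alpha_j}(\mathbf{a}_j)\,B^{\alpha_j}(\mathbf{x}+\mathbf{a}_j), \tag{2} \]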

where the inner sum is over all sets of n distinct pairs (aj, αj) of filter positions and filter channels. These correlator estimates are then coupled to coefficients \({\beta }_{k}^{(n)}\) of the linear layer (Fig. 1c; green arrows) according to \(\hat{y}={\left[1+\exp (-{\sum }_{n,k}{\beta }_{k}^{(n)}{c}_{k}^{(n)})\right]}^{-1}\), where \(0\le \hat{y}\le 1\) is the CCNN output for the given input snapshot. We reserved 1000 samples from each wavefunction as a validation set and used the remaining 9000 for training. The orders of correlators were restricted to lie between 2 and 6, inclusive. We allowed the neural network to learn up to 4 different filters, corresponding to 0 ≤ k ≤ 3. Training optimizes the model parameters, namely the filters and the weights, by comparing the output \(\hat{y}\) to the training label (see Methods).
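At each position x, the nested sum over distinct (position, channel) pairs equals the elementary symmetric polynomial of order n in the windowed values z = f(a)·B(x + a). Newton's identities build that polynomial from the cheap power sums p_i = Σ z^i, which we believe mirrors the recursive algorithm of ref. 4. The sketch below is illustrative only. It assumes a plain square grid with no stride and one filter per call, uses NumPy ≥ 1.20 for `sliding_window_view`, and the function names are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def correlator_maps(snapshot, filt, max_order):
    """Position-dependent correlators C^(n)(x), n = 0..max_order, for one filter.

    snapshot: (channels, Lx, Ly) array of values B^alpha(x)
    filt:     (channels, hx, hy) array of nonnegative weights f_alpha(a)
    Returns (max_order + 1, Lx - hx + 1, Ly - hy + 1), valid positions only.
    """
    _, hx, hy = filt.shape
    # windows[alpha, x, y, i, j] = B^alpha(x + i, y + j)
    windows = sliding_window_view(snapshot, (hx, hy), axis=(1, 2))
    z = windows * filt[:, None, None, :, :]  # z = f_alpha(a) * B^alpha(x + a)
    # power sums p_i(x) = sum over (alpha, a) of z**i
    p = [None] + [(z ** i).sum(axis=(0, 3, 4)) for i in range(1, max_order + 1)]
    # Newton's identities: n e_n = sum_{i=1}^{n} (-1)^(i-1) e_{n-i} p_i
    e = [np.ones(p[1].shape)]
    for n in range(1, max_order + 1):
        e.append(sum((-1) ** (i - 1) * e[n - i] * p[i] for i in range(1, n + 1)) / n)
    return np.stack(e)


def ccnn_output(snapshot, filters, beta, orders=range(2, 7)):
    """Logistic output y_hat = 1 / (1 + exp(-sum_{n,k} beta[k, n] * c_k^(n)))."""
    logit = 0.0
    for k, filt in enumerate(filters):
        # c_k^(n) = sum over x of C_k^(n)(x)
        c = correlator_maps(snapshot, filt, max(orders)).sum(axis=(1, 2))
        logit += sum(beta[k, n] * c[n] for n in orders)
    return 1.0 / (1.0 + np.exp(-logit))
```

The recursion costs one convolution per power i. A direct sum over all n-element subsets of the window grows combinatorially with n. This is why only orders up to 6 remain cheap here.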

Once the CCNN is successfully trained for a given pair of phases, we use RPA32 to uncover the characteristic motif that is most informative for the contrast. For this, we fix the filters and relearn the weights \({\beta }_{k}^{(n)}\) of the learned correlations with a regularization term that penalizes their magnitude with strength λ (see Methods). The \({\beta }_{k}^{(n)}\) that turns on at the lowest value of 1/λ, i.e., under the strongest regularization, points to the filter k and correlation order n that are most informative for the contrast task. The sign of the onsetting \({\beta }_{k}^{(n)}\) reveals whether the associated correlation is a feature of phase 0 ( − sign) or of phase 1 ( + sign); see Fig. 1c.
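The regularization path can be sketched as follows, assuming an L1 (lasso-type) penalty on the β's. The features X are assumed to be precomputed and standardized correlator estimates, with one row per snapshot and one column per (k, n) pair. The penalized logistic regression is solved here by proximal gradient descent (ISTA), which is our stand-in and not necessarily the authors' solver. The function names are hypothetical.

```python
import numpy as np


def l1_logistic(X, y, lam, n_iter=2000, lr=0.1):
    """Minimize mean binary cross-entropy + lam * sum|beta| by proximal gradient (ISTA).

    No intercept, matching y_hat = sigmoid(sum beta * c); features should be standardized.
    """
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        y_hat = 1.0 / (1.0 + np.exp(-X @ beta))
        grad = X.T @ (y_hat - y) / len(y)
        beta = beta - lr * grad
        # soft-thresholding = proximal step for the L1 penalty
        beta = np.sign(beta) * np.maximum(np.abs(beta) - lr * lam, 0.0)
    return beta


def regularization_path(X, y, lams):
    """Refit beta for each regularization strength; lams ordered from strong to weak."""
    return np.array([l1_logistic(X, y, lam) for lam in lams])


def first_onset(path):
    """Column index of the first weight to turn on along the path, and its sign."""
    for betas in path:
        active = np.flatnonzero(betas)
        if active.size:
            j = active[np.argmax(np.abs(betas[active]))]
            return j, int(np.sign(betas[j]))  # +1: feature of phase 1, -1: feature of phase 0
    return None, 0
```

At large λ every weight is clamped to zero. As λ decreases, the first feature whose gradient magnitude exceeds λ turns on, and its sign follows whether it is larger in label-1 or label-0 snapshots.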

Gapless \({{\mathbb{Z}}}_{2}\) versus Heisenberg phases

As a benchmark, we first focus on the phases along the J axis (Fig. 2a). At intermediate J, the system has zigzag order, while it is a Heisenberg ferromagnet for large J. We trained the CCNN to distinguish wavefunctions from the two points marked by stars in Fig. 2a, at J/K = 0 and K/J = 0, corresponding to the Kitaev spin liquid and Heisenberg ferromagnetic states, respectively. The snapshots were generated by choosing a random axis from x, y, or z for each site. The RPA shown in Fig. 2b reveals that the most informative correlation functions are the two-point functions of filters 2 and 3 presented in Fig. 2a. The negative sign of the onsetting β’s means these features are positive indicators of the ordered phase (see Supplementary Note I). Given that the correlation length vanishes at the exactly solvable point at the origin (phase 0), the network’s choice to focus on features of phase 1 is sensible. Moreover, the learned motif of phase 1 is clearly consistent with a ferromagnetic correlation. Hence this benchmarking confirms that the CCNN’s learning is consistent with our theoretical understanding when both phases 0 and 1 are known.

a Ground state wavefunctions from the gapless \({{\mathbb{Z}}}_{2}\) phase and the ferromagnetically ordered phase are obtained at the points on the J-axis marked by stars. The highlighted box shows the two most informative filters for the classification task. The pink and blue dots in the filters correspond to projections in the z and y bases. b The regularization path analysis results pointing to the filters in panel a as signature motifs of the ordered phase.

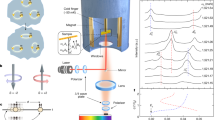

Chiral spin liquid

Next, we contrast the CSL phase (phase 1) and the IGP (phase 0) along the h axis (Fig. 3a). Neither of these phases is characterized by a local order parameter. However, the chiral phase is known to be a \({{\mathbb{Z}}}_{2}\) quantum spin liquid characterized by non-local Wilson loop expectation values28. To confirm that such non-local information can be learned with our architecture, we first use snapshots in the fixed basis shown in Fig. 3c, so that the architecture can access the necessary information. The positive onset of \({\beta }_{0}^{(6)}\) in the RPA (Fig. 3d) implies that a sixth-order correlator of the filter shown in Fig. 3a is learned to be the key indicator of phase 1, the CSL phase. Remarkably, the relevant correlator \(\langle {\sigma }_{1}^{z}{\sigma }_{2}^{x}{\sigma }_{3}^{y}{\sigma }_{4}^{z}{\sigma }_{5}^{x}{\sigma }_{6}^{y}\rangle\) is exactly the expectation value 〈Wp〉 of the Wilson loop associated with the plaquette p consisting of the six sites, shown in Fig. 3b. Theoretically, 〈Wp〉 ≈ 1 implies that the state is well described by the \({{\mathbb{Z}}}_{2}\) gauge theory of the zero-field gapless phase28. The fact that 〈Wp〉, and nothing else, was learned to contrast the CSL phase with the intermediate gapless phase reveals that the latter is a distinct state. However, discovering an indicator of the intermediate phase requires a different approach, as we discuss below.
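The six Pauli operators in Wp act on different sites and commute. As a result, each fixed-basis snapshot yields one sample of Wp, namely the product of the six recorded ±1 outcomes. A minimal estimator is sketched below. Its input layout is an assumption: one row per snapshot, with columns ordered as plaquette sites 1-6 measured along z, x, y, z, x, y. The synthetic data are purely illustrative.

```python
import numpy as np

# Measurement axis of plaquette sites 1..6, matching
# <sigma_1^z sigma_2^x sigma_3^y sigma_4^z sigma_5^x sigma_6^y>
PLAQUETTE_AXES = ("z", "x", "y", "z", "x", "y")


def wilson_loop_estimate(outcomes):
    """Mean and standard error of W_p from snapshots of shape (n_snapshots, 6) with +/-1 entries."""
    outcomes = np.asarray(outcomes, dtype=float)
    w = np.prod(outcomes, axis=1)  # one W_p eigenvalue (+1 or -1) per snapshot
    return w.mean(), w.std(ddof=1) / np.sqrt(len(w))


rng = np.random.default_rng(0)
# Illustrative data only: five random outcomes, the sixth fixed so every product is +1
five = rng.choice([-1.0, 1.0], size=(1000, 5))
flux_free = np.column_stack([five, np.prod(five, axis=1)])
mean_wp, err_wp = wilson_loop_estimate(flux_free)  # mean 1.0, standard error 0.0
```

In this picture, the CCNN's sixth-order correlator plays the same role as the product above. The network has to find the right filter and order by itself rather than being told to compute Wp.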

a Ground state wavefunctions from the chiral spin liquid (CSL) phase and the intermediate gapless phase (IGP) are obtained at the points on the h-axis marked by stars. The highlighted box shows the most informative filter that signifies the CSL phase. Inset shows the bond anisotropy for the three colorings of bonds, e.g., x refers to a \({S}_{i}^{x}{S}_{j}^{x}\) coupling. b The plaquette operator Wp is an operator defined on the six sites around a hexagonal plaquette. c A sample snapshot from the CSL phase showing the measurement basis that makes the plaquette operator Wp accessible. d The regularization path analysis pointing to the six-point correlator of the filter in panel a as an indicator of the CSL.

Intermediate gapless phase

We next discuss how we discover physically meaningful features of the IGP (phase 0). Previous work has focused on mapping out the low-energy excitations S(q, ω ≈ 0) in momentum space. In real space, the spin-spin correlations averaged over all directions show power-law decay, indicating gapless spin excitations at intermediate fields. However, it has not been clear how to translate these correlations into positive signatures of a particular state that can be detected experimentally. The QuCl approach has the potential to reveal such signatures. First, however, we have to overcome a ubiquitous challenge of using ML for scientific discovery: the need to guide the machine away from trivial features. The unbiased search for representative features in data is the benefit of using ML. A non-trivial cost is that the neural network's learning can be dominated by features that are trivial from the physicist's perspective. Because the network tends to base its decisions on whatever is most visible in the data, it is essential to guide the CCNN away from the trivial yet dominant difference between phase 0 and phase 1: the field-driven magnetization along the e3 axis (see Supplementary Note II). This basic requirement for extracting meaningful information using ML led us to supply the CCNN with snapshots in a basis orthogonal to the field direction, such as the e1 basis (see Fig. 4a). This decision to guide the CCNN away from trivial features led to the sought-after discovery.
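A short calculation shows why the e1 channel hides the trivial field-induced magnetization: a spin whose Bloch vector points along e3 has zero average along any axis perpendicular to it. The sketch below builds an orthonormal frame with e3 ∥ [111]. The particular choice e1 ∝ (1, 1, −2) is a hypothetical convention, since the paper's frame is specified in Supplementary Note III. `sigma_along` is an illustrative helper, not a library function.

```python
import numpy as np

# (sigma^x, sigma^y, sigma^z) stacked along the first axis
SIGMA = np.array([
    [[0, 1], [1, 0]],
    [[0, -1j], [1j, 0]],
    [[1, 0], [0, -1]],
], dtype=complex)


def field_frame():
    """Orthonormal (e1, e2, e3) with e3 along [111]; the choice of e1 is an assumption."""
    e3 = np.array([1.0, 1.0, 1.0]) / np.sqrt(3)
    e1 = np.array([1.0, 1.0, -2.0]) / np.sqrt(6)  # hypothetical in-plane axis, e1 . e3 = 0
    e2 = np.cross(e3, e1)
    return e1, e2, e3


def sigma_along(n):
    """Pauli operator n . sigma for a unit vector n; its eigenvectors define n-basis snapshots."""
    return np.tensordot(n, SIGMA, axes=1)


e1, e2, e3 = field_frame()
# Spin fully polarized along the field: +1 eigenvector of e3 . sigma
vals, vecs = np.linalg.eigh(sigma_along(e3))
up_e3 = vecs[:, np.argmax(vals)]
m_e1 = np.real(up_e3.conj() @ sigma_along(e1) @ up_e3)  # vanishes up to rounding
m_e3 = np.real(up_e3.conj() @ sigma_along(e3) @ up_e3)  # equals 1 up to rounding
```

A uniform polarization along e3 therefore contributes nothing to the e1 channel. Any structure that survives there, such as a spatial modulation, is not the trivial field response.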

a The rotated basis vectors in relation to the cardinal axes; the external field is along e3. The signature of the intermediate gapless phase (IGP) is targeted using e1-basis snapshots from the same pair of wavefunctions as in Fig. 3a. b The regularization path analysis results pointing to the filter in c as an indicator of the IGP. c Most significant filter learned by the correlator convolutional neural network to be associated with the IGP. d Fourier transform of the filter in c, with black lines indicating the first and extended Brillouin zones. Green circles mark six Bragg peaks associated with the filter tiling pattern in e. e Simplest possible tiling of the filter shown in panel c, resulting in a superlattice of antiferromagnetic stripes. The Bragg peaks of this tiling pattern are marked by green circles in panels d and g. f On-site magnetization \(\langle {S}_{i}^{{e}_{1}}\rangle\) of the wavefunction \(\left\vert {\Psi }_{0}\right\rangle\) in the IGP. g Real part of the Fourier transform of f, again with the Bragg peaks of the antiferromagnetic tiling marked (the imaginary part is negligible). h Real part of the Fourier transform of \(\langle {S}_{i}^{{e}_{1}}\rangle\) of the wavefunction \(\left\vert {\Psi }_{1}\right\rangle\) in the CSL phase, showing no discernible features. i The perpendicular magnetization \(\langle {S}^{{e}_{1}}(r)\rangle\) as a function of distance from the boundary for various values of field strength h, showing decreasing modulation period with increasing field strength. Solid lines show fitted curves based on Eq. (3).

The RPA shown in Fig. 4b unambiguously points to two-point correlators of the filter shown in Fig. 4c as a signature feature of the IGP. As is clear from its Fourier transform shown in Fig. 4d, the filter implies the emergence of a length scale in the e1 component of the magnetization. The e1 direction is perpendicular to the field h, so no net e1 magnetization is expected. The repeating arrangement of the motif detected by the filter must therefore be antiferromagnetic. One candidate ansatz, shown in Fig. 4e, singles out the specific momenta marked in Fig. 4d from the Fourier intensity of the filter (see Supplementary Note IV for more details). To test this conjecture, we explicitly measure the per-site e1-magnetization, \(\langle {S}^{{e}_{1}}(r)\rangle\), of the two states. The measurement outcome (Fig. 4f) and its Fourier transform (Fig. 4g) confirm that the IGP state indeed has the modulating e1-magnetization we inferred from the CCNN-learned filter and the ansatz tiling the filter. Furthermore, the contrast between the Fourier transforms from the IGP (Fig. 4g) and from the CSL (Fig. 4h) establishes that the pattern and the associated length scale are unique features of the IGP. Remarkably, we find this modulation to be consistent with the conjecture15,16,17,18,19,22,33 that the IGP is a U(1) spin liquid with a spinon Fermi surface. Note that in a translationally invariant system the corresponding quantity is the two-point spin-spin correlation function \(\langle {S}^{{e}_{1}}(0){S}^{{e}_{1}}(r)\rangle\) and its Fourier transform.
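Figs. 4d and 4g go from a real-space pattern to Bragg peaks using a discrete Fourier transform over honeycomb site positions. The sketch below makes several assumptions:

- lattice constant 1, with primitive vectors a1 = (1, 0) and a2 = (1/2, √3/2);
- a second-sublattice offset of (a1 + a2)/3;
- a 6 × 5-unit-cell patch.

The stripe pattern m(r) = (−1)^n1 is a hypothetical stand-in chosen because its peak can be verified by hand. It is not the tiling of Fig. 4e.

```python
import numpy as np

A1 = np.array([1.0, 0.0])
A2 = np.array([0.5, np.sqrt(3) / 2])
DELTA = (A1 + A2) / 3  # assumed offset of the second sublattice (a nearest-neighbour vector)


def honeycomb_sites(l1, l2):
    """Positions and (n1, n2, sublattice) labels of an l1 x l2-unit-cell patch."""
    labels = [(n1, n2, s) for n1 in range(l1) for n2 in range(l2) for s in (0, 1)]
    pos = np.array([n1 * A1 + n2 * A2 + s * DELTA for n1, n2, s in labels])
    return pos, labels


def reciprocal_vectors():
    """b1, b2 with a_i . b_j = 2 pi delta_ij."""
    b = 2 * np.pi * np.linalg.inv(np.vstack([A1, A2])).T
    return b[0], b[1]


def fourier(m, pos, q):
    """m(q) = (1/N) sum_r m(r) exp(-i q . r) at a single wavevector q."""
    return np.sum(m * np.exp(-1j * (pos @ q))) / len(m)


pos, labels = honeycomb_sites(6, 5)
stripes = np.array([(-1.0) ** n1 for n1, _, _ in labels])  # hypothetical stripe pattern
b1, b2 = reciprocal_vectors()
peak = fourier(stripes, pos, b1 / 2)        # |peak| = sqrt(3) / 2 for this pattern
gamma = fourier(stripes, pos, np.zeros(2))  # no net magnetization at q = 0
```

For this pattern the sublattice offset contributes a factor |1 + e^{-iπ/3}|/2 = √3/2 at q = b1/2. The sign alternation cancels exactly at q = 0, mirroring the absence of a net perpendicular moment.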

As detailed in Supplementary Note III, \(\langle {S}^{{e}_{1}}(r)\rangle\) can be mapped to fermionic spinon density in the Kitaev model. If spinons are gapless and deconfined to form a spinon Fermi surface, the Friedel oscillation of the spinon density due to the open boundary34,35,36,37 will be reflected in the modulation of \(\langle {S}^{{e}_{1}}(r)\rangle\):

where C and θ are constants, and r is the distance measured from the boundary. We confirm the spinon Friedel oscillation origin of the observed modulations by fitting \(\langle {S}^{{e}_{1}}(r)\rangle\) measured at different field strengths h to Eq. (3). The excellent fit in Fig. 4i shows that the modulation period increases with increasing perpendicular field h. This is consistent with a mean field picture in which the magnetic field plays the role of a chemical potential: the spinon bands are successively depleted upon increasing the field until the system enters a trivial phase through a Lifshitz transition38. Evaluating \({S}^{{e}_{1}}\) on a longer 3-leg ladder of 20 unit cells yields a modulation pattern that agrees with the 6-leg ladder results in Fig. 4i (see Supplementary Note III).

Gapless \({{\mathbb{Z}}}_{2}\) vs gapped \({{\mathbb{Z}}}_{2}\)

Finally, as a sanity check on distinguishing two spin liquid phases, we contrast the gapless and gapped \({{\mathbb{Z}}}_{2}\) phases along the Kz axis. As Kz is tuned, the model of Eq. (1) is known to go through a phase transition between gapless and gapped \({{\mathbb{Z}}}_{2}\) spin liquid phases28. However, both phases have only short-range correlations in the ground state. Unlike for usual transitions between a gapless and a gapped phase, the distinction therefore cannot be learned from correlation lengths. Hence it is a non-trivial benchmark for QuCl-based state characterization. Contrasting the two points marked by stars in Fig. 5a, again using random-basis snapshots, we find signature motifs consistent with exact solutions. Specifically, the RPA (Fig. 5b) shows that nearest-neighbor correlation functions along the x and y axes are features of the gapped \({{\mathbb{Z}}}_{2}\) phase, while the z-axis nearest-neighbor correlation function is the feature of the gapless \({{\mathbb{Z}}}_{2}\) phase. These results are consistent with the exact solution of the zero-field Kitaev model39,40.

a Ground state wavefunctions from the gapless \({{\mathbb{Z}}}_{2}\) phase and the gapped \({{\mathbb{Z}}}_{2}\) phase are obtained at the points on the Kz-axis marked by stars. The highlighted boxes show the most informative filters that signify each phase. b The regularization path analysis results associate two-point correlators of filters 0 and 2 with the gapped phase and that of filter 1 with the gapless phase.

Conclusion

The significance of our findings is threefold. Firstly, we gained insight into the intermediate-field spin liquid phase in the Kitaev-Heisenberg model. We were confronted with two competing predictions for the same region: a gapless spin liquid based on exact diagonalization and DMRG, and a gapped spin liquid from mean field theory. Identifying a positive signature for either possibility was therefore critical. The need to guide the CCNN away from a trivially changing feature led to the realization that it is critical to focus on snapshots taken along a direction e1 perpendicular to the magnetic-field axis e3. Remarkably, the network then learned a geometric pattern characteristic of Friedel oscillations of spinons in the IGP. This observation strongly supports earlier theoretical proposals of a spinon Fermi surface in the IGP, thus advancing our understanding of this phase.

Secondly, our discovery translates into a prediction for experiments. It identifies direct evidence of the spinon Fermi surface in the modulated magnetization and in the spin-spin correlations perpendicular to the field direction, along e1. Such a feature in the computational data has previously been missed because the focus has been on the isotropic spin correlation \(\langle {{{\bf{S}}}}_{i}\cdot {{{\bf{S}}}}_{j}\rangle\), which is dominated by the e3 component. Our results can guide future experimental searches for spin liquids with spinon Fermi surfaces.

Finally, on a broader level, we have demonstrated that hidden features of a quantum many-body state can be discovered using QuCl, a data-centric approach to snapshots of quantum states that employs interpretable classical machine learning. Conventionally, quantum states have been studied through explicit and costly evaluation of correlation functions. However, when the descriptive correlation function of a new phase is unknown, the conventional approach gets lost in an overwhelming space of expensive calculations. QuCl operates directly on snapshots and does not explicitly evaluate the correlation functions it extracts. It can therefore enable computationally efficient identification of new phases associated with a quantum state, including topological states or states with hidden orders. Moreover, our method is broadly applicable to searches for physical indicators of states prepared on quantum simulators, which are naturally accessed through projective measurements.

Methods

In this section, we describe the architecture of the neural network and the training procedure. The CCNN, as first proposed in ref. 4, consists of two layers: the correlator convolutional layer and the fully connected linear layer. As input to the CCNN we fed three-spin-channel snapshot data (two-spin-channel for rotated-basis measurements). Since the CCNN was originally designed for square-lattice data, we reinterpreted our honeycomb lattice geometry as a rectangular grid with a 1 × 2 unit cell forming its two-site basis. We modified the convolutional layer to consist of 4 different learnable filters of dimension 2 × 2 unit cells, for a total receptive field of 8 sites each. To accommodate the 1 × 2 unit cell, we also introduced a horizontal stride of 2 in the convolution operation between filters and snapshots.
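One way to realize the 1 × 2 unit-cell embedding and the stride-2 convolution windows is sketched below. The layout is an assumption for illustration: the two sites of each unit cell are placed side by side in a row, giving a brick-wall grid. The function names are hypothetical. With 2 × 2-unit-cell filters, each window covers 2 rows × 4 columns = 8 sites, matching the receptive field quoted above.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def to_grid(site_values):
    """(channels, rows, cols, 2) honeycomb data -> (channels, rows, 2 * cols) brick-wall grid.

    Site s of unit cell (r, c) lands in column 2 * c + s (an assumed 1 x 2 cell layout).
    """
    ch, rows, cols, _ = site_values.shape
    return site_values.reshape(ch, rows, 2 * cols)


def unit_cell_windows(grid, cells=(2, 2)):
    """Receptive fields of cells[0] x cells[1] unit cells with horizontal stride 2."""
    h, w = cells[0], 2 * cells[1]  # 2 x 2 unit cells -> 2 rows x 4 columns = 8 sites
    win = sliding_window_view(grid, (h, w), axis=(1, 2))  # (ch, rows-h+1, cols2-w+1, h, w)
    return win[:, :, ::2]  # keep only windows that start on a unit-cell boundary
```

The stride of 2 keeps every window aligned to whole unit cells. A filter weight at a given offset therefore always sees the same sublattice.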

The filter weights are learnable nonnegative numbers denoted fα,k(a), where 0 ≤ k ≤ 3 indexes the filter, 1 ≤ α ≤ 3 indexes the channel of the weight, and a is a spatial coordinate. The weights are convolved with the input snapshots using the recursive algorithm described in ref. 4 to produce per-snapshot correlators

\[ C_k^{(n)}(\mathbf{x})=\sum_{(\mathbf{a}_1,\alpha_1)\neq\cdots\neq(\mathbf{a}_n,\alpha_n)}\;\prod_{j=1}^{n} f_{\alpha_j,k}(\mathbf{a}_j)\,B^{\alpha_j}(\mathbf{x}+\mathbf{a}_j), \tag{4} \]

where \({C}_{k}^{(n)}(\mathbf{x})\) is the position-dependent n-th order correlator of filter k, and \(B^{\alpha_j}(\mathbf{x}+\mathbf{a}_j)\) is the snapshot value at location x + aj in channel αj. The correlator estimates are then defined as the spatially averaged correlators, \({c}_{k}^{(n)}={\sum }_{\mathbf{x}}{C}_{k}^{(n)}(\mathbf{x})\). These are coupled to the coefficients \({\beta }_{k}^{(n)}\) of the linear layer and summed to produce the logistic regression classification output

\[ \hat{y}=\left[1+\exp\left(-\sum_{n,k}\beta_k^{(n)}c_k^{(n)}\right)\right]^{-1}, \tag{5} \]

which is constrained to the range \(0\le \hat{y}\le 1\). For a visual overview of the architecture, see Fig. 6.

Input snapshots Bα(x) (with α enumerating the spin channels) are shaped into rectangular data arrays with a 1 × 2 unit cell. The inputs are convolved with filters to obtain orders n = 2…6 of correlations \({C}_{k}^{(n)}(x)\) (Equation (4)), then spatially averaged to obtain correlator estimates \({c}_{k}^{(n)}\). Correlator estimates are then used in a logistic regression to predict the class of the input snapshot.

During training, the weights of the network are updated with stochastic gradient descent to optimize the loss function

where y ∈ {0, 1} is the ground-truth label of the snapshot, and γ1 and γ2 are the L1 and L2 regularization strengths, respectively. We took γ1 = 0.005 and γ2 = 0.002. Training was performed for 20 epochs of 9000 snapshots each with a learning rate of 0.006, using the Adam optimizer. For the regularization path analysis, the filter weights f are kept fixed, and the model is retrained in the same way with the loss function

where γ is the regularization strength to be swept over.

Data availability

Data is available upon request to the authors.

Code availability

Code is available upon request to the authors.

References

James, D. F. V., Kwiat, P. G., Munro, W. J. & White, A. G. Measurement of qubits. Phys. Rev. A 64, 052312 (2001).

Huang, H.-Y., Kueng, R. & Preskill, J. Predicting many properties of a quantum system from very few measurements. Nat. Phys. 16, 1050–1057 (2020).

Carrasquilla, J. Machine learning for quantum matter. Adv. Phys.: X 5, 1797528 (2020).

Miles, C. et al. Correlator convolutional neural networks as an interpretable architecture for image-like quantum matter data. Nat. Commun. 12, 3905 (2021).

Arnold, J. & Schäfer, F. Replacing neural networks by optimal analytical predictors for the detection of phase transitions. Phys. Rev. X 12, 031044 (2022).

Miles, C. et al. Machine learning discovery of new phases in programmable quantum simulator snapshots. Phys. Rev. Res. 5, 013026 (2023).

Zhang, Y., Melko, R. G. & Kim, E.-A. Machine learning \({{\mathbb{Z}}}_{2}\) quantum spin liquids with quasiparticle statistics. Phys. Rev. B 96, 245119 (2017).

Huang, H.-Y., Kueng, R., Torlai, G., Albert, V. V. & Preskill, J. Provably efficient machine learning for quantum many-body problems. Science 377, eabk3333 (2022).

Hermanns, M., Kimchi, I. & Knolle, J. Physics of the Kitaev model: fractionalization, dynamic correlations, and material connections. Annu. Rev. Condens. Matter Phys. 9, 17–33 (2018).

Knolle, J. & Moessner, R. A field guide to spin liquids. Annu. Rev. Condens. Matter Phys. 10, 451–472 (2019).

Gohlke, M., Verresen, R., Moessner, R. & Pollmann, F. Dynamics of the Kitaev-Heisenberg model. Phys. Rev. Lett. 119, 157203 (2017).

Trebst, S. & Hickey, C. Kitaev materials. Phys. Rep. 950, 1–37 (2022).

Zhu, Z., Kimchi, I., Sheng, D. N. & Fu, L. Robust non-Abelian spin liquid and a possible intermediate phase in the antiferromagnetic Kitaev model with magnetic field. Phys. Rev. B 97, 241110 (2018).

Gohlke, M., Moessner, R. & Pollmann, F. Dynamical and topological properties of the Kitaev model in a [111] magnetic field. Phys. Rev. B 98, 014418 (2018).

Jiang, Y.-F., Devereaux, T. P. & Jiang, H.-C. Field-induced quantum spin liquid in the Kitaev-Heisenberg model and its relation to α-RuCl3. Phys. Rev. B 100, 165123 (2019).

Ronquillo, D. C., Vengal, A. & Trivedi, N. Signatures of magnetic-field-driven quantum phase transitions in the entanglement entropy and spin dynamics of the Kitaev honeycomb model. Phys. Rev. B 99, 140413 (2019).

Jiang, H.-C., Wang, C.-Y., Huang, B. & Lu, Y.-M. Field induced quantum spin liquid with spinon Fermi surfaces in the Kitaev model (2018).

Hickey, C. & Trebst, S. Emergence of a field-driven U(1) spin liquid in the Kitaev honeycomb model. Nat. Commun. 10, 530 (2019).

Patel, N. D. & Trivedi, N. Magnetic field-induced intermediate quantum spin liquid with a spinon Fermi surface. Proc. Natl Acad. Sci. USA 116, 12199–12203 (2019).

Jiang, M.-H. et al. Tuning topological orders by a conical magnetic field in the Kitaev model. Phys. Rev. Lett. 125, 177203 (2020).

Zhang, S.-S., Halász, G. B. & Batista, C. D. Theory of the Kitaev model in a [111] magnetic field. Nat. Commun. 13, 399 (2022).

Feng, S., Agarwala, A., Bhattacharjee, S. & Trivedi, N. Anyon dynamics in field-driven phases of the anisotropic Kitaev model. Phys. Rev. B 108, 035149 (2023).

Liu, K., Sadoune, N., Rao, N., Greitemann, J. & Pollet, L. Revealing the phase diagram of Kitaev materials by machine learning: cooperation and competition between spin liquids. Phys. Rev. Res. 3, 023016 (2021).

White, S. R. Density matrix formulation for quantum renormalization groups. Phys. Rev. Lett. 69, 2863–2866 (1992).

White, S. R. Density-matrix algorithms for quantum renormalization groups. Phys. Rev. B 48, 10345–10356 (1993).

Fishman, M., White, S. & Stoudenmire, E. The ITensor software library for tensor network calculations. SciPost Phys. Codebases 4 (2022). https://doi.org/10.21468/SciPostPhysCodeb.4

Efron, B., Hastie, T., Johnstone, I. & Tibshirani, R. Least angle regression. Ann. Stat. 32, 407–499 (2004).

Kitaev, A. Anyons in an exactly solved model and beyond. Ann. Phys. 321, 2–111 (2006).

Jackeli, G. & Khaliullin, G. Mott insulators in the strong spin-orbit coupling limit: from Heisenberg to a quantum compass and Kitaev models. Phys. Rev. Lett. 102, 017205 (2009).

Kasahara, Y. et al. Majorana quantization and half-integer thermal quantum Hall effect in a Kitaev spin liquid. Nature 559, 227–231 (2018).

Ferris, A. J. & Vidal, G. Perfect sampling with unitary tensor networks. Phys. Rev. B 85, 165146 (2012).

Tibshirani, R. Regression shrinkage and selection via the lasso. J. R. Stat. Soc. Ser. B (Methodol.) 58, 267–288 (1996).

Pradhan, S., Patel, N. D. & Trivedi, N. Two-magnon bound states in the Kitaev model in a [111] field. Phys. Rev. B 101, 180401 (2020).

White, S. R., Affleck, I. & Scalapino, D. J. Friedel oscillations and charge density waves in chains and ladders. Phys. Rev. B 65, 165122 (2002).

Mross, D. F. & Senthil, T. Charge Friedel oscillations in a Mott insulator. Phys. Rev. B 84, 041102 (2011).

He, W.-Y., Xu, X. Y., Chen, G., Law, K. T. & Lee, P. A. Spinon Fermi surface in a cluster Mott insulator model on a triangular lattice and possible application to 1T-TaS2. Phys. Rev. Lett. 121, 046401 (2018).

Ruan, W. et al. Evidence for quantum spin liquid behaviour in single-layer 1T-TaSe2 from scanning tunnelling microscopy. Nat. Phys. 17, 1154–1161 (2021).

Feng, S., Alvarez, G. & Trivedi, N. Gapless to gapless phase transitions in quantum spin chains. Phys. Rev. B 105, 014435 (2022).

Baskaran, G., Mandal, S. & Shankar, R. Exact results for spin dynamics and fractionalization in the Kitaev model. Phys. Rev. Lett. 98, 247201 (2007).

Feng, S., He, Y. & Trivedi, N. Detection of long-range entanglement in gapped quantum spin liquids by local measurements. Phys. Rev. A 106, 042417 (2022).

Acknowledgements

We thank Leon Balents, Natasha Perkins, John Tranquada, and Simon Trebst for helpful discussions. K.Z. acknowledges support by the NSF under EAGER OSP-136036 and the Natural Sciences and Engineering Research Council of Canada (NSERC) under PGS-D-557580-2021. Y.L. and E.A.K. acknowledge support by the Gordon and Betty Moore Foundation’s EPiQS Initiative, Grant GBMF10436, and a New Frontier Grant from Cornell University’s College of Arts and Sciences. E.A.K. acknowledges support by the NSF under OAC-2118310, EAGER OSP-136036, the Ewha Frontier 10-10 Research Grant, and the Simons Fellowship in Theoretical Physics award 920665. S.F. acknowledges support from NSF Materials Research Science and Engineering Center (MRSEC) Grant No. DMR-2011876, and N.T. from NSF-DMR 2138905.

Author information

Contributions

E-AK and NT conceived the idea and supervised the project. KZ, YL, and E-AK formulated the machine learning and data processing strategies. SF performed the DMRG optimization and data processing. KZ performed the machine learning analysis. All authors contributed to interpreting the results and writing the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Physics thanks the anonymous reviewers for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Zhang, K., Feng, S., Lensky, Y.D. et al. Machine learning reveals features of spinon Fermi surface. Commun Phys 7, 54 (2024). https://doi.org/10.1038/s42005-024-01542-8

DOI: https://doi.org/10.1038/s42005-024-01542-8
