Abstract

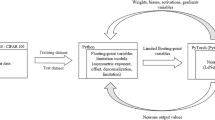

Deep neural networks offer considerable potential across a range of applications, from advanced manufacturing to autonomous cars. A clear trend in deep neural networks is the exponential growth of network size and the associated increases in computational complexity and memory consumption. However, the performance and energy efficiency of edge inference, in which the inference (the application of a trained network to new data) is performed locally on embedded platforms that have limited area and power budgets, are bounded by technology scaling. Here we analyse recent data and show that there are increasing gaps between the computational complexity and energy efficiency required by data scientists and the hardware capacity made available by hardware architects. We then discuss various architecture and algorithm innovations that could help to bridge the gaps.

References

Krizhevsky, A. et al. ImageNet classification with deep convolutional neural networks. In Adv. Neural Inf. Proc. Sys. 1097–1105 (2012).

Szegedy, C. et al. Going deeper with convolutions. In Proc. IEEE Conf. Computer Vision and Pattern Recognition 1–9 (2015).

He, K. et al. Identity mappings in deep residual networks. In Eur. Conf. Computer Vision 630–645 (Springer, 2016).

Silver, D. et al. Mastering the game of Go with deep neural networks and tree search. Nature 529, 484–489 (2016).

Zhang, L. et al. Carcinopred-el: Novel models for predicting the carcinogenicity of chemicals using molecular fingerprints and ensemble learning methods. Sci. Rep. 7, 2118 (2017).

Ge, G. et al. Quantitative analysis of diffusion-weighted magnetic resonance images: Differentiation between prostate cancer and normal tissue based on a computer-aided diagnosis system. Sci. China Life Sci. 60, 37–43 (2017).

Egger, M. & Schoder, D. Consumer-oriented tech mining: Integrating the consumer perspective into organizational technology intelligence-the case of autonomous driving. In Proc. 50th Hawaii Int. Conf. System Sciences 1122–1131 (2017).

Rosenberg, C. Improving photo search: A step across the semantic gap. Google Research Blog (12 June 2013); https://research.googleblog.com/2013/06/improving-photo-search-step-across.html

Ji, S. et al. 3D convolutional neural networks for human action recognition. IEEE T. Pattern Anal 35, 221–231 (2013).

Balluru, V., Graham, K. & Hilliard, N. Systems and methods for coreference resolution using selective feature activation. US Patent 9,633,002 (2017).

Sermanet, P. et al. Overfeat: Integrated recognition, localization and detection using convolutional networks. Preprint at https://arxiv.org/abs/1312.6229 (2013).

Simonyan, K. & Zisserman, A. Very deep convolutional networks for large-scale image recognition. Preprint at https://arxiv.org/abs/1409.1556 (2014).

He, K. et al. Deep residual learning for image recognition. In Proc. IEEE Conf. Computer Vision and Pattern Recognition. 770–778 (2016).

Szegedy, C. et al. Rethinking the inception architecture for computer vision. In Proc. IEEE Conf. Computer Vision and Pattern Recognition. 2818–2826 (2016).

Boris, H. Universal function approximation by deep neural nets with bounded width and ReLU activations. Preprint at https://arxiv.org/abs/1708.02691 (2017).

Liang, S. & Srikant, R. Why deep neural networks for function approximation? Preprint at https://arxiv.org/abs/1610.04161 (2016).

Dmitry, Y. Error bounds for approximations with deep ReLU networks. Neural Networks. 94, 103–114 (2017).

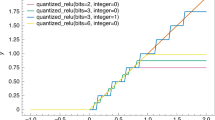

Ding, Y. et al. On the universal approximability of quantized ReLU neural networks. Preprint at https://arxiv.org/abs/1802.03646 (2018).

https://en.wikipedia.org/wiki/List_of_Nvidia_graphics_processing_units (2017).

Farabet, C. et al. Neuflow: A runtime reconfigurable dataflow processor for vision. 2011 IEEE Conf. Computer Vision and Pattern Recognition Workshops 109–116 (2011).

Moloney, D. et al. Myriad 2: Eye of the computational vision storm. Hot Chips 26 Symp. 1–18 (2014).

Chen, T. et al. Diannao: A small-footprint high-throughput accelerator for ubiquitous machine-learning. Proc. 19th Int. Conf. Architectural Support for Programming Languages and Operating Systems. 269–284 (2014).

Chen, Y. et al. DaDianNao: A machine-learning supercomputer. 2014 47th Ann. IEEE/ACM Int. Symp. Microarchitecture. 609–622 (2014).

Chen, Y. H., Krishna, T., Emer, J. S. & Sze, V. Eyeriss: An energy-efficient reconfigurable accelerator for deep convolutional neural networks. IEEE J. Solid-St. Circ. 52, 127–138 (2017).

Park, S. et al. A 1.93TOPS/W scalable deep learning/inference processor with tetra-parallel MIMD architecture for big-data applications. 2015 IEEE Int. Solid-St. Circ. Conf. 1–3 (2015).

Du, Z. et al. ShiDianNao: Shifting vision processing closer to the sensor. ACM SIGARCH Computer Architecture News 43, 92–104 (2015).

Han, S. et al. EIE: Efficient inference engine on compressed deep neural network. 2016 ACM/IEEE 43rd Ann. Int. Symp. Computer Architecture 243–254 (2016).

Jouppi, N. P. et al. In-datacenter performance analysis of a tensor processing unit. 2017 ACM/IEEE 44th Ann. Int. Symp. Computer Architecture 1–12 (2017).

Moons, B. & Verhelst, M. A 0.3–26 TOPS/W precision-scalable processor for real-time large-scale ConvNets. IEEE Symp. VLSI Circuits 1–2 (2016).

Liu, S. et al. Cambricon: An instruction set architecture for neural networks. 2016 ACM/IEEE 43rd Ann. Int. Symp. Computer Architecture 393–405 (2016).

Whatmough, P. N. et al. 14.3 A 28nm SoC with a 1.2 GHz 568nJ/prediction sparse deep-neural-network engine with 0.1 timing error rate tolerance for IoT applications. 2017 IEEE Int. Solid-St. Circ. Conf. 242–243 (2017).

Wei, X. et al. Automated systolic array architecture synthesis for high throughput CNN inference on FPGAs. 2017 54th ACM/EDAC/IEEE Design Automation Conf. 29, 1–6 (2017).

Zhang, C. et al. Optimizing FPGA-based accelerator design for deep convolutional neural networks. 23rd Int. Symp. Field-Programmable Gate Arrays https://doi.org/10.1145/2684746.2689060 (2015).

NVIDIA TESLA P100 (NVIDIA, 2017); http://www.nvidia.com/object/tesla-p100.html

Sutter, H. The free lunch is over: A fundamental turn toward concurrency in software. Dr Dobb’s J. 30, 202–210 (2005).

Toumey, C. Less is Moore. Nat. Nanotech. 11, 2–3 (2016).

Mutlu, O. Memory scaling: A systems architecture perspective. Proc. 5th Int. Memory Workshop 21–25 (2013).

Using Next-Generation Memory Technologies: DRAM and Beyond HC28-T1 (HotChips, 2016); available at https://www.youtube.com/watch?v=61oZhHwBrh8

Dreslinski, R. G., Wieckowski, M., Blaauw, D., Sylvester, D. & Mudge, T. Near-threshold computing: Reclaiming Moore’s law through energy efficient integrated circuits. Proc. IEEE. 98, 253–266 (2010).

Microsoft unveils Project Brainwave for real-time AI. Microsoft (22 August 2017); https://www.microsoft.com/en-us/research/blog/microsoft-unveils-project-brainwave/ (2017).

Kung, H. T. Algorithms for VLSI processor arrays. In Introduction to VLSI Systems 271–292 (1979).

Zhang, J., Ghodsi, Z., Rangineni, K. & Garg, S. Enabling extreme energy efficiency via timing speculation for deep neural network accelerators. NYU Center for Cyber Security (2017); http://cyber.nyu.edu/enabling-extreme-energy-efficiency-via-timing-speculation-deep-neural-network-accelerators/

Cloud TPUs (2017); https://ai.google/tools/cloud-tpus/

Kim, D., Kung, J., Chai, S., Yalamanchili, S. & Mukhopadhyay, S. Neurocube: A programmable digital neuromorphic architecture with high-density 3D memory. 2016 ACM/IEEE 43rd Ann. Int. Symp. Computer Architecture 380–392 (2016).

Gao, M., Pu, J., Yang, X., Horowitz, M. & Kozyrakis, C. TETRIS: Scalable and efficient neural network acceleration with 3D memory. Proc. 22nd Int. Conf. Architectural Support for Programming Languages and Operating Systems 751–764 (2017).

LiKamWa, R., Hou, Y., Gao, J., Polansky, M. & Zhong, L. RedEye: Analog ConvNet image sensor architecture for continuous mobile vision. 2016 ACM/IEEE 43rd Ann. Int. Symp. Computer Architecture 255–266 (2016).

Li, C. et al. Analogue signal and image processing with large memristor crossbars. Nat. Electron. 1, 52 (2018).

Ali, S. et al. ISAAC: A convolutional neural network accelerator with in-situ analog arithmetic in crossbars. 2016 ACM/IEEE 43rd Ann. Int. Symp. Computer Architecture 14–26 (2016).

Ping, C. et al. Prime: A novel processing-in-memory architecture for neural network computation in ReRAM-based main memory. 2016 ACM/IEEE 43rd Ann. Int. Symp. Computer Architecture 27–39 (2016).

Jain, S., Ranjan, A., Roy, K. & Raghunathan, A. Computing in memory with spin-transfer torque magnetic RAM. Preprint at https://arxiv.org/abs/1703.02118 (2017).

Kang, W., Wang, H., Wang, Z., Zhang, Y. & Zhao, W. In-memory processing paradigm for bitwise logic operations in STT–MRAM. IEEE T. Magn. 53, 1–4 (2017).

Burr, G. W. et al. Experimental demonstration and tolerancing of a large-scale neural network (165,000 synapses), using phase-change memory as the synaptic weight element. IEEE T. Electron Dev. 62, 3498–3507 (2015).

Yao, P. et al. Face classification using electronic synapses. Nat. Commun. 8, 15199 (2017).

Prezioso, M. et al. Training and operation of an integrated neuromorphic network based on metal-oxide memristors. Nature 521, 61–64 (2015).

Guo, X. et al. Fast, energy-efficient, robust, and reproducible mixed-signal neuromorphic classifier based on embedded NOR flash memory technology. 2017 IEEE Int. Electron. Dev. Meet. 6.5.1–6.5.4 (2017).

Yu, S. et al. Binary neural network with 16 Mb RRAM macro chip for classification and online training. 2016 IEEE Int. Electron. Dev. Meet. 16.2.1–16.2.4 (2016).

Zhang, J., Wang, Z. & Verma, N. In-memory computation of a machine-learning classifier in a standard 6T SRAM array. IEEE J. Solid-St. Circ. 52, 915–924 (2017).

Jaiswal, A., Chakraborty, I., Agrawal, A. & Roy, K. 8T SRAM cell as a multi-bit dot product engine for beyond von-Neumann computing. Preprint at https://arxiv.org/abs/1802.08601 (2018).

Lee, J. H., Delbruck, T. & Pfeiffer, M. Training deep spiking neural networks using backpropagation. Front. Neurosci. 10, 508 (2016).

O’Connor, P. & Max, W. Deep spiking networks. Preprint at https://arxiv.org/abs/1602.08323 (2016).

Hesham, M. Supervised learning based on temporal coding in spiking neural networks. IEEE T. Neural Networks and Learning Systems PP, 1–9 (2017).

Wen, W. et al. A new learning method for inference accuracy, core occupation, and performance co-optimization on TrueNorth chip. 2016 53nd ACM/EDAC/IEEE Design Automation Conf. 1–6 (2016).

Mostafa, H., Pedroni, B. U., Sheik, S. & Cauwenberghs, G. Fast classification using sparsely active spiking networks. 2017 IEEE Int. Symp. Circuits and Systems 1–4 (2017).

Qiao, N. et al. A reconfigurable on-line learning spiking neuromorphic processor comprising 256 neurons and 128K synapses. Front. Neurosci. 9, 141 (2015).

Esser, S. K. et al. Convolutional networks for fast, energy-efficient neuromorphic computing. Proc. Natl Acad. Sci. USA. 113, 11441–11446 (2016).

Yu, R. et al. NISP: Pruning networks using neuron importance score propagation. Preprint at https://arxiv.org/abs/1711.05908 (2017).

Xu, X. et al. Empowering mobile telemedicine with compressed cellular neural networks. IEEE/ACM Int. Conf. Computer-Aided Design 880–887 (2017).

Xu, X. et al. Quantization of fully convolutional networks for accurate biomedical image segmentation. Preprint at https://arxiv.org/abs/1803.04907 (2018).

Han, S., Pool, J., Tran, J. & Dally, W. Learning both weights and connections for efficient neural network. Proc. 28th Int. Conf. Neural Information Processing Systems 1135–1143 (2015).

Yang, T. J., Chen, Y. H. & Sze, V. Designing energy-efficient convolutional neural networks using energy-aware pruning. Preprint at https://arxiv.org/abs/1611.05128 (2017).

Jorge, A. et al. Cnvlutin: Ineffectual-neuron-free deep neural network computing. 2016 ACM/IEEE 43rd Ann. Int. Symp. Computer Architecture 1–13 (2016).

Ullrich, K., Meeds, E. & Welling, M. Soft weight-sharing for neural network compression. Preprint at https://arxiv.org/abs/1702.04008 (2017).

Louizos, C., Ullrich, K. & Welling, M. Bayesian compression for deep learning. Proc. 30th Int. Conf. Neural Information Processing Systems 3290–3300 (2017).

Brandon, R. et al. Minerva: Enabling low-power, highly-accurate deep neural network accelerators. 2016 ACM/IEEE 43rd Ann. Int. Symp. Computer Architecture 267–278 (2016).

Jaderberg, M., Vedaldi, A. & Zisserman, A. Speeding up convolutional neural networks with low rank expansions. Preprint at https://arxiv.org/abs/1405.3866 (2014).

Denton, E. L., Zaremba, W., Bruna, J., LeCun, Y. & Fergus, R. Exploiting linear structure within convolutional networks for efficient evaluation. Proc. 27th Int. Conf. Neural Information Processing Systems 1269–1277 (2014).

Wen, W., Wu, C., Wang, Y., Chen, Y. & Li, H. Learning structured sparsity in deep neural networks. Proc. 29th Int. Conf. Neural Information Processing Systems 2074–2082 (2016).

Wang, Y., Xu, C., Xu, C. & Tao, D. Beyond filters: Compact feature map for portable deep model. Int. Conf. Machine Learning 3703–3711 (2017).

Huang, Q., Zhou, K., You, S. & Neumann, U. Learning to prune filters in convolutional neural networks. Preprint at https://arxiv.org/abs/1801.07365 (2018).

Luo, J. H., Wu, J. & Lin, W. Thinet: A filter level pruning method for deep neural network compression. Preprint at https://arxiv.org/abs/1707.06342 (2017).

Li, D., Wang, X. & Kong, D. DeepRebirth: Accelerating deep neural network execution on mobile devices. Preprint at https://arxiv.org/abs/1708.04728 (2017).

Masana, M., van de Weijer, J., Herranz, L., Bagdanov, A. D. & Alvarez, J. M. Domain-adaptive deep network compression. Network 16, 30 (2017).

Zhang, X., Zhou, X., Lin, M. & Sun, J. Shufflenet: An extremely efficient convolutional neural network for mobile devices. Preprint at https://arxiv.org/abs/1707.01083 (2017).

Sotoudeh, M. & Sara S. B. DeepThin: A self-compressing library for deep neural networks. Preprint at https://arxiv.org/abs/1802.06944 (2018).

Hashemi, S., Anthony, N., Tann, H., Bahar, R. I. & Reda, S. Understanding the impact of precision quantization on the accuracy and energy of neural networks. 2017 Design, Automation & Test in Europe 1474–1479 (2017).

Jiantao, Q. et al. Going deeper with embedded FPGA platform for convolutional neural network. Proc. 2016 ACM/SIGDA Int. Symp. Field-Programmable Gate Arrays 26–35 (2016).

Han, S., Mao, H. & Dally, W. J. Deep compression: Compressing deep neural networks with pruning, trained quantization and Huffman coding. Preprint at https://arxiv.org/abs/1510.00149 (2016).

Hubara, I., Courbariaux, M., Soudry, D., El-Yaniv, R. & Bengio, Y. Quantized neural networks: Training neural networks with low precision weights and activations. Preprint at https://arxiv.org/abs/1609.07061 (2016).

Li, F., Zhang, B. & Liu, B. Ternary weight networks. Preprint at https://arxiv.org/abs/1605.04711 (2016).

Courbariaux, M., Bengio, Y. & David, J. P. BinaryConnect: Training deep neural networks with binary weights during propagations. Proc. 28th Int. Conf. Neural Information Processing Systems 3123–3131 (2015).

Zhu, C., Han, S., Mao, H. & Dally, W. J. Trained ternary quantization. Preprint at https://arxiv.org/abs/1612.01064 (2016).

Rastegari, M., Ordonez, V., Redmon, J. & Farhadi, A. XNORNet: ImageNet classification using binary convolutional neural networks. Eur. Conf. Computer Vision 525–542 (2016).

Miyashita, D., Lee, E. H. & Murmann, B. Convolutional neural networks using logarithmic data representation. Preprint at https://arxiv.org/abs/1603.01025 (2016).

Zhou, A., Yao, A., Guo, Y., Xu, L. & Chen, Y. Incremental network quantization: Towards lossless CNNs with low-precision weights. Preprint at https://arxiv.org/abs/1702.03044 (2017).

Courbariaux, M. & Bengio, Y. BinaryNet: Training deep neural networks with weights and activations constrained to +1 or –1. Preprint at https://arxiv.org/abs/1602.02830 (2016).

Zhou, S. et al. DoReFaNet: Training low bitwidth convolutional neural networks with low bitwidth gradients. Preprint at https://arxiv.org/abs/1606.06160 (2016).

Cai, Z., He, X., Sun, J. & Vasconcelos, N. Deep learning with low precision by half-wave Gaussian quantization. 2017 IEEE Conference on Computer Vision and Pattern Recognition 5918–5926 (2017).

Hu, Q., Wang, P. & Cheng, J. From hashing to CNNs: Training BinaryWeight networks via hashing. Preprint at https://arxiv.org/abs/1802.02733 (2018).

Leng, C., Li, H., Zhu, S. & Jin, R. Extremely low bit neural network: Squeeze the last bit out with ADMM. Preprint at https://arxiv.org/abs/1707.09870 (2017).

Ko, J. H., Fromm, J., Philipose, M., Tashev, I. & Zarar, S. Precision scaling of neural networks for efficient audio processing. Preprint at https://arxiv.org/abs/1712.01340 (2017).

Ko, J. H., Fromm, J., Philipose, M., Tashev, I. & Zarar, S. Adaptive weight compression for memory-efficient neural networks. 2017 Design, Automation & Test in Europe 199–204 (2017).

Chakradhar, S., Sankaradas, M., Jakkula, V. & Cadambi, S. A dynamically configurable coprocessor for convolutional neural networks. Proc. 37th Int. Symp. Computer Architecture 247–257 (2010).

Gysel, P., Motamedi, M. & Ghiasi, S. Hardware-oriented approximation of convolutional neural networks. Preprint at https://arxiv.org/abs/1604.03168 (2016).

Higginbotham, S. Google takes unconventional route with homegrown machine learning chips. The Next Platform (19 May 2016).

Morgan, T. P. Nvidia pushes deep learning inference with new Pascal GPUs. The Next Platform (13 September 2016).

Judd, P., Albericio, J. & Moshovos, A. Stripes: Bit-serial deep neural network computing. IEEE Computer Architecture Lett. 16, 80–83 (2016).

Zhang, C. & Prasanna, V. Frequency domain acceleration of convolutional neural networks on CPU-FPGA shared memory system. Proc. 2017 ACM/SIGDA Int. Symp. Field-Programmable Gate Arrays 35–44 (2017).

Andrew, K. Reducing deep network complexity with Fourier transform methods. Preprint at https://arxiv.org/abs/1801.01451 (2017).

Mathieu, M., Henaff, M. & LeCun, Y. Fast training of convolutional networks through FFTs. Preprint at https://arxiv.org/abs/1312.5851 (2013).

Cheng, Y. et al. An exploration of parameter redundancy in deep networks with circulant projections. Int. Conf. Computer Vision 2857–2865 (2015).

Cong, J. & Xiao, B. Minimizing computation in convolutional neural networks. Proc. 24th Int. Conf. Artificial Neural Networks 8681, 281–290 (2014).

Ding, C. et al. CirCNN: Accelerating and compressing deep neural networks using block-circulant weight matrices. Proc. 50th Ann. IEEE/ACM Int. Symp. Microarchitecture 395–408 (2017).

Lu, L., Liang, Y., Xiao, Q. & Yan, S. Evaluating fast algorithms for convolutional neural networks on FPGAs. 25th IEEE Int. Symp. Field-Programmable Custom Computing Machines 101–108 (2017).

Fischetti, M. Computers versus brains. Scientific American (1 November 2011); https://www.scientificamerican.com/article/computers-vs-brains/.

Meier, K. The brain as computer: Bad at math, good at everything else. IEEE Spectrum (31 May 2017); https://spectrum.ieee.org/computing/hardware/the-brain-as-computer-bad-at-math-good-at-everything-else

Hachman, M. Nvidia’s GPU neural network tops Google. PC World (18 June 2013); https://www.pcworld.com/article/2042339/nvidias-gpu-neural-network-tops-google.html

Digital reasoning trains world’s largest neural network. HPC Wire (7 July 2015); https://www.hpcwire.com/off-the-wire/digital-reasoning-trains-worlds-largest-neural-network/

Wu, H. Y., Wang, F. & Pan, C. Who will win practical artificial intelligence? AI engineerings in China. Preprint at https://arxiv.org/abs/1702.02461 (2017).

Sze, V., Chen, Y. H., Yang, T. J. & Emer, J. S. Efficient processing of deep neural networks: A tutorial and survey. Proc. IEEE. 105, 2295–2329 (2017).

Canziani, A., Paszke, A. & Culurciello, E. An analysis of deep neural network models for practical applications. Preprint at https://arxiv.org/abs/1605.07678 (2016).

Author information

Contributions

X.X. contributed to data collection, analysis and writing. Y.D. contributed to data collection. S.H., M.N., J.C. and Y.H. contributed to discussion and writing. Y.S. contributed to project planning, development, discussion and writing.

Ethics declarations

Competing interests

The authors declare no competing interests.

About this article

Cite this article

Xu, X., Ding, Y., Hu, S.X. et al. Scaling for edge inference of deep neural networks. Nat Electron 1, 216–222 (2018). https://doi.org/10.1038/s41928-018-0059-3

DOI: https://doi.org/10.1038/s41928-018-0059-3
