Abstract

Recent advances in large language models (LLMs) have demonstrated remarkable successes in zero- and few-shot performance on various downstream tasks, paving the way for applications in high-stakes domains. In this study, we systematically examine the capabilities and limitations of LLMs, specifically GPT-3.5 and ChatGPT, in performing zero-shot medical evidence summarization across six clinical domains. We conduct both automatic and human evaluations, covering several dimensions of summary quality. Our study demonstrates that automatic metrics often do not strongly correlate with the quality of summaries. Furthermore, informed by our human evaluations, we define a terminology of error types for medical evidence summarization. Our findings reveal that LLMs could be susceptible to generating factually inconsistent summaries and making overly convincing or uncertain statements, leading to potential harm due to misinformation. Moreover, we find that models struggle to identify the salient information and are more error-prone when summarizing over longer textual contexts.

Similar content being viewed by others

Introduction

Fine-tuned pre-trained models have been the leading approach in text summarization research, but they often require sizable training datasets which are not always available in specific domains, such as medical evidence in the literature. The recent success of zero- and few-shot prompting with large language models (LLMs) has led to a paradigm shift in NLP research1,2,3,4. The success of prompt-based models (GPT-3.55 and recently ChatGPT) brings new hope for medical evidence summarization, where the model can follow human instructions and summarize zero-shot without updating parameters. Medical evidence summarization refers to the process of extracting and synthesizing key information from a large number of medical research studies and clinical trials into a concise and comprehensive summary. While recent work has analyzed and evaluated this strategy for news summarization6 and biomedical literature abstract generation7, there is no study yet on medical evidence summarization and appraisal.

In this study, we conduct a systematic study of the potential and possible limitations of zero-shot prompt-based LLMs on medical evidence summarization using GPT-3.5 and ChatGPT models. We then explore their impact on the summarization of medical evidence findings in the context of evidence synthesis and meta-analysis.

Results

Study overview

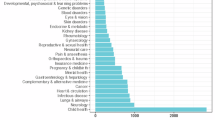

We used Cochrane Reviews obtained from the Cochrane Library and focused on six distinct clinical domains—Alzheimer’s disease, Kidney disease, Esophageal cancer, Neurological conditions, Skin disorders, and Heart failure (Table 1). Domain experts verified each review to confirm that they have significant research objectives.

In our study, we tackle the single-document summarization setting where we focus on the abstracts of Cochrane Reviews. Abstracts of Cochrane Reviews can be read as stand-alone documents8. They summarize the key methods, results and conclusions of the review. An abstract does not contain any information that is not in the main body of the review, and the overall messages should be consistent with the conclusions of the review. In addition, abstracts of Cochrane Reviews are freely available on the Internet (e.g., MEDLINE). As some readers may be unable to access the full review, abstracts may be the only source readers have to understand the review results.

Each abstract includes the Background, Objectives of the review, Search methods, Selection criteria, Data collection and analysis, Main results, and Author’s conclusions. The average length of the abstracts we evaluated can be found in Table 1. We chose the Author’s Conclusions section, the last section of the abstract, as the human reference summary for the Cochrane Reviews in our study. This section contains the most salient details of the studies analyzed within the specific clinical context. Clinicians often consult the conclusions first when seeking answers to a clinical question, before deciding whether to read the full abstract and subsequently the entire study. It also allows the authors to interpret the evidence presented in the review, assess the strength of the evidence, and provide their own conclusions or recommendations concerning the efficacy and safety of the intervention under review.

We assessed the zero-shot performance of medical evidence summarization using two models: GPT-3.55 (text-davinci-003) and ChatGPT9. To evaluate the models’ capabilities, we designed two distinct experimental setups. In the first setup, the models were given the entire abstract, excluding the Author’s Conclusions (ChatGPT-Abstract). In the second setup, the models received both the Objectives and the Main Results sections from the abstract as the input (ChatGPT-MainResult and GPT3.5-MainResult). Here, we chose Main Results as the input document because it includes the findings of all important benefit and harm outcomes. It also summarizes the impact of the risk of bias on trial design, conduct, and reporting. We did not evaluate GPT3.5-Abstract because our pilot study indicated that ChatGPT-MainResult performs generally better than ChatGPT-Abstract. In both settings, we used [input] + “Based on the Objectives, summarize the above systematic review in four sentences” as a prompt, emphasizing the importance of referring to the Objectives section for aspect-based summarization. We decided to summarize the review into four sentences since it is close to the length of human reference summaries on average. The average words of the generated summary for each system are 107, 111, and 95 for ChatGPT-MainResult, ChatGPT-Abstract, and GPT3.5-MainResult, respectively. More details can be found in Supplementary Table 1.

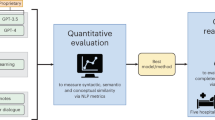

Automatic evaluation

To evaluate the quality of the automatically generated summaries, we employed various automatic metrics (Fig. 1a), including ROUGE-L10, METEOR11, and BLEU12, comparing them against a reference summary. Their values range from 0.0 to 1.0, with a score of 1.0 indicating the generated summaries are identical to the reference summary. Our findings reveal that all models exhibit similar performance with respect to these automatic metrics. A relatively high ROUGE score demonstrates that these models can effectively capture the key information from the source document. In contrast, a low BLEU score implies that the generated summary is written differently from the reference summary. Consistent METEOR scores across the models suggest that the summaries maintain a similar degree of lexical and semantic similarity to the reference summary.

We also assessed the degree of abstraction by measuring the extractiveness13 and the percentage of novel n-grams in the summary with respect to the input. Compared to human-written summaries, those generated by LLMs tend to be more extractive, exhibiting significantly lower n-gram novelty (Fig. 1b). Notably, ChatGPT-MainResult demonstrates a higher level of abstraction compared to ChatGPT-Abstract and GPT3.5-MainResult, but there remains a substantial gap between ChatGPT-MainResult and human reference. Finally, approximately half of the reviews are from 2022 and 2023, which is after the cutoff date of GPT3.5 (June 2021) and ChatGPT (September 2021). However, we observed no significant difference in quality metrics before and after 2022.

Human evaluation

To obtain a comprehensive understanding of the summarization capabilities of LLMs, we conducted an extensive human evaluation of the model-generated summaries, which goes beyond the capabilities of automatic metrics14. Specifically, the lack of standardized terminology of error types for medical evidence summarization necessitated our use of human evaluation to invent new error definitions. Our evaluation methods drew from qualitative methods in grounded theory, which involved open coding of qualitative descriptions of factual inconsistencies, further contributing to the development of error definitions. Additionally, we included a measure of perceived potential for harm, as it is a clinically relevant outcome that automatic metrics are unable to capture. Our evaluation defined summary quality along four dimensions: (1) Coherence; (2) Factual Consistency; (3) Comprehensiveness; and (4) Harmfulness and the results are presented in Fig. 2a–d.

Coherence refers to the capability of a summary to create a coherent body of information about a topic through connections between sentences. Figure 2a shows that annotators rated most of the summaries as coherent. Specifically, summaries generated by ChatGPT are more cohesive than those generated by GPT3.5-MainResult (64% vs. 55% in Strong agreement).

Factual Consistency measures whether the statements in the summary are supported by the source document. As illustrated in Fig. 2b, fewer than 10% of summaries produced by ChatGPT-MainResult exhibit factual inconsistency errors, which is significantly lower compared to those generated by other LLM configurations. Medical evidence summaries should be perfectly accurate. To understand the types of factual inconsistency errors that LLMs produce, we categorize these errors into three types of errors using an open coding approach on annotators’ comments (Supplementary Fig. 1). Examples can be found in Supplementary Table 2.

Comprehensiveness refers to whether a summary contains comprehensive information to support the systematic review. As shown in Fig. 2c, both ChatGPT-MainResult and ChatGPT-Abstract provide comprehensive summaries more than 75% of the time, with ChatGPT-MainResult having significantly more summaries annotated as Strongly Agree. In contrast, GPT3.5-MainResult generates noticeably less comprehensive summaries. It would be possible that extending the length of the summary would lead to a more comprehensive summary. However, ChatGPT-MainResult strikes a balance between providing enough information and being concise. The next evaluation was conducted to determine whether the omission of information relevant to the objectives might lead to medical harm.

Harmfulness refers to the potential of a summary to cause physical or psychological harm or undesired changes in therapy or compliance due to the misinterpretation of information. Figure 2d shows the error type distributions from summaries that contain harmful information, with ChatGPT-MainResult generating the fewest medically harmful summaries (<10%).

Supplementary Fig. 2 further breaks down the human evaluation for each domain. We observe annotation variations across six different clinical domains, and these variations can be attributed to several factors. (1) The complexity of specific domains or review types may contribute to the observed variability, as some may be less complex than others, making it easier for LLMs to summarize. (2) Domain experts might evaluate the summaries according to their unique internal interpretations of quality metrics. (3) Individual preferences may influence the decision on what key information should be incorporated in the summary.

Human preference

Figure 3 shows the percentage of times humans express a preference for summaries generated by a specific summarization model. Notice that we allow multiple summaries to be selected as the most or least preferred for each source document. As shown in Fig. 3a, ChatGPT-MainResult is significantly more preferred among the three LLMs configurations, generating the most preferred summaries approximately half of the time, outperforming its counterparts by a considerable margin. In Fig. 3b, we categorize the considerations driving such preference. We find that ChatGPT-MainResult is favored because it produces the most comprehensive summary and includes more salient information. In Fig. 3c, the leading reasons for choosing a summary as the least preferred are missing important information, fabricated errors, and misinterpretation errors. This aligns with our finding that ChatGPT-MainResult is the most preferred since it commits the fewest amount of factual inconsistency errors and contains the least harmful or misleading statements.

Discussion

Are existing automatic metrics well-suited for evaluating medical evidence summaries? Research has demonstrated that automatic metrics often do not strongly correlate with the quality of summaries14. Moreover, there is no off-the-shelf automatic evaluation metric specifically designed to assess the factuality of summaries generated by the most recent summarization systems6,15. We believe this likely extends to the absence of a tailored factuality metric for evaluating medical evidence summaries generated by LLMs as well. In our study, we observed similar results for three model settings when using automatic metrics, which fall short of accurately measuring factual inconsistency, potential for medical harmfulness, or human preference for LLM-generated summaries. Therefore, human evaluation becomes an essential component to properly assess the quality and factuality of medical evidence summaries generated by LLMs at this time, and more effective automatic evaluation methods should be developed for this field.

What causes Factual Inconsistency? We categorize factual inconsistency errors into three types of errors using an open coding approach on annotators’ comments (Supplementary Fig. 2). Examples can be found in Supplementary Table 1.

First, through our qualitative analysis of the annotators' comments, we discover that auto-generated summaries often contain Misinterpretation errors. These errors can be problematic, as readers might trust the summary’s accuracy without being aware of the potential for falsehoods or distortions. To better understand these errors, we further categorize them into two main subtypes. The first is Contradiction, which arises when there is a discrepancy between the conclusions drawn from the medical evidence results and the summary. For example, a summary might assert that atypical antipsychotics are effective on psychosis in dementia, whereas the review indicates that the effect is negligible16. The other is the Certainty Illusion, which occurs when there is an inconsistency in the degree of certainty between the summary and the source document. Such errors may cause summaries to be overly convincing or uncertain, potentially leading readers to rely too heavily on the accuracy of the presented information. For instance, the abstract of Cochrane Review17 asserts moderate-certainty evidence that endovascular therapy (ET) plus conventional medical treatment (CMT) compared to CMT alone causes a higher risk of short‐term stroke and death. However, we found that the generated summary conveys low-quality confidence.

Fabricated errors arise when a statement appears in a summary, but no evidence from the source document can be found to support or refute the statement. For instance, a summary states that exercise could enhance satisfaction and quality of life for patients with chronic neck pain, but the review does not mention those two outcomes for patients18. Interestingly, in our human evaluation, we did not find ChatGPT producing any fabricated errors.

Finally, Attribute errors refer to any errors on non-key elements in the review question (i.e., Patient/Problem, Intervention, Comparison, and Outcome) and may arise in summaries under three circumstances: (a) Fabricated attribute: This error occurs when a summary incorporates an attribute for a specific symptom or outcome that is not referenced in the source document. For example, a review17 draws conclusions about a population with intracranial artery stenosis (ICAS), but the summary states the population with recent symptomatic severe ICAS, where “recent” and “severe” cannot be inferred from the document and would impact one’s interpretation of the review; (b) Omitted attribute: This error occurs when a summary neglects an attribute for a specific symptom or outcome, such as neglecting to specify the subtype of dementia discussed in the review, leading to overgeneralization of the conclusion16; and (c) Distorted attribute: This error occurs when the specified attribute is incorrect, like stating four trials are included in the study while the source document indicates that only two trials are included19.

We observed that ChatGPT-MainResult has the lowest proportion of all three types of errors when compared to the other two model configurations. Moreover, it is important to note that LLMs generally generate summaries with few fabricated errors. This is a promising finding, as it is crucial for generated statements to be supported by source documents. However, LLMs do display a noticeable occurrence of attribute errors and misinterpretation errors, with ChatGPT-Abstract and GPT3.5-MainResult displaying a higher incidence of the latter. Drawing inaccurate conclusions or conveying incorrect certainty regarding evidence could lead to medical harm as shown in later sections.

What causes medical harmfulness? We further identify three reasons that could potentially cause medical harmfulness: misinterpretation errors, fabricated errors, and incomprehensiveness (such as missing Population, Intervention, Comparison, Outcome elements (PICO)). Notably, we did not find any instances of medical harmfulness resulting from attribute errors in the summaries we analyzed. However, given the limited number of summaries we examined, we cannot definitively conclude that attribute errors could never cause harm. Our study suggests that medical harmfulness caused by LLMs is mainly due to misinterpretation errors and incomprehensiveness. Although our human evaluation showed that LLMs tend to make relatively few fabricated errors when completing our tasks, we cannot exclude the possibility that such errors could lead to harmful consequences. However, not all summaries with these errors would bring medical harm. For example, although the summary makes a significant error by misspecifying the number of trials in a study19, our domain experts do not think this could bring medical harm.

How do human-generated summaries compare to LLM-generated summaries? We observe that human-generated summaries contain a higher proportion (28%) of fabricated errors, resulting in more factual inconsistency and potential for harmfulness in human references. However, it is essential to approach this finding with caution, as our human evaluation on human-generated summaries relies solely on the abstracts of Cochrane Reviews as a proxy. There is a possibility that statements deemed to contain fabricated errors could, in fact, be validated by other sections within the full-length Cochrane Review. For example, the severity of intracranial atherosclerosis (ICAS) of the studied population (see the example for Fabricated Attribute in Supplementary Table 1) is not mentioned in the abstract of the review but is mentioned in the Results section of the whole review. Therefore, we decide to exclude the human reference from our comparison figures. Nevertheless, despite these errors, human-generated summaries are still preferred (34%) compared to ChatGPT-Abstract (26%) and GPT3.5-MainResult (21%). It is worth noting that human-generated summaries may contain valuable interpretations of reviews, which account for why they are chosen as the best summaries. However, it is important to avoid over-extrapolating from the source document, as this could lead to less desirable outcomes (as illustrated in Fig. 3b and c).

Does providing longer input lead to better summaries generated by LLMs? It is important to emphasize that the main difference between ChatGPT-MainResult and ChatGPT-Abstract is that the latter generates summaries based on the entire abstract. Our findings show that having longer text actually negatively impacts ChatGPT’s capability to identify and extract the most pertinent information, as evidenced by the lower comprehensiveness. Furthermore, a longer context leads to an increased likelihood of ChatGPT making factual inconsistency errors and generating summaries that are more misleading. These factors combined make ChatGPT-Abstract less preferred compared to ChatGPT-MainResult in human evaluation.

How can we automatically detect factual inconsistency and improve summaries? Given that the types of errors made by the most recent summarization systems are constantly evolving15, future factuality and harmfulness evaluation should be adaptable to these shifting targets. One possible approach is to leverage the power of LLMs to identify potential errors within summaries. However, effectively identifying the most important information from long contexts and making high-quality summaries remains a challenging task for LLMs that we have evaluated. Methods such as segment-then-summarize20 and extract-then-abstract21 for handling summarizing long context are shown to not work well for zero-shot LLM6,15. Furthermore, the presence of non-textual data, such as tables and figures in Cochrane Reviews, may increase the complexity of the summarization task. To address these challenges and improve the quality of summaries, future work could explore and evaluate the efficacy of GPT-4 in summarizing reviews with longer contexts and multiple modalities, while also incorporating techniques for detecting factuality inconsistencies and medical harmfulness.

Our study has limitations. Our evaluation of ChatGPT and GPT-3.5 is based on a semi-synthetic task, which involves summarizing Cochrane Reviews using only their abstracts or part of the abstracts. A more genuine task here would be a multi-document summarization setting that involves summarizing all relevant study reports within a review addressing specific research questions. The rationale behind this choice is three-fold. First, the abstracts of Cochrane Reviews is a stand-alone documents8 not contain any information that is not in the main body of the review, and the overall messages should be consistent with the conclusions of the review. Second, abstracts of Cochrane Reviews are freely available and may be the only source readers can assess the review results. Finally, we need to accommodate the input length constraints of large language models, as the full Cochrane Review would surpass their capacity. As a result, our experiment assesses these LLMs' ability to summarize medical evidence under a modified summarization framework. Our rigorous systematic evaluation finds that ChatGPT tends to generate less factually accurate summaries when conditioned on the entire abstract, which could potentially indicate that the model may be susceptible to distraction from irrelevant information within longer contexts. This finding raises concerns about the model’s effectiveness when presented with the full scope of a Cochrane Review, and suggests that it may not perform optimally in such scenarios.

Secondly, the prompt in this study is adapted from previous work6. Given the lack of a systematic method for searching over the prompt space, it is conceivable that future studies could potentially discover more effective prompts.

Third, ChatGPT and GPT-3.5 were not required to produce a particular word count, but merely a specific sentence length. Consequently, the enhanced performance of ChatGPT-MainResult in comparison to ChatGPT3.5-MainResult may be attributed to its generation of summaries of a slightly longer length, averaging about 8 words (Supplementary Table 1).

Our evaluation of LLM-generated summaries focused on six clinical domains, with one designated expert assigned to each domain. Such evaluation requires domain knowledge, making it difficult for non-experts to carry out the evaluation. This constraint limits the total amount of summaries we are able to annotate. Further, we chose to use only the abstracts of Cochrane Reviews to evaluate human-generated summaries (Author’s Conclusions section) since examining the entire Cochrane Review is a time-consuming process. Therefore, it is possible that some of the errors identified in human reference summaries may actually be substantiated by other sections of the full-length review.

Methods

Materials

A Cochrane Review is a systematic review of scientific evidence that aims to provide a comprehensive summary of all relevant studies related to a specific research question. Reviews follow a rigorous methodology, which includes a comprehensive search for relevant studies, the critical appraisal of study quality, and the synthesis of study findings. The primary objective of Cochrane Reviews is to provide an unbiased and comprehensive summary and meta-analysis of the available evidence, to help healthcare professionals make informed decisions about the most effective treatment options.

In this work, we utilized Cochrane Reviews extracted from the Cochrane Library, which is a large database that provides high-quality and up-to-date information about the effects of healthcare interventions. It covers a diverse range of healthcare topics, and our study focuses on six specific topics drawn from this resource— Alzheimer’s disease, Kidney disease, Esophageal cancer, Neurological conditions, Skin disorders, and Heart failure. In particular, we have collected up to ten of the most recent reviews on each topic. Each review was verified by domain experts to ensure they had important research objectives. Table 1 shows the basic summary statistics. We focus on the abstracts of Cochrane Reviews in our study, which can be read as stand-alone documents. Each abstract includes the Background, Objectives of the review, Search methods, Selection criteria, Data collection and analysis, Main results, and Author’s conclusions.

Experimental setup

In this study, we aim to evaluate the zero-shot performance of summarizing systematic reviews using two OpenAI-developed models: GPT-3.5 (text-davinci-003) and ChatGPT. GPT-3.5 is built upon the GPT-3 model but has undergone further training using reinforcement learning with a human feedback procedure to provide better outputs preferred by humans. ChatGPT has gathered significant attention due to its ability to generate high-quality and human-like responses to conversational text prompts. Despite its impressive capabilities, it remains unclear whether ChatGPT can generalize and perform high-quality zero-shot summarization of medical evidence reviews. Therefore, we seek to investigate the comparative performance of ChatGPT and GPT-3.5 in summarizing systematic reviews of medical evidence data.

To evaluate the capabilities of the models, we have designed two distinct setups for input. In the first setup, the models take the whole abstract except for the Author’s Conclusions as the input (ChatGPT-Abstract). The second setup takes both the objectives and the Main results sections of the abstract as the model input (ChatGPT-MainResult and GPT3.5-MainResult). The objective section outlines the specific research question in the PICO formulation that the review aims to address, while the Main results section provides a summary of the results of the studies included in the review, including key outcomes and any statistical data while highlighting the strengths and limitations of the evidence.

In both settings, we use [input] + “Based on the Objectives, summarize the above systematic review in four sentences” to prompt the model to perform summarization, where we emphasize the purpose of the summary by providing the Objectives section. We use the Author’s Conclusions section as the human reference (see explanations in the “Introduction” section) and compare it against the models’ generated outputs.

Automatic evaluation metrics

We select several metrics widely used in text generation and summarization. ROUGE-L10 measures the overlap between the generated summary and the reference summary, focusing on the recall of the n-grams. METEOR11 measures the harmonic mean of unigram precision and recall, based on stemming and synonym matching. BLEU12 measures the overlap between the generated summary and the reference summary, focusing on the precision of the n-grams. These metrics have values ranging from 0 to 1, where 1 indicates perfect matching between the generation and the reference, while 0 indicates no overlap. In addition, we selected two reference-free metrics. Extractiveness13 measures the percentage of words in a summary from some extractive fragments of the source document, where extractive fragments are a set of shared sequences of tokens in the document and the summary. The percentage of novel n-grams signifies the proportion of n-grams in the summary that differ from the original source document.

Design of the human evaluation

We systematically evaluate the quality of generated summaries via human evaluation. We propose to evaluate summary quality along several dimensions: (1) Factual consistency; (2) Medical harmfulness; (3) Comprehensiveness; and (4) Coherence. These dimensions have been previously identified and serve as essential factors in evaluating the overall quality of generated summaries22,23,24. Factual consistency measures whether the statements in the summary are supported by the systematic review. Medical harmfulness refers to the potential of a summary that leads to physical or psychological harm or unwanted changes in therapy or compliance due to the misinterpretation of information. Comprehensiveness evaluates whether a summary contains sufficient information to cover the objectives of the systematic review. Coherence refers to the ability of a summary to build a coherent body of information about a topic through sentence-to-sentence connections.

To assess the quality of the generated summaries, we include six domain experts, with each annotating summaries for a specific topic. During the annotation process, participants are presented with the whole abstract of the systematic review, along with four summaries: (1) the Authors’ conclusion section; (2) ChatGPT-MainResult; (3) ChatGPT-Abstract; and (4) GPT3.5-MainResult. The order in which the summaries are presented is randomized to minimize potential order effects during the evaluation process. We utilize a 5-point Likert scale for the evaluation of each dimension. If the summary received a low score on any of the dimensions, we further asked participants to explain the reason for the low score in a provided text box for each dimension. This approach enables us to perform a qualitative analysis of the responses and identify common themes to define a terminology of error types for medical evidence summarization where none exists. In addition to evaluating the quality of the summaries, we also asked participants to indicate their most and least preferred summaries and to provide reasons for their choices. This approach enables us to identify specific subcategories of reasons and gain insights into the potential of using model-generated summaries to assist in completing the systematic review process.

The 5-point Likert scales between models were assessed by the Mann–Whitney U test25. The response categories of a 5-point Likert item are coded 1–5 which were used as numerical scores in the Mann–Whitney U test for differences. The p-value reflects if the responses of the summaries generated by the two models are different, assuming the null hypothesis means there is no difference between the results generated by the two models. We used 1000 bootstrap samples to obtain a distribution of the Likert scales and reported p-values.

Reporting summary

Further information on research design is available in the Nature Research Reporting Summary linked to this article.

Data availability

The summarization data that support the findings of this study can be assessed through the PubMed ID. The AI-generated text data that support the findings of this study are available from the corresponding author upon reasonable request. The authors declare that all other data supporting the findings of this study are available within the paper and its supplementary information files.

Code availability

The codes that support the findings of this study are available from the corresponding author upon reasonable request.

References

Wei, J. et al. Chain-of-thought prompting elicits reasoning in large language models. In Advances in Neural Information Processing Systems Vol. 35 (eds Koyejo, S. et al.) 24824–24837 (Curran Associates, Inc., 2022).

Brown, T. et al. Language models are few-shot learners. In Advances in Neural Information Processing Systems Vol. 33 (eds Larochelle, H., Ranzato, M., Hadsell, R., Balcan, M. F. & Lin, H.) 1877–1901 (Curran Associates, Inc., 2020).

Chowdhery, A. et al. PaLM: scaling language modeling with pathways. Preprint at https://arxiv.org/abs/2204.02311 (2022).

Kojima, T., Gu, S. S., Reid, M., Matsuo, Y. & Iwasawa, Y. Large language models are zero-shot reasoners. In Advances in Neural Information Processing Systems Vol. 35 (eds Koyejo, S. et al.) 22199–22213 (Curran Associates, Inc., 2022).

Ouyang, L. et al. Training language models to follow instructions with human feedback. In Advances in Neural Information Processing Systems Vol. 35 (eds Koyejo, S. et al.) 27730–27744 (Curran Associates, Inc., 2022).

Goyal, T., Li, J. J. & Durrett, G. News summarization and evaluation in the era of GPT-3. Preprint at https://arxiv.org/abs/2209.12356 (2022).

Gao, C. A. et al. Comparing scientific abstracts generated by ChatGPT to real abstracts with detectors and blinded human reviewers. npj Digit. Med. 6, 75 (2023).

Beller, E. M. et al. PRISMA for abstracts: reporting systematic reviews in journal and conference abstracts. PLoS Med. 10, e1001419 (2013).

OpenAI. Introducing ChatGPT. https://openai.com/blog/chatgpt (2023).

Lin, C.-Y. ROUGE: a package for automatic evaluation of summaries. In Text Summarization Branches Out. 8 74–81, Barcelona, Spain (Association for Computational Linguistics, 2004).

Banerjee, S. & Lavie, A. METEOR: an automatic metric for MT evaluation with improved correlation with human judgments. In Proc. ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization 65–72, Ann Arbor, Michigan (Association for Computational Linguistics, 2005).

Papineni, K., Roukos, S., Ward, T. & Zhu, W.-J. BLEU: a method for automatic evaluation of machine translation. In Proc. 40th Annual Meeting on Association for Computational Linguistics 311–318 (Association for Computational Linguistics, 2002).

Grusky, M., Naaman, M. & Artzi, Y. Newsroom: a dataset of 1.3 million summaries with diverse extractive strategies. In Proc. 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 1 (Long Papers) 708–719 (Association for Computational Linguistics, 2018).

Fabbri, A. R. et al. SummEval: re-evaluating summarization evaluation. Trans. Assoc. Comput. Linguist. 9, 391–409 (2021).

Tang, L. et al. Understanding factual errors in summarization: errors, summarizers, datasets, error detectors. In Proc. 61st Annual Meeting of the Association for Computational Linguistics (Vol. 1: Long Papers) 11626–11644 (Association for Computational Linguistics, Toronto, Canada, 2023).

Mühlbauer, V. et al. Antipsychotics for agitation and psychosis in people with Alzheimer’s disease and vascular dementia. Cochrane Database Syst. Rev. 12, CD013304 (2021).

Luoa, J. et al. Endovascular therapy versus medical treatment for symptomatic intracranial artery stenosis. Cochrane Database Syst. Rev. 8, CD013267 (2023).

Gross, A. et al. Exercises for mechanical neck disorders. Cochrane Database Syst. Rev. 1, CD004250 (2015).

Kamo, T. et al. Repetitive peripheral magnetic stimulation for impairment and disability in people after stroke. Cochrane Database Syst. Rev. 9, CD011968 (2022).

Zhang, Y. et al. SummN: a multi-stage summarization framework for long input dialogues and documents. In Proc. 60th Annual Meeting of the Association for Computational Linguistics (Vol. 1: Long Papers) 1592–1604 (Association for Computational Linguistics, 2022).

Zhang, Y. et al. An exploratory study on long dialogue summarization: what works and what’s next. In Findings of the Association for Computational Linguistics: EMNLP 2021 4426–4433 (Association for Computational Linguistics, 2021).

Singhal, K. et al. Large language models encode clinical knowledge. Nature 620, 172–180 (2023).

Tang, L. et al. EchoGen: generating conclusions from echocardiogram notes. In Proc. 21st Workshop on Biomedical Language Processing 359–368 (Association for Computational Linguistics, 2022).

Jeblick, K. et al. ChatGPT makes medicine easy to swallow: an exploratory case study on simplified radiology reports. Preprint at https://arxiv.org/abs/2212.14882 (2022).

Clason, D. & Dormody, T. Analyzing data measured by individual Likert-type items. J. Agric. Educ. 35, 31–35 (1994).

Acknowledgements

This work was supported by the National Library of Medicine (NLM) of the National Institutes of Health (NIH) under grant number 4R00LM013001, 1R01LM014306, and 5R01LM009886, NIH Bridge2AI (OTA-21-008), National Cancer Institute (NCI) of NIH under grant number P30CA013696, National Science Foundation under grant numbers 2145640, 2019844, and 2303038, and Amazon Research Award. The content is solely the responsibility of the authors and does not necessarily represent the official views of the NIH and NSF.

Author information

Authors and Affiliations

Contributions

L.T. implemented the methods, conducted the experiments, and wrote the paper. Z.S. implemented the methods and conducted the experiments. B.I., J.G.N., A.S., P.A.E., Z.X., and J.R. conducted the human evaluation. Y.D. and G.D. edited the paper. J.R., C.W., and Y.P. advised on all aspects of the work involved in this project and assisted in the paper writing. All authors read and approved the final manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Tang, L., Sun, Z., Idnay, B. et al. Evaluating large language models on medical evidence summarization. npj Digit. Med. 6, 158 (2023). https://doi.org/10.1038/s41746-023-00896-7

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41746-023-00896-7