Abstract

Pain is a complex and personal experience that presents diverse measurement challenges. Different sensing technologies can be used as a surrogate measure of pain to overcome these challenges. The objective of this review is to summarise and synthesise the published literature to: (a) identify relevant non-invasive physiological sensing technologies that can be used for the assessment of human pain, (b) describe the analytical tools used in artificial intelligence (AI) to decode pain data collected from sensing technologies, and (c) describe the main implications in the application of these technologies. A literature search was conducted in July 2022 to query PubMed, Web of Science, and Scopus. Papers published between January 2013 and July 2022 were considered. Forty-eight studies are included in this literature review. Two main sensing technologies (neurological and physiological) are identified in the literature. The sensing technologies and their modality (unimodal or multimodal) are presented. The literature provided numerous examples of how different analytical tools in AI have been applied to decode pain. This review identifies different non-invasive sensing technologies, their analytical tools, and the implications for their use. There are significant opportunities to leverage multimodal sensing and deep learning to improve the accuracy of pain monitoring systems. This review also identifies the need for analyses and datasets that explore the inclusion of neural and physiological information together. Finally, challenges and opportunities for designing better systems for pain assessment are also presented.

Introduction

In 2020, the International Association for the Study of Pain (IASP) revised the definition of pain, which currently reads1: “An unpleasant sensory and emotional experience associated with, or resembling that associated with, actual or potential tissue damage.” This definition encapsulates that pain has sensory and affective elements, both nociceptive (physiological encoding and processing of noxious stimuli) and neuropathic (it can happen at any time without a pain-inducing event) pain experiences, and a cognitive element indicated in the anticipation of potential harm. In addition, the revised IASP definition notes that a “verbal description is only one of several behaviours to express pain.” Based on this definition and interpretation, for this review, one can argue that pain can be measured in multiple ways and in multiple contexts.

Pain can be thought of as a construct that can be assessed using different approaches: self report, behaviour (e.g., vocalisations, facial expressions, body movement), and physiological activity2. Self reports, or patient reported measures, have been considered the gold standard in pain assessment in clinical practice. Self-reporting tools, such as the numerical rating scale (NRS) or the visual analogue scale (VAS), provide a fast and simple way to measure pain, require minimal effort to administer and are easily understood by the clinician and patient3. These metrics rely on the patients’ ability to assess and communicate their own pain experience. Another alternative is the verbal rating scale (VRS), which is sometimes used for individuals (e.g., young children, adolescents or adults with speech problems or learning disabilities) who have difficulty translating their pain experience into a numerical value; this metric instead uses words to describe the magnitude of the pain experience. A disadvantage of this metric is that patients may find the VRS difficult to answer, since the answers describing pain may be ambiguous and may not represent the best fit to their pain experience4. In addition, fluency in the language used for the VRS can be a barrier to effective assessment of pain5.

Behavioural measures can be used in individuals with impaired cognition or language skills, or in patients for whom self report is not possible or invalid. These tools capture facial expressions (including grimace, opened mouth, raising of eyebrows)6, vocalisations (e.g., crying, moaning, screaming)7, or bodily movements (e.g., posture, rigid or tense body, rest and sleep)8 as indicators of pain from the perspective of an external observer, e.g., nurses, doctors, or carers. Thus, these metrics are completely dependent on others to be attentive to nonverbal signs of pain, which represents a challenge since the trained observers must be able to reliably distinguish pain from a variety of other facial and bodily expressions9. A clear limitation of this type of metric is observer error and bias, since there is a possibility that two trained observers might interpret behaviours differently. Other limitations of this measure include (1) individual differences in the expression of pain, which may be considerable from patient to patient; (2) the tendency of patients to alter their behaviour in the presence of an observer10; or (3) the inability of some populations to display signs of pain due to early developmental stage in infants or the use of sedatives or blocking agents that may mask pain behaviours11.

Physiological measures are an alternative when self reports are not available or as a complement to a clinical assessment. Physiological measures might be more accurate than behavioural measures for patients with intellectual disability or non-verbal patients12. Physiological measures of pain are based on the assumption that pain induces changes in autonomic activity of the nervous system and that these variations can be observed using different sensors that measure nervous system physiology. Studies of physiological responses in non-verbal patients, infants, and children in clinical settings often include methods of assessment observing derived cardiovascular and respiratory parameters, such as heart rate, blood pressure, respiration rate, oxygen saturation rate, skin sweating, and pupil size variations13,14. In this context, the use of sensors to measure physiological changes is of great importance, since their use can provide a precise, systematic, and simultaneous assessment of different physiological indicators.

The appropriate management of pain is an essential element of care. This ethical duty to treat pain was highlighted by the Declaration of Montreal, which calls for “access to pain management as a fundamental human right”15. To support this ethical duty, there is a need to obtain an objective, reliable, and accurate physiologic marker of pain that can assist clinicians to establish the most beneficial treatment for patients in pain. The field of pain management would benefit enormously from further advancement in objective, physiologic markers of pain3. To gain insight into how neurophysiological indicators can serve as valid measures of pain, it is important to understand the underlying mechanisms of how neurophysiological signals can be used to capture pain. To this end, we will discuss some of the most common aspects of the nervous system that provide insights into pain.

Aetiology of pain

In the event of painful stimuli on the body, pain is mediated by processing in the nervous system. The nervous system consists of two subsystems, the peripheral nervous system and the central nervous system (CNS). The main function of the CNS is the integration and processing of sensory information in the body. The CNS consists of the brain and the spinal cord. Information regarding the effect of painful stimuli on the affected area (within the body or on extremities and trunk) is transported through the peripheral nervous system to the central and autonomic nervous systems by means of neural afferent pathways16. This process, in which the brain is informed of actual or potential tissue damage, is known as nociception. It is worth noting that in most cases, nociceptive stimulation (e.g., bruises, cuts, fractures) leads to pain; however, pain (e.g., phantom limb pain) can occur in the absence of any noxious stimulation. In this regard, nociceptive pain is often acute and brief in duration, and originates in response to a sufficiently intense stimulus.

Sensory pain receptors (also known as nociceptors) are sensory neurons that are attached to thin afferent nerve fibres (located at the skin, muscle, joints, bone, and viscera) and terminate in the dorsal horn of the spine17. Nociceptors help detect signals from potentially damaging stimuli in the body and can be activated when the stimulus is sufficiently intense. A nociceptor is a type of receptor with a high threshold that responds to noxious thermal, chemical, or mechanical forces. This may be an intense mechanical stimulus such as cutting or pinching of the skin, intense heat or cold on the skin, or exposure to noxious chemicals16. When nociceptors are activated, the nociceptive signals are relayed to the spinal cord and transmitted to the thalamus within the CNS. The thalamus serves as a central hub for sensory information before the signal is transported to different cortical areas of the brain responsible for integrating the information and response18. Contrary to well-defined sensory areas of the brain, such as the auditory cortex or visual cortex, there is no single pain cortex associated with pain perception19. Instead, there are multiple cortical and sub-cortical areas (also known as the pain matrix) that are associated with pain processing. These include the primary and secondary somatosensory cortex (S1, S2), anterior cingulate cortex (ACC), prefrontal cortex (PFC), insular cortex, nucleus accumbens, amygdala and thalamus20,21.

The peripheral nervous system has two major subdivisions. The somatic nervous system is associated with predominantly voluntary activities, such as relaying instructions from the CNS to the muscles for voluntary movement. The autonomic nervous system is related to the regulation of involuntary, physiologic processes, such as regulating heart rate, blood pressure, or respiration22. The autonomic system is particularly associated with pain, since painful stimuli elicit an autonomic response (e.g., muscles will tighten, heart rate increases, skin temperature will fall) as an automatic defensive response in the body to avoid any further damage and to escape the painful stimulus. This autonomic response occurs irrespective of awareness or the pain experience16,23. In addition, the body will remember the noxious experience and can replicate the responses in the occurrence of similar events.

The autonomic nervous system (ANS) has two main branches that act at the same time, the sympathetic (SNS) and parasympathetic nervous systems (PNS). These two regulate and control different autonomic functions in a number of vital organs. For instance, in case of painful stimuli, the SNS is involved in the body’s immediate response to danger (e.g., severe or acute pain); this autonomic reaction to pain is also known as the “fight or flight” response. The SNS activates a neurophysiological response that includes the regulation of blood flow, blood pressure, and vascular tone, and produces changes in blood pressure (BP), heart rate, sweat release, and pupil diameter24; thus, pain produces an increase of heart rate, blood pressure, oxygen intake, and sweat release, and leads to pupil dilation25. On the other hand, the PNS exhibits an inhibitory mechanism to pain that helps conserve and restore those neurophysiological responses excited during the sympathetic response. Among other functions, the PNS slows the heart rate, decreases respiratory rate, and constricts pupils. These different functions make the measurement of pain complex.

Currently, there are sensing technologies that afford a non-invasive measure of the ANS and CNS. For instance, available metrics to measure changes in the ANS include heart rate (HR) and heart rate variability (HRV) (both HR and HRV can be obtained either by electrocardiography (ECG) or photoplethysmography (PPG)), electrodermal activity (EDA), respiration (RESP), electromyography (EMG), and pupillometry. Similarly, there are non-invasive methods to measure activity in the CNS. Brain activity is measured by means of imaging techniques, such as electroencephalography (EEG), functional near-infrared spectroscopy (fNIRS), functional magnetic resonance imaging (fMRI), and magnetoencephalography (MEG). However, fMRI and MEG tend to be more intrusive26 and are, therefore, not considered in this review. The data obtained from each sensing technology must be subsequently analysed to enable an assessment of pain to be made.

The recording or measurement of physiological response requires either real-time or post-collection processing and analysis. A range of processing and analysis techniques have been used in conjunction with the sensing technologies over many decades. In addition, there have been considerable advances in the use of tools, such as artificial intelligence (AI), to rapidly and more reliably determine an individual’s pain state. The development of AI tools combined with appropriate analytical models has made physiological sensors an ideal source of data to assist clinicians to make a more reliable and well-informed diagnosis of pain. With different advances in non-invasive sensing technologies and analysis approaches, which have emerged over the past decade, there is a need to explore the critical role they play in pain assessment.

Therefore, the purpose of this review is to integrate the literature on the different sensing technologies for the objective assessment of pain. This review also identifies how the information obtained by these sensors can be employed to develop autonomous mechanisms that can assist in estimating an individual’s pain. The contributions of this paper can be summarised as follows: (a) presenting a summary of the different sensing technologies that can be used in measuring an individual’s pain experience, which also includes a summary of the expected neurophysiological response (e.g., increase or decrease) during pain; (b) offering a detailed summary of analytical methods, including pre-processing, feature extraction and optimisation, and learning problem (e.g., classification or regression) results; (c) presenting a summary of the practical implications of the use of each sensing technology; and (d) providing a detailed discussion of the identified challenges and possible opportunities for designing better systems for pain assessment.

Results

In this section, the study selection process, including the steps considered for the selection of articles in this review, is presented. In addition, the results for each of the research questions are presented in the following subsections.

Study selection

Figure 1 presents the article identification and selection process. The search strategy retrieved 553 studies from the three databases, and an additional 8 papers were manually included after searching the reference lists of the identified studies. After removing duplicates found in different sources, 435 studies remained for further review. After screening the titles and abstracts against the inclusion criteria, 382 articles were discarded. The remaining 53 articles were read in their entirety. From those, a total of five papers were rejected for the following reasons: (1) they were different instances of the same study (n = 3), and (2) there was not enough information about the sensors and/or the analysis (n = 2). The remaining 48 papers were included in this review.

An overview of selected papers for this review is presented in Table 1. The summary presents information about the type of pain (e.g., electrical, thermal, postoperative, and sickle cell disease), the type of noxious stimulation used to elicit pain (e.g., laser, cold pressor test, thermode) and the anatomical location where the stimulus was applied (e.g., hand, arm, abdomen), and the population included (e.g., gender and age range) in each study. In addition, the name of the sensor used to measure the neurophysiological response is also included. Based on the anatomical location, two categories can be observed in this summary: sensors that measure neural/brain activity (e.g., fNIRS, EEG) and sensors that measure other physiological activity (e.g., EDA, ECG, EMG, PPG). Because this review is restricted to non-invasive methods, the term surface EMG (sEMG) will be used hereinafter. Therefore, in the remainder of this paper, these two categories are referred to as neurological and physiological sensors, respectively.

Sensors used for pain assessment

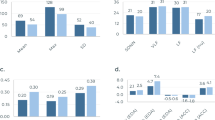

As described earlier, the two main categories of sensors used for assessing pain in the literature are neurological and physiological sensors. Among the 48 studies, 27 studies (56%) assessed pain using physiological sensors, 20 studies (42%) assessed pain using neurological sensors, and only 1 study (2%) used a combination of neurological and physiological sensors. The most popular sensors for the assessment of pain were EDA (n = 20), ECG (n = 14), and EEG (n = 14), while the least popular were movement sensors (accelerometer and gyroscope), Pupil, SpO2, and BP. Although SpO2, BP, and pulse can be obtained from PPG, there was no indication of the type of sensor employed to obtain these metrics; therefore, we decided to keep these metrics separate in the plot. It is also important to mention that, while the majority of studies used a single type of sensor (unimodal = 30), some of the retrieved studies used a combination (multimodal = 18) of two or more sensors for the assessment of pain. In addition, most of the studies using a multimodal approach employed physiological sensors only (n = 17). Of the studies using unimodal sensing, most (n = 20) used neurological sensors and only some (n = 10) used physiological sensors. Figure 2 presents the distribution of the reviewed studies along with their type of sensor modality (unimodal/multimodal).

EDA electrodermal activity, ECG electrocardiogram, EEG electroencephalography, PPG photoplethysmography, EMG electromyography, fNIRS functional near-infrared spectroscopy, RESP respiration, SKT skin temperature, MOVE gyroscope/accelerometer/steps, Pupil pupillometry, SpO2 oxygen saturation, BP blood pressure.

In the following subsections, we report on the different sensors used for pain assessment. In addition, information regarding the effect of pain on the observed neurophysiological response (e.g., increase or decrease) with respect to each type of sensor and the location of the recording sensor on the participants of each study is also reported. This information is important for the interested reader who wants to explore the use of these sensors and replicate similar results.

Physiological measures

In the search for an accurate and objective method for pain recognition, various physiological signals have been explored as potential indicators for pain. These signals are: Electrodermal activity (EDA), surface Electromyography (sEMG), Electrocardiogram (ECG), Respiration (RESP), Oxygen saturation (SpO2), Blood Pressure (BP), Movement (MOVE), Skin temperature (SKT), Pupillary response (PUPIL), and Photoplethysmography (PPG). A summary of physiological sensors used in the assessment of pain is presented in Table 2.

Among all of the physiological signals identified in the literature, electrodermal activity (EDA) was the most popular. In addition, EDA has been one of the most widely used physiological indicators in psychology, psychiatry, and psychophysiology research27. It is also referred to as galvanic skin response (measuring changes in the skin’s electrical resistance) or skin conductance response (measuring changes in the skin’s electrical conductance)28. This type of sensor measures the changes in sweat gland activity, which are affected by the sympathetic nervous system29. Increased sweating reduces (↓) skin resistance level (SRL), or in other words, the skin conductance level (SCL) increases (↑). When external stimuli are presented (e.g., visual, auditory, noxious, etc.), the skin momentarily becomes a better conductor of electricity27. This phenomenon is normally recorded from palmar and plantar surfaces because of the higher density of glands in these areas30. In the searched literature, most studies used the palmar surface of two fingers (e.g., index and middle fingers) using electrodes29,31,32,33,34,35,36,37,38,39,40,41, while a small number of studies used a wristband to measure EDA on the wrist42,43,44,45.

Another popular physiological measure for indicating pain perception is electrocardiography (ECG). Since the autonomic nervous system regulates internal bodily functions involuntarily (e.g., those of the cardiovascular system), the heart plays a primary role. The heart provides a consistent flow of oxygenated blood (HbO), first by pumping deoxygenated blood (HbR) into the lungs (pulmonary circulation) for re-oxygenation, and then by sending HbO to the rest of the body (systemic circulation)27. The ECG signal is an indication of the heart’s contractile activity. The most significant parameters related to pain that have been derived from ECG signals are heart rate (HR) and heart rate variability (HRV)46. HR is a measure of the number (in heartbeats per minute or bpm) of contractions of the ventricles and HRV is a measure of the variance in time between heartbeats. An increase (↑) of HR and a decrease (↓) of HRV is associated with a stress response47,48. ECG can be measured on the thorax surface or in the limbs. Most surveyed studies used the thorax34,35,36,37,38,39,40,41,49, while other studies used either the wrists or the ankles33,46 to monitor the heart activity.
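As a minimal sketch of how HR and HRV can be derived once the R-peaks of an ECG have been located, the Python snippet below computes mean heart rate and two commonly used time-domain HRV statistics (SDNN and RMSSD). The function names and the choice of SDNN and RMSSD are assumptions made for illustration; the reviewed studies do not prescribe a specific HRV index here, and R-peak detection itself is taken as already done.

```python
from math import sqrt
from statistics import mean, stdev


def rr_intervals(r_peak_times_s):
    """Return successive R-R intervals (seconds) from R-peak timestamps (seconds)."""
    return [later - earlier
            for earlier, later in zip(r_peak_times_s, r_peak_times_s[1:])]


def heart_rate_bpm(rr_s):
    """Mean heart rate in beats per minute: 60 divided by the mean R-R interval."""
    return 60.0 / mean(rr_s)


def sdnn_ms(rr_s):
    """Sample standard deviation of the R-R intervals, in milliseconds (an HRV index)."""
    return 1000.0 * stdev(rr_s)


def rmssd_ms(rr_s):
    """Root mean square of successive R-R differences, in milliseconds (an HRV index)."""
    squared_diffs = [(b - a) ** 2 for a, b in zip(rr_s, rr_s[1:])]
    return 1000.0 * sqrt(mean(squared_diffs))


# Hypothetical R-peak timestamps (seconds), for illustration only.
peaks = [0.0, 0.8, 1.7, 2.5, 3.4]
rr = rr_intervals(peaks)
print(heart_rate_bpm(rr), sdnn_ms(rr), rmssd_ms(rr))
```

Under the pattern described above, a painful stimulus would be expected to shorten the R-R intervals (higher HR) and reduce their spread (lower HRV).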

Electromyography (EMG) is another typical physiological recording method used to assess pain. This technique measures the electrical muscle activity originated by muscle contractions and propagated through the surface of the skin (i.e., surface EMG). In general, muscle contractions are associated with reflexes and actions characteristic of behaviour27. Electrical muscle activity is also a sign of general psychophysiological stimulation, as higher muscle tone is related to increased sympathetic nervous system activity and reduced somatomotor activity is primarily due to parasympathetic stimulation39. Although there is no single muscle that can be targeted as an indicator of pain, in the area of affective computing, increased (↑) activity of the trapezius muscle is related to high stress39, while increased (↑) activity in the zygomaticus and corrugator muscles has been linked to an elicited response to unpleasant imagery50. In the reviewed literature, the trapezius muscle (located in the upper back, neck, near the shoulder area)34,35,37,38,39, and the zygomaticus major and corrugator supercilii muscles (located in the cheek and above the brow, respectively)41 were used to measure muscle activity.

Another effective technique in detecting physiological changes is photoplethysmography (PPG). It is an optical method for detecting blood volume changes in the microvascular bed of tissue. It is frequently used to take measurements at the skin’s surface in a non-invasive manner, either by transmittance mode (e.g., a clip on the earlobe or finger) or by reflectance mode (e.g., a wrist watch)51. The PPG signal is multi-functional because it enables the extraction of many physiological indicators such as HR (or pulse rate), peripheral oxygen saturation (SpO2), and respiration rate reflecting the autonomic nervous system reaction52. The most common places to measure blood volume are the finger and the earlobe27. The thumb33 and the index finger40,49,52 of the non-dominant hand, and the wrist43,44 were the most popular anatomical locations in the reviewed literature. A decrease (↓) of blood volume pulse33,40, an increase (↑) in HR43,44,49,52,53, and a decrease (↓) of pulse transit time33 have been reported during different pain stimuli.

Skin temperature (SKT) is another metric for the identification of pain in the reviewed literature. The skin is a natural barrier that prevents the entry of external matter into the body and also allows the transmission of bodily fluids from the bloodstream to the exterior of the body27. The skin helps in the maintenance of water balance and core body temperature, which is achieved by generation of sweat (thermoregulation). Evidence suggests that generation of sweat changes the skin resistance, i.e., the more sweat, the lower the skin resistance27. Similar to EDA, this metric can be measured on palmar and plantar surfaces because of the higher density of glands in these areas30. In refs. 43,44, the skin temperature was measured on the wrist, while in ref. 33 it was measured on the fingertips. In addition, a decrease (↓) of local skin temperature has been observed after painful stimuli53.

Various other metrics, such as respiration (RESP), oxygen saturation (SpO2), blood pressure (BP), movement (MOVE), and pupil changes (PUPIL), were also identified as possible indicators of pain. Respiration rate (also known as breathing rate) is a measure of the number of breaths per minute and it can be measured using an elastic belt worn around the thorax34,41. Respiratory changes often occur in response to pain, for instance, an inspiratory gasp with a subsequent breath-hold in response to acute pain54. In the reviewed studies, an increase (↑) in inspiration34,41 and a decrease (↓) of breathing rate53 were reported. Although RESP, SpO2 and BP can be obtained using PPG, in ref. 53 there was no indication of the method used to acquire these metrics; this study reported a decrease (↓) of breathing rate, an increase (↑) of oxygen saturation, and a decrease (↓) of systolic and diastolic blood pressure. Movement metrics have been used to indicate changes in motor behaviour (e.g., slow movement, shorter duration, or fractionated movement)55. In ref. 53, a decrease (↓) in movement and number of steps, as measured by a 3-axial accelerometer and a 3-axial gyroscope, was reported. Finally, pupillary response to pain was investigated using cameras mounted on eye-tracking glasses; an increase (↑) in pupillary diameter (i.e., dilation) was observed as a response to painful stimulation56.

Neurological measures

As the human brain is the centre of any response to a given stimulus, it is believed that neurological signals are highly correlated with complex integrative functions, such as sensory and motor integration57. In the reviewed literature, two types of neurological sensors were used to assess pain: (1) electroencephalography (EEG) and (2) functional near-infrared spectroscopy (fNIRS). Table 2 also presents a summary of the reviewed studies using neurological sensors.

In the reviewed studies, the most popular method of assessment of pain using neurological sensors was electroencephalography (EEG). EEG measures the brain’s electrical activity, and pattern analysis of this activity is used to indicate neural activation associated with pain at certain frequencies. Spectral analysis is employed to decompose EEG signals into their constituent frequency components, between 1 and 60 Hz58. Typically, EEG data are partitioned into five bands (from slowest to fastest: delta, theta, alpha, beta, and gamma). The power spectral density (PSD) in each band is computed and used to compare the conditions being studied (i.e., pain vs. no pain). EEG is also considered the most popular approach in the literature to objectively assess other cognitive states such as attention, cognitive workload, or vigilance.

EEG studies have identified correlations between pain and the power at different EEG frequency bands. Power in the delta band (1–4 Hz) has exhibited an increase (↑) after mechanical (pressure) pain59 and cold pressor test60,61. Power in the theta band (4–8 Hz) has also shown an increase (↑) after mechanical59 and thermal pain62. Power in the alpha band (8–12 Hz) was the most common indicator in the literature; the power in this band showed a decrease (↓) after cold pressor test60,61,63 and noxious laser stimulation64. Power in the beta band (12–30 Hz) showed an increase (↑) after heat stimulation65. Finally, power in the gamma band (30–45 Hz) exhibited an increase (↑) after heat stimuli62,65 and cold pressor test63.
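The band-power comparison described above can be sketched as follows. This is a minimal, dependency-free illustration that assumes a single EEG channel sampled at a fixed rate; it uses a naive discrete Fourier transform (a plain periodogram) rather than whatever spectral estimator a given study used, and the function names are hypothetical. In practice an averaged estimator such as Welch's method from a signal-processing library would usually be preferred. The band edges follow the ranges quoted in the text.

```python
import cmath
import math

# Band edges in Hz, taken from the ranges quoted in the text.
BANDS = {
    "delta": (1, 4),
    "theta": (4, 8),
    "alpha": (8, 12),
    "beta": (12, 30),
    "gamma": (30, 45),
}


def periodogram(signal, fs):
    """Return (frequencies, power) for the non-negative frequencies of `signal`.

    Power is the squared DFT magnitude divided by the number of samples, so the
    scale is arbitrary and only suitable for comparing bands or conditions.
    """
    n = len(signal)
    offset = sum(signal) / n
    centred = [x - offset for x in signal]  # remove the DC component
    freqs, power = [], []
    for k in range(n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centred)
        )
        freqs.append(k * fs / n)
        power.append(abs(coeff) ** 2 / n)
    return freqs, power


def band_powers(signal, fs):
    """Sum the periodogram power falling inside each EEG band."""
    freqs, power = periodogram(signal, fs)
    return {
        name: sum(p for f, p in zip(freqs, power) if low <= f < high)
        for name, (low, high) in BANDS.items()
    }


# Synthetic 2-second trace: a pure 10 Hz oscillation sampled at 128 Hz.
fs = 128
trace = [math.sin(2 * math.pi * 10 * i / fs) for i in range(2 * fs)]
print(band_powers(trace, fs))
```

Comparing the resulting dictionaries between a pain trial and a no-pain trial gives the kind of per-band increase or decrease summarised above.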

The second approach to assess pain with neurological sensors is to measure activation of different brain regions using functional near-infrared spectroscopy (fNIRS). This technique examines the levels of oxygenated (HbO) and deoxygenated (HbR) haemoglobin concentration in the cerebral cortex66,67. fNIRS is commonly used in this regard to measure the amount of HbO, due to its better signal-to-noise ratio than HbR, in a given brain region in response to a noxious stimulation68. Different studies have reported that increased (↑) levels of HbO in different cortical areas correlate with increased stimulation after heat and cold66,69, mechanical and electrical70, and cold pressor test71. Although none of these studies reported the use of HbR, it is well known that HbR has the opposite effect to HbO.

Techniques for the analysis of sensor data in pain assessment

Several studies have addressed the problem of automatic pain assessment using machine learning or deep learning methods by analysing either individual or multiple physiological signals. Traditional machine learning methods rely on the design of manual feature extraction and feature optimisation to improve model performance. On the other hand, deep learning methods learn the intrinsic representations of the data to extract features directly from the data without the need for hand-crafted features. Regardless of the method for feature derivation, two types of learning problems were identified in the reviewed literature: (1) classification, which qualitatively predicts the pain intensity by classifying trials into two (e.g., pain, no pain) or more levels (no pain, low, medium, high pain); and (2) regression, which quantitatively predicts the pain intensity as a continuous value (e.g., 0–10)72. In this section, we present the analytical methods used to decode pain from neurophysiological signals in the reviewed literature. Table 3 exhibits a summary of the analytical methods used in the reviewed studies. In addition, Fig. 3 presents the data analysis pipeline; this diagram is used to organise the findings from the literature into four main categories: pre-processing, feature extraction, feature optimisation, and learning models (classification/regression).
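To make the distinction between the two learning problems concrete, the sketch below shows how the same 0–10 pain rating could serve as a target in each case. The function names and the cut-points between levels are hypothetical and chosen only for illustration; the reviewed studies define their own levels.

```python
def regression_target(rating):
    """Regression: the continuous 0-10 rating itself is the value to predict."""
    return float(rating)


def to_binary_label(rating, threshold=1):
    """Two-class problem: ratings at or above `threshold` count as pain.

    The default threshold is an illustrative assumption.
    """
    return "pain" if rating >= threshold else "no pain"


def to_multiclass_label(rating):
    """Four-class problem with illustrative (not study-derived) cut-points."""
    if rating < 1:
        return "no pain"
    if rating <= 3:
        return "low"
    if rating <= 6:
        return "medium"
    return "high"


for rating in (0, 2, 5, 9):
    print(rating, regression_target(rating),
          to_binary_label(rating), to_multiclass_label(rating))
```

A classifier would be trained against the discrete labels, whereas a regressor would be trained against the numeric rating directly.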

Pre-processing methods

The first step in the data analysis pipeline is pre-processing, which is generally done after signal acquisition. It is imperative to reduce or eliminate noise present in the captured data by implementing different pre-processing strategies, which are generally specific for each type of sensor. Noise (e.g., artefacts) can be defined as the unwanted changes that a signal may capture during data acquisition73. The signal within each sensor is typically a combination of the neurophysiological response to pain (or to the presented stimulus), environmental noise (e.g., power lines, electronic equipment), confounding factors (e.g., respiration or heartbeat in fNIRS data), or motion artefacts (e.g., body movement, poor sensor contact with the skin). When the noise components are stronger than the physiological data, the quality of the data may interfere with the analysis, its interpretation, and the validity of the data. The objective of pre-processing is to improve the quality of the signal by removing trends, filtering noise and artefacts, and in some cases, re-sampling the signal to reduce complexity in the analysis. Thus, pre-processing methods are an important step in the data analysis pipeline. In the following paragraphs, the processing steps for each individual sensor are presented.

EDA is vulnerable to several types of noise, including electronic noise and movement between the skin and the recording electrodes. In the reviewed literature, the most widely used technique to remove signal artefacts and noise is low-pass filtering. This technique smooths variations (e.g., movement, electrode pressure) in the signal31,34,35,45 and also removes noise from the power source (50 Hz or 60 Hz)42 by attenuating unwanted (high) frequencies above a given cutoff frequency. A similar technique to reduce high-frequency components associated with artefacts is the use of moving windows; the moving median window29,31,32 and the moving average window37,38,40,42,43 are two examples. These windowing techniques eliminate extraneous data by computing the median and the mean value, respectively, within the window width (e.g., 5-s width). Another popular pre-processing technique used in the reviewed literature is downsampling the original signal. Downsampling is often used to reduce the data rate or the size of the data to speed up computation and reduce complexity29,31,32,40,42,44,45.
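
The three EDA steps described above (low-pass filtering, a moving median window, and downsampling) can be sketched as follows. This is a minimal illustration using SciPy, not the procedure of any reviewed study; the sampling rate (32 Hz), cutoff (1 Hz), window width (5 s), and downsampling factor are assumed values chosen only for the example, and `preprocess_eda` is a hypothetical helper name.

```python
import numpy as np
from scipy.signal import butter, decimate, medfilt, sosfiltfilt

def preprocess_eda(eda, fs=32.0, cutoff_hz=1.0, window_s=5.0, down_factor=4):
    """Low-pass filter, median-smooth, and downsample an EDA trace.

    All default values are illustrative assumptions.
    """
    # Zero-phase 4th-order Butterworth low-pass filter
    sos = butter(4, cutoff_hz, btype="low", fs=fs, output="sos")
    filtered = sosfiltfilt(sos, eda)
    # Moving median window; medfilt requires an odd kernel length
    kernel = int(window_s * fs) | 1
    smoothed = medfilt(filtered, kernel_size=kernel)
    # Downsample; decimate applies its own anti-aliasing filter first
    return decimate(smoothed, down_factor)

# Synthetic trace: slow tonic drift plus broadband sensor noise
rng = np.random.default_rng(0)
t = np.arange(0, 60, 1 / 32.0)
raw = 2.0 + 0.1 * np.sin(2 * np.pi * 0.05 * t) + 0.05 * rng.standard_normal(t.size)
clean = preprocess_eda(raw)
```

Replacing `medfilt` with a convolution against a uniform kernel would give the moving average variant.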

sEMG data are also susceptible to different types of noise, for instance, electromagnetic radiation from power sources, high skin impedance, contamination due to relative motion of the sensor on the skin, cross-talk from nearby muscles, interference with the electrode cable or sensor detachment (also known as clipping), and ECG artefacts often observed from the muscles in the trunk74. Common motion artefacts, such as those from the electrode interface or electrode cable detachment, can be reduced by a better design of the system set-up75. On the other hand, inherent noise due to muscle cross-talk or instability of sEMG signals is believed to affect frequencies in the region of 0 to 20 Hz, which is the firing rate of the motor units75. In the reviewed literature, these kinds of noise were attenuated with a band-pass filter to remove low and high frequencies from the signal34,35,37,38,39,41. The low-frequency cutoff (typically around 20 Hz) of the filter removes muscle cross-talk and inherent sEMG instability, while the high-frequency cutoff (typically greater than 200 Hz) removes high-frequency interference, such as movement artefacts, cable interference, and clipping76.
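
As an illustration of the band-pass step, the sketch below applies a zero-phase Butterworth filter with a 20 Hz low cutoff and a 250 Hz high cutoff to a synthetic signal. The sampling rate (1000 Hz), filter order, exact cutoffs, and the helper name `bandpass` are assumptions made for the example rather than settings reported in the reviewed studies.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def bandpass(signal, fs, low_hz, high_hz, order=4):
    """Zero-phase Butterworth band-pass filter."""
    sos = butter(order, [low_hz, high_hz], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, signal)

fs = 1000.0
t = np.arange(0, 2, 1 / fs)
drift = np.sin(2 * np.pi * 2 * t)          # 2 Hz component below the pass band
burst = 0.5 * np.sin(2 * np.pi * 80 * t)   # 80 Hz component inside the pass band
emg = bandpass(drift + burst, fs, low_hz=20.0, high_hz=250.0)
```

The same helper could be reused for the band-pass filters mentioned for other sensors by changing the cutoffs.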

ECG signals are mainly affected by sources of noise such as baseline drift, artefacts due to electrode motion, muscle contractions, and power source interference77. Baseline drift is a type of noise that presents an erratic up and down movement in the baseline signal, which is often a result of improper electrode placement or movement78. Muscle noise (i.e., EMG cross-talk) also affects the ECG signals, in particular in recordings involving human activity or exercise. In the reviewed studies, a moving average window40,46 or a linear detrend37,49 were used to smooth the signal and remove baseline drift (0.05–1 Hz). A band-pass filter35,38,39 was used to remove the baseline drift, movement (muscular and electrode) artefacts, and muscle cross-talk. A high-pass filter40 can be used to remove powerline interference (50 or 60 Hz), or motion artefacts and muscle cross-talk (ranging from 20 to 1000 Hz)79.

PPG is corrupted by various kinds of noise regardless of the type of sensor design (reflectance or transmission). A typical PPG signal contains two main components: a large DC component due to the light absorbed when passing through the skin, tissue, and bone; and a small AC component due to the light passing through pulsating arteries caused by the heartbeat80. Common sources of noise in PPG signals are powerline noise caused by electromagnetic interference (e.g., ambient light, computer screens), motion artefacts from sensor and/or body movements affecting the optical path, and physiological confounders (e.g., breathing). In the reviewed literature, a Savitzky-Golay filter49 was used for smoothing artefacts. A combination of low-pass and high-pass filters was used to remove high-frequency interference and baseline drift (low-frequency), respectively52. Similarly, a band-pass filter40 was used to remove both low- and high-frequency noise. Moving average windows at two different periods were used to make the vasoconstriction and heartbeat intervals more salient in the PPG signal and to remove motion artefacts52.

Other physiological signals, such as RESP, MOVE (gyroscope, accelerometer, and step count), PUPIL, and SKT, are also susceptible to noise. For instance, MOVE signals are highly affected by large voluntary and involuntary movements81. Similarly, respiration data are affected by movement artefacts. Blinks are the major source of noise in pupillometry. Skin temperature sensors are affected by movement artefacts or sensor detachment. In the reviewed literature, a band-pass filter was used to compensate for movement artefacts in RESP data34, a moving average window was implemented to smooth the signal and reduce artefacts in the MOVE and SKT data43, and a pupil diameter velocity method was used to remove high-frequency noise56.

EEG signals are also susceptible to various forms of noise, which affect their data quality. Sweating and drifts in electrode impedance often lead to slow changes in the measured potential, producing amplifier saturation, distortions, and lost data in the EEG signals. Similarly, muscle contractions typically affect the EEG signals above 100 Hz82. For these reasons, it is recommended to filter out the frequencies below 0.01 Hz and above 100 Hz. In the reviewed literature, two types of filters were implemented to remove these types of noise. In ref. 62, a high-pass filter with a cutoff frequency of 0.25 Hz was used, while in refs. 63,64,72,83,84 a band-pass filter with low and high cutoff frequencies of 0.01–1 Hz and 30–100 Hz, respectively, was used. Another common source of noise is power line interference at 50 or 60 Hz; in ref. 64, a Butterworth band-stop filter (49–51 Hz) was used to remove the power source frequency interference. Another distinct source of noise is eye movements and blinks. In ref. 63, visual inspection and subsequent manual rejection were implemented, while in refs. 62,72,83,84 independent component analysis (ICA) was used, and in ref. 72 the multiple artefact rejection algorithm (MARA) was implemented.
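
The filtering stages described above can be combined in a few lines. The sketch below chains a band-pass filter with a 49–51 Hz Butterworth band-stop filter, which mirrors the band reported in ref. 64; the sampling rate (250 Hz), the 1–100 Hz pass band, the filter orders, and the function name `clean_eeg` are assumptions for illustration only. Ocular artefact removal (ICA, MARA) is not covered here.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def clean_eeg(eeg, fs, band=(1.0, 100.0), line=(49.0, 51.0)):
    """Band-pass the EEG, then suppress power-line interference."""
    sos_band = butter(4, band, btype="bandpass", fs=fs, output="sos")
    sos_stop = butter(2, line, btype="bandstop", fs=fs, output="sos")
    return sosfiltfilt(sos_stop, sosfiltfilt(sos_band, eeg))

fs = 250.0
t = np.arange(0, 10, 1 / fs)
alpha = np.sin(2 * np.pi * 10 * t)          # 10 Hz rhythm to preserve
mains = 0.8 * np.sin(2 * np.pi * 50 * t)    # 50 Hz interference to remove
cleaned = clean_eeg(alpha + mains, fs)
```

For recordings made on a 60 Hz mains supply, the `line` argument would be set to a band around 60 Hz instead.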

fNIRS data are generally contaminated by different sources of noise, and pre-processing is required. Motion artefacts are generally seen as large spikes in the fNIRS data. In the reviewed literature, two hybrid methods were used to remove these artefacts: a discrete wavelet transform with statistical analysis66,69, and spline interpolation with a Savitzky-Golay filter70. In addition, it is expected that high-frequency oscillations in the fNIRS data do not have a neural basis and are contaminated by cardiac pulsation (0.8–1.5 Hz) and respiration (0.16–0.6 Hz)68. Thus, in refs. 66,69,70,71,85,86, a low-pass filter with a cutoff frequency below 0.16 Hz was applied to remove high-frequency noise. Another distinct problem in fNIRS data is the inclusion of extracerebral haemodynamics from the scalp, skull, and systemic variables (e.g., blood pressure) that affect the data. In refs. 69,66, a de-noising procedure using principal component analysis (PCA) was implemented to identify and delete the components representing spurious signals in the fNIRS data.

Feature extraction

The second step in the data analysis pipeline is feature extraction and its subsequent feature optimisation. A feature can be defined as an individual independent variable that serves as input data in a predictive/learning model. Feature extraction refers to the process of converting the sensor signals into numerical features (i.e., properties or characteristics) that can be used to create a predictive model using machine learning or deep learning. Features that better discriminate between input data from different classes (e.g., low, moderate, high pain) generally produce simpler and more accurate results. Since features directly influence the performance of the predictive models and their results, thoughtful consideration should be given to the feature extraction process (also called feature engineering) during data analysis. Several feature extraction techniques are applicable to most signals (e.g., statistical features); however, some are specific to each type of sensor (e.g., QRS-complex from ECG signals).

Statistical metrics are the most widely used features in many signal classification tasks. These kinds of features often provide a good approximation of changes over time (e.g., summary statistics) across the values in the processing window (also called epoch)87. Popular statistical metrics include mean, standard deviation, range, kurtosis, and skewness. In ref. 39, several statistical features were further divided into stationarity, entropy, linearity, variability, and similarity. In addition, statistical features were obtained from most sensors: PPG33,40,43,44, ECG36,37,38,39,40,41,46, sEMG34,37,38,39,41, EDA33,34,37,38,38,40,41,42,43,44, MOVE43,44, PUPIL56, RESP34,41, and SKT33,43,44.
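
To make the idea of window-level summary statistics concrete, the sketch below splits a signal into non-overlapping epochs and computes the metrics listed above for each one. The helper names (`epoch`, `statistical_features`), the 5-s window, and the toy ramp signal are assumptions for illustration; overlapping windows are equally common in practice.

```python
import numpy as np
from scipy.stats import kurtosis, skew

def statistical_features(window):
    """Summary statistics for one processing window (epoch)."""
    window = np.asarray(window, dtype=float)
    return {
        "mean": window.mean(),
        "std": window.std(ddof=1),
        "range": window.max() - window.min(),
        "skewness": skew(window),
        "kurtosis": kurtosis(window),  # excess kurtosis (0 for a normal distribution)
    }

def epoch(signal, fs, window_s):
    """Split a 1-D signal into non-overlapping windows of window_s seconds."""
    n = int(window_s * fs)
    usable = (len(signal) // n) * n  # drop the incomplete trailing window
    return np.asarray(signal[:usable]).reshape(-1, n)

fs = 4.0
signal = np.arange(40, dtype=float)  # toy ramp: 10 s sampled at 4 Hz
features = [statistical_features(w) for w in epoch(signal, fs, window_s=5.0)]
```

Each dictionary in `features` corresponds to one row of the feature matrix passed to a learning model.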

Time-domain features are also popular in time series analysis. These types of features are used to understand the shape of the signals within each window. Time-domain features are useful to find specific information about the signal, e.g., height (amplitude), length, or time to specific events (e.g., peaks, peak-to-peak amplitude). These metrics are important for comparing the signals in different conditions (e.g., no pain and high pain) and also with respect to each sensor. Some of the most popular metrics obtained in the time domain include maximum (max) and minimum (min) values, peak-to-peak values, time to peak, root mean square (rms), wavelength, slope, and area under the curve (AUC). Similar to the statistical features, time-domain features were obtained from most sensors in the reviewed literature: EDA33,34,37,38,39,41, PUPIL56, sEMG34,37,38,39,41, ECG33,37,38,39,41,46, RESP34,41, SKT33, EEG83, and fNIRS69,86.
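
Several of the time-domain metrics named above can be computed directly with NumPy, as in the following sketch. The function name `time_domain_features` and the toy triangular window are assumptions for illustration; the slope is taken here as the coefficient of a least-squares line fit, and the AUC uses the trapezoidal rule, which are common but not the only possible definitions.

```python
import numpy as np

def time_domain_features(window, fs):
    """Shape descriptors of one window sampled at fs Hz."""
    window = np.asarray(window, dtype=float)
    time = np.arange(window.size) / fs
    return {
        "max": window.max(),
        "min": window.min(),
        "peak_to_peak": window.max() - window.min(),
        "time_to_peak_s": int(np.argmax(window)) / fs,
        "rms": np.sqrt(np.mean(window ** 2)),
        "slope": np.polyfit(time, window, 1)[0],                 # linear trend
        "auc": np.sum((window[1:] + window[:-1]) / 2.0) / fs,    # trapezoidal rule
    }

# Toy response that rises to a peak at 3 s and returns to baseline
feats = time_domain_features([0, 1, 2, 3, 2, 1, 0], fs=1.0)
```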

Frequency-domain features make it possible to observe several characteristics of the signals that are not evident in the time domain. In signal processing, frequency refers to the number of cycles completed by a signal per unit of time, i.e., frequency is a measure of the occurrence of events in a specified time period88. When the information of interest repeats over time, frequency-domain analysis can be used to isolate oscillatory information within and across the frequencies present in the time series signals89. It is worth noting that each type of sensor has distinctive characteristics in its frequency spectrum and, therefore, appropriate analysis requires identification and processing of that unique frequency spectrum. For instance, EEG analysis is often based on the frequency decomposition of distinctive bands (e.g., δ (0.3–4 Hz), θ (4–8 Hz), α (8–13 Hz), β (13–30 Hz), and γ (30–50 Hz)), which can be associated with specific functional characteristics. The most popular technique for frequency analysis is the Fourier Transform, which is a mathematical technique that transforms a function of time (e.g., x(t)) into a function of frequency (e.g., X(ω)), characterised by sines and cosines. Frequency-domain features include mean power in a specific frequency band, maximum power, and median frequency. In the reviewed literature, frequency-domain features were obtained from sEMG34,38,39, ECG33,38,39,46,49, EDA33,34,38,39, RESP34, PPG33,49,52, SKT33, EEG59,61,62,63,64, and fNIRS69,86.
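
As a simple example of a frequency-domain feature, the sketch below estimates the mean power in each of the EEG bands listed above from an FFT periodogram. The band edges follow the text; the sampling rate (128 Hz), the synthetic 10 Hz signal, and the function name `band_powers` are assumptions for illustration, and a Welch estimate would usually be preferred for noisy recordings.

```python
import numpy as np

EEG_BANDS = {
    "delta": (0.3, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
    "gamma": (30.0, 50.0),
}

def band_powers(signal, fs, bands=EEG_BANDS):
    """Mean spectral power per band from a one-sided FFT periodogram."""
    signal = np.asarray(signal, dtype=float)
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    power = np.abs(np.fft.rfft(signal)) ** 2 / signal.size
    return {
        name: power[(freqs >= lo) & (freqs < hi)].mean()
        for name, (lo, hi) in bands.items()
    }

fs = 128.0
t = np.arange(0, 4, 1 / fs)
alpha_wave = np.sin(2 * np.pi * 10 * t)   # synthetic 10 Hz oscillation
powers = band_powers(alpha_wave, fs)
dominant = max(powers, key=powers.get)    # band with the highest mean power
```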

Time-frequency features are useful in various forms of signal analysis. There are two main methods for time-frequency analysis: the short-time Fourier transform (STFT) and wavelets88. A clear property of time-frequency features is that they provide local information simultaneously in both the time and frequency domains90. Time-frequency features make it possible to observe at which frequencies the signal oscillates and at which time these oscillations occur91, which can be helpful to study the frequency content during specific time periods or events (e.g., onset of pain). In the literature, wavelet-based features were computed from ECG37,49, PPG49, sEMG37, EDA37, fNIRS36,66,69,86, and EEG84,92,93, while STFT-based features were used from ECG72,94.
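
The following sketch illustrates what the STFT adds over a plain spectrum: a synthetic signal changes frequency halfway through, and the dominant frequency is tracked per time segment. It uses `scipy.signal.stft` with a 1-s Hann window; the sampling rate, segment length, and test frequencies are assumptions chosen only for the example.

```python
import numpy as np
from scipy.signal import stft

fs = 100.0
t = np.arange(0, 10, 1 / fs)
# 5 Hz oscillation in the first half, 20 Hz in the second half
x = np.where(t < 5, np.sin(2 * np.pi * 5 * t), np.sin(2 * np.pi * 20 * t))

# 1-s segments give a 1 Hz frequency resolution
freqs, times, Z = stft(x, fs=fs, nperseg=100)
power = np.abs(Z) ** 2

# Dominant frequency in each time segment
dominant = freqs[np.argmax(power, axis=0)]
early = dominant[np.argmin(np.abs(times - 2.0))]   # segment centred near 2 s
late = dominant[np.argmin(np.abs(times - 8.0))]    # segment centred near 8 s
```

Shorter segments would localise the change more precisely in time at the cost of coarser frequency resolution, which is the trade-off that wavelet-based methods address differently.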

Other specialised features were also identified in the reviewed literature. Deep learning-based features are obtained by deep neural networks able to automatically learn complex mappings (features) from input data (e.g., sensor data) to output classes or labels for classification problems, or to numeric values for regression problems, in an end-to-end manner95. In ref. 83, autoencoder-based features were obtained by training an autoencoder neural network, which compresses the input EEG data into a lower-dimensional representation and then reconstructs the output from this representation. Similarly, in an fNIRS study85 and a multimodal (EDA, ECG, sEMG) study35, deep learning-based features were obtained from sensor data to automatically learn and extract relevant information in the pain data. Other specialised feature extraction techniques found in the literature are EDA-based features29,31,32,36. These features are based on the assumption that EDA signals have two salient attributes, the tonic (slow changing) and the phasic (fast changing) components; both components are widely used to assess sympathetic arousal.

Feature optimisation

In the context of this review, feature optimisation refers to the process of reducing the size of the input variables (i.e., number of features) by means of feature selection or dimensionality reduction. In both cases, the aim of feature optimisation is not only to decrease the computational cost by reducing the number of features, but also to improve the performance of the predictive models by removing the irrelevant features or noisy data.

Feature selection is the process of reducing the number of features by identifying and selecting those that have a strong relationship with the target variable (e.g., level of pain) and are believed to be the most informative for the design of predictive models. Feature selection methods can be organised into two main streams: model-based methods (wrapper and embedded methods) and model-independent methods (filter methods). Wrapper methods search the space of possible feature subsets using the evaluation (e.g., training and testing) of a specific learning model; thus, a search algorithm is “wrapped” around the model. Examples of wrapper methods in the literature are: recursive feature elimination (RFE)44,71, forward feature selection (FFS)39,43,49, backward feature elimination (BFE)43, and genetic algorithm (GA)40,56. Embedded methods look for an optimal subset of features during the model’s construction, i.e., the learning models have their own built-in feature selection methods; embedded methods found in the literature are: least absolute shrinkage and selection operator (LASSO)44, random forest (RF)44, and Elastic Net (Enet)44. Filter methods, on the other hand, evaluate the features independently of any classification model by assessing the intrinsic properties of the data according to certain criteria. Common objective criteria used by filter methods in the literature are: Gini Index42,46, Information Gain94, Joint Mutual Information61,69, Wilcoxon Test52, Analysis of Variance (ANOVA)52, and Partial Least Squares Regression (PLSR)72.
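
The three families of feature selection can be contrasted on a small synthetic example with scikit-learn. In the sketch below, only the first two of six hypothetical features carry information about a simulated pain rating; the data, the choice of ANOVA as the filter criterion, logistic regression as the wrapped model, and the LASSO penalty strength are all assumptions for illustration.

```python
import numpy as np
from sklearn.feature_selection import RFE, SelectKBest, f_classif
from sklearn.linear_model import Lasso, LogisticRegression

rng = np.random.default_rng(0)
X = rng.standard_normal((200, 6))                  # 6 hypothetical features
rating = 5 + 2 * X[:, 0] + 2 * X[:, 1] + 0.5 * rng.standard_normal(200)
label = (rating > 5).astype(int)                   # binary: low vs high pain

# Filter: rank features by the ANOVA F-statistic, independently of any model
filter_idx = SelectKBest(f_classif, k=2).fit(X, label).get_support(indices=True)

# Wrapper: recursive feature elimination around a logistic regression model
rfe = RFE(LogisticRegression(), n_features_to_select=2).fit(X, label)
wrapper_idx = np.flatnonzero(rfe.support_)

# Embedded: LASSO shrinks the coefficients of uninformative features towards zero
lasso = Lasso(alpha=0.1).fit(X, rating)
embedded_idx = np.flatnonzero(np.abs(lasso.coef_) > 1e-6)
```

To avoid optimistic performance estimates, selection of this kind is normally fitted on the training folds only.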

Dimensionality reduction, on the other hand, refers to the methods that project features (input data) into a lower-dimensional feature space, resulting in entirely new input features87. Dimensionality reduction methods identified in the literature were based on principal component analysis (PCA)40,45, which works on the idea of finding a number of principal components that explain a specified amount of the variance in the data. In the machine learning literature, PCA can also be considered a feature extraction technique, since the identified principal components, which capture most of the variance, are linear combinations of the original features and thus form a new set of features. One of the limitations of dimensionality reduction is that the obtained features are an abstract representation of the initial set of features, which often affects the explainability of the learning models.
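
A minimal PCA sketch with scikit-learn is shown below. Ten hypothetical features are generated from two underlying factors plus a small amount of noise, and PCA is asked to keep as many components as needed to explain 95% of the variance; the synthetic data and the 95% threshold are assumptions made for the example.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
latent = rng.standard_normal((300, 2))             # two underlying factors
mixing = rng.standard_normal((2, 10))
features = latent @ mixing + 0.05 * rng.standard_normal((300, 10))

# Standardise first so that no feature dominates because of its scale
scaled = StandardScaler().fit_transform(features)

# A float n_components keeps enough components to explain that variance share
pca = PCA(n_components=0.95)
components = pca.fit_transform(scaled)
explained = pca.explained_variance_ratio_.sum()
```

The rows of `pca.components_` give the weights of the original features in each component, which is the main handle available for interpreting the reduced representation.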

Learning models

Several studies have addressed the problem of automatic pain assessment using machine learning or deep learning methods. Traditional machine learning methods rely on manual feature extraction and feature optimisation to improve model performance. Deep learning methods, on the other hand, learn intrinsic representations of the data and extract features directly from it, without the need for hand-crafted features. Regardless of the method used for feature derivation, two types of learning problems were identified in the reviewed literature: classification and regression. In this section, we summarise the type of learning problem based on the learning models presented in Table 3.

Classification methods qualitatively predict the pain intensity by classifying trials into two (e.g., pain, no pain) or more levels (e.g., no pain, low, medium, high pain). This type of learning problem focuses on discrete nominal outputs; however, a numerical variable (e.g., numerical value between 1 and 10) can be converted to an ordinal variable by dividing the range of the numerical variable into bins (e.g., 1–2, 4–6, 8–10) and assigning values to each bin, a process commonly known as discretisation87. A popular machine learning model used in the literature is the support vector machine (SVM)43,94, which often solves learning problems by using kernel functions to map the input data into a higher-dimensional space in which the data can be separated (e.g., no pain or pain); kernel methods identified in the reviewed literature are Linear (L-SVM)45,53,59,61,72 and Gaussian (G-SVM)31,39,40,46,62,69,71,92, with G-SVM showing better results than L-SVM. Other popular classification methods in the literature are random forest (RF)29,34,37,38,42,49,84, logistic regression (LR)32,36,70,83, k-nearest neighbour (KNN)66,86,93, discriminant function analysis (DFA)33, sparse Bayesian extreme learning machine (SBELM)64, and artificial neural networks (ANN)41,56,63. In addition, deep learning models such as deep belief networks (DBN)52, convolutional neural networks (CNN)35,96, and bi-directional long-short term memory networks (Bi-LSTM)85 were implemented to decode pain from sensor data.
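
Discretisation followed by SVM classification can be sketched as below with scikit-learn. The bin edges (4 and 7), the synthetic features and ratings, and the helper name `discretise` are assumptions for illustration; the example compares a linear and a Gaussian (RBF) kernel under 5-fold cross-validation but is not intended to reproduce the relative performance reported in the reviewed studies.

```python
import numpy as np
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

def discretise(ratings, edges=(4, 7)):
    """Map 0-10 ratings to ordinal classes: 0 = low, 1 = medium, 2 = high."""
    return np.digitize(ratings, bins=edges)

rng = np.random.default_rng(0)
X = rng.standard_normal((150, 4))                  # 4 hypothetical features
ratings = np.clip(5 + 2.5 * X[:, 0] + 0.5 * rng.standard_normal(150), 0, 10)
y = discretise(ratings)

# Mean 5-fold cross-validated accuracy for each kernel
scores = {}
for kernel in ("linear", "rbf"):
    model = make_pipeline(StandardScaler(), SVC(kernel=kernel))
    scores[kernel] = cross_val_score(model, X, y, cv=5).mean()
```

With data from several participants, the folds would usually be grouped by subject so that no individual appears in both training and test sets.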

Regression methods, on the other hand, quantitatively predict the pain intensity as a continuous value (e.g., 0–10)72. In the reviewed literature, regression models are used to obtain a continuous pain intensity based on the numerical values from the verbal numeric rating scale, where the subject or patient grades their pain sensation on a scale between 0 and 1097. Identified regression models include classical machine learning models, such as support vector regression (SVR)43,72 and random forest (RF)37, and deep learning models, such as long-short term memory networks (LSTM)36. Another identified method is stacking, or the stacked model44, which combines two or more regression models with the aim of harnessing the advantages of the individual models and obtaining better performance than any single model in the ensemble87.
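
A stacked regression model of the kind described above can be assembled with scikit-learn's `StackingRegressor`. In the sketch below, SVR and random forest act as base models and a ridge regression combines their predictions; the choice of base and final estimators, the synthetic 0–10 ratings, and the train/test split are assumptions for illustration and do not reproduce the configuration of ref. 44.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor, StackingRegressor
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split
from sklearn.svm import SVR

rng = np.random.default_rng(0)
X = rng.standard_normal((200, 4))                  # 4 hypothetical features
y = np.clip(5 + 2 * X[:, 0] + X[:, 1] + 0.3 * rng.standard_normal(200), 0, 10)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Base models are combined by a ridge regression fitted on their
# cross-validated predictions
stack = StackingRegressor(
    estimators=[
        ("svr", SVR()),
        ("rf", RandomForestRegressor(n_estimators=50, random_state=0)),
    ],
    final_estimator=Ridge(),
)
stack.fit(X_train, y_train)
predicted = stack.predict(X_test)
mae = mean_absolute_error(y_test, predicted)       # error in rating-scale units
```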

Practical implications

In order to understand the practical implications of each sensor, it is important to understand their advantages and disadvantages with respect to their use. In this section, we present a summary of the identified implications from the reviewed literature. The aim is not only to understand the main limitations, restrictions, and barriers but also, to identify the benefits and possible solutions to the application of these sensors in more realistic scenarios (e.g., clinical settings). Table 4 presents a summary of the limitations and advantages for each individual sensor.

The use of EDA sensors is well-established in clinical practice and research. Applications using EDA include psycho-physiology, physical and cognitive stress, sleepiness, and affective computing33. EDA signals are modulated by autonomic changes in the sympathetic nervous system31,42,45, which not only drives elements of pain, but also drives elements of human behaviour, cognitive states, and emotion31,33. For instance, there is a well-known association between EDA and emotional arousal, as the electrodermal activity changes in response to the emotional state (e.g., stressed, happy, sad). However, this high sensitivity to sympathetic function makes the discrimination between pain and emotional states difficult to accomplish31; this is a possible cause of false positives in pain detection29. EDA has exhibited promising results when used in isolation29,31,32,42,45 and has shown better results when compared with other sensors, such as sEMG and ECG35,36, and RESP, BVP, and EGM98.

sEMG sensors are used to measure the electrical activity of muscle contractions propagated through the skin. Two main anatomical regions were used: the trapezius muscle (upper back of the torso)35,37,38, and the facial muscles (corrugator supercilii, zygomaticus major, risorius, orbicularis oculi, levator labii superioris)39,41. A clear disadvantage in the use of sEMG sensors to measure pain is that changes in muscle tone are highly associated with different affective states (e.g., stress, happiness, or anxiety)35,39. An advantage of using facial sEMG is that facial expressions serve as a direct means to communicate pain to other people, since an individual’s affective state can be obtained by observing the face37. Pain assessment by means of facial expressions implies continuous tracking of a patient’s face, which can be difficult and cumbersome in clinical settings using cameras37. A possible solution is to use facial sEMG, as it is more sensitive to muscle activation than camera-based monitoring99.

SKT sensors have been widely used in clinical assessment to measure the temperature of the human body. In pathological conditions (e.g., locomotor, vascular, or malignant diseases), skin temperature serves as valuable diagnostic information and as an indicator of well-being43,100. A clear advantage of SKT sensors to measure pain is that they can be easily integrated into wireless wearable devices with other sensors, which allows continuous monitoring53. Features from SKT data have shown higher feature importance than features from other vital signs (BP, SpO2, Pulse, and RESP)53. The main disadvantage of SKT sensors is that skin temperature is markedly affected by environmental temperature and air movement33,100. In addition, skin temperature has shown a rapid change due to emotional stress, which can confound data interpretation33.

RESP sensors are commonly used in clinical settings to monitor a patient’s respiration for intervention or diagnosis. In the reviewed literature, respiration data were obtained using chest straps34,41. An advantage in the use of RESP sensors to monitor pain is that multiple sensors can be integrated with the chest strap to simultaneously monitor different physiological signals (e.g., ECG, SKT)41. Data obtained from continuous respiration can also provide evidence on a patient’s respiratory health and recovery53. In addition, increased respiratory response has been observed in cutaneous pain stimuli, even under anaesthesia, which allows pain recognition while patients are under surgery34. On the other hand, a clear disadvantage in the use of chest straps is that they are prone to slippage (leading to inaccuracies), can be cumbersome to wear for long periods of time (leading to patient discomfort), and can also be obstructive to other sensors and to clinicians41,101. Finally, this type of sensor has not been used in a unimodal approach, and it has shown a low effect compared with other metrics (e.g., temperature, BP, SpO2) when estimating pain in a multimodal approach53.

fNIRS systems provide a method for non-invasive monitoring of brain dynamics. These systems are used in different clinical settings as a neuroimaging technique in the field of neuroscience. The use of several sensors affords monitoring of different cortical areas simultaneously. fNIRS is safe for brain monitoring over prolonged time intervals66. fNIRS offers higher spatial resolution than EEG, which allows better accuracy in identifying specific cortical areas responding to changes in pain71,86,102. Its superior spatial resolution has the potential to identify the anatomical area where pain originates, based on the cortical activity66. However, the use of a large number of channels is not practical for clinical applications, since some cortical areas (e.g., occipital, temporal, or parietal) are not accessible for continuous monitoring while the patient is in a supine position71. In these cases, a possible solution is to focus on the frontal area, with the additional advantage of less hair contamination70. Other factors to consider when using fNIRS systems are that fNIRS data are affected by cardiac pulse and respiration signals69,86, and that the haemodynamic activity measured by fNIRS presents a temporal delay from the onset of the neural activity69,71,85.

EEG is also considered a brain imaging technique that allows non-invasive monitoring of neural activity. EEG systems measure the electrical activity of the brain. EEG has been widely used in the medical field in the diagnosis of epilepsy and sleep disorders61,72. EEG has higher temporal resolution than fNIRS, which affords a faster monitoring response (millisecond-scale)59,83,84. Using a large number of EEG electrodes (e.g., 64 or 128 electrodes) permits measuring the entire scalp62,103. A clear disadvantage of these systems is the need for conductive EEG gel or saline solutions to increase the conductivity between the electrodes and the surface of the scalp, which is generally time consuming59. Wearing an EEG head cap for long periods of time tends to be cumbersome and uncomfortable92. In addition, EEG is highly affected by blinks and eye movements, which require different cleaning procedures64,94.

Pupillary response has been used extensively in human-computer interaction, attention monitoring, driver drowsiness, and cognitive workload56. Measures of pupil response can be obtained with cameras embedded in eyeglasses (e.g., Tobii Glasses). Thus, there is no invasive contact between the sensors and the skin, which has the potential to be less uncomfortable for the patients56. In addition, eyeglasses allow high mobility and non-obstructive application, as compared to external cameras56. However, an evident disadvantage is that patients must have their eyes open, which limits their use in unconscious patients or while sleeping. In addition, data may be unavailable for long periods of time, or may not be completely continuous, due to blinking.

ECG sensors measure the electrical activity of the heart on the skin surface. ECG has been widely used in the medical field to measure the rate (e.g., HR) and regularity of heartbeats (e.g., HRV), as well as the presence of any damage to the heart, and the effects of drugs or devices used to regulate the heart, such as a pacemaker33,39,40. In addition, ECG data can be used to monitor cardiovascular activity and overall well-being40. ECG shows a strong response to sympathetic and parasympathetic activity33,46. However, the ECG physiological response can vary due to positive or negative emotions such as surprise, fear, or stress33,34,40,49. Another limitation is that ECG signals have shown high intra- and inter-subject variability in pain responses, which may limit their usability36,40,46. Also, ECG signals have shown lower classification accuracy than BVP and EDA signals40, and than EDA and sEMG signals35,36,37. Due to the number of leads used for ECG electrodes, ECG measures tend to be more obstructive and less convenient to embed into wearable devices37,40,49.

PPG is an optical method to measure variations in blood circulation. PPG offers multiple physiological indicators from both cardiac variations in blood volume (e.g., BVP) that arise from heartbeats, and from respiration and thermoregulation104. PPG signals offer an insight into the activity of the sympathetic nervous system40. PPG sensors can be easily integrated into a smart wristband with other physiological sensors33,43,53. PPG sensors can be placed anywhere on the body, with the finger as the most common location in the reviewed literature40,104. However, PPG signals obtained from a finger clip are susceptible to motion artefacts, e.g., hand movements, getting in and out of the bed or chair, or use of the restroom43. Although BVP-based features have shown better classification accuracies than those of ECG40, HR-based features were found to be less important in pain assessment than EDA-based features44. A clear confounding factor is that PPG data can vary in the absence of pain when a patient is at rest, based on factors including stress, excitement, and breathing33,43.

Body movement (MOVE) can also serve as an important indicator in automatic pain estimation using sensors. An advantage of movement sensors (e.g., accelerometers, gyroscopes) is that they can be easily integrated with other physiological sensors into wearable devices for continuous monitoring43,44. In addition, body movement data can also be used to monitor well-being and identify early signs of health conditions related to sedentarism and lack of exercise in patients. Body movement measures such as number of steps and accelerometer information have shown a negative correlation with pain scores; this might reflect the fact that patients in more pain move less frequently44. However, lack of body movement can result from other factors such as sedation, rest, or sleep44.

Discussion

After a thorough survey of the literature, the challenges and future opportunities in the use of neurophysiological sensors for the assessment of acute pain are discussed in this section. Significant research is currently being done in the fields of sensor design, signal processing, time series analytics, and machine learning and deep learning. However, these efforts alone are not enough to solve a complex problem such as pain assessment. Thus, we should consider the challenges and limitations of the current state of the art methods and identify possible opportunities, which can make possible a smooth integration of the available methods and techniques for practical real-time applications. In this context, we highlight several challenges in the assessment of acute pain, and propose opportunities that can help us move closer towards the development of a bedside real-time monitor of pain. With that in mind, we present, in the following subsections, the challenges and opportunities with respect to each research question.

Sensors to measure physiological changes in pain

Assessing pain based on a single sensor modality has major limitations. Sensor reliability is an important factor to consider when using a single sensor, as sensor failure not only reduces the quality of the data, but also causes loss of the physiological signal being measured105. In the medical field, data reliability is imperative to assess the clinical situation of the patient, and failing to provide reliable data might affect patient care and lead to patient deterioration106. For instance, the deployment of a pain monitoring system based on pupillometry alone will impact the reliability of the system, since the patient will be left unchecked while sleeping. Sensor uncertainty is another element to consider: interference (e.g., electrical noise) or confounding variables can negatively influence the physiological data and make it more susceptible to errors. For example, in the use of fNIRS sensors to measure the haemodynamic response, the fNIRS signals are often contaminated by superficial tissue (scalp and skull) and by other physiological indicators such as respiration or heartbeat68,107 that interfere with the expected signal from the cerebral cortex. In these cases, the use of a single sensor modality will produce noisy data, since all fNIRS sensors will be affected by the same source of noise. In addition, sensor sensitivity to a specific autonomic function (e.g., respiration, heart rate, pupil size) is another factor to consider. The use of a single sensor modality to monitor a single physiological parameter will limit the understanding of pain. Pain evokes multiple simultaneous neurophysiological signals that can offer a better comprehension of pain39,85. Although using multiple sensor modalities will help solve some of the limitations of a single sensor modality, their use must be balanced against the ease of setup and application in a clinical setting.

The use of multimodal sensors for the assessment of pain presents several opportunities. Sensor complementarity is an important property of multimodal systems in which each sensor modality contributes to the whole system with specific information that cannot be obtained from any other single modality in the setup108. In the event of pain, multiple neurophysiological signals are triggered, and each sensor modality can capture a different dimension of pain, which allows the system to obtain a more complete assessment. For instance, fNIRS and EEG can complement each other: EEG has higher temporal resolution but lower spatial specificity, while fNIRS presents better spatial resolution yet lacks time precision due to its delayed haemodynamic response. Another advantage of multimodal systems is improved observability. Including multiple sensor modalities improves the diversity of data available to monitor the physical measure. For example, combining measures (e.g., pupil, EDA, and fNIRS) that are not related to the same aspect of pain can enhance the accuracy of the system and provide better insights into the physical problem, which might not be possible with a single sensor modality81. Sensor robustness is another benefit of multimodal systems. Since a single sensor modality might react to a particular confounding factor, the overall reliability of a unimodal system might be affected. With multiple sensor modalities, however, sensor data can be validated. For instance, if a patient normally suffers from high blood pressure (HBP), the use of a pulse-based sensor (e.g., PPG) might have a negative effect on the collected data. If other sensor modalities (e.g., EEG and EDA) are used in conjunction with PPG, the collected data will be more reliable, as the other two sensing technologies can be used to validate the effect of HBP on the affected sensor.
Finally, multimodality is not a new concept and has been naturally performed by animals and humans to assess different situations in the environment. For example, animals use a combination of multiple senses (e.g., vision, smell, hearing) to avoid risks or threats and improve their chances of survival109. Therefore, with the use of multiple modalities (i.e., different dimensions) to monitor the different physiological changes in pain, medical practitioners can obtain a better understanding of the pain experienced by the patients.

Obtaining quality sensor data plays a vital role in providing correct decision-making outcomes. In general, the better the quality of the sensors and the more reliable the data, the more valuable the data are. In health monitoring applications, good-quality data is imperative, as it not only helps patients receive better care but also makes for better research and analysis110. Three main categories of sensor quality can be observed in the literature: consumer-grade sensors, research-grade sensors, and medical-grade sensors. Although consumer-grade sensors have gained popularity in healthcare applications due to their lower cost, their data quality can be insufficient for such applications. For instance, the makers of some fitness trackers (e.g., HRM-Tri by Garmin, FitBit PurePulse, Microsoft Band) explicitly acknowledge that their devices are not for medical use and should not be relied upon for detecting health conditions, including pain111. Another limitation of consumer-grade sensors is the lack of raw data availability and the inclusion of proprietary steps in their data analyses112. Research-grade sensors represent an option to obtain relatively good-quality data with the flexibility to access the raw data, which allows researchers to develop and test their own custom algorithms. This also allows algorithms to be easily implemented across other similar devices and makes the estimation of the outcome variable transparent. On the other hand, medical-grade sensors can produce high-quality sensor data with higher measurement accuracy and higher sensitivity, and they are more stable and robust110. A limitation of such high-quality sensors is their relatively high cost, as deploying many highly accurate but expensive sensors will incur higher deployment costs. However, medical-grade sensors require less time for maintenance and calibration, which might lead to reduced overall operational costs in the long run.
Finally, it is important to consider the quality of sensing systems in healthcare, as decision-making outcomes, better diagnosis, and improved patient care depend on having accurate and reliable information.

Analytical techniques for decoding pain

Most of the reviewed studies used classical machine learning (ML) models, which rely heavily on feature engineering. Although ML models such as SVM, RF, and LR exhibited the best performance in twenty-nine of the reviewed studies, the success of these models mostly depends on the feature engineering process. A clear challenge in feature engineering is the need for domain knowledge to create relevant features. For example, knowledge of the typical physiological response exhibited by patients in pain can help identify metrics that are more relevant and valuable for the problem at hand. However, this approach can be highly subjective and biased by the person's creativity or expertise, which might result in potentially useful features being missed113. During the feature engineering process, a large number of features is often generated (e.g., by applying mathematical or statistical functions to the same sensor data), which can make the ML model suffer from the curse of dimensionality. This can produce highly noisy features and correlated features (i.e., collinearity) that bring no significant benefit. As a result, the learning problem becomes more complex, the ML model's performance decreases, and the computational cost increases114. In the reviewed literature, dimensionality reduction or feature selection techniques were implemented as a feature optimisation stage to remove irrelevant or correlated features; however, this process is often computationally expensive. The feature engineering process is also time consuming and involves multiple steps: designing the features, testing their efficiency with the model, modifying some of the features or trying others, and repeating the process until the model exhibits an acceptable performance. Overall, the feature engineering process should not be taken lightly, as it plays a major role in determining the outcome of an ML model.
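As an illustration of the feature optimisation stage described above, the following minimal Python sketch computes a few hand-crafted statistical features from a window of sensor data and then greedily drops features that are highly correlated with one already kept. It is not taken from any of the reviewed studies: the function names (`extract_features`, `pearson`, `drop_correlated`), the chosen statistics, and the 0.95 correlation threshold are all assumptions made for this example.

```python
import math
import statistics


def extract_features(window):
    """Hand-crafted statistical features from one window of a 1-D signal.

    The feature set is illustrative only; real studies choose features
    from domain knowledge of the sensor and of the pain response.
    """
    values = list(window)
    return {
        "mean": statistics.fmean(values),
        "std": statistics.pstdev(values),
        "min": min(values),
        "max": max(values),
        "range": max(values) - min(values),
        "rms": math.sqrt(statistics.fmean([v * v for v in values])),
    }


def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if constant)."""
    mean_a, mean_b = statistics.fmean(a), statistics.fmean(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)


def drop_correlated(feature_rows, threshold=0.95):
    """Greedy filter: keep a feature only if its absolute correlation with
    every feature kept so far is below `threshold` (assumed value).

    `feature_rows` is a list of dicts, one per window, sharing the same keys.
    Returns the names of the retained features in their original order.
    """
    names = list(feature_rows[0])
    columns = {name: [row[name] for row in feature_rows] for name in names}
    kept = []
    for name in names:
        if all(abs(pearson(columns[name], columns[k])) < threshold for k in kept):
            kept.append(name)
    return kept
```

Calling `drop_correlated([extract_features(w) for w in windows])` on a list of signal windows returns the retained feature names. Because every candidate is compared with every retained feature, the cost grows roughly quadratically with the number of features, which is one reason this stage can become expensive.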

An alternative to the feature engineering problem is deep learning (DL). A common application of DL models is to automatically create candidate features directly from data. Automated feature engineering extends the concept of domain knowledge, as DL leverages multiple hidden layers to explore different connections and extract the best features to solve the learning problem. The multi-layer architectures used in DL are inspired by the processes that take place in core sensory regions of the human brain, in which the multi-layer data representation extracts low-level features in the first layers and high-level features in the last layers115. The main advantage of DL compared to classical ML models is that it automatically finds significant features without the need for feature engineering or human domain expertise. This approach has proven successful in different complex tasks, and DL models have outperformed well-known ML techniques in several domains, including natural language processing, computer vision, bioinformatics, and speech and audio processing, among others116. However, a key challenge in the success of DL models is the need for large amounts of training data: as the amount of data increases, a well-performing model can be obtained115. This may in part explain why most ML models outperformed DL models on the same dataset35,39 or with the same sensor modality117,118. In many cases, large datasets (in particular, labelled datasets) may be too difficult or costly to collect for many learning problems. A possible solution to overcome the need for large datasets is to reuse previously trained DL models, i.e., transfer learning119. Transfer learning aims at transferring knowledge across different but related domains. This approach has shown that DL models already trained on a specific dataset and built to solve a specific task can be reused as the initial phase for training on a different dataset for a different task120.
Although DL has achieved accuracies far beyond those of classical ML models in other domains, the need for large amounts of training data should be considered in DL applications for pain assessment.

Although there are some publicly available datasets for the assessment of pain, there is a need for datasets that combine neurological and physiological signals. Available datasets from adults include videos of the face121,122,123; physiological signals (EDA, sEMG, ECG) and videos of the face124; and physiological signals (EDA, sEMG, ECG), audio signals, video of the body and face, and thermal video of the face125. There are also datasets from infants, including vital signs (SpO2, HR, RESP, BP) with videos of the face126, and cry signals alone127. However, these datasets do not explore neurological and physiological sensing technologies together. In the reviewed literature, a dataset containing EEG and EDA data128 was identified through the work of Sun et al.129, but only the EEG data was analysed. The lack of such datasets is also reflected in the limited number of studies identified in this review that explored the combination of neurological and physiological signals. The combination of neurological and physiological information has been widely explored in other areas of research, including cognitive workload105,130, neuroergonomics131,132, and learning and training26,133. In addition, recent studies in multimodal data fusion have found that the combination of multiple neurological sensing technologies, such as EEG and fNIRS, exhibited significantly better results in cognitive workload assessment tasks134. The combination of EEG and fNIRS offers a possible stream in pain research that has not been explored before. Therefore, the inclusion of neurological data in conjunction with physiological and behavioural data has the potential to improve the overall accuracy of assessment systems and to provide a more complete understanding of pain that would otherwise be unavailable from neurological, physiological, or behavioural data alone.

There is a need for the adoption of context-aware systems that can use additional information (contextual information) to improve the performance of objective tools for pain assessment. In computing, contextual information can be defined as a set of real-world parameters or information that can be used to characterise the situation of an agent (e.g., person, place, or physical or computational object)135. In this case, context could be, for example, the patient's health records, socioeconomic information, the patient's disease, genetic or familial variables, or situational or emotional factors that can help (in addition to the neural and physiological information) to better understand the patient's pain sensation. In the reviewed literature, there are some studies43,44,53 in which physiological data, health records, and medication information were used in the assessment. In the work of Fang et al.136, congenering information was employed to cluster patients based on their type of disease (e.g., pulmonary, renal, cardiovascular) and a dedicated model was trained for each category; this method exhibited better results than training a single model with all patients combined. In another study, Kachele et al.37 designed a personalised model that focuses on each individual rather than the whole group, which also showed improved classification accuracy. Overall, the use of contextual information can help improve the perception of patients' pain and can serve as the basis of well-informed decisions, not only by medical practitioners but also by autonomous systems that trigger actions under particular circumstances.
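The idea of training a dedicated model per patient category can be sketched as follows. This toy Python example is not the method of Fang et al.; it only illustrates the general pattern of grouping records by a contextual variable and fitting one model per group. The "model" is deliberately trivial (a threshold halfway between the class means of a single feature), and the function names and record layout are assumptions made for illustration. Each group is assumed to contain at least one pain and one no-pain sample.

```python
from collections import defaultdict
from statistics import fmean


def fit_threshold(samples):
    """Fit a one-feature classifier from (value, label) pairs, label in {0, 1}.

    The decision threshold is the midpoint between the mean value of the
    pain class (1) and the mean value of the no-pain class (0).
    """
    pain = [x for x, y in samples if y == 1]
    no_pain = [x for x, y in samples if y == 0]
    return (fmean(pain) + fmean(no_pain)) / 2


def fit_per_group(records):
    """Fit one threshold per context group.

    `records` is a list of (group, value, label) tuples, where `group` is a
    contextual category such as a disease type.
    """
    by_group = defaultdict(list)
    for group, value, label in records:
        by_group[group].append((value, label))
    return {group: fit_threshold(samples) for group, samples in by_group.items()}


def predict(thresholds, group, value):
    """Predict pain (1) or no pain (0) using the threshold of the given group."""
    return int(value > thresholds[group])
```

With synthetic records in which one group has a systematically higher baseline for the feature than another, a single threshold fitted on all records falls between the two groups' ranges and misclassifies samples from both, whereas the per-group thresholds separate the classes within each group.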

With the use of data from multiple sensors and rich contextual information, intelligent fusion architectures will be needed. The fusion architecture should be able to fuse data from different sensors as well as the additional contextual information. One advantage of the fusion architecture is conflict resolution, considering that the use of multiple input variables often leads to contradictions and inconsistencies in the data109. For example, if increased levels of HR and BP are observed, which may indicate an episode of pain, but no changes (e.g., increase or decrease) are detected in other parameters (e.g., PUPIL, EDA, or EEG), this event may create a conflict (e.g., a false positive) in the pain monitoring system. However, contextual information (e.g., health records) could indicate that the patient suffers from hypertension, in which case the intelligent fusion architecture can resolve the conflict by using the contextual data. Another advantage of the intelligent fusion architecture is the weighting of information, since not all sensor data convey the same level of reliability, and in the event of pain, some sensors may provide more valuable information than others in certain circumstances108. For instance, if the patient is asleep and in pain, some metrics (e.g., pupillometry or movement) will not provide reliable information, whereas other measures (e.g., EDA, PPG, or RESP) will be more important and relevant to provide evidence of pain. Therefore, the fusion architecture will be able to apply individual weights with different divergence measures based on their relative importance depending on the time of day137. Ultimately, the intelligent fusion architecture will be particularly useful in clinical settings where real-time decision making is imperative to deliver decision support to clinicians in the shortest amount of time.
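The two properties discussed above, context-based conflict resolution and reliability weighting, can be illustrated with a minimal Python sketch. It assumes that each sensor modality has already been converted into a pain score between 0 and 1 and that the context is a simple dictionary of flags. The function name, the sensor keys, the context flags, and the 0.25 down-weighting factor are hypothetical choices for this example and do not come from the reviewed literature.

```python
def fuse_pain_scores(scores, base_weights, context):
    """Context-aware weighted average of per-sensor pain scores.

    scores:       dict mapping sensor name -> pain score in [0, 1]
    base_weights: dict mapping sensor name -> baseline reliability weight
    context:      dict of contextual flags, e.g. {"asleep": True}

    Returns the fused score, or None if no sensor carries any weight.
    """
    weights = dict(base_weights)

    # Weighting: while the patient sleeps, pupillometry and movement are
    # treated as uninformative and removed from the fusion.
    if context.get("asleep"):
        for name in ("pupil", "movement"):
            if name in weights:
                weights[name] = 0.0

    # Conflict resolution: for a patient known to have hypertension,
    # elevated HR/BP is weaker evidence of pain, so these channels are
    # down-weighted (0.25 is an arbitrary illustrative factor).
    if context.get("hypertension"):
        for name in ("hr", "bp"):
            if name in weights:
                weights[name] *= 0.25

    total = sum(weights.get(name, 0.0) for name in scores)
    if total == 0:
        return None
    return sum(score * weights.get(name, 0.0) for name, score in scores.items()) / total
```

For example, with high HR and BP scores but low EDA and pupil scores, passing `{"hypertension": True}` as context lowers the fused score relative to an empty context, which mirrors the false-positive scenario described in the text. A practical architecture would learn such weights from data rather than hard-code them.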

Practical implications on the use of sensors