Abstract

The use of remote measurement technologies (RMTs) across mobile health (mHealth) studies is becoming increasingly popular, given their potential for providing rich data on symptom change and indicators of future state in recurrent conditions such as major depressive disorder (MDD). Understanding recruitment into RMT research is fundamental for improving historically small sample sizes, reducing loss of statistical power, and ultimately producing results worthy of clinical implementation. There is a need for the standardisation of best practices for successful recruitment into RMT research. The current paper reviews lessons learned from recruitment into the Remote Assessment of Disease and Relapse-Major Depressive Disorder (RADAR-MDD) study, a large-scale, multi-site prospective cohort study using RMT to explore the clinical course of people with depression across the UK, the Netherlands, and Spain. More specifically, the paper reflects on key experiences from the UK site and consolidates these into four key recruitment strategies, alongside a review of barriers to recruitment. Finally, the strategies and barriers outlined are combined into a model of lessons learned. This work provides a foundation for future RMT study design, recruitment and evaluation.

Introduction

The use of mobile technology in healthcare (mobile health; mHealth) has the potential to revolutionise both clinical practice and research1. Novel remote measurement technologies (RMTs), for example, smartphone applications, sensors and wearable technologies, are a subsection of mHealth, and can enable frequent, longitudinal and personalised health monitoring2. Currently, the assessment, and subsequent treatment, of many chronic health conditions is limited to retrospective recall during routine clinic visits, which can be biased by cognitive and memory heuristics3, and social desirability bias4. RMT data offers the potential for high-frequency symptom monitoring, which is more reflective of an individual’s daily experience5.

RMT data hold particular relevance for conditions of a recurrent nature. Major depressive disorder (MDD) is a mental disorder characterised by persistent low mood or anhedonia, often fluctuating between periods of remission and recurrence. The prevalence of MDD worldwide increased 18.4% from 2005 to 20156. The economic burden of MDD is currently estimated at $326 billion (2020 values), exacerbated further by an increased risk of comorbidities and healthcare resource utilisation in those with high relapse and recurrence rates7,8. Data obtained through a combination of smartphone questionnaires (active RMT; aRMT) and inbuilt sensors (passive RMT; pRMT) from phones and wearables can provide rich, multi-parametric information on symptom change, risk factors, cognition, sleep and diurnal patterns of behaviour, sociability and physical activity2. Crucially, the integration of RMTs into MDD research could provide the temporal resolution needed to detect indicators of future depressive episodes9.

For the potential of RMTs to be achieved, it is important to understand whether and how people engage with research studies of this nature. Engagement is a multi-stage construct indicating the extent to which a resource is actively used10. Examining engagement with the research protocol in RMT studies, for example via recruitment rates, provides a necessary first step11. Whilst findings suggest that people with MDD endorse the view that RMTs could be used to detect and predict relapses12,13, work has highlighted potential barriers to recruitment into such research. These include user-related factors, such as personal agency and privacy concerns, as well as system-related factors, including perceived ease of use and convenience12,14. In practice, RMT studies currently present small sample sizes15; a recent systematic review of cross-sectional, case-control, cohort and RCT studies found the median sample size of the 51 identified studies to be N = 58 (ranging from N = 6 to N = 1714)16. Recruitment rates into mental health trials range between 0 and 75%17. Reduced help-seeking and motivational behaviours are often cited as reasons for low engagement with research on depression18,19. Low recruitment rates present several limitations, including an increased risk of selection bias, a loss of statistical power and reduced generalisability, hindering the ability to produce valid results worthy of implementation into clinical practice20. Despite calls for adherence to Strengthening the Reporting of Observational Studies in Epidemiology (STROBE21) guidelines, recruitment strategies and non-participation rates are not reported in the majority of RMT studies16. Thus, little is currently known about the ways in which recruitment can be maximised in research of this nature.

The need to understand recruitment into remote measurement studies has grown markedly since the onset of the COVID-19 pandemic. Isolation and social distancing have forced both clinical research and practice to halt face-to-face appointments. Healthcare services are rapidly seeking innovative ways to monitor health remotely, not least for contact tracing of COVID-19 symptoms, but also for continued measurement of pre-existing conditions outside of the clinic22. Recruitment into studies of smartphone apps designed for tracking COVID-19 symptoms shows initial promise, reflecting a national effort to combat the pandemic23,24. A fundamental challenge in healthcare will be coordinating a sustained response beyond these times, during which adoption of, and engagement with, remote (mental) health tracking has been noted as of great importance25,26,27.

Currently, a framework of best practice for recruiting into RMT studies does not exist. In the wider mHealth literature, an emerging trend for papers sharing recruitment experiences has encouraged standardisation and evaluation in the field28, spanning common mental health conditions29, cardiovascular health30 and risky health behaviours28. However, RMTs fundamentally differ from mHealth interventions in that they require sustained engagement with observational data collection over long periods, crucially offering no tangible or immediate benefits to the user. Druce et al.31 reviewed successful recruitment into two aRMT apps for symptom tracking in rheumatoid arthritis between 30 days and 12 months. However, there is no clear consensus as to whether these considerations can be applied to multi-parametric studies that track depression for a longer time period, given the need to offset extra participant burden or address additional privacy concerns. Efforts to translate this body of work into the field of multi-parametric RMT research could provide the necessary first steps in understanding how best to recruit into such studies, and in doing so, produce high-quality results across academia and beyond.

The RADAR-MDD study

The Remote Assessment of Disease and Relapse-Major Depressive Disorder (RADAR-MDD) study is the largest multi-parametric, multi-site, longitudinal prospective cohort study to date (as part of the wider RADAR-Central Nervous System (CNS) public-private partnership study: https://www.radar-cns.org/). The study utilised the RADAR-base system32 to collect a wealth of data from aRMT (a smartphone app delivering validated mood questionnaires, cognitive games, speech tasks and an electronic diary) and pRMT (a wearable fitness device measuring ambient noise and light, Bluetooth connections, GPS locations) sources to predict depressive relapse (the primary outcome measure). Further information is available in the protocol paper33. Eligible participants met with the research team for a single enrolment session, before being followed up remotely for a minimum of 6 months and a maximum of 3 years. The study obtained ethical approval from the Camberwell St Giles Research Ethics Committee (REC reference: 17/LO/1154) in London, from the CEIC Fundacio Sant Joan de Deu (CI: PIC-128–17) in Barcelona, and from the Medische Ethische Toetsingscommissie VUmc (METc VUmc registratienummer: 2018.012 – NL63557.029.17) in the Netherlands. All participants provided written informed consent.

RADAR-MDD aimed to recruit 600 participants across the UK, Spain, and the Netherlands. Recruitment began in November 2017 at the London, UK site, acting as a year-long pilot for the recruitment and enrolment process before the remaining two sites joined in September 2018 and February 2019 respectively. By end of follow-up in June 2020, RADAR-MDD had exceeded its overall recruitment target (N = 623), and in the London site alone had recruited 175% (N = 350) of its target sample, making it the largest longitudinal observational cohort study of RMTs to date2,16. It was also able to sustain a steady level of recruitment throughout the COVID-19 pandemic. Thus, the study provides an opportunity to increase the transparency of reporting on recruitment and common barriers for non-participation in this field, as a multi-parametric RMT study with an extensive follow-up period.

The current paper aims to reflect on the experiences of recruiting into the RADAR-MDD project’s UK site. Here the UK site was chosen as many of these experiences were collated as part of the year-long pilot study for the recruitment and enrolment procedures; learnings that were then implemented at the other sites when these joined. The current findings were collected over the entirety of the study in discussions with researchers across all three sites, the patient advisory board, direct participant feedback during the procedures, and informal focus groups with other key stakeholders.

More specifically, this paper will present key reflections on successful recruitment strategies. It will then explore some main obstacles to recruitment, before merging findings into a model to reflect our main lessons learned. This work provides a starting point for the evaluation of recruitment pathways in RMT research, alongside suggestions for future work in the public or private sector to build upon.

Reflections on recruitment strategies

The recruitment strategies that we reflect on from RADAR-MDD are split into four main areas of consideration. These key areas are: (1) the need for co-design with service-user involvement, (2) a recruitment team fulfilling key competencies, (3) minimising participant burden via streamlined procedures and (4) making enrolment accessible for all while being open to iterative change.

Co-design with service-user involvement

Co-design with service users underpinned every aspect of the RADAR-MDD study design. User involvement was essential to ensure usability and interest in the technology and was especially relevant for studies that struggle with low uptake and adherence17,34. Users can reference personal lived experience when evaluating a system, and can provide valuable feedback on the design, enrolment procedures, communication materials, and implementation into clinical practice35,36.

RADAR-MDD included a work-package dedicated to Patient and Public Involvement (PPI). A series of studies from this work-package has been published, including systematic reviews and focus groups with people with lived experience of MDD across three countries, exploring the use of RMTs to manage depression12,15. This work-package also facilitated a Patient Advisory Board (PAB33). The PAB had input from the initial design onwards, with service users participating in wearable device selection (which culminated in the choice of the Fitbit Charge device for data collection13), reviewing participant-facing documents and testing app usability. In addition to the PAB, the UK site also consulted two local service user advisory groups at King’s College London regarding payment plans and feedback data. Service user involvement allowed procedures and materials to be tailored so that they were as acceptable as possible to the target population, which in turn minimised the burden of an observational longitudinal study and incentivised recruitment.

This person-based approach has been widely recommended in interventional designs34; we suggest that it is equally important in observational research. In utilising the expertise of the PAB, the RADAR-MDD study was designed for, and by, individuals with similar lived experiences to those being recruited. This created a smooth transition from protocol design to recruitment.

The recruitment team

Through consultation with the PAB, and previous literature that cites human contact as a predictor of retention in longitudinal studies20,37, it became clear that a small participant-facing research team was essential. For the RADAR-MDD London site, this consisted of three full-time research assistants, who provided technological support and clinical risk assessments to participants. Recruiting the right team with the necessary competencies, and providing training relevant to RMT study design, was essential.

Firstly, when hiring the patient-facing team, it was critical to consider the key competencies needed for an RMT study which features complexity in the study design, the technology used, and the likely support needed. In a systematic review of longitudinal clinical studies, eligible research team staff were screened for: specialist experience working with the target population, cultural competencies, communication skills, empathy and sensitivity37. For RMT studies specifically, we learned that patience and awareness of potential technological barriers are integral, as much of the contact with participants during recruitment involves communicating with individuals of varying technological abilities. The RADAR-MDD study London site screened for these competencies in behavioural interviews, consisting of a mock recruitment session with a colleague outside of the research team. The applicant was provided with little information about the study in advance and asked to respond to questions from a mock participant. The aim of this activity was to assess situational judgement, and the ability to build social rapport, field participant questions, and create an atmosphere conducive to requesting further support if needed. This was based on work by Salado et al.38 indicating that conventional and behavioural interviews assess different constructs, with the latter being more suited for this role.

During the recruitment period, it became clear that the team could benefit from additional staff to accommodate participant interest. To this end, a mental health nurse, based at the local NHS Foundation, was seconded to the team on a research placement for 12 months part-time. The benefits of this were three-fold: (1) increased capacity for recruitment calls and enrolment sessions; (2) provision of expert clinical advice for risk assessments; and (3) an attempt to accommodate participant queries on the use of RMTs in clinical practice.

Secondly, it was vital for recruitment staff to be trained on how to introduce the study most appropriately to the target population. Courteous and clear communication was key here, particularly when recruiting for an RMT study that featured complex designs and procedures for the participants. We learned that it is critical for staff to be able to discuss the study goals and procedures in a vernacular appropriate to the population, while following a standardised script. This was part of a larger training manual that was provided to the researchers, standardising the process across sites. Mental health cohorts may pose additional considerations, such as phone call anxiety, so the team needed to be confident in communicating procedures and diagnostic interviewing via various channels.

It was important for the research team to manage participant expectations by clearly explaining the participant burden and the aims of the study. This was particularly relevant as the RMT was not used as an intervention but rather as a data collection tool alone. Similarly, it was imperative that research staff could answer potential questions regarding technological concerns, data protection, data security, and confidentiality, as these present key areas of participant concern in RMT studies. This is especially relevant for longitudinal RMT studies, as participants are signing up to engage with (often new) technologies that will become a new part of their daily routine. This can seem daunting; a research team that can provide information to allay concerns, and that is easily contactable throughout participation, is therefore likely to promote confidence in enrolling. This highlights the importance of human contact during recruitment, regardless of the remote potential of the technologies. Using RMT in research is relatively novel, so the promise of a personable, knowledgeable, and trained research team, both during recruitment and throughout the study, was paramount to the success of study recruitment.

Minimising participant burden

A third consideration for successful recruitment was a focus on reducing participant burden. Although RMT, in particular pRMT, is by design often ‘invisible’, the addition of a wide range of exploratory aRMT variables might cause individuals to feel overwhelmed by the amount of data that is being tracked, and by the time and energy it will cost to incorporate the technology into their lives. We reflect on two ways the RADAR-MDD study attempted to make the recruitment process as streamlined and rewarding as possible: (1) easy participant onboarding and (2) incentivisation for participation.

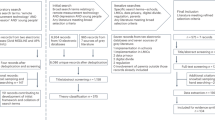

To streamline onboarding, the RADAR-MDD study created a standardised pathway to guide participants from initial contact to enrolment. This comprised a system of statuses that helped to identify and contact potential participants and to track their journeys to enrolment. The team used several methods to identify a pool of potentially eligible participants, which included both team-initiated (Consent for Contact databases; C4C) and participant-initiated (form on the RADAR-CNS website) approaches. A central spreadsheet kept a log of contacts, whereby each individual was assigned a status from “needs contact” to “enrolled” (Fig. 1). This enabled the team to identify individuals quickly and progress them accordingly. Not only did this streamline the recruitment process, but it also allowed for the easy creation of meaningful graphics to quantify the process for the extended team.
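As an illustration only, a status-based contact log of this kind could be sketched as follows. This is a minimal sketch and not the spreadsheet the study used: only the first and last status labels (“needs contact” and “enrolled”) come from the text, while the intermediate statuses, the `Contact` fields and the function names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    # Only NEEDS_CONTACT and ENROLLED are named in the paper;
    # the intermediate statuses are hypothetical placeholders.
    NEEDS_CONTACT = "needs contact"
    CONTACTED = "contacted"                # hypothetical
    SCREENED = "screened"                  # hypothetical
    ENROLMENT_BOOKED = "enrolment booked"  # hypothetical
    ENROLLED = "enrolled"


# Enum members iterate in definition order, which gives the pipeline order.
PIPELINE = list(Status)


@dataclass
class Contact:
    contact_id: str
    source: str  # e.g. "C4C" (team-initiated) or "website" (participant-initiated)
    status: Status = Status.NEEDS_CONTACT

    def advance(self) -> None:
        """Move the contact one step along the pipeline, stopping at ENROLLED."""
        position = PIPELINE.index(self.status)
        if position < len(PIPELINE) - 1:
            self.status = PIPELINE[position + 1]


def summarise(contacts):
    """Count contacts per status, in pipeline order, for reporting."""
    counts = Counter(contact.status for contact in contacts)
    return {status.value: counts.get(status, 0) for status in PIPELINE}


if __name__ == "__main__":
    log = [Contact("P001", "C4C"), Contact("P002", "website")]
    log[0].advance()  # P001 has now been contacted
    print(summarise(log))
```

A per-status summary of this kind is the sort of tally that could feed the graphics mentioned above; a real log would also need to record declined and ineligible outcomes, which this sketch omits.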

Another critical factor in offsetting participant burden was incentivisation. The debate over financial incentives for participants is a long-standing balancing act for ethics committees and principal investigators, regarding the influence over study design39,40. Commonly, agreement is found that allows for financial incentives when the risk to the participant is negligible and can result in more robust research outcomes41. In the RADAR-MDD project, participants at the London site were paid £15 for the initial enrolment session and were reimbursed travel expenses. They were also informed that they would receive £5 for every 3-month outcome assessment they completed (long-form questionnaires sent via email) during the follow-up period. Additional financial incentives were not offered for completion of aRMT questionnaires. This was based on feedback sessions with the RADAR-CNS PAB, the FAST-R group and research conducted by Mohr et al.42. Mohr et al. found that financial rewards can provide motivation for tasks that take more time, while these same rewards for engaging and quick tasks can be perceived as controlling or as indicators that the individual lacks motivation.
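The London payment schedule described above can be expressed as a short calculation. The sketch below uses the £15 and £5 amounts from the text; the assumption that assessments fall at months 3, 6, 9 and so on, including one in the final month of follow-up, is ours and is not stated in the paper. The function names are hypothetical, and reimbursed travel expenses are excluded.

```python
ENROLMENT_PAYMENT_GBP = 15      # from the text: paid for the enrolment session
ASSESSMENT_PAYMENT_GBP = 5      # from the text: per completed 3-month assessment
ASSESSMENT_INTERVAL_MONTHS = 3  # from the text: assessments every 3 months


def scheduled_assessments(months_followed: int) -> int:
    """Number of 3-month assessments falling within the follow-up period.

    Assumption (not from the paper): assessments occur at months 3, 6, 9, ...
    and an assessment in the final month of follow-up is counted.
    """
    return months_followed // ASSESSMENT_INTERVAL_MONTHS


def total_payment(assessments_completed: int) -> int:
    """Total payment in GBP, excluding reimbursed travel expenses."""
    return ENROLMENT_PAYMENT_GBP + ASSESSMENT_PAYMENT_GBP * assessments_completed


if __name__ == "__main__":
    for months in (6, 36):  # minimum and maximum follow-up stated in the paper
        n = scheduled_assessments(months)
        print(f"{months} months: {n} assessments, £{total_payment(n)}")
```

Under these assumptions, a participant completing every assessment would receive £25 over the minimum 6-month follow-up and £75 over the maximum 3 years.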

In addition to monetary incentivisation, the offer of technology can be an intriguing prospect for potential participants. Here it was critical to provide equal opportunity and to address the digital divide, as a substantial proportion of the population do not have access to smartphones and this group may be particularly relevant for mental health studies43. Thus, the RADAR-MDD study provided all technology free of charge (a Fitbit Charge device and an Android smartphone where not already owned). This was coupled with a technology user guide, and the offer of help with switching devices if required. As a result, owning or having experience with the technology was not a prerequisite for participation. This allowed for the recruitment of a diverse range of participants, some of whom had never used a smartphone before.

A third incentive for enrolment into RMT studies is moral alignment with the aims of the research, and the altruistic belief that engaging with such research will provide clinically valuable results44. During the RADAR-MDD recruitment process, participants were reminded at various points that the ultimate aim of the study was to implement findings into clinical practice. They were also informed that they could view some aspects of their data, both during participation (e.g., heart rate and step counts on the Fitbit dashboard) and as an infographic at the end (e.g., mood fluctuations across time)33. As a result, potential participants were encouraged to feel they were contributing to a tangible, innovative way of using technology to manage health, which will hopefully prove beneficial to themselves and the wider MDD community. Overall, we believe that combining a streamlined recruitment process with tailored incentives allowed for a reduction in participant burden and encouraged initial participation.

Iterative process

Finally, we aimed to strike a delicate balance between protocol adherence for increased reliability, and the need for flexibility, for example in accommodating participant needs or incorporating feedback.

When designing enrolment procedures, it was imperative for the RADAR-MDD study to take a flexible approach that could be adapted to differing levels of technological expertise and various personal circumstances (e.g., mental health affecting the ability to travel for enrolment). At the London site, RADAR-MDD participants were offered a choice of three enrolment formats: (1) a research centre visit for an in-person session, (2) a home visit, or (3) a video call (with a separate procedure that was accessible to those with hearing impairments). During the in-person and home visit sessions, a member of the research team would introduce the study, remind the participant of the aims and set up the technology for them. Online sessions followed a similar procedure, but the participant set up the devices themselves once received in the post, following live instructions. Offering different enrolment procedures gave participants the flexibility to make study enrolment as effortless and comfortable as possible.

To standardise enrolment procedures, the research team created scripts and checklists for each method. However, it became apparent from early participant feedback that certain aspects of the session could be adapted further to promote engagement. For example, early participants noted feeling uncomfortable wearing the Fitbit at night, leading researchers to advise to ‘wear the device as much as you feel comfortable’ during the enrolment sessions. Incorporating early feedback into the recruitment and enrolment protocol served to enhance clarity, particularly as RMT research is relatively novel.

As well as adapting to individuals, RMT study protocols lend themselves to changing environmental circumstances. Though unprecedented, the COVID-19 pandemic provided a case study for one of the key benefits of working with RMTs: structures are likely in place to continue with data collection and recruitment, during times when face-to-face contact is not permitted. The RADAR-MDD study was able to continue the onboarding process with relative ease during the UK lockdown. In part, this is reflective of the decision to re-contact individuals who were previously unreachable. However, it is also reflective of the little adaptation needed to switch to fully remote onboarding procedures, given that this was already an established method of enrolment. Online recruitment procedures (explained previously) were followed as usual, with the team contacting individuals via work mobiles from home office. Technology delivery through postal networks was also unaffected. Overall, the RADAR-MDD study was able to take advantage of the remote nature that these technologies afford and continue recruitment.

Barriers to participation

When summarising our lessons learned in recruiting into a large-scale RMT study, it is also important to consider barriers to participation. In RADAR-MDD two key obstacles to recruitment were encountered: (1) technological barriers and (2) health-related barriers. Figure 2 shows the breakdown of reasons given for declining to participate in the study (n = 161) and ineligibility (n = 165). Here, we reflect on these and consider how we might have mitigated them. These data were collected during participant eligibility calls and logged as per the streamlined enrolment procedure outlined previously.

Technology-related barriers

When considering barriers to enrolment for the RADAR-MDD study, participants’ unwillingness to switch to an Android device was the most common reason for ineligibility (46%). Here, participants reported having set up their home or work systems on devices running alternative operating systems, e.g., iOS, making the switch impractical. This highlights the importance of RMT device compatibility with a wide range of operating systems. Meanwhile, 23% declined the use of a smartphone, or felt generally uncomfortable wearing a watch or jewellery on their wrists for long periods.

It is critical to reflect on and acknowledge the biases introduced by this barrier. For example, in the RADAR-MDD study, despite the provision of technological support, the sample could be biased towards people who are already using smart watches and smartphones. However, it is possible that this is reflective of the type of sample that would choose to use symptom tracking routinely should it be implemented in clinical practice.

Health-related barriers

Another obstacle faced in RADAR-MDD recruitment was health-related barriers. An unexpected finding here was the necessity to reflect on the potential health impact the technology may have on certain comorbidities. For example, the presence of comorbid eating disorders made some (3%) decline participation. This was not a factor the team considered prior to recruitment, yet it highlights the importance of understanding the triggers that viewing some aspects of personal health data, in particular in relation to physical activity, might cause. Here, the potential effects of symptom monitoring on those with a history of eating disorders might be detrimental and thus had to be considered.

Of the reasons given for declining participation in the study, low mood only accounted for 1%. This was particularly interesting as it suggests that the presence of depression did not deter many individuals from participating, and indeed 61% of participants did report a current depressive episode at baseline. Conversely, it could be argued that the presence of low mood could prompt an interest in mood tracking, and thus an interest in participating.

A model of lessons learned

Figure 3 merges the reflections on strategies and barriers to participation from the RADAR-MDD study to depict the overall lessons learned during recruitment.

First, recruitment was preceded by thorough consultation with service users, and the acquisition of a suitably trained participant-facing team. Second, contacting eligible participants was streamlined, offering suitable financial and technological incentives during the recruitment and enrolment process. Third, we offered flexible enrolment procedures to suit individual needs and adapt to changing circumstances. While conducting this process, we learned that a consideration of barriers, such as phone swap, a reluctance to use the technologies, and medical comorbidities, will be key to maximising future recruitment.

Discussion

This paper aimed to present the key lessons learned during recruitment into the RADAR-MDD London site, a longitudinal, multi-parametric RMT study. Despite varying reports of initial engagement in the previous literature15,45, in particular in MDD cohorts19, the study successfully recruited 350 participants over 31 months from the London centre, exceeding a preliminary target of 200. Following a recent focus on recruitment ‘lessons learned’ literature from the wider mHealth field, this paper mirrored this approach in the rapidly expanding RMT space. As such, we summarised our lessons learned into a model, covering reflections on recruitment strategies and obstacles to participating (Fig. 3).

Implications for future study

This paper provides the foundations for understanding recruitment into multi-parametric RMT research. Future RMT studies should now build on the reflections presented here, alongside previous work, e.g., Druce et al.31, when considering their own recruitment design. Furthermore, we propose a need to create a framework of best practices that combines our insights with reflections from industry and other longitudinal studies (from different cultures, communities, populations) to better understand recruitment into RMT research. Such a body of work would provide for higher-quality trials with powered results to improve patient care.

Another valuable area of consideration for future work will be the extent to which successful recruitment into RMT studies is offset by feasibility. The RADAR-MDD study is part of the wider RADAR-CNS public-private partnership consortium, which involved a large amount of planning, resources, staff streamlining and funding before recruitment commenced. For the London site, this afforded three, full-time research assistants and access to service user groups. However, other projects might not have access to these resources, limiting the transferability of our reflections across studies. This reiterates the importance of future studies reflecting on their own lessons learned in recruitment, to build an inclusive framework for successful RMT recruitment that explores these trade-offs and their impacts on reaching recruitment targets.

In considering the COVID-19 climate, building upon the current work has never felt timelier. The RADAR-MDD study London site recruited between November 2017 and June 2020, and thus these results encapsulate both pre-pandemic and UK lockdown periods. Continuing to recruit during the pandemic has given the opportunity to exemplify RMTs as a viable and acceptable way to remotely collect health data. The transition towards a greater reliance on technology, as precipitated by COVID-19, may also act as a further facilitator of future RMT recruitment. Work should investigate the impact that the pandemic has had on recruitment strategies in various fields, and any resulting trends in utilising RMT in research and practice.

Strengths and limitations

This paper reviews lessons learned solely from the London site of the RADAR-MDD study. It is important to acknowledge that, while the fundamental recruitment and enrolment procedures remained largely similar across the remaining two sites in Barcelona and Amsterdam, several local and national processes affect the translation of this model. For example, staff recruitment protocols in Amsterdam meant that staff were hired from a rotating pool of research assistants, rather than exclusively for the RADAR-MDD project. In Barcelona, the core recruitment team comprised a post-doctoral researcher and a part-time research assistant, with an additional 40 clinicians and health professionals promoting recruitment into the study. Also, differing ethical procedures across the three countries led to discrepancies in incentivisation and payments offered to participants. This highlights the need for cross-cultural perspectives to be accounted for in future reflection work and any standardised framework for successful RMT recruitment.

It should also be noted that the recruitment strategies outlined in this paper do not include those which combat biases within the recruited sample. Reviews of recruitment into remote mHealth studies have found age, geographical and ethnic biases in samples45. The present study faced similar challenges. No concerted efforts were made to target recruitment towards these areas, due to the lack of current understanding of how best to do this in an RMT study. However, offering free technology could have reduced the risk of digital exclusion in our sample. Future work will focus on analysing participant demographics and provide more detail on potential biases in the sample.

A final limitation is the inability to analyse the demographics or technological abilities of those who refused participation. This would have allowed for a comparison with those who did participate, and an exploration of correlating factors. Further investigation might be possible for those recruited via C4C databases, for whom such data are retained. However, in the current study this would not be representative of the total participants contacted. Thus, future work building on these lessons learned should consider collecting this data as it could provide valuable insight into the type of individuals inclined to participate in an RMT trial.

Notwithstanding these limitations, the RADAR-MDD study is an exemplar of over-recruitment into an RMT study of MDD. This adds weight to recent work that suggests that participants with MDD advocate the use of technology to manage their health12,13, and provides an encouraging foundation for future work.

The current paper outlined key lessons learned for successful RMT recruitment in the RADAR-MDD project. These insights, alongside future researchers’ own reflections, should be used to build a model for successful recruitment into RMT research. These reflections will assist future researchers and stakeholders with a vested interest in the field. They will also promote transparency and encourage others to review their recruitment strategies, creating a more standardised, and successful, approach.

Reporting summary

Further information on research design is available in the Nature Research Reporting Summary linked to this article.

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

References

Liao, Y., Thompson, C., Peterson, S., Mandrola, J. & Beg, M. S. The future of wearable technologies and remote monitoring in health care. Am. Soc. Clin. Oncol. Educ. Book 115–121. https://doi.org/10.1200/EDBK_238919 (2019).

Matcham, F. et al. Remote Assessment of Disease and Relapse in Major Depressive Disorder (RADAR-MDD): recruitment, retention, and data availability in a longitudinal remote measurement study. https://doi.org/10.1186/s12888-022-03753-1 (2021).

Nahum, M. et al. Immediate Mood Scaler: tracking symptoms of depression and anxiety using a novel mobile mood scale. JMIR Mhealth Uhealth 5, e44 (2017).

Logan, D. E., Claar, R. L. & Scharff, L. Social desirability response bias and self-report of psychological distress in pediatric chronic pain patients. Pain 136, 366–372 (2008).

Jones, M. & Johnston, D. Understanding phenomena in the real world: the case for real time data collection in health services research. J. Health Serv. Res. Policy 16, 172–176 (2011).

Geneva: World Health Organization. Depression and Other Common Mental Disorders: Global Health Estimates (2017).

Greenberg, P. E. et al. The economic burden of adults with major depressive disorder in the United States (2010 and 2018). Pharmacoeconomics. https://doi.org/10.1007/s40273-021-01019-4 (2021).

Gauthier, G., Mucha, L., Shi, S. & Guerin, A. Economic burden of relapse/recurrence in patients with major depressive disorder. J. Drug Assess. 8, 97–103 (2019).

Naslund, J. A., Marsch, L. A., McHugo, G. J. & Bartels, S. J. Emerging mHealth and eHealth interventions for serious mental illness: a review of the literature. J. Ment. Health 24, 321–332 (2015).

O’Brien, H. L. & Toms, E. G. What is user engagement? A conceptual framework for defining user engagement with technology. J. Am. Soc. Inf. Sci. Technol. 59, 938–955 (2008).

White, K. M. et al. Exploring the definition, measurement, and reporting of engagement in remote measurement studies for physical and mental health symptom tracking: a systematic review. https://doi.org/10.21203/rs.3.rs-1625589/v1 (2022).

Simblett, S. et al. Barriers to and facilitators of engagement with mhealth technology for remote measurement and management of depression: qualitative analysis. JMIR Mhealth Uhealth 7, e11325 (2019).

Polhemus, A. et al. Data visualization in chronic neurological and mental health condition self-management: a systematic review of user perspectives. JMIR Ment. Health 9, e25249 (2022).

Bauer, A. M. et al. Acceptability of mHealth augmentation of collaborative care: a mixed methods pilot study. Gen. Hospital Psychiatry 51, 22–29 (2018).

Simblett, S. et al. Barriers to and facilitators of engagement with remote measurement technology for managing health: systematic review and content analysis of findings. J. Med. Internet Res. 20, e10480 (2018).

de Angel, V. et al. Digital health tools for the passive monitoring of depression: a systematic review of methods. npj Digital Med. 5, 1–14 (2022).

Liu, Y., Pencheon, E., Hunter, R. M., Moncrieff, J. & Freemantle, N. Recruitment and retention strategies in mental health trials – A systematic review. PLoS ONE 13, e0203127 (2018).

Sherdell, L., Waugh, C. E. & Gotlib, I. H. Anticipatory pleasure predicts motivation for reward in major depression. J. Abnorm. Psychol. 121, 51–60 (2012).

Brown, J. S. L., Murphy, C., Kelly, J. & Goldsmith, K. How can we successfully recruit depressed people? Lessons learned in recruiting depressed participants to a multi-site trial of a brief depression intervention (the ‘CLASSIC’ trial). Trials 20, 131 (2019).

Teague, S. et al. Retention strategies in longitudinal cohort studies: a systematic review and meta-analysis. BMC Med. Res. Methodol. 18, 151 (2018).

Vandenbroucke, J. P. et al. Strengthening the reporting of observational studies in epidemiology (STROBE): explanation and elaboration. PLoS Med. 4, e297 (2007).

Behar, J. A. et al. Remote health diagnosis and monitoring in the time of COVID-19. Physiological Meas. 41, 10TR01 (2020).

Drew, D. A. et al. Rapid implementation of mobile technology for real-time epidemiology of COVID-19. Science 368, 1362–1367 (2020).

Menni, C. et al. Real-time tracking of self-reported symptoms to predict potential COVID-19. Nat. Med. 26, 1037–1040 (2020).

Moreno, C. et al. How mental health care should change as a consequence of the COVID-19 pandemic. Lancet Psychiatry 7, 813–824 (2020).

Holmes, E. A. et al. Multidisciplinary research priorities for the COVID-19 pandemic: a call for action for mental health science. Lancet Psychiatry 7, 547–560 (2020).

Owens, A. P. et al. Implementing remote memory clinics to enhance clinical care during and after COVID-19. Front. Psychiatry 11, 579934 (2020).

Watson, N. L., Mull, K. E., Heffner, J. L., McClure, J. B. & Bricker, J. B. Participant recruitment and retention in remote ehealth intervention trials: methods and lessons learned from a large randomized controlled trial of two web-based smoking interventions. J. Med. Internet Res. 20, e10351 (2018).

Lattie, E. G. et al. A practical do-it-yourself recruitment framework for concurrent ehealth clinical trials: identification of efficient and cost-effective methods for decision making (Part 2). J. Med. Internet Res. 20, e11050 (2018).

Pfammatter, A. F., Mitsos, A., Wang, S., Hood, S. H. & Spring, B. Evaluating and improving recruitment and retention in an mHealth clinical trial: an example of iterating methods during a trial. Mhealth 3, 49–49 (2017).

Druce, K. L., Dixon, W. G. & McBeth, J. Maximizing Engagement in Mobile Health Studies. Rheum. Dis. Clin. North Am. 45, 159–172 (2019).

Ranjan, Y. et al. RADAR-base: open source mobile health platform for collecting, monitoring, and analyzing data using sensors, wearables, and mobile devices. JMIR Mhealth Uhealth 7, e11734 (2019).

Matcham, F. et al. Remote assessment of disease and relapse in major depressive disorder (RADAR-MDD): a multi-centre prospective cohort study protocol. BMC Psychiatry 19, 72 (2019).

Yardley, L., Morrison, L., Bradbury, K. & Muller, I. The person-based approach to intervention development: application to digital health-related behavior change interventions. J. Med. Internet Res. 17, e30 (2015).

Anderson, A., Benger, J. & Getz, K. Using patient advisory boards to solicit input into clinical trial design and execution. Clin. Therapeutics 41, 1408–1413 (2019).

Bano, M. & Zowghi, D. A systematic review on the relationship between user involvement and system success. Inf. Softw. Technol. 58, 148–169 (2015).

Abshire, M. et al. Participant retention practices in longitudinal clinical research studies with high retention rates. BMC Med. Res. Methodol. 17, 30 (2017).

Salgado, J. F. & Moscoso, S. Comprehensive meta-analysis of the construct validity of the employment interview. Eur. J. Work Organ. Psychol. 11, 299–324 (2002).

Phillips, T. Exploitation in payments to research subjects. Bioethics 25, 209–219 (2011).

Parkinson, B. et al. Designing and using incentives to support recruitment and retention in clinical trials: a scoping review and a checklist for design. Trials 20, 624 (2019).

Zutlevics, T. Could providing financial incentives to research participants be ultimately self-defeating? Res. Ethics 12, 137–148 (2016).

Mohr, D. C., Cuijpers, P. & Lehman, K. Supportive accountability: a model for providing human support to enhance adherence to eHealth interventions. J. Med. Internet Res. 13, e30 (2011).

Greer, B. et al. Digital exclusion among mental health service users: qualitative investigation. J. Med. Internet Res. 21, e11696 (2019).

Anawar, S., Adnan, W. A. W. & Ahmad, R. A design guideline for non-monetary incentive mechanics in mobile health participatory sensing system. Int. J. Appl. Eng. Res. 12, 11039–11049 (2017).

Pratap, A. et al. Indicators of retention in remote digital health studies: a cross-study evaluation of 100,000 participants. npj Digital Med. 3, 21 (2020).

Acknowledgements

We thank our colleagues both within the RADAR-CNS consortium and across all involved institutions for their contribution to the recruitment strategy for RADAR-MDD. Furthermore, we would like to thank the FAST-R group, a team with experience of mental health problems and their carers who have been specially trained to advise on research proposals and documentation through the Feasibility and Acceptability Support Team for Researchers (FAST-R): a free, confidential service in England provided by the National Institute for Health Research Maudsley Biomedical Research Centre via King’s College London and South London and Maudsley NHS Foundation Trust. We would also like to thank all members of the RADAR-CNS patient advisory board, who all have experience of living with or supporting those who are living with depression, epilepsy or multiple sclerosis. Finally, the RADAR-CNS project has received funding from the Innovative Medicines Initiative 2 Joint Undertaking under grant agreement No 115902. This Joint Undertaking receives support from the European Union’s Horizon 2020 research and innovation programme and EFPIA (www.imi.europa.eu). This communication reflects the views of the RADAR-CNS consortium and neither IMI nor the European Union and EFPIA are liable for any use that may be made of the information contained herein. The funding body has not been involved in the design of the study, the collection or analysis of data, or the interpretation of data. This paper represents independent research part-funded by the National Institute for Health Research (NIHR) Biomedical Research Centre at South London and Maudsley NHS Foundation Trust and King’s College London. The views expressed are those of the authors and not necessarily those of the NHS, the NIHR or the Department of Health and Social Care.

Author information

Contributions

C.O. and K.M.W. are co-first authors of the paper. C.O. and K.M.W. wrote all versions of the manuscript and contributed to the study conducted in London. A.I. contributed to the study conducted in London. J.J. has contributed to the study conducted in London. D.L. contributed to the study design and data collection. G.L. has contributed to the study conducted in London. F.L. has contributed to the design and coordination of the study in Amsterdam. S.S. (Sara Siddi) has contributed to the design and coordination of the study in Barcelona. P.A. has contributed to the design and development of the study. S.A.G. contributed to the study conducted in Barcelona. J.M.H. has contributed to the development and design of the study. D.C.M. has contributed to the design and development of the study. B.W.J.H.P. has contributed to the design and development of the study. S.S. (Sara K. Simblett) has contributed to the design and development of the study. T.W. has contributed to the design and development of the study. V.A.N. has contributed to the design and development of the study. M.H. secured funding, is the principal investigator of the study and as such contributed to the overall study design and conduct. F.M. has contributed to the design and coordination of the study in London. All authors have been involved in reviewing the manuscript and have given approval for it to be published. The authors read and approved the final manuscript.

Ethics declarations

Competing interests

M.H. is the principal investigator of the RADAR-CNS programme, a precompetitive public–private partnership funded by the Innovative Medicines Initiative and the European Federation of Pharmaceutical Industries and Associations. The programme receives support from Janssen, Biogen, MSD, UCB and Lundbeck. D.C.M. has accepted honoraria and consulting fees from Apple, Inc., Otsuka Pharmaceuticals, Pear Therapeutics, and the One Mind Foundation, royalties from Oxford Press, and has an ownership interest in Adaptive Health, Inc. P.A. is employed by the pharmaceutical company H. Lundbeck A/S. V.N. is an employee of Janssen Research & Development, LLC and holds company stocks/stock options. C.O. is supported by the UK Medical Research Council (MR/N013700/1) and King’s College London member of the MRC Doctoral Training Partnership in Biomedical Sciences. All other authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Oetzmann, C., White, K.M., Ivan, A. et al. Lessons learned from recruiting into a longitudinal remote measurement study in major depressive disorder. npj Digit. Med. 5, 133 (2022). https://doi.org/10.1038/s41746-022-00680-z

DOI: https://doi.org/10.1038/s41746-022-00680-z
