Abstract

Previous research investigating the transmission of political messaging has primarily taken a valence-based approach, leaving it unclear how specific emotions influence the spread of candidates’ messages, particularly in a social media context. Moreover, such work does not examine whether the major political parties (i.e., Democrats vs. Republicans) differ in their responses to each type of emotional content. Leveraging more than 7000 original messages published by Senate candidates on Twitter leading up to the 2018 US mid-term elections, the present study utilizes an advanced natural language tool (i.e., IBM Tone Analyzer) to examine which of the discrete emotions that candidates display in a given tweet (i.e., joy, anger, fear, sadness, and confidence) are more likely to garner the public’s attention online. While the results indicate that positive joy-signaling tweets are less likely to be retweeted or favorited on both sides of the political spectrum, anger- and fear-signaling tweets were significantly associated with increased diffusion among Republican and Democratic networks, respectively. Neither expressions of confidence nor sadness had an impact on retweet or favorite counts. Given the ubiquity of social media in contemporary politics, here we provide a starting point from which to disentangle the role of specific emotions in the proliferation of political messages, shedding light on the ways in which political candidates gain potential exposure throughout the election cycle.

Introduction

“Thinking we’re only one signature away from ending the war in Iraq” (Obama, 2007)Footnote 1

The tweet above, sent by Senator Barack Obama in April 2007, launched the very first Twitter campaign for a presidential election (Newkirk, 2016), providing an early glimpse into how social media might shape politics in the years to come (e.g., Becatti et al., 2019; Casteltrione and Pieczka, 2018). A decade later, with more than 154 million users utilizing Twitter daily (Pew Research Center, 2019), this platform has become an important resource for political candidates to conduct campaigns, gain direct access to potential voters, and strategically gauge constituents’ preferences in real-time (Straus et al., 2016). Given that all US Senators adopted Twitter during the 115th Congress (2017–2018; Congressional Research Service, 2018), recent studies have devoted considerable attention to the social media platform’s expanding power in contemporary politics (e.g., Gervais et al., 2020; Gross and Johnson, 2016).Footnote 2 The ubiquity of the post-and-share Twitter paradigm (Lyons and Veenstra, 2016), for example, has provided an unprecedented opportunity for candidates to engage an almost limitless number of followers and spread their messaging on a large scale (Hixson, 2017), attracting those most likely to vote, and offering the potential to expand their political clout throughout the election cycle (e.g., Druckman et al., 2007; 2010; Galdieri et al., 2017; Thurber et al., 2000).

Though numerous studies identify the widespread use of social media in political circles (e.g., Becatti et al., 2019; Casteltrione and Pieczka, 2018), less is known about what types of emotional messaging have a broad reach on such sites. Past research on the topic has largely taken a valence-based approach (Rodríguez-Hidalgo et al., 2015), revealing a positive-negative asymmetry in which negative content tends to be more effective at increasing voter turnout or choice (see Levin et al., 1998 on motivating supporters to participate, and see Miller et al., 2016 on fundraising). The 2018 US mid-terms, for example, were found to be a notably antagonistic election (Gervais et al., 2020; Nai and Maier, 2020), in which politicians’ tweets containing emotional terms frequently generated worldwide attention, regardless of whether the content was deemed uncivil or offensive (Thurber et al., 2000). Since broad campaigning tactics (e.g., what to post and when) can be carefully crafted (Gross and Johnson, 2016; Nai and Maier, 2020), a large body of work has found that the incentive to embrace negative rhetoric is stronger on social media than in traditional media for candidates seeking all available venues to gain an edge through emotional appeals (see Druckman et al., 2010 for a review of negative posting strategies over three election cycles and Brady et al., 2017, 2019 for supporting evidence).

Specific emotions in retweeting

Specific positive and negative emotions have unique properties and may be distinguishable from one another (e.g., anger vs. sadness), regardless of the homogeneity of their valence. Anger, for example, is often considered a motivator, promoting action, whereas sadness reduces motivation, promoting withdrawal (Lerner et al., 2015; Lerner and Tiedens, 2006). Such work (e.g., Frijda, 1986) has been invaluable and highlights the importance of disentangling how distinct emotional messaging might shape behaviors differently. However, to the best of our knowledge, this has not been systematically examined in the context of social media politicking. Leveraging 7310 original Twitter messages from Senate candidates in the lead up to the 2018 US mid-term elections, we utilize an advanced natural language processing tool (i.e., IBM Tone Analyzer) to explore two important questions: (a) Are certain types of emotional content (i.e., joy, anger, fear, sadness and confidence) more likely to capture the public’s attention, thereby driving the spread of candidates’ messages (i.e., be directly retweeted or favorited) through social media networks?; and (b) if so, is this pattern similar or different across the political party divide (i.e., Democrats vs. Republicans)?

Although some argue that retweeting is random (e.g., Shi et al., 2020), the scarce research focusing on the influence of specific emotions for online behavior suggests that anger-inducing information tends to be the most likely content prompting user engagement (e.g., retweeting or favoriting) or attracting feedback (see Brady et al., 2017; Brady and Crockett, 2019). A recent report (Rose-Stockwell, 2018) found that when streams of political outrage and vitriol became the primary products of social media feeds during the 2016 US election, messages employing emotional terms often generated significant worldwide attention in online debates (see also Hughes and Van, 2018); the more outrageous words the candidates used, the more coverage they received (Brady et al., 2019). Similarly, the Pew Research Center (2019) suggests that certain types of messages (e.g., “things that my friend is angry about”) often garner more clicks, likes, or retweets. As Brady and Crockett (2019) noted, since online communication tools are literally just a few keystrokes, a person’s threshold for responding to negativity is probably lower than in offline conversations, i.e., the individual directly involved is neither confronted with the same physical risks, nor do they risk the same reputational damage.

Despite a nascent body of work showing that anger-charged content tends to promote the proliferation of messages online (e.g., Hughes and Van, 2018; Rose-Stockwell, 2018), thus far, there is little systematic research examining whether messages conveying other forms of negative emotion, such as fear and sadness, attract the same degree of attention in online political discourse. Existing research on the topic has mainly focused on the content of candidates’ campaigns (e.g., Nai and Maier, 2020; Straus et al., 2016), with limited analyses of the responses evoked among their followers or supporters. Though some research has assessed the proliferation of fear- or sadness-charged messages online, such work has focused almost entirely on public health or crisis communication (e.g., see Rus and Cameron, 2016, for the negative effects of sadness-related imagery on user engagement and Schultz et al., 2018, for supporting evidence), leaving it unclear as to how these emotions spread in the lead up to an election. Given that tweets expressing either condemnation or claiming great sadness may both spark a significant volume of retweets or favorites, it is of considerable interest to see if the effect truly differs, i.e., whether certain kinds of emotionally charged messages by candidates are indeed more effective at promoting online engagement, thus reaching a wider number of users over the course of a political campaign.

Retweeting patterns between Democrats vs. Republicans

In addition to examining specific emotions on message diffusion, the present study also explores what differences exist, if any, in retweeting and favoriting of online emotional content published by the two main US political parties (Democrats and Republicans). As prior research has found that political parties are tightly aligned with their ideology, with Democrats and Republicans corresponding closely with liberals and conservatives, respectively, here we draw on extensive work in the political psychology of ideology field (see Levendusky, 2009). According to motivated social cognition (MSC) theory, conservatives (rather than liberals) tend to be more sensitive to environmental stimuli, especially those that are negatively valenced (see also Janoff-Bulmann, 2009). A meta-analysis found the development of the conservative political ideology to be particularly associated with increased intolerance of uncertainty, fearfulness, and perceptions of risk (among other things; Jost et al., 2003). In a series of studies, Hibbing and colleagues (2014) came to the same conclusion, suggesting that individuals who identify with conservative political parties are more responsive (than their liberal counterparts) to negatively valenced words or images (for details, see Inbar et al., 2009)—a relationship borne out by longitudinal (e.g., Bonanno and Jost, 2006), physiological (e.g., Oxley et al., 2008) and psychological evidence (e.g., Amodio et al., 2007).

However, although much of the literature examined thus far suggests that conservatives do have a negativity bias, several studies indicate that such a relationship is considerably more nuanced (e.g., Choma and Hodson, 2017; Greenberg and Jonas, 2003). For example, Bakker et al. (2020) contradicted the main findings based on the MSC perspective, reporting that the reactions to environmental stimuli follow a comparable trajectory regardless of any underpinning ideological orientation (e.g., for expressing prejudice to an equal degree, see Crawford et al., 2015). Moreover, a few studies in social and political psychology even posit an opposing pattern, i.e., people who are relatively left-wing may respond more strongly to negative stimuli (e.g., Crawford, 2017).

Taken together, the findings on the psychological differences between liberals and conservatives are often mixed. This may be due to reliance on either self-reported surveys assessing college students, who may not yet have formed stable political attitudes (see the review by Crawford et al., 2015), or on behaviors exhibited in a laboratory experiment, which can be lower in external validity (Barberá et al., 2015). Additionally, as Choma and Hodson (2017) suggest, perhaps such long-assumed relations cannot be reduced to simple statements and should be operationalized narrowly (Duckitt, 2001; Onraet et al., 2014), with conservatives reporting more responsiveness to certain types of negative stimuli and liberals reporting more responsiveness towards other types. With these considerations in mind, the current work utilizes an extensive media-based database to unobtrusively observe user behaviors (e.g., retweets or favorites) in a naturalistic setting. By looking beyond an approach based solely on valence, the present study will focus on disentangling the role that these specific emotions (i.e., joy, anger, fear, sadness, and confidence) play in driving the online spread of political information, shedding light on the differences or similarities, if any, in responses to Democratic and Republican candidates based on the emotional content of their social media messages. Our data reveal that joy-related tweets were less likely to be retweeted or considered a favorite regardless of whether the message came from Democratic or Republican candidates. However, fear-based tweets were more likely to garner social media engagement when posted by Democratic candidates, while anger-based tweets were more likely to garner engagement when created by Republican candidates.

Methods

Dataset

The current study employed a Twitter dataset, examining original messages published by Senate candidates over the four weeks leading up to the 2018 US mid-term elections (09 October–06 November 2018; Election Day: 06 November 2018). The outcome of this election was highly consequential: depending on how well the Democrats did, the party could regain control of the legislature against the Republican White House of Donald Trump (Cohn and Kesterton, 2018).

By pulling directly from a user’s timeline via the Twitter API with Tweepy’s user_timeline function (Hasan et al., 2018), we collected 7310 original postsFootnote 3 (Democrats: n = 3711; Republicans: n = 3599) published by 65 Senate candidates across the two major political parties, including 29 incumbents who were seeking re-election and 36 significant challengers who took ~30% or more of the vote in the final round of elections (for details, see the Supplementary Table S1). Overall, a total of 35 states were contested in the 2018 US mid-term elections, including the special elections in Minnesota and Mississippi. Two states (i.e., Vermont and Wisconsin) were either contested by third-party candidates (i.e., Independents) or had unavailable tweets (e.g., tweets sent by a non-verified Twitter account), leaving 33 states considered for analysis, with each state represented by the top two candidates who received the most votes and advanced to the election (except Maine; for full details on the sampling procedure, see Supplementary Table S1).

Text pre-processing

Unlike emotions expressed in other textual sources (e.g., articles, emails, or product reviews), Twitter messages (a) often utilize a high number of colloquialisms (e.g., FTL is used in place of the phrase “for the loss”), symbols (e.g., emoji for anger or sadness), special characters (e.g., #endgunviolencetogether), or letter repetition (e.g., “stroong”), and (b) can only be a limited length, which has created a new way of expressing emotion online (Wang et al., 2012).

To convey tweets in a way that optimizes the analysis, we conducted text pre-processing (Naveed et al., 2011). As Table 1 presents, we removed the URLs and user-mentions (e.g., @TOMS), and replaced any character that occurred multiple times consecutively (e.g., stroong) with the correct spelling of that word (e.g., strong). As Twitter users often use the hashtag symbol “#” before a relevant keyword or phrase in their tweet to describe a topic or theme, it would have been risky to remove all the text included in a hashtag (Hasan et al., 2019). In such cases, we only removed the special character “#” and kept the keywords that followed it. In addition, as emoticons are frequently used online to express emotions that affect the meaning of the tweet (Hasan et al., 2019; Liu et al., 2012), the pre-processing work maintained this feature to increase the accuracy of the analysis.
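The pre-processing steps described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors’ pipeline: the function names, the regular expressions, and the small `vocabulary` set used to decide when a repeated letter is a misspelling are all hypothetical.

```python
import re

# Hypothetical patterns; the paper does not specify its exact rules.
URL_RE = re.compile(r"https?://\S+|www\.\S+")
MENTION_RE = re.compile(r"@\w+")
REPEAT_RE = re.compile(r"(\w)\1+")  # a word character repeated consecutively


def fix_repeats(word, vocabulary):
    """Collapse repeated letters only when that yields a known word."""
    if word.lower() in vocabulary:
        return word  # already a valid spelling, e.g. "good"
    collapsed = REPEAT_RE.sub(r"\1", word)
    return collapsed if collapsed.lower() in vocabulary else word


def clean_tweet(text, vocabulary):
    """Remove URLs and mentions, strip '#', repair letter repetition.

    Emoji and emoticons are deliberately left untouched.
    """
    text = URL_RE.sub("", text)
    text = MENTION_RE.sub("", text)
    text = text.replace("#", "")  # keep the hashtag keyword itself
    tokens = [fix_repeats(tok, vocabulary) for tok in text.split()]
    return " ".join(tokens)


vocabulary = {"stay", "strong", "good"}  # toy word list (assumption)
raw = "Stay stroong @TOMS #endgunviolencetogether https://t.co/abc 😡"
cleaned = clean_tweet(raw, vocabulary)
```

In this sketch a repeated letter is collapsed only if the result appears in the word list, so legitimate double letters and unknown hashtag keywords pass through unchanged.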

Detecting emotional rhetoric in tweets using IBM Watson’s tone analyzer

Over the past decade, while there have been numerous studies exploring whether the words expressed in written text provide clues to an individual’s emotional states (e.g., Bleich et al., 2021; Young and Soroka, 2012), those studies mainly focus on textual sources, such as articles, emails, or product reviews, leaving it unclear how to examine such states in a more naturalistic environment (IBM Cloud, 2015). Given the uniqueness of social media expressions, using a traditional approach, such as counting the word frequency from a built-in dictionary (e.g., LIWC), may result in low validity due to the lack of inclusion of sentiment-oriented language in the social media context (for reviews, see Hutto and Gilbert, 2014).

In 2016, IBM Watson released the Tone Analyzer as a natural language functionality to detect correlations between the psycholinguistic features of a given text and a user’s thinking styles, intrinsic needs, values, and emotional states, especially on social media (IBM Cloud, 2015). In a digital world full of ever-expanding datasets (Hutto and Gilbert, 2014), such tools aim to more accurately and automatically detect those features disclosed in an online message. Instead of just reading text and counting the frequency of words associated with different emotions from a built-in dictionary, IBM’s Tone Analyzer is built on a stacked generalization-based ensemble framework that uses multiple machine learning algorithms to classify emotion categories (IBM Cloud, 2015). Specifically, given a training dataset (e.g., 2.6 million user requests from Twitter customer-support forums) and associated emotive tones (e.g., human-annotated; for details, see IBM Cloud, 2020), such an ensemble framework is built on a machine learning model that combines several other learning algorithms (e.g., Logistic Regression, Support Vector Machine, etc.) to better predict new emotionally relevant utterances (e.g., see Liang and Yi, 2021 for the predictive accuracy in ensembles of text classification).
Leveraging a variety of features, the Tone Analyzer also considers (a) N-gram features (i.e., allowing the model to learn occurrences of every two and three-word combinations to determine the probability of a word occurring after a certain word; Srinidhi, 2019), (b) lexical features from various dictionaries (e.g., a base or root word without considering any prefixes or suffixes), (c) some dialog-specific features, such as the topic-inferring “thank you” or “apologizing”, (d) several higher-level features, such as the existence of consecutive question marks or exclamation marks, or emoticons, and other slang words commonly used in social media communications (IBM Cloud, 2017; 2020), and (e) negations in a sentence (e.g., “Not disappointed in them”; this issue is often solved by a supervised approach with high-order n-grams to detect special prefixes and suffixes appended to negation, such as “not”, “!!!” or “?”, processing those features as negated words) to handle grammatical constructs and drive the accuracy of outputs at both the sentence and document levels (IBM Cloud, 2016).
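To make the N-gram feature in (a) concrete, the following minimal sketch extracts two- and three-word combinations and estimates the probability of one word following another from bigram counts. It is a generic illustration, not IBM’s implementation; the function names and the toy corpus are assumptions.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return all contiguous n-token tuples from a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def next_word_probability(corpus, word, nxt):
    """Estimate P(nxt | word) from bigram counts over tokenized sentences."""
    bigram_counts = Counter()
    first_counts = Counter()
    for tokens in corpus:
        for a, b in ngrams(tokens, 2):
            bigram_counts[(a, b)] += 1
            first_counts[a] += 1
    if first_counts[word] == 0:
        return 0.0  # the word never starts a bigram in this corpus
    return bigram_counts[(word, nxt)] / first_counts[word]


# Toy corpus (assumption) built around the negation example in the text.
corpus = [
    "not disappointed in them".split(),
    "not happy with them".split(),
]
trigrams = ngrams(corpus[0], 3)
p = next_word_probability(corpus, "not", "disappointed")
```

Higher-order n-grams such as ("not", "disappointed") are what allow a supervised model to treat a negated phrase differently from the bare word "disappointed".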

With nearly 30% of training samples having more than one associated tone (IBM Cloud, 2020), it is worth noting that the Tone Analyzer moves beyond a valence-based model (e.g., positive vs. negative) to detect multi-label classifications from various dimensions. Being trained by a One-vs-Rest classifier (i.e., using binary classification algorithms for multi-class classification; Brownlee, 2020), the Tone Analyzer gives optimal weight to certain words, including considerations of sequential importance, particularly for imbalanced samples (see IBM Cloud, 2020 for a demonstration of model accuracy).

Once our Twitter corpus was cleaned by the pre-processor, as stated above, a string of text was sent through the Tone Analyzer API and a JSON object returned as output (see the Supplementary Appendix I for a JSON sample). For each given input (e.g., a tweet), the Tone Analyzer produced a confidence score for every single predicted tone taken from the following set of dimensions: Joy, Anger, Fear, Sadness, and Confidence. For example, for the given text “The NRA has had lawmakers in its pocket for too long—and our country has suffered the consequences. If we fight together, if we channel our outrage, our heartbreak and our frustration, we can take on the NRA and put a stop to this senseless violence” (Gillibrand, 2018),Footnote 4 the output is presented as anger (0.51) and sadness (0.56). Based on the returned scores, the results illustrate that the text mainly expresses “sadness” and “anger”, with 56% and 51% confidence, respectively, indicating that such emotions are likely to be perceived in the content. To provide a valid predictive result, the Tone Analyzer returns an emotional tone only when the confidence score is greater than 0.5 (IBM Cloud, 2020). As the input in the Tone Analyzer can be processed either on an individual sentence basis or for the entire block of text, to simplify the tone features gathered in our analysis, here we used the entire block of text as the given input (see the Supplementary Appendix II for a large sample of tweets processed by IBM’s Tone Analyzer).Footnote 5
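The step from the returned JSON object to per-tweet emotion scores can be sketched as follows. The field names (`document_tone`, `tones`, `tone_id`, `score`) and the identifier `confident` for the Confidence dimension are assumptions about the response layout; the authoritative sample is the one in Supplementary Appendix I.

```python
import json

# Assumed tone identifiers for the five dimensions used in the study.
TONES = ("joy", "anger", "fear", "sadness", "confident")
THRESHOLD = 0.5  # a tone is only reported above this confidence score


def tone_features(response_json):
    """Map a document-level tone response to one score per dimension.

    Dimensions that are absent, or at or below the threshold, are set to 0.
    """
    payload = json.loads(response_json)
    tones = payload.get("document_tone", {}).get("tones", [])
    scores = {tone: 0.0 for tone in TONES}
    for item in tones:
        tone_id = item.get("tone_id")
        score = item.get("score", 0.0)
        if tone_id in scores and score > THRESHOLD:
            scores[tone_id] = score
    return scores


# Hypothetical response mirroring the anger/sadness example in the text.
sample = json.dumps({
    "document_tone": {
        "tones": [
            {"score": 0.51, "tone_id": "anger", "tone_name": "Anger"},
            {"score": 0.56, "tone_id": "sadness", "tone_name": "Sadness"},
        ]
    }
})
features = tone_features(sample)
```

Because the whole tweet is submitted as one block of text, only the document-level tones are read here and any sentence-level output is ignored.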

Twitter engagement

Several studies investigating social media indicate that political candidates have given considerable attention to online posts and their engagement metrics (Brady et al., 2019; 2020). Twitter, for example, has proven to be one of the most valuable sources of information for political advertising as it allows references to appeal for votes or soliciting financial support—although the company has recently updated the policy to prohibit certain political content (for details, see Twitter, 2021). The ubiquity of its accessibility, coupled with the ease of publishing multimedia materials, has created a platform on which candidates can engage an almost limitless number of followers and drive their speech spreading on an unprecedented scale through the connected world of social media (Park, 2013).

Several activities in response to tweets, such as replies, retweets (including direct retweets and retweets with additional commentary—currently known as “quote tweets”) and favorites (currently known as “likes”) are commonly used as measures of engagement in the digital realm (Park, 2013; Sainudiin et al., 2019). Here, our analysis focuses on direct retweets and favorites as these often represent endorsement or trust in the message organizer (Park, 2013; Sainudiin et al., 2019), while quoted tweets and replies to tweets can capture a broad range of meanings (e.g., expressing divergent opinions, ridicule, satire; Park, 2013). Especially in political campaigns, direct retweeting and favoriting frequently occur as a means to support the endorsed candidate or serve as a way of saying “me too” in response to the original poster (Dang-Xuan et al., 2013). While numerous studies have examined retweeting as a political engagement indicator, little is known about the impact of the distinct emotive content on those responses specifically, or whether, compared to retweeting, a similar pattern would emerge when examining the number of favorites.

By directly pulling tweets from a user’s timeline via the Twitter API using Tweepy’s user_timeline function (Hasan et al., 2018), we extracted the original retweet and favorite counts attached to each message. Due to a limitation of the Twitter API,Footnote 6 it is important to note that our target measure of direct retweets was obtained by using the API in combination with web-page scraping to filter out commented retweets from the total retweets (for full details on the collection approach, see Data Syntax). All other metadata, including favorite counts, presence of URLs, and the number of followers and friends of that user, were pulled directly from the Twitter API at the time of data collection (October–December, 2018).

Statistical models

Given the present sample is organized in a hierarchical structure (e.g., several tweets sent by the same user), traditional methods (e.g., linear regression) may return biased parameter estimates as the assumption of independence is no longer tenable (Bates, 2010; Doran et al., 2007). This covariance motivated us to (a) incorporate random factors and (b) characterize the true variances among units within similar groups (Bates, 2010).

Specifically, using the lme4 package in R (Bates, 2010), we fit linear mixed-effects models to examine which emotional factors explain the direct retweet and favorite counts (both counts were log-transformed to reduce skewness), controlling for the number of followers and friends that a given candidate has, their incumbency status, and the competitiveness of the race, all of which can potentially influence the engagement level.Footnote 7 As our data were measured on different scales, meaning that variables with a large range (e.g., the number of followers) can outweigh variables ranging between 0 and 1 (e.g., emotional factors), we rescaled the data to have values between 0 and 1 according to equation (1):

$$x' = \frac{x - x_{\min}}{x_{\max} - x_{\min}} \qquad (1)$$

where $x'$ represents the normalized feature within the range [0,1], and $x_{\min}$ and $x_{\max}$ represent the minimum and maximum values of the feature, respectively (Paulson, 1942).
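The rescaling in equation (1) is standard min-max normalization. A minimal sketch, with a hypothetical function name and toy follower counts, could look like this:

```python
def min_max_scale(values):
    """Rescale a list of numbers to [0, 1] using min-max normalization."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant feature carries no information; map it to 0.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


followers = [10, 20, 30]  # toy follower counts (assumption)
scaled = min_max_scale(followers)
```

After this step a follower count and an emotion score share the same [0, 1] range, so neither dominates the estimates purely because of its units.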

Based on the hierarchical structure, the outcome variance should be considered as being either within-groups or between-groups (Bates, 2010). Some candidates may have sent a series of tweets containing a specific emotion (e.g., anger) much more often than others, thus the variance within a candidate’s data sample could be more homogeneous (Doran et al., 2007). To overcome such a hierarchical structure among observations, we incorporated random factor grouping by each level of candidate in conjunction with time effects (aggregated to a week-level factor to reduce variances; see Supplementary Nested vs. Crossed Grouping Factors in LMER for more details).
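One way to write such a model down, offered only as a sketch, is shown here. It assumes crossed random intercepts for candidate $c$ and week $w$; whether the grouping factors were treated as nested or crossed is documented in the supplement, and the symbols $\mathbf{z}$ (control variables) and $\boldsymbol{\gamma}$ (their coefficients) are introduced purely for illustration.

```latex
\log(y_{icw}) = \beta_0
  + \sum_{k} \beta_k \,\mathrm{emotion}_{k,icw}
  + \boldsymbol{\gamma}^{\top}\mathbf{z}_{icw}
  + u_c + v_w + \varepsilon_{icw},
\qquad
u_c \sim \mathcal{N}(0,\sigma_u^2),\quad
v_w \sim \mathcal{N}(0,\sigma_v^2),\quad
\varepsilon_{icw} \sim \mathcal{N}(0,\sigma^2)
```

Here $y_{icw}$ is the retweet (or favorite) count of tweet $i$ sent by candidate $c$ in week $w$, and $k$ indexes the five emotion scores. The random intercepts $u_c$ and $v_w$ absorb the between-candidate and between-week variance, leaving $\varepsilon_{icw}$ as the within-group residual.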

To evaluate how well these random terms fit our model, we specified various criteria to compare the results with other models (see Supplementary Model Comparison). Given that it has the lowest values of both Akaike’s Information Criterion (AIC) and Schwarz’s Bayesian Information Criterion (BIC), the present model captures the data significantly better than models using different combinations of random factor sets (e.g., Democrats: χ²(1) = 205.1, p < 0.001; Republicans: χ²(1) = 223.3, p < 0.001). We also examined the distributions of both residuals and random effects to ensure that the statistical assumptions are satisfied (see Supplementary Model Diagnostics).
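The comparison criteria named above follow standard definitions, sketched here using only the Python standard library. The log-likelihood values passed to `aic` and `bic` are hypothetical; only the χ² statistics come from the text. For one degree of freedom, the upper-tail χ² probability equals erfc(√(x/2)), which avoids any external dependency.

```python
import math


def aic(log_lik, n_params):
    """Akaike's Information Criterion: 2k - 2 ln L."""
    return 2 * n_params - 2 * log_lik


def bic(log_lik, n_params, n_obs):
    """Schwarz's Bayesian Information Criterion: k ln n - 2 ln L."""
    return n_params * math.log(n_obs) - 2 * log_lik


def chi2_sf_df1(stat):
    """Upper-tail probability of a chi-square statistic with 1 df."""
    if stat <= 0:
        return 1.0
    return math.erfc(math.sqrt(stat / 2))


# Hypothetical log-likelihood and parameter count, for illustration only.
example_aic = aic(-100.0, 3)
example_bic = bic(-100.0, 3, 100)

# p-values for the likelihood-ratio statistics reported in the text.
p_democrats = chi2_sf_df1(205.1)
p_republicans = chi2_sf_df1(223.3)
```

Lower AIC and BIC values indicate the preferred model, and both reported statistics give p-values far below 0.001.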

Results

Out of the total original tweets examined in our sample, more than 13% (n = 969) were retweeted at least 500 times, nearly 40% (n = 2833) over 100 times, and fewer than 1% (n = 6) had zero retweets (i.e., generated no observable engagement). To measure whether the spread of online information was driven by a negativity bias, we examined the effect that each type of emotionally charged message had on the retweet and favorite counts of both the Democratic and Republican candidates.

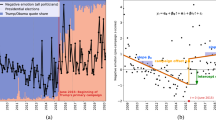

Breakdown of emotional content across retweets and favorites

As Fig. 1a presents, out of the emotionally charged messages published by Democratic candidates (n = 1952), ~62% of fear-based content was retweeted over 100 times, with the next highest rate identified as anger (58%), followed by sadness (55%), confidence (50%), and joy (33%).Footnote 8 When analyzing those messages with an especially broad reach (i.e., messages with retweets > 500), a similar pattern was found, with fear tending to be the leading emotion and having the greatest impact on capturing the public’s attention (see Fig. 2a for a similar pattern regarding favoriting levels). As Table 2 shows, fear-based messages published by Democratic candidates were retweeted an average of 950 times (s.e.m. = 311.9, 95% CI [316, 1585]), proving to be nearly three times more effective in motivating action than positive tweets signaling joy (Mean = 349, s.e.m. = 53.61, 95% CI [244, 454]). These differences are statistically significant (χ²(4) = 90.54, p < 0.001).
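As a quick check on how the mean, s.e.m., and interval relate, a normal-approximation 95% CI (mean ± 1.96 × s.e.m.) reproduces the interval reported for joy. This is a sketch only: the paper does not state how its intervals were computed, and the reported fear interval is somewhat wider than this approximation gives (roughly [339, 1561]), which would be consistent with a t-based quantile for a smaller group.

```python
def normal_ci(mean, sem, z=1.96):
    """Normal-approximation confidence interval: mean +/- z * s.e.m."""
    return mean - z * sem, mean + z * sem


# Values reported in the text for Democratic candidates.
joy_lo, joy_hi = normal_ci(349, 53.61)
fear_lo, fear_hi = normal_ci(950, 311.9)

# "Nearly three times": ratio of mean retweets, fear vs. joy.
ratio = 950 / 349
```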

Concerning the dispersal of emotional content among Republicans, although the retweeting levels remained comparatively low for joy-charged messages, a different pattern emerged in response to tweets signaling anger and fear in particular (see Fig. 1b). Of all the emotionally charged messages published by Republican candidates (n = 1765), ~52% of anger-charged tweets were retweeted over 100 times, followed by 31% of those conveying confidence, 30% for joy, and under 25% for tweets conveying fear or sadness. For those messages with an especially broad reach (i.e., messages with retweets > 500), anger remained the key driver most likely to attract subsequent responses when compared to all other types of emotional content (see Fig. 2b for a similar pattern regarding favoriting levels). Anger-charged tweets by a Republican candidate received on average 362 retweets each (s.e.m. = 106.4, 95% CI [148, 576]), proving to be nearly 1.3 times more effective at motivating responses than those conveying fear (Mean = 274, s.e.m. = 165.7, 95% CI [34, 614]). Such differences are statistically significant, as shown in Table 2 (χ²(4) = 14.56, p < 0.01).

Predicted effects on retweets and favorites

Controlling for certain variables (i.e., the number of followers and friends that a given candidate has, their incumbency status, and race competitiveness), we applied linear mixed-effects models to estimate how the emotional factors predict direct retweets and favorites, separately for each party (i.e., Democrats vs. Republicans). Utilizing the anova function from the R lmerTest package, we implemented the Satterthwaite (1941) approximation to estimate the degrees of freedom for F statistics and to show the importance of the components (Kuznetsova et al., 2017).

Although goodness-of-fit metrics like the R-squared value are well developed, it is worth noting that the application of such values to correlated data is rare (for reviews, see Pek and Flora, 2018; Rights and Sterba, 2018). To estimate the variance explained by the selected variables in our model, we applied the following: (a) a conditional R² (for methodology development, see Nakagawa et al., 2013; for implementation, see Lefcheck and Freckleton, 2016) and (b) Ω², the squared correlation coefficient between the observed and fitted values (Xu, 2003). Overall, with the conditional R² and Ω² ranging from 0.744 to 0.794, ~74 to 79% of the variation in the outcomes can be explained by the selected variables, suggesting that the models fit the data well (Pek and Flora, 2018; Rights and Sterba, 2018).
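Both fit measures can be sketched with plain Python. The conditional R² below uses the usual variance-component form (fixed-effect variance plus random-effect variance, divided by the total), and the second function computes the squared correlation between observed and fitted values as described in the text. The function names and all numbers are hypothetical, not estimates from the study.

```python
def conditional_r2(var_fixed, var_random, var_resid):
    """Share of variance explained by fixed and random effects together."""
    explained = var_fixed + var_random
    return explained / (explained + var_resid)


def squared_correlation(observed, fitted):
    """Squared Pearson correlation between observed and fitted values."""
    n = len(observed)
    mean_o = sum(observed) / n
    mean_f = sum(fitted) / n
    cov = sum((o - mean_o) * (f - mean_f) for o, f in zip(observed, fitted))
    var_o = sum((o - mean_o) ** 2 for o in observed)
    var_f = sum((f - mean_f) ** 2 for f in fitted)
    return cov * cov / (var_o * var_f)


# Hypothetical variance components and fitted values, for illustration.
r2_c = conditional_r2(var_fixed=2.0, var_random=1.0, var_resid=1.0)
r2_corr = squared_correlation([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
```

Because the conditional R² counts the random effects as explained variance, it can be high even when the fixed effects alone explain a modest share.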

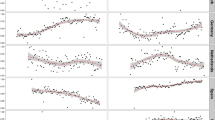

As Figs. 3 and 4 reveal, holding the other variables at fixed values, both camps were less inclined to act upon, or respond to, positive tweets expressing joy, with a negative association in response to Democratic candidates (predicted retweets: β = −0.66, s.e.m. = 0.05, 95% CI [−0.75, −0.56], F(1, 3662) = 173.1, p < 0.001; predicted favorites: β = −0.35, s.e.m. = 0.04, 95% CI [−0.44, −0.25], F(1, 3661) = 52.49, p < 0.001) and Republican candidates (predicted retweets: β = −0.40, s.e.m. = 0.04, 95% CI [−0.48, −0.29], F(1, 3364) = 67.04, p < 0.001; predicted favorites: β = −0.18, s.e.m. = 0.04, 95% CI [−0.26, −0.07], F(1, 3404) = 12.93, p < 0.001). However, expressions of neither confidence nor sadness were associated with the predicted counts, with such effects identified on both sides of the political spectrum.

Predicted effects on a retweet and b favorite counts leading up to the 2018 US mid-term elections (09 October–06 November 2018) among Democrats. Conditional R2 (0.744) Ω2 and (0.765) measure the model’s variance between the observed and the predicted response. Recoded #, @, URL and emoji to dummy variables (x = 1 for a given tweet contains the feature). Bands reflect 95% CIs with follower/friend counts, race competitiveness and incumbency as offset variables. *p < 0.05, **p < 0.01, and ***p < 0.001 based on an F-test.

Fig. 4 Predicted effects on (a) retweet and (b) favorite counts leading up to the 2018 US mid-term elections (09 October–06 November 2018) among Republicans. Conditional R2 (0.794) and Ω2 (0.792) measure the variance explained by the model, based on the observed and the predicted response. Hashtags (#), mentions (@), URLs and emoji were recoded as dummy variables (x = 1 if a given tweet contains the feature). Bands reflect 95% CIs with follower/friend counts, race competitiveness and incumbency as offset variables. *p < 0.05, **p < 0.01, and ***p < 0.001 based on an F-test.

Differences between the parties become clearer when examining responses to the remaining negative emotions. For Democrats, although messages signaling anger were not significantly associated with an increase in retweets (β = 0.27, s.e.m. = 0.23, 95% CI [−0.19, 0.74], F(1, 3656) = 1.29, p = 0.254; see Fig. 3a), candidates’ expressions of fear had the greatest impact on message diffusion and were associated with a significant increase in retweets (β = 0.53, s.e.m. = 0.22, 95% CI [0.10, 0.98], F(1, 3648) = 5.60, p < 0.05). As Fig. 3b presents, a similar pattern emerged for the predicted favorite counts: the more fear Democratic candidates expressed in a given tweet, the greater the chance that tweet had of getting more likes (β = 0.58, s.e.m. = 0.21, 95% CI [0.16, 1.01], F(1, 3649) = 7.25, p < 0.01).

In contrast to this pattern, when analyzing the retweet counts for Republican candidates, fear was found to have no significant impact on the level of retweets via online social networks (β = 0.23, s.e.m. = 0.23, 95% CI [−0.28, 0.61], F(1, 3366) = 0.90, p = 0.342; see Fig. 4a). The results do indicate, however, that diffusion among Republicans was driven largely by the expression of anger (β = 0.60, s.e.m. = 0.18, 95% CI [0.33, 1.06], F(1, 3343) = 9.90, p < 0.01). A similar pattern emerged for the level of favoriting: the more anger Republican candidates expressed in a given tweet, the greater the chance that tweet had of getting more likes (β = 0.43, s.e.m. = 0.19, 95% CI [0.16, 0.89], F(1, 3381) = 5.22, p < 0.05; see Fig. 4b).

To further explore the asymmetry between Democrats and Republicans, we examined various features that may encourage users to interact with tweets (Hasan et al., 2019; Liu et al., 2012), including the use of hashtags to connect a tweet with certain topics, handles to interact directly with others, emoji to emphasize part of the content, or URLs to provide further information in the limited space. The results show that all these features had either a non-significant or a negative impact on the predicted counts. For example, tweets containing a user-mention (e.g., β = −0.32, s.e.m. = 0.04, 95% CI [−0.39, −0.23], F(1, 3668) = 59.02, p < 0.001) or URL (e.g., β = −0.44, s.e.m. = 0.06, 95% CI [−0.56, −0.30], F(1, 3604) = 41.85, p < 0.001) were significantly less likely to be retweeted or favorited when shared by Democratic candidates, whilst including a hashtag was negatively and significantly associated with the predicted counts achieved by candidates at both ends of the political spectrum (e.g., Democrats: β = −0.23, s.e.m. = 0.04, 95% CI [−0.31, −0.13], F(1, 3673) = 24.96, p < 0.001; Republicans: β = −0.16, s.e.m. = 0.04, 95% CI [−0.18, −0.02], F(1, 3389) = 13.34, p < 0.001).Footnote 9
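The recoding of hashtags, user-mentions, URLs, and emoji into dummy variables (x = 1 when a tweet contains the feature) could be sketched as follows. This is a minimal illustration under stated assumptions rather than the study's own code: the regular expressions and the function name `feature_dummies` are hypothetical, and the emoji pattern covers only a few common Unicode ranges.

```python
import re

# Hypothetical patterns; a production pipeline would need stricter rules.
HASHTAG = re.compile(r"#\w+")
MENTION = re.compile(r"@\w+")
URL = re.compile(r"https?://\S+")
# Rough emoji coverage: pictographs, regional-indicator flags,
# miscellaneous symbols, and dingbats. Not an exhaustive list.
EMOJI = re.compile("[\U0001F300-\U0001FAFF\U0001F1E6-\U0001F1FF\u2600-\u27BF]")


def feature_dummies(text):
    """Return 0/1 indicators for each feature found in a tweet's text."""
    return {
        "hashtag": int(bool(HASHTAG.search(text))),
        "mention": int(bool(MENTION.search(text))),
        "url": int(bool(URL.search(text))),
        "emoji": int(bool(EMOJI.search(text))),
    }
```

Each indicator can then enter a regression model as a binary predictor. Note that this simple version would also count a "#" inside a URL fragment as a hashtag, which a fuller implementation would want to exclude.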

Discussion

Though numerous studies investigating the transmission of political information identify candidate preferences on social media (e.g., Auter and Fine, 2016; Nai and Maier, 2020; Straus et al., 2016), little is known about how distinct emotions might specifically influence the spread of politicians’ messages on such sites. By looking beyond an approach based solely on valence, the current study addresses this gap through an extensive analysis of how the multidimensional discrete emotions that candidates display in a given tweet relate to its likelihood of capturing the public’s attention online. Leveraging more than 7000 original messages published by Senate candidates leading up to the 2018 US mid-term elections, results suggest that positive joy-signaling tweets were less likely to be retweeted or favorited on both sides of the political spectrum, whereas the presence of anger- and fear-based tweets was significantly associated with increased diffusion among Republicans and Democrats, respectively. Neither expressions of confidence nor sadness were associated with retweet or favorite counts.

These findings advance recent research (Brady et al., 2017; 2020; Brady and Crockett, 2019) showing an asymmetric pattern in which negative posts tend to be more effective at mobilizing or alienating voters than positive posts (e.g., Auter and Fine, 2016; Druckman et al., 2010; Gervais et al., 2020). Such work suggests that when negative messages spread online, they may become more easily accessible and require less effort to respond to (compared to traditional media; Druckman et al., 2010). As evidenced by Brady et al. (2020), such diffusion is often reinforced within liberal and conservative ideological bubbles, minimizing the likelihood of backlash from the opposing side (Brady and Crockett, 2019), and potentially encouraging both the candidates and their supporters to engage in harsh rhetoric with little concern for facing sanctions (see also Nai and Maier, 2020). Recent work (e.g., Gervais et al., 2020; Gross and Johnson, 2016) highlights that the negative tendencies in social media campaigning might be reinforced in the Trump era and consistently yield mixed consequences. While some studies present the essential and occasionally virtuous characteristics of negative communication (e.g., Mattes and Redlawsk, 2015), it is important to note an ever-expanding polarization and political extremism in this digital age that may cause more harm than good to our public discourse (e.g., Bekafigo et al., 2019; Druckman et al., 2007; 2010; Wilson et al., 2020).

Though the positive-negative asymmetry in the spread of political messaging is clear, our current analysis of specific emotions reveals that not all negative emotions work in the same way and that patterns of diffusion may vary across the political divide. Specifically, prior research reveals that anger is associated with approach and reward-related motivations; for example, the experience, expectation, and perception of anger often coincide with activities and behaviors that promote action instead of flight (Carver and Harmon-Jones, 2009; Frijda, 1986). Contrary to Janoff-Bulman’s (2009) view of conservatives as avoidance-motivated or prevention-focused, here we found messages with anger-charged content to be strongly associated with a considerable increase in message diffusion for Republican candidates, who are more likely to represent the conservative end of the political spectrum (compared to their Democratic counterparts). In contrast to anger, fear is often associated with avoidance motivation,Footnote 10 with the experience, expectation, and perception of fear typically, but not exclusively, coinciding with withdrawal activities and behaviors (Lerner et al., 2015; Frijda, 1986). Contrary to the hypothesis advanced by Jost et al. (2003) that relatively right-wing individuals report greater responsiveness to fear, threat, or uncertainty (see also Jost, 2017), the current work suggests a strikingly opposite pattern in which fear-based messaging among Democrats is more likely to gain attention and be diffused online (see Bakker et al., 2020; Crawford, 2017).

Given the diverse nature of emotional reactions, we suggest that the links between political orientation and response tendencies to specific emotions may be more nuanced and should be analyzed depending on the context (Van Bavel et al., 2016; see also Brady et al., 2020). For example, considering the salience of group identity in the experience and expression of emotion (Mackie et al., 2000; Turner et al., 2007), the context of “which political party is currently in power” may play an important role in this case (Auter and Fine, 2016; Druckman et al., 2007; 2010). Prior research highlights how feelings of uncertainty, particularly about things relevant to or reflecting one’s political identity, may motivate behaviors aimed at reducing uncertainty and enhancing ingroup defense (Hogg, 2007).Footnote 11 As the party out of power in 2018 (Cohn and Kesterton, 2018), Democrats may have had a strong sense of existential angst, a sense of shared fate, a common enemy, and the need to restore a positive identity relative to their rivals (Hogg, 2007; Hogg and Adelman, 2013). A heightened spread of fear-based content may, in this sense, reflect a psychological defense by the group, serving the specific purpose of removing or buffering potential threats (Belavadi et al., 2020; Hogg and Adelman, 2013). Such effects may not have applied to Republicans, who held presidential power at the time and were thus more susceptible to other types of appeal (e.g., approach- or reward-related appeals) instead of threats (Hohman and Hogg, 2015). However, it is essential to note that these explanations are speculative and future research is needed to examine whether groups in different power positions (e.g., being an underdog or in power) may respond differently to these specific emotional appeals (e.g., fear- or anger-based appeals).

Limitations and future research

One limitation of these findings is that we specifically focused on the 2018 US mid-term elections, the first major election during the Donald Trump presidency. Future research should replicate and extend this work in different political contexts. Another limitation is that, because the proportion of messages conveying anger or fear is relatively small in our sample, such messages are perhaps more likely to activate political engagement than other types of emotional content. Although we utilized mixed-effects models to examine messages at both the inter- and intra-individual levels (Bates, 2010; Doran et al., 2007), thereby accommodating the homogeneity of variance more substantially in the prediction of the emotional effects on the retweet and favorite counts, future work would benefit from a balanced dataset with more equally charged emotional content.

Additionally, given that Facebook continues to dominate the online landscape, with more than 60% of adult Americans reporting being users (compared to 25% for Twitter; Pew Research Center, 2021), it will be especially instructive to examine emotive posts on other venues to see whether the engagement patterns identified in our sample parallel those on Facebook. Finally, future research would benefit from looking beyond the role of specific emotions in social media engagement across the political spectrum to examine how other content from politicians, such as posts that share news media content or refer to specific high-profile individuals (e.g., Donald Trump, Barack Obama), influences social media engagement. The current study provides a starting point from which to disentangle the role of specific emotions in online engagement across the political spectrum.

Data availability

Owing to Twitter’s policy, we cannot publicly share the original dataset. A sample dataset and the code used to collect and analyze the data are available at Data Syntax.

Notes

The original tweet was published by @BarackObama, 30 April 2007. Available at https://twitter.com/BarackObama/status/44240662.

But see Hixson (2017) on the uncertainty of the exact nature of social media politicking.

We removed messages initialized with “RT” to ensure that the final dataset consisted only of original messages composed by the candidates’ accounts.

The original tweet was published by @SenGillibrand, 20 November 2018. Available at https://twitter.com/sengillibrand/status/1064624356557389824?lang=en.

See a demo presentation of IBM’s Tone Analyzer at https://tone-analyzer-demo.ng.bluemix.net/.

The previous API (standard v1.1) only allowed the collection of a mixed count of retweets, including both direct retweets and retweets with commentary. Since Twitter significantly changed the retweet function in 2020, the updated API (v2.0) is now able to extract the retweets (without commentary) directly.

The race competitiveness was measured based on the predicted ratings by the Cook Political Report (2018), with “0” indicating that the candidate was running a race deemed “Safe”, “1” indicating the race was deemed “Likely” for either the Democratic or Republican candidate, “2” indicating the race was “Leaning” toward either the Democratic or Republican candidate, and “3” indicating that the race was a “Tossup” with neither candidate having an advantage. For details, see Supplementary Table S1.

A number of messages express more than one type of emotion (n = 280) and, thus, are overrepresented in the total percentage.

Including an extended dataset (i.e., 09 October–04 December 2018) did not alter the results. For a separate analysis of emotive effects across the post-campaign period, see Supplementary Post-election Facts.

Although fear is primarily associated with avoidance motivations, it is worth noting that some studies identify certain situations where the experience, expectation, and perception of fear coincides with activities and behaviors related to approach motivation (e.g., Simunovic et al., 2013).

For an overview of uncertainty-identity theory, see also “Self‐uncertainty, social identity, and the solace of extremism” in Extremism and the Psychology of Uncertainty (Hogg, 2011).
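Two of the preprocessing steps described in the notes above, dropping messages that begin with “RT” and coding race competitiveness on a 0–3 scale, could be sketched as follows. This is an illustrative sketch only: the dictionary keys `text` and `rating` and the function names are hypothetical, and the rating labels are those used in the note rather than a confirmed data format.

```python
# Rating labels as worded in the note on race competitiveness.
COMPETITIVENESS = {"Safe": 0, "Likely": 1, "Leaning": 2, "Tossup": 3}


def is_original(text):
    """True unless the message begins with 'RT' (treated as a retweet)."""
    return not text.lstrip().startswith("RT")


def preprocess(tweets):
    """Keep original messages and attach a numeric competitiveness code.

    `tweets` is assumed to be a list of dicts with hypothetical keys
    'text' (message body) and 'rating' (race rating label).
    """
    kept = []
    for tweet in tweets:
        if not is_original(tweet["text"]):
            continue
        code = COMPETITIVENESS[tweet["rating"]]
        kept.append({**tweet, "competitiveness": code})
    return kept
```

A plain prefix check is deliberately simple; it would also drop an original message that happens to start with the letters “RT”, so a stricter rule may be preferable in practice.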

References

Amodio DM, Jost JT, Master SL et al. (2007) Neurocognitive correlates of liberalism and conservatism. Nat Neurosci 10(10):1246–1247. https://doi.org/10.1038/nn1979

Auter ZJ, Fine JA (2016) Negative campaigning in the social media age: Attack advertising on facebook. Polit Behav 38(4):999–1020. https://doi.org/10.1007/s11109-016-9346-8

Bakker BN, Schumacher G, Gothreau C et al. (2020) Conservatives and liberals have similar physiological responses to threats. Nat Hum Behav 4(6):613–621. https://doi.org/10.1038/s41562-020-0823-z

Barberá P, Jost JT, Nagler J et al. (2015) Tweeting from left to right: Is online political communication more than an echo chamber? Psychol Sci 26(10):1531–1542. https://doi.org/10.1177/0956797615594620

Bates DM (2010) Lme4: Mixed-effects modeling with R. Springer

Becatti C, Caldarelli G, Lambiotte R et al. (2019) Extracting significant signal of news consumption from social networks: the case of Twitter in Italian political elections. Pal Commun 5(1):1–16

Belavadi S, Rinella MJ, Hogg MA (2020) When social identity-defining groups become violent: collective responses to identity uncertainty, status erosion, and resource threat. In: Belavadi S, Rhineland MJ, Hogg MA (eds) The handbook of collective violence. Routledge, pp. 17–30

Bekafigo MA, Stepanova EV, Eiler BA et al. (2019) The effect of group polarization on opposition to Donald Trump. Polit Psychol 40(5):1163–1178. https://doi.org/10.1111/pops.12584

Bleich E, van der Veen AM (2021) Media portrayals of muslims: a comparative sentiment analysis of american newspapers, 1996-2015. Polit Group Identit 9(1):20–39. https://doi.org/10.1080/21565503.2018.1531770

Bonanno GA, Jost JT (2006) Conservative shift among high-exposure survivors of the september 11th terrorist attacks. Basic Appl Soc Psychol 28(4):311–323. https://doi.org/10.1207/s15324834basp2804_4

Brady WJ, Wills JA, Jost JT et al. (2017) Emotion shapes the diffusion of moralized content in social networks. Proc. Natl Acad. Sci. USA 114(28):7313–7318. https://doi.org/10.1073/pnas.1618923114

Brady WJ, Crockett MJ (2019) How effective is online outrage? Trend Cogn Sci 23(2):79–80. https://doi.org/10.1016/j.tics.2018.11.004

Brady WJ, Wills JA, Burkart D et al. (2019) An ideological asymmetry in the diffusion of moralized content on social media among political leaders. J Exp Psychol 148(10):1802–1813. https://doi.org/10.1037/xge0000532

Brady WJ, Crockett MJ, Van Bavel JJ (2020) The MAD model of moral contagion: the role of motivation, attention, and design in the spread of moralized content online. Perspect Psychol Sci 15(4):978–1010. https://doi.org/10.1177/1745691620917336

Brownlee J (2020) One-vs-rest and one-vs-one for multi-class classification. https://machinelearningmastery.com/one-vs-rest-and-one-vs-one-for-multi-class-classification/. Accessed 31 Aug 2021

Casteltrione I, Pieczka M (2018) Mediating the contributions of Facebook to political participation in Italy and the UK: The role of media and political landscapes. Pal Commun 4(1):1–11

Carver CS, Harmon-Jones E (2009) Anger is an approach-related affect: Evidence and implications. Psychol Bull 135(2):183–204. https://doi.org/10.1037/a0013965

Choma BL, Hodson G (2017) Right-wing ideology: Positive (and negative) relations to threat. Soc Cogn 35(4):415–432. https://doi.org/10.1521/soco.2017.35.4.415

Congressional Research Service (2018) Social media adoption by members of congress: Trends and congressional considerations. https://crsreports.congress.gov/product/pdf/R/R45337. Accessed 08 Aug 2021

Cohn N, Kesterton D (2018) A Democratic blue wave? Don’t forget the republicans’ big hill. The New York Times. https://www.nytimes.com/interactive/2018/07/19/upshot/democrats-midterm-elections.html. Accessed 19 Jul 2020

Cook Political Report (2018) Senate race ratings for October 26, 2018. https://cookpolitical.com/ratings/senate-race-ratings/187540. Accessed 14 Oct 2021

Crawford JT, Mallinas SR, Furman BJ (2015) The balanced ideological antipathy model: explaining the effects of ideological attitudes on inter-group antipathy across the political spectrum. Person Soc Psychol Bull 41(12):1607–1622. https://doi.org/10.1177/0146167215603713

Crawford JT (2017) Are conservatives more sensitive to threat than liberals? It depends on how we define threat and conservatism. Soc Cogn 35(4):354–373. https://doi.org/10.1521/soco.2017.35.4.354

Dang-Xuan L, Stieglitz S, Wladarsch J et al. (2013) An investigation of influentials and the role of sentiment in political communication on twitter during election periods. Inform Commun Soc 16(5):795–825. https://doi.org/10.1080/1369118x.2013.783608

Doran H, Bates D, Bliese P et al. (2007) Estimating the multilevel rasch model: with the lme4 package. J Stat Softw 20:2

Druckman JN, Kifer MJ, Parkin M (2007) The technological development of congressional candidate Web sites: how and why candidates use web innovations. Soc Sci Comput Rev 25(4):425–442. https://doi.org/10.1177/0894439307305623

Druckman JN, Kifer MJ, Parkin M (2010) Timeless strategy meets new medium: going negative on congressional campaign web sites, 2002-2006. Polit Commun 27(1):88–103. https://doi.org/10.1080/10584600903502607

Duckitt J (2001) A dual-process cognitive-motivational theory of ideology and prejudice. Adv Exp Soc Psychol 33:41–113

Frijda NH (1986) The emotions. Cambridge University Press, Cambridge

Galdieri CJ, Lucas JC, Sisco TS (eds.) (2017) The role of Twitter in the 2016 US election. Palgrave Macmillan, London

Gervais BT, Evans HK, Russell A (2020) Tweeting for hearts and minds? Measuring candidates’ use of anxiety in tweets during the 2018 midterm elections. Polit Sci Polit 1–5. https://doi.org/10.1017/S1049096520000852

Gillibrand K (2018) The NRA has had lawmakers in its pocket for too long – and our country has suffered the consequences. If we fight together, if we channel our outrage, our heartbreak and our frustration, we can take on the NRA and put a stop to this senseless violence., Twitter, https://twitter.com/sengillibrand/status/1064624356557389824?lang=en

Greenberg J, Jonas E (2003) Psychological motives and political orientation-the left, the right, and the rigid: comment on Jost et al. (2003). Psychol Bull 129(3):376–382. https://doi.org/10.1037/0033-2909.129.3.376

Gross JH, Johnson KT (2016) Twitter taunts and tirades: Negative campaigning in the age of trump. Polit Sci Polit 49(4):748–754. https://doi.org/10.1017/S1049096516001700

Hasan A, Moin S, Karim A et al. (2018) Machine learning-based sentiment analysis for twitter accounts. Math Comput Appl 23(1):11. https://doi.org/10.3390/mca23010011

Hasan M, Rundensteiner E, Agu E (2019) Automatic emotion detection in text streams by analyzing twitter data. Int J Data Sci Anal 7(1):35–51. https://doi.org/10.1007/s41060-018-0096-z

Hibbing JR, Smith KB, Alford JR (2014) Differences in negativity bias underlie variations in political ideology. Behav Brain Sci 37(3):297–307. https://doi.org/10.1017/s0140525x13001192

Hixson K (2017) Candidate image: When tweets trump tradition. In: Galdieri CJ, Lucas JC, Sisco TS (eds.) The role of Twitter in the 2016 US election. Palgrave Macmillan, London, pp. 45–62

Hogg MA (2007) Uncertainty–identity theory. Adv Exp Soc Psychol 39:69–126

Hogg MA (2011) Self‐uncertainty, social identity, and the solace of extremism. In: Hogg MA, Blaylock DL (eds.) Extremism and the psychology of uncertainty. Wiley‐Blackwell, pp. 19–35

Hogg MA, Adelman J (2013) Uncertainty-identity theory: Extreme groups, radical behavior, and authoritarian leadership. J Soc Issue 69(3):436–454. https://doi.org/10.1111/josi.12023

Hohman ZP, Hogg MA (2015) Fearing the uncertain: Self-uncertainty plays a role in mortality salience. J Exp Soc Psychol 57:31–42. https://doi.org/10.1016/j.jesp.2014.11.007

Hutto C, Gilbert E (2014) Vader: A parsimonious rule-based model for sentiment analysis of social media text. Paper presented at the International AAAI Conference on Web and Social Media, May 2014

Hughes A, Van Kessel P (2018) Anger topped “love” when Facebook users reacted to lawmakers’ posts after 2016 election. Pew Research Center. https://www.pewresearch.org/fact-tank/2018/07/18/anger-topped-love-facebook-after-2016-election/. Accessed 12 Dec 2020

IBM Cloud (2015) IBM Watson Tone Analyzer—new service now available. https://www.ibm.com/blogs/cloud-archive/2015/07/ibm-watson-tone-analyzer/. Accessed Feb May 2020

IBM Cloud (2016) Watson Tone Analyzer API goes GA with improved models. https://www.ibm.com/blogs/cloud-archive/2016/05/watson-tone-analyzer-api-with-improved-models/?mhsrc=ibmsearch_a&mhq=tone%20analyzer. Accessed 11 Aug 2021

IBM Cloud (2017) Tone Analyzer for customer engagement: 7 new tones to help you understand how your customers are feeling. https://www.ibm.com/blogs/cloud-archive/2017/04/tone-analyzer-customer-engagement-7-new-tones-help-understand-customers-feeling/. Accessed 25 Aug 2021

IBM Cloud (2020) Tone Analyzer: The science behind the service. https://cloud.ibm.com/docs/tone-analyzer?topic=tone-analyzer-ssbts. Accessed 01 Nov 2020

Inbar Y, Pizarro DA, Bloom P (2009) Conservatives are more easily disgusted than liberals. Cogn Emot 23(4):714–725. https://doi.org/10.1080/02699930802110007

Janoff-Bulman R (2009) To provide or protect: Motivational bases of political liberalism and conservatism. Psychol Inquiry 20(2-3):120–128. https://doi.org/10.1080/10478400903028581

Jost JT (2017) Ideological asymmetries and the essence of political psychology. Polit Psychol 38(2):167–208. https://doi.org/10.1111/pops.12407

Jost JT, Glaser J, Kruglanski AW et al. (2003) Political conservatism as motivated social cognition. Psychol Bull 129(3):339–375. https://doi.org/10.1037/0033-2909.129.3.339

Kuznetsova A, Brockhoff PB, Christensen RHB (2017) lmerTest package: Tests in linear mixed effects models. J Stat Softw 82(13):1–26. https://doi.org/10.18637/jss.v082.i13

Lefcheck JS, Freckleton R (2016) PiecewiseSEM: Piecewise structural equation modelling in R for ecology, evolution, and systematics. Method Ecol Evol 7(5):573–579. https://doi.org/10.1111/2041-210X.12512

Lerner JS, Li Y, Valdesolo P et al. (2015) Emotion and decision making. Ann Rev Psychol 66(1):799–823. https://doi.org/10.1146/annurev-psych-010213-115043

Lerner JS, Tiedens LZ (2006) Portrait of the angry decision maker: How appraisal tendencies shape anger’s influence on cognition. J Behav Decis Making 19(2):115–137. https://doi.org/10.1002/bdm.515

Levendusky M (2009) The partisan sort: How liberals became Democrats and conservatives became Republicans. University of Chicago Press, London

Levin IP, Schneider SL, Gaeth GJ (1998) All frames are not created equal: a typology and critical analysis of framing effects. Organ Behav Hum Decis Process 76:149–188

Liang D, Yi B (2021) Two-stage three-way enhanced technique for ensemble learning in inclusive policy text classification. Inform Sci 547:271–288. https://doi.org/10.1016/j.ins.2020.08.051

Liu K, Li W, Guo M (2012) Emoticon smoothed language models for twitter sentiment analysis. Paper presented at the 26th AAAI Conference on Artificial Intelligence, 1678–1684, 22–26 July 2012

Lyons BA, Veenstra AS (2016) How (not) to talk on twitter: Effects of politicians' tweets on perceptions of the twitter environment. Cyberpsychol Behav Soc Network 19(1):8–15. https://doi.org/10.1089/cyber.2015.0319

Mackie DM, Devos T, Smith ER (2000) Intergroup emotions: Explaining offensive action tendencies in an intergroup context. J Person Soc Psychol 79:602–616

Mattes K, Redlawsk DP (2015) The positive case for negative campaigning. University of Chicago Press

Miller JM, Krosnick JA, Holbrook A et al. (2016) The impact of policy change threat on financial contributions to interest groups. In: Political psychology: new explorations. pp. 172–202

Nakagawa S, Schielzeth H, O'Hara RB (2013) A general and simple method for obtaining R2 from generalized linear mixed-effects models. Method Ecol Evol 4(2):133–142. https://doi.org/10.1111/j.2041-210x.2012.00261.x

Nai A, Maier J (2020) Dark necessities? Candidates’ aversive personality traits and negative campaigning in the 2018 American midterms. Elect Stud 68. https://doi.org/10.1016/j.electstud.2020.102233

Naveed N, Gottron T, Kunegis J et al. (2011) Bad news travel fast: A content-based analysis of interestingness on twitter. Paper presented at the 3rd international web science conference, Koblenz, Germany, 15–17 June 2011

Newkirk R (2016) The American idea in 140 characters. The Atlantic. https://www.theatlantic.com/politics/archive/2016/03/twitter-politics-last-decade/475131/. Accessed 24 Mar 2020

Obama B (2007) Thinking we’re only one signature away from ending the war in Iraq. Learn more at https://www.barackobama.com, Twitter, https://twitter.com/BarackObama/status/44240662

Onraet E, Dhont K, Van Hiel A (2014) The relationships between internal and external threats and right-wing attitudes: a three-wave longitudinal study. Person Soc Psychol Bull 40(6):712–725. https://doi.org/10.1177/0146167214524256

Oxley DR, Smith KB, Alford JR et al. (2008) Political attitudes vary with physiological traits. Science 321(5896):1667–1670

Park CS (2013) Does twitter motivate involvement in politics? tweeting, opinion leadership, and political engagement. Comput Hum Behav 29(4):1641–1648. https://doi.org/10.1016/j.chb.2013.01.044

Paulson E (1942) An approximate normalization of the analysis of variance distribution. Ann Math Stat 13(2):233–235. https://doi.org/10.1214/aoms/1177731608

Pek J, Flora DB (2018) Reporting effect sizes in original psychological research: a discussion and tutorial. Psychol Method 23(2):208–225. https://doi.org/10.1037/met0000126

Pew Research Center (2019) Sizing up Twitter users. https://www.pewinternet.org/wp-content/uploads/sites/9/2019/04/twitter_opinions_4_18_final_clean.pdf. Accessed 06 Jun 2020

Pew Research Center (2021) Social media use in 2021. https://www.pewresearch.org/internet/2021/04/07/social-media-use-in-2021/. Accessed 03 Sep 2021

Rodríguez-Hidalgo CT, Tan ES, Verlegh P (2015) The social sharing of emotion (SSE) in online social networks: A case study in live journal. Comput Hum Behav 52:364–372. https://doi.org/10.1016/j.chb.2015.05.009

Rose-Stockwell T (2018) How to design better social media. Medium. https://medium.com/s/story/how-to-fix-what-social-media-has-broken-cb0b2737128/. Accessed 14 Apr 2020

Rights JD, Sterba SK (2018) A framework of R-squared measures for single-level and multilevel regression mixture models. Psychol Method 23(3):434–457. https://doi.org/10.1037/met0000139

Rus HM, Cameron LD (2016) Health communication in social media: Message features predicting user engagement on diabetes-related facebook pages. Ann Behav Med 50(5):678–689. https://doi.org/10.1007/s12160-016-9793-9

Sainudiin R, Yogeeswaran K, Nash K et al. (2019) Characterizing the Twitter network of prominent politicians and SPLC-defined hate groups in the 2016 US presidential election. Soc Netw Anal Mining 9(1):34

Satterthwaite FE (1941) Synthesis of variance. Psychometrika 6(5):309–316

Schultz T, Fielding K, Newton F et al. (2018) The effect of images on community engagement with sustainable stormwater management: The role of integral disgust and sadness. J Environ Psychol 59:26–35. https://doi.org/10.1016/j.jenvp.2018.08.003

Shi J, Lai KK, Chen G (2020) Individual retweeting behavior on social networking sites. Springer, Singapore

Simunovic D, Mifune N, Yamagishi T (2013) Preemptive strike: An experimental study of fear-based aggression. J Exp Soc Psychol 49(6):1120–1123

Srinidhi S (2019) Understanding word n-grams and n-gram probability in natural language processing. https://towardsdatascience.com/understanding-word-n-grams-and-n-gram-probability-in-natural-language-processing-9d9eef0fa058. Accessed 28 Aug 2021

Straus JR, Williams RT, Shogan CJ, Glassman ME (2016) Congressional social media communications: evaluating Senate Twitter usage. Online Inform Rev 40(5):643–659. https://doi.org/10.1108/OIR-10-2015-0334

Thurber AJ, Nelson CJ, Dulio AD (eds.) (2000) Crowded airwaves: Campaign advertising in elections. Brookings Institution Press, Washington

Turner JC, Oakes PJ, Haslam SA et al. (2007) Self and collective: Cognition and social context. Person Soc Psychol Bull 20:454–463

Twitter (2021) Political content. https://business.twitter.com/en/help/ads-policies/ads-content-policies/political-content.html. Accessed 29 Jul 2021

Van Bavel JJ, Mende-Siedlecki P, Brady WJ et al. (2016) Contextual sensitivity in scientific reproducibility. Proc Natl Acad Sci USA 113(23):6454–6459. https://doi.org/10.1073/pnas.1521897113

Wang H, Can D, Kazemzadeh A et al. (2012) A system for real-time Twitter sentiment analysis of 2012 U.S. presidential election cycle. Paper presented at the 50th Annual Meeting of the Association for Computational Linguistics, Korea, 8–14 July 2012

Wilson AE, Parker VA, Feinberg M (2020) Polarization in the contemporary political and media landscape. Curr Opin Behav Sci 34:223–228. https://doi.org/10.1016/j.cobeha.2020.07.005

Xu R (2003) Measuring explained variation in linear mixed effects models. Stat Med 22(22):3527–3541. https://doi.org/10.1002/sim.1572

Young L, Soroka S (2012) Affective news: The automated coding of sentiment in political texts. Polit Commun 29(2):205–231. https://doi.org/10.1080/10584609.2012.671234

Author information

Contributions

WMJ, YK, SS, and NK conceived of the presented idea and designed the research; WMJ collected and visualized the data; WMJ and SS analyzed the data; WMJ, YK, and NK interpreted the results and wrote the original paper; SS reviewed and edited the paper; YK and NK provided supervision; all authors approved the final version of the manuscript, with agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

Ethics declarations

Ethical approval

This article does not contain any studies with human participants performed by any of the authors.

Informed consent

This article does not contain any studies with human participants performed by any of the authors.

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wang, MJ., Yogeeswaran, K., Sivaram, S. et al. Examining spread of emotional political content among Democratic and Republican candidates during the 2018 US mid-term elections. Humanit Soc Sci Commun 8, 300 (2021). https://doi.org/10.1057/s41599-021-00987-4

DOI: https://doi.org/10.1057/s41599-021-00987-4
