Abstract

Detection of the maxillary sinus wall is important in dental fields such as implant surgery, tooth extraction, and odontogenic disease diagnosis. Accurate segmentation of the maxillary sinus is required as a cornerstone for diagnosis and treatment planning. This study proposes a deep learning-based method for fully automatic segmentation of the maxillary sinus, in both clear and hazy states, on cone-beam computed tomographic (CBCT) images. A segmentation model was developed using U-Net, a convolutional neural network, with a total of 19,350 CBCT images from 90 maxillary sinuses (34 clear sinuses, 56 hazy sinuses). Post-processing to eliminate prediction errors in the U-Net segmentation results increased the accuracy. The average prediction results of U-Net were a Dice similarity coefficient (DSC) of 0.9090 ± 0.1921 and a Hausdorff distance (HD) of 2.7013 ± 4.6154. After post-processing, the average results improved to a DSC of 0.9099 ± 0.1914 and an HD of 2.1470 ± 2.2790. The proposed deep learning model with post-processing showed good performance for clear and hazy maxillary sinus segmentation. This model has the potential to help dental clinicians with maxillary sinus segmentation, yielding equivalent accuracy in a variety of cases.

Introduction

The maxillary sinus is the largest air-filled space around the nasal cavity and occupies most of the maxilla. It acts as a ventilation passage for incoming air through the nose1. The maxillary sinus begins to form as an invagination from the lateral wall of the middle meatus during fetal development and grows laterally and downward to the alveolar process at the age of 15–18 years. The inferior wall comprises the alveolar process, and the roots of premolars and molars are adjacent to or protrude into the maxillary sinus. Therefore, the maxillary sinus is highly relevant in the field of dentistry, and its evaluation and analysis are very important. Periapical lesions can cause sinusitis and an oroantral fistula may develop during tooth extraction procedures1,2. A sinus lift procedure may be required due to the lack of alveolar bone at the time of implant placement3. Conditions such as mucosal thickening of the maxillary sinus significantly affect the success rate and complications of implant surgery4. Patients with mucosal thickening greater than 50–75% of sinus volume should be referred to the otolaryngology department prior to surgery5,6,7. It is difficult to diagnose diseases affecting the maxilla early because the maxilla has multiple overlapping structures, so diseases are often discovered only after they are exacerbated8. Odontogenic cysts or tumors cause changes such as elevation of the sinus floor, and malignant tumors destroy the sinus floor. A close assessment of the maxillary sinus is helpful for making an early diagnosis.

Accurate segmentation (an anatomical annotation that delineates the outlines of important structures) is required as a cornerstone for automated diagnosis and treatment planning9 and constitutes the starting point for developing a model that segments only the area of mucosal thickening. When the maxillary sinus is segmented, its volume can be measured, thereby providing quantitative information on changes in maxillary sinus volume due to cysts and tumors. By providing the clinician with the volume ratio of the mucosal thickening area, accurate segmentation can offer guidance for sinus lift procedures during implant surgery.

Panoramic radiography is the most common imaging modality in dentistry10, but it has limitations in the accurate diagnoses of the maxillary sinus due to the presence of overlapping anatomical structures, such as the alveolar bone and zygomatic bone, and the geometric distortion of images. Cone-beam computed tomography (CBCT) has recently been used for the three-dimensional (3D) evaluation and diagnosis of oral and maxillofacial disease due to its advantages, which include a lower radiation dose, lower cost, and more convenient access compared with computed tomography (CT)11. CBCT provides 3D information through a multi-directional reconstruction process.

In clinical practice, manual segmentation by experts and semi-automatic segmentation are performed12,13,14. For an accurate analysis, multi-directional images must be considered, but doing so is laborious and time-consuming. Moreover, the results of the analysis may be highly dependent on experts’ knowledge and experience. For these reasons, studies based on deep learning15 have been conducted with the goal of achieving equivalent accuracy in various cases, and several studies have attempted automatic segmentation of the maxillary sinus16,17,18. However, automatic segmentation of the maxillary sinus is challenging because it is connected to adjacent anatomical structures such as the frontal, ethmoid, and sphenoid sinuses, as well as nasal structures. Furthermore, automatic segmentation is even more difficult to perform on CBCT images because the quality of CBCT images is inferior to that of CT images due to image noise and poor soft tissue contrast19,20.

In recent studies, deep learning algorithms have shown good performance in automated detection, segmentation, and diagnosis based on medical images21,22,23. Various networks have been used for segmentation, and many studies have used the U-Net algorithm24,25,26. Sinus segmentation has mostly utilized CT images16,17, whereas very few studies have analyzed CBCT images using deep learning18. We hypothesized that the maxillary sinus could be automatically and accurately segmented on CBCT images using a U-Net model with a post-processing algorithm to achieve similar accuracy to segmentation on CT images.

This study proposes a deep learning-based model for fully automated segmentation of the maxillary sinus in various states (clear and various levels of haziness) using CBCT images and adds a post-processing step to the model for more accurate segmentation.

Methods

Datasets and image preparation

This study was approved by the Institutional Review Board (IRB) of Yonsei University Dental Hospital (No. 2-2021-0059) and was conducted in accordance with relevant guidelines and ethical regulations. Because this was a retrospective study, the requirement for informed patient consent was waived by the IRB of Yonsei University Dental Hospital. The data were anonymized to avoid identification of the patients.

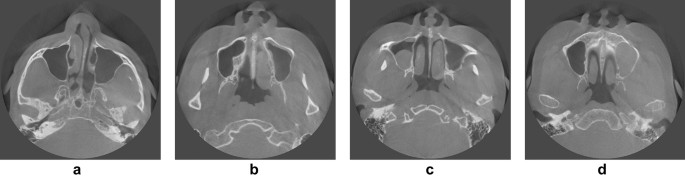

In total, 19,350 CBCT images were acquired from 90 maxillary sinuses of 45 patients (26 females and 19 males) who visited Yonsei University Dental Hospital from June 2020 to March 2021. There were 430 images per patient, and the number of images including the sinus varied from 156 to 215. All CBCT data were acquired with a 16 × 10 cm field of view using a RAYSCAN Alpha Plus device (Ray Co. Ltd, Hwaseong-si, Korea). Cases with abnormalities or a history of surgery in the maxillary sinus were excluded, as were poor-quality images with artifacts. The 90 maxillary sinuses were clear or had various states of haziness, including mucosal thickening, mucous retention cysts, and fluid (Fig. 1). The data were split into training, validation, and test sets in a 6:2:2 ratio27,28. The characteristics of the subjects are summarized in Table 1.
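The arithmetic of the 6:2:2 split can be illustrated with a minimal sketch. It assumes the split is made at the patient level so that all 430 images of one patient fall into the same subset; the text does not state the splitting unit, and the patient IDs, the seed, and the function name `split_patients` are hypothetical.

```python
import random


def split_patients(patient_ids, ratios=(6, 2, 2), seed=0):
    """Shuffle patient IDs and split them into train/validation/test subsets.

    Assumption: splitting is done per patient, not per image.
    """
    ids = list(patient_ids)
    random.Random(seed).shuffle(ids)      # reproducible shuffle of a copy
    total = sum(ratios)
    n_train = len(ids) * ratios[0] // total
    n_val = len(ids) * ratios[1] // total
    train = ids[:n_train]
    val = ids[n_train:n_train + n_val]
    test = ids[n_train + n_val:]          # remainder goes to the test set
    return train, val, test


patients = range(1, 46)                   # 45 hypothetical patient IDs
train, val, test = split_patients(patients)
images_per_patient = 430
print(len(train), len(val), len(test))    # 27 9 9
print([len(s) * images_per_patient for s in (train, val, test)])
# [11610, 3870, 3870], which sums to 19,350 images
```

Splitting by patient rather than by image keeps neighbouring slices of the same sinus out of both the training and test sets, which is the usual reason for this design choice.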

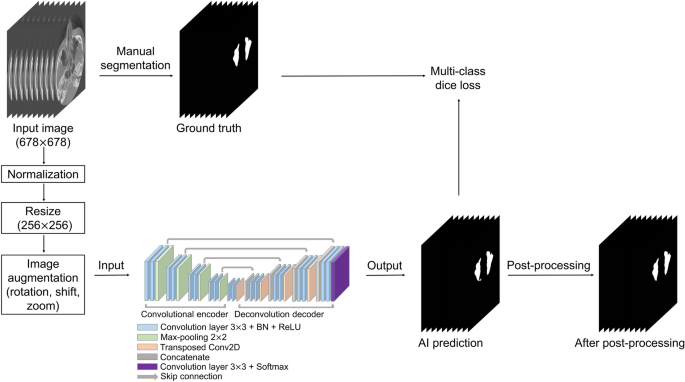

CBCT data were used in the Digital Imaging and Communications in Medicine (DICOM) format with a matrix size of 678 (width) × 678 (height) pixels. To generate a label mask for the ground truth, an oral radiologist with over 20 years of experience manually segmented the maxillary sinus in each axial image using 3D Slicer (free open-source software for biomedical image analysis)29. The label images were exported in the DICOM format and used as the ground truth for network training. Input images of 678 × 678 pixels were normalized using two-dimensional min/max normalization and resized to 256 × 256 pixels. Data augmentation was performed with rotation (−5° to 5°), translation shift (0–30%), and zoom (0–30%). Figure 2 shows the overall process.
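As a minimal sketch of the preprocessing described above, the following code applies min/max normalization to a single 2D slice and draws one set of augmentation parameters from the stated ranges. It assumes that normalization is computed per slice and that the parameters are sampled uniformly; neither detail is specified in the text, and the function names are hypothetical. Resizing to 256 × 256 pixels is omitted.

```python
import random


def min_max_normalize(image):
    """Scale a 2D image (list of rows) linearly to the range [0, 1]."""
    flat = [v for row in image for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:                          # constant image: avoid division by zero
        return [[0.0 for _ in row] for row in image]
    scale = hi - lo
    return [[(v - lo) / scale for v in row] for row in image]


def sample_augmentation(rng):
    """Draw one set of augmentation parameters from the ranges in the text.

    Assumption: uniform sampling within each range.
    """
    return {
        "rotation_deg": rng.uniform(-5.0, 5.0),
        "shift_fraction": rng.uniform(0.0, 0.30),
        "zoom_fraction": rng.uniform(0.0, 0.30),
    }


slice_values = [[-1000, 0], [1000, 3000]]     # toy 2 x 2 intensity values
print(min_max_normalize(slice_values))        # [[0.0, 0.25], [0.5, 1.0]]
print(sample_augmentation(random.Random(0)))
```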

Network architecture

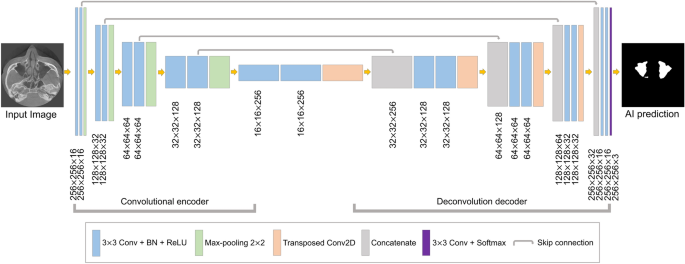

A segmentation model based on U-Net was designed for maxillary sinus segmentation. The U-Net model has demonstrated powerful performance in the segmentation of medical images24,25,26. The architecture is shown in Fig. 3. It consisted of a fully convolutional encoder and decoder, including 18 convolutional blocks, 4 max-pooling layers, 4 transposed convolution layers, and an output layer. Each convolutional block consisted of a 3 × 3 convolution with stride 1, batch normalization, and the ReLU activation function. At the end of the network, the softmax activation function in the output layer was used for multi-class segmentation of the background, right maxillary sinus, and left maxillary sinus. In the encoding blocks, the U-Net extracted the maxillary sinus features and passed them on to the next block. In the decoding blocks, the spatial information features were extracted, and the size of the feature maps was then recovered to match the input. The deep learning networks were implemented in Python 3 with the Keras library and trained on an NVIDIA Titan RTX (24 GB).
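The layer counts above are consistent with a standard U-Net layout, which the following sketch traces without building the network. It assumes two convolutional blocks per stage, 2 × 2 max pooling that halves the feature-map size, and transposed convolutions that double it; these details and the function name `unet_layout` are assumptions, and the number of filters per stage is not given in the text, so it is left out.

```python
def unet_layout(input_size=256, depth=4, convs_per_stage=2):
    """Trace the spatial size of the feature maps through a U-Net-like layout.

    Assumptions: each max-pooling layer halves the size, each transposed
    convolution doubles it, and every stage holds `convs_per_stage` blocks.
    """
    stages = []
    size = input_size
    for level in range(depth):                # encoder path
        stages.append(("encoder", level, size))
        size //= 2                            # 2 x 2 max pooling
    stages.append(("bottleneck", depth, size))
    for level in reversed(range(depth)):      # decoder path
        size *= 2                             # transposed convolution
        stages.append(("decoder", level, size))
    return stages, len(stages) * convs_per_stage


stages, n_conv_blocks = unet_layout()
for name, level, size in stages:
    print(f"{name:10s} level {level}: {size} x {size}")
print("convolutional blocks:", n_conv_blocks)   # 18
```

Under these assumptions, 4 encoder stages, 1 bottleneck, and 4 decoder stages give 9 stages and 18 convolutional blocks, and the 256 × 256 input is reduced to 16 × 16 at the bottleneck before being restored to 256 × 256.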

Loss function

For training, the Adam optimizer30 was applied with an initial learning rate of \(10^{-3}\). The validation loss was monitored, and training was stopped early if it did not decrease for 20 epochs. The loss function of a deep learning network quantifies the difference between the network output and the ground truth, and the network parameters are iteratively adjusted in the training phase to minimize it. To train the proposed model, we employed a multi-class loss function (DL) based on the Dice coefficient28. The DL is defined as:

where \({p}_{i}\) and \({g}_{i}\) represent the pixel values of prediction and ground truth, respectively, N is the number of pixels, and K is the number of classes (background, right maxillary sinus, and left maxillary sinus).
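One common form of a multi-class Dice loss that is consistent with these symbols is sketched below. It averages a soft Dice score over the K classes and subtracts it from 1. The averaging over classes, the unsquared denominator, and the smoothing constant `eps` are assumptions, so this may differ in detail from the loss used in the study.

```python
def multiclass_dice_loss(pred, truth, eps=1e-7):
    """Soft Dice loss averaged over classes.

    pred:  K lists of N predicted probabilities (softmax output per class).
    truth: K lists of N one-hot ground-truth values (0 or 1).
    Assumption: per-class Dice scores are averaged with equal weight.
    """
    n_classes = len(pred)
    dice_sum = 0.0
    for p_k, g_k in zip(pred, truth):
        intersection = sum(p * g for p, g in zip(p_k, g_k))
        denominator = sum(p_k) + sum(g_k)
        dice_sum += (2.0 * intersection + eps) / (denominator + eps)
    return 1.0 - dice_sum / n_classes


# Toy example: K = 3 classes (background, right sinus, left sinus), N = 4 pixels
truth = [[1, 0, 0, 1], [0, 1, 0, 0], [0, 0, 1, 0]]
print(multiclass_dice_loss(truth, truth))     # close to 0 for a perfect prediction
```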

Post-processing

Post-processing was performed to reduce the prediction errors of the developed U-Net model. If the maxillary sinus is adjacent to an air area such as the ethmoid sinus or connected to the airway, it tends to be incorrectly predicted. This intrinsic limitation of neural networks can lead to pixel-level prediction errors31, especially at maxillary sinus boundaries. To solve these problems, we conducted post-processing to eliminate false positives in the maxillary sinus segmentation results of the U-Net (Fig. 4). A 3D volume of the maxillary sinus can be obtained by stacking the 2D slice images, and volume information can be acquired through connected-component analysis of the voxels. Background voxels are labeled 0, and voxels of the maxillary sinus region are labeled 1. Small volumes labeled 1 that were not connected to the maxillary sinus were removed. The 3D volumetric image was then converted back to 2D images. This method was implemented using MATLAB 2021a (MathWorks, Natick, MA, USA).
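The idea of removing small disconnected volumes can be sketched in Python as follows. This is not the MATLAB implementation used in the study: the 26-neighbour connectivity, the size threshold `min_voxels`, and the function name are assumptions chosen for illustration.

```python
from collections import deque
from itertools import product


def remove_small_components(volume, min_voxels):
    """Set foreground components smaller than `min_voxels` to background.

    volume: nested list volume[z][y][x] with 0 (background) or 1 (sinus).
    Assumption: voxels sharing a face, edge, or corner are connected.
    """
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    offsets = [o for o in product((-1, 0, 1), repeat=3) if o != (0, 0, 0)]
    cleaned = [[row[:] for row in sl] for sl in volume]   # work on a copy
    visited = set()
    for start in product(range(depth), range(height), range(width)):
        z, y, x = start
        if volume[z][y][x] != 1 or start in visited:
            continue
        component, queue = [start], deque([start])        # breadth-first search
        visited.add(start)
        while queue:
            cz, cy, cx = queue.popleft()
            for dz, dy, dx in offsets:
                nz, ny, nx = cz + dz, cy + dy, cx + dx
                inside = 0 <= nz < depth and 0 <= ny < height and 0 <= nx < width
                if inside and volume[nz][ny][nx] == 1 and (nz, ny, nx) not in visited:
                    visited.add((nz, ny, nx))
                    queue.append((nz, ny, nx))
                    component.append((nz, ny, nx))
        if len(component) < min_voxels:                   # small island: drop it
            for vz, vy, vx in component:
                cleaned[vz][vy][vx] = 0
    return cleaned


# Two stacked slices: an 8-voxel block and one isolated false-positive voxel
stack = [
    [[1, 1, 0, 0, 0], [1, 1, 0, 0, 0], [0, 0, 0, 0, 1]],
    [[1, 1, 0, 0, 0], [1, 1, 0, 0, 0], [0, 0, 0, 0, 0]],
]
result = remove_small_components(stack, min_voxels=2)
print(result[0][2][4])                                    # 0
```

A size threshold is used here instead of keeping only the largest component because a binary volume contains both the right and left sinuses as separate large components.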

Evaluation metrics

We used the Dice similarity coefficient (DSC), precision, recall, and Hausdorff distance (HD)32 to evaluate the artificial intelligence (AI) prediction results and post-processing results. The DSC is the most frequently used evaluation metric in medical image segmentation and compares a segmentation result R with the ground truth G. The DSC is defined as:

\[\mathrm{DSC}(R,G)=\frac{2\left|R\cap G\right|}{\left|R\right|+\left|G\right|}\]

To evaluate the segmentation quality, we used the precision and recall, which are defined as:

\[\mathrm{Precision}=\frac{TP}{TP+FP},\qquad \mathrm{Recall}=\frac{TP}{TP+FN}\]

where TP, FP, and FN are true positive, false positive, and false negative, respectively. TP represents the number of pixels for which the maxillary sinus areas were accurately predicted. FP represents the number of pixels that were not maxillary sinus areas but were predicted to be maxillary sinuses. FN represents the number of unpredicted pixels in the maxillary sinus areas.
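The pixel counts described here translate directly into code. The sketch below computes precision, recall, and the DSC for one pair of binary masks, using the identity DSC = 2TP / (2TP + FP + FN), which is equivalent to twice the overlap divided by the sum of the two region sizes. The function names and the toy masks are illustrative only.

```python
def confusion_counts(pred, truth):
    """Count true positives, false positives, and false negatives in 2D masks."""
    tp = fp = fn = 0
    for p_row, g_row in zip(pred, truth):
        for p, g in zip(p_row, g_row):
            if p and g:
                tp += 1           # sinus pixel predicted as sinus
            elif p and not g:
                fp += 1           # non-sinus pixel predicted as sinus
            elif g and not p:
                fn += 1           # sinus pixel that was missed
    return tp, fp, fn


def precision_recall_dsc(pred, truth):
    """Return (precision, recall, DSC) for a pair of binary masks."""
    tp, fp, fn = confusion_counts(pred, truth)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    dsc = 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 1.0
    return precision, recall, dsc


truth = [[0, 1, 1, 0], [0, 1, 1, 0]]
pred = [[0, 1, 1, 1], [0, 1, 0, 0]]
print(precision_recall_dsc(pred, truth))      # (0.75, 0.75, 0.75)
```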

The HD measures the degree of difference between the two results by using the spatial distance between the segmented objects as an index33. The closer the HD value is to 0, the more similar the prediction result is to the ground truth. The HD measures the maximum distance between two point sets, X and Y. The HD is defined as:

\[\mathrm{HD}(X,Y)=\max\left\{\max_{x\in X}\min_{y\in Y}d\left(x,y\right),\ \max_{y\in Y}\min_{x\in X}d\left(x,y\right)\right\}\]

where \(x\) and \(y\) denote points in the sets X and Y, which represent the ground truth and the prediction result of the maxillary sinuses, and \(d\left(x,y\right)\) is the distance between the two points.
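A brute-force version of the symmetric Hausdorff distance between two point sets can be sketched as follows. It assumes Euclidean distance for \(d\left(x,y\right)\) and compares every pair of points, which is adequate for illustration but slow for full segmentation boundaries. The function names and the toy coordinates are hypothetical.

```python
from math import dist


def directed_hausdorff(a, b):
    """Largest distance from a point in `a` to its nearest point in `b`."""
    return max(min(dist(p, q) for q in b) for p in a)


def hausdorff_distance(x_points, y_points):
    """Symmetric Hausdorff distance between two non-empty point sets."""
    return max(directed_hausdorff(x_points, y_points),
               directed_hausdorff(y_points, x_points))


ground_truth = [(0, 0), (1, 0)]
prediction = [(0, 0), (4, 0)]     # one outlying point, e.g. a stray false positive
print(hausdorff_distance(ground_truth, prediction))   # 3.0
```

Because the metric is driven by the single worst-matched point, removing an isolated false positive can lower the HD noticeably even when the overlap-based DSC barely changes.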

Results

Figures 5 and 6 show the best and worst results of AI prediction for maxillary sinus segmentation. The 3D reconstruction results of AI prediction and after post-processing are shown in Fig. 7. False positives were removed in post-processing. The average prediction results of AI prediction and after post-processing are shown in Table 2. The DSC of the AI model was 0.9090 ± 0.1921 and the HD was 2.7013 ± 4.6154. After post-processing, the DSC was 0.9099 ± 0.1914 and the HD was 2.1470 ± 2.2790. Table 3 shows the final AI prediction results after post-processing according to sinus conditions. The average segmentation time per maxillary sinus was 46.2 s for the AI method, whereas it took 48.7 min for the manual method, and the post-processing process took an average of 16 s.

Figure 7. Three-dimensional reconstruction results. (a) Clear maxillary sinus, (b) hazy maxillary sinus. Red: the ground truth (manual labels); green: the results of artificial intelligence (AI) prediction; yellow: the results after post-processing of AI prediction.

Discussion

The present study proposed a CNN model for the fully automatic segmentation of maxillary sinuses in various states on CBCT images. The inferior wall of the maxillary sinus is part of the alveolar process, making it highly relevant for dentistry (e.g., tooth extraction, implants, and apical lesions). When a disease such as a cyst or a tumor occurs in the maxilla, the change in the maxillary sinus is an important aspect of the diagnosis, and evaluation of the maxillary sinus is also important in sinus lift procedures as part of implant surgery. Therefore, automatic maxillary sinus segmentation can serve as a starting point for clinical diagnosis and quantitative volume measurement. By providing the volume ratio of the mucosal thickening area, automatic segmentation will be able to offer guidance for referral to an otolaryngologist before a sinus lift procedure for implant surgery.

The maxillary sinus is connected to adjacent anatomical structures, which are very difficult to separate accurately. Inflammation of the sinus appears in various forms, such as thickening of the mucous membrane. If there is mucosal thickening, the border is not clearly defined, which may make automatic segmentation more difficult. A few studies have explored the application of automatic maxillary sinus segmentation using CT images16,17. Xu et al.16 used an improved V-Net to segment the maxillary sinus and reported that the DSC value was 0.94 in clear maxillary sinuses. However, it has been reported that the accuracy was still unsatisfactory in cases of mucosal inflammation. In another study, Iwamoto et al.17 developed a maxillary sinus segmentation model using a fully convolutional network with a probability atlas, and the DSC value was reported to be 0.83 in hazy maxillary sinuses.

CBCT is widely used in dental clinics because it has several advantages over traditional CT and panoramic radiography. It provides 3D information, unlike panoramic radiography, and it has a significantly lower radiation dose and substantially lower costs than CT. CBCT is often used to diagnose the condition of the maxillary sinus and for planning extraction and implant placement in the maxilla. To the best of our knowledge, only one study has performed automatic maxillary sinus segmentation using CBCT images18. Jung et al.18 segmented the air area and the lesion area in the maxillary sinus and obtained DSCs of 0.93 and 0.76, respectively. However, the results of the entire maxillary sinus segmentation were not shown. It seems more reasonable to accurately segment the maxillary sinus first and then segment the air and the lesion area. Hazy sinuses are particularly important because they are associated with a higher likelihood of complications after tooth extraction or implantation, but the performance of segmentation is worse for hazy sinuses than for clear sinuses. Therefore, we added more data on hazy sinuses, and the model performance was good, with a DSC value of 0.9 or higher for both clear and hazy sinuses.

In this study, we proposed a deep learning-based method using a U-Net model with post-processing. The non-maxillary sinus area was incorrectly predicted as the sinus area in some cases, and post-processing was used to reduce these false-positive errors. After post-processing, the average DSC value increased from 0.9090 ± 0.1921 to 0.9099 ± 0.1914 and the average HD value decreased from 2.7013 ± 4.6154 to 2.1470 ± 2.2790. The change in the predicted area due to the removal of false positives was small, but the HD value improved considerably, because the HD is determined by the most distant mismatched point. Our U-Net with post-processing showed similar or higher performance in comparison with previous CT studies16,17. With our model, there is no need for an expert to manually select the maxillary sinus from all images for segmentation. We automatically and accurately segmented the maxillary sinus from CBCT images in about 1 min per sinus (46.2 s on average for prediction and 16 s on average for post-processing), which was much more efficient than manual segmentation (48.7 min on average). The developed model makes it possible to analyze the CBCT images of many patients in a short time.

Although our study showed good performance for automatic segmentation of the maxillary sinus, some limitations should be addressed in future research. First, if false-positive pixels are connected to the maxillary sinus region, they are not removed by post-processing. To overcome this limitation, a process for detecting the edge of the maxillary sinus is required. Using more varied training data may also help solve this problem. Second, this study was conducted using a small sample of 45 patients (90 maxillary sinuses), and the data were obtained using a single CBCT device. Using more images from various CBCT devices is expected to improve the performance of the algorithm. Further studies should develop a model that segments only the hazy area of the maxillary sinus. Such a model, which could also measure the ratio of the air area to the hazy area of the maxillary sinus, would be helpful in planning surgery or treatment.

Conclusion

The developed deep learning algorithm with post-processing showed good performance for both clear and hazy maxillary sinus segmentation with minimal time and effort. The proposed model has the potential to assist dental clinicians in segmenting the maxillary sinuses in various cases with equivalent accuracy in CBCT images.

Data availability

The data generated and analyzed during the current study are not publicly available due to privacy laws and policies in Korea but are available from the corresponding author on reasonable request.

References

1. Hauman, C., Chandler, N. & Tong, D. Endodontic implications of the maxillary sinus: A review. Int. Endod. J. 35, 127–141 (2002).

2. Yoo, B. J. et al. Treatment strategy for odontogenic sinusitis. Am. J. Rhinol. Allergy 35, 206–212 (2021).

3. Kim, J. S. et al. What affects postoperative sinusitis and implant failure after dental implant: A meta-analysis. Otolaryngol. Head Neck Surg. 160, 974–984 (2019).

4. Drumond, J. P. N., Allegro, B. B., Novo, N. F., de Miranda, S. L. & Sendyk, W. R. Evaluation of the prevalence of maxillary sinuses abnormalities through spiral computed tomography (CT). Int. Arch. Otorhinolaryngol. 21, 126–133 (2017).

5. Janner, S. F. et al. Sinus floor elevation or referral for further diagnosis and therapy: A comparison of maxillary sinus assessment by ENT specialists and dentists using cone beam computed tomography. Clin. Oral Implant. Res. 31, 463–475 (2020).

6. Chen, Y. W. et al. A paradigm for evaluation and management of the maxillary sinus before dental implantation. Laryngoscope 128, 1261–1267 (2018).

7. Friedland, B. & Metson, R. A guide to recognizing maxillary sinus pathology and for deciding on further preoperative assessment prior to maxillary sinus augmentation. Int. J. Periodontics Restor. Dent. 34, 807–815 (2014).

8. Na, J. Y., Kang, J. H., Choi, S.-H., Jeong, H.-G. & Han, S.-S. Squamous cell carcinoma of the maxillary sinus mimicking periodontitis. J. Korean Dent. Assoc. 55, 276–283 (2017).

9. Sharma, N. & Aggarwal, L. M. Automated medical image segmentation techniques. J. Med. Phys. 35, 3 (2010).

10. Rushton, V. & Horner, K. The use of panoramic radiology in dental practice. J. Dent. 24, 185–201 (1996).

11. Macleod, I. & Heath, N. Cone-beam computed tomography (CBCT) in dental practice. Dent. Update 35, 590–598 (2008).

12. Andersen, T. N. et al. Accuracy and precision of manual segmentation of the maxillary sinus in MR images: A method study. Br. J. Radiol. 91, 20170663 (2018).

13. Pirner, S. et al. CT-based manual segmentation and evaluation of paranasal sinuses. Eur. Arch. Oto-Rhino-Laryn. 266, 507–518 (2009).

14. Tingelhoff, K. et al. Comparison between manual and semi-automatic segmentation of nasal cavity and paranasal sinuses from CT images. In 2007 29th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC) 5505–5508 (IEEE, 2007).

15. LeCun, Y., Bengio, Y. & Hinton, G. Deep learning. Nature 521, 436–444 (2015).

16. Xu, J. et al. Automatic CT image segmentation of maxillary sinus based on VGG network and improved V-Net. Int. J. Comput. Assist. Radiol. Surg. 15, 1457–1465 (2020).

17. Iwamoto, Y. et al. Automatic segmentation of the paranasal sinus from computer tomography images using a probabilistic atlas and a fully convolutional network. In 2019 41st Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC) 2789–2792 (IEEE, 2019).

18. Jung, S.-K., Lim, H.-K., Lee, S., Cho, Y. & Song, I.-S. Deep active learning for automatic segmentation of maxillary sinus lesions using a convolutional neural network. Diagnostics 11, 688 (2021).

19. Zhang, Y. et al. Reducing metal artifacts in cone-beam CT images by preprocessing projection data. Int. J. Radiat. Oncol. Biol. Phys. 67, 924–932 (2007).

20. Katsumata, A. et al. Image artifact in dental cone-beam CT. Oral Surg. Oral Med. Oral Pathol. Oral Radiol. Endod. 101, 652–657 (2006).

21. Ha, E.-G., Jeon, K. J., Kim, Y. H., Kim, J.-Y. & Han, S.-S. Automatic detection of mesiodens on panoramic radiographs using artificial intelligence. Sci. Rep. 11, 1–8 (2021).

22. Yang, S. et al. Deep learning segmentation of major vessels in X-ray coronary angiography. Sci. Rep. 9, 1–11 (2019).

23. Bejnordi, B. E. et al. Diagnostic assessment of deep learning algorithms for detection of lymph node metastases in women with breast cancer. JAMA 318, 2199–2210 (2017).

24. Ronneberger, O., Fischer, P. & Brox, T. U-net: Convolutional networks for biomedical image segmentation. Med. Image Comput. Comput. Assist. Interv. Pt III 9351, 234–241 (2015).

25. Li, X. et al. H-DenseUNet: hybrid densely connected UNet for liver and tumor segmentation from CT volumes. IEEE Trans. Med. Imaging 37, 2663–2674 (2018).

26. Dong, H., Yang, G., Liu, F., Mo, Y. & Guo, Y. Automatic brain tumor detection and segmentation using U-Net based fully convolutional networks. In Annual Conference on Medical Image Understanding and Analysis (MIUA) 506–517 (Springer, 2017).

27. Li, H., Li, A. & Wang, M. A novel end-to-end brain tumor segmentation method using improved fully convolutional networks. Comput. Biol. Med. 108, 150–160 (2019).

28. Wang, L., Wang, C., Sun, Z. & Chen, S. An improved dice loss for pneumothorax segmentation by mining the information of negative areas. IEEE Access 8, 167939–167949 (2020).

29. Fedorov, A. et al. 3D Slicer as an image computing platform for the quantitative imaging network. Magn. Reson. Imaging 30, 1323–1341 (2012).

30. Kingma, D. P. & Ba, J. Adam: A method for stochastic optimization. arXiv preprint https://arxiv.org/abs/1412.6980 (2014).

31. Wang, S., Yang, D. M., Rong, R., Zhan, X. & Xiao, G. Pathology image analysis using segmentation deep learning algorithms. Am. J. Pathol. 189, 1686–1698 (2019).

32. Huttenlocher, D. P., Klanderman, G. A. & Rucklidge, W. J. Comparing images using the Hausdorff distance. IEEE Trans. Pattern Anal. Mach. Intell. 15, 850–863 (1993).

33. Taha, A. A. & Hanbury, A. Metrics for evaluating 3D medical image segmentation: Analysis, selection, and tool. BMC Med. Imag. 15, 1–28 (2015).

Funding

This work was funded by a National Research Foundation of Korea (NRF) grant funded by the Korean Government (MSIT) (No. 2022R1A2B5B01002517).

Author information

Contributions

S.H. proposed the ideas; H.C., K.J., and Y.K. collected data; H.C. designed the deep learning model; K.J. and C.L. analyzed and interpreted data; H.C., K.J., E.H., and S.H. critically reviewed the content; and H.C., K.J., and S.H. drafted and critically revised the article.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Choi, H., Jeon, K.J., Kim, Y.H. et al. Deep learning-based fully automatic segmentation of the maxillary sinus on cone-beam computed tomographic images. Sci Rep 12, 14009 (2022). https://doi.org/10.1038/s41598-022-18436-w
