Abstract

This paper considers a linear regression model with stochastic restrictions,we propose a new mixed Kibria–Lukman estimator by combining the mixed estimator and the Kibria–Lukman estimator.This new estimator is a general estimation, including OLS estimator, mixed estimator and Kibria–Lukman estimator as special cases. In addition, we discuss the advantages of the new estimator based on MSEM criterion, and illustrate the theoretical results through examples and simulation analysis.

Similar content being viewed by others

Introduction

Consider the following linear regression model:

where y is the response variable vector of \(n \times 1, X\) is the column full rank independent variables matrix of \(n \times (p+1), \beta\) is the unknown coefficient vector of \(p \times 1, \varepsilon\) is the random error vector of n dimension such that \(E(\varepsilon )=0\) and \({\text {Cov}}(\varepsilon )=\sigma ^{2} I\), where \(\sigma ^{2}>0\) is mean squared error.

In the estimation of unknown coefficient vector \(\beta\), the OLS estimator is the most commonly used:

It is easy to know from formula (2), \(E \hat{\beta }=\beta\), and the OLS estimator has been widely used because of its unbiased nature and concise form. However, the ill condition of the design matrix X caused by the increasing number of dependent predictors often makes the OLS estimates unstable.

Massy1 proposed principal component estimator. Hoerl and Kennard2 obtained the ridge estimation by introducing a ridge parameter k into the design \(X'X\) matrix calculation. Swindel3 proposed a modified ridge estimator with prior information while Lukman et al.4 proposed the two-parameter form of the ridge estimator called the modified ridge estimator (MRT). Liu5 obtained a linearized form of the ridge estimator called the Liu estimator. Akdeniz and Kaciranlara6 proposed the generalized Liu estimator. Liu7 obtained a two-parameter form of the Liu estimator.

Many scholars have found that a new estimator can be obtained by combining the two estimators, which generally have good statistical properties. Baye and Parker8 proposed r–k estimator by combining ridge estimator and principal component estimator. Kaciranlar and Sakallioglu9 proposed r–d estimator by combining Liu estimator and principal component estimator. Ozkale and Kaciranlar10 proposed two parameter estimator by combining the James–Stein Shrinkage estimator and the modified ridge estimator proposed by Swindel. Batah et al.11 proposed a modified r-k estimator combining unbiased ridge estimator and principal component estimator. Yang and Chang12 proposed another two parameter estimator based on ridge estimator and Liu estimator. Lukman et al.13 proposed a new estimator by combining modified ridge estimator (MRT) and principal component estimator. Kibria and Lukman14 proposed Kibria–Lukman estimator by combining ridge estimator and Liu estimator.

In practice, in addition to the sample information given by model (1), additional information about parameters in the sample information, such as certain deterministic or stochastic restrictions on unknown parameters, can also be considered. This method can also overcome the complex collinearity problem. Theil and Goldberger15 and Theil16 proposed mixed estimator by comprehensively considering sample information and constraints. Schiffrin and Toutenburg17 proposed weighted mixed estimator for the different importance of sample information and prior information.

In recent years, biased estimation and estimation methods with prior information are often combined to form a broader biased estimation. Hubert and Wijekoon18 proposed a stochastic restricted Liu estimator by combining Liu estimator and mixed estimator. Yang and Xu19 obtained another stochastic mixed Liu estimator. In the same year, Yang and Chang further studied the stochastic mixed Liu estimator and obtained the weighted mixed Liu estimator. Yang and Li12 proposed another stochastic mixed ridge estimator. Ozbay and Kaciranlar20 integrated two parameter estimator and mixed estimator and proposed a two parameter mixed estimator.

In this paper, a new mixed KL estimator under stochastic restrictions is proposed, and its excellent properties under certain conditions are proved theoretically. The above theoretical results are verified and analyzed by examples and data simulation.

The proposed estimator

Hoerl and Kennard2 proposed the ridge estimator (RE):

where \(k>0\) is the parameter. In fact, ridge estimator is obtained by solving the following extreme value problem:

where c is constant, k is the Lagrange constant.

Kibria and Lukman14 proposed the Kibria Lukman (KL) estimator:

where \(k>0\) is the parameter.KL estimator is obtained by solving the following extreme value problem:

where c is constant, k is the Lagrange constant.

Consider the following stochastic restrictions:

where r is the known random vector of \(j \times 1\), R is the row full rank sample data matrix of \(j \times p\), let e be the \(j \times 1\) random error vector and independent of each other, and \(\psi\) be the known positive definite matrix.

Theil and Goldberger15 and Theil16 proposed the mixed estimator by integrating sample information and constraints. The derivation idea is to rewrite models (1) and (6) into a new linear model:

If \(\tilde{y}=\left( \begin{array}{l}y \\ r\end{array}\right) , \tilde{X}=\left( \begin{array}{l}X \\ R\end{array}\right) , \tilde{\varepsilon }=\left( \begin{array}{l}\varepsilon \\ e\end{array}\right)\), above model is transformed into

By applying the least square estimator to the new linear model (7), the mixed estimator (ME)of parameter \(\beta\) is obtained:

Combined mixed estimator and ridge estimator and proposed stochastic mixed ridge estimation (RME):

The estimator proposed in this paper is obtained by solving the following extreme value problem:

where c is constant, k is Lagrange constant.

Regular equations can be obtained:

from Eqs. (11) and (12), we can get the mixed KL estimator:

It can be seen from Eq. (13) that mixed estimator, KL estimator and OLS estimator can be regarded as special cases of mixed KL estimator.Namely

When \(k=0, \hat{\beta }_{ME}=\hat{\beta }_{MKL}=\left( X^{\prime } X+R^{\prime } \psi ^{-1} R\right) ^{-1}\left( X^{\prime } y+R^{\prime } \psi ^{-1} r\right)\) is mixed estimator;

When \(R=0, \hat{\beta }_{K L}=\hat{\beta }_{MKL}=(X^{'}X+k I)^{-1}(X'y-k \hat{\beta })\) is \(\mathrm {KL}\) estimator;

When \(k=0, R=0, \hat{\beta }_{OLS}=\hat{\beta }_{MKL}=(X^{'}X)^{-1}X^{'}y\) is OLS estimator.

The performance of the new estimator

If \(\hat{\beta }\) is the estimation of \(\beta\), then the mean square error matrix \((\mathrm {MSEM})\) of \(\hat{\beta }\) is given as:

where \({\text {Cov}}(\hat{\beta })\) is the covariance matrix of \(\hat{\beta }\), and \({\text {Bias}}(\hat{\beta })=E(\hat{\beta })-\beta\) is the deviation vector. Two estimates \(\hat{\beta }_{1}\) and \(\hat{\beta }_{2}\), \(\hat{\beta }_{2}\) are better than \(\hat{\beta }_{1}\) under MSEM criterion if and only if:

Lemma 3.1

Suppose two \(n \times n\) matrix \(M>0, N \ge 0\), then \(M>N \Leftrightarrow \lambda _{1}\left( N M^{-1}\right) <1\), where \(\lambda _{1}\left( N M^{-1}\right)\) is the maximum eigenvalue of matrix \(N M^{-1}\).

The mean square error matrix of mixed KL estimator \(\hat{\beta }_{MKL}\) is calculated as follows:

where \(A_{k}=\left( X^{'}X+k I+R^{\prime } \psi ^{-1} R\right) ^{-1}\).

Deviation vector: \({\text {Bias}}\left( \hat{\beta }_{MKL}\right) =E\left( \hat{\beta }_{MKL}\right) =-2 k A_{k} \beta\).

Therefore,

where \(b_{1}=-2 k A_{k} \beta .\)

By substituting \(k=0\) into Eq. (16), the mean square error matrix of the mixed estimator can be obtained:

where \(M=X^{\prime } X+R^{\prime } \psi ^{-1} R\).

By substituting \(R=0\) into Eq. (16), the mean square error matrix of the KL estimator can be obtained:

where \(S_{k}=X^{\prime } X+k I, b_{2}=-2 k S_{k}^{-1} \beta\).

By substituting \(k=0, R=0\) into Eq. (16), the mean square error matrix of the OLS estimator can be obtained:

Mean square error matrix of mixed ridge estimator:

Deviation vector: \({\text {Bias}}\left( \hat{\beta }_{MRE}\right) =E\left( \hat{\beta }_{MRE}\right) -\beta =-k A_{k} \beta .\)

Therefore,

Comparison between mixed KL estimator and mixed estimator

From Eqs. (16) and (17), we make

Because

from \(k>0\),so \(M^{-1}-A_{k}\left( M-k S^{-1}\right) A_{k}>0\), Theorem 3.2 is obtained.

Theorem 3.2

The necessary and sufficient conditions for mixed KL estimator \(\hat{\beta }_{MKL}\) to be superior to mixed estimator \(\hat{\beta }_{M E}\) under MSEM criterion are as follows:

Comparison between mixed KL estimator and KL estimator

From Eqs. (16) and (18), we make

Because

where\(N=S-kS^{-1}, Q=R^{\prime } \psi ^{-1} R, B=N S_{k}^{-1} Q+Q S_{k}^{-1} N+Q S_{k}^{-1} N S_{k}^{-1} Q-Q\)

According to the Lemma 3.1, it can be obtained that if \(k<\min \nolimits _{i=1}^{p}\lambda _{i}^{2}\), then \(N>0\). So \(B>0\) if and only if \(k<\min \nolimits _{i=1}^{p}\lambda _{i}^{2},\lambda _{1} Q\left( N S_{k}^{-1} Q+Q S_{k}^{-1} N+Q S_{k}^{-1} N S_{k}^{-1} Q\right) ^{-1}<1\).

As long as \(k<\min \nolimits _{i=1}^{p}\lambda _{i}^{2},\lambda _{1} Q\left( N S_{k}^{-1} Q+Q S_{k}^{-1} N+Q S_{k}^{-1} N S_{k}^{-1} Q\right) ^{-1}<1\), following conclusions can be obtained:

Theorem 3.3

When \(k<\min \limits _{i=1}^{p}\lambda _{i}^{2},\lambda _{1} Q\left( N S_{k}^{-1} Q+Q S_{k}^{-1} N+Q S_{k}^{-1} N S_{k}^{-1} Q\right) ^{-1}<1\), the necessary and sufficient conditions for mixed KL estimator \(\hat{\beta }_{MKL}\) to be superior to KL estimator \(\hat{\beta }_{KL}\) under MSEM criterion are as follows:

Comparison between mixed KL estimator and OLS estimator

From Eqs. (16) and (19), we make

Because

where \(C=S^{-1} Q+Q S^{-1}\).

Because \(C=C^{\prime }\), and \(\lambda _{i}\left( S^{-1} Q\right) =\lambda _{i}\left( S^{-\frac{1}{2}} Q S^{-\frac{1}{2}}\right) >0\), we can get \(C>0\), so \(2 k I+k S^{-1}+k^{2} S^{-1}+Q+k C+Q S^{-1} Q>0\), that is \(S^{-1}-A_{k}\left( X^{\prime } X-k S^{-1}+R^{\prime } \psi ^{-1} R\right) A_{k}>0\), Theorem 3.4 is obtained.

Theorem 3.4

The necessary and sufficient conditions for mixed KL estimator \(\hat{\beta }_{MKL}\) to be superior to \(\hat{\beta }_{OLS}\) under MSEM criterion are as follows:

Comparison between mixed KL estimator and mixed ridge estimator

From Eqs. (16) and (22), we make

Theorem 3.5

The necessary and sufficient conditions for mixed KL estimator \(\hat{\beta }_{MKL}\) to be superior to the mixed ridge estimator \(\hat{\beta }_{MRE}\) under MSEM criterion are as follows:

.

Numerical example and simulation study

In order to further explain the theoretical results, this section will verify and analyze the above theoretical results through examples.

The example analysis data adopts the percentage data of research and development expenses in GNP of several countries from 1972 to 1986 used by Gruber21, Akdeniz and Erol22, in which \(x_{1}\) represents France, \(x_{2}\) represents West Germany, \(x_{3}\) represents Japan, \(x_{4}\) represents the former Soviet Union and y represents the United States. See Table 1 for specific data.

The data in Table 1 are processed as follows

Firstly, it is easy to calculate that the eigenvalue of \(X^{\prime } X\) is \(\lambda _{1}=302.9626\), \(\lambda _{2}=0.7283\), \(\lambda _{3}=0.0446, \lambda _{4}=0.0345\),the OLS estimator of \(\sigma ^{2}\) is \(\hat{\sigma }^{2}=0.0015\), and \(\mathrm {OLS}\) estimator of \(\beta\) is \(\hat{\beta }_{O L S}=(0.6455,0.0896,0.1436,0.1526)^{\prime }\).

We can use the method proposey by Kibria and Lukman14 to choose the biasing parameter k, and we can also use the generalized cross validation (GCV) criterion and the cross validation (CV) to choose the biasing parameter, the reference can refer to Arashi et al.23, Roozbeh24, and Roozbeh et al.25. In this paper we use the method propose by Kibria and Lukman14 to choose the biasing parameter k, which is given as follows:

we take \(k=\hat{k}_{\min }\).

Consider the following stochastic restrictions, this can refer to Roozbeh et al.26 and Roozbeh and Hamzah27:

For the mixed estimator, KL estimator, OLS estimator, mixed ridge estimator and mixed KL estimator proposed in this paper. The MSE is presented in Table 2.

As can be seen from Table 2:

When k takes \(\hat{k}_{\min }=0.018\), the MSE value of mixed KL estimator \(\hat{\beta }_{M K L}\) is better than that of mixed estimator, KL estimator,OLS estimator and mixed ridge estimator. Consistent with the theoretical results of this paper, it can be concluded that adding stochastic restrictions may have better estimation effect under certain conditions. So in practice we can use the stochastic restrictions to address the multicollinearity.

Next, we consider Monte Carlo simulation analysis.

Firstly, the generation of relevant parameters and data in the process of simulation analysis is briefly described.

The data generation of explanatory variables adopts the same method as McDonald and Galarneau28, Gibbons29), that is, it is generated by the following equation:

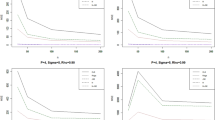

where \(z_{i j}\) is the random number generated by the standard normal random variable, \(\rho\) is the given constant, and \(\rho ^{2}\) theoretically represents the correlation between two different variables, so \(\rho ^{2}\) reflects the degree of complex collinearity of the model to some extent. In this simulation analysis, we consider three cases \(\rho =0.85,0.9,0.99\), set \(p=3, r=1, R=\left( \begin{array}{lll}1&-2&-2\end{array}\right) , e \sim (0, \sigma ^{2}),n=30,50,70,100\).

For a given design matrix X, we take the orthogonalized eigenvector corresponding to the maximum eigenvalue of \(X^{\prime } X\) as the real value of parameter vector \(\beta\).

The data corresponding to the response variable is generated by the following equation:

where \(\varepsilon _{i}\) is the mean of zero, and random vector with variance of \(\sigma ^{2}=0.1,1,5,10\).

See Tables 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17 and 18 for simulation analysis and calculation results.

Based on Tables 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17 and 18, the following conclusions are drawn:

-

(1)

The mean square error of all estimates increases with the increase of \(\rho\) and decreases with the increase of n;

-

(2)

The new estimator mixed KL estimator always has the minimum MSE when all given n and \(\sigma ^{2}\) ,and k takes \(\hat{k}_{\min }\). Consistent with the results of Theorems 3.2–3.5 in this paper, under certain conditions, mixed KL estimator \(\hat{\beta }_{MKL}\) is better than mixed estimator \(\hat{\beta }_{M E}\), KL estimator \(\hat{\beta }_{K L}\), least square estimator \(\hat{\beta }_{OLS}\) and mixed ridge estimator \(\hat{\beta }_{M R E}\) under MSE criterion;

-

(3)

Under the same conditions, mixed estimator \(\hat{\beta }_{ME}\),mixed ridge estimator \(\hat{\beta }_{MRE}\) and mixed KL estimator \(\hat{\beta }_{MKL}\) are better than unconstrained least squares estimator \(\hat{\beta }_{OLS}\) under MSE criterion, mixed KL estimator \(\hat{\beta }_{M K L}\) is better than unconstrained KL estimator \(\hat{\beta }_{K L}\) under MSE criterion.

Conclusions

In this paper, a new mixed KL estimator considering the prior information about parameters in sample information in linear model is proposed, and the properties of the new estimator are discussed. The necessary and sufficient conditions for KL estimator to be better than mixed estimator, KL estimator, OLS estimator and mixed ridge estimator under the criterion of mean square error matrix are given, and the proofs are given respectively. Then the theoretical results are verified by examples and simulation analysis.

References

Massy, W. F. Principal components regression in exploratory statistical research. J. Am. Stat. Assoc. 60, 234–256 (1965).

Hoerl, A. E. & Kennard, R. W. Ridge regression: Biased estimation for nonorthogonal problems. Technometrics 12, 55–67 (1970).

Swindel, B. F. Good estimators based on prior information. Commun. Stat. Theroy Methods 5, 1065–1075 (1976).

Lukman, A. F., Ayinde, K., Binuomote, S. & Onate, A. C. Modified ridge-type estimator to cambat multicollinearity. J. Chemom. e3125, 1–12 (2019).

Liu, K. J. A new class of biased estimate in linear regression. Commun. Stat. Theroy Methods 22, 393–402 (1993).

Akdeniz, F. & Kaciranlar, S. On the almost unbiased generalized Liu estimator and unbiased estimation of the bias and MSE. Commun. Stat. Theroy Methods 24, 1789–1797 (1995).

Liu, K. J. Using Liu-type estimator to combat collinearity. Commun. Stat. Theroy Methods 32, 1009–1020 (2003).

Baye, M. R. & Parker, D. F. Combining ridge and principal component regression: A money demand illustration. Commun. Stat. Theroy Methods 13, 197–225 (1984).

Kaciranlar, S. & Sakallioglu, S. Combining the Liu estimator and the principal component regression estimator. Commun. Stat. Theroy Methods 30, 2699–2705 (2001).

Ozkale, M. R. & Kaciranlar, S. The restricted and unrestricted two-parameter estimators. Commun. Stat. Theroy Methods 36, 2707–2725 (2007).

Batah, F. M., Ozkale, M. R. & Gore, S. D. Combining unbiased ridge and principal component regressions estimators. Commun. Stat. Theroy Methods 38, 2201–2209 (2009).

Yang, H. & Chang, X. F. A new two-parameter estimator in linear regression. Commun. Stat. Theroy Methods 39(6), 923–934 (2010).

Lukman, A. F., Ayinde, K., Oludoun, O. & Onate, C. A. Combining modified ridge-type and principal component regression estimators. Sci. Afr. e536, 1–8 (2020).

Kibria, B. M. G. & Lukman, A. F. A new ridge-type estimator for the linear regression model: Simulations and applications. Scientificahttps://doi.org/10.1155/2020/9758378 (2020).

Theil, H. & Goldberger, A. S. On pure and mixed estimation in econometrics. Int. Econ. Rev. 2, 65–78 (1961).

Theil, H. On the use of incomplete prior information in regression analysis. J. Am. Stat. Assoc. 58, 401–414 (1963).

Schiffrin, B. & Toutenburg, H. Weighted mixed regression. Z. Angew. Math. Mech. 70, 735–738 (1990).

Hubert, M. H. & Wijekoon, P. Improvement of the Liu estimator in linear regression coefficient. Stat. Pap. 47, 471–479 (2006).

Yang, H. & Xu, J. W. An alternative stochastic restricted Liu estimator in linear regression model. Stat. Pap. 50, 369–647 (2009).

Ozbay, N. & Kaciranlar, K. S. Estimation in a linear regression model with stochastic linear restrictions: A new two-parameter-weighted mixed estimator. J. Stat. Comput. Simul. 88, 1669–1683 (2018).

Gruber, M. H. J. Improving Efficiency by Shrinkage: The James–Stein and Ridge Regression estimators (Marcel Dekker Inc, 1998).

Akdeniz, F. & Erol, H. Mean Squared error matrix comparisons of some biased estimator in linear regression. Commun. Stat. Theroy Methods 32(12), 2389–2413 (2003).

Arashi, M. et al. Ridge regression and its applications in genetic studies. PLoS One 16(4), e0245376 (2021).

Roozbeh, M. & Azen, S. P. Optimal QR-based estimation in partially linear regression models with correlated errors using GCV criterion. Comput. Stat. Data Anal. 117, 45–61 (2018).

Roozbeh, M., Arahi, M. & Hamzah, N. A. Generalized cross-validation for simultaneous optimization of tuning parameters in ridge regression. Iran. J. Sci. Technol. Trans. A, Sci. 44(2), 473–485 (2020).

Roozbeh, M., Hesamianb, G. & Akbaric, M. G. Ridge estimation in semi-parametric regression models under the stochastic restriction and correlated elliptically contoured errors. J. Comput. Appl. Math. 378, 112940 (2020).

Roozbeh, M. & Hamzah, N. A. Uncertain stochastic ridge estimation in partially linear regression models with elliptically distributed errors. Statistics 3, 494–523 (2022).

McDonald, M. C. & Galarneau, D. I. A Monte Carlo evaluation of ridge-type estimators. J. Am. Stat. Assoc. 70, 407–416 (1975).

Gibbons, D. G. A simulation study of some ridge estimators. J. Am. Stat. Assoc. 76, 131–139 (1981).

Funding

The authors are highly obliged to the editor and the reviewers for the comments and suggestions which improved the paper in its present form.This work was sponsored by the Natural Science Foundation of Chongqing [grant number cstc2020jcyj-msxmX0028] and the Scientific Technological Research Program of Chongqing Municipal Education Commission [grant number KJQN202001321].

Author information

Authors and Affiliations

Contributions

H.C. and J.W. wrote the main manuscript text. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Chen, H., Wu, J. On the mixed Kibria–Lukman estimator for the linear regression model. Sci Rep 12, 12430 (2022). https://doi.org/10.1038/s41598-022-16689-z

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-022-16689-z

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.