Abstract

Spatial navigation is a complex cognitive process based on multiple senses that are integrated and processed by a wide network of brain areas. Previous studies have revealed the retrosplenial complex (RSC) to be modulated in a task-related manner during navigation. However, these studies restricted participants’ movement to stationary setups, which might have impacted heading computations due to the absence of vestibular and proprioceptive inputs. Here, we present evidence of human RSC theta oscillation (4–8 Hz) in an active spatial navigation task where participants actively ambulated from one location to several other points while the position of a landmark and the starting location were updated. The results revealed theta power in the RSC to be pronounced during heading changes but not during translational movements, indicating that physical rotations induce human RSC theta activity. This finding provides a potential evidence of head-direction computation in RSC in healthy humans during active spatial navigation.

Similar content being viewed by others

Introduction

Spatial navigation is an essential human skill that helps individuals track their changes in position and orientation by integrating self-motion cues from linear and angular motion1. Spatial navigation involves several brain regions for spatial information processing2,3, including the retrosplenial complex (RSC)4, to translate spatial representations based on egocentric and allocentric reference frames5. Head direction (HD) cells that compute HD and orientation6 provide vital information for the translation of information based on distinctive spatial reference frames. HD cells have been found in several brain regions, including the parahippocampal7,8 and entorhinal regions9 as well as the thalamus10 and the RSC10,11,12. Theta oscillations have been described as an essential frequency underlying the computation of HD and spatial coding in grid cell models13,14,15,16 in actively orienting rodents. Due to its anatomical connections, the RSC is also a central hub in a human brain network underlying several cognitive functions, including spatial orientation. The RSC has a direct connection to V4 (occipital), the parietal cortex, and the hippocampus and indirect connections to the middle prefrontal cortex5. HD cells in the RSC encode both local and global landmarks simultaneously5, supporting the central role of the RSC in encoding and translating different spatial representations.

Several brain imaging studies using electroencephalography (EEG) to investigate the fast-paced time course of the neural basis of spatial cognitive processes have shown that the RSC translates between egocentric and allocentric spatial information17,18. The RSC works with the occipital and parietal cortices to translate egocentric visual-spatial information embedded in an egocentric (retinotopic) reference frame into an allocentric reference frame5,19. Most previous studies, however, were conducted in a stationary setup, and they did not investigate the neural mechanisms contributing to navigation in real-world environments, including motor efference, visual, proprioception, vestibular, and kinesthetic system information input or subject-driven allocation of attention20,21. During active navigation, proprioceptive and motor-related signals significantly contribute to the estimation of self-motion, leading to higher accuracy in estimating travel distance and self-velocity22,23,24,25. Importantly, heading changes in naturalistic navigation are associated with vestibular input, which, together with the visual system and proprioception, is the driving input for HD cells1,20,26. Although a few studies examined active spatial navigation in humans, their experimental designs did not reflect the brain dynamics associated with unrestricted near-real-life navigation experiences18,27, or they relied on specific patient populations28. In summary, there is little knowledge about the brain dynamics underlying spatial navigation in actively navigating human participants and how these dynamics subserve the computation and translation of spatial information embedded in distinct frames of reference for orientation.

In the present study, we investigated the brain dynamics of healthy human participants during active navigation. In an effort to overcome the restrictions of established imaging modalities, we adapted the Mobile Brain/Body Imaging (MoBI) approach29,30,31,32, allowing physical movement of the participants. Thus, we recorded high-density EEG synchronized to head-mounted virtual reality (VR) while participants physically performed a spatial navigation task. Participants tracked their location and orientation by using self-motion cues from the vestibular, proprioception, and kinesthetic systems as well as motor efferences. At the end of the trial, after traversing paths that included several turns and straight segments, participants pointed to previously encoded landmark locations (Fig. 1). Their brain dynamics were analysed using independent component analyses (ICA) on high-density EEG data and subsequent source reconstruction. This approach allowed us to assess the brain dynamics originating in or near the RSC during the active navigation, focusing on the effect of active locomotion on brain dynamics compared with established desktop setups. The results demonstrate that active movement through space significantly changes the preferred use of spatial reference frames. Furthermore, naturalistic navigation reveals strong theta synchronization in the RSC during navigation phases that require heading computation and a substantial covariation of alpha desynchronization with the accuracy in a landmark pointing task.

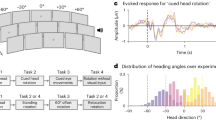

Experimental design. (A) At the beginning of the trial, the participants were given 4 seconds to remember a landmark position that appeared approximately 200 meters in front of them. They then performed two physical navigation tasks, walk1x and walk2x, each of which contained 2 to 3 random turns. After walk 1, they were also asked to remember a series of 3, 5, or 7 letters of the English alphabet (the number of letters chosen and the order in which the letters appeared were both random). Between the two walks and at the end of each trial, the participants were asked to point to the landmark location and their starting location and to confirm whether a randomly chosen letter appeared in the list of letters they had been asked to remember. Green squares indicate the landmark pointing task (R1, R2a, and R5a) and the starting point task (R2b and R5b). Red squares indicate the letter retrieval task. (B) Full participation in the experiment constituted 23 sessions, where each session consisted of four trials, each trial consisted of two walks, and each walk contained 2 to 3 turns in random directions. As such, each session involved a total of 20 turning points, shown as red dots. After 20 turns, the participant reset his or her location to dot number 0 before starting the next session to ensure that the total navigation segments stayed within the experimental space. The reference frame proclivity test (RFPT) was based on the participant’s response at dot number 12, where the answers for homing directions were clearly distinguishable between two the RFP strategies. Participants were considered egocentric or allocentric if their response was the left arrow or right arrow, respectively.

Results

Behavior

The participants were asked to follow were pre-defined navigation routes. First, they were shown a global landmark and asked to point to it. After the landmark disappeared, they followed a path with several turns and, after two or three changes in course, they were asked to point to the (now invisible) landmark. The navigation task resumed with two or three more turns, and the trial concluded by asking them to point to the invisible landmark a final time. The landmark pointing error (in degrees) response were first checked the normally distributed hypothesis. A Shapiro-Wilk test showed a significant departure from normality, W(102) = 0.907, p< \(10^{-5}\). We, then further checked the normality distribution of each number of turns (NT) condition with the results of W(17) = 0.918, p = 0.139 for NT0, W(17) = 0.739, p = 0.0.0003 for NT2, W(17) = 0.833, p = 0.006 for NT3, W(17) = 0.834, p = 0.0061 for NT4, W(17) = 0.836, p = 0.0065 for NT5, W(17) = 0.906, p = 2.261\(10^{-6}\) for NT6. The landmark pointing error (in degrees) was statistically significantly different at the different NT using the Friedman test, (\(\chi ^2\)(5)=53.34, p<.0001) (Fig. 2A). The pairwise Wilcoxon signed rank test (with false discovery rate-FDR corrected) between groups revealed statistically significant differences in landmark pointing error between NT0 and NT2 (p =.000046); NT0 and NT3 (p =.000046); NT0 and NT4 (p =.000046); NT0 and NT5 (p =.000046); NT0 and NT6 (p =.000046); NT2 and NT4 (p =.007); NT3 and NT4 (p =.00011); NT3 and NT6 (p =.003). The median error was 5.79 (degrees) with 0 turns, 29.63 after 2 turns, 32.76 after 3 turns, 45.57 after 4 turns, 44.94 after 5 turns, and 60.99 after 6 turns. The response data was then further transformed into a logarithmic scale. The Shapiro-Wilk test showed a fairly normal distribution (W(85)=0.97049, p =0.0483) of newly transformed data. Then, a mixed-effects model was used to test the effect of the number of turns to response behavior (using R software33, version 1.2.5033 with the lme4 package34. The landmark-pointing response (removed NT0) was used as the dependent variable, number of turns was used as fixed effect, and participant was used as a random effect. Significance was estimated using the lmerTest package35, which applies Satterthwaite’s method to estimate degrees of freedom and generate p-values for mixed models. The model formulated as follows: landmark pointing error number of turns + (1|Participant). There was significant main effect of number of turns (beta = 0.1116, SE = 0.0253, df = 68, t = 4.413, p = .000037), reflecting the effect of number of turns into the behavioral response (Fig. 2B).

The results of participant behaviour. (A) Participant behavior in the landmark pointing task (using R software33). The X axis indicates the number of turn points in the trial, the Y axis indicates the absolute error of the participants when they performed the landmark pointing task (the error was measured by the angular difference between the pointing vector and the participant to landmark vector) (*, **, ***, **** indicated for p <.05, p <.01, p <.001, p <.0001 respectively, FDR-corrected). (B) The result of mixed-effects model analysis (using lme434, and lmerTest package35), the regression was visualized by the blue line. (C) The RFPT results were in both the passive condition (stationary test with the tunnel paradigm) and active condition (based on the participant’s response at position 12, path 3 in the Fig. 1B). Three groups of strategies egocentric, mixed and allocentric were color coded in green, blue and red, respectively.

Retrosplenial complex (RSC) event-related spectral perturbation (ERSP). (A) Dipole locations of independent component (in or near the retrosplenial complex (RSC) cluster at the sagittal, coronal, and top view respectively and the corresponding mean of the scalp map. (B) The RSC ERSP with respect to the segment of turns from 1 to 6 turns. (C) The permutation test (n=2000, FDR-correlated) of the RSC ERSP in 6 segments.

Event-related spectral perturbation (ERSP)

Repeated k-means clustering of independent components (ICs) resulted in 25 clusters with centroids located in several structures of the brain including the frontal cortex, the left and right motor cortices, the parietal cortex, the RSC, and the occipital cortex. Focusing on power spectrum changes in the RSC cluster, we computed event-related spectral perturbation (ERSP) in the frequency range of 3 to 50 Hz (Fig. 3). Broadband alpha and beta desynchronization were prominent during the straight segments of navigation, replicating previous results from both passive and active navigation studies17,18,27. In addition, a prominent theta burst became apparent directly after each turn, i.e., at the time when people were computing heading changes before proceeding along the next straight path. Moreover, the theta burst was present during all turns, while the alpha and low beta desynchronization became more desynchronized as the number of turns increased (Fig. 4).

RSC ERSP correlated with participant performance in the first 10 percent (A) and mid 10 percent (B) of the segment length at both the participant and trial level respectively. The top figure shows the computed correlation coefficients (the number at the bottom right corner, red color indicated for statistical significance; *, **, ***, **** indicated for p <.05, p <.01, p <.001, p <.0001 respectively - FDR-corrected) between individual performance and RSC ERSP at participant level in different frequency band at theta (4-8 Hz), low Alpha (8-10 Hz), high Alpha (10-12 Hz), and beta (12-30 Hz). The row indicates the RFP strategy in the passive RFPT response: red indicates an allocentric, and blue indicates an egocentric strategy. The bottom figure shows the correlation coefficients computed between individual performance and RSC ERSP at the trial level in same the range of frequency as in the top figure. The color-coding indicates the RFP strategy; the lighter color in the same RFP strategy group indicates an active allocentric response, while the darker color indicates an active egocentric trial response.

Neural correlations with spatial updating

Heading computations

Next, we calculated the correlations between power modulations in the RSC and the landmark pointing errors. For a comprehensive analysis, we divided the power modulations into different frequency bands and the pointing errors by allocentric or egocentric reference frames for the entire course of the navigation task. Further, to assess the impact of rotational compared with translational movement on RSC spectral modulations, we extracted the first 10\(\%\) of each segment, which included the turn, and the middle 10\(\%\) of each segment (50-60\(\%\)), where participants were only moving in a straight line, and calculated the correlations between power in different bands and pointing errors with just these segments.

Participant-level analysis

The allocentric group showed a significant positive correlation between errors in the landmark pointing tasks and power changes in the following broadband frequency ranges: theta (r(34)=0.48, p =.00048), low alpha (r(34)=0.5, p =.00051), high alpha (r(34)=0.46, p =.00047) and beta band (r(34)=0.4, p =.0012) (FDR-corrected) (see Fig. 5A). Egocentric participants demonstrated a negative correlation between the performance pointing task (error) and power changes in the following frequency ranges: low alpha (r(22)=-0.34, p =.0.006), and high alpha (r(22)=-0.24, p =.011) (FDR-corrected)(Fig. 5A). The inverse pattern of correlation coefficients of power and individual performance error for allocentric and egocentric participants was consistent across the entire navigation phase (10x10\(\%\) time bins), revealing that RSC activity depends on their stationary reference frames used to encode and integrate the spatial information.

Trial-level analysis

In contrast to previous stationary navigation studies18,27, participants moved actively through the environment, receiving sensory feedback from not only the visual system but also converging sensory evidence about changes in position and orientation from their vestibular and proprioceptive systems. This phenomenon opens the possibility that the participants’ preferred use of spatial reference frames might change depending on the sensory input available to them21,36. Therefore, we further investigated the relationship between individual performance error and power changes in RSC on a single-trial level. The pointing response at the end of the path required a binary decision regarding whether the homing location was located to the left or right with respect to the current position and orientation of the navigators (at point 12, Fig. 1B). The results of the single-trial reference frame classification demonstrated that a large portion of participants preferentially used an egocentric reference frame in stationary setups but switched to an allocentric reference frame with active navigation (Fig. 2C). In contrast, participants with a preference for using an allocentric reference frame in stationary setups kept the same allocentric reference frame in the active navigation scenario (Fig. 2C). Importantly, whenever participants used an allocentric reference frame in the pointing task, irrespective of their habitual proclivity toward an egocentric or an allocentric reference frame in stationary settings, there was a positive correlation between the pointing error and power changes in the lower alpha band (r(100)=0.37, p =.000037 for stationary allocentric; r(44)=0.17, p =.015 for stationary egocentric), higher alpha band (r(100)=0.13, p =.013 for stationary allocentric; r(44)=0.13, p =.016 for stationary egocentric), and beta band (r(100)=0.34, p =.00009 for stationary allocentric; r(44)=0.0012, p =.0.00015 for stationary egocentric). However, pointing based on an egocentric reference frame (in physical navigation) still revealed a negative covariation with power in the higher alpha band (r(12)=-0.10, p =.024) (dark-blue color, Fig. 5A) and the beta band (r(12)=-0.86, p =.000035 for stationary egocentric). Thus, the single-trial reference frame analyses clearly revealed a systematic and more pronounced desynchronization in the alpha band whenever an allocentric reference frame was used to respond to a homing challenge.

Discussion

Spatial navigation is vital to purposeful movement as it requires a representation of our position and orientation in space as well as our homing trajectories. In this study, we explored these processes through a typical stationary navigation task but also a physical navigation task where the participants actually moved through a large virtual space while we recorded and analyzed their brain dynamics using a MoBI approach29,30,31,37. By using this modified MoBI approach provided this first-ever opportunity to describe theta synchronization in the RSC during heading computation in actively rotating navigators. From our analyses, we find that alpha desynchronization in the RSC occurs when retrieving spatial information from an allocentric reference frame and translating it into an egocentric during active navigation.

Remarkably, navigators switched from their preferred egocentric reference frame to an allocentric reference frame when they were allowed to actively move through space. Thus, the reference frame proclivity (RFP) observed in stationary navigation tasks is not consistent with that observed in active navigation tasks, including naturally occurring sensory feedback from the vestibular, proprioception, and kinesthetic systems. More specifically, the majority of egocentric navigators switched to an allocentric reference frame during physical navigation, while the allocentric group consistently used their preferred allocentric strategy. To anchor a cognitive map with the environment, navigators can use local and global landmarks (e.g., a mailbox, a building) and/or self-motion cues. In our navigation scenario, we gave the participants a single prominent landmark only at the very beginning of a trial that was invisible for the rest of the navigation task. Participants then moved through space, walking straight toward a point and then locating and changing directions several times while moving away from their starting location (Fig. 1). Consequently, the participants tended to derive their orientations and positions in space by converging multiple sensory inputs to represent the original global landmark position. Most participants, including the egocentric strategy group, responded as allocentric navigators in the homing direction test (Fig. 2C). This finding suggests that human spatial navigation strategies are quite flexible, exploit multisensory information, and depend on the particular type of response that is required at the given moment. In contrast to previous desktop experiments asking for a simple homing response that demonstrated a preference for distinct reference frames17,18,38 the current task showed that the majority of participants preferred an allocentric reference frame. Having to constantly update one’s own position as well as the position of other entities in space (landmark, home) likely fosters the use of an allocentric reference frame.

Moreover, the behavioral results in the landmark pointing task (Fig. 2) might follow the leaky integrator model39,40. This model assumes that the encoded orientation, as the variable state, is incremented with movement by multiplying the actual orientation gain factor. This process tends to decay by an orientation-dependent leaky factor. In our trials, there were no visible landmarks within the navigation task. Therefore, participants could not use external landmarks to anchor their cognitive map. Instead, they had to derive their orientation with each turn based on idiothetic information only. Thus, errors accumulated at each turning point (Fig. 2) and increased somewhat proportionally to the number of turns in the scenario. In other words, the errors in the landmark pointing task (at R1, R2a, and R5a, Fig. 1) are correlated to the number of turning points (Fig. 2).

Using a spatial filter approach and subsequent clustering of independent components, we demonstrate the RSC to reflect specific aspects of the navigation task. EEG contains subcortical activity and allows to localize deeper brain structures41. Previous desktop studies have already revealed navigation-related activity in or near the RSC17,18,42. However, even though theta oscillation is an important mechanism for computing head orientation and providing a grid cell network13,14,16, it has not been reported in human brain imaging studies using stationary setups. Notably, we found a strong theta synchronization in the RSC during periods of heading changes, which indicates that physical rotations induce RSC activity (Fig. 3). This has not been reported in previous stationary studies. This theta oscillation was stably observed with each turn by navigators along the path. Through MoBI, our participants were able to make use of naturally occurring spatial information, such as motor efference and cues from the vestibular, proprioception, and kinesthetic systems. Therefore, participants could extract their head direction from HD cells activity10,43, which is often eliminated in stationary setups. In addition, there is evidence that the firing rate of HD cells decreases with restraints during navigation. Compared with those in active locomotion, loosely restrained rats in passive movement showed a nearly 24\(\%\) reduction in the peak firing rates of HD cells44,45, while tightly restrained rats46,47 showed near or complete suppression. The suppression of the HD cell firing rate is due to disruption of the vestibular system, which is the essential signal for estimating head direction20,26,48. In previous human spatial navigation studies, the population was limited to stationary participants. Thus, the vestibular information for HD signals may have been eliminated21. In this study, the participants received vestibular information in addition to all other naturally occurring sensory modalities while turning and walking. Accordingly, sufficient multi-modal sensory information was available for them to compute their head directions. Therefore, theta oscillation occurred after each turning onset (Fig. 3), indicating heading computation activity in the RSC, providing evidence of heading computation in healthy participants in a physical spatial locomotion study replicating similar theta activity in participants rotating on the spot49.

Furthermore, we replicated RSC alpha suppression, which has been previously observed in spatial learning for maintaining orientation in both passive17,18,42,50 and active navigation tasks27. This alpha desynchronization might indicate ongoing spatial transformations from egocentric to allocentric coordinates and vice versa17. We expected to estimate the robustness of the RSC feature in the navigational task. Therefore, the letter task was added in the middle of the trial (Fig. 1), which mimicked the typical situations in real-life navigation. Interestingly, both theta synchronization and alpha desynchronization showed the similar pattern across six walking segments (Fig. 3), indicating a navigation-related activity in the RSC activity. Although Kim et. al51 demonstrated that RSC activity is correlated with behavioral performance in three-dimensional space, how the use of distinct reference frames during navigation impacts RSC activity was still unclear. Remarkably, in this study, we found that RSC activity systematically covaried with behavioral responses, and that this correlation depended on the reference frame used. The use of an allocentric strategy revealed a positive correlation of individual performance and alpha power, while an egocentric strategy was associated with a negative correlation (Fig. 5). This general pattern was confirmed using a single-trial analysis approach that identified the reference frame underlying the single-trial pointing response of participants irrespective of their general reference frame proclivity. The results clearly indicate that only the use of an allocentric response and not the use of an egocentric response was associated with desynchronization in alpha band activity.

Overall, we exposed some of the behaviors and neural dynamics associated with physical navigation. We found that theta oscillations originating from the RSC are present during human navigation, more prominent while computing direction changes, and consistently synchronized irrespective of the length of the total path walked. Further, the results suggested that alpha desynchronization is essential for translating between spatial reference in active and passive navigation. As the first evidence of how our sense of direction works in healthy moving humans, these findings demonstrate that, for some, our brain dynamics are not the same when simulating navigating through space as opposed to actually moving through space.

Materials and methods

Participants

Eighteen healthy adults (age 27.8±4.2, 2 females) participated in this experiment. All participants reported normal or corrected-to-normal vision. Each received 60 for their participation. The protocol was approved by the University of Technology Sydney (UTS) (grant number: UTS HREC REF NO. ETH17-2095). All the experiments were performed in accordance with relevant guidelines and regulations. Participants were given an informed consent form and signed before the main experiment.

Experiment design and tasks

Reference frame proclivity test (RFPT)

Prior to the main experiment, the participants completed an online RFPT36,38 to classify them as allocentric, egocentric, or mixed-strategy navigators. In the test, participants had to navigate through a tunnel on the flat screen monitor that included direction changes of various angles in the horizontal plane. When they reached the end, they were asked to select which of two homing vectors pointed back to the start of the tunnel. This choice, made over 40 trials, determined their navigation style: egocentric or allocentric if they consistently used that reference frame in at least 70\(\%\) of the trials, or mixed-strategy navigators if they switched between frames. Of the 18 participants, five were egocentric navigators, seven were allocentric, and six were mixed.

Main experiment design

The experiment comprised a series of straightforward physical navigation exercises interspersed with spatial encoding/retrieval tasks but complicated by letter encoding/retrieval tasks to impose an additional cognitive workload on the participants. Each participant first performed four learning trials to familiarize themselves with the tasks and instructions and subsequently completed 23 sessions, with each session consisting of four trials, over the course of the full experiment. Each trial proceeded as follows. First, participants were shown a global landmark and given 4 seconds to remember its position before it disappeared. Participants were then given the following instruction: “Point to the landmark location” . They responded by pointing a controller at their reckoned location and clicking the hair-trigger (R1, Fig. 1A). A beep sounded to indicate their response had been registered and that they that should now start the first navigation task—straight walking with two to three direction changes over the walk while keeping track of their location in the space. More specifically, participants walked forward toward a floating red sphere at eye height, which disappeared once they reached it. A new red sphere then appeared, showing the next direction and distance and disappearing once they reached that, and so on. In Fig. 1A, this task is denoted as “walk 1x” , where 1 indicates the first walk and x indicates the number of red spheres. Once participants reached the last red sphere, they stopped walking and the text “Attention” appeared in front of them for 3 seconds, signaling the first spatial retrieval task was about to begin. First, participants were instructed to “Point to the landmark location” by pointing their controller to the landmark location as they remembered it and clicking the hair-trigger (R2a, Fig. 1A). Next, two homing arrows appeared in front of them—one pointing left, the other right—and they were asked: “Where is the starting location?” . Responses were given by pointing their controller at one of the arrows and clicking the trigger (R2b, Fig. 1A). The letter encoding task to impose additional cognitive burden followed. The participants were shown a series of 3, 5, or 7 letters of the English alphabet at one-second intervals between letters and asked to remember them. The number of letters chosen and the order in which the letters appeared were both random. Three levels of difficulty were included to avoid familiarity with the task, ensuring the cognitive load remained high. Then, three seconds after the last letter appeared, participants were shown a random letter and asked whether or not that letter belonged to their letter list. Clicking the trigger indicated yes; pressing the touchpad indicated no (R3, Fig. 1A). Participants then had 2 seconds of rest before starting the second walk. A beep sound signaled the beginning of the second navigation task (walk 2x, Fig. 1A), which followed the same straight walk to the red sphere format as the first. However, this time, the participants had to remember their letter list as well as keep track of their orientation toward the landmark location and starting position. When the second walk was finished, the participants were asked to do three things: first, to confirm whether or not a random letter belonged to their letter list (R4, Fig. 1A); second, to point to the landmark location; and, third, to point to the starting location (R5a and R5b, Fig. 1A). The next trial started when the participant indicated their readiness by clicking both bottom grips on the controller. The maths of four trials with 2 to 3 random turns in each of two walks meant that each session consisted of a total of 20 turns, as shown in Fig. 1B. After 20 turns, the participant reset his or her location to dot number 0 before starting the next session to ensure that the total navigation segments were within the experimental space.

Data recordings

The scenario was developed in Unity (version 2017.3) with the VRTK plug-in and performed in a VR environment using a head-mounted display (HTC Vive Pro; 2x 1440 x 1600 resolution, 90 Hz refresh rate, 110 field of view). All data streams from the EEG cap, and head-mounted display were synchronized by the Lab Streaming Layer52. The EEG data were recorded from 64 active electrodes placed equidistantly on an elastic cap (EASYCAP, Herrsching, Germany) with a sampling rate of 500 Hz (LiveAmps System, Brain Products, Gilching, Germany). The data were referenced to the electrode located closest to the standard position, FCz. The impedance of all sensors was kept below 5 kOhm.

EEG analysis

Pre-processing

All pre-processing steps were performed using MABLAB version 2018a (Mathworks Inc., Natick, Massachusetts, USA) and custom scripts based on EEGLAB version 14.1.253 (Fig. 6). The raw EEG data were downsampled to 250 Hz before applying a bandpass filter (1-100 Hz). Line noise (50 Hz) and associated harmonics were removed using the cleanline function in EEGLAB. Dead channels were then removed based on flatline periods (threshold=3 seconds), correlations with other channels (threshold=0.85), and abnormal data distributions (standard deviation=4). Missing channels were interpolated by spherical splines before re-referencing to the average of all channels. Noisy portions of continuous data were removed through automatic continuous data cleaning based on the spectrum value (threshold=10 dB) and power with a criteria of maximum bad channels (maximum fraction of bad channels=0.15) and relative to a robust estimate of the clean EEG power distribution in the channel ([minimum, maximum]=[-3.5 5]). Then, adaptive mixed independence component analysis (AMICA)54,55 was used to decompose the data into a series of statistically maximally independent components (ICs) with the time source as the unit. The approximate source location of each IC was computed using the equivalent dipole models in EEGLAB’s DIFIT2 routines56. Last, the spatial filter matrix and dipole models were copied back to the pre-processed but uncleaned EEG data (no cleaning in the time domain) for further analysis (Fig. 6).

Event-related spectral perturbation (ERSP)

Epoched data sets for each walking condition (walk1x or walk2x) were extracted at the onset of navigation for a length of 14.5 seconds, including a baseline period of 2.5 seconds prior. Bad epochs were identified and removed in the sensor space (for strongly affected head and body motion artifacts) and subsequently in the component space (for artifact noise in the ICs). (i) In the sensor space, the bad epochs were labeled based on the epoch mean, standard deviation (std=5), absolute raw value (threshold value=1000 V), and kurtosis activity (refer to the pop\(\_\)autorej.m function in EEGLAB). From 2714 epochs, 12.5\(\%\) were deemed bad and removed. (ii) In the component space, the time-warped ERSP patterns for each epoch were calculated by computing single spectrograms for each IC and single trial based on the newtimef() function using the standard parameters. The timewarp option was used to linearly warp each epoch to a standard length based on the median time point of sphere collision events. The time period before the onset of the active navigation at the beginning of the trial was used as the baseline to estimate the ERSP for each epoch with divisive baseline correction57 to minimize single-trial noise. Epochs were then identified as bad if the z-score of the ERSP epoch was larger than 3 standard deviations from the median of all ERSP epochs (see the power of this method in Figs. 7, 8 and 9). Approximately 6\(\%\) of the epochs were bad and removed based on their ERSP in the component space.

The RSC ERSP before and after noise removal. The RSC ERSP before and after noise removal at the participant level. The left figure shows the average ERSP for participants before noise removal. The right figure shows the average ERSP for participants after noise removal at: (A) one egocentric participant; and (B) one allocentric participant.

Clustering

We first clustered the ICs based on the conventional EEGLAB k−means method. Then, we repeated the clustering process 10000 times before performing an evaluation to identify the best cluster of interest (COI) based on the region of interest (ROI) cluster centroid49. All ICs with an RV of less than 30\(\%\) for all participants were grouped based on their dipole locations (weight=6), ERSPs (weight=3), mean log spectra (weight=1), and scalp topography (weight=1). Then, the weighted IC measures were summed and compressed with principal component analysis (PCA), resulting in a 10-dimensional vector, followed by the k−mean method (with 25 clusters). The target cluster centroid in Talairach space (RSC, x=0, y=-45, z=10) was evaluated from 10000 clustering results based on the score of each cluster solution, including: (i) the number of participants (weight=2); (ii) ratio of the number of ICs per participant (weight=-3); (iii) cluster spreading (mean squared distance of each IC to the cluster centroid) (weight=-1); (iv) mean RV of the fitted dipoles (weight=-1); (v) distance of the cluster centroid to the ROI (weight=-3); and (vi) Mahalanobis distance to the median distribution of the 10000 solutions (weight=-1). The final COI score of -1.7 was derived from 15 participants, 29 ICs, a spread of 677, a mean residual variance (RV) of 11.76\(\%\), and a distance of 7.3 units in Talairach space. In the ERSP group-level analysis, the ERSP at COI was calculated first at the IC level, then at the participant level, and finally at the group level. The time-frequency data of all ICs of the same participant were averaged. Then, the ERSPs of all participants were averaged for the final ERSP at the group level. The statistical test for ERSPs was performed by a permutation test with 2000 permutations and a multiple comparison correction using the false discovery rate (FDR, \(\alpha\)=0.05).

Effective brain connectivity

We further evaluated the effect of the muscle cluster activity on the brain cluster activity. First, the ERSP of some brain clusters was plotted (Fig. 8). We then used the Source of Information Flow Toolbox (SIFT) from the EEGLAB-compatible toolbox to estimate the effective connectivity between clusters58. The multi-channel EEG data was modeled by vector autoregressive (VAR) with the model order p. Then, the Akaike information criterion (AIC) was used to evaluate the model fitting parameter p. Next, the model fitting was validated by three tests (i) whiteness test, (ii) percentage of consistency, and (iii) stationary59. And finally, the granger causality was calculated to estimate the information flow between clusters (Fig. 9).

References

McNaughton, B. L., Battaglia, F. P., Jensen, O., Moser, E. I. & Moser, M.-B. Path integration and the neural basis of the’cognitive map’. Nat. Rev. Neurosci. 7, 663 (2006).

Doeller, C. F., Barry, C. & Burgess, N. Evidence for grid cells in a human memory network. Nature 463, 657–661 (2010).

Ekstrom, A. D. et al. Cellular networks underlying human spatial navigation. Nature 425, 184 (2003).

Epstein, R. A. Parahippocampal and retrosplenial contributions to human spatial navigation. Trends Cognit. Sci. 12, 388–396 (2008).

Vann, S. D., Aggleton, J. P. & Maguire, E. A. What does the retrosplenial cortex do?. Nat. Rev. Neurosci. 10, 792–802 (2009).

Clark, B. J., Bassett, J. P., Wang, S. S. & Taube, J. S. Impaired head direction cell representation in the anterodorsal thalamus after lesions of the retrosplenial cortex. J. Neurosci. 30, 5289–5302 (2010).

Bellmund, J. L., Deuker, L., Schröder, T. N. & Doeller, C. F. Grid-cell representations in mental simulation. Elife 5, e17089 (2016).

Vass, L. K. & Epstein, R. A. Common neural representations for visually guided reorientation and spatial imagery. Cerebral Cortex 27, 1457–1471 (2017).

Chadwick, M. J., Jolly, A. E., Amos, D. P., Hassabis, D. & Spiers, H. J. A goal direction signal in the human entorhinal/subicular region. Current Biol. 25, 87–92 (2015).

Shine, J. P., Valdés-Herrera, J. P., Hegarty, M. & Wolbers, T. The human retrosplenial cortex and thalamus code head direction in a global reference frame. J. Neurosci. 36, 6371–6381 (2016).

Baumann, O. & Mattingley, J. B. Medial parietal cortex encodes perceived heading direction in humans. J. Neurosci. 30, 12897–12901 (2010).

Marchette, S. A., Vass, L. K., Ryan, J. & Epstein, R. A. Anchoring the neural compass: coding of local spatial reference frames in human medial parietal lobe. Nat. Neurosci. 17, 1598 (2014).

Brandon, M. P. et al. Reduction of theta rhythm dissociates grid cell spatial periodicity from directional tuning. Science 332, 595–599 (2011).

Koenig, J., Linder, A. N., Leutgeb, J. K. & Leutgeb, S. The spatial periodicity of grid cells is not sustained during reduced theta oscillations. Science 332, 592–595 (2011).

Maidenbaum, S., Miller, J., Stein, J. M. & Jacobs, J. Grid-like hexadirectional modulation of human entorhinal theta oscillations. Proc. Natl. Acad. Sci. 115, 10798–10803 (2018).

Winter, S. S., Clark, B. J. & Taube, J. S. Disruption of the head direction cell network impairs the parahippocampal grid cell signal. Science 347, 870–874 (2015).

Gramann, K. et al. Human brain dynamics accompanying use of egocentric and allocentric reference frames during navigation. J. Cognit. Neurosci. 22, 2836–2849 (2010).

Lin, C.-T., Chiu, T.-C. & Gramann, K. EEG correlates of spatial orientation in the human retrosplenial complex. NeuroImage 120, 123–132 (2015).

Lin, C.-T., Chiu, T.-C., Wang, Y.-K., Chuang, C.-H. & Gramann, K. Granger causal connectivity dissociates navigation networks that subserve allocentric and egocentric path integration. Brain Res. 1679, 91–100 (2017).

Cullen, K. E. & Taube, J. S. Our sense of direction: progress, controversies and challenges. Nat. Neurosci. 20, 1465 (2017).

Gramann, K. Embodiment of spatial reference frames and individual differences in reference frame proclivity. Spatial Cognit. Comput. 13, 1–25 (2013).

Becker, W., Nasios, G., Raab, S. & Jürgens, R. Fusion of vestibular and podokinesthetic information during self-turning towards instructed targets. Exp. Brain Res. 144, 458–474 (2002).

Frissen, I., Campos, J. L., Souman, J. L. & Ernst, M. O. Integration of vestibular and proprioceptive signals for spatial updating. Exp. Brain Res. 212, 163 (2011).

Jürgens, R. & Becker, W. Perception of angular displacement without landmarks: evidence for bayesian fusion of vestibular, optokinetic, podokinesthetic, and cognitive information. Exp. Brain Res. 174, 528–543 (2006).

Telford, L., Howard, I. P. & Ohmi, M. Heading judgments during active and passive self-motion. Exp. Brain Res. 104, 502–510 (1995).

Stackman, R. W., Golob, E. J., Bassett, J. P. & Taube, J. S. Passive transport disrupts directional path integration by rat head direction cells. J. Neurophysiol. 90, 2862–2874 (2003).

Ehinger, B. V. et al. Kinesthetic and vestibular information modulate alpha activity during spatial navigation: a mobile EEG study. Front. Human Neurosci. 8, 71 (2014).

Bohbot, V. D., Copara, M. S., Gotman, J. & Ekstrom, A. D. Low-frequency theta oscillations in the human hippocampus during real-world and virtual navigation. Nat. Commun. 8, 14415 (2017).

Gramann, K. et al. Cognition in action: imaging brain/body dynamics in mobile humans. Rev. Neurosci. 22, 593–608 (2011).

Gramann, K., Jung, T.-P., Ferris, D. P., Lin, C.-T. & Makeig, S. Toward a new cognitive neuroscience: modeling natural brain dynamics. Front. Human Neurosci. 8, 444 (2014).

Makeig, S., Gramann, K., Jung, T.-P., Sejnowski, T. J. & Poizner, H. Linking brain, mind and behavior. Int. J. Psychophysiol. 73, 95–100 (2009).

Lin, C.-T. & Do, T.-T.N. Direct-sense brain-computer interfaces and wearable computers. IEEE Trans. Syst. Man Cybern. Syst 51, 298–312 (2021).

R Core Team. R: A Language and Environment for Statistical Computing. R Foundation for Statistical Computing, Vienna, Austria, 201 (2020).

Bates, D., Mächler, M., Bolker, B. & Walker, S. Fitting linear mixed-effects models using lme4. J. Stat. Softw. 67, 1–48 (2015).

Kuznetsova, A. et al. lmertest package: tests in linear mixed effects models. J. Stat. Softw. 82, 1–26 (2017).

Goeke, C. et al. Cultural background shapes spatial reference frame proclivity. Sci. Rep. 5, 11426 (2015).

Jungnickel, E., Gehrke, L., Klug, M. & Gramann, K. Mobi–mobile brain/body imaging. In Neuroergonomics, 59–63 (Elsevier, 2019).

Gramann, K., Müller, H. J., Eick, E.-M. & Schönebeck, B. Evidence of separable spatial representations in a virtual navigation task. J. Exp. Psychol. Human Percept. Perform. 31, 1199 (2005).

Lappe, M., Jenkin, M. & Harris, L. R. Travel distance estimation from visual motion by leaky path integration. Exp. Brain Res. 180, 35–48 (2007).

Harris, M. A. & Wolbers, T. Ageing effects on path integration and landmark navigation. Hippocampus 22, 1770–1780 (2012).

Seeber, M. et al. Subcortical electrophysiological activity is detectable with high-density EEG source imaging. Nat. Commun. 10, 1–7 (2019).

Chiu, T.-C. et al. Alpha modulation in parietal and retrosplenial cortex correlates with navigation performance. Psychophysiology 49, 43–55 (2012).

Chen, X., DeAngelis, G. C. & Angelaki, D. E. Flexible egocentric and allocentric representations of heading signals in parietal cortex. Proc. Natl. Acad. Sci. 115, E3305–E3312 (2018).

Zugaro, M. B., Tabuchi, E., Fouquier, C., Berthoz, A. & Wiener, S. I. Active locomotion increases peak firing rates of anterodorsal thalamic head direction cells. J. Neurophysiol. 86, 692–702 (2001).

Bassett, J. P. et al. Passive movements of the head do not abolish anticipatory firing properties of head direction cells. J. Neurophysiol. 93, 1304–1316 (2005).

Taube, J. S. Head direction cells recorded in the anterior thalamic nuclei of freely moving rats. Journal of Neuroscience 15, 70–86 (1995).

Knierim, J. J., Kudrimoti, H. S. & McNaughton, B. L. Place cells, head direction cells, and the learning of landmark stability. J. Neurosci. 15, 1648–1659 (1995).

Muir, G. M. et al. Disruption of the head direction cell signal after occlusion of the semicircular canals in the freely moving chinchilla. J. Neurosci. 29, 14521–14533 (2009).

Gramann, K., Hohlefeld, F. U., Gehrke, L. & Klug, M. Heading computation in the human retrosplenial complex during full-body rotation. BioRxiv 417972, (2018).

Plank, M., Müller, H. J., Onton, J., Makeig, S. & Gramann, K. Human EEG correlates of spatial navigation within egocentric and allocentric reference frames. In International Conference on Spatial Cognition, 191–206 (Springer, 2010).

Kim, M. & Maguire, E. A. Encoding of 3d head direction information in the human brain. Hippocampus 29, 619–629 (2019).

Kothe, C. Lab streaming layer (LSL). (2014).

Delorme, A. & Makeig, S. EEGLAB: an open source toolbox for analysis of single-trial EEG dynamics including independent component analysis. J. Neurosci. Methods 134, 9–21 (2004).

Delorme, A., Palmer, J., Onton, J., Oostenveld, R. & Makeig, S. Independent EEG sources are dipolar. PloS one 7, 30135 (2012).

Palmer, J. A., Kreutz-Delgado, K. & Makeig, S. Super-Gaussian mixture source model for ICA. In International Conference on Independent Component Analysis and Signal Separation, 854–861 (Springer, 2006).

Oostenveld, R. & Oostendorp, T. F. Validating the boundary element method for forward and inverse EEG computations in the presence of a hole in the skull. Human Brain Map. 17, 179–192 (2002).

Grandchamp, R. & Delorme, A. Single-trial normalization for event-related spectral decomposition reduces sensitivity to noisy trials. Front. Psychol. 2, 236 (2011).

Delorme, A. et al. EEGLAB, SIFT, NFT, BCILAB, and ERICA: new tools for advanced EEG processing. Comput. Intell. Neurosci. (2011).

Do, T.-T. N., Wang, Y.-K. & Lin, C.-T. Increase in brain effective connectivity in multitasking but not in a high-fatigue state. IEEE Trans. Cognit. Develop. Syst. (2020).

Acknowledgements

This work was supported in part by the Australian Research Council (ARC) under discovery grant DP180100670, DP180100656, and DP210101093. Research was also sponsored in part by the Australia Defence Innovation Hub under Contract No. P18-650825, US Office of Naval Research Global under Cooperative Agreement Number ONRG - NICOP - N62909-19-1-2058, and AFOSR – DST Australian Autonomy Initiative agreement ID10134. We also thank the NSW Defence Innovation Network and NSW State Government of Australia for financial support in part of this research through grant DINPP2019 S1-03/09. We thank H.T. Chen and A.K. Singh for their useful discussions, C.A. Tirado for help with the virtual reality programming, and all the participants for giving their time for this study.

Author information

Authors and Affiliations

Contributions

T-T.N.D., K.G. and C-T.L. designed the experiment. T-T.N.D acquired and analyzed the data together with K.G. T-T.N.D., C-T.L and K.G. wrote the manuscript. All authors discussed the results and contributed edits to the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Do, TT.N., Lin, CT. & Gramann, K. Human brain dynamics in active spatial navigation. Sci Rep 11, 13036 (2021). https://doi.org/10.1038/s41598-021-92246-4

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-021-92246-4

This article is cited by

-

Multimodal fusion for anticipating human decision performance

Scientific Reports (2024)

-

Electrophysiological signatures of veridical head direction in humans

Nature Human Behaviour (2024)

-

Guiding Human Navigation with Noninvasive Vestibular Stimulation and Evoked Mediolateral Sway

Journal of Cognitive Enhancement (2024)

-

Investigating the different domains of environmental knowledge acquired from virtual navigation and their relationship to cognitive factors and wayfinding inclinations

Cognitive Research: Principles and Implications (2023)

-

Mobile cognition: imaging the human brain in the ‘real world’

Nature Reviews Neuroscience (2023)

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.