Abstract

Obesity is a serious medical condition highly associated with health problems such as diabetes, hypertension, and stroke. Obesity is also highly associated with negative emotional states, but the relationship between obesity and emotional states has not been fully explored with neuroimaging. We obtained emotion task functional magnetic resonance imaging (t-fMRI) data from 196 participants in the Human Connectome Project database using a sampling scheme similar to a bootstrapping approach. Brain regions were specified by the automated anatomical labeling atlas, and the brain activity (z-statistics) of each brain region was correlated with body mass index (BMI) values. Regions with significant correlation were identified, and the brain activity of the identified regions was correlated with emotion-related clinical scores. The hippocampus, amygdala, and inferior temporal gyrus consistently showed significant correlation between brain activity and BMI, and only the brain activity in the amygdala consistently showed significant negative correlation with the fear-affect score. The brain activity in the amygdala derived from t-fMRI might be a good neuroimaging biomarker for explaining the relationship between obesity and a negative emotional state.

Introduction

Obesity is defined as a state of excessive accumulation of body fat, and it is a worldwide issue affecting billions of people because it can cause health problems such as diabetes, hypertension, and stroke1. In addition to physical problems, strong links between obesity and emotional states have been demonstrated in previous studies2,3,4. Using a questionnaire, Ozier et al. found a strong correlation between obesity and emotion- and stress-induced eating behaviors2. Previous studies found that feelings of anger, loneliness, and disgust were highly linked to eating disorders and obesity, and thus negative emotional states should be managed properly to prevent and treat eating disorders and obesity3, 4. These studies indicated that negative emotional states were related to obesity, and they emphasized the need to understand the cause of psychological problems that affect obesity2,3,4.

The previous non-neuroimaging studies largely depended on questionnaires, which are subject to large individual-level variations. Neuroimaging analysis can provide quantitative information on brain function and thus can complement existing non-neuroimaging studies5,6,7,8. It has been shown that emotional states such as fear, anger, and sadness are highly associated with changes in brain activity5, 6. Previous studies adopted the functional magnetic resonance imaging (fMRI) technique and found altered activations in the amygdala, insula, prefrontal cortex, anterior cingulate cortex, and hippocampus during emotional stimuli5, 6. Recent obesity-related studies have utilized neuroimaging to detect structural or functional brain alterations in people with obesity compared to people with healthy weight7, 8. In these studies, reward-, emotion-, and cognitive control-related brain regions (i.e., orbitofrontal cortex, amygdala, hippocampus, and insula) showed significant functional differences between people with healthy weight and obesity7, 8. However, the finding that emotion-related brain regions differ between people with healthy weight and obesity does not imply that the brain activity in those regions is directly related to emotional states. Most existing obesity-related neuroimaging studies aimed to find brain regions whose activity differed between people with healthy weight and obesity, and they did not link emotional states with regional brain activity, which this study aimed to accomplish7, 8. As there is a scarcity of neuroimaging studies that quantitatively link brain function and emotional states in people with obesity, we did not have an a priori hypothesis regarding which brain regions to explore. In this study, we adopted neuroimaging analysis to reveal associative links between brain activity and emotion scores for brain regions selected from a set of regions that were related to obesity.

In this study, we used emotion task fMRI (t-fMRI) and hypothesized that regional changes in brain activity would be linked with emotional states. The emotion task was designed to measure the ability to recognize visual stimuli of angry or fearful facial expressions9,10,11. We first sought to find regional imaging features related to obesity. We then quantitatively linked the identified neuroimaging features with negative emotional states measured using the NIH toolbox12, 13.

Results

Identification of obesity-related regions from brain activity

A total of 196 participants who performed the emotion task10, 11 were randomly selected so that sample size and gender ratio were matched among the groups of healthy weight (HW), overweight (OW), class 1 obesity (OB1), and class 2 or 3 obesity (OB23), using a sampling scheme similar to a bootstrapping approach repeated 1,000 times. Participant-level brain activity during the emotion task was measured using FSL software (see Methods section)14. The brain activity was quantified using z-statistics, which were treated as quantitative imaging features. Brain regions were specified by the automated anatomical labeling (AAL) atlas via image registration15. The brain regions that consistently showed significant correlation (mean p < 0.05, false discovery rate (FDR) corrected) across the 1,000 sets of samples were the left hippocampus, amygdala, and inferior temporal gyrus (mean r = −0.2240, mean p = 0.0322; mean r = −0.2017, mean p = 0.0468; mean r = −0.2180, mean p = 0.0406, FDR corrected, respectively) (Fig. 1). The correlation between brain activity and BMI was negative, which implied that the brain activity in people with healthy weight was higher than that in people with obesity.
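The region-selection rule above (FDR-corrected p-values averaged over resamples, then thresholded at 0.05) can be sketched as follows. This is a minimal illustration, assuming the Benjamini-Hochberg procedure for the FDR step; the text does not state which FDR procedure was used, and the function names `bh_adjust` and `consistent_regions` are hypothetical.

```python
def bh_adjust(pvals):
    """Benjamini-Hochberg adjusted p-values (assumed FDR procedure)."""
    m = len(pvals)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def consistent_regions(adjusted_p_by_region, alpha=0.05):
    """Keep regions whose mean adjusted p-value over resamples is < alpha.

    adjusted_p_by_region maps a region name to its list of FDR-corrected
    p-values, one entry per resample (1,000 entries in the study).
    """
    return [
        name
        for name, ps in adjusted_p_by_region.items()
        if sum(ps) / len(ps) < alpha
    ]
```

In use, `bh_adjust` would be applied to the region-wise p-values of one resample, and `consistent_regions` to the adjusted values collected over all resamples.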

Figure 1. Brain regions that consistently showed significant correlation between brain activity features (z-statistics) and BMI across the 1,000 sets of samples. Histograms of the r- and p-values are shown in the upper rows, and 3D renderings of the identified regions are shown in the bottom row. The p-values are FDR corrected.

Associations between imaging features and emotion-related clinical scores

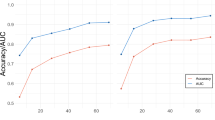

The brain activity of the identified regions was quantified using z-statistics, which were treated as quantitative imaging features. The brain activity features (z-statistics) were stacked across randomly selected participants and correlated with emotion-related clinical scores measured using the NIH toolbox, and this procedure was repeated 1,000 times (see Methods section)12, 13. Only the fear-affect score showed significant correlation with brain activity features in the amygdala (mean r = −0.1433, mean p = 0.0320, Holm-Bonferroni corrected) (Table 1 and Fig. 2). No brain activity features for the hippocampus or inferior temporal gyrus showed significant correlation with emotion-related clinical scores. We performed an additional correlation analysis between BMI and emotion-related clinical scores to determine whether BMI alone explained emotional states, but no emotion-related scores showed significant correlation with BMI (Table 1).

Brain activity in identified regions and obesity

We found a significant negative correlation between brain activity features in the amygdala and the fear-affect score. The results indicated that a person with stronger brain activity in the amygdala during emotion t-fMRI might feel less fear-affect than a person with weaker brain activity in the same region. We also found a negative correlation between brain activity in the amygdala and BMI (Fig. 1), which indicated that brain activity in the amygdala of people with obesity was lower than that in people with healthy weight. The results suggested that brain activity in the amygdala was weaker in people with obesity, and that this might be associated with increased susceptibility to fear.

Discussion

We explored differences in brain activity across the full range of BMI values using emotion t-fMRI. The brain activity in the hippocampus, amygdala, and inferior temporal gyrus showed significant correlation with BMI. The z-statistics extracted from the identified brain regions were used as imaging features to explain emotion-related clinical scores. The brain activity features (z-statistics) were correlated with emotion-related clinical scores, and only the features of the amygdala showed significant correlation with the fear-affect score. The brain activity of the hippocampus and inferior temporal gyrus did not show significant correlation with clinical scores.

It has been shown that the amygdala plays an important role in emotional recognition, especially the expression of the fear response9, 16, 17. A previous study observed increased brain activity in the amygdala under fear conditioning, and patients with a damaged amygdala showed less response to the conditioned fear18. The amygdala is also related to obesity as well as emotional processing9, 19, 20. Holsen et al. found increased activation in the amygdala to food stimuli, and King et al. found an association between dysfunction in the amygdala and excessive weight gain19, 20. Our results demonstrated that only the left, not right, amygdala showed significant correlation with the fear-affect score. The amygdala is known to show functional asymmetry between the left and right hemispheres. The left amygdala is more engaged in processing of fearful stimuli than the right amygdala21,22,23,24,25. Breiter et al. found significant brain activity changes in the left amygdala when an individual watched fearful faces23, and Morris et al. found increased brain activity in the left amygdala when fearful faces were presented compared to happy faces24, 25. Our results corroborated these previous studies21,22,23,24,25.

The hippocampus is widely regarded as an important region in cognitive dysfunction and dementia, but recent studies have indicated that structural and functional alterations of the hippocampus are highly related to obesity26,27,28,29,30,31. Smaller hippocampal volumes were found in obese adolescents with metabolic syndrome, and a strong relationship between midlife obesity and hippocampal atrophy was identified28, 29. A previous study demonstrated that dysfunction in the hippocampus is highly associated with excessive food intake, which might lead to weight gain32. A genetic study indicated that expression of SIRT1, one of the memory-associated genes, was suppressed in the hippocampus in people with obesity, which led to impairment in memory27.

The inferior temporal gyrus is known to be partly related to obesity33, 34. In previous studies, people with obesity showed increased cerebral blood flow in the temporal cortex compared to people with healthy weight, and significant brain activation in response to food stimuli was found in the inferior temporal gyrus33, 34. Previous studies showed that the hippocampus, amygdala, and inferior temporal gyrus were related to obesity and that the amygdala was also highly associated with emotional processing27,28,29, 35,36,37. The adopted stimuli and the direction of differential effects were not exactly the same as in our study, but the finding that the amygdala was related to both obesity and emotional states was partly consistent with our results.

Our study has a few limitations. First, the number of participants with class 3 obesity was insufficient. Future studies with larger samples of participants with class 3 obesity are necessary. Second, we used only t-fMRI. Multimodal imaging features derived from complementary imaging modalities such as rs-fMRI and diffusion tensor imaging might provide better information linking neuroimaging findings with emotional scores.

In summary, we identified brain regions that were significantly related to BMI using emotion t-fMRI. The hippocampus, amygdala, and inferior temporal gyrus showed significant correlation with BMI. Only brain activity in the amygdala, not in the hippocampus or inferior temporal gyrus, showed significant correlation with a negative emotional state, as measured by the fear-affect score. Our results might be used as a neuroimaging biomarker for future obesity and emotion-related studies.

Methods

Subjects and imaging data

The Institutional Review Board (IRB) of Sungkyunkwan University approved this study. Our study was performed in full accordance with local IRB guidelines. Informed consent was obtained from all participants. We obtained T1- and T2-weighted structural MRI and emotion t-fMRI data from the Human Connectome Project (HCP), an openly accessible research database38. The HCP team scanned all participants using a Siemens Skyra 3T scanner at Washington University in St. Louis. Imaging parameters of structural MRI were: voxel resolution = 0.7 mm isotropic; number of slices = 256; field of view (FOV) = 224 mm; repetition time (TR) = 2,400 ms for T1-weighted data and 3,200 ms for T2-weighted data; and echo time (TE) = 2.14 ms for T1-weighted data and 565 ms for T2-weighted data. Imaging parameters of emotion t-fMRI data were: voxel resolution = 2.0 mm isotropic; number of slices = 72; number of volumes = 176; TR = 720 ms; TE = 33.1 ms; and FOV = 208 × 180 mm. Subjects with drug ingestion or attention problems based on the Diagnostic and Statistical Manual IV (DSM-IV) were excluded39, 40. Twin subjects and participants with the same parents were excluded. The remaining participants were randomly sampled so that the groups of HW, OW, OB1, and OB23 had similar sample sizes and gender ratios, using a sampling scheme similar to a bootstrapping approach repeated 1,000 times. Each group had approximately 50 participants with an equal ratio of males to females. We treated BMI as a continuous variable; participants were grouped into HW, OW, OB1, and OB23 only to balance sample size and gender ratio. We matched the number of samples in each group because having disproportionately more participants in one group biases the results toward that group and hence increases type I error41. Following the WHO expert consultation42, the groups were defined as HW (18.5 ≤ BMI < 25), OW (25 ≤ BMI < 30), OB1 (30 ≤ BMI < 35), and OB23 (BMI ≥ 35). Detailed demographic information is in Table 2.
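The grouping and balanced sampling described above might be sketched as below. This is only an illustration under stated assumptions: the text does not say whether sampling was with or without replacement, so the sketch draws without replacement within each group-by-sex cell, and the names `bmi_group`, `balanced_sample`, and `per_cell` are hypothetical.

```python
import random
from collections import defaultdict


def bmi_group(bmi):
    """Map a BMI value to one of the four groups used for balancing."""
    if 18.5 <= bmi < 25:
        return "HW"
    if 25 <= bmi < 30:
        return "OW"
    if 30 <= bmi < 35:
        return "OB1"
    if bmi >= 35:
        return "OB23"
    return None  # BMI below 18.5 falls outside the four groups


def balanced_sample(participants, per_cell, rng):
    """Draw per_cell participants from every (group, sex) cell.

    participants: list of dicts with "bmi" and "sex" keys (assumed layout).
    Sampling is without replacement within a cell (an assumption).
    """
    cells = defaultdict(list)
    for person in participants:
        group = bmi_group(person["bmi"])
        if group is not None:
            cells[(group, person["sex"])].append(person)
    sample = []
    for key in sorted(cells):
        sample.extend(rng.sample(cells[key], per_cell))
    return sample


# One resample; the study repeated a comparable draw 1,000 times.
rng = random.Random(0)
pool = [
    {"bmi": bmi, "sex": sex}
    for bmi in (20.0, 21.5, 23.0, 26.0, 27.5, 29.0,
                31.0, 32.5, 34.0, 36.0, 38.0, 41.0)
    for sex in ("F", "M")
]
one_draw = balanced_sample(pool, per_cell=2, rng=rng)
```

Repeating the draw with different seeds yields the resampled sets on which the correlations are computed.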

Task paradigm

All participants performed the following emotion task10, 11. Three faces with either angry or fearful facial expressions were presented on a screen (Fig. 3a). One target face was presented on the top, and two probe faces were presented on the bottom. Participants were asked to select the probe face with the same emotional expression as the target face. The participants saw real human faces as shown in the illustration (Fig. 3a). The control task was the same as the emotion task except that faces were replaced with geometric shapes (Fig. 3b). The task paradigm was designed to match faces with the same emotional expression, not to differentiate between emotions. The emotion task paradigm consisted of three task blocks and three control blocks, each presented for 21 s. Each block consisted of six trials of 2 s of stimulus (face or shape) and 1 s of inter-trial interval (ITI). At the end of all blocks, an 8 s fixation block was presented (Fig. 3c).

Image preprocessing

We used preprocessed imaging data provided by the HCP database38, 43. Imaging data were preprocessed using FSL and FreeSurfer software14, 44. T1- and T2-weighted structural MRI data were processed as follows. Gradient nonlinearity and b0 distortions were corrected. T1- and T2-weighted data were registered to each other and averaged. Averaged structural data were aligned onto the Montreal Neurological Institute (MNI) standard space using rigid body transformation. Non-brain tissue was removed by warping the standard MNI brain mask to individual brain data. Magnetic field bias was corrected, and the data were registered onto the MNI standard space using nonlinear transformation.

Emotion t-fMRI data were processed as follows. Gradient nonlinearity distortion and head motion were corrected. Low-resolution fMRI data were registered onto high-resolution T1-weighted structural data and subsequently onto the MNI standard space. Bias field was corrected, and the skull was removed by applying the standard MNI brain mask to individual participant spaces. Intensity normalization with a mean value of 10,000 was applied to the entire 4D data. Artifacts of head motion, cardiac- and breathing-related contributions, white matter, and scanner-related artifacts were removed using FIX software45.

We performed the following additional processes. We divided t-fMRI data into several blocks to separate task and control states. To account for delays in the hemodynamic response, task blocks consisted of fMRI volumes from 6 s after task onset to 2 s after task offset46. The HCP database provided data with two distinct phase-encoding directions, “left-to-right” and “right-to-left.” The fMRI volumes for task blocks of the two phase-encoding directions were averaged using the 3dMean function in AFNI software47. Volumes of control blocks were likewise formed using the average of both phase-encoding directions. Task blocks and control blocks were concatenated using the fslmerge function in FSL software14.
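The block extraction step can be illustrated with a short sketch that converts block timing into volume indices. It assumes the window runs from 6 s after block onset to 2 s after block offset, uses the TR of 0.72 s and the 176 volumes reported above, and counts volumes from zero; the function names are hypothetical, and the averaging helper only stands in for the voxel-wise mean that 3dMean computes on real images.

```python
import math

TR_SECONDS = 0.72   # repetition time of the emotion t-fMRI data
N_VOLUMES = 176     # number of volumes per run


def block_volume_indices(onset_s, duration_s, lag_start_s=6.0, lag_end_s=2.0):
    """Zero-based volume indices kept for one block.

    The window is shifted to allow for the hemodynamic delay: it starts
    lag_start_s after onset and ends lag_end_s after offset (assumed reading).
    """
    first = math.ceil((onset_s + lag_start_s) / TR_SECONDS)
    last = math.floor((onset_s + duration_s + lag_end_s) / TR_SECONDS)
    last = min(last, N_VOLUMES - 1)  # never index past the end of the run
    return list(range(first, last + 1))


def average_phase_encodings(left_to_right, right_to_left):
    """Element-wise mean of two equally long value lists (toy stand-in)."""
    return [(a + b) / 2.0 for a, b in zip(left_to_right, right_to_left)]


# A 21 s block starting at t = 0 s keeps volumes 9 through 31.
indices = block_volume_indices(onset_s=0.0, duration_s=21.0)
```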

Task fMRI analysis

Participant-level analysis was conducted using the FEAT framework in FSL software14. A high-pass filter with a cutoff of 200 s and spatial smoothing with a full width at half maximum (FWHM) of 4 mm were applied. Two kinds of contrasts were considered. The first was activation of BOLD signals in the task compared to the control state, and the second was deactivation. A general linear model was constructed to estimate effect sizes as β coefficients. The time series of a voxel was the dependent variable, and a design matrix built from the task onset times and durations was the independent variable. The participant-level contrast of parameter estimate (COPE) was calculated as the linear combination of the contrast weight vector and the estimated effect sizes. The t-statistics map was computed by dividing the COPE by its standard deviation, and it was transformed to a z-statistics map. Brain regions were specified by the AAL atlas, and the z-statistics maps of all subjects were used to compute regional brain activity15. The regional brain activity was the spatial average of activations within a given region. We then correlated regional brain activity with BMI, and brain regions that showed significant correlation (p < 0.05, FDR corrected) were regarded as significant regions related to obesity.
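As a rough illustration of the two computations in this paragraph, the sketch below fits a single-regressor linear model to one voxel time series and averages a z-map within atlas regions. It is a simplification, not the FEAT implementation: it uses a plain boxcar regressor without hemodynamic response convolution or prewhitening, stops at the t-statistic, and the names `glm_boxcar` and `regional_means` are hypothetical.

```python
import math


def glm_boxcar(signal, boxcar):
    """Ordinary least squares fit of signal = intercept + beta * boxcar.

    Returns (beta, t), where t is beta divided by its standard error.
    With a single task regressor, beta plays the role of the COPE for
    the task-versus-control contrast.
    """
    n = len(signal)
    mean_x = sum(boxcar) / n
    mean_y = sum(signal) / n
    sxx = sum((x - mean_x) ** 2 for x in boxcar)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(boxcar, signal))
    beta = sxy / sxx
    intercept = mean_y - beta * mean_x
    sse = sum((y - intercept - beta * x) ** 2 for x, y in zip(boxcar, signal))
    standard_error = math.sqrt(sse / (n - 2) / sxx)
    return beta, beta / standard_error


def regional_means(z_values, atlas_labels):
    """Average z-statistics within each atlas label (label 0 = background)."""
    sums, counts = {}, {}
    for z, label in zip(z_values, atlas_labels):
        if label == 0:
            continue
        sums[label] = sums.get(label, 0.0) + z
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


# Toy voxel: control (0) and task (1) volumes with a 2-unit signal change.
beta, t_stat = glm_boxcar(
    [1.0, 1.2, 3.1, 2.9, 0.9, 1.1, 3.0, 3.2],
    [0, 0, 1, 1, 0, 0, 1, 1],
)
```

The deactivation contrast corresponds to the negated estimate, and the regional averages are the features that are subsequently correlated with BMI.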

Linking imaging features and emotion-related clinical scores

Emotion-related clinical scores were measured using the NIH toolbox12, 13. The emotion domain of the toolbox contained four subdomains: negative affect, psychological well-being, stress and self-efficacy, and social relationships12, 13. Our study was exploratory with regard to which negative emotion to focus on, and thus we chose to correlate our neuroimaging findings with the available negative emotion scores in the negative affect subdomain of the NIH toolbox, with stringent multiple comparison correction. The negative affect subdomain includes anger, fear, and sadness. Anger comprises attitudes of hostility and criticism, and it includes three sub-components: (1) anger as an emotion (anger-affect), (2) anger as a cynical attitude (anger-hostility), and (3) anger as a behavior (anger-physical aggression). Fear is a symptom of anxiety and perception of threat, and it includes two sub-components: (1) psychological emotion of fear and anxiety (fear-affect) and (2) somatic symptoms (fear-somatic arousal). Sadness is a state of low levels of positive affect such as poor mood or depression. Detailed score-related information is reported in Table 2. Identified imaging features of all participants were linearly correlated with emotion-related clinical scores, and the quality of the correlation was assessed using r- and p-value statistics. P-values were corrected using the Holm-Bonferroni method48. The behavioral tests of the NIH toolbox and the emotion task fMRI scan were completed on the same day so that the clinical scores reflected states that might correlate with the fMRI scan data10, 38, 49.
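The Holm-Bonferroni step-down correction named above can be written in a few lines. The sketch below is a generic implementation of that procedure; the function name `holm_adjust` and the example p-values are illustrative and are not taken from the study.

```python
def holm_adjust(pvals):
    """Holm-Bonferroni adjusted p-values, returned in the input order."""
    m = len(pvals)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        # The k-th smallest p-value is multiplied by (m - k + 1), capped at 1,
        # and adjusted values are kept non-decreasing along the sorted order.
        candidate = min(1.0, (m - rank) * pvals[i])
        running_max = max(running_max, candidate)
        adjusted[i] = running_max
    return adjusted


# Illustrative raw p-values for three hypothetical score correlations.
corrected = holm_adjust([0.01, 0.04, 0.03])
```

A correlation is then declared significant when its adjusted p-value is below 0.05.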

References

Raji, C. A. et al. Brain Structure and Obesity. Hum Brain Mapp 31, 353–364 (2010).

Ozier, A. D. et al. Overweight and Obesity Are Associated with Emotion- and Stress-Related Eating as Measured by the Eating and Appraisal Due to Emotions and Stress Questionnaire. J. Am. Diet. Assoc. 108, 49–56 (2008).

Zeeck, A., Stelzer, N., Linster, H. W., Joos, A. & Hartmann, A. Emotion and eating in binge eating disorder and obesity. Eur. Eat. Disord. Rev. 19, 426–437 (2011).

Edman, J. L., Yates, A., Aruguete, M. S. & DeBord, K. A. Negative emotion and disordered eating among obese college students. Eat. Behav. 6, 308–317 (2005).

Lindquist, K. A., Wager, T. D., Kober, H., Bliss-Moreau, E. & Barrett, L. F. The brain basis of emotion: A meta-analytic review. Behav. Brain Sci. 35, 121–202 (2012).

Nummenmaa, L. et al. Emotions promote social interaction by synchronizing brain activity across individuals. Proc. Natl. Acad. Sci. 109, 9599–9604 (2012).

Carnell, S., Gibson, C., Benson, L., Ochner, C. N. & Geliebter, A. Neuroimaging and obesity: current knowledge and future directions. Obes Rev. 13, 43–56 (2012).

Lips, M. A. et al. Resting-state functional connectivity of brain regions involved in cognitive control, motivation, and reward is enhanced in obese females. Am. J. Clin. Nutr. 100, 524–531 (2014).

Adolphs, R. Neural systems for recognizing emotion. Curr. Opin. Neurobiol. 12, 169–177 (2002).

Barch, D. M. et al. Function in the human connectome: Task-fMRI and individual differences in behavior. Neuroimage 80, 169–189 (2013).

Hariri, A. R. et al. Serotonin Transporter Genetic Variation and the Response of the Human Amygdala. Science 297, 400–403 (2002).

Salsman, J. M. et al. EMOTION assessment using the NIH Toolbox. Neurology 80, S76–86 (2013).

Gershon, R. C. et al. NIH toolbox for assessment of neurological and behavioral function. Neurology 80, S2–6 (2013).

Jenkinson, M., Beckmann, C. F., Behrens, T. E. J., Woolrich, M. W. & Smith, S. M. FSL. Neuroimage 62, 782–790 (2012).

Tzourio-Mazoyer, N. et al. Automated anatomical labeling of activations in SPM using a macroscopic anatomical parcellation of the MNI MRI single-subject brain. Neuroimage 15, 273–289 (2002).

Davis, M. The role of the amygdala in fear and anxiety. Annu. Rev. Neurosci. 15, 353–375 (1992).

Duvarci, S. & Pare, D. Amygdala microcircuits controlling learned fear. Neuron 82, 966–980 (2014).

Phelps, E. A. & LeDoux, J. E. Contributions of the amygdala to emotion processing: From animal models to human behavior. Neuron 48, 175–187 (2005).

King, B. M., Cook, J. T., Rossiter, K. N. & Rollins, B. L. Obesity-inducing amygdala lesions: examination of anterograde degeneration and retrograde transport. Am. J. Physiol. Regul. Integr. Comp. Physiol. 284, R965–982 (2002).

Holsen, L. M. et al. Neural mechanisms underlying food motivation in children and adolescents. Neuroimage 27, 669–676 (2005).

Markowitsch, H. J. Differential Contribution of Right and Left Amygdala to Affective Information Processing. Behav. Neurol. 11, 233–244 (1999).

Davidson, R. J. & Irwin, W. The functional neuroanatomy of emotion and affective style. Trends Cogn. Sci. 3, 11–21 (1999).

Breiter, H. C. et al. Response and habituation of the human amygdala during visual processing of facial expression. Neuron 17, 875–887 (1996).

Morris, J. S. et al. A neuromodulatory role for the human amygdala in processing emotional facial expressions. Brain 121, 47–57 (1998).

Morris, J. S. et al. A differential neural response in the human amygdala to fearful and happy facial expressions. Nature 383, 812–815 (1996).

Nguyen, J. C. D., Killcross, A. S. & Jenkins, T. A. Obesity and cognitive decline: Role of inflammation and vascular changes. Front. Neurosci. 8, 1–9 (2014).

Heyward, F. D. et al. Obesity Weighs down Memory through a Mechanism Involving the Neuroepigenetic Dysregulation of Sirt1. J. Neurosci. 36, 1324–1335 (2016).

Yau, P. L., Castro, M. G., Tagani, A., Tsui, W. H. & Convit, A. Obesity and Metabolic Syndrome and Functional and Structural Brain Impairments in Adolescence. Pediatrics 130, e856–e864 (2012).

Debette, S. et al. Midlife vascular risk factor exposure accelerates structural brain aging and cognitive decline. Neurology 77, 461–468 (2011).

Lee, S.-H., Seo, J., Lee, J. & Park, H. Differences in early and late mild cognitive impairment tractography using a diffusion tensor MRI. Neuroreport 25, 1393–1398 (2014).

Den Heijer, T. et al. A 10-year follow-up of hippocampal volume on magnetic resonance imaging in early dementia and cognitive decline. Brain 133, 1163–1172 (2010).

Davidson, T. L., Kanoski, S. E., Schier, L. A., Clegg, D. J. & Benoit, S. C. A Potential Role for the Hippocampus in Energy Intake and Body Weight Regulation. Curr Opin Pharmacol. 7, 613–636 (2007).

Cornier, M. A. et al. Effects of overfeeding on the neuronal response to visual food cues in thin and reduced-obese individuals. PLoS One 4, e6310 (2009).

Gautier, J. F. et al. Effect of satiation on brain activity in obese and lean women. Obes. Res. 9, 676–684 (2001).

Stranahan, A. M. et al. Diabetes impairs hippocampal function through glucocorticoid-mediated effects on new and mature neurons. Nat. Neurosci. 11, 309–317 (2008).

Castanon, N., Luheshi, G. & Laye, S. Role of neuroinflammation in the emotional and cognitive alterations displayed by animal models of obesity. Front. Neurosci. 9, 1–14 (2015).

Guo, M., Huang, T.-Y., Garza, J. C., Chua, S. C. & Lu, X.-Y. Selective deletion of leptin receptors in adult hippocampus induces depression-related behaviours. Int. J. Neuropsychopharmacol. 16, 857–867 (2013).

Van Essen, D. C. et al. The WU-Minn Human Connectome Project: an overview. Neuroimage 80, 62–79 (2013).

American Psychiatric Association. Diagnostic and Statistical Manual of Mental Disorders. (American Psychiatric Press, Washington, DC 1994).

Achenbach, T. M. & Rescorla, L. A. Manual for the ASEBA Adult Forms & Profiles. (Burlington, VT: University of Vermont, Research Center for Children, Youth, & Families 2003).

Rusticus, S. A. & Lovato, C. Y. Impact of Sample Size and Variability on the Power and Type I Error Rates of Equivalence Tests: A Simulation Study. Pract. Assessment, Res. Eval. 19, 1–10 (2014).

WHO expert consultation. Appropriate body-mass index for Asian populations and its implications for policy and intervention strategies. Lancet 363, 157–163 (2004).

Glasser, M. F. et al. The minimal preprocessing pipelines for the Human Connectome Project. Neuroimage 80, 105–124 (2013).

Fischl, B. FreeSurfer. Neuroimage 62, 774–781 (2012).

Salimi-Khorshidi, G. et al. Automatic denoising of functional MRI data: Combining independent component analysis and hierarchical fusion of classifiers. Neuroimage 90, 449–468 (2014).

Koshino, H. et al. Functional connectivity in an fMRI working memory task in high-functioning autism. Neuroimage 24, 810–21 (2005).

Cox, R. W. AFNI: Software for Analysis and Visualization of Functional Magnetic Resonance Neuroimages. Comput. Biomed. Res. 29, 162–173 (1996).

Holm, S. A Simple Sequentially Rejective Multiple Test Procedure. Scand. J. Stat. 6, 65–70 (1979).

HCP. WU-Minn HCP 900 Subjects Data Release: Reference Manual (2015).

Acknowledgements

This work was supported by the Institute for Basic Science (grant number IBS-R015-D1). This work was also supported by the National Research Foundation of Korea (grant numbers NRF-2016H1A2A1907833 and NRF-2016R1A2B4008545). Data were provided by the Human Connectome Project, WU-Minn Consortium (Principal Investigators: David Van Essen and Kamil Ugurbil; 1U54MH091657) funded by the 16 NIH Institutes and Centers that support the NIH Blueprint for Neuroscience Research and by the McDonnell Center for Systems Neuroscience at Washington University.

Contributions

B.P. and H.P. wrote the manuscript and researched data. J.H. aided the experiment. H.P. is the guarantor of this work and had full access to all the data in the study and takes responsibility for the integrity of the data and accuracy of the data analysis.

Ethics declarations

Competing Interests

The authors declare that they have no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Park, By., Hong, J. & Park, H. Neuroimaging biomarkers to associate obesity and negative emotions. Sci Rep 7, 7664 (2017). https://doi.org/10.1038/s41598-017-08272-8
