Abstract

The motion energy model is the standard account of motion detection in animals from beetles to humans. Despite this common basis, we show here that a difference in the early stages of visual processing between mammals and insects leads this model to make radically different behavioural predictions. In insects, early filtering is spatially lowpass, which makes the surprising prediction that motion detection can be impaired by “invisible” noise, i.e. noise at a spatial frequency that elicits no response when presented on its own as a signal. We confirm this prediction using the optomotor response of the praying mantis Sphodromantis lineola. This does not occur in mammals, where spatially bandpass early filtering means that linear systems techniques, such as deriving channel sensitivity from masking functions, remain approximately valid. Counter-intuitive effects such as masking by invisible noise may occur in neural circuits wherever a nonlinearity is followed by a difference operation.

Introduction

Linear system analysis, first introduced in visual neuroscience decades ago1, 2, has been highly influential and continues to be successfully applied in several domains including contrast, disparity and motion perception3,4,5,6. This is despite the fact that neurons have many well-known nonlinearities. For example, nonlinearity is fundamental in accounting for our ability to perceive the direction of moving patterns7,8,9. Increasingly, contemporary models in neuroscience consist of a cascade of linear-nonlinear interactions10,11,12. At each stage of these models, inputs are pooled linearly and then processed with a nonlinear operator such as divisive normalization. It is therefore somewhat surprising that linear systems analyses work as well as they do.

In a linear system, noise injected at a frequency to which a sensory system does not respond has no effect on the system’s ability to detect a signal. This property is often taken for granted in the study of perception. For example, since humans cannot hear ultrasound, our ability to discriminate speech is not affected by the presence of ultrasound noise. In fact, our auditory system consists of frequency-selective and independently-operating linear channels so that even noise at frequencies to which we are sensitive may not affect our hearing performance, if the noise is not detected by the same channel as the signal13. Similar frequency-selective channels mediate the detection of contrast and motion in the visual system1, 5, 14,15,16. Whether a system consists of multiple channels or not, it may seem obvious that the performance of the system cannot be affected by noise at frequencies to which the system does not respond. However, this property does not necessarily hold in nonlinear systems. It is possible to build a nonlinear system that is unresponsive to signals at a particular frequency but whose performance is significantly affected if noise of that frequency is added to a signal. Although there are many well-understood nonlinearities in vision17,18,19,20,21,22,23,24,25, interactions of this kind are often ignored26,27,28,29. The prominent models in the domain of motion perception, for example, include well-known nonlinearities but are still assumed to not respond to noise outside their frequency sensitivity band30.

Here, we show that this assumption is not generally true for the standard models of motion perception. The nonlinearity of these models means that a moving signal at a highly visible frequency can be “masked” (made less detectable) by noise at frequencies outside the detector’s sensitivity band (i.e. invisible noise). So far, this effect has been neglected because it does not occur when the filtering prior to motion detection is spatially bandpass, as it is in mammals. Masking techniques in humans and other mammals could therefore be applied successfully while ignoring this effect. However, in insects, early filtering is lowpass, and so we predict that invisible noise will be able to obscure a moving signal.

To test this prediction, we measured the optomotor response of the praying mantis Sphodromantis lineola to drifting gratings with and without noise. We have previously shown that the optomotor response is most sensitive to gratings at around 0.03 cycles per degree (cpd) and is largely insensitive to signals below \(10^{-2}\) cpd31. However, we show here that noise as low as \(10^{-3}\) cpd, an order of magnitude lower, has the same effect as noise of the same amplitude presented at the optimal spatial frequency. This is quite different from published results in humans5, where noise has most effect when presented at spatial frequencies close to the optimal frequency of the relevant channel, and has no effect when presented at frequencies to which the organism is not sensitive. However, the same model structure correctly predicts the qualitatively different behaviour in the two species, reflecting a difference in early filtering. Thus, although we here reveal a profound difference in how noise affects motion perception in insects versus humans, we also show that this need not conflict with existing evidence that both species use a similar mechanism to compute visual motion.

Results

Modelling biological motion detection

The standard models of early biological motion detection are the Hassenstein-Reichardt Detector (Figure 1A), originally developed to describe behaviour in insects32, and the Motion Energy Model (Figure 1B), originally developed to explain human perception8. Both models use nonlinear operators to combine the outputs of several spatial and temporal filters and obtain a direction-sensitive measure of motion strength, known as motion energy, via a final opponent step (Figure 1). This opponent step ensures that they respond only to directional motion, and not to non-directional changes in luminance such as counterphase-modulation or flicker33.

Figure 1. Opponent energy models of motion detection. The Reichardt Detector (RD) and the Energy Model (EM) are two prominent opponent models in the literature of insect and mammalian motion detection. The two models are formally equivalent when the spatial and temporal filters are separable (as shown) and so their outputs and response properties are identical even though their structures are different. Both models use the outputs of several linear spatial and temporal filters (SF 1, SF 2, TF 1 and TF 2) to calculate two opponent terms and then subtract them to obtain a direction-sensitive measure of motion (opponent energy). Nonlinear processing is a fundamental ingredient of calculating motion energy and so both models include nonlinear operators before the opponency stage (multiplication in the RD and squaring in the EM). (Redrawn after Fig. 18 from ref. 8).

The two models are traditionally associated with particular early spatiotemporal filters. For the energy model, the filters are often taken to be building blocks for two quadrature pairs of oriented linear responses8. “Quadrature” here means that the pairs differ by π/2 in phase, e.g. one responds best to a moving light/dark edge while the other responds best to a light bar flanked by dark on each side. The attraction of the quadrature assumption is that it makes leftward and rightward responses to a simple moving grating constant, despite the temporal modulation of the stimulus. This is consistent with the behaviour of directionally-selective complex cells in primary visual cortex9, 34, which are assumed to be the physiological substrate of directionally-selective motion detection channels in humans35, although the phase-independence of complex cells is probably achieved by summing many inputs at a range of phases, rather than just two inputs in quadrature36. For the Reichardt detector, the spatial filters are usually assumed to be identical but displaced in space, reflecting the view that their correlates are two neighboring ommatidia in an insect’s eye37. These filter choices originate from the studies in insects and mammals where the models have their historical roots, but are not intrinsic features of the models themselves. In fact, although the circuits originally proposed for the motion energy and Reichardt detectors are structurally different (Figure 1), if the same spatiotemporally separable filters are used in each model, the output of the two models is mathematically identical8, 38,39,40. Critically, as Figure 1 shows, both models involve opponency, i.e. they compute the difference between motion energy in opposite directions, either explicitly or implicitly. Our discussion and conclusions will therefore apply to both models equally.

Opponency in motion perception

One feature of opponent energy models of motion detection is that the spatiotemporal filters and the detector itself as a unit may have different spatiotemporal tuning. This can be illustrated by considering the response of a motion energy detector to a single drifting sinusoidal grating with the contrast function

where x is the horizontal position of a point in the grating, t is time, \(f_T\) is temporal frequency, \(f_S\) is spatial frequency, β is the grating’s phase and C is contrast. Following the schematics in Figure 1, both models integrate this stimulus over space and then pass the results through temporal filters to generate the separable time responses

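where \(G_{T1}\), \(G_{T2}\), \(\varphi_{T1}\), \(\varphi_{T2}\) are the gains and phase responses of the temporal filters at the stimulus temporal frequency \(f_T\), and \(G_{S1}\), \(G_{S2}\), \(\varphi_{S1}\), \(\varphi_{S2}\) are the corresponding quantities for the spatial filters. In the energy model (Figure 1B), these signals are combined into distinct rightward and leftward terms:

\[\text{rightward energy} = (A + B')^2 + (A' - B)^2 \qquad (6)\]

\[\text{leftward energy} = (A - B')^2 + (A' + B)^2 \qquad (7)\]

that are subtracted to produce the model output: opponent energy. The Reichardt detector combines the separable responses differently (Figure 1A) but produces the same output (up to a scaling factor of 4):

\[AB' - A'B \qquad (8)\]
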
Figure 2 illustrates the Fourier spectrum of rightward, leftward and opponent energy for typical human and insect filters. The red and blue lines in Figure 2A,B mark the passband of rightward and leftward energies respectively (Equations 6 and 7). Figure 2A does this for filters designed to model human vision, while Figure 2B is for filters designed to model insect vision; see Methods for details. Figure 2C,D shows the opponent energy (defined as rightward minus leftward energy, Equation 8), which is the output of the motion detector.
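The factor of 4 relating the energy-model and Reichardt outputs can be checked numerically. The sketch below assumes only the rightward and leftward energy expressions quoted in the Figure 2 caption; the function names are hypothetical, and random numbers stand in for the separable responses A, A′, B and B′.

```python
import numpy as np

def rightward_energy(A, A2, B, B2):
    # (A + B')^2 + (A' - B)^2, where A2 and B2 stand for A' and B'
    return (A + B2) ** 2 + (A2 - B) ** 2

def leftward_energy(A, A2, B, B2):
    # (A - B')^2 + (A' + B)^2
    return (A - B2) ** 2 + (A2 + B) ** 2

def reichardt_output(A, A2, B, B2):
    # AB' - A'B
    return A * B2 - A2 * B

# Arbitrary values standing in for the four separable responses
rng = np.random.default_rng(0)
A, A2, B, B2 = rng.standard_normal((4, 1000))

opponent = rightward_energy(A, A2, B, B2) - leftward_energy(A, A2, B, B2)
print(np.allclose(opponent, 4 * reichardt_output(A, A2, B, B2)))
```

The squared terms cancel in the subtraction, leaving only the cross products, which is why the two circuits agree whenever they share the same separable filters.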

Figure 2. Spatiotemporal filter and opponent energy tuning in an opponent motion model. (A,B) Fourier spectra of rightward and leftward energies (\((A + B')^2 + (A' - B)^2\), Equation 6, and \((A - B')^2 + (A' + B)^2\), Equation 7) for example mammalian and insect opponent energy motion detectors (see Methods for details, Equations 18–22). The colored lines in each plot are 0.25 sensitivity contours. The Fourier spectra of leftward and rightward energies are very similar to the model’s filters in each case: spatially bandpass in mammals and low-pass in insects. (C,D) Opponent energy, \(AB' - A'B\), computed as the difference between rightward and leftward energies. In mammals, rightward and leftward responses do not overlap because the spatial filters are band-pass (panel A). In insects, the low-pass spatial filters cause an overlap between rightward and leftward responses (panel B) but this overlap is canceled at the opponency stage, making opponent energy insensitive to low spatial frequencies (panel D).

For mammals, early spatiotemporal filters are typically relatively narrow-band, with little response to DC30. The rightward and leftward energies are therefore also bandpass and clearly separated in Fourier space (Figure 2A), very similar to those of the input filters. The regions of Fourier space where the opponent energy is positive (bounded by solid contours in Figure 2C) are simply the same regions where there is rightward energy (bounded by red in Figure 2A), and similarly for negative/leftward (dotted in C, blue in A). Thus, there are no frequencies that elicit a strong response from the individual filters and not from the opponent model as a whole.

For insects, the situation is different. The two spatial inputs to a Reichardt detector are usually taken to be a pair of adjacent ommatidia41, 42, so the spatial filter is simply the angular sensitivity function of an ommatidium, which is lowpass, roughly Gaussian39, 43. Accordingly, as shown in Figure 2B, insects have substantial leftward and rightward energy responses at zero spatial frequency. Crucially, these are canceled out in the opponency step, meaning that the Reichardt detector as a whole does not respond to whole-field changes in brightness to which individual photoreceptors do respond. Thus the opponent energy terms are bandpass (Figure 2D). This means that, for insects, the spatiotemporal filters and the model itself as a unit may have different spatiotemporal tuning.

Mathematically, after substituting for the filter outputs in Equation 8 and simplifying, the output of the motion detector can be expressed as

where G is the product of the filter gains, \(G = G_{S1} G_{S2} G_{T1} G_{T2}\), and the ϕ are the phases of the filter responses, defined above in Equations 2–5. Since G is spacetime separable, it is not direction-selective. The direction-selectivity is created by the phase-difference terms. Since the filters are real, the filter phase is an odd function of frequency. This means that the energy is positive in the first and third Fourier quadrants and negative in the second and fourth, as shown in Figure 2C,D.
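The structure described here, a gain product multiplied by phase-difference terms, can be illustrated numerically. The sketch below is not the paper's derivation: it assumes a labelling of the separable responses that this text does not state (A and A′ from spatial filter 1, B and B′ from spatial filter 2, with unprimed responses passing through temporal filter 1 and primed responses through temporal filter 2), and all gains, phases and the contrast are arbitrary hypothetical values. Under that assumption the opponent output to a single grating is constant in time and equals the gain product times the sines of the spatial and temporal phase differences, with an overall sign that depends on the labelling.

```python
import numpy as np

def separable_responses(t, C, f_T, beta, G_S, phi_S, G_T, phi_T):
    """Responses of each spatial/temporal filter pairing to one drifting grating.

    Assumed labelling (hypothetical): A = S1*T1, A' = S1*T2, B = S2*T1, B' = S2*T2.
    Each linear filter scales the sinusoid by its gain and shifts it by its phase.
    """
    def resp(s, k):
        phase = 2 * np.pi * f_T * t + beta + phi_S[s] + phi_T[k]
        return C * G_S[s] * G_T[k] * np.cos(phase)
    return resp(0, 0), resp(0, 1), resp(1, 0), resp(1, 1)  # A, A', B, B'

t = np.linspace(0.0, 1.0, 500)
C, f_T, beta = 0.5, 8.0, 0.3            # arbitrary contrast, temporal frequency (Hz), phase
G_S, phi_S = (0.9, 0.7), (0.2, -0.4)    # arbitrary spatial gains and phases
G_T, phi_T = (1.0, 0.6), (0.0, -0.8)    # arbitrary temporal gains and phases

A, A2, B, B2 = separable_responses(t, C, f_T, beta, G_S, phi_S, G_T, phi_T)
output = A * B2 - A2 * B                # opponent output at every time point

G = G_S[0] * G_S[1] * G_T[0] * G_T[1]
closed_form = -C**2 * G * np.sin(phi_S[0] - phi_S[1]) * np.sin(phi_T[0] - phi_T[1])
print(np.allclose(output, closed_form))
```

The oscillating parts of AB′ and A′B are identical and cancel in the subtraction, so no temporal averaging is needed for a single grating; if either phase difference is zero the output vanishes regardless of the gains.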

The important point for our purposes is that the frequency tuning of the motion detector as a whole reflects both that of the filter gains G, and that of the phase-difference terms. For the Reichardt detector, the phase-difference terms make the motion detector spatially bandpass even though its spatial filters are lowpass. In the Reichardt detector, the spatial filters are identical but offset in position by a distance Δx, so the spatial phase-difference term in Equation 9 is \(\sin(2\pi f_S \Delta x)\) (see Supplementary Information). This term removes the response to the lowest frequencies, as we saw in Figure 2D.
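A minimal sketch of this point, assuming Gaussian spatial filters: the detector's spatial tuning is taken as the product of the two identical lowpass gains and the phase-difference term. `DX` and `SIGMA` are hypothetical values chosen so that the tuning peaks near 0.03 cpd; they are not the parameters given in Methods, and the temporal terms are omitted.

```python
import numpy as np

DX = 6.0      # hypothetical offset between the two spatial filters (deg)
SIGMA = 3.0   # hypothetical standard deviation of each Gaussian filter (deg)

def filter_gain(f_s):
    # Fourier amplitude of a unit-area Gaussian of width SIGMA: spatially lowpass
    return np.exp(-2.0 * (np.pi * SIGMA * f_s) ** 2)

def detector_tuning(f_s):
    # product of the two identical gains and the term sin(2*pi*f_S*dx)
    return filter_gain(f_s) ** 2 * np.sin(2.0 * np.pi * f_s * DX)

f_s = np.geomspace(0.001, 0.08, 201)    # spatial frequency (cpd)
gain = filter_gain(f_s)
tuning = detector_tuning(f_s)
peak_frequency = f_s[np.argmax(tuning)]

print(f"gain at {f_s[0]} cpd: {gain[0]:.3f}")
print(f"tuning at {f_s[0]} cpd relative to peak: {tuning[0] / tuning.max():.3f}")
print(f"tuning peaks near {peak_frequency:.3f} cpd")
```

At the lowest frequency the filter gain is essentially 1 while the detector tuning is a small fraction of its peak, which is the mismatch between filter and detector passbands that the rest of the argument relies on.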

In the energy model, the spatial filters are usually taken to be bandpass functions like Gabors or derivatives of Gaussians, differing in their phase but not position. For such functions, the phase difference is independent of frequency, so the phase-difference terms in Equation 9 just contribute an overall scaling and the spatial frequency tuning of the motion detector is determined solely by the spatial filter gains (see Supplementary Information). We shall show that this difference in the bandwidth of their spatial filters means that the energy model and Reichardt detector are affected very differently by motion noise, despite the fact that the model architecture is mathematically identical.
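The contrast between the two filter arrangements can be checked with a numerical Fourier integral. The sketch below uses hypothetical filter parameters (an even/odd Gabor pair centred on 3 cpd, and two Gaussians offset by 6 deg); it illustrates the phase behaviour only and is not the filter set from Methods.

```python
import numpy as np

def fourier_component(profile, x, f):
    """Numerical Fourier integral of a spatial profile at frequency f (cpd)."""
    dx = x[1] - x[0]
    return np.sum(profile * np.exp(-2j * np.pi * f * x)) * dx

def phase_difference(p1, p2, x, freqs):
    # phase of filter 1 minus phase of filter 2 at each frequency
    return np.array([np.angle(fourier_component(p1, x, f) *
                              np.conj(fourier_component(p2, x, f)))
                     for f in freqs])

# Energy-model style pair: even and odd Gabors at the same position (hypothetical)
x_g = np.arange(-3.0, 3.0, 0.001)
envelope = np.exp(-x_g**2 / (2 * 0.25**2))
even = envelope * np.cos(2 * np.pi * 3.0 * x_g)
odd = envelope * np.sin(2 * np.pi * 3.0 * x_g)
gabor_freqs = np.linspace(1.5, 6.0, 10)
gabor_diff = phase_difference(even, odd, x_g, gabor_freqs)

# Reichardt style pair: identical Gaussians offset by DX (hypothetical)
DX, SIGMA = 6.0, 3.0
x_r = np.arange(-40.0, 40.0, 0.01)
left = np.exp(-(x_r + DX / 2) ** 2 / (2 * SIGMA**2))
right = np.exp(-(x_r - DX / 2) ** 2 / (2 * SIGMA**2))
rd_freqs = np.linspace(0.005, 0.05, 10)
rd_diff = phase_difference(left, right, x_r, rd_freqs)

print(np.round(gabor_diff / np.pi, 3))   # constant fraction of pi across frequency
print(np.round(rd_diff / np.pi, 3))      # grows linearly with frequency
```

For the Gabor pair the phase difference stays at a quarter cycle across the passband, so it contributes only a scaling; for the offset Gaussians it is proportional to frequency and therefore shrinks to zero at low frequencies.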

Response to a general stimulus

We now work through what happens when noise is added to a motion signal. We consider the response of an opponent model to an arbitrary stimulus composed of a sum of N drifting gratings:

Since the filters in an energy opponent motion detector are linear, the separable responses A, A′, B and B′ (Equations 2–5) can be expressed as a sum of the independent responses to the components present in a stimulus (see Supplementary Information). The model’s overall response to the compound grating in Equation 10 can therefore be written as:

where the subscripts denote responses to the components. To simplify, we extract the terms where j = k and re-write the expression as:

The response in Equation 12 consists of two parts. Terms within the first sum operator (the independent terms) are simply the summed responses to grating components when presented each on its own (Equation 8). Obviously, frequencies which do not elicit a response when presented in isolation do not contribute to this term. The remaining terms within the second sum operator represent crosstalk or interactions between component pairs at different spatial and/or temporal frequencies. These show more subtle behaviour.

Interactions differ from independent terms in a number of ways. First, if two components have different temporal frequencies then their interaction is a sinusoidal function of time, so has no net contribution to the response when integrated over time39, 44. When two components j and k have the same temporal frequency, however, their interaction results in the DC response:

where \(G(j,k)={G}_{S1j}{G}_{S2k}{G}_{T{1}_{j}}{G}_{T{2}_{j}}\), the product of the filter gains at the spatial and temporal frequencies in question. \(\beta_j\), \(\beta_k\) are the phases of the stimulus components (Equation 10), \(\varphi_{S1j}\), \(\varphi_{S2k}\) are the phases of the two spatial filters at the relevant spatial frequencies, \(f_{Sj}\) and \(f_{Sk}\), and \(\varphi_{T1j}\), \(\varphi_{T2j}\) are the phases of the two temporal filters at the temporal frequency \(f_{Tj}\). This response has a similar form to Equation 9 but differs in an important way: its spatial phase-difference term depends on the spatial filter phase responses to different stimulus components. Suppose there is a spatial frequency \(f_{Sj}\) for which both spatial filters have substantial gains \(G_{S1j}\), \(G_{S2j}\) and equal phases: \(\varphi_{S1j} = \varphi_{S2j}\). Due to opponency, this component will not elicit any response when presented in isolation, because of the term \(\sin(\varphi_{S1j} - \varphi_{S2j})\) in Equation 9; it will appear invisible to the detector. Yet its interaction with a visible component \(f_{Si}\) will nevertheless add a constant offset to the model’s output, provided only that \(\sin \,({\beta }_{i}-{\beta }_{j}+{\varphi }_{S1i}-{\varphi }_{S2j})\ne 0\). This means that invisible noise at \(f_{Sj}\) can mask a signal at \(f_{Si}\).
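The interaction effect can be reproduced in a small time-domain simulation. This is a sketch under stated assumptions, not the authors' simulation: the spatial filters are Gaussians offset by `DX`, temporal filter 1 is taken as the identity and temporal filter 2 as a first-order lowpass with time constant `TAU`, the grating is assumed to have the form cos(2π(f_S x + f_T t) + β), and all parameter values and contrasts are hypothetical.

```python
import numpy as np

DX, SIGMA, TAU = 6.0, 3.0, 0.02   # hypothetical: offset (deg), filter width (deg), time constant (s)

def opponent_output(components, duration=1.0, n=2000):
    """Time-averaged Reichardt output AB' - A'B for a sum of drifting gratings.

    components: iterable of (contrast, spatial freq in cpd, temporal freq in Hz, phase in rad).
    Temporal frequencies should be whole numbers of Hz so that `duration` spans full cycles.
    """
    t = np.arange(n) * duration / n
    A, A2, B, B2 = (np.zeros(n) for _ in range(4))
    for c, f_s, f_t, beta in components:
        w = 2 * np.pi * f_t
        g_s = np.exp(-2 * (np.pi * SIGMA * f_s) ** 2)   # lowpass spatial gain
        g_t = 1 / np.hypot(1, w * TAU)                  # gain of assumed temporal filter 2
        p_t = -np.arctan(w * TAU)                       # phase of assumed temporal filter 2
        p1 = beta - np.pi * f_s * DX                    # spatial filter 1 centred at -DX/2
        p2 = beta + np.pi * f_s * DX                    # spatial filter 2 centred at +DX/2
        A += c * g_s * np.cos(w * t + p1)
        A2 += c * g_s * g_t * np.cos(w * t + p1 + p_t)
        B += c * g_s * np.cos(w * t + p2)
        B2 += c * g_s * g_t * np.cos(w * t + p2 + p_t)
    return np.mean(A * B2 - A2 * B)

signal = (0.2, 0.03, 8.0, 0.0)       # visible component near the tuning peak
noise = (0.2, 0.001, 8.0, 0.0)       # low-frequency component, same temporal frequency
noise_10hz = (0.2, 0.001, 10.0, 0.0) # same component at a different temporal frequency

sig_alone = opponent_output([signal])
noise_alone = opponent_output([noise])
interaction = opponent_output([signal, noise]) - sig_alone - noise_alone
interaction_10hz = (opponent_output([signal, noise_10hz])
                    - sig_alone - opponent_output([noise_10hz]))

print(f"noise alone / signal alone: {noise_alone / sig_alone:.3f}")
print(f"interaction / signal alone: {interaction / sig_alone:.3f}")
print(f"interaction with 10 Hz noise: {interaction_10hz:.2e}")
```

With these values the low-frequency component on its own produces only a few percent of the signal response, yet its interaction with the signal exceeds the signal response itself. When the two components differ in temporal frequency the interaction averages to zero over the window.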

Early spatial filtering in insect vs mammalian motion detection

Does this effect actually occur in biological motion detectors? In mammals, it seems the answer is no. There, the spatial filters are bandpass functions like narrow-band Gabors or derivatives of Gaussians, which have roughly constant phase for all frequencies of a given sign. The two spatial filters are generally modelled as having the same position but different phase, which means that there are no components for which \({\varphi }_{S1j}={\varphi }_{S2j}\). If the filters had different positions as well as phases, such components could exist, but this would imply some strange properties of the motion detector (tuning to different directions for different frequency components) which have not been reported. For realistic mammalian filters, therefore, it is not possible for components to be invisible when presented in isolation and yet to affect the response to visible components.

However, for insect motion detectors, the spatial filters are believed to resemble Gaussians with a spatial offset Δx. For a component with spatial frequency \(f_{Sj}\), the phase difference between the two filters is \(2\pi \Delta x f_{Sj}\). As the spatial frequency tends to zero, so does the phase difference and thus the response of the opponent energy motion detector (Equation 9). The opponent energy detector as a whole is therefore bandpass in its spatial frequency tuning, as has been confirmed many times for insects31, 37, 45,46,47. Yet since the Gaussian filters are low-pass, the gain \(G_{S2j}\) remains high. This means that there can be a large interaction term between this frequency and visible frequencies \(f_{Si}\) (Equation 13).

This analysis suggests that the interaction terms produced by the nonlinearity of the motion energy model can indeed be safely ignored for mammals, so long as the relevant spatial filters are bandpass. However, we predict that in insects, motion signals can be masked by invisible noise. This effect has not so far been demonstrated.

Mammalian motion detection is not affected by invisible noise

The spatiotemporal frequency tuning of motion detectors is often estimated psychophysically by measuring their responses to masked gratings. In these experiments, detecting a coherently-moving grating (the signal) is made more difficult by superimposing one or more gratings with different spatial/temporal frequencies but no coherent motion (the noise). The relative increase in detection threshold as a function of noise frequency (i.e. the masking function) is taken as the spatiotemporal sensitivity of the individual detector30. This technique is important because it enables the tuning of a single channel to be inferred, even though many channels contribute to the spatiotemporal sensitivity of the whole organism.

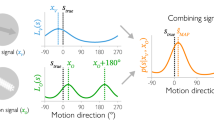

Figure 3 reproduces published data from ref. 5 showing such an experiment in humans. The reduction in sensitivity is greatest when noise is at the same spatial frequency as the signal. As noise moves away from the signal frequency, either higher or lower, it has progressively less effect. In this way, ref. 5 deduced that human motion channels are bandpass with a bandwidth of 1 to 3 octaves.

Figure 3. Effect of noise on mammalian motion detectors. Measurements showing the effect of noise on motion detection sensitivity in humans (reproduced from Fig. 1b from ref. 5). The colored points show responses to different signal frequencies (marked by arrows); smooth curves represent masking functions drawn through the symbols by eye5. Noise is most effective at masking the signal when its frequency is the same and less effective as its frequency changes in either direction.

We model this by assuming that motion is detected when the output of a motion detector exceeds a threshold (see Methods for details). Because noise carries no motion signal, it has no effect on the mean detector output, but it increases its variability and hence decreases the proportion of above-threshold responses. This leads to a decrease in response rate and consequently threshold elevation. The factor by which threshold is elevated for noise at a given frequency forms the masking function, whose shape reflects the variability of the motion detector output.
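The link between output variability and response rate can be sketched with a simplified decision rule. The example assumes Gaussian-distributed detector outputs and a single one-sided threshold; both are simplifications introduced here for illustration (the model described in the Figure 4 caption pools an array of detectors and applies a two-sided threshold), and the numerical values are hypothetical.

```python
import math

def detection_rate(mean_output, sd_output, threshold):
    """Proportion of presentations whose output exceeds `threshold`,
    assuming Gaussian-distributed outputs (an assumption of this sketch)."""
    z = (threshold - mean_output) / (sd_output * math.sqrt(2.0))
    return 0.5 * math.erfc(z)

# Fixed mean output above threshold; noise only increases the spread
mean_output, threshold = 1.0, 0.5
spreads = (0.1, 0.5, 1.0, 2.0)
rates = [detection_rate(mean_output, sd, threshold) for sd in spreads]

for sd, rate in zip(spreads, rates):
    print(f"sd = {sd:.1f}: detection rate = {rate:.3f}")
```

Because the mean sits above the threshold, widening the distribution moves more presentations below it, so the detection rate falls even though the mean response is unchanged.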

Figure 4 shows the results of this simulation. Figure 4A shows the spatial sensitivity function of an energy model motion detector, i.e. its response to single drifting gratings as a function of their spatial frequency. This is also the detector’s mean response in the presence of noise. However, noise elevates the variability of the response, as shown in Figure 4C. Accordingly, the signal contrast needed for the model to reliably detect motion is increased, and we obtain the masking function shown in Figure 4D. As ref. 5 assumed, this accurately reflects the sensitivity of the underlying mechanisms (cf. Figure 4A,D). In particular, the bandpass filter tuning gives bandpass masking.

Figure 4. For mammalian bandpass filters, the masking function reflects sensitivity. (A) Spatial sensitivity function of a mammalian band-pass energy-model motion detector, i.e., its response to single drifting gratings as a function of the grating spatial frequency. (B) Direction discrimination model based on an array of opponent models with the spatial tuning plotted in panel A. Opponent model outputs are pooled and passed through a two-sided threshold of value T to produce a ternary judgment of motion direction per stimulus presentation. (C) The variability of opponent model outputs across 500 simulated presentations (per noise frequency point) of a noisy stimulus consisting of a signal grating of 3 cpd and temporally-broadband noise. Signal frequency is marked on the plot with an arrow. Signal and noise had \(\sqrt{2}\) and \(20\sqrt{2}\) RMS contrast respectively. Adding noise did not change the mean of opponent output but had a significant effect on its spread. Output variance was highest when noise frequency was 3 cpd and lower as noise frequency changed in either direction, closely resembling the shape of the opponent model’s sensitivity function. (D) The masking function (red) was calculated based on these simulated results as \(T(f_n)/T_0\) where \(T(f_n)\) is the threshold corresponding to a 90% detection rate at each noise frequency and \(T_0\) is the detection threshold of an unmasked grating. The sensitivity function from (A) is reproduced, scaled, for comparison (blue dotted line). The masking function is a good approximation to the sensitivity.

Thus in mammals, the masking function can be used to infer (approximately) the spatiotemporal sensitivity of motion detection channels. This works because the initial filters are spatiotemporally bandpass5, 30, 48, 49.

Insect motion detection is affected by invisible noise

As we have seen, the response of insect motion detectors to masked grating stimuli is expected to be qualitatively different. The lowpass tuning of the early spatial filters in models of insect motion detection predicts that low-frequency components which elicit no response when presented on their own will still influence the detector’s response to other frequencies.

This means that for insects, we predict differences between their motion masking and sensitivity functions. Specifically, when the mask contains components with the same temporal frequency as the signal, we expect the masking effect of noise to extend to spatial frequencies much lower than the sensitivity band of an insect motion detector. In this section, we present the results of experiments in which we tested this prediction.
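The predicted difference between the two filter types can be illustrated with a small Monte Carlo sketch. It is not the simulation reported in the figures: it uses a single detector, noise at the same temporal frequency as the signal with a random phase on each presentation, and hypothetical filter parameters (`DX`, `SIGMA`, `F0`, `BW`, `G_T`, `PHI_T`). Sinusoidal responses are represented as phasors, so the time average of a product is half the real part of one phasor times the conjugate of the other.

```python
import numpy as np

G_T, PHI_T = 0.7, -0.8                  # hypothetical gain/phase of temporal filter 2
H = G_T * np.exp(1j * PHI_T)            # temporal filter 1 is taken as the identity

def opponent_output(components, gain, phase1, phase2):
    """Time-averaged AB' - A'B for components sharing one temporal frequency.

    components: list of (contrast, spatial frequency, phase); phase may be an array.
    """
    A = sum(c * gain(f) * np.exp(1j * (b + phase1(f))) for c, f, b in components)
    B = sum(c * gain(f) * np.exp(1j * (b + phase2(f))) for c, f, b in components)
    return 0.5 * np.real(A * np.conj(B * H)) - 0.5 * np.real(A * H * np.conj(B))

def output_sd(f_signal, f_noise, noise_phases, **filters):
    # spread of the output across presentations with random noise phase
    comps = [(0.2, f_signal, 0.0), (0.2, f_noise, noise_phases)]
    return np.std(opponent_output(comps, **filters))

DX, SIGMA = 6.0, 3.0   # hypothetical insect-like filters: Gaussians offset by DX (deg)
insect = dict(gain=lambda f: np.exp(-2 * (np.pi * SIGMA * f) ** 2),
              phase1=lambda f: -np.pi * f * DX,
              phase2=lambda f: np.pi * f * DX)

F0, BW = 3.0, 0.6      # hypothetical mammal-like filters: log-Gaussian gain, quadrature phase
mammal = dict(gain=lambda f: np.exp(-np.log2(f / F0) ** 2 / (2 * BW**2)),
              phase1=lambda f: 0.0,
              phase2=lambda f: -np.pi / 2)

phases = np.random.default_rng(1).uniform(0, 2 * np.pi, 500)

# Output spread for low-frequency noise relative to noise at the signal frequency
insect_ratio = output_sd(0.03, 0.001, phases, **insect) / output_sd(0.03, 0.03, phases, **insect)
mammal_ratio = output_sd(3.0, 0.1, phases, **mammal) / output_sd(3.0, 3.0, phases, **mammal)

# Sensitivity of the insect-like detector to the low frequency presented alone
insect_sensitivity_ratio = (opponent_output([(0.2, 0.001, 0.0)], **insect)
                            / opponent_output([(0.2, 0.03, 0.0)], **insect))

print(f"insect: variability ratio {insect_ratio:.2f}, sensitivity ratio {insect_sensitivity_ratio:.3f}")
print(f"mammal: variability ratio {mammal_ratio:.2e}")
```

With the insect-like filters, low-frequency noise produces most of the output variability that noise at the signal frequency does, even though the detector barely responds to that frequency on its own. With the mammal-like filters, noise far below the passband produces essentially no variability.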

Figure 5B shows the mantis optomotor response rates we measured in our experiment as a function of noise frequency. For insects, trials are slow, so we did not attempt to measure contrast thresholds for each combination of signal and noise as ref. 5 did in humans. Rather, we measured response rates at a single signal contrast, and used the drop in response rate to assess the effect of noise. To facilitate comparison with the corresponding plot in humans (Figure 3), we plot the masking rate, defined as \(M({f}_{n})=({R}_{0}-R({f}_{n}))/{R}_{0}\) where \(R(f_n)\) is the optomotor response rate at a given noise frequency and \(R_0\) is the baseline response rate (measured without adding noise).

Figure 5. For mantis motion detection, the masking function does not reflect sensitivity. (A) The spatial sensitivity of mantis motion detectors at 8 Hz, measured using the same experimental paradigm, showing bandpass sensitivity in the range 0.01 to 0.1 cpd (reproduction of Fig. 3a in ref. 31). (B) Measurements showing the effect of noise on the detection of a moving grating in the praying mantis. Circles are masking rate M defined as \(M = (R_0 - R)/R_0\) where R is the response rate (proportion of trials in which mantids responded optomotorally in the same direction as the signal grating) and \(R_0\) is the baseline (no-noise) response rate. Error bars are 95% confidence intervals calculated using simple binomial statistics. Signal frequency (0.0185 cpd) is marked on the plot with an arrow. The response rate measured at 0.03 cpd was slightly above baseline and so the calculated masking rate was negative. (C) Normalized sensitivity function of a motion energy model tuned to 0.03 cpd19. (D) Simulated masking function (red) with the simulated sensitivity function reproduced for comparison (blue dotted line, scaled to same peak). Masking and sensitivity functions in the mantis are qualitatively different: noise below the lower end of the sensitivity function (~0.01 cpd) continues to mask the signal.

As in mammals, adding noise to the stimulus causes a drop in response rate (corresponding to an increase in the masking rate). Unlike mammals, however, the impact of masking on the mantis is not predicted by its motion sensitivity function. For example, injecting noise near the peak spatial sensitivity (0.03 cpd, ref. 31) unsurprisingly causes severe masking; the masking rate is 80%. In the absence of noise, with a signal of contrast 0.125 at 0.0185 cpd, insects moved in the direction of the signal on \(R_0\) = 60% of trials; after adding noise with contrast 0.198 at 0.03 cpd, this dropped to \(R(f_n)\) = 12%. Also unsurprisingly, injecting noise at frequencies much higher than the peak has little effect. For example, noise at 0.3 cpd produces masking which is not significantly different from zero; this is expected given that ref. 31 found sensitivity at 0.3 cpd was near zero (their Fig. 3b).
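The masking-rate arithmetic for this example can be written out directly. The function name is hypothetical; the formula is the definition of M given in the text, and the two response rates are the values quoted above.

```python
def masking_rate(baseline_rate, rate_with_noise):
    """M = (R0 - R) / R0, the fractional drop in response rate caused by noise."""
    if baseline_rate <= 0:
        raise ValueError("baseline response rate must be positive")
    return (baseline_rate - rate_with_noise) / baseline_rate

# R0 = 60% without noise, R = 12% with noise at 0.03 cpd
m = masking_rate(0.60, 0.12)
print(f"masking rate: {m:.0%}")
```

By the same definition, M is zero when noise leaves the response rate unchanged and negative whenever the measured rate with noise exceeds the baseline.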

But counter-intuitively, noise injected at frequencies much lower than the peak continues to produce strong masking. For example, ref. 31 found that sensitivity at 0.007 cpd, the lowest frequency they tested, was 15% of the peak value. Normally, we would expect the effect of noise to be reduced correspondingly. However, we find the masking rate at 0.0025 cpd is still 80%, just as severe as noise injected at the peak.

We tested the effect of noise on three further signal frequencies (Figure 6A–C). The amount of masking depends on the signal frequency. Since we always presented the signal grating at the same contrast, the effective strength of the signal depends on the sensitivity at the signal frequency. Accordingly, noise has least effect on signals at 0.037 cpd (maximum masking rate 50%, Figure 6A), and most effect at the lowest and highest signal frequencies (maximum masking rate 80% for 0.0185 cpd, Figure 5B, and 95% for 0.177 cpd, Figure 6C). As the noise frequency increases much beyond the signal frequency, it produces progressively less masking. The precise frequency at which the high frequency fall-off occurs depends on the signal frequency. This may reflect the contribution of motion detectors with different selectivity for spatial frequencies.

Figure 6. Mantis masking rate measurements at different signal frequencies. (A–C) Measurements of masking rate versus noise frequency in the mantis (for signal frequencies 0.037, 0.088 and 0.177 cpd) showing the same masking trends as Figure 5B (signal frequency 0.0185 cpd): noise continues to mask the signal significantly even if its frequency is below the spatial sensitivity passband of mantis motion detectors (~0.01 to 0.1 cpd). Circles are masking rate M defined as \(M = (R_0 - R)/R_0\) where R is the response rate (proportion of trials in which mantids responded optomotorally in the same direction as the signal grating) and \(R_0\) is the baseline (no-noise) response rate. Error bars are 95% confidence intervals calculated using simple binomial statistics. Signal frequency is marked on each plot by an arrow. (D) Masking rate fits for the four signal frequencies combined, demonstrating a masking effect that is qualitatively different from humans (cf. Figure 3). Fitted functions are in the form \(M(f)=a-\exp(b\,\log f-c)\), where f is the noise frequency. Arrows indicate signal frequencies. Data-points are replotted from panels (A–C) and from Figure 5B.

Critically, in every case, we found the same dependence on noise frequency: noise injected at frequencies at or below the signal frequency produced essentially the same amount of masking, regardless of the precise frequency it was injected at. In Figure 6D, we plot the data for all 4 signal frequencies on the same axes, in the same format as the mammalian data in Figure 3, to facilitate comparison between the two species. This shows clearly that whereas mammalian masking is bandpass, insect masking is lowpass, even though the insects’ motion sensitivity is bandpass (Figure 5A). This is the signature interaction effect we predicted we would find in insects, due to their lowpass early spatial filtering combined with the nonlinearity of the standard models of motion detection.

Discussion

We show that the standard model of motion detection produces nonlinear interactions between the spatial components of moving stimuli. Stimuli that elicit no response can nonetheless have a powerful masking effect, if the filters that precede motion detection are spatially lowpass. We show that this sort of mask does effectively disrupt the optomotor response of the praying mantis. This is very different from the effects of masking noise in humans, but our analysis suggests that this reflects the same motion computation in both species, computed after different initial filters are applied. This highlights the fact that simple nonlinearities can have complex effects. In human studies, it is commonly assumed that nonlinear interactions take place only within the sensitivity band of a given channel within a system30, 50, where a “channel” is a pool of neurons with similar tuning1, 14,15,16, 51. This turns out to be a good approximation only if the sensitivity band is set by the inputs to the channel, rather than by subsequent nonlinearities. This is true for humans, but not in insects.

Here, we have analysed the standard model of motion detection. This is mathematically equivalent to both the Reichardt Detector and to the Motion Energy Model, the standard accounts of motion detection in insects and mammals respectively30, 32. The two accounts have different circuitry but are mathematically equivalent when the same filters are used as inputs8, 38,39,40. We derived equations 12 and 13 showing how such motion detectors can be affected by frequency components outside their sensitivity band18. In these models, interaction terms with different temporal frequencies average to zero over time, producing “pseudo-linearity”39. Crucially, however, we show that cross-frequency interactions can survive opponency and time-averaging. When low spatial frequencies are transmitted by early spatiotemporal filters, even if they are normally cancelled subsequently by the opponency step, these “invisible” components can affect the response to other, visible signals.

For mammalian motion sensors, this effect may be a mathematical curiosity. Motion sensors are built at a relatively late stage, following early neural filtering52 which is bandpass for both spatial and temporal frequency, even as early as the output of the retina. The opponency in models of mammalian motion detection sharpens direction selectivity, but has little effect on spatial frequency tuning. In contrast, current models of insect motion sensors postulate that they are constructed at a much earlier stage, directly from individual ommatidia. The early filters are spatially lowpass, reflecting largely optical, rather than neural, factors43, 53. Our analysis predicted that this would make insect motion detectors subject to interference from “invisible” low-frequency noise. We have confirmed this behaviourally in an insect model, the praying mantis.

This type of masking has not previously been reported and its possible ecological implications are unknown. Low-frequency visual noise could be generated by, for example, foliage swaying back and forth in the path of a sunbeam, producing flicker between light and dark across much of an insect’s visual field. Our results suggest this might impair the insect’s ability to detect the motion of a predator or prey. This novel form of motion camouflage could potentially be tested in the field, for example by examining whether prey species are more likely to move during such episodes of sun-flicker.

Given the differences between humans and mantises, it is remarkable that the experimental data in both species are so well described by a model of exactly the same structure (Figures 4 and 5). This model employs a simple decision rule in which motion is perceived when the average activity of a group of motion detectors exceeds a threshold. The only difference is the early spatiotemporal filters used for each species: spatially bandpass for mammals and spatially lowpass for insects. In both cases the masking function reflects this early spatial filtering. For mammals, this is the same as the spatiotemporal sensitivity of the whole organism, but for insects it is not. Thus, the same circuitry results in very different behaviour.

Although motion perception presumably evolved independently in insects and mammals, the underlying circuits may be much older. The circuit relies on two operations, an output nonlinearity and a subtraction, both of which are very common, so similar circuits are likely to be widespread in nervous systems. These common operations can lead to very different behaviour, given only slight differences in the inputs. It seems likely that other behavioural differences may be explained in equally simple ways.

Materials and Methods

We used a masking paradigm to test visual motion detection in the praying mantis. In the context of motion detection, a “signal” is an image that moves smoothly in a given direction, to “detect the signal” is to report the direction of motion and “noise” is a sequence of images with no consistent motion. Mantises were placed in front of a CRT screen and viewed full-screen gratings drifting either leftward or rightward in each trial. In a subset of trials, the moving grating elicited the optomotor response, a postural stabilization mechanism that causes mantises to lean in the direction of a moving large-field stimulus. An observer coded the direction of the elicited optomotor response in each trial (if any) and these responses were later used to calculate motion detection probability as the proportion of trials in which mantises leaned in the same direction as the stimulus. Videos of mantises responding optomotorally to a moving grating in the same experimental paradigm are available at (http://www.edge-cdn.net/video_839277?playerskin=37016) and (http://www.edge-cdn.net/video_839281?playerskin=37016) (supplementary material to ref. 31).

Insects

The insects used in experiments were 11 individuals (6 males and 5 females) of the species Sphodromantis lineola. Each insect was stored in a plastic box of dimensions 17 × 17 × 19 cm with a porous lid for ventilation and fed a live cricket twice per week. The boxes were kept at a temperature of 25 °C and were cleaned and misted with water twice per week.

Experimental Setup

The setup consisted of a CRT monitor (HP P1130, gamma corrected with a Minolta LS-100 photometer) and a 5 × 5 cm Perspex base onto which mantises were placed hanging upside down facing the (horizontal and vertical) middle point of the screen at a distance of 7 cm. The Perspex base was held in place by a clamp attached to a retort stand and a web camera (Kinobo USB B3 HD Webcam) was placed underneath providing a view of the mantis but not the screen. The monitor, Perspex base and camera were all placed inside a wooden enclosure to isolate the mantis from distractions and maintain consistent dark ambient lighting during experiments.

The screen had physical dimensions of 40.4 × 30.2 cm and pixel dimensions of 1600 × 1200 pixels. At the viewing distance of the mantis the horizontal extent of the monitor subtended a visual angle of 142°. The mean luminance of the stimuli was 51.4 cd/m² and the monitor’s refresh rate was 85 Hz.
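
The quoted visual angle follows from the screen width and viewing distance, assuming the mantis faces the centre of a flat screen. The sketch below is only an arithmetic check; the function and constant names are illustrative.

```python
import math

SCREEN_WIDTH_CM = 40.4      # horizontal screen size, from the text
VIEWING_DISTANCE_CM = 7.0   # mantis-to-screen distance, from the text

def subtended_angle_deg(width_cm, distance_cm):
    """Full angle subtended by a flat extent centred on the line of sight."""
    return math.degrees(2 * math.atan((width_cm / 2) / distance_cm))

angle = subtended_angle_deg(SCREEN_WIDTH_CM, VIEWING_DISTANCE_CM)
print(f"horizontal extent: {angle:.1f} deg")  # close to the quoted 142 deg
```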

The monitor was connected to a Dell OptiPlex 9010 (Dell, US) computer with an Nvidia Quadro K600 graphics card and running Microsoft Windows 7. All experiments were administered by a Matlab 2012b (Mathworks, Inc., Massachusetts, US) script which was initiated at the beginning of each experiment and subsequently controlled the presentation of stimuli and the storage of keyed-in observer responses. The web camera was connected and viewed by the observer on another computer to reduce processing load on the rendering computer’s graphics card and minimize the chance of frame drops. Stimuli were rendered using Psychophysics Toolbox Version 3 (PTB-3)54,55,56.

Experimental Procedure

Each experiment consisted of a number of trials in which an individual mantis was presented with moving gratings of signal and noise components. An experimenter observed the mantis through the camera underneath and coded its response as “moved left”, “moved right” or “did not move”. The camera did not show the screen and the experimenter was blind to the stimulus. There were equal repeats of left-moving and right-moving gratings of each condition in all experiments. Trials were randomly interleaved by the computer. In between trials, a special “alignment stimulus” was presented and used to steer the mantis back to its initial body and head posture as closely as possible. The alignment stimulus consisted of a chequer-like pattern which could be moved in either horizontal direction via the keyboard and served to re-align the mantis by triggering the optomotor response.

Visual Stimulus

The stimulus consisted of superimposed “signal” and “noise” vertical sinusoidal gratings. The signal grating had one of the spatial frequencies 0.0185, 0.0376, 0.0885 or 0.177 cpd, a temporal frequency of 8 Hz and was drifting coherently to either left or right in each trial. Signal temporal frequency was chosen to maximize the optomotor response rate based on the mantis contrast sensitivity function31. Noise had a spatial frequency in the range 0.0012 to 0.5 cpd and its phase was randomly updated on each frame to make it temporally broadband (with a Nyquist frequency of 42.5 Hz) without net coherent motion in any direction. Each presentation lasted for 5 seconds.

Since mantises were placed very close to the screen (7 cm away), any gratings that are uniform in cycles/px would have appeared significantly distorted in cycles/deg5. To correct for this we applied a nonlinear horizontal transformation so that grating periods subtend the same visual angle irrespective of their position on the screen. This was achieved by calculating the visual degree corresponding to each screen pixel using the function:

\[\theta(x)=\arctan\left(\frac{x}{RD}\right)\qquad (14)\]

where x is the horizontal pixel position relative to the center of the screen, θ(x) is its visual angle, R is the horizontal screen resolution in pixels/cm and D is the viewing distance. To an observer standing more than D cm away from the screen, a grating rendered with this transformation looked more compressed at the center of the screen compared to the periphery. At D cm away from the screen, however, grating periods in all viewing directions subtended the same visual angle and the stimulus appeared uniform (in degrees) as if rendered on a cylindrical drum. This correction only works perfectly if the mantis head is in exactly the intended position at the start of each trial and is most critical at the edges of the screen. As an additional precaution against spatial distortion or any stimulus artifacts caused by oblique viewing we restricted all gratings to the central 85° of the visual field by multiplying the stimulus luminance levels L(x, y, t) with the following Butterworth window:

\[w(x)=\frac{1}{1+\left(2x/S_w\right)^{2n}}\qquad (15)\]

where x is the horizontal pixel position relative to the middle of the screen, \(S_w\) is the window’s Full Width at Half Maximum (FWHM), chosen as 512 pixels in our experiment (subtending a visual angle of 85° at the viewing distance of the mantis), and n is the window order (chosen as 10). This restriction minimized any spread in spatial frequency at the mantis retina due to imperfections in our correction formula described by Equation 14. We have previously shown that the mantis optomotor response is largely driven by the central visual field, such that a stimulus covering the central 85° should elicit around 84% of the response which would have been elicited by a stimulus covering the entire visual field57.

With the above manipulations the presented stimulus was:

where x, y are the horizontal and vertical positions of a screen pixel, j is frame number, I is pixel luminance, in normalized units where 0 and 1 are the screen’s minimum and maximum luminance levels (0.161 and 103 cd/m² respectively), \(A_s\) is signal Michelson contrast (0.125), \(A_n\) is noise contrast (0.198), \(f_s\) is signal spatial frequency (0.0185, 0.0376, 0.0885 or 0.177 cpd), \(f_n\) is noise frequency (varied across trials), \(f_t\) is signal temporal frequency (8 Hz), d indicates motion direction (either 1 or −1 on each trial), \(\phi_j\) is chosen randomly from a uniform distribution between 0 and 1 on each frame, t is time in seconds (given by t = j/85), and θ(x) is the pixel visual angle according to Equation 14. Still frames, space-time plots and spatiotemporal Fourier amplitude spectra of the stimulus are shown in Figure 7.
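
The construction of one stimulus frame can be sketched from the definitions above. This is a minimal illustration rather than the Matlab script used in the experiments. It assumes that the pixel angle is arctan(x/(RD)), that the Butterworth window is 1/(1 + (2x/S_w)^(2n)), that the window scales the contrast around a mean of 0.5, and that d = 1 denotes drift towards positive x. All function names are hypothetical.

```python
import math
import random

PX_PER_CM = 1600 / 40.4   # R: horizontal resolution (pixels/cm)
DIST_CM = 7.0             # D: viewing distance (cm)
FWHM_PX = 512             # S_w: window full width at half maximum (pixels)
ORDER = 10                # n: window order
FPS = 85                  # monitor refresh rate (Hz)
A_S, A_N = 0.125, 0.198   # signal and noise contrast
F_T = 8.0                 # signal temporal frequency (Hz)

def pixel_angle_deg(x_px):
    """Visual angle (deg) of a pixel x_px to the right of the screen centre."""
    return math.degrees(math.atan(x_px / (PX_PER_CM * DIST_CM)))

def butterworth_window(x_px):
    """Assumed window form: 1 at the centre, 0.5 at |x| = FWHM_PX / 2."""
    return 1.0 / (1.0 + (2.0 * x_px / FWHM_PX) ** (2 * ORDER))

def stimulus_row(j, f_s, f_n, d, rng, width_px=1600):
    """One horizontal line of frame j in normalized luminance (0 to 1)."""
    t = j / FPS
    phi = rng.random()  # noise phase, redrawn on every frame
    row = []
    for i in range(width_px):
        x = i - width_px / 2
        theta = pixel_angle_deg(x)
        signal = A_S * math.cos(2 * math.pi * (f_s * theta - d * F_T * t))
        noise = A_N * math.cos(2 * math.pi * (f_n * theta + phi))
        row.append(0.5 * (1.0 + butterworth_window(x) * (signal + noise)))
    return row

rng = random.Random(0)
row = stimulus_row(j=0, f_s=0.0185, f_n=0.05, d=1, rng=rng)
```

Because spatial frequency multiplies θ(x) rather than x, grating periods have equal angular width across the screen, which is the purpose of the correction described above.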

Masked grating visual stimuli used in the experiment. (A,D) Spatiotemporal Fourier spectra, (B,E) space-time plots and (C,F) still frames of the visual stimulus in two conditions of the experiment. Panels (A–C) represent a no-noise condition: the stimulus is a moving grating at 0.0185 cpd and 8 Hz with no added noise. Panels (D–F) represent a masked condition: the stimulus consists of the same signal grating but with non-coherent temporally-broadband noise added at 0.05 cpd. There were in total 44 conditions in the experiment (4 unmasked and 40 masked gratings). Noise was always temporally broadband and its spatial frequency varied across conditions (in the range 0.0012 to 0.5 cpd).

Modeling

Figures 4 and 5 contain simulation results from the model shown in Figure 4B. The model consists of 10 opponent energy motion detectors (based on the schematics shown in Figure 1B), placed at different positions on a virtual retina, a linear sum and a two-sided threshold of the form:

\[\mathrm{thresh}(x)=\begin{cases}1 & x\ge T\\ 0 & -T<x<T\\ -1 & x\le -T\end{cases}\qquad (17)\]

The choice of 10 motion detectors was necessarily arbitrary. Our experimental stimuli covered 85° horizontally and 130° vertically of the central visual field, and would be expected to stimulate hundreds of elementary motion detectors. We found that our simulation results did not change significantly with more than 10 detectors, and so used this small number in order to keep the simulations simple and fast to run.

The model was simulated numerically in Matlab. The spatial resolution of simulations was 0.01 deg, time step was 1/85 seconds and each simulated presentation was 1 second long.

The spatial and temporal sensitivity of energy model filters were adjusted to approximate the sensitivities of insects and mammals in different simulations. For simulations of humans (Figures 2A,C and 4), spatial filters were second and third derivatives of Gaussians (σ = 0.08°) and temporal filters were

\[TF(t)=(kt)^{n}\exp(-kt)\left[\frac{1}{n!}-\frac{(kt)^{2}}{(n+2)!}\right]\qquad (18)\]

where n = 3 for \(TF_1\), n = 5 for \(TF_2\) and k = 105 for both filters. These filter functions and parameters were taken from the published literature on human motion perception and spatiotemporal tuning8, 58. For mantises (Figures 2B,D and 5), we used Gaussian spatial filters and first-order low/high pass temporal filters:

The Laplace transforms of these temporal filters are:

Insect filter functions and parameters were again taken from the published literature31, 37, 43: \(\tau_L\) = 13 ms, \(\tau_H\) = 40 ms, Δx = 4°, σ = 2.56°. The models were normalized such that all gave a mean response of 1 to a drifting grating at the optimal spatial and temporal frequency.
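
A single opponent detector with the insect parameters above can be sketched as follows. This is an illustrative simplification, not the simulation code used for the figures. It assumes the textbook first-order filters \(1/(1+\tau_L s)\) and \(\tau_H s/(1+\tau_H s)\), treats σ as the standard deviation of the Gaussian spatial filter, and applies the spatial filter analytically as a gain on each sinusoidal component. It uses one detector rather than ten, a 1 ms integration step, no output normalization, and an opponent stage of the form LP(a)·HP(b) − HP(a)·LP(b). All names are hypothetical.

```python
import math
import random

TAU_L, TAU_H = 0.013, 0.040  # low/high-pass time constants (s), from the text
DX, SIGMA = 4.0, 2.56        # receptor separation and Gaussian width (deg)
FPS = 85                     # stimulus frame rate (Hz)
F_T = 8.0                    # signal temporal frequency (Hz)
DT = 0.001                   # integration step (s); an assumption of this sketch

def gaussian_gain(f_cpd):
    """Gain of a unit-area Gaussian (std SIGMA) for a sinusoid at f_cpd."""
    return math.exp(-2.0 * (math.pi * SIGMA * f_cpd) ** 2)

def receptor_input(x_deg, t, f_s, f_n, d, a_s, a_n, phi):
    """Spatially filtered contrast at position x_deg and time t."""
    sig = a_s * gaussian_gain(f_s) * math.cos(2 * math.pi * (f_s * x_deg - d * F_T * t))
    noi = a_n * gaussian_gain(f_n) * math.cos(2 * math.pi * (f_n * x_deg + phi))
    return sig + noi

def mean_opponent_output(f_s, d, f_n=0.05, a_s=0.125, a_n=0.0,
                         duration=1.0, seed=0):
    """Time-averaged opponent output for one simulated presentation."""
    rng = random.Random(seed)
    xa, xb = -DX / 2, DX / 2
    lp_a = lp_b = slow_a = slow_b = 0.0
    total, phi, frame = 0.0, 0.0, -1
    steps = int(round(duration / DT))
    for k in range(steps):
        t = k * DT
        if int(t * FPS) != frame:      # new video frame: redraw noise phase
            frame = int(t * FPS)
            phi = rng.random()
        a = receptor_input(xa, t, f_s, f_n, d, a_s, a_n, phi)
        b = receptor_input(xb, t, f_s, f_n, d, a_s, a_n, phi)
        lp_a += DT / TAU_L * (a - lp_a)      # first-order low-pass (Euler)
        lp_b += DT / TAU_L * (b - lp_b)
        slow_a += DT / TAU_H * (a - slow_a)  # low-pass used to build high-pass
        slow_b += DT / TAU_H * (b - slow_b)
        hp_a, hp_b = a - slow_a, b - slow_b  # high-pass = input minus low-pass
        total += lp_a * hp_b - hp_a * lp_b   # opponent (difference) stage
    return total / steps

r_right = mean_opponent_output(0.0885, d=1)
r_left = mean_opponent_output(0.0885, d=-1)
print(r_right, r_left)
```

Setting `a_n` to a non-zero contrast and varying `f_n` gives a starting point for exploring how added noise changes the averaged output under these assumptions.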

In each simulated trial, the model was presented with a 1D version of the grating used in the experiments. Energy model outputs were summed and averaged over the duration of each presentation then passed through thresh (x) (Equation 17) to produce a direction judgment similar to the one made by human observers in our experiment and the psychophysics experiments of ref. 5. When simulating the model with noisy gratings, up to 500 presentations were repeated per noise frequency point.

In simulations of insect motion detectors, response rates were calculated as the proportion of presentations in which the direction of motion computed by the model was the same as the signal component in the stimulus. In simulations of mammalian motion detectors, we calculated detection threshold as the threshold T of the function thresh (x) that resulted in the model judging motion direction correctly in 90% of the presentations.
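
The two summary measures described above can be sketched as follows. The two-sided threshold is assumed to return 1, −1 or 0 for rightward, leftward or no detected motion, and the helper names and example values are hypothetical. The detection threshold is taken here as the largest T at which at least 90% of presentations are judged correctly, which is one reading of the definition above.

```python
def thresh(x, T):
    """Two-sided threshold: 1 or -1 for a direction judgment, 0 for none."""
    if x >= T:
        return 1
    if x <= -T:
        return -1
    return 0

def response_rate(outputs, directions, T):
    """Proportion of presentations judged in the signal direction."""
    correct = sum(1 for r, d in zip(outputs, directions) if thresh(r, T) == d)
    return correct / len(outputs)

def detection_threshold(outputs, directions, target_percent=90):
    """Largest positive T giving at least target_percent correct, else None."""
    aligned = sorted((r * d for r, d in zip(outputs, directions)), reverse=True)
    needed = (target_percent * len(aligned) + 99) // 100  # integer ceiling
    t_value = aligned[needed - 1]
    return t_value if t_value > 0 else None

# Hypothetical time-averaged model outputs for ten rightward presentations.
outputs = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, -0.5]
directions = [1] * 10
T90 = detection_threshold(outputs, directions)
rate = response_rate(outputs, directions, T90)
```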

Data Availability

The data collected in this study will be made available at https://github.com/m3project.

References

Campbell, F. W. & Robson, J. Application of Fourier analysis to the visibility of gratings. Journal of Physiology 197, 551 (1968).

Carandini, M. What simple and complex cells compute. The Journal of Physiology 577, 463–466 (2006).

Burge, J. & Geisler, W. S. Optimal disparity estimation in natural stereo images. Journal of Vision 14, 1–1 (2014).

Batista, J. & Araújo, H. et al. Stereoscopic depth perception using a model based on the primary visual cortex. PLoS One 8, e80745 (2013).

Anderson, S. J. & Burr, D. C. Spatial and temporal selectivity of the human motion detection system. Vision Research 25, 1147–1154 (1985).

Carandini, M. et al. Do we know what the early visual system does? The Journal of Neuroscience 25, 10577–10597 (2005).

Clifford, C. W. & Ibbotson, M. Fundamental mechanisms of visual motion detection: models, cells and functions. Progress in Neurobiology 68, 409–437 (2002).

Adelson, E. H. & Bergen, J. R. Spatiotemporal models for the perception of motion. JOSA A 2, 284–299 (1985).

Emerson, R. C., Bergen, J. R. & Adelson, E. H. Directionally selective complex cells and the computation of motion energy in cat visual cortex. Vision Research 32, 203–218 (1992).

Hunter, I. & Korenberg, M. The identification of nonlinear biological systems: Wiener and Hammerstein cascade models. Biological Cybernetics 55, 135–144 (1986).

Meister, M. & Berry, M. J. The neural code of the retina. Neuron 22, 435–450 (1999).

Chichilnisky, E. A simple white noise analysis of neuronal light responses. Network: Computation in Neural Systems 12, 199–213 (2001).

Patterson, R. D. & Nimmo-Smith, I. Off-frequency listening and auditory-filter asymmetry. The Journal of the Acoustical Society of America 67, 229–245 (1980).

Sachs, M. B., Nachmias, J. & Robson, J. G. Spatial-frequency channels in human vision. JOSA 61, 1176–1186 (1971).

Blakemore, C. T. & Campbell, F. On the existence of neurones in the human visual system selectively sensitive to the orientation and size of retinal images. The Journal of physiology 203, 237 (1969).

Graham, N. & Nachmias, J. Detection of grating patterns containing two spatial frequencies: A comparison of single-channel and multiple-channels models. Vision Research 11, 251–IN4 (1971).

Pollen, D. A., Gaska, J. P. & Jacobson, L. D. Responses of simple and complex cells to compound sine-wave gratings. Vision Research 28, 25–39 (1988).

Chen, H.-W., Jacobson, L. D., Gaska, J. P. & Pollen, D. A. Cross-correlation analyses of nonlinear systems with spatiotemporal inputs (visual neurons). IEEE transactions on biomedical engineering 40, 1102–1113 (1993).

Zhou, Y.-X. & Baker, C. L. A processing stream in mammalian visual cortex neurons for non-Fourier responses. Science 261, 98–98 (1993).

Burton, G. Evidence for non-linear response processes in the human visual system from measurements on the thresholds of spatial beat frequencies. Vision Research 13, 1211–1225 (1973).

Marr, D. & Hildreth, E. Theory of edge detection. Proceedings of the Royal Society of London B: Biological Sciences 207, 187–217 (1980).

Morrone, M. C. & Burr, D. Feature detection in human vision: A phase-dependent energy model. Proceedings of the Royal Society of London B: Biological Sciences 235, 221–245 (1988).

Burr, D. C. Sensitivity to spatial phase. Vision Research 20, 391–396 (1980).

Lawton, T. B. The effect of phase structures on spatial phase discrimination. Vision Research 24, 139–148 (1984).

Badcock, D. R. Spatial phase or luminance profile discrimination? Vision Research 24, 613–623 (1984).

Legge, G. E. Adaptation to a spatial impulse: implications for Fourier transform models of visual processing. Vision Research 16, 1407–1418 (1976).

Harvey, L. O. & Gervais, M. J. Visual texture perception and Fourier analysis. Perception & Psychophysics 24, 534–542 (1978).

Stromeyer, C. F. & Julesz, B. Spatial-frequency masking in vision: Critical bands and spread of masking. Journal of the Optical Society of America 62, 1221–1232 (1972).

Maffei, L. & Fiorentini, A. The visual cortex as a spatial frequency analyser. Vision Research 13, 1255–1267 (1973).

Anderson, S. J. & Burr, D. C. Receptive field properties of human motion detector units inferred from spatial frequency masking. Vision Research 29, 1343–1358 (1989).

Nityananda, V. et al. The contrast sensitivity function of the praying mantis Sphodromantis lineola. Journal of Comparative Physiology A 201, 741–750 (2015).

Hassenstein, B. & Reichardt, W. Systemtheoretische Analyse der Zeit-, Reihenfolgen-und Vorzeichenauswertung bei der Bewegungsperzeption des Rüsselkäfers Chlorophanus. Zeitschrift für Naturforschung B 11, 513–524 (1956).

Qian, N., Andersen, R. A. & Adelson, E. H. Transparent motion perception as detection of unbalanced motion signals. III. Modeling. Journal of Neuroscience 14, 7381–7392 (1994).

Movshon, J. A., Thompson, I. D. & Tolhurst, D. J. Spatial summation in the receptive fields of simple cells in the cat’s striate cortex. The Journal of Physiology 283, 79–99 (1978).

Levinson, E. & Sekuler, R. The independence of channels in human vision selective for direction of movement. The Journal of Physiology 250, 347–366 (1975).

Rust, N. C., Schwartz, O., Movshon, J. A. & Simoncelli, E. P. Spatiotemporal elements of macaque v1 receptive fields. Neuron 46(6), 945–56 (2005).

Borst, A. Neural circuits for elementary motion detection. Journal of neurogenetics 28, 361–373 (2014).

Lu, Z.-L. & Sperling, G. The functional architecture of human visual motion perception. Vision Research 35, 2697–2722 (1995).

Van Santen, J. P. & Sperling, G. Temporal covariance model of human motion perception. Journal of the Optical Society of America A 1, 451–473 (1984).

Borst, A. & Helmstaedter, M. Common circuit design in fly and mammalian motion vision. Nature Neuroscience 18, 1067–1076 (2015).

Buchner, E. Elementary movement detectors in an insect visual system. Biological Cybernetics 24, 85–101 (1976).

Pick, B. & Buchner, E. Visual movement detection under light-and dark-adaptation in the fly, Musca domestica. Journal of Comparative Physiology 134, 45–54 (1979).

Rossel, S. Regional differences in photoreceptor performance in the eye of the praying mantis. Journal of Comparative Physiology 131, 95–112 (1979).

Van Santen, J. P. & Sperling, G. Elaborated Reichardt detectors. Journal of the Optical Society of America A 2, 300–321 (1985).

Dvorak, D., Srinivasan, M. & French, A. The contrast sensitivity of fly movement-detecting neurons. Vision Research 20, 397–407 (1980).

O’Carroll, D. C., Bidwell, N. J., Laughlin, S. B. & Warrant, E. J. Insect motion detectors matched to visual ecology. Nature 382, 63–66 (1996).

O’Carroll, D., Laughlin, S., Bidwell, N. & Harris, R. Spatiotemporal properties of motion detectors matched to low image velocities in hovering insects. Vision Research 37, 3427–3439 (1997).

Burr, D., Ross, J. & Morrone, M. Seeing objects in motion. Proceedings of the Royal Society of London B: Biological Sciences 227, 249–265 (1986).

Burr, D. C., Ross, J. & Morrone, M. C. Smooth and sampled motion. Vision Research 26, 643–652 (1986).

Daugman, J. G. Spatial visual channels in the Fourier plane. Vision Research 24, 891–910 (1984).

De Valois, K. K. & Tootell, R. Spatial-frequency-specific inhibition in cat striate cortex cells. The Journal of Physiology 336, 359 (1983).

Morgan, M. J. Spatial filtering precedes motion detection. Nature 355, 344–346 (1992).

Snyder, A. W., Stavenga, D. G. & Laughlin, S. B. Spatial information capacity of compound eyes. Journal of Comparative Physiology 116, 183–207 (1977).

Brainard, D. H. The psychophysics toolbox. Spatial Vision 10, 433–436 (1997).

Pelli, D. G. The VideoToolbox software for visual psychophysics: Transforming numbers into movies. Spatial Vision 10, 437–442 (1997).

Kleiner, M. et al. What’s new in Psychtoolbox-3. Perception 36, 1 (2007).

Nityananda, V., Tarawneh, G., Errington, S., Serrano-Pedraza, I. & Read, J. C. The optomotor response of the praying mantis is driven predominantly by the central visual field. Journal of Comparative Physiology A in press (2017).

Robson, J. Spatial and temporal contrast-sensitivity functions of the visual system. Journal of the Optical Society of America 56, 1141–1142 (1966).

Acknowledgements

G.T., V.N. and R.R. are funded by a Leverhulme Trust Research Leadership Award RL-2012-019 to J.R. I.S.P. is supported by grant PSI2014-51960-P from Ministerio de Economía y Competitividad (Spain). We are grateful to Adam Simmons for excellent insect husbandry and to Sid Henriksen for helpful comments on the manuscript.

Contributions

Designed research: G.T., V.N., R.R., J.R., I.S.P. Performed research: G.T., S.E., W.H., J.R., I.S.P. Contributed unpublished reagents/analytic tools: G.T., I.S.P. Analyzed data: G.T., J.R., I.S.P. Wrote the paper: G.T., J.R., I.S.P., B.G.C.

Ethics declarations

Competing Interests

The authors declare that they have no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Tarawneh, G., Nityananda, V., Rosner, R. et al. Invisible noise obscures visible signal in insect motion detection. Sci Rep 7, 3496 (2017). https://doi.org/10.1038/s41598-017-03732-7
