Abstract

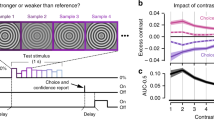

Many decisions under uncertainty entail the temporal accumulation of evidence that informs about the state of the environment. When environments are subject to hidden changes in their state, maximizing accuracy and reward requires non-linear accumulation of evidence. How this adaptive, non-linear computation is realized in the brain is unknown. We analyzed human behavior and cortical population activity (measured with magnetoencephalography) recorded during visual evidence accumulation in a changing environment. Behavior and decision-related activity in cortical regions involved in action planning exhibited hallmarks of adaptive evidence accumulation, which could also be implemented by a recurrent cortical microcircuit. Decision dynamics in action-encoding parietal and frontal regions were mirrored in a frequency-specific modulation of the state of the visual cortex that depended on pupil-linked arousal and the expected probability of change. These findings link normative decision computations to recurrent cortical circuit dynamics and highlight the adaptive nature of decision-related feedback to the sensory cortex.

Data availability

Raw behavioral and eye-tracking data for experiments 1 and 2 are available at https://doi.org/10.6084/m9.figshare.14035935. Raw MEG data for experiment 1 are available at https://doi.org/10.6084/m9.figshare.14039384. Source-reconstructed MEG data for experiment 1 are available at https://doi.org/10.6084/m9.figshare.14170432. Source data for figures are available at https://doi.org/10.6084/m9.figshare.14039465. Behavioral, eye-tracking and MEG data for experiment 3 will be made available following publication of a follow-up manuscript currently in preparation that will cover other research questions that this dataset was collected to address.

Code availability

All analysis code is available at https://github.com/DonnerLab/2021_Murphy_Adaptive-Circuit-Dynamics-Across-Human-Cortex.

References

Bogacz, R., Brown, E., Moehlis, J., Holmes, P. & Cohen, J. D. The physics of optimal decision making: a formal analysis of models of performance in two-alternative forced-choice tasks. Psychol. Rev. 113, 700–765 (2006).

Yang, T. & Shadlen, M. N. Probabilistic reasoning by neurons. Nature 447, 1075–1080 (2007).

Brody, C. D. & Hanks, T. D. Neural underpinnings of the evidence accumulator. Curr. Opin. Neurobiol. 37, 149–157 (2016).

Gold, J. I. & Shadlen, M. N. The neural basis of decision making. Annu. Rev. Neurosci. 30, 535–574 (2007).

de Lange, F. P., Rahnev, D. A., Donner, T. H. & Lau, H. Prestimulus oscillatory activity over motor cortex reflects perceptual expectations. J. Neurosci. 33, 1400–1410 (2013).

Donner, T. H., Siegel, M., Fries, P. & Engel, A. K. Buildup of choice-predictive activity in human motor cortex during perceptual decision making. Curr. Biol. 19, 1581–1585 (2009).

Fischer, A. G., Nigbur, R., Klein, T. A., Danielmeier, C. & Ullsperger, M. Cortical beta power reflects decision dynamics and uncovers multiple facets of post-error adaptation. Nat. Commun. 9, 5038 (2018).

Hanks, T. D. et al. Distinct relationships of parietal and prefrontal cortices to evidence accumulation. Nature 520, 220–223 (2015).

Mante, V., Sussillo, D., Shenoy, K. V. & Newsome, W. T. Context-dependent computation by recurrent dynamics in prefrontal cortex. Nature 503, 78–84 (2013).

Wyart, V., de Gardelle, V., Scholl, J. & Summerfield, C. Rhythmic fluctuations in evidence accumulation during decision making in the human brain. Neuron 76, 847–858 (2012).

O’Connell, R. G., Dockree, P. M. & Kelly, S. P. A supramodal accumulation-to-bound signal that determines perceptual decisions in humans. Nat. Neurosci. 15, 1729–1735 (2012).

Yu, A. J. & Dayan, P. Uncertainty, neuromodulation, and attention. Neuron 46, 681–692 (2005).

Glaze, C. M., Kable, J. W. & Gold, J. I. Normative evidence accumulation in unpredictable environments. eLife 4, e08825 (2015).

Ossmy, O. et al. The timescale of perceptual evidence integration can be adapted to the environment. Curr. Biol. 23, 981–986 (2013).

Wimmer, K. et al. Sensory integration dynamics in a hierarchical network explains choice probabilities in cortical area MT. Nat. Commun. 6, 6177 (2015).

Wang, X. J. Probabilistic decision making by slow reverberation in cortical circuits. Neuron 36, 955–968 (2002).

Nienborg, H. & Cumming, B. G. Decision-related activity in sensory neurons reflects more than a neuron’s causal effect. Nature 459, 89–92 (2009).

Kiani, R., Hanks, T. D. & Shadlen, M. N. Bounded integration in parietal cortex underlies decisions even when viewing duration is dictated by the environment. J. Neurosci. 28, 3017–3029 (2008).

Piet, A. T., El Hady, A. & Brody, C. D. Rats adopt the optimal timescale for evidence integration in a dynamic environment. Nat. Commun. 9, 4265 (2018).

Cheadle, S. et al. Adaptive gain control during human perceptual choice. Neuron 81, 1429–1441 (2014).

Talluri, B. C., Urai, A. E., Tsetsos, K., Usher, M. & Donner, T. H. Confirmation bias through selective overweighting of choice-consistent evidence. Curr. Biol. 28, 3128–3135 (2018).

Tsetsos, K., Chater, N. & Usher, M. Salience driven value integration explains decision biases and preference reversal. Proc. Natl Acad. Sci. USA 109, 9659–9664 (2012).

Weiss, A., Chambon, V., Lee, J. K., Drugowitsch, J. & Wyart, V. Interacting with volatile environments stabilizes hidden-state inference and its brain signatures. Nat. Commun. 12, 2228 (2021).

Murphy, P. R., Boonstra, E. & Nieuwenhuis, S. Global gain modulation generates time-dependent urgency during perceptual choice in humans. Nat. Commun. 7, 13526 (2016).

Murray, J. D. et al. A hierarchy of intrinsic timescales across primate cortex. Nat. Neurosci. 17, 1661–1663 (2014).

Wang, X. J. Macroscopic gradients of synaptic excitation and inhibition in the neocortex. Nat. Rev. Neurosci. 21, 169–178 (2020).

Wilming, N., Murphy, P. R., Meyniel, F. & Donner, T. H. Large-scale dynamics of perceptual decision information across human cortex. Nat. Commun. 11, 5109 (2020).

McGinley, M. J. et al. Waking state: rapid variations modulate neural and behavioral responses. Neuron 87, 1143–1161 (2015).

Bastos, A. M. et al. Visual areas exert feedforward and feedback influences through distinct frequency channels. Neuron 85, 390–401 (2015).

van Kerkoerle, T. et al. Alpha and gamma oscillations characterize feedback and feedforward processing in monkey visual cortex. Proc. Natl Acad. Sci. USA 111, 14332–14341 (2014).

Felleman, D. J. & van Essen, D. C. Distributed hierarchical processing in the primate cerebral cortex. Cereb. Cortex 1, 1–47 (1991).

Gwilliams, L. & King, J. R. Recurrent processes support a cascade of hierarchical decisions. eLife 9, e56603 (2020).

Urai, A. E., De Gee, J. W., Tsetsos, K. & Donner, T. H. Choice history biases subsequent evidence accumulation. eLife 8, e46331 (2019).

Haefner, R. M., Berkes, P. & Fiser, J. Perceptual decision-making as probabilistic inference by neural sampling. Neuron 90, 649–660 (2016).

Eckhoff, P., Wong-Lin, K. & Holmes, P. Optimality and robustness of a biophysical decision-making model under norepinephrine modulation. J. Neurosci. 29, 4301–4311 (2009).

Dayan, P. & Yu, A. J. Phasic norepinephrine: a neural interrupt signal for unexpected events. Network 17, 335–350 (2006).

Filipowicz, A. L. S., Glaze, C. M., Kable, J. W. & Gold, J. I. Pupil diameter encodes the idiosyncratic, cognitive complexity of belief updating. eLife 9, e57872 (2020).

Nassar, M. R. et al. Rational regulation of learning dynamics by pupil-linked arousal systems. Nat. Neurosci. 15, 1040–1046 (2012).

de Gee, J. W. et al. Dynamic modulation of decision biases by brainstem arousal systems. eLife 6, e23232 (2017).

Joshi, S., Li, Y., Kalwani, R. M. & Gold, J. I. Relationships between pupil diameter and neuronal activity in the locus coeruleus, colliculi, and cingulate cortex. Neuron 89, 221–234 (2016).

Reimer, J. et al. Pupil fluctuations track rapid changes in adrenergic and cholinergic activity in cortex. Nat. Commun. 7, 13289 (2016).

Mobbs, D., Trimmer, P. C., Blumstein, D. T. & Dayan, P. Foraging for foundations in decision neuroscience: insights from ethology. Nat. Rev. Neurosci. 19, 419–427 (2018).

Marr, D. & Poggio, T. From Understanding Computation to Understanding Neural Circuitry. (Massachusetts Institute of Technology, Artificial Intelligence Laboratory, 1976).

Harris, K. D. & Thiele, A. Cortical state and attention. Nat. Rev. Neurosci. 12, 509–523 (2011).

Behrens, T. E., Woolrich, M. W., Walton, M. E. & Rushworth, M. F. Learning the value of information in an uncertain world. Nat. Neurosci. 10, 1214–1221 (2007).

McGuire, J. T., Nassar, M. R., Gold, J. I. & Kable, J. W. Functionally dissociable influences on learning rate in a dynamic environment. Neuron 84, 870–881 (2014).

Donner, T. H. & Siegel, M. A framework for local cortical oscillation patterns. Trends Cogn. Sci. 15, 191–199 (2011).

Amit, D. J. & Brunel, N. Model of global spontaneous activity and local structured activity during delay periods in the cerebral cortex. Cereb. Cortex 7, 237–252 (1997).

Inagaki, H. K., Fontolan, L., Romani, S. & Svoboda, K. Discrete attractor dynamics underlies persistent activity in the frontal cortex. Nature 566, 212–217 (2019).

Friston, K. J. The free-energy principle: a unified brain theory? Nat. Rev. Neurosci. 11, 127–138 (2010).

Brainard, D. H. The psychophysics toolbox. Spat. Vis. 10, 433–436 (1997).

Teufel, H. J. & Wehrhahn, C. Evidence for the contribution of S cones to the detection of flicker brightness and red–green. J. Opt. Soc. Am. A 17, 994–1006 (2000).

Maris, E. & Oostenveld, R. Nonparametric statistical testing of EEG- and MEG-data. J. Neurosci. Methods 164, 177–190 (2007).

Birge, B. in Proceedings of the IEEE Swarm Intelligence Symposium https://ieeexplore.ieee.org/document/1202265 (IEEE Swarm Intelligence Symposium, 2003).

Drugowitsch, J., Wyart, V., Devauchelle, A. D. & Koechlin, E. Computational precision of mental inference as critical source of human choice suboptimality. Neuron 92, 1398–1411 (2016).

Nassar, M. R., Wilson, R. C., Heasly, B. & Gold, J. I. An approximately Bayesian delta-rule model explains the dynamics of belief updating in a changing environment. J. Neurosci. 30, 12366–12378 (2010).

Prat-Ortega, G., Wimmer, K., Roxin, A. & de la Rocha, J. Flexible categorization in perceptual decision making. Nat. Commun. 12, 1283 (2021).

Oostenveld, R., Fries, P., Maris, E. & Schoffelen, J. M. FieldTrip: open source software for advanced analysis of MEG, EEG, and invasive electrophysiological data. Comput. Intell. Neurosci. 2011, 156869 (2011).

Gramfort, A. et al. MEG and EEG data analysis with MNE-Python. Front. Neurosci. 7, 267 (2013).

Van Veen, B. D., van Drongelen, W., Yuchtman, M. & Suzuki, A. Localization of brain electrical activity via linearly constrained minimum variance spatial filtering. IEEE Trans. Biomed. Eng. 44, 867–880 (1997).

Dale, A. M., Fischl, B. & Sereno, M. I. Cortical surface-based analysis. I. Segmentation and surface reconstruction. Neuroimage 9, 179–194 (1999).

Fischl, B., Sereno, M. I. & Dale, A. M. Cortical surface-based analysis. II: Inflation, flattening, and a surface-based coordinate system. Neuroimage 9, 195–207 (1999).

Wang, L., Mruczek, R. E., Arcaro, M. J. & Kastner, S. Probabilistic maps of visual topography in human cortex. Cereb. Cortex 25, 3911–3931 (2015).

Glasser, M. F. et al. A multi-modal parcellation of human cerebral cortex. Nature 536, 171–178 (2016).

Wandell, B. A., Dumoulin, S. O. & Brewer, A. A. Visual field maps in human cortex. Neuron 56, 366–383 (2007).

Donoghue, T. et al. Parameterizing neural power spectra into periodic and aperiodic components. Nat. Neurosci. 23, 1655–1665 (2020).

Pedregosa, F. et al. Scikit-learn: machine learning for Python. J. Mach. Learn. Res. 12, 2825–2830 (2011).

Acknowledgements

We thank K. Wimmer for discussion and advice on the cortical circuit model and F. Meyniel for comments on the manuscript. This work was funded by the Deutsche Forschungsgemeinschaft (German Research Foundation, DO 1240/3-1, DO 1240/4-1 and SFB 936 (Projekt-Nr. A7)).

Author information

Contributions

P.R.M., conceptualization, methodology, investigation, software, formal analysis, visualization, writing (original draft, review and editing); N.W., conceptualization, methodology, software, writing (review and editing); D.C.H.-B., investigation, formal analysis; G.P.O., software, formal analysis, writing (review and editing); T.H.D., conceptualization, methodology, resources, writing (original draft, review and editing), supervision.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information: Nature Neuroscience thanks Laurence Hunt, Valentin Wyart, and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 Sensitivity of normative evidence accumulation to change-point probability and uncertainty across a range of generative task statistics.

a, Non-linearity in normative model for different hazard rates (H): Posterior belief after accumulating the most recent sample (Ln) is converted into prior belief for next sample (ψn+1, both variables expressed as log-odds for each alternative) through a non-linear transform, which saturates (slope ≈ 0) for strong Ln and entails more moderate information loss (0 ≪ slope < 1) for weak Ln. In a static environment (H = 0), the model combines new evidence (log-likelihood ratio for sample n, LLRn) with prior (ψn) into updated belief without information loss (that is, Ln = ψn + LLRn; ψn+1 = Ln). In an unpredictable environment (H = 0.5), the new belief is determined solely by the most recent evidence sample (that is, Ln = LLRn; ψn+1 = 0) so that no evidence accumulation takes place. b, Example trajectories of the model decision variable (L) as function of the same evidence stream, but for different levels of H. c, Upper panels of each grid segment: Change point-triggered dynamics of change-point probability (CPP) and uncertainty (−|ψ|) derived from normative model as function of H (grid rows) and evidence signal-to-noise ratio (SNR, difference in generative distribution means over their standard deviation; grid columns). Lower panels of each grid segment: Contribution of computational variables (including CPP and −|ψ|) to normative belief updating, expressed as coefficients of partial determination for terms of a linear model predicting the updated prior belief for a forthcoming sample. Yellow background (center of grid): generative statistics of the task used in Experiment 1; reproduced from main Fig. 1e for comparison. Data for each parameter combination were derived from a simulated sequence of 10 million observations. d, Psychophysical kernels of the normative model for the task used in experiment 1 (12 samples per trial, generative SNR ≈ 1.2) but for each level of H from panel c.
Kernels reflect the time-resolved weight of evidence on final choice (left) and the modulation of this evidence weighting by sample-wise change-point probability (CPP, middle) and uncertainty (−|ψ|, right). Kernels were produced by adding a moderate amount of decision noise (v = 0.7, see Methods) to the ideal observer (that is the normative model with perfect knowledge of generative statistics); without noise, coefficients at high H (where choices are based almost entirely on the final evidence sample) are not identifiable.
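As an illustration of the transform described in panel a, the following Python sketch implements one closed form of the normative non-linearity, namely the expression given by Glaze et al. (2015; listed in the References). The caption states only the limiting cases (H = 0 and H = 0.5), so the exact expression, the function names `prior_from_posterior` and `accumulate`, and the zero initial prior are assumptions of this sketch, not a transcription of the authors' analysis code.

```python
import numpy as np


def prior_from_posterior(L, H):
    """Map posterior log-odds L_n onto prior log-odds psi_{n+1} for hazard rate H.

    Assumed form (Glaze et al., 2015):
        psi = L + log((1-H)/H + exp(-L)) - log((1-H)/H + exp(L))
    """
    L = np.asarray(L, dtype=float)
    if H == 0.0:
        # Static environment: no information loss, psi_{n+1} = L_n.
        return L.copy()
    log_odds_stay = np.log((1.0 - H) / H)
    # np.logaddexp(a, b) = log(exp(a) + exp(b)), which avoids overflow for large |L|.
    return L + np.logaddexp(log_odds_stay, -L) - np.logaddexp(log_odds_stay, L)


def accumulate(llrs, H, psi0=0.0):
    """Return the posterior log-odds L_n after each evidence sample."""
    psi = psi0
    posteriors = []
    for llr in llrs:
        L = psi + llr                      # L_n = psi_n + LLR_n
        posteriors.append(L)
        psi = float(prior_from_posterior(L, H))
    return np.array(posteriors)
```

Under this form the transform saturates at ±log((1 − H)/H) for strong beliefs, reduces to perfect accumulation at H = 0, and discards all prior information at H = 0.5, matching the limiting cases listed in the caption.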

Extended Data Fig. 2 Consistency of human choices with those of idealized choice strategies, and dependence of choice accuracy on final state duration.

a, Choices for n = 17 independent participants as function of log-posterior odds (z-scored) derived from each of three alternative strategies: basing choices only on final evidence sample (gray); perfect evidence accumulation (magenta); and an ideal observer with perfect knowledge of the generative task statistics and employing the normative accumulation process for the task (blue). Points and error bars show observed data ± s.e.m.; lines and shaded areas show mean ± s.e.m. of fits of sigmoid functions to the data. Slopes of fitted sigmoids were steepest for ideal observer (ideal vs. perfect accumulation: t16 = 5.9, p < 0.0001; ideal vs. last-sample: t16 = 9.6, p < 10⁻⁷; two-tailed paired t-tests), indicating that human choices were most consistent with those of the ideal observer. b, Choice accuracy for the same n = 17 participants as a function of duration of the final environmental state for the human participants (black), idealized strategies from panel a, fits of the normative model (cyan), and the circuit model (orange). Participants’ choice accuracy increased with the number of samples presented after the final state change on each trial, consistent with temporal accumulation. Error bars and shaded regions indicate s.e.m.

Extended Data Fig. 3 Effect of sample onset asynchrony (SOA) on diagnostic signatures of adaptive evidence accumulation.

Psychophysical kernels reflecting the time-resolved weight of evidence on final choice (left), and the modulation of this evidence weighting by sample-wise change-point probability (CPP, middle) and uncertainty (−|ψ|, right), separately for the two conditions of experiment 2 (n = 4 independent participants) in which participants performed the decision-making task at fast (0.2 s) and slow (0.6 s) sample onset asynchronies. Thin unsaturated lines are individual participants, thick saturated lines are means across participants. Cluster-based permutation tests (10,000 permutations) of SOA effects on each kernel type revealed no significant effects.

Extended Data Fig. 4 Fit to human behavior of alternative evidence accumulation schemes.

Alternative accumulation schemes are leaky accumulation (gray), where the evolving decision variable is linearly discounted by a fixed leak term after every updating step; and perfect accumulation toward non-absorbing bounds (green), which imposes upper and lower limits on the evolving decision variable without terminating the decision process, thus enabling changes of mind even after the bound has been reached. Both schemes combined can approximate the normative accumulation process across generative settings (ref. 13). All panels based on data from n = 17 independent participants. a, Choice accuracies for human participants (grey bars) and both model fits. b, Choice accuracy as a function of duration of the final environmental state, for the participants and the models. c, Regression coefficients reflecting the subjective weight of evidence associated with binned stimulus locations, for the participants and the models. d, Psychophysical kernels for the data and the model reflecting the time-resolved weight of evidence on final choice (left), and the modulation of this evidence weighting by sample-wise change-point probability (CPP, middle) and uncertainty (−|ψ|, right). In panels b-d, error bars and shaded regions indicate s.e.m. Fits of the model versions used to generate behavior in all panels included free parameters for both a non-linear stimulus-to-LLR mapping function and a gain factor on inconsistent samples (see Extended Data Fig. 5). Even with these additional degrees of freedom, the leaky accumulator model failed to reproduce the CPP and −|ψ| modulations characteristic of human behavior. By contrast, like the normative model, perfect accumulation to non-absorbing bounds captured all qualitative features identified in the behavioral data. This is in line with the insight that this form of accumulation closely approximates the normative model in settings like ours, where strong belief states are formed often (that is low noise and/or low H; ref. 13). 
This form of accumulation also captures a key feature of the dynamics of the circuit model that we interrogate in the main text; that is a saturating decision variable in response to consecutive consistent samples of evidence.
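The two alternative schemes described in this caption can be sketched as different posterior-to-prior transforms applied after each sample. This is a minimal illustration under stated assumptions: the names `leaky_prior`, `bounded_prior` and `run` are hypothetical, the leak is taken to scale the posterior by (1 − leak), and the bound is taken to clip the belief carried forward to the next sample. The fitted models in the paper include further parameters (noise, gain on inconsistent samples, non-linear LLR mapping) that are omitted here.

```python
import numpy as np


def leaky_prior(L, leak):
    """Leaky accumulation: the decision variable is linearly discounted each step."""
    return (1.0 - leak) * L


def bounded_prior(L, bound):
    """Perfect accumulation toward non-absorbing bounds: clip, but keep updating."""
    return float(np.clip(L, -bound, bound))


def run(llrs, transform, psi0=0.0):
    """Accumulate evidence samples, applying `transform` between samples."""
    psi, posteriors = psi0, []
    for llr in llrs:
        L = psi + llr          # combine prior with new evidence
        posteriors.append(L)
        psi = transform(L)     # belief carried forward to the next sample
    return posteriors
```

For example, `run([1, 1, 1, -3], lambda L: bounded_prior(L, 2.0))` saturates after consecutive consistent samples and then changes sign after the strongly inconsistent final sample, which is the change-of-mind behavior that non-absorbing bounds permit.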

Extended Data Fig. 5 Model comparison and qualitative signatures indicate approximation of normative belief updating by measured human behavior.

All panels based on data from n = 17 independent participants. a, Bayes Information Criteria (BIC) for all models fit to the human choice data, relative to model with lowest group-mean BIC. Lines, group mean; dots, individual participants. Model constraints are specified by the tick labels. Colors denote unbounded perfect accumulator (magenta), leaky accumulator (gray), perfect accumulator with non-absorbing bounds (green), and the normative accumulation process with a subjective hazard rate (cyan). Labels refer to the following: ‘Linear’ (‘Nonlinear’) = linear (non-linear) scaling of stimulus-to-LLR mapping function; ‘Gain on inconsist.’ = multiplicative gain term applied to inconsistent samples; ‘Leak’ = accumulation leak; ‘Bound’ = height of non-absorbing bounds; ‘H’ = subjective hazard rate. ‘Perfect’ is a special case of the leaky accumulator where leak = 0. All models included a noise term applied to the final log-posterior odds per trial. See Methods for additional model details. Labels in black text highlight models plotted in main Fig. 2 and Extended Data Fig. 4. Model with lowest group-mean BIC (‘full normative fit’ in remaining panels) employs normative accumulation with subjectivity in hazard rate, a gain term on inconsistent samples, and non-linear stimulus-to-LLR mapping. b, Subjective hazard rates from full normative fits (cyan), with true hazard rate in blue. Participants underestimated the volatility in the environment (t16 = −7.7, p < 10⁻⁶, two-tailed one-sample t-test of subjective H against true H). c, Non-linearity in evidence accumulation estimated directly from choice data (black) and from full normative model fits (cyan). Non-linearity of the ideal observer shown in blue. Shaded areas, s.e.m. d, Multiplicative gain factors applied to evidence samples that were inconsistent with the existing belief state, estimated from full normative fits.
Gain factor > 1 reflects relative up-weighting of inconsistent samples beyond that prescribed by normative model; gain factor < 1 reflects relative down-weighting of inconsistent samples. The ideal observer employing the normative accumulation process uses gain factor = 1 (dashed blue line). Participants assigned higher weight to inconsistent samples than the ideal observer (t16 = 8.2, p < 10⁻⁶, two-tailed one-sample t-test of fitted weights against 1). e, Regression coefficients reflecting modulation of evidence weighting by change-point probability (CPP). Shown are coefficients for the human participants (black line), full normative fits (cyan), normative model fits without gain factor applied to inconsistent evidence (grey), and the ideal observer with matched noise (blue). Error bars and shaded regions, s.e.m. f, Mapping of stimulus location (polar angle; x-axis) onto evidence strength (LLR; y-axis) across the full range of stimulus locations. Blue line reflects the true mapping given the task generative statistics, used by the ideal observer. Cyan line and shaded areas show mean ± s.e.m. of subjective mappings used by the human participants, estimated as an interpolated non-parametric function (Methods). g, Regression coefficients reflecting subjective weight of evidence associated with binned stimulus locations, for the human participants (black line), full normative model fits including the non-linear LLR mapping shown in panel f (cyan), normative model fits allowing only a linear LLR mapping (grey), and the ideal observer with matched noise (blue). Error bars and shaded regions, s.e.m.
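The model comparison in panel a can be illustrated with the standard definition of the BIC, k·ln(n) − 2·ln(likelihood). The sketch below is an assumption-laden illustration: the function names are hypothetical, and expressing each participant's BIC relative to that participant's score for the model with the lowest group-mean BIC is one plausible reading of the caption, not a confirmed detail of the authors' procedure.

```python
import numpy as np


def bic(neg_log_lik, n_params, n_trials):
    """Standard BIC: k * ln(n) - 2 * ln(likelihood), with lower values preferred."""
    return n_params * np.log(n_trials) + 2.0 * neg_log_lik


def relative_group_bic(bic_table):
    """Express BICs relative to the model with the lowest group-mean BIC.

    bic_table: array of shape (participants, models).
    Returns (relative BICs with the same shape, index of the reference model).
    """
    bic_table = np.asarray(bic_table, dtype=float)
    best = int(np.argmin(bic_table.mean(axis=0)))   # model with lowest group mean
    return bic_table - bic_table[:, [best]], best
```

With this convention the reference model has a relative BIC of zero for every participant, and positive values for another model indicate a worse penalized fit for that participant.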

Extended Data Fig. 6 Assessment of boundary conditions at which circuit model approximates normative evidence accumulation.

A reduction of the spiking-neuron circuit model (ref. 57) was used to explore the impact of different dynamical regimes on behavioral signatures of evidence weighting. Left two columns: shape of model’s energy landscape (‘potential’ φ) described by the equations \(\frac{dX}{dt} = -\frac{d\varphi}{dX} + \sigma \xi_t, \quad \varphi(X) = -k\mu_t X - \frac{aX^2}{2} + \frac{bX^4}{4}\), in response to ambiguous (LLR = 0, left) or strong evidence (LLR = mean + 1 s.d. of LLRs across experiment = 1.35, second from left). µt was the differential stimulus input to the choice-selective populations at time point t relative to trial onset (in our case the per-sample LLR, which changed every 0.4 s) that was linearly scaled by parameter k (fixed at 2.2); ξt was a zero-mean, unit-variance Gaussian noise term that was linearly scaled by parameter σ (fixed at 0.8); and a and b shape the potential. Middle-to-right columns: psychophysical kernels and modulations of evidence weighting by CPP and −|ψ|. a, Double-well potential with small barrier between wells (a = 2, b = 1), corresponding to weak bi-stable attractor dynamics. This model variant featured a saturating decision variable in the face of multiple consistent evidence samples (akin to non-absorbing bounds), wells that maintained commitment states but were sufficiently shallow for changes-of-mind to occur in response to strongly inconsistent evidence, and sensitivity to new input when at the ‘saddle point’ between wells (that is, during periods of uncertainty). As a consequence, it produced all key qualitative features of normative evidence accumulation (recency, strong modulation by CPP; weak modulation by uncertainty). b, Single-well potential with no barrier (a = 0, b = 1). This model variant also featured a saturating decision variable, but no stable states of commitment. Thus, it exhibited stronger recency in evidence weighting than the one in panel a.
c, Flat potential with no wells (a = 0, b = 0), corresponding to perfect evidence accumulation without bounds. This model lacked both a saturating decision variable and stable states. As a consequence, it weighed all evidence samples equally (flat psychophysical kernel) and did not produce clear modulations by CPP or uncertainty. d, Double-well potential with high barrier between wells (a = 5, b = 1), corresponding to strong bi-stable attractor dynamics. In this model variant, the wells were too deep for changes-of-mind to occur for all but the most extreme inconsistent evidence. Thus, it produced extreme primacy in evidence weighting. The evidence weighting signatures of the regimes in panels c, d were qualitatively inconsistent with the signatures of participants’ behavior (compare Fig. 2b). All three signatures were qualitatively reproduced by the regimes in both a and b, with the closest approximation to human behavior provided by the weak bi-stable attractor regime in panel a (highlighted in yellow).
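The reduced model in this caption follows dX/dt = −dφ/dX + σξ, where the potential φ(X) = −kµX − aX²/2 + bX⁴/4 gives a drift of kµ + aX − bX³. A minimal simulation sketch is given below. The Euler-Maruyama integration scheme, the 1 ms step size, the zero starting point and the function name `simulate_dv` are assumptions made for illustration; the values k = 2.2, σ = 0.8 and the 0.4 s sample duration are taken from the caption.

```python
import numpy as np


def simulate_dv(llrs, a, b, k=2.2, sigma=0.8, sample_dur=0.4, dt=0.001, seed=0):
    """Simulate the decision variable X for a sequence of per-sample LLRs.

    Drift is -dphi/dX = k*mu + a*X - b*X**3 for the quartic potential phi(X).
    Integration uses Euler-Maruyama with step dt (an assumption of this sketch).
    """
    rng = np.random.default_rng(seed)
    steps_per_sample = int(round(sample_dur / dt))
    x = 0.0
    trace = []
    for mu in llrs:                       # input is constant within a sample
        for _ in range(steps_per_sample):
            drift = k * mu + a * x - b * x ** 3
            x += drift * dt + sigma * np.sqrt(dt) * rng.standard_normal()
            trace.append(x)
    return np.array(trace)
```

Setting `a=2, b=1` corresponds to the weak double-well regime of panel a, `a=0, b=1` to the single well of panel b, `a=0, b=0` to the flat potential of panel c, and `a=5, b=1` to the deep double well of panel d. With `sigma=0`, the flat potential integrates its input linearly, whereas the quartic term makes the decision variable saturate under repeated consistent evidence.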

Extended Data Fig. 7 Intrinsic fluctuation dynamics across visual cortical hierarchy.

a, Power spectra and associated model fits of intrinsic fluctuations across cortex (n = 17 independent participants). For each hemisphere, ROI, and trial, we computed the power spectrum (1–120 Hz) from a 1-second ‘baseline’ period preceding trial onset and averaged these spectra across trials and hemispheres. Shaded areas show mean ± s.e.m. of observed power spectra. We modeled the power spectra as a linear superposition of an aperiodic component (power law scaling) and a variable number of periodic components (band-limited peaks) using the FOOOF toolbox (ref. 66; default constraints, without so-called ‘knees’ which were absent in the measured spectra, presumably due to the short intervals; 49–51 Hz and 99–101 Hz excluded from fits due to contamination by line noise). The fitted aperiodic components only are overlaid as dashed lines. b, Power law scaling exponents of the aperiodic components estimated from fits in a, providing a measure of the relative contributions of fast vs. slow fluctuations to the measured power spectrum. Lines, group mean; dots, individual participants. ‘Forward’ indicates direction of hierarchy inferred from fitted exponents across the dorsal set of visual field map ROIs (V1, V2-V4, V3A/B, IPS0/1, IPS2/3); P-value derived from two-tailed permutation test on slope of line fitted to power law exponents ordered by position along visual cortical hierarchy.
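To make the notion of a power-law scaling exponent concrete, the sketch below estimates it by ordinary least squares in log-log coordinates over 1–120 Hz, excluding the line-noise bands named in the caption. This is a deliberately simplified stand-in and not the FOOOF procedure used in the paper: FOOOF additionally models band-limited peaks, which a plain regression does not. The function name `aperiodic_exponent` is hypothetical.

```python
import numpy as np


def aperiodic_exponent(freqs, power, fmin=1.0, fmax=120.0,
                       exclude=((49.0, 51.0), (99.0, 101.0))):
    """Estimate the exponent chi of power ~ 10**offset / f**chi by log-log regression.

    Frequencies outside [fmin, fmax] and inside any excluded band are ignored.
    Returns (exponent, offset).
    """
    freqs = np.asarray(freqs, dtype=float)
    power = np.asarray(power, dtype=float)
    keep = (freqs >= fmin) & (freqs <= fmax)
    for lo, hi in exclude:
        keep &= ~((freqs >= lo) & (freqs <= hi))
    slope, offset = np.polyfit(np.log10(freqs[keep]), np.log10(power[keep]), 1)
    return -slope, offset
```

A larger exponent indicates a steeper spectrum, that is, a greater relative contribution of slow fluctuations. Oscillatory peaks (for example in the alpha band) would bias this simple estimate, which is why the paper fits periodic components separately.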

Extended Data Fig. 8 Normative accumulation, model fits and motor choice signal for experiment 3.

a, Contribution of computational variables (including CPP and −|ψ|) to normative belief updating in generative contexts from experiment 3, expressed as coefficients of partial determination (upper) and regression coefficients (lower) for terms of a linear model predicting the updated prior belief for a forthcoming sample (ψn+1). Derived from application of the normative model to a single sequence of 10 million simulated samples, generated using the same statistics as the task in experiment 3 (H = {0.1, 0.9}; SNR = 2). Note that the strength of the contributions of each term is the same across the two levels of H, which is expected when the chosen levels of H are symmetric around 0.5 as here. However, the sign of the contributions of prior (ψn) and new evidence (LLRn) are reversed under high H, accounting for the flipping of belief sign imposed by the normative non-linearity (Fig. 7b, main text). Note also the minimal contribution of −|ψ| in this generative setting, which prompted us to not consider −|ψ| modulatory effects in analyses of the data from human participants. b, Bayes Information Criteria (BIC) for all models fit to the human choice data from experiment 3 (n = 30 independent participants), relative to model with the lowest BIC score averaged over participants. Lines, group mean; dots, individual participants. Model constraints are specified by the tick labels. All models included at least one subjective hazard rate, linear scaling parameter applied to the stimulus-to-LLR function, and a decision noise term applied to the final log-posterior odds per trial. Models could also include a gain parameter applied to evidence that is inconsistent with either the posterior after the last sample (Ln-1) or the prior for the next sample (ψn). We fit models with a variety of different parameter constraints over H conditions, as specified via the tick labels. See Methods for additional model details. Label in black text highlights model used for remaining analyses. 
This model included a gain on inconsistent evidence relative to ψn, and allowed H and noise to vary across H conditions. This model produced BIC scores that were marginally, but not significantly, higher than a less parsimonious model in which the gain parameter was also free to vary across H conditions. c, Weight of residual fluctuations in the MEG lateralization signal from M1 on final choice, over and above the weight on choice exerted by key model variables (n = 29 independent participants; 1 participant for whom regression models did not converge excluded). Effects shown are averaged over final 3 evidence samples (positions 8-10) in the trial, and averaged over H conditions. Colors reflect group-level t-scores. Black contours, p < 0.05, two-tailed cluster-based permutation test; gray contours, largest sub-threshold clusters (p < 0.05, two-tailed t-test). The highlighted frequency band encompassing the majority of the choice-predictive effect in M1 (12-17 Hz) was used to identify choice signals in early visual cortex (main text Fig. 7f).

Extended Data Fig. 9 Effect of sample-wise pupil response (derivative) on source-localized, hemisphere-averaged MEG signal.

Regions of interest (ROIs) are plotted on the cortical surface in top left and top right insets, with colors corresponding to those of ROI-specific time-frequency map titles. Color scale reflects group-level t-scores derived from subject-specific regression coefficients (n = 17 independent participants). Contours, p < 0.05 (two-tailed cluster-based permutation test). Large pupil responses were associated with a strong decrease in low-frequency (<8 Hz) power across all ROIs, a decrease in alpha- and beta-band (8-35 Hz) power predominantly in intraparietal and (pre)motor regions, and a weak increase in high-frequency (65-100 Hz) power in a restricted set of ROIs (uncorrected two-tailed permutation tests on regression coefficients averaged over time-points: VO1/2, p = 0.0004; PHC, p = 0.025; IPS0/1, p = 0.049; IPS2/3, p = 0.010; aIPS, p = 0.003).

Extended Data Fig. 10 Change-point probability modulates normative belief updating and phasic arousal responses more strongly than alternative metrics of surprise.

a, Alternative surprise metrics as a function of posterior belief after previous sample (Ln-1) and evidence provided by the current sample (LLRn). (a1) CPP (see main text). (a2) ‘Unconditional’ surprise, U, defined as Shannon surprise (negative log probability) associated with new sample location (‘locn’), given only knowledge of the generative distributions associated with each environmental state S = {l,r}. In our task, U varied monotonically with absolute evidence strength (|LLR|) and was uncorrelated with log(CPP) (Spearman’s ρ = 0.00). (a4) ‘Conditional’ surprise Z: Shannon surprise associated with new sample, conditioned on both knowledge of the generative distributions and one’s current belief about the environmental state. Z was moderately correlated with log(CPP) in our design (Spearman’s ρ = 0.36). Unlike CPP, which we use solely to decompose normative evidence accumulation and relate it to neurophysiological signals, in some models this form of surprise serves as the objective function to be minimized by the inference algorithm (for example ref. 50). (a3) In our task setting, Z was closely approximated (Spearman’s ρ = 0.996) by a linear combination of log(CPP) and U (weights: w and 1-w, respectively; determined by Nelder-Mead simplex optimization as w = 0.274). b, Characterization of another form of surprise derived from a model of phasic locus coeruleus activity by ref. 36, hence denoted as ‘Dayan & Yu surprise’ and abbreviated to DY. For the oddball target detection task modelled in ref. 36, DY was defined as the ratio between the fixed prior probability of an unlikely environmental state and its posterior probability given a new stimulus. In our 2AFC task, where the prior varies during decision formation, we defined DY as indicated below the heatmap. We found that this DY measure was highly similar to CPP (ρ = 0.97; compare panels b and a1). 
c, Modulations of normative belief updating by CPP, U and Z derived from application of the normative model to a single sequence of 10 million simulated samples, generated using the same statistics as the task in Fig. 1a (main text). For each surprise metric, we fit a linear model to updated prior belief (same form as model 1 in Methods; inset: X, surprise metric). Left: coefficients of partial determination; right: contributions expressed as regression coefficients. CPP exerted the strongest and only positive modulatory effect on evidence weighting, consistent with the observed effects on behavior, cortical dynamics, and pupil (see main text). d, As panel c, but for expanded linear model that included modulations of evidence weighting by CPP and U, which combine linearly into Z. Only CPP yielded a robust, positive effect on evidence weighting. e, Encoding of surprise metrics in pupil response (temporal derivative) to evidence samples (n = 17 independent participants). Encoding time course for Z was computed by a regression model that included X- and Y-gaze positions as nuisance variables. Encoding time courses for CPP and U were derived from an expanded regression model that included both terms. CPP had the strongest effect of the three considered surprise metrics, whereas the univariate effect of Z was almost completely accounted for by a weaker effect of U. Thus, while the magnitude of the pupil response was sensitive to both Z components, CPP was clearly the stronger contributor. Shaded area, s.e.m., significance bars, p < 0.05 (two-tailed cluster-based permutation test).
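
The three surprise metrics in panel a can be sketched for a single sample as follows. This is a hedged illustration, not the authors' code. It assumes Gaussian generative distributions with hypothetical means ±1 and s.d. 1, an illustrative hazard rate H = 0.1, and surprise computed from probability densities. CPP is taken here as the posterior probability, by Bayes' rule, that the state changed given the new sample and the previous posterior. The function and parameter names are invented for this example.

```python
import numpy as np

def gauss_pdf(x, mu, sigma):
    """Gaussian probability density."""
    return np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))

def surprise_metrics(L_prev, x, H=0.1, mu=1.0, sigma=1.0):
    """Return (CPP, U, Z) for sample x, given posterior log-odds L_prev
    (right vs. left state) after the previous sample.
    H, mu and sigma are illustrative assumptions."""
    like_r = gauss_pdf(x, +mu, sigma)   # likelihood under the 'right' state
    like_l = gauss_pdf(x, -mu, sigma)   # likelihood under the 'left' state
    p_r_prev = 1.0 / (1.0 + np.exp(-L_prev))
    p_l_prev = 1.0 - p_r_prev
    # Joint density of the sample with, and without, a state change.
    p_change = H * (p_l_prev * like_r + p_r_prev * like_l)
    p_stay = (1.0 - H) * (p_l_prev * like_l + p_r_prev * like_r)
    cpp = p_change / (p_change + p_stay)
    # 'Unconditional' surprise: both states weighted equally, belief ignored.
    U = -np.log(0.5 * like_l + 0.5 * like_r)
    # 'Conditional' surprise: sample density under the current (post-hazard) belief.
    Z = -np.log(p_change + p_stay)
    return cpp, U, Z

if __name__ == "__main__":
    # Strong belief in the 'right' state, then a sample favouring 'left' or 'right'.
    print(surprise_metrics(3.0, -1.0))
    print(surprise_metrics(3.0, +1.0))
```

In this sketch, a sample that contradicts a strong belief yields higher CPP and Z than an equally strong consistent sample, whereas U depends only on the sample itself.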

Supplementary information

Supplementary Information

Supplementary Note 1.

Supplementary Table

Supplementary Table 1

About this article

Cite this article

Murphy, P.R., Wilming, N., Hernandez-Bocanegra, D.C. et al. Adaptive circuit dynamics across human cortex during evidence accumulation in changing environments. Nat Neurosci 24, 987–997 (2021). https://doi.org/10.1038/s41593-021-00839-z

DOI: https://doi.org/10.1038/s41593-021-00839-z
