Abstract

Well-designed visual displays have the power to convey health messages in clear, effective ways to non-experts, including journalists, patients and policymakers. Poorly designed visual displays, however, can confuse and alienate recipients, undermining health messages. In this Perspective, we propose a structured framework for effective visual communication of health information, using case examples of three common communication tasks: comparing treatment options, interpreting test results, and evaluating risk scenarios. We also show simple, practical ways to evaluate a design’s success and guide improvements. The proposed framework is grounded in research on health risk communication, visualization and decision science, as well as our experience in communicating health data.

Main

Well-designed visual displays have unique potential for communicating complex information. They can show the structure of the data in ways that are impossible with text. They can allow users to explore the data in personally relevant ways. They can improve access for users with limited reading ability. They can reveal holistic (gestalt) patterns not readily rendered in words1,2. Poorly designed visual displays, however, can confuse and alienate recipients, undermining their ability to make sound health decisions and communicate with healthcare providers. In a painful ongoing example, ineffective communications, including poorly executed visuals, have been rife during the COVID-19 pandemic, revealing the limits to current practice in visual communication of health information3.

The potential for visual displays to enhance communication and public health has produced a torrent of visualization research, accelerated by the availability of computer programs for generating them. Most of that research addresses technical issues such as computational efficiency, statistical analyses, database integration or refresh rates, but a growing and important stream of research involves empirical studies of how well displays work for their intended users4,5,6,7,8. These studies, however, typically evaluate the relative usability of displays that differ in some theoretically interesting way (for example, color palette, icon style9), and, as a result, they have little to say about the absolute usability of displays for real-world tasks. These studies suggest design principles for potentially successful displays (for example, which icons work better), but the range and variation in potential displays and potential uses is so complex that studies of general design principles cannot predict how well any one display will perform for its intended task. Nor can they say whether that performance is good enough, given the decisions and health outcomes that depend on it. As a measure of that complexity, one systematic review found that the literature contained evaluations of 392 different combinations of visual display design features and uses10. Therefore, any graphic for conveying health data needs dedicated testing to assess how well it performs with people such as the intended users.

There are many thoughtful summaries of best practices for display design4,11,12, informed, to varying degrees, by behavioral research. However, ‘best practices’ can still be inadequate, depending on the context. In this Perspective, we offer a simple and concise framework for designing visual displays of health information, informed by the experience captured in such best practices, while also recognizing the need for testing all displays when health depends on them. We illustrate the framework with three detailed case examples, showing the kinds of design decisions that are involved in creating visual displays for common communication tasks: comparing treatment options, interpreting test results and evaluating risk scenarios.

Designing effective visual displays

Our framework applies a decision science perspective13, integrating research in health communication, visualization and behavioral science, drawing on our own experience in health communication research and practice. The key steps, which apply to any health communication context, are discussed below and summarized in Box 1 (refs. 14,15).

Analyze the audience members’ decision, to establish communication goals

A disciplined approach to communicating health information begins by setting clear communication goals for a well-defined target audience. This means thinking about the decision that audience members need to take to act appropriately on the information in the display and distinguishing the facts that they most need to know from the facts that it would be nice to know, among all the health facts that could possibly be shared. Decision science has formal procedures for identifying these critical facts16. For example, value-of-information analysis assesses the probability that learning a fact will change a decision17. However, talking to relevant audience members and thinking about the decisions that they face can go a long way toward focusing a communication.
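The value-of-information idea can be made concrete with a minimal sketch. The Python example below considers a hypothetical choice between two actions whose payoffs depend on one uncertain fact. It computes the probability that learning the fact would change the choice, along with the expected gain from learning it. The function name, the action labels, the probability and the utilities are all illustrative assumptions, not values or procedures taken from the cited literature.

```python
def value_of_information(p_fact_true, utilities):
    """Sketch of a value-of-information calculation for one uncertain fact.

    utilities maps each action to a pair:
    (utility if the fact is true, utility if the fact is false).
    All inputs are hypothetical illustration values.
    """
    def expected(action):
        u_true, u_false = utilities[action]
        return p_fact_true * u_true + (1 - p_fact_true) * u_false

    # Best action while the fact is still unknown.
    default = max(utilities, key=expected)

    # Best action once the fact is known to be true, or known to be false.
    best_if_true = max(utilities, key=lambda a: utilities[a][0])
    best_if_false = max(utilities, key=lambda a: utilities[a][1])

    # Probability that learning the fact would change the chosen action.
    p_change = 0.0
    if best_if_true != default:
        p_change += p_fact_true
    if best_if_false != default:
        p_change += 1 - p_fact_true

    # Expected utility gain from learning the fact before choosing.
    informed = (p_fact_true * utilities[best_if_true][0]
                + (1 - p_fact_true) * utilities[best_if_false][1])
    gain = informed - expected(default)
    return default, p_change, gain


# Hypothetical example: the fact is "the treatment works for patients like me".
example = {"treat": (0.9, 0.6), "wait": (0.3, 0.8)}
default, p_change, gain = value_of_information(0.3, example)
print(default, round(p_change, 2), round(gain, 2))
```

A fact with a low probability of changing the choice, or a small expected gain, is a candidate for the "nice to know" category rather than the "need to know" one.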

Describe audience members’ current beliefs to identify communication challenges

Audience members may already know some of the critical facts. Repeating those facts can reinforce that knowledge; however, it can also de-emphasize or bury the facts that recipients do not know already. The only way to learn what people do and do not know is to ask them. Health literacy tests can suggest how much people know in general for the domains captured in their items18,19. However, these tests are unlikely to provide information about the audience’s knowledge of a specific topic.

Design draft displays to address communication challenges, drawing on principles from behavioral research

The limits to human judgment in processing uncertain technical information have been the subject of extensive research13,20. The more that health communicators know about this research and its applications, the better they can create useful draft displays, building on recipients’ strengths and addressing their weaknesses. Some basic design principles for display construction are outlined in Box 1. All displays need iterative testing and refinement. For practical purposes, a ‘think aloud’ protocol21,22 will often suffice: ask a group of people from the target audience, with diverse backgrounds, to say whatever comes into their minds as they examine a draft. Make it clear that they are testing the draft, not being tested themselves. These simple tests routinely reveal content that appeared clear to the drafter but was not clear to the recipient, content that seemed clear but was interpreted differently than intended, content that seemed to go without saying but did not and content that unwittingly struck the wrong tone. By thinking aloud, participants can not only reveal unexpected problems but also suggest ways to remedy them.

Evaluate the final draft to assess its adequacy

Once revisions are complete, the final draft can be evaluated to anticipate users’ experiences with the display. Successful displays achieve high performance and high acceptability. Measures of performance ask how well people could use a display, if they tried. Measures of acceptability ask how willing users are to try. High performance should increase acceptability by rewarding the user’s efforts. High acceptability should increase users’ willingness to invest in the effort that successful performance requires.

For performance measures, we follow Galesic and Garcia-Retamero23, who categorize them as assessing users’ ability to (1) read the data, finding facts in a display and interpreting them as intended; (2) read between the data, comparing facts (for example, the benefits of different treatments); or (3) read beyond the data, applying the facts to practical problems. These steps echo Larkin and Simon2, who argued that whether ‘a diagram is worth ten thousand words’ depends on how well it facilitates three functions: search, recognition and inference. For acceptability measures, we follow Hullman et al.10, who offer a general schema emphasizing satisfaction, reading ease and trust (or confidence).

As we focus on displays for general audiences, we do not consider individual difference predictors of recipients’ performance and judgments of acceptability. Peters provides an accessible general introduction24 to the extensive research literature on measures of numeracy25, perceived numeracy26, literacy, scientific reasoning ability27, decision-making competence28 and health literacy29. Good display design may bring the greatest benefits to individuals with lower levels of these abilities. Because diversity is critical in the development of effective messages, broad recruitment of individuals for the pretests and evaluations is vitally important.

We emphasize testing because it is not only essential but also troublingly rare30. One likely reason for that rarity is that people tend to overestimate how well they understand one another, making testing seem unnecessary31. Indeed, the greater the communicators’ expertise, the greater is their ‘curse of knowledge’, limiting their ability to anticipate recipients’ needs and responses32. As a result, experts may create a display that looks good to them and then mistakenly assume that it works equally well for an audience whose members have very different backgrounds. A second likely reason for the rarity of testing is that ‘testing’ can connote elaborate procedures, beyond the formal skills and material resources of many experts with something to communicate. As a result, we emphasize simple methods that anyone can follow (for example, think aloud protocols), hoping that resources are available for more extensive testing (per the examples below, tested in randomized trials) when individuals’ health depends on understanding communications.

Case examples

Creating successful visual displays requires a design process, informed by principles grounded in behavioral research and disciplined by testing. The set of potentially relevant design principles is too vast to summarize or absorb in one article. Instead, we illustrate design thinking, structured around a simple framework for organizing the design process and basic measures for evaluating its success. We do so with three examples, chosen to represent common health communication tasks for which a visual display may be worth at least a thousand words. These tasks are (1) comparing medical treatments, illustrated with a drug fact box; (2) interpreting medical test results, illustrated with an infographic for a widely distributed coronavirus disease 2019 (COVID-19) home test; and (3) evaluating exposure scenarios, illustrated with the risk heatmap used in negotiations around reopening movie sets before the availability of COVID-19 vaccines. Each example went through multiple rounds of pretesting before evaluation of the final product.

A drug fact box for comparing two medical treatments

Establish communication goals

Informed decisions about medical treatments require estimates of their expected risks, benefits and uncertainties, contrasted with those of alternative treatments. This evidence is often hard for patients to find and sometimes even for providers. Patients and providers need not only trustworthy sources but also quantitative estimates of the expected effects: how large are the expected harms and benefits? How strong is the evidence? Instead, they often find vague verbal quantifiers of promises (‘can improve’) and fears (‘may cause’), sometimes in overwhelming laundry lists of things that can go right and wrong. Many studies document how poorly verbal quantifiers communicate, as they can be interpreted differently by different people in one context and by the same individual in different contexts33,34. For example, one study found that people assigned probabilities ranging from 0% to 48% to the word ‘unlikely’ (ref. 33).

Identify communication challenges

Satisfying users’ essential information need for quantitative estimates means first identifying the outcomes that matter to them; next, estimating these outcomes for alternative treatments (including perhaps no treatment); and, finally, conveying these estimates in useful form. The first challenge is addressed by talking to patients to find out which outcomes matter. The second challenge requires eliciting medical experts’ substantive knowledge. The third challenge requires helping patients make sense of diverse facts, expressed in unfamiliar terms, perhaps swirling in their minds along with counterclaims and intuitive mental models. Each challenge requires respecting the diversity of users whose personal circumstances may make different choices appropriate for them.

Design draft displays

Drug fact boxes35, like the one in Fig. 1, address this challenge, guided by several widely applicable design principles36,37. One principle is structuring the users’ task to contrast the benefits and risks of the treatment options. A second principle is expressing the outcomes in everyday terms, consistent with medical evidence and using numbers to avoid the ambiguity of verbal quantifiers. A third principle is expressing effects as absolute risks, rather than relative ones, avoiding the ambiguity when, for example, ‘doubling’ a risk might mean changing its probability from 10% to 20% or from 0.0001% to 0.0002% over a single dose or a lifetime of usage. Other key principles illustrated by this example include using common units (in this case, percentages), describing the evidence base and giving the gist38 of the uncertainty (for example, bottom of Fig. 1) without cumbersome quantification, such as confidence intervals. Crucially, the fact box’s communication of the Food and Drug Administration (FDA)’s evaluation of the drug takes no stance on patients’ decisions, consistent with ‘FDA approval’ meaning that the FDA allows, but does not recommend, product use. Of note, this example expresses outcomes as both probabilities and frequencies, a practice that later research found unnecessary, as people understood probabilities better39.
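The ambiguity of a ‘doubled’ risk can be shown with simple arithmetic. The short Python sketch below takes the two cases mentioned above (10% to 20% and 0.0001% to 0.0002%) and reports both the relative risk and the absolute difference. The function name and the ‘extra cases per million’ framing are illustrative choices, not part of the drug fact box itself.

```python
def risk_summary(baseline, treated):
    """Return (relative risk, absolute risk difference) for two probabilities."""
    relative_risk = treated / baseline
    absolute_difference = treated - baseline
    return relative_risk, absolute_difference


# The two 'doubling' cases from the text, written as probabilities.
cases = {
    "10% to 20%": (0.10, 0.20),
    "0.0001% to 0.0002%": (0.000001, 0.000002),
}

for label, (baseline, treated) in cases.items():
    rr, diff = risk_summary(baseline, treated)
    extra_per_million = diff * 1_000_000
    print(f"{label}: relative risk {rr:.1f}, "
          f"about {extra_per_million:,.0f} extra cases per million people")
```

Both cases have a relative risk of 2, yet the first implies about 100,000 extra cases per million people and the second about 1, which is why the absolute figures are the ones a reader needs.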

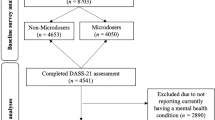

The image shows a drug fact box, created as the intervention component of a randomized trial that compared it to standard drug information on measures of consumer knowledge and judgments about prescription medication choices. The display underwent pretesting (cognitive interviews, before–after studies) and was evaluated in two randomized trials35,36,37.

Evaluate the final draft

The fact box in Fig. 1 has a lot of information, including many numbers. As with all displays, how well it works is an empirical question. It was evaluated in a randomized controlled trial, in which a representative sample of US adults received an actual direct-to-consumer newspaper ad for Pridclo (clopidogrel) to reduce heart attack risk, with either the legally required (small print) ‘brief summary’ or the drug fact box35. The evaluation study asked simple questions assessing users’ ability to read the data, by reporting estimates in the box; to read between the data, by comparing estimates with and without the treatment; and to read beyond the data, by indicating which treatment they would choose, if they had a relevant medical condition. The evaluation study assessed acceptability with simple rating scales for how easy it was to find information in the box, how easy it was to understand that information and how important it was to have it. By all measures, the fact box outperformed the usual ‘brief summary’. In most respects, the box also performed well in absolute terms; that is, it put people in a position to make informed choices. The same was true with three other drug fact boxes tested in the same randomized controlled trial.

An infographic for interpreting test results

Establish communication goals

Throughout the COVID-19 pandemic, testing has been assigned a pivotal role in helping people to protect themselves and others. Recognizing the cost and complexity of administering PCR tests, the United States (and other countries) encouraged use of home antigen tests, with governments providing hundreds of millions of free tests. When the US FDA approves a test, it also approves its instructions, including, crucially, how to interpret and act on test results. However, whereas the FDA requires detailed evidence on the efficacy of the test itself, it requires none for the instructions on test interpretation and subsequent actions, relying instead on regulators’ intuitions about user behavior.

Identify communication challenges

When test results are not definitive, interpreting them requires integrating their uncertain message with the uncertain belief that motivated taking the test, namely, the prior probability of having the condition. With COVID-19 tests, the prior probability should be higher if users have COVID-19-like symptoms or think that they might have been exposed to the virus. However, people often pay undue attention to the test result and insufficient attention to the prior probability, a bias sometimes called ‘base-rate neglect’ in decision science research40. In the context of COVID-19 testing, neglecting an elevated prior probability (due to feeling bad or being exposed) could mean relying too heavily on a test result that might be a false negative, given the home test’s imperfect sensitivity. This misinterpretation would lead, in turn, to taking unrealized and undesired risks. Overcoming this bias is the primary challenge in interpreting test results. Of note, the high specificity of the tests means that false positives are rare, and, when they occur, the result is arguably less problematic than a false negative; a false positive could simply lead to undue caution, if users neglect the prior probability associated with feeling fine and having no suspected exposure.
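The integration of prior probability and test result described above follows Bayes’ rule. The Python sketch below shows, under stated assumptions, how the same negative result leaves very different residual risks for two users. The sensitivity (0.80), specificity (0.99) and the two prior probabilities are hypothetical values chosen for illustration only; they are not performance figures for any actual test kit.

```python
def posterior_infected(prior, sensitivity, specificity, positive):
    """Probability of infection after a test result, by Bayes' rule.

    prior:        probability of infection before testing
    sensitivity:  P(positive result | infected)
    specificity:  P(negative result | not infected)
    positive:     True for a positive result, False for a negative one
    """
    if positive:
        p_result_if_infected = sensitivity
        p_result_if_not = 1 - specificity
    else:
        p_result_if_infected = 1 - sensitivity
        p_result_if_not = specificity
    numerator = prior * p_result_if_infected
    return numerator / (numerator + (1 - prior) * p_result_if_not)


# Hypothetical test characteristics (assumptions, not real kit data).
SENSITIVITY = 0.80
SPECIFICITY = 0.99

# Two hypothetical users who both receive a negative result.
no_symptoms = posterior_infected(0.05, SENSITIVITY, SPECIFICITY, positive=False)
with_symptoms = posterior_infected(0.50, SENSITIVITY, SPECIFICITY, positive=False)

print(f"Negative test, low prior (5%):   {no_symptoms:.1%} still infected")
print(f"Negative test, high prior (50%): {with_symptoms:.1%} still infected")
```

With these assumed numbers, a negative result leaves roughly a 1% chance of infection for the low-prior user but roughly a 17% chance for the user with symptoms or exposure. Treating the two results as equivalent is the base-rate neglect that the display needs to counter.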

Design draft displays

The FDA-approved instructions for the first home test kit with emergency-use authorization followed several sound design principles. These principles included having a single comprehensive document, offering more than one format (a folded pamphlet and an electronic version), providing background information about COVID-19 and the test kit, giving step-by-step instructions for performing the test, answering frequently asked questions and giving test-performance statistics in numeric terms (although, unfortunately, using ones that may have misled users, namely, laboratory-based measures such as ‘percent agreement’ with a PCR test that likely overestimated real-life performance). The FDA-approved document also described what to do with a positive or negative test. However, that section seemed long, cramped and dense, with vital information about test interpretation seemingly buried in general information. It briefly mentioned the implications of having symptoms but not of potential exposures.

We designed the infographic visual display41 in Fig. 2 as a possible alternative. A critical design principle is walking users through the steps of interpreting test results, highlighting their prior probability and its implications for interpreting test results. Other design principles include using colors that evoke the associated action states, varying text size to highlight critical material, adding icons to structure the display and break up the text, including a reminder summary and keeping unnecessary text to a minimum. Unlike the drug fact box and FDA-approved instructions, the display includes no numerical estimates. Recognizing the robustness of the base-rate bias, it does the integration for users.

The image shown was used as the intervention component in a randomized trial to help consumers understand how to act on COVID-19 home test kit results. The infographic was designed according to decision science principles; pretesting included a 'think aloud' protocol. The format was tested in a randomized trial against the FDA-authorized instructions or no instructions; the outcome measure was the proportion of participants following CDC guidelines for a given test result. The format proved superior to the approved instructions, which, in some cases, were worse than no instructions at all41.

Evaluate the final draft

In a randomized controlled trial41, members of a diverse (but not nationally representative) online sample were randomized to receive the FDA-authorized instructions, the visual display in Fig. 2 or no instructions at all. In each condition, we tested their ability to read the data by asking for the likelihood of being infected, given a positive or negative test result. The judged likelihood was appropriately higher when participants were asked to assume symptoms, exposure and a positive result, indicating both knowledge and attention to the instructions.

We tested participants’ ability to read beyond the data by asking what action they would take, with and without prior exposures or COVID-19-like symptoms. Our primary outcome was whether they chose actions consistent with US Centers for Disease Control and Prevention (CDC) guidelines. When asked to assume a positive test result, almost all participants in each group reported the appropriate action (that is, quarantine). However, when asked to assume a negative test result and symptoms or exposure, they were more likely to report the appropriate action with our visual display than with the approved instructions, which were sometimes worse than no instructions at all. When asked to assume a negative result and no symptoms or exposure, the groups responded similarly (that is, no quarantine), meaning that the visual display did not merely produce more cautious behavior in general; rather, it encouraged behavior in line with CDC guidance. Despite its superior performance, the visual display was rated similarly to the authorized instructions on three measures of acceptability: usefulness, helpfulness and reading ease. Thus, similar acceptability ratings are no guarantee of similar performance.

A risk heatmap for evaluating risk scenarios

Establish communication goals

Movie sets were one of many workplaces closed in the COVID-19 lockdown. They might have been one of the most challenging to reopen, with critical work requiring close, unmasked interpersonal contact. The governors of two of the three US states with the largest movie industries (California and New York but not Georgia) asked the industry to produce a reopening proposal for their approval. Four union-led groups coalesced around a single plan, ‘the Safe Way Forward’ (ref. 42), as a basis for negotiating with the studios and the states. The plan specified work practices such as no buffet, no shared makeup and restricted access to the close contact zone (for example, no catering or drivers).

The plan, however, left open the amount of testing required. Instead, it used the eight risk heatmaps43 in Fig. 3 to show the disease risks associated with multiple scenarios, involving different testing protocols and pandemic conditions. Visual displays are well suited to presenting such complex information. In their negotiations, the states, studios and unions had expert support in interpreting the heatmaps. Once the sets were opened, however, individual workers were on their own. Each had to decide whether the risks were personally acceptable. The heatmaps could inform these decisions, if users could understand them.

The image shown was evaluated in a survey-based study in which respondents, drawn from an online convenience sample, were given the risk map with brief instructions and asked a series of questions assessing comprehension and the ability to use the map information appropriately. The figure shows the expected number of COVID-19 cases (left) and probability of at least one case (right) for four testing frequencies. ‘Zone A’ denotes the close contact zone. The bar at the right shows the risk associated with the color for combinations of community infection (% CI) and community transmission (R0) rates for a 30-day shoot with 100 people, following the Safe Way Forward42 protocol43.

Identify communication challenges

For most people, the heatmaps are an unfamiliar display, with an unfamiliar kind of data (risk estimates based on model simulations), determined by three unfamiliar parameters: testing frequency, community infection rate (% CI) and community transmission rate (R0). They hold constant two other parameters: the on-set transmission rate, reflecting the practices in the plan (R0 = 0.95), and the extent of the exposure (30 days for 100 people). Successful users would have to be able to orient themselves in this space, interpret its elements and use them appropriately.
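To illustrate how the two measures shown in the heatmaps (expected number of cases and probability of at least one case) relate to each other, the Python sketch below uses a deliberately simplified assumption: every person on set has the same, independent chance of infection over the shoot. This is not the simulation model behind Fig. 3, and the per-person risk of 1% is a hypothetical value; only the group size of 100 comes from the text.

```python
def group_risk(per_person_risk, group_size):
    """Return (expected cases, probability of at least one case).

    Simplifying assumption: each person has the same risk and
    infections are independent, which real outbreaks are not.
    """
    expected_cases = group_size * per_person_risk
    p_at_least_one = 1 - (1 - per_person_risk) ** group_size
    return expected_cases, p_at_least_one


# Hypothetical per-person risk over the shoot; group size from the text.
expected_cases, p_any = group_risk(per_person_risk=0.01, group_size=100)
print(f"Expected cases: {expected_cases:.1f}")
print(f"Probability of at least one case: {p_any:.0%}")
```

Under these assumptions, one expected case among 100 people corresponds to roughly a 63% chance of at least one case. The two measures can therefore leave quite different impressions of the same scenario, which is one reason users need help orienting themselves in the display.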

Design draft displays

The heatmaps embody several design principles. They structure a complex domain in an orderly, transparent way and focus on the dimensions most critical to determining risks. They use an intuitive color scheme44. They convey an appropriate degree of precision and allow users to find risk estimates relevant to their own circumstances. In addition, the accompanying text introduces the heatmap elements sequentially, rather than throwing users into a complex display. Another key principle is incorporated in the simple and supportive writing style, assuming that users will understand. The text within the display, along with the rest of the Safe Way Forward plan, was iteratively revised in consultation with union members, addressing the need for pretesting.

Evaluate the final draft

A study was conducted43 to evaluate whether non-experts could understand and use the heatmaps when presented as they were in the Safe Way Forward. The study used a diverse online sample (like that in the previous study). Participants were asked to imagine being a star who wanted a role but did not need the money. Researchers then explained the display elements sequentially, indicating that (1) the color shows the risk level; (2) the bar on the right shows what the color means; (3) the horizontal axis shows how high the disease level is in the community (% CI); (4) the vertical axis shows how fast the disease is going around (R0); and (5) the panel shows the frequency of testing. Participants considered heatmaps for one of the two measures: one person’s probability of getting COVID-19 (on the right) or the expected number of cases (on the left).

Study tasks asked participants to read the data, by reporting the risk associated with one combination of % CI and R0 values, for each heatmap; to read between the data, by reporting whether they would accept the risk for five (% CI, R0) combinations in each heatmap; and to read beyond the data, by reporting the minimal acceptable testing frequency for three (% CI, R0) combinations. Open-ended questions asked participants to elaborate on these structured responses. Their performance revealed substantial mastery of all three tasks. On the one acceptability question, most reported that the heatmaps were well explained.

Conclusion

Visual displays can be effective ways of communicating complex information. Computer programs now offer vast arrays of options to even casual users (for example, templates, colors, icons, layouts). Whether visual displays fulfill their potential depends on how well the intended audience can use them (performance) and how willing they are to try (acceptability). The growing behavioral research literature on visualization4,6,7, building on generations of prior research on perceptual and cognitive processes, offers design principles that can help professionals design effective displays for the endless possible combinations of tasks, topics, users and settings.

Effective communication of health data is more important than ever before, particularly given the prominence of misinformation and the myriad sources of online information. By providing a simple framework and illustrative case examples for use of visual displays of healthcare information, we hope to have helped medical professionals in this vital mission.

References

Tversky, B. Visualizing thought. Top. Cogn. Sci. 3, 499–535 (2011).

Larkin, J. H. & Simon, H. A. Why a diagram is (sometimes) worth ten thousand words. Cogn. Sci. 11, 65–100 (1987).

COVID Crisis Group. Lessons from the COVID War: an Investigative Report (PublicAffairs, 2023).

Franconeri, S. L., Padilla, L. M., Shah, P., Zacks, J. M. & Hullman, J. The science of visual data communication: what works. Psychol. Sci. Public Interest 22, 110–161 (2021).

Hildon, Z., Allwood, D. & Black, N. Impact of format and content of visual display of data on comprehension, choice and preference: a systematic review. Int. J. Qual. Health Care 24, 55–64 (2012).

Sibrel, S. C., Rathore, R., Lessard, L. & Schloss, K. B. The relation between color and spatial structure for interpreting colormap data visualizations. J. Vis. 20, 7 (2020).

Spicer, J., Zhu, J. Q., Chater, N. & Sanborn, A. N. Perceptual and cognitive judgments show both anchoring and repulsion. Psychol. Sci. 33, 1395–1407 (2022).

Zhang, Y. et al. Mapping the landscape of COVID-19 crisis visualizations. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems 1–23 (ACM, 2021).

Zikmund-Fisher, B. J. et al. Blocks, ovals, or people? Icon type affects risk perceptions and recall of pictographs. Med. Decis. Making 34, 443–453 (2014).

Hullman, J., Qiao, X., Correll, M., Kale, A. & Kay, M. In pursuit of error: a survey of uncertainty visualization evaluation. IEEE Trans. Vis. Comput. Graph. 25, 903–913 (2019).

National Cancer Institute Office of Communications and Education. Making Data Talk: a Workbook https://www.cancer.gov/publications/health-communication/making-data-talk.pdf (2011).

Centers for Disease Control and Prevention. Visual Communication Resources https://www.cdc.gov/healthliteracy/developmaterials/visual-communication.html (2023).

Kahneman, D. Thinking, Fast and Slow (Farrar, Straus and Giroux, 2013).

Fischhoff, B. & Broomell, S. B. Judgment and decision making. Annu. Rev. Psychol. 71, 331–355 (2020).

Fischhoff, B. The sciences of science communication. Proc. Natl Acad. Sci. USA 110, 14033–14039 (2013).

Bruine de Bruin, W. & Bostrom, A. Assessing what to address in science communication. Proc. Natl Acad. Sci. USA 110, 14062–14068 (2013).

von Winterfeldt, D. & Edwards, W. Decision Analysis and Behavioral Research (Cambridge University Press, 1986).

Centers for Disease Control and Prevention. Health Literacy https://www.cdc.gov/healthliteracy/index.html (2023).

Santana, S. et al. Updating health literacy for Healthy People 2030: defining its importance for a new decade in public health. J. Public Health Manag. Pract. 27, S258–S264 (2021).

Tversky, A. & Kahneman, D. Judgment under uncertainty: heuristics and biases. Science 185, 1124–1131 (1974).

Ericsson, K. A. & Simon, H. A. Protocol Analysis: Verbal Reports as Data (MIT Press, 1993).

Merton, R. K. The focused interview and focus groups: continuities and discontinuities. Public Opin. Q. 51, 550–566 (1987).

Galesic, M. & Garcia-Retamero, R. Graph literacy: a cross-cultural comparison. Med. Decis. Making 31, 444–457 (2011).

Peters, E. Innumeracy in the Wild: Misunderstanding and Misusing Numbers (Oxford University Press, 2020).

Peters, E. et al. Numeracy and decision making. Psychol. Sci. 17, 407–413 (2006).

Fagerlin, A. et al. Measuring numeracy without a math test: development of the subjective numeracy scale. Med. Decis. Making 27, 672–680 (2007).

Drummond, C. & Fischhoff, B. Development and validation of the scientific reasoning scale. J. Behav. Decis. Mak. 30, 26–38 (2017).

Parker, A. M., Bruine de Bruin, W., Fischhoff, B. & Weller, J. Robustness of decision-making competence: evidence from two measures and an 11-year longitudinal study. J. Behav. Decis. Mak. 31, 380–391 (2018).

Nutbeam, D. Health literacy as a public health goal: a challenge for contemporary health education and communication strategies into the 21st century. Health Promot. Int. 15, 259–267 (2000).

Morgan, K. & Fischhoff, B. Mental models for scientists communicating with the public. Issues Sci. Technol. 39, 58–61 (2023).

Nickerson, R. S. How we know—and sometimes misjudge—what others know: imputing one’s own knowledge to others. Psychol. Bull. 125, 737–759 (1999).

Tullis, J. G. & Feder, B. The ‘curse of knowledge’ when predicting others’ knowledge. Mem. Cogn. https://doi.org/10.3758/s13421-022-01382-3 (2022).

Woloshin, K. K., Ruffin, M. T. 4th & Gorenflo, D. W. Patients’ interpretation of qualitative probability statements. Arch. Fam. Med. 3, 961–966 (1994).

Bryant, G. D. & Norman, G. R. Expressions of probability: words and numbers. N. Engl. J. Med. 302, 411 (1980).

Schwartz, L. M., Woloshin, S. & Welch, H. G. Using a drug facts box to communicate drug benefits and harms: two randomized trials. Ann. Intern. Med. 150, 516–527 (2009).

Schwartz, L. M. & Woloshin, S. The drug facts box: improving the communication of prescription drug information. Proc. Natl Acad. Sci. USA 110, 14069–14074 (2013).

Schwartz, L. M., Woloshin, S. & Welch, H. G. The drug facts box: providing consumers with simple tabular data on drug benefit and harm. Med. Decis. Making 27, 655–662 (2007).

Reyna, V. F. A scientific theory of gist communication and misinformation resistance, with implications for health, education, and policy. Proc. Natl Acad. Sci. USA 118, e1912441117 (2021).

Woloshin, S. & Schwartz, L. M. Communicating data about the benefits and harms of treatment: a randomized trial. Ann. Intern. Med. 155, 87–96 (2011).

Kahneman, D. & Tversky, A. On the psychology of prediction. Psychol. Rev. 80, 237–251 (1973).

Woloshin, S., Dewitt, B., Krishnamurti, T. & Fischhoff, B. Assessing how consumers interpret and act on results from at-home COVID-19 self-test kits: a randomized clinical trial. JAMA Intern. Med. 182, 332–341 (2022).

Directors Guild of America, Screen Actors Guild–American Federation of Television and Radio Artists, International Alliance of Theatrical Stage Employees & Teamsters Motion Picture & Theatrical Trade Division. The Safe Way Forward: a Joint Report of the DGA, SAG-AFTRA, IATSE and Teamsters’ Committees for COVID-19 Safety Guidelines https://www.sagaftra.org/files/sa_documents/ProductionSafetyGuidelines_June2020EditedP.pdf (2023).

Rodriguez, V. L., Fischhoff, B. & Davis, A. L. Risk heatmaps as visual displays: opening movie studios after the COVID-19 shutdown. Risk Anal. https://doi.org/10.1111/risa.14017 (2022).

Moreland, K. Why we use bad color maps and what you can do about it. Electron. Imaging 28, art00022 (2016).

Acknowledgements

Preparation of this Perspective was supported in part by the CDC through a financial assistance award to the Department of Machine Learning, Carnegie Mellon University. The contents are those of the authors and do not necessarily represent the official views of, nor an endorsement by, the CDC–HHS or the US Government. The sponsor had no role in the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Medicine thanks the anonymous reviewers for their contribution to the peer review of this work. Primary Handling Editor: Karen O’Leary, in collaboration with the Nature Medicine team.

About this article

Cite this article

Woloshin, S., Yang, Y. & Fischhoff, B. Communicating health information with visual displays. Nat Med 29, 1085–1091 (2023). https://doi.org/10.1038/s41591-023-02328-1

DOI: https://doi.org/10.1038/s41591-023-02328-1