Abstract

With an estimated 160,000 deaths in 2018, lung cancer is the most common cause of cancer death in the United States1. Lung cancer screening using low-dose computed tomography has been shown to reduce mortality by 20–43% and is now included in US screening guidelines1,2,3,4,5,6. Existing challenges include inter-grader variability and high false-positive and false-negative rates7,8,9,10. We propose a deep learning algorithm that uses a patient’s current and prior computed tomography volumes to predict the risk of lung cancer. Our model achieves a state-of-the-art performance (94.4% area under the curve) on 6,716 National Lung Cancer Screening Trial cases, and performs similarly on an independent clinical validation set of 1,139 cases. We conducted two reader studies. When prior computed tomography imaging was not available, our model outperformed all six radiologists with absolute reductions of 11% in false positives and 5% in false negatives. Where prior computed tomography imaging was available, the model performance was on-par with the same radiologists. This creates an opportunity to optimize the screening process via computer assistance and automation. While the vast majority of patients remain unscreened, we show the potential for deep learning models to increase the accuracy, consistency and adoption of lung cancer screening worldwide.

This is a preview of subscription content, access via your institution

Access options

Access Nature and 54 other Nature Portfolio journals

Get Nature+, our best-value online-access subscription

$29.99 / 30 days

cancel any time

Subscribe to this journal

Receive 12 print issues and online access

$209.00 per year

only $17.42 per issue

Buy this article

- Purchase on Springer Link

- Instant access to full article PDF

Prices may be subject to local taxes which are calculated during checkout

Similar content being viewed by others

Data availability

This study used three datasets that are publicly available: LUNA: https://luna16.grand-challenge.org/data/; LIDC: https://wiki.cancerimagingarchive.net/display/Public/LIDC-IDRI; NLST: https://biometry.nci.nih.gov/cdas/learn/nlst/images/

The dataset from Northwestern Medicine was used under license for the current study, and is not publicly available.

Code availability

The code used for training the models has a large number of dependencies on internal tooling, infrastructure and hardware, and its release is therefore not feasible. However, all experiments and implementation details are described in sufficient detail in the Methods section to allow independent replication with non-proprietary libraries. Several major components of our work are available in open source repositories: Tensorflow: https://www.tensorflow.org; Tensorflow Estimator API: https://www.tensorflow.org/guide/estimators; Tensorflow Object Detection API: https://github.com/tensorflow/models/tree/master/research/object_detection—the lung segmentation model and cancer ROI detection model were trained using this framework; Inflated Inception: https://github.com/deepmind/kinetics-i3d—the full-volume model and the second-stage model were trained using this feature extractor.

Change history

28 June 2019

An amendment to this paper has been published and can be accessed via a link at the top of the paper.

References

American Lung Association. Lung cancer fact sheet. American Lung Association http://www.lung.org/lung-health-and-diseases/lung-disease-lookup/lung-cancer/resource-library/lung-cancer-fact-sheet.html (accessed 11 September 2018).

Jemal, A. & Fedewa, S. A. Lung cancer screening with low-dose computed tomography in the United States—2010 to 2015. JAMA Oncol. 3, 1278 (2017).

US Preventive Services Task Force. Final update summary: lung cancer: screening (1AD). US Preventive Services Task Force https://www.uspreventiveservicestaskforce.org/Page/Document/UpdateSummaryFinal/lung-cancer-screening (2018).

National Lung Screening Trial Research Team et al. Reduced lung-cancer mortality with low-dose computed tomographic screening. N. Engl. J. Med. 365, 395–409 (2011).

Black, W. C. et al. Cost-effectiveness of CT screening in the National Lung Screening Trial. N. Engl. J. Med. 371, 1793–1802 (2014).

Lung CT screening reporting & data system. American College of Radiology https://www.acr.org/Clinical-Resources/Reporting-and-Data-Systems/Lung-Rads (accessed 11 September 2018).

van Riel, S. J. et al. Observer variability for Lung-RADS categorisation of lung cancer screening CTs: impact on patient management. Eur. Radiol. 29, 924–931 (2019).

Singh, S. et al. Evaluation of reader variability in the interpretation of follow-up CT scans at lung cancer screening. Radiology 259, 263 (2011).

Mehta, H. J., Mohammed, T.-L. & Jantz, M. A. The American College of Radiology lung imaging reporting and data system: potential drawbacks and need for revision. Chest 151, 539–543 (2017).

Martin, M. D., Kanne, J. P., Broderick, L. S., Kazerooni, E. A. & Meyer, C. A. Lung-RADS: pushing the limits. Radiographics 37, 1975–1993 (2017).

Winkler Wille, M. M. et al. Predictive accuracy of the pancan lung cancer risk prediction model—external validation based on CT from the Danish Lung Cancer Screening Trial. Eur. Radiol. 25, 3093–3099 (2015).

De Koning, H., Van Der Aalst, K., Ten Haaf, M. & Oudkerk, H. D. K. C. PL02.05 Effects of volume CT lung cancer screening: mortality results of the NELSON randomised-controlled population based tria. J. Thorac. Oncol. 13, S185 (2018).

Field, J. K. et al. UK Lung Cancer RCT Pilot Screening Trial: baseline findings from the screening arm provide evidence for the potential implementation of lung cancer screening. Thorax 71, 161–170 (2016).

McMahon, P. M. et al. Cost-effectiveness of computed tomography screening for lung cancer in the United States. J. Thorac. Oncol. 6, 1841–1848 (2011).

Goffin, J. R. et al. Cost-effectiveness of lung cancer screening in canada. JAMA Oncol. 1, 807 (2015).

Tomiyama, N. et al. CT-guided needle biopsy of lung lesions: a survey of severe complication based on 9783 biopsies in Japan. Eur. J. Radiol. 59, 60–64 (2006).

Wiener, R. S., Schwartz, L. M., Woloshin, S. & Welch, H. G. Population-based risk for complications after transthoracic needle lung biopsy of a pulmonary nodule: an analysis of discharge records. Ann. Intern. Med. 155, 137–144 (2011).

Ciompi, F. et al. Towards automatic pulmonary nodule management in lung cancer screening with deep learning. Sci. Rep. 7, 46479 (2017).

Gillies, R. J., Kinahan, P. E. & Hricak, H. Radiomics: images are more than pictures, they are data. Radiology 278, 563–577 (2016).

Gulshan, V. et al. Development and validation of a deep learning algorithm for detection of diabetic retinopathy in retinal fundus photographs. JAMA 316, 2402–2410 (2016).

Bogoni, L. et al. Impact of a computer-aided detection (CAD) system integrated into a picture archiving and communication system (PACS) on reader sensitivity and efficiency for the detection of lung nodules in thoracic CT exams. J. Digit. Imaging 25, 771–781 (2012).

Ye, Xujiong et al. Shape-based computer-aided detection of lung nodules in thoracic CT images. IEEE Trans. Biomed. Eng. 56, 1810–1820 (2009).

Bellotti, R. et al. A CAD system for nodule detection in low-dose lung CTs based on region growing and a new active contour model. Med. Phys. 34, 4901–4910 (2007).

Sahiner, B. et al. Effect of CAD on radiologists’ detection of lung nodules on thoracic CT scans: analysis of an observer performance study by nodule size. Acad. Radiol. 16, 1518–1530 (2009).

Firmino, M., Angelo, G., Morais, H., Dantas, M. R. & Valentim, R. Computer-aided detection (CADe) and diagnosis (CADx) system for lung cancer with likelihood of malignancy. Biomed. Eng. Online 15, 2 (2016).

Armato, S. G. et al. Lung cancer: performance of automated lung nodule detection applied to cancers missed in a CT screening program. Radiology 225, 685–692 (2002).

Valente, I. R. S. et al. Automatic 3D pulmonary nodule detection in CT images: a survey. Comput. Methods Prog. Biomed. 124, 91–107 (2016).

Das, M. et al. Performance evaluation of a computer-aided detection algorithm for solid pulmonary nodules in low-dose and standard-dose MDCT chest examinations and its influence on radiologists. Br. J. Radiol. 81, 841–847 (2008).

Quantitative Insights. Quantitative Insights gains industry’s first FDA clearance for machine learning driven cancer diagnosis. PRNewswire https://www.prnewswire.com/news-releases/quantitative-insights-gains-industrys-first-fda-clearance-for-machine-learning-driven-cancer-diagnosis-300495405.html (2018).

Russakovsky, O. et al. ImageNet large scale visual recognition challenge. Int. J. Comput. Vis. 115, 211–252 (2015).

Lin, T.-Y. et al. Microsoft COCO: Common Objects in Context. Comput. Vis. ECCV 2014, 740–755 (2014).

Liao, F., Liang, M., Li, Z., Hu, X. & Song, S. Evaluate the malignancy of pulmonary nodules using the 3D deep leaky noisy-or network. Preprint at https://arxiv.org/abs/1711.08324 (2017).

Pinsky, P. F. et al. Performance of Lung-RADS in the national lung screening trial: a retrospective assessment. Ann. Intern. Med. 162, 485 (2015).

Manos, D. et al. The Lung Reporting and Data System (LU-RADS): a proposal for computed tomography screening. Can. Assoc. Radiol. J. 65, 121–134 (2014).

Sun, Y. & Sundararajan, M. Axiomatic attribution for multilinear functions. In Proc. 12th ACM Conference on Electronic Commerce—EC ’11 https://doi.org/10.1145/1993574.1993601 (2011).

Varela, C., Karssemeijer, N., Hendriks, J. H. C. L. & Holland, R. Use of prior mammograms in the classification of benign and malignant masses. Eur. J. Radiol. 56, 248–255 (2005).

Trajanovski, S. et al. Towards radiologist-level cancer risk assessment in CT lung screening using deep learning. Preprint at https://arxiv.org/abs/1804.01901 (2019).

Pinsky, P. F., Gierada, D. S., Nath, P. H., Kazerooni, E. & Amorosa, J. National lung screening trial: variability in nodule detection rates in chest CT studies. Radiology 268, 865–873 (2013).

Armato, S. G. 3rd et al. The Lung Image Database Consortium (LIDC): an evaluation of radiologist variability in the identification of lung nodules on CT scans. Acad. Radiol. 14, 1409–1421 (2007).

Kazerooni, E.A. et al. ACR–STR practice parameter for the performance and reporting of lung cancer screening thoracic computed tomography (CT). J. Thorac. Imaging 29, 310–316 (2014).

National Cancer Institute. National Lung Screening Trial https://www.cancer.gov/types/lung/research/nlst (2018)

The American College of Radiology. Adult lung cancer screening specifications. https://www.acr.org/Clinical-Resources/Lung-Cancer-Screening-Resources (2014).

Abadi, M. et al. Tensorflow: a system for large-scale machine learning. OSDI 16, 265–283 (2016).

He, K., Gkioxari, G., Dollar, P. & Girshick, R. Mask R-CNN. In IEEE International Conference on Computer Vision (ICCV) https://doi.org/10.1109/iccv.2017.322 (IEEE, 2017).

Setio, A. A. A. et al. Validation, comparison, and combination of algorithms for automatic detection of pulmonary nodules in computed tomography images: The LUNA16 challenge. Med. Image Anal. 42, 1–13 (2017).

Huang, J. et al. Speed/accuracy trade-offs for modern convolutional object detectors. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR) https://doi.org/10.1109/cvpr.2017.351 (IEEE, 2017).

Lin, T.-Y., Goyal, P., Girshick, R., He, K. & Dollar, P. Focal loss for dense object detection. IEEE Trans. Pattern Anal. Mach. Intell. Preprint at https://doi.org/10.1109/TPAMI.2018.2858826 (2018).

Lin, T.-Y. et al. Feature pyramid networks for object detection. in IEEE Conference on Computer Vision and Pattern Recognition (CVPR) https://doi.org/10.1109/cvpr.2017.106 (IEEE, 2017).

Carreira, J. & Zisserman, A. Quo vadis, action recognition? A new model and the kinetics dataset. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR) https://doi.org/10.1109/cvpr.2017.502 (IEEE, 2017).

Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J. & Wojna, Z. Rethinking the Inception architecture for computer vision. In IEEE Conference on Computer Vision and Pattern Recognition (CVPR) https://doi.org/10.1109/cvpr.2016.308 (IEEE, 2017).

J. Deng et al. ImageNet: a large-scale hierarchical image database. In IEEE Conference on Computer Vision and Pattern Recognition https://doi.org/10.1109/cvprw.2009.5206848 (IEEE, 2009).

Chihara, L. M. & Hesterberg, T. C. Mathematical Statistics with Resampling and R (John Wiley & Sons, 2014).

Acknowledgements

The authors acknowledge the NCI and the Foundation for the National Institutes of Health for their critical roles in the creation of the free publicly available LIDC/IDRI/NLST Database used in this study. All participants enrolled in NLST signed an informed consent developed and approved by the screening center’s IRBs, the NCI IRB and the Westat IRB. The authors thank the NCI for access to NCI data collected by the NLST. The statements herein are solely those of the authors and do not represent or imply concurrence or endorsement by the NCI. The authors would like to thank M. Etemadi and his team at Northwestern Medicine for data collection, de-identification and research support. These team members include E. Johnson, F. Garcia-Vicente, D. Melnick, J. Heller and S. Singh. We also thank C. Christensen and his team at Northwestern Medicine IT, including M. Lombardi, C. Wilbar and R. Atanasiu. We would also like to acknowledge the work of the team working on labeling infrastructure and, specifically, J. Yoshimi, who implemented many of the features we needed for labeling of ROIs, and J. Wong for coordinating and recruiting radiologist labelers. We would also like to acknowledge the work of the team that put together the data-handling infrastructure, including G. Duggan and K. Eswaran. Lastly, we would like to acknowledge the helpful feedback on the initial drafts from Y. Liu and S. McKinney.

Author information

Authors and Affiliations

Contributions

D.A., A.P.K., S.B. and B.C. developed the network architecture and data/modeling infrastructure, training and testing setup. D.A. and A.P.K. created the figures, wrote the methods and performed additional analysis requested in the review process. J.J.R., S.S., D.T., D.A., A.P.K. and S.B. wrote the manuscript. D.P.N. and J.J.R. provided clinical expertise and guidance on the study design. G.C and S.S. advised on the modeling techniques. M.E., S.S., J.J.R., B.C., W.Y. and D.A. created the datasets, interpreted the data and defined the clinical labels. D.A., B.C., A.P.K. and S.S. performed statistical analysis. S.S, L.P. and D.T. initiated the project and provided guidance on the concept and design. S.S. and D.T supervised the project.

Corresponding author

Ethics declarations

Competing interests

D.P.N. and J.J.R. are paid consultants of Google Inc. D.P.N. is on the Medical Advisory Board of VIDA Diagnostics, Inc. and Exact Sciences. M.E.’s lab received funding from Google Inc. to support the research collaboration. This study was funded by Google Inc. The remaining authors are employees of Google Inc. and own stock as part of the standard compensation package. The authors have no other competing interests to disclose.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

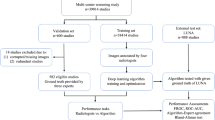

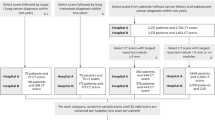

Extended Data Fig. 1 NLST STARD diagram.

a, Diagram describing exclusions made in our analysis. b, Table describing exclusions made by the NCI when selecting images to release from NLST. Note that there were 623 screen-detected cancers but a total of 638 cancer-positive patients. The additional 15 patients were diagnosed during the screening window, but not due to a positive screening result. In this case Row 3 ‘Relevant Images’ meant that, for cancer-positive patients, there were images from the year of the cancer diagnosis, and for cancer-negative patients it meant that all 3 years of screening images were available. Note that the publicly available version of NLST downsampled the screening groups 3 (no nodule, some abnormality) and 4 (no nodule, no abnormalities). In Extended Data Figs. 2, 3, 4 and Supplementary Table 4 we present another version of the main analysis that compensates for this downsampling by upweighting patients within these groups.

Extended Data Fig. 2 Results from the reader study—lung cancer screening on a single CT volume: reweighted.

a–e, Identical to Fig. 2, except that we took into account the biased sampling done in the selection of the NLST data released. This meant that examples in screening groups 3 (no nodule, some abnormality) and 4 (no nodule, no abnormality) were upweighted by the same factor by which they were downsampled (see Extended Data Fig. 1 for further details on the groups). Model performance shown in the AUC curve and summary tables is based on case-level malignancy score. LUMAS buckets refers to operating points selected to match the predicted probability of cancer for Lung-RADS 3+, 4A+ and 4B/X. a, Performance of model (blue line) versus average radiologist for various Lung-RADS categories (crosses) using a single CT volume. The lengths of the crosses represent the confidence intervals. The area highlighted in blue is magnified in b to show the performance of each of the six radiologists at various Lung-RADS risk buckets. c, Sensitivity comparison between model and average radiologist. d, Specificity comparison between model and average radiologist. Both sensitivity and specificity analysis were conducted with n = 507 volumes from 507 patients, with P values computed using a two-sided permutation test with 10,000 random resamplings of the data. e, Hit rate localization analysis used to measure how often the model correctly localized a cancerous lesion.

Extended Data Fig. 3 Results from the reader study—lung cancer screening using current and prior CT volume: reweighted.

a–e, Identical to Fig. 3, except that we took into account the sampling done in the selection of the 15,000 patient NLST data released. This meant that for screening groups 3 (no nodule, some abnormality) and 4 (no nodule, no abnormality) we upweighted each example by the same factor by which they were downsampled. Model performance in the AUC curve and summary tables is based on case-level malignancy score. The term LUMAS buckets refers to operating points selected to represent sensitivity/specificity at the 3+, 4A+ and 4B/X thresholds. a, Performance of model (blue line) versus average radiologist at various Lung-RADS categories (crosses) using a CT volume and a prior CT volume for a patient. The length of the crosses represents the 95% confidence interval. The area highlighted in blue is magnified in b to show the performance of each of the six radiologists at various Lung-RADS categories in this reader study. c, Sensitivity comparison between model and average radiologist. d, Specificity comparison between model and average radiologist. Both sensitivity and specificity analysis were conducted with n = 308 volumes from 308 patients with P values computed using a two-sided permutation test with 10,000 random resamplings of the data. e, Hit rate localization analysis used to measure how often the model correctly localized a cancerous lesion.

Extended Data Fig. 4 Results from the full NLST test set and independent test set: reweighted.

a,b, Identical to Fig. 4 except that we took into account the biased sampling done in the selection of the NLST data released. This meant that for screening groups 3 (no nodule, some abnormality) and 4 (no nodule, no abnormality) we upweighted each example by the same factor by which they were downsampled. The comparison was performed on n = 6,716 cases, using a two-sided permutation test with 10,000 random resamplings of the data. a, Comparison of model performance to NLST reader performance on the full NLST test set. NLST reader performance was estimated by retrospectively applying Lung-RADS 3 criteria to the NLST reads. b, Sensitivity and specificity comparisons between the model and Lung-RADS retrospectively applied to NLST reads.

Extended Data Fig. 5 Independent dataset ROC curve and intersection over union for localization.

a, AUC curve for the independent data test set with n = 1,139 cases using a two-sided permutation test with 10,000 random resamplings of the data. b, For each detection that was a ‘hit’ (overlapped with a labeled malignancy), this plot shows the volume of the intersection between the detection and the ground truth divided by the volume of the union of the ground truth and the detection. In 3D, intersection over union (IOU) drops much faster than in two dimensions (2D). For example, given a 1-mm3 nodule and a correctly centered 2-mm3 bounding box, the resulting IOU will be 0.125. In 2D, a similar situation would result in an IOU of 0.25.

Extended Data Fig. 6 Examples of ROIs from the detection model and examples of cases where the model prediction differs from the consensus grade.

a, Example slices from cancer ROIs (cyan) determined by bounding boxes (red) detected by the cancer ROI detection model. The final classification model uses the larger additional context as input illustrated by the cyan ROI. b, Sample alignment of prior CT with current CT based on the detected cancer bounding box, which is performed by centering both sub-volumes at the center of their respective detected bounding boxes. When a prior detection is not available, the lung center is used for an approximate alignment. Note that features derived from this large, 90-mm3 context are compared for classification at a late stage in the model after several max-pooling layers that can discard spatial information. Therefore, a precise voxel-to-voxel alignment is not necessary. c, Example cancer-negative case with scarring that was correctly downgraded from a consensus grade of Lung-RADS 4B to LUMAS 1/2 by the model. d, Example cancer-positive case with a nodule (size graded as 7–12 mm, depending on the radiologist) correctly upgraded from grades of Lung-RADS 3 and 4A (depending on the radiologist) to LUMAS 4B/X by the model.

Extended Data Fig. 7 Attribution maps generated using integrated gradients.

a, Example of model attributions for a cancer-positive case. The top row shows the input volume for the full-volume and cancer risk prediction models, respectively. The lower row shows the attribution overlay with positive (magenta) and negative (blue) region contributions to the classifications. In all cancer cases under the attributions study, the readers strongly agreed that the model focused on the nodule. Also, in 86% of these cases, the global and second-stage models focused on the same region. b, Example of model attributions for a cancer-negative case. The left-hand image shows a slice from the input subset volume. The right-hand image image shows positive (magenta) and negative (blue) attributions overlayed. The readers found that, in 40% of the negative cases examined, the model focused on vascular regions in the parenchyma.

Extended Data Fig. 8 Example LUMAS false positive cases.

a, 4B/X false positives. b, 4A+ false positives.

Extended Data Fig. 9 STARD diagram of low-dose-screening CT patients from an academic medical center used for the independent validation test set.

We require a minimum of 1 year of follow-up for cancer-negative cases. This resulted in a median follow-up time of 625 d across all patients once all exclusion criteria were taken into account. To clarify, this means that the median amount of time from the first screening CT to either a cancer diagnosis or the last follow-up event was 625 d. There were 209 patients (232 cases) with priors in this set of 1,139.

Extended Data Fig. 10 Illustration of the architecture of the end-to-end cancer risk prediction model.

The model is trained to encompass the entire CT volume and automatically produce a score predicting the cancer diagnosis. In all cases, the input volume is first resampled into two different fixed voxel sizes as shown. Two ROI detections are used per input volume, from which features are extracted to arrive at per-ROI prediction scores via a fully connected neural network. The prior ROI is padded to all zeros when a prior is not available.

Supplementary information

Supplementary Information

Supplementary Tables 1–9 and Supplementary Discussion

Source data

Source Data Fig. 2

Raw model and reader results for all cases in prior reader study

Source Data Fig. 3

Raw model and reader results for all cases in non prior reader study

Source Data Fig. 4

Raw model and Lung-RADS results for all cases in NLST

Rights and permissions

About this article

Cite this article

Ardila, D., Kiraly, A.P., Bharadwaj, S. et al. End-to-end lung cancer screening with three-dimensional deep learning on low-dose chest computed tomography. Nat Med 25, 954–961 (2019). https://doi.org/10.1038/s41591-019-0447-x

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1038/s41591-019-0447-x

This article is cited by

-

Deep learning model for pleural effusion detection via active learning and pseudo-labeling: a multisite study

BMC Medical Imaging (2024)

-

Development and validation of a machine learning model to predict time to renal replacement therapy in patients with chronic kidney disease

BMC Nephrology (2024)

-

Applying the UTAUT2 framework to patients’ attitudes toward healthcare task shifting with artificial intelligence

BMC Health Services Research (2024)

-

Generative models improve fairness of medical classifiers under distribution shifts

Nature Medicine (2024)

-

Deep representation learning of tissue metabolome and computed tomography annotates NSCLC classification and prognosis

npj Precision Oncology (2024)