Abstract

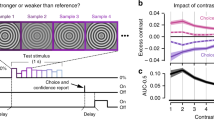

In dynamic environments, subjects often integrate multiple samples of a signal and combine them to reach a categorical judgment¹. The process of deliberation can be described by a time-varying decision variable (DV), decoded from neural population activity, that predicts a subject’s upcoming decision². Within single trials, however, there are large moment-to-moment fluctuations in the DV, the behavioural significance of which is unclear. Here, using real-time, neural feedback control of stimulus duration, we show that within-trial DV fluctuations, decoded from motor cortex, are tightly linked to decision state in macaques, predicting behavioural choices substantially better than the condition-averaged DV or the visual stimulus alone. Furthermore, robust changes in DV sign have the statistical regularities expected from behavioural studies of changes of mind³. Probing the decision process on single trials with weak stimulus pulses, we find evidence for time-varying absorbing decision bounds, enabling us to distinguish between specific models of decision making.

Data availability

The data that support the findings of the current study are available from the corresponding authors upon reasonable request. Source data are provided with this paper.

Code availability

The analysis code was developed in MATLAB (Mathworks) and is available from the corresponding authors upon reasonable request.

References

Shadlen, M. N. & Kiani, R. Decision making as a window on cognition. Neuron 80, 791–806 (2013).

Kiani, R., Cueva, C. J., Reppas, J. B. & Newsome, W. T. Dynamics of neural population responses in prefrontal cortex indicate changes of mind on single trials. Curr. Biol. 24, 1542–1547 (2014).

Resulaj, A., Kiani, R., Wolpert, D. M. & Shadlen, M. N. Changes of mind in decision-making. Nature 461, 263–266 (2009).

Kaufman, M. T., Churchland, M. M., Ryu, S. I. & Shenoy, K. V. Vacillation, indecision and hesitation in moment-by-moment decoding of monkey motor cortex. eLife 4, e04677 (2015).

Bollimunta, A., Totten, D. & Ditterich, J. Neural dynamics of choice: single-trial analysis of decision-related activity in parietal cortex. J. Neurosci. 32, 12684–12701 (2012).

van den Berg, R. et al. A common mechanism underlies changes of mind about decisions and confidence. eLife 5, e12192 (2016).

Lemus, L. et al. Neural correlates of a postponed decision report. Proc. Natl Acad. Sci. USA 104, 17174–17179 (2007).

Rich, E. L. & Wallis, J. D. Decoding subjective decisions from orbitofrontal cortex. Nat. Neurosci. 19, 973–980 (2016).

Kiani, R., Hanks, T. D. & Shadlen, M. N. Bounded integration in parietal cortex underlies decisions even when viewing duration is dictated by the environment. J. Neurosci. 28, 3017–3029 (2008).

Britten, K. H., Shadlen, M. N., Newsome, W. T. & Movshon, J. A. The analysis of visual motion: a comparison of neuronal and psychophysical performance. J. Neurosci. 12, 4745–4765 (1992).

Peixoto, D. et al. Population dynamics of choice representation in dorsal premotor and primary motor cortex. Preprint at https://doi.org/10.1101/283960 (2018).

Kiani, R. & Shadlen, M. N. Representation of confidence associated with a decision by neurons in the parietal cortex. Science 324, 759–764 (2009).

Shadlen, M. N. & Newsome, W. T. Neural basis of a perceptual decision in the parietal cortex (area LIP) of the rhesus monkey. J. Neurophysiol. 86, 1916–1936 (2001).

Smith, P. L. & Ratcliff, R. Psychology and neurobiology of simple decisions. Trends Neurosci. 27, 161–168 (2004).

Usher, M. & McClelland, J. L. The time course of perceptual choice: the leaky, competing accumulator model. Psychol. Rev. 108, 550–592 (2001).

Ditterich, J. Evidence for time-variant decision making. Eur. J. Neurosci. 24, 3628–3641 (2006).

Cisek, P., Puskas, G. A. & El-Murr, S. Decisions in changing conditions: the urgency-gating model. J. Neurosci. 29, 11560–11571 (2009).

Hanks, T., Kiani, R. & Shadlen, M. N. A neural mechanism of speed–accuracy tradeoff in macaque area LIP. eLife 3, e02260 (2014).

Huk, A. C. & Shadlen, M. N. Neural activity in macaque parietal cortex reflects temporal integration of visual motion signals during perceptual decision making. J. Neurosci. 25, 10420–10436 (2005).

Churchland, A. K., Kiani, R. & Shadlen, M. N. Decision-making with multiple alternatives. Nat. Neurosci. 11, 693–703 (2008).

Thura, D., Beauregard-Racine, J., Fradet, C.-W. & Cisek, P. Decision making by urgency gating: theory and experimental support. J. Neurophysiol. 108, 2912–2930 (2012).

Wong, K.-F. & Wang, X.-J. A recurrent network mechanism of time integration in perceptual decisions. J. Neurosci. 26, 1314–1328 (2006).

Wong, K.-F., Huk, A. C., Shadlen, M. N. & Wang, X.-J. Neural circuit dynamics underlying accumulation of time-varying evidence during perceptual decision making. Front. Comput. Neurosci. 1, 6 (2007).

Inagaki, H. K., Fontolan, L., Romani, S. & Svoboda, K. Discrete attractor dynamics underlies persistent activity in the frontal cortex. Nature 566, 212–217 (2019).

Ratcliff, R. & McKoon, G. The diffusion decision model: theory and data for two-choice decision tasks. Neural Comput. 20, 873–922 (2008).

Wang, X.-J. Probabilistic decision making by slow reverberation in cortical circuits. Neuron 36, 955–968 (2002).

Drugowitsch, J., Moreno-Bote, R., Churchland, A. K., Shadlen, M. N. & Pouget, A. The cost of accumulating evidence in perceptual decision making. J. Neurosci. 32, 3612–3628 (2012).

Standage, D., You, H., Wang, D. H. & Dorris, M. C. Gain modulation by an urgency signal controls the speed-accuracy trade-off in a network model of a cortical decision circuit. Front. Comput. Neurosci. 5, 7 (2011).

Seidemann, E., Meilijson, I., Abeles, M., Bergman, H. & Vaadia, E. Simultaneously recorded single units in the frontal cortex go through sequences of discrete and stable states in monkeys performing a delayed localization task. J. Neurosci. 16, 752–768 (1996).

Andersen, R. A., Aflalo, T. & Kellis, S. From thought to action: The brain-machine interface in posterior parietal cortex. Proc. Natl Acad. Sci. USA 116, 26274 (2019).

Collinger, J. L. et al. High-performance neuroprosthetic control by an individual with tetraplegia. Lancet 381, 557–564 (2013).

Hochberg, L. R. et al. Reach and grasp by people with tetraplegia using a neurally controlled robotic arm. Nature 485, 372–375 (2012).

Moritz, C. T., Perlmutter, S. I. & Fetz, E. E. Direct control of paralysed muscles by cortical neurons. Nature 456, 639–642 (2008).

Ethier, C., Oby, E. R., Bauman, M. J. & Miller, L. E. Restoration of grasp following paralysis through brain-controlled stimulation of muscles. Nature 485, 368–371 (2012).

Pandarinath, C. et al. High performance communication by people with paralysis using an intracortical brain-computer interface. eLife 6, e18554 (2017).

Willett, F. R. et al. Hand knob area of premotor cortex represents the whole body in a compositional way. Cell 181, 396–409 (2020).

Musallam, S., Corneil, B. D., Greger, B., Scherberger, H. & Andersen, R. A. Cognitive control signals for neural prosthetics. Science 305, 258–262 (2004).

Pesaran, B., Musallam, S. & Andersen, R. A. Cognitive neural prosthetics. Curr. Biol. 16, R77–R80 (2006).

Andersen, R. A., Hwang, E. J. & Mulliken, G. H. Cognitive neural prosthetics. Annu. Rev. Psychol. 61, 169–190 (2010).

Golub, M. D., Chase, S. M., Batista, A. P. & Yu, B. M. Brain–computer interfaces for dissecting cognitive processes underlying sensorimotor control. Curr. Opin. Neurobiol. 37, 53–58 (2016).

Shanechi, M. M. Brain–machine interfaces from motor to mood. Nat. Neurosci. 22, 1554–1564 (2019).

Wallis, J. D. Decoding cognitive processes from neural ensembles. Trends Cogn. Sci. 22, 1091–1102 (2018).

Schafer, R. J. & Moore, T. Selective attention from voluntary control of neurons in prefrontal cortex. Science 332, 1568–1571 (2011).

Acknowledgements

We thank all members of the Newsome and Shenoy labs at Stanford University for comments on the methods and results throughout the execution of the project. D.P. was supported by the Champalimaud Foundation, Portugal and Howard Hughes Medical Institute. J.R.V. was supported by Stanford MSTP NIH training grant 4T32GM007365 and supported by the Howard Hughes Medical Institute. R.K. was supported by Simons Collaboration on the Global Brain grant 542997, Pew Scholarship in Biomedical Sciences, National Institutes of Health Award R01MH109180 and a McKnight Scholars Award. J.C.K. was supported by NSF graduate research fellowship. P.N. was supported by NIDCD award R01DC014034. C.C. was supported by K99NS092972 and 4R00NS092972-03 award from the NINDS and supported as a research specialist by the Howard Hughes Medical Institute. J.B. and S.F. were supported by the Howard Hughes Medical Institute. K.V.S. was supported by the following awards: NIH Director’s Pioneer Award 8DP1HD075623, DARPA-BTO ‘NeuroFAST’ award W911NF-14-2-0013, the Simons Foundation Collaboration on the Global Brain awards 325380 and 543045, and ONR award N000141812158. W.T.N. and K.V.S. were supported by the Howard Hughes Medical Institute.

Author information

Contributions

D.P., J.R.V., R.K., S.F., K.V.S. and W.T.N. designed the experiments. D.P., J.R.V. and S.F. trained the animals and collected the data. D.P., J.R.V. and W.T.N. wrote the initial draft of the paper. S.I.R., D.P. and R.K. performed the surgical procedures. D.P., J.C.K., P.N., C.C. and J.B. implemented the real-time decoding setup. D.P., R.K. and C.C. designed, and D.P. and J.R.V. implemented, the decoder training algorithm to obtain the decoder weights and normalization matrices. D.P. and J.R.V. analysed the data. All authors contributed analytical insights and commented on statistical tests, discussed the results and implications, and contributed extensively to the multiple subsequent drafts of the paper.

Ethics declarations

Competing interests

K.V.S. consults for Neuralink Corp and CTRL-Labs Inc (part of Facebook Reality Labs) and is on the scientific advisory boards of MIND-X Inc, Inscopix Inc and Heal Inc. These entities did not support this work. The remaining authors declare no competing interests.

Additional information

Peer review information Nature thanks Timothy Hanks, Hendrikje Nienborg and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data figures and tables

Extended Data Fig. 1 Monkey and decoder performance.

a, Psychophysical performance, motion discrimination task. Trials were sorted for stimulus duration in quartiles from long (dark green) to short (light green). Data points (black) correspond to mean accuracy ± s.e.m. Data from each quartile were fit separately by a Weibull curve (Supplementary Methods 6). Inset: fit parameter α (psychophysical threshold) for each quartile. For monkey H (F): data from 12,516 (12,365) open-loop trials. For both subjects, median threshold for short duration stimuli (Q1, Q2 combined) was higher than for longest duration stimuli (Q3, Q4 combined, two-sided Wilcoxon rank-sum test: P = 6.188 × 10⁻³⁰ for monkey H, P = 2.136 × 10⁻⁶⁵ for monkey F). The x axis shows natural log scale spacing. b, Real-time choice prediction accuracy. Same as Fig. 1c, for individual monkeys (16,468, 15,286 trials for monkey H, F). Accuracy departed from baseline 174.5 ± 18.8 ms (214.5 ± 8.09 ms) after dots onset for monkey H (F). c, Average DV during dots. Same as Fig. 1d, for individual monkeys. For monkey H (F) coherence is a significant regressor of DV (for at least one of the choices) for the period between [190, 870] ms ([230, 970] ms) aligned to dots onset. Minimum 1,220 (1,332) trials per condition shown for monkey H (F). d, Prediction accuracy as a function of DV magnitude. Same as Fig. 2c, for individual monkeys. Data from 2,973 (2,518) trials – minimum 495 (484) trials per condition shown – from monkey H (F). e, Prediction accuracy as a function of DV magnitude and stimulus coherence. Same data and conventions as in Fig. 2e, for individual monkeys and pre-sorted by high versus low coherences. Minimum 234 (238) trials per condition shown for monkey H (F). f, Prediction accuracy as a function of DV magnitude and stimulus duration. Same data and conventions as Fig. 2f, for individual monkeys and pre-sorted by stimulus duration (median split). Minimum 239 (235) trials per condition shown for monkey H (F). 
g, Prediction accuracy as a function of DV magnitude and time before stimulus termination. Prediction accuracy as a function of DV at time t+DT before termination. Each curve corresponds to a different DT from 0 ms (dark blue) to −400 ms (light blue). Accuracy: percentage of correctly predicted choices. Data from 2,973 (2,518) trials from monkey H (F). h, Single trial DVs substantially increase choice prediction accuracy. Same as Fig. 2d, for individual monkeys.
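
As a rough illustration of the Weibull fit mentioned in panel a, the sketch below fits a threshold α and slope β to accuracy-by-coherence data by maximum likelihood. The exact parameterization is given in Supplementary Methods 6 and is not reproduced here; the two-alternative form used below (accuracy rising from 0.5 towards 1), the coarse grid search, and the synthetic data are all assumptions made for illustration.

```python
import math

def weibull_accuracy(coherence, alpha, beta):
    """Assumed two-alternative Weibull: accuracy rises from 0.5 (chance) towards 1."""
    return 1.0 - 0.5 * math.exp(-((coherence / alpha) ** beta))

def log_likelihood(data, alpha, beta):
    """Binomial log-likelihood; data holds (coherence, n_correct, n_trials) tuples."""
    total = 0.0
    for coherence, n_correct, n_trials in data:
        p = weibull_accuracy(coherence, alpha, beta)
        p = min(max(p, 1e-9), 1.0 - 1e-9)  # keep both logarithms finite
        total += n_correct * math.log(p) + (n_trials - n_correct) * math.log(1.0 - p)
    return total

def fit_weibull(data):
    """Coarse grid search for the maximum-likelihood (alpha, beta) pair."""
    alphas = [0.02 * 1.05 ** k for k in range(80)]  # roughly 0.02 to 0.94
    betas = [0.5 + 0.05 * k for k in range(51)]     # 0.5 to 3.0
    return max(((a, b) for a in alphas for b in betas),
               key=lambda ab: log_likelihood(data, ab[0], ab[1]))

# Synthetic (not recorded) data: 1,000 trials per coherence level.
coherences = [0.016, 0.032, 0.064, 0.128, 0.256, 0.512]
synthetic = [(c, round(1000 * weibull_accuracy(c, 0.10, 1.2)), 1000)
             for c in coherences]
alpha_hat, beta_hat = fit_weibull(synthetic)
```

Under this assumed form, accuracy at a coherence equal to α is 1 − 0.5/e, about 82%, which is one common way of reading α as a psychophysical threshold.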

Extended Data Fig. 2 Prediction accuracy online versus offline.

a, Online and offline classifiers result in similar performance, on average, for targets, dots, delay, and post-go epochs – monkey H. Average prediction accuracy (Supplementary Methods 9, 10, 12.3) over time ± s.e.m. (across sessions) for monkey H. Online/offline classifier results are plotted in black/red. Data in black are same as Fig. 1c, but for monkey H only. Prediction accuracy is very similar online and offline across the trial (see c). Data from 17 sessions (16,468 trials). b, Same as a, but for monkey F. Data from 15 sessions (15,286 trials). c, Summary of performance difference between online and offline classifiers within each epoch – monkey H. Average performance difference between online and offline classifiers (accuracy difference in proportion correct) for each of the epochs plotted in a (same sessions). Data points (black dots) correspond to mean accuracy difference ± s.e.m. (across sessions). Positive numbers correspond to better online classifier performance and negative numbers to better offline classifier performance. Black asterisks correspond to windows for which the differences were significantly different from zero (Wilcoxon signed-rank test, P < 0.01 two-sided, P values: 0.0004, 0.00009, 0.00009, 0.00009). d, Same as c, for monkey F (P values: 0.0051, 0.0001, 0.0017, 0.0001).

Extended Data Fig. 3 Choice prediction accuracy calculated offline on single trials: PMd versus M1, multiple versus single classifiers.

a, PMd predicts choices slightly better than M1 during stimulus presentation using a single classifier per epoch (monkey H). Mean prediction accuracy (Supplementary Methods 12.3) over time ± s.e.m. across sessions. Black dots denote time bins for which prediction accuracy was significantly different between the two areas (two-sided Wilcoxon signed-rank test, P < 0.05 Holm–Bonferroni correction for multiple comparisons). Same data as in c, d (dark traces). Data from 17 sessions (16,468 trials). b, Same as a, but using a different classifier for each 50 ms window. Same data as in c, d (light traces). c, Single and multiple classifiers yield similar performance for targets, dots and go epochs but not for reach epoch for PMd (monkey H). Same trials and statistical conventions as a. Average prediction accuracy ± s.e.m. across sessions for PMd using a single classifier (trained on data across all time points within an epoch) or multiple classifiers (a new decoder trained for every 50 ms window) per epoch. In the dots and go cue periods, performance is nearly identical with single versus multiple classifiers, reflecting the stability of choice representation during these periods. In contrast, multiple classifiers (trained for every time point) perform better during the movement period when choice representation changes rapidly with time. d, Equivalent to c, but for M1. e, Same as c, but for monkey F. Data from 15 sessions (15,286 trials).

Extended Data Fig. 4 Neural population choice prediction accuracy calculated offline on single trials when applying classifiers across epochs.

a, Only dots and go classifiers perform well across epochs. Average prediction accuracy (see Supplementary Methods 12.3) over time ± s.e.m. (across sessions) for monkey H for decoders trained in the targets (cyan), dots (dark yellow), go (magenta) and reach (black) periods. If the choice subspaces for two independent epochs are similar, the decoder from one epoch ought to accurately predict choice in the other epoch. Dots decoder performs well during go period and vice-versa. Targets and reach decoders perform poorly across other epochs. Data from 17 sessions (16,468 trials). b, Equivalent to a, but for monkey F. Same conventions apply. Data from 15 sessions (15,286 trials). c, Summary of performance difference between single and multiple classifiers within each epoch. Average performance difference between within-epoch classifier and across-epoch classifiers for each of the epochs plotted in a (same sessions). Error bars correspond to ± s.e.m. across sessions. Zero difference corresponds to the performance of the classifier trained and tested within the same epoch. d, Same as c, for monkey F. For both subjects in the dots and go periods the loss in decoding accuracy across epochs was very small, suggesting similar choice representation in both periods.

Extended Data Fig. 5 Choice prediction accuracy for correct and incorrect trials as a function of coherence.

Choice prediction accuracy obtained from real-time readout for correct and incorrect trials for each level of coherence. Prediction accuracy during the dots epoch for each coherence level is plotted for correct (black) and error (magenta) trials. Red dashed line corresponds to chance level. Insets show total number of correct (C) and error (E) trials used in the analysis (correct and incorrect designation was randomly assigned for 0% coherence stimuli). Data for monkey H and F are shown in top and bottom panels, respectively. Mean prediction accuracy for error trials after neural latency (180 ms after stimulus presentation) is outside (and lower than) the 95% CI for correct trials for 1.6%, 3.2%, 6.4%, 12.8% and 25.6% coherences for monkey H and for 12.8%, 25.6% and 51.2% coherences for monkey F (1,000 bootstrap iterations). Results for the highest coherence for each monkey should be interpreted carefully due to the extremely low number of error trials for these conditions resulting from excellent behavioural performance. (Dashed pink lines represent individual error trials at the highest coherence for monkey F.)

Extended Data Fig. 6 Real-time decoding: performance reliability, decoder weights, and Mu and Sigma stability.

a, Decoding performance is stable across sessions. Mean prediction accuracy late in the stimulus presentation (600–1,200 ms) across all sessions for monkey H (top panels) and monkey F (bottom panels). D1–D23 denote different decoders (sets of beta weights) used for the recorded sessions. For monkey H the same decoder (D1) was used for the first 14 sessions. The breaks on the x axis correspond to sessions that occurred on non-consecutive days. b, Real-time decoder β weights. β weights during the dots period (left panel) ranked by absolute magnitude for an example decoder (D1 from monkey H) used in real-time experiments. Channels with little or no choice-predictive activity during this period had their weights set to zero using LASSO regularization to prevent overfitting. Delay period and post go cue β weights are shown in the middle and right panels respectively. c, Mu and Sigma matrices are very stable over dozens of sessions – monkey H. Average spike counts within a 50-ms window (Mu, left panel) and standard deviation of spike counts (Sigma, right panel) are plotted as a function of channel (y axis) and trial (x axis) for the sessions comprising closed loop experiments 1 and 2 for monkey H. Red lines, breaks between sessions. d, Same as c, for monkey F.
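
To make the roles of the β weights and the Mu and Sigma normalization concrete, the following sketch computes a DV from one 50-ms window of spike counts. It assumes a linear readout of z-scored counts, DV = β₀ + Σ βᵢ (xᵢ − μᵢ)/σᵢ; the actual decoder is specified in the Supplementary Methods and may differ. The function name, the intercept argument and the example numbers are hypothetical.

```python
def decision_variable(counts, mu, sigma, beta, intercept=0.0):
    """Linear readout of z-scored spike counts (assumed form, not the authors' code).

    counts, mu, sigma and beta hold one value per recording channel.
    Channels whose weight was shrunk to zero (e.g. by LASSO) are skipped,
    as are channels with zero variance, which cannot be z-scored.
    """
    dv = intercept
    for x, m, s, b in zip(counts, mu, sigma, beta):
        if b == 0.0 or s == 0.0:
            continue
        dv += b * (x - m) / s
    return dv

# Hypothetical three-channel example.
counts = [4, 2, 7]          # spike counts in the current 50-ms window
mu = [3.0, 2.0, 5.0]        # running mean count per channel
sigma = [1.0, 1.0, 2.0]     # running standard deviation per channel
beta = [0.5, 0.0, -0.25]    # middle channel zeroed by regularization

dv = decision_variable(counts, mu, sigma, beta)
predicted_choice = "right" if dv > 0 else "left"  # sign convention is assumed
```

Because the normalization terms are applied per channel, slow drifts in Mu or Sigma would shift the DV even with fixed weights, which is presumably why their stability across sessions is reported in panels c and d.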

Extended Data Fig. 7 Validation of putative changes of mind.

a, Choice prediction accuracy for all trials collected during the CoM detection experiment. Trials were split in 6 quantiles sorted by DV magnitude (absolute value) at termination. Prediction accuracy and median DV magnitude were calculated and plotted separately for each quantile (blue line with black data points). Blue error bars show standard error of the mean for a binomial distribution. Dashed black line shows predicted accuracy from log-odds equation used to fit the DV model, and red dashed line shows chance level. Left: Data from 985 CoM trials (164 trials per condition) from monkey H. Right: Data from 1,727 CoM trials (287 trials per condition) from monkey F. b, CoM frequency as a function of coherence. Same as Fig. 3c for individual monkeys. c, CoM frequency as a function of coherence and direction. Same as Fig. 3d for individual monkeys. Median corrective and erroneous CoM counts: 530 and 242 for monkey H and 1,046 and 443 for monkey F, respectively (Wilcoxon rank-sum test P < 0.001). d, CoM frequency as a function of time in the trial. Same as Fig. 3e for individual monkeys. e, CoM time as a function of coherence. Same as Fig. 3f for individual monkeys. CoM time was negatively correlated with stimulus coherence (monkey H: P = 1.8 × 10⁻¹⁷; monkey F: P = 3.0 × 10⁻³⁰).
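
The quantile analysis in panel a can be sketched as follows. The code assumes that the DV is expressed in natural-log odds, so that the accuracy predicted for a given magnitude is 1/(1 + e^(−|DV|)), and that choices are coded as +1 or −1; both are assumptions, and the function names are hypothetical.

```python
import math
import statistics

def logodds_accuracy(dv_magnitude):
    """Accuracy predicted for a DV magnitude if the DV is in log-odds units."""
    return 1.0 / (1.0 + math.exp(-abs(dv_magnitude)))

def accuracy_by_quantile(dvs, choices, n_quantiles=6):
    """Sort trials by |DV| and summarize each quantile.

    choices are +1 or -1; a prediction counts as correct when the sign of the
    DV matches the choice. Returns one tuple per quantile:
    (median |DV|, observed accuracy, binomial s.e.m., log-odds prediction).
    """
    trials = sorted(zip(dvs, choices), key=lambda t: abs(t[0]))
    size = len(trials) // n_quantiles  # trailing remainder trials are dropped
    rows = []
    for q in range(n_quantiles):
        chunk = trials[q * size:(q + 1) * size]
        p = sum(1 for dv, c in chunk if dv * c > 0) / size
        sem = math.sqrt(p * (1.0 - p) / size)
        median_mag = statistics.median(abs(dv) for dv, _ in chunk)
        rows.append((median_mag, p, sem, logodds_accuracy(median_mag)))
    return rows
```

Integer division of 985 and 1,727 trials by 6 gives 164 and 287 trials per quantile, matching the counts quoted above, although how the remainder trials were handled in the original analysis is not stated here.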

Extended Data Fig. 8 Motion pulse effects over DV_PERL and time.

a, Average change in post-pulse DV, time-locked to estimated Pulse Evidence Representation Latency (PERL), mean subtracted. Same as Fig. 4c for individual monkeys. PERL = 170 ms (180 ms) for monkey H (F). b, Average change in post-pulse DV for each DV boundary, time-locked to PERL, mean subtracted. Same as Fig. 4d for individual monkeys. Minimum 1,507 (1,731) trials per condition shown for monkey H (F). c, Residual behavioural pulse effects over |DV_PERL|. Same as Fig. 4e for individual monkeys. Minimum 501 (504) trials per condition shown for monkey H (F). d, Residual neural pulse effects over |DV_PERL|. Same as Fig. 4f for individual monkeys. e, Residual behavioural pulse effects over time. Same as Fig. 4g for individual monkeys. Minimum 1,122 (1,217) trials per condition shown for monkey H (F). f, Residual neural pulse effects over time. Same as Fig. 4h for individual monkeys. g, Pooled residual behavioural pulse effects over signed DV†_PERL (Supplementary Methods 16.1, step 4). Black: mean residual pulse effects on choice for trials in each DV†_PERL bin, ± s.e.m.; asterisks denote significantly non-zero means at 95% confidence (Supplementary Methods 16.1). Blue: nonlinear regression model fit (MATLAB fitnlm function) of the residuals to a Gaussian over DV†_PERL, including the P value (two-sided t-statistic) for the fit amplitude coefficient. h, Pooled residual neural pulse effects over signed DV_PERL. Black: mean residual pulse effects on ∆DV for trials in each DV†_PERL bin, ± s.e.m.; asterisks denote significantly non-zero means at 95% confidence (Supplementary Methods 16.1). Blue: nonlinear regression model fit (MATLAB fitnlm function) of the residuals to a Gaussian over DV†_PERL, including the P value (two-sided t-statistic) for the fit amplitude coefficient. i, Residual behavioural pulse effects over DV†_PERL, single subjects. Same as g, for individual monkeys. Minimum 149 (151) trials per condition shown for monkey H (F). 
j, Residual neural pulse effects over DV†_PERL, single subjects. Same as h, for individual monkeys.

Extended Data Fig. 9 Correlation analysis between DV and stimulus motion energy (ME).

a, Correlation between ME and DV across coherences – monkey H. Proportion of variance explained when regressing DV as a function of signed stimulus coherence (grey) or ME. Each green trace corresponds to a separate regression between DV and ME, offset by 180 ms to compensate for neural response delay (Supplementary Methods 14). Darker traces correspond to regressions in which ME was averaged over a longer period of time within each trial. Rises to peak in green traces appear right-shifted by approximately 60 ms due to edge effects from filtering ME at the beginning of each trial. Across all coherence levels ME and signed coherence explain a large fraction of DV variance. b, Same as a, for monkey F. c, Correlation between ME and DV within each signed stimulus coherence level – monkey H. Proportion of variance explained when regressing DV for each time point and within each level of signed coherence as a function of the ME (offset by 180 ms; see a). Within each level of signed coherence, the DV fluctuations are not explained by the ME traces. d, Same as c, for monkey F. e, Correlation between ME and DV slope during putative changes of mind within each signed coherence level – monkey H. Proportion of variance explained when regressing signed DV slope during the CoM for each level of signed coherence as a function of ME. ME was averaged over the 100 ms preceding the CoM, offset by 180 ms. DV slope was calculated over a 100 ms window centred around the zero-crossing defining the CoM. Within each level of signed coherence, the direction and magnitude of the CoM zero-crossings are not explained by ME. P values displayed are uncorrected (linear regression); none of the fit coefficients are significantly non-zero after correction for multiple comparisons. Data from 985 CoM trials from monkey H. f, Same as e, for monkey F. None of the fit coefficients are significantly non-zero after correction for multiple comparisons. Data from 1,727 CoM trials from monkey F.
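
The "proportion of variance explained" in these panels can be illustrated with an ordinary least-squares regression of DV on lagged ME across trials. The sketch below is a minimal version under stated assumptions: one regressor, ME and DV sampled on the same time grid, and a lag expressed in bins (18 bins would correspond to the 180 ms offset if the bin width were 10 ms). The function names and data layout are hypothetical, and the filtering and averaging of ME described in Supplementary Methods 14 are not reproduced.

```python
def variance_explained(x, y):
    """R^2 of an ordinary least-squares line y = a + b*x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if sxx == 0.0 or syy == 0.0:
        return 0.0  # no variance to explain, or no variance in the regressor
    return (sxy * sxy) / (sxx * syy)

def lagged_variance_explained(me_trials, dv_trials, t_index, lag_bins):
    """Regress DV at t_index on ME lag_bins earlier, across trials.

    me_trials and dv_trials are lists of per-trial time series; t_index must
    be at least lag_bins so that the lagged ME sample exists.
    """
    x = [me[t_index - lag_bins] for me in me_trials]
    y = [dv[t_index] for dv in dv_trials]
    return variance_explained(x, y)
```

Running the same regression separately within each signed coherence level, as in panels c and d, removes the variance shared through coherence and asks whether the remaining trial-to-trial ME fluctuations track the DV.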

Extended Data Fig. 10 Within trial DV variability decreases over time for long duration stimuli.

a, Average DV derivative as a function of time and choice – monkey H. DV derivative was calculated for each trial as the difference between consecutive DV estimates spaced out by 10 ms (Supplementary Methods 13). Traces show average DV derivative ± s.e.m. for right choices (red trace) and left choices (blue trace) during stimulus presentation. DV derivative initially starts increasing around the expected stimulus latency (170 ms) but progressively decreases for long (>600 ms) stimulus presentations. Minimum 8,176 trials per condition shown. b, Same as a, but for monkey F. Minimum 8,685 trials per condition shown. c, Average DV derivative as a function of time, coherence, and choice (correct trials only) – monkey H. Same data as in a, but with DV derivative averaged separately for each choice and motion coherence level. Right choices are plotted in red and left choices in blue as in a. Darker traces correspond to stronger coherences. Minimum 557 trials per condition shown. d, Same as c, but for monkey F. Minimum 692 trials per condition shown.
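
The DV derivative described in panel a is a first difference between consecutive DV estimates. A minimal sketch, assuming each trial is stored as a list of DV values sampled every 10 ms (function names are hypothetical):

```python
def dv_derivative(dv_trace):
    """First difference between consecutive DV estimates (one value per 10-ms step)."""
    return [later - earlier for earlier, later in zip(dv_trace, dv_trace[1:])]

def mean_derivative(traces):
    """Average the derivative across trials at each time step.

    Trials may differ in length, so the shortest trial sets the number of steps.
    """
    derivs = [dv_derivative(trace) for trace in traces]
    n_steps = min(len(d) for d in derivs)
    return [sum(d[i] for d in derivs) / len(derivs) for i in range(n_steps)]
```

In this form the derivative is in DV units per 10-ms step; dividing by the step duration would convert it to DV units per unit time.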

Supplementary information

Supplementary Information

This file contains the full Methods section, six supplementary notes, and five supplementary tables. Supplementary notes contain additional analyses and discussion. Supplementary tables detail regression results and experimental parameters.

About this article

Cite this article

Peixoto, D., Verhein, J.R., Kiani, R. et al. Decoding and perturbing decision states in real time. Nature 591, 604–609 (2021). https://doi.org/10.1038/s41586-020-03181-9

DOI: https://doi.org/10.1038/s41586-020-03181-9
