Abstract

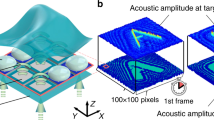

Science-fiction movies portray volumetric systems that provide not only visual but also tactile and audible three-dimensional (3D) content. Displays based on swept-volume surfaces [1,2], holography [3], optophoretics [4], plasmonics [5] or lenticular lenslets [6] can create 3D visual content without the need for glasses or additional instrumentation. However, they are slow, have limited persistence-of-vision capabilities and, most importantly, rely on operating principles that cannot also produce tactile and auditory content. Here we present the multimodal acoustic trap display (MATD): a levitating volumetric display that can simultaneously deliver visual, auditory and tactile content, using acoustophoresis as the single operating principle. Our system traps a particle acoustically and illuminates it with red, green and blue light to control its colour as it quickly scans the display volume. Using time multiplexing with a secondary trap, amplitude modulation and phase minimization, the MATD delivers simultaneous auditory and tactile content. The system demonstrates particle speeds of up to 8.75 metres per second and 3.75 metres per second in the vertical and horizontal directions, respectively, offering particle manipulation capabilities superior to those of other optical or acoustic approaches demonstrated so far. In addition, our technique offers opportunities for non-contact, high-speed manipulation of matter, with applications in computational fabrication [7] and biomedicine [8].

Data availability

The data that support the plots within this paper and other findings of this study are available in the main text and the Extended Data Figures. Additional information is available from the corresponding author upon reasonable request.

Code availability

Custom C++ code used to control our MATD during our tests is publicly available on GitHub under a Creative Commons Attribution-NonCommercial-ShareAlike licence at https://github.com/RyujiHirayama/MATD.

References

1. Sawalha, L. et al. A large 3D swept-volume video display. J. Disp. Technol. 8, 256–268 (2012).

2. Langhans, K., Oltmann, K., Reil, S., Goldberg, L. & Hatecke, H. in Lecture Notes in Computer Science Vol. 3805 (ed. Subsol, G.) 22–31 (Springer, 2005).

3. Blanche, P. A. et al. An updatable holographic display for 3D visualization. J. Disp. Technol. 4, 424–430 (2008).

4. Smalley, D. E. et al. A photophoretic-trap volumetric display. Nature 553, 486–490 (2018).

5. Ochiai, Y. et al. Fairy lights in femtoseconds: aerial and volumetric graphics rendered by focused femtosecond laser combined with computational holographic fields. ACM Trans. Graph. 35, 17 (2016).

6. Gao, X. et al. High brightness three-dimensional light field display based on the aspheric substrate Fresnel-lens-array with eccentric pupils. Opt. Commun. 361, 47–54 (2016).

7. Foresti, D. et al. Acoustophoretic printing. Sci. Adv. 4, eaat1659 (2018).

8. Watanabe, A., Hasegawa, K. & Abe, Y. Contactless fluid manipulation in air: droplet coalescence and active mixing by acoustic levitation. Sci. Rep. 8, 10221 (2018).

9. Smalley, D. E. OSA Display Technical Group Illumiconclave I. (OSA, 2015); http://holography.byu.edu/Illumiconclave1.html.

10. Saito, H. et al. Laser-plasma scanning 3D display for putting digital contents in free space. Proc. SPIE 6803, 680309 (2008).

11. Perlin, K. & Han, J. Y. Volumetric display with dust as the participating medium. US patent 6,997,558 (2006).

12. Sahoo, D. R. et al. JOLED: a mid-air display based on electrostatic rotation of levitated Janus objects. In Proc. of the 29th Annual Symposium on User Interface Software and Technology 437–448 (ACM, 2016).

13. Norasikin, M. A. et al. SoundBender: dynamic acoustic control behind obstacles. In Proc. of the 31st Annual Symposium on User Interface Software and Technology 247–259 (ACM, 2018).

14. Marzo, A. & Drinkwater, B. W. Holographic acoustic tweezers. Proc. Natl Acad. Sci. USA 116, 84–89 (2018).

15. Brandt, E. H. Acoustic physics: suspended by sound. Nature 413, 474–475 (2001).

16. Wu, J. Acoustical tweezers. J. Acoust. Soc. Am. 89, 2140–2143 (1991).

17. Bruus, H. Acoustofluidics 7: the acoustic radiation force on small particles. Lab Chip 12, 1014–1021 (2012).

18. Omirou, T., Marzo, A., Seah, S. A. & Subramanian, S. Levipath: modular acoustic levitation for 3D path visualisations. In Proc. of the 2015 CHI Conference on Human Factors in Computing Systems 309–312 (ACM, 2015).

19. Memoli, G. et al. Metamaterial bricks and quantization of meta-surfaces. Nat. Commun. 8, 14608 (2017).

20. Baresch, D., Thomas, J. L. & Marchiano, R. Observation of a single-beam gradient force acoustical trap for elastic particles: acoustical tweezers. Phys. Rev. Lett. 116, 024301 (2016).

21. Whymark, R. R. Acoustic field positioning for containerless processing. Ultrasonics 13, 251–261 (1975).

22. Marzo, A. et al. Holographic acoustic elements for manipulation of levitated objects. Nat. Commun. 6, 8661 (2015).

23. Bowen, R. W., Pola, J. & Matin, L. Visual persistence: effects of flash luminance, duration and energy. Vision Res. 14, 295–303 (1974).

24. Gelfand, S. A. Essentials of Audiology 3rd edn (Thieme, 2010).

25. Makous, J. C., Friedman, R. M. & Vierck, C. J. A critical band filter in touch. J. Neurosci. 15, 2808–2818 (1995).

26. Frier, W., Pittera, D., Ablart, D., Obrist, M. & Subramanian, S. Sampling strategy for ultrasonic mid-air haptics. In Proc. of the 2019 CHI Conference on Human Factors in Computing Systems 121 (ACM, 2019).

27. Carter, T., Seah, S. A., Long, B., Drinkwater, B. & Subramanian, S. UltraHaptics: multi-point mid-air haptic feedback for touch surfaces. In Proc. of the 26th Annual ACM Symposium on User Interface Software and Technology 505–514 (ACM, 2013).

28. Gan, W. S., Yang, J. & Kamakura, T. A review of parametric acoustic array in air. Appl. Acoust. 73, 1211–1219 (2012).

29. Ochiai, Y., Hoshi, T. & Suzuki, I. Holographic whisper: rendering audible sound spots in three-dimensional space by focusing ultrasonic waves. In Proc. of the 2017 CHI Conference on Human Factors in Computing Systems 4314–4325 (ACM, 2017).

30. Shvedov, V., Davoyan, A. R., Hnatovsky, C., Engheta, N. & Krolikowski, W. A long-range polarization-controlled optical tractor beam. Nat. Photon. 8, 846–850 (2014).

Acknowledgements

We thank R. Morales Gonzalez and B. Danesh-Kazemi from the University of Sussex, who helped with building the FPGA array, and L. F. Veloso from the University of Sussex, who helped with the supplementary videos. We acknowledge funding from the EPSRC project EP/N014197/1 ‘User interaction with self-supporting free-form physical objects’, the EU FET-Open project Levitate (grant agreement number 737087), the Royal Academy of Engineering Chairs in Emerging Technology Scheme (CiET1718/14), the Rutherford fellowship scheme, the Japan Society for the Promotion of Science through Grant-in-Aid number 18J01002 and the Kenjiro Takayanagi Foundation.

Author information

Contributions

D.M.P. and S.S. conceived the idea. R.H. and D.M.P. implemented the system and gathered experimental data demonstrating the idea. Data analysis was led by R.H., with contributions from all authors. D.M.P. led the optimization design with contributions from R.H. and S.S. R.H. optimized the firmware code with contributions from N.M. R.H. wrote the paper, with contributions from all authors.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Peer review information Nature thanks Daniel Smalley and the other, anonymous reviewer(s), for their contribution to the peer review of this work.

Extended data figures and tables

Extended Data Fig. 1 Overview of the MATD prototype.

Experiments were performed using two opposed 16 × 16 transducer arrays, one placed above the other with a separation of 23.4 cm.
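A minimal sketch of how per-transducer phases for a single focal point can be computed for this two-array geometry. Only the 16 × 16 layout and the 23.4 cm separation come from the caption; the 40 kHz frequency, the 343 m/s speed of sound and the 10.5 mm pitch are assumptions. This is plain focusing, not the authors' trap solver: a levitation trap additionally needs a phase signature (for example, a π offset between the two arrays so that a pressure node rather than an antinode sits at the target), which is omitted here.

```python
import numpy as np

# From the caption: two opposed 16 x 16 arrays, 23.4 cm apart.
N_SIDE = 16
SEPARATION_M = 0.234
# Assumptions (not stated in the caption).
FREQ_HZ = 40_000.0
SPEED_OF_SOUND_M_S = 343.0
PITCH_M = 0.0105
WAVENUMBER = 2 * np.pi * FREQ_HZ / SPEED_OF_SOUND_M_S  # k = 2*pi*f/c, in rad/m


def array_positions(z_m: float) -> np.ndarray:
    """Centres of one flat N_SIDE x N_SIDE array at height z_m, shape (N_SIDE**2, 3)."""
    offsets = (np.arange(N_SIDE) - (N_SIDE - 1) / 2) * PITCH_M
    xx, yy = np.meshgrid(offsets, offsets, indexing="ij")
    return np.column_stack([xx.ravel(), yy.ravel(), np.full(xx.size, z_m)])


def focus_phases(positions: np.ndarray, target: np.ndarray) -> np.ndarray:
    """Emission phases in [0, 2*pi) that make all waves arrive in phase at target.

    With the exp(i(k*d - w*t)) convention, a wave emitted with phase phi reaches a
    point at distance d with phase phi + k*d, so phi = -k*d aligns every arrival.
    """
    distances = np.linalg.norm(positions - target, axis=1)
    return np.mod(-WAVENUMBER * distances, 2 * np.pi)


# Bottom array at z = 0 and the top array facing it at z = SEPARATION_M.
transducers = np.vstack([array_positions(0.0), array_positions(SEPARATION_M)])
centre = np.array([0.0, 0.0, SEPARATION_M / 2])
phases = focus_phases(transducers, centre)
```

Moving the target and recomputing `phases` every update is what lets a trap be swept through the volume; the cost is one distance per transducer, which is cheap enough to repeat at high rates.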

Extended Data Fig. 2 Phase and amplitude control of the transducers used.

a, Square-wave input from the FPGA, used to control the phase and amplitude of each transducer through its phase delay and duty cycle, respectively. b, Nonlinear relationship between transducer pressure and duty cycle; measurements (dots) and analytical approximation (line). c, Measured sinusoidal responses of transducers driven by the square waves shown in a.
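The caption does not state its analytical approximation. A common first-order model, assumed here, is that the emitted pressure follows the fundamental Fourier component of the 0/1 drive square wave, whose amplitude is (2/π)·sin(π·D) for duty cycle D. The sketch below inverts that model to pick a duty cycle and converts a phase into a rising-edge delay. `ticks_per_period` is a hypothetical FPGA counter resolution (1250 ticks would correspond to an assumed 50 MHz clock and 40 kHz carrier), and the measured curve in panel b may deviate from this idealised model.

```python
import math


def fundamental_amplitude(duty: float) -> float:
    """Relative first-harmonic amplitude of a 0/1 square wave with the given duty cycle.

    The fundamental of a pulse train with duty D has amplitude (2/pi)*sin(pi*D);
    dividing by its value at D = 0.5 normalises the maximum to 1.
    """
    return math.sin(math.pi * duty)


def duty_for_amplitude(amplitude: float) -> float:
    """Inverse of fundamental_amplitude on [0, 0.5]: duty cycle for a relative amplitude."""
    if not 0.0 <= amplitude <= 1.0:
        raise ValueError("relative amplitude must lie in [0, 1]")
    return math.asin(amplitude) / math.pi


def square_wave_timing(phase_rad: float, amplitude: float, ticks_per_period: int) -> tuple[int, int]:
    """Rising-edge delay and high time, both in clock ticks, for one transducer."""
    fraction = (phase_rad % (2 * math.pi)) / (2 * math.pi)
    delay = round(fraction * ticks_per_period) % ticks_per_period
    high_time = round(duty_for_amplitude(amplitude) * ticks_per_period)
    return delay, high_time
```

For example, `square_wave_timing(math.pi, 1.0, 1250)` returns `(625, 625)`: half a period of delay and a 50% duty cycle. Because sin(π·D) is symmetric about D = 0.5, duty cycles above 50% add nothing, so the inverse is restricted to [0, 0.5].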

Extended Data Fig. 3 Preliminary characterization of particle sizes and update rates.

a, Camera setup used to measure the sphericity and diameter of the particles. b, Maximum linear speeds for different particle sizes. In all graphs, the markers correspond to experimental values and the lines represent spline curves fitted to the data. c, Persistence-of-vision (POV) representation using different particle diameters. d, Particle size distribution and sphericity of the 2-mm-diameter particles used. e, Maximum linear speeds along the vertical (downward) path for different update rates and for each mode (OSTM, PSTM and PDTM).

Extended Data Fig. 4 Speed measurement setup.

a, A camera takes a long-exposure photograph of the moving particle, which is illuminated by an LED flashing every 1 ms. b, c, Images captured during the horizontal and vertical linear speed tests under three different conditions (OSTM, PSTM and PDTM). d, Horizontal and vertical radiation forces exerted on a particle located around a levitation trap, approximated analytically using the Gor’kov potential.
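For reference, the Gor’kov potential for a small sphere of radius $a$ (much smaller than the wavelength) in an inviscid fluid, in the form reviewed by Bruus (Lab Chip 12, 1014–1021, 2012, listed in the references), is given below; the radiation force is its negative gradient. Here $\langle p_1^2\rangle$ and $\langle v_1^2\rangle$ are time averages of the squared incident pressure and acoustic velocity, and $\rho$ and $c$ are density and speed of sound of the fluid (subscript 0) and particle (subscript p).

$$
U = \frac{4\pi a^{3}}{3}\left[ f_{1}\,\frac{\langle p_{1}^{2}\rangle}{2\rho_{0}c_{0}^{2}} - f_{2}\,\frac{3\rho_{0}\langle v_{1}^{2}\rangle}{4} \right],
\qquad
\mathbf{F}_{\mathrm{rad}} = -\nabla U,
$$

$$
f_{1} = 1 - \frac{\rho_{0}c_{0}^{2}}{\rho_{p}c_{p}^{2}},
\qquad
f_{2} = \frac{2(\rho_{p}-\rho_{0})}{2\rho_{p}+\rho_{0}}.
$$

For a dense, stiff particle in air ($\rho_p \gg \rho_0$, $\rho_p c_p^2 \gg \rho_0 c_0^2$) both $f_1$ and $f_2$ approach 1, so $U$ is lowest where the pressure amplitude is small and the velocity amplitude is large, as near the pressure nodes of a standing wave.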

Extended Data Fig. 5 Speed, distance between the acoustic trap and the levitated particle (Δp) and acceleration.

a–c, Data measured during our speed tests along the horizontal (a), upward (b) and downward (c) directions.
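A sketch of how speed, acceleration and the trap-particle distance Δp can be extracted from the strobed photographs of Extended Data Fig. 4, assuming the dots in each image have already been located, ordered in time and converted from pixels to metres (that calibration step is not described here). The function name is illustrative. Note that `accel` is the rate of change of speed, which equals the full acceleration only on straight paths such as the linear tests.

```python
import numpy as np

STROBE_PERIOD_S = 1e-3  # LED flash interval from Extended Data Fig. 4a


def kinematics_from_strobe(dots_m, trap_m=None):
    """Speed, rate of change of speed and trap-particle distance from strobed positions.

    dots_m: (N, D) particle positions in metres, one row per LED flash, in time order.
    trap_m: optional (N, D) commanded trap positions at the same instants.
    """
    dots = np.asarray(dots_m, dtype=float)
    steps = np.linalg.norm(np.diff(dots, axis=0), axis=1)  # distance between consecutive dots
    speed = steps / STROBE_PERIOD_S                          # mean speed in each 1 ms interval
    accel = np.diff(speed) / STROBE_PERIOD_S                 # change of speed per interval
    delta_p = None
    if trap_m is not None:
        # Distance between the commanded trap and the observed particle at each flash.
        delta_p = np.linalg.norm(np.asarray(trap_m, dtype=float) - dots, axis=1)
    return speed, accel, delta_p
```

For dots at 0, 1, 3 and 6 mm along one axis, the speeds are about 1, 2 and 3 m/s and the acceleration is about 1000 m/s² in both intervals.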

Extended Data Fig. 6 Summary of the particle-control performance tests of the MATD for each of the experimental conditions tested.

a–c, Maximum linear speeds and accelerations for each mode (OSTM, PSTM and PDTM). The plotted paths show the speed of the levitation trap, not the observed particle trajectories. d, Maximum linear speeds achieved by particles following circular paths of increasing radius for each mode.
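A simple model for reading panel d, under the assumption (ours, not the caption's) that the circular-path limit is set by a fixed maximum acceleration the trap can exert on the particle, ignoring drag and the shape of the trap's force profile. Keeping a particle on a circle of radius r at speed v requires a centripetal acceleration v²/r, so in this model the maximum speed grows as the square root of the radius.

```python
import math


def centripetal_acceleration(speed_m_s: float, radius_m: float) -> float:
    """Acceleration a = v**2 / r needed to keep a particle on a circle at constant speed."""
    return speed_m_s ** 2 / radius_m


def max_circular_speed(max_accel_m_s2: float, radius_m: float) -> float:
    """Largest constant speed on a circle if the trap can supply at most max_accel_m_s2."""
    return math.sqrt(max_accel_m_s2 * radius_m)


def revolution_period(speed_m_s: float, radius_m: float) -> float:
    """Time for one full lap, 2*pi*r / v."""
    return 2 * math.pi * radius_m / speed_m_s
```

For instance, a hypothetical 2 m/s on a 5 cm radius needs 80 m/s²; if that were the limit, doubling the radius would raise the maximum speed by a factor of √2 only. Measured data may deviate from this idealisation.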

Extended Data Fig. 7 Spectral analysis of the audio response of the MATD.

a, Signals used as input: chirp (left), 250 Hz tactile signal (centre) and the two signals combined in the frequency domain (right). b, Output of the system when only sound is created (left), when sound is multiplexed with tactile content using amplitude multiplexing (centre) and when the combined signal is used (right). c, Effects of position multiplexing on an amplitude-multiplexed signal (left) and on our combined signal (right) for a 75%–25% duty cycle. d, Effects of position multiplexing when applied to 50%–50% duty cycle signals.
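One possible reading of panel a, sketched under stated assumptions: an audible chirp and the 250 Hz tactile signal are added in the frequency domain and the result is mapped into [0, 1] for use as an amplitude-modulation envelope on the ultrasonic carrier. Because the Fourier transform is linear, summing weighted spectra gives the same result as summing the weighted waveforms. The 40 kHz sampling rate, the 200 Hz to 8 kHz chirp and the equal weights are hypothetical, and the MATD's actual signal processing may differ.

```python
import numpy as np

# Assumptions for illustration only: sampling rate, duration, chirp range and weights.
SAMPLE_RATE_HZ = 40_000
DURATION_S = 1.0
TACTILE_HZ = 250.0  # tactile frequency from panel a


def linear_chirp(f_start_hz, f_end_hz, t, duration_s):
    """Sine whose instantaneous frequency sweeps linearly from f_start to f_end."""
    sweep_rate = (f_end_hz - f_start_hz) / duration_s
    return np.sin(2 * np.pi * (f_start_hz * t + 0.5 * sweep_rate * t ** 2))


def combine_in_frequency_domain(a, b, weight_a=0.5, weight_b=0.5):
    """Add two real signals by summing their weighted spectra and transforming back."""
    spectrum = weight_a * np.fft.rfft(a) + weight_b * np.fft.rfft(b)
    return np.fft.irfft(spectrum, n=len(a))


def to_envelope(signal):
    """Offset and scale a signal into [0, 1] so it can drive an amplitude envelope."""
    lo, hi = signal.min(), signal.max()
    return (signal - lo) / (hi - lo)


t = np.arange(int(SAMPLE_RATE_HZ * DURATION_S)) / SAMPLE_RATE_HZ
audio = linear_chirp(200.0, 8_000.0, t, DURATION_S)
tactile = np.sin(2 * np.pi * TACTILE_HZ * t)
combined = combine_in_frequency_domain(audio, tactile)
envelope = to_envelope(combined)
```

Working in the frequency domain becomes useful when the two components must be shaped separately (for example, band-limiting the audio) before they are merged; for a plain sum it is equivalent to adding the waveforms directly.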

Extended Data Fig. 8 Audio modes supported by the MATD.

a, b, Illustration of the two audio modes (scatter and directional) and the sound tests. c, Audio measurement setup. d, e, Measured sound pressure level (SPL) distributions for each mode across the horizontal and vertical planes, under two conditions: sound only, and sound plus tactile feedback.
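A short sketch of how an SPL value at each scan position can be computed from a calibrated microphone recording in pascals, using the standard 20 µPa reference for air. It assumes unweighted SPL; the calibration, frequency weighting and any band filtering used for these figures are not stated here, and the function names are illustrative.

```python
import numpy as np

P_REF_PA = 20e-6  # standard reference pressure for SPL in air


def spl_db(pressure_pa) -> float:
    """Unweighted sound pressure level, 20*log10(p_rms / 20 uPa), of one recording."""
    p = np.asarray(pressure_pa, dtype=float)
    p_rms = np.sqrt(np.mean(p ** 2))
    return float(20 * np.log10(p_rms / P_REF_PA))


def spl_map(recordings) -> np.ndarray:
    """SPL for each point of a measurement grid; recordings has shape (rows, cols, samples)."""
    rec = np.asarray(recordings, dtype=float)
    p_rms = np.sqrt(np.mean(rec ** 2, axis=-1))
    return 20 * np.log10(p_rms / P_REF_PA)
```

A sine of amplitude √2 Pa has an RMS of 1 Pa, which corresponds to about 94 dB SPL; doubling the pressure adds about 6 dB.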

Extended Data Fig. 9 Characterization of tactile feedback.

a, Measurement setup. b, Visual content used, together with the tactile point. c, Measurement setup with a silicone hand (KI-RHAND, from Killer Inc. Tattoo). d, Results of the horizontal and vertical scans of the SPL for each of our conditions while delivering only tactile feedback, tactile and visual content, and all three modalities (tactile, visual and audio). e, Results of vertical and horizontal scans in the presence of a hand for all three conditions.

Extended Data Fig. 10 Other applications of the MATD.

a, Simultaneous levitation of six EPS particles in a diamond pattern (16.7% duty cycle for each particle; maximum number of particles levitated so far). b, c, Frequency modulation at 148 Hz to produce resonant oscillations (n = 2) for a 2-mm-diameter water droplet, captured from the side.

Supplementary information

Video 1:

Overview of the MATD system. In this video we demonstrate the multimodal acoustic trap display (MATD). The video first demonstrates its volumetric display capabilities and then a combined system that includes a countdown timer, which the user can both see and hear. The user can start and stop the countdown with a finger-click gesture and receives tactile feedback from the MATD each time they do so.

Video 2:

Demonstration of volumetric POV content. Here we show several examples of volumetric POV content displayed using the MATD. The first example shows a 3:2 torus knot, illustrating our ability to provide rich colour volumetric content. The second shows a butterfly flying around the display volume. These videos were taken with a 0.1 s exposure time, so they show the content as it appears to the naked eye.
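To relate POV content to the particle speeds reported in the abstract, the sketch below samples a (p, q) torus knot using the standard parametrisation and estimates the mean speed a particle would need to trace it once within the 0.1 s exposure. Mapping "3:2" to p = 3 and q = 2, the 2 cm radius and the point count are assumptions; the actual content and its timing in the MATD may differ.

```python
import numpy as np

EXPOSURE_S = 0.1  # camera exposure quoted for Video 2


def torus_knot(p: int, q: int, n_points: int, radius_m: float) -> np.ndarray:
    """Sample a (p, q) torus knot, scaled so that it fits within radius_m of the vertical axis.

    Standard parametrisation: r = cos(q*phi) + 2, x = r*cos(p*phi),
    y = r*sin(p*phi), z = -sin(q*phi), for phi in [0, 2*pi).
    """
    phi = np.linspace(0.0, 2 * np.pi, n_points, endpoint=False)
    r = np.cos(q * phi) + 2.0
    points = np.column_stack([r * np.cos(p * phi), r * np.sin(p * phi), -np.sin(q * phi)])
    return points * (radius_m / 3.0)  # r never exceeds 3, so x**2 + y**2 <= radius_m**2


def closed_path_length(points: np.ndarray) -> float:
    """Length of the closed polyline through points, including the segment back to the start."""
    segments = np.roll(points, -1, axis=0) - points
    return float(np.linalg.norm(segments, axis=1).sum())


knot = torus_knot(3, 2, 2_000, radius_m=0.02)       # hypothetical 2 cm radius
mean_speed = closed_path_length(knot) / EXPOSURE_S  # speed to trace it once per exposure
```

The required speed scales linearly with the size of the shape, which is why larger or more intricate POV content quickly approaches the speed limits measured in Extended Data Figs. 5 and 6.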

Video 3:

Speed tests. In this video we show how the speed tests were carried out. The first and second tests determined the maximum horizontal and vertical linear speeds of the MATD: the particle started at rest and was accelerated at a constant rate to reach maximum speed at the centre of the array. The third test measured the sharp-corner speed: the particle was moved forward at constant speed and then forced to reverse its direction completely (that is, a 180-degree turn). The last test shows the maximum speed that can be achieved for a particle moving along circular paths of various radii (5 cm in this video). For each type of test, we tried an increasing range of speeds, identifying the maximum speed at which such manipulation was feasible.
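The ramp used in the first two tests (start at rest, constant acceleration, maximum speed at the centre) fixes the acceleration through v² = 2ad, where d is the starting distance from the centre. A sketch of the resulting trap trajectory along one axis, assuming a hypothetical start distance, target speed and array update rate (the update rate used in the tests is not given here):

```python
import numpy as np


def ramp_to_centre(start_m: float, v_max_m_s: float, update_rate_hz: float):
    """Trap positions along one axis for a uniform acceleration from rest at start_m
    that reaches v_max_m_s exactly at the array centre (position 0).

    Returns (times_s, positions_m, acceleration_m_s2). Samples are spaced at most
    1 / update_rate_hz apart, and the last sample lands exactly on the centre.
    """
    distance = abs(start_m)
    accel = v_max_m_s ** 2 / (2 * distance)   # from v**2 = 2*a*d
    t_end = v_max_m_s / accel                 # equals 2*d / v_max
    n_steps = int(np.ceil(t_end * update_rate_hz))
    times = np.linspace(0.0, t_end, n_steps + 1)
    direction = -np.sign(start_m)             # always move towards 0
    positions = start_m + direction * 0.5 * accel * times ** 2
    return times, positions, accel


# Hypothetical example: start 10 cm from the centre, reach 1 m/s there, 10 kHz updates.
times, positions, accel = ramp_to_centre(-0.10, 1.0, 10_000)
```

Here the trap accelerates at 5 m/s² for 0.2 s. A higher target speed over the same distance raises the acceleration quadratically, which is one reason the achievable speed depends on how far the ramp starts from the centre.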

Video 4:

Visual content with hands. This video demonstrates two things. First, it shows that the levitated object is not disturbed by the presence of a nearby hand, which allows an interactive display in which tactile sensations are created close to the levitated object. Second, it shows that the object is visible under normal indoor lighting conditions.

Video 5:

Visualisation of the tactile sensation alongside visual content. This video illustrates the strength of the tactile sensation in the presence of a levitated display. While 75% of the cycles are used by the primary trap to create visual content (the shape of an 8), the secondary lobe (25% duty cycle) is focused on a sheet of 80 g/m² paper with eight strips cut into it. As the secondary lobe is moved, the strips move up and down under acoustic radiation pressure; a finger placed there would experience an equivalent tactile sensation.
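A minimal sketch of the time multiplexing described here, assuming each array update is devoted to exactly one trap. The interleaving rule (smooth weighted round robin, a scheduling scheme borrowed from load balancing) and the function names are illustrative choices, not the MATD's firmware logic. The same function also covers the six-particle case of Extended Data Fig. 10a, where each particle receives a 16.7% duty cycle.

```python
def smooth_weighted_round_robin(slots_per_trap):
    """Interleave traps so that trap i gets slots_per_trap[i] of every sum(slots_per_trap) updates.

    Each step, every trap's credit grows by its weight, the trap with the most
    credit gets the slot, and its credit is reduced by the total. Ties go to the
    lower index, so the schedule is deterministic.
    """
    total = sum(slots_per_trap)
    credit = [0] * len(slots_per_trap)
    order = []
    for _ in range(total):
        for i, weight in enumerate(slots_per_trap):
            credit[i] += weight
        chosen = max(range(len(credit)), key=lambda i: credit[i])
        credit[chosen] -= total
        order.append(chosen)
    return order


def trap_for_update(order, update_index, trap_positions):
    """Trap the arrays should focus on at a given update, repeating the schedule."""
    return trap_positions[order[update_index % len(order)]]


primary_secondary = smooth_weighted_round_robin([3, 1])  # 75% / 25% duty cycles
six_particles = smooth_weighted_round_robin([1] * 6)     # 16.7% duty cycle each
```

The 75%/25% schedule comes out as `[0, 0, 1, 0]`. Spreading each trap's slots evenly, rather than grouping them, keeps the gap between consecutive visits to any one trap short, which matters for a particle that must not fall out of its trap between updates.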

Video 6:

Other applications of the MATD. This video shows our ability to generate multiple traps for levitation and to control the oscillation modes of a liquid droplet. By time-multiplexing the position of the primary trap, six beads can be stably levitated and moved. The levitated water droplet oscillates owing to amplitude modulation of the trap.

About this article

Cite this article

Hirayama, R., Martinez Plasencia, D., Masuda, N. et al. A volumetric display for visual, tactile and audio presentation using acoustic trapping. Nature 575, 320–323 (2019). https://doi.org/10.1038/s41586-019-1739-5
