Abstract

Variations in the El Niño/Southern Oscillation (ENSO) are associated with a wide array of regional climate extremes and ecosystem impacts1. Robust, long-lead forecasts would therefore be valuable for managing policy responses. But despite decades of effort, forecasting ENSO events at lead times of more than one year remains problematic2. Here we show that a statistical forecast model employing a deep-learning approach produces skilful ENSO forecasts for lead times of up to one and a half years. To circumvent the limited amount of observational data, we use transfer learning to train a convolutional neural network (CNN) first on historical simulations3 and subsequently on reanalysis from 1871 to 1973. During the validation period from 1984 to 2017, the all-season correlation skill of the CNN model's Nino3.4 index forecasts is much higher than that of current state-of-the-art dynamical forecast systems. The CNN model is also better at predicting the detailed zonal distribution of sea surface temperatures, overcoming a weakness of dynamical forecast models. A heat map analysis indicates that the CNN model predicts ENSO events using physically reasonable precursors. The CNN model is thus a powerful tool both for the prediction of ENSO events and for the analysis of their associated complex mechanisms.
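The heat map analysis can be illustrated with a class-activation-mapping (CAM) style calculation in the spirit of Zhou et al. (listed in the references). This is an assumption-laden sketch, not the authors' code: it supposes the heat map is a weighted sum of the last convolutional layer's feature maps, with one hypothetical weight per channel taken from the layer that produces the Nino3.4 output. Plain Python lists stand in for arrays, and `class_activation_heat_map` is a hypothetical name.

```python
from typing import List

Grid = List[List[float]]  # values indexed [lat][lon]


def class_activation_heat_map(feature_maps: List[Grid], weights: List[float]) -> Grid:
    """Hypothetical CAM-style heat map: sum over channels k of weights[k] * feature_maps[k].

    feature_maps[k][i][j] is the activation of channel k at grid cell (i, j) in the
    last convolutional layer; weights[k] links channel k to the Nino3.4 output.
    """
    if len(feature_maps) != len(weights):
        raise ValueError("need exactly one weight per feature map")
    n_lat, n_lon = len(feature_maps[0]), len(feature_maps[0][0])
    heat = [[0.0] * n_lon for _ in range(n_lat)]
    for fmap, w in zip(feature_maps, weights):
        for i in range(n_lat):
            for j in range(n_lon):
                heat[i][j] += w * fmap[i][j]
    return heat


# Two toy 2 x 3 feature maps and their (hypothetical) output weights.
maps = [
    [[1.0, 0.0, 2.0], [0.0, 1.0, 0.0]],
    [[0.5, 0.5, 0.0], [1.0, 0.0, 1.0]],
]
print(class_activation_heat_map(maps, [2.0, -1.0]))
# [[1.5, -0.5, 4.0], [-1.0, 2.0, -1.0]]
```

Grid cells with large positive values are the ones that push the predicted index upward, which is how such a map can be read as highlighting precursor regions.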


Data availability

Data related to this paper can be downloaded from: SODA version 2.2.4, https://climatedataguide.ucar.edu/climate-data/soda-simple-ocean-data-assimilation; GODAS, https://www.esrl.noaa.gov/psd/data/gridded/data.godas.html; ERA-Interim, https://apps.ecmwf.int/datasets/data/interim-full-daily; NMME phase 1, https://iridl.ldeo.columbia.edu/SOURCES/.Models/.NMME/; and the CMIP5 database, https://esgf-node.llnl.gov/projects/cmip5/.

Code availability

The CNN-based statistical forecast model was implemented with the TensorFlow library (https://www.tensorflow.org). The code for the CNN model can be downloaded at https://doi.org/10.5281/zenodo.3244463.

References

McPhaden, M. J., Zebiak, S. E. & Glantz, M. H. ENSO as an integrating concept in Earth science. Science 314, 1740–1745 (2006).

Barnston, A. G., Tippett, M. K., L’Heureux, M. L., Li, S. & DeWitt, D. G. Skill of real-time seasonal ENSO model predictions during 2002–11: is our capability increasing? Bull. Am. Meteorol. Soc. 93, 631–651 (2012).

Taylor, K. E., Stouffer, R. J. & Meehl, G. A. An overview of CMIP5 and the experiment design. Bull. Am. Meteorol. Soc. 93, 485–498 (2012).

Cane, M. A., Zebiak, S. E. & Dolan, S. C. Experimental forecasts of El Niño. Nature 321, 827–832 (1986).

Luo, J.-J., Masson, S., Behera, S. K. & Yamagata, T. Extended ENSO predictions using a fully coupled ocean–atmosphere model. J. Clim. 21, 84–93 (2008).

Tang, Y. et al. Progress in ENSO prediction and predictability study. Natl Sci. Rev. 5, 826–839 (2018).

Chen, D., Cane, M. A., Kaplan, A., Zebiak, S. E. & Huang, D. Predictability of El Niño over the past 148 years. Nature 428, 733–736 (2004).

Gao, C. & Zhang, R. H. The roles of atmospheric wind and entrained water temperature (Te) in the second-year cooling of the 2010–12 La Niña event. Clim. Dyn. 48, 597–617 (2017).

Hu, S. & Fedorov, A. V. Exceptionally strong easterly wind burst stalling El Niño of 2014. Proc. Natl Acad. Sci. USA 113, 2005–2010 (2016).

Gebbie, G. & Tziperman, E. Predictability of SST-modulated westerly wind bursts. J. Clim. 22, 3894–3909 (2009).

Izumo, T. et al. Influence of the state of the Indian Ocean Dipole on the following year’s El Niño. Nat. Geosci. 3, 168–172 (2010).

Park, J. H., Kug, J. S., Li, T. & Behera, S. K. Predicting El Niño beyond 1-year lead: effect of the Western Hemisphere warm pool. Sci. Rep. 8, 14957 (2018).

LeCun, Y., Bengio, Y. & Hinton, G. Deep learning. Nature 521, 436–444 (2015).

Krizhevsky, A., Sutskever, I. & Hinton, G. E. ImageNet classification with deep convolutional neural networks. Adv. Neural Inf. Process. Syst. 25, 1097–1105 (2012).

Oquab, M., Bottou, L., Laptev, I. & Sivic, J. Learning and transferring mid-level image representations using convolutional neural networks. In Proc. IEEE Conf. on Computer Vision and Pattern Recognition 1717–1724 (IEEE, 2014).

Giese, B. S. & Ray, S. El Niño variability in simple ocean data assimilation (SODA), 1871–2008. J. Geophys. Res. Oceans 116, https://doi.org/10.1029/2010JC006695 (2011).

Bellenger, H., Guilyardi, É., Leloup, J., Lengaigne, M. & Vialard, J. ENSO representation in climate models: from CMIP3 to CMIP5. Clim. Dyn. 42, 1999–2018 (2014).

Yosinski, J., Clune, J., Bengio, Y. & Lipson, H. How transferable are features in deep neural networks? Adv. Neural Inf. Process. Syst. 27, 3320–3328 (2014).

Webster, P. J. & Yang, S. Monsoon and ENSO: selectively interactive systems. Q. J. R. Meteorol. Soc. 118, 877–926 (1992).

Zhou, B., Khosla, A., Lapedriza, A., Oliva, A. & Torralba, A. Learning deep features for discriminative localization. In Proc. IEEE Conf. on Computer Vision and Pattern Recognition 2921–2929 (IEEE, 2016).

Anderson, B. T. On the joint role of subtropical atmospheric variability and equatorial subsurface heat content anomalies in initiating the onset of ENSO events. J. Clim. 20, 1593–1599 (2007).

Kug, J. S. & Kang, I. S. Interactive feedback between ENSO and the Indian Ocean. J. Clim. 19, 1784–1801 (2006).

Yeh, S. W. et al. El Niño in a changing climate. Nature 461, 511 (2009).

Zhang, Z., Ren, B. & Zheng, J. A unified complex index to characterize two types of ENSO simultaneously. Sci. Rep. 9, 8373 (2019).

Pillai, P. A. et al. How distinct are the two flavors of El Niño in retrospective forecasts of Climate Forecast System version 2 (CFSv2)? Clim. Dyn. 48, 3829–3854 (2017).

Johnson, N. C. How many ENSO flavors can we distinguish? J. Clim. 26, 4816–4827 (2013).

Webster, P. J., Moore, A. M., Loschnigg, J. P. & Leben, R. R. Coupled ocean–atmosphere dynamics in the Indian Ocean during 1997–98. Nature 401, 356–360 (1999).

Ham, Y. G., Kug, J. S. & Park, J. Y. Two distinct roles of Atlantic SSTs in ENSO variability: north tropical Atlantic SST and Atlantic Niño. Geophys. Res. Lett. 40, 4012–4017 (2013).

Zeiler, M. D. & Fergus, R. Visualizing and understanding convolutional networks. In Eur. Conf. on Computer Vision 818–833 (Springer, 2014).

Reichstein, M. et al. Deep learning and process understanding for data-driven Earth system science. Nature 566, 195–204 (2019).

Hunter, J. D. Matplotlib: a 2D graphics environment. Comput. Sci. Eng. 9, 90–95 (2007).

Goodfellow, I., Bengio, Y. & Courville, A. Deep Learning (MIT Press, 2016).

Yoo, J. H. & Kang, I. S. Theoretical examination of a multi-model composite for seasonal prediction. Geophys. Res. Lett. 32, L18707 (2005).

Kalchbrenner, N., Grefenstette, E. & Blunsom, P. A convolutional neural network for modelling sentences. In Proc. 52nd Ann. Meet. Association for Computational Linguistics 655–665 (Association for Computational Linguistics, 2014).

Wu, A., Hsieh, W. W. & Tang, B. Neural network forecasts of the tropical Pacific sea surface temperatures. Neural Netw. 19, 145–154 (2006).

Behringer, D. W. & Xue, Y. Evaluation of the global ocean data assimilation system at NCEP: the Pacific Ocean. In Proc. Eighth Symp. on Integrated Observing and Assimilation Systems for Atmosphere, Oceans, and Land Surface (AMS 84th Annual Meeting) (AMS, 2004).

Dee, D. P. et al. The ERA-Interim reanalysis: configuration and performance of the data assimilation system. Q. J. R. Meteorol. Soc. 137, 553–597 (2011).

Kirtman, B. P. et al. The North American multimodel ensemble: phase-1 seasonal-to-interannual prediction; phase-2 toward developing intraseasonal prediction. Bull. Am. Meteorol. Soc. 95, 585–601 (2014).

Luo, J.-J., Liu, G., Hendon, H., Alves, O. & Yamagata, T. Inter-basin sources for two-year predictability of the multi-year La Niña event in 2010–2012. Sci. Rep. 7, 2276 (2017).

Acknowledgements

This study is funded by the Korea Meteorological Administration Research and Development Program under grant KMI2018-03214. Y.-G.H. was supported by the Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Education (NRF-2016R1A6A1A03012647). J.-J.L. is supported by ‘The Startup Foundation for Introducing Talent’ of NUIST. We are grateful to W. Merryfield for providing comments and to T. Doi for providing part of the SINTEX-F hindcast data used in the validation.

Author information


Contributions

Y.-G.H. and J.-H.K. designed the experiments and conducted the analyses. Y.-G.H. wrote most of the manuscript. J.-H.K. and Y.-G.H. performed the CNN hindcast experiments. J.-J.L. conducted the SINTEX-F hindcast experiments and reported the results. All authors discussed the study results and reviewed the manuscript.


Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data figures and tables

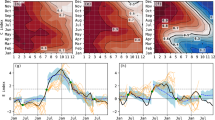

Extended Data Fig. 1 Comparison of the ENSO correlation skill between the CNN model and the feed-forward neural network model.

a, The all-season correlation skill of the three-month-moving-averaged Nino3.4 index as a function of the lead months of the forecast in the CNN model (red) and the feed-forward neural network model (blue). The validation period is between 1984 and 2017. b, c, The correlation skill of the Nino3.4 index targeted to each calendar month in the CNN model (b) and in the feed-forward neural network model (c). Hatching highlights the forecasts with correlation skill exceeding 0.5.
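A minimal sketch of the two quantities in panel a, assuming a centred three-month running mean and a plain Pearson correlation pooled over all target months at each lead. The function names, the dictionary layout and the toy numbers are hypothetical; the actual validation uses forecasts and observations for 1984–2017.

```python
import math
from typing import Dict, List, Sequence


def three_month_running_mean(x: Sequence[float]) -> List[float]:
    """Centred 3-month running mean; the first and last months lack a full window and are dropped."""
    return [(x[i - 1] + x[i] + x[i + 1]) / 3.0 for i in range(1, len(x) - 1)]


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(a)
    if n != len(b) or n < 2:
        raise ValueError("need two equal-length series with at least two values")
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def all_season_skill(forecasts: Dict[int, List[float]], observed: List[float]) -> Dict[int, float]:
    """forecasts[lead][t] is the forecast issued `lead` months before target month t.

    Target months from every season are pooled, giving one correlation per lead.
    """
    return {lead: pearson(fc, observed) for lead, fc in sorted(forecasts.items())}


# Toy monthly Nino3.4 anomalies (°C), smoothed as in panel a.
monthly = [0.1, 0.4, 0.9, 1.2, 0.8, 0.2, -0.3, -0.6]
obs = three_month_running_mean(monthly)
# Hypothetical forecasts: a perfect 1-month forecast and a sign-flipped 12-month one.
forecasts = {1: list(obs), 12: [-v for v in obs]}
print(all_season_skill(forecasts, obs))
```

The per-calendar-month skill in panels b and c would follow the same pattern, restricting the pooled target months to one calendar month before correlating.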

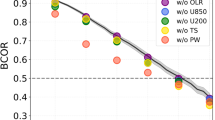

Extended Data Fig. 2 The improvement in the skill of the CNN model due to the CMIP5 dataset and transfer learning.

a, The all-season correlation skill of the Nino3.4 index at a lead of 18 months for different numbers of CMIP5 training samples. The red area denotes the number of available observed samples. Transfer learning is not applied in this series of sensitivity tests (that is, observations from the training period are not used to set up the CNN model). b, The all-season Nino3.4 correlation skill as a function of forecast lead month with and without transfer learning. The CNN model without transfer learning is trained on all the CMIP5 samples and the observed samples from the training period (that is, 1871 to 1973) in a single training stage. The CNN models with and without transfer learning therefore use exactly the same number of samples.
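To make the distinction in panel b concrete, here is a deliberately tiny stand-in: a one-variable linear model fitted by gradient descent replaces the CNN, and synthetic "simulated" and "observed" samples replace the CMIP5 and reanalysis data. The data, learning rates, epoch counts and the `fit` helper are all hypothetical. Transfer learning trains on the simulations and then continues training the same parameters on the observations; the baseline trains once on the pooled samples.

```python
import random
from typing import List, Tuple

Sample = Tuple[float, float]  # (predictor, target)


def fit(samples: List[Sample], w: float = 0.0, b: float = 0.0,
        lr: float = 0.1, epochs: int = 200) -> Tuple[float, float]:
    """Full-batch gradient descent on the mean squared error of y = w * x + b,
    starting from the given (w, b)."""
    n = len(samples)
    for _ in range(epochs):
        grad_w = 2.0 / n * sum((w * x + b - y) * x for x, y in samples)
        grad_b = 2.0 / n * sum((w * x + b - y) for x, y in samples)
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


rng = random.Random(0)
# Hypothetical data: many "simulated" samples whose slope is biased (1.5) and
# a few "observed" samples following the true relationship y = 1.0 * x + 0.2.
simulated = [(x, 1.5 * x) for x in (rng.uniform(-2.0, 2.0) for _ in range(2000))]
observed = [(x, 1.0 * x + 0.2) for x in (rng.uniform(-2.0, 2.0) for _ in range(40))]

# With transfer learning: pre-train on simulations, then fine-tune the same
# parameters on observations with a smaller learning rate.
w_pre, b_pre = fit(simulated)
w_tl, b_tl = fit(observed, w=w_pre, b=b_pre, lr=0.02, epochs=300)

# Without transfer learning: a single training stage on the pooled samples.
w_pool, b_pool = fit(simulated + observed)

print(f"pre-trained:       w={w_pre:.3f}, b={b_pre:.3f}")
print(f"transfer learning: w={w_tl:.3f}, b={b_tl:.3f}")
print(f"pooled, one stage: w={w_pool:.3f}, b={b_pool:.3f}")
```

In this toy setting the pooled fit stays close to the simulations' biased slope because they outnumber the observations fifty to one, while fine-tuning moves the parameters towards the observed relationship. It illustrates the mechanics only and says nothing about the CNN's actual skill.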

Extended Data Fig. 3 The time series of climate indices.

a–d, Time series of the IOD index (the area-averaged SST over 50–70° E, 10° S–10° N minus that over 90–110° E, 15°–0° S) during the SON season (a), the Indian Ocean Basin-wide warming (IOBW) index (area-averaged SST over 40–110° E, 15° S–10° N) during the JFM season (b), the Western Hemisphere Warm Pool (WHWP) index (area-averaged SST over 60–105° W, 10–35° N) during the MJJ season (c), and the Pacific Meridional Mode (PMM) index (the first principal component of a maximum covariance analysis (MCA) of SST and 10-m winds over 175° E–95° W, 21° S–32° N) during the DJF season (d). Values preceding the 1997/98 El Niño event are marked with a red star when positive and a blue star when negative.
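The box-averaged indices in panels a–c can be computed as cosine-latitude-weighted means over a latitude–longitude box. The sketch below assumes a regular grid stored as nested lists indexed [lat][lon], longitudes in degrees east, and a field already averaged over the relevant season (for example SON for the IOD). The weighting choice and the names `box_mean` and `iod_index` are assumptions, not taken from the paper.

```python
import math
from typing import List, Sequence, Tuple

Field = List[List[float]]  # values indexed [lat][lon]


def box_mean(field: Field, lats: Sequence[float], lons: Sequence[float],
             lon_range: Tuple[float, float], lat_range: Tuple[float, float]) -> float:
    """Cosine-latitude-weighted mean of `field` inside a lon/lat box.

    Longitudes are in degrees east; this simple version assumes the box does
    not cross the 0/360 degree meridian.
    """
    num = den = 0.0
    for i, lat in enumerate(lats):
        if not lat_range[0] <= lat <= lat_range[1]:
            continue
        weight = math.cos(math.radians(lat))  # grid-cell area shrinks towards the poles
        for j, lon in enumerate(lons):
            if lon_range[0] <= lon <= lon_range[1]:
                num += weight * field[i][j]
                den += weight
    if den == 0.0:
        raise ValueError("no grid points inside the box")
    return num / den


def iod_index(sst: Field, lats: Sequence[float], lons: Sequence[float]) -> float:
    """IOD index: western box mean minus eastern box mean (boxes from the caption)."""
    west = box_mean(sst, lats, lons, (50.0, 70.0), (-10.0, 10.0))
    east = box_mean(sst, lats, lons, (90.0, 110.0), (-15.0, 0.0))
    return west - east


# Toy SON-mean SST anomalies: +1 west of 80 deg E, 0 east of it.
lats = [-12.5, -7.5, -2.5, 2.5, 7.5]
lons = [55.0, 65.0, 95.0, 105.0]
sst = [[1.0 if lon < 80.0 else 0.0 for lon in lons] for _ in lats]
print(iod_index(sst, lats, lons))  # 1.0
```

The IOBW index is a single `box_mean` call over its own box; the PMM index instead requires an MCA and is not covered by this sketch.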

Extended Data Fig. 4 The time evolution of the 1997/98 El Niño event.

SST (shading) and 850-hPa winds (vectors) for: a, MJJ 1996; b, ASO 1996; c, NDJ 1996; and d, FMA 1997. The global maps were generated with Matplotlib31.

Extended Data Fig. 5 The area-averaged heat map values for El Niño events.

a, b, The area-averaged heat map values for EP-type El Niño events (a) and CP-type El Niño events (b), selected from all El Niño events, over five ocean domains (the south Pacific, equatorial Pacific, north Pacific, Indian Ocean and equatorial Atlantic). The domains are defined as: south Pacific, [160° E–60° W, 57.5°–17.5° S]; equatorial Pacific, [120° E–80° W, 17.5° S–22.5° N]; north Pacific, [120° E–100° W, 22.5–62.5° N]; Indian Ocean, [40°–120° E, 37.5° S–22.5° N]; and equatorial Atlantic, [60°–0° W, 17.5° S–22.5° N]. The horizontal dashed line denotes one standard deviation of the heat map values for the displayed El Niño events over the five ocean basins. Only the heat maps of El Niño events whose type is correctly predicted by the CNN are analysed.
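Several of these domains span the date line and the equatorial Atlantic box ends at the prime meridian, so comparing longitudes needs one consistent convention plus wrap-around handling. A minimal sketch, assuming a heat map on a regular grid with longitudes in degrees east and cosine-latitude area weights; the `DOMAINS` table converts the caption's western longitudes to degrees east (for example 60° W becomes 300° E), and all names are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

# Caption domains as (west lon, east lon, south lat, north lat), longitudes in
# degrees east: 60 W -> 300 E, 80 W -> 280 E, 100 W -> 260 E, 0 W -> 0 E.
DOMAINS: Dict[str, Tuple[float, float, float, float]] = {
    "south Pacific": (160.0, 300.0, -57.5, -17.5),
    "equatorial Pacific": (120.0, 280.0, -17.5, 22.5),
    "north Pacific": (120.0, 260.0, 22.5, 62.5),
    "Indian Ocean": (40.0, 120.0, -37.5, 22.5),
    "equatorial Atlantic": (300.0, 0.0, -17.5, 22.5),  # wraps through 360/0
}


def in_lon_range(lon: float, west: float, east: float) -> bool:
    """True if `lon` lies on the arc running eastward from `west` to `east`."""
    lon, west, east = lon % 360.0, west % 360.0, east % 360.0
    if west <= east:
        return west <= lon <= east
    return lon >= west or lon <= east


def domain_means(heat: List[List[float]], lats: Sequence[float],
                 lons: Sequence[float]) -> Dict[str, float]:
    """Cosine-latitude-weighted mean heat map value in each domain (NaN if empty)."""
    out: Dict[str, float] = {}
    for name, (west, east, south, north) in DOMAINS.items():
        num = den = 0.0
        for i, lat in enumerate(lats):
            if not south <= lat <= north:
                continue
            weight = math.cos(math.radians(lat))
            for j, lon in enumerate(lons):
                if in_lon_range(lon, west, east):
                    num += weight * heat[i][j]
                    den += weight
        out[name] = num / den if den > 0.0 else math.nan
    return out


# One equatorial row of a toy heat map at 80 E, 200 E and 330 E (= 30 W).
print(domain_means([[1.0, 2.0, 3.0]], [0.0], [80.0, 200.0, 330.0]))
```

Storing every longitude in [0, 360) keeps the three Pacific boxes contiguous, so only the Atlantic box needs the wrap-around branch.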

Extended Data Fig. 6 The SST pattern developed by precursors for CP El Niño.

a, b, SST anomalies in 12-month-lead forecasts regressed onto the pattern regression index for CP-type El Niño precursors over the Indian Ocean (a) and the south Pacific (b) in the NDJ season. The pattern regression index for CP-type El Niño precursors is obtained by calculating the pattern regression of the NDJ SST and heat content anomalies onto the selected anomaly for the CP El Niño event in Fig. 4f. The black box denotes the region over which the pattern regression index was calculated. Both regressed SST patterns are classified as CP-type El Niño24. The global maps were generated with Matplotlib31.
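A minimal sketch of the two regression steps described here, assuming anomaly fields flattened to one value per grid point inside the box, no area weighting, and a pattern regression without an intercept (the caption does not specify these details). All names and numbers are hypothetical.

```python
from typing import List, Sequence


def pattern_regression_index(anomaly: Sequence[float], pattern: Sequence[float]) -> float:
    """Coefficient a minimising sum((anomaly - a * pattern) ** 2) over the grid
    points in the box: a least-squares fit through the origin, no area weights."""
    num = sum(x * p for x, p in zip(anomaly, pattern))
    den = sum(p * p for p in pattern)
    return num / den


def regression_map(fields: List[Sequence[float]], index: Sequence[float]) -> List[float]:
    """Slope of each grid point's time series regressed onto the index.

    fields[t][g] is the anomaly for sample (e.g. forecast) t at grid point g.
    """
    n = len(index)
    mean_i = sum(index) / n
    var_i = sum((v - mean_i) ** 2 for v in index)
    slopes = []
    for g in range(len(fields[0])):
        series = [fields[t][g] for t in range(n)]
        mean_s = sum(series) / n
        cov = sum((s - mean_s) * (v - mean_i) for s, v in zip(series, index))
        slopes.append(cov / var_i)
    return slopes


pattern = [1.0, 2.0, -1.0]  # hypothetical precursor pattern inside the box
print(pattern_regression_index([2.0, 4.0, -2.0], pattern))  # 2.0
print(pattern_regression_index([1.0, 0.0, 1.0], pattern))   # 0.0 (orthogonal to pattern)
print(regression_map([[0.0, 5.0], [3.0, 5.0], [6.0, 5.0]], [0.0, 1.0, 2.0]))  # [3.0, 0.0]
```

The first function turns each sample's anomaly field into one number measuring how strongly the selected precursor pattern is present; the second maps, point by point, the SST change associated with a unit increase in that number.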

Supplementary information

Supplementary Figures

This file contains Supplementary Figures 1–4.


About this article

Cite this article

Ham, YG., Kim, JH. & Luo, JJ. Deep learning for multi-year ENSO forecasts. Nature 573, 568–572 (2019). https://doi.org/10.1038/s41586-019-1559-7
