Abstract

Online hate and extremist narratives have been linked to abhorrent real-world events, including a current surge in hate crimes [1–6] and an alarming increase in youth suicides that result from social media vitriol [7]; inciting mass shootings such as the 2019 attack in Christchurch, stabbings and bombings [8–11]; recruitment of extremists [12–16], including entrapment and sex-trafficking of girls as fighter brides [17]; threats against public figures, including the 2019 verbal attack against an anti-Brexit politician, and hybrid (racist–anti-women–anti-immigrant) hate threats against a US member of the British royal family [18]; and renewed anti-western hate in the 2019 post-ISIS landscape associated with support for Osama Bin Laden’s son and Al Qaeda. Social media platforms seem to be losing the battle against online hate [19,20] and urgently need new insights. Here we show that the key to understanding the resilience of online hate lies in its global network-of-network dynamics. Interconnected hate clusters form global ‘hate highways’ that, assisted by collective online adaptations, cross social media platforms, sometimes using ‘back doors’ even after being banned, as well as jumping between countries, continents and languages. Our mathematical model predicts that policing within a single platform (such as Facebook) can make matters worse, and will eventually generate global ‘dark pools’ in which online hate will flourish. We observe the current hate network rapidly rewiring and self-repairing at the micro level when attacked, in a way that mimics the formation of covalent bonds in chemistry. This understanding enables us to propose a policy matrix that can help to defeat online hate, classified by the preferred (or legally allowed) granularity of the intervention and top-down versus bottom-up nature. We provide quantitative assessments for the effects of each intervention. This policy matrix also offers a tool for tackling a broader class of illicit online behaviours [21,22] such as financial fraud.

Data availability

The dataset is provided as Source Data. The open-source software packages Gephi and R were used to produce the networks in the figures. No custom software was used.

References

The UK Home Affairs Select Committee. Hate Crime: Abuse, Hate and Extremism Online. session 2016–17 HC 609 https://publications.parliament.uk/pa/cm201617/cmselect/cmhaff/609/609.pdf (2017).

Patrisse Cullors. Online Hate Is A Deadly Threat https://edition.cnn.com/2018/11/01/opinions/social-media-hate-speech-cullors/index.html (2017).

Beirich, H., Hankes, K., Piggott, S., Schlatter, E. & Viets, S. The Year in Hate and Extremism https://www.splcenter.org/fighting-hate/intelligence-report/2017/year-hate-and-extremism (2017).

Hohmann, J. Hate Crimes Are a Much Bigger Problem than Even the New FBI Statistics Show https://www.washingtonpost.com/news/powerpost/paloma/daily-202/2018/11/14/daily-202-hate-crimes-are-a-much-bigger-problem-than-even-the-new-fbi-statistics-show/5beba5bd1b326b39290547e2/?utm_term=.e203814306e8 (2018).

Reitman, J. U.S. Law Enforcement Failed to See the Threat of White Nationalism. Now they Don’t Know How to Stop It https://www.nytimes.com/2018/11/03/magazine/FBI-charlottesville-white-nationalism-far-right.html (2018).

Southern Poverty Law Center (SPLC). Extremist Groups https://www.splcenter.org/fighting-hate/extremist-files/groups (2018).

John, A. et al. Self-harm, suicidal behaviours, and cyberbullying in children and young people: systematic review. J. Med. Internet Res. 20, e129 (2018).

Berman, M. Prosecutors Say Accused Charleston Church Gunman Self-Radicalized Online https://www.washingtonpost.com/news/post-nation/wp/2016/08/22/prosecutors-say-accused-charleston-church-gunman-self-radicalized-online/?utm_term=.4f17303dffd4 (2016).

Pagliery, J. The Suspect in Congressional Shooting Was Bernie Sanders Supporter, Strongly Anti-Trump http://www.cnn.com/2017/06/14/homepage2/james-hodgkinson-profile/index.html (2017).

Yan, H., Simon, D. & Graef, A. Campus Killing: Suspect is a Member of ‘Alt-Reich’ Facebook Group http://www.cnn.com/2017/05/22/us/university-of-maryland-stabbing/index.html (2017).

Amend, A. Analyzing a Terrorist’s Social Media Manifesto: the Pittsburgh Synagogue Shooter’s Posts on Gab https://www.splcenter.org/hatewatch/2018/10/28/analyzing-terrorists-social-media-manifesto-pittsburgh-synagogue-shooters-posts-gab (2018).

Gill, P. & Corner, E. in Terrorism Online: Politics, Law, Technology (eds Jarvis, L. et al.) Ch. 1 (Routledge, 2015).

Gill, P. et al. Terrorist use of the internet by the numbers: quantifying behaviors, patterns, and processes. Criminol. Public Pol. 16, 99–117 (2017).

Gill, P. Lone Actor Terrorists: A Behavioral Analysis (Routledge, 2015).

Gill, P., Horgan, J. & Deckert, P. Bombing alone: tracing the motivations and antecedent behaviors of lone-actor terrorists. J. Forensic Sci. 59, 425–435 (2014).

Schuurman, B. et al. End of the lone wolf: the typology that should not have been. Stud. Conflict Terrorism 42, 771–778 (2017).

Panin, A. & Smith, L. Russian Students Targeted as Recruits by Islamic State https://www.bbc.co.uk/news/world-europe-33634214 (2015).

Foster, M. The Racist Online Abuse of Meghan Markle Has Put Royal Staff on High Alert https://www.cnn.com/2019/03/07/uk/meghan-kate-social-media-gbr-intl/index.html (2019).

Wakefield, J. Christchurch Shootings: Social Media Races to Stop Attack Footage https://www.bbc.com/news/technology-47583393 (2019).

O’Brien, S. A. Moderating the Internet is Hurting Workers https://www.cnn.com/2019/02/28/tech/facebook-google-content-moderators/index.html (2019).

KrebsOnSecurity. Deleted Facebook Cybercrime Groups Had 300,000 Members https://krebsonsecurity.com/2018/04/deleted-facebook-cybercrime-groups-had-300000-members/ (2019).

Wong, J. C. Anti-Vaxx Mobs: Doctors Face Harassment Campaigns on Facebook https://www.theguardian.com/technology/2019/feb/27/facebook-anti-vaxx-harassment-campaigns-doctors-fight-back (2019).

Martínez, A. G. Want Facebook to Censor Speech? Be Careful What You Wish For https://www.wired.com/story/want-facebook-to-censor-speech-be-careful-what-you-wish-for/ (2018).

Stanley, H. E. Introduction to Phase Transitions and Critical Phenomena (Oxford Univ. Press, 1988).

Hedström, P., Sandell, R. & Stern, C. Meso-level networks and the diffusion of social movements. Am. J. Sociol. 106, 145–172 (2000).

Grinberg, N., Joseph, K., Friedland, L., Swire-Thompson, B. & Lazer, D. Fake news on Twitter during the 2016 U.S. presidential election. Science 363, 374–378 (2019).

Johnson, N. F. et al. New online ecology of adversarial aggregates: ISIS and beyond. Science 352, 1459–1463 (2016).

Havlin, S., Kenett, D. Y., Bashan, A., Gao, J. & Stanley, H. E. Vulnerability of network of networks. Eur. Phys. J. Spec. Top. 223, 2087 (2014).

Palla, G., Barabási, A. L. & Vicsek, T. Quantifying social group evolution. Nature 446, 664–667 (2007).

Jarrett, T. C., Ashton, D. J., Fricker, M. & Johnson, N. F. Interplay between function and structure in complex networks. Phys. Rev. E 74, 026116 (2006).

Acknowledgements

N.F.J. is supported by US Air Force (AFOSR) grant FA9550-16-1-0247.

Author information

Contributions

All authors contributed to the research design, the analysis and writing the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Peer review information: Nature thanks Paul Gill, Nour Kteily and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Extended data figures and tables

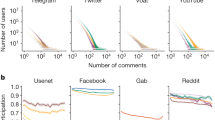

Extended Data Fig. 1 Power laws.

a, b, Power laws for the KKK ecology (a) and the ecology of illicit financial activities (b). Their power-law exponents (α) are similar in a and b, and also consistent with c. c, The results from aggregating data from different thematic subsystems, each of which has a power-law distribution with an exponent (β_i) distributed around 2.5. d, Summary of the simulation procedure. N power-law distributions are created with a power-law exponent distributed around 2.5. Power-law exponents were then sampled, followed by a power-law test. e, Distribution of the resulting power-law exponents from this simulation procedure, for different values of the mean number of points in the underlying distributions (mu values). The resulting power-law exponents α are centred near 1.7, as observed in a and b.
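As a rough illustration of the ingredients named in panels c and d, the following Python sketch draws samples from several power-law distributions whose exponents are scattered around 2.5, pools them, and fits a single exponent by maximum likelihood. It is not the authors' procedure, which is described in the Supplementary Information, and it makes no attempt to reproduce the reported exponents near 1.7. The function names, the Gaussian spread of the exponents, the continuous form of the distribution and the choice of x_min = 1 are all assumptions made for illustration.

```python
import math
import random


def sample_power_law(alpha, n, x_min=1.0, rng=random):
    """Draw n samples from a continuous power law p(x) ~ x^(-alpha), x >= x_min."""
    # Inverse-transform sampling; valid only for alpha > 1.
    return [x_min * (1.0 - rng.random()) ** (-1.0 / (alpha - 1.0)) for _ in range(n)]


def fit_exponent(samples, x_min=1.0):
    """Maximum-likelihood estimate of alpha for a continuous power law."""
    tail = [x for x in samples if x >= x_min]
    return 1.0 + len(tail) / sum(math.log(x / x_min) for x in tail)


def aggregate_subsystems(n_subsystems, points_per_subsystem,
                         mean_beta=2.5, spread=0.2, seed=0):
    """Pool samples from several power laws with exponents scattered around mean_beta.

    The Gaussian scatter and its width are illustrative assumptions.
    """
    rng = random.Random(seed)
    pooled = []
    for _ in range(n_subsystems):
        # Keep each exponent above 1 so the distribution can be normalised.
        beta = max(1.1, rng.gauss(mean_beta, spread))
        pooled.extend(sample_power_law(beta, points_per_subsystem, rng=rng))
    return pooled


if __name__ == "__main__":
    pooled = aggregate_subsystems(n_subsystems=20, points_per_subsystem=500)
    print("fitted exponent of pooled data:", fit_exponent(pooled))
```

A fuller treatment would also estimate x_min and run a goodness-of-fit test, which corresponds to the power-law test mentioned in panel d.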

Extended Data Fig. 2 Cluster loop.

a, Cluster loop from Fig. 2. b, Example of a loop of clusters.

Extended Data Fig. 3 Predicted policy effects.

a, The effects of policy 1, with on average more than 550 widely spaced time steps for τ = 10 and N = 10^4. If the size of an aggregate remains within the range s_min to s_max for a particular time period τ, that aggregate then fragments. b, The effects of policy 2. Colours represent different intervention starting times (t_I) in units of days (vertical grey lines): green t_I = 80, red t_I = 120, blue t_I = 200. Line types represent different percentages of individuals randomly removed (that is, banned) at time t_I: dashed line 10%, dotted line 30%, solid line 50%. c, Results for policy 3 of the time to extinction (T) as a function of the initial population partition (N + P = 1,000 fixed, with N being the initial size of the hate population and P being the initial size of the anti-hate population) and the banning rate of the platform, from numerical simulations and also analytic theory. d, Policy 4 shows the effect of different allocations of 100 peacekeepers in the hate-cluster versus anti-hate-cluster scenario. n_c is the number of clusters of peacekeepers (that is, individuals of type C) that have size s_c.
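The fragmentation rule stated for policy 1 in panel a can be sketched as a single update step in Python. This is a minimal illustration under stated assumptions, not the authors' model: the caption does not say what an aggregate fragments into, so the sketch assumes it breaks into singletons, and it omits the coalescence dynamics that would make aggregates grow between steps. The function name and the per-aggregate counter `ages` are hypothetical.

```python
def apply_fragmentation_rule(sizes, ages, s_min, s_max, tau):
    """Apply one time step of the size-window fragmentation rule.

    sizes: list of aggregate sizes.
    ages:  consecutive steps each aggregate has already spent inside
           the window s_min <= size <= s_max.
    Returns the updated (sizes, ages) lists.
    """
    new_sizes, new_ages = [], []
    for size, age in zip(sizes, ages):
        in_window = s_min <= size <= s_max
        # The counter resets as soon as the aggregate leaves the window.
        age = age + 1 if in_window else 0
        if in_window and age >= tau:
            # Assumption: fragmentation yields isolated individuals.
            new_sizes.extend([1] * size)
            new_ages.extend([0] * size)
        else:
            new_sizes.append(size)
            new_ages.append(age)
    return new_sizes, new_ages


if __name__ == "__main__":
    # An aggregate of size 5 has spent 2 steps in the window [3, 10];
    # with tau = 3 it fragments on this step, while the size-50 aggregate is untouched.
    print(apply_fragmentation_rule([5, 50], [2, 0], s_min=3, s_max=10, tau=3))
```

The total population is conserved by construction, so the rule only redistributes individuals rather than removing them, in contrast to the random removal described for policy 2.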

Supplementary information

Supplementary Methods, Supplementary Discussion, Supplementary Equations and Supplementary Notes.

About this article

Cite this article

Johnson, N.F., Leahy, R., Restrepo, N.J. et al. Hidden resilience and adaptive dynamics of the global online hate ecology. Nature 573, 261–265 (2019). https://doi.org/10.1038/s41586-019-1494-7

DOI: https://doi.org/10.1038/s41586-019-1494-7

This article is cited by

- Persistent interaction patterns across social media platforms and over time. Nature (2024)

- Long online discussions are consistently the most toxic. Nature (2024)

- Do users adopt extremist beliefs from exposure to hate subreddits? Social Network Analysis and Mining (2024)

- Rise of post-pandemic resilience across the distrust ecosystem. Scientific Reports (2023)
