Abstract

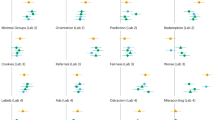

Many researchers rely on meta-analysis to summarize research evidence. However, there is a concern that publication bias and selective reporting may lead to biased meta-analytic effect sizes. We compare the results of meta-analyses to large-scale preregistered replications in psychology carried out at multiple laboratories. The multiple-laboratory replications provide precisely estimated effect sizes that do not suffer from publication bias or selective reporting. We searched the literature and identified 15 meta-analyses on the same topics as multiple-laboratory replications. We find that meta-analytic effect sizes are significantly different from replication effect sizes for 12 out of the 15 meta-replication pairs. These differences are systematic and, on average, meta-analytic effect sizes are almost three times as large as replication effect sizes. We also implement three methods of correcting meta-analysis for bias, but these methods do not substantively improve the meta-analytic results.
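
The abstract does not say which test decides that a meta-analytic and a replication effect size are "significantly different", and it does not name the three bias-correction methods. The Python below is a minimal sketch built on our own assumptions. It pools study effect sizes with a DerSimonian-Laird random-effects model. It then compares the pooled estimate with a replication estimate using a two-sided z-test that treats the two as independent. Finally, it applies PET (the precision-effect test, a weighted meta-regression of effect size on standard error) as one illustrative bias correction. The names `Estimate`, `random_effects`, `difference_test` and `pet_estimate` are hypothetical, as are all numbers in the usage section. None of them come from the paper or its OSF code.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    effect: float  # standardized effect size, e.g. Cohen's d (assumed metric)
    se: float      # standard error of that effect size


def random_effects(effects, ses):
    """DerSimonian-Laird random-effects pooled estimate (assumed pooling model)."""
    if len(effects) != len(ses) or len(effects) < 2:
        raise ValueError("need at least two studies with matching SEs")
    w = [1.0 / s**2 for s in ses]                      # inverse-variance weights
    sw = sum(w)
    fixed = sum(wi * y for wi, y in zip(w, effects)) / sw
    q = sum(wi * (y - fixed) ** 2 for wi, y in zip(w, effects))  # Cochran's Q
    c = sw - sum(wi**2 for wi in w) / sw
    tau2 = max(0.0, (q - (len(effects) - 1)) / c)     # between-study variance
    w_re = [1.0 / (s**2 + tau2) for s in ses]          # random-effects weights
    pooled = sum(wi * y for wi, y in zip(w_re, effects)) / sum(w_re)
    return Estimate(pooled, math.sqrt(1.0 / sum(w_re)))


def difference_test(meta, rep):
    """Two-sided z-test of H0: both estimates target the same effect.
    Assumes the meta-analysis and the replication are independent."""
    se_diff = math.sqrt(meta.se**2 + rep.se**2)
    z = (meta.effect - rep.effect) / se_diff
    p = math.erfc(abs(z) / math.sqrt(2.0))  # equals 2 * (1 - Phi(|z|))
    return z, p


def pet_estimate(effects, ses):
    """PET: weighted least squares of effect on SE with weights 1/SE^2.
    The intercept estimates the effect of a hypothetical study with SE = 0."""
    w = [1.0 / s**2 for s in ses]
    sw = sum(w)
    x_bar = sum(wi * s for wi, s in zip(w, ses)) / sw
    y_bar = sum(wi * y for wi, y in zip(w, effects)) / sw
    sxx = sum(wi * (s - x_bar) ** 2 for wi, s in zip(w, ses))
    if sxx == 0:
        raise ValueError("PET needs studies with differing standard errors")
    sxy = sum(wi * (s - x_bar) * (y - y_bar) for wi, s, y in zip(w, ses, effects))
    slope = sxy / sxx
    return y_bar - slope * x_bar  # intercept = bias-adjusted estimate


if __name__ == "__main__":
    # Hypothetical inputs for illustration only, not data from the paper.
    effects = [0.45, 0.30, 0.62, 0.20]
    ses = [0.15, 0.12, 0.25, 0.10]
    meta = random_effects(effects, ses)
    rep = Estimate(0.10, 0.03)
    z, p = difference_test(meta, rep)
    print(f"meta d = {meta.effect:.3f} (SE {meta.se:.3f}); replication d = {rep.effect:.3f}")
    print(f"z = {z:.2f}, p = {p:.4f}; meta/replication ratio = {meta.effect / rep.effect:.2f}")
    print(f"PET-adjusted meta estimate = {pet_estimate(effects, ses):.3f}")
```

PET appears here only because it has a short closed form. It is one of several published corrections, and this sketch returns the adjusted intercept without a standard error. A fuller analysis would also report uncertainty for the corrected estimate.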

Data availability

The data used in this paper are posted at the project’s OSF repository (link: https://osf.io/vw3p6).

Code availability

The analysis code for all analyses is available at the project’s OSF repository (link: https://osf.io/vw3p6).

Change history

02 June 2020

A Correction to this paper has been published: https://doi.org/10.1038/s41562-020-0864-3

Acknowledgements

For financial support we thank J. Wallander and the Tom Hedelius Foundation (grant no. P2015-0001:1), the Swedish Foundation for Humanities and Social Sciences (grant no. NHS14-1719:1) and the Meltzer Fund in Bergen. The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript.

Author information

Contributions

A.K., E.S. and M.J. designed research and wrote the paper. A.K. and E.S. collected and analysed data. All authors approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information. Primary handling editor: Aisha Bradshaw.

Publisher’s note. Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information

Supplementary Tables 1–16 and Supplementary References.

About this article

Cite this article

Kvarven, A., Strømland, E. & Johannesson, M. Comparing meta-analyses and preregistered multiple-laboratory replication projects. Nat Hum Behav 4, 423–434 (2020). https://doi.org/10.1038/s41562-019-0787-z

DOI: https://doi.org/10.1038/s41562-019-0787-z