Abstract

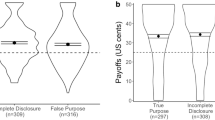

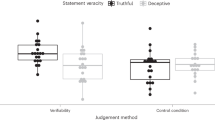

Concerns over wrongful convictions have spurred an increased focus on understanding criminal justice decision-making. This study describes an experimental approach that complements conventional mock-juror experiments and case studies by providing a rapid, high-throughput screen for identifying preconceptions and biases that can influence how jurors and lawyers evaluate evidence in criminal cases. The approach combines an experimental decision task derived from marketing research with statistical modelling to explore how subjects evaluate the strength of the case against a defendant. The results show that, in the absence of explicit information about potential error rates or objective reliability, subjects tend to overweight widely used types of forensic evidence, but give much less weight than expected to a defendant’s criminal history. Notably, for mock jurors, the type of crime also biases their confidence in guilt independent of the evidence. This bias is positively correlated with the seriousness of the crime. For practising prosecutors and other lawyers, the crime-type bias is much smaller, yet still correlates with the seriousness of the crime.

Data availability

All data used in the analyses are available and documented at https://github.com/pearsonlab/legal.

Acknowledgements

We thank D. Angel for valuable discussion throughout this project. A. Demasi, M. Rabil, M. Nerheim, P. Campos and A. Buras provided invaluable help recruiting subjects for these studies. We are especially grateful to the law students, prosecutors and other lawyers who volunteered their time as research subjects. This work was supported by an Incubator Award from the Duke Institute for Brain Sciences, NSF grant no. 1655445 and by a career development award from the NIH Big Data to Knowledge Program (grant no. K01-ES-025442 to J.M.P.). The funders had no role in study design, data collection and analysis, decision to publish or preparation of the manuscript.

Author information

Contributions

J.M.P. and J.H.P.S. conceived the project. All authors contributed to the task design. D.A.B., D.H.B. and J.A.G.S. wrote the crime scenarios and evidence modules. J.L., J.A.G.S. and J.M.P. designed and coded the online task, ran the Amazon Mechanical Turk (MTurk) experiments and processed the data. J.H.P.S. and D.H.B. recruited the legally trained subjects and administered the in-person sessions. J.M.P. designed and wrote the computational models. J.L., J.A.G.S. and J.M.P. analysed the data. J.H.P.S., J.M.P. and R.M.C. wrote the paper.

Ethics declarations

Competing Interests

D.A.B. and A.M. are partners in a trial consulting firm whose practice includes civil litigation and criminal defence. A.M., J.H.P.S. and D.H.B. are attorneys whose practices include criminal defence. J.H.P.S. works with both criminal defence attorneys and prosecutors. D.H.B. represents plaintiffs in civil cases and death row inmates in post-conviction criminal cases.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information

Supplementary Methods, Supplementary Tables 1–10, Supplementary Figures 1–12, Supplementary References

41562_2018_451_MOESM2_ESM.pdf

Reporting Summary. Further information on research design is available in the Nature Research Reporting Summary linked to this article.

About this article

Cite this article

Pearson, J.M., Law, J.R., Skene, J.A.G. et al. Modelling the effects of crime type and evidence on judgments about guilt. Nat Hum Behav 2, 856–866 (2018). https://doi.org/10.1038/s41562-018-0451-z

DOI: https://doi.org/10.1038/s41562-018-0451-z

This article is cited by

- Student-Athlete Male-Perpetrated Sexual Assault Against Men: Racial Disparities in Perceptions of Culpability and Punitiveness. American Journal of Criminal Justice (2023)

- The Influence of Inconsistency in Eyewitness Reports, Eyewitness Age and Crime Type on Mock Juror Decision-Making. Journal of Police and Criminal Psychology (2022)

- Attenuated total reflection-Fourier transform infrared spectroscopy: a universal analytical technique with promising applications in forensic analyses. International Journal of Legal Medicine (2022)

- Methodological triangulation. Nature Human Behaviour (2018)