Abstract

Many atomic descriptors are currently limited by their unfavourable scaling with the number of chemical elements S e.g. the length of body-ordered descriptors, such as the SOAP power spectrum (3-body) and the (ACE) (multiple body-orders), scales as (NS)ν where ν + 1 is the body-order and N is the number of radial basis functions used in the density expansion. We introduce two distinct approaches which can be used to overcome this scaling for the SOAP power spectrum. Firstly, we show that the power spectrum is amenable to lossless compression with respect to both S and N, so that the descriptor length can be reduced from \({{{\mathcal{O}}}}({N}^{2}{S}^{2})\) to \({{{\mathcal{O}}}}\left(NS\right)\). Secondly, we introduce a generalised SOAP kernel, where compression is achieved through the use of the total, element agnostic density, in combination with radial projection. The ideas used in the generalised kernel are equally applicably to any other body-ordered descriptors and we demonstrate this for the (ACSF).

Similar content being viewed by others

Introduction

Increases in computational power have made it possible to use quantum mechanical methods1,2,3 to model materials at a level of accuracy where chemical transformations can be usefully studied. This ability has opened the door for in silico materials modelling to usefully compliment, and sometimes even replace, the need for costly experiments. A major drawback of such computational techniques is the limited simulation sizes and timescales that can be used. Empirical potentials do away with the electrons and model the total energy as a sum of local atomic energies which depend only on the local environment of each atom. These simplifications lead to potentials that are typically many orders of magnitude faster and, crucially, a computational cost that scales linearly with the number of atoms. Moving from hand-crafted potentials with tens of parameters to machine-learned potentials4,5 has led to a substantial increase in the accuracy and transferability of such potentials in recent years6,7,8,9,10. This improvement has enabled vast, and accurate, simulations involving hundreds of thousands of atoms11 that would otherwise be completely inaccessible.

Another area which has seen rapid development is the application of both supervised and unsupervised learning techniques to large scale materials data12,13,14. Such techniques have already seen great success and will undoubtedly prove useful in expediting material design and discovery15 as well as understanding observed trends. Both potential fitting and, more broadly, materials machine learning require mathematical descriptions of material structures that can be used as inputs to models. Many different types of descriptors have been proposed16, almost all of which are invariant to translations, rotations, and permutation of equivalent atoms. Incorporating these symmetries within the descriptor avoids models having to learn them, leading to greater data efficiency. Another commonality is the rapid increase in descriptor size for environments composed of multiple elements. For instance, the size of the SOAP power spectrum17 scales quadratically with the number of elements S whilst the length of the bispectrum scales as S3. This increase poses challenges for interatomic potentials, both in terms of evaluation speed and the memory required for fitting, as well as more generally for the storage of descriptors in databases. Before introducing some of the existing approaches it is worth noting that the problem can be somewhat mitigated by exploiting sparsity where possible. For instance, the SOAP power spectrum is sparse with respect to elements, so that even if there are Stotal elements present across a given dataset, only those present in a given environment Senv need be considered when computing an individual descriptor. Whilst this can facilitate a storage saving, it does not always lead to a reduction in the number of model parameters, e.g. in a linear model, nor does it fully solve the problem for higher-body order descriptors; the corresponding SOAP bispectrum for a high entropy alloy liquid environment with Senv = 8 is ~500 times larger than if a single element were present.

A great deal of effort has already gone into ways of reducing this scaling, with many interesting approaches being used. In refs. 18,19 constant complexity in S is achieved by concatenating two descriptors, one of which is element agnostic and another where the contributions from each element are weighted. This effectively amounts to embedding the elemental information into two dimensions, rather than keeping different elements entirely distinct. A similar strategy was used in refs. 20,21, except here the element embeddings were optimised during model fitting, so that the final embeddings contained a data-driven measure of chemical similarity between the elements. Approaching the problem from an information content perspective, the recent work of ref. 22, demonstrated that a model fitted to as few as 10% of the power spectrum components led to negligible degradation in force errors on an independent test set, suggesting that significant compression can be achieved. Similar results were seen in ref. 23, where descriptors were selected using CUR matrix decomposition24, in ref. 25, where state-of-the-art model performance was achieved on the QM9 dataset with a heavily compressed descriptor obtained though repeated tensor products and reduction using Principal Component Analysis (PCA), and also in ref. 26 where a data-driven approach to constructing an optimal radial basis was employed with great success.

A complementary strategy is to focus on general, non-data driven, ways of reducing the scaling with S whilst minimising the loss of information. Such compression strategies could prove useful in situations where the dataset evolves over time, e.g. adding configurations with new elements, or for the storage of descriptors in databases such as NOMAD14,27, where the use-case for the descriptors is not yet known. Analytic compression strategies could also be used before applying data-driven compressions, allowing for larger and more diverse datasets to be treated in this way. The recently introduced Moment Invariant Local Atomic Descriptors descriptors19 follow this philosophy by using a minimal set of rotational invariants as the descriptor, such that the environment can be recovered by inverting the descriptor. Whilst the primary focus of that work was not to reduce the scaling with S, we stress that the core ideas used are highly pertinent and that similar themes are present here.

In this work we introduce two non-data driven approaches for compressing the SOAP power spectrum; the power spectrum is available through the quippy28,29, dscribe30 and librascal31 packages. Firstly, by considering the ability to recover the density expansion coefficients from the power spectrum, we introduce a compressed power spectrum and show that, under certain conditions, it is possible to recover the original descriptor from the compressed version. Secondly, we introduce a generalisation of the SOAP kernel which affords compression with respect to both S and the number of radial basis functions N used in the density expansion. This kernel retains a useful physical interpretation and the ideas used are applicable to all body-ordered descriptors, which is demonstrated using the ACSFs32. Finally, we evaluate the performance of the compressed descriptors across a variety of datasets using numerical tests which probe the information content, sensitivity to small perturbations and the accuracy of fitted energy models and force-fields.

Results

SOAP

The SOAP kernel, introduced in ref. 17, provides a way of computing the similarity between a pair of atomic environments. Invariance to permutation of equivalent atoms is achieved by forming densities,

where a separate density is constructed for each element α, σ controls the width of the Gaussians used to construct the density, fcut(r) is a cutoff function that ensures atoms enter the environment smoothly and ri and si denote the position and element of the ith neighbour atom respectively. The kernel is made invariant to the orientation of environments by integrating over all possible rotations \(\hat{R}\in SO(3)\) with the full multi-species kernel33 defined as

where \(k(\rho ,{\rho }^{\prime})\) is the linear SOAP kernel and

is the polynomial SOAP kernel typically used in models.

Expanding the density using the spherical harmonics \({Y}_{lm}(\hat{{{{\bf{r}}}}})\), and a set of orthogonal radial basis functions gn(r),

and substituting into the definition of the SOAP kernel with ν = 2 yields,

where p and \({{{\bf{{p}}}^{\prime}}}\) are the SOAP power spectrums for the environments. In this form the rotational invariance of the power spectrum can be seen by noting that density expansion coefficients transform under rotations as

where D is a unitary Wigner-D matrix, so that the terms \({{{{\bf{c}}}}}_{nl}^{\alpha * }\cdot {{{{\bf{c}}}}}_{{n}^{\prime}l}^{\beta }\) are individually invariant17. Taking σ → 0 (for convienience) in Eq. (2) with ν = 2 reveals that the power spectrum is a 3-body descriptor,

where i, j and p, q index atoms in the first and second environment respectively. The integration over all rotations ensures that each matching pair of triangles, where one vertex is the central atom and the others are neighbour atoms, between the environments contributes to the kernel, so that the power spectrum is effectively a histogram of triangles. Increasing ν increases the body order of the descriptor, so that the bispectrum (ν = 3) corresponds to a histogram of tetrahedra and so on. With multiple elements, the neighbour atoms at the corner of each triangle must also match, so that there is a histogram of triangles for each pair of elements. In general, the length of the descriptor scales as Sν where S is the number of elements and the body-order is ν + 1, rendering these descriptors impractical for large S. In this work we investigate a number of alternatives which aim to circumvent this scaling, with a particular focus on compressing the power spectrum.

Information content

After exploiting symmetry, \({p}_{n{n}^{\prime}l}^{\alpha \beta }={p}_{{n}^{\prime}nl}^{\beta \alpha }\), the length of the power spectrum is \(\frac{1}{2}NS(NS+1)(L+1)\), where N, L and S are the number of radial basis functions, highest order of spherical harmonic and total number of elements respectively. Here we show that the power spectrum is amenable to lossless compression, with the final descriptor having length NS(L + 1)2. We start by highlighting that the sum over m in Eq. (6) can instead be viewed as a dot product between density expansions coefficient vectors \({{{{\bf{c}}}}}_{nl}^{\alpha }\) of length 2l + 1. Then, because all products between coefficient vectors with equal l index are taken, the power spectrum can be re-shaped from a vector into a sequence of l-slices

where the l index has been suppressed as it is the same for all vectors in a given slice. Viewed as such, Pl is a Gram matrix between the coefficient vectors, \({P}_{l}={X}_{l}^{T}{X}_{l}\) where \({X}_{l}=({{{{\bf{c}}}}}_{1}^{\alpha },{{{{\bf{c}}}}}_{2}^{\alpha },\ldots ,{{{{\bf{c}}}}}_{N}^{S})\). As Pl only contains information on the relative orientations of the \({{{{\bf{c}}}}}_{n}^{\alpha }\), the \({{{{\bf{c}}}}}_{n}^{\alpha }\) can, at best, be recovered from Pl up to a global rotation in the 2l + 1 dimensional space. A direct consequence of this is that simultaneously rotating all coefficient vectors with equal l index does not affect the power spectrum, so that there are many different densities which share the same power spectrum. Fortunately, this issue is mitigated as atomic descriptors do not have to distinguish all possible infinite dimensional densities, but only atomic densities constructed from \({{{\mathcal{O}}}}(10)\) neighbour atoms as in Eq. (1). Furthermore, we are typically interested in physically relevant atomic configurations where the atomic nuclei do not overlap, which further restricts the region of configuration space that must be described. In combination, these effects make the problem of finding concise descriptors for atomic densities significantly more tractable than for general densities.

Returning to the power spectrum, it is interesting that whilst Pl is an NS × NS matrix, its rank is at most 2l + 1, as the rank of the (2l + 1) × NS matrix Xl is at most 2l + 1. This means that provided the first 2l + 1 columns in Xl form a basis, then all terms in Pl can be recovered from only the first 2l + 1 rows. This observation suggests that the power spectrum is amenable to non-data-driven lossless compression when 2l + 1 < NS. For situations with many different elements NS ≫ 2l + 1 so that the potential compression factor is large. Rather than simply storing the first 2l + 1 rows, we propose storing 2l + 1 random linear combinations of all rows,

where W is an NS × (2l + 1) matrix of randomly chosen weights.

A set of density expansion coefficients consistent with the original power spectrum can then be recovered from WTPl by diagonalising WTPlW = UTΛU, taking \(({{{{\bf{b}}}}}_{1},{{{{\bf{b}}}}}_{2},\ldots ,{{{{\bf{b}}}}}_{2l+1})={{{\Lambda }}}^{\frac{1}{2}}U\) and then forming \({X}_{l}={{{\Lambda }}}^{-\frac{1}{2}}U{W}^{T}{P}_{l}\), where U is a unitary matrix whose columns are the eigenvectors of WTPlW and Λ is a diagonal matrix of the eigenvalues34. This procedure fails if (b1, b2, … , b2l+1) does not form a basis, so that Λ has one or more zero eigenvalues. This could arise because rank(WTXl) < rank(Xl) ≤ 2l + 1, which is highly unlikely because of the random weights, or because rank(WTXl) = rank(Xl) = r < 2l + 1, which occurs frequently as explained below. From a recovery perspective the latter is not problematic as the same procedure can be carried out using (b1, b2, … , br) as a basis by discarding rows and columns from WTPlW as required.

By compressing all l-slices in this way only NS(L + 1)2 invariants need be stored, compared to \(\frac{1}{2}NS(NS+1)(L+1)\) for the uncompressed power spectrum (Fig. 1). Interestingly, slightly more compression can be achieved by forming the symmetric matrix QTPlQ, where Q is an NS × NS matrix of random weights and then storing only the upper right triangular portion—the original power spectrum can still be recovered in an entirely analogous fashion. The symmetry of QTPlQ means that there are actually fewer invariants then density expansion coefficients; per l slice, there are l(2l + 1) fewer invariants, which corresponds exactly to the number of distinct rotations in 2l + 1 dimensions. However, the cost of this additional compression is a loss of sparsity. Whereas only the entries of WTPl (and Pl) corresponding to elements present in the local atomic environment will be non-zero, QTPlQ will be a dense matrix as all the \({{{{\bf{c}}}}}_{nl}^{\alpha }\) are mixed together. Retaining this sparsity is exceptionally important for efficient descriptor storage as often S ≫ Senv, where S is the total number of elements present in a dataset whereas Senv is the typical number present in any given environment.

Moving from left to right Pl, WTPl and QTPlQ are shown for l = 0, 1, 2 and NS = 8. Typically NS will be significantly larger than shown here. Only the elements shown in colour need to be stored to determine all remaining elements which are shown in grey. The total length of each descriptor is listed underneath.

Finally, we note that there is an additional restriction on the rank of Pl, namely rank(Pl) ≤ nneighb where nneighb is the number of neighbouring atoms contained within the cutoff. This occurs because the density expansion vectors for the density of a single neighbour atom corresponding to different radial basis functions are all parallel. This is shown explicitly in the Supporting Information but can be understood by noting that the projections of a Gaussian onto different radial shells will vary only in magnitude, so that the angular part of the expansion, which determines the direction of \({{{{\bf{c}}}}}_{nl}^{\alpha }\), will be identical. This observation is consistent with intuition—the information content should depend on the number of neighbours—and may prove useful when constructing concise descriptors for datasets where nneighb is known to be bounded.

Generalised kernel

In the previous section we showed that it is possible to compress the SOAP power spectrum in a lossless manner. Here we introduce a family of physically interpretable compression options based on generalising the SOAP kernel. The first step is to generalise Eq. (2) to

where the first factor is as before and the second factor involves the total density ρ(r) = ∑αρ(r)α. The advantage of this approach is that the total body order is now partitioned into element-sensitive and element-agnostic terms, so that the scaling with S can be controlled separately from the overall body-order. For instance, ν, μ = 1, 1 results in a modified power spectrum \({p}_{n{n}^{\prime}l}^{\alpha }\) with a length that scales linearly with S and is related to the original power spectrum, ν = 2, via,

The ν, μ = 1, 1 power spectrum still corresponds to a histogram of triangles, but now only one vertex of each triangle is element-sensitive, as shown in Fig. 2. Clearly, this idea can be applied just as well for higher body orders to provide compression with respect to S. In the previous section we also achieved compression with respect to N. Following a similar approach as for S, we further generalise the kernel to

where \(\hat{\rho }(\hat{{{{\bf{r}}}}})\) is the projection of the density onto the surface of the unit sphere. As before, Eq. (13) still corresponds to comparing all possible triangles for \(\nu +\hat{\nu }+\mu +\hat{\mu }=2\). However now some of the vertices may be projected onto the surface of the unit sphere, as well as potentially being element insensitive. The full range of 3-body compression options is depicted in Fig. 2, with the interpretation of the original power spectrum, ν = 2 (only non-zero index values are listed), shown in the upper right corner.

Schematic showing the physical interpretation of the generalised power spectrum for various choices of ν, \(\hat{\nu }\), μ and \(\hat{\mu }\). For such 3-body terms \(\nu +\hat{\nu }+\mu +\hat{\mu }=2\); indices which are zero are not listed. The vertices shown in blue and red are element-sensitive whilst those shown in grey are not. The grey dashed line indicates the unit sphere. When the projection results in two distinct triangles both are shown, otherwise only one is shown.

Utilising these compressions offers a significant reductions in descriptor size but does result in a descriptor that is typically less informative. An example of such an information loss is shown in Fig. 3, where the two environments shown are distinguished by ν = 2 but not by μ, ν = 1, 1, as now there is only 1 element-sensitive vertex per triangle, rather than two. Returning to the idea of the previous section, we see that ν, μ = 1, 1 corresponds to using \(\left\{{{{{\bf{C}}}}}_{n}\right\}\), where \({{{{\bf{C}}}}}_{n}={\sum }_{\alpha }{{{{\bf{c}}}}}_{n}^{\alpha }\), in place of the random basis (b1, b2, … , b2l+1). In this case, the mirror symmetry of the total density means that rank(C1, C2, … , CN) = 2 < rank(c1, c2, …, cNS) = 4, so that the full power spectrum cannot be recovered from the compression. However, this pair of environments are a handcrafted example and more generally, in the absence of similar symmetries, we would expect \(\left\{{{{{\bf{C}}}}}_{n}\right\}\) to span the required space, provided N ≥ 2l + 1, so that the compression would be lossless. This line of thought motivates the introduction of a further compression, denoted ν, μ* = 1, 1, where the kernel is as defined in Eq. (11), but 2l + 1 radial basis functions are used for the total density expansion. This choice achieves the same level of compression as WTPl and, provided rank(C1, C2, … , C2l+1) = rank(c1, c2, … , cNS), it will also be lossless. Such arguments suggest that compressing beyond ν, μ* = 1, 1 will necessarily be lossy, with the analysis for higher body orders being left to future work. We also note that ν, μ* = 1, 1 is slightly faster to compute than WTPl as fewer operations are required to form Cn than bi. However, the difference is small and the latter is more flexible as it can be applied when NS ≥ 2l + 1 whilst the former requires N ≥ 2l + 1. At first glance the degeneracies introduced by choosing ν, μ = 1, 1 appear catastrophic. However, it should be noted that the original power spectrum ν = 2 has similar degeneracies. A simple, single-element example was given in ref. 35, which is trivially modified to contain multiple elements in Supplementary Fig. 1. Despite this, the full ν = 2 power spectrum has been widely used with great success across a wide variety of applications6,36,37, as have other descriptors which are known to be mathematically incomplete32,38. As such, whilst it is useful to understand the origin of any additional degeneracies in compressed descriptors, the most practically interesting question is whether or not they lead to a significant degradation in model performance across typical datasets. We assess this for the variants of the SOAP power spectrum listed in Table 1 by performing numerical tests which are discussed in the results section.

Finally, whilst we only test compressions of the power spectrum in this work, these compression ideas can be applied just as easily to higher body-orders. For instance, choosing \(\nu ,\hat{\nu },\mu =1,1,1\) would result in a compressed version of the bispectrum that scales quadratically with S and N, rather than cubically. In general, the length of the descriptor will scale as \({S}^{\nu +\hat{\nu }}{N}^{\nu +\mu }\) with the total body order given by \(\nu +\hat{\nu }+\mu +\hat{\mu }\), so that the scaling with S and N can be chosen independently from the overall body-order. We anticipate these compressions being particularly useful for descriptors such as ACE39,9 where ν ≥ 4 is commonly used.

Element embedding

An alternative approach to constructing concise descriptors for systems with large S was used in refs. 18,19,40. Rather than using a separate density for each element they instead used two density channels; the total, element agnostic, density and an element weighted density defined as ρZ(r) = ∑αwαρα(r) where wα is an element dependent weight. The descriptor can then be formed using these two density channels, so that the length of the power spectrum is N(2N + 1)(L + 1), independent of S.

The element-weighted power spectrum is an instance of a more general type of constant complexity approach, where each element α is represented by a vector uα, where \(\dim ({\overrightarrow{u}}_{\alpha })={d}_{J} \,<\, S\), so that the chemical elements are effectively embedded into a lower dimensional space. The uα can then be optimised during model fitting so that the alchemical similarity between different elements, kαβ = uα ⋅ uβ21, is learned from the data. This approach was used in refs. 21,20, where in both cases the optimised embedding was consistent with known chemical trends, and more recently, similar, learnable mappings have been used in refs. 41,42 and 43,44,45.

This approach was taken further still in ref. 26 where PCA was used to determine a reduced optimal radial basis for a given dataset. By allowing basis changes, followed by truncation’s, that also mix different elemental channels this approach can be seen as a simultaneous embedding of both the elemental and radial information into a lower dimensional space. Interestingly, the random weight matrix W in Eq. (10) can be interpreted as performing an analogous embedding, \({X}_{l}=({{{{\bf{c}}}}}_{1}^{\alpha },{{{{\bf{c}}}}}_{2}^{\alpha },\ldots ,{{{{\bf{c}}}}}_{N}^{S})\to ({{{{\bf{b}}}}}_{1},{{{{\bf{b}}}}}_{2},\ldots ,{{{{\bf{b}}}}}_{2l+1})={X}_{l}W\). This identification connects the approaches and motivates further work where W is optimised for a given dataset.

Using element embedding, with fixed or optimisable embedding vectors, offers an alternative route to reducing the scaling with S and we include a corresponding compressed power spectrum, denoted as ν = 2, dJ, in our numerical tests for comparison. A summary of all ways of compressing the power spectrum is given in Table 1. An alternative way of evaluating the full SOAP kernel is outlined in the next section with the numerical results following afterwards. The datasets used are described briefly in ‘Datasets’.

Alternative evaluation of the SOAP kernel

For typical (KRR) SOAP models a significant fraction of the time taken to evaluate the model is spent computing the SOAP kernel between pairs of environments. The kernel is typically evaluated as \(k(\rho ,{\rho }^{\prime})={{{\bf{p}}}}\cdot {{{\bf{{p}}}^{\prime}}}\) where computing the dot product requires \({{{\mathcal{O}}}}\left({N}^{2}{S}^{2}L\right)\) operations. An alternative way to evaluate the kernel is

where evaluating the final line requires \({{{\mathcal{O}}}}(NS{L}^{3})\) operations, so becomes competitive for NS > L2. The evaluation of a single kernel could also employ both methods, with this approach used only for terms where NS ≳ 2(2l + 1)2. An advantage of computing kernels in this way is the reduction in the memory requirement, as only the density expansion coefficients need be stored. For completeness we note that the matrix form of the above is \(k(\rho ,{\rho }^{\prime})={\sum }_{l}{{{\rm{tr}}}}\left({X}_{l}^{{\dagger} }{X}_{l}{X}_{l}^{^{\prime} {\dagger} }{X}_{l}^{\prime}\right)={\sum }_{l}| | {X}_{l}^{\prime}{X}_{l}^{{\dagger} }| {| }_{2}^{2}\), where Xl is defined as in ‘Information Content’.

Distance–distance correlation and information imbalance

The similarity between a pair of environments can be quantified by the Euclidean distance between their respective descriptor vectors, so that different descriptors give different measures of similarity. These distances can be used to quantify the relative information content of descriptors22 and, more generally, to compare how different descriptors encode a given dataset. We follow ref. 46 using distance-distance correlation plots to compare the distances implied by the compressed descriptors to those of the full power spectrum, where it is desirable for a compression to preserve the distances as faithfully as possible. In Fig. 4 the distance–distance (left) and ranked distance-ranked distance (right) correlations between the ν = 2 power spectrum and two of the compressed alternatives are shown for all pairs of environments within liquid configurations, S ≥ 3, in the HEA dataset. The advantage of comparing the ranked distances is that the ranking process eliminates scaling and monotonic transformations of the distances, leaving only the correlation structure behind22,47.

The correlation between the distances (left column) and ranked distances (right column) between all pairs of environments within liquid configurations with S ≥ 3 in the HEA dataset are shown. See Supplementary Fig. 5 for the correlations for μ = 2, \(\nu ,\hat{\mu }=1,1\) and ν, μ* = 1, 1.

The correlations between both the distances and ranked distances for the ν, μ = 1, 1 compression and ν = 2 are strong, with a notable absence of points in the top left and bottom-right of each plot. The same is not true of both the constant complexity alternatives, where there are many environments deemed well separated by ν = 2 but which are poorly distinguished by ν, dJ = 2, 2 (and μ = 2). An example of such an environment is shown in Supplementary Fig. 2, with the distances according to each descriptor listed alongside. The most striking difference is seen in the ranked-distance correlation plots which are near uniform for ν, dJ = 2, 2 (and μ = 2), demonstrating that the original correlation structure is almost completely lost.

We also compute the information imbalance (introduced in ref. 22) between the different descriptors; the information imbalance is a way of measuring the relative information content of different distances measures, see ‘Information Imbalance’ for details. The information imbalance planes for the HEA and amino acid datsets are shown in Figs. 5 and 6, where points in the bottom left, top left and along the diagonal indicate descriptors which encode the same, less and orthogonal (different) information to ν = 2 respectively. The results for the HEA dataset provide quantitative evidence for the trends seen in Fig. 4, demonstrating that for this dataset, the ν, dJ = 2, 2 (and μ = 2) descriptors are significantly less informative than the others. Conversely, these descriptors carry almost identical information to ν = 2 on the amino acid dataset. We believe this is because all the amino acid molecules are geometry optimised, so that the atom type information can be inferred from the atomic positions alone; this is backed up by Δ(μ = 2 → ν = 2) ≪ 1. The stark differences seen between these two datasets suggests that whilst low-dimensional element embeddings are undoubtedly useful, they are not suitable as information preserving compressions for all datasets.

Fitting to energies

The ultimate test of any descriptor is the accuracy and overall performance of the models which use it. In this work we test the proposed SOAP compressions by fitting interatomic potentials to total energies for four separate datasets using both Ridge Regression (RR) and KRR models, see ‘KRR Models’ for details.

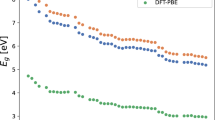

Learning curves for all models and datasets are shown in Fig. 7 whilst the total length of each descriptor is shown in the bottom left of each plot. It is important to note that all descriptors other than ν = 2, dJ are sparse with respect to the elements, so that in practice their storage requirements scale with Senv, the typical number of elements present within each environment, rather than Stotal, the total number of elements present in the dataset. For the elpasolite dataset Senv = 4 for all structures, so in this case the lengths of the sparse descriptors are indicated in white. However, whilst the descriptors themselves are sparse the number of model parameters is dependent on the full length of the descriptor. As such, we stress that both sparsity in Senv and reducing the scaling with respect to Stotal are highly desirable. As a final point, the amino acids and Li-TM datasets were also used in ref. 18 to test the accuracy achievable combining a constant complexity descriptor and a neural network potential. We stress that the errors reported there are on the training set, acting as a proof of principle, whilst the larger errors reported here are on a distinct test set, so that the numbers are not comparable.

For the Li-TM dataset ζ = 1, 2, 4 and 8 were all tested but only the models with ζ = 8 are shown. The \(\bar{d}_{J}=2\) and \(\bar{d}_{J}=4\) curves for the Elpasolites are taken from ref. 21 where the embedding was optimised. All models for the Elpasolites were fitted using ζ = 1. The total length of the descriptors is indicated in the bar chart. The overlaid white bars indicate the number of non-zero elements present, computed using Senv = 4.

The trends seen across the QM9, Li-TM and amino acid datasets are broadly similar, with ν, μ = 1, 1 achieving the same accuracy as ν = 2 with a significantly reduced descriptor size. The results with WTPl and ν, μ* = 1, 1 are ~5–15% less accurate then with ν = 2 but, crucially, both these descriptors offer additional compression with respect to the radial channels and, as outlined in the previous section, contain sufficient information to recover the full power spectrum under certain conditions. Compressing beyond the recoverable limit with \(\nu ,\hat{\mu }=1,1\)—projecting the element agnostic vertex onto the unit sphere—offers an additional factor of ~5 in terms of compression. On the elpasolite dataset this does not compromise the accuracy at all whilst for QM9 this compression incurs a 66% increase in the MAE, which is limited to ~30% for both the Li-TM’s and amino acids. Comparing these results to the errors achieved using μ = 2 and ν = 2 hints at the relative importance of atom type and geometric information for the different datasets. Unsurprisingly, knowledge of the atom types is much more important for the elapsolites, where all structures are in the same crystal prototype, whilst for the chemically reasonable organic molecules found in the QM9 and the amino acid datasets a reasonable model can be fit using geometric information alone—MAE of 0.62 kcal mol−1 for μ = 2 on QM9. This is consistent with the information imbalance analysis on the amino acids and suggests that for these molecules, in their equilibrium geometries, it is possible to use known bond lengths and coordination numbers to infer the atom type information from the geometry alone.

The (unoptimised) embedding ν, dJ = 2, 2 performs relatively well with errors 27%, 57% and 26% larger then ν = 2 for the QM9, Li-TM, and amino acids datsets respectively. This performance is most comparable to \(\nu ,\hat{\mu }=1,1\), which, particularly after exploiting sparsity, offers a greater level of compression. A clear, qualitative difference in behaviour is seen for the embedding approaches on the elpasolite dataset, which contains a much larger number of elements. Here, the unoptimised embedding performs no better than using μ = 2 and has a greatly diminished final learning rate compared to ν = 2. For comparison, the optimised embeddings, denoted using a \(\bar{d}_{J}\), from ref. 21, which use similar SOAP parameters (rcut = 5Å, N = 12, L = 9), are overlaid. This shows that optimising the embedding with only two dimensions leads to minimal improvement although a significant gain can be made using \(\bar{d}_{J}=4\). However, the final learning rate for \(\bar{d}_{J}=4\) is still diminished, relative to ν = 2, again indicating a qualitative difference in behaviour from the compressions which keep separate densities for each element. It is also worth noting that as Senv = 4 for the elpasolites, using dJ = 4 does not offer any descriptor compression compared to ν = 2 once sparsity is exploited. The embedding approaches do however offer a clear advantage in the low-data regime, which we believe is due to the reduced dimensionality of the descriptor space. If each data point occupies a certain volume of descriptor space then such embeddings greatly increase the relative fraction of descriptor space occupied by physically reasonable configurations. As such, the relevant descriptor space is covered faster so that shortest distance from a test data point to any point in the training set decreases faster with new training data.

Fitting a force-field

The compression options illustrated in Fig. 2 were tested further by training sparse KRR models on energies, forces and stresses for the HEA dataset using the gap_fit program48, with the same parameters used in ref. 49. This dataset is of particular interest as in previous work, ref. 49, it was found that using a model fitted using a 2-body + 3-body descriptor performed better than using a 2-body descriptor + the full multi-element power spectrum, ν = 2. This result is explained by the power spectrum being a higher dimensional descriptor, so that much more training data is required to span the full descriptor space corresponding to the relevant atomic environments. In the low data regime, i.e. before this condition is satisfied, the model will generalise very poorly outside of the training set. However, provided sufficient data, one would expect a model trained on a more informative descriptor to provide greater accuracy. This effect can be seen in the left hand panel of Fig. 8 where the force errors for \(\nu ,\hat{\mu }=1,1\) are initially better than for ν = 2, but then plateau quickly. In contrast the errors for the ν = 2 model continue to decrease, however, here the very large descriptor meant that only 4000 sparse points could be used due to a memory restriction of 1.5 TB of RAM.

The left hand panel shows how the energy and force errors on the test set change with the number of sparse points. Note that the 2b + 3b model is from ref. 49 where it was reported that increasing the number of sparse points did not improve performance.

Interestingly the best model, subject to the practical restrictions on memory and quantity of training data, makes use of the ν, μ = 1, 1 compression. Whilst the increase in performance, compared to the 2b + 3b, is modest across the entire test set it is much more dramatic on the quinary alloy liquid configurations where the energy RMSE is reduced from 96 meV atom−1 to 12.3 meV atom −1, Supplementary Fig. 3.

To assess whether the effectiveness of the compression schemes depends on the diversity of the dataset we also fit models using a subset of the original dataset where the liquid, surface and defect configurations were removed. The trends seen in Fig. 8 are replicated in Supplementary Fig. 6, suggesting that the applicability of these compression schemes is not strongly dependent on the intrinsic dimensionality of the dataset.

Sensitivity analysis

When using atomic descriptors for tasks such as regression it is highly desirable that they are sensitive to small perturbations of a given atomic environment, with in depth analysis of well known descriptors carried out in refs. 23,46. The latter introduced the concept of the sensitivity matrix Λ, see ‘Sensitivity Matrix’, where the eigenvalues λ can be used to determine if there are any perturbations of the local environment which a descriptor is insensitive to.

The results in Fig. 9 and Supplementary Fig. 4 show that the proposed compressions do not significantly affect the sensitivity. In particular, no perturbations were found for which any of the compressed descriptors had near-zero sensitivity, whilst the original ν = 2 did not. This behaviour is not entirely unexpected, as for instance, choosing μ = 2 is equivalent to using the original power spectrum with a single element environment. Perhaps more surprising is that using \(\nu ,\hat{\mu }=1,1\)—projecting one atom onto the unit sphere—did not reduce the sensitivity more drastically. Of course, these tests are not exhaustive and it is probable that special environments, likely closely related to any additional degeneracies, exist where this is not the case50. However, we find these results promising and leave further investigation to future work.

A scatter plot showing eigenvalues, λ, of the sensitivity matrix for 50 randomly chosen liquid environments from the HEA dataset. The first six eigenvalues are not shown. The grey dashed line indicates y = x. The eigenvalues have been scaled, so that the largest eigenvalue for each environment is 1.

Atom-centred symmetry functions

Whilst the compression presented in ‘Information Content’ is specific to the SOAP power spectrum, the ideas used to form the generalised SOAP kernel are equally applicable to all body-ordered descriptors. To demonstrate this we fit KRR models to the QM9 and Li-TM datasets using compressed versions of the popular ACSFs introduced in ref. 32. We also fit models based on element agnostic and element-weighted ACSFs18,40 for comparison. As before a polynomial kernel, \(k({{{{\boldsymbol{x}}}}}_{i},{{{{\bf{x}}}}}_{j})={\left({{{{\bf{x}}}}}_{i}\cdot {{{{\bf{x}}}}}_{j}\right)}^{\zeta }\), was employed and the regularisation strength was chosen using k-fold cross-validation with k = 10. The G2 (2-body) and G4 (3-body) ACSF functions were used with the traditional parameters23,32 scaled to cutoffs of 4 Å and 6 Å for the QM9 and Li-TM datasets respectively. Compression was applied to the 3-body terms only and the descriptors are labelled using the notation outlined in Fig. 2, so that ν = 2 is the conventional \(\overrightarrow{{{{\rm{G4}}}}}^{\alpha \beta }\) which has length \({{{\mathcal{O}}}}({S}^{2})\), ν, μ = 1, 1 corresponds to \(\overrightarrow{{{{\rm{G4}}}}}^{\alpha }={\sum }_{\beta }\overrightarrow{{{{\rm{G4}}}}}^{\alpha \beta }\) so has length \({{{\mathcal{O}}}}(S)\) and μ = 2 is element agnostic, \(\overrightarrow{{{{\rm{G4}}}}}={\sum }_{\alpha \beta }{\overrightarrow{{{{\rm{G4}}}}}}^{\alpha \beta }\). For consistency with the existing literature we label the element-weighted ACSF as wACSF40, rather than ν, dJ = 2, 2. The dscribe30 python package was used to compute the full ACSF descriptor, ν = 2, and the compressed variants were formed by summing over elements as required.

Learning curves are shown in Fig. 10, where it can be seen that using the ν, μ = 1, 1 compression does not cause a noticeable decrease in model accuracy on either dataset. For the Li-TM dataset models were fit using ζ = 1, 2, 4, 8 and 16 to assess the effect of varying the flexibility of the model. As expected, the shorter descriptors, μ = 2 and wACSF, benefited more from increasing ζ but still never achieved comparable accuracy to the others. More interestingly, using ν, μ = 1, 1 provided the same accuracy as ν = 2 even with ζ = 1, despite the full descriptor being more than 5× as long. These results clearly show how the ideas behind the generalised SOAP kernel can be successfully applied to other body-ordered descriptors.

Discussion

As the number of elements S increases, the length of many atomic descriptors increases drastically, with Sν scaling common for ν + 1-body order descriptors. In this work we have sought non-data-driven ways to reduce this scaling, with a focus on the SOAP power spectrum. We started by investigating the degree to which the density expansion coefficients can be recovered from the power spectrum. This analysis revealed that the power spectrum can be viewed as a collection of Gram matrices Pl, one for each total angular momentum number l, and that storing only a subset of components WTPl is sufficient to preserve full information. This compression reduces the descriptor size from \(\frac{1}{2}NS(NS+1)(L+1)\) to NS(L + 1)2, where N and L are the number of radial basis functions and highest order of spherical harmonic used in the density expansion respectively. Compressing the power spectrum in this way requires a single matrix of random weights W to be stored and used consistently to compress all descriptors for a given dataset.

Next, we introduced the generalised SOAP kernel. The standard power spectrum, ν = 2, is a 3-body descriptor corresponding to a histogram of triangles, with one histogram for each pair of species. In the generalised power spectrum the number of triangle vertices which are element-sensitive can be varied, as can the number of vertices which are projected onto the surface of the unit sphere. These modifications allow the scaling of the descriptor size with S and N to be set independently of the overall body-order and are applicable to all body-ordered descriptors. By again considering the ability to reconstruct the original power spectrum from the generalised one we showed that, subject to certain conditions, choosing ν, μ* = 1, 1 (or ν, μ = 1, 1 where N ≥ 2l + 1) does not lead to any loss of information. We also stress that descriptors based on the generalised kernel retain the element-wise sparsity of the original kernel, so that the number of non-zero components scales only with the number of elements present in a given environment, rather than total number present across a dataset.

The real-world performance of the compressions was tested using multiple numerical tests across a total of five pre-existing datasets. First, the information content, relative to the original power spectrum was analysed using the information imbalance approach of ref. 22. This analysis indicated that retaining element sensitivity on only one vertex was sufficient to ensure a minimal loss of information across all tested datasets. The constant complexity compression approaches performed well for the geometry optimised amino acids but incurred severe information loss on the liquid environments within the high-entropy alloy dataset. We believe this is because the atom type information for the QM9 and amino acid datasets is effectively encoded in the equilibrium geometry of the molecules, which suggests that such datasets, in particular QM9, are not well suited to assess the ability of a given descriptor to encode multi-element information.

Secondly, models were fitted to the total energies for the QM9, Li-TM, amino acid and elpasolite datasets using linear and kernel ridge regression. The most promising compressions achieved very similar results to the full power spectrum across all datasets, whilst being significantly shorter. A notable deviation in behaviour was seen for the element-embedding fits to the elpasolite dataset, highlighting the differences between compression approaches. KRR models were also fitted to the QM9 and Li-TM datasets using compressed versions of ACSF’s, where, as before, the errors achieved with the ν, μ = 1, 1 compression was almost identical to those achieved with the full descriptor. Following this, KRR models using the generalised SOAP power spectrum were fitted to energies, forces and virials for the HEA dataset using the gap_fit program48. The most accurate model that could be fitted, subject to practical restrictions on the quantity of training data and available memory, made use of the ν, μ = 1, 1 compression, providing concrete evidence that these compressions will be useful when fitting force-fields. Finally, the sensitivity of all descriptors to small perturbations was evaluated using the sensitivity matrix introduced in ref. 46. None of the compressions were found to significantly reduce the sensitivity of the descriptor, which is unsurprising given their relation to the single element power spectrum.

We anticipate that these compressions will prove useful across a wide range of applications and that some of the ideas may be applicable to other body-ordered atomic descriptors, as was shown explicitly for ACSFs. In particular, the generalised kernel could provide compression for approaches such as ACE9,39, where the high body-orders, ν ≥ 4, that are needed limit the number of different elements that can be treated. Furthermore, we stress that the compression ideas presented here can often be combined with pre-existing techniques, such as element-embedding, and that, in general, choosing the appropriate compression methods for a given situation is crucial.

Methods

Datasets

The datasets used for the numerical tests are:

-

1.

QM9: The QM9 dataset51 contains ~140,000 geometry optimised organic molecules containing only H, C, N, O and F. In this work we fit the internal energy at 0 K, reported as U0, and hereafter refer to this as energy.

-

2.

HEA: A quinary (Mo, Nb, Ta, V and W) high entropy alloy dataset from ref. 49 containing 2329 configurations including bulk crystals, surfaces, vacancies, alloys and liquid structures. The training set was used for the distance-distance correlation, information imbalance and sensitivity analysis whilst the independent test from ref. 49 was used to assess the accuracy of the force-fields.

-

3.

Li-TM: This dataset from ref. 18 consists of 16,407 Lithium Transition Metal Oxides formed from 11 differnet elements, (Li, Ti, O, Mn, Ni, Sc, V, Cr, Mn, Fe, Co and Cu).

-

4.

Amino Acids: A collection of 45,701 geometry optimised amino acids from ref. 52 containing a total of 11 different elements. The dataset covers a total of 280 molecular systems - product of 20 proteingenic amino acids with 2 different backbone types (N-terminally acetylated or C-terminally amino-methylated) and 7 different cation additions (None, Ca2+, Ba2+, Sr2+, Cd2+, Pb2+ or Hg2+).

-

5.

Elpasolites: A collection of ~10,500 geometry optimised structures from ref. 53. All main group elements up to Bi are present, 39 elements in total, and all structures share the elpasolite structural prototype.

Element embedding parameters

For the element embeddings, dJ = 2 was used for most datasets with an additional test performed using dJ = 3 for the elpasolite dataset. These embeddings were not optimised, as in refs. 18,19, and were both constructed using \({w}_{\alpha }^{1}=1\), so that the first density channel is element agnostic. For dJ = 2 the weights for the second channel were chosen by ordering the elements according to atomic number and then assigning weights of 1, 2, 3,... so that for QM9 the \({w}_{\alpha }^{2}\) used were 1, 2, 3, 4 and 5 for H,C,N,O and F respectively. For dJ = 3 the weights for the second and third density channels were assigned using the group and period of each element in the periodic table, so that for sulphur w1α = 1, w2α = 3 and w3α = 16. This was done in an attempt to capture the chemical similarity encoded in the periodic table and is similar to the encoding used in ref. 53. For the elpasolite dataset the results of using optimised embeddings, denoted using \(\bar{d}_{J}=2\) and \(\bar{d}_{J}=4\), from ref. 21 are also shown.

Information imbalance

The information imbalance is a way of measuring the relative information content of different distance measures. Whilst the distance–distance correlation compares all pairs of distances, the information imbalance is only concerned that the nearest neighbours of each environment are the same according to descriptor A and descriptor B. More precisely, for each environment the distances to all other environments are computed using both distance A and distance B. These distances are then sorted and ranked from 0 − N so that each environment has a rank according to A, rA and according to B, rB. Then the information imbalance from A to B is defined as

where N is the number of environments in the dataset and 〈rB∣rA = 1〉 is the conditional average of rB given that rA = 1. Defined in this way ΔA→B is statistically confined to lie between 0, A contains the information in B, and 1, A is not informative about B. By comparing ranks, rather than distances, ΔA→B is insensitive to changes in scale and by considering only nearest neighbour distances ΔA→B is also well suited to handle non-linear relationships. Please refer to ref. 22 for more details.

Sensitivity matrix

Here we give a brief explanation of how the sensitivity matrix Λ is constructed, please refer to ref. 46 for full details. The distance d between the original environment and the perturbed environment is given by

where Δxi is the change in component i of the descriptor x. In terms of the atomic displacements this can be re-written as

where ΔR is a vector of length 3N containing the small perturbations to the atomic positions. Defined as such, the distance between the original environment and one perturbed along an eigenvector u of Λ is given by \(d=\sqrt{\lambda }| {{{\boldsymbol{u}}}}|\) where Λu = λu. Thus, by examining the eigenvalues of Λ we can detect if there are any perturbations that a given descriptor is insensitive to. We note that there will always be six zero eigenvalues, corresponding to three translations and three rotations, and that we expect additional zero eigenvalues for perturbations about symmetric atomic configurations50. This is demonstrated in Supplementary Fig. 4 where there are only 6 zero eigenvalues for the asymmetric liquid environments from the HEA dataset but many more zero eigenvalues for the symmetric environments found in the elpasolite dataset.

KRR models

A simple linear model was used to fit the average chemical potential μα for each element so that the predicted energy \({\hat{E}}_{j}\) for configuration j, denoted by Aj, was given by,

where njα is the number of atoms of type α in Sj, β is a coefficient vector, and \({{{\bf{k}}}}\left({A}_{j}\right)\) is shorthand for the vector of kernels between Aj and the structures in the training set,

where xi is the descriptor of atom i, so that the kernel between structures is the sum over all pairwise atomic kernels. A polynomial kernel was used throughout so that \(k({{{{\bf{x}}}}}_{i},{{{{\bf{x}}}}}_{j})={\left({{{{\bf{x}}}}}_{i}\cdot {{{{\bf{x}}}}}_{j}\right)}^{\zeta }\) where ζ = 1 is equivalent to RR and ζ = 2, 4, 8 or 16 were used for KRR. We follow ref. 54 in using the following loss function, motivated by the Gaussian Process Regression view,

where Kij = k(Ai, Aj), E is the vector of total energies for structures in the training set, \(\hat{{{{\bf{E}}}}}\) are the predicted energies and Σij = σniδij where ni is the total number of atoms in Si. Minimising L is equivalent to minimising the sum of the RMSE per atom on the training set and an L2 regression penalty on β. Doing so yields the following well known solution55,

In all cases multiple models were trained using a randomly selected train:test split, with all data not in the training set used as test data. The average MAE achieved across these models is reported with error bars indicating one standard deviation, whilst the regularisation strength σ was chosen using k-fold cross validation, k ~ 10−20.

Data availability

All datasets used are freely available and the data used to generate all plots are available at https://doi.org/10.5281/zenodo.5793851.

Code availability

The compressions outlined in Fig. 2 have been implemented in the Gaussian Approximation Potential fitting code gap_fit48. A Jupyter notebook demonstrating how the WTPl compressed power spectrum is computed, and how the original power spectrum can be recovered from it, is available at https://doi.org/10.5281/zenodo.5793851.

References

Burke, K. Perspective on density functional theory. J. Chem. Phys. 136, 150901 (2012).

Nightingale, M. P. & Umrigar, C. J. Quantum Monte Carlo Methods in Physics and Chemistry. 525 (Springer Science & Business Media, 1998).

Bartlett, R. J. & Musiał, M. Coupled-cluster theory in quantum chemistry. Rev. Mod. Phys. 79, 291 (2007).

Behler, J. & Parrinello, M. Generalized neural-network representation of high-dimensional potential-energy surfaces. Phys. Rev. Lett. 98, 146401 (2007).

Bartók, A. P., Payne, M. C., Kondor, R. & Csányi, G. Gaussian approximation potentials: the accuracy of quantum mechanics, without the electrons. Phys. Rev. Lett. 104, 136403 (2010).

Bartók, A. P., Kermode, J., Bernstein, N. & Csányi, G. Machine learning a general-purpose interatomic potential for silicon. Phys. Rev. X 8, 041048 (2018).

Zuo, Y. et al. Performance and cost assessment of machine learning interatomic potentials. J. Phys. Chem. A 124, 731–745 (2020).

Shapeev, A. V. Moment tensor potentials: A class of systematically improvable interatomic potentials. Multiscale Model Sim 14, 1153–1173 (2016).

van der Oord, C., Dusson, G., Csányi, G. & Ortner, C. Regularised atomic body-ordered permutation-invariant polynomials for the construction of interatomic potentials. Mach. Learn. Sci. Technol. 1, 015004 (2020).

Faber, F. A., Christensen, A. S., Huang, B. & Von Lilienfeld, O. A. Alchemical and structural distribution based representation for universal quantum machine learning. J. Chem. Phys. 148, 241717 (2018).

Deringer, V. L. et al. Origins of structural and electronic transitions in disordered silicon. Nature 589, 59–64 (2021).

Jain, A., Persson, K. A. & Ceder, G. Research update: the materials genome initiative: data sharing and the impact of collaborative ab initio databases. APL Mater. 4, 053102 (2016).

Saal, J. E., Kirklin, S., Aykol, M., Meredig, B. & Wolverton, C. Materials design and discovery with high-throughput density functional theory: the open quantum materials database (oqmd). JOM 65, 1501–1509 (2013).

Draxl, C. & Scheffler, M. Nomad: The fair concept for big data-driven materials science. Mrs Bull. 43, 676–682 (2018).

Bernstein, N., Csányi, G. & Deringer, V. L. De novo exploration and self-guided learning of potential-energy surfaces. npj Comput. Mater. 5, 1–9 (2019).

Musil, F. et al. Physics-inspired structural representations for molecules and materials. Chem. Rev. 121, 9759–9815 (2021).

Bartók, A. P., Kondor, R. & Csányi, G. On representing chemical environments. Phys. Rev. B 87, 184115 (2013).

Artrith, N., Urban, A. & Ceder, G. Efficient and accurate machine-learning interpolation of atomic energies in compositions with many species. Phys. Rev. B 96, 014112 (2017).

Uhrin, M. Through the eyes of a descriptor: constructing complete, invertible descriptions of atomic environments. Phys. Rev. B 104, 144110 (2021).

Schütt, K. T., Sauceda, H. E., Kindermans, P.-J., Tkatchenko, A. & Müller, K.-R. Schnet–a deep learning architecture for molecules and materials. J. Chem. Phys. 148, 241722 (2018).

Willatt, M. J., Musil, F. & Ceriotti, M. Feature optimization for atomistic machine learning yields a data-driven construction of the periodic table of the elements. Phys. Chem. Chem. Phys. 20, 29661–29668 (2018).

Glielmo, A., Zeni, C., Cheng, B., Csányi, G. & Laio, A. Ranking the information content of distance measures. PNAS Nexus 1, 1–8 (2022).

Onat, B., Ortner, C. & Kermode, J. R. Sensitivity and dimensionality of atomic environment representations used for machine learning interatomic potentials. J. Chem. Phys. 153, 144106 (2020).

Mahoney, M. W. & Drineas, P. Cur matrix decompositions for improved data analysis. Proc. Natl Acad. Sci. 106, 697–702 (2009).

Nigam, J., Pozdnyakov, S. & Ceriotti, M. Recursive evaluation and iterative contraction of n-body equivariant features. J. Chem. Phys. 153, 121101 (2020).

Goscinski, A., Musil, F., Pozdnyakov, S., Nigam, J. & Ceriotti, M. Optimal radial basis for density-based atomic representations. J. Chem. Phys. 155, 104106 (2021).

Draxl, C. & Scheffler, M. The nomad laboratory: from data sharing to artificial intelligence. J. Phys. Mater. 2, 036001 (2019).

Csányi, G. et al. Expressive programming for computational physics in fortran 95+. IoP Comput. Phys. Newsletter 1−24 (2007).

Kermode, J. R. f90wrap: an automated tool for constructing deep python interfaces to modern fortran codes. J. Phys.: Condens. Matter 32, 305901 (2020).

Himanen, L. et al. DScribe: library of descriptors for machine learning in materials science. Comput. Phys. Commun. 247, 106949 (2020).

Musil, F. et al. Efficient implementation of atom-density representations. J. Chem. Phys. 154, 114109 (2021).

Behler, J. Atom-centered symmetry functions for constructing high-dimensional neural network potentials. J. Chem. Phys. 134, 074106 (2011).

De, S., Bartók, A. P., Csányi, G. & Ceriotti, M. Comparing molecules and solids across structural and alchemical space. Phys. Chem. Chem. Phys. 18, 13754–13769 (2016).

Riley, K. F., Hobson, M. P. & Bence, S. J. Mathematical Methods for Physics and Engineering (American Association of Physics Teachers, 1999).

Pozdnyakov, S. N. et al. Incompleteness of atomic structure representations. Phys. Rev. Lett. 125, 166001 (2020).

Deringer, V. L., Caro, M. A. & Csányi, G. A general-purpose machine-learning force field for bulk and nanostructured phosphorus. Nat. Commun. 11, 1–11 (2020).

Bartók, A. P. et al. Machine learning unifies the modeling of materials and molecules. Sci. Adv. 3, e1701816 (2017).

Artrith, N. & Urban, A. An implementation of artificial neural-network potentials for atomistic materials simulations: performance for tio2. Comp. Mater. Sci. 114, 135–150 (2016).

Drautz, R. Atomic cluster expansion for accurate and transferable interatomic potentials. Phys. Rev. B 99, 014104 (2019).

Gastegger, M., Schwiedrzik, L., Bittermann, M., Berzsenyi, F. & Marquetand, P. wacsf-weighted atom-centered symmetry functions as descriptors in machine learning potentials. J. Chem. Phys. 148, 241709 (2018).

Batzner, S. et al. E (3)-equivariant graph neural networks for data-efficient and accurate interatomic potentials. Nat. Commun. 13, 1–11 (2022).

Anderson, B., Hy, T. S. & Kondor, R. Cormorant: covariant molecular neural networks. In Proc. 33rd Conference on Neural Information Processing Systems (NeurIPS 2019) (2019).

Geiger, M. et al. Euclidean neural networks: e3nn. https://doi.org/10.5281/zenodo.5292912 (2020).

Weiler, M., Geiger, M., Welling, M., Boomsma, W. & Cohen, T. S. 3d steerable cnns: learning rotationally equivariant features in volumetric data. In Proc. 32nd Conference on Neural Information Processing Systems (2018).

Thomas, N. et al. Tensor field networks: Rotation-and translation-equivariant neural networks for 3d point clouds. Preprint at https://doi.org/10.48550/arXiv.1802.08219 (2018).

Parsaeifard, B. et al. An assessment of the structural resolution of various fingerprints commonly used in machine learning. Mach. Learn. Sci. Technol. 2, 015018 (2021).

Calsaverini, R. S. & Vicente, R. An information-theoretic approach to statistical dependence: Copula information. Europhys. Lett. 88, 68003 (2009).

The gap_fit code. https://github.com/libAtoms/GAP.

Byggmästar, J., Nordlund, K. & Djurabekova, F. Modeling refractory high-entropy alloys with efficient machine-learned interatomic potentials: Defects and segregation. Phys Rev B 104, 104101 (2021).

Pozdnyakov, S. N., Zhang, L., Ortner, C., Csányi, G. & Ceriotti, M. Local invertibility and sensitivity of atomic structure-feature mappings [version 1; peer review: 2 approved]. Open Res. Europe 2021 (2021).

Ramakrishnan, R., Dral, P. O., Rupp, M. & von Lilienfeld, O. A. Quantum chemistry structures and properties of 134 kilo molecules. Sci. Data 1, 1−7 (2014).

Ropo, M., Schneider, M., Baldauf, C. & Blum, V. First-principles data set of 45,892 isolated and cation-coordinated conformers of 20 proteinogenic amino acids. Sci. Data 3, 1–13 (2016).

Faber, F. A., Lindmaa, A., Von Lilienfeld, O. A. & Armiento, R. Machine learning energies of 2 million elpasolite (a b c 2 d 6) crystals. Phys. Rev. Lett. 117, 135502 (2016).

Deringer, V. L. et al. Gaussian process regression for materials and molecules. Chem. Rev. 121, 10073–10141 (2021).

Williams, C. K. & Rasmussen, C. E. Gaussian Processes for Machine Learning, vol. 2 (MIT press Cambridge, MA, 2006).

Caro, M. A. Optimizing many-body atomic descriptors for enhanced computational performance of machine learning based interatomic potentials. Phys. Rev. B 100, 024112 (2019).

Caro, M. A. Turbogap compression. https://github.com/mcaroba/turbogap/tree/master/tools/compress_indices.

Acknowledgements

The authors would like to thank Luca Ghiringhelli, Ioan-Bogdan Magdău and Albert Bartók-Pártay for helpful discussions. We acknowledge support from the NOMAD Centre of Excellence, funded by the European Commission under grant agreement 951786. J.R.K. acknowledges additional support provided by the Leverhulme Trust under grant RPG-2017-191. We are grateful for computational support from the UK national high performance computing service, ARCHER, for which access was obtained via the UKCP consortium and funded by EPSRC grant reference EP/P022065/1.

Author information

Authors and Affiliations

Contributions

J.R.K. and G.C. jointly designed the research. JPD developed the compression strategies, performed the calculations and wrote the paper, with input from J.R.K. and G.C. at all stages. All authors revised the paper and approved its final version.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Darby, J.P., Kermode, J.R. & Csányi, G. Compressing local atomic neighbourhood descriptors. npj Comput Mater 8, 166 (2022). https://doi.org/10.1038/s41524-022-00847-y

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41524-022-00847-y

This article is cited by

-

Chemical-motif characterization of short-range order with E(3)-equivariant graph neural networks

npj Computational Materials (2024)

-

Learning local equivariant representations for large-scale atomistic dynamics

Nature Communications (2023)

-

Efficient interatomic descriptors for accurate machine learning force fields of extended molecules

Nature Communications (2023)

-

Exploiting Machine Learning in Multiscale Modelling of Materials

Journal of The Institution of Engineers (India): Series D (2023)