Abstract

Kinetic Ising models are powerful tools for studying the non-equilibrium dynamics of complex systems. As their behavior is not tractable for large networks, many mean-field methods have been proposed for their analysis, each based on unique assumptions about the system’s temporal evolution. This disparity of approaches makes it challenging to systematically advance mean-field methods beyond previous contributions. Here, we propose a unifying framework for mean-field theories of asymmetric kinetic Ising systems from an information geometry perspective. The framework is built on Plefka expansions of a system around a simplified model obtained by an orthogonal projection to a sub-manifold of tractable probability distributions. This view not only unifies previous methods but also allows us to develop novel methods that, in contrast with traditional approaches, preserve the system’s correlations. We show that these new methods can outperform previous ones in predicting and assessing network properties near maximally fluctuating regimes.


Introduction

Advances in high-throughput data acquisition technologies for very large biological and social systems are providing unprecedented possibilities to investigate their complex, non-equilibrium dynamics. For example, optical recordings from genetically modified neural populations make it possible to simultaneously monitor activities of the whole neural network of behaving C. elegans1 and zebrafish2, as well as thousands of neurons in the mouse visual cortex3. Such networks generally exhibit out-of-equilibrium dynamics4, and are often found to self-organize near critical regimes at which their fluctuations are maximized5,6. Evolution of such systems cannot be faithfully captured by methods assuming an asymptotic equilibrium state. Therefore, in general, there is a pressing demand for mathematical tools to study the dynamics of large-scale, non-equilibrium complex systems and to analyze high-dimensional datasets recorded from them.

The kinetic Ising model with asymmetric couplings is a prototypical model for studying such non-equilibrium dynamics in biological7,8 and social systems9. It is described as a discrete-time Markov chain of interacting binary units, resembling the nonlinear dynamics of recurrently connected neurons. The model exhibits non-equilibrium behavior when couplings are asymmetric or when model parameters are subject to rapid changes, ruling out quasi-static processes. These conditions induce a time reversal asymmetry in dynamical trajectories, leading to positive entropy production (the second law of thermodynamics) as revealed by the fluctuation theorem10,11,12,13,14,15 (see refs. 16,17 for reviews). This time-asymmetry is characteristic of non-equilibrium systems as it can only be displayed by systems in which energy dissipation takes place18. In the case of symmetric connections and static parameters, the model converges to an equilibrium stationary state. Consequently, it is a generalization of its equilibrium counterpart known as the (equilibrium) Ising model19.

The forward Ising problem refers to calculating statistical properties of the model, such as mean activation rates (mean magnetizations of spins) and correlations, given the parameters of the model. In contrast, inference of the model parameters from data is called the inverse Ising problem20. In this regard, kinetic Ising models21,22 and their equilibrium counterparts23,24,25 have become popular tools for modeling and analyzing biological and social systems. In addition, they capture memory retrieval dynamics in classical associative networks. Namely, they are equivalent to the Boltzmann machine, extensively used in machine learning applications20. Unfortunately, exact solutions of the forward and inverse problems often become computationally too expensive due to the combinatorial explosion of possible patterns in large, recurrent networks or the high volume of data, and applications of exact or sampling-based methods are limited in practice to around a hundred neurons5,25,26. Consequently, analytical approximation methods are necessary for analysing large systems. In this endeavour, mean-field methods have emerged as powerful tools to track down otherwise intractable statistical quantities.

The standard mean-field approximations to study equilibrium Ising models are the classical naive mean-field (nMF) and the more accurate Thouless-Anderson-Palmer (TAP) approximations27. These methods have also been employed to solve the inverse Ising problem28,29,30,31. Plefka demonstrated that the nMF and TAP approximations for the equilibrium model can be derived using the power series expansion of the free energy around a model of independent spins, a method which is now referred to as the Plefka expansion32. This expansion up to the first and second orders leads to the nMF and TAP mean-field approximations respectively. The Plefka expansion was later formalized by Tanaka and others in the framework of information geometry33,34,35,36,37.

In non-equilibrium networks, however, the free energy is not directly defined, and thus it is not obvious how to apply the Plefka expansion. Kappen and Spanjers38 proposed an information geometric approach to mean-field solutions of the asymmetric Ising model with asynchronous dynamics. They showed that their second-order approximation for an asymmetric model in the stationary state is equivalent to the TAP approximation for equilibrium models. Later, Roudi and Hertz derived TAP equations for nonstationary states using a Legendre transformation of the generating functional of the set of trajectories of the model39. Another study by Roudi and Hertz extended mean-field equations to provide expressions for the nonstationary delayed correlations assuming the presence of equal-time correlations at the previous step40. Yet another interesting method proposed by Mézard and Sakellariou approximates the local fields by a Gaussian distribution according to the central limit theorem, yielding more accurate results for fully asymmetric networks41. This method was later extended to include correlations at the previous time step, improving the results for symmetric couplings42. More recently, Bachschmid-Romano et al. extended the path-integral methods in ref. 39 with Gaussian effective fields43, not only recovering ref. 41 for fully asymmetric networks but also proposing a method that better approximates mean rate dynamics by conserving autocorrelations of units. Although many choices of mean-field methods are available, the diversity of methods and assumptions makes it challenging to advance systematically over previous contributions.

Here, we propose a unified approach for mean-field approximations of the Ising model. While our method is applicable to symmetric and equilibrium models, we focus for generality on asymmetric kinetic Ising models. Our approach is defined as a family of Plefka expansions in an information geometric space. This approach allows us to unify and relate existing mean-field methods and to provide expressions for other statistics of the systems such as pairwise correlations. Furthermore, our approach can be extended beyond classical mean-field assumptions to propose novel approximations. Here, we introduce an approximation based on a pairwise model that better captures network correlations, and we show that it outperforms existing approximations of kinetic Ising models near a point of maximum fluctuations. We also provide a data-driven method to reconstruct and test if a system is near a phase transition by combining the forward and inverse Ising problems, and demonstrate that the proposed pairwise model more accurately estimates the system’s fluctuations and its sensitivity to parameter changes. These results confirm that our unified framework is a useful tool to develop methods to analyze large-scale, non-equilibrium biological and social dynamics operating near critical regimes. In addition, since the methods are directly applicable to Boltzmann machine learning, the geometrical framework introduced here is relevant in machine learning applications.

The paper is organized as follows. First, we introduce the kinetic Ising model and its statistical properties of interest. Second, we introduce our framework for the Plefka approximation methods from a geometric perspective. To explain how it works, we derive the classical naive and TAP mean-field approximations under the proposed framework. Third, we show that our approach can unify other known mean-field approximation methods. We then propose a novel pairwise approximation under this framework. Finally, we compare different mean-field approximations in solving the forward and inverse Ising problems, as well as in performing the data-driven assessment of the system’s sensitivity. The last section is devoted to discussion.

Results

The kinetic Ising model

The kinetic Ising model is the least structured statistical model containing delayed pairwise interactions between its binary components (i.e., a maximum caliber model44). The system consists of N interacting binary variables (Ising spins that are down or up, or neural units that are inactive or active) si,t ∈ { − 1, + 1}, i = 1, 2, …, N, evolving in discrete-time steps t with parallel dynamics. Given the configuration of spins at t − 1, st−1 = {s1,t−1, s2,t−1, …, sN,t−1}, spins st at time t are conditionally independent random variables, updated as a discrete-time Markov chain, following

\(P({{\bf{s}}}_{t}| {{\bf{s}}}_{t-1})=\prod_{i}\frac{\exp ({s}_{i,t}{h}_{i,t})}{2\cosh {h}_{i,t}},\) (1)

\({h}_{i,t}={H}_{i}+\sum_{j}{J}_{ij}{s}_{j,t-1}.\) (2)

The parameters H = {Hi} and J = {Jij} represent local external fields at each spin and couplings between pairs of spins respectively. When the couplings are asymmetric (i.e., Jij ≠ Jji), the system is away from equilibrium because the process is irreversible with respect to time. Given the probability mass function of the previous state P(st−1), the distribution of the current state is:
\(P({{\bf{s}}}_{t})=\sum_{{{\bf{s}}}_{t-1}}P({{\bf{s}}}_{t}| {{\bf{s}}}_{t-1})P({{\bf{s}}}_{t-1}).\) (3)

This marginal distribution P(st) is not factorized (except at J = 0), but it rather exhibits a complex statistical structure, generally containing higher-order spin interactions. We can apply this equation recursively, e.g., decomposing \(P\left({{\bf{s}}}_{t-1}\right)\) in terms of the distribution P(st−2), and trace the evolution of the system from the initial distribution P(s0).
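As an illustration of the parallel update described above, the following minimal sketch samples one trajectory of the Markov chain. It assumes the standard kinetic Ising form, in which each spin is drawn independently with P(si,t = +1∣st−1) = 1/(1 + exp(−2hi,t)) and hi,t = Hi + ∑j Jij sj,t−1. The function name `kinetic_ising_step`, the network size, and the parameter values are hypothetical choices made only for this example.

```python
import numpy as np

def kinetic_ising_step(s_prev, H, J, rng):
    """Sample s_t given s_{t-1} with parallel (synchronous) updates."""
    h = H + J @ s_prev                      # effective fields h_{i,t}
    p_up = 1.0 / (1.0 + np.exp(-2.0 * h))   # equals exp(h) / (2 cosh h)
    return np.where(rng.random(h.shape) < p_up, 1, -1)

rng = np.random.default_rng(0)
N = 8                                        # illustrative size only
H = rng.uniform(-0.5, 0.5, size=N)
J = rng.normal(1.0 / N, 0.1 / np.sqrt(N), size=(N, N))  # generically J_ij != J_ji

s = np.ones(N, dtype=int)                    # initial configuration s_0
trajectory = [s]
for t in range(10):
    s = kinetic_ising_step(s, H, J, rng)
    trajectory.append(s)
trajectory = np.array(trajectory)            # shape (T + 1, N)
```

Because all spins at time t are conditionally independent given st−1, a single vectorized draw per step is sufficient; no sequential sweep over units is needed.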

In this article, we use variants of the Plefka expansion to calculate some statistical properties of the system. Namely, we investigate the average activation rates mt, correlations between pairs of units (covariance function) Ct, and delayed correlations Dt given by
\({m}_{i,t}=\sum_{{{\bf{s}}}_{t}}{s}_{i,t}P({{\bf{s}}}_{t}),\) (4)

\({C}_{ik,t}=\sum_{{{\bf{s}}}_{t}}{s}_{i,t}{s}_{k,t}P({{\bf{s}}}_{t})-{m}_{i,t}{m}_{k,t},\) (5)

\({D}_{il,t}=\sum_{{{\bf{s}}}_{t},{{\bf{s}}}_{t-1}}{s}_{i,t}{s}_{l,t-1}P({{\bf{s}}}_{t},{{\bf{s}}}_{t-1})-{m}_{i,t}{m}_{l,t-1}.\) (6)

Note that mt and Dt are sufficient statistics of the kinetic Ising model. Therefore, we will use them in solving the inverse Ising problem (see Methods). We additionally consider the equal-time correlations Ct as they are commonly used to describe neural systems, and are investigated by some of the mean-field approximations in the literature40. Calculation of these expectation values is analytically intractable and computationally very expensive for large networks, due to the combinatorial explosion of the number of possible states. To reduce this computational cost, we approximate the marginal probability distributions (Eq. (3)) by the Plefka expansion method that utilizes an alternative, tractable distribution.
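When samples are available instead of the full distribution, the three statistics can be estimated empirically. The sketch below assumes that Ct and Dt are connected correlations (covariances), i.e., the product of means is subtracted, and that `S_prev` and `S_curr` hold the states of the same R independent trials at times t − 1 and t. The function name and array layout are assumptions for illustration.

```python
import numpy as np

def empirical_statistics(S_prev, S_curr):
    """Estimate m_t, C_t, D_t from (R, N) arrays of +/-1 samples at t-1 and t."""
    R = S_curr.shape[0]
    m_prev = S_prev.mean(axis=0)
    m = S_curr.mean(axis=0)                           # activation rates m_{i,t}
    C = S_curr.T @ S_curr / R - np.outer(m, m)        # equal-time covariances C_{ik,t}
    D = S_curr.T @ S_prev / R - np.outer(m, m_prev)   # delayed covariances D_{il,t}
    return m, C, D

# Toy usage with random, uncoupled samples (illustrative data only)
rng = np.random.default_rng(0)
S_prev = rng.choice([-1.0, 1.0], size=(1000, 5))
S_curr = rng.choice([-1.0, 1.0], size=(1000, 5))
m, C, D = empirical_statistics(S_prev, S_curr)
```

The diagonal of C equals 1 − mi,t² by construction, since si,t² = 1 for binary spins.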

Geometrical approach to mean-field approximation

Information geometry37,45,46 provides clear geometrical understanding of information-theoretic measures and probabilistic models15,47,48. Using the language of information geometry, we introduce our method for mean-field approximations of kinetic Ising systems.

Let \({{\mathcal{P}}}_{t}\) be the manifold of probability distributions at time t obtained from Eq. (3). Each point on the manifold corresponds with a set of parameter values. The manifold \({{\mathcal{P}}}_{t}\) contains submanifolds \({{\mathcal{Q}}}_{t}\) of probability distributions with analytically tractable statistical properties (See Fig. 1). We use this tractable manifold, i.e., a reference model, to approximate a target point P(st∣H, J) in the manifold \({{\mathcal{P}}}_{t}\) and its statistical properties mt, Ct, Dt.

Fig. 1: The point P(st) is the marginal distribution of a kinetic Ising model at time t. The submanifold \({{\mathcal{Q}}}_{t}\) is a set of tractable distributions, for example a manifold of independent models. The points in \({\mathcal{A}}\) correspond to an m-geodesic, that is, a linear mixture of P(st) and Q*(st) on \({{\mathcal{Q}}}_{t}\), where for independent \({{\mathcal{Q}}}_{t}\) all points on \({\mathcal{A}}\) share the same mean values mt. Geometrically, \({\mathcal{A}}\) constitutes the m-projection from P(st) to \({{\mathcal{Q}}}_{t}\), defining Q*(st) as the closest point in the submanifold \({{\mathcal{Q}}}_{t}\) to the point P(st)47. The Plefka expansion is defined by expanding an α-dependent distribution Pα(st) that satisfies Pα=0(st) = Q*(st) and Pα=1(st) = P(st).

The simplest submanifold \({\mathcal{Q}}_{t}\) is the manifold of independent models, used in classical mean-field approximations to compute average activation rates. Each point on this submanifold corresponds to a distribution
\(Q({{\bf{s}}}_{t}| {{\bf{\Theta }}}_{t})=\prod_{i}\frac{\exp ({{{\Theta }}}_{i,t}{s}_{i,t})}{2\cosh {{{\Theta }}}_{i,t}},\) (7)

where Θt = {Θi,t} is the vector of parameters that represents a point in \({{\mathcal{Q}}}_{t}\). This distribution does not include couplings between units, and its average activation rate is immediately given as \({m}_{i,t}=\tanh {{{\Theta }}}_{i,t}\).

Our first goal is to find the average activation rates of the target distribution P(st∣H, J). It turns out that they can be obtained from the independent model Q(st∣Θt) that minimizes the following Kullback-Leibler (KL) divergence from P(st):
\(D(P| | Q)=\sum_{{{\bf{s}}}_{t}}P({{\bf{s}}}_{t})\log \frac{P({{\bf{s}}}_{t})}{Q({{\bf{s}}}_{t}| {{\bf{\Theta }}}_{t})}.\) (8)

The independent model \(Q({{\bf{s}}}_{t}| {\bf{\Theta}}_{t}^{* })(\equiv\!{Q}^{* }({{\bf{s}}}_{t}))\) that minimizes the KL divergence has activation rates mt identical to those of the target distribution P(st)38 because the minimizing points \({{{\mathrm{{\Theta }}}}}_{i,t}^{* }\) satisfy (for i = 1, …, N)
\({\left.\frac{\partial D(P| | Q)}{\partial {{{\Theta }}}_{i,t}}\right|}_{{{\bf{\Theta }}}_{t}={{\bf{\Theta }}}_{t}^{* }}={m}_{i,t}^{{Q}^{* }}-{m}_{i,t}^{P}=0,\) (9)

where \({m}_{i,t}^{P}\) and \({m}_{i,t}^{{Q}^{* }}\) are respectively expectation values of si,t by P(st) and \(Q({{\bf{s}}}_{t}| {{{{\mathbf{\Theta}}}}}_{t}^{* })\). As these values are equal, for the rest of the paper we will drop their superscripts and just write mi,t for simplicity. The result of this approximation is indifferent to the system’s correlations. Later in the paper we will consider approximations that take into account pairwise correlations.

From an information geometric point of view, given mt (or \({{{{\mathbf{\Theta}} }}}_{t}^{* }\)), we may consider a family of points defined as a linear mixture of P(st) and \(Q({{\bf{s}}}_{t}| {{{{\mathbf{\Theta}}}}}_{t}^{* })\) for which mt is kept constant (the dashed line \({\mathcal{A}}\) in Fig. 1). This is known as an m-geodesic, and it is orthogonal to the e-flat manifold \({{\mathcal{Q}}}_{t}\), constituting an m-projection to this manifold37,47. Thus, the previous search of \(Q({{\bf{s}}}_{t}| {{{{\mathbf{\Theta}} }}}_{t}^{* })\) given P(st∣H, J) is equivalent to finding the orthogonal projection point from P(st∣H, J) to the manifold \({{\mathcal{Q}}}_{t}\) of independent models36,37.
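The moment-matching property of the m-projection can be checked numerically on a small system. The sketch below is an illustration under stated assumptions: it takes an arbitrary strictly positive distribution P over N = 3 spins (not a kinetic Ising marginal), uses an independent model of the form Q(s∣Θ) = ∏i exp(Θi si)/(2 cosh Θi), and verifies that Θi* = arctanh(miP) minimizes D(P∣∣Q). All names are hypothetical.

```python
import itertools
import numpy as np

rng = np.random.default_rng(1)
N = 3
states = np.array(list(itertools.product([-1, 1], repeat=N)))  # all 2^N configurations
P = rng.random(len(states))
P /= P.sum()                                  # arbitrary target distribution

def kl_to_independent(theta):
    """D(P || Q) for the independent model with parameters theta."""
    log_q = states @ theta - np.sum(np.log(2.0 * np.cosh(theta)))
    return np.sum(P * (np.log(P) - log_q))

m_P = P @ states                              # means under the target distribution
theta_star = np.arctanh(m_P)                  # projection point: tanh(theta*) = m^P

kl_min = kl_to_independent(theta_star)
# Random perturbations of theta* should never decrease the divergence
kl_perturbed = [kl_to_independent(theta_star + 0.1 * rng.normal(size=N))
                for _ in range(20)]
```

Since D(P∣∣Q) is convex in Θ for this exponential-family model, the stationary point found by matching the means is the unique minimum.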

The Plefka expansion

Although the m-projection provides the exact and unique average activation rates, its calculation in practice requires the complete distribution P(st). In the Plefka expansion, we relax the constraints of the m-projection, and introduce another set of more tractable distributions that passes only through P(st∣H, J) and \(Q({{\bf{s}}}_{t}| {{{{\mathbf{\Theta}} }}}_{t}^{* })\) (the solid line in Fig. 1). This distribution is defined using a new conditional distribution introducing a parameter α that connects a distribution on the manifold \({{\mathcal{Q}}}_{t}\) with the original distribution P(st):
\({P}_{\alpha }({{\bf{s}}}_{t}| {{\bf{s}}}_{t-1})=\prod_{i}\frac{\exp ({s}_{i,t}{h}_{i,t}(\alpha ))}{2\cosh {h}_{i,t}(\alpha )},\) (10)

\({h}_{i,t}(\alpha )=(1-\alpha ){{{\Theta }}}_{i,t}+\alpha \left({H}_{i}+\sum_{j}{J}_{ij}{s}_{j,t-1}\right).\) (11)

At α = 0, Pα=0(st∣st−1) = Q(st∣Θt), and α = 1 leads to Pα=1(st∣st−1) =P(st∣st−1). Using this alternative conditional distribution Pα(si,t∣st−1), we construct an approximate marginal distribution Pα(st). Consequently, expectation values with respect to Pα(st) are functions of α. We thus write the statistics of the approximate system as mt(α), Ct(α), and Dt(α).

The Plefka expansions of these statistics are defined as the Taylor series expansion of these functions around α = 0. In the case of the mean activation rate, the expansion up to the nth-order leads to:
\({{\bf{m}}}_{t}(\alpha )={{\bf{m}}}_{t}(\alpha =0)+\sum_{k=1}^{n}\frac{{\alpha }^{k}}{k!}\frac{{\partial }^{k}{{\bf{m}}}_{t}(\alpha =0)}{\partial {\alpha }^{k}}+{\mathcal{O}}({\alpha }^{(n+1)}),\) (12)

where \({\mathcal{O}}({\alpha }^{(n+1)})\) stands for the residual error of the approximation of order n + 1 and higher. For the nth-order approximation, we neglect the residual terms as \({\mathcal{O}}({\alpha }^{(n+1)}){\left|\right.}_{\alpha = 1}\approx 0\). Note that all coefficients of expansion are functions of Θt. The mean-field approximation is computed by setting α = 1 and finding the value of \({{{{\mathbf{\Theta}} }}}_{t}^{* }\) that satisfies Eq. (12). Since the original marginal distribution is recovered at α = 1, the equality of Eq. (9) holds: mt(α = 1) = mt(α = 0). Then, we have
\(\sum_{k=1}^{n}\frac{1}{k!}\frac{{\partial }^{k}{{\bf{m}}}_{t}(\alpha =0)}{\partial {\alpha }^{k}}=0,\) (13)

which should be solved with respect to the parameters Θt. Since we neglected the terms higher than the n-th order, the solution may not lead to the exact projection, \(Q({{\bf{s}}}_{t}| {{\bf{\Theta }}}_{t}^{* })\). In this study, we investigate the first (n = 1) and second (n = 2) order approximations. Moreover we can apply the same expansion to approximate the correlations Ct and Dt, using Eq. (10).

What is the difference between this approach and other mean-field methods? Conventionally, naive mean-field approximations are obtained by minimizing D(Q∣∣P) as opposed to D(P∣∣Q) (Eq. (8))36,49. This approach is typically used in variational inference to construct a tractable approximate posterior in machine learning problems. Following the Bogolyubov inequality, minimizing this divergence is equivalent to minimizing the variational free energy. Geometrically, it comprises an e-projection of P(st∣H, J) to the submanifold \({{\mathcal{Q}}}_{t}\), which does not result in \(Q({{\bf{s}}}_{t}| {{{{\mathbf{\Theta}}}}}_{t}^{* })\). Namely, minimizing D(Q∣∣P), as well as minimization of other α-divergences except for D(P∣∣Q), introduces a bias in the estimation of the mean-field approximation36,37. In contrast, if we consider the m-projection point that minimizes D(P∣∣Q), we can approximate the exact value of mt using Eq. (12) up to an arbitrary order.

In the subsequent sections we show that different approximations of the marginal distribution P(st) in Eq. (3) can be constructed by replacing P(si,τ∣sτ−1) with Pα(si,τ∣sτ−1) for different pairs i, τ (here we will explore the cases of τ = t and τ = t − 1). More generally, we show in Supplementary Note 1 that this framework can be extended to a marginal path of arbitrary length k, P(st−k+1, …, st). In addition, we are not restricted to manifolds of independent models. The independent model is adopted as a reference model to approximate the average activation rate, but one can also more accurately approximate correlations using this method. In this vein, we can extend our framework to use reference manifolds \({{\mathcal{Q}}}_{t-k+1:t}\) (of models Q(st−k+1, …, st)) that include interactions, e.g., pairwise couplings between elements at two different time points, to more accurately approximate the delayed correlations (see Supplementary Note 1). By systematically defining these reference distributions, we will provide a unified approach to derive Plefka approximations of mt, Ct, and Dt, including the one that utilizes a pairwise structure.

Plefka[t − 1, t]: expansion around independent models at times t − 1 and t

Before elaborating different mean-field approximations, we demonstrate our method by deriving the known results of the classical nMF and TAP approximations for the kinetic Ising model38,39. In order to derive these classical mean-field equations, we make a Plefka expansion around the points \({{{{\mathbf{\Theta}} }}}_{t}^{* }\) and \({{{{\mathbf{\Theta}} }}}_{t-1}^{* }\) that are, respectively, obtained by orthogonal projection to the independent manifolds \({{\mathcal{Q}}}_{t}\) and \({{\mathcal{Q}}}_{t-1}\), computed as in Eq. (9). Here we should note that assuming an approximation where previous distributions (e.g., t − 2, t − 3, … ) are also independent yields exactly the same result. In this way, we derive the nMF and TAP equations of a model defined by a marginal probability distribution \({P}_{\alpha }^{[t-1:t]}\). Using Eqs. (3) and (10), we write

where \({P}_{\alpha = 0}^{[t-1:t]}({{\bf{s}}}_{t})=Q({{\bf{s}}}_{t})\) and the original distribution is recovered for \({P}_{\alpha = 1}^{[t-1:t]}({{\bf{s}}}_{t})=P({{\bf{s}}}_{t})\).

Following Eq. (13), for the first order approximation we have \(\frac{\partial {m}_{i,t}(\alpha =0)}{\partial \alpha }=0\). Since the derivative of the first order moment is
\(\frac{\partial {m}_{i,t}(\alpha =0)}{\partial \alpha }=(1-{m}_{i,t}^{2})\left(-{{{\Theta }}}_{i,t}+{H}_{i}+\sum_{j}{J}_{ij}{m}_{j,t-1}\right),\) (15)

by solving the equation, we find \({{{\Theta }}}_{i,t}^{* }\approx {H}_{i}+\mathop{\sum }\nolimits_{j}{J}_{ij}{m}_{j,t-1}\) that leads to the naive mean-field approximation:
\({m}_{i,t}\approx \tanh \left[{H}_{i}+\sum_{j}{J}_{ij}{m}_{j,t-1}\right].\) (16)

We apply the same expansion to approximate the correlations, expanding Cik,t(α) and Dil,t(α) around α = 0 up to the first order using \({{{\Theta }}}_{i,t}={{{\Theta }}}_{i,t}^{* }\). Then we obtain

Detailed calculations are presented in Supplementary Note 2.

To obtain the second-order approximation, we need to solve \(\frac{\partial {m}_{i,t}(\alpha =0)}{\partial \alpha }+\frac{1}{2}\frac{{\partial }^{2}{m}_{i,t}(\alpha =0)}{\partial {\alpha }^{2}}=0\) from Eq. (13). Here the second-order derivative is given as

where terms of the order higher than quadratic were neglected (see Supplementary Note 2 for further details). From these equations, we find \({{{\Theta }}}_{i,t}^{* }\approx {H}_{i}+\mathop{\sum }\nolimits_{j}{J}_{ij}{m}_{j,t-1}-{m}_{i,t}\mathop{\sum }\nolimits_{j}{J}_{ij}^{2}(1-{m}_{j,t-1}^{2})\) leading to the TAP equation:
\({m}_{i,t}\approx \tanh \left[{H}_{i}+\sum_{j}{J}_{ij}{m}_{j,t-1}-{m}_{i,t}\sum_{j}{J}_{ij}^{2}(1-{m}_{j,t-1}^{2})\right].\) (20)

Having \({{{\Theta }}}_{i,t}^{* }\), we can incorporate TAP approximations of the correlations by expanding Cik,t(α) and Dil,t(α) (see Supplementary Note 2 for details) as:

In these approximations, Eqs. (16) and (20) of activation rates mt correspond to the classical nMF and TAP equations of the kinetic Ising model38,39. The mean-field equations for the equal-time and delayed correlations (Eqs. (17), (18), (21), and (22)) are novel contributions from applying the Plefka expansion to correlations.

Using the equations above, we can compute the approximate statistical properties of the system at t (mt, Ct, Dt) from mt−1. Therefore, the system evolution is described by recursively computing mt from an initial state m0 (for both transient and stationary dynamics), although approximation errors accumulate over the iterations. After we introduce a unified view of mean-field approximations in the subsequent sections, we will numerically examine approximation errors of these various methods in predicting statistical structure of the system.
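The recursive computation of mt from mt−1 can be sketched as follows, using the expressions for Θi,t* given in the text together with mi,t = tanh Θi,t*. Because mi,t appears on both sides of the TAP equation, this sketch solves it by simple fixed-point iteration started from the nMF value; that solver choice, the tolerance, and the function names are assumptions of this example rather than the procedure used in the paper.

```python
import numpy as np

def nmf_step(m_prev, H, J):
    """First-order (naive mean-field) update of the activation rates."""
    return np.tanh(H + J @ m_prev)

def tap_step(m_prev, H, J, n_iter=100, tol=1e-12):
    """Second-order (TAP) update, solved self-consistently for m_t."""
    field = H + J @ m_prev
    onsager = (J ** 2) @ (1.0 - m_prev ** 2)   # sum_j J_ij^2 (1 - m_{j,t-1}^2)
    m = np.tanh(field)                          # start from the nMF solution
    for _ in range(n_iter):
        m_new = np.tanh(field - m * onsager)
        if np.max(np.abs(m_new - m)) < tol:
            return m_new
        m = m_new
    return m

# Illustrative recursion from m_0 = 1 on a small random network
rng = np.random.default_rng(0)
N, T = 16, 20
H = rng.uniform(-0.5, 0.5, size=N)
J = rng.normal(1.0 / N, 0.1 / np.sqrt(N), size=(N, N))
m_nmf = m_tap = np.ones(N)
for t in range(T):
    m_nmf = nmf_step(m_nmf, H, J)
    m_tap = tap_step(m_tap, H, J)
```

The fixed-point iteration is a contraction whenever the reaction term ∑j Jij²(1 − mj,t−1²) is smaller than one, which holds for weak couplings such as those in this toy example; other parameter regimes may need a damped or root-finding solver.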

Generalization of mean-field approximations

In the previous section, we described a Plefka expansion that uses a model containing independent units at time t − 1 and t to construct a marginal probability distribution \({P}_{\alpha }^{[t-1:t]}({{\bf{s}}}_{t})\). This is, however, not the only possible choice of approximation. As we mentioned above, other approximations have been introduced in the literature. In ref. 40, expressions are provided for the nonstationary delayed correlations Dt as a function of Ct−1. In ref. 41, an approximation is derived by assuming that units at state st−1 are independent while correlations of st are preserved.

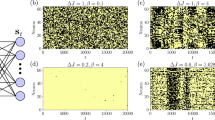

In the following sections, we show that various approximation methods, including those mentioned above, can be unified as Plefka expansions. Each method of the approximation corresponds to a specific choice of the submanifold \({{\mathcal{Q}}}_{t}\) at each time step. Fig. 2 shows the corresponding submanifolds \({{\mathcal{Q}}}_{t-1:t}\) of possible approximations, where gray lines represent interactions that are affected by α in the Plefka expansion. The mean-field approximations in the previous section were obtained by using the model represented in Fig. 2B, where the couplings at time t − 1 and t are affected by α. Below, we present systematic applications of the Plefka expansions around other reference models in order to approximate the original distribution (Fig. 2C–E). By doing so, we not only unify the previously reported mean-field approximations but also provide novel solutions that can provide more precise approximations than known methods.

Fig. 2: Original model (A) and family of generalized Plefka expansions (B–E). Gray lines represent connections that are proportional to α and thus removed in the approximated model to perform the Plefka expansions, while solid black lines are conserved and dashed lines are free parameters. Plefka[t − 1, t] (B) retrieves the classical naive and TAP mean-field equations38,39. Plefka[t] (C) results in a novel method which preserves correlations of the system at t − 1, incorporating equations similar to ref. 40. Plefka[t − 1] (D) assumes independent activity at t − 1, and in its first order approximation reproduces the results in ref. 41. Plefka2[t] (E) represents a novel pairwise approximation which performs better in approximating correlations.

Plefka[t]: expansion around an independent model at time t

For the Plefka[t − 1, t] approximation, explained above, the system becomes independent for α = 0 at t as well as t − 1. This leads to approximations of mt, Ct, Dt being specified by mt−1, while being independent of Ct−1 and Dt−1. In ref. 40, the authors describe a mean-field approximation by performing a new expansion over the classical nMF and TAP equations that takes into account previous correlations Ct−1. Here, our framework allows us to obtain similar results by considering only a Plefka expansion over manifold \({{\mathcal{Q}}}_{t}\) while assuming that we know the properties of P(st−1) (Fig. 2C). Therefore, we denote this approximation as \({P}_{\alpha }^{[t]}\) and consider

In Supplementary Note 3 we derive the equations for this approximation. For the first order, we obtain

Note that Eqs. (24) and (25) are the same as the nMF Plefka[t − 1, t] equations. Equation (26) includes Ct−1, being exactly the same result obtained in ref. 40, Eq. (4). The second-order approximation leads to:

All update rules include the effect of Ct−1. We can see that if we use the covariance matrix of the independent model at t − 1, we recover the results of the Plefka[t − 1, t] approximation in the previous section. In contrast with ref. 40, we provide a novel approximation method that depends on previous correlations using a single expansion (instead of two subsequent expansions), and additionally present approximated equal-time correlations.

Plefka[t − 1]: expansion around an independent model at time t − 1

In ref. 41, a mean-field method is proposed by approximating the effective field ht as the sum of a large number of independent spins, approximated by a Gaussian distribution, yielding exact results for fully asymmetric networks in the thermodynamic limit. In our framework, we describe this approximation as an expansion around the projection point from P(st−1) to the submanifold \({{\mathcal{Q}}}_{t-1}\), using a model where only st−1 are independent (Fig. 2D). In this case (see Supplementary Note 4), the effective field ht at the submanifold is a sum of independent terms, which for large N yields a Gaussian distribution.

By defining

we see that now the expansion is defined for the marginal distribution of the path {st−1, st} (see Supplementary Note 1). The first order equations for this method are

Here we use \({{\rm{D}}}_{x}=\frac{{\rm{d}}x}{\sqrt{2\pi }}\exp (-\frac{1}{2}{x}^{2})\), \({{\rm{D}}}_{xy}^{{\rho }_{ik}}=\frac{{\rm{d}}x{\rm{d}}y}{2\pi \sqrt{1-{\rho }_{ik}^{2}}}\exp (-\frac{1}{2}\frac{({x}^{2}+{y}^{2})-2{\rho }_{ik}xy}{1-{\rho }_{ik}^{2}})\), \({{{\Delta }}}_{i,t}=\mathop{\sum }\nolimits_{j}{J}_{ij}^{2}(1-{m}_{j,t-1}^{2})\) and \({\rho }_{ik}=\sum_{j}{J}_{ij}{J}_{kj}(1-{m}_{j,t-1}^{2})/\sqrt{{{{\Delta }}}_{i,t}{{{\Delta }}}_{k,t}}\). Derivations are described in Supplementary Note 4. These results are exactly the same as those presented for mt, Dt in ref. 41, adding an additional expression for Ct. For this approximation, we do not consider the second-order equations since they are computationally much more expensive than the other approximations.
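The Gaussian measure Dx defined above can be integrated numerically with Gauss-Hermite quadrature. The sketch below assumes the Gaussian-field form of the mean update associated with ref. 41, mi,t ≈ ∫ Dx tanh(Hi + ∑j Jij mj,t−1 + x√Δi,t); this expression is stated here as an assumption, and the function name and number of quadrature nodes are hypothetical choices.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermegauss

def gaussian_field_means(m_prev, H, J, n_nodes=40):
    """Approximate m_t by averaging tanh over a Gaussian effective field."""
    x, w = hermegauss(n_nodes)          # nodes/weights for weight exp(-x^2 / 2)
    w = w / np.sqrt(2.0 * np.pi)        # normalize so the weights sum to 1 (measure D_x)
    g = H + J @ m_prev                  # mean of the effective field
    delta = (J ** 2) @ (1.0 - m_prev ** 2)   # variance Delta_{i,t} of the field
    fields = g[:, None] + np.sqrt(delta)[:, None] * x[None, :]
    return np.tanh(fields) @ w          # quadrature sum over the nodes

# Illustrative call on a small random network
rng = np.random.default_rng(0)
N = 16
H = rng.uniform(-0.5, 0.5, size=N)
J = rng.normal(1.0 / N, 0.1 / np.sqrt(N), size=(N, N))
m_next = gaussian_field_means(np.ones(N) * 0.5, H, J)
```

When Δi,t vanishes (for instance at J = 0), the integral collapses to tanh of the mean field, so this update reduces to the naive mean-field value in that limit.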

Plefka2[t]: expansion around a pairwise model

The proposed framework is also a powerful tool to develop novel Plefka expansions. To make the expansions more accurately approximate target statistics, we can consider a reference manifold composed of multiple time steps while maintaining some of the parameters in the system (see Supplementary Note 1). Motivated by this idea, here we propose new methods that directly approximate pairwise activities of the units by choosing a reference manifold that preserves a coupling term.

Let us first consider the joint probability of any arbitrary pair of units at time t − 1 and t to compute the delayed correlations (Fig. 2E, left). Namely, we consider the joint probability of spins si,t and sl,t−1:

with s⧹l,t−1 containing all elements of st−1 except sl,t−1. As a reference manifold \({{\mathcal{Q}}}_{t-1:t}\), we consider the dependency among only the units i and l:

where θi,t(sl,t−1) = Θi,t + Δil,tsl,t−1. The orthogonal projection to \({{\mathcal{Q}}}_{t}\) is equivalent to minimizing the KL divergence D(P∣∣Q) with respect to the parameters:

with

As in the previous approximations, P(si,t, sl,t−1) is connected to \(Q({s}_{i,t},{s}_{l,t-1}| {{\boldsymbol{\theta }}}_{t}^{* },{{\bf{\Theta }}}_{t-1}^{* })\) through an α-dependent probability

with conditional probabilities given by

As in the cases above, we can calculate the equations for the first and second-order approximations (see Supplementary Note 5). Here, for the second-order approximation (which is more accurate than the first order) we have that:

which directly leads to calculation of means and delayed correlations as:

These results are related to previous work43 that included autocorrelations as one of the constraints to derive the Plefka approximation. Instead, here we provide a Plefka approximation that includes delayed correlations between any pair of units.

To compute the above approximations, we need to know Ct−1 and Ct−2. Here, we provide similar pairwise Plefka approximations for the pairwise distribution at time t, P(si,t, sk,t). Since si,t, sk,t are conditionally independent, we can construct a model in which first sk,t is computed from st−1, and then si,t is computed conditioned on sk,t, st−1 (Fig. 2E, right):

with conditional probabilities given by

Here θi,t is a function of sk,t that accounts for equal-time correlations between si,t and sk,t. Computed similarly to delayed correlations, the second-order approximation yields (see Supplementary Note 5):

Using these equations, approximate equal-time correlations are given as

Note that the approximation of equal-time correlations may not be symmetric for Cik,t and Cki,t. In the results of this paper we use the average of the two.

Comparison of the different approximations

In the subsequent sections, we compare the family of Plefka approximation methods described above by testing their performance in the forward and inverse Ising problems. More specifically, we compare the second-order approximations of Plefka[t − 1, t] and Plefka[t], the first order approximation of Plefka[t − 1], and the second-order pairwise approximation of Plefka2[t]. We define an Ising model as an asymmetric version of the kinetic Sherrington-Kirkpatrick (SK) model, setting its parameters around the equivalent of a ferromagnetic phase transition in the equilibrium SK model. External fields Hi are sampled from independent uniform distributions \({\mathcal{U}}(-\beta {H}_{0},\beta {H}_{0})\), H0 = 0.5, whereas coupling terms Jij are sampled from independent Gaussian distributions \({\mathcal{N}}(\beta \frac{{J}_{0}}{N},{\beta }^{2}\frac{{J}_{\sigma }^{2}}{N})\), J0 = 1, Jσ = 0.1, where β is a scaling parameter (i.e., an inverse temperature).
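The parameter ensemble described above can be generated as in the following sketch. It draws Hi uniformly in (−βH0, βH0) and Jij from a Gaussian with mean βJ0/N and variance β²Jσ²/N (hence standard deviation βJσ/√N). The function name and the `seed` argument are assumptions added for reproducibility of the example.

```python
import numpy as np

def sample_sk_parameters(N, beta, H0=0.5, J0=1.0, Jsigma=0.1, seed=0):
    """Draw fields and asymmetric couplings of the kinetic SK model."""
    rng = np.random.default_rng(seed)
    H = rng.uniform(-beta * H0, beta * H0, size=N)
    # Each J_ij is drawn independently, so J_ij != J_ji in general
    J = rng.normal(beta * J0 / N, beta * Jsigma / np.sqrt(N), size=(N, N))
    return H, J

H, J = sample_sk_parameters(N=512, beta=1.1108)
```

Note that `rng.normal` takes the standard deviation, not the variance, as its scale argument, which is why the square root of the variance is passed.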

Generally, mean-field methods are suitable for approximating properties of systems with small fluctuations. However, there is evidence that many biological systems operate in critical, highly fluctuating regimes5,6. In order to examine different approximations in such a biologically plausible yet challenging situation, we select the model parameters around a phase transition point displaying large fluctuations.

To find such conditions, we employed path-integral methods to solve the asymmetric SK model (Supplementary Note 6). We find that, for our choice of parameters, the stationary solution of the asymmetric model displays a non-equilibrium analogue of a critical point for a ferromagnetic phase transition, which takes place at βc ≈ 1.1108 in the thermodynamic limit (see Supplementary Note 6, Supplementary Fig. 1). The uniformly distributed bias terms H shift the phase transition point from β = 1 obtained at H = 0. By simulating finite-size systems, we confirmed that the maximum fluctuations in the model, measured by maximal covariance values, are found near the theoretical βc (see Supplementary Note 6, Supplementary Fig. 2).

Fluctuations of a system are generally expected to be maximized at a critical phase transition19. In addition, entropy production (a signature of time irreversibility) has been suggested as an indicator of phase transitions. For example, it presents a peak at the transition point of a continuous phase transition in a non-equilibrium Curie-Weiss Ising model with oscillatory field50 and some instances of mean-field majority vote models51,52. We found that the entropy production of the kinetic Ising system is also maximized around βc (discussed later, see also Methods for its derivation).

Forward Ising problem

We examine the performance of the different Plefka expansions in predicting the evolution of an asymmetric SK model of size N = 512 with random H and J. To study the nonstationary transient dynamics of the model, we start from s0 = 1 (all elements set to 1 at t = 0) and recursively update its state for T = 128 steps. We repeated this stochastic simulation for \(R={10}^{6}\) trials for 21 values of β in the range [0.7βc, 1.3βc] (except for the reconstruction of the phase transition, where we used \(R={10}^{5}\) and 201 values of β in the same range). Using the R samples, we computed the statistical moments and cumulants of the system, mt, Ct, and Dt, at each time step. We then computed their averages over the system units, i.e., \({\langle {m}_{i,t}\rangle }_{i}\), \({\langle {C}_{ik,t}\rangle }_{ik}\) and \({\langle {D}_{il,t}\rangle }_{il}\), where angle brackets denote an average over the indices in the subscript.
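The sampling procedure can be sketched as follows. This is a minimal example that assumes Eq. (1) is the usual parallel-update rule, in which each spin takes the value +1 with probability (1 + tanh hi,t)/2 and hi,t = Hi + Σj Jij sj,t−1. It also assumes that Ct and Dt are the equal-time and one-step-delayed covariances. The function name, the seeds and the small system size are illustrative choices, not the settings used in the paper.

```python
import numpy as np

def simulate_kinetic_ising(H, J, T, R, seed=0):
    """Run R trials of T >= 1 parallel updates from s_0 = 1.

    Returns the statistics of the last step: means m, equal-time
    covariances C and delayed covariances D (assumed definitions).
    """
    rng = np.random.default_rng(seed)
    N = H.size
    s = np.ones((R, N))                      # s_0 = 1 in every trial
    for _ in range(T):
        s_prev = s
        h = H + s_prev @ J.T                 # h_i = H_i + sum_j J_ij s_{j,t-1}
        p_up = 0.5 * (1.0 + np.tanh(h))      # P(s_i = +1 | s_{t-1})
        s = np.where(rng.random((R, N)) < p_up, 1.0, -1.0)
    m = s.mean(axis=0)
    m_prev = s_prev.mean(axis=0)
    C = s.T @ s / R - np.outer(m, m)             # C_ik = <s_i,t s_k,t> - m_i,t m_k,t
    D = s.T @ s_prev / R - np.outer(m, m_prev)   # D_il = <s_i,t s_l,t-1> - m_i,t m_l,t-1
    return m, C, D

# Small illustrative run (far smaller than N = 512 and the trial counts in the text)
rng = np.random.default_rng(1)
N = 20
H = rng.uniform(-0.5, 0.5, size=N)
J = rng.normal(1.0 / N, 0.1 / np.sqrt(N), size=(N, N))
m, C, D = simulate_kinetic_ising(H, J, T=16, R=2000)
print(m.mean(), C.mean(), D.mean())
```

Storing only the last two states keeps memory at order R × N; recording statistics at every step would require accumulating them inside the loop.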

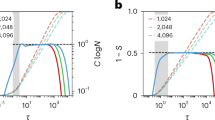

The black solid lines in Fig. 3A–C display the nonstationary dynamics of these averaged statistics for t = 0, …, 128, simulated by the original model at \(\beta = {\beta}_c \). In comparison, colored lines display these statistics as predicted by the family of Plefka approximations, computed recursively using the obtained equations, starting from the initial state m0 = 1, C0 = 0 and D0 = 0. We observe that although the recursive application of all the approximation methods provides good predictions for the transient dynamics of the mean activation rates mt until its convergence (Fig. 3A), the predictions using Plefka[t] and especially the proposed Plefka2[t] approximations are closer to the true dynamics than the others. Evolution of the mean equal-time and time-delayed correlations Ct, Dt is precisely captured only by our new method Plefka2[t]. In contrast, Plefka[t] overestimates correlations while Plefka[t − 1] and Plefka[t − 1, t] underestimate correlations.
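To make the idea of recursive application concrete, the sketch below iterates a mean-field update from m0 = 1. It uses the well-known first-order (naive) mean-field rule mi,t = tanh(Hi + Σj Jij mj,t−1) as a stand-in, not the second-order or pairwise Plefka equations compared in the text. The function name is hypothetical.

```python
import numpy as np

def naive_mean_field_trajectory(H, J, T):
    """Iterate m_t = tanh(H + J m_{t-1}) from m_0 = 1; returns an array of shape (T + 1, N).

    Stand-in for illustration only: first-order (naive) mean field,
    not the second-order or pairwise approximations from the text.
    """
    m = np.ones(H.size)
    trajectory = [m]
    for _ in range(T):
        m = np.tanh(H + J @ m)   # the prediction at t uses only the prediction at t-1
        trajectory.append(m)
    return np.array(trajectory)

# Toy example with two uncoupled units
traj = naive_mean_field_trajectory(np.array([0.5, -0.5]), np.zeros((2, 2)), T=3)
```

Because each step feeds on the previous prediction, any error made at one step is carried forward, which is why the forward problem is a demanding test of an approximation.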

Top: Evolution of average activation rates (magnetizations) (A), equal-time correlations (B), and delayed correlations (C) found by different mean-field methods for β = βc. Middle: Comparison of the activation rates (D), equal-time correlations (E), and delayed correlations (F) found by the different Plefka approximations (ordinate, p superscript) with the original values (abscissa, o superscript) for β = βc and t = 128. Black lines represent the identity line. Bottom: Average squared error of the magnetizations \({\epsilon }_{{\bf{m}}}={\langle {\langle {({m}_{i,t}^{o}-{m}_{i,t}^{p})}^{2}\rangle }_{i}\rangle }_{t}\) (G), equal-time correlations \({\epsilon }_{{\bf{C}}}={\langle {\langle {({C}_{ik,t}^{o}-{C}_{ik,t}^{p})}^{2}\rangle }_{ik}\rangle }_{t}\) (H), and delayed correlations \({\epsilon }_{{\bf{D}}}={\langle {\langle {({D}_{il,t}^{o}-{D}_{il,t}^{p})}^{2}\rangle }_{il}\rangle }_{t}\) (I) for 21 values of β in the range [0.7βc, 1.3βc].

The performance of the methods in predicting individual activation rates and correlations is displayed in Fig. 3D–F by comparing the vectors mt, Ct and Dt at the last time step (t = 128) of the original model (o superscript) with those of the Plefka approximations (p superscript). For activation rates mt, the proposed Plefka2[t] and Plefka[t] perform slightly better than the others (see also Fig. 3A). Equal-time and time-delayed correlations Ct, Dt are overestimated by Plefka[t], underestimated moderately by Plefka[t − 1] and significantly by Plefka[t − 1, t], and best predicted by Plefka2[t] (Fig. 3E, F).

The above results are obtained at the critical β = βc, intuitively the most challenging point for mean-field approximations. To further show that our novel approximation Plefka2[t] systematically outperforms the others in a wider parameter range, we repeated the analysis for different inverse temperatures β (the same random parameters are applied for all β). Fig. 3G, H, I show, respectively, the squared errors of the activation rates ϵm, equal-time correlations ϵC and delayed correlations ϵD between the original model and the approximations, averaged over units and time, for 21 values of β in the range [0.7βc, 1.3βc]. Fig. 3G–I shows that Plefka2[t] outperforms the other methods in computing mt, Ct, Dt (with the exception of a certain region of β > βc in which Plefka[t] is slightly better), consistently yielding a low error bound for all values of β. Errors of these approximations are smaller when the system is away from βc.
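The error measures ϵm, ϵC and ϵD all share the same form, a squared difference averaged over unit indices and then over time. A minimal sketch, assuming the original and predicted statistics are stored as arrays with time on the first axis (the function name is hypothetical):

```python
import numpy as np

def mean_squared_error(original, predicted):
    """Squared difference averaged over all entries (unit indices and time steps)."""
    original = np.asarray(original, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    return np.mean((original - predicted) ** 2)

# Toy magnetizations with shape (time steps, units)
m_o = np.array([[1.0, 0.0], [0.0, 1.0]])
m_p = np.zeros((2, 2))
eps_m = mean_squared_error(m_o, m_p)
```

With the same number of units at every time step, averaging over units first and then over time is equal to a single average over all entries, which is what the function computes.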

Inverse Ising problem

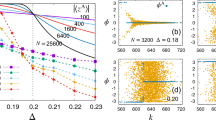

We apply the approximation methods to the inverse Ising problem by using the data generated above for the trajectory of T = 128 steps and \(R={10}^{6}\) trials to infer the parameters of the model, H and J. The model parameters are estimated by the Boltzmann learning method under the maximum likelihood principle: H and J are updated to minimize the differences between the average rates mt or delayed correlations Dt of the original data and those of the model approximations. While Boltzmann learning requires computing the likelihood of every point in a trajectory and every trial (RT calculations) at each iteration, we can estimate the gradient at each iteration in a one-shot computation by applying the Plefka approximations, which can significantly reduce computational time (see Methods). At β = βc (Fig. 4A, B), we observe that the classical Plefka[t − 1, t] approximation adds significant offset values to the fields H and couplings J. In contrast, Plefka[t], Plefka[t − 1] and Plefka2[t] are all precise in estimating the values of H and J.

Top: Inferred external fields (A) and couplings (B) found by different mean-field models plotted versus the real ones for β = βc. Black lines represent the identity line. Bottom: Average squared error of inferred external fields \({\epsilon }_{{\bf{H}}}={\langle {({H}_{i}^{o}-{H}_{i}^{p})}^{2}\rangle }_{i}\) (C) and couplings \({\epsilon }_{{\bf{J}}}={\langle {({J}_{ij}^{o}-{J}_{ij}^{p})}^{2}\rangle }_{ij}\) (D) for 21 values of β in the range [0.7βc, 1.3βc].

Fig. 4C, D shows the mean squared errors ϵH, ϵJ for bias terms and couplings between the original model and the inferred values for different β. In this case, errors are large in the estimation of J for Plefka[t − 1, t]. In comparison, Plefka[t], Plefka[t − 1] and Plefka2[t] work equally well even in the high fluctuation regime (β ≈ βc). Since the inverse Ising problem is solved by applying the approximation for a single time step (per iteration), it is not as challenging as the forward problem, which can accumulate errors by recursively applying the approximations. Therefore, the approximations other than the classical mean-field Plefka[t − 1, t] perform equally well in this case.

Phase transition reconstruction

We have shown how different methods perform in computing the behavior of the system (forward problem) and inferring the parameters of a given network from its activation data (inverse problem). Combining the two, we can ask how well the methods explored here can reconstruct the behavior of a system from data, potentially exploring behaviors under different conditions than the recorded data.

First, in Fig. 5A–C we examine how the different approximation methods approximate fluctuations (equal-time and time-delayed covariances) and the entropy production (see Methods) at t = 128 after solving the forward problem by recursively applying the approximations for the 128 steps. As we mentioned above, the asymmetric SK model explored here presents maximum fluctuations and maximum entropy production around β = βc (Supplementary Note 6, Supplementary Fig. 2). However, we see that Plefka[t − 1, t] and Plefka[t − 1] cannot reproduce the behavior of correlations Ct and Dt of the original SK model around the transition point. Plefka[t] and Plefka2[t] show much better performance in capturing the behavior of Ct and Dt in the phase transition, although Plefka[t] overestimates both correlations. Additionally, all the methods capture the phase transition in entropy production, though Plefka[t] overestimates its value around βc and Plefka2[t] is more precise than the other methods.

Top: Average of the Ising model’s equal-time correlations (A), delayed correlations (B), and entropy production (shown as an exponential for better presentation of its maximum) (C), at the last step t = 128 found by different mean-field methods for β = βc. Bottom (D–F): The same as above using the network H, J reconstructed by solving the inverse Ising problem at β = βc, with the estimated parameters multiplied by a fictitious inverse temperature \(\tilde{\beta }\). The stars mark the values of \(\tilde{\beta }\) that yield maximum fluctuations or maximum entropy production.

Next, we combine the forward and inverse Ising problems and try to reproduce the transition of the asymmetric SK model in the models inferred from the data. We first take the values of H, J obtained by solving the inverse problem on the data sampled at β = βc, and then solve the forward problem again with those estimated parameters rescaled by a new inverse temperature \(\tilde{\beta }\). The results for the correlations (Fig. 5D, E) show that in this case Plefka[t − 1, t] performs poorly and is unable to capture the transition. Plefka[t − 1] performs similarly to the forward problem, and Plefka[t] and Plefka2[t] behave similarly, slightly underestimating fluctuations. When we analyze the entropy production of the system (Fig. 5F), we find that Plefka2[t] is the most precise, with Plefka[t − 1] slightly overestimating it, Plefka[t] underestimating it, and Plefka[t − 1, t] not capturing the phase transition. Overall, the results above suggest that Plefka2[t] is better suited to identify non-equilibrium phase transitions in models reconstructed from experimental data.
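The rescaling step can be sketched as a sweep over the fictitious inverse temperature. In this minimal example the forward problem is solved by direct sampling rather than by a Plefka approximation, purely as a stand-in, and Eq. (1) is assumed to be the usual parallel-update rule. The function names, the toy couplings and the grid of \(\tilde{\beta }\) values are hypothetical.

```python
import numpy as np

def mean_covariance(H, J, T=32, R=2000, seed=0):
    """Sampling-based stand-in for a forward solver.

    Returns the average off-diagonal equal-time covariance at step T,
    starting every trial from s_0 = 1.
    """
    rng = np.random.default_rng(seed)
    N = H.size
    s = np.ones((R, N))
    for _ in range(T):
        h = H + s @ J.T
        s = np.where(rng.random((R, N)) < 0.5 * (1.0 + np.tanh(h)), 1.0, -1.0)
    m = s.mean(axis=0)
    C = s.T @ s / R - np.outer(m, m)
    off_diagonal = ~np.eye(N, dtype=bool)
    return C[off_diagonal].mean()

def sweep_fictitious_temperature(H_hat, J_hat, beta_tildes):
    """Rescale inferred parameters by each beta_tilde and locate the largest fluctuations."""
    beta_tildes = np.asarray(beta_tildes, dtype=float)
    values = np.array([mean_covariance(b * H_hat, b * J_hat) for b in beta_tildes])
    return values, beta_tildes[np.argmax(values)]

# Toy "inferred" network: zero fields and uniform ferromagnetic couplings
H_hat = np.zeros(10)
J_hat = np.full((10, 10), 0.1)
values, beta_star = sweep_fictitious_temperature(H_hat, J_hat, np.linspace(0.0, 2.0, 9))
```

Since the parameters were inferred from data generated at β = βc, a value of \(\tilde{\beta }\) = 1 corresponds to the conditions of the recorded data, and other values probe conditions that were never observed.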

Discussion

We have proposed a framework that unifies different mean-field approximations of the evolving statistical properties of non-equilibrium Ising models. This allows us to derive approximations premised on specific assumptions about the correlation structure of the system previously proposed in the literature. Furthermore, using our framework we derive a new approximation (Plefka2[t]) using atypical assumptions for mean-field methods, i.e., the maintenance of pairwise correlations in the system. This new pairwise approximation outperforms existing ones for approximating the behavior of an asymmetric SK model near the non-equilibrium equivalent of a ferromagnetic phase transition (see Supplementary Note 6), where classical mean-field approximations face problems. This shows that the proposed methods are useful tools to analyze large-scale, non-equilibrium dynamics near critical regimes expected for biological and social systems. However, we note that low-temperature spin phases (e.g., the spin-glass phase in symmetric models) also impose limitations on mean-field approximations32,41, which could be further explored with methods like the ones presented here.

The generality of this framework allows us to picture other approximations with atypical assumptions. For example, the Sessak-Monasson expansion53 for an equilibrium Ising model assumes a linear relation between α and spin correlations. An equivalent equilibrium expansion could use an effective field h(α) nonlinearly dependent on α, satisfying linear Ct(α) = αCt or Dt(α) = αDt relations. As another extension, Plefka2[t] could incorporate higher-order interactions. As Eqs. (43) and (52) are each equivalent to two mean-field approximations with sl,t−1 = ± 1 respectively, a generalized PlefkaM[t] would involve \({2}^{M-1}\) equations, increasing accuracy but also computational costs. In general, reference models Q(st) set coupling parameters of the model to zero at some steps of its dynamics. Other parameters (e.g., fields) are either free parameters fitted as the m-projection from P(st), or preserved at their original value (see Supplementary Note 7 for a comparison of the free and fixed parameters of each model). Augmenting accuracy by increasing parameters often involves a computational cost. As a practical guideline for using each method, Supplementary Note 7 compares their precision and computation time in the forward and inverse problems (see also Supplementary Figs. 3 and 4).

Aside from its theoretical implications, our unified framework offers analysis tools for diverse data-driven research fields. In neuroscience, it has been popular to study the activity of ensembles of neurons by inferring an equilibrium Ising model with homogeneous (fixed) parameters23 or inhomogeneous (time-dependent) parameters25,54 from empirical data. Extended analyses based on the equilibrium model have reported that neurons operate near a critical regime5,6. However, studies of non-equilibrium dynamics in neural spike trains are scarce7,26,55, partly due to the lack of systematic methods for analysing large-scale non-equilibrium data from neurons exhibiting large fluctuations. The proposed pairwise model Plefka2[t] is suitable for simulating such network activities, being more accurate than previous methods in predicting the network evolution at criticality (Fig. 3) and in testing if the system is near the maximally fluctuating regime (Fig. 5). In particular, application of our methods for computing entropy production in non-equilibrium systems could provide tools for characterizing the non-equilibrium dynamics of neural systems56.

In summary, a unified framework of mean-field theories offers a systematic way to construct suitable mean-field methods in accordance with the statistical properties of the systems researchers wish to uncover. This is expected to foster a variety of tools to analyze large-scale non-equilibrium systems in physical, biological, and social systems.

Methods

Boltzmann learning in the inverse Ising problem

Let \({{\bf{S}}}_{t}^{r}=\{{S}_{1,t}^{r},{S}_{2,t}^{r},\ldots ,{S}_{N,t}^{r}\}\) for t = 1, …, T be observed states of a process described by Eq. (1) at the r-th trial (r = 1, …, R). We also define S1:T to represent the processes from all trials. The inverse Ising problem consists in inferring the external fields H and couplings J of the system. These parameters can be estimated by maximizing the log-likelihood \(\ell\)(S1:T) of the observed states under the model:

with \({h}_{i,t}^{r}={H}_{i}+{\sum }_{j}{J}_{ij}{S}_{j,t-1}^{r}\). The learning steps are obtained as:

where 〈⋅〉r denotes average over trials. We solve the inverse Ising problem by applying these equations as a gradient ascent rule adjusting H and J. The second terms of Eqs. (56) and (57) need to be computed at every iteration, thus the computational cost grows linearly with R × T. However, the use of mean-field approximations can significantly reduce the cost when a large number of samples R and time bins T are used to correctly estimate activation rates and correlations in large networks. Here the second terms can be written as

where \(\overline{P}(\tilde{{\bf{s}}})=\frac{1}{RT}{\sum }_{r,t}\delta (\tilde{{\bf{s}}},{{\bf{S}}}_{t}^{r})\) is the empirical distribution averaged over trials and trajectories (with δ being a Kronecker delta) and \({\tilde{m}}_{l}\) is the average activation rate computed from the empirical distribution. \(P({\bf{s}}| \tilde{{\bf{s}}})\) is defined as in Eq. (1). We then approximate mi and Dil using the mean-field equations. Note that when we apply the mean-field equations, we replace all statistics related to the previous step with those computed from the empirical distribution. By applying the mean-field methods, we reduce the computation over R trials of trajectories of length T to a single computation (instead of RT calculations). In our numerical tests, gradient ascent was executed using learning coefficients \({\eta }_{H}=0.1/RT,{\eta }_{J}=1/(RT\sqrt{N})\), starting from H = 0, J = 0.
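For orientation, the sketch below implements the sample-based learning rule whose cost grows with R × T, that is, the baseline that the mean-field shortcut replaces. It assumes Eq. (1) is the usual parallel-update rule, so that each transition contributes \({S}_{i,t}^{r}{h}_{i,t}^{r}-\log (2\cosh {h}_{i,t}^{r})\) to the log-likelihood and the gradient with respect to \({h}_{i,t}^{r}\) is \({S}_{i,t}^{r}-\tanh {h}_{i,t}^{r}\). The function name, the synthetic three-unit model and the iteration count are hypothetical; the learning coefficients follow the values quoted above.

```python
import numpy as np

def boltzmann_learning(S, n_iter=200):
    """Gradient ascent on the log-likelihood of a kinetic Ising model.

    S has shape (R, T + 1, N) with entries +1 or -1. Exact sample-based
    gradient, not the mean-field accelerated version described in the text.
    """
    R, T_plus_1, N = S.shape
    T = T_plus_1 - 1
    prev = S[:, :-1, :].reshape(-1, N)   # s_{t-1}, pooled over trials and time
    curr = S[:, 1:, :].reshape(-1, N)    # s_t
    eta_H = 0.1 / (R * T)
    eta_J = 1.0 / (R * T * np.sqrt(N))
    H = np.zeros(N)
    J = np.zeros((N, N))
    for _ in range(n_iter):
        resid = curr - np.tanh(H + prev @ J.T)   # S_i,t - tanh(h_i,t)
        H += eta_H * resid.sum(axis=0)           # sum over r, t of the residual
        J += eta_J * (resid.T @ prev)            # sum over r, t of residual_i * S_j,t-1
    return H, J

# Synthetic data from a small, hypothetical 3-unit model
rng = np.random.default_rng(0)
N, R, T = 3, 100, 100
H_true = np.array([0.2, -0.1, 0.0])
J_true = 0.3 * rng.standard_normal((N, N))
S = np.ones((R, T + 1, N))
for t in range(1, T + 1):
    h = H_true + S[:, t - 1, :] @ J_true.T
    S[:, t, :] = np.where(rng.random((R, N)) < 0.5 * (1.0 + np.tanh(h)), 1.0, -1.0)
H_hat, J_hat = boltzmann_learning(S, n_iter=500)
```

Every iteration touches all R × T transitions through `prev` and `curr`; the mean-field variant described above would replace the `tanh` term with approximate mi and Dil computed once from the empirical statistics.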

Entropy production of the kinetic Ising model

The entropy production is defined as the KL divergence between the forward and backward path, quantifying the irreversibility of the system17,55,57:

where PB(st−1∣st) is a probability of the backward trajectory defined as in Eq. (1) but switching st and st−1. Assuming a non-equilibrium steady state, where P(st) = P(st−1), the entropy production of the kinetic Ising system is computed as:
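As a worked illustration, assume Eq. (1) is the usual parallel-update rule with hi,t = Hi + Σj Jij sj,t−1 and that Dij,t is the delayed covariance between si,t and sj,t−1. In a steady state the field terms and the normalization terms of the forward and backward log-probabilities cancel on average, leaving Σij (Jij − Jji)〈si,t sj,t−1〉. Because the antisymmetric factor Jij − Jji sums to zero against the symmetric product mi mj, the same quantity can be written with Dij,t. The sketch below evaluates this expression; the function name is hypothetical and the formula is derived under the stated assumptions.

```python
import numpy as np

def entropy_production(J, D):
    """Steady-state entropy production sum_ij (J_ij - J_ji) D_ij.

    Derived under the assumptions stated in the lead-in: parallel-update
    kinetic Ising dynamics and D_ij = <s_i,t s_j,t-1> - m_i m_j.
    """
    J = np.asarray(J, dtype=float)
    D = np.asarray(D, dtype=float)
    return np.sum((J - J.T) * D)

# Toy example: antisymmetric couplings and a single delayed covariance
J_toy = np.array([[0.0, 1.0], [-1.0, 0.0]])
D_toy = np.array([[0.0, 0.5], [0.0, 0.0]])
sigma = entropy_production(J_toy, D_toy)
```

Under this expression, symmetric couplings give zero entropy production for any D, consistent with entropy production being a signature of time irreversibility.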

Data availability

The datasets generated and analysed in this study are available under CC BY license at Zenodo58, https://zenodo.org/record/4318983 (https://doi.org/10.5281/zenodo.4318983).

Code availability

The source code for implementing the methods and results in this work is available under GPL license at GitHub59, https://github.com/MiguelAguilera/kinetic-Plefka-expansions (https://doi.org/10.5281/zenodo.4357634).

References

Nguyen, J. P. et al. Whole-brain calcium imaging with cellular resolution in freely behaving Caenorhabditis elegans. Proc. Natl Acad. Sci. USA 113, E1074 (2016).

Ahrens, M. B., Orger, M. B., Robson, D. N., Li, J. M. & Keller, P. J. Whole-brain functional imaging at cellular resolution using light-sheet microscopy. Nat. Methods 10, 413 (2013).

Stringer, C., Pachitariu, M., Steinmetz, N., Carandini, M. & Harris, K. D. High-dimensional geometry of population responses in visual cortex. Nature https://doi.org/10.1038/s41586-019-1346-5 (2019).

Nicolis, G. & Prigogine, I. Self-Organization in Nonequilibrium Systems: From Dissipative Structures to Order through Fluctuations. 1st edn. (Wiley, New York, 1977).

Tkačik, G. et al. Thermodynamics and signatures of criticality in a network of neurons. Proc. Natl Acad. Sci. USA 112, 11508 (2015).

Mora, T., Deny, S. & Marre, O. Dynamical criticality in the collective activity of a population of retinal neurons. Phys. Rev. Lett. 114, 078105 (2015).

Hertz, J., Roudi, Y. & Tyrcha, J. Ising model for inferring network structure from spike data. In Principles of Neural Coding, 527–546 (CRC Press, 2013).

Roudi, Y., Dunn, B. & Hertz, J. Multi-neuronal activity and functional connectivity in cell assemblies. Curr. Opin. Neurobiol. 32, 38 (2015).

Bouchaud, J. P. Crises and collective socio-economic phenomena: simple models and challenges. J. Stat. Phys. 151, 567 (2013).

Evans, D. J., Cohen, E. G. D. & Morriss, G. P. Probability of second law violations in shearing steady states. Phys. Rev. Lett. 71, 2401 (1993).

Jarzynski, C. Nonequilibrium equality for free energy differences. Phys. Rev. Lett. 78, 2690 (1997).

Crooks, G. E. Nonequilibrium measurements of free energy differences for microscopically reversible Markovian systems. J. Stat. Phys. 90, 1481 (1998).

Crooks, G. E. Entropy production fluctuation theorem and the nonequilibrium work relation for free energy differences. Phys. Rev. E 60, 2721 (1999).

Lebowitz, J. L. & Spohn, H. A Gallavotti-Cohen-type symmetry in the large deviation functional for stochastic dynamics. J. Stat. Phys. 95, 333 (1999).

Ito, S., Oizumi, M. & Amari, S.-I. Unified framework for the entropy production and the stochastic interaction based on information geometry. Phys. Rev. Res. 2, 033048 (2020).

Evans, D. J. & Searles, D. J. The fluctuation theorem. Adv. Phys. 51, 1529 (2002).

Seifert, U. Stochastic thermodynamics, fluctuation theorems and molecular machines. Rep. Prog. Phys. 75, 126001 (2012).

Gaspard, P. Time Asymmetry in Nonequilibrium Statistical Mechanics. In Special Volume in Memory of Ilya Prigogine, 83–133 (John Wiley & Sons, Ltd, 2007).

Salinas, S. R. A. The Ising Model. In Introduction to Statistical Physics, Graduate Texts in Contemporary Physics (ed. Salinas, S. R. A.) 257–276 (Springer New York, New York, NY, 2001).

Ackley, D. H., Hinton, G. E. & Sejnowski, T. J. A learning algorithm for Boltzmann machines. Cogn. Sci. 9, 147 (1985).

Witoelar, A. & Roudi, Y. Neural network reconstruction using kinetic Ising models with memory. BMC Neurosci. 12, P274 (2011).

Donner, C. & Opper, M. Inverse Ising problem in continuous time: a latent variable approach. Phys. Rev. E 96, 062104 (2017).

Schneidman, E., Berry, M. J., Segev, R. & Bialek, W. Weak pairwise correlations imply strongly correlated network states in a neural population. Nature 440, 1007 (2006).

Cocco, S., Leibler, S. & Monasson, R. Neuronal couplings between retinal ganglion cells inferred by efficient inverse statistical physics methods. Proc. Natl Acad. Sci. USA 106, 14058 (2009).

Shimazaki, H., Amari, S.-i, Brown, E. N. & Grün, S. State-space analysis of time-varying higher-order spike correlation for multiple neural spike train data. PLoS Comput. Biol. 8, e1002385 (2012).

Tyrcha, J., Roudi, Y., Marsili, M. & Hertz, J. The effect of nonstationarity on models inferred from neural data. J. Stat. Mech. 2013, P03005 (2013).

Thouless, D. J., Anderson, P. W. & Palmer, R. G. Solution of 'Solvable model of a spin glass'. Philos. Mag. 35, 593 (1977).

Kappen, H. J. & Rodríguez, F. B. Efficient learning in Boltzmann machines using linear response theory. Neural Comput. 10, 1137 (1998).

Roudi, Y., Aurell, E. & Hertz, J. A. Statistical physics of pairwise probability models. Front. Comput. Neurosci. https://doi.org/10.3389/neuro.10.022.2009 (2009).

Roudi, Y., Tyrcha, J. & Hertz, J. Ising model for neural data: model quality and approximate methods for extracting functional connectivity. Phys. Rev. E 79, 051915 (2009).

Donner, C., Obermayer, K. & Shimazaki, H. Approximate inference for time-varying interactions and macroscopic dynamics of neural populations. PLoS Comput. Biol. 13, e1005309 (2017).

Plefka, T. Convergence condition of the TAP equation for the infinite-ranged Ising spin glass model. J. Phys. A 15, 1971 (1982).

Tanaka, T. Mean-field theory of Boltzmann machine learning. Phys. Rev. E 58, 2302 (1998).

Tanaka, T. A theory of mean field approximation. In Advances in Neural Information Processing Systems, 351–357 (1999).

Bhattacharyya, C. & Keerthi, S. S. Information geometry and Plefka's mean-field theory. J. Phys. A 33, 1307 (2000).

Tanaka, T. Information Geometry of Mean-Field Approximation. In Advanced mean field methods: Theory and practice 351–360 (MIT press, 2001).

Amari, S., Ikeda, S. & Shimokawa, H. Information Geometry of Alpha-Projection in Mean Field Approximation. In Advanced Mean Field Methods: Theory and Practice (MIT Press, 2001).

Kappen, H. J. & Spanjers, J. J. Mean field theory for asymmetric neural networks. Phys. Rev. E 61, 5658 (2000).

Roudi, Y. & Hertz, J. Dynamical TAP equations for non-equilibrium Ising spin glasses. J. Stat. Mech. 2011, P03031 (2011).

Roudi, Y. & Hertz, J. Mean field theory for nonequilibrium network reconstruction. Phys. Rev. Lett. 106, 048702 (2011).

Mézard, M. & Sakellariou, J. Exact mean-field inference in asymmetric kinetic Ising systems. J. Stat. Mech. 2011, L07001 (2011).

Mahmoudi, H. & Saad, D. Generalized mean field approximation for parallel dynamics of the Ising model. J. Stat. Mech. 2014, P07001 (2014).

Bachschmid-Romano, L., Battistin, C., Opper, M. & Roudi, Y. Variational perturbation and extended Plefka approaches to dynamics on random networks: the case of the kinetic Ising model. J. Phys. A 49, 434003 (2016).

Pressé, S., Ghosh, K., Lee, J. & Dill, K. A. Principles of maximum entropy and maximum caliber in statistical physics. Rev. Mod. Phys. 85, 1115 (2013).

Amari, S. & Nagaoka, H. Methods of information geometry. Vol. 191 (American Mathematical Soc., 2007).

Amari, S. Information geometry and its applications, Vol. 194 (Springer, 2016).

Amari, S., Kurata, K. & Nagaoka, H. Information geometry of Boltzmann machines. IEEE Trans. Neural Netw. 3, 260 (1992).

Oizumi, M., Tsuchiya, N. & Amari, S.-I. Unified framework for information integration based on information geometry. Proc. Natl Acad. Sci. USA 113, 14817 (2016).

Saul, L. K. & Jordan, M. I. Exploiting tractable substructures in intractable networks. In Advances in Neural Information Processing Systems 486–492 (1996).

Zhang, Y. & Barato, A. C. Critical behavior of entropy production and learning rate: Ising model with an oscillating field. J. Stat. Mech. 2016, 113207 (2016).

Crochik, L. & Tomé, T. Entropy production in the majority-vote model. Phys. Rev. E 72, 057103 (2005).

Noa, C. F., Harunari, P. E., de Oliveira, M. & Fiore, C. Entropy production as a tool for characterizing nonequilibrium phase transitions. Phys. Rev. E 100, 012104 (2019).

Sessak, V. & Monasson, R. Small-correlation expansions for the inverse Ising problem. J. Phys. A 42, 055001 (2009).

Granot-Atedgi, E., Tkačik, G., Segev, R. & Schneidman, E. Stimulus-dependent maximum entropy models of neural population codes. PLoS Comput. Biol. 9, e1002922 (2013).

Cofré, R., Videla, L. & Rosas, F. An introduction to the non-equilibrium steady states of maximum entropy spike trains. Entropy 21, 884 (2019).

Lynn, C. W., Cornblath, E. J., Papadopoulos, L., Bertolero, M. A. & Bassett, D. S. Non-equilibrium dynamics and entropy production in the human brain. Preprint at arXiv 2005.02526 (2020).

Schnakenberg, J. Network theory of microscopic and macroscopic behavior of master equation systems. Rev. Mod. Phys. 48, 571 (1976).

Aguilera, M. A unifying framework for mean field theories of asymmetric kinetic Ising systems [Dataset]. Zenodo https://doi.org/10.5281/zenodo.4318983 (2020).

Aguilera, M. A unifying framework for mean field theories of asymmetric kinetic Ising systems [Code]. GitHub https://doi.org/10.5281/zenodo.4357634 (2020).

Acknowledgements

We thank Yasser Roudi and Masanao Igarashi for valuable comments and discussions on this manuscript. This work was supported in part by the Cooperative Intelligence Joint Research between Kyoto University and Honda Research Institute Japan, MEXT/JSPS KAKENHI Grant Number JP 20K11709, and the grant of Joint Research by the National Institutes of Natural Sciences (NINS Program No. 01112005). M.A. was funded by the European Union’s Horizon 2020 research and innovation programme under the Marie Skłodowska-Curie grant agreement No 892715 and the University of the Basque Country UPV/EHU post-doctoral training program grant ESPDOC17/17, and supported in part by the Basque Government project IT 1228-19 and project Outonomy PID2019-104576GB-I00 by the Spanish Ministry of Science and Innovation.

Author information

Contributions

M.A., S.A.M., and H.S. designed and reviewed research; M.A. contributed analytical and numerical results; M.A., S.A.M., and H.S. wrote the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information Nature Communications thanks the anonymous reviewer(s) for their contribution to the peer review of this work. Peer reviewer reports are available.

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Aguilera, M., Moosavi, S.A. & Shimazaki, H. A unifying framework for mean-field theories of asymmetric kinetic Ising systems. Nat Commun 12, 1197 (2021). https://doi.org/10.1038/s41467-021-20890-5

DOI: https://doi.org/10.1038/s41467-021-20890-5
