Abstract

Complex behavior poses challenges in extracting models from experiment. An example is spin liquid formation in frustrated magnets like Dy2Ti2O7. Understanding has been hindered by issues including disorder, glass formation, and interpretation of scattering data. Here, we use an automated capability to extract model Hamiltonians from data, and to identify different magnetic regimes. This involves training an autoencoder to learn a compressed representation of three-dimensional diffuse scattering, over a wide range of spin Hamiltonians. The autoencoder finds optimal matches according to scattering and heat capacity data and provides confidence intervals. Validation tests indicate that our optimal Hamiltonian accurately predicts temperature and field dependence of both magnetic structure and magnetization, as well as glass formation and irreversibility in Dy2Ti2O7. The autoencoder can also categorize different magnetic behaviors and eliminate background noise and artifacts in raw data. Our methodology is readily applicable to other materials and types of scattering problems.

Similar content being viewed by others

Introduction

Extracting the correct interactions from experimental data is essential for modeling. For magnetic insulators, the model is described by the spin Hamiltonian equation, dictated by symmetry, single-ion properties, and electron overlap between ions. The problem of extracting a spin Hamiltonian from neutron scattering data (inverse scattering problem) is often ill-posed and compounded by the need to use theory to interpret scattering data. Further, the available experimental data may not be enough to accurately determine the model parameters because of limited access to experimental data, a large noise magnitude at each scattering wavevector, or systematic errors associated with, e.g., background subtraction. Selecting the optimal Hamiltonian to model the experimental data is often a formidable task, especially when many parameters must be simultaneously determined. Tools for doing so are needed to uncover the physics that is emerging from large classes of complex magnetic materials1,2.

Dy2Ti2O7 is a highly frustrated magnet showing complex behavior including spin liquid formation3,4,5,6,7,8. The magnetism originates from Dy3+ ions which behave as classical Ising spins on a pyrochlore lattice of corner-linked tetrahedra, as in Fig. 1a. Figure 1b shows the four essential magnetic interactions including a ferromagnetic coupling that results from the combination of exchange with a large dipolar interaction. This FM coupling makes Dy2Ti2O7 a canonical spin-ice material, i.e., the spins on each tetrahedron obey the ice rules that only allow for two-in two-out configurations9,10. This divergenc-free condition leads to a spin liquid with macroscopic degeneracy that features north and south charged magnetic monopoles interacting via a 1/r potential at elevated temperatures3. A full low temperature characterization demands the study of a vast number of spins subject to short and long-range interactions. Spin dynamics occurs through millisecond quantum tunneling processes11 and the measured characteristic equilibration time τ increases drastically upon lowering the temperature, leading to irreversible behavior below 600 mK12,13,14,15. This slowdown has resulted in major difficulties16,17 in measuring and interpreting experiments such as heat capacity at low temperatures.

a Atomic structure of Dy2Ti2O7 comprised of tetrahedra of magnetic Dy ions (blue) and nonmagnetic octahedra of oxygen ions (red) surrounding Ti ions (cyan). b The magnetic moments located on Dy ions are constrained by crystal field interactions to point in or out of the tetrahedra. They form a corner sharing pyrochlore lattice. The pathways of nearest neighbor (1), next-nearest-neighbor (2) and two inequivalent next-next-nearest neighbor (3 and 3′) interactions are shown as thick colored lines.

Here we introduce an autoencoder-based approach that can potentially address important modeling challenges, such as a proper background and noise subtraction, more reliable inference of model Hamiltonians, improved transferability to other physical systems, and efficiency. We apply our method to neutron scattering measurements of Dy2Ti2O7 in order to infer the optimal parameters for a dipolar spin ice model description.

Results

Neutron scattering measurements and simulations

Here we use diffuse neutron scattering from time-of-flight techniques (see Methods: Experimental details) on the CORELLI instrument at the Spallation Neutron Source, Oak Ridge National Laboratory to measure the magnetic state of Dy2Ti2O7. Three-dimensional (3D) volumes of diffuse scattering were measured in the 100 –960 mK temperature range. In view of the low temperature equilibration challenge, we undertake our analysis on data sets at 680 mK, which is low enough for correlations to be well developed but sufficiently high to reach equilibrium over a short time scale. Figure 2a shows the background-subtracted data at 680 mK. This is proportional to the modulus squared of the spin components in the wavevector space. However, an additional aspect of neutron scattering is that it samples only the spin components perpendicular to the wavevector transfer Q.

a The scattering function Sexp(Q) for the Dy2Ti2O7 crystal at 680 mK. b The same experimental data after being filtered by the autoencoder, \(S_{{\mathrm{AE}}}^{{\mathrm{exp}}}({\mathbf{Q}})\). c Simulated scattering data from the optimal model spin Hamiltonian, \(S_{{\mathrm{opt}}}^{{\mathrm{sim}}}({\mathbf{Q}})\). In going from (a) to (b), the autoencoder has filtered out experimental artifacts such as the red peaks, the missing data at the dark patches, etc. Using both scattering and heat capacity data, we determine the optimal spin Hamiltonian couplings to be J2 = 0.00(6) K, J3 = 0.014 (16) K and \(J_{3^\prime}\) = 0.096(12) K with J1 = 3.41 K and D = 1.3224 K having been fixed in prior work17.

We employ a dipolar spin-ice Hamiltonian that includes exchange terms up to third-nearest neighbors:

where Si can be viewed as an Ising spin of the ith ion, Fig. 1b. The model includes first, second, and two different third nearest neighbor interaction strengths, J1, J2, J3, and J3′, respectively. There is also a dipolar interaction with strength D, which couples the ith and the jth spins, according to their displacement vector rij. Prior work has determined D = 1.3224 K and J1 = 3.41 K to high accuracy17,18,19,20,21. In the present modeling effort, we seek to determine the three unknown parameters J2, J3, and J3′ without any use of prior knowledge. Given a model Hamiltonian H, we use Metropolis Monte Carlo to generate a simulated structure factor, Ssim(Q), to be compared with the experimental data Sexp(Q) (Methods: Simulation details).

Optimizing Hamiltonian for diffuse scattering data

In a direct approach, one might try to minimize the squared distance,

between the raw experiment and simulation data. We introduce a factor \(m\left( {\mathbf{Q}} \right) \in \{ 0,1\}\) masking selected Q-points where experimental artifacts can be identified (see Supplementary Fig. 4). The number of non-masked Q-points is \(N = \mathop {\sum}\nolimits_{\mathbf{Q}} m ({\mathbf{Q}}) \approx 1.2 \times 10^5\). We initially investigated optimization methods, such as the particle swarm method22, to overcome local barriers and find the global minimum of \(\chi _{S\left( {\mathbf{Q}} \right)}^2\). However, despite nominal success in optimization, we quickly ran into reliability issues stemming from errors in the experimental and simulation data. As we will discuss below, \(\chi _{S\left( {\mathbf{Q}} \right)}^2\) is both noisy and effectively flat around its minimum, such that many distinct model Hamiltonians could achieve similarly small values of the \(\chi _{S\left( {\mathbf{Q}} \right)}^2\) error measure. Thus, even if we could find the global minimum of \(\chi _{S\left( {\mathbf{Q}} \right)}^2\), it might still be far from the physically correct model for Dy2Ti2O7.

To address the ill-posed nature of this inverse scattering problem, we present two strategies: (1) We employ machine learning techniques to replace \(\chi _{S\left( {\mathbf{Q}} \right)}^2\). with our error measure \(\chi _{S_L}^2\) that is more robust to errors in the experimental and simulation data, and puts more weight on “characteristic features” of the structure factor. (2) Rather than reporting just the single “best” model, we sample from the entire set of Hamiltonian models for which the error measure is below some tolerance threshold. In this way, our method will report not just a model, but also a model uncertainty.

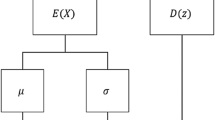

We use an autoencoder23 to formulate \(\chi _{S_L}^2\), our choice of error measure. Autoencoders were originally developed in the context of computer vision, where they are known to be effective at image compression and denoising tasks. Here we apply them to interpret structure factor data, and to disambiguate among many possible solutions of the inverse scattering problem. Our autoencoder is a neural network that takes an S(Q) as input (either simulated or experimental), encodes it into a compressed latent space representation SL, and then decodes to an output SAE(Q) that captures the essence of the input S(Q), while removing irrelevant noise and artifacts.

The autoencoder’s latent space \(S_L = (S_1,S_2, \ldots S_D)\) provides a low-dimensional characterization of the S(Q) data. The dimension D of the latent space should strike a balance between overfitting and underfitting. Keeping D relatively small limits the autoencoder’s ability to fit irrelevant noise in the training data. On the other hand, D should be large enough to allow the autoencoder flexibility to capture physically relevant characteristics in S(Q). We selected D = 30 based on the D-dependance of ΔS(Q) (error over the validation dataset). (see Supplementary Fig. 7)

Note that the physical S(Q) data will contain many more scalar components than the 30 available in the latent space. Thus, by design, the autoencoder’s output SAE(Q) can only be an approximation to its input S(Q). After proper training, one hopes that the autoencoder will be able to extract the relevant characteristics of a given S(Q), while discarding irrelevant information such as noise and experimental artifacts. The autoencoder determines what information is relevant according to its ability to encode and faithfully decode the training data (Methods: Training details).

We employ the simplest possible autoencoder architecture: a fully-connected neural network with a single hidden layer. The hidden space activations (i.e., the latent space representation) are defined as \(S_L = f(\mathop {\sum}\nolimits_{\mathbf{Q}} {W_{L,{\mathbf{Q}}}} m({\mathbf{Q}})S({\mathbf{Q}}) + b_L)\), where S(Q) is the input to the autoencoder, and the matrix WL,Q and vector bL are to be determined from the machine learning training process. Given simulated structure factor data as input, we interpolate to the experimental Q-points as necessary. The output of the autoencoder is defined as \(S_{{\mathrm{AE}}}({\mathbf{Q}}) = f( {\mathop {\sum}\nolimits_{L = 1}^{30} {W_{{\mathbf{Q}},L}^\prime } S_L + b_{\mathbf{Q}}^\prime })\), where the new matrix \(W_{{\mathbf{Q}},L}^\prime\) and vector \(b_{\mathbf{Q}}^\prime\) are also trainable. We employ the logistic activation function \(f\left( x \right) = 1/(1 + e^{ - x})\) at both layers. This choice guarantees that the output SAE(Q) is non-negative.

Figure 2 illustrates how the trained autoencoder processes the Dy2Ti2O7 scattering data. Figure 2a shows the raw experimental data Sexp(Q) while Fig. 2b shows how the autoencoder filters the experimental data to produce \(S_{{\mathrm{AE}}}^{{\mathrm{exp}}}\left( {\mathbf{Q}} \right)\). The autoencoder preserves important qualitative features of the data, while being very effective at removing experimental artifacts. Figure 2c shows the simulated data \(S_{{\mathrm{opt}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right)\) for the optimal Hamiltonian model Hopt, without any autoencoder filtering. We will describe later our procedure to determine Hopt. Note that the best model, \(S_{{\mathrm{opt}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right)\), is in remarkably good agreement with \(S_{{\mathrm{AE}}}^{{\mathrm{exp}}}\left( {\mathbf{Q}} \right)\). This agreement is consistent with the fact that the autoencoder was trained specifically to reproduce simulated data.

Figure 3 provides another way to understand how the autoencoder is processing the S(Q) data. In Fig. 3a we show a cross section of Sexp(Q) in the high symmetry plane \(\left( {\left[ {h, - h,0} \right] - \left[ {k,k, - 2k} \right]} \right)\). Figure 3b shows the corresponding simulated data \(S_{{\mathrm{opt}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right)\) for the optimal model Hamiltonian. Now we perturb Hopt to a new model Hperturb, which keeps all parameters from Hopt except modifies J2 from 0.16 K to −0.18 K. Despite the relatively significant change \(\Delta J_2 \cong 0.1J_1\), there is very little change to the structure factor. Indeed, Fig. 3c shows that \({\mathrm{\Delta }}S^{{\mathrm{sim}}} = S_{{\mathrm{opt}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right) - S_{{\mathrm{perturb}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right)\) is an order of magnitude smaller than the peaks in \(S_{{\mathrm{opt}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right)\), and relatively noisy. This illustrates the inherent difficulty of our inverse scattering problem: many J2 values seem to produce similarly good Hamiltonians.

a Experimental scattering data Sexp(Q) in the high symmetry plane. Dark patches indicate zero signal due to nuclear Bragg peaks. b The corresponding simulated data for the optimal model Hamiltonian. c Change in the simulated structure factor ΔSsim when the J2 coupling is perturbed from 0.16 K to −0.18 K. The relatively weak response ΔSsim to a perturbation in J2 of ±0.05 J1 illustrates the challenge in inferring the correct spin Hamiltonian. d–f The change in the autoencoder output when 1, 6, and 12 latent space components, respectively, are updated to account for the perturbation on J2 (see main text for details).

The autoencoder latent space can be effective in extracting and amplifying important S(Q) features that might otherwise be hidden. To show this, we consider the latent space representations SL and \(S_L^\prime\) for \(S_{{\mathrm{opt}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right)\) and \(S_{{\mathrm{perturb}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right)\), respectively. We ask: How much does SL need to be modified toward \(S_L^\prime\) in order to capture the important characteristics of \(S_{{\mathrm{perturb}}}^{{\mathrm{sim}}}\left( {\mathbf{Q}} \right)\), i.e., the perturbations to the structure factor? To answer this question, we replace 1, 6, and 12 latent space components of SL with the corresponding ones in \(S_L^\prime\). The components selected are those with the largest deviations, \(|S_L - S_L^\prime |\). Figure 3d–f show the change in autoencoder output, after substitution of the latent space components. Panel f captures some physically important characteristics of ΔSsim while discarding irrelevant noise. Latent space components beyond 12 carry little information about ΔSsim.

Now we show how the autoencoder can assist in solving the inverse scattering problem, i.e., in finding the optimal model Hamiltonian Hopt given the experimental data Sexp(Q). To illustrate the important ideas, we first focus on determining J2, assuming the other parameters of Hopt are already known.

Figure 4a shows \(\chi _{S({\mathbf{Q}})}^2\) as a function of J2, illustrating the difficulty in making direct comparisons between experimental and simulated scattering data, Eq. (2). In principle the minimum of \(\chi _{S({\mathbf{Q}})}^2\) would give J2, but in practice one must contend with relatively large uncertainties in the data. The visible scatter in Fig. 4a is mostly a consequence of limited statistics of the simulated data. Other sources of error, such as systematic experimental error, will also exist and are more difficult to quantify.

Inferring the spin Hamiltonian for Dy2Ti2O7. a \(\chi _{S({\mathbf{Q}})}^2\) directly measures the distance between experimental and simulated S(Q) data. It is relatively flat and noisy around its minimum, thus yielding a large uncertainty in the J2 coupling (any value below the dashed line, \(C_{S\left( {\mathbf{Q}} \right)}^2\), is a reasonable candidate). b \(\chi _{S_{{\mathrm{AE}}}({\mathbf{Q}})}^2\) measures the distance between S(Q) data after being filtered through the autoencoder. c \(\chi _{S_L}^2\) measures the distance between the 30-dimensional latent space representations of the S(Q) data. The Gaussian process model \(\hat \chi _{S_L}^2\left( H \right)\)(red curve) accurately approximates \(\chi _{S_L}^2\), even in the full space of J2, J3, and \(J_{3^\prime}\). d Once a good model \(\hat \chi _{S_L}^2(H)\) has been constructed, we can rapidly identify the optimal Hamiltonian model (magenta cross). The autoencoder-based error measure \(\chi _{S_L}^2\) yields much smaller model uncertainty (blue region) than the naïve one \(\chi _{S({\mathbf{Q}})}^2\) (cyan region). Model uncertainty is further reduced using a multi-objective error measure \(\chi _{{\mathrm{multi}}}^2\) that incorporates heat capacity data (dark-blue region). Three most popular Hamiltonian sets currently used from refs. 17,18,20 have also been marked as green, red and gray crosses respectively.

A natural modification is to replace \(\chi _{S({\mathbf{Q}})}^2\) with the squared distance of autoencoder-filtered structure factors,

This measure should be more robust to artifacts in both the experimental and simulation data. Indeed, as shown in Fig. 4b, it does slightly better in identifying an optimal J2. The behavior of \(\chi _{S_{{\mathrm{AE}}}\left( {\mathbf{Q}} \right)}^2\) as a function of the Hamiltonian parameters is similar to the one obtained from the latent space representation of the S(Q) data using a linear autoencoder, which is equivalent to the principal component analysis (PCA).

Here we propose an alternative error measure. The 30-dimensional latent space representation SL should, in some sense, capture the most relevant information in S(Q). This suggests that to compare Sexp(Q) to Ssim(Q) we should actually look at the squared distance of their latent space vectors

Figure 4c shows that this error measure produces the clearest minimum, and thus the most precise identification of J2. We will use \(\chi _{S_L}^2\) as our optimization cost function in what follows.

The inverse scattering problem for Dy2Ti2O7 requires finding not just one, but three unknown Hamiltonian parameters: J2, J3, and \(J_{3^\prime}\). We employ a variant of the Efficient Global Optimization algorithm to find the Hamiltonian Hopt that minimizes \(\chi _{S_L}^2\)24. In this approach, one iteratively constructs a dataset of carefully sampled Hamiltonians H. For each Hamiltonian H, we calculate a simulated structure factor Ssim(Q) and corresponding deviation \(\chi _{S_L}^2\) from the experimental data. With all such data, one builds a Gaussian process regression model \(\hat \chi _{S_L}^2(H)\) that predicts \(\chi _{S_L}^2\) for Hamiltonians H not yet sampled. The low-cost model \(\hat \chi _{S_L}^2(H)\) can be rapidly scanned over the space of Hamiltonians. Also, \(\hat \chi _{S_L}^2(H)\) acts as a denoiser, effectively “averaging out” uncorrelated stochastic errors in the \(\chi _{S_L}^2\) data. As more data is collected, the improved models \(\hat \chi _{S_L}^2(H)\) will progressively become more faithful to \(\chi _{S_L}^2\). Optimization, as described in methods (Methods: Optimization) gives the optimal parameters as J2 = 0.34(6) K, J3 = −0.134(18) K and \(J_{3^\prime}\) = 0.102(32) K. The red curve of Fig. 4c shows a cross section of the final \(\hat \chi _{S_L}^2(H)\) model. The minimum at J2 = 0 is readily apparent. The dashed line in Fig. 4a indicates our empirically selected error tolerance threshold \(C_{S({\mathbf{Q}})}^2\). The dashed line in Fig. 4c shows \(C_{S_L}^2\), the corresponding tolerance threshold for the latent space error. We calculated \(C_{S_L}^2\) from \(C_{S({\mathbf{Q}})}^2\) under the assumption of a fixed amount of uncertainty in the scattering data [Methods: Uncertainty quantification]. Figure 4d shows the three-dimensional regions of uncertainty corresponding to \(\chi _{S({\mathbf{Q}})}^2 \, < \, C_{S({\mathbf{Q}})}^2\) (cyan) and \(\chi _{S_L}^2 \, < \, C_{S_L}^2\) (blue).

Multi-modal optimization

With more experimental constraints, we can further reduce uncertainty in Hopt. For this purpose, we define a new error measure \(\chi _{{\mathrm{multi}}}^2 = \chi _{S_L}^2 \times \chi _{c_v}^2\), where \(\chi _{C_v}^2 = \left( {c_v^{{\mathrm{exp}}} - c_v^{{\mathrm{sim}}}} \right)^2\) denotes the squared error between experimental4 and simulated heat capacities, \(c_v = \frac{1}{{T^2}}\left\langle {U^2 - \left\langle U \right\rangle ^2} \right\rangle\). Minimizing this multi-objective error function slightly modifies the model parameters: J2 = 0.00(6) K, J3 = −0.014(16) K and \(J_{3^\prime}\) = 0.102(16) K, pictured as a green cross in Fig. 4d. But perhaps more importantly, the uncertainties in these parameters have decreased significantly. This is illustrated by the very compact dark-blue region in Fig. 4d, for which \(\chi _{{\mathrm{multi}}}^2 \, < \, C_{{\mathrm{multi}}}^2\), where \(C_{{\mathrm{multi}}}^2\) is again calculated as a function of \(C_{S({\mathbf{Q}})}^2\).

The agreement between Sexp(Q) and \(S_{{\mathrm{opt}}}^{{\mathrm{sim}}}({\mathbf{Q}})\) is quite good, as previously observed in Figs. 2 and 3. Further comparisons are shown in Supplementary Fig. 2. To truly validate the model, however, we should compare to experimental data that has not been used during the model optimization process. For this purpose, we use the magnetic field dependence of different physical properties shown in Fig. 5. The optimal spin model reproduces the measured field dependence of the magnetization25, the zero-field cooled (ZFC) and field cooled (FC) magnetic susceptibility12, and the diffuse scattering at multiple temperature and applied field conditions, confirming that we have indeed found a model Hamiltonian adequate to describe the magnetic properties of Dy2Ti2O7 including the onset of irreversibility and glassiness.

a Magnetization as a function of magnetic field and temperature, b zero-field cooled (ZFC) – field cooled (FC) susceptibility, c–f magnetic diffuse scattering measured at different temperature and magnetic field combinations: [680 mK, 0.2 T], [100 mK, 0 T], [680 mK, 0.6 T] and [900 mK, 0.2 T] respectively. All the experiments and simulations shown here are done under magnetic field along the [1,1,1] direction. The magnetization and the ZFC-FC data are extracted from refs. [5,26, respectively.

Discussion

Our present study has primarily focused on robust inference of the optimal model Hamiltonian. There are two important aspects of our methodology that we wish to emphasize. First, our use of an autoencoder, trained on large quantities of simulation data, provides a distance measure \(\chi _{S_L}^2\) that allows robust comparisons to experimental scattering data. Second, our use of Gaussian process regression models \(\hat \chi _{S_L}^2\) as a low-cost predictor for \(\chi _{S_L}^2\) improves the quality of our optimized Hamiltonians. Gaussian process regression averages out uncorrelated stochastic error in \(\chi _{S_L}^2\), and helps in making uncertainty estimates. The latter is crucial for guiding the design of future experiments or simulations. Whereas traditional analysis of diffraction and inelastic neutron scattering is time consuming and error prone, our methodology is fully automated, and helps overcome difficulties of visualizing 3D or 4D data.

Finally, we remark that the autoencoder latent space provides an interesting characterization of structure factor data in its own right. Supplementary Fig. 5 in the supplement illustrates how the 30-dimensional latent space variables map to S(Q). Supplementary Fig. 6 illustrates the activations of each latent space variable at varying points in the space of \(J_3 - J_{3^\prime}\) parameters.

Future studies might explore more direct application of autoencoders to the problems of background subtraction and of denoising experimental data. Here, we investigate another interesting application of the autoencoder: It can delineate different magnetic regimes. To demonstrate this, we will explore the space of J3 and \(J_{3^\prime}\) parameters, while keeping J2 = 0 K fixed. Our goal is to build a map of regimes with different dominant spatial magnetic correlations within this two-dimensional Hamiltonian space. We caution that the transitions between regimes will typically not be sharp phase transitions, so our modeling will not produce a phase diagram in the strict sense.

Figure 6a shows the result of our clustering analysis on the simulated data (Methods: Clustering). The optimal spin Hamiltonian for Dy2Ti2O7 is marked as Hopt near the center of this map. The corresponding Ssim(Q) data, sliced in the high symmetry plane, had previously been shown in Fig. 3b. Figure 6b–i show the Ssim(Q) data for alternative Hamiltonians, as marked on the map. It is clear from these results that the spin Hamiltonian of Dy2Ti2O7 is close to the confluence of multiple regimes. This fact reveals an additional source of complexity that explains the difficulties that were encountered in previous characterizations of this material. This analysis suggests a roadmap for further experimental studies. For example, the application of relatively small external fields and pressures or dopings should be enough to push Dy2Ti2O7 into new magnetic regimes. For instance, the proximity to the ferromagnetic phase (blue regime in Fig. 6a) indicates that the saturation field is small, as confirmed by magnetization, Fig. 5a.

The map is generated by varying J3 and \(J_{3^\prime}\), with the remaining Hamiltonian parameters J1, J2, and D being fixed to their optimal values. The colors in a indicate different groups of S(Q), which correspond to regimes with different patterns of magnetic correlations. b–i Simulated S(Q) data sliced in the high symmetry plane at specific points indexed on panel (a). The large dark-blue regime (1) corresponds to long-range ferromagnetic order, as indicated by the Bragg peaks in panel (b). c–i Correspond to different patterns of short-range correlations arising from subsets of states that still obey ice rules. The pattern (g) falls within the same orange regime as Hopt, and qualitatively captures the Coulombic correlations expected for spin ice [cf. experimental scattering data in Fig. 3a].

In summary, a fundamental bottleneck in experimental condensed matter physics is model optimization and assessment of confidence levels. We have developed a machine learning-based approach that addresses both challenges in an automated way. Applied to Dy2Ti2O7 our method produces a model that accounts for the diffuse scattering data as well as the lack of magnetic ordering at low temperature. Our approach readily extends to the analysis of dynamical correlations, parametric data sets in e.g. field and temperature, and other scattering data.

Methods

Experimental details

To measure the diffuse scattering of Dy2Ti2O7 an isotopically enriched single crystal sample of Dy2Ti2O7 was grown using an optical floating-zone method in a 5 atm oxygen atmosphere. Starting material Dy2O3 (94.4% Dy-162) and TiO2 powder were first mixed in proper ratios and then annealed in air at 1400 °C for 40 h before growth in the image furnace as previously described26. Then the sample was further annealed in oxygen at 1400 °C for 20 h after the floating-zone growth. The lack of a nuclear spin moment in Dy-162 means that nuclear spin relaxation channels for the spins are cut off which is important in order to study the quench behavior in the material. In addition, the incoherent scattering from natural dysprosium is high (54.4 barns) whereas for Dy-162 it is zero and the absorption cross section is decreased from 994 barns (2200 ms−1 neutrons) for natural dysprosium to 194 barns for isotope 162. A best growth was achieved with a pulling speed of 3 mm/hour. One piece of crystal with the mass ≈ 200 mg was aligned in the (111) plane for the neutron investigation at the single crystal diffuse scattering spectrometer CORELLI at the Spallation Neutron Source, Oak Ridge National Laboratory. The crystal was prepared as a sphere to minimize absorption corrections and demagnetization corrections.

CORELLI is a time-of-fight instrument where the elastic contribution is separated by a pseudo-statistical chopper27. The crystal was rotated through 180 degrees with the step of 5 degree horizontally with the vertical angular coverage of ±8 degree (limited by the magnet vertical opening) for survey on the elastic and diffuse peaks in reciprocal space. The dilution refrigerator insert and cryomagnet were used to enable the measurements down 100 mK and fields up to 1.4 T. The data were reduced using Mantid28 and Python script available at Corelli. Background runs at 1.4 Tesla were made to remove all diffuse signal and the extra scattering at Bragg peak positions due to the polarized spin contribution was accounted for by using the zero field intensities. (see Supplementary Fig. 01) Figs. 2a and 3a shows a 3D plot and a slice of the high symmetry plane of the background-subtracted diffuse scattering measurement at 680 mK and 0 T respectively.

Simulations details

Given a model Hamiltonian H, we use Metropolis Monte Carlo to generate a simulated structure factor, Ssim(Q), to be compared with the experimental data Sexp(Q). We use simulated annealing to properly estimate Ssim(Q)29. Beginning at an initial temperature of 50 K, we iterate through 11 exponentially spaced intermediate temperatures, until finally reaching the target temperature of 680 mK. At each intermediate temperature, 5 × 106 Monte-Carlo sweeps were performed. At every sweep, each spin is updated once on average, according to the Metropolis acceptance criterion30. We perform our simulations using 4 × 4 × 4 cubic supercells, giving a total of 1024 spins in the pyrochlore lattice. The magnetic form factor of Dy3+ and the neutron scattering polarization factor that enter in the calculation of the spin structure factor, S(Q), are accounted before comparison to the background corrected experimental data. To correctly account for the long-range dipolar interactions, we used Ewald summation31, implemented with the fast Fourier transform.

Training details

To train the autoencoder, we require a dataset sufficiently broad to cover all potentially important characteristic features of the Dy2Ti2O7 scattering data. For this purpose, we employ 1000 model Hamiltonians of the form Eq. (1). Each model has individually randomized coupling strengths J2, J3, and J3′, sampled uniformly from the range −0.6 K and 0.6 K. For each model, we use simulated annealing to generate equilibrated three-dimensional Ssim(Q) data at the target temperature of 680 mK. Our training data will thus consist of 1000 model Hamiltonians, each labeled by simulated data. The autoencoder tries to minimize the deviation between its input Ssim(Q). and filtered output, summed over all random models in the dataset.

Training the autoencoder corresponds to determining the parameters (i.e., W, b, W′, and b′) that minimize a loss function \({\cal{L}}\). Primarily, we are interested in minimizing the squared error between the simulated data and autoencoder-filtered output, summed over all models H in the training dataset,

The second and third terms are relatively weak, and include two types of regularization: An L2 regularization on the weight matrices W and W′, and a sparsity regularization on the latent space activations SL32. The sparsity regularization is a Kullback-Leibler divergence of average activation value, \(\hat \rho _D\) of the hidden layer neurons and the desired average activation value, ρ has been set to 0.05. The regularizer coefficients λ and β are set to 0.001 and 1, respectively. This regularization seems to improve the physical interpretability of the latent space representation. Despite having millions of trainable parameters in the neural network, the autoencoder does not seem prone to overfitting; the low-dimensionality of the latent space itself acts as a strong regularizer. To find the model parameters that minimize \({\cal{L}}\), we use the scaled conjugate gradient descent algorithm33, as it is implemented in Matlab. We also experimented with a Keras autoencoder implementation, and found that it made little qualitative difference in our final results.

We found that a simple fully-connected autoencoder works well for experimental artifact removal from diffuse scattering data although other architectures can be explored in detail such as multilayer convolutional neural networks (CNN) or variational autoencoders. Note that artifact removal is inherent to our implementation of the AE due to the nature of the training data. Specifically, our dataset contained only simulated S(Q) data, and the autoencoder is trained to reproduce that. Because experimental artifacts are not present in the simulated data, the autoencoder inherently filters them out.

Optimization

Optimization proceeds iteratively. We initially select 100 random Hamiltonians, where J2, J3, and \(J_{3^\prime}\) are each sampled uniformly from the range −0.6 K to 0.6 K. At each subsequent iteration, we use all available data to build \(\hat \chi _{S_L}^2\), the low-cost approximator to \(\chi _{S_L}^2\). Next, we randomly select 100 new Hamiltonians H for inclusion in the dataset, each being sampled uniformly, subject to the constraint \(\hat \chi ^2\left( H \right) \, < \, c\). The cut-off parameter c decreases exponentially, rescaling by a factor 0.9 at each iteration. Consequently, later iterations in the optimization procedure are focused on regions where \(\chi _{S_L}^2\) is smallest. The optimization procedure terminates after about 40 iterations, at which point we take Hopt to be the minimizer of \(\hat \chi _{S_L}^2(H)\).

Uncertainty quantification

How can we compare uncertainties of J2, as estimated from \(\chi _{S({\mathbf{Q}})}^2\) vs. \(\chi _{S_L}^2\)? From Fig. 4a alone, one might estimate that J2 could lie anywhere between −0.3 K and 0.5 K. This is the region for which \(\chi _{S({\mathbf{Q}})}^2 \, < \, C_{S\left( {\mathbf{Q}} \right)}^2\), where \(C_{S\left( {\mathbf{Q}} \right)}^2\) is an empirically selected tolerance denoted by the dashed horizontal line. Working backwards, we can then ask: How much noise in the simulated Ssim(Q) data would it take for \(C_{S\left( {\mathbf{Q}} \right)}^2\) to be the actual stochastic uncertainty in \(\chi _{S({\mathbf{Q}})}^2\)? Assuming that Ssim(Q) contains this level of noise magnitude, we can then measure the corresponding stochastic uncertainty \(C_{S_L}^2\) of \(\chi _{S_L}^2\), which we plot as the dashed line in Fig. 4c. Comparing with Fig. 4a, we conclude that the autoencoder-based error measure \(\chi _{S_L}^2\) is more robust to stochastic noise, i.e., allows more precise estimation of J2. Thus, we have selected \(\chi _{S_L}^2\) as the best cost function for inferring the model Hamiltonian from the structure factor data.

Given experimental heat capacity data cv, we introduced a multi-objective cost function \(\chi _{{\mathrm{multi}}}^2\). Repeating the same procedure as above, we can define the multi-objective tolerance threshold \(C_{{\mathrm{multi}}}^2\) in terms of the raw tolerance \(C_{S\left( {\mathbf{Q}} \right)}^2\).

Clustering

To determine magnetic regimes, we employ the agglomerative hierarchical clustering algorithm34. For this, we use the same dataset as was used to train the autoencoder, i.e., a random selection of 1000 model Hamiltonians, and their corresponding Ssim(Q) data. The clustering algorithm requires as input the pairwise distances between all points in the dataset. We again employ the squared distance in the autoencoder latent space, i.e., as it appeared in \(\chi _{S_L}^2\).

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Code availability

The computer codes that support the finding of this study are available from the corresponding author upon reasonable request.

References

Gardner, J. S., Gingras, M. J. & Greedan, J. E. Magnetic pyrochlore oxides. Rev. Mod. Phys. 82, 53 (2010).

Tennant, D. Studies of spinons, majoranas, and monopoles in spin liquid and quantum critical magnets with neutrons. J. Phys. Soc. Jpn. 88, 081009 (2019).

Castelnovo, C., Moessner, R. & Sondhi, S. L. Magnetic monopoles in spin ice. Nature 7174, 42 (2008).

Morris, D. J. P. et al. Dirac strings and magnetic monopoles in the spin ice Dy2Ti2O7. Science 5951, 411–414 (2009).

Fennell, T. et al. Magnetic Coulomb phase in the spin ice Ho2Ti2O7. Science 326, 415–417 (2009).

Henley, C. L. The “Coulomb phase” in frustrated systems. Annu. Rev. Condens. Matter Phys. 1, 179–210 (2010).

Castelnovo, C., Moessner, R. & Sondhi, S. L. Spin ice, fractionalization, and topological order. Annu. Rev. Condens. Matter Phys. 3, 35–55 (2012).

Rehn, J. & Moessner, R. Maxwell electromagnetism as an emergent phenomenon in condensed matter. Philos. Trans. R. Soc. A: Math., Phys. Eng. Sci. 374, 20160093 (2016).

Harris, M. J., Bramwell, S. T., McMorrow, D. F., Zeiske, T. H. & Godfrey, K. W. Geometrical frustration in the ferromagnetic pyrochlore Ho2Ti2O7. Phys. Rev. Lett. 79, 2554 (1997).

Bramwell, S. T. & Gingras, M. J. P. Spin ice state in frustrated magnetic pyrochlore materials. Science 294, 1495–1501 (2001).

Castelnovo, C., Moessner, R. & Sondhi, S. L. Thermal quenches in spin ice. Phys. Rev. Lett. 104, 107201 (2010).

Snyder, J. et al. Low-temperature spin freezing in the Dy2Ti2O7 spin ice. Phys. Rev. B 69, 064414 (2004).

Snyder, J., Slusky, J. S., Cava, R. J. & Schiffer, P. How ‘spin ice’freezes. Nature 413, 48 (2001).

Jaubert, L. D. C. & Holdsworth, P. C. W. Magnetic monopole dynamics in spin ice. J. Phys.: Condens. Matter 23, 164222 (2011).

Jaubert, L. D. C. & Holdsworth, P. C. W. Signature of magnetic monopole and Dirac string dynamics in spin ice. Nat. Phys. 5, 258 (2009).

Melko, R. G., den Hertog, B. C. & Gingras, M. J. P. Long-range order at low temperatures in dipolar spin ice. Phys. Rev. Lett. 87, 067203 (2001).

Borzi, R. A. et al. Intermediate magnetization state and competing orders in Dy2Ti2O7 and Ho2Ti2O7. Nat. Commun. 7, 12592 (2016).

Yavors’kii, T., Fennell, T., Gingras, M. J. & Bramwell, S. T. Dy2Ti2O7 spin ice: a test case for emergent clusters in a frustrated magnet. Phys. Rev. Lett. 101, 037204 (2008).

Henelius, P. et al. Refrustration and competing orders in the prototypical Dy2Ti2O7 spin ice material. Phys. Rev. B 93, 024402 (2016).

Bovo, L. et al. Special temperatures in frustrated ferromagnets. Nat. Commun. 9, 1999 (2018).

Giblin, S. R. et al. Pauling entropy, metastability, and equilibrium in Dy2Ti2O7 Spin Ice. Phys. Rev. Lett. 121, 067202 (2018).

Pérez, J. I. H. Parallel Architectures and Bioinspired Algorithms. (Springer, Heidelberg, 2012)..

Goodfellow, I., Bengio, Y. & Courville, A. Deep Learning (MIT press, 2016).

Jones, D. R., Matthias, S. & Welch, W. J. Efficient global optimization of expensive black-box functions. J. Glob. Optim. 13, 455–492 (1998).

Matsuhira, K., Hiroi, Z., Tayama, T., Takagi, S. & Sakakibara, T. A new macroscopically degenerate ground state in the spin ice compound Dy2Ti2O7 under a magnetic field. J. Phys.: Condens. Matter 14, L559 (2002).

Li, Q. J. et al. Single crystal growth of the pyrochlores R2Ti2O7 (R = rare earth) by the optical floating-zone method. J. Cryst. Growth 377, 96–100 (2013).

Ye, F., Liu, Y., Whitfield, R., Osborn, R. & Rosenkranz, S. Implementation of cross correlation for energy discrimination on the time-of-flight spectrometer CORELLI. J. Appl. Crystallogr. 51, 315–322 (2018).

Arnold, O. et al. Mantid—Data analysis and visualization package for neutron scattering and μ SR experiments. Nucl. Instrum. Methods Phys. Res, 764, 156–166 (2014).

Kirkpatrick, S., Gelatt, C. D. & Vecchi, M. P. Optimization by simulated annealing. Science 220, 671–680 (1983).

Metropolis, N. et al. Equation of state calculations by fast computing machines. J. Chem. Phys. 21, 1087–1092 (1953).

Rapaport, D. C. & Rapaport Rapaport, D. C. The Art of Molecular Dynamics Simulation (Cambridge University Press, 2004).

Olshausen, B. A. & David, J. F. Sparse coding with an overcomplete basis set: a strategy employed by V1? Vis. Res. 37, 3311–3325 (1997).

Møller, M. F. A scaled conjugate gradient algorithm for fast supervised learning. Neural Netw. 6, 525–533 (1993).

Zhang W., Wang X., Zhao D. & Tang X. Graph degree linkage: Agglomerative clustering on a directed graph. European Conference on Computer Vision 428–441 (Springer, Berlin, Heidelberg, 2012).

Acknowledgements

A.M.S., Q.Z., and F.Y. acknowledge the support from the US DOE office of scientific user facilities. Z.L.D and H.D.Z thank the NSF for support with grant number DMR-1350002. A portion of this research used resources at Spallation Neutron Source, a DOE Office of Science User Facility operated by the Oak Ridge National Laboratory. The research by D.A.T. was sponsored by the DOE Office of Science, Laboratory Directed Research and Development program (LDRD) of Oak Ridge National Laboratory, managed by UT-Battelle, LLC for the U.S. Department of Energy. (Project ID 9566). Support for Q.Z. was provided by US DOE under EPSCoR Grant No. DESC0012432 with additional support from the Louisiana board of regent. K.B. acknowledges support from the LDRD program at Los Alamos National Laboratory. Y.W.L., M.E., and resources for computer modeling are sponsored by the Oak Ridge Leadership Computing Facility, which is supported by the Office of Science of the U.S. Department of Energy under contract no. DE-AC05-00OR22725. D.A.T. and A.M.S. would like to thank Guannan Zhang for useful discussions.

Author information

Authors and Affiliations

Contributions

D.A.T. conceived and coordinated the project. H.Z. and Z.L.D. prepared and characterized the high-quality single crystal. A.M.S., D.A.T, F.Y., and Q.Z. performed the neutron experiments. A.M.S., D.A.T., and Q.Z. undertook the initial data analysis. A.M.S. performed the numerical simulations and machine learning analysis with input and guidance from D.A.T., C.D.B., K.B., Y.W.L, S.A.G., and M.E. D.A.T., A.M.S., C.D.B., K.B., and S.A.G. wrote the paper. V.S. contributed in double checking and verifying ML results. All the authors discussed the data and its interpretation.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Peer review information Nature Communications thanks Ying-Jer Kao and the other, anonymous, reviewer(s) for their contribution to the peer review of this work. Peer reviewer reports are available.

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Samarakoon, A.M., Barros, K., Li, Y.W. et al. Machine-learning-assisted insight into spin ice Dy2Ti2O7. Nat Commun 11, 892 (2020). https://doi.org/10.1038/s41467-020-14660-y

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41467-020-14660-y

This article is cited by

-

Understanding temperature-dependent SU(3) spin dynamics in the S = 1 antiferromagnet Ba2FeSi2O7

npj Quantum Materials (2023)

-

Capturing dynamical correlations using implicit neural representations

Nature Communications (2023)

-

Classification of magnetic order from electronic structure by using machine learning

Scientific Reports (2023)

-

Integration of machine learning with neutron scattering for the Hamiltonian tuning of spin ice under pressure

Communications Materials (2022)

-

Investigation of the monopole magneto-chemical potential in spin ices using capacitive torque magnetometry

Nature Communications (2022)

Comments

By submitting a comment you agree to abide by our Terms and Community Guidelines. If you find something abusive or that does not comply with our terms or guidelines please flag it as inappropriate.