Abstract

To increase transparency in science, some scholarly journals are publishing peer review reports. But it is unclear how this practice affects the peer review process. Here, we examine the effect of publishing peer review reports on referee behavior in five scholarly journals involved in a pilot study at Elsevier. By considering 9,220 submissions and 18,525 reviews from 2010 to 2017, we measured changes both before and during the pilot and found that publishing reports did not significantly compromise referees’ willingness to review, recommendations, or turn-around times. Younger and non-academic scholars were more willing to accept to review and provided more positive and objective recommendations. Male referees tended to write more constructive reports during the pilot. Only 8.1% of referees agreed to reveal their identity in the published report. These findings suggest that open peer review does not compromise the process, at least when referees are able to protect their anonymity.

Introduction

Scholarly journals are coping with increasing requests for transparency and accountability of their internal processes by academics and various science stakeholders1. This sense of urgency is due to the increased importance of publications for tenure and promotion in an academic job market, which is now hypercompetitive worldwide2. Not only could biased peer review distort academic credit allocation; bias could also have negative implications for scientific knowledge and innovation, and erode the legitimacy and credibility of science3,4,5,6.

Under the imperative of open science, certain learned societies, publishers and journals have started to experiment with open peer review as a means to open the black box of internal journal processes7,8,9. The need for more openness and transparency of peer review has been a subject of debate since the 1990s10,11,12. Recently, some journals, such as The EMBO Journal, eLife and those from Frontiers, have enabled various forms of pre-publication interaction and collaboration between referees, editors and in some cases even authors, with F1000 implementing advanced collaborative platforms to engage referees in post-publication open reviews. Although very important, these experiments have not led to a univocal and consensual framework13,14. This is because they have been performed only by individual journals, and mostly without any attempts to measure the effect of manipulation of peer review across different journals15,16.

Our study aims to fill this gap by presenting data on an open peer review pilot run at five Elsevier journals in different fields simultaneously, in which referees were asked to agree to publish their reports. Starting with 62,790 individual observations, including 9,220 submissions and 18,525 completed reviews from 2010 to 2017, we estimated referee behavior before and during the pilot in a quasi-natural experiment. In order to minimize any bias due to the non-experimental randomization of these five pilot journals, we accessed similar data on a set of comparable Elsevier journals, thereby reaching a total of 138,117 individual observations, including 21,647 manuscripts (pilot plus control-group journals).

Our aim was to understand whether knowing that their report would be published affected the referees’ willingness to review, the type of recommendations, the turn-around time and the tone of the report. These are all aspects that must be considered when assessing the viability and sustainability of open peer review. By reconstructing the gender and academic status of referees, we also wanted to understand whether these innovations were perceived differently by certain categories of scholars8,17.

It is important here to note that while open peer review is an umbrella term for different approaches to transparency13, publishing peer review reports is probably the most important and least problematic form. Unlike pre-publication open interaction, post-publication or decoupled reviews, this form of openness neither requires complex management technologies nor depends on external resources (e.g., a self-organized volunteer community). At the same time, not only do open peer review reports increase transparency of the process, they also stimulate reviewer recognition and transform reports into training material for other referees1,7,8.

Results

The Pilot

In November 2014, five Elsevier journals agreed to be involved in the Publication of Peer Review reports as articles (from now on, PPR) pilot. During the pilot, these five journals openly published typeset peer review reports with a separate DOI, fully citable and linked to the published article on ScienceDirect. Review reports were made freely available regardless of the journal’s subscription model (two of these journals were open access, while three were published under the subscription-based model). For each accepted article, all revision round review reports were concatenated under the first round for each referee, with all content published as a single review report. Different sections were used in cases of multiple revision rounds. For the sake of simplicity, once they had agreed to review, referees were not given any opt-out choice and were asked to give their consent to reveal their identity. In agreement with all journal editors, a text was added to the invitation letter to inform referees about the PPR pilot and their options. At the same time, authors themselves were fully informed about the PPR when they submitted their manuscripts. Note that while one of these journals started the pilot earlier, in 2012, for all journals the pilot ended in 2017 (further details are in the SI).

Figure 1 shows the overall submission trend in these five journals during the period considered in this study. We found a general upward trend in the number of submissions, although this probably did not reflect specific trends due to the pilot (see details in the SI file).

Following previous studies18, in order to increase the coherence of our analysis, we only considered the first round of review, i.e., 85% of observations in our dataset. By observation, we mean any relevant event or activity that was recorded in the journal database, e.g., the day a referee responded to the invitation or the recommendation he/she provided (see Methods).

Willingness to review

We found that only 22,488 (35.8%) of invited referees eventually agreed to review, with a noticeable difference before and after the beginning of the pilot, 43.6% vs. 30.9%. However, it is worth noting that while the acceptance rate varied significantly among journals, there was an overall declining trend, possibly starting before the beginning of the pilot (Fig. 2).

Descriptive statistics also highlighted certain changes in referee profile. More senior academic professors were less likely to agree to review during the pilot, whereas younger scholars, with or without a Ph.D. degree, were keener to review. We did not find any relevant gender effect (Fig. 3).

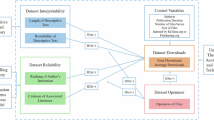

The first impression was that fewer potential referees agreed to review during the pilot. However, considering that the number of review invitations increased over time, this may have simply reflected the larger number of editorial requests. To control for these possible confounding factors, we estimated a mixed-effect logistic model with referees’ acceptance of editors’ invitation as outcome. To consider the problem of repeated observations on the same paper and the across-journal nature of the dataset, we also included random effects for both the individual submission and the journal. Besides the open review dummy, we estimated fixed effects for the year, where the start date of the dataset was indicated as zero and each subsequent year by increasing integers, the referee’s declared status, with “professor”, “doctor” and “other” as levels, and the referee’s gender, with three levels, “female”, “male” and “uncertain” (when our text mining algorithm did not assign a specific gender). The year variable allowed us to control for any underlying trend in the data, such as the increased number of submissions and reviews, or the increased referee pool. Furthermore, to check whether the open review condition had a different effect on specific sub-groups of referees, we estimated fixed effects for the interaction between this variable and the status and gender of referees (Table 1).
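The coding of the fixed effects described above can be illustrated with a minimal Python sketch. It is not the authors' code (the models were estimated in R); the function name `encode_invitation`, the column names, and the choice of "professor" and "female" as reference levels are assumptions made only for illustration.

```python
STATUS_LEVELS = ("professor", "doctor", "other")
GENDER_LEVELS = ("female", "male", "uncertain")


def encode_invitation(year, start_year, open_review, status, gender):
    """Encode one review invitation as a row of fixed-effect predictors.

    The first level of each factor is used as the reference level
    (an assumption; the source does not state the reference levels).
    """
    if status not in STATUS_LEVELS:
        raise ValueError(f"unknown status: {status!r}")
    if gender not in GENDER_LEVELS:
        raise ValueError(f"unknown gender: {gender!r}")

    row = {
        # 1 if the invitation was sent under the open review condition
        "open_review": int(open_review),
        # start of the dataset is zero, each later year an increasing integer
        "year": year - start_year,
    }
    # Dummy (treatment) coding for the non-reference factor levels
    for level in STATUS_LEVELS[1:]:
        row[f"status_{level}"] = int(status == level)
    for level in GENDER_LEVELS[1:]:
        row[f"gender_{level}"] = int(gender == level)
    # Interactions between the open review dummy and status / gender
    for key in list(row):
        if key.startswith(("status_", "gender_")):
            row[f"open_review:{key}"] = row["open_review"] * row[key]
    return row
```

In a mixed-effect model, each such row would be paired with the submission and journal identifiers, which enter as random intercepts rather than as columns of this kind.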

Results suggest that the apparent decline of review invitation acceptance simply reflected a time trend, which was independent of the open review condition and probably due to the increasing number of submissions and requests. The pure effect of the open review condition was not statistically significant. Furthermore, although several referee characteristics had an effect on the willingness to review, only the interaction effect with the “other” status was significant. Referees without a professor or doctoral degree, and so probably younger or non-academic, were actually keener to review during the pilot. Moreover, by comparing the pilot with a sample of five comparable Elsevier journals, we found that this decline of willingness to review was neither journal-specific nor trial-induced, i.e., influenced by open peer review (see Supplementary Tables 1–3 and Supplementary Figure 1).

Recommendations

The distribution of recommendations changed slightly during the pilot, with more frequent rejections and major revisions (Fig. 4). On the other hand, the distribution of recommendations by referees who accepted to have their names published with the report was noticeably different, with many more positive recommendations. Given that revealing identity was a decision made by referees themselves after completing their review, it is probable that these differences in recommendations reflect a self-selection process. Referees who wrote more positive reviews were keener to reveal their identity later as a reputational signal to authors and the community. However, it is worth noting that only a small minority of referees (about 8.1%) accepted to have their names published together with their report.

In order to control for time trends and journal characteristics, we estimated another model, including the open review dummy and all relevant interaction effects. As the outcome was an ordinal variable with four levels (reject, major revisions, minor revisions, accept), we estimated a mixed-effect cumulative-link model including the same random and fixed effects as the previous model. Table 2 shows that the pilot did not bias recommendations. Among the various referee characteristics, only referee status had any significant interaction effect, with younger and non-academic referees (i.e., the “other” group) submitting on average more positive recommendations. Note that these results were confirmed by our robustness check with five comparable Elsevier journals not involved in the pilot (Supplementary Table 2).
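To make the structure of a cumulative-link model more concrete, the following Python sketch shows how a linear predictor and three ordered thresholds translate into probabilities for the four recommendation levels. It assumes a logit link, which the text does not state explicitly, and it leaves out the random effects; the function names are hypothetical, and the numbers in the usage lines are illustrative values, not estimates from the paper.

```python
import math

CATEGORIES = ("reject", "major revisions", "minor revisions", "accept")


def logistic(x):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-x))


def category_probabilities(eta, thresholds):
    """Return P(recommendation = category) under a cumulative logit model.

    eta        -- linear predictor (fixed plus random effects) for one review
    thresholds -- three increasing cut-points separating the four categories
    """
    if len(thresholds) != len(CATEGORIES) - 1:
        raise ValueError("need exactly one threshold between adjacent categories")
    if list(thresholds) != sorted(thresholds):
        raise ValueError("thresholds must be in increasing order")

    # P(Y <= j) = logistic(theta_j - eta); the last cumulative probability is 1
    cumulative = [logistic(t - eta) for t in thresholds] + [1.0]
    probabilities = {}
    previous = 0.0
    for category, cum in zip(CATEGORIES, cumulative):
        probabilities[category] = cum - previous
        previous = cum
    return probabilities


# Illustrative values only (not estimates from the paper)
example = category_probabilities(eta=0.3, thresholds=(-1.0, 0.0, 1.0))
```

A larger linear predictor shifts probability mass towards the more favourable categories, which is the sense in which a positive coefficient corresponds to more positive recommendations.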

Review time

We analysed the number of days referees took to submit their report before and after the beginning of the pilot. Previous research suggests that open peer review could increase review time as referees could be inclined to write their reports in more structured and correct language, given that they are eventually published8. The average 28.2 ± 4.6 days referees took to complete their reports before the pilot increased to 30.4 ± 4.4 days during it. However, after estimating models that considered the increasing number of observations over time, we did not find any significant effect on turn-around time (see Table 3). When considering interaction effects, we only found that referees with a doctoral degree tended to take more time to complete their report, but differences were minimal. Note that results were further confirmed by analysing five comparable Elsevier journals not involved in the pilot (Supplementary Table 3).

Review reports

In order to examine whether the linguistic style of reports changed during the pilot, we performed a sentiment analysis on the text of reports by considering polarity—i.e., whether the tone of the report was mainly negative or positive (varying in the [−1, 1] interval, with larger numbers indicating a more positive tone)—and subjectivity—i.e., whether the style used in the reports was predominantly objective ([0, 1] interval, higher numbers indicating more subjective reports). A graphical analysis showed only minimal differences before and during the pilot, with reviews only slightly more severe and objective in the open peer review condition (Fig. 5).

Two mixed-effects models were estimated using the polarity and subjectivity indexes as outcomes. The pilot dummy, the recommendation, the log of the number of characters of the report, the year, and the gender and status of the referees (plus interactions) were included as fixed effects. As before, the submission and journal IDs were used as random effects. Table 4 shows that the pure effect of open review was not significant. However, we found a positive and significant interaction effect with gender. Indeed, male referees tended to write more positive reports under the open review condition, although this effect was statistically significant only at the 5% level. Given the large number of observations in our dataset, any inference about open peer review effects from such a significance level should be made cautiously19.

When testing a similar model on subjectivity, we only found that younger and non-academic referees were more objective, whereas no significant effect was found for other categories (Table 5).

Discussion

Our findings suggest that open peer review does not compromise the inner workings of the peer review system. Indeed, we did not find any significant negative effects on referees’ willingness to review, their recommendations, or turn-around time. This contradicts recent research on individual cases, in which various forms of open peer review had a negative effect on these same factors16,20. Here, only younger and non-academic referees were slightly sensitive to the pilot. They were more keen to accept to review, more objective in their reports, and less demanding on the quality of submissions when under open peer review, but effects were minor.

Interestingly, we found that the tone of the report was less negative and subjective, at least when referees were male and younger. While this could be expected for referees opting to reveal their identity, as this could be a reputational signal for future cooperation by published authors, it was also true when referees decided not to reveal their identity.

However, it is worth noting that unlike recent survey results14, here only 8.1% of referees agreed to reveal their identity. Although certain benefits of open science and open evaluation are incontrovertible21,22, our findings suggest that the veil of anonymity is key also for open peer review. It is probable that this reflects the need for protection from possible retaliation or other unforeseen implications of open peer review, perhaps as a consequence of the hyper-competition that currently dominates academic institutions and organizations23,24. In any case, this means that research is still needed to understand the appropriate level of transparency and openness of internal processes of scholarly journals8,13.

In this respect, although our cross-journal dataset allowed us to have a more composite and less fragmented picture of peer review25, it is possible that our findings were still context specific. For instance, a recent survey on scientists’ attitudes towards open peer review revealed that scholars in certain fields, such as the humanities and social sciences, were more skeptical about these innovations14. Previous research suggests that peer review reflects epistemic differences in evaluation standards and disciplinary traditions26,27. Furthermore, while here we focused on referee behavior, it is probable that open peer review could influence author behavior and publication strategies, making journals more or less attractive also depending on their type of peer review and their level of transparency.

This indicates that the feasibility and sustainability of open peer review could be context specific and that the diversity of current experiments probably reflects this awareness by responsible editors and publishers8,13,14. While large-scale comparisons and across-journal experimental tests are required to improve our understanding of these relevant innovations, these efforts are also necessary to sustain an evidence-based journal management culture.

Methods

Our dataset included records concerning authors, reviewers and handling editors of all peer reviewed manuscripts submitted to the five journals included in the pilot. The data included 62,790 observations linked to 9,220 submissions and 18,525 completed reviews from January 2010 to November 2017. Sharing internal journal data was possible thanks to a protocol signed by the COST Action PEERE representatives and Elsevier28.

We applied text mining techniques to estimate the gender of referees by using two Python libraries that contain more than 250,000 names from 80 countries and languages, namely gender-guesser 0.4.0 and genderize.io. This allowed us to minimize the number of “uncertain” cases (20.7%). For each referee, we determined the academic status as entered in the journal management platform by performing an alphanumeric case-insensitive matching on the concatenation of title and academic degree. This allowed us to assign everyone the status of “professor” (i.e., full, associate or assistant professors), “doctor” (i.e., someone who held a doctorate), or “other” (i.e., an engineer, BSc, MSc, PhD candidate, or a non-academic expert).
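The status assignment can be sketched in Python as a case-insensitive match on the concatenated title and degree fields. This is only an approximation of the idea: the keyword sets below and the function name `classify_status` are hypothetical, since the actual matching rules are not given in the text.

```python
import re

# Hypothetical keyword sets; the real matching rules are not specified.
PROFESSOR_TOKENS = {"professor", "prof"}
DOCTOR_TOKENS = {"doctor", "dr", "phd", "dphil"}
NOT_YET_DOCTOR_TOKENS = {"candidate", "student"}


def classify_status(title, degree):
    """Assign 'professor', 'doctor' or 'other' from title and degree text."""
    # Concatenate, lower-case, and drop dots so that "Ph.D." becomes "phd"
    text = f"{title} {degree}".lower().replace(".", "")
    # Keep only alphanumeric tokens
    tokens = set(re.findall(r"[a-z0-9]+", text))

    if tokens & PROFESSOR_TOKENS:
        return "professor"
    # A PhD candidate has not yet obtained the doctorate, so counts as "other"
    if tokens & DOCTOR_TOKENS and not tokens & NOT_YET_DOCTOR_TOKENS:
        return "doctor"
    return "other"
```

Checking for a professorial title first means that someone listed as "Prof. Dr." is classified as "professor", which matches the ordering of the three levels in the text.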

To perform the sentiment analysis of the report text, we used a pattern analyzer provided by the TextBlob 0.15.0 library in Python, which averages the scores of terms found in a lexicon of around 2900 English words that occur frequently in product reviews. TextBlob is one of the most commonly used libraries to perform sentiment analysis and extract polarity and subjectivity from texts. It is based on two standard libraries to perform natural language processing in Python, that is, Pattern and NLTK (Natural Language Toolkit). We used the former to crawl and parse a variety of online text sources, while the latter, which has more than 50 corpora and lexical resources, allowed us to process text for classification, tokenization, stemming, tagging, parsing, and semantic reasoning29. This allowed us to consider valence shifters (i.e., negators, amplifiers (intensifiers), de-amplifiers (downtoners), and adversative conjunctions) through an augmented dictionary lookup. Note that we considered only reports including at least 250 characters, corresponding to a few lines of text.
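The lexicon-averaging idea behind this kind of sentiment score can be illustrated with a small, self-contained Python sketch. It does not call TextBlob and does not reproduce its lexicon or its handling of valence shifters; the toy lexicon, its scores, and the function name `score_report` are all made up for illustration. Only the 250-character minimum is taken from the text.

```python
import re

# Hypothetical toy lexicon: word -> (polarity in [-1, 1], subjectivity in [0, 1]).
# The scores are invented for illustration and are not TextBlob's values.
TOY_LEXICON = {
    "excellent": (1.0, 1.0),
    "clear": (0.5, 0.5),
    "weak": (-0.5, 0.5),
    "poor": (-0.5, 0.5),
}

MIN_CHARS = 250  # reports shorter than this were excluded from the analysis


def score_report(text, lexicon=TOY_LEXICON, min_chars=MIN_CHARS):
    """Return (polarity, subjectivity) averaged over lexicon words in the text.

    Returns None for reports below the minimum length, and (0.0, 0.0)
    when no word of the report appears in the lexicon.
    """
    if len(text) < min_chars:
        return None
    words = re.findall(r"[a-z']+", text.lower())
    hits = [lexicon[w] for w in words if w in lexicon]
    if not hits:
        return (0.0, 0.0)
    polarity = sum(p for p, _ in hits) / len(hits)
    subjectivity = sum(s for _, s in hits) / len(hits)
    return (polarity, subjectivity)
```

Because scores are averaged only over words found in the lexicon, long reports with few evaluative terms can still receive an extreme score, which is one reason to interpret such indexes cautiously.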

All statistical analyses were performed using the R 3.4.4 platform30 with the following additional packages: lme4, lmerTest, ordinal and simpleboot. Plots were produced using the ggplot2 package. The dataset and R script used to estimate the models are provided as supplementary information.

Mixed-effects linear models (Tables 1, 3–5) included random effects (random intercepts) for submissions and journals. The mixed-effects cumulative-link model31 (Table 2) used the same random effects structure as the linear models. This allowed us to test different model specifications, with all predictors except the open review dummy and the year either dropped or sequentially included. Note that the p-value for the open review dummy was never below conventional significance thresholds.
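One way to picture the sequential inclusion of predictors is to generate the corresponding model formulas. The Python sketch below builds lme4-style formula strings in which the open review dummy, the year, and the two random intercepts are always present. The variable names, the order of inclusion, and the function name `sequential_formulas` are assumptions; the actual specifications are in the R script supplied with the paper.

```python
# Predictors kept in every specification (per the text)
ALWAYS_INCLUDED = ["open_review", "year"]
# Hypothetical order in which the remaining predictors are added
OPTIONAL_TERMS = [
    "status",
    "gender",
    "open_review:status",
    "open_review:gender",
]
# Random intercepts for submissions and journals, in lme4 formula syntax
RANDOM_EFFECTS = "(1 | submission) + (1 | journal)"


def sequential_formulas(outcome):
    """Return formulas from the minimal to the full specification."""
    formulas = []
    for k in range(len(OPTIONAL_TERMS) + 1):
        terms = ALWAYS_INCLUDED + OPTIONAL_TERMS[:k]
        formulas.append(f"{outcome} ~ " + " + ".join(terms) + " + " + RANDOM_EFFECTS)
    return formulas
```

Comparing the coefficient of the open review dummy across such a sequence shows whether its estimate depends on which other predictors are in the model.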

To test the robustness of our findings, we selected five extra Elsevier journals as a control group. These journals were selected to match the discipline/field, impact factor, number of submissions and submission dynamics of the five pilot journals. We included both the pilot and control journals in three separate models to estimate their effect on willingness to review, referee recommendations and review time. Results confirmed our findings (see details in the SI file).

While all robustness checks provided in the SI file allowed us to confirm our findings, it is worth noting that our individual observations could be sensitive to dependency. Indeed, the same referee could have reviewed many manuscripts either for the same or for other journals (this case was perhaps less probable given the different journal domains). While unfortunately we could not obtain consistent referee IDs across journals, we believe that the potential effect of this dependency on our models was minimal considering the large size of the dataset.

Data availability

The journal dataset required a data sharing agreement to be established between authors and Elsevier. The agreement was possible thanks to the data sharing protocol entitled “TD1306 COST Action New frontiers of peer review (PEERE) policy on data sharing on peer review”, which was signed by all partners involved in this research on 1 March 2017. The protocol was part of a collaborative project funded by the EU Commission28. The dataset and data scripts are available as source data files.

References

Walker, R. & Rocha da Silva, P. Emerging trends in peer review—a survey. Front. Neurosci. 9, 169 (2015).

Teele, D. L. & Thelen, K. Gender in the journals: Publication patterns in political science. PS Polit. Sci. Polit. 50, 433–447 (2017).

Siler, K., Lee, K. & Bero, L. Measuring the effectiveness of scientific gatekeeping. Proc. Natl Acad. Sci. USA 112, 360–365 (2015).

Strang, D. & Siler, K. Revising as reframing: Original submissions versus published papers in Administrative Science Quarterly, 2005 to 2009. Sociol. Theor. 33, 71–96 (2015).

Balietti, S., Goldstone, R. L. & Helbing, D. Peer review and competition in the art exhibition game. Proc. Natl Acad. Sci. USA 113, 8414–8419 (2016).

Jubb, M. Peer review: The current landscape and future trends. Learn. Publ. 29, 13–21 (2016).

Wicherts, J. M. Peer review quality and transparency of the peer-review process in open access and subscription journals. PLoS ONE 11, 1–19 (2016).

Tennant, J. et al. A multi-disciplinary perspective on emergent and future innovations in peer review. F1000Res. 6, 1151 (2017).

Wang, P. & Tahamtan, I. The state of the art of open peer review: early adopters. Proc. ASIS T 54, 819–820 (2017).

Smith, R. Opening up BMJ peer review. BMJ 318, 4–5 (1999).

Walsh, E., Rooney, M., Appleby, L. & Wilkinson, G. Open peer review: a randomised controlled trial. Br. J. Psychiatry 176, 47–51 (2000).

Jefferson, T., Alderson, P., Wager, E. & Davidoff, F. Effects of editorial peer review: a systematic review. JAMA 287, 2784–2786 (2002).

Ross-Hellauer, T. What is open peer review? A systematic review. F1000Res. 6, 588 (2017).

Ross-Hellauer, T., Deppe, A. & Schmidt, B. Survey on open peer review: attitudes and experience amongst editors, authors and reviewers. PLoS ONE 12, 1–28 (2017).

van Rooyen, S., Godlee, F., Evans, S., Black, N. & Smith, R. Effect of open peer review on quality of reviews and on reviewers’ recommendations: a randomised trial. BMJ 318, 23–27 (1999).

Bruce, R., Chauvin, A., Trinquart, L., Ravaud, P. & Boutron, I. Impact of interventions to improve the quality of peer review of biomedical journals: a systematic review and meta-analysis. BMC Med. 14, 85 (2016).

Rodríguez‐Bravo, B. et al. Peer review: the experience and views of early career researchers. Learn. Publ. 30, 269–277 (2017).

Bravo, G., Farjam, M., Grimaldo Moreno, F., Birukou, A. & Squazzoni, F. Hidden connections: Network effects on editorial decisions in four computer science journals. J. Informetr. 12, 101–112 (2018).

Benjamin, D. J. et al. Redefine statistical significance. Nat. Hum. Behav. 2, 6–10 (2017).

Almquist, M. et al. A prospective study on an innovative online forum for peer reviewing of surgical science. PLoS ONE 12, 1–13 (2017).

Pöschl, U. Multi-stage open peer review: scientific evaluation integrating the strengths of traditional peer review with the virtues of transparency and self-regulation. Front. Comput. Neurosci. 6, 33 (2012).

Kriegeskorte, N. Open evaluation: a vision for entirely transparent post-publication peer review and rating for science. Front. Comput. Neurosci. 6, 79 (2012).

Fang, F. C. & Casadevall, A. Competitive science: Is competition ruining science? Infect. Immunol. 83, 1229–1233 (2015).

Edwards, M. A. & Siddhartha, R. Academic research in the 21st century: Maintaining scientific integrity in a climate of perverse incentives and hypercompetition. Env. Sci. Eng. 34, 51–61 (2017).

Grimaldo, F., Marusic, A. & Squazzoni, F. Fragments of peer review: A quantitative analysis of the literature (1969-2015). PLoS ONE 13, 1–14 (2018).

Squazzoni, F., Bravo, G. & Takacs, K. Does incentive provision increase the quality of peer review? An experimental study. Res. Policy 42, 287–294 (2013).

Tomkins, A., Zhang, M. & Heavlin, W. D. Reviewer bias in single- versus double-blind peer review. Proc. Natl Acad. Sci. USA 114, 12708–12713 (2017).

Squazzoni, F., Grimaldo, F. & Marusic, A. Publishing: journals could share peer-review data. Nature 546, 352 (2017).

Bird, S., Klein, E. & Loper, E. Natural Language Processing with Python 1st edn, (O’Reilly Media, Inc., Sebastopol, CA, 2009).

R Core Team. R: A Language and Environment for Statistical Computing. (R Foundation for Statistical Computing, Vienna, Austria, 2018).

Agresti, A. Categorical Data Analysis. Second Edition, (John Wiley & Sons, Hoboken, NJ, 2002).

Acknowledgements

This work is supported by the COST Action TD1306 New frontiers of peer review (www.peere.org). The statistical analysis was performed exploiting the high-performance computing facilities of the Linnaeus University Centre for Data Intensive Sciences and Applications. Finally, we would like to thank Mike Farjam and three anonymous referees for useful comments and suggestions on a preliminary version of the manuscript.

Author information

Contributions

B.M. designed and ran the pilot. F.G. and E.L.-I. created the dataset. F.G., E.L.-I. and G.B. analyzed results. F.G. and F.S. managed data sharing policies. B.M., F.S., F.G., G.B. designed the research and wrote the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Journal peer review information: Nature Communications thanks the anonymous reviewers for their contribution to the peer review of this work. Peer reviewer reports are available.

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Bravo, G., Grimaldo, F., López-Iñesta, E. et al. The effect of publishing peer review reports on referee behavior in five scholarly journals. Nat Commun 10, 322 (2019). https://doi.org/10.1038/s41467-018-08250-2

DOI: https://doi.org/10.1038/s41467-018-08250-2
