Abstract

Autism spectrum disorder (ASD) is classically associated with poor face processing skills, yet evidence suggests that those with obsessive-compulsive disorder (OCD) and attention deficit hyperactivity disorder (ADHD) also have difficulties understanding emotions. We determined the neural underpinnings of dynamic emotional face processing across these three clinical paediatric groups, including developmental trajectories, compared with typically developing (TD) controls. We studied 279 children, 5–19 years of age; 57 were excluded due to excessive motion during fMRI, leaving 222: 87 ASD, 44 ADHD, 42 OCD and 49 TD. Groups were sex- and age-matched. Dynamic faces (happy, angry) and dynamic flowers were presented in 18 pseudo-randomized blocks while fMRI data were collected with a 3T MRI. Group-by-age interactions and group difference contrasts were analysed for the faces vs. flowers contrasts and for the contrast between happy and angry faces. TD children demonstrated different activity patterns across the four contrasts; these patterns were more limited and distinct for the neurodevelopmental disorders (NDDs). Processing happy and angry faces compared to flowers yielded similar activation in occipital regions in the NDDs compared to TDs. Processing happy compared to angry faces showed an age-by-group interaction in the superior frontal gyrus, increasing with age for ASD and OCD, decreasing for TDs. Children with ASD, ADHD and OCD differentiated less between dynamic faces and dynamic flowers, with most of the effects seen in the occipital and temporal regions, suggesting that emotional difficulties shared in NDDs may be partly attributed to shared atypical visual information processing.

Introduction

Face processing deficits are widely reported in psychiatric populations and negatively affect family and social relationships1. Although autism spectrum disorder (ASD) is classically associated with poor face processing skills2, increasing evidence shows emotional and social-cognitive impairments in obsessive-compulsive disorder (OCD) and attention deficit hyperactivity disorder (ADHD)3,4. As these neurodevelopmental disorders (NDDs) can be comorbid and share overlapping symptoms5,6,7, there are common difficulties in cognitive domains. Beyond comorbidity, NDDs can share common cognitive deficits but the underlying pathophysiology might be different. We investigated brain function underpinning face processing, contrasting fMRI measures of dynamic emotional face processing across these three clinical paediatric groups, compared with typically developing (TD) controls in a large, single-site cohort.

Facial expressions of emotion arise from facial movements and are rich sources of social information. Recognizing and understanding emotional expressions are essential for appropriate social behaviour. We typically process faces rapidly with minimal attentional resources8,9, being very effective at discerning emotions from facial movements. Behavioural10 and neuroimaging studies11,12,13,14 show that, compared to static expressions (i.e., photographs), dynamic facial expressions convey compelling information that is more similar to what we encounter in everyday social interactions. Dynamic presentation of facial emotions improves identification of emotion15,16 and increases the ecological validity12,13,14,17. Nevertheless, static photographs of facial expressions have been predominantly used in imaging studies.

In response to static facial expressions, activity is seen in core face-processing regions, including fusiform gyri, amygdalae and temporal cortices, with the fusiform and the superior temporal sulci (STS) being implicated in the detailed perception of faces9,18,19,20. These same areas are active to dynamic faces, particularly the STS21 and V511,12,13,22. However, dynamic faces also include activation in frontal areas, including inferior and orbital frontal gyri14,22. Normative studies with dynamic faces found increases in core face-processing regions, consistent with increased salience, with frontal increases consistent with greater social-cognitive processing. Given the increased ecological validity and salience of dynamic faces, these stimuli are beginning to be used in clinical populations with emotional processing difficulties. Below we review briefly the neuroimaging literature on dynamic emotional face processing in ASD, OCD and ADHD.

ASD

The classic work of Kanner23 described emotional abnormalities in autism, which have since been confirmed by many studies24,25,26. Social communication difficulties are a key symptom of ASD, and central to social interactions is understanding emotions and their expression.

Structural and functional imaging studies have found abnormalities in brain regions associated with emotional face processing in ASD (e.g.27,28,29). Studies including the use of dynamic faces in ASD are, however, almost exclusively behavioural. Enticott et al.30 reported that dynamic faces improved recognition of angry but not sad faces in adults with ASD, while Zane et al.31 reported that children with ASD did not show the same sensitivity to positive or negative valence with dynamic faces as controls. In teenagers with ASD, Law Smith et al.32 found reduced accuracy in identifying emotional expressions, particularly at a lower intensity, despite them being dynamic, similarly to Weiss et al.33 in adolescents and adults with ASD. However, one fMRI study34 reported that with dynamic faces there were no activation differences between adults with and without ASD. Given the wealth of other neuroimaging data showing group differences in face and emotional face processing, and the evidence that those with ASD experience difficulties with understanding emotions, we expected to find abnormalities in activation to emotional faces in this population, but that group differences may decrease with age.

OCD

Emotional dysfunction is often considered a key component in OCD, with emphasis on recognition of disgust35,36. Daros et al.37 completed a meta-analysis and found that across ten behavioural studies, those with OCD were less accurate in recognising emotional faces, particularly disgust and anger. Others reported OCD patients had lower social-cognitive awareness38 and poorer performance on a facial recognition task39.

Few neuroimaging studies have explored emotional face processing in OCD, showing either enhanced face network activity40 or reduced amygdalae responses to happy, fearful and neutral faces;41 this reduction in activity to faces was also seen in a paediatric group42. These latter two studies had very small sample sizes (n = 10 and 12, respectively), and were likely underpowered. We anticipated that, of the three NDD groups, the OCD group would show the fewest differences from the TDs in the neural responses to dynamic happy and angry faces.

ADHD

ADHD is one of the most common paediatric psychiatric disorders43. Behavioural and imaging research has focused on the classic indicators of inattention, hyperactivity and impulsivity, yet increasing evidence suggests that ADHD involves social-cognitive and emotional difficulties also3,44. Studies have linked emotional impulsiveness and temperamental dysregulation with ADHD symptoms45,46. Yuill et al.47 found that boys with ADHD performed poorly when matching emotional faces to situations, but performed similarly to controls with a non-face task. Kats-Gold et al.48 reported that boys at risk for ADHD had impaired emotional face identification, and this played a significant role in their social functioning and behaviour. When dynamic faces were used, children with ADHD still had lower accuracy in identifying basic emotions49. Hence, we expected to find neuroimaging markers of emotional difficulties in children with ADHD; i.e., reduced activity reflecting reduced awareness or salience of emotions to these children.

Thus, the aims of this study were to determine (a) if the processing of emotional faces differs across the three NDDs, and (b) if the neural mechanisms underlying emotional face processing develop differently over childhood in these groups compared to TD children.

Materials and methods

Children and adolescents (n = 279, 5–19 year olds) were included in the current study (128 ASD, 54 ADHD, 43 OCD and 54 TD). Children were recruited through the Province of Ontario Neurodevelopmental Disorders (POND) network. The children with NDDs were assessed clinically and diagnosed with one of the primary clinical diagnoses. The presence of co-morbidities and the use of psychotropic medication were noted in the participants, but none were excluded on this basis (see Supplemental Information and Supplemental Tables 1 and 2 for further details).

fMRI Paradigm

The fMRI stimuli consisted of dynamic faces (neutral-to-happy or angry) and dynamic flowers (closed-to-open). Static images of faces (the same faces, neutral and happy and neutral and angry) were taken from the MacBrain Face Stimulus Set1 and made dynamic (morphing from neutral to either happy or angry) using Win Morph software. Nature videos of flowers opening and closing in grayscale were used as the non-face stimuli, as detailed in Arsalidou et al.22 These stimuli were organized into blocks (13.5 s) of nine trials where the dynamic image was displayed for 480 ms before being replaced by a fixation cross for 1020 ms. Within every block, one of the nine trials was a vigilance trial consisting of a blue star to which the children pressed a button. Each run consisted of 18 pseudo-randomized blocks (six each of happy, angry, flowers) with a 27 s fixation rest period at the halfway point. The stimuli were displayed using Presentation (Neurobehavioral Systems Inc.) software on MR-compatible goggles, and participants were instructed to fixate on the stimuli and respond to the vigilance trials using a dual button MR-compatible keypad. Anatomical images were acquired along with the functional images. Details on the imaging protocols can be found in the Supplemental Information.
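
The block timing described above can be checked with a little arithmetic. The following is an illustrative sketch only: the variable names are ours, and the total run length assumes that blocks ran back to back with no gaps other than the single mid-run rest, which the text does not state explicitly.

```python
# Timing values taken from the paradigm description.
STIMULUS_MS = 480        # dynamic image duration per trial
FIXATION_MS = 1020       # fixation cross duration per trial
TRIALS_PER_BLOCK = 9
BLOCKS_PER_RUN = 18
REST_S = 27.0            # fixation rest at the halfway point

trial_s = (STIMULUS_MS + FIXATION_MS) / 1000.0
block_s = TRIALS_PER_BLOCK * trial_s
# Assumption: no inter-block gaps other than the mid-run rest.
run_s = BLOCKS_PER_RUN * block_s + REST_S

print(trial_s, block_s, run_s)  # prints: 1.5 13.5 270.0
```

Nine 1.5 s trials reproduce the stated 13.5 s block length; under the no-gap assumption a run would last 270 s.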

Preprocessing

Image preprocessing of functional data used a combination of AFNI50,51 and FSL52,53 tools. Slice-timing and motion correction were performed, and the six motion parameters were estimated, from which framewise displacement (FD) was calculated54. Volumes with FD > 0.9 mm55 were censored; participants with more than one third of their volumes censored were excluded from the analyses. Data were smoothed (6 mm FWHM Gaussian kernel), intensity-normalized, and temporally filtered (0.01–0.2 Hz). Signal contributions from the white matter, CSF, whole-brain and six motion parameters were regressed from the data; ICA de-noising was performed via FSL’s FIX.
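
To make the censoring and exclusion criteria concrete, here is a minimal sketch. It assumes one widely used FD definition (the sum of absolute volume-to-volume changes in the six motion parameters, with rotations converted to millimetres on a 50 mm sphere); the exact formula used in the study is given by its cited reference and may differ. The function names, the column order of the motion array and the toy data are all hypothetical.

```python
import numpy as np

FD_THRESHOLD_MM = 0.9          # censoring threshold stated in the text
MAX_CENSORED_FRACTION = 1 / 3  # exclusion criterion stated in the text
HEAD_RADIUS_MM = 50.0          # assumption: common convention for rotations


def framewise_displacement(motion, radius_mm=HEAD_RADIUS_MM):
    """FD per volume from an (n_volumes, 6) array.

    Assumed column order: three translations in mm, then three rotations
    in radians. The first volume has no predecessor, so its FD is 0.
    """
    motion = np.asarray(motion, dtype=float)
    diffs = np.abs(np.diff(motion, axis=0))
    diffs[:, 3:] *= radius_mm  # arc length on a sphere of the given radius
    return np.concatenate([[0.0], diffs.sum(axis=1)])


def censor_and_screen(fd):
    """Flag volumes above threshold and decide whether to exclude the participant."""
    censored = fd > FD_THRESHOLD_MM
    exclude = bool(censored.mean() > MAX_CENSORED_FRACTION)
    return censored, exclude


# Toy data: six volumes with a single 1 mm translation jump at volume 3.
motion = np.zeros((6, 6))
motion[3:, 0] = 1.0
fd = framewise_displacement(motion)
censored, exclude = censor_and_screen(fd)
print(fd, censored, exclude)
```

In this toy case one of six volumes is censored, which is below the one-third limit, so the participant would be retained.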

Preprocessed data were then analyzed with FSL’s FILM56. The three dynamic blocks (happy, angry and flowers) were used as explanatory variables and convolved with the hemodynamic response function. The previously estimated six motion parameters and signals from the white matter, CSF and whole brain were used as confound explanatory variables, along with a confound matrix corresponding to the censored volumes to completely remove the effects of the corrupted time points. Within-subject contrasts were generated between each pair of stimuli (happy/angry, happy/flowers, and angry/flowers). Before group-level analyses, images were registered to the Montreal Neurological Institute template using FSL’s Boundary-Based Registration57.
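
The structure of such a first-level design can be sketched as follows. This is not FSL's implementation: the repetition time, the block onsets and the double-gamma HRF parameters are placeholder assumptions (the actual acquisition details are in the Supplemental Information), and all function names are ours. The sketch shows a block regressor convolved with an HRF and one indicator column per censored volume, so that each flagged time point is absorbed by its own confound regressor.

```python
import math
import numpy as np

TR_S = 2.0  # hypothetical repetition time, for illustration only


def double_gamma_hrf(tr_s, duration_s=32.0):
    """Double-gamma HRF sampled every tr_s seconds (assumed shape parameters)."""
    t = np.arange(0.0, duration_s, tr_s)
    peak = t ** 5 * np.exp(-t) / math.gamma(6)
    undershoot = t ** 15 * np.exp(-t) / math.gamma(16)
    hrf = peak - undershoot / 6.0
    return hrf / hrf.sum()


def block_regressor(onsets_s, block_s, n_vols, tr_s):
    """Boxcar for one condition convolved with the HRF, truncated to n_vols."""
    times = np.arange(n_vols) * tr_s
    boxcar = np.zeros(n_vols)
    for onset in onsets_s:
        boxcar[(times >= onset) & (times < onset + block_s)] = 1.0
    return np.convolve(boxcar, double_gamma_hrf(tr_s))[:n_vols]


def censor_columns(censored):
    """One indicator column per censored volume."""
    idx = np.flatnonzero(censored)
    cols = np.zeros((len(censored), len(idx)))
    cols[idx, np.arange(len(idx))] = 1.0
    return cols


n_vols = 135                                  # 270 s at the hypothetical TR
happy = block_regressor([0.0, 40.5], 13.5, n_vols, TR_S)  # made-up onsets
censored = np.zeros(n_vols, dtype=bool)
censored[[10, 50]] = True                     # made-up censored volumes
X = np.column_stack([happy, censor_columns(censored)])
print(X.shape)
```

A full design would add the angry and flowers regressors and the nuisance signals listed above in the same way, as further columns.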

Statistical analysis

Kruskal–Wallis tests were used to compare the median ages and mean FDs in the four diagnostic groups. Where results were significant, follow-up pairwise comparisons were run; the resulting p-values were Bonferroni corrected, with significance held at pcorr < 0.05. Chi-squared tests were used to determine the presence of proportion differences in sex and acquisition scanner amongst the four diagnostic groups. Upon significance, the Marascuilo procedure58 was used to determine which pairwise difference was driving the effect. All tests were run in MATLAB.

For each of the three contrasts, group-level analyses were performed using FSL’s FLAME59. Within-group means were determined using one-sample t-tests (see Supplemental Information). Differences amongst the four diagnostic groups, along with group-by-age interactions, were investigated using voxelwise F-tests. Upon significance of an F-test, voxelwise post hoc pairwise t-tests between the four diagnostic groups were subsequently run to identify which diagnostic group(s) were driving the significant effect, and in what direction. For all analyses, sex and mean FD were included as covariates, along with a voxelwise covariate modelling the effect of acquisition scanner (see Supplemental Information). Gaussian Random Field theory was used and clusters were determined by Z > 2.3, and a cluster-corrected significance threshold of pcorr < 0.05 was used for the F-tests, while cluster significance was held at pcorr < 0.008 to control for multiple comparisons across the six pairwise comparisons for the t-tests.
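
The post hoc threshold follows from a Bonferroni correction over all pairwise group comparisons. A short sketch of that arithmetic (variable names are ours):

```python
from itertools import combinations

groups = ["TD", "ASD", "ADHD", "OCD"]
pairs = list(combinations(groups, 2))   # every unordered pair of groups
alpha = 0.05
bonferroni_alpha = alpha / len(pairs)

print(len(pairs), round(bonferroni_alpha, 4))  # prints: 6 0.0083
```

Four groups give six pairwise comparisons, and 0.05 / 6 is approximately 0.0083, which corresponds to the pcorr < 0.008 threshold stated above.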

Results

After removing subjects who failed to meet the motion criteria, 222 children remained: 49 TD, 87 ASD, 44 ADHD and 42 OCD (Table 1). There was no significant difference in mean FD amongst the four diagnostic groups (H(3) = 7.68, p = 0.05), nor a significant difference in sex (χ² = 7.14, p = 0.07); however, there was a significant difference in age (H(3) = 11.75, p = 0.01), driven by a difference between the ASD and ADHD participants (see Supplemental Information).
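
As a rough check on the reported statistics, the p-values can be recomputed from the test statistics using the chi-squared distribution with 3 degrees of freedom. This sketch assumes that the Kruskal–Wallis H values were evaluated with the usual chi-squared approximation, and that the sex comparison also had 3 degrees of freedom (four groups by two sexes); neither is stated explicitly in the text. For 3 degrees of freedom the upper-tail probability has a closed form, so only the standard library is needed.

```python
import math


def chi2_sf_df3(x):
    """Upper-tail probability of a chi-squared variable with 3 degrees of freedom."""
    return (math.erfc(math.sqrt(x / 2.0))
            + math.sqrt(2.0 * x / math.pi) * math.exp(-x / 2.0))


# Test statistics as reported in the text.
reported = {"mean FD, H(3)": 7.68, "sex, chi-squared": 7.14, "age, H(3)": 11.75}
p_values = {name: chi2_sf_df3(stat) for name, stat in reported.items()}

for name, p in p_values.items():
    print(f"{name}: p = {p:.3f}")
```

Under these assumptions the recomputed values come out at roughly 0.05, 0.07 and 0.01 after rounding to two decimals, in line with those reported; note that the mean FD comparison sits just above the 0.05 threshold.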

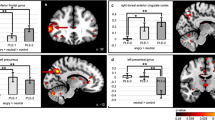

The voxelwise F-tests revealed a similar effect of diagnostic group when comparing both happy (Fig. 1) and angry (Fig. 2) faces to flowers, which, as revealed by the post hoc t-tests, was driven by differences between the TD and NDD children. A significant effect of diagnostic group was also present when comparing emotion (Fig. 3), caused by differences between the ASD and remaining groups. A significant group-by-age interaction in the emotion contrast was also found (Fig. 4), which was driven by differences in age-related changes between TD and NDD children. These results are detailed in the following paragraphs. Within-group effects can be found in the Supplemental Information, Supplemental Fig. 1, and Supplemental Tables 3–6.

Fig. 1: Significant (Z > 2.3, pcorr < 0.05) between-group differences in the happy > flowers contrast as revealed by one-tailed F test (A), the distribution of the effect across groups (B), along with the post hoc pairwise t tests (Z > 2.3, pcorr < 0.008) between the four diagnostic groups (C–E, contrasts between the TDs and the three NDDs; F–H, contrasts between the NDDs).

Fig. 2: Significant (Z > 2.3, pcorr < 0.05) between-group differences in the angry > flowers contrast as shown by one-tailed F test, along with the post hoc pairwise t tests (Z > 2.3, pcorr < 0.008) between the four diagnostic groups, as in Fig. 1.

Fig. 3: Significant (Z > 2.3, pcorr < 0.05) between-group differences in the angry > happy contrast as revealed by one-tailed F test, along with the post hoc pairwise t tests (Z > 2.3, pcorr < 0.008) between the four diagnostic groups, as in Fig. 1.

For between-group differences in the happy versus flower contrast, the F-test showed significance in the bilateral lingual, inferior occipital and fusiform gyri, and right middle occipital gyrus (Fig. 1A; Table 2). Figure 1B shows that activation to happy faces compared to flowers in these visual regions is reduced across all groups (mean COPE values are negative). The post hoc pairwise t-tests (Fig. 1C–H; Table 2) demonstrated that the significant reduction in activation was driven by differences between the TD and NDD children. In occipital regions, TDs had greater decrease in activation to happy faces compared to flowers than the three NDD groups (Fig. 1C–E) and, within the NDD groups, the OCD children showed increased activation compared to the ASD and ADHD children, in visual and right parietal areas, respectively.

In the angry versus flower contrast, a comparable pattern was seen with the F-test, with significant between-group differences occurring in occipital regions including bilateral middle and inferior occipital gyri (Fig. 2A; Table 3). Similarly to happy faces, these regions showed decreased activation to angry faces with respect to flowers (Fig. 2B). The post hoc t-tests (Fig. 2C–H; Table 3) confirmed that the differential activation to angry faces versus flowers in TDs was significantly greater than both the ASD and OCD children (but not ADHD), while there were no significant differences amongst the NDD groups. There were no significant group-by-age interactions in either the happy or angry versus flowers contrasts.

More relevant to our questions about emotional face processing were the between-emotion contrasts. For the angry versus happy faces between-group contrast, significant F-test differences were found in a small cluster straddling the right inferior occipital, inferior and middle temporal gyri (Fig. 3A, B; Table 4). The post hoc t-tests (Fig. 3C–H; Table 4) revealed that this cluster was significant in the ASD pairwise comparisons: relative to all three other groups, who showed minimal difference between the two emotions, ASD children showed increased activation to angry compared to happy faces. The TD children, however, showed greater activation than the ASD children to angry than happy faces in the cuneus and occipital areas bilaterally and in left temporal regions (Fig. 3D). The TD children also showed greater activity to angry than happy faces than the OCD children in occipital-temporal areas (Fig. 3E), while there were no differences between the ADHD and TD children in this contrast (Fig. 3C).

Finally, in happy versus angry faces, the only significant group-by-age interaction was found: increased activity in the left superior and medial frontal gyri (Fig. 4A, B; Table 5), which was driven by differences in age-related changes between TD and both the ASD and OCD children (Fig. 4D–E; Table 5). The ASD and OCD groups recruited these frontal regions less when processing happy compared to angry faces during childhood but more in adolescence, while the TD children showed the opposite effect; the ADHD children showed no activation difference between happy and angry faces in this region, and this remained constant over age. There were no differences amongst the NDD groups in this contrast (Fig. 4F–G).

The within group means for each of the four groups for these four contrasts are shown in Supplemental Fig. 1.

Discussion

Using dynamic happy and angry faces, we demonstrated that the children with NDDs shared neural processing of emotional faces, seen particularly in occipital and temporal regions, compared to their TD peers. Although these patterns varied with the emotion expressed, our findings suggest similar neural mechanisms underlying socio-emotional difficulties across NDDs. Importantly, in the contrast between happy and angry faces, there was an age-by-group interaction that involved the left superior frontal gyrus, indicating different developmental trajectories of brain areas engaged in emotional face processing, a widening gap between NDDs and TD and increasing difficulties in the NDDs with age. These findings are discussed below, in relation to emotional processing in these child psychiatric populations.

Shared alterations in medial and lateral occipital activity between dynamic faces and dynamic flowers in the NDDs may reflect their shared difficulties in emotional face recognition. The TD group had a greater decrease in activation to faces compared to flowers than the NDD group, which suggests more distinctive processing of the dynamic facial stimuli by the TD; in contrast, the NDDs showed more similar processing of the dynamic stimuli, regardless of whether they were faces or flowers. Face recognition is subserved by a distributed brain network that engages in processing and integrating visual information and the inferior occipital and fusiform regions have key roles in this function. This network is present at birth and matures by late adolescence and adulthood60, with maturation facilitating efficiency in the speed and accuracy of face processing61. Greater decreases to faces than flowers in the TD group may reflect a more mature face network in the TD youth such that less effort was needed in face processing but greater activation was induced to the novelty of dynamic flowers. Atypical salience processing of both the novel dynamic flowers and the socially salient faces may be common across NDDs. Under-connectivity in the salience and visual networks has frequently been shown in ASD62,63, but our findings suggest the ADHD and OCD groups also demonstrate alterations in salience processing, and that this effect was greatest for the OCD group.

Impaired emotional processing has been implicated in OCD, particularly with aversive emotions, such as disgust. Although atypical involvement of limbic areas41,42 and fronto-striatal circuits has been reported in emotional processing in OCD, a recent meta-analysis of 25 neuroimaging studies reported an expanded brain network including the middle temporal and inferior occipital regions in OCD. In this network context, limbic hyperactivation influences early recruitment in the occipital region to visual stimuli, and this is then linked to upregulated amygdala activity64. In addition, a study investigating metabolic activity also suggested visual processing deficits in OCD65. Our OCD cohort showed the least neural differentiation between faces and flowers which may reflect generally reduced activation in processing visual objects, regardless of stimuli or emotional valence in an emotional context. For the OCD-ADHD contrast, greater activity in the right postcentral/supramarginal gyri was seen in the OCD—an area involved in emotional understanding, including egocentricity of emotions66, suggesting that compared to ADHD, they were engaging these regions appropriately for dynamic face stimuli. Others have also found this ability intact in ASD;67 thus, these data suggest the difficulty of egocentricity in emotional perception is more prominent in youth with ADHD.

Although both happy and angry faces, contrasted to dynamic flowers, demonstrated comparable patterns across participants, the decreased occipital activity was less marked to angry than happy faces in TD, indicating that dynamic angry faces are more salient visual stimuli and require more effort for processing even in TD68. This is consistent with the asynchronous maturation of emotional face recognition, where it is later for angry than happy faces69. This neural differentiation between angry faces versus flowers shown in TD, however, was not seen in children with ASD or OCD. This may reflect the difficulties that those with ASD have with angry expressions (negative emotions) from infancy to adulthood70, as well as those with OCD have with negative emotions, including anger37,64. Although challenges in facial emotion recognition in ASD have been observed in both positive and negative emotions, children with ASD generally show worse performance for negative emotions71,72,73. Our study also supported greater impairment in processing negative emotions in ASD in the analysis contrasting the emotional faces.

Angry faces led to greater activation in the right inferior occipital and middle and inferior temporal areas, consistent with studies that show greater visual activity to negative than positive faces68,74. Interestingly, the group differences were driven by the ASD group, who showed reduced activity in the cuneus and occipital area bilaterally and in left superior and middle temporal regions compared to TDs. The superior and middle temporal regions are closely linked with biological motion, including facial movements21. Given that the expression of anger is usually less common than happy expressions in everyday life, we suggest that the TD group are attending more to angry than happy faces. The ASD group recruited these areas less than their TD peers, yet showed greater activity than TD, ADHD and OCD in primary face processing regions (right middle, inferior temporal and inferior occipital-fusiform regions), suggesting greater visual salience for angry than happy faces. The increased activity in TD compared to ASD and OCD youth (Fig. 3D–E) was seen in more lateral, temporal, medial posterior regions, suggesting higher-order processing areas were recruited by the TD.

The only age-related changes were seen with the happy > angry faces contrast in the left superior frontal gyrus. While activation of this region decreased with age in TD children, it increased for ASD and OCD and showed no age effects in ADHD. Thus, TD children recruited the left superior frontal gyrus more for angry faces in adolescence, while the OCD and ASD recruited it more for happy faces in adolescence. Previous literature has shown that the maturation in prefrontal regions supports the detection and evaluation of angry faces in the TD population75. Superior frontal gyrus activation in emotional face processing was reported across studies in a meta-analysis76 and the left superior frontal gyrus was associated with cognitive activities including processing pleasant and unpleasant emotions77, self-criticism78 and attention to negative emotions79. The ability to recognise and interpret emotions matures from early childhood through adolescence80,81 and frontal engagement would be refined with age, particularly for the emotions which are experienced less frequently. Happiness is the only one of the six basic emotions that is definitely positive and is the first emotion to be accurately identified in early development82. Studies have reported typical processing of happy affect in ASD, which was interpreted as due to greater familiarity with happy faces83. Our results, however, showed processing of happy affect requiring more frontal engagement with age in ASD and OCD; thus, even though they may understand and respond appropriately to happy faces, processing them may still remain difficult. This finding is supported by a meta-analytic review for facial emotion recognition in ASD that showed that difficulties increase with age in recognising happiness but not anger84. This suggests that the recognition for happy faces improves with age in TDs, but not in ASD.
In addition, the ability for those with ASD to recognize negative emotions such as anger may lag behind their TD peers. The finding from the OCD group is of particular interest, suggesting that they experience as much difficulty in processing positive emotions as the ASD group, and it may also worsen with age. Only the ADHD group showed no age-related effects. As there was a significant difference in age between the ASD and ADHD children, however, the lack of group-by-age interaction between these two groups should be interpreted cautiously.

In summary, the present study demonstrated that NDD youth shared alterations in processing dynamic emotional faces: a similar functional mechanism was engaged for both dynamic faces and flowers. This suggests that the emotional difficulties shared by NDDs may be partly attributed to atypical visual information processing interfering with social-emotional information management. Although these patterns were similar across the NDDs, the OCD group showed the least differentiation between faces and flowers and between happy and angry faces, which may be attributed to reduced visual processing in OCD, related to hyperactivity in the fronto-striatal circuit. In addition, those with OCD required greater engagement of frontal regions to process emotions, and this engagement increased with age. Contrary to our expectations, the OCD group exhibited the least differentiation of the dynamic visual stimuli, and shared increased difficulties with age for happy faces with the ASD group.

The youth with ASD demonstrated more marked impairments in processing negative emotion and the negative-emotion-related activity in temporo-occipital regions was unique to the ASD group. As with the OCD youth, the ASD group also showed increased frontal involvement with age to happy faces. Together these findings indicate that although happy faces are recognisable in ASD, frontal engagement does not decline with age, suggesting that the emotional processing requires similar (or increased) efforts at a neural level, contributing to their life-long difficulties. In contrast, brain responses to angry faces were indistinguishable from the dynamic flowers in ASD and OCD youth, suggesting that angry faces were treated simply as visual stimuli. The ADHD group showed the least impairment across all contrasts in our study. Thus, although all three diagnostic groups shared some alterations in dynamic face processing, there were also some distinct patterns reflecting specific aspects of emotional processing within each disorder.

The significant overlap we report across disorders supports a growing literature that suggests that the neurobiological susceptibility in NDDs needs understanding beyond traditional diagnosis-based categories. Alternative approaches, such as subgrouping by data-driven factors based on dimensional measures have been attempted, but to subgroup NDDs into neurobiologically homogeneous groups is still very challenging. A deep-and-big data approach considering multiple dimensions, and their interactions, engaged in human cognitive processes may be necessary to understand shared neurobiology.

Lastly, we note that only the primary diagnosis in NDDs was accounted for in the current study, and the Supplemental Information revealed that a significant portion of children had a co-occurring diagnosis. Thus, the results and discussion should be considered in light of this further evidence of overlap in the NDDs. The purpose of this study, however, was to determine if shared mechanisms underlay socio-emotional difficulties commonly existing in the NDDs. Our results demonstrated that all three NDD groups shared alterations in processing dynamic emotional faces compared to their TD peers, and suggested that these groups of children need to be investigated together in a single cohort to identify biologically homogeneous groups above and beyond their diagnoses.

References

Collin, L., Bindra, J., Raju, M., Gillberg, C. & Minnis, H. Facial emotion recognition in child psychiatry: a systematic review. Res. Dev. Disabil. 34, 1505–1520 (2013).

Spezio, M. L., Adolphs, R., Hurley, R. S. E. & Piven, J. Abnormal use of facial information in high-functioning autism. J. Autism Dev. Disord. 37, 929–939 (2006).

Dickstein, D. P. & Castellanos, F. X. Face processing in attention deficit/hyperactivity disorder. Behav. Neurosci. Atten. Deficit Hyperact. Disord. Its Treat. 9, 219–237 (2011).

Uekermann, J. et al. Social cognition in attention-deficit hyperactivity disorder (ADHD). Neurosci. Biobehav. Rev. 34, 734–743 (2010).

Lai, M.-C. et al. Prevalence of co-occurring mental health diagnoses in the autism population: a systematic review and meta-analysis. Lancet Psychiatry 6, 819–829 (2019).

Hollingdale, J., Woodhouse, E., Young, S., Fridman, A. & Mandy, W. Autistic spectrum disorder symptoms in children and adolescents with attention-deficit/hyperactivity disorder: a meta-analytical review - Corrigendum. Psychol. Med. 1, 1–14 (2019).

Ruzzano, L., Borsboom, D. & Geurts, H. M. Repetitive behaviors in autism and obsessive–compulsive disorder: new perspectives from a network analysis. J. Autism Dev. Disord. 45, 192–202 (2014).

Vuilleumier, P. & Schwartz, S. Emotional facial expressions capture attention. Neurology 56, 153–158 (2001).

Palermo, R. & Rhodes, G. Are you always on my mind? A review of how face perception and attention interact. Neuropsychologia 45, 75–92 (2007).

Biele, C. & Grabowska, A. Sex differences in perception of emotion intensity in dynamic and static facial expressions. Exp. Brain Res. 171, 1–6 (2006).

Kilts, C. D., Egan, G., Gideon, D. A., Ely, T. D. & Hoffman, J. M. Dissociable neural pathways are involved in the recognition of emotion in static and dynamic facial expressions. Neuroimage 18, 156–168 (2003).

LaBar, K. S. Dynamic perception of facial affect and identity in the human brain. Cereb. Cortex 13, 1023–1033 (2003).

Sato, W., Kochiyama, T., Yoshikawa, S., Naito, E. & Matsumura, M. Enhanced neural activity in response to dynamic facial expressions of emotion: an fMRI study. Cogn. Brain Res. 20, 81–91 (2004).

Trautmann, S. A., Fehr, T. & Herrmann, M. Emotions in motion: dynamic compared to static facial expressions of disgust and happiness reveal more widespread emotion-specific activations. Brain Res. 1284, 100–115 (2009).

Harwood, N. K., Hall, L. J. & Shinkfield, A. J. Recognition of facial emotional expressions from moving and static displays by individuals with mental retardation. Am. J. Ment. Retard. 104, 270 (1999).

Wehrle, T., Kaiser, S., Schmidt, S. & Scherer, K. R. Studying the dynamics of emotional expression using synthesized facial muscle movements. J. Pers. Soc. Psychol. 78, 105–119 (2000).

Carter, E. J. & Pelphrey, K. A. Friend or foe? Brain systems involved in the perception of dynamic signals of menacing and friendly social approaches. Soc. Neurosci. 3, 151–163 (2008).

Haxby, J. V., Hoffman, E. A. & Gobbini, M. I. The distributed human neural system for face perception. Trends Cogn. Sci. 4, 223–233 (2000).

Adolphs, R. Neural systems for recognizing emotion. Curr. Opin. Neurobiol. 12, 169–177 (2002).

Vuilleumier, P. & Pourtois, G. Distributed and interactive brain mechanisms during emotion face perception: evidence from functional neuroimaging. Neuropsychologia 45, 174–194 (2007).

Allison, T., Puce, A. & McCarthy, G. Social perception from visual cues: role of the STS region. Trends Cogn. Sci. 4, 267–278 (2000).

Arsalidou, M., Morris, D. & Taylor, M. J. Converging evidence for the advantage of dynamic facial expressions. Brain Topogr. 24, 149–163 (2011).

Kanner, L. Autistic disturbances of affective contact. Nerv. Child 2, 217–250 (1943).

Baron-Cohen, S. E., Tager-Flusberg, H. E. & Cohen, D. J. J. (Eds). Understanding Other Minds: Perspectives from Autism (Oxford University Press, New York, NY, 1994).

Celani, G., Battacchi, M. W. & Arcidiacono, L. The understanding of the emotional meaning of facial expressions in people with autism. J. Autism Dev. Disord. 29, 57–66 (1999).

Weeks, S. J. & Hobson, R. P. The salience of facial expression for autistic children. J. Child Psychol. Psychiatry 28, 137–152 (1987).

Pierce, K., Müller, R.-A., Ambrose, J., Allen, G. & Courchesne, E. Face processing occurs outside the fusiform ‘face area’ in autism: evidence from functional MRI. Brain 124, 2059–2073 (2001).

Kleinhans, N. M. et al. Reduced neural habituation in the Amygdala and social impairments in autism spectrum disorders. Am. J. Psychiatry 166, 467–475 (2009).

Lombardo, M. V., Chakrabarti, B. & Baron-Cohen, S. The Amygdala in autism: not adapting to faces? Am. J. Psychiatry 166, 395–397 (2009).

Enticott, P. G. et al. Emotion recognition of static and dynamic faces in autism spectrum disorder. Cogn. Emot. 28, 1110–1118 (2014).

Zane, E. et al. Motion-capture patterns of voluntarily mimicked dynamic facial expressions in children and adolescents with and without ASD. J. Autism Dev. Disord. 49, 1062–1079 (2018).

Law Smith, M. J., Montagne, B., Perrett, D. I., Gill, M. & Gallagher, L. Detecting subtle facial emotion recognition deficits in high-functioning Autism using dynamic stimuli of varying intensities. Neuropsychologia 48, 2777–2781 (2010).

Weiss, E. M., Rominger, C., Hofer, E., Fink, A. & Papousek, I. Less differentiated facial responses to naturalistic films of another person’s emotional expressions in adolescents and adults with high-functioning Autism Spectrum Disorder. Prog. Neuro-Psychopharmacol. Biol. Psychiatry 89, 341–346 (2019).

Kliemann, D. et al. Cortical responses to dynamic emotional facial expressions generalize across stimuli, and are sensitive to task-relevance, in adults with and without Autism. Cortex 103, 24–43 (2018).

Sprengelmeyer, R. et al. Disgust implicated in obsessive–compulsive disorder. Proc. R. Soc. Lond. Ser. B Biol. Sci. 264, 1767–1773 (1997).

Corcoran, K. M., Woody, S. R. & Tolin, D. F. Recognition of facial expressions in obsessive–compulsive disorder. J. Anxiety Disord. 22, 56–66 (2008).

Daros, A. R., Zakzanis, K. K. & Rector, N. A. A quantitative analysis of facial emotion recognition in obsessive–compulsive disorder. Psychiatry Res. 215, 514–521 (2014).

Kang, J. I., Namkoong, K., Yoo, S. W., Jhung, K. & Kim, S. J. Abnormalities of emotional awareness and perception in patients with obsessive–compulsive disorder. J. Affect. Disord. 141, 286–293 (2012).

Aigner, M. et al. Cognitive and emotion recognition deficits in obsessive–compulsive disorder. Psychiatry Res. 149, 121–128 (2007).

Cardoner, N. et al. Enhanced brain responsiveness during active emotional face processing in obsessive compulsive disorder. World J. Biol. Psychiatry 12, 349–363 (2011).

Cannistraro, P. A. et al. Amygdala responses to human faces in obsessive-compulsive disorder. Biol. Psychiatry 56, 916–920 (2004).

Britton, J. C. et al. Amygdala activation in response to facial expressions in pediatric obsessive-compulsive disorder. Depress. Anxiety 27, 643–651 (2010).

Polanczyk, G. & Jensen, P. Epidemiologic considerations in attention deficit hyperactivity disorder: a review and update. Child Adolesc. Psychiatr. Clin. N. Am. 17, 245–260 (2008).

Castellanos, F. X., Sonuga-Barke, E. J. S., Milham, M. P. & Tannock, R. Characterizing cognition in ADHD: beyond executive dysfunction. Trends Cogn. Sci. 10, 117–123 (2006).

Barkley, R. A. & Fischer, M. The unique contribution of emotional impulsiveness to impairment in major life activities in hyperactive children as adults. J. Am. Acad. Child Adolesc. Psychiatry 49, 503–513 (2010).

Martel, M. M. & Nigg, J. T. Child ADHD and personality/temperament traits of reactive and effortful control, resiliency, and emotionality. J. Child Psychol. Psychiatry 47, 1175–1183 (2006).

Yuill, N. & Lyon, J. Selective difficulty in recognising facial expressions of emotion in boys with ADHD. Eur. Child Adolesc. Psychiatry 16, 398–404 (2007).

Kats-Gold, I., Besser, A. & Priel, B. The role of simple emotion recognition skills among school aged boys at risk of ADHD. J. Abnorm. Child Psychol. 35, 363–378 (2007).

Jusyte, A., Gulewitsch, M. D. & Schönenberg, M. Recognition of peer emotions in children with ADHD: evidence from an animated facial expressions task. Psychiatry Res. 258, 351–357 (2017).

Cox, R. W. AFNI: software for analysis and visualization of functional magnetic resonance neuroimages. Comput. Biomed. Res. 29, 162–173 (1996).

Cox, R. W. & Hyde, J. S. Software tools for analysis and visualization of fMRI data. NMR Biomed. 10, 171–178 (1997).

Jenkinson, M., Beckmann, C. F., Behrens, T. E. J., Woolrich, M. W. & Smith, S. M. FSL. Neuroimage 62, 782–790 (2012).

Woolrich, M. W. et al. Bayesian analysis of neuroimaging data in FSL. Neuroimage 45, S173–S186 (2009).

Power, J. D., Barnes, K. A., Snyder, A. Z., Schlaggar, B. L. & Petersen, S. E. Spurious but systematic correlations in functional connectivity MRI networks arise from subject motion. Neuroimage 59, 2142–2154 (2012).

Siegel, J. S. et al. Data quality influences observed links between functional connectivity and behavior. Cereb. Cortex 27, 4492–4502 (2016).

Woolrich, M. W., Ripley, B. D., Brady, M. & Smith, S. M. Temporal autocorrelation in univariate linear modeling of FMRI data. Neuroimage 14, 1370–1386 (2001).

Greve, D. N. & Fischl, B. Accurate and robust brain image alignment using boundary-based registration. Neuroimage 48, 63–72 (2009).

Marascuilo, L. A. & McSweeney, M. Nonparametric post hoc comparisons for trend. Psychol. Bull. 67, 401–412 (1967).

Woolrich, M. W., Behrens, T. E. J. & Smith, S. M. Constrained linear basis sets for HRF modelling using Variational Bayes. Neuroimage 21, 1748–1761 (2004).

Leppänen, J. M. & Nelson, C. A. Tuning the developing brain to social signals of emotions. Nat. Rev. Neurosci. 10, 37–47 (2009).

Haist, F. & Anzures, G. Functional development of the brain’s face-processing system. Wiley Interdiscip. Rev. Cogn. Sci. 8, e1423 (2017).

Uddin, L. Q. Salience processing and insular cortical function and dysfunction. Nat. Rev. Neurosci. 16, 55–61 (2014).

Delbruck, E., Yang, M., Yassine, A. & Grossman, E. D. Functional connectivity in ASD: atypical pathways in brain networks supporting action observation and joint attention. Brain Res. 1706, 157–165 (2019).

Thorsen, A. L. et al. Emotional processing in obsessive-compulsive disorder: a systematic review and meta-analysis of 25 functional neuroimaging studies. Biol. Psychiatry Cogn. Neurosci. Neuroimaging 3, 563–571 (2018).

Kwon, J. S. et al. Neural correlates of clinical symptoms and cognitive dysfunctions in obsessive–compulsive disorder. Psychiatry Res. Neuroimaging 122, 37–47 (2003).

Silani, G., Lamm, C., Ruff, C. C. & Singer, T. Right supramarginal gyrus is crucial to overcome emotional egocentricity bias in social judgments. J. Neurosci. 33, 15466–15476 (2013).

Hoffmann, F., Koehne, S., Steinbeis, N., Dziobek, I. & Singer, T. Preserved self-other distinction during empathy in autism is linked to network integrity of right supramarginal gyrus. J. Autism Dev. Disord. 46, 637–648 (2015).

Batty, M. & Taylor, M. J. Early processing of the six basic facial emotional expressions. Cogn. Brain Res. 17, 613–620 (2003).

Zhang, Y., Padmanabhan, A., Gross, J. J. & Menon, V. Development of human emotion circuits investigated using a big-data analytic approach: stability, reliability, and robustness. J. Neurosci. 39, 7155–7172 (2019).

Kuusikko, S. et al. Emotion recognition in children and adolescents with autism spectrum disorders. J. Autism Dev. Disord. 39, 938–945 (2009).

Bal, E. et al. Emotion recognition in children with autism spectrum disorders: relations to eye gaze and autonomic state. J. Autism Dev. Disord. 40, 358–370 (2010).

Wright, B. et al. Emotion recognition in faces and the use of visual context in young people with high-functioning autism spectrum disorders. Autism 12, 607–626 (2008).

Leung, R. C., Ye, A. X., Wong, S. M., Taylor, M. J. & Doesburg, S. M. Reduced beta connectivity during emotional face processing in adolescents with autism. Mol. Autism https://doi.org/10.1186/2040-2392-5-51 (2014).

García-García, I. et al. Neural processing of negative emotional stimuli and the influence of age, sex and task-related characteristics. Neurosci. Biobehav. Rev. 68, 773–793 (2016).

Thomas, L. A., De Bellis, M. D., Graham, R. & LaBar, K. S. Development of emotional facial recognition in late childhood and adolescence. Dev. Sci. 10, 547–558 (2007).

Fusar-Poli, P. et al. Laterality effect on emotional faces processing: ALE meta-analysis of evidence. Neurosci. Lett. 452, 262–267 (2009).

Lane, R. D. et al. Neuroanatomical correlates of pleasant and unpleasant emotion. Neuropsychologia 35, 1437–1444 (1997).

Longe, O. et al. Having a word with yourself: neural correlates of self-criticism and self-reassurance. Neuroimage 49, 1849–1856 (2010).

Kerestes, R. et al. Abnormal prefrontal activity subserving attentional control of emotion in remitted depressed patients during a working memory task with emotional distracters. Psychol. Med. 42, 29–40 (2011).

Batty, M. & Taylor, M. J. The development of emotional face processing during childhood. Dev. Sci. 9, 207–220 (2006).

Rodger, H., Vizioli, L., Ouyang, X. & Caldara, R. Mapping the development of facial expression recognition. Dev. Sci. 18, 926–939 (2015).

Widen, S. C. & Russell, J. A. Young children’s understanding of other’s emotions. In: Handbook of Emotions 3rd edn (Eds Lewis, M., Haviland-Jones, J. M. & Barrett, L. F.) 348–363 (Guilford Press, 2008).

Farran, E. K., Branson, A. & King, B. J. Visual search for basic emotional expressions in autism; impaired processing of anger, fear and sadness, but a typical happy face advantage. Res. Autism Spectr. Disord. 5, 455–462 (2011).

Lozier, L. M., Vanmeter, J. W. & Marsh, A. A. Impairments in facial affect recognition associated with autism spectrum disorders: a meta-analysis. Dev. Psychopathol. 26, 933–945 (2014).

Acknowledgements

We would like to thank all participants and their families who took part in the study. We also thank all those involved in participant recruitment and data collection, including Julie Lu, Tammy Rayner and Ruth Weiss for their valuable support and contributions. Funding was provided by the Ontario Brain Institute (IDS-I l-02).

Ethics declarations

Conflict of interest

E.A. has served as a consultant to Roche and Quadrant Therapeutics. She has received in-kind support from AMO Pharma, royalties from APPI and Springer, and editorial honoraria from Wiley. She also holds a patent for the device “Anxiety Meter”. R.J.S. has consulted with Highland Therapeutics, Eli Lilly and Co., and Purdue Pharma. He has a commercial interest in a cognitive rehabilitation software company, “eHave”. The remaining authors (M.M.V., E.J.C., C.H., P.A., J.P.L. and M.J.T.) declare no biomedical financial interests or potential conflicts of interest.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Vandewouw, M.M., Choi, E., Hammill, C. et al. Emotional face processing across neurodevelopmental disorders: a dynamic faces study in children with autism spectrum disorder, attention deficit hyperactivity disorder and obsessive-compulsive disorder. Transl Psychiatry 10, 375 (2020). https://doi.org/10.1038/s41398-020-01063-2
