Key Points

- Precision medicine describes the definition of disease at a higher resolution by genomic and other technologies to enable more precise targeting of subgroups of disease with new therapies. Prominent examples include cystic fibrosis and cancer.

- Clinical genomics exists at the intersection of sequencing-led discovery genetics in population cohorts and historical low-throughput approaches to genetic diagnosis in patients. As a result of the different aims of these two endeavours, technologies and algorithms that have been developed for discovery genomics need to be optimized before application to clinical medicine.

- Areas of need include the improvement of sequencing technologies. Current short-read approaches are limited in areas of the genome of low complexity (such as repeats), regions of high GC content, regions that are highly polymorphic or that include small-scale (indel) or large-scale (structural variant) disruption of the open reading frame.

- Possible routes to such improvements include long-read sequencing, improved algorithms for indel and structural variant calling, graph reference approaches and standardization of nomenclature.

- One area that requires specific attention is the quality and coverage of sequence data for clinical genetic testing. In general, the emerging consensus standard is that the coding regions of interest (plus two base pairs on either side) should be covered by 20 high-quality (Q20) reads that are uniquely mapped.

- To improve assertions of the disease causality of genetic variants, data sharing of both phenotypic and genotypic information across communities will be required. Projects such as ClinGen and its associated database ClinVar represent an important step in this direction. Large-scale population sequencing projects such as the UK Biobank and the US Precision Medicine Initiative Cohort Program will enhance our understanding of population-scale genetic variation in a way that optimizes our care of the individual with genetic disease.
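The coverage standard quoted in the Key Points (coding regions plus two base pairs on either side, covered by 20 uniquely mapped Q20 reads) can be illustrated with a minimal sketch. Everything named here is an assumption made for illustration and is not taken from the article: the `Read` record, the `uniquely_mapped` flag (assumed to be set upstream by an aligner), ungapped alignments, and the reading of the standard as "at least 20 qualifying reads at every padded position". The only fixed fact used is the Phred definition, Q = -10 log10(p), under which Q20 corresponds to a 1% error probability per base.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Read:
    start: int              # 0-based position of the first aligned base
    base_quals: List[int]   # Phred quality per aligned base (ungapped, by assumption)
    uniquely_mapped: bool   # hypothetical flag supplied by the aligner


def phred_to_error_prob(q: int) -> float:
    """Convert a Phred score to an error probability: p = 10^(-Q/10)."""
    return 10 ** (-q / 10)


def min_depth(reads, region_start, region_end, pad=2, min_q=20):
    """Lowest number of qualifying reads over [region_start - pad, region_end + pad).

    A read counts at a position only if it is uniquely mapped and its
    base quality at that position is at least min_q.
    """
    lo, hi = region_start - pad, region_end + pad
    depth = [0] * (hi - lo)
    for read in reads:
        if not read.uniquely_mapped:
            continue
        for offset, q in enumerate(read.base_quals):
            pos = read.start + offset
            if lo <= pos < hi and q >= min_q:
                depth[pos - lo] += 1
    return min(depth)


def meets_standard(reads, region_start, region_end, min_reads=20):
    """True if every padded position has at least min_reads qualifying reads."""
    return min_depth(reads, region_start, region_end) >= min_reads


# Usage: twenty identical reads spanning a padded 10-base coding region.
example_reads = [Read(8, [30] * 14, True) for _ in range(20)]
print(meets_standard(example_reads, 10, 20))
```

Taking the minimum depth across the padded region, rather than the mean, reflects the clinical concern that a single poorly covered base can hide a pathogenic variant; a production pipeline would work from aligned-read files rather than in-memory records.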

Abstract

There is great potential for genome sequencing to enhance patient care through improved diagnostic sensitivity and more precise therapeutic targeting. To maximize this potential, genomics strategies that have been developed for genetic discovery — including DNA-sequencing technologies and analysis algorithms — need to be adapted to fit clinical needs. This will require the optimization of alignment algorithms, attention to quality-coverage metrics, tailored solutions for paralogous or low-complexity areas of the genome, and the adoption of consensus standards for variant calling and interpretation. Global sharing of this more accurate genotypic and phenotypic data will accelerate the determination of causality for novel genes or variants. Thus, a deeper understanding of disease will be realized that will allow its targeting with much greater therapeutic precision.

References

Collins, F. S. Implications of the Human Genome Project for medical science. JAMA 285, 540 (2001).

Ng, S. B. et al. Targeted capture and massively parallel sequencing of 12 human exomes. Nature 461, 272–276 (2009).

Choi, M. et al. Genetic diagnosis by whole exome capture and massively parallel DNA sequencing. Proc. Natl Acad. Sci. USA 106, 19096–19101 (2009).References 2 and 3 were among the earliest studies to show that exome sequencing could be used to diagnose a genetic condition.

Ashley, E. A. et al. Clinical assessment incorporating a personal genome. Lancet 375, 1525–1535 (2010).This paper presented a framework for clinical whole-genome interpretation and described the earliest example of whole-genome-based personalized medicine.

Worthey, E. A. et al. Making a definitive diagnosis: successful clinical application of whole exome sequencing in a child with intractable inflammatory bowel disease. Genet. Med. 13, 255–262 (2011).This paper describes a diagnosis made by exome sequencing that led to a dramatic therapeutic response in a young boy.

Bainbridge, M. N. et al. Whole-genome sequencing for optimized patient management. Sci. Transl. Med. 3, 87re3 (2011).

Johnson, J. A et al. Clopidogrel: a case for indication-specific pharmacogenetics. Clin. Pharmacol. Ther. 91, 774–776 (2012).

Dewey, F. E. et al. Clinical interpretation and implications of whole-genome sequencing. JAMA 311, 1035–1045 (2014).

Vassy, J. L. et al. The MedSeq Project: a randomized trial of integrating whole genome sequencing into clinical medicine. Trials 15, 85 (2014).

Manolio, T. A. & Green, E. D. Leading the way to genomic medicine. Am. J. Med. Genet. C. Semin. Med. Genet. 166C, 1–7 (2014).

Green, E. D., Guyer, M. S. & Human, N. Charting a course for genomic medicine from base pairs to bedside. Nature 470, 204–213 (2011).

Bryc, K., Durand, E. Y., Macpherson, J. M., Reich, D. & Mountain, J. L. The genetic ancestry of African Americans, Latinos, and European Americans across the United States. Am. J. Hum. Genet. 96, 37–53 (2015).

Collins, F. S. & Varmus, H. A. New initiative on precision medicine. N. Engl. J. Med. 372, 793–795 (2015).

Ashley, E. A. The Precision Medicine Initiative: a new national effort. JAMA 313, 2119–2120 (2015).

National Research Council (US) Committee on a Framework for Developing a New Taxonomy of Disease, 2011. Toward Precision Medicine: Building a Knowledge Network for Biomedical Research and a New Taxonomy of Disease (National Academies Press, 2011).

Lek, M. et al. Analysis of protein-coding genetic variation in 60,706 humans. Nature http://dx.doi.org/10.1038/nature19057 (in the press) (2016).This paper describes the Exome Aggregation Consortium.

Homburger, J. R. et al. Multidimensional structure-function relationships in human β-cardiac myosin from population-scale genetic variation. Proc. Natl Acad. Sci. USA 113, 6701–6706 (2016).

Waggott, D. et al. The next generation precision medical record — a framework for integrating genomes and wearable sensors with medical records. Preprint at bioRxiv http://dx.doi.org/10.1101/039651 (2016).

Ramsey, B. W. et al. A CFTR potentiator in patients with cystic fibrosis and the G551D mutation. N. Engl. J. Med. 365, 1663–1672 (2011).A paper describing precision therapy for cystic fibrosis.

Brodlie, M., Haq, I. J., Roberts, K. & Elborn, J. S. Targeted therapies to improve CFTR function in cystic fibrosis. Genome Med. 7, 101 (2015).

Rehman, A., Baloch, N. U.-A. & Janahi, I. A. Lumacaftor-Ivacaftor in patients with cystic fibrosis homozygous for Phe508del CFTR. N. Engl. J. Med. 373, 1783 (2015).

Brewington, J. J., McPhail, G. L. & Clancy, J. P. Lumacaftor alone and combined with ivacaftor: preclinical and clinical trial experience of F508del CFTR correction. Expert Rev. Respir. Med. 10, 5–17 (2016).

Lindeman, N. I. et al. Molecular testing guideline for selection of lung cancer patients for EGFR and ALK tyrosine kinase inhibitors. J. Thorac. Oncol. 8, 823–859 (2013).

Blumenthal, G. Next-generation sequencing in oncology in the era of precision medicine. JAMA Oncol. 2, 13–14 (2015).

Sosman, J. A. et al. Survival in BRAF V600–mutant advanced melanoma treated with vemurafenib. N. Engl. J. Med. 366, 707–714 (2012).

Sharma, P. & Allison, J. P. Immune checkpoint targeting in cancer therapy: toward combination strategies with curative potential. Cell 161, 205–214 (2015).

Linnemann, C. et al. High-throughput epitope discovery reveals frequent recognition of neo-antigens by CD4+ T cells in human melanoma. Nat. Med. 21, 81–85 (2015).

Schadendorf, D. et al. Pooled analysis of long-term survival data from phase II and phase III trials of ipilimumab in unresectable or metastatic melanoma. J. Clin. Oncol. 33, 1889–1894 (2015).

Lawrence, M. S. et al. Comprehensive genomic characterization of head and neck squamous cell carcinomas. Nature 517, 576–582 (2015).

Hackl, H., Charoentong, P., Finotello, F. & Trajanoski, Z. Computational genomics tools for dissecting tumour–immune cell interactions. Nat. Rev. Genet. 17, 441–458 (2016).

Gubin, M. M. et al. Checkpoint blockade cancer immunotherapy targets tumour-specific mutant antigens. Nature 515, 577–581 (2014).An important paper describing checkpoint blockade.

Newman, A. M. et al. An ultrasensitive method for quantitating circulating tumor DNA with broad patient coverage. Nat. Med. 20, 548–554 (2014).An early paper describing the concept of 'liquid biopsy'.

Klein, T. E. et al. Estimation of the warfarin dose with clinical and pharmacogenetic data. N. Engl. J. Med. 360, 753–764 (2009).A key paper describing warfarin pharmacogenomics.

Eckman, M. H., Rosand, J., Greenberg, S. M. & Gage, B. F. Cost-effectiveness of using pharmacogenetic information in warfarin dosing for patients with nonvalvular atrial fibrillation. Ann. Intern. Med. 150, 73–83 (2009).

Epstein, R. S. et al. Warfarin genotyping reduces hospitalization rates results from the MM-WES (Medco-Mayo Warfarin Effectiveness study). J. Am. Coll. Cardiol. 55, 2804–2812 (2010).

Mega, J. L. et al. Reduced-function CYP2C19 genotype and risk of adverse clinical outcomes among patients treated with clopidogrel predominantly for PCI: a meta-analysis. JAMA 304, 1821–1830 (2010).

Mega, J. L. et al. Genetic variants in ABCB1 and CYP2C19 and cardiovascular outcomes after treatment with clopidogrel and prasugrel in the TRITON–TIMI 38 trial: a pharmacogenetic analysis. Lancet 376, 1312–1319 (2010).

Paré, G. Effects of CYP2C19 genotype on outcomes of clopidogrel treatment. N. Engl. J. Med. 363, 1704–1714 (2010).

Nissen, S. Pharmacogenomics and clopidogrel: irrational exuberance? J. Am. Med. Assoc. 306, 2011–2012 (2012).

Johnson, J. A. et al. Clopidogrel: a case for indication-specific pharmacogenetics. Clin. Pharmacol. Ther. 91, 774–776 (2012).

Roberts, J. D. et al. Point-of-care genetic testing for personalisation of antiplatelet treatment (RAPID GENE): a prospective, randomised, proof-of-concept trial. Lancet 379, 1705–1711 (2012).

Wiviott, S. D. et al. Prasugrel versus clopidogrel in patients with acute coronary syndromes. N. Engl. J. Med. 357, 2001–2015 (2007).

Dunnenberger, H. M. et al. Preemptive clinical pharmacogenetics implementation: current programs in five US medical centers. Annu. Rev. Pharmacol. Toxicol. 55, 89–106 (2015).

Altman, R. B. PharmGKB: a logical home for knowledge relating genotype to drug response phenotype. Nat. Genet. 39, 426 (2007).

Klein, T. E. et al. Integrating genotype and phenotype information: an overview of the PharmGKB project: an overview of the PharmGKB project. Pharmacogenomics J. 1, 167–170 (2001).

Caudle, K. E. et al. Incorporation of pharmacogenomics into routine clinical practice: the Clinical Pharmacogenetics Implementation Consortium (CPIC) guideline development process. Curr. Drug Metab. 15, 209–217 (2014).

Herr, T. M. et al. Practical considerations in genomic decision support: The eMERGE experience. J. Pathol. Inform. 6, 50 (2015).

Obama, B. S.3822 — 109th Congress: Genomics and Personalized Medicine Act of 2006. Congress.gov https://www.congress.gov/bill/109th-congress/senate-bill/3822 (2006).

Collins, F. S. The case for a US prospective cohort study of genes and environment. Nature 429, 475–477 (2004).

Collins, R. What makes UK Biobank special? Lancet 379, 1173–1174 (2012).

Stanford Medicine MyHeart Counts iPhone Application. https://med.stanford.edu/myheartcounts.html (2016)

The International HapMap Consortium. A haplotype map of the human genome. Nature 437, 1299–1320 (2005).

1000 Genomes Project Consortium et al. An integrated map of genetic variation from 1,092 human genomes. Nature 491, 56–65 (2012).

Manolio, T. A. et al. Finding the missing heritability of complex diseases. Nature 461, 747–753 (2009).

Henderson, L. B. et al. The impact of chromosomal microarray on clinical management: a retrospective analysis. Genet. Med. 16, 1–8 (2014).

Gahl, W. A. et al. The National Institutes of Health Undiagnosed Diseases Program: insights into rare diseases. Genet. Med. 14, 51–59 (2012).

Gahl, W. A., Wise, A. L. & Ashley, E. A. The Undiagnosed Diseases Network of the National Institutes of Health: a national extension. JAMA 314, 1797–1798 (2015).

MacArthur, D. G. et al. Guidelines for investigating causality of sequence variants in human disease. Nature 508, 469–476 (2013).Detailed guidance on how to assess causality of variants for rare disease.

Biesecker, L. G. & Spinner, N. B. A genomic view of mosaicism and human disease. Nat. Rev. Genet. 14, 307–320 (2013).

Church, D. M. et al. Extending reference assembly models. Genome Biol. 16, 13 (2015).

Genome Reference Consortium. Human Genome Assembly Data. GRC http://www.ncbi.nlm.nih.gov/projects/genome/assembly/grc/human/data (2015).

Ezkurdia, I. et al. Multiple evidence strands suggest that there may be as few as 19 000 human protein-coding genes. Hum. Mol. Genet. 23, 5866–5878 (2014).

Platzer, M. The human genome and its upcoming dynamics. Genome Dyn. 2, 1–16 (2006).

Pruitt, K. D., Tatusova, T., Brown, G. R. & Maglott, D. R. NCBI Reference Sequences (RefSeq): current status, new features and genome annotation policy. Nucleic Acids Res. 40, D130–D135 (2012).

López-Flores, I . & Garrido-Ramos, M. A. The repetitive DNA content of eukaryotic genomes. Genome Dyn. 7, 1–28 (2012).

National Center for Biotechnology Information. NCBI Homo sapiens annotation release 107. NCBI http://www.ncbi.nlm.nih.gov/genome/annotation_euk/Homo_sapiens/107 (2015).

Goldfeder, R. et al. Medical implications of technical accuracy in clinical genome sequencing. Genome Med. 8, 1–12 (2016).

Gymrek, M. et al. Abundant contribution of short tandem repeats to gene expression variation in humans. Nat. Genet. 48, 22–29 (2016).

Budworth, H. & McMurray, C. T. A brief history of triplet repeat diseases. Methods Mol. Biol. 1010, 3–17 (2013).

Iyer, R. R., Pluciennik, A., Napierala, M. & Wells, R. D. DNA triplet repeat expansion and mismatch repair. Annu. Rev. Biochem. 84, 199–226 (2015).

Rufini, S. et al. Stevens–Johnson syndrome and toxic epidermal necrolysis: an update on pharmacogenetics studies in drug-induced severe skin reaction. Pharmacogenomics 16, 1989–2002 (2015).

Chung, W.-H. et al. Medical genetics: a marker for Stevens–Johnson syndrome. Nature 428, 486 (2004).

Mallal, S. et al. Association between presence of HLA-B*5701, HLA-DR7, and HLA-DQ3 and hypersensitivity to HIV-1 reverse-transcriptase inhibitor abacavir. Lancet 359, 727–732 (2002).

Dilthey, A., Cox, C., Iqbal, Z., Nelson, M. R. & McVean, G. Improved genome inference in the MHC using a population reference graph. Nat. Genet. 47, 682–688 (2015).

Tewhey, R., Bansal, V., Torkamani, A., Topol, E. J. & Schork, N. J. The importance of phase information for human genomics. Nat. Rev. Genet. 12, 215–223 (2011).

Rosenfeld, J. A., Malhotra, A. K. & Lencz, T. Novel multi-nucleotide polymorphisms in the human genome characterized by whole genome and exome sequencing. Nucleic Acids Res. 38, 6102–6111 (2010).

Tilgner, H., Grubert, F., Sharon, D. & Snyder, M. P. Defining a personal, allele-specific, and single-molecule long-read transcriptome. Proc. Natl Acad. Sci. USA 111, 9869–9874 (2014).

Zheng, G. X. et al. Haplotyping germline and cancer genomes with high-throughput linked-read sequencing. Nat. Biotechnol. 34, 303–311 (2016).

Chaisson, M. J. P. et al. Resolving the complexity of the human genome using single-molecule sequencing. Nature 517, 608–611 (2014).

Goodwin, S., McPherson, J. D. & McCombie, W. R. Coming of age: ten years of next-generation sequencing technologies. Nat. Rev. Genet. 17, 333–351 (2016).

Chaisson, M. J. P., Wilson, R. K. & Eichler, E. E. Genetic variation and the de novo assembly of human genomes. Nat. Rev. Genet. 16, 627–640 (2015).

Chakraborty, M., Baldwin-Brown, J. G., Long, A. D. & Emerson, J. J. Contiguous and accurate de novo assembly of metazoan genomes with modest long read coverage. Preprint at bioRxiv http://dx.doi.org/10.1101/029306 (2015).

Huang, Y.-T. & Liao, C.-F. Integration of string and de Bruijn graphs for genome assembly. Bioinformatics 32, 1301–1307 (2016).

Korlach, J. Returning to more finished genomes. Genom. Data 2, 46–48 (2014).

Goodwin, S. et al. Oxford Nanopore sequencing, hybrid error correction, and de novo assembly of a eukaryotic genome. Genome Res. 25, 1750–1756 (2015).

Pendleton, M. et al. Assembly and diploid architecture of an individual human genome via single-molecule technologies. Nat. Methods 12, 780–786 (2015).

Stephens, Z. D. et al. Big Data: astronomical or genomical? PLOS Biol. 13, e1002195 (2015).

Hsi-Yang Fritz, M., Leinonen, R., Cochran, G. & Birney, E. Efficient storage of high throughput DNA sequencing data using reference-based compression. Genome Res. 21, 734–740 (2011).

Ewing, B. & Green, P. Base-calling of automated sequencer traces using phred. II. Error probabilities. Genome Res. 8, 186–194 (1998).

Ewing, B., Hillier, L., Wendl, M. C. & Green, P. Base-calling of automated sequencer traces using phred. I. Accuracy assessment. Genome Res. 8, 175–185 (1998).

Malysa, G. et al. QVZ: lossy compression of quality values. Bioinformatics 31, 3122–3129 (2015).

Ochoa, I., Hernaez, M., Goldfeder, R., Weissman, T. & Ashley, E. Effect of lossy compression of quality scores on variant calling. Brief. Bioinform. http://dx.doi.org/10.1093/bib/bbw011 (2016).

Yu, Y. W., Yorukoglu, D., Peng, J. & Berger, B. Quality score compression improves genotyping accuracy. Nat. Biotechnol. 33, 240–243 (2015).

Iqbal, Z., Caccamo, M., Turner, I., Flicek, P. & McVean, G. De novo assembly and genotyping of variants using colored de Bruijn graphs. Nat. Genet. 44, 226–232 (2012).

Dewey, F. E. et al. Phased whole-genome genetic risk in a family quartet using a major allele reference sequence. PLoS Genet. 7, e1002280 (2011).

Needleman, S. B. & Wunsch, C. D. A general method applicable to search for similarities in amino acid sequence of two proteins. J. Mol. Biol. 48, 443–453 (1970).

Smith, T. F. & Waterman, M. S. Identification of common molecular subsequences. J. Mol. Biol. 147, 195–197 (1981).

Priest, J. R. et al. De novo and rare variants at multiple loci support the oligogenic origins of atrioventricular septal heart defects. PLoS Genet. 12, e1005963 (2016).

Korpar, M. & Šikic, M. SW#–GPU-enabled exact alignments on genome scale. Bioinformatics 29, 2494–2495 (2013).

Langmead, B. & Salzberg, S. L. Fast gapped-read alignment with Bowtie 2. Nat. Methods 9, 357–359 (2012).

Li, H. & Durbin, R. Fast and accurate short read alignment with Burrows–Wheeler transform. Bioinformatics 25, 1754–1760 (2009).

Manber, U. & Myers, G. Suffix arrays: a new method for on-line string searches. SIAM J. Comput. 22, 935–948 (1993).

Saunders, C. J. et al. Rapid whole-genome sequencing for genetic disease diagnosis in neonatal intensive care units. Sci. Transl. Med. 4, 154ra135 (2012).

Priest, J. R. et al. Molecular diagnosis of long QT syndrome at 10 days of life by rapid whole genome sequencing. Heart Rhythm 11, 1707–1713 (2014).

Dewey, F. E. E. et al. Sequence to medical phenotypes (STMP): a clinical research tool for interpretation of next generation sequencing data. PLoS Genet. 11, e1005496 (2015).

Kalyana-Sundaram, S. et al. Expressed pseudogenes in the transcriptional landscape of human cancers. Cell 149, 1622–1634 (2012).

Poliseno, L. et al. A coding-independent function of gene and pseudogene mRNAs regulates tumour biology. Nature 465, 1033–1038 (2010).

Bauer, K. A. The thrombophilias: well-defined risk factors with uncertain therapeutic implications. Ann. Intern. Med. 135, 367–373 (2001).

Lam, H. Y. K. et al. Performance comparison of whole-genome sequencing platforms. Nat. Biotechnol. 30, 78–82 (2012).

Zook, J. M. et al. Integrating human sequence data sets provides a resource of benchmark SNP and indel genotype calls. Nat. Biotechnol. 32, 246–251 (2014).The US National Institute for Standards and Technology paper providing a consensus resource for the community for one genome.

Krier, J., Barfield, R., Green, R. C. & Kraft, P. Reclassification of genetic-based risk predictions as GWAS data accumulate. Genome Med. 8, 20 (2016).

Human Genome Variation Society. Human Genome Variation Society. HGVS http://www.hgvs.org/dblist/glsdb.html (updated 30 May 2016).

Vis, J. K., Vermaat, M., Taschner, P. E. M., Kok, J. N. & Laros, J. F. J. An efficient algorithm for the extraction of HGVS variant descriptions from sequences. Bioinformatics 31, 3751–3757 (2015).

Hart, R. K. et al. A Python package for parsing, validating, mapping and formatting sequence variants using HGVS nomenclature. Bioinformatics 31, 268–270 (2015).

Albers, C. a. et al. Dindel: accurate indel calls from short-read data. Genome Res. 21, 961–973 (2011).

Narzisi, G. et al. Accurate de novo and transmitted indel detection in exome-capture data using microassembly. Nat. Methods 11, 1–7 (2014).

Rimmer, A. et al. Integrating mapping-, assembly- and haplotype-based approaches for calling variants in clinical sequencing applications. Nat. Genet. 46, 1–9 (2014).

Ye, K. et al. Systematic discovery of complex insertions and deletions in human cancers. Nat. Med. 22, 1–10 (2015).

Yang, R., Nelson, A. C., Henzler, C., Thyagarajan, B. & Silverstein, K. A. T. ScanIndel: a hybrid framework for indel detection via gapped alignment, split reads and de novo assembly. Genome Med. 7, 127 (2015).

Huddleston, J. et al. Reconstructing complex regions of genomes using long-read sequencing technology. Genome Res. 24, 688–696 (2014).

Gilissen, C. et al. Genome sequencing identifies major causes of severe intellectual disability. Nature 511, 344–347 (2014).

Xie, C. & Tammi, M. T. CNV-seq, a new method to detect copy number variation using high-throughput sequencing. BMC Bioinformatics 10, 80 (2009).

Retterer, K. et al. Clinical application of whole-exome sequencing across clinical indications. Genet. Med. 18, 696–704 (2016).

Retterer, K. et al. Assessing copy number from exome sequencing and exome array CGH based on CNV spectrum in a large clinical cohort. Genet. Med. 17, 623–629 (2015).

Patwardhan, A. et al. Achieving high-sensitivity for clinical applications using augmented exome sequencing. Genome Med. 7, 71 (2015).

Santani, A. et al. Medical Exome: Towards achieving complete coverage of disease related genes. (Abstract #371) The 64th Annual Meeting of The American Society of Human Genetics, San Diego, California http://www.ashg.org/2014meeting/pdf/2014_ASHG_Meeting_Platform_Abstracts.pdf (18–22 Oct 2014).

Mandelker, D. et al. Navigating highly homologous genes in a molecular diagnostic setting: a resource for clinical next-generation sequencing. Genet. Med. http://dx.doi.org/10.1038/gim.2016.58 (2016).

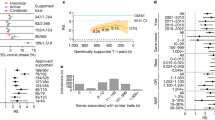

McRae, J. F. et al. Prevalence, phenotype and architecture of developmental disorders caused by de novo mutation. Preprint at bioRxiv http://dx.doi.org/10.1101/049056 (2016).

Goldfeder, R. & Ashley, E. A precision metric for clinical genomic sequencing. Preprint at bioRxiv http://dx.doi.org/10.1101/051490 (2016).

Li, H. On HiSeq X10 Base Quality. http://lh3.github.io/2014/11/03/on-hiseq-x10-base-quality (2014).

Altman, R. B., Khuri, N., Salit, M. & Giacomini, K. M. Unmet needs: Research helps regulators do their jobs. Sci. Transl. Med. 7, 315ps22 (2015).

Kass-Hout, T. & Litwack, D. Advancing precision medicine by enabling a collaborative informatics community. FDA Voice http://blogs.fda.gov/fdavoice/index.php/2015/08/advancing-precision-medicine-by-enabling-a-collaborative-informatics-community (2015).

Pearl, J. Causality (Cambridge Univ. Press, 2009).

Richards, C. S. et al. ACMG recommendations for standards for interpretation and reporting of sequence variations: Revisions 2007. Genet. Med. 10, 294–300 (2008).

Kutalik, Z., Whittaker, J., Waterworth, D., Beckmann, J. S. & Bergmann, S. Novel method to estimate the phenotypic variation explained by genome-wide association studies reveals large fraction of the missing heritability. Genet. Epidemiol. 35, 341–349 (2011).

Wood, A. R. et al. Defining the role of common variation in the genomic and biological architecture of adult human height. Nat. Genet. 46, 1173–1186 (2014).

Rehm, H. L. et al. ClinGen — The Clinical Genome Resource. N. Engl. J. Med. 372, 2235–2242 (2015).A description of the Clinical Genome Resource (ClinGen).

Amendola, L. M. et al. Performance of ACMG-AMP variant-interpretation guidelines among nine laboratories in the clinical sequencing exploratory research consortium. Am. J. Hum. Genet. 98, 1067–1076 (2016).

Caleshu, C. & Ashley, E. Taming the genome. Genome Med. 8, 70 (2016).

Fisher, K. E. et al. Clinical validation and implementation of a targeted next-generation sequencing assay to detect somatic variants in non-small cell lung, melanoma, and gastrointestinal malignancies. J. Mol. Diagn. 18, 299–315 (2016).

Acknowledgements

The author extends his grateful thanks to R. Goldfeder, A. Dainis, M. Grove, D. Church, M.J. Clark, S. Garcia, G. Chandratillake and C. Caleshu for helpful discussion and suggestions on the manuscript.

Ethics declarations

Competing interests

E.A.A. is a co-founder of, and an advisor at, Personalis Inc.

Glossary

- Checkpoint receptors: Receptors that mediate important immune autoinhibitory pathways; examples include programmed cell death 1 (PD1) and cytotoxic T lymphocyte-associated protein 4 (CTLA4).

- Pharmacogenomics: The study and application of the effect of genetic variation on the response to pharmaceuticals.

- Black box warnings: Named for the black border surrounding the text of the warning on the package insert or label of a drug. They detail the safety concerns that are of a more serious nature than those described elsewhere on the package or label. The border is used when a serious adverse event can be caused by the medication or can be prevented by appropriate use of the medication.

- Companion diagnostics: Diagnostic tests that help to direct the appropriateness of a specific drug therapy.

- Linkage analysis: An approach to establish the probability that a given genomic region is associated with a phenotype, usually in an extended pedigree.

- HapMap project: An international consortium aimed at characterizing the haplotype diversity of the human genome.

- Shotgun: In shotgun sequencing, longer DNA fragments are broken into smaller fragments for sequencing using chain termination (Sanger) chemistry.

- Pseudogenes: Copies of a gene that are no longer functional in the same way as the original gene, usually because of deactivating mutations, such as premature stop codons. Pseudogenes can be either processed (derived from retrotransposition of a mature transcript) or non-processed (derived from a DNA duplication event that includes a modification leading to a loss of transcription or translation).

- Segmental duplications: Typically pericentromeric or subtelomeric duplications, concentrated in the Y chromosome, generally tens to hundreds of kilobases in length.

- Short tandem repeats: Microsatellite DNA motifs consisting of repeated 2–6 bp elements, with a median length of 25 bp, that account for 1% of the genome. They predispose to DNA polymerase slippage events and high mutation rates. Recent work suggests an important role in gene expression.

- Transposon-derived repeats: Repeats derived from transposons, which are DNA elements that can change their positions within the genome.

- Paralogy: A paralogue is a gene related to another by duplication. In this Review, the words paralogy and paralogous are used as umbrella terms for areas of the human genome that are identical to each other. Note that paralogues can be formally distinguished from homologues (genes related to one another by descent from a common ancestor) and orthologues (genes related to one another by speciation).

- De novo assembly: Arranging DNA sequence reads in the most likely order of origination without alignment to a reference sequence.

- Structural variant: A region of DNA, usually greater than 500 bases, that differs from a defined reference.

- Lossy compression: A class of data encoding that reduces data size for storage, handling and transmission at the expense of loss of content.

- Lossless compression: A class of data encoding where the original can be perfectly restored from the compressed file.

- Compression heuristic: An approach to compression that is not designed to be optimal but is rather designed to be practical.

- Variant call format (VCF): A file format standard for the cataloguing of genetic variation in one or many genomes.

- Major allele: The most common allele in a given population.

- Mendelian disease: A genetic disease that follows traditionally recognized patterns of simple inheritance, for example, autosomal dominant.

- Parsers: Algorithms with a specific application in translating one terminology to another.

- ClinVar: A curated database of clinically relevant human genetic variation along with the evidence for its disease causality.

- dbSNP: A minimally curated database of single nucleotide human genetic variation.

- Splice dinucleotides: The almost invariant canonical dinucleotides that are crucial for splicing (GT: donor; AG: acceptor).

- Univariate: Depending on only one variable.

- Multivariate: Depending on multiple variables.
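The glossary's distinction between lossless and lossy compression can be shown with a short sketch applied to base-quality scores. The uniform binning used for the lossy step (`bin_quality`, with a bin width of 10) and the example scores are assumptions chosen for simplicity; they are not the method of any tool named in this article. The lossless step uses `zlib` from the Python standard library.

```python
import zlib


def bin_quality(q: int, width: int = 10) -> int:
    """Lossy step (illustrative): replace a Phred score by the floor of its bin."""
    return (q // width) * width


def roundtrip_lossless(data: bytes) -> bytes:
    """Compress and decompress with zlib; the original bytes come back exactly."""
    return zlib.decompress(zlib.compress(data))


# Made-up Phred scores standing in for the quality string of a set of reads.
quals = bytes([37, 38, 12, 25, 40, 33, 2, 19] * 50)

# Lossless: every original score is recovered.
assert roundtrip_lossless(quals) == quals

# Lossy: scores are binned before compression, so the fine detail is gone
# for good; decompression restores only the binned values.
binned = bytes(bin_quality(q) for q in quals)
lossy_restored = roundtrip_lossless(binned)
assert lossy_restored == binned
assert lossy_restored != quals
```

Binning is a simple heuristic in the glossary's sense: it is not designed to be optimal, only practical, and whether the discarded resolution affects variant calling has to be checked empirically.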

Cite this article

Ashley, E. Towards precision medicine. Nat Rev Genet 17, 507–522 (2016). https://doi.org/10.1038/nrg.2016.86

DOI: https://doi.org/10.1038/nrg.2016.86
