Abstract

Cognitive accounts of gambling suggest that the experience of almost winning (so-called ‘near-misses’) encourages continued play and accelerates the development of pathological gambling (PG) in vulnerable individuals. One explanation for this effect is that near-misses signal imminent winning outcomes and heighten reward expectancy, galvanizing further play. Determining the neurochemical processes underlying the drive to gamble could facilitate the development of more effective treatments for PG. With this aim in mind, we evaluated rats' performance on a novel model of slot machine play, a form of gambling in which near-miss events are particularly salient. Subjects responded to a series of three flashing lights, loosely analogous to the wheels of a slot machine, causing the lights to set to ‘on’ or ‘off’. A winning outcome was signaled if all three lights were illuminated. At the end of each trial, rats chose between responding on the ‘collect’ lever, resulting in reward on win trials, but a time penalty on loss trials, or starting a new trial. Rats showed a marked preference for the collect lever when both two and three lights were illuminated, indicating heightened reward expectancy following near-misses similar to wins. Erroneous collect responses were increased by amphetamine and the D2 receptor agonist quinpirole, but not by the D1 receptor agonist SKF 81297 or receptor subtype selective antagonists. These data suggest that dopamine modulates reward expectancy following the experience of almost winning during slot machine play, via activity at D2 receptors, and this may result in an enhancement of the near-miss effect and facilitate further gambling.

INTRODUCTION

People gamble despite being aware that the odds are stacked in the house's favor. This behavior has resulted in a highly profitable gambling industry that continues to grow even in times of recession. As gambling becomes more prevalent and socially acceptable, public debate is growing as to its potentially harmful consequences (Shaffer and Korn, 2002). The majority of people enjoy recreational gambling with no adverse effects. However, for a significant minority, gambling develops into a compulsive and pathological behavior that strongly resembles substance abuse (Potenza, 2008), and current estimates of the lifetime prevalence of such pathological gambling (PG) range from 0.2 to 2% (Shaffer et al, 1999; Petry et al, 2005). Determining why people gamble could therefore provide valuable insight into addictive behaviors, as well as furthering our knowledge of non-normative or ‘irrational’ decision making.

Cognitive accounts of PG propose that gambling is sustained by erroneous or distorted beliefs about the independence of gambling outcomes, the intervention of luck, and the ability of personal skills to confer success when gambling (Ladouceur et al, 1988; Toneatto et al, 1997). One prominent hypothesis is that the experience of almost winning, a so-called ‘near-miss’, can invigorate gambling activity, and may accelerate the development of PG in vulnerable individuals (Reid, 1986; Griffiths, 1991; Clark, 2010). Near-miss events can produce psychological and physiological changes similar to those produced by winning outcomes (Griffiths, 1991). Near-misses may therefore heighten reward expectancy due to their similarity to wins, making continued play more likely (Reid, 1986). In line with this theory, near-misses have been shown to increase the desire to continue gambling (Kassinove and Schare, 2001; Cote et al, 2003; MacLin et al, 2007) and to enhance neural activity within the mid-brain and the ventral striatum (Clark et al, 2009; Habib and Dixon, 2010). These observations suggest that near-misses convey a positive reward signal encoded by the dopaminergic circuits that support reward expectancy and reinforcement learning (Schultz et al, 1997; Schultz, 1998; Fiorillo et al, 2003).

In support of this general hypothesis, drugs that alter dopaminergic activity have been shown to modify slot machine play, a form of gambling in which near-misses are particularly salient. The psychostimulant drug amphetamine, which potentiates dopamine's (DA) actions, can increase the motivation to play slot machines (Zack and Poulos, 2004), whereas the preferential D2 receptor antagonist, haloperidol, can enhance the rewarding properties of such behavior (Zack and Poulos, 2007). Aberrant DA signaling is a critical component of drug addiction, and drives the increased incentive salience of drug-paired cues that galvanize drug seeking (Robinson and Berridge, 1993). The observation that slot machine play is often the most common gambling activity in pathological gamblers has led to the suggestion that slot machine gambling may be particularly compulsive (Breen and Zimmerman, 2002; Choliz, 2010). Given that animal research has significantly advanced our understanding of goal-directed behavior and addiction, an animal model of slot machine play may make a valuable contribution to gambling research (Potenza, 2009), and a preliminary report indicates that rats are capable of learning such a task (Peters et al, 2010).

To summarize, current evidence suggests that the DA system may be critically involved in the development of pathological slot machine gambling, and in the manifestation of the near-miss effect, because of its role in signaling reward expectancy. Determining the neurochemical processes underlying the expectation of reward when gambling could assist in the development of effective treatments for PG. Using a novel rodent slot machine paradigm, we therefore aimed to determine whether the experience of ‘almost winning’ would increase the behavioral expression of reward expectancy in rats in a manner analogous to a near-miss effect, and whether such behavior could be modulated by dopaminergic drugs.

MATERIALS AND METHODS

Subjects

Subjects were 16 male Long Evans rats (Charles River Laboratories, St Constant, NSW, Canada) weighing 250–275 g at the start of testing. Subjects were food restricted to 85% of their free feeding weight and maintained on 14 g rat chow given daily. Water was available ad libitum. All animals were pair-housed in a climate-controlled colony room maintained at 21°C on a reverse 12 h light–dark schedule (lights off 0800). Behavioral testing and housing were in accordance with the Canadian Council of Animal Care and all experimental protocols were approved by the UBC Animal Care Committee.

Behavioral Apparatus

Testing took place in eight standard five-hole operant chambers, each enclosed within a ventilated sound-attenuating cabinet (Med Associates St Albans, Vermont). The configuration of the chambers was identical to that described previously (Zeeb et al, 2009), with the addition of retractable levers located on either side of the food tray. Chambers were controlled by software written in MED-PC by CAW running on an IBM-compatible computer.

Behavioral Testing

Habituation and training

In brief, subjects were initially habituated to the testing chambers and learned to respond on each of the retractable levers to earn food reward. Animals were then trained on a succession of simplified versions of the slot machine program that gradually increased in complexity. A detailed description of each training stage is provided in Supplementary Information.

Slot machine task

A task schematic is provided in Figure 1. The middle three holes within the five-hole array were used in the task (holes 2–4). The rat initiated each trial by pressing the roll lever. This lever then retracted and the light inside hole 2 began to flash at a frequency of 2 Hz (Figure 1a). Once the rat made a nosepoke response at this aperture, the light inside set to on or off (summarized henceforth as ‘1’ or ‘0’) for the remainder of the trial. Depending on the illumination status of the light, either a 20 kHz (‘on’) or 12 kHz (‘off’) tone sounded for 1 s, after which the light in hole 3 began to flash (Figure 1b). Again, a nosepoke response caused the light to set to on or off and triggered the presentation of a 1 s 20/12 kHz tone, after which the light in hole 4 began to flash (Figure 1c). Once the rat had responded in hole 4 and the light inside set to on or off, again accompanied by the relevant tone, both the collect and roll levers were presented (Figure 1d and e).

Schematic diagram showing the trial structure for the slot machine task. A response on the roll lever starts the first light flashing (a). Once the animal responds in each flashing aperture, the light inside sets to on or off and the neighboring hole starts to flash (b, c). Once all three lights have been set, the rat has the choice to start a new trial, by responding on the roll lever, or responding on the collect lever. On win trials, where all the lights have set to on, a collect response delivers 10 sugar pellets (d). If any of the lights have set to off, a response on the collect lever instead results in a 10 s time-out period (e). There are eight possible light patterns (f). A win is clearly signaled by all three lights setting to on, and a clear loss is evident when all of the lights are set to off.

The rat was then required to respond on one or other lever, and the optimum choice was indicated by the illumination status of the lights in holes 2–4. On win trials, all three lights were set to on (1,1,1), and a response on the collect lever delivered 10 sugar pellets (Figure 1d). If any of the lights had set to off (eg, Figure 1e), then a response on the collect lever led to a 10 s time-out period during which reward could not be earned. The use of three active holes resulted in eight possible trial types (Figure 1f, (1,1,1); (1,1,0); (1,0,1); (0,1,1); (1,0,0); (0,1,0); (0,0,1); (0,0,0)), the incidence of which was pseudo-randomly distributed evenly throughout the session on a variable ratio 8 schedule. If the rat chose the roll lever on any trial, then the potential reward or time-out was canceled, and a new trial began. Hence, on win trials, the optimal strategy was to respond on the collect lever to obtain the scheduled reward, whereas on loss trials, the optimal strategy was to instead respond on the roll lever and start a new trial. If the rat chose to collect, both levers retracted until the end of the reward delivery/time-out period, after which the roll lever was presented and the rat could initiate the next trial. The task was entirely self-paced in that animals were not required to make any of the responses within a particular time window; if necessary, the program would continue to wait for the animal to make the next valid response in the sequence until the end of the session. The only point at which the rat could fail to complete a trial was therefore if the session ended partway through. Animals received five daily testing sessions per week until statistically stable patterns of responding had been established over five sessions (maximum number of sessions taken to reach criteria, including all training sessions: 49–54). Animals were deemed to have successfully acquired the task if they completed >50 trials per session and made <50% collect responses on clear loss (0,0,0) trials.
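The contingencies described above can be summarized in a short sketch. This is an illustration only, not the MED-PC program used in the study; the function and constant names are hypothetical, and the sketch covers only the choice outcomes, not trial scheduling or timing.

```python
from itertools import product

# Values taken from the task description; the names are illustrative.
WIN_PELLETS = 10   # sugar pellets for a collect response on a win trial
TIMEOUT_S = 10     # time-out in seconds for a collect response on a loss trial


def trial_types():
    """Return the eight light patterns for holes 2-4 (1 = on, 0 = off)."""
    return list(product((1, 0), repeat=3))


def outcome(pattern, choice):
    """Return (pellets, timeout_seconds) for a lever choice on a pattern."""
    if choice == "roll":
        # The scheduled reward or time-out is canceled and a new trial begins.
        return (0, 0)
    if choice == "collect":
        return (WIN_PELLETS, 0) if all(pattern) else (0, TIMEOUT_S)
    raise ValueError(f"unknown lever: {choice!r}")


def optimal_choice(pattern):
    """Collect only when all three lights are on; otherwise roll."""
    return "collect" if all(pattern) else "roll"


if __name__ == "__main__":
    for pattern in trial_types():
        lights_on = sum(pattern)
        print(pattern, lights_on, optimal_choice(pattern), outcome(pattern, "collect"))
```

Only the (1,1,1) pattern makes a collect response advantageous; on the seven loss patterns a collect response costs 10 s, regardless of how many lights are illuminated.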

The current paradigm is similar to a previous attempt to model slot machine play in rats (Peters et al, 2010), in that animals were required to choose between a collect lever and ‘spin’ or ‘roll’ lever depending on a light pattern. However, in the report by Peters et al (2010), the previous hole had to be illuminated in order for the subsequent light to be turned on. As a result, subjects could solve the discrimination by attending solely to the last light illuminated in the sequence. In the current study, the animals were also required to nosepoke in the response holes to ensure that they were attending to, or at least facing, the stimulus lights during the trial.

Pharmacological Challenges

Once stable baseline behavior had been established, the response to the following compounds was determined: d-amphetamine (0, 0.6, 1.0, 1.5 mg/kg), eticlopride (0, 0.01, 0.03, 0.06 mg/kg), SCH 23390 (0, 0.001, 0.003, 0.01 mg/kg), quinpirole (0, 0.0375, 0.125, 0.25 mg/kg), and SKF 81297 (0, 0.03, 0.1, 0.3 mg/kg). Drugs were administered 10 min before testing according to a series of digram-balanced Latin square designs (for doses A–D: ABCD, BDAC, CADB, DCBA; Cardinal and Aitken, 2006, p. 329). Each drug/saline test day was preceded by a drug-free baseline day and followed by a day on which animals were not tested. Animals were tested drug free for at least 1 week between each series of injections to allow a stable behavioral baseline to be re-established.
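In a digram-balanced Latin square, each dose appears once in every row and every test position, and each ordered pair of consecutive doses occurs exactly once across rows, which balances first-order carry-over effects. The sketch below checks these two properties for the four-dose square ABCD, BDAC, CADB, DCBA. The function names are hypothetical and the code is a verification aid, not part of the original analysis.

```python
def is_latin_square(rows):
    """True if every symbol occurs exactly once in each row and each column."""
    n = len(rows)
    symbols = set(rows[0])
    if len(symbols) != n or any(len(row) != n for row in rows):
        return False
    rows_ok = all(set(row) == symbols for row in rows)
    cols_ok = all(set(col) == symbols for col in zip(*rows))
    return rows_ok and cols_ok


def is_digram_balanced(rows):
    """True if the square is Latin and no ordered adjacent pair is repeated."""
    if not is_latin_square(rows):
        return False
    n = len(rows)
    digrams = [row[i:i + 2] for row in rows for i in range(n - 1)]
    # n rows contribute n - 1 adjacent pairs each; all n * (n - 1) must differ.
    return len(set(digrams)) == len(digrams) == n * (n - 1)


if __name__ == "__main__":
    square = ["ABCD", "BDAC", "CADB", "DCBA"]
    print(is_latin_square(square), is_digram_balanced(square))
```

A simple cyclic square (ABCD, BCDA, CDAB, DABC) passes the Latin check but fails the digram check, because pairs such as AB recur in several rows.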

Extinction and Reinstatement

The extinction/reinstatement test was of a similar design to that used in drug self-administration experiments. The aim of this manipulation was to observe whether task performance would extinguish more slowly if putative near-miss trials were present, in keeping with some reports in the human literature (Kassinove and Schare, 2001; MacLin et al, 2007). Near-miss trials were defined as any trial type on which two out of three active holes were illuminated (see results section for rationale). Following completion of all the pharmacological challenges, animals were divided into two groups matched for both the number of trials completed and the pattern of collect responses observed across different trial types. Both groups then performed the slot machine task in extinction, during which a collect response after a win trial no longer resulted in delivery of reward. For one group of rats, near-miss trials were omitted from play. The incidence of wins and clear loss trials was kept equal across both groups. After 10 extinction sessions, all rats were reinstated on the standard slot machine task for a further 10 sessions during which win trials were once again rewarded. More rapid reinstatement could be indicative of increased engagement in the slot machine task. Near-miss trials were present for both groups during reinstatement.

Drugs

All drug doses were calculated as the salt and dissolved in 0.9% sterile saline. All drugs were prepared fresh daily and administered via the intraperitoneal route in a volume of 1 ml/kg. Eticlopride hydrochloride, SCH 23390 hydrochloride and quinpirole hydrochloride were purchased from Sigma-Aldrich (Oakville, Canada). SKF 81297 hydrobromide was purchased from Tocris Bioscience (Ellisville, MO). D-amphetamine hemisulfate was purchased under an exemption from Health Canada from Sigma-Aldrich UK (Dorset, England).

Data Analysis

The following variables were analyzed for each trial type: the percentage of trials on which animals pressed the collect lever (arcsine transformed), the average latency to respond on the collect lever, and the latency to respond in each aperture when the light inside was flashing. The number of trials completed per session was also analyzed. The latency to choose the roll lever after each trial was not included in the formal analysis as this measure was skewed by the higher incidence of erroneous collect responses, resulting in a 10 s time penalty, on some trial types, and the time taken to consume sugar pellets on win trials. All data were subjected to within-subjects repeated measures analysis of variance (ANOVAs), conducted using SPSS software (SPSS v16.0, Chicago, IL).

During training, the collect lever choice and collect lever latency were analyzed in five session (weekly) bins with session (five levels) and trial type (eight levels) as within-subjects factors. A stable baseline was defined as the lack of a significant effect of session or trial type × session interaction. To determine the impact of the number of lights illuminated, regardless of spatial position, data were pooled across 2-light trials ((1,1,0), (1,0,1), and (0,1,1)) and one-light trials ((1,0,0), (0,1,0), and (0,0,1)). ANOVA was then performed with session and lights illuminated (4 levels, 0–3) as within-subjects factors. The latency to respond at the array was first subjected to ANOVA with session, trial type, and hole (3 levels) as within-subjects factors. In order to determine whether responding on the next hole was affected by the illumination of the previous hole, the average latency to respond in the middle hole if the first hole had set to on or off was calculated, regardless of trial type. Likewise, the average latency to respond in the last hole if the middle hole had set to on or off was determined. These data were then subjected to ANOVA with session, hole (two levels: middle and last) and previous hole state (two levels: on and off) as within-subjects factors. Trials completed per session were analyzed by a simple ANOVA with session as the only within-subjects factor. The response to the different pharmacological challenges was analyzed using similar ANOVA methods, but the session factor was replaced with a dose factor.
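The two data-preparation steps described above (the arcsine transformation of percentage data and pooling across trial types by the number of lights illuminated) can be sketched as follows. Two points are assumptions rather than details given in the text: the transform is taken to be the common arcsin(sqrt(p)) form, and pooling is done on raw counts rather than by averaging per-type percentages. The counts in the usage example are invented for illustration.

```python
import math
from collections import defaultdict


def arcsine_transform(percent):
    """Arcsine square-root transform of a percentage (assumed form)."""
    p = percent / 100.0
    if not 0.0 <= p <= 1.0:
        raise ValueError("percentage must lie between 0 and 100")
    return math.asin(math.sqrt(p))


def pool_by_lights(collect_counts, trial_counts):
    """Percentage of collect responses for each number of lights illuminated.

    Both arguments map a light pattern, e.g. (1, 0, 1), to a count.
    """
    collected = defaultdict(int)
    presented = defaultdict(int)
    for pattern, n_trials in trial_counts.items():
        lights_on = sum(pattern)
        presented[lights_on] += n_trials
        collected[lights_on] += collect_counts.get(pattern, 0)
    return {
        k: 100.0 * collected[k] / presented[k]
        for k in sorted(presented)
        if presented[k] > 0
    }


if __name__ == "__main__":
    # Hypothetical counts for one rat: 10 presentations of each trial type.
    trials = {p: 10 for p in [(1, 1, 1), (1, 1, 0), (1, 0, 1), (0, 1, 1),
                              (1, 0, 0), (0, 1, 0), (0, 0, 1), (0, 0, 0)]}
    collects = {(1, 1, 1): 10, (1, 1, 0): 8, (1, 0, 1): 9, (0, 1, 1): 7,
                (1, 0, 0): 3, (0, 1, 0): 2, (0, 0, 1): 4, (0, 0, 0): 2}
    pooled = pool_by_lights(collects, trials)
    print({k: round(arcsine_transform(v), 3) for k, v in pooled.items()})
```

The transformed values, one per level of the four-level 'lights illuminated' factor, would then enter the repeated-measures ANOVA.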

Data from the 10 extinction and reinstatement sessions were likewise analyzed by ANOVA in 3–4 day bins, with the addition of group (2 levels) as a between-subjects factor. As analysis of all other variables was confounded by the fact that not all trial types were present for both groups, the only variable analyzed from the extinction sessions was the number of trials completed. In all analyses, the significance level was set at p<0.05. If the probability of an event occurring was found to be <0.1, the observation was described as a trend.

RESULTS

Baseline Performance

Four animals were excluded from the analysis because of failure to meet the following learning criteria: these rats did not perform at least 50 trials per session, nor did they make fewer than 50% collect errors on clear loss (0,0,0) trials. The final number of rats included in the study was therefore 12.
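The inclusion rule can be written as a simple predicate. The thresholds follow the acquisition criteria given in the Methods (>50 trials per session and <50% collect responses on (0,0,0) trials); the function name, and the assumption that both measures are per-animal session averages, are illustrative only.

```python
def meets_learning_criteria(trials_per_session, pct_collect_clear_loss,
                            min_trials=50, max_collect_pct=50.0):
    """True if an animal satisfies both acquisition criteria.

    trials_per_session: mean trials completed per session (assumed average).
    pct_collect_clear_loss: percentage of collect responses on (0,0,0) trials.
    """
    enough_trials = trials_per_session > min_trials
    discriminates = pct_collect_clear_loss < max_collect_pct
    return enough_trials and discriminates


if __name__ == "__main__":
    # Hypothetical animals: one performing well, one completing too few trials.
    print(meets_learning_criteria(71, 20))   # True
    print(meets_learning_criteria(45, 20))   # False
```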

Lever choice

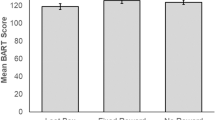

On win trials, rats responded on the collect lever virtually 100% of the time, thereby ensuring delivery of the scheduled reward (Figure 2a and b). In contrast, if none of the lights set to on (a ‘clear’ loss), rats showed a strong preference for the now-advantageous roll lever. However, even on such clear loss trials, rats still erroneously responded on the collect lever on approximately 20% of trials. Preference for the collect lever varied significantly across the other trial types (Figure 2b, trial type: F7, 77=56.75, p<0.01). The clearest predictor of the choice pattern observed was the degree to which the trial resembled a win, as illustrated by the strong positive correlation observed between the number of lights illuminated and the percentage of collect responses (Figure 2a). Thus, the presence of putative ‘win’ signals on loss trials linearly increased the likelihood that the rat would respond as if the trial was a win trial, and make a maladaptive collect response. In this way, such erroneous collect responses could reflect a process similar to a ‘near-miss’ effect. This effect is strongest on 2-light loss trials, in which the preference for the collect lever is significantly higher than chance, and also higher than that observed for 1-light losses or clear losses (lights illuminated: F3, 33=245.23, p<0.01; 2 vs 1-lights: F1, 11=143.57, p<0.01; 2 vs 0 lights: F1, 11=249.20, p<0.01), although it is still significantly lower than that observed during win trials (2 vs 3 lights: F3, 33=128.92, p<0.01).

Baseline performance of the slot machine task. On win trials, when all three lights had set to on ((1,1,1)), animals chose the collect lever 100% of the time (a, b). As the number of lights illuminated decreased, so did the preference for the collect lever (a). Animals consistently showed a strong preference for the collect lever on 2-light losses, or near-miss trials. The proportion of collect responses made on both 2-light and 1-light losses also varied according to the precise pattern of lights illuminated (b). In the first week of training, rats were slower to respond in the subsequent hole if the previous hole had set to off (c). However, this differential effect was no longer observed once stable choice behavior had been established. This pattern was observed for both the middle and last holes; the graph therefore reflects the combined data from both holes. All data shown are the mean across five sessions±SEM.

Although the overall number of lights illuminated per trial is a better predictor of collect lever choice than illumination of any one light in particular, there tended to be some variation between the error rates on 1-light (trial type: F2, 22=3.061, p=0.067) and 2-light losses (trial type: F2, 22=3.717, p=0.041), potentially indicating that the spatial location of the exact holes illuminated could affect the rats' bias towards the collect or roll lever. Numerically, the highest number of erroneous responses occurred when the last light was illuminated. It is possible that an attentional bias may have developed to this aperture, potentially due to its close proximity in space and time to the collect lever. However, comparing the 1-light losses, illumination of the final light in the series led to a higher error rate than illumination of the middle hole ((0,1,0) vs (0,0,1): F1, 11=5.026, p=0.047), but not the first hole ((1,0,0) vs (0,0,1): F1, 11=2.682, NS). Similarly, if the final hole was not illuminated in a 2-light loss, a lower error rate was observed as compared with a loss in which the first and final holes were set to on ((1,1,0) vs (1,0,1): F1, 11=7.643, p=0.018), but not if just the last two lights were illuminated ((1,1,0) vs (0,1,1): F1, 11=2.970, NS). On the basis of the statistical analyses, it would therefore appear that a win signal in the middle of the sequence is less powerful than one at the end or beginning, but illumination of any particular hole is not sufficient, in and of itself, to determine lever choice. Whether presenting the cues in a random order, rather than from left to right, would ameliorate these effects remains to be determined.

Response latencies

In contrast to the distribution of collect lever responses, the latency to respond on the collect lever did not vary depending on the light pattern (Supplementary Table S1: trial type: F7, 77=0.784, NS). The latency to respond in each successive hole decreased steadily from the first to the last hole across the trial, regardless of the trial type (Supplementary Table S2: hole: F2, 22=17.773, p<0.01, trial type: F7, 77=1.724, NS). From a theoretical perspective, if illumination of a light in the sequence was interpreted as a positive reinforcement signal, then this outcome should facilitate subsequent responding. Hence, one might expect a decrease in the latency to respond in the subsequent hole if the previous hole had set to on. Conversely, the latency to respond at the next hole should increase if the previous hole had set to off. In order to investigate whether this was the case, the latency to respond at the middle hole was analyzed depending on whether the first hole had set to on or off, regardless of trial type. Similarly, the latency to respond at the last hole was analyzed depending on the state of the middle hole. Earlier in training, there was a significant effect of the previous hole state on the speed of responding, in that rats took longer to respond in the subsequent hole if the previous hole had set to off rather than on (Figure 2c; previous hole state week 1: F1, 11=6.105, p=0.031; week 2: F1, 11=10.779, p=0.007). However, once a stable baseline pattern of choice had been established, this effect was no longer significant (week 3: previous hole state: F1, 11=0.007, NS).

Trials completed

The average number of trials completed per session once a stable behavioral baseline had been achieved was 71.0±3.61 (SEM). Over the course of the experiment, this number gradually increased (Supplementary Table S3), which may be indicative of a general improvement in task engagement with repeated testing. However, the overall distribution of collect responses across trial type remained constant.

Effect of Amphetamine Administration on Task Performance

Amphetamine selectively increased the number of collect responses made on loss trials, but this depended on the number of lights set to on, as indicated by a significant interaction between dose and the number of lights illuminated (Figure 3a; dose × lights illuminated, all doses: F9, 99=3.636, p=0.001). Analysis of simple effects showed that amphetamine dose-dependently increased collect responses following clear losses (dose: F3, 33=4.923, p=0.006; saline vs 1.0 mg/kg: F1, 11=9.709, p=0.01; saline vs 1.5 mg/kg: F1, 11=7.014, p=0.023), and there was a trend for an increase in collect errors on 1-light loss trials (dose: F3, 33=3.128, p=0.039; saline vs 1.0 mg/kg: F1, 11=3.510, p=0.09). Regarding the latter observation, the ability of amphetamine to boost collect errors was only statistically significant when the last light was illuminated (Figure 3b; dose × trial type: F21, 231=2.521, p=0.022; dose (0,0,1): F3, 33=3.234, p=0.035; (0,1,0): F3, 33=0.754, NS; (1,0,0): F3, 33=2.169, NS).

Effects of amphetamine on performance of the slot machine task. Amphetamine dose-dependently increased the proportion of collect errors on clear loss and 1-light loss trials (a). More specifically, amphetamine significantly increased collect responses on (0,0,0) and (0,0,1) trial types (b). The lowest and highest doses of amphetamine also made animals more sensitive to the illumination status of the holes, in that they were once more faster to respond if the previous hole had set to on rather than off (c). Data are shown as the mean±SEM.

Amphetamine also selectively increased the latency to respond on the collect lever on the same trial types on which significantly more erroneous collect responses were made (Supplementary Table S1, dose × trial type all doses: F21, 231=2.010, p=0.007; saline vs 1.0 mg/kg: F7, 77=2.529, p=0.021; saline vs 1.5 mg/kg: F7, 77=3.720, p=0.002; (0,0,0): F3, 33=4.892, p=0.006; (0,0,1): F3, 33=3.764, p=0.02). In contrast, amphetamine generally decreased the latency to respond at the apertures regardless of the trial type (Supplementary Table S2, dose: F3, 33=12.649, p=0.0001; trial type: F7, 77=1.652, NS; saline vs 0.6 mg/kg: F1, 11=7.977, p=0.017; saline vs 1.0 mg/kg: F1, 11=10.820, p=0.017; saline vs 1.5 mg/kg: F1, 11=12.888, p=0.004). Furthermore, amphetamine tended to make rats faster to respond in a hole if the previous hole had set to on rather than off, reminiscent of their behavior during task acquisition (Figure 3c; dose × previous hole state: F3, 33=2.710, p=0.096; previous hole state saline: F1, 11=0.625, NS; 1.5 mg/kg: F1, 11=7.052, p=0.022). Amphetamine did not alter the total trials completed per session (Supplementary Table S3; dose: F3, 33=1.385, NS). Amphetamine therefore increased the speed of responding at the array, particularly following a positive signal (illuminated light), yet impaired the use of the light pattern to guide lever choice, such that collect responses were made despite minimal or no indicators that reward was likely.

Effect of the D2 Receptor Antagonist Eticlopride on Task Performance

The highest dose of eticlopride reduced the average number of trials completed to less than 20, therefore this dose was not included in the analysis. All data are provided in Supplementary information (Supplementary Figure S1, Supplementary Tables S1–S3). Although the term ‘D2 receptor’ is used here for clarity, it is acknowledged that both eticlopride and quinpirole bind with less affinity to other D2-like receptors (D3 and D4), and that some of these findings may be attributed to actions at the D2 receptor family rather than to the D2 receptor specifically.

Eticlopride did not affect the proportion of collect responses made regardless of the number of lights illuminated per trial (dose × lights illuminated: F6, 66=1.489, NS) or the exact light pattern (dose × trial type: F14, 154=1.182, NS). The higher dose of eticlopride tended to increase the latency to respond on the collect lever (dose: F2, 22=3.306, p=0.056; saline vs 0.03 mg/kg: F1, 11=12.544, p=0.005). Both doses increased the latency to respond at the array (dose: F2, 22=15.797, p<0.01; saline vs 0.01 mg/kg: F1, 11=7.322, p=0.02; saline vs 0.03 mg/kg: F1, 11=19.462, p<0.01) and significantly decreased the number of trials completed (dose: F2, 22=31.790, p<0.01; saline vs 0.01 mg/kg: F1, 11=11.196, p=0.007; saline vs 0.03 mg/kg: F1, 11=43.949, p<0.01; trials completed 0.01 mg/kg: 59.0±6.22; 0.03 mg/kg: 17.67±4.06). This pattern of data indicates that the D2 receptor antagonist generally decreased motor activity, rather than specifically affecting any cognitive aspects of the task pertaining to the decision to respond on the collect lever.

Effect of the D1 Receptor Antagonist SCH 23390 on Task Performance

All data are provided in Supplementary information (Supplementary Figure S2, Supplementary Tables S1–S3).

SCH 23390 did not affect the preference for the collect lever regardless of the number of lights illuminated (dose × lights on: F9, 99=0.569, NS) or specific trial type (dose × trial type: F21, 231=0.764, NS). Although the highest dose increased the latency to respond on the collect lever (dose: F3, 33=5.968, p=0.002; saline vs 0.01 mg/kg dose: F1, 11=10.496, p<0.01) and increased the latency to respond at the array (dose: F3, 33=4.603, p=0.008), the number of trials completed under this dose was also dramatically decreased (trials completed under 0.01 mg/kg: 20.7±5.0; dose: F3, 33=40.66, p=0.0001; saline vs 0.01 mg/kg: F1, 11=60.601, p=0.0001). Hence, similar to the effects of eticlopride, the highest dose moderately decreased motor output, yet did not affect any cognitive aspects of the task.

Effect of the D2 Agonist Quinpirole on Task Performance

The highest dose of quinpirole reduced the average number of trials completed to less than 20, therefore this dose was not included in the analysis.

Quinpirole significantly increased the proportion of erroneous collect responses made on both ‘near-miss’ trials and clear loss trials (Figure 4a; dose × lights illuminated: F6, 66=7.586, p=0.002; saline vs 0.0375 mg/kg: F3, 33=8.163, p=0.0001; saline vs 0.125 mg/kg: dose × lights illuminated F3, 33=14.865, p=0.0001). Breaking the data down by the precise pattern of lights, significant effects of the drug were observed on all trial types except win trials (Figure 4b; dose: F2, 22=16.481, p=0.0001; dose × trial type: F14, 154=4.746, p=0.0001; dose (1,1,1) F2, 22=1.068, NS all other trial types F>3.25, p<0.05). Comparing the two doses of drug, the higher dose appeared to induce a greater increase in collect errors, particularly on 0-light trials (0.0375 vs 0.125 mg/kg: dose × trial type: F7, 77=2.880, p=0.01).

Effects of quinpirole on performance of the slot machine task. Quinpirole dose-dependently increased collect errors on all loss trials (a, b). This effect was particularly pronounced on 1-light and 2-light losses at the lowest dose tested. Quinpirole also increased the latency to respond at the array regardless of the illumination status of the holes (c). Data are shown as the mean±SEM.

Quinpirole also increased the latency to respond on the collect lever, regardless of trial type or dose (Supplementary Table S1; dose: F2, 22=14.035, p=0.0001, dose × trial type: F14, 154=0.475, NS; saline vs 0.0375 mg/kg: F1, 11=18.563, p=0.001; saline vs 0.125 mg/kg: F1, 11=30.540, p=0.0001). Similarly, both doses increased the latency to respond at the array regardless of trial type (Supplementary Table S2; dose: F2, 22=8.986, p=0.001; dose × trial type: F14, 154=1.500, NS; saline vs 0.0375 mg/kg dose: F1, 11=9.891, p=0.009; saline vs 0.125 mg/kg dose: F1, 11=20.08, p=0.001) or the illumination state of the previous hole (Figure 4c; dose × previous hole state: F2, 22=0.291, NS). Both doses of quinpirole also decreased the number of trials completed to a similar degree (Supplementary Table S3; trials completed 0.0375 mg/kg: 47.08±5.8; 0.125 mg/kg: 40.92±3.8; dose: F2, 22=44.726, p=0.0001; saline vs 0.0375 mg/kg: F1, 11=45.633, p=0.0001; saline vs 0.125 mg/kg: F1, 11=57.513, p=0.0001; 0.0375 vs 0.125 mg/kg: F1, 11=1.268, NS). In summary, although quinpirole did reduce motor output, both doses led to an increase in erroneous collect responses on loss trials that was particularly pronounced on 1-light and 2-light losses.

Effect of the D1 Receptor Agonist SKF 81297 on Task Performance

All data are provided in Supplementary information (Supplementary Figure S3, Supplementary Tables S1–S3). SKF 81297 had very little effect on performance of the task. The proportion of collect responses remained unchanged (dose: F3, 33=0.086, NS; dose × trial type: F21, 231=1.185, NS; dose × lights illuminated: F9, 99=1.516, NS) as did the latency to press the collect lever (dose: F3, 33=0.742, NS; dose × trial type: F21, 231=0.765, NS). The highest dose marginally decreased the number of trials completed (dose: F3, 33=4.764, p=0.007; saline vs 0.03 mg/kg: F1, 11=10.227, p=0.008) and increased the latency to respond at the array regardless of the illumination state of any of the holes (dose: F3, 45=4.644, p=0.007; saline vs 0.03 mg/kg: F1, 11=15.416, p=0.002; dose × previous hole state: F3, 33=2.047, NS).

Extinction and Reinstatement

When collect responses after win trials were no longer rewarded, all rats showed a steady decrease in the number of trials completed (Figure 5a; day: F9, 90=50.3, p<0.01). The presence or absence of 2-light ‘near-miss’ trials did not alter the rate of extinction (day × group: F9, 90=0.503, NS; group: F1, 10=0.365, NS). However, when win trials were once again valid indicators that reward was available, the number of trials completed began to increase and animals re-engaged in the task. Although both groups of animals were performing comparable numbers of trials after 10 sessions, the initial rate of ‘reinstatement’ of slot machine play was more rapid in rats which had not experienced near-miss trials during extinction (Figure 5a; days 1–3: session × group: F2, 20=4.310, p=0.028; days 4–6: session × group: F2, 20=4.677, p=0.022; days 7–10 session × group: F3, 30=1.323, NS). Despite this difference in the number of trials completed, the proportion of collect lever responses made on the various trial types, and the latency to press the collect lever, did not differ between the groups at any stage during reinstatement (days 1–3, 4–6, and 7–10: session × group, session × group × trial type, all Fs<2.1, NS). Even in the first 3 days of testing, the distribution of collect responses across the various trial types strongly resembled that seen before extinction (Figure 5b).

Effect of removing near-miss trials during extinction on both the rate of extinction and subsequent reinstatement of task performance. The presence or absence of near-miss trials did not affect the rate of extinction as indicated by the number of trials completed per session (a). However, rats which had not experienced near-miss trials during extinction were faster to pick up the task again once win trials were rewarded. During this reinstatement phase, near-miss trials were again present for both groups. Despite the difference in the number of trials completed, the proportion of collect responses made across the different trial types was similar in both groups, even within the first three sessions of reinstatement (b). Although rats that did not experience near-miss trials during extinction were initially faster to respond in the subsequent hole if the previous hole had set to on (c), both groups of rats were sensitive to the illumination status of the holes by the end of reinstatement (c, d).

As the number of trials completed per session increased, the latency to respond at the array decreased, but this was observed to the same degree in both groups (Supplementary Table S2; days 1–3: session: F2, 20=14.182, p=0.0001; session × group: F2, 20=1.772, NS; days 4–6, 7–10: session, session × group: all Fs<2.3, NS). However, animals which had not been exposed to ‘near-miss’ trials during extinction were much more sensitive to the illumination state of the previous hole during these early reinstatement sessions, in that they tended to respond faster if the previous light had set to on rather than off (Figure 5c; days 1–3: session × previous hole state × group: F2, 20=3.798, p=0.04; ‘no near-miss’ group: session × previous hole state: F2, 10=3.583, p=0.067; ‘near-miss’ group: session × previous hole state: F2, 10=0.234, NS). Hence, although the presence or absence of near-miss trials did not superficially affect the rate of extinction, animals that had not experienced near-miss trials under conditions of non-reward were quicker to re-engage in the task.

DISCUSSION

Cognitive accounts of gambling propose that the experience of almost-winning can sustain gambling behavior and may promote PG in vulnerable individuals (Reid, 1986; Griffiths, 1991; Clark, 2010). Here, we show that rats are capable of performing a complex conditional discrimination (CD) task that is structurally analogous to a simple slot machine. Rats learned that illumination of all three lights in the array signaled that reward was available if a response was made on the collect lever, whereas making this response after any other light pattern would lead to a 10 s time out. Animals were successfully able to discriminate whether a response on the collect lever was advantageous on the majority of trials. However, rats consistently made a high rate of erroneous collect responses when two out of the three lights were illuminated, and these were the only trials on which the error rate was consistently and markedly higher than chance. Such erroneous responding suggests that 2-light trials produce a near-miss effect, in that they are interpreted as more similar to a win than a loss despite the lack of reinforcement delivered. Both amphetamine and the D2 receptor agonist quinpirole increased collect errors on non-win trials, suggesting that increased DA signaling may enhance the expectation of reward delivery on loss trials.
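
The contingency described above can be summarised in a short sketch. This is only an illustration of the rule as stated in the text (reward for a collect response after all three lights set to on, a 10 s time out after any other pattern); the function names, the trial labels and the representation of a light pattern as a tuple of 0s and 1s are assumptions made here for clarity, not the software used to run the task.

```python
from itertools import product

TIMEOUT_S = 10  # time out after an erroneous collect response, as stated in the text


def classify_trial(lights):
    """Label a light pattern such as (1, 1, 0) by how many lights set to on.

    The labels are illustrative names for the trial types discussed in the text.
    """
    n_on = sum(lights)
    if n_on == 3:
        return "win"
    if n_on == 2:
        return "near-miss"
    if n_on == 1:
        return "1-light loss"
    return "clear loss"


def outcome(lights, response):
    """Return (result, penalty in seconds) for a 'collect' or 'roll' response."""
    if response == "roll":
        # Starting a new trial carries neither reward nor penalty.
        return ("new trial", 0)
    if response != "collect":
        raise ValueError("response must be 'collect' or 'roll'")
    if classify_trial(lights) == "win":
        return ("reward", 0)
    return ("time-out", TIMEOUT_S)


# Enumerate all eight light patterns and the consequence of pressing collect.
for lights in product((0, 1), repeat=3):
    print(lights, classify_trial(lights), outcome(lights, "collect"))
```

Under this rule a collect response is optimal only on (1,1,1) trials, so any collect response on the other seven patterns counts as an error of the kind analysed here.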

In contrast to our previous finding that eticlopride improved performance of a rat gambling task (rGT; Zeeb et al, 2009), the D2 receptor antagonist did not alter behavior on the slot machine task. This rudimentary comparison supports the suggestion that pharmaceutical compounds will not necessarily have similar effects on all forms of gambling behavior (Grant and Kim, 2006). However, it is also important to note that the D2 receptor antagonist haloperidol has different effects on slot-machine play in healthy controls vs those with PG (Zack and Poulos, 2007; Tremblay et al, 2010), and care must be taken when extrapolating between animal models and human patient populations. In addition, although this rodent paradigm shares some key features with a simple slot machine, there are some obvious differences that should be acknowledged. For example, the rats could not adjust the size of the wager, nor choose to risk a larger amount for the chance of a greater pay-off, even though such contingencies are a feature of some commercial slot machines (Kassinove and Schare, 2001; Weatherly et al, 2004; Harrigan and Dixon, 2010). Furthermore, rats were required to stop each light individually, rather than waiting for all three lights to set following a single response. This feature might have differentially engaged the mechanisms underlying instrumental learning at the expense of the (Pavlovian) approach behavior thought to underlie aspects of slot-machine gambling (Reid, 1986; Griffiths, 1991). That said, some modern slot-machine games afford a variety of opportunities for humans to intervene to directly terminate reel-spins and influence the timing of otherwise random events (Harrigan, 2008). 
Notwithstanding the above limitations, therefore, our experiments do demonstrate that loss trials that resemble wins can heighten the behavioral expression of reward expectancy in rats in a way described by cognitive theories of gambling behavior, and that this effect is susceptible to at least two manipulations of dopamine activity.

It could be argued that the higher proportion of collect responses observed on 2-light loss trials could have arisen simply because animals struggled to discriminate between these and 3-light win trials on a perceptual level, rather than reflecting differences in the cognitive interpretation of the trial outcomes. Although perceptual similarity must, de facto, contribute to the effects seen here, there are several reasons to suppose that our findings are not artifacts of impaired discriminations between the light patterns. First, under baseline conditions, it was clear that animals were able to discriminate reliably between winning and near-miss outcomes, as evidenced by the significantly greater number of collect responses following the former compared with the latter. Second, different numbers of erroneous collect responses were observed following different outcomes consisting of just two lights set to on (cf. (1,1,0) vs (1,0,1)), again indicating that the rats could discriminate reliably between the various light patterns. Third, the doses of quinpirole that produced such marked increases in the error rates on near-miss trials do not impair accuracy of target detection on the five-choice serial reaction time task, a well-validated measure of visuospatial attention (Winstanley et al, 2010). Such data tend to exclude the possibility that our demonstration of near-miss effects on reward expectancy in rats can be attributed simply to difficulties in visual discrimination.

Alternatively, it is possible that the erroneous responses on the collect lever following near-misses merely reflect the vestigial effects of earlier training; as the complexity of the task was gradually increased across different training stages, there were instances in which reward was delivered if only one or two lights were illuminated. However, again, the finding that rats' collect responses were not distributed evenly across 2-light trials argues against this possibility: the pattern (1,0,1) was never associated with a rewarding outcome in training, yet collect responses were most frequent on this trial type. Furthermore, because of the repeated testing required for pharmacological challenges, animals experienced hundreds of non-reinforced 2-light losses over the course of the experiment compared with the relatively small number of rewarded 2-light trials experienced in a few training sessions. It is not uncommon for animals to be shaped to make a response during training that they are then required to subsequently inhibit in a cognitive task (eg during strategy learning (Floresco et al, 2008)). It is therefore unlikely that the limited period of reinforcement received during training could account for the persistent preference for the collect lever on near-miss trials.

The response latency data also indicate that the rats were both capable of detecting the illumination status of the holes and were sensitive to the consequences, in that when a particular hole had set to off, responding in the subsequent hole was slower. However, this effect was only observed earlier in training, before task performance stabilized. By this metric, it would therefore appear that animals became less sensitive to the moment-to-moment feedback provided during a trial as training continued, even though such information could determine whether reward was ultimately available. It is tempting to use such data to argue that performance of the task became more ‘automatic’ or compulsive over time (Jentsch and Taylor, 1999; Robbins and Everitt, 1999). However, rats remained acutely sensitive to the cancellation of expected reward as evidenced by the sharp drop in trials completed during extinction. These data may indicate that performance was still largely goal-directed rather than habitual, although this remains to be confirmed using a more exacting test, such as devaluing rather than omitting the expected reward (Balleine and Dickinson, 1998). Contrary to some previous reports in human subjects, extinction of task performance was not slower in the presence of near-miss trials. However, near-misses do not always retard extinction, and this effect appears to depend critically on the frequency of near-miss events (Kassinove and Schare, 2001) and the number of gambles undertaken (MacLin et al, 2007). The extinction paradigm used here, while typical in design for an animal learning theory experiment, is also not comparable to the kind of extinction experienced during some gambling episodes in which wins simply fail to occur. Further work is therefore needed to determine whether near-miss trials affect the rate of extinction in rats using a more similar set of parameters to those used in the pertinent human studies.

Although the absence of near-miss trials did not affect the time course of extinction, reinstatement in task performance was more rapid in this group, and these rats were more sensitive to the illumination status of the response holes during the first few sessions. Hence, if near-miss stimuli had not been explicitly paired with a devalued win stimulus, the near-miss trials retained their ability to evoke a representation of a positive outcome and invigorate behavior. It would therefore appear that the incentive salience of a near-miss stimulus is not automatically updated when the hedonic value of a win declines. The idea that the hedonic and incentive value systems can be disconnected is a central tenet of the incentive-sensitization hypothesis of addiction, in which environmental stimuli associated with drug come to exert considerable influence over behavior despite the dwindling pleasure associated with drug-taking (Robinson and Berridge, 1993; Wyvell and Berridge, 2000, 2001). It will be interesting to determine, therefore, whether near-miss stimuli have a similar role in facilitating gambling behavior as drug-paired cues do with respect to substance abuse, promoting relapse and craving even after periods of abstinence (Dackis and O'Brien, 2001). We can explore this idea explicitly in further experiments, for example by observing whether 2-light near-miss trials can enhance reinstatement even if win trials are absent. The findings presented here also suggest that breaking the association between near-miss trials and rewarding outcomes could limit the maintenance of gambling behavior. In the current experiment, this was done by repeatedly pairing near-miss trials with non-reinforced win stimuli, an event which may be difficult to convincingly introduce to human gamblers.
However, recent work aiming to break these associations via CD training has yielded encouraging results (Zlomke and Dixon, 2006; Dixon et al, 2009), suggesting this could be an important relationship to target from a therapeutic perspective.

Repeated exposure to addictive drugs may induce a hyper-dopaminergic state, and this aberrant DA signaling is thought to underlie the enhanced sensitivity to conditioned stimuli observed in drug-dependent subjects (Berridge and Robinson, 1998). Likewise, PG may also involve impaired reward signaling via disruption of DA pathways (Reuter et al, 2005), and repeated administration of DA agonist therapy may induce PG in some Parkinsonian patients (Voon et al, 2009). Psychological accounts suggest that structural characteristics of slot machines, including near-misses, low cognitive demands and high rates of play, might promote excessive or compulsive gambling (Breen and Zimmerman, 2002; Harrigan, 2008; Choliz, 2010). The DA system may therefore have an important role in mediating engagement with slot machines, and the data presented here provide some support for this hypothesis.

Administration of the psychostimulant amphetamine, which potentiates DA's actions, decreased the latency to respond at the array, particularly after presentation of a putative win signal (illuminated light). This observation fits with the well-known ability of acute amphetamine to increase the response to conditioned cues (Robbins, 1978; Beninger et al, 1981; Robbins et al, 1983; Mazurski and Beninger, 1986). Indeed, the increase in collect responses made following amphetamine administration could simply be another example of this drug's ability to increase pre-potent responding for reward, as exemplified by increased response rates on differential reinforcement of low rate schedules (Segal, 1962; Sanger, 1978) and elevated premature responding on the five-choice serial reaction time task (Cole and Robbins, 1987; Harrison et al, 1997). However, although this may play a role in the effects observed, amphetamine did not increase preference for the collect lever on every trial type. If the effects of amphetamine arise through an increased drive to respond on the reward-paired lever, then this should be observed regardless of the light pattern. In fact, this effect only reached significance on certain 1-light loss and clear loss trials, ie on trials in which the fewest positively conditioned stimuli (a stimulus associated with reward delivery: CS+) were present. Furthermore, the erroneous collect lever responses induced by amphetamine were made more slowly, potentially indicative of enhanced decision conflict, and again countering any suggestion that animals were simply perseverating in choice of the response associated with reward (Robbins, 1976). Hence, although animals appear hyper-sensitive to the illumination status of the individual lights, amphetamine's ability to enhance responding for reward or rewarding stimuli is not sufficient to explain the drug's effects on lever choice.

However, amphetamine has been reported to induce deficits on a CD task, such that animals could not use cues to determine which action was appropriate (Dunn et al, 2005). Somewhat similar to the response latency effects we observed here, the affective information encoded by the cues used in the CD was still being processed, as indicated by intact Pavlovian-to-instrumental transfer (Dunn et al, 2005). Amphetamine's effects on the slot machine task could therefore be attributed to impaired CD performance. However, the CD impairments caused by amphetamine are reversed by co-administration of a D1, but not a D2, antagonist (Dunn and Killcross, 2006), suggesting that accurate CD performance is influenced by D1-dependent activity. The finding that D1-selective compounds did not affect preference for the collect lever may indicate that overt difficulty with processing conditional rules cannot entirely explain amphetamine's effects. Furthermore, task performance was not globally impaired: animals were still 100% accurate on win trials, and their error rates were unchanged on the majority of trial types. Given that the largest increase in errors was observed on clear-loss trials that were least, rather than most, similar to a win, it also seems unlikely that amphetamine acted by broadening the stimulus generalization gradient, although this drug has been found to increase false-positive errors on a visual discrimination task (Hampson et al, 2010).

One explanation of amphetamine's effects is that the ability of the stimulant to potentiate DA signaling modified stimulus-outcome representations, leading to a bias in responding to stimuli as if they were paired with reward. In support of this suggestion, the D2 receptor agonist quinpirole had somewhat similar effects to amphetamine, dose-dependently increasing the number of collect errors on loss trials, although this effect was more pronounced on 1- and 2-light trials rather than clear losses at the lowest dose. As to whether this effect could reflect an increase in the pre-potent response for reward, the lower doses of quinpirole used here do not enhance differential responding to a CS+ (Beninger and Ranaldi, 1992), and decrease rather than increase premature responding on the 5CSRT (Winstanley et al, 2010). Presentation of a CS+ leads to a spike in DA release, whereas cancellation of an expected reward leads to a lull in dopaminergic activity (Schultz et al, 1997; Gan et al, 2010). Given this general premise, it is possible that the steady illumination of a flashing response hole would produce a transient increase in DA, whereas no change or perhaps a dip in DA would result if a hole set to the off position. These signals could form the basis of a reward prediction error that would bias choice towards either the collect or roll levers, as suggested by the response of dopaminergic neurons to complex reward-predictive stimuli in monkeys (Nomoto et al, 2010).

In recent models, it has been suggested that over-activation of D2 receptors would impair discrimination of salient from irrelevant information by reducing the signal-to-noise ratio, and preventing the appropriate tuning of the phasic DA response (Floresco et al, 2003; Seamans and Yang, 2004). As such, the dopaminergic response to a loss stimulus would resemble that observed after a win stimulus, biasing animals towards selection of the collect lever. In recent neuroimaging studies of slot-machine play, activation of the midbrain dopaminergic region in response to a near-miss was positively correlated with level of gambling severity in recreational gamblers (Chase and Clark, 2010), and the distribution of signals was most like winning outcomes in pathological gamblers but losing outcomes in healthy non-pathological controls (Habib and Dixon, 2010). Collectively, these findings suggest that activity within the DA system significantly contributes to the propensity to gamble maladaptively. With regard to Parkinson's disease, it has been suggested that chronic over-stimulation of D2 receptors—predominantly within the indirect pathways—prevents the detection of dips in dopamine activity that follow bad decision outcomes, and therefore promotes gambling behavior in vulnerable individuals (Frank et al, 2004; Frank and Claus, 2006). In light of these observations, one future research goal is to determine whether quinpirole's ability to promote collect responses on loss trials results from the inability to detect a negative prediction error (insensitivity to punishment) or the generation of a positive reward expectancy, or both.

It has previously been reported that near-miss trials, though aversive, increase the desire to continue gambling on slot machines (Kassinove and Schare, 2001; Cote et al, 2003; MacLin et al, 2007), and this may affect the speed with which subjects initiate the next gamble. Unfortunately, the latency to respond on the roll lever could not be used to assess the motivation to initiate the next trial, as this measure was affected by both the time taken to consume sugar pellets after a win and the 10 s time-out periods caused by an erroneous collect response. Including an inter-trial interval, such that a separate roll lever response would be required to begin the next trial, might improve the validity of this measure, and enable us to determine whether a particular trial type affected the willingness to begin a new trial. An accurate recording of this variable could likewise reveal whether manipulations that altered the number of trials completed, and/or affected choice of the collect lever, differentially modulated this aspect of task engagement.

Modeling gambling processes in animals and humans, including the cognitive biases that confer vulnerability for pathological disorders (Ladouceur et al, 1988; Toneatto et al, 1997), could provide novel opportunities to determine the neural circuitry and neurotransmitter systems which mediate the drive to gamble (Campbell-Meiklejohn et al, 2011). The demonstration that rats can perform a task similar to a slot machine, and show evidence of a near-miss effect, may indicate that rats are susceptible to some of the cognitive errors that are thought to contribute to maintaining gambling behavior (Clark, 2010; Griffiths, 1991; Reid, 1986). The data reported here also indicate that DA, via D2 receptors, may have a significant role in modulating the expectancy of reward during slot machine play. In conjunction with clinical investigations, this approach may fundamentally improve our understanding of recreational and problem gambling, and facilitate the development of new treatments for PG.

References

Balleine BW, Dickinson A (1998). Goal-directed instrumental action: contingency and incentive learning and their cortical substrates. Neuropharmacology 37: 407–419.

Beninger RJ, Hanson DR, Phillips AG (1981). The acquisition of responding with conditioned reinforcement: effects of cocaine, (+)-amphetamine and pipradrol. Br J Pharmacol 74: 149–154.

Beninger RJ, Ranaldi R (1992). The effects of amphetamine, apomorphine, SKF 38393, quinpirole and bromocriptine on responding for conditioned reward in rats. Behav Pharmacol 3: 155–163.

Berridge KC, Robinson TE (1998). What is the role of dopamine in reward: hedonic impact, reward learning, or incentive salience? Brain Res Brain Res Rev 28: 309–369.

Breen RB, Zimmerman M (2002). Rapid onset of pathological gambling in machine gamblers. J Gambl Stud 18: 31–43.

Campbell-Meiklejohn DK, Wakeley J, Herbert V, Cook J, Scollo P, Kar Ray M et al (2011). Serotonin and dopamine play complementary roles in gambling to recover losses. Neuropsychopharmacology 36: 402–410.

Cardinal RN, Aitken M (2006). ANOVA for the behavioural sciences researcher. Lawrence Erlbaum Associates: London.

Chase HW, Clark L (2010). Gambling severity predicts midbrain response to near-miss outcomes. J Neurosci 30: 6180–6187.

Choliz M (2010). Experimental analysis of the game in pathological gamblers: effect of the immediacy of the reward in slot machines. J Gambl Stud 26: 249–256.

Clark L (2010). Decision-making during gambling: an integration of cognitive and psychobiological approaches. Philos Trans R Soc Lond B Biol Sci 365: 319–330.

Clark L, Lawrence AJ, Astley-Jones F, Gray N (2009). Gambling near-misses enhance motivation to gamble and recruit win-related brain circuitry. Neuron 61: 481–490.

Cole BJ, Robbins TW (1987). Amphetamine impairs the discriminative performance of rats with dorsal noradrenergic bundle lesions on a 5-choice serial reaction time task: new evidence for central dopaminergic-noradrenergic interactions. Psychopharmacology 91: 458–466.

Cote D, Caron A, Aubert J, Desrochers V, Ladouceur R (2003). Near wins prolong gambling on a video lottery terminal. J Gambl Stud 19: 433–438.

Dackis CA, O'Brien CP (2001). Cocaine dependence: a disease of the brain's reward centers. J Subst Abuse Treat 21: 111–117.

Dixon MR, Nastally BL, Jackson JE, Habib R (2009). Altering the near-miss effect in slot machine gamblers. J Appl Behav Anal 42: 913–918.

Dunn MJ, Futter D, Bonardi C, Killcross S (2005). Attenuation of d-amphetamine-induced disruption of conditional discrimination performance by alpha-flupenthixol. Psychopharmacology (Berl) 177: 296–306.

Dunn MJ, Killcross S (2006). Differential attenuation of d-amphetamine-induced disruption of conditional discrimination performance by dopamine and serotonin antagonists. Psychopharmacology (Berl) 188: 183–192.

Fiorillo CD, Tobler PN, Schultz W (2003). Discrete coding of reward probability and uncertainty by dopamine neurons. Science 299: 1898–1902.

Floresco SB, Block AE, Tse MT (2008). Inactivation of the medial prefrontal cortex of the rat impairs strategy set-shifting, but not reversal learning, using a novel, automated procedure. Behav Brain Res 190: 85–96.

Floresco SB, West AR, Ash B, Moore H, Grace AA (2003). Afferent modulation of dopamine neuron firing differentially regulates tonic and phasic dopamine transmission. Nat Neurosci 6: 968–973.

Frank MJ, Claus ED (2006). Anatomy of a decision: striato-orbitofrontal interactions in reinforcement learning, decision making, and reversal. Psychol Rev 113: 300–326.

Frank MJ, Seeberger LC, O'Reilly RC (2004). By carrot or by stick: cognitive reinforcement learning in parkinsonism. Science 306: 1940–1943.

Gan JO, Walton ME, Phillips PE (2010). Dissociable cost and benefit encoding of future rewards by mesolimbic dopamine. Nat Neurosci 13: 25–27.

Grant JE, Kim SW (2006). Medication management of pathological gambling. Minnesota Medicine 89: 44–48.

Griffiths M (1991). Psychobiology of the near-miss in fruit machine gambling. J Psychol 125: 347–357.

Habib R, Dixon MR (2010). Neurobehavioral evidence for the ‘near-miss’ effect in pathological gamblers. J Exp Anal Behav 93: 313–328.

Hampson CL, Body S, den Boon FS, Cheung TH, Bezzina G, Langley RW et al (2010). Comparison of the effects of 2,5-dimethoxy-4-iodoamphetamine and D-amphetamine on the ability of rats to discriminate the durations and intensities of light stimuli. Behav Pharmacol 21: 11–20.

Harrigan KA (2008). Slot machine structural characteristics: creating near misses using high award symbol ratios. Int J Mental Health Addict 6: 353–368.

Harrigan KA, Dixon M (2010). Government sanctioned ‘tight’ and ‘loose’ slot machines: how having multiple versions of the same slot machine game may impact problem gambling. J Gambl Stud 26: 159–174.

Harrison AA, Everitt BJ, Robbins TW (1997). Central 5-HT depletion enhances impulsive responding without affecting the accuracy of attentional performance: interactions with dopaminergic mechanisms. Psychopharmacology 133: 329–342.

Jentsch JD, Taylor JR (1999). Impulsivity resulting from frontostriatal dysfunction in drug abuse: implications for the control of behavior by reward-related stimuli. Psychopharmacology 146: 373–390.

Kassinove JI, Schare ML (2001). Effects of the ‘near miss’ and the ‘big win’ on persistence at slot machine gambling. Psychol Addict Behav 15: 155–158.

Ladouceur R, Gaboury A, Dumont M, Rochette P (1988). Gambling: Relationship between the frequency of wins and irrational thinking. J Psychol: Interdisciplinary Applied 122: 409–414.

MacLin OH, Dixon MR, Daugherty D, Small SL (2007). Using a computer simulation of three slot machines to investigate a gambler's preference among varying densities of near-miss alternatives. Behav Res Methods 39: 237–241.

Mazurski EJ, Beninger RJ (1986). The effects of (+)-amphetamine and apomorphine on responding for a conditioned reinforcer. Psychopharmacology (Berl) 90: 239–243.

Nomoto K, Schultz W, Watanabe T, Sakagami M (2010). Temporally extended dopamine responses to perceptually demanding reward-predictive stimuli. J Neurosci 30: 10692–10702.

Peters H, Hunt M, Harper D (2010). An Animal Model of Slot Machine Gambling: The Effect of Structural Characteristics on Response Latency and Persistence. J Gambl Stud 26: 521–531.

Petry NM, Stinson FS, Grant BF (2005). Comorbidity of DSM-IV pathological gambling and other psychiatric disorders: results from the National Epidemiologic Survey on Alcohol and Related Conditions. J Clin Psychiatry 66: 564–574.

Potenza MN (2008). Review. The neurobiology of pathological gambling and drug addiction: an overview and new findings. Philos Trans R Soc Lond B Biol Sci 363: 3181–3189.

Potenza MN (2009). The importance of animal models of decision making, gambling, and related behaviors: implications for translational research in addiction. Neuropsychopharmacology 34: 2623–2624.

Reid RL (1986). The psychology of the near miss. J Gambl Behav 2: 32–39.

Reuter J, Raedler T, Rose M, Hand I, Glascher J, Buchel C (2005). Pathological gambling is linked to reduced activation of the mesolimbic reward system. Nat Neurosci 8: 147–148.

Robbins TW (1976). Relationship between reward-enhancing and stereotypical effects of psychomotor stimulant drugs. Nature 264: 57–59.

Robbins TW (1978). The acquisition of responding with conditioned reinforcement: effects of pipradrol, methylphenidate, d-amphetamine, and nomifensine. Psychopharmacology (Berl) 58: 79–87.

Robbins TW, Everitt BJ (1999). Drug addiction: bad habits add up. Nature 398: 567–570.

Robbins TW, Watson BA, Gaskin M, Ennis C (1983). Contrasting interactions of pipradrol, d-amphetamine, cocaine, cocaine analogues, apomorphine and other drugs with conditioned reinforcement. Psychopharmacology 80: 113–119.

Robinson TE, Berridge KC (1993). The neural basis of drug craving: an incentive-sensitization theory of addiction. Brain Res Brain Res Rev 18: 247–291.

Sanger DJ (1978). Effects of d-amphetamine on temporal and spatial discrimination in rats. Psychopharmacology (Berl) 58: 185–188.

Schultz W (1998). Predictive reward signal of dopamine neurons. J Neurophysiol 80: 1–27.

Schultz W, Dayan P, Montague PR (1997). A neural substrate of prediction and reward. Science 275: 1593–1599.

Seamans JK, Yang CR (2004). The principal features and mechanisms of dopamine modulation in the prefrontal cortex. Prog Neurobiol 74: 1–58.

Segal EF (1962). Effects of dl-amphetamine under concurrent VI DRL reinforcement. J Exp Anal Behav 5: 105–112.

Shaffer HJ, Hall MN, Vander Bilt J (1999). Estimating the prevalence of disordered gambling behavior in the United States and Canada: a research synthesis. Am J Public Health 89: 1369–1376.

Shaffer HJ, Korn DA (2002). Gambling and related mental disorders: a public health analysis. Annu Rev Public Health 23: 171–212.

Toneatto T, Blitz-Miller T, Calderwood K, Dragonetti R, Tsanos A (1997). Cognitive distortions in heavy gambling. J Gambl Stud 13: 253–266.

Tremblay AM, Desmond RC, Poulos CX, Zack M (2010). Haloperidol modifies instrumental aspects of slot machine gambling in pathological gamblers and healthy controls. Addict Biol (10 March e-pub ahead of print).

Voon V, Fernagut PO, Wickens J, Baunez C, Rodriguez M, Pavon N et al (2009). Chronic dopaminergic stimulation in Parkinson's disease: from dyskinesias to impulse control disorders. Lancet Neurol 8: 1140–1149.

Weatherly JN, Sauter JM, King BM (2004). The ‘big win’ and resistance to extinction when gambling. J Psychol 138: 495–504.

Winstanley CA, Zeeb FD, Bedard A, Fu K, Lai B, Steele C et al (2010). Dopaminergic modulation of the orbitofrontal cortex affects attention, motivation and impulsive responding in rats performing the five-choice serial reaction time task. Behav Brain Res 210: 263–272.

Wyvell CL, Berridge KC (2000). Intra-accumbens amphetamine increases the conditioned incentive salience of sucrose reward: enhancement of reward ‘wanting’ without enhanced ‘liking’ or response reinforcement. J Neurosci 20: 8122–8130.

Wyvell CL, Berridge KC (2001). Incentive sensitization by previous amphetamine exposure: increased cue-triggered ‘wanting’ for sucrose reward. J Neurosci 21: 7831–7840.

Zack M, Poulos CX (2004). Amphetamine primes motivation to gamble and gambling-related semantic networks in problem gamblers. Neuropsychopharmacology 29: 195–207.

Zack M, Poulos CX (2007). A D2 antagonist enhances the rewarding and priming effects of a gambling episode in pathological gamblers. Neuropsychopharmacology 32: 1678–1686.

Zeeb FD, Robbins TW, Winstanley CA (2009). Serotonergic and dopaminergic modulation of gambling behavior as assessed using a novel rat gambling task. Neuropsychopharmacology 34: 2329–2343.

Zlomke KR, Dixon MR (2006). Modification of slot-machine preferences through the use of a conditional discrimination paradigm. J Appl Behav Anal 39: 351–361.

Acknowledgements

This work was supported by an operating grant awarded to CAW from the Canadian Institutes for Health Research (CIHR). CAW also receives salary support through the Michael Smith Foundation for Health Research and the CIHR New Investigator Award program. We thank Dr Stan B Floresco for engaging in such valuable discussions during the development of this project.

Ethics declarations

Competing interests

CAW has previously consulted for Theravance on an unrelated matter. No authors have any other conflicts of interest or financial disclosures to make.

Additional information

Supplementary Information accompanies the paper on the Neuropsychopharmacology website

About this article

Cite this article

Winstanley, C., Cocker, P. & Rogers, R. Dopamine Modulates Reward Expectancy During Performance of a Slot Machine Task in Rats: Evidence for a ‘Near-miss’ Effect. Neuropsychopharmacol 36, 913–925 (2011). https://doi.org/10.1038/npp.2010.230

DOI: https://doi.org/10.1038/npp.2010.230
