Abstract

Cerebellar granule cells, which constitute half the brain's neurons, supply Purkinje cells with contextual information necessary for motor learning, but how they encode this information is unknown. Here we show, using two-photon microscopy to track neural activity over multiple days of cerebellum-dependent eyeblink conditioning in mice, that granule cell populations acquire a dense representation of the anticipatory eyelid movement. Initially, granule cells responded to neutral visual and somatosensory stimuli as well as periorbital airpuffs used for training. As learning progressed, two-thirds of monitored granule cells acquired a conditional response whose timing matched or preceded the learned eyelid movements. Granule cell activity covaried trial by trial to form a redundant code. Many granule cells were also active during movements of nearby body structures. Thus, a predictive signal about the upcoming movement is widely available at the input stage of the cerebellar cortex, as required by forward models of cerebellar control.



References

Ito, M. Cerebellar circuitry as a neuronal machine. Prog. Neurobiol. 78, 272–303 (2006).

Arenz, A., Bracey, E.F. & Margrie, T.W. Sensory representations in cerebellar granule cells. Curr. Opin. Neurobiol. 19, 445–451 (2009).

Marr, D. A theory of cerebellar cortex. J. Physiol. (Lond.) 202, 437–470 (1969).

Albus, J.S. A theory of cerebellar function. Math. Biosci. 10, 25–61 (1971).

Spanne, A. & Jörntell, H. Questioning the role of sparse coding in the brain. Trends Neurosci. 38, 417–427 (2015).

Huang, C.C. et al. Convergence of pontine and proprioceptive streams onto multimodal cerebellar granule cells. Elife 2, e00400 (2013).

Houck, B.D. & Person, A.L. Cerebellar premotor output neurons collateralize to innervate the cerebellar cortex. J. Comp. Neurol. 523, 2254–2271 (2015).

Heiney, S.A., Wohl, M.P., Chettih, S.N., Ruffolo, L.I. & Medina, J.F. Cerebellar-dependent expression of motor learning during eyeblink conditioning in head-fixed mice. J. Neurosci. 34, 14845–14853 (2014).

Eccles, J.C., Faber, D.S., Murphy, J.T., Sabah, N.H. & Táboríková, H. Afferent volleys in limb nerves influencing impulse discharges in cerebellar cortex. I. In mossy fibers and granule cells. Exp. Brain Res. 13, 15–35 (1971).

Powell, K., Mathy, A., Duguid, I. & Häusser, M. Synaptic representation of locomotion in single cerebellar granule cells. Elife 4, e07290 (2015).

van Beugen, B.J., Gao, Z., Boele, H.J., Hoebeek, F. & De Zeeuw, C.I. High frequency burst firing of granule cells ensures transmission at the parallel fiber to Purkinje cell synapse at the cost of temporal coding. Front. Neural Circuits 7, 95 (2013).

Medina, J.F. & Mauk, M.D. Computer simulation of cerebellar information processing. Nat. Neurosci. 3 (Suppl.), 1205–1211 (2000).

Freeman, J.H. & Steinmetz, A.B. Neural circuitry and plasticity mechanisms underlying delay eyeblink conditioning. Learn. Mem. 18, 666–677 (2011).

Shadmehr, R., Smith, M.A. & Krakauer, J.W. Error correction, sensory prediction, and adaptation in motor control. Annu. Rev. Neurosci. 33, 89–108 (2010).

Wolpert, D.M., Miall, R.C. & Kawato, M. Internal models in the cerebellum. Trends Cogn. Sci. 2, 338–347 (1998).

Ozden, I., Dombeck, D.A., Hoogland, T.M., Tank, D.W. & Wang, S.S.-H. Widespread state-dependent shifts in cerebellar activity in locomoting mice. PLoS One 7, e42650 (2012).

Kuhn, B., Ozden, I., Lampi, Y., Hasan, M.T. & Wang, S.S.-H. An amplified promoter system for targeted expression of calcium indicator proteins in the cerebellar cortex. Front. Neural Circuits 6, 49 (2012).

Yaguchi, M. et al. Characterization of the properties of seven promoters in the motor cortex of rats and monkeys after lentiviral vector-mediated gene transfer. Hum. Gene Ther. Methods 24, 333–344 (2013).

Boele, H.-J., Koekkoek, S.K.E. & De Zeeuw, C.I. Cerebellar and extracerebellar involvement in mouse eyeblink conditioning: the ACDC model. Front. Cell. Neurosci. 3, 19 (2010).

Thompson, R.F. & Krupa, D.J. Organization of memory traces in the mammalian brain. Annu. Rev. Neurosci. 17, 519–549 (1994).

Heiney, S.A., Kim, J., Augustine, G.J. & Medina, J.F. Precise control of movement kinematics by optogenetic inhibition of Purkinje cell activity. J. Neurosci. 34, 2321–2330 (2014).

Hesslow, G. Correspondence between climbing fibre input and motor output in eyeblink-related areas in cat cerebellar cortex. J. Physiol. (Lond.) 476, 229–244 (1994).

Mostofi, A., Holtzman, T., Grout, A.S., Yeo, C.H. & Edgley, S.A. Electrophysiological localization of eyeblink-related microzones in rabbit cerebellar cortex. J. Neurosci. 30, 8920–8934 (2010).

Najafi, F., Giovannucci, A., Wang, S.S.-H. & Medina, J.F. Sensory-driven enhancement of calcium signals in individual Purkinje cell dendrites of awake mice. Cell Rep. 6, 792–798 (2014).

Najafi, F., Giovannucci, A., Wang, S.S.-H. & Medina, J.F. Coding of stimulus strength via analog calcium signals in Purkinje cell dendrites of awake mice. Elife 3, e03663 (2014).

Pnevmatikakis, E.A. et al. Simultaneous denoising, deconvolution, and demixing of calcium imaging data. Neuron 89, 285–299 (2016).

Narayanan, N.S., Kimchi, E.Y. & Laubach, M. Redundancy and synergy of neuronal ensembles in motor cortex. J. Neurosci. 25, 4207–4216 (2005).

Puchalla, J.L., Schneidman, E., Harris, R.A. & Berry, M.J. Redundancy in the population code of the retina. Neuron 46, 493–504 (2005).

Freeman, J.H. Cerebellar learning mechanisms. Brain Res. 1621, 260–269 (2015).

Gao, Z. et al. Excitatory cerebellar nucleocortical circuit provides internal amplification during associative conditioning. Neuron 89, 645–657 (2016).

Ankri, L. et al. A novel inhibitory nucleo-cortical circuit controls cerebellar Golgi cell activity. Elife 4, e06262 (2015).

Jörntell, H. Cerebellar physiology: links between microcircuitry properties and sensorimotor functions. J. Physiol. (Lond.) 595, 11–27 (2017).

Medina, J.F. & Lisberger, S.G. Variation, signal, and noise in cerebellar sensory-motor processing for smooth-pursuit eye movements. J. Neurosci. 27, 6832–6842 (2007).

ten Brinke, M.M. et al. Evolving models of Pavlovian conditioning: cerebellar cortical dynamics in awake behaving mice. Cell Rep. 13, 1977–1988 (2015).

Lisberger, S.G. & Medina, J.F. How and why neural and motor variation are related. Curr. Opin. Neurobiol. 33, 110–116 (2015).

Jirenhed, D.-A. & Hesslow, G. Are Purkinje cell pauses drivers of classically conditioned blink responses? Cerebellum 15, 526–534 (2016).

Lee, K.H. et al. Circuit mechanisms underlying motor memory formation in the cerebellum. Neuron 86, 529–540 (2015).

Billings, G., Piasini, E., Lőrincz, A., Nusser, Z. & Silver, R.A. Network structure within the cerebellar input layer enables lossless sparse encoding. Neuron 83, 960–974 (2014).

Lubow, R.E. Latent inhibition. Psychol. Bull. 79, 398–407 (1973).

Wang, H. et al. High-speed mapping of synaptic connectivity using photostimulation in channelrhodopsin-2 transgenic mice. Proc. Natl. Acad. Sci. USA 104, 8143–8148 (2007).

Zuiderveld, K. Contrast limited adaptive histogram equalization. in Graphics Gems IV (ed. Heckbert, P.S.) 474–485 (Academic Press Professional, Inc., 1994).

Dalal, N. & Triggs, B. Histograms of oriented gradients for human detection. in Proc. IEEE Computer Society Conference on Computer Vision and Pattern Recognition 886–893 (2005).

Otsu, N. A threshold selection method from gray-level histograms. IEEE Trans. Syst. Man Cybern. 9, 62–66 (1979).

Myronenko, A. & Song, X. Point set registration: coherent point drift. IEEE Trans. Pattern Anal. Mach. Intell. 32, 2262–2275 (2010).

Huber, D. et al. Multiple dynamic representations in the motor cortex during sensorimotor learning. Nature 484, 473–478 (2012).

Badura, A. et al. Climbing fiber input shapes reciprocity of Purkinje cell firing. Neuron 78, 700–713 (2013).

Jaarsma, D. et al. A role for bicaudal-D2 in radial cerebellar granule cell migration. Nat. Commun. 5, 3411 (2014).

Zhou, H. et al. Cerebellar modules operate at different frequencies. Elife 3, e02536 (2014).

Chung, K. & Deisseroth, K. CLARITY for mapping the nervous system. Nat. Methods 10, 508–513 (2013).

Kloth, A.D. et al. Cerebellar associative sensory learning defects in five mouse autism models. Elife 4, e06085 (2015).

Acknowledgements

The authors thank L. Lynch for expert laboratory assistance, A.C.H.G. Ijpelaar for technical assistance, H. Boele (Department of Neuroscience, Erasmus Medical Center) for input on eyeblink recordings, M.J. Berry II for discussion of the information calculation, D. Dombeck, J.P. Rickgauer and C. Domnisoru for experimental advice, and D. Pacheco-Pinedo, J.L. Verpeut, P. Sanchez-Jauregui and I. Witten for comments and suggestions. This work was supported by National Institutes of Health grants R01 NS045193 (S.W.) and R01 MH093727 (J.M.), New Jersey Council on Brain Injury Research fellowship CBIR12FEL031 (A.G.), the Searle Scholars program (J.M.), DARPA N66001-15-C-4032 (L.P.), National Science Foundation Graduate Research Fellowship DGE-1148900 (T.P.), the Nancy Lurie Marks Family Foundation (S.W.), the Netherlands Organization for Scientific Research (Innovational Research Incentives Scheme Veni; A.B. and Z.G.), the Dutch Fundamental Organization for Medical Sciences (ZonMW; C.I.D.Z.), Life Sciences (NWO-ALW; C.I.D.Z.) and Social and Behavioral Sciences (NWO-MAGW; C.I.D.Z.), as well as ERC-adv and ERC-POC (C.I.D.Z.).

Author information


Contributions

A.G. designed behavioral and imaging experiments, established the behavioral and imaging setup and molecular methods, performed in vivo imaging experiments, developed analyses and drafted the manuscript. A.B., Z.G. and C.I.D.Z. designed and/or performed brain slice experiments. A.B. performed histological and combined behavioral-pharmacological experiments. F.N., I.O., B.D. and A.D.K. established the behavioral and imaging setup and methods. B.D. performed in vivo imaging experiments and developed analyses. T.D.P., E.P. and L.P. developed analyses. J.F.M. and S.S.-H.W. designed experiments and developed analyses. All authors edited the manuscript.


Ethics declarations

Competing interests

The authors declare no competing financial interests.

Integrated supplementary information

Supplementary Figure 1 GCaMP-positive structures in the granule cell layer are predominantly granule cells.

(a) A Neurod1 mouse injected with AAV1.CAG.Flex.GCaMP6f.WPRE.SV40. GCaMP6f-positive cells negative for α6GABAA were sparse in the granule cell layer and are indicated by white arrows. The dashed white line denotes the location of Purkinje cells at the border between the granule and molecular layers. The scatter plot shows absolute numbers of α6GABAA-negative cells >7.5 μm wide in the granule cell layer per 10,000 μm² field of view (FOV). Horizontal bars represent means (Neurod1, 1.2 ± 0.6, 12 FOVs; hSyn, 0.9 ± 0.5, 13 FOVs; data from 2 mice per genotype). (b) High-magnification images of the granule cell layer revealed that the majority of round, small (4.5–7.5 μm in diameter) GCaMP6f-positive structures were positive for α6GABAA and contained a distinct nucleus revealed by DAPI staining. White arrows point to putative granule cell dendrites, which were positive for both GCaMP6f and α6GABAA but lacked nuclear staining.

Supplementary Figure 2 GCaMP6f signals in cerebellar brain slices.

(a) Granule cells (GrCs) expressing GCaMP6f in a brain slice imaged under two-photon microscopy. The inset shows a fluorescence image of two GrCs during stimulation of mossy fibers via a saline-filled glass electrode placed more than 100 μm away. Traces above the inset show fluorescence responses and extracellular spikes evoked by a 100 ms train of mossy fiber stimulation at 100 Hz. Stimulation artifacts were truncated. (b) Signal decay times and amplitudes in GrCs recorded using mossy fiber (MF) stimulation (N = 8 neurons from 5 mice). The center subpanel represents data from a single GrC. Error bars in the left graph represent SEMs. The right graph is a box-and-whisker plot. (c) GCaMP signals evoked by direct depolarization of GrCs. Top. A GCaMP-expressing GrC counterstained with Alexa 568, which was electroporated into the cell by repeated depolarization, recorded with a cell-attached patch pipette. Action potentials (red dots) were evoked by applying voltage steps (200 mV, 1 ms). Middle. Fluorescence signals as a function of the number of evoked action potentials for all recorded cells (N = 18 neurons from 5 mice); shaded areas indicate the ΔF/F range observed in vivo during locomotion and the spiking range of GrCs recorded in vivo by Powell et al. (eLife, 2015). Bottom. Averaged data (bin size, 3 spikes). Error bars represent SEMs. (d) Similar recordings for Golgi cells (N = 2 neurons from 2 mice). Arrows indicate failures to evoke an action potential. (e) GCaMP signal amplitudes in five GrCs stimulated via the cell-attached pipette to fire 2–12 action potentials at 600 Hz. (f) Decay times of fluorescence signals evoked by depolarization-triggered firing in granule cells (N = 18 neurons from 5 mice). Error bars represent SEMs.
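Panels (b) and (f) report signal decay times. The caption does not say how they were measured; one common approach, assumed here purely for illustration, is to compute ΔF/F against a pre-stimulus baseline and fit a single exponential to the falling phase by least squares on log(ΔF/F). The helper names `delta_f_over_f` and `decay_tau`, and the `floor` cutoff, are hypothetical and are not taken from the source.

```python
import math
from statistics import mean


def delta_f_over_f(trace, n_baseline):
    """Return (F - F0) / F0, where F0 is the mean of the first n_baseline samples."""
    f0 = mean(trace[:n_baseline])
    return [(f - f0) / f0 for f in trace]


def decay_tau(dff, dt, floor=0.1):
    """Estimate a single-exponential decay time constant (same units as dt).

    Fits log(dF/F) = log(A) - t / tau by ordinary least squares over the
    samples from the peak onward that stay above floor * peak, so that noise
    near zero does not dominate the log transform.
    """
    peak_idx = max(range(len(dff)), key=lambda i: dff[i])
    peak = dff[peak_idx]
    ts, ys = [], []
    for i in range(peak_idx, len(dff)):
        if dff[i] <= floor * peak:
            break  # stop once the transient has decayed into the noise floor
        ts.append((i - peak_idx) * dt)
        ys.append(math.log(dff[i]))
    if len(ts) < 2:
        raise ValueError("not enough samples above floor to fit a decay")
    t_bar, y_bar = mean(ts), mean(ys)
    slope = sum((t - t_bar) * (y - y_bar) for t, y in zip(ts, ys)) / sum(
        (t - t_bar) ** 2 for t in ts
    )
    return -1.0 / slope  # the slope of log(dF/F) versus t equals -1 / tau
```

A log-linear fit is used because it has a closed-form solution; a nonlinear fit of A·exp(-t/τ) would weight noisy low-amplitude samples differently and may be preferable for real data.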

Supplementary Figure 3 Stability of granule cell response properties across days.

(a) Average calcium traces in a light-CS mouse (N = 29 neurons) in response to periorbital airpuff stimulation on days 1–3 and 7–9. (b) Same as (a), but for a whisker-CS mouse on days 1–2 and 5–6 (N = 60 neurons). As a measure of granule cell responses to the US, P was defined as the integral of the response between 64 and 240 ms after US delivery. (c,d) Evolution of the response to periorbital stimulation in individual granule cells during training for light-CS (c) and whisker-CS (d) animals. The response was stable across days, so the variation in CS responses observed during training arose neither from changes in neuronal physiology nor from artifacts of data analysis. (e,f) Two-photon imaging of granule cell activity. (e) Craniotomy over cerebellar lobule VI in an example whisker-CS mouse. The dashed lines indicate the interlobule boundary (black) and the brain midline (white). In fields of view across training, yellow arrows indicate representative cells. Corresponding granule cell fluorescence traces are shown underneath. Shaded areas indicate times of stimulus delivery. (f) Same as (e), for an example light-CS mouse; the dashed white line indicates the brain midline.
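The response measure P is defined only as the integral of the response between 64 and 240 ms after US delivery. A minimal sketch of such a windowed integral, assuming a uniformly sampled trace, a known sample index for US onset, and the trapezoidal rule (the source does not state which quadrature was used), could look like this; `response_integral` is a hypothetical name.

```python
import math


def response_integral(trace, dt, event_idx, start, stop):
    """Trapezoidal integral of `trace` over [start, stop] seconds after the
    sample `event_idx` (for example, US onset).

    Samples are assumed to be uniformly spaced by `dt` seconds; the window is
    snapped inward to whole samples.
    """
    # The small tolerance makes e.g. 0.064 / 0.004 count as exactly 16 samples
    # despite floating-point rounding.
    i0 = event_idx + math.ceil(start / dt - 1e-9)
    i1 = event_idx + math.floor(stop / dt + 1e-9)
    if i0 < 0 or i1 >= len(trace) or i1 <= i0:
        raise ValueError("integration window falls outside the trace")
    return sum(0.5 * (trace[k] + trace[k + 1]) * dt for k in range(i0, i1))
```

For example, with a hypothetical 250 Hz frame rate (`dt = 0.004`) and the US at sample 250, `response_integral(dff, 0.004, 250, 0.064, 0.240)` integrates samples 266 through 310, i.e. 64 to 240 ms after the US.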

Supplementary Figure 4 Channelrhodopsin-2 optogenetic stimulation of cerebellar mossy fibers.

(a) Expression of channelrhodopsin-2 in the cerebellar cortex of B6.Cg-Tg(Thy1-COP4/EYFP)18Gfng/J mice is restricted to mossy fibers (MFs). Z-projected image of a 500-μm-thick parasagittal section of cerebellar cortex cleared using CLARITY and imaged with two-photon microscopy. (b) Experimental design. Left. Schematic of a mouse in the setup. Mice were head-fixed and allowed to locomote on a treadmill while optogenetic stimulation (470 nm) was applied. Eyes were shielded to avoid light-evoked startle responses. Right. Frame from the behavioral camera recording, with regions of interest whose pixel-intensity fluctuations maximally reflected movement of the whiskers, proximal forelimb, distal forelimb and oral zone. Each region of interest is a pixel group whose fluctuations we observed to covary with the named body part. (c) Trigger-aligned time course (N = 16 trials) of movements elicited by stimulation of the surface of crus I. (d) Coarse map of responses elicited by cerebellar stimulation. Colors as in b and c. Eyeblinks were not measured.

Supplementary Figure 5 Pharmacological inactivation in all tested animals.

Left column. Red circles depict the size and location of the muscimol injections in cerebellar coronal sections, using the Allen Brain Atlas coordinate system. Distance from bregma is indicated next to each section. Middle column. Cerebellar coronal sections from all mice used in the experiments. The location and extent of muscimol injections are shown in red. Muscimol was mixed with Evans Blue and visualized by excitation at 594 nm. Sections were counterstained with DAPI (blue) and an aldolase C antibody to reveal zebrin-positive and zebrin-negative zones in the Purkinje cell layer (green). Right column. Cumulative frequency graphs of conditional response amplitude in trained animals during baseline before saline injection, after saline injection, during baseline before muscimol injection and after muscimol injection. US-only trials are plotted for reference.
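The right-column graphs are cumulative frequency (empirical distribution) plots of CR amplitude per condition. A minimal sketch of how such a curve can be computed from a list of per-trial amplitudes follows; the function names are hypothetical, and the choice of plotting each sorted value against its cumulative fraction is an assumption about presentation, not a description of the authors' code.

```python
from bisect import bisect_right


def ecdf(values):
    """Return (sorted values, fraction of values <= each sorted value).

    Plotting the two lists as a step curve gives a cumulative frequency graph.
    With tied values, the last point of each tie carries the correct fraction.
    """
    xs = sorted(values)
    n = len(xs)
    return xs, [(i + 1) / n for i in range(n)]


def ecdf_at(values, x):
    """Fraction of values that are <= x (the empirical CDF evaluated at x)."""
    xs = sorted(values)
    return bisect_right(xs, x) / len(xs)
```

One curve per condition (baseline, saline, muscimol, US-only) can then be overlaid; a leftward shift after muscimol would correspond to smaller conditional responses.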

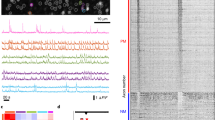

Supplementary Figure 6 Monitoring representations in cerebellar granule cells over the course of learning (whisker CS).

(a) Eyelid movement (left), locomotion (center) and single-cell calcium signal (right) over six days of CS-plus-US training. Each horizontal line represents a single trial. Date labels indicate the start of each day's training. Solid vertical lines indicate onset and offset of the CS. Dashed vertical lines indicate the delivery time of the US. (b) Top. CS-US trials grouped according to whether the CS did (“CR+”, middle) or did not (“CR−”, top) evoke an anticipatory eyelid closure before the US. Trials with significant locomotion (>2 cm/s) during the CS are excluded. Bottom. Overlaid average responses in US-only trials (US) and, at the final stage of training, in trials in which the animal did (“CR+”) or did not (“CR−”) produce a CR. f* is the integral of the neural response in an 85 ms window before the UR.
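The trial grouping in (b) can be sketched as a filter followed by a split: trials with locomotion above 2 cm/s during the CS are dropped, and the rest are labeled CR+ or CR− by whether the eyelid closed before the US. The caption gives the 2 cm/s cutoff but not the CR criterion, so `cr_threshold` below is a hypothetical placeholder, and using peak rather than mean wheel speed is also an assumption. The `Trial` record and function names are illustrative only.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    eyelid_before_us: float     # peak normalized eyelid closure before the US (0 = open, 1 = closed)
    max_speed_during_cs: float  # peak wheel speed during the CS, in cm/s (assumed summary)
    f_star: float               # neural response integral in the pre-UR window


def split_cr_trials(trials, cr_threshold=0.1, speed_limit=2.0):
    """Exclude trials with locomotion above speed_limit during the CS, then split
    the rest into CR+ (eyelid closure >= cr_threshold before the US) and CR- trials.

    cr_threshold is a hypothetical criterion, not a value taken from the source.
    """
    cr_plus, cr_minus = [], []
    for trial in trials:
        if trial.max_speed_during_cs > speed_limit:
            continue  # significant locomotion during the CS: excluded
        if trial.eyelid_before_us >= cr_threshold:
            cr_plus.append(trial)
        else:
            cr_minus.append(trial)
    return cr_plus, cr_minus


def mean_f_star(trials):
    """Average f* over a group of trials (NaN for an empty group)."""
    return mean(t.f_star for t in trials) if trials else float("nan")
```

Comparing `mean_f_star(cr_plus)` with `mean_f_star(cr_minus)` for each cell gives the kind of CR+ versus CR− contrast overlaid in the bottom panel.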

Supplementary Figure 7 Measures of animal motion were estimated from images of behavioral recordings.

(a) Because the behavior camera (blue) was positioned non-orthogonally to the wheel's surface (black arrows), a model based on physical measurements of the wheel was used to estimate a projective transform (T) that reprojected image pixels onto the imaging plane of a hypothetical camera positioned orthogonally to the wheel (red). (b) Applying this transform to a region of the image containing mostly wheel pixels yielded a reprojected view in which vertical displacements corresponded directly to wheel motion. (c) After regions were tracked in the reprojected images, the actual physical velocity was estimated by scaling to the model parameters. To compensate for image noise, all pixels in the fictive field of view were tracked, and their displacements yielded a distribution whose summary measures quantitatively reflected animal locomotion. (d) To measure snout movement, raw images were segmented and their boundaries traced to yield a set of points that putatively included the contour of the snout. (e) Seed points were selected along the contour of the snout and then matched to boundary points across subsequent frames via a robust point set registration algorithm. By imposing constraints on the rigidity of the original contour, this approach could cope with substantial noise (inset). (f) All seed points around the contour of the snout were tracked across time, and their absolute displacements yielded a distribution over point velocities. Despite nonlinear shape deformations, summary measures of these point velocities served as a robust estimate of the animal's snout movement.
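Panels (a) to (c) can be illustrated with a short sketch: apply a 3×3 projective transform (homography) to tracked pixel positions in consecutive frames, take the vertical displacement in the reprojected view, convert it to cm/s, and summarize the resulting distribution. The matrix `H`, the scale `cm_per_unit` and the use of the median as the summary measure are assumptions for illustration; the source does not give the transform values or say which summary statistic was used.

```python
from statistics import median


def apply_homography(H, x, y):
    """Map image point (x, y) through the 3x3 projective transform H."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return u / w, v / w  # divide by w to return from homogeneous coordinates


def wheel_velocity(H, pts_prev, pts_next, cm_per_unit, frame_dt):
    """Median wheel velocity (cm/s) from point positions tracked across one frame.

    pts_prev and pts_next hold the same tracked points in two consecutive raw
    frames. Only vertical displacement in the reprojected view is used, since
    that direction corresponds to wheel rotation after the transform.
    """
    velocities = []
    for (x0, y0), (x1, y1) in zip(pts_prev, pts_next):
        _, v0 = apply_homography(H, x0, y0)
        _, v1 = apply_homography(H, x1, y1)
        velocities.append((v1 - v0) * cm_per_unit / frame_dt)
    # The median keeps a few mistracked points from dominating the estimate.
    return median(velocities)
```

Summarizing many tracked points with a robust statistic is one way to realize the "distribution whose summary measures" step in (c): a handful of badly tracked pixels shifts the mean but barely moves the median.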

Supplementary Figure 8 Response properties of granule cells.

(a) Left. Example traces from individual granule cells highly correlated with the CR (r > 0.3), superimposed on the averaged eyelid and snout responses during CR+ trials in the last training session in 1 mouse (N = 140 cells). Traces are normalized to the standard deviation of the noise computed over the pre-stimulus period (1 s before the CS). Right. Histograms of latencies corresponding to the traces on the left. Latencies were calculated between the onset of the average conditional response in CR+ trials (first crossing of a 3-standard-deviation threshold) and the onset of each neuron's average response in CR+ trials (same normalization and 3-standard-deviation crossing). Data come from 2 mice scanned using a fast resonant scanner (N = 185 cells). (b) Histograms of latencies, computed as in (a), for all granule cell responses strongly correlated with the CR (r > 0.3) in all mice. (c) Forty runs of a bootstrapping procedure sampling f* in CR+ and CR− trials from 30 neurons per FOV per animal (N = 6 mice; N = 480 cells for eyelid amplitude in CR+ trials, N = 1,120 cells for eyelid amplitude in CR− trials, N = 459 cells for percentage responses in CR+ trials, N = 1,109 cells for percentage responses in CR− trials), binned according to average eyelid amplitude in the whole session (top) or average performance in the whole session (% CR, bottom). Error bars represent SEMs. (d) Histograms of granule cell responses in 4 fully trained mice (from top left to bottom right, N = 60, 35, 50 and 20 cells), one field of view per mouse. Each histogram bar represents the response of a single granule cell to a CS presented without wheel movement. Red histograms show ΔF/F for trials with no conditional response (CR−, same time bin as f*). Black histograms show the difference in ΔF/F between CR+ and CR− trial responses for the same cells, i.e. the CR+ excess (same time bin as f*).
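The latency measure in (a) can be sketched as follows: normalize each averaged trace by the standard deviation of its pre-CS period, find the first post-CS sample above 3 standard deviations, and subtract the CR onset from the neuron's onset. Subtracting the pre-stimulus mean before dividing, and the sign convention (negative latency means the neuron leads the eyelid), are assumptions made here; the function names are hypothetical.

```python
from statistics import mean, stdev


def onset_time(trace, dt, n_pre, threshold=3.0):
    """Time after CS onset (s) of the first sample whose baseline-normalized
    value exceeds `threshold` standard deviations, or None if it never does.

    The first n_pre samples are the pre-stimulus period; CS onset is at index n_pre.
    """
    base = trace[:n_pre]
    mu, sd = mean(base), stdev(base)
    if sd == 0:
        raise ValueError("pre-stimulus period has zero variance")
    for k in range(n_pre, len(trace)):
        if (trace[k] - mu) / sd > threshold:
            return (k - n_pre) * dt
    return None


def onset_latency(neuron_avg, eyelid_avg, dt, n_pre):
    """Neuron onset minus CR onset (s); negative values mean the neuron leads."""
    t_neuron = onset_time(neuron_avg, dt, n_pre)
    t_cr = onset_time(eyelid_avg, dt, n_pre)
    if t_neuron is None or t_cr is None:
        return None
    return t_neuron - t_cr
```

A first-crossing rule is sensitive to noise in single trials, which is presumably why it is applied here to averaged CR+ traces rather than to individual trials.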

Supplementary information

Supplementary Text and Figures

Supplementary Figures 1–8 (PDF 1658 kb)

A B6.Cg-Tg(NeuroD1-Cre).GN135.Gsat mouse was injected with 200 nL of AAV1.CAG.Flex.GCaMP6f.WPRE.SV40.

Spontaneous activity in parallel-fiber boutons of lobule VI was recorded in a mouse walking on a cylindrical treadmill. Images were acquired at 512 by 128 pixels (130×30 μm), 10 ms per frame. Playback speed, 20 Hz (5× slower than acquisition). (AVI 16157 kb)

Granule cell identification by constrained matrix factorization.

Raw Data. Original movie after motion correction (horizontal black bands arise from motion correction). The red circles indicate four granule cells identified by the factorization procedure. Denoised. Data with noise removed and synchronized neuropil activity retained. No Neuropil. Denoised movie with neuropil contribution to each pixel removed. Neuropil synchronized activity. Activity of the neuropil displayed separately from neurons. Representative spatial components. Time course of the four example granule cells. Raw data patches. Corresponding patches of raw data for the spatial components. (AVI 237048 kb)
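The factorization idea behind this movie is that a movie reshaped to a pixels × frames matrix Y is approximated by nonnegative spatial footprints A (pixels × cells) times nonnegative time courses C (cells × frames). The constrained method cited in the references (Pnevmatikakis et al., 2016) additionally models calcium dynamics, a neuropil background and spatial locality; the sketch below is only plain nonnegative matrix factorization with Lee–Seung multiplicative updates, a deliberately simplified stand-in rather than the pipeline used for the paper.

```python
import random


def matmul(X, Y):
    """Product of two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def transpose(M):
    return [list(r) for r in zip(*M)]


def frob_error(Y, A, C):
    """Squared Frobenius norm of Y - A C."""
    R = matmul(A, C)
    return sum((y - r) ** 2 for yr, rr in zip(Y, R) for y, r in zip(yr, rr))


def nmf(Y, k, n_iter=200, seed=0, eps=1e-12):
    """Plain NMF: Y (pixels x frames, nonnegative) ~= A (pixels x k) @ C (k x frames).

    Uses multiplicative updates that keep A and C nonnegative and do not
    increase the squared reconstruction error. No temporal or spatial
    constraints are imposed, unlike constrained NMF.
    """
    rng = random.Random(seed)
    p, t = len(Y), len(Y[0])
    A = [[rng.random() for _ in range(k)] for _ in range(p)]
    C = [[rng.random() for _ in range(t)] for _ in range(k)]
    for _ in range(n_iter):
        # C <- C * (A^T Y) / (A^T A C)
        At = transpose(A)
        num, den = matmul(At, Y), matmul(matmul(At, A), C)
        C = [[c * n / (d + eps) for c, n, d in zip(cr, nr, dr)]
             for cr, nr, dr in zip(C, num, den)]
        # A <- A * (Y C^T) / (A C C^T)
        Ct = transpose(C)
        num, den = matmul(Y, Ct), matmul(A, matmul(C, Ct))
        A = [[a * n / (d + eps) for a, n, d in zip(ar, nr, dr)]
             for ar, nr, dr in zip(A, num, den)]
    return A, C
```

Each column of A plays the role of a "representative spatial component" and each row of C the corresponding time course; real imaging data would also need the background and deconvolution terms that this sketch omits.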

Wheel tracking corrects for perspective and estimates locomotor velocity.

Left. A model of the wheel was constructed from physical measurements and manually fitted to the behavioral recording of the animal. Top-right. The parameters of the model defined a projective transform to remap the image pixels to the perspective of the surface normal of the wheel. Bottom-right. The tracked vertical displacement of the reprojected wheel pixels was rescaled to physical units to estimate the true instantaneous locomotor velocity. (AVI 36400 kb)

Snout motion is quantified by tracking snout shape trajectories.

Left. The outline of the shape of the snout was manually traced to provide a set of seed points that was displaced when the animal generated facial movements. Top-right. The images were thresholded and a robust point set registration algorithm was applied to track the trajectories of the seed points even when segmentation was made difficult by background artifacts. Bottom-right. The magnitude of the seed point velocities was used as a measure of facial movements. (AVI 25059 kb)
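The final step described here, turning tracked seed points into a facial-movement trace, can be sketched once point correspondences are available. The registration step itself (coherent point drift, per the references) is not reproduced; the sketch assumes each frame already lists the seed points in a fixed order, and summarizing the point speeds by their median is an assumption, since the source says only that the velocity magnitudes were used.

```python
import math
from statistics import median


def point_speeds(prev_pts, next_pts, dt):
    """Speed of each seed point between two frames (units per second)."""
    return [math.hypot(x1 - x0, y1 - y0) / dt
            for (x0, y0), (x1, y1) in zip(prev_pts, next_pts)]


def snout_motion_trace(tracked, dt):
    """Median seed-point speed for each pair of consecutive frames.

    `tracked` is a list of frames; each frame is a list of (x, y) seed point
    positions in the same order, as produced by a point set registration step.
    """
    return [median(point_speeds(a, b, dt)) for a, b in zip(tracked, tracked[1:])]
```

Using speed magnitudes rather than signed displacements makes the measure insensitive to the direction of the deformation, which suits a contour that can stretch, shift or twist.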


Cite this article

Giovannucci, A., Badura, A., Deverett, B. et al. Cerebellar granule cells acquire a widespread predictive feedback signal during motor learning. Nat Neurosci 20, 727–734 (2017). https://doi.org/10.1038/nn.4531


DOI: https://doi.org/10.1038/nn.4531
