Abstract

A comprehensive picture of object processing in the human brain requires combining both spatial and temporal information about brain activity. Here we acquired human magnetoencephalography (MEG) and functional magnetic resonance imaging (fMRI) responses to 92 object images. Multivariate pattern classification applied to MEG revealed the time course of object processing: whereas individual images were discriminated by visual representations early, ordinate and superordinate category levels emerged relatively late. Using representational similarity analysis, we combined human fMRI and MEG to show content-specific correspondence between early MEG responses and primary visual cortex (V1), and later MEG responses and inferior temporal (IT) cortex. We identified transient and persistent neural activities during object processing with sources in V1 and IT. Finally, we correlated human MEG signals to single-unit responses in monkey IT. Together, our findings provide an integrated space- and time-resolved view of human object categorization during the first few hundred milliseconds of vision.

References

Grill-Spector, K. & Malach, R. The human visual cortex. Annu. Rev. Neurosci. 27, 649–677 (2004).

Hung, C.P., Kreiman, G., Poggio, T. & DiCarlo, J.J. Fast readout of object identity from macaque inferior temporal cortex. Science 310, 863–866 (2005).

Kriegeskorte, N. et al. Matching categorical object representations in inferior temporal cortex of man and monkey. Neuron 60, 1126–1141 (2008).

Kourtzi, Z. & Connor, C.E. Neural representations for object perception: structure, category, and adaptive coding. Annu. Rev. Neurosci. 34, 45–67 (2011).

DiCarlo, J.J., Zoccolan, D. & Rust, N.C. How does the brain solve visual object recognition? Neuron 73, 415–434 (2012).

Felleman, D.J. & Van Essen, D.C. Distributed hierarchical processing in the primate cerebral cortex. Cereb. Cortex 1, 1–47 (1991).

Ungerleider, L.G. & Mishkin, M. Two visual systems. In Analysis of Visual Behavior. (eds. Ingle, D.J., Goodale, M.A. & Mansfield, R.J.W.) 549–586 (MIT Press, 1982).

Milner, A.D. & Goodale, M.A. The Visual Brain in Action (Oxford Univ. Press, 2006).

Schmolesky, M.T. et al. Signal timing across the macaque visual system. J. Neurophysiol. 79, 3272–3278 (1998).

Luck, S.J. An Introduction to the Event-Related Potential Technique (MIT Press, 2005).

Mormann, F. et al. Latency and selectivity of single neurons indicate hierarchical processing in the human medial temporal lobe. J. Neurosci. 28, 8865–8872 (2008).

Baillet, S., Mosher, J.C. & Leahy, R.M. Electromagnetic brain mapping. IEEE Signal Process. Mag. 18, 14–30 (2001).

Hari, R. & Salmelin, R. Magnetoencephalography: from SQUIDs to neuroscience: Neuroimage 20th anniversary special edition. Neuroimage 61, 386–396 (2012).

Dale, A.M. et al. Dynamic statistical parametric mapping: combining fMRI and MEG for high-resolution imaging of cortical activity. Neuron 26, 55–67 (2000).

Debener, S., Ullsperger, M., Siegel, M. & Engel, A.K. Single-trial EEG–fMRI reveals the dynamics of cognitive function. Trends Cogn. Sci. 10, 558–563 (2006).

Logothetis, N.K. & Sheinberg, D.L. Visual object recognition. Annu. Rev. Neurosci. 19, 577–621 (1996).

Carlson, T.A., Hogendoorn, H., Kanai, R., Mesik, J. & Turret, J. High temporal resolution decoding of object position and category. J. Vis. 11 (10): 9 (2011).

Haynes, J.-D. & Rees, G. Decoding mental states from brain activity in humans. Nat. Rev. Neurosci. 7, 523–534 (2006).

Carlson, T., Tovar, D.A., Alink, A. & Kriegeskorte, N. Representational dynamics of object vision: the first 1000 ms. J. Vis. 13 (10): 1 (2013).

Tong, F. & Pratte, M.S. Decoding patterns of human brain activity. Annu. Rev. Psychol. 63, 483–509 (2012).

Thorpe, S., Fize, D. & Marlot, C. Speed of processing in the human visual system. Nature 381, 520–522 (1996).

Bentin, S., Allison, T., Puce, A., Perez, E. & McCarthy, G. Electrophysiological studies of face perception in humans. J. Cogn. Neurosci. 8, 551–565 (1996).

VanRullen, R. & Thorpe, S.J. The time course of visual processing: from early perception to decision-making. J. Cogn. Neurosci. 13, 454–461 (2001).

Edelman, S. Representation is representation of similarities. Behav. Brain Sci. 21, 449–467, discussion 467–498 (1998).

Kriegeskorte, N. Representational similarity analysis – connecting the branches of systems neuroscience. Front. Syst. Neurosci. 2, 4 (2008). doi:10.3389/neuro.06.004.2008

Kiani, R., Esteky, H., Mirpour, K. & Tanaka, K. Object category structure in response patterns of neuronal population in monkey inferior temporal cortex. J. Neurophysiol. 97, 4296–4309 (2007).

Nichols, T.E. & Holmes, A.P. Nonparametric permutation tests for functional neuroimaging: a primer with examples. Hum. Brain Mapp. 15, 1–25 (2002).

Maris, E. & Oostenveld, R. Nonparametric statistical testing of EEG- and MEG-data. J. Neurosci. Methods 164, 177–190 (2007).

Kruskal, J.B. & Wish, M. Multidimensional scaling. University Paper Series on Quantitative Applications in the Social Sciences, Series 07-011 (Sage Publications, 1978).

Shepard, R.N. Multidimensional scaling, tree-fitting, and clustering. Science 210, 390–398 (1980).

Allison, T. et al. Face recognition in human extrastriate cortex. J. Neurophysiol. 71, 821–825 (1994).

Kanwisher, N., McDermott, J. & Chun, M.M. The fusiform face area: a module in human extrastriate cortex specialized for face perception. J. Neurosci. 17, 4302–4311 (1997).

McCarthy, G., Puce, A., Belger, A. & Allison, T. Electrophysiological studies of human face perception. II: Response properties of face-specific potentials generated in occipitotemporal cortex. Cereb. Cortex 9, 431–444 (1999).

Downing, P.E., Jiang, Y., Shuman, M. & Kanwisher, N. A cortical area selective for visual processing of the human body. Science 293, 2470–2473 (2001).

Liu, J., Harris, A. & Kanwisher, N. Stages of processing in face perception: an MEG study. Nat. Neurosci. 5, 910–916 (2002).

Harrison, S.A. & Tong, F. Decoding reveals the contents of visual working memory in early visual areas. Nature 458, 632–635 (2009).

Liu, H., Agam, Y., Madsen, J.R. & Kreiman, G. Timing, timing, timing: fast decoding of object information from intracranial field potentials in human visual cortex. Neuron 62, 281–290 (2009).

Stekelenburg, J.J. & de Gelder, B. The neural correlates of perceiving human bodies: an ERP study on the body-inversion effect. Neuroreport 15, 777–780 (2004).

Thierry, G. et al. An event-related potential component sensitive to images of the human body. Neuroimage 32, 871–879 (2006).

Jeffreys, D.A. Evoked potential studies of face and object processing. Vis. Cogn. 3, 1–38 (1996).

Halgren, E., Raij, T., Marinkovic, K., Jousmäki, V. & Hari, R. Cognitive response profile of the human fusiform face area as determined by MEG. Cereb. Cortex 10, 69–81 (2000).

Sadeh, B., Podlipsky, I., Zhdanov, A. & Yovel, G. Event-related potential and functional MRI measures of face-selectivity are highly correlated: a simultaneous ERP-fMRI investigation. Hum. Brain Mapp. 31, 1490–1501 (2010).

Tsao, D.Y., Freiwald, W.A., Tootell, R.B.H. & Livingstone, M.S. A cortical region consisting entirely of face-selective cells. Science 311, 670–674 (2006).

Mack, M.L. & Palmeri, T.J. The timing of visual object categorization. Front. Psychol. 2, 165 (2011).

Kravitz, D.J., Saleem, K.S., Baker, C.I., Ungerleider, L.G. & Mishkin, M. The ventral visual pathway: an expanded neural framework for the processing of object quality. Trends Cogn. Sci. 17, 26–49 (2013).

Sugase-Miyamoto, Y., Matsumoto, N. & Kawano, K. Role of temporal processing stages by inferior temporal neurons in facial recognition. Front. Psychol. 2, 141 (2011).

Brincat, S.L. & Connor, C.E. Dynamic shape synthesis in posterior inferotemporal cortex. Neuron 49, 17–24 (2006).

Freiwald, W.A. & Tsao, D.Y. Functional compartmentalization and viewpoint generalization within the macaque face-processing system. Science 330, 845–851 (2010).

Tadel, F., Baillet, S., Mosher, J.C., Pantazis, D. & Leahy, R.M. Brainstorm: a user-friendly application for MEG/EEG analysis. Comput. Intell. Neurosci. 2011, 879716 (2011).

Müller, K.R., Mika, S., Rätsch, G., Tsuda, K. & Schölkopf, B. An introduction to kernel-based learning algorithms. IEEE Trans. Neural Netw. 12, 181–201 (2001).

Kriegeskorte, N., Simmons, W.K., Bellgowan, P.S.F. & Baker, C.I. Circular analysis in systems neuroscience: the dangers of double dipping. Nat. Neurosci. 12, 535–540 (2009).

Benson, N.C. et al. The retinotopic organization of striate cortex is well predicted by surface topology. Curr. Biol. 22, 2081–2085 (2012).

Dale, A.M., Fischl, B. & Sereno, M.I. Cortical surface-based analysis: I. Segmentation and surface reconstruction. Neuroimage 9, 179–194 (1999).

Maldjian, J.A., Laurienti, P.J., Kraft, R.A. & Burdette, J.H. An automated method for neuroanatomic and cytoarchitectonic atlas-based interrogation of fMRI data sets. Neuroimage 19, 1233–1239 (2003).

Acknowledgements

This work was funded by US National Eye Institute grant EY020484 (to A.O.), US National Science Foundation grant BCS-1134780 (to D.P.) and a Humboldt Scholarship (to R.M.C.), and was conducted at the Athinoula A. Martinos Imaging Center at the McGovern Institute for Brain Research, Massachusetts Institute of Technology.

Author information

Contributions

R.M.C., D.P. and A.O. designed the research. R.M.C. and D.P. performed experiments and analyzed the data. R.M.C., D.P. and A.O. wrote the manuscript.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Integrated supplementary information

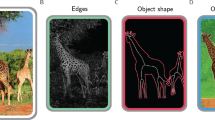

Supplementary Figure 1 Experimental design in MEG, fMRI and behavioral experiments.

Participants (n = 16) viewed the same 92 images (2.9 degrees visual angle overlaid with a gray fixation cross). (a) For MEG, images were presented in random order every 1.5–2 s. Every 3–5 trials, a paper clip was presented prompting a button press response. (b) For fMRI, stimulus onset asynchrony was 3 s, or 6 s when a null trial (uniform gray background) was shown. During null trials the fixation cross changed to dark gray, prompting a button press response. (c) For behavioral testing, participants classified pairs of images either by identity (same/different image) or by category for 5 different categorizations (animacy, naturalness, face versus body, human versus non-human body, human versus non-human face) in blocks of 24 trials each. Before every block, participants received instructions about the categorization task (e.g. animate versus inanimate). Each trial consisted of a red fixation cross (0.5 s) then two images (0.5 s, separating offset 0.5 s). Participants were instructed to respond as fast and accurately as possible, indicating whether the two images were same or different with respect to the instructed classification by pressing a button. Participants completed 8 runs, each consisting of a random sequence of 6 blocks, one for each of the 6 classification tasks. Results (reaction times for correct responses, and percent correct responses) were determined for each block and then averaged by participant.

Supplementary Figure 2 Linear separability of categorical subdivisions.

(a) We determined whether the membership of an image to a category (here shown for animacy) can be linearly discriminated by visual representation directly. Analysis was conducted independently for each participant and session, and for each time point from –100 to 1200 ms in 10 ms steps. For each category subdivision, we subsampled the set of objects by randomly drawing M (12) objects. Each object was presented N times. We assigned (N–1) × (M–1) trials to a training set of a linearized SVM (liblinear, http://www.csie.ntu.edu.tw/~cjlin/liblinear/) in the L2-regularized L2-loss SVM (primal) configuration. We tested the SVM on independent trials in two ways: from objects included in the training set ('identical' condition, dark gray), or held out from the training set ('held-out' condition, light gray). We repeated the above procedure 100 times, using different subsamples of objects and random assignment of trials to training and testing sets. Decoding accuracy was averaged across repetitions. (b–f) The upper panel shows the decoding accuracy time courses for objects included in or held out from the training set (color-coded as in (a)). The lower panel illustrates the difference of decoding accuracy between identical and held-out objects. Stars indicate time points with significant effects (sign-permutation test, n = 16, cluster-defining threshold P < 0.001, corrected significance level P < 0.05). For details see Supplementary Table 1e. Abbreviations: dec. acc. = decoding accuracy.
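The trial assignment described in (a) can be illustrated with a minimal Python sketch. It is not the authors' code. It assumes that one object is held out entirely and that one trial of every remaining object is reserved for the 'identical' test, which is one reading that yields (N–1) × (M–1) training trials. The function name `split_trials` and the object labels are hypothetical, and the classifier itself is omitted.

```python
import random

def split_trials(objects, n_trials, rng):
    """Build one train/test split over (object, trial_index) pairs.

    Assumption: one object is held out entirely, and one trial of each
    remaining object is reserved for testing, which leaves
    (N - 1) x (M - 1) training trials.
    """
    held_out = rng.choice(objects)
    train, test_identical, test_held_out = [], [], []
    for obj in objects:
        if obj == held_out:
            # Every trial of the held-out object is unseen during training.
            test_held_out.extend((obj, t) for t in range(n_trials))
            continue
        left_out_trial = rng.randrange(n_trials)
        for t in range(n_trials):
            if t == left_out_trial:
                test_identical.append((obj, t))
            else:
                train.append((obj, t))
    return train, test_identical, test_held_out

rng = random.Random(0)
objects = [f"obj{i}" for i in range(12)]  # M = 12 subsampled objects
train, identical, held_out = split_trials(objects, n_trials=10, rng=rng)
print(len(train), len(identical), len(held_out))
```

Repeating the split with fresh random draws and averaging the resulting accuracies would mirror the 100 repetitions mentioned in the caption.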

Supplementary Figure 3 Relation of behavior to peak latency of decoding accuracy.

We determined whether (a) reaction time and (b) correctness are linearly related to peak latency of decoding accuracy (Pearson's R). We assessed significance by bootstrapping the sample of participants (n = 16, P < 0.05). Reaction time shows a positive relationship (R = 0.53, P = 0.003); correctness a negative relationship (R = –0.49, P = 0.012).
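As a rough illustration of this kind of analysis, the sketch below computes Pearson's R and a percentile bootstrap interval in plain Python. The resampling unit, the helper names and the toy numbers are all assumptions made for the example. The caption states only that significance was assessed by bootstrapping the sample of participants.

```python
import math
import random

def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def bootstrap_r(x, y, n_boot, rng):
    """Resample (x, y) pairs with replacement; return sorted R values."""
    n = len(x)
    rs = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        xs = [x[i] for i in idx]
        ys = [y[i] for i in idx]
        if len(set(xs)) < 2 or len(set(ys)) < 2:
            continue  # skip degenerate resamples with zero variance
        rs.append(pearson_r(xs, ys))
    return sorted(rs)

# Toy values only (not data from the study): peak latencies in ms and
# reaction times in ms for eight hypothetical observations.
latencies = [120, 135, 150, 160, 170, 185, 200, 210]
reaction_times = [480, 470, 510, 530, 520, 560, 590, 580]

r = pearson_r(latencies, reaction_times)
rs = bootstrap_r(latencies, reaction_times, n_boot=2000, rng=random.Random(0))
lo = rs[int(0.025 * len(rs))]
hi = rs[int(0.975 * len(rs)) - 1]
print(f"R = {r:.2f}, 95% bootstrap interval [{lo:.2f}, {hi:.2f}]")
```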

Supplementary Figure 4 Representational similarity analysis of fMRI responses in human V1 and IT.

Our analyses corroborated previous major findings (ref. 3) by a random-effects analysis. (a) Representational dissimilarity matrices for human V1 and IT. Dissimilarity between fMRI pattern responses is color-coded as percentiles of dissimilarity (1 – Spearman's R). (b) MDS and (c) hierarchical clustering of fMRI responses. MDS (criterion: metric stress) showed a grouping of images into inanimate objects, faces, and bodies in IT (stress = 0.24), but not in V1 (stress = 0.20). Unsupervised hierarchical clustering (criterion: average fMRI response pattern dissimilarity) revealed a nested hierarchical structure dividing animate and inanimate objects, and animates into faces and bodies in IT, but not in V1. (d) We compared dissimilarity (1 – Spearman's R) within versus between the subdivision of animate and inanimate objects. A large animacy effect was observed in IT, and a small effect in V1. A sign permutation test (n = 15, 50,000 iterations) showed that the effect was significant both in IT (P = 2 × 10⁻⁵) and in V1 (P = 0.0046), and significantly larger in IT (P = 2 × 10⁻⁵).
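The dissimilarity measure (1 – Spearman's R) and the within-versus-between comparison in (d) can be sketched as follows. This is an illustrative plain-Python version with made-up response patterns. The helper names and the definition of the effect as mean between-category minus mean within-category dissimilarity are assumptions, not details given in the caption.

```python
import math

def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out

def spearman_r(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    return sxy / math.sqrt(sxx * syy)

def rdm(patterns):
    """Representational dissimilarity matrix with entries 1 - Spearman's R."""
    n = len(patterns)
    d = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            d[i][j] = d[j][i] = 1.0 - spearman_r(patterns[i], patterns[j])
    return d

def category_effect(d, labels):
    """Mean between-category minus mean within-category dissimilarity."""
    within, between = [], []
    for i in range(len(d)):
        for j in range(i + 1, len(d)):
            (within if labels[i] == labels[j] else between).append(d[i][j])
    return sum(between) / len(between) - sum(within) / len(within)

# Toy response patterns (4 images x 5 voxels), not data from the study.
patterns = [[1, 2, 3, 4, 5], [1, 2, 3, 5, 4], [5, 4, 3, 2, 1], [5, 4, 3, 1, 2]]
is_animate = [True, True, False, False]
matrix = rdm(patterns)
effect = category_effect(matrix, is_animate)
print(round(effect, 3))
```

A positive value means that images from different categories evoke more dissimilar patterns than images from the same category.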

Supplementary Figure 5 Representational similarity analysis related MEG and fMRI responses in IT for the six subdivisions of the image set.

Representational dissimilarities were similar for all subdivisions except non-human faces. Stars above the time course indicate time points of statistical significance (sign permutation test, n = 16, cluster-defining threshold P < 0.001, corrected significance level P < 0.05). For details see Supplementary Table 1f.
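For readers unfamiliar with sign permutation tests, the following Python sketch shows the basic idea at a single time point: the sign of each participant's value is flipped at random to build a null distribution of the group mean. It deliberately omits the cluster-level correction across time points that the caption describes, and the function name, the one-sided formulation and the toy values are assumptions for illustration.

```python
import random

def sign_permutation_p(values, n_iter, rng):
    """One-sided P-value for mean(values) > 0 via random sign flipping."""
    n = len(values)
    observed = sum(values) / n
    count = 0
    for _ in range(n_iter):
        # Under the null hypothesis each value is equally likely to be
        # positive or negative, so flip every sign with probability 0.5.
        flipped = sum(v if rng.random() < 0.5 else -v for v in values) / n
        if flipped >= observed:
            count += 1
    # Add one to numerator and denominator so P is never exactly zero.
    return (count + 1) / (n_iter + 1)

# Toy per-participant effect values (n = 16), not data from the study.
effects = [0.5 + 0.01 * i for i in range(16)]
p_value = sign_permutation_p(effects, n_iter=5000, rng=random.Random(1))
print(p_value)
```

In a time-resolved setting, a test of this kind would be run at every time point and then combined with a cluster-based correction for multiple comparisons.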

Supplementary Figure 6 Representational similarity analysis related MEG and fMRI responses in human IT based on previously reported fMRI data.

MEG correlated significantly with human IT: onset at 68 ms (57–71 ms), peak at 158 ms (152–300 ms), showing reproducibility of effects across distinct data sets (ref. 3). Stars above the time course indicate time points of statistical significance (sign-permutation test, n = 16, cluster-defining threshold P < 0.001, corrected significance level P < 0.05).
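The logic of relating MEG to fMRI through representational similarity can be sketched as below: at each time point, the upper triangle of the MEG matrix is rank-correlated with the upper triangle of the fMRI dissimilarity matrix, and the peak of the resulting time course is read off. The sketch assumes Spearman's R for this comparison, uses toy 3 × 3 matrices and hypothetical helper names, and is not the authors' implementation.

```python
import math

def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out

def spearman_r(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    return sxy / math.sqrt(sxx * syy)

def upper_triangle(matrix):
    """Entries above the diagonal, row by row."""
    n = len(matrix)
    return [matrix[i][j] for i in range(n) for j in range(i + 1, n)]

def rsa_time_course(meg_matrices, fmri_rdm):
    """Correlate the MEG matrix at each time point with one fMRI RDM."""
    target = upper_triangle(fmri_rdm)
    return [spearman_r(upper_triangle(m), target) for m in meg_matrices]

def sym(a, b, c):
    """3 x 3 symmetric matrix with zero diagonal from its 3 pair values."""
    return [[0, a, b], [a, 0, c], [b, c, 0]]

# Toy matrices for three images at three time points, not study data.
times_ms = [0, 100, 200]
meg = [sym(0.5, 0.4, 0.6), sym(0.2, 0.9, 0.7), sym(0.3, 0.5, 0.9)]
fmri = sym(0.1, 0.9, 0.8)

course = rsa_time_course(meg, fmri)
peak_ms = times_ms[max(range(len(course)), key=course.__getitem__)]
print(course, peak_ms)
```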

Supplementary Figure 7 Representational similarity analysis related MEG and fMRI for central and peripheral V1.

(a) fMRI signals in both central and peripheral V1 correlated with early MEG signals (for details see Supplementary Table 1d). (b) MEG signals correlated more strongly with fMRI signals in central than peripheral V1, demonstrating the refined spatial specificity achieved by combining MEG and fMRI by representational similarity analysis. Stars above the time course indicate time points of statistical significance (sign-permutation test, n = 16, cluster-defining threshold P < 0.001, corrected significance level P < 0.05).

Supplementary information

Supplementary Text and Figures

Supplementary Figures 1–7 and Supplementary Table 2 (PDF 3988 kb)

Supplementary Table 1

Comparison of peak latencies for discrimination of individual images at different levels of categorization. The table reports P-values determined by bootstrapping the sample of participants (50,000 samples). Significant comparisons are indexed with a star (P < 0.05, Bonferroni corrected). Latency differences between the classifications of 'Human versus non-human body' and 'Individual images' were in line with predictions, but did not pass Bonferroni correction. (XLSX 39 kb)

Decoding accuracy matrices and accompanying MDS solutions.

To allow a temporally unbiased and complete view of the MEG decoding accuracy data, we generated a movie from −100 to +1,000 ms in 1 ms steps, showing the averaged decoding accuracy across participants (n = 16) and the respective MDS solution (first two dimensions). To allow comparison of the common structure in the MDS across time, we used Procrustes alignment between the first two dimensions of the MDS solutions at neighboring time points. (AVI 8959 kb)
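Procrustes alignment of two-dimensional configurations can be sketched in closed form. The version below centers both point sets and finds the rotation, optionally combined with a mirror flip, that best maps the current MDS solution onto a reference solution. It omits scaling, and the source does not say which Procrustes variant was used, so this is only one plausible reading. Function names and coordinates are hypothetical.

```python
import math

def center(points):
    """Subtract the centroid from every 2D point."""
    n = len(points)
    cx = sum(p[0] for p in points) / n
    cy = sum(p[1] for p in points) / n
    return [(p[0] - cx, p[1] - cy) for p in points]

def best_rotation(x, y):
    """Rotate centered points x to minimize squared distance to y."""
    dot = sum(a[0] * b[0] + a[1] * b[1] for a, b in zip(x, y))
    cross = sum(a[0] * b[1] - a[1] * b[0] for a, b in zip(x, y))
    theta = math.atan2(cross, dot)  # optimal rotation angle in 2D
    c, s = math.cos(theta), math.sin(theta)
    return [(c * a[0] - s * a[1], s * a[0] + c * a[1]) for a in x]

def residual(x, y):
    """Sum of squared distances between matched points."""
    return sum((a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2 for a, b in zip(x, y))

def procrustes_2d(current, reference):
    """Align current to reference by translation, rotation and reflection.

    Returns the aligned points in the centered frame of the reference.
    """
    x, y = center(current), center(reference)
    rotated = best_rotation(x, y)
    mirrored = best_rotation([(a[0], -a[1]) for a in x], y)
    return min((rotated, mirrored), key=lambda cand: residual(cand, y))

# Toy example: the same triangle, rotated by 90 degrees and shifted.
reference = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0)]
current = [(3.0, 3.0), (3.0, 4.0), (1.0, 3.0)]
aligned = procrustes_2d(current, reference)
print(residual(aligned, center(reference)))
```

Applying such an alignment between neighboring time points keeps the displayed configuration from spinning or flipping arbitrarily from frame to frame, since MDS solutions are only defined up to rotation and reflection.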

About this article

Cite this article

Cichy, R., Pantazis, D. & Oliva, A. Resolving human object recognition in space and time. Nat Neurosci 17, 455–462 (2014). https://doi.org/10.1038/nn.3635
