Abstract

A promising approach to solving hard binary optimization problems is quantum adiabatic annealing in a transverse magnetic field. An instantaneous ground state—initially a symmetric superposition of all possible assignments of N qubits—is closely tracked as it becomes more and more localized near the global minimum of the classical energy. Regions where the energy gap to excited states is small (for instance at the phase transition) are the algorithm’s bottlenecks. Here I show how for large problems the complexity becomes dominated by O(log N) bottlenecks inside the spin-glass phase, where the gap scales as a stretched exponential. For smaller N, only the gap at the critical point is relevant, where it scales polynomially, as long as the phase transition is second order. This phenomenon is demonstrated rigorously for the two-pattern Gaussian Hopfield model. Qualitative comparison with the Sherrington-Kirkpatrick model leads to similar conclusions.


Introduction

Quantum algorithms offer hope for tackling computer science problems that are intractable for classical computers1. However, exponential speed-ups seen in, for example, number factoring2 have not materialized for the more difficult non-deterministic polynomial time (NP)-complete problems3. Those problems are targeted by the quantum adiabatic annealing algorithm (QA)4,5,6. Any NP-hard problem can be recast as quadratic binary optimization. QA solves it by implementing a quantum Hamiltonian, written with the aid of Pauli matrices as

$$H = -\sum_{i<k} J_{ik}\,\sigma^z_i\sigma^z_k - \sum_i h_i\,\sigma^z_i - \Gamma(t)\sum_i \sigma^x_i .$$

Here the first two terms, diagonal in the z-basis, encode the objective function. The last term represents the magnetic field in the transverse direction, which is decreased from Γ(0)≫1 to Γ(T_ann)=0. The time T_ann needed by the algorithm is determined by the condition that the annealing rate be sufficiently low to inhibit non-adiabatic transitions:

$$\left|\frac{d\Gamma}{dt}\right| \ll \Delta E\,\Delta\Gamma \qquad (\hbar = 1).$$

Such transitions are most likely near points where the instantaneous gap to excited states, ΔE, attains a minimum as a function of Γ; ΔΓ is defined as the width of the region where the gap remains comparable to its minimum value.
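For very small instances, the gap entering this condition can be computed by brute force. The Python sketch below is an illustration only, not the method of this manuscript. It assumes rank-2 Hopfield couplings J_ik = (1/N) Σ_μ ξ_i^(μ) ξ_k^(μ) with Gaussian ξ and h_i = 0 (the model studied later), builds the Hamiltonian above as a dense matrix, restricts it to the subspace that is even under global spin inversion, and scans Γ for the minimum gap. The helper names, the instance size N=8, the seed and the Γ grid are all arbitrary assumptions; at such sizes none of the large-N bottlenecks discussed later can be expected to appear.

```python
import numpy as np

def hopfield_couplings(xi):
    """Assumed Hopfield form J_ik = (1/N) sum_mu xi_i^mu xi_k^mu with zero diagonal; xi has shape (N, p)."""
    n = xi.shape[0]
    J = xi @ xi.T / n
    np.fill_diagonal(J, 0.0)
    return J

def spin_configs(n):
    """All 2^n configurations; bit i of the basis index is 0 for s_i=+1 and 1 for s_i=-1."""
    idx = np.arange(2 ** n)
    return 1 - 2 * ((idx[:, None] >> np.arange(n)) & 1)

def hamiltonian(J, gamma):
    """Dense H = -sum_{i<k} J_ik sz_i sz_k - gamma * sum_i sx_i (no longitudinal field)."""
    n = J.shape[0]
    s = spin_configs(n)
    # -0.5 * s^T J s equals -sum_{i<k} J_ik s_i s_k because J is symmetric with zero diagonal
    H = np.diag(-0.5 * np.einsum("ai,ij,aj->a", s, J, s))
    idx = np.arange(2 ** n)
    for i in range(n):
        H[idx, idx ^ (1 << i)] -= gamma  # sx_i flips bit i
    return H

def global_flip(n):
    """Matrix of P = prod_i sx_i, which flips every spin."""
    dim = 2 ** n
    P = np.zeros((dim, dim))
    P[np.arange(dim), np.arange(dim) ^ (dim - 1)] = 1.0
    return P

def even_sector_gap(J, gamma):
    """E1 - E0 inside the subspace that is symmetric under global spin inversion."""
    n = J.shape[0]
    dim = 2 ** n
    half = np.arange(dim // 2)  # indices with the top bit clear; partners are half ^ (dim - 1)
    B = np.zeros((dim, dim // 2))
    B[half, half] = 1.0 / np.sqrt(2.0)
    B[half ^ (dim - 1), half] = 1.0 / np.sqrt(2.0)
    evals = np.linalg.eigvalsh(B.T @ hamiltonian(J, gamma) @ B)
    return evals[1] - evals[0]

rng = np.random.default_rng(0)
xi = rng.standard_normal((8, 2))  # N = 8 spins, p = 2 Gaussian patterns
J = hopfield_couplings(xi)
gammas = np.linspace(0.05, 2.0, 40)
gaps = np.array([even_sector_gap(J, g) for g in gammas])
print(f"minimum even-sector gap {gaps.min():.4f} at Gamma = {gammas[gaps.argmin()]:.3f}")
```

Restricting to the even sector mirrors the remark made later in the text that QA never leaves the symmetric subspace; the dense construction limits this sketch to roughly N ≲ 12.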

QA offers no worst-case guarantees on time complexity7, but initial assessments of typical-case complexity were optimistic. Both experimental8 and theoretical9 evidence hinted at performance improvement over simulated annealing for finite-dimensional glasses; however, some empirical evidence in support of the theory has recently been called into question10. Early exact diagonalization studies also observed polynomially small gaps in the constraint satisfaction problem (CSP) on random hypergraphs11, but that finding has since been challenged by quantum Monte Carlo studies of larger sizes12. Benchmarking of a hardware implementation of QA by D-Wave Systems shows no improvement in the scaling of performance13,14. Whether this is attributable to the finite temperature at which the device operates or to its intrinsic noise is unclear at present15,16,17.

Statistical physics offers some intuitive guidance: Small gaps develop at the quantum phase transition point and become exponentially small when the transition is first-order18,19,20,21 or when different parts of the system become critical at different times for strong-disorder continuous phase transitions22. The most promising candidates for QA are thus problems with bona fide second-order phase transitions, where the disorder is irrelevant at the quantum critical point (QCP).

The scaling analysis described here suggests a polynomially small gap at the critical point of the archetypal spin glass: the Sherrington-Kirkpatrick (SK) model23,24,25. It has been pointed out9,26 that QA may still be doomed by the bottlenecks in the spin-glass phase. Exponentially small gaps away from the critical point have been observed in simulations27, but an adequate theoretical description of this phenomenon has proven challenging. A perturbative argument in support of this qualitative picture has been considered in ref. 26. However, the results were not borne out by a more accurate analysis that took into account the extreme value statistics of energy levels28.

The present manuscript sheds light on the mechanism of tunnelling bottlenecks in the spin-glass phase. Using exact, non-perturbative methods, this is illustrated for a simple model, but the main findings are expected to be valid for quantum annealing of more realistic spin glasses. During annealing, the system must undergo a cascade of tunnellings at specific values Γ1,Γ2,… that form an approximate geometric progression. For a finite system size, these bottlenecks are few, O(log N), and may not even appear until N is sufficiently large, which complicates the interpretation of numerical studies. Bottlenecks also become progressively easier as Γ→0, counter to the expectation that tunnelling is inhibited as the model becomes more classical. A related finding is that the time complexity of QA is exponential only in a fractional power of the problem size: a mild improvement over more pessimistic estimates26.

Results

Summary

The spin-glass phase, which is entered below some critical value of the transverse field Γc, is characterized by a large number of valleys. Often, this transition is abrupt, driven by competition between an extended state and a valley (localized state) with the lowest energy, as occurs in the random energy model19,20. The exponentially small overlap between the two states then determines the gap at the phase transition. However, even if new valleys develop in a continuous manner as Γ decreases, small changes in the transverse field may result in a chaotic reordering of associated energy levels, leading to Landau–Zener avoided crossings and attendant exponentially small gaps.

Nonetheless, attempts to make this intuition exact are fraught with potential pitfalls. For increasing Γ, two randomly chosen valleys are equally likely to come either closer together or further apart in energy. If they come closer, and if the sensitivity of energy levels to changes in the transverse field is so large that the levels ‘collide’ before either valley disappears, an avoided crossing will occur. This need not be the case for ‘collisions’ with the ground state, which are of particular concern to QA. The ground state corresponds to the valley with the lowest energy; this and other low-lying valleys obey fundamentally different extreme value statistics.

A case in point is the analysis of ref. 26, which develops perturbation theory in Γ. The classical limit (Γ=0) is used as a starting point; how that analysis might be extended to Γ>0 has also been discussed29. A type of CSP was considered there: classical energy levels are discrete non-negative integers (the number of violated constraints) but have exponential degeneracy. ‘Zeeman splitting’ for Γ>0 scales as […], which, for large problems, may be sufficient to overcome the O(1) classical gap and cause avoided crossings of levels associated with different classical energies. Yet this trend disappears if only the levels with the smallest energies (after splitting) are considered; these are the ones relevant for avoided crossings with the ground state. This about-face is not immediately apparent, coming into play only for N≳100, when the exponential degeneracy of the classical ground state sets in. It has, however, been confirmed by analytical argument and numerics28. Consequently, arguments based on perturbation theory cannot be used to establish the phenomenon.

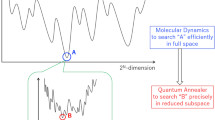

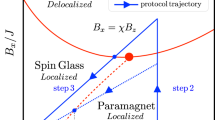

This manuscript offers a fresh perspective, illustrated by studying quantum annealing of the Hopfield model. Mean-field analysis correctly describes thermodynamics if the number of random ‘patterns’ is small. The method is further extended to extract information about exact quantum energy levels. Importantly, the classical energy landscape is made much more complex by insisting that the distribution of disorder is Gaussian. Figure 1 sketches a ‘phase diagram’ obtained for this model. For decreasing Γ the gap changes as follows: (1) it is finite (does not scale with N) in the paramagnetic phase, Γ>Γc; (2) it scales as $1/N^{1/3}$ in the narrow region of width $1/N^{2/3}$ around Γ=Γc; (3) it increases slightly, with typical values scaling as $1/N^{1/4}$ for Γ<Γc. In addition, there are avoided crossings at isolated points Γ1,Γ2,….

Figure 1: Sketch of the behaviour of the gap in a Hopfield model with a Gaussian distribution of disorder variables (units are arbitrary) in the paramagnetic phase (Γ>Γc), in the glassy phase (Γ<Γc) and in the critical region (Γ≈Γc). Scaling of the typical gap in these regions is indicated in bold using big-O notation. The area Γ<Γmin∼1/N is where the discrete nature of the energy landscape becomes manifest: the ground state becomes nearly completely localized. The glassy phase also contains ∼log N isolated bottlenecks at Γ1, Γ2, Γ3, … (indicated with red arrows), where the gaps scale as a stretched exponential. a.u., arbitrary units.

The first non-trivial example requires two Gaussian patterns. In this case the ‘energy landscape’ is effectively one-dimensional, which greatly simplifies the analysis. The most important element of this analysis is accounting for the extreme value statistics associated with the valleys (local minima) having the lowest energies. To this end, the distribution of energies of the classical landscape must be conditioned so that they are never below the energy of the global minimum. This becomes feasible when reformulated as a continuous random process, in the limit N→∞.

This particular model is not as interesting from the computer science perspective, not least because it admits an efficient classical algorithm. It is, however, simple enough to permit the complete quantitative analysis presented later in the manuscript, yet it captures the essential properties of a spin glass: its qualitative features apply directly to much more general models, including the Sherrington-Kirkpatrick model. The most important feature of the classical energy landscape is that it exhibits fractal properties, which both ensure that hard bottlenecks are present in the spin-glass phase and govern their distribution. The role of the transverse field is to approximately coarse-grain the landscape on scales determined by Γ, eliminating small barriers; thus the number of valleys decreases as Γ grows. A specific random process, corresponding to the energy landscape of the ‘infinite’-size instance, will contain every possible realization of itself at some ‘length scale’. Some realizations will contain high barriers that cannot be easily overcome; these lead to tunnelling bottlenecks.

This intuition can be used to establish the scaling of the number of tunnelling bottlenecks immediately. Since the model contains no inherent length scale in the limit N→∞, the expected number of tunnelling bottlenecks in a finite interval [Γ1;Γ2] should be a function f of the ratio Γ2/Γ1 only. The logarithm is the only (continuous) function that respects additivity, that is, f(Γ3/Γ1)=f(Γ3/Γ2)+f(Γ2/Γ1) for Γ1<Γ2<Γ3. To obtain the total number of bottlenecks, one considers the interval [Γmin;Γc]. Here Γc∼1 is the boundary of the spin-glass phase. The lower cutoff, Γmin, corresponds to the lowest energy scale of the classical model, which scales as an inverse power of system size, for example, as 1/N for the Gaussian Hopfield model. In a sense, tunnelling bottlenecks are connected to the Γ=0 ‘fixed point’ (note that the classical gap vanishes asymptotically). To summarize, the number of tunnelling bottlenecks will grow as

$$\langle n_{\mathrm{b}}\rangle \simeq \alpha \ln\frac{\Gamma_c}{\Gamma_{\min}} \sim \alpha \ln N .$$

Locations (in Γ) will depend on the specific disorder realization, but self-similarity ensures that the successive ratios $\Gamma_n/\Gamma_{n+1}$ converge to a universal distribution.

This logarithmic rise is far weaker than the power law seen in some phenomenological models of temperature chaos30 and, as argued above, is likely to be a universal feature. The prefactor α is model-dependent; its numerical value can be used to estimate the minimum problem size for which the mechanism becomes relevant, via α ln N ≳ 1, that is, $N \gtrsim e^{1/\alpha}$. A value of α≈0.15 is obtained for the problem at hand, so that additional bottlenecks become an issue for N≳1,000. Prior numerical studies similarly required large sizes before the exponentially small minimum gap was observed27, and so far there has been no evidence of two or more exponentially small gaps coexisting. The slow, logarithmic increase of the expected number of bottlenecks is the most likely culprit.
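The counting argument and the size threshold amount to a few lines of arithmetic. The sketch below assumes natural logarithms, Γc=1 and Γmin=1/N, so that the expected number of bottlenecks is α ln N; the function names are hypothetical, and the only model input is the quoted value α≈0.15.

```python
import math

def expected_bottlenecks(gamma_lo, gamma_hi, alpha):
    """Scale-invariant estimate alpha * ln(gamma_hi / gamma_lo) for the interval [gamma_lo; gamma_hi]."""
    return alpha * math.log(gamma_hi / gamma_lo)

def threshold_size(alpha):
    """Size at which alpha * ln N reaches 1, i.e. N = exp(1/alpha)."""
    return math.exp(1.0 / alpha)

alpha = 0.15
# Additivity: counts on adjacent intervals add up, as required of a function of the ratio only.
whole = expected_bottlenecks(0.01, 1.0, alpha)
parts = expected_bottlenecks(0.01, 0.1, alpha) + expected_bottlenecks(0.1, 1.0, alpha)
print(f"whole interval {whole:.4f}, sum of parts {parts:.4f}")
print(f"threshold size ~ {threshold_size(alpha):.0f}")
for n in (10**2, 10**3, 10**6):
    # Gamma_c = 1 and Gamma_min = 1/N (assumed)
    print(n, round(expected_bottlenecks(1.0 / n, 1.0, alpha), 2))
```

With α=0.15 the threshold is e^{1/0.15}≈786, consistent in order of magnitude with the N≳1,000 quoted above; even at N=10^6 the estimate is only about two bottlenecks.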

A notable feature of these results is that tunnellings become progressively ‘easier’ as Γ→0 despite the fact that the model becomes more classical. Tunnelling gaps increase as

Notice that they cease to be exponentially small for Γ≲Γmin; at that point the ground state is already localized near the correct global minimum. The power-law exponent in this stretched exponential is model-dependent, being related to the scaling of barrier heights. These scale as $N^{1/2}$ for the Gaussian Hopfield model, which, together with the O(N) scaling of the effective mass, gives rise to the $N^{3/4}$ term in the exponent (the semiclassical action scales as $\sqrt{MV} \propto \sqrt{N \cdot N^{1/2}} = N^{3/4}$).

The finding that the gaps increase for smaller Γ deserves explanation. Typically, valleys with similar energies differ by up to N/2 spin flips. This changes once the lowest energies are considered: all spin configurations with energies less than […] above the global minimum are contained in a neighbourhood of radius […], using Hamming distance as a metric. The problem is not rendered easy by the mere fact that the global minimum is so pronounced (although the theoretical analysis inspired an efficient classical algorithm for the p=2 Hopfield network, briefly described later on). It does imply, however, that the ground-state wavefunction does not jump chaotically: every subsequent tunnelling involves shorter distances, with O(ΓN) spin flips, and achieves a progressively better approximation to the true global minimum. Absent such a trend, annealing would be most difficult towards the end of the algorithm, when Γ∼1/N, and the minimum gap would exhibit less favourable exponential scaling26.

In what follows, the model and its solution are described in greater detail. First, finite-size scaling of the ‘easy’ QCP bottleneck is linked to the thermodynamics of the phase transition. The next part goes beyond thermodynamics, considering small corrections to the extensive contribution to the free energy. The entire low-energy spectrum, which depends on the disorder realization, is mapped onto that of a simple quantum-mechanical particle in a random potential. Finally, extreme value statistics is applied to investigate the properties of that random potential near its global minimum by mapping it to a Langevin process. This yields the distribution of hard bottlenecks in a universal regime (Γ≪1).

Quantum Hopfield network

Consider a model with a rank-p matrix of interactions and no longitudinal field (h_i=0; ref. 31):

$$J_{ik} = \frac{1}{N}\sum_{\mu=1}^{p} \xi_i^{(\mu)}\,\xi_k^{(\mu)}$$

(cf. rank J = N for the SK model), where the $\xi_i^{(\mu)}$ are taken to be independent and identically distributed (i.i.d.) random variables of unit variance. The thermodynamics of this quantum Hopfield model has been worked out in great detail by Nishimori and Nonomura32. In fact, that study motivated the development of QA4.

When p is small (J_ik∼1/N), it is appropriate to replace the local longitudinal fields with their mean values, $h_i = \sum_k J_{ik}\langle\sigma^z_k\rangle = \boldsymbol\xi_i\cdot\mathbf m$ with $\mathbf m = N^{-1}\sum_k \boldsymbol\xi_k\langle\sigma^z_k\rangle$. The identity $\langle\sigma^z_i\rangle = h_i/\sqrt{h_i^2+\Gamma^2}$, valid for the ground state of a single spin in longitudinal field h_i and transverse field Γ, is used to close this system of equations. For a transverse field below the critical value, Γ<Γc=1, there appears a non-trivial (m≠0) solution to the self-consistency equation for the macroscopic order parameter, a vector with p components:

$$\mathbf m = \frac{1}{N}\sum_i \boldsymbol\xi_i\,\frac{\boldsymbol\xi_i\cdot\mathbf m}{\sqrt{(\boldsymbol\xi_i\cdot\mathbf m)^2+\Gamma^2}} .$$

Here, the disorder variables are also written using vector notation: $\boldsymbol\xi_i = (\xi_i^{(1)},\dots,\xi_i^{(p)})$.
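The self-consistency equation lends itself to a simple fixed-point iteration. The sketch below is an illustration under stated assumptions (one finite sample of p=2 Gaussian patterns with N=20,000, a small random starting vector, an arbitrary tolerance, and a hypothetical function name); it should return m≈0 above Γc=1 and a finite order parameter below it.

```python
import numpy as np

def solve_mean_field(xi, gamma, iters=2000, tol=1e-12, seed=1):
    """Fixed-point iteration of m = (1/N) sum_i xi_i h_i / sqrt(h_i^2 + gamma^2), with h_i = xi_i . m."""
    n, p = xi.shape
    rng = np.random.default_rng(seed)
    m = 0.1 * rng.standard_normal(p)      # small symmetry-breaking starting point
    for _ in range(iters):
        h = xi @ m                        # mean local longitudinal fields
        m_new = xi.T @ (h / np.sqrt(h**2 + gamma**2)) / n
        if np.linalg.norm(m_new - m) < tol:
            return m_new
        m = m_new
    return m

rng = np.random.default_rng(0)
xi = rng.standard_normal((20_000, 2))     # one sample of p = 2 Gaussian patterns
for gamma in (0.5, 0.9, 1.5):
    print(gamma, np.linalg.norm(solve_mean_field(xi, gamma)))
```

Linearizing the map around m=0 and using (1/N)Σ_i ξ_i ξ_iᵀ → 1 shows why the trivial solution loses stability at Γ=1; for a finite sample the threshold is shifted slightly by O(N^{-1/2}) fluctuations.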

This model is equivalent to the Curie–Weiss (quantum) ferromagnet, which has a continuous phase transition characterized by a set of mean-field critical exponents. Two of these are particularly useful in the analysis of the annealing complexity: the exponent a of the singular component of the ground-state energy, $E_0^{\mathrm{sing}} \propto N|\gamma|^{a}$, and the dynamical exponent b for the gap, $E_1-E_0 \propto |\gamma|^{b}$, where γ=Γ−Γc is the ‘distance’ from the critical point. These exponents are defined for the infinite system, yet a fairly general heuristic analysis (see the Methods section) predicts finite-size scaling for the QCP bottleneck:

$$\Delta E \sim N^{-b/(a-b)}, \qquad \Delta\Gamma \sim N^{-1/(a-b)} .$$

Substituting the values a=2 and b=1/2 for the problem at hand, one may estimate the gap at the critical point and the width of the critical region to be $O(N^{-1/3})$ and $O(N^{-2/3})$, respectively.
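The exponent arithmetic can be checked with exact fractions. The sketch assumes the finite-size forms ΔE∼N^{-b/(a−b)} and ΔΓ∼N^{-1/(a−b)}, obtained by matching the extensive singular energy N|γ|^a to the gap |γ|^b, which reproduce the quoted O(N^{-1/3}) and O(N^{-2/3}). It also assumes that the adiabatic condition takes the form |dΓ/dt|≪ΔE ΔΓ with a constant sweep rate dΓ/dt∼1/T_ann. The function name is hypothetical.

```python
from fractions import Fraction

def qcp_exponents(a, b):
    """Exponents of N for the critical gap, the critical width and the annealing-time bound."""
    a, b = Fraction(a), Fraction(b)
    # Finite-size crossover: N * |gamma|**a ~ |gamma|**b  =>  |gamma| ~ N**(-1/(a - b))
    width = -1 / (a - b)
    gap = b * width  # the gap |gamma|**b evaluated at the crossover
    # Constant sweep rate 1/T_ann << gap * width  =>  T_ann >> N**(-(gap + width))
    t_ann = -(gap + width)
    return gap, width, t_ann

gap, width, t_ann = qcp_exponents(2, Fraction(1, 2))
print(f"gap ~ N^({gap}), width ~ N^({width}), T_ann >> N^({t_ann})")
```

For a=2 and b=1/2 this gives the exponents −1/3, −2/3 and 1, so the critical point alone requires T_ann≫N.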

Worse-than-any-polynomial complexity of quantum annealing might be expected for a first-order phase transition, which exhibits no critical scaling (but see ref. 33). Another possibility is for the dynamical exponent to diverge at an infinite-randomness QCP: the finite-size gap scales as $\exp(-c\sqrt{N})$ in a random Ising chain22. For the Hopfield model, however, this scaling is polynomial, as the disorder is irrelevant at the critical point. More intriguing is the fact that these pessimistic scenarios are not found in the SK spin glass either: the model is characterized by the same set of critical exponents, albeit with logarithmic corrections23,24,25. These corrections to scaling increase the gap and decrease the width of the critical region, respectively, by a factor of $\log^{2/9} N$; the two factors cancel in the product ΔE ΔΓ. Thus, as long as T_ann≫N, non-adiabatic transitions at the critical point should be suppressed. This presents a conundrum, since the SK model is known to be NP-hard; finding a polynomial-time (even in the typical case) quantum algorithm for it would be a surprising development. The heuristic analysis is clearly insufficient, and digging deeper requires a more ‘microscopic’ analysis. In the following, the problem is mapped to ordinary quantum mechanics to uncover its low-energy spectrum, which explicitly depends on the particular realization of disorder, {ξ_i}.

Exact low-energy spectrum

Mean-field theory can be derived more systematically via the Hubbard–Stratonovich transformation. A general overview is presented below; additional details can be found in Methods and Supplementary Note 1. The finite-temperature partition function $Z(\beta)=\mathrm{Tr}\,e^{-\beta H}$ can be written as a path integral over m(t), which now acquires a dependence on the imaginary time 0⩽t⩽β, with periodic boundary conditions m(β)=m(0). The value of the integral is dominated by stationary paths corresponding to the minimum of an effective potential, $V_{\mathrm{eff}}(\mathbf m) = \frac{N\mathbf m^2}{2} - \sum_i \sqrt{(\boldsymbol\xi_i\cdot\mathbf m)^2+\Gamma^2}$ at zero temperature, whose stationarity condition reproduces the self-consistency equation for m. While the discussion above has been deliberately equivocal on the distribution of disorder variables, it is now instructive to contrast the bimodal ($\xi_i^{(\mu)}=\pm1$) and Gaussian choices. The shapes of the effective potential for both scenarios are depicted in Fig. 2.

Figure 2: The plots show the disorder-averaged effective potential below the critical point (Γ=0.5<Γc) for (a) the bimodal distribution and (b) the Gaussian distribution of disorder variables. Minima of the potential are highlighted in magenta: the 2p-fold degenerate global minima organized in pockets corresponding to encoded patterns in a, and a continuum of degenerate minima (connected by arbitrary rotations) in b.
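The contrast between the two disorder distributions can be checked numerically. The sketch assumes the per-spin, disorder-averaged effective potential Φ(m) = m²/2 − ⟨√((ξ·m)²+Γ²)⟩, the zero-temperature form whose stationarity condition reproduces the self-consistency equation for m, with Γ=0.5 as in Fig. 2. It compares the radial minimum along a pattern direction (angle 0) with that along the diagonal (angle π/4). The bimodal average is taken exactly over the four vectors (±1,±1); the Gaussian average uses a product Gauss–Hermite rule from `numpy.polynomial.hermite_e.hermegauss`. Helper names and grid sizes are hypothetical choices.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermegauss

GAMMA = 0.5  # transverse field used in Fig. 2

def phi_bimodal(m):
    """Per-spin potential averaged exactly over xi in {(+-1, +-1)} with equal weights."""
    xs = np.array([[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]])
    return 0.5 * (m @ m) - np.mean(np.sqrt((xs @ m) ** 2 + GAMMA**2))

_x, _w = hermegauss(80)        # nodes and weights for the weight exp(-x^2/2)
_w = _w / _w.sum()             # normalize to a standard-normal expectation
_X1, _X2 = np.meshgrid(_x, _x, indexing="ij")
_W = np.outer(_w, _w)

def phi_gaussian(m):
    """Per-spin potential averaged over a standard 2-D Gaussian xi (product quadrature)."""
    proj = _X1 * m[0] + _X2 * m[1]
    return 0.5 * (m @ m) - np.sum(_W * np.sqrt(proj**2 + GAMMA**2))

def radial_minimum(phi, angle, radii=np.linspace(0.0, 1.5, 1501)):
    """Minimize phi along the ray at the given angle; returns (radius, value)."""
    u = np.array([np.cos(angle), np.sin(angle)])
    values = np.array([phi(r * u) for r in radii])
    i = int(np.argmin(values))
    return radii[i], values[i]

for name, phi in (("bimodal", phi_bimodal), ("gaussian", phi_gaussian)):
    _, v_axis = radial_minimum(phi, 0.0)
    _, v_diag = radial_minimum(phi, np.pi / 4)
    print(f"{name}: minimum along pattern {v_axis:.4f}, along diagonal {v_diag:.4f}")
```

For the bimodal case the minima are −0.625 along a pattern and −0.5625 along the diagonal, so the pattern directions are preferred; the Gaussian average is the same in both directions up to quadrature error, reflecting its rotational symmetry.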

The conventional bimodal choice defines the model of associative memory: in the limit Γ=0 the ‘patterns’ can be perfectly recalled ($s_i=\pm\xi_i^{(\mu)}$) when p is small. For the Gaussian choice, the global minimum corresponds to a mixture34 of patterns, $s_i=\operatorname{sgn}(\boldsymbol\xi_i\cdot\mathbf m)$ with m not aligned with any single pattern, rendering memory useless. In the bimodal case, such ‘spurious’ states only become stable once the number of patterns scales with the problem size35: p>0.05N. The BC_p (bimodal) or O(p) (Gaussian) symmetry of the effective potential is only approximate, holding to leading order in N. The degeneracy of the ground state is 2 (due to global spin-inversion symmetry) for almost all disorder realizations when p⩾3 or p⩾2 in the bimodal and Gaussian scenarios, respectively (note that the p=2 bimodal case possesses an additional symmetry, which makes the ground state 4-fold degenerate). At the end of QA the system is in a symmetric superposition of the degenerate global minima. It evolves entirely in the symmetric subspace, since the time-dependent Hamiltonian commutes with the global spin-flip operator $\prod_i\sigma^x_i$. Consequently, small gaps between symmetric and antisymmetric wavefunctions are irrelevant to QA and can be ignored.

Disorder fluctuations ‘nudge’ QA towards the ‘correct’ pattern as it passes the critical point in the bimodal Hopfield model. No further bottlenecks are encountered; gaps for Γ<Γc as well as the ‘classical’ (Γ=0) gap are O(1). By contrast, the classical gap scales as O(1/N) in the Gaussian Hopfield model, which signals the ‘danger’ posed by the Γ=0 ‘fixed point’. To find the low-energy spectrum when Γ<Γc, note that the dominant contribution to the path integral comes from paths along which the magnitude of the ‘magnetization’ vector remains approximately constant, close to its saddle-point value, while its angle is a slow function of time: $\mathbf m(t) = |\mathbf m|\,(\cos\theta(t), \sin\theta(t))$ for p=2. Integrating out the amplitude degrees of freedom, the partition function is rewritten as

$$Z(\beta) \propto \int_{\theta(\beta)=\theta(0)} \mathcal D\theta\, \exp\left\{-\int_0^\beta \left[\frac{M}{2}\dot\theta^2 + V_\Gamma(\theta)\right]dt\right\},$$

which describes a quantum-mechanical particle of mass M=O(N) moving on a ring, subjected to a random potential

where […] and the last term, written in shorthand, adds a constant offset so that $\langle V_\Gamma(\theta)\rangle_\xi=0$. Notice that $V_\Gamma(\theta)=O(\sqrt N)$ by the central limit theorem, so that it represents a higher-order correction to the extensive part of the free energy.

Since the partition function Z(β) encodes information about the spectrum, low-energy (Goldstone) excitations of the many-body problem are in one-to-one correspondence with the energy levels of a quantum-mechanical particle, up to a constant shift. The next step is to find the properties of this potential near a global minimum. These are relevant in a regime away from the critical point, Γ≪Γc, where the universal behaviour characterized by the appearance of ‘hard’ bottlenecks sets in.

Evolution of the random potential

Scaling of the gap for Γ<Γc can be obtained via semiclassical analysis. Small level splitting due to tunnelling between wells at the two degenerate global minima (this degeneracy is a consequence of the global Z2 symmetry: V_Γ(θ+π)=V_Γ(θ)) is not relevant to QA. Higher degeneracies are statistically unlikely; quantization rules predict $O(N^{-1/4})$ gaps between energy levels within the symmetric subspace, since a well of curvature $V''=O(\sqrt N)$ and a mass M=O(N) give $\omega=\sqrt{V''/M}=O(N^{-1/4})$. But this refers to the typical gap, obtained at fixed Γ for almost all realizations of disorder. As quantum annealing sweeps the transverse field for a fixed realization of disorder, V_Γ(θ) might undergo a global bifurcation. This would result in a small tunnelling gap at the specific value of Γ where the competing minima are in resonance.
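The harmonic-quantization estimate of the typical gap can be checked on a toy problem. The sketch below uses a deliberately simple, hypothetical smooth potential V(θ)=√N(1−cos 2θ) with mass M=N, not the random potential of the model, and diagonalizes a finite-difference Hamiltonian on one period with the wavefunction set to zero at the potential maxima. For a deep well this approximates the symmetric subspace. Grid size and function names are assumptions.

```python
import numpy as np

def lowest_levels(N, K=1500, n_levels=2):
    """Lowest eigenvalues of -(1/2M) d^2/dtheta^2 + V(theta) for toy V = sqrt(N)(1 - cos 2 theta), M = N."""
    M = float(N)
    # Interior grid on (-pi/2, pi/2); the wavefunction vanishes at the potential maxima.
    theta = np.linspace(-np.pi / 2, np.pi / 2, K + 2)[1:-1]
    h = theta[1] - theta[0]
    V = np.sqrt(N) * (1.0 - np.cos(2.0 * theta))
    t = 1.0 / (2.0 * M * h**2)  # finite-difference hopping amplitude
    H = np.diag(V + 2.0 * t) - t * (np.eye(K, k=1) + np.eye(K, k=-1))
    return np.linalg.eigvalsh(H)[:n_levels]

def gap(N):
    e = lowest_levels(N)
    return e[1] - e[0]

sizes = (10**2, 10**4)
gaps = [gap(n) for n in sizes]
slope = np.log(gaps[1] / gaps[0]) / np.log(sizes[1] / sizes[0])
print(f"gaps {gaps}, fitted exponent {slope:.3f} (harmonic estimate -0.25)")
```

Here V''(0)=4√N, so the harmonic estimate is ω=2N^{-1/4}; the fitted exponent departs from −1/4 only through small anharmonic and discretization corrections.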

Such a scenario is impossible near the QCP. Coefficients in the Fourier expansion of the random potential, $\sum_k (a_k\cos 2k\theta + b_k\sin 2k\theta)$, decrease as $m^{2k}/\Gamma^{2k-1}$ (with $m=|\mathbf m|$), so that the first harmonic dominates for Γ≈Γc. Semiclassical analysis confirms an $O(N^{-1/3})$ gap at the critical point (where the curvature of the effective potential vanishes, leaving only the quartic part). As Γ decreases, the random potential becomes more rugged (see Fig. 3a), being smooth only on scales Δθ∼Γ, which makes global bifurcations more likely. Furthermore, it exhibits properties that allow one to make important predictions without detailed calculations. Rescaling the potential in the vicinity of either global minimum gives […], which describes the same model but for the rescaled […] and a different realization of disorder. This scale invariance is responsible for the logarithmic scaling of the number of tunnelling bottlenecks, as explained earlier in the text. However, it still remains to demonstrate that the density of bottlenecks is non-zero, which entails an examination of the properties of the random potential in the limit Γ=0.

Figure 3: (a) Potential V_Γ(θ) for a specific realization of disorder as a function of Γ. A perspective-projection three-dimensional plot that zooms in on a region near the global minimum of V_0(θ) is shown below. (b) The top part plots a specific realization of a stochastic process ν(τ) for the Langevin potential […] shown in the inset. The bottom part sketches χ(τ) and θ(τ) (up to a constant factor) obtained by integrating equation (12). The inset is a parametric plot χ(θ) on a linear scale. Fluctuations with ν(τ)<0 (between the dotted vertical lines) are responsible for the appearance of local minima. a.u., arbitrary units.

The classical optimization problem corresponds to maximizing the magnitude of $\mathbf m = N^{-1}\sum_i s_i\boldsymbol\xi_i$. A necessary condition for a local minimum is that the two sets of vectors, {ξ_i|s_i=+1} and {ξ_i|s_i=−1}, can be separated by a line. As the angle θ of this line changes, fluctuations of the amplitude give rise to a random potential V_0(θ) (see Fig. 4). This suggests an efficient algorithm for finding a global minimum: sort all vectors by angle and exhaustively check all possible separating angles; apart from the extra log N factor due to sorting, the sweep takes linear time. Of course, QA is too generic to exploit the specific structure of the problem; moreover, ad hoc efficient algorithms are unlikely to exist for more general spin-glass problems.
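The sweep over separating angles can be sketched as follows. This is an illustrative implementation, not code from the manuscript, and the function names are hypothetical. It relies on the fact that, at a maximum of |m|, every spin is aligned with its local field, s_i=sgn(ξ_i·m), so the +1 spins are exactly the vectors lying in a half-plane. After sorting the 2-D vectors by polar angle, each half-plane set is a contiguous arc of angular width π, and a two-pointer sweep over the doubled sorted list visits every such arc (up to a global spin flip) in linear time. A brute-force search over all 2^N assignments checks small instances.

```python
import numpy as np

def best_split(xi):
    """Maximize |m|, m = (1/N) sum_i s_i xi_i, over s in {+1,-1}^N; xi has shape (N, 2)."""
    n = len(xi)
    ang = np.arctan2(xi[:, 1], xi[:, 0]) % (2.0 * np.pi)
    order = np.argsort(ang)                      # the O(N log N) sorting step
    a, v = ang[order], xi[order]
    a2 = np.concatenate([a, a + 2.0 * np.pi])    # doubled list so arcs can wrap past 2*pi
    csum = np.vstack([np.zeros(2), np.cumsum(np.vstack([v, v]), axis=0)])
    total = v.sum(axis=0)
    best_norm, best_j, best_k = -1.0, 0, 0
    k = 0
    for j in range(n):                           # arc starts at the j-th sorted vector
        k = max(k, j)
        while k < j + n and a2[k] < a[j] + np.pi:
            k += 1                               # k only moves forward: linear-time sweep
        m = (2.0 * (csum[k] - csum[j]) - total) / n  # +1 inside the arc, -1 outside
        norm = np.linalg.norm(m)
        if norm > best_norm:
            best_norm, best_j, best_k = norm, j, k
    s_sorted = -np.ones(n)
    s_sorted[np.arange(best_j, best_k) % n] = 1.0
    s = np.empty(n)
    s[order] = s_sorted                          # undo the sort
    return s, best_norm

def brute_force(xi):
    """Exhaustive maximum of |m| over all 2^N assignments (small N only)."""
    n = len(xi)
    idx = np.arange(2 ** n)
    S = 1 - 2 * ((idx[:, None] >> np.arange(n)) & 1)
    return np.max(np.linalg.norm(S @ xi, axis=1)) / n

rng = np.random.default_rng(1)
xi = rng.standard_normal((14, 2))
s, val = best_split(xi)
print(val, brute_force(xi), np.linalg.norm(xi.T @ s) / len(xi))
```

Since the Hopfield energy equals −(N/2)|m|² up to a constant, the returned s is a classical ground state; only arcs starting at a data point need to be examined, because every other half-plane set is the complement of one of them and gives the same |m|.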

Illustration for a random instance with N=20. (a) A vector m(θ) (black arrow) is defined by drawing a separating line at angle θ (in brown) and assigning the values sk=+1 (turquoise arrows) and sk=−1 (magenta arrows) to the spins whose vectors ξk lie on either side of the separating line. (b) The cartoon shows how m(θ) changes as the separating line rotates counterclockwise for increasing θ: the vector m(θ) is incremented or decremented by (2/N)ξk (turquoise arrows) whenever sk changes sign. (c) These increments form a closed path, approximated by a circle; fluctuations around the ideal circle (dashed black line) define a random potential (in blue).

On short intervals, the random process is described as an undamped Langevin process36,37 in the continuous (N→∞) limit (hence the exponent of 3/2 in equation (11), corresponding to its fractal dimension). To capture the extreme-value statistics correctly, the process must be conditioned on V0(θ)>V0(0) away from the global minimum (placed at θ=0). As described in Methods and Supplementary Notes 2 and 3, such a conditioned process consists of two uncorrelated branches, χ+ for θ>0 and χ− for θ<0. Integrating the equations

$$\frac{d\mu}{d\tau}=\nu(\tau),\qquad \frac{d|\theta|}{d\tau}=e^{2\mu/3},\qquad \mu=\ln\chi_\pm \tag{12}$$

defines χ+(θ) and χ−(θ) parametrically, in terms of random processes ν(τ) that correspond to a Brownian motion in the non-linear potential depicted in Fig. 3b. This potential is biased towards positive ‘velocities’ ν, so that, by equation (12), χ± tends to grow with |θ|. It will, however, experience arbitrarily large relative drops due to subpaths with ν<0 (albeit with decreasing probability).
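The exponent 3/2 can be checked numerically on short intervals, where the returning force is negligible. This minimal sketch assumes unit-strength white noise; the normalization is an assumption and not taken from the paper. It treats the potential as integrated Brownian motion, d²χ/dθ² = ζ(θ), started from χ = dχ/dθ = 0, and fits how the spread of χ grows with the interval δ. The function name is hypothetical.

```python
import numpy as np


def integrated_bm_spread(deltas, n_paths=20000, dt=1e-3, seed=0):
    """Standard deviation of chi(delta) for d^2 chi/d theta^2 = zeta (white noise).

    Every path starts at chi = 0 with zero 'velocity' v = d chi/d theta.
    """
    rng = np.random.default_rng(seed)
    chi = np.zeros(n_paths)
    v = np.zeros(n_paths)
    targets = {int(round(d / dt)): d for d in deltas}
    spread = {}
    for step in range(1, max(targets) + 1):
        v += np.sqrt(dt) * rng.standard_normal(n_paths)  # Brownian 'velocity'
        chi += v * dt                                    # integrate once more
        if step in targets:
            spread[targets[step]] = chi.std()
    return spread


deltas = [0.25, 0.5, 1.0]
spread = integrated_bm_spread(deltas)
slope = np.polyfit(np.log(deltas), [np.log(spread[d]) for d in deltas], 1)[0]
print(f"fitted exponent: {slope:.3f}")  # should come out close to 3/2
```

For this process the variance grows as δ³/3, so the fitted log–log slope of the spread reflects the fractal dimension [χ]=[θ]^{3/2}.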

For small but finite Γ, the ‘separating line’ becomes blurred. The random potential adds a ‘quantum correction’ (see Methods and the Supplementary Note 2),  . For two minima to come into resonance, they cannot be more than Δ∼Γ apart (that is, O (NΓ) spin flips). The tunnelling exponent is given by under-the-barrier action

. For two minima to come into resonance, they cannot be more than Δ∼Γ apart (that is, O (NΓ) spin flips). The tunnelling exponent is given by under-the-barrier action  , where the effective mass M∼N/Γ2 and

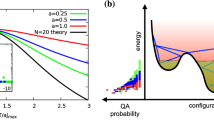

, where the effective mass M∼N/Γ2 and  , leading to equation (4). Numerical results for the universal distribution of tunnelling jumps and exponents are exhibited in Fig. 5.

, leading to equation (4). Numerical results for the universal distribution of tunnelling jumps and exponents are exhibited in Fig. 5.
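A rough order-of-magnitude check of equation (4) follows. It treats the action integral as (barrier width) × √(2M × barrier height), with width Δ∼Γ and M∼N/Γ² as stated above, and solves for the barrier height ΔV as an unknown. Numerical prefactors are dropped.

```latex
S \;\sim\; \Delta\,\sqrt{2M\,\Delta V}
  \;\sim\; \Gamma\,\sqrt{\frac{N}{\Gamma^{2}}\,\Delta V}
  \;=\; \sqrt{N\,\Delta V},
\qquad
S \propto (\Gamma N)^{3/4}
\;\Longrightarrow\;
\Delta V \propto N^{1/2}\,\Gamma^{3/2}.
```

The required barrier height is consistent with the 3/2 scaling of the potential over an interval of width Δ∼Γ, multiplied by an amplitude that presumably grows as N^{1/2} for a sum of N random contributions.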

(a–c) The L-shaped figure formed by the three panels shows the distribution of the tunnelling jumps and the tunnelling exponents. Here Δ/Γ is proportional to the number of spin flips in the tunnelling event, normalized by ΓN. The distribution of the tunnelling gaps is quantified using the prefactor in the exponent of equation (4), namely c=|ln ΔEtunn|/(ΓN)^{3/4}. Panel a is a scatterplot of c and Δ/Γ; the histograms of the univariate distributions of c and Δ/Γ (‘projections’ of the scatterplot) are plotted in panels b and c, respectively. (d) A histogram of the distribution of Γn/Γn+1 for successive tunnelling events.

Discussion

Poor scalability of classical annealing of spin-glass models has been linked to the phenomenon of temperature chaos38. Interestingly, its existence in mean-field glasses has been debated39,40,41, although it is uncontroversial in finite-dimensional models42,43. Similarly, failures of quantum annealing might be attributed to transverse-field chaos. The phenomenon described in this manuscript represents a much stronger finding. The mere fact that ground states at Γ and Γ+ΔΓ have vanishingly small overlap as N→∞ is compatible with a continuously evolving ground state and poses no ‘threat’ to QA. By contrast, ‘hard’ bottlenecks correspond to isolated discontinuities that persist as ΔΓ→0.

To dwell upon the generality of these results, first note that the scaling of the tunnelling exponent will depend on the universality class of the model. The SK model, for instance, exhibits a different scaling of barrier heights, believed to be ∝N^{1/3} (see, for example, ref. 44). In the model studied here, the decrease in the number of spins involved in tunnelling events offsets the divergence of the effective mass in the classical limit Γ→0. As the height of the barriers also decreases, the tunnelling gaps widen towards the end of the algorithm. One might expect qualitatively similar behaviour in realistic spin glasses.

The logarithmic scaling of the number of bottlenecks is due to self-similar properties of the free-energy landscape in the interval [Γmin, Γc]. The lower cutoff should correspond to the smallest energy scale in the classical limit, which for the SK model is also a negative power of N, namely Γmin∼N^{−1/2}. This is related to the linear vanishing of the density of the distribution of effective fields as h→0 at zero temperature45 (since P(h)∝h at small h). The picture is less clear for CSPs, where the energies are constrained to be non-negative integers; that is, the classical gap is O(1). These energy levels have exponential degeneracy, which is lifted by the transverse field. A value sufficient to make the spectrum effectively quasi-continuous might serve as an appropriate lower cutoff Γmin in problems of this type. The lack of ‘hard’ bottlenecks in the Hopfield model with a bimodal distribution of disorder and p=O(1) could be attributed to the finite number of valleys, which is not representative of a true spin glass.
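A short Monte Carlo sketch illustrates how a linearly vanishing field distribution leads to a power-law cutoff. Purely for illustration, it assumes fields with density P(h)=2h on [0,1], which vanishes linearly at h=0. The expected number of spins with fields below x is then Nx², which becomes O(1) at x∼N^{−1/2}. The toy distribution and the function name are hypothetical. Only the small-h behaviour matters for the scaling of the minimum.

```python
import math
import random


def mean_min_field(n_spins, trials=20_000, seed=0):
    """Average smallest field among n_spins i.i.d. fields with density P(h) = 2h on [0, 1].

    P(h < x) = x**2, so a field can be drawn as sqrt(U) with U uniform, and the
    smallest field is sqrt(min U).  The minimum of n uniforms is sampled exactly
    by inverting its CDF 1 - (1 - u)**n; expm1/log1p keep precision for large n.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        u_min = -math.expm1(math.log1p(-rng.random()) / n_spins)
        total += math.sqrt(u_min)
    return total / trials


for n in (100, 10_000, 1_000_000):
    h_min = mean_min_field(n)
    # If h_min ~ N^(-1/2), the last column stays roughly constant
    print(n, h_min, h_min * math.sqrt(n))
```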

An interesting observation is that, since ‘hard’ bottlenecks correspond to Landau–Zener crossings, annealing rates need not be exponentially small. The probability that QA fails to follow the ground state every single time in n repeated experiments is

$$\left[e^{-\pi\Delta E^2/(2r)}\right]^n=e^{-\pi\Delta E^2/(2r/n)},$$

where ΔE is the gap at the crossing and r is the rate at which the two levels are swept past each other (ħ=1). This exactly matches the probability of failure for an annealing rate that is n times slower. Using shorter annealing cycles with many repetitions can minimize the effects of decoherence. It suffices that non-adiabatic transitions are suppressed at the critical point only.
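The repetition argument can be checked on the textbook two-level Landau–Zener model. This sketch assumes a single isolated avoided crossing, H(t)=(rt/2)σ^z+(ΔE/2)σ^x with ħ=1. Here r and ΔE are illustrative parameters, not values from this paper. The code integrates the Schrödinger equation with a fourth-order Runge–Kutta scheme and compares the result with the closed-form Landau–Zener probability. It then checks the identity between n repetitions and a single sweep that is n times slower. The function names are hypothetical.

```python
import math


def lz_failure_probability(gap, rate):
    """Landau-Zener probability of a diabatic passage (hbar = 1).

    gap  : minimum splitting between the two adiabatic levels
    rate : sweep rate |d(E1 - E2)/dt| of the diabatic energy difference
    """
    return math.exp(-math.pi * gap**2 / (2.0 * rate))


def simulate_passage(gap, rate, t_max=60.0, dt=0.005):
    """Integrate i d(psi)/dt = H(t) psi, H = (rate*t/2) sigma_z + (gap/2) sigma_x, with RK4.

    Starts in the diabatic state |0> (the ground state at t = -t_max) and returns
    the probability of still being in |0> at t = +t_max, where |0> has become the
    excited state, i.e. the probability of failing to follow the ground state.
    """
    def rhs(t, a, b):
        # -i H(t) applied to the amplitudes (a, b) of |0> and |1>
        return (-1j * (0.5 * rate * t * a + 0.5 * gap * b),
                -1j * (0.5 * gap * a - 0.5 * rate * t * b))

    a, b = 1.0 + 0.0j, 0.0j
    t = -t_max
    for _ in range(int(round(2 * t_max / dt))):
        k1 = rhs(t, a, b)
        k2 = rhs(t + dt / 2, a + dt / 2 * k1[0], b + dt / 2 * k1[1])
        k3 = rhs(t + dt / 2, a + dt / 2 * k2[0], b + dt / 2 * k2[1])
        k4 = rhs(t + dt, a + dt * k3[0], b + dt * k3[1])
        a += dt / 6 * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0])
        b += dt / 6 * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1])
        t += dt
    return abs(a) ** 2


gap, rate, n = 0.5, 1.0, 3
print(simulate_passage(gap, rate), lz_failure_probability(gap, rate))
# n fast repetitions all fail exactly as often as one run that is n times slower
print(lz_failure_probability(gap, rate) ** n, lz_failure_probability(gap, rate / n))
```

Because of the finite integration window, the simulated probability carries a small oscillating correction, so it agrees with the asymptotic formula only approximately. The repetition identity itself is exact, because the failure probability is an exponential in 1/r.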

Even with polynomial annealing rates, coherent evolution would require much better isolation from the environment than what is currently feasible. The only commercial implementation of QA (D-Wave) must contend with a fairly strong coupling to a thermal bath. On the positive side, it accommodates faster annealing cycles, acting as a ‘safety valve’ to dissipate any heat generated during the non-adiabatic process. At the same time, it all but ensures that the system is always in thermal equilibrium with the environment.

The theory presented here breaks down when Γ<T, so the quantum bottlenecks described here may not be a limiting factor if ln(Γc/T)≲1/α. The main source of errors will then be the exponentially many thermally occupied excited states. If the annealing profile were adjusted so that the energy spacing increased beyond T towards the end, this would effectively implement classical annealing. An intricate relationship between temperature, problem size, and the properties of the spin-glass model might determine which mechanism (quantum or classical annealing) will be dominant.

Yet another trade-off in the design of the D-Wave chip is a quasi-two-dimensional (2D) topology of interactions Jik due to fabrication constraints, which incurs a significant performance penalty when mean-field models are ‘embedded’ into a ‘Chimera’ graph46. So-called ‘native’ problems, corresponding to uniformly random instances on this quasi-2D lattice, have been argued to be poor candidates for QA due to the lack of a finite-temperature classical phase transition47; at the same time, a quantum phase transition at Γc>0 is expected in 2D glasses48.

Whereas a first-order phase transition immediately implies exponential complexity, even for small sizes, problems having a continuous phase transition may remain tractable up to a threshold, Nc, beyond which tunnelling bottlenecks become dominant (α ln Nc∼1). This ‘tractability threshold’ serves as a silver lining for this otherwise negative result. Moreover, the picture of ‘hard’ bottlenecks may be equally applicable to classical annealing. A recent study demonstrated that classically ‘hard’ instances that exhibit temperature chaos also take much longer to solve on a D-Wave machine49. While in some crafted examples classical annealing is at a unique disadvantage due to a first-order phase transition50, for most interesting problems both classical and quantum transitions are second order. In such a scenario, the density of bottlenecks becomes a tie-breaker for evaluating relative performance. Whether quantum annealing can be advantageous in terms of this metric, and which models will benefit, are practically important questions for follow-up work.
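Solving the threshold condition for Nc makes its sensitivity explicit. The value α=0.1 below is purely hypothetical and is not a result of this paper:

```latex
\alpha \ln N_c \sim 1
\;\Longrightarrow\;
N_c \sim e^{1/\alpha},
\qquad\text{e.g.}\quad
\alpha = 0.1 \;\Rightarrow\; N_c \sim e^{10} \approx 2.2\times 10^{4}.
```

Because Nc depends exponentially on 1/α, halving the density of bottlenecks (α=0.05) would square the threshold, to Nc∼e^{20}≈4.9×10^8.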

There remains the question of whether the failure mechanism described here can somehow be circumvented. Such a feat is feasible, for example, for a disordered Ising chain, where an exponentially small gap develops at the critical point as a manifestation of Griffiths singularities22. A modification of QA that controls the transverse field for each spin individually can suppress these singularities and restore a polynomial gap.

Based on comparison with another exactly solvable model, it seems that frustration, in addition to disorder, is essential for the appearance of small gaps in the spin-glass phase proper. The seemingly random profile of the energy landscape for finite Γ heralds difficulties in avoiding these bottlenecks in generic spin glasses. Although any prospect of exponential speedup should be met with skepticism, heuristics inspired by spin-glass theory revolutionized branch-and-bound algorithms for CSPs51. One can remain hopeful that theoretical advances can similarly aid quantum optimization.

Methods

Scaling analysis

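Finite-size scaling of the gap at the quantum critical point (QCP) is best understood using the example of a finite-dimensional system. In the thermodynamic limit, both the correlation length and the characteristic time diverge near the phase transition as

$$\xi_c\sim|\Gamma-\Gamma_c|^{-\nu},\qquad \tau\sim\xi_c^{\,z}\sim|\Gamma-\Gamma_c|^{-z\nu}.$$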

In a finite system, this divergence is smoothed out as soon as the correlation length becomes comparable to the lattice size (ξc∼N^{1/d}). The minimum gap (the reciprocal of τ) and the width of the critical region should then scale as N^{−z/d} and N^{−1/(dν)}, respectively. In this paper, the product zν has been labelled as exponent b. The singular behaviour of the normalized ground-state energy (free energy) is related to the specific heat exponent (a=2−α). Dimensionality d can be eliminated with the aid of the hyperscaling relation 2−α=(d+z)ν to yield equation (7) of the main text. Independent estimates of the specific heat exponent can be obtained from the exponents for the order parameter and the susceptibility (2−α=2β+γ).
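The elimination of d can be carried out explicitly, with a=2−α and b=zν as defined above. This is a sketch of the algebra; compare with equation (7) of the main text:

```latex
\begin{aligned}
a &= 2-\alpha = (d+z)\nu = d\nu + b
  \quad\Longrightarrow\quad d\nu = a-b,\\
\Delta E_{\min} &\sim N^{-z/d} = N^{-z\nu/(d\nu)} = N^{-b/(a-b)},\\
\Delta\Gamma &\sim N^{-1/(d\nu)} = N^{-1/(a-b)}.
\end{aligned}
```

As a sanity check, take the clean one-dimensional transverse-field Ising chain (d=z=ν=1, hence a=2 and b=1). The formula then gives ΔE_min∼N^{−1}, the familiar inverse-size gap at criticality.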

Mapping to quantum mechanics

The finite-temperature partition function can be written as a sum over a set of paths [si(t)] with 0⩽t⩽β, where si(t) alternates between the values ±1. A Hubbard–Stratonovich transformation can be used to rewrite it as a path integral, equation (15).

The ‘kinetic’ term in the first equation penalises kinks, representing Γ-dependent ferromagnetic couplings between Trotter slices. As the interaction term is decoupled, the problem reduces to evaluating the single-site partition function Zi for a spin subjected to a magnetic field with transverse component Γ and ‘time-dependent’ longitudinal component hi(t)=ξi·m(t). To leading order in N, the saddle point of the path integral (15) is a solution of the mean-field equations. For a Gaussian disorder distribution this saddle point becomes a degenerate manifold; to determine the higher-order contribution, all paths with |m(t)|=mΓ are considered. Evaluating Zi is best performed in the adiabatic basis that diagonalizes the two-level Hamiltonian $\hat H_i(t)=-\Gamma\hat\sigma^x_i-h_i(t)\,\hat\sigma^z_i$.

Here the rotated Hamiltonian is diagonal with eigenvalues $\pm\sqrt{\Gamma^2+h_i(t)^2}$. Fluctuations of this diagonal part around its mean give rise to the random potential VΓ(θ). Non-adiabatic terms due to rotation of the basis are treated using second-order perturbation theory, giving rise to a kinetic term ∝(dθ/dt)². Note the simple form of the perturbative term in equation (16), owing to the fact that the basis rotations at different times commute for all t: the Hamiltonian always lies in the x–z plane, so every rotation is about the same axis. The details of this calculation are given in Supplementary Note 1.
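A sketch of the diagonal part for a single random instance may make the construction concrete. For illustration only, it assumes that m(t)=mΓ(cos θ, sin θ) with mΓ held fixed. It also assumes that each site contributes the lower eigenvalue −√(Γ²+hi²) with hi=ξi·m. The precise normalization of VΓ(θ) in equation (10) is not reproduced, and the function name is hypothetical. At Γ=0 the sum reduces to −mΓ Σi|ξi·u(θ)|, the separating-line picture of Fig. 4, while a finite Γ rounds off the kinks.

```python
import numpy as np


def diagonal_potential(xi, gamma, m_gamma, thetas):
    """Sum of single-site ground-state energies -sqrt(gamma^2 + h_i^2), centred on its mean.

    xi      : (N, 2) array of pattern vectors
    gamma   : transverse field
    m_gamma : magnitude of m, held fixed (an assumption of this sketch)
    thetas  : 1D array of angles giving the direction of m
    """
    u = np.stack([np.cos(thetas), np.sin(thetas)])     # (2, T) unit vectors
    h = m_gamma * (xi @ u)                             # (N, T) fields h_i = xi_i . m
    energy = -np.sqrt(gamma**2 + h**2).sum(axis=0)     # lower eigenvalue, summed over sites
    return energy - energy.mean()


rng = np.random.default_rng(0)
xi = rng.standard_normal((200, 2))
thetas = np.linspace(0.0, 2.0 * np.pi, 2000, endpoint=False)
v_classical = diagonal_potential(xi, 0.0, 1.0, thetas)  # kinks where some xi_i crosses the line
v_quantum = diagonal_potential(xi, 0.2, 1.0, thetas)    # kinks rounded off on a scale ~ gamma/|h'|

# Largest discrete second difference: a crude measure of how sharp the kinks are
print(np.abs(np.diff(v_classical, 2)).max(), np.abs(np.diff(v_quantum, 2)).max())
```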

Continuous limit

In the limit N→∞, the random potential VΓ(θ) defined in equation (10) in the main text converges to a Gaussian process that can be specified completely by its covariance 〈VΓ(θ)VΓ′(θ′)〉. This covariance can be ‘diagonalized’, expressing the random potential alternatively as a linear combination of white-noise processes {ζn(θ)}. One representation, as a convolution series $V_\Gamma(\theta)=\sum_n\int K_n(\theta-\theta')\,\zeta_n(\theta')\,d\theta'$, relies on the orthogonality properties of the associated Laguerre polynomials that enter the kernels Kn. The choice α=1 ensures that only the n=0 term survives in the limit Γ=0. The random potential should satisfy a stochastic equation.

As a side note, observe that a similar equation is obtained by taking the continuous limit of identities that follow from elementary geometry (see Fig. 4 in the main text).

For finite Γ, the form of the potential is modified as follows: it is convolved with a smoothing kernel of width Δ∼Γ, which raises the global minimum by an amount that scales as Γ^{3/2}. Additional random contributions (from n⩾1) have similar scaling. This derivation is presented in greater detail in Supplementary Note 2.

Extreme value statistics

In the vicinity of the global minimum, the statistics of the classical potential are fundamentally different. The ‘returning force’ in equation (18) can be neglected; additionally, the process should be conditioned on the fact that it stays above its value at θ=0 (no generality is lost by choosing the global minimum as the origin). This problem has been the subject of a considerable body of work36,37, although important aspects ought to be revisited. Here, I present a particularly simple self-contained derivation.

A pair (χ, v), where χ is interpreted as the ‘coordinate’ and v=dχ/dθ as the ‘velocity’ (θ being ‘time’), forms a Markov process. The probability distribution p(θ; χ, v) satisfies, for θ>0, the Kramers (Fokker–Planck) equation of a randomly accelerated particle,

$$\frac{\partial p}{\partial\theta}=-v\,\frac{\partial p}{\partial\chi}+D\,\frac{\partial^2 p}{\partial v^2},\tag{20}$$

where D denotes the strength of the white noise, and the condition p(θ; 0, v)=0 for v>0 serves as the boundary condition at the absorbing boundary χ=0. This probability is ‘renormalized’ to condition on the fact that the process survives until some Θ. It then becomes a conserved quantity, but the diffusion equation acquires a drift proportional to ∂(log PΘ)/∂v, repelling the process from the boundary. The ‘survival’ probability PΘ in the limit Θ→∞ is, up to ‘time reversal’, the universal asymptotic solution of (20), reduced to an ordinary differential equation using a scaling ansatz.

This exploits the fact that the fractal dimensions of ‘velocity’ and ‘coordinate’ are [v]=[θ]^{1/2} and [χ]=[θ]^{3/2}. The asymptotics are dominated by the solution with the smallest possible exponent, α=1/4, out of the infinite set of eigenvalues of the ordinary differential equation. This matches a known value obtained with a different method36.
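The value α=1/4 can be checked with a crude Monte Carlo sketch. It assumes a discrete stand-in for the unconditioned process near the minimum, as in Sinai's setting36: a Gaussian random walk for the ‘velocity’ and its running sum for χ, both started at zero. The probability that χ stays positive for n steps should then decay as n^{−1/4}. Corrections at finite n mean that only rough agreement should be expected. The function name is hypothetical.

```python
import numpy as np


def survival_fraction(checkpoints, n_paths=200_000, seed=0):
    """Fraction of discrete integrated random walks with chi > 0 at every step up to each checkpoint.

    The 'velocity' v performs a Gaussian random walk and the 'coordinate' chi is
    its running sum, both started at zero; paths are absorbed once chi <= 0.
    """
    rng = np.random.default_rng(seed)
    v = np.zeros(n_paths)
    chi = np.zeros(n_paths)
    result = {}
    for step in range(1, max(checkpoints) + 1):
        v += rng.standard_normal(v.size)
        chi += v
        keep = chi > 0                 # absorbing boundary at chi = 0
        v, chi = v[keep], chi[keep]
        if step in checkpoints:
            result[step] = v.size / n_paths
    return result


checkpoints = [64, 256, 1024, 4096]
frac = survival_fraction(set(checkpoints))
# Minus the slope of log(survival) versus log(n); asymptotically 1/4
exponent = -np.polyfit(np.log(checkpoints), np.log([frac[c] for c in checkpoints]), 1)[0]
print(f"estimated persistence exponent: {exponent:.3f}")
```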

The next step performs a change of variables, introducing a ‘dimensionless’ velocity ν=v/χ^{1/3} and a ‘logarithmic’ coordinate μ=ln χ. With the ‘time’ variable redefined via dτ=dθ/χ^{2/3}, the Markov process is described by the tuple (θ, μ, ν). Marginalizing out μ and θ in the equation for p(τ; θ, μ, ν) produces a Fokker–Planck equation for the stochastic motion of a particle in the non-linear potential depicted in Fig. 3b.

Given a particular realization of ν(τ), the full process (μ, ν, θ) is fixed deterministically by integration (see equation (12) in the main text). Realizations are constructed independently for θ>0 and θ<0. A more detailed analysis is presented in Supplementary Note 3.
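The parametric construction can be sketched as follows. By the chain rule, μ=ln χ, ν=v/χ^{1/3} and dτ=dθ/χ^{2/3} give dμ/dτ=ν and dθ/dτ=χ^{2/3}=e^{2μ/3}. The sketch integrates these equations for one branch (θ>0) and a sampled path ν(τ). The non-linear potential of Fig. 3b is not reproduced here. As a hypothetical stand-in, ν(τ) is an Ornstein–Uhlenbeck process with a positive mean, which mimics only the bias towards positive ν. The final check confirms that the resulting χ(θ) has the intended ‘velocity’ dχ/dθ=νχ^{1/3}. The function name is hypothetical.

```python
import numpy as np


def chi_from_nu(nu, dtau, chi0=1.0):
    """Integrate d(mu)/d(tau) = nu and d(theta)/d(tau) = exp(2 mu / 3) with explicit Euler steps.

    nu : samples of nu(tau) on a uniform grid with spacing dtau
    Returns theta(tau) and chi(tau) = exp(mu(tau)), starting from theta = 0, chi = chi0.
    """
    mu = np.log(chi0) + np.concatenate([[0.0], np.cumsum(nu[:-1] * dtau)])
    dtheta = np.exp(2.0 * mu[:-1] / 3.0) * dtau
    theta = np.concatenate([[0.0], np.cumsum(dtheta)])
    return theta, np.exp(mu)


# Hypothetical stand-in for nu(tau): Ornstein-Uhlenbeck process with mean 1,
# mimicking only the bias towards positive 'velocities'.
rng = np.random.default_rng(0)
dtau, n_steps = 1e-3, 5000
nu = np.empty(n_steps)
nu[0] = 1.0
for k in range(1, n_steps):
    nu[k] = nu[k - 1] + (1.0 - nu[k - 1]) * dtau + np.sqrt(dtau) * rng.standard_normal()

theta, chi = chi_from_nu(nu, dtau)
# Consistency check: the finite-difference slope d(chi)/d(theta) should match v = nu * chi**(1/3)
slope = np.diff(chi) / np.diff(theta)
print(np.allclose(slope, nu[:-1] * chi[:-1] ** (1.0 / 3.0), rtol=1e-2))
```

The branch θ<0 would be built the same way from an independent realization of ν(τ), with θ replaced by |θ|.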

Numerical simulations rescale this random potential instead of evolving the transverse field. The process is extended to larger values of τ as needed (details of the process at small τ, where they fall below numerical precision, are ‘forgotten’). A fairly large range of τ is required to gather sufficient statistics.

Data availability

The data that support the findings of this study are available from the corresponding author upon request.

Additional information

How to cite this article: Knysh, S. Zero-temperature quantum annealing bottlenecks in the spin-glass phase. Nat. Commun. 7:12370 doi: 10.1038/ncomms12370 (2016).

References

Nielsen, M. & Chuang, I. Quantum Computation and Quantum Information 10th edn Cambridge University Press (2011).

Shor, P. W. Algorithms for quantum computation: discrete logarithms and factoring. Proc. 35th Ann. Symp. Foundations of Computer Science (ed. Goldwasser, S.) 124–134 (IEEE Computer Society Press, 1994).

Garey, M. R. & Johnson, D. S. Computers and Intractability: A Guide to the Theory of NP-Completeness W. H. Freeman (1979).

Kadowaki, T. & Nishimori, H. Quantum annealing in the transverse Ising model. Phys. Rev. E 58, 5355 (1998).

Farhi, E., Goldstone, J., Gutmann, S. & Sipser, M. Quantum computation by adiabatic evolution. Preprint at http://arxiv.org/abs/quant-ph/0001106 (2000).

Das, A. & Chakrabarti, B. K. Quantum annealing and analog quantum computation. Rev. Mod. Phys. 80, 1061–1081 (2008).

van Dam, W., Mosca, M. & Vazirani, U. How powerful is adiabatic quantum computation? Proc. 42nd IEEE Symp. FOCS 279–287 (2001).

Brooke, J., Bitko, D., Rosenbaum, T. F. & Aeppli, G. Quantum annealing of a disordered magnet. Science 284, 779–781 (1999).

Santoro, G., Martoňák, R., Tosatti, E. & Car, R. Theory of quantum annealing of spin glasses. Science 295, 2427–2430 (2002).

Heim, B., Rønnow, T. F., Isakov, S. V. & Troyer, M. Quantum versus classical annealing of Ising spin glasses. Science 348, 215–217 (2015).

Farhi, E. et al. A quantum adiabatic evolution algorithm applied to random instances of an NP-complete problem. Science 292, 472–475 (2001).

Young, A. P., Knysh, S. & Smelyanskiy, V. N. First order phase transition in the quantum adiabatic algorithm. Phys. Rev. Lett. 104, 020502 (2010).

Boixo, S. et al. Evidence for quantum annealing with more than one hundred qubits. Nat. Phys. 10, 218–224 (2014).

Rønnow, T. F. et al. Defining and detecting quantum speedup. Science 345, 420–424 (2014).

Boixo, S. et al. Computational role of multiqubit tunneling in a quantum annealer. Nat. Commun. 7, 10327 (2016).

Katzgraber, H. G., Hamze, F., Zhu, Z., Ochoa, A. J. & Munoz-Bauza, H. Seeking quantum speedup through spin glasses: the good, the bad, and the ugly. Phys. Rev. X 5, 031026 (2015).

Zhu, Z., Ochoa, A. J., Schnabel, S., Hamze, F. & Katzgraber, H. G. Best-case performance of quantum annealers on native spin-glass benchmarks: How chaos can affect success probabilities. Phys. Rev. A 93, 012317 (2016).

Smelyanskiy, V. N., von Toussaint, U. & Timucin, D. A. Dynamics of quantum adiabatic evolution algorithm for number partitioning. Preprint at http://arxiv.org/abs/quant-ph/0202155 (2002).

Goldschmidt, Y. Y. Solvable model of the quantum spin glass in a transverse field. Phys. Rev. B 41, 4858–4861 (1990).

Jörg, T., Krzakała, F., Kurchan, J. & Maggs, A. C. Simple glass models and their quantum annealing. Phys. Rev. Lett. 101, 147204 (2008).

Jörg, T., Krzakała, F., Semerjian, G. & Zamponi, F. First-order transitions and the performance of quantum algorithms in random optimization problems. Phys. Rev. Lett. 104, 207206 (2010).

Fisher, D. S. Critical behavior of random transverse-field Ising spin chains. Phys. Rev. B 51, 6411–6461 (1995).

Miller, J. & Huse, D. Zero-temperature critical behavior of the infinite-range quantum Ising spin glass. Phys. Rev. Lett. 70, 3147–3150 (1993).

Ye, J., Sachdev, S. & Read, N. Solvable spin glass of quantum rotors. Phys. Rev. Lett. 70, 4011–4014 (1993).

Read, N., Sachdev, S. & Ye, J. Landau theory of quantum spin glasses of rotors and Ising spins. Phys. Rev. B 52, 384–410 (1995).

Altshuler, B., Krovi, H. & Roland, J. Anderson localization casts clouds over adiabatic quantum optimization. Proc. Natl Acad. Sci. USA 107, 12446–12450 (2010).

Farhi, E. et al. The performance of the quantum adiabatic algorithm on random instances of two optimization problems on regular hypergraphs. Phys. Rev. A 86, 052334 (2012).

Knysh, S. & Smelyanskiy, V. N. On the relevance of avoided crossings away from quantum critical point to the complexity of quantum adiabatic algorithm. Preprint at http://arxiv.org/abs/1005.3011 (2010).

Laumann, C. R., Moessner, R., Scardicchio, A. & Sondhi, S. L. Quantum annealing: the fastest route to quantum computation? Eur. Phys. J. Spec. Top. 224, 75–88 (2015).

Krzakała, F. & Martin, O. C. Chaotic temperature dependence in a model of spin glasses. Eur. Phys. J. B 28, 199–209 (2002).

Hopfield, J. Neural networks and physical systems with emergent collective computational abilities. Proc. Natl Acad. Sci. USA 79, 2554–2558 (1982).

Nishimori, H. & Nonomura, Y. Quantum effects in neural networks. J. Phys. Soc. Jpn. 65, 3780–3796 (1996).

Laumann, C. R., Moessner, R., Scardicchio, A. & Sondhi, S. L. The quantum adiabatic algorithm and scaling of gaps at first order quantum phase transitions. Phys. Rev. Lett. 109, 030502 (2012).

Bovier, A., van Enter, A. C. D. & Niederhauser, B. Stochastic symmetry-breaking in a Gaussian Hopfield model. J. Stat. Phys. 95, 181–213 (1999).

Hertz, J., Krogh, A. & Palmer, R. G. Introduction to the Theory of Neural Computation Addison-Wesley (1995).

Sinai, Y. G. On the distribution of some functions of the integral of a random walk. Theor. Math. Phys. 90, 219–241 (1992).

Groeneboom, P., Jongbloed, G. & Wellner, J. A. Integrated Brownian motion, conditioned to be positive. Ann. Prob. 27, 1283–1303 (1999).

Bray, A. J. & Moore, M. A. Chaotic nature of the spin-glass phase. Phys. Rev. Lett. 58, 57–60 (1987).

Mulet, R., Pagnani, A. & Parisi, G. Against temperature chaos in naive Thouless-Anderson-Palmer equations. Phys. Rev. B 63, 184438 (2001).

Rizzo, T. & Crisanti, A. Chaos in temperature in the Sherrington-Kirkpatrick model. Phys. Rev. Lett. 90, 137201 (2003).

Billoire, A. Rare events analysis of temperature chaos in the Sherrington-Kirkpatrick model. J. Stat. Mech. 2014, P04016 (2014).

Kondor, I. On chaos in spin glasses. J. Phys. A 22, L163–L168 (1989).

Katzgraber, H. G. & Krzakała, F. Temperature and disorder chaos in three-dimensional Ising spin glasses. Phys. Rev. Lett. 98, 017201 (2007).

Vertechi, D. & Virasoro, M. A. Energy barriers in SK spin-glass model. J. Phys. France 50, 2325–2332 (1989).

Sommers, H. J. & Dupont, W. Distribution of frozen fields in the mean-field theory of spin glasses. J. Phys. C 17, 5785–5793 (1984).

Venturelli, D. et al. Quantum annealing of fully-connected spin glass. Phys. Rev. X 5, 031040 (2015).

Katzgraber, H. G., Hamze, F. & Andrist, R. S. Glassy chimeras may be blind to quantum speedup. Phys. Rev. X 4, 021008 (2014).

Rieger, H. & Young, A. P. Zero-temperature quantum phase transition of a two-dimensional Ising spin glass. Phys. Rev. Lett. 72, 4141–4144 (1994).

Martín-Mayor, V. & Hen, I. Unraveling quantum annealers using classical hardness. Sci. Rep. 5, 15324 (2015).

Farhi, E., Goldstone, J. & Gutmann, S. Quantum adiabatic annealing algorithms versus simulated annealing. Preprint at http://arxiv.org/abs/quant-ph/0201031 (2002).

Mézard, M., Parisi, G. & Zecchina, R. Analytic and algorithmic solution of random satisfiability problems. Science 297, 812–815 (2002).

Acknowledgements

I would like to thank Vadim Smelyanskiy for useful discussions. This work was supported in part by the Office of the Director of National Intelligence (ODNI), Intelligence Advanced Research Projects Activity (IARPA), via IAA 145483, and by the Air Force Research Laboratory (AFRL) Information Directorate under grant F4HBKC4162G001. The views and conclusions contained herein are those of the author and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of ODNI, IARPA, AFRL or the U.S. Government. The U.S. Government is authorized to reproduce and distribute reprints for Governmental purpose notwithstanding any copyright annotation thereon.

Author information


Contributions

S.K. performed the research and wrote the manuscript.


Ethics declarations

Competing interests

The author declares no competing financial interests.

Supplementary information

Supplementary Notes 1–3

Rights and permissions

This work is licensed under a Creative Commons Attribution 4.0 International License. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in the credit line; if the material is not included under the Creative Commons license, users will need to obtain permission from the license holder to reproduce the material. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/
