- COMMENT

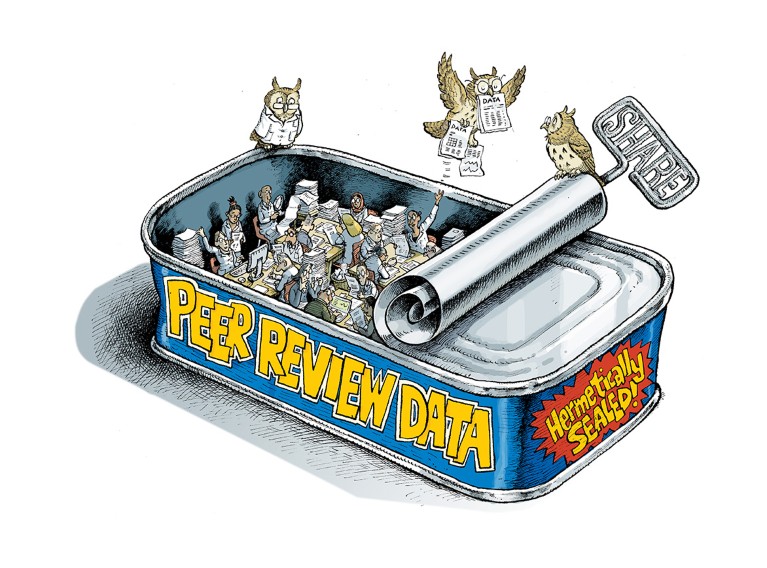

Unlock ways to share data on peer review

By Flaminio Squazzoni, Petra Ahrweiler, Tiago Barros, Federico Bianchi, Aliaksandr Birukou, Harry J. J. Blom, Giangiacomo Bravo, Stephen Cowley, Virginia Dignum, Pierpaolo Dondio, Francisco Grimaldo, Lynsey Haire, Jason Hoyt, Phil Hurst, Rachael Lammey, Catriona MacCallum, Ana Marušić, Bahar Mehmani, Hollydawn Murray, Duncan Nicholas, Giorgio Pedrazzi, Iratxe Puebla, Peter Rodgers, Tony Ross-Hellauer, Marco Seeber, Kalpana Shankar, Joris Van Rossum & Michael Willis

Flaminio Squazzoni is director of the BehaveLAB at the Department of Social and Political Sciences, University of Milan, Italy.

Petra Ahrweiler is a professor at the University of Mainz, Germany.

Tiago Barros is a product lead at Publons, London, UK.

Federico Bianchi is a postdoctoral researcher at the University of Brescia, Italy.

Aliaksandr Birukou is editorial director, computer science at Springer Nature, Heidelberg, Germany.

Harry J. J. Blom is vice-president, publishing development, and head of the editorial department in astronomy at Springer Nature, New York, USA.

Giangiacomo Bravo is a professor at the Linnaeus University, Växjö, Sweden.

Stephen Cowley is a professor at the University of Southern Denmark, Odense, Denmark.

Virginia Dignum is a professor at Umeå University, Sweden.

Pierpaolo Dondio is a lecturer at the Technological University Dublin, Ireland.

Francisco Grimaldo is vice-dean of the School of Engineering at the University of Valencia, Spain.

Lynsey Haire is head of electronic editorial systems at Taylor & Francis Group, Oxford, UK.

Jason Hoyt is co-founder and chief executive officer at PeerJ, London, UK.

Phil Hurst is publisher at the Royal Society, London, UK.

Rachael Lammey is head of community outreach at Crossref, Oxford, UK.

Catriona MacCallum is director of open science at Hindawi, London, UK.

Ana Marušić is chair of the Department of Research in Biomedicine and Health at the University of Split School of Medicine, Croatia.

Bahar Mehmani is reviewer experience lead in the Global Publishing Development Department at Elsevier, Amsterdam, the Netherlands.

Hollydawn Murray is data project lead at F1000 Research, London, UK.

Duncan Nicholas is director of DN Journal Publishing Services, Brighton, UK.

Giorgio Pedrazzi is an assistant professor at the University of Brescia, Italy.

Iratxe Puebla is senior managing editor at PLoS ONE, Cambridge, UK.

Peter Rodgers is features editor of e-Life, Cambridge, UK.

Tony Ross-Hellauer is a postdoctoral researcher at the Know-Center, Graz, Austria.

Marco Seeber is an associate professor at the University of Agder, Kristiansand, Norway.

Kalpana Shankar is a professor in the School of Information and Communication Studies, University College Dublin, Ireland.

Joris Van Rossum is research data director at the International Association of STM Publishers, Oxford, UK.

Michael Willis is EMEA regional manager of peer review at Wiley, Oxford, UK.

Illustration by David Parkins


Nature 578, 512-514 (2020)

doi: https://doi.org/10.1038/d41586-020-00500-y

Authors A.B. and H.J.J.B. are employees of Springer Nature (which publishes Nature); Nature is editorially independent of its publisher.

