Abstract

This study evaluated and compared the performance of three self-report measures: (1) 30-item Inventory of Depressive Symptomatology-Self-Report (IDS-SR30); (2) 16-item Quick Inventory of Depressive Symptomatology-Self-Report (QIDS-SR16); and (3) Patient Global Impression-Improvement (PGI-I) in assessing clinical outcomes in depressed patients during a 12-week, acute phase, randomized, controlled trial comparing nefazodone, cognitive-behavioral analysis system of psychotherapy (CBASP), and the combination in the treatment of chronic depression. The IDS-SR30, QIDS-SR16, PGI-I, and the 24-item Hamilton Depression Rating Scale (HDRS24) ratings were collected at baseline and at weeks 1–4, 6, 8, 10, and 12. Response was defined a priori as a ⩾50% reduction in baseline total score for the IDS-SR30 or for the QIDS-SR16 or as a PGI-I score of 1 or 2 at exit. Overall response rates (LOCF) to nefazodone were 41% (IDS-SR30), 45% (QIDS-SR16), 53% (PGI-I), and 47% (HDRS17). For CBASP, response rates were 41% (IDS-SR30), 45% (QIDS-SR16), 48% (PGI-I), and 46% (HDRS17). For the combination, response rates were 68% (IDS-SR30 and QIDS-SR16), 73% (PGI-I), and 76% (HDRS17). Similarly, remission rates were comparable for nefazodone (IDS-SR30=32%, QIDS-SR16=28%, PGI-I=22%, HDRS17=30%), for CBASP (IDS-SR30=32%, QIDS-SR16=30%, PGI-I=21%, HDRS17=32%), and for the combination (IDS-SR30=52%, QIDS-SR16=50%, PGI-I=25%, HDRS17=49%). Both the IDS-SR30 and QIDS-SR16 closely mirrored and confirmed findings based on the HDRS24. These findings raise the possibility that these two self-reports could provide cost- and time-efficient substitutes for clinician ratings in treatment trials of outpatients with nonpsychotic MDD without cognitive impairment. Global patient ratings such as the PGI-I, as opposed to specific item-based ratings, provide less valid findings.

INTRODUCTION

Most randomized, controlled trials (RCTs) of medication or of psychotherapy rely on clinician ratings of symptoms to assess treatment effects and to define both response and remission (Depression Guideline Panel, 1993). The 17- and 21-item versions of the Hamilton Depression Rating Scale (HDRS) (Hamilton, 1960; 1967) and the 10-item Montgomery-Åsberg Depression Rating Scale (MADRS) (Montgomery and Åsberg, 1979) are the most commonly used clinical ratings in RCTs of adults with major depressive disorder (MDD) (Yonkers and Samson, 2000), although neither scale rates all of the nine criterion symptom domains needed to diagnose a major depressive episode. The heavy reliance on clinician ratings as opposed to self-report ratings such as the Beck Depression Inventory (BDI) (Beck et al, 1961; 1979), the Zung Self-Rating Scale (ZSRS) for depression (Zung, 1965; 1986), or the Carroll Rating Scale (CRS) for depression (Carroll et al, 1981) may be due to several factors, including: (1) unavailability of self-reports during early clinical trials, (2) concerns that self-reports may be biased by patients' expectations of improvement, (3) regulatory agency preferences for using clinician ratings, and (4) in some cases, copyright issues. Thus, if one rating were to be valued above all others, many would argue that clinician ratings are more ‘valid’ and potentially more sensitive to change than self-reports.

This assumption, however, has been challenged (Greenberg et al, 1992). These authors have argued that clinicians involved in placebo-controlled medication RCTs may detect subtle cues, thereby ‘knowing’ which patients are on active medication (Hughes and Krahn, 1985; Rabkin et al, 1986; Stallone et al, 1975). They contend that this argument is supported by the fact that in some studies only clinician ratings, but not self-reports, distinguish ‘active’ medication from placebo (Edwards et al, 1984; Lambert et al, 1986). This perspective suggests that self-reports might be more valid than clinician ratings.

Self-report measures have other strengths that argue for reconsidering their role in both clinical trials and other settings. From a research perspective, the move toward large, multisite, ‘simple’ effectiveness trials such as the Texas Medication Algorithm Project (TMAP) (Rush et al, 1999a; 1999b; 2003a; Trivedi et al, 2004a; Biggs et al, 2000) or the NIMH-sponsored Sequenced Treatment Alternatives to Relieve Depression (STAR*D) study (Fava et al, 2003; Rush et al, 2004) would be facilitated by valid self-reports of depressive symptoms because they would reduce the time, effort, and costs incurred in large-scale clinical trials. Additionally, if self-report instruments were shown to accurately reflect clinical status, clinicians in practice might use them to help patients manage their long-term disease and determine what action(s) might be needed (eg visit the clinician to consider dose or treatment change).

Items on self-report scales, such as the BDI or ZSRS, commonly differ from items on clinician ratings such as the HDRS or MADRS. Correlations between the BDI and HDRS range from 0.61 to 0.86, and for the ZSRS and HDRS, they range from 0.56 to 0.79 (Yonkers and Samson, 2000). Correlations between the HDRS and CRS, which have similar items to the HDRS, are slightly higher (0.80) (Bech, 1992).

In addition to differences in the items themselves, other factors might account for the apparent discrepancies between clinician and patient ratings. It has been suggested that cognitive change items are among the last to improve with otherwise effective medication treatment (Prusoff et al, 1972). If true, self-reports of symptom severity, especially those containing a substantial number of ‘cognitive items,’ may be less sensitive than clinician ratings in detecting symptomatic changes, especially in treatment trials of short duration. A self-report that matches the items on the clinician rating would allow a more accurate test of Greenberg et al's (1992) suggestion.

The 30-item Inventory of Depressive Symptomatology-Self-Report (IDS-SR30) and the matched clinician rating (IDS-C30) (Rush et al, 1986; 1996) were developed to carefully assess all core criterion diagnostic depressive symptoms, as well as all Diagnostic and Statistical Manual of Mental Disorders, Fourth Edition (DSM-IV) (American Psychiatric Association, 1994) atypical symptom features (eg weight gain, appetite increase, interpersonal rejection sensitivity, hypersomnia, and leaden paralysis), and DSM-IV melancholic symptom features (eg anhedonia, unreactive mood, distinct quality to mood, etc.). The IDS-C30 and IDS-SR30 have identical items. Both exclude uncommon symptoms (ie obsessions and compulsions, lack of insight, paranoia/suspiciousness, depersonalization/derealization) that are included in the 21-item version of the HDRS (HDRS21). Thus, the item content of both the IDS-C30 and IDS-SR30 is consistent with recent recommendations to exclude uncommonly encountered symptoms (Mazure et al, 1986; Gibbons et al, 1993; Snaith, 1993; Grundy et al, 1994; Gullion and Rush, 1998).

Evidence of acceptable psychometric properties of the IDS-C30 and IDS-SR30 in depressed outpatients (Rush et al, 1986; 1996; 2000; 2003b; Gullion and Rush, 1998; Trivedi et al, 2004b) and depressed inpatients (Corruble et al, 1999a; 1999b) has been reported. There is also a substantial correlation between total scores on the IDS-C30, IDS-SR30, and HDRS17 (Rush et al, 1986; 1996; Gullion and Rush, 1998). IDS ratings have been shown to differentiate endogenous from nonendogenous forms of depression (Rush et al, 1987; Domken et al, 1994), dysthymic disorder from MDD (Rush et al, 1987), depressed from nondepressed radiation oncology patients (Jenkins et al, 1998), and depressed from nondepressed cocaine-dependent jail inmates (Surís et al, 2001).

There is substantial evidence of the correspondence between individual items and total scores on the IDS-C30 and IDS-SR30 (Rush et al, 1986; 1996; Tondo et al, 1988; Corruble et al, 1999a; 1999b; Biggs et al, 2000; Trivedi et al, 2004b). Therefore, it is useful to examine whether the IDS-SR30 or a shorter version, the 16-item Quick Inventory of Depressive Symptomatology-Self-Report (QIDS-SR16) (Rush et al, 2000; 2003b; IsHak et al, 2002; Trivedi et al, 2004b), might provide an alternative, sensitive means to evaluate comparative outcomes in RCTs and other settings. Recent findings by others (eg Carpenter et al, 2002) suggest that in small trials, the IDS-SR30 is sensitive to change and is comparable to the HDRS17.

Data from a recently reported, large, multicenter, controlled trial (Keller et al, 2000) provided an opportunity to evaluate the performance of several self-reports of overall depressive illness severity. This 12-week acute phase, randomized, controlled trial compared nefazodone, cognitive-behavioral analysis system of psychotherapy (CBASP) (McCullough, 2000), and the combination of both nefazodone and CBASP in the treatment of outpatients with chronic, nonpsychotic MDD. This dataset allowed for this post hoc comparison of the performance of clinician-rated HDRS24 with the IDS-SR30, QIDS-SR16, and the Patient Global Impression-Improvement (PGI-I) (adapted from the Clinical Global Impression-Improvement) (CGI-I) (Guy, 1976) in comparing outcomes with three different treatments (nefazodone, CBASP, or combined nefazodone and CBASP).

MATERIALS AND METHODS

Subjects

Institutional review boards at each of the 12 participating sites approved the study. Written informed consent was obtained from all 681 outpatient participants. The methods and overall outcomes of this 12-week acute phase, three-cell RCT have been detailed elsewhere (Keller et al, 2000). The Structured Clinical Interview for DSM-IV™ Axis I Disorders (SCID-I) (First et al, 1997) established the diagnosis. All participants, 18–75 years of age, met DSM-IV criteria for a major depressive episode that was (1) of at least 2 years in duration, (2) superimposed on antecedent dysthymia (double depression), or (3) recurrent with incomplete interepisode recovery and total illness duration of ⩾2 years. The HDRS24 score was ⩾20 at study entry. (See Keller et al, 2000 for inclusion/exclusion criteria.)

Treatment

Nefazodone (b.i.d.) was titrated from 200 mg/day during the first week to 300 mg/day during week 2, followed by weekly dose adjustments in 100 mg/day increments (up to 600 mg/day) to achieve maximum efficacy and acceptable tolerability. CBASP was implemented according to a treatment manual (McCullough, 2000) with twice-weekly sessions (until week 4) and weekly sessions thereafter until week 12.

Outcome Measures

The present study is based on the IDS-SR30, the QIDS-SR16 that was derived from the IDS-SR30, and the PGI-I. The PGI-I, IDS-SR30, QIDS-SR16, and 24-item HDRS (HDRS24) (Miller et al, 1985) were obtained at each treatment visit, with the latter being acquired by an evaluator blind to treatment assignment. There were no specific instructions as to the order of test administration. Thus, the PGI-I, IDS-SR30, and HDRS24 could have been given in any order depending on clinical convenience.

The HDRS24 was the primary outcome measure in the Keller et al (2000) RCT. The HDRS24 includes 24 items, each rated on a 0–2, 0–3, or 0–4 scale (range=0–75). The HDRS24 was obtained by trained and certified assessors who were masked to treatment assignment, and who were not asked to review nor were they specifically provided patient self-reports prior to obtaining the HDRS24 (though some may have had access to these self-reports). The HDRS24, as well as the IDS-SR30 and the PGI-I, were obtained at weeks 0, 1, 2, 3, 4, 6, 8, 10, and 12.

The IDS-SR30 includes 30 items, of which 28 are rated on a 0–3 scale at any occasion: either weight gain or weight loss, and either appetite increase or appetite decrease, is rated, which results in 28 items being scored (range of 0–84). Item total correlations, Cronbach's alpha (Cronbach, 1951), and measures of concurrent validity have established acceptable psychometric properties for the IDS-SR30 (Rush et al, 1986; 1996; Gullion and Rush, 1998; Corruble et al, 1999a; Trivedi et al, 2004b).

The QIDS-SR16 contains 16 items that were selected in this study from items provided by the IDS-SR30 to evaluate the overall severity of each of the nine criterion symptom domains used to diagnose DSM-IV MDD (ie sleep, appetite/weight, concentration, energy, sad mood, suicidal ideation, self-concept/guilt, psychomotor activity, and interest) (Rush et al, 2000; 2003b; Trivedi et al, 2004b). For three domains (sleep, psychomotor, appetite/weight disturbances), multiple questions are used (eg early, middle, late insomnia, and hypersomnia to evaluate sleep disturbance). For the sleep domain, the highest score on any of the four sleep items; for the appetite/weight domain, the highest score on any one of the four items, and for psychomotor changes, the highest score on either one of the two items were chosen to represent the domain. Each symptom item is scored on a 0–3 scale. The QIDS-SR16 total score is computed by adding scores for the nine criterion domains. The QIDS-SR16 total score ranges from 0 to 27. The PGI-I provided a seven-point rating with 1 designating ‘very much improved’ and 7 designating ‘very much worse.’
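The domain-based scoring rule described above can be illustrated with a minimal sketch. The item keys below are hypothetical labels chosen for readability (the text names the domains, not these identifiers), and the sketch assumes that any unrated item is coded 0.

```python
# Hypothetical item keys; the text names the domains, not these identifiers.
SLEEP = ("early_insomnia", "middle_insomnia", "late_insomnia", "hypersomnia")
APPETITE_WEIGHT = ("appetite_decrease", "appetite_increase",
                   "weight_decrease", "weight_increase")
PSYCHOMOTOR = ("psychomotor_slowing", "psychomotor_agitation")
SINGLE_ITEM_DOMAINS = ("sad_mood", "concentration", "self_concept_guilt",
                       "suicidal_ideation", "interest", "energy")
ALL_ITEMS = SLEEP + APPETITE_WEIGHT + PSYCHOMOTOR + SINGLE_ITEM_DOMAINS


def qids_sr16_total(items):
    """Sum the nine domain scores; multi-item domains use their highest item."""
    for name in ALL_ITEMS:
        if items[name] not in (0, 1, 2, 3):
            raise ValueError(f"{name} must be scored 0-3, got {items[name]!r}")
    domain_scores = [
        max(items[k] for k in SLEEP),            # highest of four sleep items
        max(items[k] for k in APPETITE_WEIGHT),  # highest of four items
        max(items[k] for k in PSYCHOMOTOR),      # highest of two items
    ]
    domain_scores += [items[k] for k in SINGLE_ITEM_DOMAINS]
    return sum(domain_scores)                    # nine domains, range 0-27


# Illustrative ratings only (not trial data).
example = {name: 0 for name in ALL_ITEMS}
example.update(middle_insomnia=2, hypersomnia=1, appetite_decrease=1,
               sad_mood=3, energy=2)
print(qids_sr16_total(example))  # 2 (sleep) + 1 (appetite) + 3 + 2 = 8
```

Taking the maximum within a domain keeps a patient with several kinds of sleep disturbance from contributing more to the total than a patient with one severe kind.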

Statistical Methods

For these analyses, response was defined a priori as a ⩾50% reduction in baseline total score for each scale (IDS-SR30, QIDS-SR16, and HDRS24) or a score of 1 or 2 for the PGI-I. For the HDRS24, a total score ⩽8 was used to indicate remission, since it was used by Keller et al (2000). The remission thresholds for the IDS-SR30 of ⩽14 and QIDS-SR16 of ⩽5 were established using Item Response Theory (IRT) (Orlando et al, 2000) analysis. These thresholds were chosen as they correspond to a score of ⩽7 on the HDRS17 (Rush et al, 2003b). These thresholds were verified (unpublished data) in a second dataset described by Trivedi et al (2001). The strength of agreement between each self-report (IDS-SR30, QIDS-SR16, and PGI-I) and the HDRS24 was assessed for all patients and completers by the intraclass correlation coefficient (Shrout and Fleiss, 1979).
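As an illustration of the response and remission definitions above, the following sketch classifies a single patient at exit. The function and variable names are hypothetical; the cut-offs are those quoted in the text. No remission rule is shown for the PGI-I because this section does not define one.

```python
# Remission cut-offs quoted in the text (total score at exit).
REMISSION_MAX = {"HDRS24": 8, "IDS-SR30": 14, "QIDS-SR16": 5}


def is_responder(baseline, exit_score):
    """True when the exit total is at least 50% below the baseline total."""
    if baseline <= 0:
        raise ValueError("baseline total must be positive")
    # Integer-friendly form of (baseline - exit) / baseline >= 0.5.
    return 2 * (baseline - exit_score) >= baseline


def is_pgi_responder(pgi_exit):
    """PGI-I response: a rating of 1 or 2 at exit."""
    return pgi_exit in (1, 2)


def is_remitter(scale, exit_score):
    """True when the exit total is at or below the scale's remission cut-off."""
    return exit_score <= REMISSION_MAX[scale]


# Illustrative values only (not trial data): IDS-SR30 falls from 40 to 18.
print(is_responder(40, 18))            # True: a 55% reduction
print(is_remitter("IDS-SR30", 18))     # False: 18 is above the cut-off of 14
```

A patient like the one in the example would count as a responder with residual symptoms, the subgroup examined later in the Results.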

Response and remission rates were determined at exit for all patients (intent-to-treat) (ITT) and for completers. Exit status was assessed whenever the subject left the study (ITT sample) or at completion of the full 12 weeks of treatment (completer sample). The groups were compared by χ² tests. Kappa statistics were used to measure agreement between response or remission groups as defined by each instrument and the HDRS24. Change from baseline scores was compared between groups by t-test at each week. Patients were divided into response/remission groups based on HDRS24 exit scores, and differences in IDS-SR30, QIDS-SR16, and PGI-I score were compared for these groups by t-test.
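The kappa statistic used for agreement can be sketched as follows, assuming the unweighted Cohen's kappa for two classifications of the same patients (the text does not state which variant was computed). The data are invented for illustration.

```python
def cohen_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's kappa for two classifications of the same subjects."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("need two non-empty sequences of equal length")
    n = len(ratings_a)
    # Observed proportion of subjects on whom the two instruments agree.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Agreement expected by chance from each instrument's marginal rates.
    labels = set(ratings_a) | set(ratings_b)
    expected = sum((ratings_a.count(lab) / n) * (ratings_b.count(lab) / n)
                   for lab in labels)
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (observed - expected) / (1 - expected)


# Hypothetical exit status for ten patients (1 = responder, 0 = nonresponder).
hdrs_response = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
ids_response = [1, 1, 1, 0, 0, 0, 0, 1, 1, 0]
print(round(cohen_kappa(hdrs_response, ids_response), 2))  # 0.6
```

In this example the two instruments agree on 8 of 10 patients, but half of that agreement would be expected by chance alone, which is why kappa (0.6) is lower than the raw agreement rate (0.8).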

A mixed effects linear model similar to that used by Keller et al (2000) compared the treatment groups using the self-reports (IDS-SR30, QIDS-SR16, and PGI-I) and the clinician rating (HDRS24) in the ITT sample. Thus, the treatment groups were compared based on the rate of change in the outcome measure from baseline to week 4 and from week 4 to exit as two separate analyses. The models have a random intercept and slope and terms for treatment group, site, time, and treatment × time interaction.

Evaluable samples had to include completed baseline and exit measurements (IDS-SR30, PGI-I, and HDRS24). Therefore, our sample of 602 subjects differs slightly from the Keller et al (2000) modified ITT sample of 656 subjects with postrandomization HDRS24 scores (due to missing baseline PGI-I (n=1), baseline IDS-SR30 (n=27); missing exit IDS-SR30 (n=26)), although all of the Keller et al (2000) findings were confirmed in this sample (n=602).

Effect sizes were computed for HDRS24, IDS-SR30, and QIDS-SR16 total scores as well as each item of the HDRS24 and the IDS-SR30 and for each domain of the QIDS-SR16. Sensitivity to change was assessed by computing the effect size for change over time within each treatment group for total scores and for each item or domain (computed as mean change (baseline minus exit) divided by the standard deviation of the mean change). The ability of total scores and each item/domain to distinguish among treatment groups was assessed by computing the effect size of the treatment group effect (computed as the proportion of variance accounted for by treatment group membership as determined from an analysis of variance of baseline to exit change (ω², Hays, 1988)). The 95 percent confidence intervals (CI) were computed for within and between group total score effect sizes using the distribution of effect sizes generated from 5000 bootstrap samples (Davison and Hinkley, 1997).
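The two effect sizes and the bootstrap interval can be sketched as follows. Several choices here are assumptions rather than details given in the text: the denominator of the within-group effect size is taken to be the sample standard deviation of the individual change scores, ω² uses the standard one-way ANOVA formula, and the interval is a simple percentile interval over resampled patients. All data are invented.

```python
import random
import statistics


def within_group_effect_size(baseline, exit_scores):
    """Mean change (baseline minus exit) divided by the SD of the changes."""
    changes = [b - e for b, e in zip(baseline, exit_scores)]
    return statistics.mean(changes) / statistics.stdev(changes)


def omega_squared(groups):
    """Proportion of variance in change explained by group (one-way ANOVA)."""
    values = [x for g in groups for x in g]
    grand_mean = statistics.mean(values)
    k, n = len(groups), len(values)
    ss_between = sum(len(g) * (statistics.mean(g) - grand_mean) ** 2
                     for g in groups)
    ss_within = sum((x - statistics.mean(g)) ** 2 for g in groups for x in g)
    ms_within = ss_within / (n - k)
    ss_total = ss_between + ss_within
    return (ss_between - (k - 1) * ms_within) / (ss_total + ms_within)


def bootstrap_ci_95(baseline, exit_scores, n_boot=5000, seed=0):
    """Percentile 95% CI for the within-group effect size (assumed method)."""
    pairs = list(zip(baseline, exit_scores))
    if len({b - e for b, e in pairs}) < 2:
        raise ValueError("change scores have zero variance")
    rng = random.Random(seed)
    estimates = []
    while len(estimates) < n_boot:
        sample = [rng.choice(pairs) for _ in pairs]   # resample patients
        changes = [b - e for b, e in sample]
        if len(set(changes)) < 2:
            continue                                  # SD would be zero; redraw
        estimates.append(statistics.mean(changes) / statistics.stdev(changes))
    cuts = statistics.quantiles(estimates, n=40)      # 2.5% steps
    return cuts[0], cuts[-1]                          # 2.5th and 97.5th points


# Invented scores for eight patients in one treatment group.
baseline = [30, 28, 35, 40, 26, 33, 29, 37]
exit_scores = [15, 20, 20, 22, 18, 12, 25, 16]
print(round(within_group_effect_size(baseline, exit_scores), 2))
print(bootstrap_ci_95(baseline, exit_scores))

# Invented change scores for three treatment groups.
print(round(omega_squared([[1, 2, 3], [2, 3, 4], [6, 7, 8]]), 3))
```

Resampling whole patients (baseline and exit together) preserves the pairing that the change score depends on.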

RESULTS

Completion Rates

Of the 4788 actual visits, 122 visits (2.5%) were missing the IDS-SR30, while 103 (2.2%) were missing the HDRS24 rating. The PGI-I (which is not assessed at baseline) was missing for 132 out of 4186 post baseline visits (3.2%).

Intraclass Correlation Coefficients and Coefficients of Variation

Intraclass correlation coefficients (ICC) were calculated for each of the three self-reports in relation to the clinician-rated HDRS24 (Table 1) using all subjects (n=602) at exit and all completers (n=490) exit data. These ICCs are largely within the excellent (⩾0.75) range (Fleiss, 1986).

The coefficients of variation (CV) were calculated for each scale using exit scores across all patients in all treatment groups. The CV (mean÷standard deviation) were 1.46 (HDRS24), 1.53 (IDS-SR30), 1.52 (QIDS-SR16), and 1.97 (PGI-I) indicating that for the IDS-SR30 and QIDS-SR16, there is no clinically significant difference in variability as compared to the HDRS24. The PGI-I shows numerically higher CV suggestive of greater clinical variability. Note that the PGI-I generally has lower agreement with the HDRS24 for remission at exit (Table 2 κ's).

Response and Remission Rates

A total of 602 patients were evaluable using the IDS-SR30 (or QIDS-SR16) and the PGI-I. Table 2 shows the response and remission rates for the ITT (n=602) and completer (n=490) samples using the IDS-SR30, QIDS-SR16, PGI-I, and HDRS24. Each of the four ratings, in both the ITT and completer samples, revealed significantly higher response rates for the combination groups as compared to nefazodone alone or CBASP alone (all χ²⩾27.8; all p<0.0001). Response rates did not differ between the two monotherapies based on any of the three self-reports or on the HDRS24 in either the ITT or the completer samples (all χ²⩽1.6; all p⩾0.20). The κ's for agreement between each instrument and the HDRS24 were substantial (range=0.61–0.80) (Landis and Koch, 1977), except for completers in the combination group, where the κ's were moderate (range=0.41–0.60) for the IDS-SR30 and QIDS-SR16.

Remission rates followed the same pattern (Table 3). The combination treatment was associated with higher remission rates based on the IDS-SR30, QIDS-SR16, and HDRS24 (all χ²⩾18.4, all p⩽0.0001) but not on the PGI-I (both χ²⩽1.09, p⩾0.58) as compared to either monotherapy. Remission rates did not differentiate the two monotherapies for either the ITT or completer samples for any of the four outcome measures (all χ²⩽0.47, all p⩾0.49).

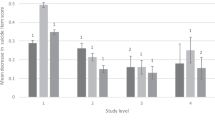

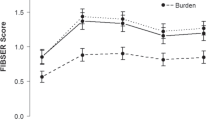

Figure 1a shows the baseline and week-by-week total HDRS24 scores for each treatment group. Figures 1b, c, and d reveal very similar findings on a week-by-week basis using the IDS-SR30, QIDS-SR16, and PGI-I, respectively. In general, the self-reports showed the same significant week-by-week between-group differences in change from baseline as the HDRS24. However, the IDS-SR30 did not distinguish CBASP from nefazodone or from the combination at week 1 and at week 6, and it did not distinguish nefazodone from CBASP at week 6. The QIDS-SR16 revealed similar significant differences at the same visit occasions as the HDRS24 except for lack of significant differences between CBASP and the other two treatments at week 1 and between nefazodone and the other two treatments at week 6. The PGI-I, while following a pattern similar to that found with the HDRS24, was less consistent. The PGI-I did not differentiate the groups at week 1. Only trend differences were observed at week 2 (comparing the combination to CBASP alone) and at week 6 (comparing the combination to CBASP). However, exit findings with all three self-reports reflected the same significant between-group differences found with the HDRS24 (significance test results not shown).

Figure 1. (a) Mean change from baseline to week 12 in HDRS24 total scores (observed case). (b) Mean change from baseline to week 12 in IDS-SR30 total scores (observed case). (c) Mean change from baseline to week 12 in QIDS-SR16 total scores (observed case). (d) Mean change from baseline to week 12 in PGI-I total scores (observed case).

Table 4 provides results based on the HDRS24 exit scores to define different groups (ie responder/nonresponder; remitter/nonremitter). All three self-reports distinguished HDRS24 responders from HDRS24 nonresponders, as well as HDRS24 remitters from HDRS24 nonremitters for each treatment group.

A further analysis compared HDRS24 responders with residual symptoms (ie ⩾50% decrease from baseline but with an HDRS24 >8) with HDRS24 remitters (ie HDRS24 score ⩽8 at exit). Results revealed mean IDS-SR30 scores to be 18.2±7.8 for HDRS24 responders with residual symptoms vs 9.2±5.9 for HDRS24 remitters (t=−11.0, df=191, p<0.0001) at exit. For the QIDS-SR16 results, HDRS24 responders with residual symptoms averaged 7.1±3.2 as compared to 3.8±2.6 for HDRS24 remitters (t=−9.5, df=194, p<0.0001). Finally, the mean score on the PGI-I for HDRS24 responders with residual symptoms was 2.1±0.6 as compared to 1.5±0.6 for HDRS24 remitters (t=−7.2, df=340, p<0.0001).

We used a mixed effects model to parallel the original report based on the HDRS24 (Keller et al, 2000), dividing the observation periods into the first 4 weeks and then the subsequent 8 weeks of the 12-week trial. In this sample (baseline to week 4 (n=602) and week 4 to week 12 (n=560)), the HDRS24 distinguished nefazodone from CBASP (p<0.0008) and distinguished the combination from CBASP (p<0.0005) over the first 4 weeks of the trial. The IDS-SR30, QIDS-SR16, and PGI-I mixed effects model analyses revealed similar statistically significant results. For the latter period (weeks 4–12), the HDRS24, IDS-SR30, and QIDS-SR16 revealed greater efficacy for the combination than for either nefazodone alone or for CBASP alone (Table 5), while the PGI-I revealed greater efficacy for the combination vs nefazodone, but not for the combination vs CBASP alone.

Table 6 shows two different types of effect sizes for the HDRS24 items, while Table 7 provides analogous information for the IDS-SR30 items and QIDS-SR16 domains. The first effect size is for change from baseline to exit within each treatment group expressed in standard deviation units. The second effect size is for the difference between treatment groups in change from baseline to exit expressed as a percent of variance explained. For the HDRS24 items, item 1 (depressed mood) showed large within-group effects and the largest between treatment group effect of all the HDRS24 items. Several HDRS24 items showed very small within or between treatment group effects (eg items 9, 15, 17, 19, 20, and 21).

Considering the IDS-SR30 items, item 5 (sad mood) had the largest within-group effects, and one of the largest between treatment group effects of all the IDS-SR30 items. Hypersomnia (item 4) showed a very small treatment group effect for the IDS-SR30, likely due to the smaller proportion of patients with this symptom at baseline.

Turning to the QIDS-SR16, all nine domains had effect sizes above 0.50, although the appetite/weight domain had a very small treatment group effect (ie substantial change occurred in all three groups, but that change did not differentiate among the three groups).

Table 8 shows that the HDRS24, IDS-SR30, and QIDS-SR16 total scores all had similar within-group and between-group effect sizes. The IDS-SR30 had the numerically largest effect size in the nefazodone and CBASP groups, while the HDRS24 had the largest effect size in the combination group. The numerically somewhat larger within treatment group effect sizes achieved with the PGI-I were accompanied by the numerically smallest between-group effect size. These findings suggest that patients globally reported larger effects of any treatment as compared to the itemized reports. This larger effect, perhaps due to the global nature of the ratings, likely limited the capacity of the PGI-I ratings to detect between-group differences (ie small between-group effect size).

DISCUSSION

Overall, these post hoc analyses based on self-reports confirmed the between-group differences reported by Keller et al (2000) using the HDRS24 completed by highly trained, certified raters who were masked to treatment assignment. The IDS-SR30 and the QIDS-SR16 were generally as sensitive to both change over time and to differences between treatment cells as was the masked HDRS24 rating. In addition, the IDS-SR30 and QIDS-SR16 confirmed response and remission rates obtained using the HDRS24. Correspondence between the PGI-I and the HDRS24 was far less impressive.

In addition, changes in IDS-SR30 and QIDS-SR16 total scores at each measurement occasion closely paralleled those obtained with the HDRS24. PGI-I total scores were less consistent than changes in IDS-SR30, QIDS-SR16, or HDRS24 total scores. When exit status was defined by the HDRS24 (responders vs nonresponders, remitters vs nonremitters, and responders with residual symptoms vs remitters), the IDS-SR30, QIDS-SR16, and the PGI-I exit scores significantly differentiated these groups.

While the PGI-I, a global self-report, did not perform as well as the IDS-SR30, QIDS-SR16, or the HDRS24, the major results of this study were still confirmed with the PGI-I. Perhaps the specific wording on each of the four (0–3) ratings for each IDS-SR30/QIDS-SR16 symptom item reduced between-subject (or within-subject over time) variability, which sharpened their capacity to reliably differentiate the three treatment groups. Global ratings such as the PGI-I (as opposed to anchored, itemized symptom ratings) by depressed patients may be more influenced by the well-known cognitive bias found in symptomatic patients. Present results would recommend against use of the PGI-I when itemized clinician or self-reports are used in a trial, as the PGI-I was least useful in detecting between-group differences.

These results contradict the suggestion by Greenberg et al (1992) and others (Edwards et al, 1984; Lambert et al, 1986; Murray, 1989) that clinical ratings and self-reports diverge in assessing outcomes across different treatments. However, given the absence of pill placebo in this trial, these findings do not fully address the concerns raised by Greenberg et al (1992) regarding placebo-controlled clinical trials (although CBASP was a nonmedication treatment cell). These results also address the issue raised by Duncan and Miller (2000) who questioned whether the use of patient self-reports in the Keller et al (2000) trial would have resulted in less robust outcomes based on treatment cell assignments.

The present results also reveal substantial agreement between the HDRS24 total scores and those of the IDS-SR30 and QIDS-SR16, with intraclass correlations largely ⩾0.8. However, differences are to be noted as well. For example, the proportion of responders was generally 6–9 percentage points lower with the IDS-SR30 or QIDS-SR16 than with the HDRS24, when a ⩾50% reduction in baseline severity at exit was used as a threshold for response for all three measures. Notably, remission rates were nearly identical for all three measures. These two findings suggest that some subjects who achieved an HDRS24 response did not achieve an equivalent degree of benefit as viewed by the self-reports. Perhaps those who achieve an HDRS24 response but who continue to have some symptoms (ie do not achieve an HDRS24 remission) may continue to suffer a negative cognitive bias and, therefore, may overrate their self-reported symptoms in some cases, such that a response by either self-report is not quite achieved. Alternatively, the HDRS24 may overestimate response compared to the self-reports if for some patients the baseline HDRS24 total ratings were inadvertently inflated (since a minimal HDRS24 threshold was required for study entry). The inflation of baseline severity based on the HDRS24, if present, would only affect response rates; it would not affect remission rates.

The relatively modest κ values for response rates or poorer κ values for remission rates deserve comment. Who is a responder by HDRS24 is tightly tied to the baseline HDRS24 total score. As noted above, there is a tendency in acute phase RCTs towards inflation in baseline severity scores.

The most likely explanation for the modest κ's is that the two scales measure different enough constructs that agreement is modest. The differences between the scale items are in fact by design. Evidence for differences is suggested by the effect sizes found for each item/domain on each scale (Tables 6, 7 and 8).

The item effect sizes obtained both for within treatment groups (baseline to exit) and across treatment groups (baseline to exit) deserve comment. If one considers both monotherapies only, and accepts an effect size of ⩾0.5 as moderate and meaningful (Cohen, 1988), only nine of 24 HDRS24 items reach this threshold. The items include depressed mood, guilt feelings, middle insomnia, work and activities, psychic anxiety, somatic symptoms, and helplessness, hopelessness, and worthlessness. Notably, only six of the 17 items of the HDRS17 achieve this threshold. Furthermore, for the HDRS24 or the HDRS17, only three core criterion symptoms (sad mood, guilt, and interest, or work and activities) have effect sizes of ⩾0.5, although middle insomnia (one of the four potential types of sleep disturbance) also has an effect size ⩾0.5.

All nine domains for the QIDS-SR16 have a ⩾0.5 effect size for both monotherapies. For both the HDRS24 and the QIDS-SR16, some domains have similar effect sizes (eg sad mood). On the other hand, some important DSM-IV criterion symptom domains (eg suicide, psychomotor changes, sleep disturbances) have greater effect sizes when measured by the QIDS-SR16 than when measured by the HDRS24.

Turning to the IDS-SR30, using items with effect sizes ⩾0.5 for both monotherapies as a threshold, the following items did not reach this threshold: early morning insomnia, hypersomnia, mood reactivity, diurnal variation, weight change, sexual interest, psychomotor agitation, somatic complaints, sympathetic nervous system arousal, panic attacks, and gastrointestinal complaints. Most of these items are not core criterion symptoms of a major depressive episode. Note that the effect sizes for each individual sleep item on the IDS-SR30, while substantial (save for hypersomnia), are more modest than the effect size for the sleep disturbance domain on the QIDS-SR16 (0.70, 0.61, 0.91) for each of the three treatment cells. These data suggest that all sleep items for the IDS-SR30 or potentially for the HDRS17 might be combined to form a single domain score, as is done on the QIDS-SR16.

Finally, turning from item effect sizes to total score effect sizes, Table 8 shows the HDRS24, IDS-SR30, and QIDS-SR16 to be equally sensitive in detecting baseline to exit change within each treatment group (note the degree of overlap among the confidence intervals within each treatment group). These three itemized measures are also equally sensitive in distinguishing between treatment groups (again, note the overlapping confidence intervals in the last column of Table 8). The QIDS-SR16 captures as much information about the differential effects of the three treatments as the IDS-SR30 or HDRS24.

The PGI-I produces larger within-group effect sizes than the other measures, with the largest effect in the CBASP group (2.11); the other measures show the largest effect in the combination group. The PGI-I also does the poorest job of separating the treatment groups. Perhaps global judgments made by depressed patients vary more widely across subjects, thereby reducing the chances of identifying between-group differences. Self-reports like the IDS-SR30 or QIDS-SR16 that provide clear anchors for responding to each item appear as useful as clinician ratings.

Is it actually so surprising that the IDS-SR30 and QIDS-SR16, which focus on specific depressive symptoms, correspond to standard clinician ratings such as the HDRS24? In fact, most itemized clinician ratings actually rely on patients' self-reports to questions posed by the interviewer (eg suicidal thinking, nature of sleep, energy, guilt, etc.). The interviewer has the context of other depressed patients by which to gauge his/her rating, and the opportunity to clarify for patients (during the interview) the meaning of the question and the nature of the answer if patients have difficulty understanding the question or have difficulty articulating the appropriate response. However, a few HDRS24 items do require direct observation (eg psychomotor changes) and do not score subject reports of feeling agitated or slowed down. This difference could lead to increased sensitivity to psychomotor changes in self-reported measures, or at least lead to discrepancies between self-report and clinician ratings of these items.

The present results suggest that the QIDS-SR16 and the IDS-SR30 (and potentially other self-reports) that provide clear anchors for each item may yield responses consistent enough across patients that self-report accurately reflects the results obtained with clinician ratings, at least in depressed outpatients without cognitive impairment. Such self-reports may be a cost-effective substitute for more time-consuming clinician ratings. Obviously, very severely depressed psychotic or cognitively impaired depressed patients may be unable to report their symptoms accurately enough to allow self-reports to be used instead of clinician ratings.

It is notable that IDS-SR30 measures were missing for only 2.5% of visits, which is comparable to the missing rate (2.2%) for the HDRS24, even though the latter was defined a priori as the primary outcome measure. Such a result suggests a high degree of acceptance of self-reports by both clinicians and patients.

Given these results, it is logical to ask whether the IDS-SR30 should be replaced by the QIDS-SR16. The QIDS-SR16 is at least as sensitive to change as the IDS-SR30. The 16 items assess nine domains, each of which has at least a moderate effect size. Furthermore, the overall effect sizes of the total scores are equivalent and as robust as the HDRS17 total scores. Thus, to measure the nine criterion symptom domains (either in practice or in trials), the QIDS is entirely satisfactory, and the IDS is not needed.

The IDS, however, provides an accounting of all melancholic and atypical symptoms, and it measures common important associated symptoms (eg irritability, anxiety, and sympathetic nervous system arousal). The IDS is a useful initial measure to provide information as to depressive ‘subtypes.’ In addition, most of these noncriterion items also have substantial effect sizes, some of which also differentiate between treatment groups (Table 7) (eg sexual interest, reactivity of mood, psychomotor slowing, and future outlook). The present report also shows that the full IDS-SR30 total score does, in fact, perform as well as the HDRS24 or QIDS-SR16 in spite of the wider range of symptoms being assessed. Thus, investigators or clinicians can select either scale as an acceptable, sensitive outcome measure depending on time constraints and their wish to assess only core criterion symptoms or a wider range of symptoms.

The current report has the following limitations. First, the analyses are post hoc. However, all of these self-reports were included in the original protocol and data analysis plans as secondary or confirmatory analyses. Second, patients were not blind to the type of treatment they received (nefazodone, CBASP, or the combination). It is interesting, however, to note that the outcomes using nonblind, patient-itemized self-reports were virtually identical to outcomes obtained by highly trained, certified, and masked raters who completed the HDRS24. Third, these findings may have limited generalizability as the patient sample was restricted to a largely Caucasian sample of outpatients with nonpsychotic MDD with various types of chronic courses. Fourth, the order of test administration was not predetermined. Fifth, while we do not believe that HDRS24 raters reviewed any self-report data, we cannot guarantee that none had access to these data before conducting the HDRS24 interview. No raters were aware that these ratings would be compared post hoc. Finally, the QIDS-SR16 was derived from the items obtained with the IDS-SR30 rather than from an independently administered measure.

These results suggest that itemized self-reports may substitute for clinician ratings, especially in large, multisite clinical trials with substantial sample sizes. Obtaining these self-reports could be facilitated by a telephone-based interview that elicits responses from the subject to each symptom item. These so-called Interactive Voice Response (IVR) systems have been used successfully in randomized clinical trials (Piette, 2000; Kobak et al, 1997; 1999), although studies using IDS-SR30 or QIDS-SR16 scores obtained via IVR have yet to be reported. The STAR*D trial (Fava et al, 2003; Rush et al, 2004) is comparing rater-acquired HDRS17 and IDS-C30 with an IVR-acquired QIDS-SR16 to evaluate the utility, sensitivity to change, and psychometric properties of each type of rating.

The present findings are consistent with the idea of using a self-report in lieu of a clinician rating as a primary outcome, or to gauge treatment benefit in routine practice. To further evaluate the potential of self-reports in research, one needs a comparison between identical clinician and self-report measures in placebo-controlled trials, with an experimental drug, a standard drug, and a placebo to ascertain whether self-reports are as sensitive as clinician ratings in differentiating drug from placebo. Further studies to compare self-reports (obtained either by IVR or paper and pencil tests) and clinician ratings (both the Montgomery-Åsberg Depression Rating Scale and the Hamilton Depression Rating Scale) are indicated in diverse populations of depressed patients and in epidemiologic samples.

References

American Psychiatric Association (1994). Diagnostic and Statistical Manual of Mental Disorders, 4th edn. American Psychiatric Association: Washington, DC.

Bech P (1992). Symptoms and assessment of depression. In: Paykel ES (ed). Handbook of Affective Disorders. Guilford Press: New York. pp 3–14.

Beck AT, Rush AJ, Shaw BF, Emery G (1979). Cognitive Therapy of Depression. The Guilford Press: New York.

Beck AT, Ward CH, Mendelson M, Mock J, Erbaugh J (1961). An inventory for measuring depression. Arch Gen Psychiatry 4: 561–571.

Biggs MM, Shores-Wilson K, Rush AJ, Carmody TJ, Trivedi MH, Crismon ML et al (2000). A comparison of alternative assessments of depressive symptom severity: a pilot study. Psychiatry Res 96: 269–279.

Carpenter LL, Yasmin S, Price LH (2002). A double-blind, placebo-controlled study of antidepressant augmentation with mirtazapine. Biol Psychiatry 51: 183–188.

Carroll BJ, Feinberg M, Smouse PE, Rawson SG, Greden JF (1981). The Carroll Rating Scale for depression. Development, reliability and validation. Br J Psychiatry 138: 194–200.

Cohen J (1988). Statistical Power Analysis for the Behavioral Sciences, 2nd edn. L. Erlbaum Associates: Hillsdale, NJ.

Corruble E, Legrand JM, Duret C, Charles G, Guelfi JD (1999a). IDS-C and IDS-SR: psychometric properties in depressed in-patients. J Affect Disord 56: 95–101.

Corruble E, Legrand JM, Zvenigoroweki H, Duret C, Guelfi JD (1999b). Concordance between self-report and clinician's assessment of depression. J Psychiatr Res 33: 457–465.

Cronbach LJ (1951). Coefficient alpha and the internal structure of tests. Psychometrika 16: 297–334.

Davison AC, Hinkley DV (1997). Bootstrap Methods and their Application. Cambridge University Press: Cambridge, UK.

Depression Guideline Panel (1993). Clinical Practice Guideline. Number 5. Depression in Primary Care: Vol. 1. Detection and Diagnosis, AHCPR Publication No. 93-0550 US Department of Health and Human Services, Agency for Health Care Policy and Research: Rockville, MD.

Domken M, Scott J, Kelly P (1994). What factors predict discrepancies between self and observer ratings of depression? J Affect Disord 31: 253–259.

Duncan BL, Miller SD (2000). Nefazodone, psychotherapy, and their combination for chronic depression [correspondence]. N Engl J Med 343: 1042.

Edwards BC, Lambert MJ, Moran PW, McCully T, Smith KC, Ellingson AG (1984). A meta-analytic comparison of the Beck Depression Inventory and the Hamilton Rating Scale for Depression as measures of treatment outcome. Br J Clin Psychol 23: 93–99.

Fava M, Rush AJ, Trivedi MH, Nierenberg AA, Thase ME, Sackeim HA et al (2003). Background and rationale for the Sequenced Treatment Alternatives to Relieve Depression (STAR*D) study. Psychiatr Clin North Am 26: 457–494.

First MB, Spitzer RL, Gibbon M, Williams JBW (1997). Structured Clinical Interview for DSM-IV™ Axis I Disorders (SCID-I), Clinician Version. American Psychiatric Publishing, Inc.: Washington, DC.

Fleiss JL (1986). The Design and Analysis of Clinical Experiments. John Wiley & Sons: New York, NY.

Gibbons RD, Clark DC, Kupfer DJ (1993). Exactly what does the Hamilton Depression Rating Scale measure? J Psychiatr Res 27: 259–273.

Greenberg RP, Bornstein RF, Greenberg MD, Fisher S (1992). A meta-analysis of antidepressant outcome under ‘blinder’ conditions. J Consult Clin Psychol 60: 664–669.

Grundy CT, Kunnen KM, Lambert MJ, Ashton JE, Tovey DR (1994). The Hamilton Rating Scale for Depression: one scale or many? Clin Psychol Sci Pract 1: 197–205.

Gullion CM, Rush AJ (1998). Toward a generalizable model of symptoms in major depressive disorder. Biol Psychiatry 44: 959–972.

Guy W (1976). ECDEU Assessment Manual for Psychopharmacology, Revised Edition. Department of Health, Education and Welfare Publication No. 76-338. US Government Printing Office: Washington, DC.

Hamilton M (1960). A rating scale for depression. J Neurol Neurosurg Psychiatry 23: 56–62.

Hamilton M (1967). Development of a rating scale for primary depressive illness. Br J Soc Clin Psychol 6: 278–296.

Hays WL (1988). Statistics, 4th edn. Rinehart & Winston, Inc.: Fort Worth, TX.

Hughes JR, Krahn D (1985). Blindness and the validity of the double-blind procedure. J Clin Psychopharmacol 5: 138–142.

IsHak WW, Burt T, Sederer LI (eds) (2002). Outcome Measurement in Psychiatry. A Critical Review. American Psychiatric Publishing, Inc.: Washington, DC. pp 439–441.

Jenkins C, Carmody TJ, Rush AJ (1998). Depression in radiation oncology patients: a preliminary evaluation. J Affect Disord 50: 17–21.

Keller MB, McCullough JP, Klein DN, Arnow B, Dunner DL, Gelenberg AJ et al (2000). A comparison of nefazodone, the cognitive behavioral-analysis system of psychotherapy, and their combination for the treatment of chronic depression. N Engl J Med 342: 1462–1470.

Kobak KA, Greist JH, Jefferson JW, Mundt JC, Katzelnick DJ (1999). Computerized assessment of depression and anxiety over the telephone using interactive voice response. MD Comput 16: 64–68.

Kobak KA, Tayler LH, Dottl SL, Greist JH, Jefferson JW, Burroughs D et al (1997). Computerized screening for psychiatric disorders in an outpatient community mental health clinic. Psychiatr Serv 48: 1048–1057.

Lambert MJ, Hatch DR, Kingston MD, Edwards BC (1986). Zung, Beck, and Hamilton rating scales as measures of treatment outcome: A meta-analytic comparison. J Consult Clin Psychol 54: 54–59.

Landis RJ, Koch GG (1977). The measurement of observer agreement for categorical data. Biometrics 33: 159–174.

Mazure C, Nelson JC, Price LH (1986). Reliability and validity of the symptoms of major depressive illness. Arch Gen Psychiatry 43: 451–456.

McCullough Jr JP (2000). Treatment for Chronic Depression. Cognitive Behavioral Analysis System of Psychotherapy (CBASP). Guilford Press: New York.

Miller IW, Bishop S, Norman WH, Maddever H (1985). The modified Hamilton Rating Scale for Depression: reliability and validity. Psychiatry Res 14: 131–142.

Montgomery SA, Åsberg M (1979). A new depression scale designed to be sensitive to change. Br J Psychiatry 134: 382–389.

Murray E (1989). Measurement issues in the evaluation of psychopharmacological therapy. In: Fisher S, Greenberg RP (eds). The Limits of Biological Treatments for Psychological Distress: Comparisons with Psychotherapy and Placebo. Erlbaum: Hillsdale, NJ. pp 39–68.

Orlando M, Sherbourne CD, Thissen D (2000). Summed-score linking using item response theory: application to depression measurement. Psychol Assess 12: 354–359.

Piette JD (2000). Interactive voice response systems in the diagnosis and management of chronic disease. Am J Manag Care 6: 817–827.

Prusoff BA, Klerman GL, Paykel ES (1972). Concordance between clinical assessments and patients' self-report in depression. Arch Gen Psychiatry 26: 546–552.

Rabkin JG, McGrath P, Stewart JW, Harrison W, Markowitz JS, Quitkin F (1986). Follow-up of patients who improved during placebo washout. J Clin Psychopharmacol 6: 274–278.

Rush AJ, Carmody T, Reimitz P-E (2000). The Inventory of Depressive Symptomatology (IDS): clinician (IDS-C) and self-report (IDS-SR) ratings of depressive symptoms. Int J Methods Psychiatr Res 9: 45–59.

Rush AJ, Crismon ML, Kashner TM, Toprac MG, Carmody TJ, Trivedi MH et al (2003a). Texas Medication Algorithm Project, Phase 3 (TMAP-3): rationale and study design. J Clin Psychiatry 64: 357–369.

Rush AJ, Crismon ML, Toprac MG, Shon SS, Rago WV, Miller AL et al (1999a). Implementing guidelines and systems of care: experiences with the Texas Medication Algorithm Project (TMAP). J Pract Psychiatry Behav Health 5: 75–86.

Rush AJ, Fava M, Wisniewski SR, Lavori PW, Trivedi MH, Sackeim HA et al (2004). Sequenced Treatment Alternatives to Relieve Depression (STAR*D): rationale and design. Control Clin Trials 25: 119–142.

Rush AJ, Giles DE, Schlesser MA, Fulton CL, Weissenburger JE, Burns CT (1986). The Inventory for Depressive Symptomatology (IDS): preliminary findings. Psychiatry Res 18: 65–87.

Rush AJ, Gullion CM, Basco MR, Jarrett RB, Trivedi MH (1996). The Inventory of Depressive Symptomatology (IDS): psychometric properties. Psychol Med 26: 477–486.

Rush AJ, Hiser W, Giles DE (1987). A comparison of self-reported versus clinician-rated symptoms in depression. J Clin Psychiatry 48: 246–248.

Rush AJ, Rago WV, Crismon ML, Toprac MG, Shon SP, Suppes T et al (1999b). Medication treatment of the severely and persistently mentally ill: the Texas Medication Algorithm Project. J Clin Psychiatry 60: 284–291.

Rush AJ, Trivedi MH, Ibrahim HM, Carmody TJ, Arnow B, Klein DN et al (2003b). The 16-item Quick Inventory of Depressive Symptomatology (QIDS) Clinician Rating (QIDS-C) and Self-Report (QIDS-SR): a psychometric evaluation in patients with chronic major depression. Biol Psychiatry 54: 573–583.

Shrout PE, Fleiss JL (1979). Intraclass correlations: uses in assessing rater reliability. Psychol Bull 86: 420–428.

Snaith P (1993). What do depression rating scales measure? Br J Psychiatry 163: 293–298.

Stallone F, Mendlewicz J, Fieve R (1975). Double-blind procedure: an assessment in a study of lithium prophylaxis. Psychol Med 5: 78–82.

Surís A, Kashner TM, Gillaspy Jr JA, Biggs M, Rush AJ (2001). Validation of the Inventory of Depressive Symptomatology (IDS) in cocaine dependent inmates. J Offender Rehab 32: 15–30.

Tondo L, Burrai C, Scamonatti L, Weissenburger JE, Rush AJ (1988). A comparison between clinician-rated and self-reported depressive symptoms in Italian psychiatric patients. Neuropsychobiology 19: 1–5.

Trivedi MH, Rush AJ, Crismon ML, Kashner TM, Toprac MG, Carmody TJ et al (2004a). The Texas Medication Algorithm Project (TMAP): clinical results for patients with major depressive disorder. Arch Gen Psychiatry 61: 669–680.

Trivedi MH, Rush AJ, Ibrahim HM, Carmody TJ, Biggs MM, Suppes T et al (2004b). The Inventory of Depressive Symptomatology, Clinician Rating (IDS-C) and Self-Report (IDS-SR), and the Quick Inventory of Depressive Symptomatology, Clinician Rating (QIDS-C) and Self-Report (QIDS-SR) in public sector patients with mood disorders, a psychometric evaluation. Psychol Med 34: 73–82.

Trivedi MH, Rush AJ, Pan J-Y, Carmody TJ (2001). Which depressed patients respond to nefazodone and when? J Clin Psychiatry 62: 158–163.

Yonkers KA, Samson J (2000). Mood disorders measures. In: American Psychiatric Association Task Force for the Handbook of Psychiatric Measures (ed). Handbook of Psychiatric Measures. American Psychiatric Association: Washington, DC. pp 515–548.

Zung WWK (1965). A self-rating depression scale. Arch Gen Psychiatry 12: 63–70.

Zung WWK (1986). Zung Self-Rating Depression Scale and depression status inventory. In: Sartorius N, Ban TA (eds). Assessment of Depression. Springer: Berlin. pp 211–231.

Acknowledgements

This research was supported in part by grants from Bristol-Myers Squibb Company to the 12 participating sites, by NIMH Grant #MH68851, and by the Betty Jo Hay Distinguished Chair, the Rosewood Corporation Chair in Biomedical Science, and by the Sara E and Charles M Seay Center for Basic Research in Psychiatry. We thank Fast Word Inc., Dallas, Texas for secretarial assistance in preparation of this manuscript.

Cite this article

Rush, A., Trivedi, M., Carmody, T. et al. Self-Reported Depressive Symptom Measures: Sensitivity to Detecting Change in a Randomized, Controlled Trial of Chronically Depressed, Nonpsychotic Outpatients. Neuropsychopharmacol 30, 405–416 (2005). https://doi.org/10.1038/sj.npp.1300614

DOI: https://doi.org/10.1038/sj.npp.1300614
