Abstract

The resolution of the Maxwell’s demon paradox linked thermodynamics with information theory through the information erasure principle. By considering a demon endowed with a Turing machine consisting of a memory tape and a processor, we attempt to explore the link towards the foundations of statistical mechanics and to derive results therein in an operational manner. Here, as an example, we present a derivation of the Boltzmann distribution in equilibrium without hypothesizing the principle of maximum entropy. Further, since the model can in principle be applied to non-equilibrium processes, we demonstrate the dissipation-fluctuation relation to show the possibility in this direction.


Introduction

Statistical mechanics has been developed to describe the behavior of systems with a large number of microscopic degrees of freedom in a way consistent with thermodynamics1. While it is no doubt the best theory we have today to explain the dynamics of such systems, its foundations are not as solid as they may appear. In particular, the principle of equal a priori probabilities, or the ergodicity of the system, lacks a clear physical rationale, which has led to the coexistence of various approaches on which the theory is based2,3,4,5. The history of each school can be found in, e.g., ref. 6 and in the references of a more recent research paper7. This situation is also uncomfortable from the standpoint that physical laws should be constructed on physical operations, even in a thought experiment, as in Newtonian mechanics, electromagnetism and the theory of special relativity.

Thermodynamics, on the other hand, is constructed upon firmly established empirical and operational evidence on macroscopic objects8. Further, it is believed to explain a variety of physical phenomena, regardless of the details of the system constituents. Thus we take the universality and robustness of thermodynamics as a guiding principle in our attempt to lay the foundations of statistical mechanics9,10.

Our motivation is to describe physics in terms of operations, i.e., under the concept of operationalism11,12,13,14. In this respect, bridging thermodynamics and statistical mechanics requires the notion of probability, and it should be introduced through operations. Fortunately, from the viewpoint of frequentism15, probabilities can be defined as the limit of relative frequencies of events in a large number of trials or operations. Standard information theory16,17 then fits naturally into an argument based on operations, since the amount of information, such as the Shannon entropy18, is defined through probabilities.

Moreover, information processing can also be seen as a physical operation, since once information is encoded in a physical state, any computational manipulation is realized as an operation on that state19,20. In this way, we can construct an operational scenario that combines thermodynamics with the notion of probability via information21.

As a concrete example, here we consider the derivation of the Boltzmann distribution in the canonical ensemble. Perhaps its most notable derivation using the concept of information (or entropy) is the one by Jaynes22, who proposed the principle of maximum entropy (PME). Jaynes identified the equilibrium as the state that maximizes the Shannon entropy with respect to the probability of each microscopic configuration under the constraint on the total energy.

While Jaynes’ approach has been very successful, the PME is essentially based on the principle of equal a priori probabilities (the Bayesian view of probability). This means that, unlike in frequentism, the a priori probabilities underlying the PME do not involve any operations.

More recent work that may be relevant is the formulation of the canonical ensemble in the language of quantum mechanics23,24. These works showed that the state ρ of a small system is approximately equal to the canonical state exp(−H/kBT) (up to normalization) as a result of entanglement between the system and its environment, provided the interaction between them is weak. Here, H, kB and T are the Hamiltonian of the system, the Boltzmann constant and the temperature of the environment, respectively. Their results are elegant in their own right; however, they assume a priori equiprobability, and it is still unclear whether quantum entanglement is requisite for the foundations of statistical mechanics.

In this paper, we derive the Boltzmann distribution for the canonical ensemble in an operational manner, i.e., by constructing an operation-based scenario with which we define a function to discuss equilibrium. This approach is useful to clarify the role of information, albeit implicit, in what we already regard as common sense in physics.

A key ingredient in our work that brings the notion of information into physics is information processing, or more specifically, information erasure. The physics of information erasure clarified the link between thermodynamic and information-theoretic entropies25,26,27,28,29,30,31, and it played a central role in resolving the paradox of Maxwell’s demon. The erasure principle states that erasing one bit of information (in the demon’s memory) requires a work consumption of at least kBT ln 2, where kB is the Boltzmann constant and T is the temperature of the heat bath with which the memory system is in contact. Incidentally, despite the extremely small value of kB ln 2, which is about 9.6 × 10⁻²⁴ J/K, strong experimental evidence for the information erasure principle has recently been reported32,33,34,35. If the information content in an N-bit string is NH(p) < N, where H(p) = −p log2 p − (1 − p) log2(1 − p) is the Shannon entropy of a bit that takes the value 1 with probability p, then the minimum work for erasure becomes NkBTH(p) ln 2, as shown in ref. 21. This is because optimal data compression shortens the string from N to NH(p) bits, in which 0 and 1 appear with equal probability, and erasing these NH(p) bits costs NkBTH(p) ln 2 of work.
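The erasure bound quoted above is easy to evaluate numerically. The sketch below is our own illustration, not part of the authors' model: it computes kB ln 2 from the exact SI value of kB and the minimum work NkBTH(p) ln 2 for erasing a compressed N-bit string. The helper names (`binary_entropy`, `min_erasure_work`) and the 300 K temperature in the usage lines are hypothetical choices.

```python
import math

# Exact SI value of the Boltzmann constant (J/K), fixed by the 2019 SI redefinition.
K_B = 1.380649e-23


def binary_entropy(p: float) -> float:
    """Shannon entropy H(p), in bits, of a bit that equals 1 with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def min_erasure_work(n_bits: int, p: float, temperature: float) -> float:
    """Lower bound N k_B T ln2 H(p), in joules, for erasing an N-bit string.

    Assumes optimal compression to N*H(p) unbiased bits, each erased at k_B T ln 2.
    """
    return n_bits * K_B * temperature * math.log(2) * binary_entropy(p)


if __name__ == "__main__":
    print(f"k_B ln 2 = {K_B * math.log(2):.3e} J/K")
    print(f"H(0.1)   = {binary_entropy(0.1):.4f} bits")
    # Hypothetical example: 10^6 bits with p = 0.1 at an assumed 300 K.
    print(f"W_min    = {min_erasure_work(10**6, 0.1, 300.0):.3e} J")
```

With p = 1/2 the bound reduces to NkBT ln 2, since every bit already carries one full bit of information; for p = 0.1 it drops to roughly 0.47 NkBT ln 2.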


Here, the demon plays the role of a symbolic entity that carries out operations, as we present below. Also, because the definition of equilibrium is independent of operations, our scenario could potentially be applied to nonequilibrium statistical mechanics, as we briefly describe, taking the fluctuation-dissipation theorem36 as an example.

Results

Let us clarify first what we mean by “Maxwell’s demon”, as sometimes this can be a source of confusion. We basically follow the original idea by Maxwell37, although our demon does not intend to violate the second law of thermodynamics38,39,40,41,42.

In this paper, the demon is an entity that can measure and change the energy levels of particles and manipulate/process information encoded in memory registers (cells). As will become clearer below, the particles have only two distinct energy levels, and this is what the demon measures and handles. The demon can of course access the heat bath, and can thus extract energy from it and discard energy to it via appropriate tools, complying with the laws of thermodynamics.

The memory is embedded on a long tape, as in the Turing machine, which is an abstract but common model for information processing. The tape can also be used as a working space for computation, if necessary.

We note that the demon should be able to work autonomously once the protocol and algorithm for its task are given. The phrase “autonomous system” may refer to a system consisting of mechanical parts designed to work on its own (without energy supply or active control from outside), e.g., the Szilard-engine-type machine presented in ref. 43. For our purpose, however, it is sufficient to consider a system that proceeds deterministically in response to input from outside, complying with physical laws. Naturally, in order to work independently, it should not be fed any extra information or energy as a whole.

So, the name “demon” has merely a symbolic meaning here; it can be replaced with a machine that is capable of storing and processing information and manipulating the particle states. Although such a machine might be designed with some inspiration from an example in ref. 43, devising it in detail is beyond our scope and is left for future work.

Thermo-Turing model

The primary components of our model are a set P of N particles with two energy levels E0 and E1(>E0) and a long tape M, which represents the demon’s memory and contains a sequence of N memory cells. We let Φ0, Φ1 and ε denote the two (ground and excited) states of the particles and the energy gap E1 − E0, respectively, and assume that each particle is numbered so that it corresponds to a memory cell. The memory tape M can be thought of as part of the demon, and it is very similar to the tape we typically consider for a Turing machine. Each memory cell can store one bit of information, either 0 or 1, and it can be modelled as the Szilard engine44, i.e., a one-molecule gas with a partition at the center of a cylinder. We call the mechanism comprising M and the demon a “thermo-Turing model” in the following discussion.

In the context of (thermodynamic and algorithmic) entropy from the operational point of view, Zurek considered a model of a demon with a Turing machine in ref. 28. Here, we combine the notions of information processing à la Turing and of the thermodynamics of Maxwell’s demon to step into the field of statistical mechanics.

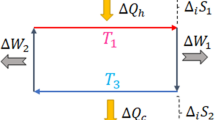

In our thought experiment, the interaction between P and the heat bath is mediated by the demon (or the thermo-Turing machine). The rough idea is as follows. The interaction with the heat bath causes noise on P and an energy change in it. The degree of noise depends on the bath temperature T, but we represent it only by the probability p of a state flip. Equilibrium is defined as the state in which the energy change in P is balanced with the energy consumed by subsequent manipulations of the memory tape M at T. Thus, the temperature T comes into the discussion explicitly only through the demon’s actions on P. Note that it is legitimate to assume that M is designed to make the stored information insensitive to thermal fluctuation. This picture (of having a direct effect of T on M) may appear strange from the viewpoint of the conventional deductive approach. However, this scenario allows us to use the demon as the subject of physical ‘operations’ to bring thermodynamic notions into the discussion. The elements of the thermo-Turing model and the basic operations therein are depicted in Fig. 1.

Figure 1 Elements of the thermo-Turing model.

Two basic operations on the memory cells, encoding and erasure, are depicted on the left. Each memory cell is modeled with a single-molecule gas contained in a cylinder. Encoding is carried out either by slowly displacing the region of volume V/2 in which the molecule is kept, which consumes no work, or simply by rotating the cylinder by 180 degrees when the value is one. Information erasure requires a work consumption of kBT ln 2 ⋅ H(p) per cell, where H(p) is the amount of information (in bits) stored per cell of the tape. The two-level particle system is sketched on the right. Energy transfer to the particles can be done with a work reservoir and an unspecified mechanism, or with a fictitious Carnot engine. All these operations on the memory cells and the particles are controlled by the demon (not shown).

We let the memory tape M reflect the state of the particles in P. Suppose that a fraction p of the N particles are in the excited state, i.e., pN particles are in Φ1, while (1 − p)N are in Φ0. Let F be the amount of work that the entire system (P + M) can potentially exert towards the outside when we bring it to the state in which all particles are in Φ0 and all memory cells store ‘0’. That is, we take the state with all particles in Φ0 and all cells in ‘0’ as the origin for the quantity F.

The energy stored in P contributes to F positively, and its amount is pNε. On the other hand, in order to erase all information on the tape, we need to consume some energy Wer. As a result, we have

$$F = pN\varepsilon - W_{\rm er}. \tag{1}$$

Since we are interested in the optimal (largest) value of F for a given p in order to characterize the state uniquely, Wer needs to be minimized. Thus, we have Wer = NH(p)kBT ln 2, as shown in ref. 21, which leads to

$$F = pN\varepsilon - N H(p)\, k_B T \ln 2. \tag{2}$$

Equation (2) resembles the Helmholtz free energy, F = U − TS; however, the conceptual difference between them should be emphasized. The point is to present an operational scenario for statistical mechanics by identifying the thermodynamic entropy with the information entropy.

With the definition of F, which is computable for any physical state, we now define equilibrium in terms of F. We say that the state is in equilibrium when its F is stationary, i.e.,

$$\Delta F = 0 \tag{3}$$

against small noises on the particles. We consider the NOT (flipping) operation on a particle as an elementary process of the noise; thus, Eq. (3) is a condition against a small number of random NOT operations on P. This definition of equilibrium is associated with the stationarity of the principal system and the memory tape, rather than with the maximal likelihood of the state as in Jaynes’ argument22. Our definition fits the operational point of view better, because the quantity F can be computed by considering physical operations. The operational process (by the demon) is presented below, right after the derivation of the Boltzmann distribution. A comparison with Jaynes’ work is given in the Supplementary Material.

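Let us compute ΔF for a probability change from p to p′:

$$\Delta F = N\varepsilon\,\Delta p - N k_B T \ln 2\,\Delta H(p), \tag{4}$$

where Δp = p′ − p and ΔH(p) = H(p′) − H(p). Since the number of errors is small (Δp ≪ 1), ΔH(p) ≈ (dH(p)/dp)Δp ≡ H′(p)Δp. The equilibrium condition, ΔF = 0, gives

$$\varepsilon = k_B T \ln 2\, H'(p). \tag{5}$$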

Suppose that the probability change, p → p′, is induced by thermal noise that flips the state of a randomly chosen particle in P between Φ0 and Φ1. Noting that ln 2 ⋅ H′(p) = ln[(1 − p)/p], we see that Eq. (5) reduces to

$$\frac{p}{1-p} = \exp\!\left(-\frac{\varepsilon}{k_B T}\right), \tag{6}$$

which is nothing but the Boltzmann distribution. The generalization of the model to d-level physical systems is presented later in this section.
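As a numerical cross-check of Eq. (5) and the Boltzmann distribution derived above, the sketch below (our own illustration; function names are hypothetical and ε, kBT are in an arbitrary common energy unit) locates the stationary point of F/N = pε − kBT ln 2 H(p) by bisection and compares it with the closed form p = 1/(1 + exp(ε/kBT)). It also checks the identity ln 2 ⋅ H′(p) = ln[(1 − p)/p] with a central finite difference.

```python
import math


def binary_entropy(p: float) -> float:
    """Shannon entropy H(p) in bits."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def dF_per_particle(p: float, eps: float, kt: float) -> float:
    """d(F/N)/dp for F = pN*eps - N*kT*ln2*H(p), using ln2*H'(p) = ln((1-p)/p)."""
    return eps - kt * math.log((1 - p) / p)


def stationary_fraction(eps: float, kt: float, tol: float = 1e-12) -> float:
    """Bisection for dF/dp = 0; dF/dp increases monotonically on (0, 1)."""
    lo, hi = 1e-15, 1 - 1e-15
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if dF_per_particle(mid, eps, kt) < 0:
            lo = mid  # root lies to the right of mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def boltzmann_fraction(eps: float, kt: float) -> float:
    """Excited fraction solving p/(1-p) = exp(-eps/kT)."""
    return 1.0 / (1.0 + math.exp(eps / kt))


def ln2_dH_numeric(p: float, h: float = 1e-6) -> float:
    """Central-difference estimate of ln2 * H'(p)."""
    return math.log(2) * (binary_entropy(p + h) - binary_entropy(p - h)) / (2 * h)


if __name__ == "__main__":
    for eps, kt in [(1.0, 1.0), (2.0, 0.5), (0.1, 3.0)]:
        print(eps, kt, stationary_fraction(eps, kt), boltzmann_fraction(eps, kt))
```

Bisection is used only because dF/dp is monotonic in p, which makes the root unique and the search trivially robust.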

A similar analysis of the effect of the Toffoli gate is also insightful; however, it is summarized in the Supplementary Material so that we can focus on the derivation of the Boltzmann distribution here.

Next, we describe the operational scenario that naturally leads to the equilibrium condition, ΔF = 0 with Eq. (4). Figure 2 depicts the process.

- (a) One-to-one correspondence between the data stored in the memory tape M and the state of each particle in P is established. That is, if a particle is in the ground state Φ0, the corresponding memory cell stores ‘0’, and if the particle is in Φ1, the cell stores ‘1’. This correspondence can be made by measuring the particle state and copying the result to the memory, which can be done without energy consumption26,27.

- (b) During some time interval Δt, a NOT (flipping) operation is applied to a few randomly chosen particles. This may be induced by noise or thermal fluctuation, i.e., the interaction between the particles and the heat bath. Since this interaction is not under the demon’s control, he spends zero energy here. Δt can be chosen so that the number of flips is much smaller than N.

- (c) The demon swaps the states of the particles and the memory registers in the tape. The effect of the NOT operations in (b) is now transferred to the tape, while the state of the particles is restored to the one in (a). The energy Nε(p′ − p) = NεΔp is acquired by the demon in changing the particle state, while the SWAP operation for information can be performed without energy consumption, as it keeps the total entropy unchanged. M now has the Shannon entropy H(p′).

- (d) The demon transforms the Shannon entropy of M from H(p′) to H(p). This can be done by the process depicted in Fig. 3, which is explained in detail below. The energy required for this entropy transformation is NkBT ln 2 ⋅ ΔH := NkBT ln 2 (H(p′) − H(p)).

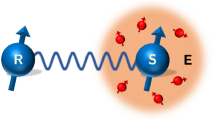

Figure 3 State transformation to change the probability distribution from p′ to p.

All memory cells are reset to ‘0’, consuming NkBT ln 2 ⋅ H(p′) of work (from (i) to (ii)). In (iii), the one-molecule gases in NH(p) cells are expanded isothermally, giving the demon NkBT ln 2 ⋅ H(p) of work. The ‘*’ sign in (iii) represents a randomized memory state with no physical distinction between 0 and 1; the molecule can move around in the whole configuration space of the cylinder. Since the state in (iii) is the same as the result of compressing an N-bit string containing NH(p) bits of information, data decompression yields a string in which pN bits are ‘1’ and the rest are ‘0’, as in (iv). By permuting the bit string, which costs no energy, a memory tape with NH(p) bits of information and perfect correlation with the particles’ state can be realized.

Figure 2 The virtual process for which we consider the change of F.

(a) Each memory cell is perfectly correlated with the corresponding particle’s state. (b) The interaction between the particles and the heat bath applies a NOT (= flipping) operation to a small number of randomly chosen particles. (c) The demon swaps the information stored in the memory and the particle state, e.g., 0-Φ1 becomes 1-Φ0 and vice versa. (d) Both M and P return to the original state, which is the same as (a).

The resulting state in (d) of the above process is the same as (a), and all steps can be made completely autonomous. That is, no traces of the demon’s actions are left inside M and P or in their surrounding environment; the only possible trace is the amount of energy the environment received. Therefore, for the joint thermo-Turing system M + P to be in equilibrium, i.e., to show no macroscopic change detectable from outside, the energy transfer between the joint system and the environment should be zero. Indeed, this condition can be written as

$$N\varepsilon\,\Delta p - N k_B T \ln 2\,\bigl(H(p') - H(p)\bigr) = 0, \tag{7}$$

which is ΔF = 0.
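The energy bookkeeping of steps (a) to (d) can be mimicked with a toy ledger. In this sketch (our illustration, not the authors' protocol), the NOT operations in (b) hit uniformly random particles, the demon collects ε per net de-excitation in (c), and step (d) is assumed to run at its minimum cost NkBT ln 2 (H(p′) − H(p)). Energies are in units of kBT, and `cycle_net_gain` and its parameters are hypothetical names. At the Boltzmann fraction the net gain is only second order in the number of flips, whereas away from it the net gain tracks ε times the net number of excitations, which is first order.

```python
import math
import random


def binary_entropy(p: float) -> float:
    """Shannon entropy H(p) in bits."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def cycle_net_gain(n, excited0, n_flips, eps_over_kt, rng):
    """Return (delta, net) for one pass through steps (a)-(d).

    delta: net change in the number of excited particles caused by the noise in (b).
    net:   energy gained by the demon in (c) minus the minimum cost of (d),
           both in units of k_B T.
    """
    excited = excited0
    for _ in range(n_flips):            # (b): NOT on a uniformly chosen particle
        if rng.random() < excited / n:
            excited -= 1                # an excited particle was flipped down
        else:
            excited += 1                # a ground-state particle was flipped up
    delta = excited - excited0
    p0, p1 = excited0 / n, excited / n
    gain_c = eps_over_kt * delta        # (c): SWAP restores P, demon collects N*eps*dp
    cost_d = n * math.log(2) * (binary_entropy(p1) - binary_entropy(p0))  # (d)
    return delta, gain_c - cost_d


if __name__ == "__main__":
    rng = random.Random(1)
    n, eps = 100_000, 1.0
    p_eq = 1.0 / (1.0 + math.exp(eps))
    for label, excited0 in [("Boltzmann", round(n * p_eq)), ("p = 0.5", n // 2)]:
        results = [cycle_net_gain(n, excited0, 20, eps, rng) for _ in range(5)]
        print(label, [(d, round(w, 4)) for d, w in results])
```

Tracking only the count of excited particles is enough here, because both the energy and the Shannon entropy of M depend on the configuration only through that count.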

Figure 3 shows the process that changes the state of the memory tape so that its entropy is transformed from H(p′) to H(p). Incidentally, this process can be seen as a special (classical) case of the one in Fig. 2 of ref. 45, which presented a thermodynamical transformation of a quantum state from σ to ρ. Here, we discuss Fig. 3 on its own.

From Fig. 3(i) to (ii), the demon erases all the information stored in the tape, consuming at least NkBT ln 2 ⋅ H(p′) of work.

In Fig. 3(iii), the demon extracts NkBT ln 2 ⋅ H(p) of work from the heat bath by letting the gas in NH(p) cells expand isothermally, which is possible since each memory cell can be modelled by a one-molecule gas. Note that the demon always knows the values of p and p′, since measurement can be done for free. After a partition is inserted at the center of each cell, there are NH(p)/2 cells that represent ‘0’ and another NH(p)/2 cells that represent ‘1’ (with negligible fluctuation when N is large enough).

Then the (Shannon) data decompression18 is performed on all N cells to obtain pN cells in ‘1’ and (1 − p)N cells in ‘0’, as in Fig. 3(iv). Since the number of cells in ‘1’ is now the same as the number of particles in the excited state Φ1 in Fig. 2(d), the demon can sort the memory cells to establish a one-to-one correspondence with the particle states. The sorting can be done isentropically, and thus autonomously, since it is achieved simply by applying an appropriate permutation. Alternatively, a controlled-NOT operation may be applied between M and P, with a memory cell as the control bit and the corresponding particle as the target bit. Because the number of particles in Φ1 is the same at Steps (iv) and (v), no extra energy is necessary as a whole.
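The decompression step relies on the fact that NH(p) random bits suffice to index every N-bit string containing pN ones, i.e., log2 C(N, pN) ≈ NH(p) for large N. The sketch below (our illustration; the function names and the sample values of N, p and p′ are hypothetical) checks this counting statement with `math.lgamma` and tallies the work ledger of Fig. 3 in units of kBT: erasure from (i) to (ii), isothermal expansion in (iii), and decompression plus permutation in (iv), which are assumed to be reversible and hence free.

```python
import math


def binary_entropy(p: float) -> float:
    """Shannon entropy H(p) in bits."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def log2_binomial(n: int, k: int) -> float:
    """log2 of C(n, k), computed with lgamma to avoid huge integers."""
    return (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)) / math.log(2)


def fig3_ledger(n: int, p_prime: float, p: float) -> dict:
    """Work (units of k_B T) spent by the demon in each step of Fig. 3; negative = gained."""
    ln2 = math.log(2)
    ledger = {
        "erase (i)->(ii)": n * ln2 * binary_entropy(p_prime),
        "expand NH(p) cells (iii)": -n * ln2 * binary_entropy(p),
        "decompress + permute (iv)": 0.0,  # assumed reversible, hence free
    }
    ledger["total"] = sum(ledger.values())
    return ledger


if __name__ == "__main__":
    n = 1_000_000
    print(log2_binomial(n, n // 10) / n, binary_entropy(0.1))
    for step, work in fig3_ledger(1000, 0.3, 0.25).items():
        print(f"{step:28s} {work:+.3f}")
```

The per-bit gap between log2 C(N, pN)/N and H(p) shrinks like (log N)/N, which is why the fluctuation in the number of cells is negligible for large N.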

In summary, we have devised a physical scenario with which we can derive the Boltzmann equilibrium distribution of statistical mechanics in an operational manner. The operations are performed on the particles and on virtual Turing-machine-type memory cells. We have symbolically used a Maxwell’s-demon-type intelligent being as the principal operator, but all actions are autonomous and leave no traces observable from outside; thus, the demonic actions can be programmed into the Turing machine per se.

The erasure principle, stemming from the paradox of Maxwell’s demon, bridges thermodynamics and statistical mechanics via the notion of probability in information theory. It should be emphasized that we did not base our argument on the equiprobability principle. That is, we did not rely on standard microcanonical statistical mechanics, in which the entropy S is given by the Boltzmann formula S = kB log Ω(E), with Ω(E) the number of states at a given energy E.

Also, the above model can be used to justify the equivalence between thermodynamic and information-theoretic entropies, which was discussed in our previous work21 in a different context. A brief argument along these lines is given in the Supplementary Material.

Generalization to d-level system

The above argument to derive the Boltzmann distribution can be generalized to systems with an arbitrary number of levels. That is, each cell of the tape can store d values from 0 to d − 1, and there are d possible states for the particles, Φ0, Φ1, ..., Φd−1, whose energy levels are E0, E1, ..., Ed−1, respectively. Let pi be the fraction of particles in the state Φi. Suppose that the k-th cell of the tape stores the value i when the k-th particle is in Φi. This state can be prepared simply by copying the measurement result of the particle state.

Instead of the noise-induced random NOT studied above, let us consider random SWAP operations that change the state of a particle. Let SWAPij denote a SWAP between two states Φi and Φj, namely, SWAPij maps the state Φi of a randomly chosen particle to Φj and vice versa. Note that the NOT operation between two levels is effectively the same as the SWAP between them, so the process for the thermo-Turing system is basically the same as the one described above (and Fig. 2) with the replacement of NOT with SWAP.

Suppose that a SWAPij has acted on one of the particles. The SWAPij changes its state if it is in either Φi or Φj; otherwise nothing happens. The probability of such a ‘successful’ SWAPij is pi + pj. After the demon swaps the information between M and P, the memory tape M contains the information after the SWAPij, and the particles P return to the state before the SWAPij (as in Step (c) above). Given a successful SWAPij, the particle was in Φi with probability pi/(pi + pj) and in Φj with probability pj/(pi + pj); thus, the energy change in P due to this operation is (Ej − Ei)(pi − pj)/(pi + pj) on average.

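The change in the erasure entropy times temperature after the single SWAPij and the swap between M and P is

$$k_B T \ln 2\,\Delta H_{ij} = k_B T \ln 2\,\frac{p_i - p_j}{p_i + p_j}\,\bigl[\eta'(p_j) - \eta'(p_i)\bigr] = k_B T\,\frac{p_i - p_j}{p_i + p_j}\,\ln\frac{p_i}{p_j}, \tag{8}$$

where

$$\eta(x) = -x \log_2 x, \qquad H(\{p_k\}) = \sum_{k=0}^{d-1} \eta(p_k). \tag{9}$$

Making the change in F equal to zero as in the case of bits and two-level particles, we arrive at the desired relation:

$$E_j - E_i = k_B T \ln\frac{p_i}{p_j}, \quad \text{i.e.,} \quad \frac{p_j}{p_i} = \exp\!\left(-\frac{E_j - E_i}{k_B T}\right). \tag{10}$$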

This relation holds for any pair of i and j; hence pi ∝ exp(−Ei/kBT) for all i.
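For the d-level case, the balance can be checked directly with finite particle numbers. In the sketch below (our own; the names, energies and particle numbers are hypothetical), the mean energy change of one successful SWAPij is computed as in the text, while the change of the erasure entropy NH is evaluated by literally moving one particle between the two levels in either direction, weighted by pi/(pi + pj) and pj/(pi + pj), and then multiplied by kBT ln 2. For Boltzmann occupations the two agree for every pair up to corrections of order 1/N; for other occupations they do not.

```python
import math
from itertools import combinations


def shannon_bits(counts):
    """Shannon entropy (bits per cell) of the empirical distribution counts/N."""
    n = sum(counts)
    return -sum(c / n * math.log2(c / n) for c in counts if c > 0)


def swap_balance(counts, energies, kt, i, j):
    """Return (mean energy change, k_B T ln2 * mean change of N*H) for one successful SWAP_ij."""
    n = sum(counts)
    pi, pj = counts[i] / n, counts[j] / n
    w_i, w_j = pi / (pi + pj), pj / (pi + pj)  # particle was in Phi_i / Phi_j
    d_energy = (energies[j] - energies[i]) * (w_i - w_j)
    base = n * shannon_bits(counts)

    def entropy_change(src, dst):
        moved = list(counts)
        moved[src] -= 1
        moved[dst] += 1
        return n * shannon_bits(moved) - base

    d_entropy = w_i * entropy_change(i, j) + w_j * entropy_change(j, i)
    return d_energy, kt * math.log(2) * d_entropy


def boltzmann_counts(n, energies, kt):
    """Integer occupations closest to n * exp(-E/kT) / Z."""
    weights = [math.exp(-e / kt) for e in energies]
    z = sum(weights)
    return [round(n * w / z) for w in weights]


if __name__ == "__main__":
    energies, kt = [0.0, 0.5, 1.3, 2.0], 1.0
    counts = boltzmann_counts(1_000_000, energies, kt)
    for i, j in combinations(range(len(energies)), 2):
        de, ds = swap_balance(counts, energies, kt, i, j)
        print(i, j, round(de, 5), round(ds, 5))
```

Computing the entropy change by an explicit one-particle move, instead of a derivative, keeps the check independent of any closed-form expression.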

Discussion

In principle, our thermo-Turing model can be applied to generic non-equilibrium processes, as long as we can assume that the demon’s operations are sufficiently fast compared with the dynamics. Here, we take a modest step in this direction, choosing a particular model that exhibits a characteristic feature of the fluctuation-dissipation theorem.

Suppose that a spatially fluctuating external field, which acts as a perturbation to the energy levels, is applied to drive the system P away from macroscopic equilibrium. This field causes a small change to the energy gap of the particle at the n-th site, making it ε + un, and we assume 〈un〉 = 0 and |un| ≪ kBT for simplicity. Such a change may be seen as a result of the Stark or the Zeeman effect, but we do not need to specify the origin of the shift for our discussion.

In order to discuss statistical quantities for each particle, each site n = 1, 2, …, N should be regarded as a block consisting of sufficiently many members. For the n-th block, due to the energy shift un, the local equilibrium distribution becomes

$$\frac{p^{\rm leq}_n}{1 - p^{\rm leq}_n} = \exp\!\left(-\frac{\varepsilon + u_n}{k_B T}\right). \tag{11}$$

Under this distribution, the operations by the demon within the n-th block balance with the external field. The index ‘leq’ for p in Eq. (11) stands for local equilibrium.
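Equation (11) can be evaluated block by block. The sketch below (ours; ε, kBT and the sample shifts are hypothetical values in a common energy unit) computes the local-equilibrium excited fraction from the local Boltzmann ratio and compares its deviation from peq with the first-order expansion −peq(1 − peq)un/kBT. That coefficient is simply the derivative of Eq. (11) with respect to un, added here only to show the regime in which a linear approximation in un is adequate.

```python
import math


def p_local_eq(eps: float, u: float, kt: float) -> float:
    """Excited fraction in a block whose gap is shifted to eps + u (local Boltzmann ratio)."""
    return 1.0 / (1.0 + math.exp((eps + u) / kt))


def linear_shift(eps: float, u: float, kt: float) -> float:
    """First-order change of the excited fraction: dp/du = -p(1-p)/kT at u = 0."""
    p = p_local_eq(eps, 0.0, kt)
    return -p * (1.0 - p) * u / kt


if __name__ == "__main__":
    eps, kt = 1.0, 1.0
    p_eq = p_local_eq(eps, 0.0, kt)
    for u in (-0.1, -0.01, 0.01, 0.1):
        exact = p_local_eq(eps, u, kt) - p_eq
        print(f"u={u:+.2f}  exact={exact:+.6f}  linear={linear_shift(eps, u, kt):+.6f}")
```

The residual between the two columns is quadratic in un, consistent with treating |un| as small compared with kBT.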

What we are interested in is the amount of dissipation, given the fluctuation of {un}, 〈un²〉. Imagine that the demon now looks at all the blocks as a whole and attempts to make all particles return to the same equilibrium state, i.e., un = 0 for all blocks. This is done by changing the energy state of each block and erasing information about the spatial variation of the energy shifts. The work that needs to be done by the demon in order to change the distribution pleq to that of equilibrium, peq, is

where  ,  and  and  are evaluated at p = peq. Also, we have used  in the fourth equality and the equilibrium condition, Eq. (5), in the fifth equality. From Eq. (11), we have

therefore,

Equation (13) means that the response of the system to the external field results in positive work by the demon, 〈W〉 > 0, which is dissipated into the heat bath. Further, this work is proportional to the fluctuation of the external field. This is a simple expression of the dissipation-fluctuation theorem in the linear approximation in the fluctuating potential un. Also, Eq. (13) is interesting in that our model explicitly takes into account the cost of ‘forgetting the past’, which is simply neglected in the standard treatment of Markovian processes.

Readers familiar with the standard dissipation-fluctuation theorem36 may be more comfortable with fluctuations of the external potential in time rather than in space. In that case, one can reorder the site numbers according to the order of occurrence of un. Then n can be interpreted as time, and the average 〈⋅〉 can be understood as a long-time average.

Additional Information

How to cite this article: Hosoya, A. et al. Operational derivation of Boltzmann distribution with Maxwell's demon model. Sci. Rep. 5, 17011; doi: 10.1038/srep17011 (2015).

References

Gibbs, J. W. Elementary Principles in Statistical Mechanics. (Cambridge University Press, New York, 1902).

Khinchin, A. I. Mathematical Foundations of Statistical Mechanics. (Dover, New York, 1949).

Tolman, R. C. The Principles of Statistical Mechanics. (Dover, New York, 1979).

Landau, L. D. & Lifshitz, E. M. Statistical Physics. 3rd Ed., Vol. 5 (Butterworth-Heinemann, Oxford, 1980).

ter Haar, D. Foundations of Statistical Mechanics. Rev. Mod. Phys. 27, 289 (1955).

Uffink, J. Compendium to the foundations of classical statistical physics in Handbook for the Philosophy of Physics, Butterfield, J. & Earman, J. (eds) (Elsevier, Amsterdam, 2007), pp. 924–1074.

Reimann, P. Foundation of Statistical Mechanics under Experimentally Realistic Conditions. Phys. Rev. Lett. 101, 190403 (2008).

Lieb, E. H. & Yngvason, J. The physics and mathematics of the second law of thermodynamics. Phys. Rep. 310, 1 (1999).

Hill, T. L. An Introduction to Statistical Thermodynamics (Dover, New York, 2012), originally published in Addison-Wesley (1960).

Chandler, D. Introduction to Modern Statistical Mechanics. (Oxford University Press, Oxford, 1987).

Bridgman, P. W. The Logic of Modern Physics. (Macmillan, New York, 1927).

Brillouin, L. Maxwell’s Demon Cannot Operate: Information and Entropy. I. J. Appl. Phys. 22, 334 (1951).

Brillouin, L. Science and Information Theory. (Dover, Mineola, 1956).

Shikano, Y. These from Bits, in It From Bit or Bit From It, The Frontiers Collection, 113 (2015).

Kendall, M. G. On the Reconciliation of Theories of Probability. Biometrika 36(1/2), 101 (1949).

Cover, T. M. & Thomas, J. A. Elements of information theory, 2nd Edition (Wiley-Interscience, New York, 2006).

Ash, R. B. Information Theory. (Interscience, New York, 1965).

Shannon, C. E. A Mathematical Theory of Communication. Bell System Technical Journal 27, 379, 623 (1948).

Turing, A. M. On Computable Numbers, with an Application to the Entscheidungsproblem. Proc. London Math. Soc. 42, 230 (1937).

Turing, A. M. On Computable Numbers, with an Application to the Entscheidungsproblem: A correction. Proc. London Math. Soc. 43, 544 (1938).

Hosoya, A., Maruyama, K. & Shikano, Y. Maxwell’s demon and data compression. Phys. Rev. E 84, 061117 (2011).

Jaynes, E. T. Information Theory and Statistical Mechanics. Phys. Rev. 106, 620 (1957).

Goldstein, S., Lebowitz, J. L., Tumulka, R. & Zanghì, N. Canonical Typicality. Phys. Rev. Lett. 96, 050403 (2006).

Popescu, S., Short, A. J. & Winter, A. Entanglement and the foundations of statistical mechanics. Nature Physics 2, 754 (2006).

Landauer, R. Irreversibility and Heat Generation in the Computing Process. IBM J. Res. Dev. 5, 183 (1961).

Bennett, C. H. The Thermodynamics of Computation - A Review. Int. J. Theor. Phys. 21, 905 (1982).

Bennett, C. H. Logical Reversibility of Computation. IBM J. Res. Dev. 17, 525 (1973).

Zurek, W. H. Algorithmic randomness and physical entropy. Phys. Rev. A 40, 4731 (1989).

Shizume, K. Heat generation required by information erasure. Phys. Rev. E 52, 3495 (1995).

Maruyama, K., Nori, F. & Vedral, V. Colloquium: The physics of Maxwell’s demon and information. Rev. Mod. Phys. 81, 1 (2009).

Mandal, D. & Jarzynski, C. Work and information processing in a solvable model of Maxwell’s demon. Proc. Natl. Acad. Sci. USA 109, 11641 (2012).

Bérut, A. et al. Experimental verification of Landauer’s principle linking information and thermodynamics. Nature 483, 187 (2012).

Jun, Y., Gavrilov, M. & Bechhoefer, J. High-Precision Test of Landauer’s Principle in a Feedback Trap. Phys. Rev. Lett. 113, 190601 (2014).

Roldán, É., Martínez, I. A., Parrondo, J. M. R. & Petrov, D. Universal features in the energetics of symmetry breaking. Nature Physics 10, 457 (2014).

Koski, J. V., Maisi, V. F., Pekola, J. P. & Averin, D. V. Experimental realization of a Szilard engine with a single electron. Proc. Natl. Acad. Sci. USA 111, 13786 (2014).

Kubo, R. The fluctuation-dissipation theorem. Rep. Prog. Phys. 29, 255 (1966).

Maxwell, J. C. Letter to P. G. Tait, 11 December 1867. p. 213 in Life and Scientific Work of Peter Guthrie Tait, C. G. Knott (ed.) (Cambridge University Press, London, 1911).

Earman, J. & Norton, J. D. Exorcist XIV: The Wrath of Maxwell’s Demon. Part I. From Maxwell to Szilard. Stud. Hist. Phil. Mod. Phys. B 29, 435 (1998).

Earman, J. & Norton, J. D. Exorcist XIV: The Wrath of Maxwell’s Demon. Part II. From Szilard to Landauer and Beyond. Stud. Hist. Phil. Mod. Phys. B 30, 1 (1999).

Bub, J. Maxwell’s Demon and the Thermodynamics of Computation. Stud. Hist. Phil. Mod. Phys. B 32, 569 (2001).

Leff, H. S. & Rex, A. F. Maxwell’s Demon 2. (IOP, Bristol, 2003).

Parrondo, J. M. R., Horowitz, J. M. & Sagawa, T. Thermodynamics of information. Nature Physics 11, 131 (2015).

Lu, Z., Mandal, D. & Jarzynski, C. Engineering Maxwell’s demon. Phys. Today 67(8), 60 (2014).

Szilard, L. Über die Entropieverminderung in einem thermodynamischen System bei Eingriffen intelligenter Wesen. Z. Phys. 53, 840 (1929).

Maruyama, K., Brukner, C. & Vedral, V. Thermodynamical cost of accessing quantum information. J. Phys. A: Math. Gen. 38, 7175 (2005).

Acknowledgements

The authors acknowledge the Yukawa Institute for Theoretical Physics at Kyoto University on the YITP workshop YITP-W-11-25 on “Hierarchy in Physics through Information – Its Control and Emergence –”. K.M. acknowledges support from the JSPS Kakenhi (C) Grants No. 26400400. This work was partially supported by the NINS Youth Collaborative Project and DAIKO Foundation.

Author information


Contributions

A.H. provided the preliminary idea and results on the thermo-Turing model. K.M. and Y.S. clarified and refined the ideas and results, particularly those that led to Eq. (13). Further, K.M. provided the generalization of the thermo-Turing model. All authors contributed to writing the manuscript.

Ethics declarations

Competing interests

The authors declare no competing financial interests.


Rights and permissions

This work is licensed under a Creative Commons Attribution 4.0 International License. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in the credit line; if the material is not included under the Creative Commons license, users will need to obtain permission from the license holder to reproduce the material. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/

About this article

Cite this article

Hosoya, A., Maruyama, K. & Shikano, Y. Operational derivation of Boltzmann distribution with Maxwell’s demon model. Sci Rep 5, 17011 (2015). https://doi.org/10.1038/srep17011


DOI: https://doi.org/10.1038/srep17011
