Abstract

Cooperativeness is a defining feature of human nature. Theoreticians have suggested several mechanisms to explain this ubiquitous phenomenon, including reciprocity, reputation and punishment, but the problem is still unsolved. Here we show, through experiments conducted with groups of people playing an iterated Prisoner's Dilemma on a dynamic network, that it is reputation that really fosters cooperation. While this mechanism has already been observed in unstructured populations, we find that it acts equally when interactions are given by a network that players can reconfigure dynamically. Furthermore, our observations reveal that memory also drives the network formation process: the longer the record of past actions, the more cooperators assort and the longer their links last. Our analysis demonstrates, for the first time, that reputation can be very well quantified as a weighted mean of the fraction of past cooperative acts and the last action performed. This finding has potential applications in collaborative systems and e-commerce.

Introduction

Human life would be inconceivable without the high levels of cooperation that our species is capable of1,2,3. Our complex society strongly relies on this defining and intrinsic feature of our nature. The study of human cooperation is often framed in the stylized, strict form of a Prisoner's Dilemma, a situation which is vulnerable to exploitation by free riders. It is well established that, in repeated prisoner's dilemma games, the global cooperation level rapidly decays down to values around 10%4,5. In order to explain how this effect is circumvented in social interactions, several mechanisms have been proposed that allow cooperators to assort and avoid free riders. Reciprocity6, reputation7, or punishment8,9 are prototypical. Somewhat less obvious is network reciprocity10, a mechanism that has attracted a lot of interest motivated by the increasing importance of networks in social research. This mechanism enhances the assortment of cooperators by limiting interactions to one's social neighbours (see Ref. 11 for a review). However, recent experiments with human subjects revealed that simply structuring a population is not enough to foster and sustain cooperation, no matter the underlying social network12,13,14,15,16. The reason is that individuals do not base their decision on payoffs17, but behave with their neighbours just as they do in public good games on groups—cooperating conditionally on the number of cooperative acts they receive18 as well as on their own previous action15,16,17,19. This inevitably leads to the decay of cooperation irrespective of the underlying network structure20.

To be more specific, the networks used in the above experiments are static, predefined structures on which individuals are constrained to interact. Similar experiments have been carried out with dynamic networks, where individuals are allowed to change their social ties as they play. The latter mechanism is able to induce and sustain high levels of cooperation21,22,23,24 (note that in Ref. 21 players choose independent actions for every partner, unlike in all other experiments). Dynamic rewiring introduces a mechanism for punishment, namely breaking links with defective partners, and indeed it has been found that the promotion of cooperation depends on the rate at which connections can be modified22,23. However, an important point which seems to have been overlooked is that in all cases participants received, to some extent, information on their opponents' past actions. Therefore, the possibility that it is this information that drives the rise of cooperation cannot be excluded.

Experiments

To address this issue, we conducted 24 experiments involving 243 participants distributed in groups of between 17 and 25 individuals. Initially, participants in any of the experiments were randomly assigned to the nodes of a ring with links to nearest and next-nearest neighbours. Subsequently, they were allowed to change their ties during the experiment. The game consisted of 25 rounds (although this number was unknown to the participants). Each round had two phases. In phase one, each participant played a multi-player Prisoner's Dilemma with all participants she was linked to (neighbours) by choosing one action: cooperate (C) or defect (D). The payoff they obtained was proportional to the number nC of neighbours who chose C: if they cooperated they received 7nC points, whereas if they defected they received 10nC points. These payoffs correspond to the same payoff matrix used in previous experiments with fixed networks15,16 and were chosen for the sake of comparison. In phase two, they were allowed to break any of their current ties and to propose up to five new ones with participants they were not yet linked to (this is the protocol used in Ref. 23). To make their decisions they were informed of a certain number (m = 0, 1, 3, 5) of past actions of every participant in the experiment (henceforth referred to as “memory”). In the case of memory m = 0, nothing identified the participants, not even those they were connected with (i.e., they knew nC but not who among their neighbours cooperated or defected). At the end of phase two, all participants decided whether to accept or reject each received proposal for a new link. Every participant ran two (and only two) consecutive sessions with different values of m. (For further details, see Supplementary Information.)
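The phase-one payoff rule can be written out as a short sketch. The function name `round_payoff` and the list-of-strings encoding of neighbours' actions are illustrative assumptions, not part of the experimental software; only the 7nC and 10nC point values come from the text.

```python
def round_payoff(action: str, neighbour_actions: list[str]) -> int:
    """Points earned in one round: 7*nC when cooperating, 10*nC when defecting.

    nC is the number of neighbours who chose to cooperate ("C").
    """
    if action not in ("C", "D"):
        raise ValueError("action must be 'C' or 'D'")
    n_c = sum(1 for a in neighbour_actions if a == "C")
    return (7 if action == "C" else 10) * n_c


# Hypothetical neighbourhood: three cooperators and one defector.
neighbours = ["C", "C", "D", "C"]
print(round_payoff("C", neighbours))  # 21
print(round_payoff("D", neighbours))  # 30
```

Because a defecting neighbour contributes nothing to nC, a link to a defector yields zero points whichever action the player chooses.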

Our results establish clearly that reputation governs the way individuals change their ties and, as a consequence, cooperation can be sustained, but only when information on others' past actions is available. We have also found that people assign reputation to partners based mainly on their last action and their average cooperation. Thus, the results of this study suggest that, in e-commerce and related contexts, adding information on the last action performed to the usual practice of providing the average reliability as a proxy for reputation could largely enhance trust and transaction efficiency.

Results

The outcome of the experiments run without memory (m = 0) is in stark contrast with that of the experiments run with memory (m > 0) (Figure 1). Without information on the past actions of other players, cooperation decays from about 50% down to around 20% and the average connectivity of players grows quickly toward a saturation value close to 85% of all possible links. When some past actions record is available, the level of cooperation remains roughly constant along the experiment at a value around 50–60% and the connectivity grows more slowly. After a short transient, growth is almost linear (showing no sign of saturation), reaching a final connectivity close to 60%. Moreover, the cases of m = 3 and m = 5 are statistically indistinguishable and the level of cooperation for m = 1 is slightly lower (about 50%) than for m > 1 (about 60%). The connectivity is however very similar except for the short transient, where it remains below that of m > 1.

The level of cooperation is significantly higher when the past actions record of players to whom to connect is available.

(A): Level of cooperation (fraction of cooperative actions) per round, averaged over all experiments for the same treatment (memory m = 0, 1, 3, 5). (B): Average connectivity (ratio of actual links to potential links) per round, averaged over all experiments for the same treatment. Equivalently, fraction of links of the actual graph with respect to the complete graph. In both, (A) and (B), error bars represent plus/minus one standard deviation of a binomial distribution over the size of the treatment concerned and the number of analyzed rounds. (C): Representation of the connectivity graphs resulting from experiments with 25 participants for all four treatments. Node areas are proportional to their degrees at that round. Node colors represent the cooperation level (fraction of cooperative actions) up to that round. (We will show below that level of cooperation is an important part of reputation.) Link widths are proportional to their persistence (number of rounds they have been present). Although represented differently for the sake of consistency, the initial condition is, in all four cases, topologically equivalent to a ring where each node is linked to its first and second neighbours.
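The connectivity measure of panel (B) and the initial ring can be made concrete with a minimal sketch. The helper names `initial_ring` and `connectivity` and the representation of links as unordered node pairs are assumptions made for illustration.

```python
def initial_ring(n: int) -> set[frozenset[int]]:
    """Ring of n nodes, each linked to its first and second neighbours."""
    links = set()
    for i in range(n):
        for step in (1, 2):
            links.add(frozenset((i, (i + step) % n)))
    return links


def connectivity(links: set[frozenset[int]], n: int) -> float:
    """Ratio of actual links to the links of the complete graph on n nodes."""
    return len(links) / (n * (n - 1) / 2)


links = initial_ring(25)
print(len(links))               # 50 links, i.e. degree 4 for every node
print(connectivity(links, 25))  # 50 / 300, about 0.17
```

For a group of 25 the experiment therefore starts at a connectivity of about 17%, which is the baseline against which the final values of roughly 85% (m = 0) and 60% (m > 0) should be read.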

In the absence of memory, given that linking with a defector bears no cost, increasing connectivity as much as possible is the rational strategy because that maximizes the number of cooperative neighbours. This explains the behaviour shown in Figure 1B. The difference between the resulting network and a complete graph is likely due to a fraction of punishing actions from some cooperators who tried to cut their ties with defecting neighbours. However, this amount of punishment is insufficient to sustain cooperation and the level of cooperation undergoes a ‘tragedy of the commons' similar to that observed in fixed networks15,16.

The rational linking strategy is the same when the past actions record comes in and the number of links indeed increases with rounds (Figure 1B). However, this trend is counterbalanced by the punishing of defectors, as players attempt to break ties with them while maximizing the number of links with highly cooperative individuals (Figures 1C and Supplementary Figures 1 to 3). This indirectly forces individuals to cooperate more, thus increasing the overall level of cooperation (Figure 1A). At the end of the 25 rounds of the experiment the total number of links is still increasing, so presumably the final connectivity and cooperation level would have been much higher had the experiment lasted longer.

Looking deeper into the differences between the case m = 0 and the other three cases sheds more light on the experimental observations, in particular when the linking dynamics is examined in detail. To this end, we include in Table 1 a number of magnitudes that illustrate players' behaviour in the four treatments studied in the experiments. As already mentioned, the Table shows the differences in the levels of cooperation and average connectivity, but other relevant features can be observed. In particular:

(a) The average number of link proposals shows little variation between m = 0 and m = 1 and decreases for m > 1.

(b) The average number of link proposals that are accepted shows a strong decreasing trend upon increasing m.

(c) The average number of broken links increases from m = 0 to m = 1 and then decreases for m > 1, quite abruptly from m = 1 to m = 3.

(d) The average number of rounds along which a link persists before it is broken decreases from m = 0 to m = 1 and then increases for m > 1, quite abruptly from m = 1 to m = 3. Note, however, that for this magnitude the large values of the standard deviation in Table 1 do not allow to statistically confirm this intuition.

Observations about the level of cooperation and average connectivity, along with items (b) and (c) above, are all clear indications that memory has a strong effect on players' behaviour, i.e., it functions as reputation. Thus, cooperation can only be sustained if players can punish defectors by breaking their ties with them, hence cooperation is higher when the past actions of players are known. On the other hand, whereas in all cases increasing the number of links to the maximum possible is the rational behaviour (linking with defectors is costless), connectivity drops dramatically from m = 0 to m > 0 as an effect of the above-mentioned punishment. Besides, the longer the past actions record (memory) the fewer link proposals are accepted (observation b) and the more links are broken (observation c), again an effect of punishment. The weak dependence of link proposals on m (a) is probably associated with the fact that proposing links is free and can only be beneficial. Nevertheless, the number of proposals slightly decreases with m, probably due to a self-censoring effect. Surprisingly though, the average number of proposals is significantly below the limit of five proposals that players had, even for m = 0. We thus see that reputation, assigned by players using the information they have in the different setups, is likely to be an important driver, if not the most important one, of players' behaviour.

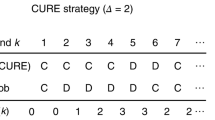

Indeed, further insight into the way reputation influences rewiring can be obtained by analyzing the fraction of link proposals which are accepted as a function of the proponent's past actions (Figure 2). These data provide two important pieces of information. On the one hand, they show that the fraction of accepted links increases monotonically with the number of cooperative actions in the past actions record. On the other hand, by sorting the fractions of accepted links in increasing order, we can observe (Figures 2A and B) that those whose past actions record is identified by the same value of just two features (that is, the same last action and the same fraction of cooperative actions) cluster together. Accordingly, we can define a reputation for every player with a single measure, namely a weighted average of these two features. The relative weight of the last action in this average (referred to as w in the Methods section below) can be obtained through a linear fit to the fractions of accepted link proposals as shown in Figures 2C and D (see Methods; see also Supplementary Information for further details). That these two features are the only information about past behaviour that subjects seem to be using to make their decisions is an important outcome of these experiments, particularly useful for future modeling and for practical applications. The existence of a reputation-based punishment induces high reputation values among players (Figure 3), which in turn translates into a higher level of cooperation than rational behaviour would lead to.

Reputation is a weighted combination of average cooperation and last action and it strongly conditions linking.

If the fractions of link proposals accepted from a proponent with a given past actions record are sorted in increasing order, those corresponding to past actions records sharing the last action ($C_{\mathrm{last}}$) and the fraction of cooperative actions ($\bar{C}$) are statistically indistinguishable. This holds both for memory m = 3 (A) and memory m = 5 (B). (Labels nC|X denote memory records with last action X and n cooperative previous actions.) This implies that the reputation that players are taking into account is a weighted combination of $C_{\mathrm{last}}$ and $\bar{C}$, i.e., $r = (1 - w)\,\bar{C} + w\,C_{\mathrm{last}}$. The value of w can be obtained through a linear fit to the fractions of accepted link proposals as a function of r (C and D; see Methods and Supplementary Information for details). The results are w = 0.280 ± 0.024 for m = 3 and w = 0.165 ± 0.015 for m = 5. Notice that the weight for m = 5 can be obtained from the observation (B) that the fraction of accepted proposals for past action records 3C|D is statistically indistinguishable from that for past action records 1C|C. This implies (1 − w)(3/5) = (1 − w)(2/5) + w, i.e., w = 1/6 (compare with the value obtained through the linear fit).
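The equality used in this caption to estimate w for m = 5 can be checked with exact arithmetic. The sketch below assumes the weighted form r = (1 − w)·(fraction of cooperative actions) + w·(last action), which is the form implied by the equation (1 − w)(3/5) = (1 − w)(2/5) + w; the function name `reputation` and its arguments are illustrative.

```python
from fractions import Fraction


def reputation(n_coop: int, m: int, last_is_c: bool, w: Fraction) -> Fraction:
    """r = (1 - w) * (n_coop / m) + w * C_last, with C_last equal to 1 for C and 0 for D.

    n_coop counts all cooperative actions in the record of length m,
    including the last one.
    """
    c_last = 1 if last_is_c else 0
    return (1 - w) * Fraction(n_coop, m) + w * c_last


w = Fraction(1, 6)
r_3c_d = reputation(3, 5, last_is_c=False, w=w)  # record labelled 3C|D
r_1c_c = reputation(2, 5, last_is_c=True, w=w)   # record labelled 1C|C
print(r_3c_d, r_1c_c)  # 1/2 1/2
```

With w = 1/6 both records have reputation 1/2, so a single recent cooperation offsets one fewer cooperative action in the five-round record.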

Subjects try to hold a high reputation but not the highest.

The histogram of link lifetimes shows a fast exponential decay (A; average lifetimes are 2.75 rounds for m = 1, 3.21 for m = 3 and 3.23 for m = 5). This is a consequence of the fact that most individuals keep a record of 2 cooperative actions out of 3 (B) or 2–4 out of 5 (C); in other words, subjects often defect but not too much—that would ruin their reputation. But this sporadic defection has a drastic effect on the linking dynamics because reputation is very much influenced by subjects' last action.

The dynamics of links in the presence of reputation (m > 0) is also interesting (without memory, formation and breaking of ties is basically random). Figure 3A shows that links hardly last longer than ~10 rounds, their average lifetime being 2.75 (m = 1), 3.21 (m = 3) and 3.23 (m = 5) rounds. At first sight this looks at odds with reputation-driven linking. However, two elements can easily explain this behaviour. Figure 3B and C show that, for m = 3, most subjects exhibit two cooperative actions in their memory record and for m = 5, this number is in the range 2–4. This implies that even individuals who try to keep a high reputation often defect (Supplementary Figure 9 shows that hardly anyone cooperates more than 10 times in a row and most people typically cooperate no more than 2 or 3 times in a row). Given our finding that reputation strongly depends on the last action performed, this means that a high fraction of these defective actions will cause serious damage to the subject's reputation and trigger the breaking of some ties. The increasing lifetime of links observed as m increases is therefore consistent with the corresponding lower weight that the last action has in the quantitative measure of reputation (in other words, defections are less damaging to reputation the larger m is). This suggests that keeping full history records could make ties highly persistent.

Discussion

To summarize, our experiments show that the punishing mechanism implicit in the network plasticity requires knowledge of the partner's reputation in order to work as a cooperation driver. Otherwise, rewiring simply leads to cooperation levels similar to those found in rigid networks. This conclusion aligns nicely with the experimental observation that good reputation and having more social supporting partners are correlated25.

Compared to previous experiments with a similar setup23, the significantly lower level of cooperation we observe in ours is striking. The most relevant difference in the experimental conditions is the different payoff matrix: in Ref. 23 players played a true prisoner's dilemma (the defector's payoff is higher than the cooperator's when facing a defector) whereas in our experiments they played a weak prisoner's dilemma (both payoffs are equal). In static networks, this has the effect of decreasing the level of cooperation17. Paradoxically, in dynamic networks it may have the opposite effect. The reason is that, with a defective neighbour, cooperating or defecting makes no difference in a weak prisoner's dilemma, whereas cooperating with a defective partner is costly in a true prisoner's dilemma. Thus, players who are prone to cooperate need to break ties with all their defective neighbours to increase their payoff. Because of this strong punishment, players have more incentives to cooperate than to defect, which raises the global level of cooperation.

Interestingly, aside from the impact of reputation on cooperation, our results also shed light on the mechanisms that drive network formation. As shown here, the reconfiguration of the underlying network at any given time is greatly influenced by the cooperative reputation of players. This is a new feature that has not been taken into account in network formation models proposed so far, except for a few cases26,27 in which network growth is coupled to an evolutionary dynamics, albeit in a different way and limited to one-step memory. Therefore, there is much research to be done along these lines: relevant open questions include finding the outcome of evolutionary network growth when memory lasts longer, how this relates to the duration and pace of evolution, and the extension to the case when the number of subjects in the network can vary (i.e., the number of players is not fixed and can increase or decrease). We think that theoretical or experimental efforts aimed at tackling these questions could shed further light on how human societies and organizations arise and evolve.

Finally, we stress that a very important outcome of our experimental results is that they provide convincing evidence that reputation is based on just two features of past actions records: the fraction of cooperative actions and the last action performed. This fact and the strong effect that the last action has on trust (revealed by the fast turnover dynamics observed in the experiments) do not seem to have been sufficiently acknowledged before. Reputation, in the way we have characterized it, appears to be used for interactions with a moderate number of possible partners; situations with very many options may lead to further simplifications in the relation between memory and reputation, while others with few candidate partners may allow players to retain the whole sequence of actions to form a reputation. A particularly interesting context where reputation, as observed here, plays such an important role is e-commerce28, as the number of possible partners at any given time is typically in the intermediate range we are considering here. At the time of writing, we are not aware of any platform where information on the last transaction of agents is provided along with the percentage of fulfilled past transactions. Our experiments suggest that this could have a strong effect in enforcing well-behaved commercial practice through the promotion of reputation-based trust.

Methods

Ethics statement

All participants in the experiments reported in the manuscript signed an informed consent to participate. Besides, their anonymity was always preserved (in agreement with the Spanish Law for Personal Data Protection) by randomly assigning them a username which would identify them in the system. No association was ever made between their real names and the results. As is standard in socio-economic experiments, no ethical concerns are involved other than preserving the anonymity of participants. This procedure was checked and approved by the Viceprovost of Research of Universidad Carlos III de Madrid, the institution responsible for the funding for the experiment. The experiment was subsequently carried out in accordance with the approved guidelines.

Participants were recruited from the volunteer pool of the Experimental Economics Lab of the Departamento de Economía of Universidad Carlos III de Madrid, where experiments were also conducted. The volunteer pool is open to students enrolled at the University at any degree and level, including postgraduate studies, and students were reminded of the possibility of joining the pool through the University mailing list for the experiment. In every session, participants were initially linked to four other players and then allowed to connect to anybody in the session, i.e., every session was a unique group. Each group participated in two treatments in a row. Sessions were conducted on June 5 and 6, 2013, with groups of 17, 23, 24 and two of 25 participants for the m = 0,1 treatments and on September 26 and 27 and October 25, 28 and 31, with groups of 18, 19, two of 21 and two of 25 participants for the m = 3,5 treatments. Half of the groups performed the experiments in one order and the other half in the opposite order. The specific order has some effect on the results (especially on the link lifetimes for m = 0), but it has a small or null influence on the relevant observables. The total number of participants was 243 and the total cost of the experiment was around 6000 euros. Detailed instructions (see Supplementary Information) were both read aloud at the beginning of the experiment and provided in a written document that participants kept until the end of it. After each session participants were informed of the amount of money they had won and immediately paid. Typical earnings ranged from 15 to 30 euros, including a 5 euro show-up fee. Experiments were carried out on a web-based platform (see Supplementary Information) and results gathered in a database for further analysis. Individuals played anonymously and could not interact during the experiment. Participants' anonymity was always guaranteed and data were collected in a way that their authors could never be traced.

Defining reputation as $r = (1 - w)\,\bar{C} + w\,C_{\mathrm{last}}$, where $\bar{C}$ is the fraction of cooperative actions in the past actions record and $C_{\mathrm{last}}$ is the last action (1 if it is cooperation, 0 otherwise), and assuming the dependence A(r) = α + βr of the fraction of accepted links as a function of reputation, then $A = \alpha + \beta_1 C_{\mathrm{last}} + \beta_2 \bar{C}$, where β1 = βw and β2 = β(1 − w). Coefficients α, β1, β2 are obtained through a least squares linear fit of this expression to the data and w is determined from them as w = β1/(β1 + β2).
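The fitting procedure can be sketched as follows, taking the fitted expression to be A = α + β1·C_last + β2·C̄ with C̄ the fraction of cooperative actions, which is what β1 = βw and β2 = β(1 − w) imply. The data here are synthetic and noise-free, generated from hypothetical values (α = 0.1, β = 0.8, w = 1/6), not the experimental data; the function names are illustrative, and plain Python normal equations stand in for whatever least squares routine was actually used.

```python
def solve3(a, b):
    """Solve a 3x3 linear system by Gauss-Jordan elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(3):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][3] / m[i][i] for i in range(3)]


def fit_weight(c_last, c_frac, accepted):
    """Least squares fit of A = alpha + beta1*C_last + beta2*C_frac.

    Returns (alpha, beta1, beta2, w) with w = beta1 / (beta1 + beta2).
    """
    rows = [(1.0, float(l), float(f)) for l, f in zip(c_last, c_frac)]
    # Normal equations: (X^T X) coeffs = X^T y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * y for r, y in zip(rows, accepted)) for i in range(3)]
    alpha, beta1, beta2 = solve3(xtx, xty)
    return alpha, beta1, beta2, beta1 / (beta1 + beta2)


# Synthetic records for m = 5: (last action, fraction of cooperative actions).
m_len, w_true, alpha_true, beta_true = 5, 1 / 6, 0.1, 0.8
records = [(last, n / m_len) for n in range(m_len + 1) for last in (0, 1)
           if last <= n <= m_len - 1 + last]
c_last = [l for l, _ in records]
c_frac = [f for _, f in records]
accepted = [alpha_true + beta_true * ((1 - w_true) * f + w_true * l)
            for l, f in records]

alpha, beta1, beta2, w_hat = fit_weight(c_last, c_frac, accepted)
print(abs(w_hat - w_true) < 1e-9)  # True
```

Fitting β1 and β2 separately and then forming the ratio keeps the problem linear in the unknowns, which is why w does not need to be searched for directly.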

References

1. Maynard Smith, J. & Szathmary, E. The Major Transitions in Evolution. (Freeman, Oxford, 1995).

2. Axelrod, R. & Hamilton, W. D. The evolution of cooperation. Science. 211, 1390–1396 (1981).

3. Seabright, P. The Company of Strangers: A Natural History of Economic Life. (Princeton University Press, Princeton, 2010).

4. Ledyard, J. O. Public goods: A survey of experimental research. In Handbook of Experimental Economics, Kagel, J. H. & Roth, A. E., editors, 111–251. (Princeton University Press, Princeton, 1995).

5. Camerer, C. F. Behavioral Game Theory. (Princeton University Press, Princeton, 2003).

6. Trivers, R. L. The evolution of reciprocal altruism. Q. Rev. Biol. 46, 35–57 (1971).

7. Nowak, M. A. & Sigmund, K. Evolution of indirect reciprocity by image scoring. Nature. 393, 573–577 (1998).

8. Fehr, E. & Gächter, S. Cooperation and punishment in public goods experiments. Amer. Econ. Rev. 90, 980–994 (2000).

9. Sigmund, K., Hauert, C. & Nowak, M. A. Reward and punishment. Proc. Natl. Acad. Sci. USA. 98, 10757–10762 (2001).

10. Nowak, M. A. Five rules for the evolution of cooperation. Science. 314, 1560–1563 (2006).

11. Roca, C. P., Cuesta, J. & Sánchez, A. Evolutionary game theory: temporal and spatial effects beyond replicator dynamics. Phys. Life Rev. 6, 208 (2009).

12. Kirchkamp, O. & Nagel, R. Naive learning and cooperation in network experiments. Games Econ. Behav. 58, 269–292 (2007).

13. Cassar, A. Coordination and cooperation in local, random and small world networks: Experimental evidence. Games Econ. Behav. 58, 209–230 (2007).

14. Traulsen, A., Semmann, D., Sommerfeld, R. D., Krambeck, H.-J. & Milinski, M. Human strategy updating in evolutionary games. Proc. Natl. Acad. Sci. USA. 107, 2962–2966 (2010).

15. Grujić, J., Fosco, C., Araujo, L., Cuesta, J. A. & Sánchez, A. Social experiments in the mesoscale: Humans playing a spatial prisoner's dilemma. PLoS ONE. 5, e13749 (2010).

16. Gracia-Lázaro, C. et al. Heterogeneous networks do not promote cooperation when humans play a prisoner's dilemma. Proc. Natl. Acad. Sci. USA. 109, 12922–12926 (2012).

17. Grujić, J. et al. A comparative analysis of spatial Prisoner's Dilemma experiments: Conditional cooperation and payoff irrelevance. Sci. Rep. 4, 4615 (2014).

18. Fischbacher, U., Gächter, S. & Fehr, E. Are people conditionally cooperative? Evidence from a public goods experiment. Econ. Lett. 71, 397–404 (2001).

19. Grujić, J., Eke, B., Cabrales, A., Cuesta, J. A. & Sánchez, A. Three is a crowd in iterated prisoner's dilemmas: experimental evidence on reciprocal behavior. Sci. Rep. 2, 638 (2012).

20. Gracia-Lázaro, C., Cuesta, J. A., Sánchez, A. & Moreno, Y. Human behavior in prisoner's dilemma experiments suppresses network reciprocity. Sci. Rep. 2, 325 (2012).

21. Fehl, K., van der Post, D. J. & Semmann, D. Co-evolution of behaviour and social network structure promotes human cooperation. Ecol. Lett. 14, 546–551 (2011).

22. Rand, D. G., Arbesman, S. & Christakis, N. A. Dynamic social networks promote cooperation in experiments with humans. Proc. Natl. Acad. Sci. USA. 108, 19193–19198 (2011).

23. Wang, J., Suri, S. & Watts, D. J. Cooperation and assortativity with dynamic partner updating. Proc. Natl. Acad. Sci. USA. 109, 14363–14368 (2012).

24. Shirado, H., Fu, F., Fowler, J. H. & Christakis, N. A. Quality versus quantity of social ties in experimental cooperative networks. Nature Comm. 4, 2814 (2013).

25. Lyle III, H. F. & Smith, E. A. The reputational and social network benefits of prosociality in an Andean community. Proc. Natl. Acad. Sci. USA. 111, 4820–4825 (2014).

26. Poncela, J., Gómez-Gardeñes, J., Floría, L. M., Sánchez, A. & Moreno, Y. Complex cooperative networks from evolutionary preferential attachment. PLoS ONE. 3, e2449 (2008).

27. Poncela, J., Gómez-Gardeñes, J., Floría, L. M., Moreno, Y. & Sánchez, A. Cooperative scale-free networks despite the presence of defector hubs. EPL. 88, 38003 (2009).

28. Bolton, G. E., Katok, E. & Ockenfels, A. How effective are electronic reputation mechanisms? An experimental investigation. Manage. Sci. 50, 1587–1602 (2005).

Acknowledgements

We are thankful to Edoardo Gallo for his comments and for sharing his results prior to publication and to Gonzalo Ruiz for his help with the experimental software. We are grateful to the Departamento de Economía of Universidad Carlos III de Madrid for allowing us to use their lab. This work was supported in part by MINECO (Spain) through grants PRODIEVO, FIS2011-25167 and FIS2009-09689, by Comunidad de Madrid (Spain) through grant MODELICO-CM, by Comunidad de Aragón (Spain) through a grant to the group FENOL and by the EU FET Proactive project MULTIPLEX (contract no. 317532).

Author information

Contributions

J.C., C.G.-L., Y.M. and A.S. designed and performed research, analyzed the data and wrote the paper; A.F. designed and was in charge of the experimental platform. All authors reviewed and approved the manuscript.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Electronic supplementary material

Supplementary Information

Rights and permissions

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. The images or other third party material in this article are included in the article's Creative Commons license, unless indicated otherwise in the credit line; if the material is not included under the Creative Commons license, users will need to obtain permission from the license holder in order to reproduce the material. To view a copy of this license, visit http://creativecommons.org/licenses/by-nc-sa/4.0/

About this article

Cite this article

Cuesta, J., Gracia-Lázaro, C., Ferrer, A. et al. Reputation drives cooperative behaviour and network formation in human groups. Sci Rep 5, 7843 (2015). https://doi.org/10.1038/srep07843

DOI: https://doi.org/10.1038/srep07843
