Abstract

Super-resolution virtual imaging by micron-sized transparent beads (microspheres) was recently demonstrated by Wang et al. Practical applications in microscopy require control over the positioning of the microspheres. Here we present a method for positioning and controllably moving a microsphere using a fine glass micropipette. This allows sub-diffraction imaging at arbitrary points in three dimensions, as well as the ability to track moving objects. The results are relevant to a broad range of applications, including sample inspection, microfabrication and bio-imaging.

Introduction

Development of imaging techniques with resolution beyond the diffraction limit (super-resolution) is of utmost importance for the scientific community. Several super-resolution techniques have been implemented to date, including stimulated emission depletion microscopy (STED), structured illumination microscopy (SIM) and photo-activated localization microscopy (PALM)1. However, commercially available systems cost around 1 million dollars, require specific sample preparation and involve sophisticated image processing algorithms. Scanning probe techniques, such as near-field scanning optical microscopy (NSOM), may represent a more accessible alternative. However, they suffer from low efficiency2 and rather slow acquisition times. Therefore, development of low-cost and easily implementable solutions for super-resolution imaging is highly desirable.

One such promising solution is the microsphere nanoscopy technique, pioneered by us. The technique uses transparent beads with typical diameters of a few microns (microspheres) as near-field to far-field lenses3. The microsphere focuses incident light to a subwavelength spot and transfers a near-field image of the object in close contact to a virtual image on the opposite side of the microsphere. The virtual image is unaffected by diffraction and can be observed by adjusting the focal plane of a microscope objective lens. The experimentally demonstrated resolution of the technique is 50 nm. The technique has also been demonstrated in a liquid environment, which is highly appealing for biological applications4.

Practical implementation of the technique requires high-precision manipulation of the microsphere. First, it must be possible to position the microsphere at a specific location on the sample surface, which is essential for sample inspection applications. Second, it should be possible to move the microsphere to track a moving object, which is essential for biological applications such as observation of live bacteria and viruses. Optical tweezers have been used for locomotion of microspheres both for imaging and nano-focusing applications5,6,7,8. However, such setups may be costly, as they require additional components (light source, focusing objective lens and dichroic optics). Also, optical tweezers cannot be used when samples are non-transparent at the wavelength of the trapping laser, and their operation in air is quite challenging. An alternative approach exploits random Brownian motion of imaging spheres in the vicinity of the object. A specific image processing algorithm is used to reveal a super-resolved image9. However, this approach requires a drop of liquid to be deposited on the sample surface, which may not always be desirable for sample inspection applications.

In this work, we introduce an accessible and cost-effective method of positioning and controlling the movement of individual microspheres by attaching them to the sharpened tip of a rigid shaft (glass rod) using air suction or UV-curable glue. The shaft is mounted on a three-dimensional translation stage. By driving the translation stage, the microsphere attached to the shaft can be moved to any desired position in three-dimensional space. Although the shaft and the microsphere are attached to each other, the shaft does not affect the super-resolution imaging capabilities of the microsphere. This work provides a new technology platform for a variety of super-resolution applications based on microspheres, including imaging, single-molecule detection and laser nano-patterning.

Results

We tested our method with two samples: one with sub-200 nm features and the other with sub-100 nm features. The first sample is a polymer grating structure, fabricated by laser interference lithography on a silicon substrate. The scanning electron microscope (SEM) image of the structure is shown in Fig. 1a and an optical image is shown in Fig. 1b. The width of the stripes is about 170 nm with 550 nm gaps and the height of the grooves is about 500 nm.

(a) SEM image of the polymer sample deposited on a silicon substrate. (b) Optical microscope image of the sample in air under a 50× objective lens. The scale bar is 10 μm. (c) Virtual image of the structure in air, magnified by the probe, under a 50× objective lens. (d) Intensity profile of the magnified virtual image from the white dashed area in (c). The X- and Y-axis scales in (d) correspond to pixel numbers.

The second sample is composed of gold squares deposited next to each other on a silicon substrate. It is fabricated using electron beam lithography. The squares of 500 × 500 nm² are separated by a 73 nm gap and have a height of 50 nm. The pairs of split-squares have a 5 μm pitch in the X- and Y-directions. The SEM image of one split-square is shown in Fig. 2a and the microscope image is shown in Fig. 2b.

(a) SEM image of the gold split-squares nanostructure. (b) Optical microscope image of the structure in air under a 50× objective lens. The scale bar is 10 μm. (c) Virtual image of the structure in air, magnified by the microsphere, under a 50× objective lens. (d) Intensity profile of the magnified virtual image from the white dashed area in (c). The X- and Y-axis scales correspond to pixel numbers.

Demonstration of the positioning of the microsphere with the polymer sample

Media 1 of Supplementary materials shows movement of the microsphere across and along the grating. The sphere is attached to the pipette tip using vacuum suction. The contact proves to be tight, since the microsphere does not detach during the scan. Fig. 1c shows a still virtual image of the structure, magnified approximately 6 times by the probe. The magnified virtual image is converted to gray scale and pixel values are retrieved using Mathematica. The corresponding intensity profile is shown in Fig. 1d. The positioning accuracy of the probe is limited by the resolution of the manipulator (~20 nm). As described in Methods, it is also possible to fix the position of the probe and move the microscope stage instead. In this case the positioning accuracy of the probe is set by the resolution of the microscope stage (~5 nm).
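The gray-scale conversion and profile extraction described above can be sketched in a few lines. The authors used Mathematica; the Python/NumPy version below is only an illustrative stand-in. The function names, the Rec. 601 luminance weights, the averaging over rows and the synthetic striped frame are all assumptions, not details taken from the original analysis.

```python
import numpy as np

def to_grayscale(rgb):
    """Convert an (H, W, 3) RGB array to luminance (Rec. 601 weights, assumed)."""
    weights = np.array([0.299, 0.587, 0.114])
    return rgb[..., :3].astype(float) @ weights

def intensity_profile(gray, row_start, row_stop, col_start, col_stop):
    """Average the selected rectangle over its rows: intensity vs. column pixel."""
    region = gray[row_start:row_stop, col_start:col_stop]
    return region.mean(axis=0)

# Synthetic stand-in for a camera frame: vertical stripes with an 8-pixel period.
cols = np.arange(64)
stripes = np.where((cols // 4) % 2 == 0, 200, 50).astype(np.uint8)
frame = np.repeat(np.tile(stripes, (32, 1))[..., None], 3, axis=2)

# Profile across the stripes, taken from rows 8..23 of the frame.
profile = intensity_profile(to_grayscale(frame), 8, 24, 0, 64)
```

With a real frame, the rectangle would correspond to the white dashed area of the figure, and the resulting array would be plotted against pixel number.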

From the frame-by-frame analysis of Media 1, we find that the alternating features of the sample can be clearly recognized at a probe translation velocity of 1.5 μm/sec. The capture rate of the camera is 15 frames per second. Hence, between consecutive frames the object shifts by about 100 nm, which is about sixty percent of the feature size of 170 nm.
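The per-frame shift quoted above follows directly from the translation speed and the frame rate. A minimal sketch of the arithmetic, with a hypothetical helper name and the values taken from the text:

```python
def shift_per_frame_nm(speed_nm_per_s, frames_per_s):
    """Distance the probe travels between two consecutive camera frames."""
    return speed_nm_per_s / frames_per_s

# Values from the text: 1.5 um/s translation, 15 frames per second, 170 nm stripes.
grating_shift = shift_per_frame_nm(1500.0, 15.0)   # 100 nm per frame
grating_fraction = grating_shift / 170.0           # roughly 0.59 of the feature size
```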

Imaging of split-squares structure using the microsphere

To demonstrate the relevance of the method to sample inspection applications, we use the sample with gold split-squares separated by a 73 nm gap, see Fig. 2a. The optical image of the sample, see Fig. 2b, does not resolve the gap between the squares. The accurate positioning of the microsphere between the squares using the micropipette is shown in Media 2 of Supplementary materials. Moving the microsphere with the micromanipulator makes it possible to visualize the gap, as well as the borders of the structure. The observed virtual image of the gap is shown in Fig. 2c. The corresponding intensity profile, obtained by the same algorithm as in Fig. 1d, is shown in Fig. 2d, where the gap between the squares can be clearly observed.

Media 2 is analyzed to determine the translation speed of the probe at which the image of the gap is not blurred. With the camera frame rate set to 15 frames per second, we estimate this translation speed to be 650 nm/sec. The translation speed can be increased further by using faster cameras and brighter light sources.
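The same frame-rate arithmetic can be applied to the split-square sample, and inverted to guess how the usable speed might scale with a faster camera. The sketch below assumes that the tolerable shift per frame stays fixed and that exposure time and illumination are not limiting; the 60 frames per second value and the function names are hypothetical and do not come from the experiment.

```python
def shift_per_frame_nm(speed_nm_per_s, frames_per_s):
    """Distance the probe travels between two consecutive camera frames."""
    return speed_nm_per_s / frames_per_s

def max_speed_nm_per_s(allowed_shift_nm, frames_per_s):
    """Speed at which the per-frame shift equals the allowed shift."""
    return allowed_shift_nm * frames_per_s

# Values from the text: 650 nm/s at 15 frames per second, 73 nm gap.
gap_nm = 73.0
gap_shift = shift_per_frame_nm(650.0, 15.0)   # about 43 nm per frame
gap_fraction = gap_shift / gap_nm             # about 0.59 of the gap width

# Hypothetical faster camera, keeping the same per-frame shift (assumption).
HYPOTHETICAL_FPS = 60.0
faster_speed = max_speed_nm_per_s(gap_shift, HYPOTHETICAL_FPS)  # about 2600 nm/s
```

In both samples the per-frame shift comes out at roughly sixty percent of the smallest feature, which suggests a simple rule of thumb for choosing the scan speed, although the text does not state it as such.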

Discussion

To understand the influence of the micropipette on microsphere imaging, numerical simulations were performed with CST Microwave Studio at a wavelength of 600 nm for the following cases: a microsphere alone and a microsphere with a micropipette. The results shown in Fig. 3 reveal that the micropipette has an almost negligible effect on the near-field focusing under the particle. In both cases, the focus spot size is about 0.58λ, close to the diffraction limit, and the focal length is subwavelength: about 0.92λ from the particle bottom. Because the particle is in contact with the substrate, strong near-field interaction between them takes place, leading to the conversion of surface evanescent waves into propagating waves that reach the far field with high resolution. This case is similar to NSOM2. The difference is that the conversion efficiency in NSOM is typically 10⁻⁵–10⁻⁶, while for the microsphere the efficiency is close to unity.

Due to diffraction, one can expect a resolution of Δλ = λ/2n, which for n = 1.5 and λ = 600 nm equals 200 nm. Our experiment, as well as the earlier studies3, exceeds this resolution by a factor of 3–4. This is related to virtual imaging, which permits far-field imaging at a distance R ≫ L > λ, where L is the object size. In this case, representing the evanescent wave as a plane wave is not valid, and the resolution limit can be found from information theory11. Under typical experimental conditions this limit is about λ/10–λ/17, which is consistent with our observations.
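For orientation, the numbers in this paragraph can be reproduced with a short calculation. The helper name is hypothetical; the inputs (λ = 600 nm, n = 1.5, and the λ/10 to λ/17 range) are taken from the text.

```python
def diffraction_limit_nm(wavelength_nm, refractive_index):
    """Diffraction-limited resolution lambda / (2 n), as used in the text."""
    return wavelength_nm / (2.0 * refractive_index)

wavelength_nm = 600.0
limit_nm = diffraction_limit_nm(wavelength_nm, 1.5)   # 200 nm

# Information-theory range quoted in the text: lambda/10 down to lambda/17.
info_limit_coarse_nm = wavelength_nm / 10.0           # 60 nm
info_limit_fine_nm = wavelength_nm / 17.0             # about 35 nm
```

The 73 nm gap resolved in the experiment lies well below the 200 nm diffraction estimate and above the 35–60 nm range obtained from the information-theory limit.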

The resolution of the method is mainly defined by the imaging sphere rather than the objective lens. Using a higher-magnification objective lens (100×, same NA) does not improve the resolution; quite often the imaging contrast becomes worse. On the other hand, when a high-NA liquid-immersion objective lens is used, the silica microspheres (n = 1.50) have to be replaced by higher-index microspheres, e.g., BaTiO3 with n = 1.90; otherwise the method fails because of insufficient refractive index contrast between the microsphere and the surrounding medium12. Meanwhile, using a water-immersion lens did not improve the resolution. According to our estimate based on an empirical virtual-imaging model, a best resolution of 20 nm can be achieved when the refractive index contrast between the microsphere and the surrounding medium is about 1.80 (ref. 3).
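The role of the surrounding medium can be illustrated by comparing index contrasts for the cases mentioned above. This sketch assumes that "refractive index contrast" means the ratio of the microsphere index to the medium index, and it uses n = 1.00 for air and n = 1.33 for water, values that are not stated in the text.

```python
N_AIR = 1.00     # assumed refractive index of air
N_WATER = 1.33   # assumed refractive index of water

def index_contrast(n_sphere, n_medium):
    """Ratio of microsphere index to surrounding-medium index (assumed definition)."""
    return n_sphere / n_medium

silica_in_air = index_contrast(1.50, N_AIR)        # 1.50
silica_in_water = index_contrast(1.50, N_WATER)    # about 1.13
batio3_in_water = index_contrast(1.90, N_WATER)    # about 1.43
```

Under these assumptions, silica in water has a much lower contrast than silica in air, while BaTiO3 in water recovers a contrast close to the silica-in-air case.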

It is possible to combine our approach with image processing techniques based on moving imaging spheres by applying mechanical vibrations to the shaft holding the microsphere9. Application of algorithmic image processing techniques such as Coherent Diffractive Imaging should help to improve the resolution even further13.

In conclusion, we improved the virtual-image super-resolution technique by developing a method for controllable movement of imaging microspheres. The method allows the position of the microsphere to be controlled in three dimensions with high precision and without sacrificing optical resolution. It represents a simple alternative to optical tweezers and is important for applications where accurate positioning of the optical probe is crucial. These applications include, but are not limited to, quality control and inspection of samples, laser nano-patterning, and tracking of moving biological objects such as living cells, viruses and bacteria.

Methods

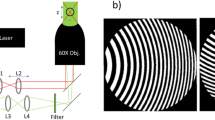

Optical setup

We use an upright metallurgical microscope (BXFM, Olympus) mounted on top of an anti-vibration optical table, see Fig. 4. The microscope is equipped with a white light source (halogen lamp, LG-PS2, Olympus) and a color CMOS camera (SC30, Olympus). In the described experiments we use a 50× objective lens (0.55 NA, M Plan Apo, Mitutoyo) with a 13 mm working distance. The sample is placed on a three-dimensional piezo translation stage with 5 nm resolution along the X, Y and Z axes (NanoMax-TS, Melles Griot). We use dry silica microspheres (6.1 μm ± 0.59 μm diameter, SS06N, Bangs lab), a size that follows the suggestion of a previous study3. To allow precise movement of a microsphere, we attach it to the tip of a glass micropipette using air suction or a UV-curable optical glue (NOA81, Thorlabs). The pipette is mounted in a precision three-dimensional manual flexure stage with 20 nm resolution along the X, Y and Z axes (MDE 122 with MDE 216 HP adjusters, Elliot Scientific), referred to as a micromanipulator. The micropipette forms an angle of approximately 20° to the sample surface. Note that there is no strict requirement to use a high-resolution piezo stage for movement of the sample or the micropipette. The microsphere can be moved relative to the fixed sample with a simple mechanical manipulator. In such a simplified arrangement the estimated price of the components (excluding the price of the microscope) is approximately 2200 USD.

Layout of the optical setup (not to scale).

A microsphere lies on a sample and is viewed under a microscope. It is attached to the micropipette using air suction or optical glue. The micropipette with the microsphere is attached to the micromanipulator via a V-groove holder. Driving the micromanipulator allows movement of the sphere in three dimensions. The inset shows the microscope image of the microsphere attached to the pipette by optical glue. The image is taken in air under a 40× objective lens; the scale bar is 10 μm.

Micropipette fabrication

The micropipettes are pulled from borosilicate capillary glass (outer diameter 1.5 mm, inner diameter 1.12 mm, TW150-6, World Precision Instruments) by a micropipette puller (model P-97, Sutter Instrument). The parameters of the puller are set so that the pulled micropipettes have opening diameters of 1 μm–2 μm and taper lengths of about 3 mm (ref. 10). The fabricated micropipette is fixed in a hollow metallic rod-shaped adapter, which is attached to the micromanipulator via a V-groove holder (KM100V, Thorlabs). The plastic air tubing is channeled through the adapter and fitted tightly to the opposite end of the micropipette. The other end of the tubing is connected to a standard 10 ml syringe.

Imaging with microspheres

In the experiment, a small quantity of microspheres is dispersed on the sample surface and observed with the microscope. The tip of the micropipette is brought close (1 μm–2 μm) to a single microsphere using the micromanipulator. Negative air pressure, created in the micropipette by pulling the plunger of the syringe, attracts the microsphere and attaches it to the tip. If the UV-curable optical glue is used, then, as soon as the microsphere touches the glue, UV exposure is applied to strengthen the contact, see Fig. 4. Then the pipette with the microsphere is lifted upwards and the microscope stage is moved to bring the nanostructure into view. The micropipette with the attached microsphere is slowly lowered using the z-translation of the stage, see Fig. 4. At some point a slight displacement of the microsphere in the plane of the sample is observed (along the X-axis in Fig. 4), which indicates that the microsphere touches the surface, and the z-movement is stopped. Forcing the z-movement further would break the pipette. Through the microscope, one sees the micropipette tip, the microsphere attached to it and the nanostructure at the same time. The virtual image of the nanostructure is observed by adjusting the focal plane of the objective lens3. The sphere can be positioned at any desired location using the micromanipulator. Note that the approach also allows the microsphere to be positioned at a point above the sample (Z-direction in Fig. 4). This provides an opportunity to study samples at various depths.

References

Schermelleh, L., Heintzmann, R. & Leonhardt, H. A guide to super-resolution fluorescence microscopy. J. Cell Biol. 190, 165–175 (2010).

Novotny, L. & Hecht, B. Principles of Nano-Optics (Cambridge University Press, 2006).

Wang, Z. et al. Optical virtual imaging at 50 nm lateral resolution with a white-light nanoscope. Nat. Commun. 2, 218 (2011).

Hao, X., Kuang, C., Liu, X., Zhang, H. & Li, Y. Microsphere based microscope with optical super-resolution capability. Appl. Phys. Lett. 99, 203102 (2011).

Yakovlev, V. V. & Luk'yanchuk, B. Multiplexed Nanoscopic Imaging. Laser Phys. 14, 1065–1071 (2004).

Faustov, A., Shcheslavskiy, V., Petrov, G. I., Lukyanchuk, B. & Yakovlev, V. V. Highly multiplexed scanning nanoscopic imaging. Proc. SPIE “Nano/biophotonics” 5331, 21–28 (2004).

Banas, A. et al. Fabrication and optical trapping of handling structures for re-configurable microsphere magnifiers. Proc. SPIE 8637, Complex Light and Optical Forces VII, 86370Y (2013).

McLeod, E. & Arnold, C. B. Subwavelength direct-write nanopatterning using optically trapped microspheres. Nat. Nanotechnol. 3, 413–417 (2008).

Gur, A., Fixler, D., Mico, V., Garcia, J. & Zalevsky, Z. Linear optics based nanoscopy. Opt. Exp. 18, 22222–22231 (2010).

Sutter Instrument Company. Pipette cook book 2011, http://www.sutter.com/PDFs/pipette_cookbook.pdf (2013).

Narimanov, E. E. The resolution limit for far-field optical imaging. in CLEO: 2013, OSA Technical Digest (online) (Optical Society of America, 2013), paper QW3A.7. http://www.opticsinfobase.org/abstract.cfm?URI=CLEO_QELS-2013-QW3A.7 (2013).

Guo, H., Han, Y., Weng, X., Zhao, Y., Sui, G., Wang, Y. & Zhuang, S. Near-field focusing of the dielectric microsphere with wavelength-scale radius. Opt. Exp. 21, 2434–2443 (2013).

Szameit, A. et al. Sparsity-based single-shot subwavelength coherent diffractive imaging. Nat. Mater. 11, 455–459 (2012).

Acknowledgements

We would like to thank Janaki DO Shanmugam for preparation of the samples, Reuben Bakker, Arseniy Kuznetsov and Guillaume Vienne for valuable discussions. The work was supported by the Joint Council Office grant (Project No: 1231AEG025) and SERC Metamaterials Program on Superlens (grant no. 092 154 0099).

Author information

Contributions

L.K. conceived the concept, led the experiments and wrote the manuscript. J.J.W. contributed to the experiments. Z.W. and B.L. contributed to the theoretical analysis and numerical simulations. B.L. provided general guidance and coordination of the project. All authors contributed to the scientific discussion and revision of the article.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Electronic supplementary material

Supplementary Information

Media 1

Supplementary Information

Media 2

Rights and permissions

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License. To view a copy of this license, visit http://creativecommons.org/licenses/by-nc-sa/3.0/

About this article

Cite this article

Krivitsky, L., Wang, J., Wang, Z. et al. Locomotion of microspheres for super-resolution imaging. Sci Rep 3, 3501 (2013). https://doi.org/10.1038/srep03501

DOI: https://doi.org/10.1038/srep03501
